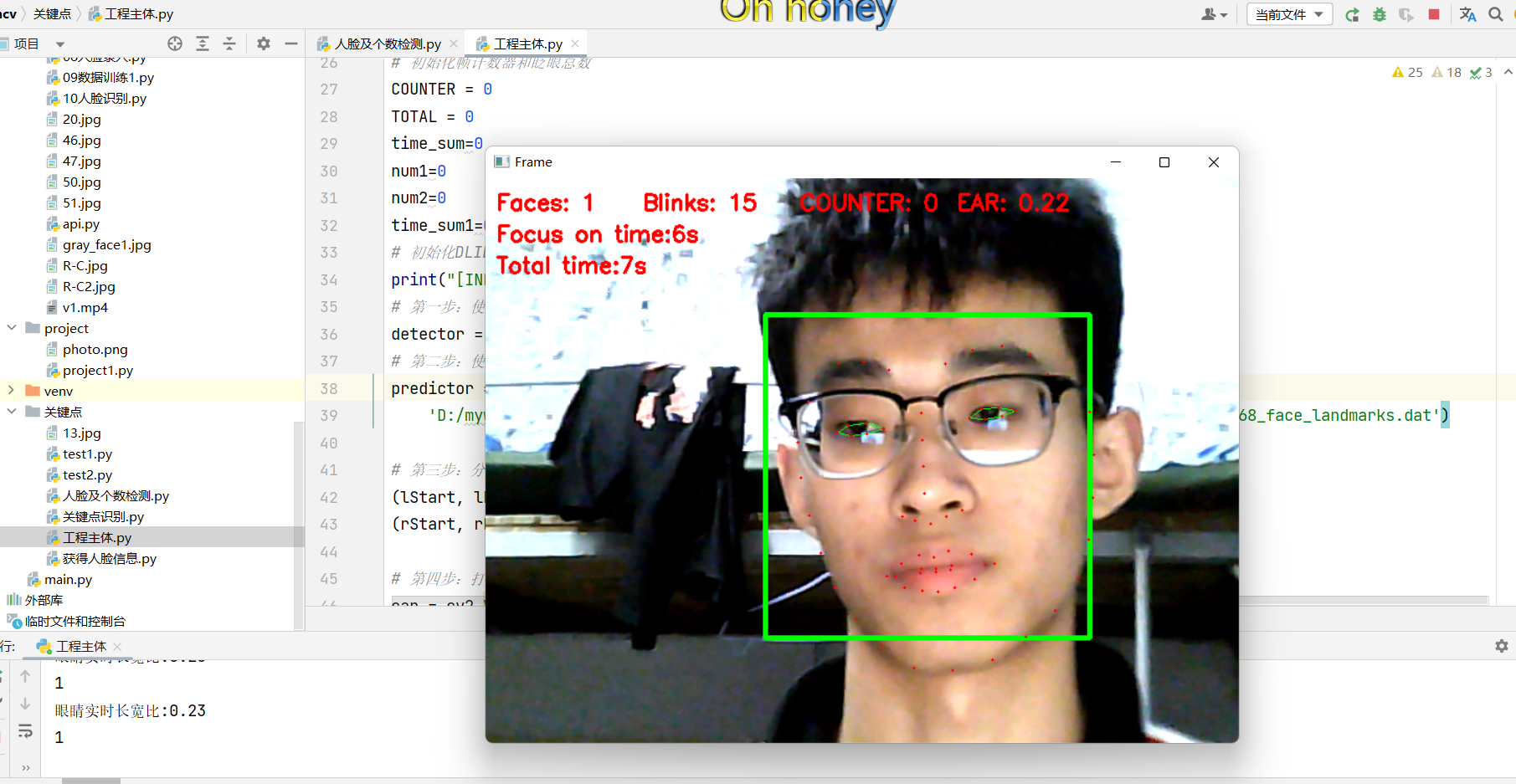

Daily summary: facial landmark detection with dlib and focus-time calculation

The result of facial landmark detection and focus monitoring is shown above:

The code is as follows:

    from scipy.spatial import distance as dist
    from imutils import face_utils
    import imutils
    import dlib
    import cv2
    import time

    time_start = time.time()


    def eye_aspect_ratio(eye):
        # Distances between the two pairs of vertical eye landmarks
        A = dist.euclidean(eye[1], eye[5])
        B = dist.euclidean(eye[2], eye[4])
        # Distance between the two horizontal eye landmarks
        C = dist.euclidean(eye[0], eye[3])
        # Eye aspect ratio (EAR)
        ear = (A + B) / (2.0 * C)
        return ear


    # EAR below this threshold counts as a closed eye
    EYE_AR_THRESH = 0.2
    # Number of consecutive closed-eye frames that counts as one blink
    EYE_AR_CONSEC_FRAMES = 3

    COUNTER = 0    # consecutive closed-eye frames
    TOTAL = 0      # frames counted as unfocused (shown on screen as "Blinks")
    time_sum = 0   # total elapsed seconds
    time_sum1 = 0  # estimated focused seconds
    num1 = 0       # frames processed so far
    num2 = 0       # faces detected in the current frame
    ear = 0.0      # last EAR value, so the overlay works before any face is seen

    # Initialize dlib's HOG-based face detector and the facial landmark predictor
    print("[INFO] loading facial landmark predictor...")
    # Step 1: get the face detector with dlib.get_frontal_face_detector()
    detector = dlib.get_frontal_face_detector()
    # Step 2: get the landmark predictor with dlib.shape_predictor
    predictor = dlib.shape_predictor(
        'D:/myworkspace/fatigue_detecting-master/fatigue_detecting-master/model/shape_predictor_68_face_landmarks.dat')

    # Step 3: get the landmark index ranges for the left and right eye
    (lStart, lEnd) = face_utils.FACIAL_LANDMARKS_IDXS["left_eye"]
    (rStart, rEnd) = face_utils.FACIAL_LANDMARKS_IDXS["right_eye"]

    # Step 4: open the local camera with cv2
    cap = cv2.VideoCapture(0)

    # Loop over frames from the video stream
    while True:
        # Step 5: read a frame, resize it to 720 px wide, and convert it to grayscale
        ret, frame = cap.read()
        if not ret:
            break  # camera unavailable or stream ended
        frame = imutils.resize(frame, width=720)
        gray = cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)

        # Step 6: detect faces with detector(gray, 0)
        rects = detector(gray, 0)
        num2 = len(rects)
        num1 += 1
        if num2 == 0:
            TOTAL += 1  # no face in view: count this frame as unfocused

        # Step 7: for each face, get the landmarks with predictor(gray, rect)
        for rect in rects:
            shape = predictor(gray, rect)
            # Step 8: convert the landmarks to a NumPy array
            shape = face_utils.shape_to_np(shape)

            # Step 9: extract the left and right eye coordinates
            leftEye = shape[lStart:lEnd]
            rightEye = shape[rStart:rEnd]

            # Step 10: compute the EAR of each eye and use the average as the final EAR
            leftEAR = eye_aspect_ratio(leftEye)
            rightEAR = eye_aspect_ratio(rightEye)
            ear = (leftEAR + rightEAR) / 2.0

            # Step 11: compute the convex hull of each eye and draw its contour
            leftEyeHull = cv2.convexHull(leftEye)
            rightEyeHull = cv2.convexHull(rightEye)
            cv2.drawContours(frame, [leftEyeHull], -1, (0, 255, 0), 1)
            cv2.drawContours(frame, [rightEyeHull], -1, (0, 255, 0), 1)

            # Step 12: mark the face with a rectangle
            left, top = rect.left(), rect.top()
            right, bottom = rect.right(), rect.bottom()
            cv2.rectangle(frame, (left, top), (right, bottom), (0, 255, 0), 3)

            # Step 13: while the averaged EAR stays below the threshold, count the frame.
            # Once the eyes reopen after at least 3 consecutive closed frames, treat it
            # as one blink and charge 5 unfocused frames.
            if ear < EYE_AR_THRESH:
                COUNTER += 1
            else:
                if COUNTER >= EYE_AR_CONSEC_FRAMES:
                    TOTAL += 5
                # Reset the closed-eye frame counter
                COUNTER = 0

            # Step 14: draw the 68 landmark points
            for (x, y) in shape:
                cv2.circle(frame, (int(x), int(y)), 1, (0, 0, 255), -1)

        # Update the elapsed time and the focus time: the elapsed time scaled by the
        # share of frames not counted as unfocused (never below zero)
        time_sum = time.time() - time_start
        time_sum1 = max(0.0, time_sum * (num1 - TOTAL) / num1)

        # Step 15: show the counters on the frame with cv2.putText
        cv2.putText(frame, "Faces: {}".format(num2), (10, 30),
                    cv2.FONT_HERSHEY_SIMPLEX, 0.7, (0, 0, 255), 2)
        cv2.putText(frame, "Blinks: {}".format(TOTAL), (150, 30),
                    cv2.FONT_HERSHEY_SIMPLEX, 0.7, (0, 0, 255), 2)
        cv2.putText(frame, "COUNTER: {}".format(COUNTER), (300, 30),
                    cv2.FONT_HERSHEY_SIMPLEX, 0.7, (0, 0, 255), 2)
        cv2.putText(frame, "EAR: {:.2f}".format(ear), (450, 30),
                    cv2.FONT_HERSHEY_SIMPLEX, 0.7, (0, 0, 255), 2)
        cv2.putText(frame, "Focus on time:" + str(int(time_sum1)) + "s", (10, 60),
                    cv2.FONT_HERSHEY_SIMPLEX, 0.7, (0, 0, 255), 2)
        cv2.putText(frame, "Total time:" + str(int(time_sum)) + "s", (10, 90),
                    cv2.FONT_HERSHEY_SIMPLEX, 0.7, (0, 0, 255), 2)
        print('Real-time EAR: {:.2f}, faces: {}'.format(ear, num2))

        cv2.imshow("Frame", frame)
        if cv2.waitKey(1) & 0xFF == ord('q'):
            break

    # Release the camera and close the display window
    cap.release()
    cv2.destroyAllWindows()
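
The focus-time bookkeeping can be tried out without a camera or dlib. The following is a minimal sketch, not the script itself: it replays the same counting rules on a hand-made sequence of per-frame observations. The names `focused_fraction`, `BLINK_PENALTY`, `open_eye`, `closed_eye` and `frames` are hypothetical and introduced only for illustration, the landmark coordinates are made up, and the sketch assumes at most one face per frame. It also clamps the result at zero, which is an added assumption.

```python
import math

EYE_AR_THRESH = 0.2       # EAR below this counts as a closed eye (value from the script)
EYE_AR_CONSEC_FRAMES = 3  # closed frames needed to register one blink
BLINK_PENALTY = 5         # hypothetical name for the script's `TOTAL += 5`


def eye_aspect_ratio(eye):
    """EAR for six (x, y) eye landmarks, ordered as in the 68-point model."""
    a = math.dist(eye[1], eye[5])  # first vertical distance
    b = math.dist(eye[2], eye[4])  # second vertical distance
    c = math.dist(eye[0], eye[3])  # horizontal distance
    return (a + b) / (2.0 * c)


def focused_fraction(observations):
    """Share of frames counted as focused.

    `observations` yields (face_count, ear) per frame; ear is ignored
    when face_count is 0. Assumes at most one face per frame.
    """
    frame_count = 0
    unfocused = 0
    closed_run = 0
    for face_count, ear in observations:
        frame_count += 1
        if face_count == 0:
            unfocused += 1  # nobody in view
            continue
        if ear < EYE_AR_THRESH:
            closed_run += 1
        else:
            if closed_run >= EYE_AR_CONSEC_FRAMES:
                unfocused += BLINK_PENALTY  # eyes reopened after a long closure
            closed_run = 0
    if frame_count == 0:
        return 0.0
    # Clamping at zero is an assumption added here so the share is never negative.
    return max(0.0, (frame_count - unfocused) / frame_count)


# Made-up landmark coordinates for an open and a nearly closed eye.
open_eye = [(0, 0), (2, -1), (4, -1), (6, 0), (4, 1), (2, 1)]
closed_eye = [(0, 0), (2, -0.2), (4, -0.2), (6, 0), (4, 0.2), (2, 0.2)]
ear_open = eye_aspect_ratio(open_eye)
ear_closed = eye_aspect_ratio(closed_eye)

# 10 open frames, 3 closed, 1 open (registers the blink), 2 without a face, 4 open.
frames = ([(1, ear_open)] * 10 + [(1, ear_closed)] * 3 + [(1, ear_open)]
          + [(0, None)] * 2 + [(1, ear_open)] * 4)

print(round(ear_open, 3))        # 0.333
print(focused_fraction(frames))  # 0.65
```

Multiplying the returned share by the elapsed time gives the focus time; for example, 10 s of video with the sequence above would count as 6.5 s focused. Seven of the 20 frames are charged as unfocused: five for the blink and two for the missing face.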
