Assignment source: https://edu.cnblogs.com/campus/gzcc/GZCC-16SE1/homework/3159

Crawl target: Game of Thrones

Crawl URL: https://movie.douban.com/subject/3016187/

To avoid having our IP banned while crawling, we need to leave an interval between requests:

    import time

    time.sleep(5)  # pause 5 seconds between requests
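The fixed 5-second pause above works as is. Some crawlers also add a little random jitter so that requests are not perfectly periodic. The following is only a minimal sketch of that idea; the helper names `next_delay` and `polite_sleep` and the jitter range are assumptions for illustration and do not appear in the original script.

```python
import random
import time


def next_delay(base=5.0, jitter=2.0, rng=random):
    """Return a delay in seconds drawn uniformly from [base, base + jitter]."""
    return base + rng.uniform(0.0, jitter)


def polite_sleep(base=5.0, jitter=2.0, sleep=time.sleep):
    """Sleep for a jittered interval and return the delay that was used.

    The `sleep` parameter is injectable (hypothetical design choice) so the
    helper can be exercised without actually waiting.
    """
    delay = next_delay(base, jitter)
    sleep(delay)
    return delay
```

Calling `polite_sleep()` once per page inside the crawl loop would take the place of the plain `time.sleep(5)`.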

In addition, we need a reasonable User-Agent so that the requests look like they come from a real browser:

    headers = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/67.0.3396.99 Safari/537.36'
    }
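To see how such a header is attached to a request with the standard library, here is a small sketch. `build_request` and `USER_AGENT` are hypothetical names chosen for illustration. The code only builds the request object and inspects it, without contacting the site.

```python
import urllib.request

# Browser-like User-Agent string, copied from the headers shown above.
USER_AGENT = (
    'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 '
    '(KHTML, like Gecko) Chrome/67.0.3396.99 Safari/537.36'
)


def build_request(url, user_agent=USER_AGENT):
    """Build a Request that carries a browser-like User-Agent header."""
    return urllib.request.Request(url, headers={'User-Agent': user_agent})


req = build_request('https://movie.douban.com/subject/3016187/')
# urllib stores header names via str.capitalize(), hence 'User-agent' here.
print(req.get_header('User-agent'))
```

Passing `req` to `urllib.request.urlopen` would then send the request with that header.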

    # -*- coding: utf-8 -*-
    import time
    import urllib.request

    from bs4 import BeautifulSoup


    def getHtml(url):
        """Fetch the page at url and return it as text."""
        headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/62.0.3202.94 Safari/537.36'}
        req = urllib.request.Request(url, headers=headers)
        # Close the response as soon as the body has been read.
        with urllib.request.urlopen(req) as resp:
            content = resp.read().decode('utf-8')
        return content


    def getComment(url):
        """Parse the HTML page and return the short comments on it."""
        html = getHtml(url)
        soupComment = BeautifulSoup(html, 'html.parser')
        # Each short comment sits in a <span class="short"> element.
        comments = soupComment.find_all('span', 'short')
        onePageComments = []
        for comment in comments:
            # print(comment.get_text() + '\n')
            onePageComments.append(comment.get_text() + '\n')
        return onePageComments


    if __name__ == '__main__':
        # The with-block makes sure the output file is flushed and closed.
        with open('权利的游戏page10.txt', 'w', encoding='utf-8') as f:
            for page in range(10):  # Douban requires verification to crawl multiple pages of comments.
                url = 'https://movie.douban.com/subject/3016187/comments?start=' + str(20*page) + '&limit=20&sort=new_score&status=P'
                print('Comments on page %s:' % (page+1))
                print(url + '\n')
                for i in getComment(url):
                    f.write(i)
                    print(i)
                print('\n')
                time.sleep(5)  # crawl interval between pages, as described above

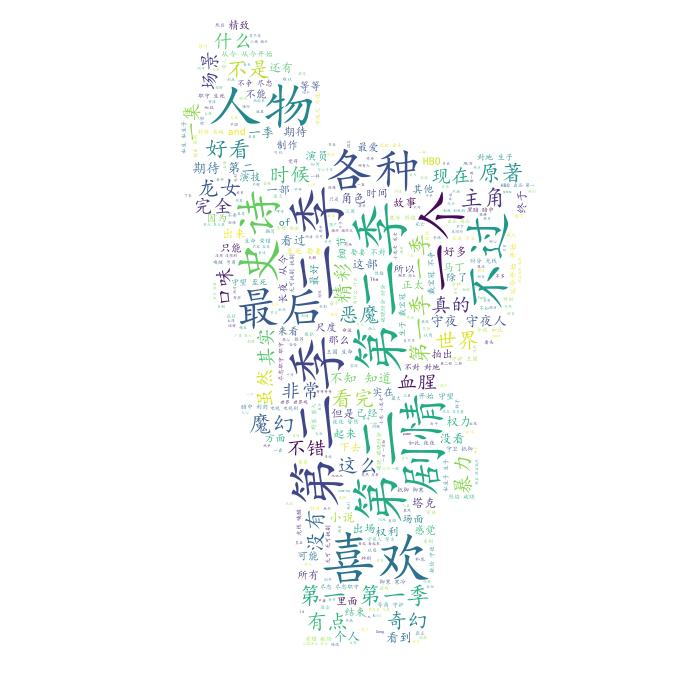
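The crawler builds each page URL by string concatenation, with `start` advancing by 20 per page. The same URLs can be assembled with `urllib.parse.urlencode`, as in the sketch below. The helper name `comment_page_url` and the constant `BASE` are assumptions for illustration; the query parameters are the ones used in the script.

```python
from urllib.parse import urlencode

# Comment listing endpoint taken from the crawler script.
BASE = 'https://movie.douban.com/subject/3016187/comments'


def comment_page_url(page, per_page=20):
    """Return the URL of the zero-based comment page `page`."""
    query = urlencode({
        'start': per_page * page,   # offset of the first comment on the page
        'limit': per_page,          # comments per page
        'sort': 'new_score',
        'status': 'P',
    })
    return BASE + '?' + query


urls = [comment_page_url(p) for p in range(10)]
print(urls[0])
print(urls[-1])
```

The first URL has `start=0` and the tenth has `start=180`, matching `20*page` for `page` in `range(10)`.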

Generating the word cloud

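    # -*- coding: utf-8 -*-
    import jieba
    import numpy as np
    from PIL import Image
    from wordcloud import WordCloud

    # Read the crawled comments as UTF-8 text.
    with open("权利的游戏page10.txt", encoding="utf-8") as f:
        text = f.read()

    # Segment the text with jieba (full mode).
    wordlist = jieba.cut(text, cut_all=True)
    wl = " ".join(wordlist)
    # print(wl)  # print the segmented text
    # Write the segmented text to a file:
    # fenciTxt = open("fenciHou.txt", "w+")
    # fenciTxt.writelines(wl)
    # fenciTxt.close()

    # Configure the word cloud.
    wc = WordCloud(
        background_color="white",  # background color
        # scipy.misc.imread has been removed from SciPy, so the mask is loaded with Pillow.
        mask=np.array(Image.open('quan.jpg')),  # mask image that shapes the cloud
        max_words=2000,  # maximum number of words to display
        stopwords={"的", "这种", "这样", "还是", "就是", "这个"},  # stop words
        # KaiTi Regular. A Chinese font is needed because the default font
        # (DroidSansMono.ttf) does not support Chinese characters.
        font_path=r"C:\Windows\Fonts\simkai.ttf",
        max_font_size=60,  # maximum font size
        random_state=30,  # seed for the random generator, which makes the result reproducible
    )
    myword = wc.generate(wl)  # generate the word cloud
    wc.to_file('result.jpg')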
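A word cloud draws each word larger the more often it occurs once stop words are dropped. As a rough illustration of that idea only, and not of how the `wordcloud` library is implemented internally, the sketch below counts tokens in an already segmented, space-joined string such as `wl`. The function name `word_frequencies` and the sample text are hypothetical.

```python
from collections import Counter

# Stop words copied from the word cloud configuration above.
STOPWORDS = {"的", "这种", "这样", "还是", "就是", "这个"}


def word_frequencies(segmented_text, stopwords=STOPWORDS):
    """Count space-separated tokens, skipping stop words."""
    tokens = segmented_text.split()
    return Counter(t for t in tokens if t not in stopwords)


# Hypothetical sample standing in for the jieba output.
sample = "龙 的 故事 还是 龙 的 传说"
freq = word_frequencies(sample)
print(freq.most_common(1))
```

Inspecting `freq.most_common(20)` on the real segmented text is a quick way to spot further stop words worth adding before generating the image.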