Setting up Kubernetes 1.11 on Alibaba Cloud ECS

Environment

Notes

1. The cluster is installed with kubeadm.

VM information

| hostname | memory | cpu | disk | role |
|---|---|---|---|---|
| node1.com | 4G | 2C | vda 20G, vdb 20G | master |
| node2.com | 4G | 2C | vda 20G, vdb 20G | node |

vda is the system disk. vdb is used as Docker storage for containers and images.

Configure hostnames

# On node1
hostnamectl set-hostname node1.com

# On node2
hostnamectl set-hostname node2.com

Configure the Alibaba Cloud Kubernetes yum repo

# Run the following on both nodes

cat <<EOF > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF
yum update -y
yum upgrade
yum clean all
yum makecache

Install Docker

# Run on both nodes

yum install -y docker

Configure Docker storage to use devicemapper

# Run on both nodes

# Create a PV on vdb
pvcreate /dev/vdb
# Create the docker-vg volume group from that PV
vgcreate docker-vg /dev/vdb
# Configure Docker to use docker-vg as its backend storage
echo VG=docker-vg > /etc/sysconfig/docker-storage-setup
docker-storage-setup
# Extend the docker-pool LV in docker-vg to 100% of the VG
lvextend -l 100%VG /dev/docker-vg/docker-pool
# Start Docker and enable it at boot
systemctl start docker
systemctl enable docker

Install other required packages

# Run the following on both nodes

yum install -y bridge-utils

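Disable the firewall, swap and SELinux

# Run the following on both nodes

systemctl stop firewalld && systemctl disable firewalld
# swapoff and setenforce only last until the next reboot
swapoff -a
setenforce 0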
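`swapoff -a` does not survive a reboot, and kubelet refuses to start while swap is on unless it is explicitly told otherwise. Below is a minimal sketch for keeping swap off permanently. It assumes swap is configured through /etc/fstab. The helper name `disable_swap_in_fstab` is hypothetical. It takes the file path as an argument, so it can be tried on a copy first.

```shell
#!/usr/bin/env bash
# Hypothetical helper: comment out active swap entries in an fstab-style file
# so that swap stays disabled after a reboot. The file path is an argument so
# the function can be tried on a copy before touching /etc/fstab.
disable_swap_in_fstab() {
  local fstab="$1"
  # Keep a backup of the original file next to it.
  cp "$fstab" "$fstab.bak" || return 1
  # Prefix "#" to every non-comment line whose third field (fs type) is swap;
  # all other lines are copied unchanged.
  awk '!/^[ \t]*#/ && $3 == "swap" { print "#" $0; next } { print }' \
    "$fstab.bak" > "$fstab"
}

# Usage on a node, as root:
#   disable_swap_in_fstab /etc/fstab
```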

Configure hostname resolution on each node

# Run on both nodes

cat <<EOF >> /etc/hosts
172.31.2.130 node1.com
172.31.2.131 node2.com
EOF
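Running the `cat <<EOF >> /etc/hosts` step twice appends duplicate lines. Below is a minimal sketch of an idempotent alternative, which only appends an entry when the hostname is not mapped yet. The function name `add_host_entry` is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append "IP HOSTNAME" to a hosts file only if the
# hostname is not already mapped, so the step can be re-run safely.
add_host_entry() {
  local hosts_file="$1" ip="$2" name="$3"
  # Exit status 0 from awk means the hostname already appears as a field
  # (column 2 onwards) on some non-comment line.
  if awk -v n="$name" '
      !/^[ \t]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
      END { exit !found }' "$hosts_file"; then
    return 0
  fi
  printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
}

# Usage on each node, as root:
#   add_host_entry /etc/hosts 172.31.2.130 node1.com
#   add_host_entry /etc/hosts 172.31.2.131 node2.com
```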

Install kubelet, kubeadm and kubectl

# Run the following on both nodes (the node also needs kubelet and kubeadm to join the cluster)

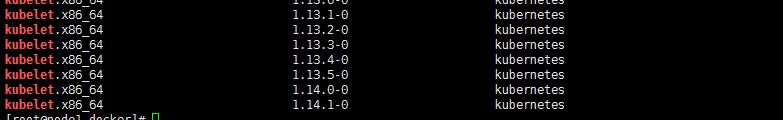

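# List the kubelet versions available in the yum repo
yum list --showduplicates | grep kubelet

# Install kubelet-1.11.1, kubeadm-1.11.1 and kubectl-1.11.1

yum install -y kubelet-1.11.1
yum install -y kubectl-1.11.1

yum install -y kubeadm-1.11.1

Installing kubeadm automatically installs kubectl and kubelet as dependencies. The versions pulled in that way are the latest ones, not 1.11.1. There are two ways to deal with this:

(1) Use yum remove on the components that are not 1.11.1, then install them again at 1.11.1.

(2) Install kubectl-1.11.1 and kubelet-1.11.1 first, in the order above, and only then install kubeadm-1.11.1.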

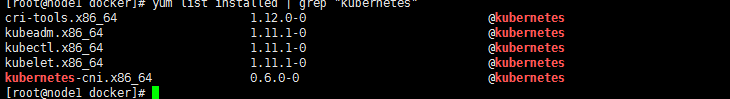

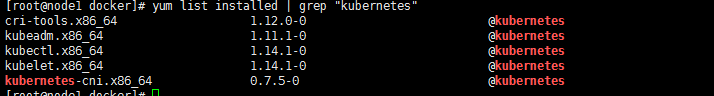

# Check that the packages above are the 1.11.1 versions

yum list installed | grep "kubernetes"
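Checking the `yum list installed` output by eye is easy to get wrong. Below is a minimal sketch that flags any kubelet, kubeadm or kubectl package whose version is not the wanted one. It assumes the usual three-column yum layout (`name.arch  version-release  repo`). The function name `check_k8s_versions` is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `yum list installed` output on stdin and print
# every kubelet/kubeadm/kubectl package whose version differs from the wanted
# one (default 1.11.1). Returns 1 if any mismatch is found.
check_k8s_versions() {
  local want="${1:-1.11.1}"
  awk -v want="$want" '
    {
      # $1 is "name.arch": strip the architecture suffix.
      name = $1; sub(/\.[^.]*$/, "", name)
      if (name == "kubelet" || name == "kubeadm" || name == "kubectl") {
        # $2 is "version-release", e.g. "1.11.1-0": drop the release part.
        ver = $2; sub(/-.*$/, "", ver)
        if (ver != want) { print name " " ver; bad = 1 }
      }
    }
    END { exit bad }'
}

# Usage:
#   yum list installed | grep "kubernetes" | check_k8s_versions 1.11.1
```

Any package it prints is a candidate for the yum remove and reinstall described above.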

A correct installation lists kubelet, kubeadm and kubectl at version 1.11.1.

# Enable kubelet at boot

systemctl enable kubelet

Pull images

Run the following on the master:

docker pull mirrorgooglecontainers/kube-apiserver-amd64:v1.11.1
docker pull mirrorgooglecontainers/pause-amd64:3.1
docker pull mirrorgooglecontainers/kube-controller-manager-amd64:v1.11.1
docker pull mirrorgooglecontainers/kube-scheduler-amd64:v1.11.1
docker pull mirrorgooglecontainers/kube-proxy-amd64:v1.11.1
docker pull mirrorgooglecontainers/etcd-amd64:3.2.18
docker pull coredns/coredns:1.1.3
docker tag mirrorgooglecontainers/kube-apiserver-amd64:v1.11.1 k8s.gcr.io/kube-apiserver-amd64:v1.11.1
docker tag mirrorgooglecontainers/pause-amd64:3.1 k8s.gcr.io/pause:3.1
docker tag mirrorgooglecontainers/kube-controller-manager-amd64:v1.11.1 k8s.gcr.io/kube-controller-manager-amd64:v1.11.1
docker tag mirrorgooglecontainers/kube-scheduler-amd64:v1.11.1 k8s.gcr.io/kube-scheduler-amd64:v1.11.1
docker tag mirrorgooglecontainers/kube-proxy-amd64:v1.11.1 k8s.gcr.io/kube-proxy-amd64:v1.11.1
docker tag mirrorgooglecontainers/etcd-amd64:3.2.18 k8s.gcr.io/etcd-amd64:3.2.18
docker tag coredns/coredns:1.1.3 k8s.gcr.io/coredns:1.1.3

Run the following on the node:

docker pull coredns/coredns:1.1.3
docker pull mirrorgooglecontainers/pause-amd64:3.1
docker pull mirrorgooglecontainers/kube-proxy-amd64:v1.11.1
docker tag coredns/coredns:1.1.3 k8s.gcr.io/coredns:1.1.3
docker tag mirrorgooglecontainers/pause-amd64:3.1 k8s.gcr.io/pause:3.1
docker tag mirrorgooglecontainers/kube-proxy-amd64:v1.11.1 k8s.gcr.io/kube-proxy-amd64:v1.11.1
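The pull and tag lists above follow one pattern: pull from the mirror, then retag with the k8s.gcr.io name. That pattern can be driven from a table of pairs. Below is a minimal sketch with a dry-run switch. The variable names `MASTER_IMAGES` and `NODE_IMAGES`, the function `pull_and_tag` and the `DRY_RUN` flag are all hypothetical. The image names are the ones listed above.

```shell
#!/usr/bin/env bash
# Each line: <mirror image> <k8s.gcr.io name that kubeadm v1.11.1 looks for>
MASTER_IMAGES='mirrorgooglecontainers/kube-apiserver-amd64:v1.11.1 k8s.gcr.io/kube-apiserver-amd64:v1.11.1
mirrorgooglecontainers/kube-controller-manager-amd64:v1.11.1 k8s.gcr.io/kube-controller-manager-amd64:v1.11.1
mirrorgooglecontainers/kube-scheduler-amd64:v1.11.1 k8s.gcr.io/kube-scheduler-amd64:v1.11.1
mirrorgooglecontainers/kube-proxy-amd64:v1.11.1 k8s.gcr.io/kube-proxy-amd64:v1.11.1
mirrorgooglecontainers/etcd-amd64:3.2.18 k8s.gcr.io/etcd-amd64:3.2.18
mirrorgooglecontainers/pause-amd64:3.1 k8s.gcr.io/pause:3.1
coredns/coredns:1.1.3 k8s.gcr.io/coredns:1.1.3'

NODE_IMAGES='coredns/coredns:1.1.3 k8s.gcr.io/coredns:1.1.3
mirrorgooglecontainers/pause-amd64:3.1 k8s.gcr.io/pause:3.1
mirrorgooglecontainers/kube-proxy-amd64:v1.11.1 k8s.gcr.io/kube-proxy-amd64:v1.11.1'

# Hypothetical helper: read "<source> <target>" pairs on stdin, pull each
# source and retag it. With DRY_RUN=1 the docker commands are only printed.
pull_and_tag() {
  local src dst
  while read -r src dst; do
    [ -z "$src" ] && continue
    if [ "${DRY_RUN:-0}" = 1 ]; then
      echo "docker pull $src"
      echo "docker tag $src $dst"
    else
      docker pull "$src" && docker tag "$src" "$dst" || return 1
    fi
  done
}

# Usage on the master: printf '%s\n' "$MASTER_IMAGES" | pull_and_tag
# Usage on the node:   printf '%s\n' "$NODE_IMAGES" | pull_and_tag
# Preview only:        printf '%s\n' "$MASTER_IMAGES" | DRY_RUN=1 pull_and_tag
```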

Initialize the cluster with kubeadm (on the master)

# The --pod-network-cidr range must match the "Network" defined in the flannel yaml below (10.244.0.0/16)

kubeadm init --kubernetes-version=v1.11.1 --pod-network-cidr=10.244.0.0/16

mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config

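Join worker nodes to the cluster

Run the hash and token commands on the master, where /etc/kubernetes/pki/ca.crt lives. Run the join command on each node that is joining the cluster.

# On the master: get the CA certificate hash needed to join the cluster
openssl x509 -pubkey -in /etc/kubernetes/pki/ca.crt | openssl rsa -pubin -outform der 2>/dev/null | openssl dgst -sha256 -hex | sed 's/^.* //'
# On the master: get the token needed to join the cluster
kubeadm token list

# If the command above lists no token, the token has expired (kubeadm tokens are valid for 24 hours by default). Create a new one:

kubeadm token create
# On the node: join the cluster with kubeadm
kubeadm join node1.com:6443 --token <token> --discovery-token-ca-cert-hash sha256:<hash>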
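A typo in the token or hash only shows up as a failed join on the node. Below is a minimal sketch that checks both values before assembling the command. It assumes the standard kubeadm bootstrap token shape (6 + 16 lowercase alphanumerics separated by a dot) and a 64-hex-character SHA-256 hash. The function name `build_join_cmd` is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: validate the token and CA hash gathered on the master,
# then print the kubeadm join command to run on the node.
build_join_cmd() {
  local endpoint="$1" token="$2" hash="$3"
  # Bootstrap tokens look like "abcdef.0123456789abcdef".
  if ! printf '%s' "$token" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'; then
    echo "invalid token: $token" >&2
    return 1
  fi
  # The CA public-key hash is a SHA-256 digest: 64 hex characters.
  if ! printf '%s' "$hash" | grep -Eq '^[0-9a-f]{64}$'; then
    echo "invalid hash: $hash" >&2
    return 1
  fi
  echo "kubeadm join $endpoint --token $token --discovery-token-ca-cert-hash sha256:$hash"
}

# Usage on the master, with the openssl pipeline above stored in $hash:
#   build_join_cmd node1.com:6443 "$(kubeadm token create)" "$hash"
```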

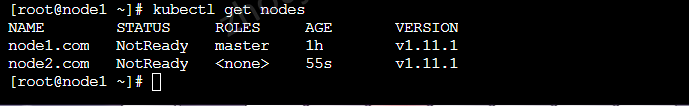

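At this point, kubectl get nodes shows the nodes as NotReady because no network plugin has been configured yet.

Configure the Flannel network plugin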

# Create kube-flannel.yaml with the following content
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: flannel
rules:
  - apiGroups:
      - ""
    resources:
      - pods
    verbs:
      - get
  - apiGroups:
      - ""
    resources:
      - nodes
    verbs:
      - list
      - watch
  - apiGroups:
      - ""
    resources:
      - nodes/status
    verbs:
      - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-system
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-system
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-system
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
---
apiVersion: extensions/v1beta1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      hostNetwork: true
      nodeSelector:
        beta.kubernetes.io/arch: amd64
      tolerations:
      - key: node-role.kubernetes.io/master
        operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: registry.cn-shanghai.aliyuncs.com/gcr-k8s/flannel:v0.10.0-amd64
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: registry.cn-shanghai.aliyuncs.com/gcr-k8s/flannel:v0.10.0-amd64
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        - --iface=eth0
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: true
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg
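The "Network" in net-conf.json has to match the --pod-network-cidr passed to kubeadm init. Below is a minimal sketch of a pre-flight check to run before applying the manifest. It pulls the value out of the file with sed instead of parsing the YAML properly, so it assumes the `"Network": "..."` line keeps the shape shown above. The function name `check_flannel_cidr` is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: compare the flannel "Network" value in the manifest
# with the pod CIDR given to kubeadm init. Text matching only, not a YAML parser.
check_flannel_cidr() {
  local manifest="$1" pod_cidr="$2" net
  # Take the first quoted value after "Network":
  net=$(sed -n 's/.*"Network"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p' "$manifest" | head -n 1)
  if [ "$net" != "$pod_cidr" ]; then
    echo "mismatch: flannel Network=$net, pod-network-cidr=$pod_cidr" >&2
    return 1
  fi
}

# Usage on the master:
#   check_flannel_cidr kube-flannel.yaml 10.244.0.0/16
```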

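# Create the kube-flannel DaemonSet with kubectl
kubectl apply -f kube-flannel.yaml

# After deployment, check the status of the flannel pods and the nodes
kubectl get pods -n kube-system -o wide
kubectl get nodes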

Deploy a test application

# Deploy the nginx deployment
kubectl create -f https://kubernetes.io/docs/user-guide/nginx-deployment.yaml
# Expose the deployment as a NodePort service
kubectl expose deployment nginx-deployment --type=NodePort
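To test the service from outside, you need the node port that Kubernetes assigned. `kubectl get svc` shows it in the PORT(S) column as, for example, `80:31234/TCP`. Below is a minimal sketch that extracts that port and checks it falls in the default NodePort range 30000-32767. It assumes a single-port service and the default range. The function names are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: "80:31234/TCP" -> "31234" (first port only).
nodeport_from_ports() {
  local v="${1#*:}"        # drop everything up to the first ":"
  printf '%s\n' "${v%%/*}" # drop "/TCP" and anything after it
}

# Hypothetical helper: succeed if the argument is a number in 30000-32767,
# the default NodePort range.
in_nodeport_range() {
  local p="$1"
  case "$p" in ''|*[!0-9]*) return 1 ;; esac
  [ "$p" -ge 30000 ] && [ "$p" -le 32767 ]
}

# Usage on the master (PORT(S) is the 5th column of `kubectl get svc`):
#   port=$(nodeport_from_ports "$(kubectl get svc nginx-deployment --no-headers | awk '{print $5}')")
#   in_nodeport_range "$port" && echo "open $port in the security group"
```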

Allow the master to take part in scheduling

# Remove the master taint from the master node

kubectl taint node node1.com node-role.kubernetes.io/master-

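Problems encountered during installation

1. Installation failed because the kubectl, kubelet and kubeadm versions did not match

# Installing all three in one command, yum install -y kubectl-1.11.1 kubelet-1.11.1 kubeadm-1.11.1, fails with a dependency error
# Install them one at a time instead
yum install -y kubelet-1.11.1
yum install -y kubectl-1.11.1
yum install -y kubeadm-1.11.1

# After installing them one at a time, the following command showed versions other than 1.11.1
yum list installed | grep "kubernetes"

# Remove the packages that are not 1.11.1, then install them again

# The likely cause is that yum install kubeadm-1.11.1 also pulls in newer kubelet and kubectl versions as dependencies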

2. The flannel pod fails to start with CrashLoopBackOff. kubectl logs -n kube-system {pod_name} shows:

I0815 00:25:37.646559 1 main.go:201] Could not find valid interface matching ens32: error looking up interface ens32: route ip+net: no such network interface
E0815 00:25:37.646628 1 main.go:225] Failed to find interface to use that matches the interfaces and/or regexes provided

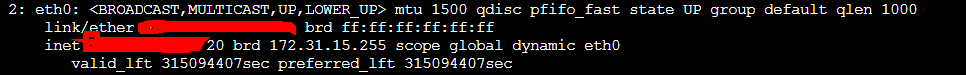

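The log shows flannel looking for an interface named ens32, which does not exist on these VMs. Check the VM's actual network interface name (eth0 here) and make sure the --iface argument in the flannel yaml matches it (--iface=eth0).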
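One way to find a sensible value for `--iface` on each node is to read which interface carries the default route. Below is a minimal sketch that parses `ip route` output. It assumes the default route is the one flannel should use, which holds for single-NIC ECS instances like these but not necessarily for multi-NIC hosts. The function name `default_iface` is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `ip route` output on stdin and print the device
# named after "dev" on the first "default" route line.
default_iface() {
  awk '$1 == "default" {
         for (i = 1; i < NF; i++) if ($i == "dev") { print $(i + 1); exit }
       }'
}

# Usage on a node:
#   ip route | default_iface
```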

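3. After deploying nginx, it cannot be reached at <public IP>:<nodePort>

In the Alibaba Cloud console, add a security group rule for the instance that opens ports 30000-32767 (the default NodePort range).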

4. If docker-storage runs into problems, you can reset it as follows

# Reset docker-storage

rm -rf /etc/sysconfig/docker-storage
rm -rf /var/lib/docker
# This fails with the following errors:
# rm: cannot remove ‘/var/lib/docker/devicemapper’: Device or resource busy
# rm: cannot remove ‘/var/lib/docker/containers’: Device or resource busy
# Unmount them first:
umount /var/lib/docker/devicemapper
umount /var/lib/docker/containers
# After that, /var/lib/docker can be deleted
rm -rf /var/lib/docker
docker-storage-setup --reset

# Configure Docker to use docker-vg as its backend storage

echo VG=docker-vg > /etc/sysconfig/docker-storage-setup

docker-storage-setup
