Deploying a highly available Kubernetes 1.13.1 cluster with kubeadm on Ubuntu

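kubeadm's core features have reached GA. Many people online report that 1.13 had bugs that were fixed in 1.13.1. I have not looked into the details, and interested readers can check for themselves, but since the issue has been raised there is no reason to step on that mine, so this cluster is deployed with the current 1.13.1. kubeadm is very friendly to Kubernetes beginners: once you are practiced, a single-node deployment takes roughly 20 minutes from start to finish. Before 1.13 the biggest problem with kubeadm was ****, but starting with 1.13 the imageRepository parameter lets you pull images from a mirror inside China, so image downloads are no longer a worry. A small gripe: I recommend treating the official documentation as the primary reference while deploying. Most articles online are near copies of one another, and because environments differ, many of them mix in the author's own interpretation. I am no expert and do not have that depth of understanding, so I simply built this step by step following the official site and my own experience. Some points may be incomplete or misunderstood, and corrections are welcome. Enough preamble; on to the main topic.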

Environment: OS: Ubuntu 16.04.5; kernel: 4.4.0-141-generic; etcd: etcd-v3.2.24; docker: 18.06.1-ce; kubelet: 1.13.1

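The etcd cluster was covered in the previous chapter, so it is not described again here. etcd runs on three machines and is highly available by itself; the three masters are made highly available with keepalived. etcd and the masters share the same machines here; if you have enough hardware, deploy the masters separately. The official recommendation is at least 2 GB of RAM per machine.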

172.16.10.1 etcd master keepalived

172.16.10.2 etcd master keepalived

172.16.10.3 etcd master keepalived

172.16.10.4 node

172.16.10.5 node

172.16.10.6 node

172.16.10.210 vip

1. Pre-installation preparation

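Disable SELinux, the firewall, and swap (kubeadm init will not proceed otherwise). These preparations are basic, so they are not written out in detail here.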
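Since the swap step is the one that blocks kubeadm init, here is a minimal sketch of how it could be made persistent. The helper name disable_swap_in_fstab is hypothetical (it is not from the original post), and it assumes a conventional fstab layout in which the third field is the filesystem type.

```shell
# Hypothetical helper: comment out active swap entries in an fstab-style file.
# Defaults to /etc/fstab; a backup copy is kept with a .bak suffix.
disable_swap_in_fstab() {
  fstab="${1:-/etc/fstab}"
  # Prefix '#' to uncommented lines whose third field is "swap".
  sed -i.bak -E \
    's/^([^#[:space:]][^[:space:]]*[[:space:]]+[^[:space:]]+[[:space:]]+swap[[:space:]].*)$/#\1/' \
    "$fstab"
}

# Typical usage as root (left commented so nothing runs by accident):
#   swapoff -a               # turn swap off for the running system
#   disable_swap_in_fstab    # keep it off after a reboot
```

swapoff -a only affects the running system, which is why the fstab entry is commented out as well.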

$ vi /etc/sysctl.d/k8s-sysctl.conf

net.bridge.bridge-nf-call-iptables=1

net.bridge.bridge-nf-call-ip6tables=1

$ sysctl -p /etc/sysctl.d/k8s-sysctl.conf

2. Install Docker

# Install the packages needed to use the Docker repository on Ubuntu

apt-get install -y apt-transport-https ca-certificates curl software-properties-common

# Add Docker's official GPG key

curl -fsSL https://download.docker.com/linux/ubuntu/gpg | sudo apt-key add -

Optional, depending on your situation

If network problems prevent adding the key online, download the gpg file and import it manually

sudo apt-key add apt-key.gpg

# Set up the stable repository

add-apt-repository "deb [arch=amd64] https://download.docker.com/linux/ubuntu $(lsb_release -cs) stable"

# Update the apt package index

apt-get update

# Install Docker 18.06, the latest version at the time of writing

apt install docker-ce=18.06.1~ce~3-0~ubuntu

3. Install kubeadm, kubelet, and kubectl (their versions should match the Kubernetes version)

apt-get update && apt-get install -y apt-transport-https curl

curl -s https://packages.cloud.google.com/apt/doc/apt-key.gpg | apt-key add -

cat <<EOF >/etc/apt/sources.list.d/kubernetes.list

deb https://apt.kubernetes.io/ kubernetes-xenial main

EOF

apt-get update

apt-get install -y kubelet kubeadm kubectl

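apt-mark hold kubelet kubeadm kubectl

4. Configure the cgroup driver used by kubelet on the master nodes

This is a small pitfall. In earlier versions I rarely had to configure anything because the Docker and kubelet drivers already matched; skipping the check this time caused errors later, and the logs gave no hint that the driver was the cause. Run docker info to see which driver Docker uses, then edit /etc/default/kubelet.

Mine is cgroupfs; the point is to keep the two drivers identical. Many articles do not mention that 1.13 differs slightly from earlier versions here: previously it was enough to edit /etc/systemd/system/kubelet.service.d/10-kubeadm.conf. On my machines the kubelet's driver setting defaulted to systemd.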

cat /etc/default/kubelet

KUBELET_EXTRA_ARGS=--cgroup-driver=cgroupfs
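Because a driver mismatch produced no useful log message, it may help to compare the two settings explicitly. The sketch below is only an illustration: the function names are hypothetical, and it assumes docker info prints a "Cgroup Driver: ..." line and that the kubelet flag lives in /etc/default/kubelet as shown above.

```shell
# Read `docker info` output on stdin and print the cgroup driver name.
docker_cgroup_driver() {
  awk -F': ' '/Cgroup Driver/ { print $2; exit }'
}

# Print the value of --cgroup-driver found in a kubelet defaults file.
kubelet_cgroup_driver() {
  sed -n 's/.*--cgroup-driver=\([a-z]*\).*/\1/p' "${1:-/etc/default/kubelet}"
}

# Typical usage on a master node (commented out; requires docker):
#   d=$(docker info 2>/dev/null | docker_cgroup_driver)
#   k=$(kubelet_cgroup_driver)
#   [ "$d" = "$k" ] && echo "drivers match: $d" || echo "mismatch: docker=$d kubelet=$k"
```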

kubelet must be restarted afterwards

systemctl daemon-reload

systemctl restart kubelet

5. If you want to control all nodes from a single machine, you need SSH access. This is not covered here; look up the configuration yourself.

6. Set up keepalived on the three master nodes

# Install dependencies

apt-get install libssl-dev openssl libpopt-dev -y

# Install the keepalived package

apt-get install keepalived -y

# Edit the configuration

master1

vrrp_instance VI_1 {

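state MASTER # MASTER on the primary node, BACKUP on the others

interface ens160 # use this machine's network interface name

virtual_router_id 51

priority 100 # priority; the highest value holds the VIP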

advert_int 1

authentication {

auth_type PASS

auth_pass 1111 # must be identical on all three nodes

}

virtual_ipaddress {

172.16.10.210 #vip

}

}

master2

vrrp_instance VI_1 {

state BACKUP

interface ens160

virtual_router_id 51

priority 90

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

172.16.10.210

}

}

master3

vrrp_instance VI_1 {

state BACKUP

interface ens160

virtual_router_id 51

priority 80

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

172.16.10.210

}

}

Start keepalived on all three machines

systemctl start keepalived

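Check that the VIP is bound to the configured interface. You can stop keepalived on one machine and confirm that the VIP fails over to another machine.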
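The VIP check can be scripted roughly as follows. This is a sketch only: has_vip is a hypothetical helper, and it assumes the usual "inet ADDRESS/PREFIX ..." line format printed by ip addr.

```shell
# Succeeds if the given IPv4 address appears in `ip -4 addr show` output on stdin.
has_vip() {
  vip="$1"
  # Fixed-string match including the trailing '/', so 172.16.10.21
  # does not match a line that carries 172.16.10.210.
  grep -qF "inet ${vip}/"
}

# Typical usage on each master (commented out; interface name as in the configs above):
#   ip -4 addr show dev ens160 | has_vip 172.16.10.210 && echo "VIP is on this node"
```

Running it on all three masters before and after stopping keepalived on the current holder shows where the address moved.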

Install haproxy (on all three machines)

apt-get -y install haproxy

cat << EOF > /etc/haproxy/haproxy.cfg

global

log 127.0.0.1 local2

chroot /var/lib/haproxy

pidfile /var/run/haproxy.pid

maxconn 4000

user haproxy

group haproxy

daemon

defaults

mode tcp

log global

retries 3

timeout connect 10s

timeout client 1m

timeout server 1m

frontend kubernetes

bind *:6443

mode tcp

default_backend kubernetes-master

backend kubernetes-master

balance roundrobin

server master1 172.16.10.1:6443 check maxconn 2000

server master2 172.16.10.2:6443 check maxconn 2000

server master3 172.16.10.3:6443 check maxconn 2000

EOF

systemctl enable haproxy

systemctl start haproxy

7. Create an init configuration file, kubeadm-config.yml, using the flannel network (adjust to your own setup)

apiVersion: kubeadm.k8s.io/v1beta1
kind: ClusterConfiguration
kubernetesVersion: v1.13.1 # Kubernetes version
imageRepository: registry.cn-hangzhou.aliyuncs.com/google_containers
controlPlaneEndpoint: "172.16.10.210:6443"
apiServer:
  certSANs:
  - 172.16.10.1
  - 172.16.10.2
  - 172.16.10.3
  - 172.16.10.210
  - 127.0.0.1
etcd: # addresses of the external etcd cluster
  external:
    endpoints:
    - http://172.16.10.1:2379
    - http://172.16.10.2:2379
    - http://172.16.10.3:2379
networking:
  podSubnet: 10.244.0.0/16 # pod network CIDR for flannel; adjust for other network plugins

8. Run kubeadm init

kubeadm init --config=kubeadm-config.yml

Initialization takes about 10 minutes, most of it spent downloading images, which can be pulled in advance

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.13.1

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.13.1

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.13.1

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.13.1

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.1

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.2.24

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:1.2.6

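When you see the output shown in the screenshot, initialization has succeeded

Save the token; you will need it later to add nodes

kubeadm join --token <token> <master-ip>:<master-port> --discovery-token-ca-cert-hash sha256:<hash>

kubeadm token list (shows the generated tokens)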
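If the init output has been lost, the sha256 value can be recomputed from the cluster CA certificate. The sketch below wraps the openssl pipeline given in the kubeadm documentation in a hypothetical function named ca_cert_hash; it assumes openssl is installed and that the CA uses an RSA key, which is what kubeadm generates in this version.

```shell
# Print the hex SHA-256 of the CA certificate's public key (DER-encoded SPKI),
# which is the value expected after "sha256:" in --discovery-token-ca-cert-hash.
ca_cert_hash() {
  cert="${1:-/etc/kubernetes/pki/ca.crt}"
  openssl x509 -pubkey -noout -in "$cert" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex \
    | sed 's/^.* //'
}

# Typical usage on the first master (commented out):
#   echo "sha256:$(ca_cert_hash)"
```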

Run the following commands

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Install the flannel network add-on

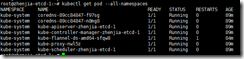

kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/bc79dd1505b0c8681ece4de4c0d86c5cd2643275/Documentation/kube-flannel.yml

Check the node status

kubectl get node

kubectl get pod --all-namespaces

The master node is now installed. If a pod is not in the Running state, check the system log or inspect it with kubectl describe pod -n kube-system <pod-name>.
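To spot problem pods quickly, the listing can be filtered down to those that are not Running. This is a small sketch with a hypothetical helper name; it assumes the default column layout of kubectl get pod --all-namespaces (NAMESPACE, NAME, READY, STATUS, ...).

```shell
# Read `kubectl get pod --all-namespaces` output on stdin and print
# "namespace/name status" for every pod whose STATUS is not Running.
not_running_pods() {
  awk 'NR > 1 && $4 != "Running" { print $1 "/" $2 " " $4 }'
}

# Typical usage (commented out; requires a working kubeconfig):
#   kubectl get pod --all-namespaces | not_running_pods
```

Pods that finished normally (STATUS Completed) are also listed by this filter, so read the output with that in mind.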

9. Copy the certificate files from the first master node to the other master nodes. The cluster's etcd uses plain HTTP without certificates.

USER=root # customizable

CONTROL_PLANE_IPS="172.16.10.2 172.16.10.3"

for host in ${CONTROL_PLANE_IPS}; do

scp /etc/kubernetes/pki/ca.crt "${USER}"@$host:

scp /etc/kubernetes/pki/ca.key "${USER}"@$host:

scp /etc/kubernetes/pki/sa.key "${USER}"@$host:

scp /etc/kubernetes/pki/sa.pub "${USER}"@$host:

scp /etc/kubernetes/pki/front-proxy-ca.crt "${USER}"@$host:

scp /etc/kubernetes/pki/front-proxy-ca.key "${USER}"@$host:

#scp /etc/kubernetes/pki/etcd/ca.crt "${USER}"@$host:etcd-ca.crt

#scp /etc/kubernetes/pki/etcd/ca.key "${USER}"@$host:etcd-ca.key

scp /etc/kubernetes/admin.conf "${USER}"@$host:

done

On each of the other master nodes

USER=root # customizable

HOME_DIR=/root # where scp placed the files; use /home/${USER} for a non-root user

mkdir -p /etc/kubernetes/pki/etcd

mv ${HOME_DIR}/ca.crt /etc/kubernetes/pki/

mv ${HOME_DIR}/ca.key /etc/kubernetes/pki/

mv ${HOME_DIR}/sa.pub /etc/kubernetes/pki/

mv ${HOME_DIR}/sa.key /etc/kubernetes/pki/

mv ${HOME_DIR}/front-proxy-ca.crt /etc/kubernetes/pki/

mv ${HOME_DIR}/front-proxy-ca.key /etc/kubernetes/pki/

# etcd uses plain HTTP here, so no etcd CA files were copied; uncomment if yours uses TLS

#mv ${HOME_DIR}/etcd-ca.crt /etc/kubernetes/pki/etcd/ca.crt

#mv ${HOME_DIR}/etcd-ca.key /etc/kubernetes/pki/etcd/ca.key

mv ${HOME_DIR}/admin.conf /etc/kubernetes/admin.conf

Then run kubeadm join. In 1.13 the --experimental-control-plane flag is what makes the node join as an additional master instead of a worker.

kubeadm join 172.16.10.210:6443 --token r2tshb.svfb47rz7fdg874p --discovery-token-ca-cert-hash sha256:b2721cafb1e3979b19040aeebc544277998e15b683c93ee7360b28d363473c9a --experimental-control-plane

Repeat the steps above on the remaining master node.

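To add the worker nodes to the cluster, run kubeadm join on each of them. If you hit an error and want to start over, run kubeadm reset before initializing again.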
