Pytorch实战学习(六):基础CNN

《PyTorch深度学习实践》完结合集_哔哩哔哩_bilibili

Basic Convolution Neural Network

1、全连接网络

线性层串行—全连接网络

每一个输入和输出都有权重--全连接层

全连接网络在处理图像时,直接将每一行像素拼接成向量,丧失了图像的空间结构

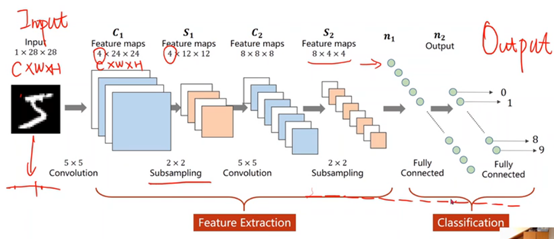

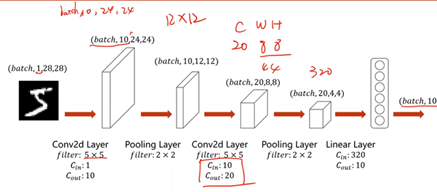

2、CNN结构

CNN在处理图像时,保留了图像的空间结构信息

卷积神经网络:卷积运算(特征提取)à转换成向量à全连接网络(分类)

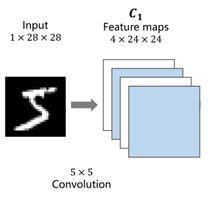

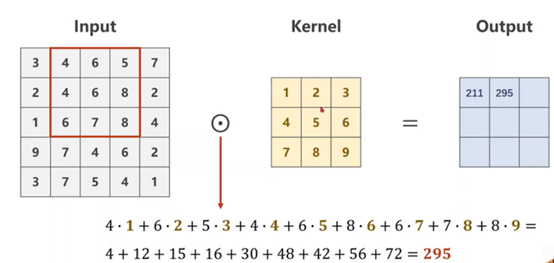

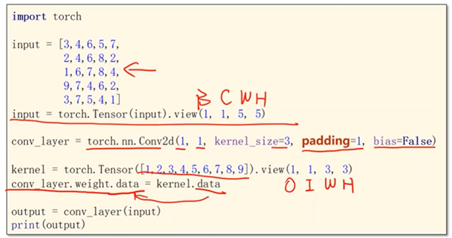

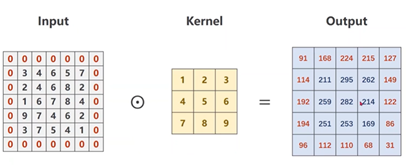

3、卷积过程

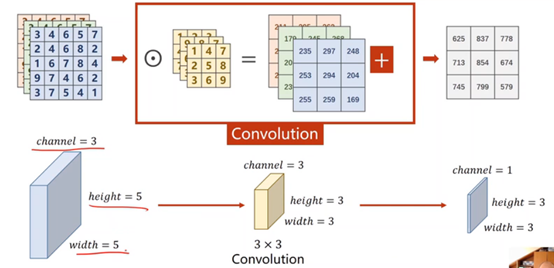

1×28×28是C(channle)×W(width)×H(Hight),就是通道数×图像宽度×图像高度

①单通道卷积(矩阵数乘)

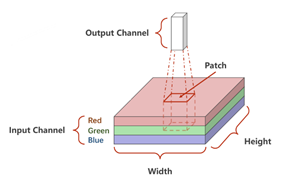

②三通道卷积

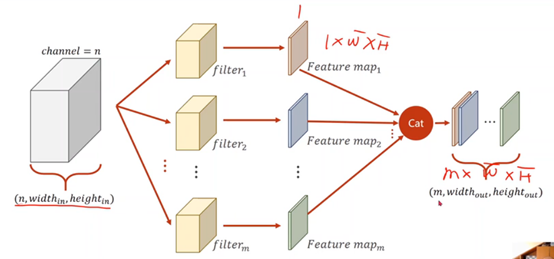

③N通道卷积

每一个卷积核的通道数量 = 输入的通道数量

卷积核的个数 = 输出的通道数量

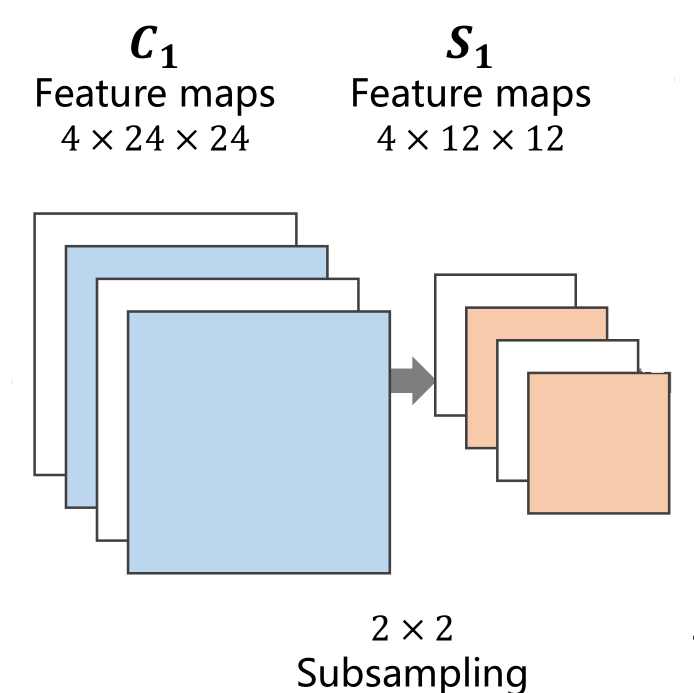

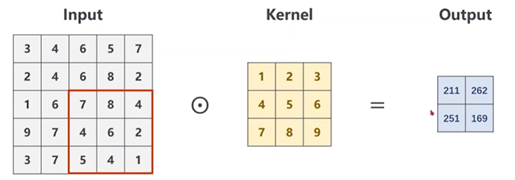

4、下采样(subsampling)---Max Pooling

下采样的目的是减少特征图像的数据量,降低运算需求。在下采样过程中,通道数(Channel)保持不变,图像的宽度和高度发生改变

5、全连接层

先将原先多维的卷积结果通过全连接层转为一维的向量,再通过多层全连接层将原向量转变为可供输出的向量。

卷积和下采样都是在特征提取

全连接层才是分类

6、CNN

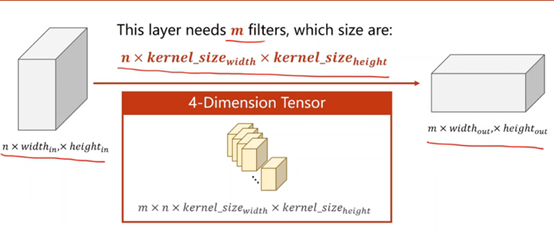

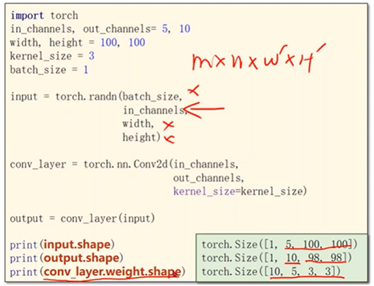

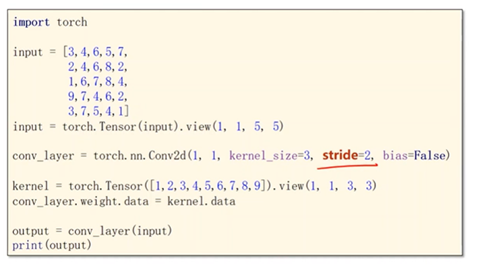

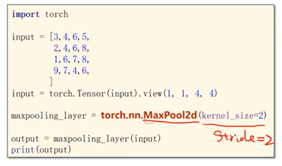

①卷积操作

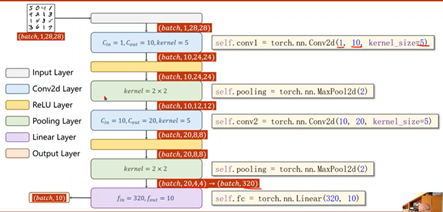

Pytorch输入数据必须是小批量数据,设置batch_size

需要确定的值:输入的通道(in_channels)、输出的通道(out_channels)、卷积核的大小(kernel_size:3x3)

②Padding,向外填充

③Stride—步长

有效降低图像的宽度和高度

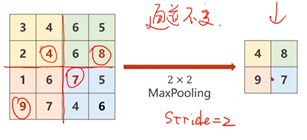

④下采样:Max Pooling Layer

默认Stride=2

⑤整体结构

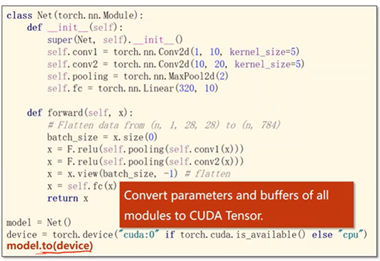

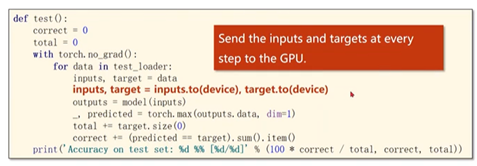

⑥用CPU或GPU进行模型的训练和测试

torch.device

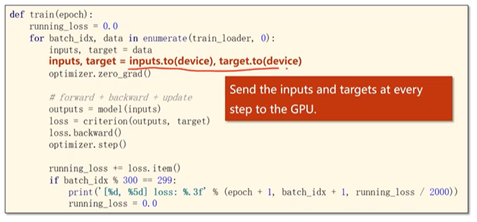

完整代码

import torch from torchvision import transforms from torchvision import datasets from torch.utils.data import DataLoader import torch.nn.functional as F import torch.optim as optim # prepare dataset batch_size = 64 transform = transforms.Compose([transforms.ToTensor(), transforms.Normalize((0.1307,), (0.3081,))]) train_dataset = datasets.MNIST(root='../dataset/mnist/', train=True, download=True, transform=transform) train_loader = DataLoader(train_dataset, shuffle=True, batch_size=batch_size) test_dataset = datasets.MNIST(root='../dataset/mnist/', train=False, download=True, transform=transform) test_loader = DataLoader(test_dataset, shuffle=False, batch_size=batch_size) # design model using class class Net(torch.nn.Module): def __init__(self): super(Net, self).__init__() self.conv1 = torch.nn.Conv2d(1, 10, kernel_size=5) self.conv2 = torch.nn.Conv2d(10, 20, kernel_size=5) self.pooling = torch.nn.MaxPool2d(2) self.fc = torch.nn.Linear(320, 10) def forward(self, x): # flatten data from (n,1,28,28) to (n, 784) batch_size = x.size(0) x = F.relu(self.pooling(self.conv1(x))) x = F.relu(self.pooling(self.conv2(x))) x = x.view(batch_size, -1) # -1 此处自动算出的是320 x = self.fc(x) return x model = Net() ## Device—选择是用GPU还是用CPU训练 device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu") model.to(device) # construct loss and optimizer criterion = torch.nn.CrossEntropyLoss() optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5) # training cycle forward, backward, update def train(epoch): running_loss = 0.0 for batch_idx, data in enumerate(train_loader, 0): inputs, target = data inputs, target = inputs.to(device), target.to(device) optimizer.zero_grad() outputs = model(inputs) loss = criterion(outputs, target) loss.backward() optimizer.step() running_loss += loss.item() if batch_idx % 300 == 299: print('[%d, %5d] loss: %.3f' % (epoch+1, batch_idx+1, running_loss/300)) running_loss = 0.0 def test(): correct = 0 total = 0 with torch.no_grad(): for data in test_loader: images, labels = data images, labels = images.to(device), labels.to(device) outputs = model(images) _, predicted = torch.max(outputs.data, dim=1) total += labels.size(0) correct += (predicted == labels).sum().item() print('accuracy on test set: %d %% ' % (100*correct/total)) if __name__ == '__main__': for epoch in range(10): train(epoch) test()

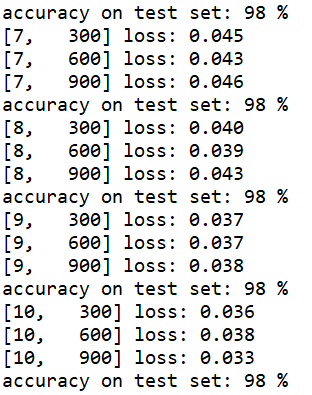

运行结果