4-Linear Regression

Using the `*` operator in Python

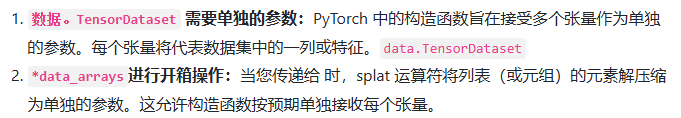

In a function call, `*` unpacks the elements of an iterable (such as a list or tuple) into separate positional arguments.
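Here is a minimal sketch of the unpacking behaviour. The helper names `add3` and `count_args` are hypothetical and exist only for illustration. The key point is the difference between passing a tuple as one argument and unpacking it into several arguments:

```python
def add3(a, b, c):
    """Return the sum of three positional arguments (hypothetical helper)."""
    return a + b + c

def count_args(*args):
    """Return how many positional arguments were received (hypothetical helper)."""
    return len(args)

values = [1, 2, 3]
result = add3(*values)  # same as add3(1, 2, 3)
print(result)  # 6

pair = ("features", "labels")
print(count_args(pair))   # 1: the tuple is passed as a single argument
print(count_args(*pair))  # 2: the tuple is unpacked into two arguments
```

The same mechanism is at work in `data.TensorDataset(*data_arrays)` below. Writing `*(features, labels)` hands the two tensors over as two separate arguments.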

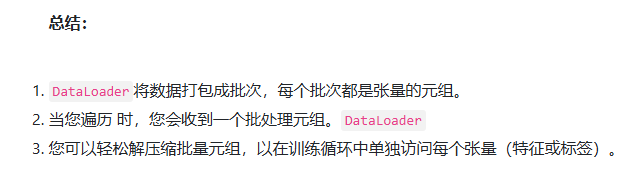

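When we iterate over the DataLoader, each mini-batch comes back as a sequence with one tensor per argument originally passed to `TensorDataset`. It can therefore be split again into separate variables, here the features `X` and the labels `Y`.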
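The following is a small sketch of this round trip, using toy tensors whose shapes are chosen purely for illustration. `TensorDataset` pairs row `i` of every tensor into one sample. `DataLoader` then stacks samples into batches that unpack cleanly into `X, Y`:

```python
import torch
from torch.utils import data

# Toy tensors (illustrative shapes): 6 samples, 2 features, 1 label each
features = torch.arange(12, dtype=torch.float32).reshape(6, 2)
labels = torch.arange(6, dtype=torch.float32).reshape(6, 1)

# * unpacks the tuple, so TensorDataset receives two separate tensors
dataset = data.TensorDataset(*(features, labels))
print(dataset[0])  # (tensor([0., 1.]), tensor([0.]))

# shuffle=False keeps the order deterministic for this illustration
loader = data.DataLoader(dataset, batch_size=4, shuffle=False)
batch_shapes = []
for X, Y in loader:  # each batch unpacks into a features tensor and a labels tensor
    batch_shapes.append((tuple(X.shape), tuple(Y.shape)))
print(batch_shapes)  # [((4, 2), (4, 1)), ((2, 2), (2, 1))]
```

The last batch is smaller because 6 samples do not divide evenly into batches of 4. By default, `DataLoader` keeps that partial batch.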

```python
import torch
from torch.utils import data

# Prepare data
true_w = torch.tensor([2, -3.4])
true_b = 4.2

def synthetic_data(w, b, num_examples):
    """Generate y = Xw + b + noise."""
    x = torch.normal(0, 1, size=(num_examples, 2))  # features drawn from N(0, 1)
    y = torch.matmul(x, w) + b
    y += torch.normal(0, 1, size=y.shape)  # additive Gaussian noise with std 1
    return x, y.reshape((-1, 1))  # labels as a column vector

features, labels = synthetic_data(true_w, true_b, 1000)
```
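As a sanity check on the generated data, the sketch below solves the same regression problem in closed form with ordinary least squares via `torch.linalg.lstsq`. This technique is not used in the post. The fixed seed is an assumption added only for reproducibility. With 1000 samples, the estimates should land close to `true_w` and `true_b` despite the noise:

```python
import torch

torch.manual_seed(0)  # assumption: fixed seed for a reproducible sketch

true_w = torch.tensor([2, -3.4])
true_b = 4.2

def synthetic_data(w, b, num_examples):
    """Generate y = Xw + b + noise, with noise ~ N(0, 1) as in the post."""
    x = torch.normal(0, 1, size=(num_examples, len(w)))
    y = torch.matmul(x, w) + b
    y += torch.normal(0, 1, size=y.shape)
    return x, y.reshape((-1, 1))

features, labels = synthetic_data(true_w, true_b, 1000)
print(features.shape, labels.shape)  # torch.Size([1000, 2]) torch.Size([1000, 1])

# Append a column of ones so the bias is estimated alongside the weights
design = torch.cat([features, torch.ones(len(features), 1)], dim=1)
solution = torch.linalg.lstsq(design, labels).solution.squeeze()
w_hat, b_hat = solution[:2], solution[2]
print('w_hat:', w_hat, 'b_hat:', b_hat.item())
```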

```python
# Load data
def load_data(data_arrays, batch_size, is_train=True):
    # Combine the tensors in data_arrays into one dataset (* unpacks the tuple into separate arguments)
    dataset = data.TensorDataset(*data_arrays)
    # Wrap the dataset in a DataLoader that yields mini-batches, shuffled when training
    return data.DataLoader(dataset, batch_size, shuffle=is_train)

data_iter = load_data((features, labels), 10)
```
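To make the DataLoader's job concrete, here is a hand-rolled iterator that performs the same shuffled mini-batching. The function `manual_data_iter` is hypothetical and not part of PyTorch. It only approximates what `DataLoader(..., shuffle=True)` does, and the random features and labels stand in for the synthetic data:

```python
import random
import torch
from torch.utils import data

def manual_data_iter(features, labels, batch_size):
    """Hypothetical helper: yield shuffled (X, Y) mini-batches, like DataLoader with shuffle=True."""
    indices = list(range(len(features)))
    random.shuffle(indices)  # new random order on every call (i.e. every epoch)
    for i in range(0, len(indices), batch_size):
        batch = torch.tensor(indices[i:i + batch_size])
        yield features[batch], labels[batch]

features = torch.randn(1000, 2)
labels = torch.randn(1000, 1)

loader = data.DataLoader(data.TensorDataset(features, labels), batch_size=10, shuffle=True)
manual_batches = list(manual_data_iter(features, labels, 10))
print(len(loader), len(manual_batches))  # 100 100
```

Both versions visit every sample exactly once per epoch in 1000 / 10 = 100 batches. With `is_train=False`, `load_data` would keep the original order instead.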

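```python
# Build the model
net = torch.nn.Sequential(torch.nn.Linear(2, 1))  # Linear(number of input features, number of output features)
net[0].weight.data.normal_(0, 0.01)  # initialize weights from N(0, 0.01^2)
net[0].bias.data.fill_(0)  # initialize the bias to 0
```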
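The sketch below checks what `nn.Linear(2, 1)` computes, namely `X @ W.T + b` with a weight of shape `(1, 2)` and a bias of shape `(1,)`. It uses `torch.nn.init.normal_` and `torch.nn.init.zeros_`, which are alternatives to the `.data.normal_` / `.data.fill_` calls above rather than the post's own approach:

```python
import torch

net = torch.nn.Sequential(torch.nn.Linear(2, 1))
# Alternative initialization through torch.nn.init (same distribution as above)
torch.nn.init.normal_(net[0].weight, mean=0.0, std=0.01)
torch.nn.init.zeros_(net[0].bias)

print(net[0].weight.shape, net[0].bias.shape)  # torch.Size([1, 2]) torch.Size([1])

# nn.Linear computes X @ W^T + b
X = torch.randn(5, 2)
manual = X @ net[0].weight.T + net[0].bias
print(torch.allclose(net(X), manual))  # True
```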

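```python
# Define the loss function and optimizer
loss = torch.nn.MSELoss()  # mean squared error over the batch
optimizer = torch.optim.SGD(net.parameters(), lr=0.01)  # mini-batch SGD with learning rate 0.01
```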
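The next sketch spells out what these two objects do. With its default `reduction='mean'`, `MSELoss` is the plain mean of squared errors, without a 1/2 factor. A single `SGD` step (no momentum or weight decay, the defaults) updates each parameter as `p <- p - lr * grad`. The random batch and the seed are illustrative assumptions:

```python
import torch

torch.manual_seed(0)  # assumption: fixed seed for reproducibility
net = torch.nn.Linear(2, 1)
loss = torch.nn.MSELoss()  # default reduction='mean'
optimizer = torch.optim.SGD(net.parameters(), lr=0.01)

X = torch.randn(10, 2)
Y = torch.randn(10, 1)

# MSELoss is the mean of squared errors
l = loss(net(X), Y)
manual_l = ((net(X) - Y) ** 2).mean()
print(torch.allclose(l, manual_l))  # True

# One SGD step: param <- param - lr * grad
optimizer.zero_grad()
l.backward()
expected = [p.detach() - 0.01 * p.grad for p in net.parameters()]
optimizer.step()
step_ok = all(torch.allclose(p.detach(), e) for p, e in zip(net.parameters(), expected))
print(step_ok)  # True
```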

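```python
# Train
epochs = 10
for epoch in range(epochs):
    for X, Y in data_iter:
        l = loss(net(X), Y)    # loss on the current mini-batch
        optimizer.zero_grad()  # clear gradients left from the previous step
        l.backward()           # backpropagate
        optimizer.step()       # update the parameters
    # Evaluate on the full dataset after each epoch (no gradient tracking needed)
    with torch.no_grad():
        l = loss(net(features), labels)
    print('epoch:{}, loss:{}'.format(epoch + 1, l.item()))
```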
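After training, it helps to compare the learned parameters with `true_w` and `true_b`. The sketch below is a condensed re-run of the same pipeline with an assumed fixed seed, followed by that comparison. Because the labels carry Gaussian noise with standard deviation 1, the full-dataset MSE should level off near 1.0, the noise variance, rather than approach 0:

```python
import torch
from torch.utils import data

torch.manual_seed(0)  # assumption: fixed seed for a reproducible sketch
true_w = torch.tensor([2, -3.4])
true_b = 4.2
features = torch.normal(0, 1, size=(1000, 2))
labels = (features @ true_w + true_b + torch.normal(0, 1, size=(1000,))).reshape(-1, 1)

data_iter = data.DataLoader(data.TensorDataset(features, labels), batch_size=10, shuffle=True)
net = torch.nn.Sequential(torch.nn.Linear(2, 1))
loss = torch.nn.MSELoss()
optimizer = torch.optim.SGD(net.parameters(), lr=0.01)

for epoch in range(10):
    for X, Y in data_iter:
        optimizer.zero_grad()
        loss(net(X), Y).backward()
        optimizer.step()

# Compare the learned parameters with the ones used to generate the data
w_err = true_w - net[0].weight.data.reshape(true_w.shape)
b_err = true_b - net[0].bias.data.item()
print('error in w:', w_err)
print('error in b:', b_err)

with torch.no_grad():
    final_loss = loss(net(features), labels).item()
print('final loss:', final_loss)  # expected to be close to the noise variance (about 1.0)
```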

Zhejiang Public Security Network Filing No. 33010602011771
