Building a MongoDB Sharded Cluster

I. What Is Sharding

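Sharding is a method for distributing data across multiple machines. MongoDB uses sharding to support deployments with very large data sets and high-throughput operations.

Database systems with large data sets or high-throughput applications can challenge the capacity of a single server. For example, high query rates can exhaust the server's CPU capacity, and working set sizes larger than the system's RAM stress the I/O capacity of the disk drives.

There are two ways to address system growth: vertical scaling and horizontal scaling.

Vertical scaling involves increasing the capacity of a single server, for example by using a more powerful CPU, adding more RAM, or increasing the amount of storage. Limitations in available technology may mean that a single machine is not powerful enough for a given workload. In addition, cloud-based providers have hard ceilings based on the hardware configurations they offer. As a result, there is a practical maximum to vertical scaling.

Horizontal scaling involves dividing the system's data set and load across multiple servers and adding servers as needed to increase capacity. A single machine may not be especially fast or high-capacity, but each machine handles only a subset of the overall workload. This can be more efficient than a single high-speed, high-capacity server. Expanding capacity only requires adding servers as needed, which can cost less overall than high-end hardware for a single machine. The trade-off is increased complexity in the deployment's infrastructure and maintenance.

MongoDB supports horizontal scaling through sharding.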

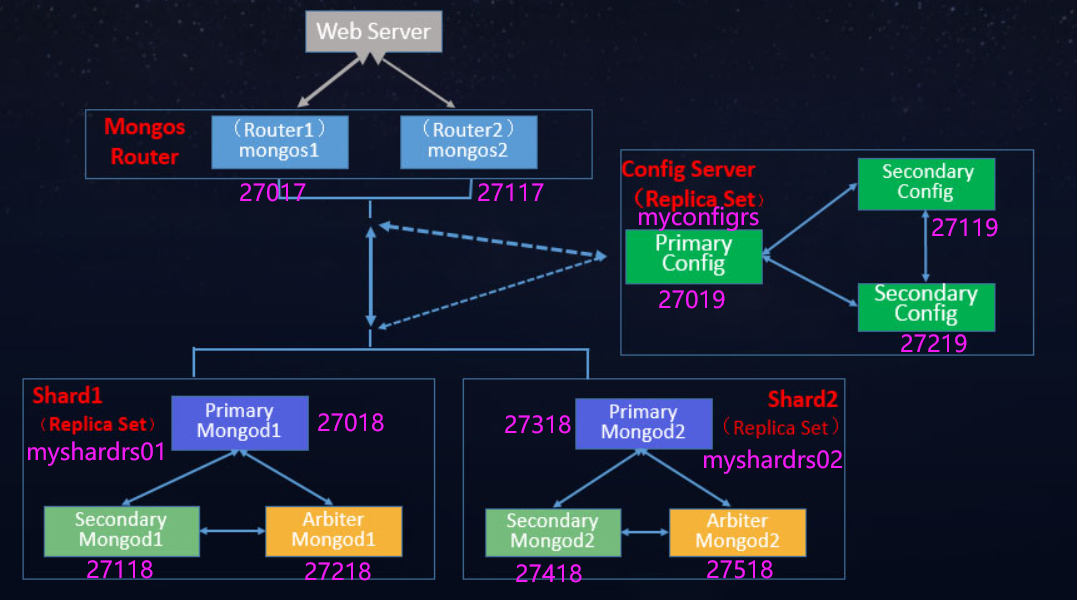

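II. Sharded Cluster

1. Components

- Shard: each shard contains a subset of the sharded data. Each shard must be deployed as a replica set.

- mongos: mongos acts as a query router and provides the interface between client applications and the sharded cluster. mongos can support hedged reads to minimize latency.

- Config servers: config servers store the cluster's metadata and configuration settings. Starting with MongoDB 3.4, config servers must be deployed as a replica set (CSRS).

2. Interaction Between the Components of a Sharded Cluster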

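III. How Data Is Split

Data split by sharding is stored in units called chunks. A sharded collection consists of many chunks, and the config servers record which shard each chunk lives on.

When mongos operates on a sharded collection, it uses the shard key to find the relevant chunk automatically. It then sends the request to the shard that holds that chunk.

How data is split is determined by the sharding strategy. The strategy consists of a shard key (ShardKey) plus a sharding algorithm (ShardStrategy).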

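IV. Sharding Strategies

1. Hashed Sharding

Hashed sharding computes a hash of the shard key field's value. Each chunk is then assigned a range based on the hashed shard key values.

MongoDB computes the hashes automatically when resolving queries with hashed indexes, so applications do not need to compute them.

A range of shard keys may be "close", but their hashed values are unlikely to fall in the same chunk. Distributing data by hashed value makes the distribution more even, especially in data sets where the shard key changes monotonically.

However, hashed distribution means that range-based queries on the shard key are less likely to target a single shard. This leads to more cluster-wide broadcast operations.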
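The toy bash sketch below makes the scattering effect concrete. It is not MongoDB's algorithm. MongoDB hashes shard key values into a signed 64-bit space with its own hash function. This sketch instead uses POSIX `cksum` (CRC-32) as a stand-in hash and assumes a hypothetical layout of four equal chunks, two per shard. The shard a given key lands on here will not match a real cluster. The point is only that neighboring keys such as Test1 and Test2 are placed independently of their ordering.

```shell
#!/usr/bin/env bash
# Illustration only: cksum (CRC-32) stands in for MongoDB's 64-bit hash,
# so real placement of any key will differ.

# Hypothetical layout: four chunks over the 32-bit hash space, two per shard.
BOUNDS=(0 1073741824 2147483648 3221225472)   # lower bound of each chunk
OWNERS=(myshardrs01 myshardrs01 myshardrs02 myshardrs02)

hash_key() {
  # The first field of cksum output is an unsigned 32-bit CRC.
  printf '%s' "$1" | cksum | cut -d' ' -f1
}

route_hashed() {
  local h i owner
  h=$(hash_key "$1")
  owner=${OWNERS[0]}
  # Pick the last chunk whose lower bound is <= the hash value.
  for i in "${!BOUNDS[@]}"; do
    if (( h >= BOUNDS[i] )); then owner=${OWNERS[i]}; fi
  done
  echo "$owner"
}

# Adjacent keys are routed independently of their order.
for k in Test1 Test2 Test3 Test4; do
  echo "$k -> $(route_hashed "$k")"
done
```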

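2. Ranged Sharding

Ranged sharding divides data into ranges based on the shard key values. Each chunk is then assigned a range based on the shard key values.

A range of shard keys whose values are "close" is more likely to reside in the same chunk. This allows targeted operations, because mongos can route an operation to only the shards that contain the required data.

The efficiency of ranged sharding depends on the shard key chosen. A poorly chosen shard key can result in uneven data distribution. That can negate some of the benefits of sharding or even cause performance bottlenecks. See the MongoDB documentation on shard key selection for ranged sharding.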
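A similar toy bash sketch illustrates ranged sharding. It uses a hypothetical chunk table for a collection sharded on { age: 1 }. The bounds and owners are invented for illustration and are not what MongoDB would create. A single value, or a narrow range inside one chunk, touches one shard; this is the targeted operation described above. A range that crosses a chunk boundary touches every shard owning an overlapping chunk.

```shell
#!/usr/bin/env bash
# Hypothetical chunk table for {age: 1}: chunk i covers [LOWER[i], LOWER[i+1]),
# and the last chunk is open-ended. Assumes age >= 0.
LOWER=(0 40 80)
OWNER=(myshardrs01 myshardrs02 myshardrs01)

# Return the shard that owns a single age value.
route_range() {
  local key=$1 i owner=${OWNER[0]}
  for i in "${!LOWER[@]}"; do
    (( key >= LOWER[i] )) && owner=${OWNER[i]}
  done
  echo "$owner"
}

# Return the distinct shards a range query [lo, hi] has to visit.
targets_for_range() {
  local lo=$1 hi=$2 i next
  for i in "${!LOWER[@]}"; do
    next=${LOWER[i+1]:-}
    # Chunk i overlaps [lo, hi] if it starts at or before hi and ends after lo.
    if (( LOWER[i] <= hi )) && { [ -z "$next" ] || (( next > lo )); }; then
      echo "${OWNER[i]}"
    fi
  done | sort -u
}

route_range 25            # prints myshardrs01
targets_for_range 10 30   # prints myshardrs01 (targeted: one shard)
targets_for_range 30 50   # prints myshardrs01 and myshardrs02
```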

V. Sharded Cluster Architecture

Two shard replica sets (3 + 3) + one config server replica set (3) + two routers (2)

11 service nodes in total.

VI. Building the Sharded Cluster

1. Hosts Involved

| Role | Hostname | IP address |
|---|---|---|
| Shard node 1 | shard1 | 192.168.112.10 |
| Shard node 2 | shard2 | 192.168.112.20 |
| Config server | config-server | 192.168.112.30 |
| Router | router | 192.168.112.40 |

2. Install MongoDB on All Hosts

wget https://fastdl.mongodb.org/linux/mongodb-linux-x86_64-rhel70-4.4.6.tgz

tar xzvf mongodb-linux-x86_64-rhel70-4.4.6.tgz -C /usr/local

cd /usr/local/

ln -s /usr/local/mongodb-linux-x86_64-rhel70-4.4.6 /usr/local/mongodb

vim /etc/profile

Append the following line to the end of the file:

export PATH=/usr/local/mongodb/bin/:$PATH

source /etc/profile
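Instead of editing /etc/profile by hand, you can append the PATH line idempotently, so re-running the install steps does not add duplicate lines. The bash sketch below introduces a PROFILE variable (an assumption of this sketch, defaulting to /etc/profile) so it can also be pointed at a scratch file.

```shell
#!/usr/bin/env bash
# Append the MongoDB bin directory to PATH in a profile file, only once.
PROFILE=${PROFILE:-/etc/profile}
LINE='export PATH=/usr/local/mongodb/bin/:$PATH'   # single quotes keep $PATH literal

add_mongo_path() {
  # -x matches the whole line; -F treats the pattern as a fixed string.
  grep -qxF "$LINE" "$PROFILE" 2>/dev/null || echo "$LINE" >> "$PROFILE"
}

# Usage (as root): add_mongo_path && source /etc/profile && mongod --version
```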

3. Creating the Shard Replica Sets

3.1 The First Replica Set: shard1

Run on host shard1.

3.1.1 Prepare the data and log directories

mkdir -p /usr/local/mongodb/sharded_cluster/myshardrs01_27018/log \
  /usr/local/mongodb/sharded_cluster/myshardrs01_27018/data/db \
  /usr/local/mongodb/sharded_cluster/myshardrs01_27118/log \
  /usr/local/mongodb/sharded_cluster/myshardrs01_27118/data/db \
  /usr/local/mongodb/sharded_cluster/myshardrs01_27218/log \
  /usr/local/mongodb/sharded_cluster/myshardrs01_27218/data/db
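The directories follow a fixed pattern (<set>_<port>/log and <set>_<port>/data/db), so the mkdir above can also be written as a loop. In this bash sketch, BASE, RS and PORTS are variables introduced here and set to the shard1 values. For shard2, change them to myshardrs02 with 27318 27418 27518. For the config servers, use myconfigrs with 27019 27119 27219.

```shell
#!/usr/bin/env bash
# Create log/ and data/db/ for every member of one replica set.
# Values match shard1; edit RS and PORTS for the other replica sets.
BASE=${BASE:-/usr/local/mongodb/sharded_cluster}
RS=myshardrs01
PORTS=(27018 27118 27218)

make_member_dirs() {
  local port
  for port in "${PORTS[@]}"; do
    mkdir -p "$BASE/${RS}_${port}/log" "$BASE/${RS}_${port}/data/db"
  done
}

# Usage: make_member_dirs
```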

3.1.2 Create the configuration files

myshardrs01_27018

vim /usr/local/mongodb/sharded_cluster/myshardrs01_27018/mongod.conf

systemLog:
  destination: file
  path: "/usr/local/mongodb/sharded_cluster/myshardrs01_27018/log/mongod.log"
  logAppend: true
storage:
  dbPath: "/usr/local/mongodb/sharded_cluster/myshardrs01_27018/data/db"
  journal:
    enabled: true
processManagement:
  fork: true
  pidFilePath: "/usr/local/mongodb/sharded_cluster/myshardrs01_27018/log/mongod.pid"
net:
  bindIp: localhost,192.168.112.10
  port: 27018
replication:
  replSetName: myshardrs01
sharding:
  clusterRole: shardsvr

myshardrs01_27118

vim /usr/local/mongodb/sharded_cluster/myshardrs01_27118/mongod.conf

systemLog:
  destination: file
  path: "/usr/local/mongodb/sharded_cluster/myshardrs01_27118/log/mongod.log"
  logAppend: true
storage:
  dbPath: "/usr/local/mongodb/sharded_cluster/myshardrs01_27118/data/db"
  journal:
    enabled: true
processManagement:
  fork: true
  pidFilePath: "/usr/local/mongodb/sharded_cluster/myshardrs01_27118/log/mongod.pid"
net:
  bindIp: localhost,192.168.112.10
  port: 27118
replication:
  replSetName: myshardrs01
sharding:
  clusterRole: shardsvr

myshardrs01_27218

vim /usr/local/mongodb/sharded_cluster/myshardrs01_27218/mongod.conf

systemLog:
  destination: file
  path: "/usr/local/mongodb/sharded_cluster/myshardrs01_27218/log/mongod.log"
  logAppend: true
storage:
  dbPath: "/usr/local/mongodb/sharded_cluster/myshardrs01_27218/data/db"
  journal:
    enabled: true
processManagement:
  fork: true
  pidFilePath: "/usr/local/mongodb/sharded_cluster/myshardrs01_27218/log/mongod.pid"
net:
  bindIp: localhost,192.168.112.10
  port: 27218
replication:
  replSetName: myshardrs01
sharding:
  clusterRole: shardsvr
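The three files differ only in the port, so they can be generated. This bash sketch writes one mongod.conf per port with the same settings as above and also creates the directories. The function write_mongod_confs and the BASE variable are names introduced here. The sketch ties the directory prefix to the replica set name, so it matches the shard1 and shard2 layouts. It does not cover the config servers in section 4 as written, because they use different names for the directories (myconfigrs_*) and the set (myconfigr).

```shell
#!/usr/bin/env bash
# Generate mongod.conf files for the members of one shard replica set.
# Arguments: replica set name, bind IP, cluster role, then one or more ports.
BASE=${BASE:-/usr/local/mongodb/sharded_cluster}

write_mongod_confs() {
  local rs=$1 ip=$2 role=$3 port dir
  shift 3
  for port in "$@"; do
    dir="$BASE/${rs}_${port}"
    mkdir -p "$dir/log" "$dir/data/db"
    # Unquoted EOF: $dir, $ip, $port, $rs and $role are expanded.
    cat > "$dir/mongod.conf" <<EOF
systemLog:
  destination: file
  path: "$dir/log/mongod.log"
  logAppend: true
storage:
  dbPath: "$dir/data/db"
  journal:
    enabled: true
processManagement:
  fork: true
  pidFilePath: "$dir/log/mongod.pid"
net:
  bindIp: localhost,$ip
  port: $port
replication:
  replSetName: $rs
sharding:
  clusterRole: $role
EOF
  done
}

# Example for shard1 (run on 192.168.112.10):
# write_mongod_confs myshardrs01 192.168.112.10 shardsvr 27018 27118 27218
```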

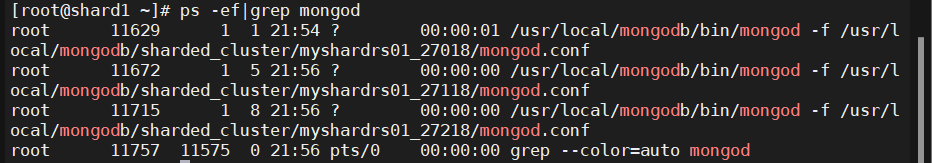

3.1.3 Start the first replica set: one primary, one secondary, one arbiter

/usr/local/mongodb/bin/mongod -f /usr/local/mongodb/sharded_cluster/myshardrs01_27018/mongod.conf

/usr/local/mongodb/bin/mongod -f /usr/local/mongodb/sharded_cluster/myshardrs01_27118/mongod.conf

/usr/local/mongodb/bin/mongod -f /usr/local/mongodb/sharded_cluster/myshardrs01_27218/mongod.conf

ps -ef|grep mongod
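Besides ps -ef|grep mongod, you can check the pid files set in processManagement.pidFilePath directly. In this bash sketch, check_pidfiles is a name introduced here. Run it as the user that started mongod, because kill -0 also fails when the process belongs to another user.

```shell
#!/usr/bin/env bash
# Report whether the process recorded in each pid file is alive.
# Returns non-zero if any pid file is missing, empty, or stale.
check_pidfiles() {
  local f pid rc=0
  for f in "$@"; do
    pid=$(cat "$f" 2>/dev/null)
    if [ -n "$pid" ] && kill -0 "$pid" 2>/dev/null; then
      echo "running      pid=$pid  $f"
    else
      echo "NOT running  $f"
      rc=1
    fi
  done
  return $rc
}

# Example for shard1:
# check_pidfiles /usr/local/mongodb/sharded_cluster/myshardrs01_{27018,27118,27218}/log/mongod.pid
```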

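3.1.4 Initialize the replica set and add the secondary and the arbiter

Connect to the node that will become the primary:

mongo --host 192.168.112.10 --port 27018

- Initialize the replica set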

rs.initiate()

{

"info2" : "no configuration specified. Using a default configuration for the set",

"me" : "192.168.112.10:27018",

"ok" : 1

}

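myshardrs01:SECONDARY>

myshardrs01:PRIMARY>

Right after initiation the prompt still shows SECONDARY. Press Enter again after a few seconds, and it changes to PRIMARY once the node has been elected.

- Add the secondary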

rs.add("192.168.112.10:27118")

{

"ok" : 1,

"$clusterTime" : {

"clusterTime" : Timestamp(1714658449, 1),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

},

"operationTime" : Timestamp(1714658449, 1)

}

- Add the arbiter

rs.addArb("192.168.112.10:27218")

{

"ok" : 1,

"$clusterTime" : {

"clusterTime" : Timestamp(1714658509, 1),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

},

"operationTime" : Timestamp(1714658509, 1)

}

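- View the replica set (it is enough to check each member's role and health)

rs.conf()

- Purpose: shows the current configuration of the replica set. It returns a document with the set name, configuration version and protocol version. It also includes the member list (each member's _id, host address, priority, role, and so on) and the settings.

- When to use: when you need to view or change the replica set configuration, for example to add members, adjust member priorities, or check how the arbiter is configured. The returned document can be modified and passed to rs.reconfig().

rs.status()

- Purpose: returns a snapshot of the replica set's current state. This includes each member's state, primary/secondary role, health, replication progress, heartbeats, and election information. It shows whether the set is working normally, whether members are in sync, and whether the primary has failed.

- When to use: for monitoring replica set health, troubleshooting connection problems, checking replication, or confirming a change of primary. The rs.status() output lets administrators quickly identify and resolve problems in the cluster.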
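You can also initialize the set in one call, instead of three steps (rs.initiate(), rs.add(), rs.addArb()), by passing an explicit configuration document to rs.initiate(). The bash sketch below only prints that mongo shell command for a primary, a secondary and an arbiter on one host. initiate_js is a name introduced here, and the member _id values 0, 1 and 2 are a choice of this sketch. Run the result only against freshly started members that have not been initialized yet.

```shell
#!/usr/bin/env bash
# Print an rs.initiate() call with an explicit member list.
# Arguments: set name, host IP, first data port, second data port, arbiter port.
initiate_js() {
  local rs=$1 ip=$2 p1=$3 p2=$4 arb=$5
  printf 'rs.initiate({_id: "%s", members: [' "$rs"
  printf '{_id: 0, host: "%s:%s"}, ' "$ip" "$p1"
  printf '{_id: 1, host: "%s:%s"}, ' "$ip" "$p2"
  printf '{_id: 2, host: "%s:%s", arbiterOnly: true}' "$ip" "$arb"
  printf ']})\n'
}

# Example for shard1 (requires the mongo shell and uninitialized members):
# mongo --quiet --host 192.168.112.10 --port 27018 \
#   --eval "$(initiate_js myshardrs01 192.168.112.10 27018 27118 27218)"
```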

3.2 The Second Replica Set: shard2

Run on host shard2.

3.2.1 Prepare the data and log directories

mkdir -p /usr/local/mongodb/sharded_cluster/myshardrs02_27318/log \
  /usr/local/mongodb/sharded_cluster/myshardrs02_27318/data/db \
  /usr/local/mongodb/sharded_cluster/myshardrs02_27418/log \
  /usr/local/mongodb/sharded_cluster/myshardrs02_27418/data/db \
  /usr/local/mongodb/sharded_cluster/myshardrs02_27518/log \
  /usr/local/mongodb/sharded_cluster/myshardrs02_27518/data/db

3.2.2 Create the configuration files

myshardrs02_27318

vim /usr/local/mongodb/sharded_cluster/myshardrs02_27318/mongod.conf

systemLog:
  destination: file
  path: "/usr/local/mongodb/sharded_cluster/myshardrs02_27318/log/mongod.log"
  logAppend: true
storage:
  dbPath: "/usr/local/mongodb/sharded_cluster/myshardrs02_27318/data/db"
  journal:
    enabled: true
processManagement:
  fork: true
  pidFilePath: "/usr/local/mongodb/sharded_cluster/myshardrs02_27318/log/mongod.pid"
net:
  bindIp: localhost,192.168.112.20
  port: 27318
replication:
  replSetName: myshardrs02
sharding:
  clusterRole: shardsvr

myshardrs02_27418

vim /usr/local/mongodb/sharded_cluster/myshardrs02_27418/mongod.conf

systemLog:
  destination: file
  path: "/usr/local/mongodb/sharded_cluster/myshardrs02_27418/log/mongod.log"
  logAppend: true
storage:
  dbPath: "/usr/local/mongodb/sharded_cluster/myshardrs02_27418/data/db"
  journal:
    enabled: true
processManagement:
  fork: true
  pidFilePath: "/usr/local/mongodb/sharded_cluster/myshardrs02_27418/log/mongod.pid"
net:
  bindIp: localhost,192.168.112.20
  port: 27418
replication:
  replSetName: myshardrs02
sharding:
  clusterRole: shardsvr

myshardrs02_27518

vim /usr/local/mongodb/sharded_cluster/myshardrs02_27518/mongod.conf

systemLog:
  destination: file
  path: "/usr/local/mongodb/sharded_cluster/myshardrs02_27518/log/mongod.log"
  logAppend: true
storage:
  dbPath: "/usr/local/mongodb/sharded_cluster/myshardrs02_27518/data/db"
  journal:
    enabled: true
processManagement:
  fork: true
  pidFilePath: "/usr/local/mongodb/sharded_cluster/myshardrs02_27518/log/mongod.pid"
net:
  bindIp: localhost,192.168.112.20
  port: 27518
replication:
  replSetName: myshardrs02
sharding:
  clusterRole: shardsvr

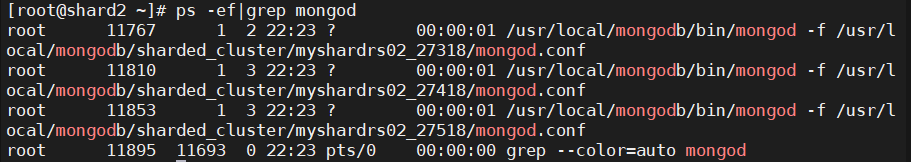

3.2.3 Start the second replica set: one primary, one secondary, one arbiter

/usr/local/mongodb/bin/mongod -f /usr/local/mongodb/sharded_cluster/myshardrs02_27318/mongod.conf

/usr/local/mongodb/bin/mongod -f /usr/local/mongodb/sharded_cluster/myshardrs02_27418/mongod.conf

/usr/local/mongodb/bin/mongod -f /usr/local/mongodb/sharded_cluster/myshardrs02_27518/mongod.conf

ps -ef|grep mongod

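3.2.4 Initialize the replica set and add the secondary and the arbiter

Connect to the node that will become the primary:

mongo --host 192.168.112.20 --port 27318

- Initialize the replica set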

rs.initiate()

{

"info2" : "no configuration specified. Using a default configuration for the set",

"me" : "192.168.112.20:27318",

"ok" : 1

}

myshardrs02:SECONDARY>

myshardrs02:PRIMARY>

- Add the secondary

rs.add("192.168.112.20:27418")

{

"ok" : 1,

"$clusterTime" : {

"clusterTime" : Timestamp(1714660065, 1),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

},

"operationTime" : Timestamp(1714660065, 1)

}

- Add the arbiter

rs.addArb("192.168.112.20:27518")

{

"ok" : 1,

"$clusterTime" : {

"clusterTime" : Timestamp(1714660094, 1),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

},

"operationTime" : Timestamp(1714660094, 1)

}

- View the replica set

rs.status()

{

"set" : "myshardrs02",

"date" : ISODate("2024-05-02T14:29:57.930Z"),

"myState" : 1,

"term" : NumberLong(1),

"syncSourceHost" : "",

"syncSourceId" : -1,

"heartbeatIntervalMillis" : NumberLong(2000),

"majorityVoteCount" : 2,

"writeMajorityCount" : 2,

"votingMembersCount" : 3,

"writableVotingMembersCount" : 2,

"optimes" : {

"lastCommittedOpTime" : {

"ts" : Timestamp(1714660197, 1),

"t" : NumberLong(1)

},

"lastCommittedWallTime" : ISODate("2024-05-02T14:29:57.868Z"),

"readConcernMajorityOpTime" : {

"ts" : Timestamp(1714660197, 1),

"t" : NumberLong(1)

},

"readConcernMajorityWallTime" : ISODate("2024-05-02T14:29:57.868Z"),

"appliedOpTime" : {

"ts" : Timestamp(1714660197, 1),

"t" : NumberLong(1)

},

"durableOpTime" : {

"ts" : Timestamp(1714660197, 1),

"t" : NumberLong(1)

},

"lastAppliedWallTime" : ISODate("2024-05-02T14:29:57.868Z"),

"lastDurableWallTime" : ISODate("2024-05-02T14:29:57.868Z")

},

"lastStableRecoveryTimestamp" : Timestamp(1714660147, 1),

"electionCandidateMetrics" : {

"lastElectionReason" : "electionTimeout",

"lastElectionDate" : ISODate("2024-05-02T14:27:07.797Z"),

"electionTerm" : NumberLong(1),

"lastCommittedOpTimeAtElection" : {

"ts" : Timestamp(0, 0),

"t" : NumberLong(-1)

},

"lastSeenOpTimeAtElection" : {

"ts" : Timestamp(1714660027, 1),

"t" : NumberLong(-1)

},

"numVotesNeeded" : 1,

"priorityAtElection" : 1,

"electionTimeoutMillis" : NumberLong(10000),

"newTermStartDate" : ISODate("2024-05-02T14:27:07.802Z"),

"wMajorityWriteAvailabilityDate" : ISODate("2024-05-02T14:27:07.899Z")

},

"members" : [

{

"_id" : 0,

"name" : "192.168.112.20:27318",

"health" : 1,

"state" : 1,

"stateStr" : "PRIMARY",

"uptime" : 403,

"optime" : {

"ts" : Timestamp(1714660197, 1),

"t" : NumberLong(1)

},

"optimeDate" : ISODate("2024-05-02T14:29:57Z"),

"syncSourceHost" : "",

"syncSourceId" : -1,

"infoMessage" : "",

"electionTime" : Timestamp(1714660027, 2),

"electionDate" : ISODate("2024-05-02T14:27:07Z"),

"configVersion" : 3,

"configTerm" : -1,

"self" : true,

"lastHeartbeatMessage" : ""

},

{

"_id" : 1,

"name" : "192.168.112.20:27418",

"health" : 1,

"state" : 2,

"stateStr" : "SECONDARY",

"uptime" : 132,

"optime" : {

"ts" : Timestamp(1714660187, 1),

"t" : NumberLong(1)

},

"optimeDurable" : {

"ts" : Timestamp(1714660187, 1),

"t" : NumberLong(1)

},

"optimeDate" : ISODate("2024-05-02T14:29:47Z"),

"optimeDurableDate" : ISODate("2024-05-02T14:29:47Z"),

"lastHeartbeat" : ISODate("2024-05-02T14:29:57.035Z"),

"lastHeartbeatRecv" : ISODate("2024-05-02T14:29:57.113Z"),

"pingMs" : NumberLong(0),

"lastHeartbeatMessage" : "",

"syncSourceHost" : "192.168.112.20:27318",

"syncSourceId" : 0,

"infoMessage" : "",

"configVersion" : 3,

"configTerm" : -1

},

{

"_id" : 2,

"name" : "192.168.112.20:27518",

"health" : 1,

"state" : 7,

"stateStr" : "ARBITER",

"uptime" : 102,

"lastHeartbeat" : ISODate("2024-05-02T14:29:57.035Z"),

"lastHeartbeatRecv" : ISODate("2024-05-02T14:29:57.068Z"),

"pingMs" : NumberLong(0),

"lastHeartbeatMessage" : "",

"syncSourceHost" : "",

"syncSourceId" : -1,

"infoMessage" : "",

"configVersion" : 3,

"configTerm" : -1

}

],

"ok" : 1,

"$clusterTime" : {

"clusterTime" : Timestamp(1714660197, 1),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

},

"operationTime" : Timestamp(1714660197, 1)

}

4. Creating the Config Server Replica Set

Run on host config-server.

4.1 Prepare the data and log directories

mkdir -p /usr/local/mongodb/sharded_cluster/myconfigrs_27019/log \
  /usr/local/mongodb/sharded_cluster/myconfigrs_27019/data/db \
  /usr/local/mongodb/sharded_cluster/myconfigrs_27119/log \
  /usr/local/mongodb/sharded_cluster/myconfigrs_27119/data/db \
  /usr/local/mongodb/sharded_cluster/myconfigrs_27219/log \
  /usr/local/mongodb/sharded_cluster/myconfigrs_27219/data/db

4.2 Create the configuration files

myconfigrs_27019

vim /usr/local/mongodb/sharded_cluster/myconfigrs_27019/mongod.conf

systemLog:
  destination: file
  path: "/usr/local/mongodb/sharded_cluster/myconfigrs_27019/log/mongod.log"
  logAppend: true
storage:
  dbPath: "/usr/local/mongodb/sharded_cluster/myconfigrs_27019/data/db"
  journal:
    enabled: true
processManagement:
  fork: true
  pidFilePath: "/usr/local/mongodb/sharded_cluster/myconfigrs_27019/log/mongod.pid"
net:
  bindIp: localhost,192.168.112.30
  port: 27019
replication:
  replSetName: myconfigr
sharding:
  clusterRole: configsvr

Note: this configuration file differs from the shard members' files in sharding.clusterRole. shardsvr marks a shard member, and configsvr marks a config server member.

myconfigrs_27119

vim /usr/local/mongodb/sharded_cluster/myconfigrs_27119/mongod.conf

systemLog:
  destination: file
  path: "/usr/local/mongodb/sharded_cluster/myconfigrs_27119/log/mongod.log"
  logAppend: true
storage:
  dbPath: "/usr/local/mongodb/sharded_cluster/myconfigrs_27119/data/db"
  journal:
    enabled: true
processManagement:
  fork: true
  pidFilePath: "/usr/local/mongodb/sharded_cluster/myconfigrs_27119/log/mongod.pid"
net:
  bindIp: localhost,192.168.112.30
  port: 27119
replication:
  replSetName: myconfigr
sharding:
  clusterRole: configsvr

myconfigrs_27219

vim /usr/local/mongodb/sharded_cluster/myconfigrs_27219/mongod.conf

systemLog:
  destination: file
  path: "/usr/local/mongodb/sharded_cluster/myconfigrs_27219/log/mongod.log"
  logAppend: true
storage:
  dbPath: "/usr/local/mongodb/sharded_cluster/myconfigrs_27219/data/db"
  journal:
    enabled: true
processManagement:
  fork: true
  pidFilePath: "/usr/local/mongodb/sharded_cluster/myconfigrs_27219/log/mongod.pid"
net:
  bindIp: localhost,192.168.112.30
  port: 27219
replication:
  replSetName: myconfigr
sharding:
  clusterRole: configsvr

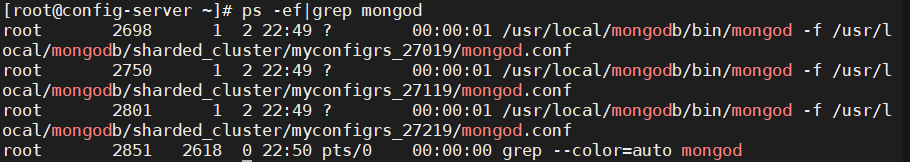

4.3 Start the replica set

/usr/local/mongodb/bin/mongod -f /usr/local/mongodb/sharded_cluster/myconfigrs_27019/mongod.conf

/usr/local/mongodb/bin/mongod -f /usr/local/mongodb/sharded_cluster/myconfigrs_27119/mongod.conf

/usr/local/mongodb/bin/mongod -f /usr/local/mongodb/sharded_cluster/myconfigrs_27219/mongod.conf

ps -ef|grep mongod

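4.4 Initialize the replica set and add the secondaries

Connect to the node that will become the primary:

mongo --host 192.168.112.30 --port 27019

- Initialize the replica set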

rs.initiate()

{

"info2" : "no configuration specified. Using a default configuration for the set",

"me" : "192.168.112.30:27019",

"ok" : 1,

"$gleStats" : {

"lastOpTime" : Timestamp(1714702204, 1),

"electionId" : ObjectId("000000000000000000000000")

},

"lastCommittedOpTime" : Timestamp(0, 0)

}

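- Add the secondaries (a config server replica set cannot contain arbiters, so both additional members are ordinary data-bearing members)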

rs.add("192.168.112.30:27119")

{

"ok" : 1,

"$gleStats" : {

"lastOpTime" : {

"ts" : Timestamp(1714702333, 2),

"t" : NumberLong(1)

},

"electionId" : ObjectId("7fffffff0000000000000001")

},

"lastCommittedOpTime" : Timestamp(1714702333, 2),

"$clusterTime" : {

"clusterTime" : Timestamp(1714702335, 1),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

},

"operationTime" : Timestamp(1714702333, 2)

}

rs.add("192.168.112.30:27219")

{

"ok" : 1,

"$gleStats" : {

"lastOpTime" : {

"ts" : Timestamp(1714702365, 2),

"t" : NumberLong(1)

},

"electionId" : ObjectId("7fffffff0000000000000001")

},

"lastCommittedOpTime" : Timestamp(1714702367, 1),

"$clusterTime" : {

"clusterTime" : Timestamp(1714702367, 1),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

},

"operationTime" : Timestamp(1714702365, 2)

}

- View the replica set

rs.conf()

{

"_id" : "myconfigr",

"version" : 3,

"term" : 1,

"configsvr" : true,

"protocolVersion" : NumberLong(1),

"writeConcernMajorityJournalDefault" : true,

"members" : [

{

"_id" : 0,

"host" : "192.168.112.30:27019",

"arbiterOnly" : false,

"buildIndexes" : true,

"hidden" : false,

"priority" : 1,

"tags" : {

},

"slaveDelay" : NumberLong(0),

"votes" : 1

},

{

"_id" : 1,

"host" : "192.168.112.30:27119",

"arbiterOnly" : false,

"buildIndexes" : true,

"hidden" : false,

"priority" : 1,

"tags" : {

},

"slaveDelay" : NumberLong(0),

"votes" : 1

},

{

"_id" : 2,

"host" : "192.168.112.30:27219",

"arbiterOnly" : false,

"buildIndexes" : true,

"hidden" : false,

"priority" : 1,

"tags" : {

},

"slaveDelay" : NumberLong(0),

"votes" : 1

}

],

"settings" : {

"chainingAllowed" : true,

"heartbeatIntervalMillis" : 2000,

"heartbeatTimeoutSecs" : 10,

"electionTimeoutMillis" : 10000,

"catchUpTimeoutMillis" : -1,

"catchUpTakeoverDelayMillis" : 30000,

"getLastErrorModes" : {

},

"getLastErrorDefaults" : {

"w" : 1,

"wtimeout" : 0

},

"replicaSetId" : ObjectId("6634477c7677719ed2388701")

}

}

5. Creating and Operating the Routers

Run on host router.

5.1 Create and connect to the first router

5.1.1 Prepare the log directory

mkdir -p /usr/local/mongodb/sharded_cluster/mymongos_27017/log

5.1.2 Create the configuration file

vim /usr/local/mongodb/sharded_cluster/mymongos_27017/mongos.conf

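systemLog:
  destination: file
  path: "/usr/local/mongodb/sharded_cluster/mymongos_27017/log/mongod.log"
  logAppend: true
processManagement:
  fork: true
  pidFilePath: "/usr/local/mongodb/sharded_cluster/mymongos_27017/log/mongod.pid"
net:
  bindIp: localhost,192.168.112.40
  port: 27017
sharding:
  configDB: myconfigr/192.168.112.30:27019,192.168.112.30:27119,192.168.112.30:27219

The replica set name before the slash in configDB must match the replSetName configured on the config servers (myconfigr in section 4.2). With a different name, mongos will not accept the config server members as part of the set.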
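The second router in section 6 uses the same file with a different port, so a small generator avoids copying it. In this bash sketch, write_mongos_conf, BASE and CONFIG_DB are names introduced here. Unlike the file above, it names the log and pid files mongos.log and mongos.pid. CONFIG_DB defaults to the config server set myconfigr created in section 4.

```shell
#!/usr/bin/env bash
# Generate a mongos.conf for one router port.
BASE=${BASE:-/usr/local/mongodb/sharded_cluster}
CONFIG_DB=${CONFIG_DB:-myconfigr/192.168.112.30:27019,192.168.112.30:27119,192.168.112.30:27219}

write_mongos_conf() {
  local port=$1 ip=$2 dir="$BASE/mymongos_$1"
  mkdir -p "$dir/log"
  # mongos has no storage section: it keeps no data of its own.
  cat > "$dir/mongos.conf" <<EOF
systemLog:
  destination: file
  path: "$dir/log/mongos.log"
  logAppend: true
processManagement:
  fork: true
  pidFilePath: "$dir/log/mongos.pid"
net:
  bindIp: localhost,$ip
  port: $port
sharding:
  configDB: $CONFIG_DB
EOF
}

# Usage on host router:
# write_mongos_conf 27017 192.168.112.40
# write_mongos_conf 27117 192.168.112.40
```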

5.1.3 Start mongos

/usr/local/mongodb/bin/mongos -f /usr/local/mongodb/sharded_cluster/mymongos_27017/mongos.conf

5.1.4 Log in to mongos with the client

mongo --port 27017

mongos> use test1

switched to db test1

mongos> db.q1.insert({a:"a1"})

WriteCommandError({

"ok" : 0,

"errmsg" : "unable to initialize targeter for write op for collection test1.q1 :: caused by :: Database test1 could not be created :: caused by :: No shards found",

"code" : 70,

"codeName" : "ShardNotFound",

"operationTime" : Timestamp(1714704496, 3),

"$clusterTime" : {

"clusterTime" : Timestamp(1714704496, 3),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

}

})

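The write fails at this point.

Through the router, we are so far connected only to the config servers.

No shard (data-bearing replica set) has been added yet, so application data cannot be written.

5.2 Add the shard replica sets

5.2.1 Add the first shard replica set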

sh.addShard("myshardrs01/192.168.112.10:27018,192.168.112.10:27118,192.168.112.10:27218")

{

"shardAdded" : "myshardrs01",

"ok" : 1,

"operationTime" : Timestamp(1714705107, 3),

"$clusterTime" : {

"clusterTime" : Timestamp(1714705107, 3),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

}

}

5.2.2 Check the sharding status

sh.status()

--- Sharding Status ---

sharding version: {

"_id" : 1,

"minCompatibleVersion" : 5,

"currentVersion" : 6,

"clusterId" : ObjectId("6634477c7677719ed2388706")

}

shards:

{ "_id" : "myshardrs01", "host" : "myshardrs01/192.168.112.10:27018,192.168.112.10:27118", "state" : 1 }

most recently active mongoses:

"4.4.6" : 1

autosplit:

Currently enabled: yes

balancer:

Currently enabled: yes

Currently running: no

Failed balancer rounds in last 5 attempts: 0

Migration Results for the last 24 hours:

No recent migrations

databases:

{ "_id" : "config", "primary" : "config", "partitioned" : true }

5.2.3 Add the second shard replica set

sh.addShard("myshardrs02/192.168.112.20:27318,192.168.112.20:27418,192.168.112.20:27518")

{

"shardAdded" : "myshardrs02",

"ok" : 1,

"operationTime" : Timestamp(1714705261, 2),

"$clusterTime" : {

"clusterTime" : Timestamp(1714705261, 2),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

}

}

5.2.4 Check the sharding status

sh.status()

--- Sharding Status ---

sharding version: {

"_id" : 1,

"minCompatibleVersion" : 5,

"currentVersion" : 6,

"clusterId" : ObjectId("6634477c7677719ed2388706")

}

shards:

{ "_id" : "myshardrs01", "host" : "myshardrs01/192.168.112.10:27018,192.168.112.10:27118", "state" : 1 }

{ "_id" : "myshardrs02", "host" : "myshardrs02/192.168.112.20:27318,192.168.112.20:27418", "state" : 1 }

most recently active mongoses:

"4.4.6" : 1

autosplit:

Currently enabled: yes

balancer:

Currently enabled: yes

Currently running: no

Failed balancer rounds in last 5 attempts: 0

Migration Results for the last 24 hours:

34 : Success

databases:

{ "_id" : "config", "primary" : "config", "partitioned" : true }

config.system.sessions

shard key: { "_id" : 1 }

unique: false

balancing: true

chunks:

myshardrs01 990

myshardrs02 34

too many chunks to print, use verbose if you want to force print

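5.3 Remove a shard

The last remaining shard cannot be removed.

Removing a shard automatically migrates its chunks to the remaining shards, which takes some time.

When the migration has finished, run the removeShard command again to complete the removal. The shard may be the primary shard of a database; for example, sh.status() later in this article shows myshardrs02 as the primary shard of test. In that case, you must move the database with movePrimary before the removal can complete.

// Example: remove shard myshardrs02

use admin

db.runCommand({removeShard: "myshardrs02"})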

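5.4 Enable sharding

- sh.enableSharding("<database>")

- sh.shardCollection("<database>.<collection>", {"key": 1})

Enable sharding on the database first, then shard each collection on its shard key.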

mongos> sh.enableSharding("test")

{

"ok" : 1,

"operationTime" : Timestamp(1714706298, 6),

"$clusterTime" : {

"clusterTime" : Timestamp(1714706298, 6),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

}

}

5.5 Sharding strategy: hashed sharding

Run on host router.

Shard the comment collection with a hashed shard key on nickname:

sh.shardCollection("test.comment",{"nickname":"hashed"})

{

"collectionsharded" : "test.comment",

"collectionUUID" : UUID("61ea1ec6-500b-4d00-86ed-3b2cce81a021"),

"ok" : 1,

"operationTime" : Timestamp(1714706573, 25),

"$clusterTime" : {

"clusterTime" : Timestamp(1714706573, 25),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

}

}

- Check the sharding status

sh.status()

--- Sharding Status ---

sharding version: {

"_id" : 1,

"minCompatibleVersion" : 5,

"currentVersion" : 6,

"clusterId" : ObjectId("6634477c7677719ed2388706")

}

shards:

{ "_id" : "myshardrs01", "host" : "myshardrs01/192.168.112.10:27018,192.168.112.10:27118", "state" : 1 }

{ "_id" : "myshardrs02", "host" : "myshardrs02/192.168.112.20:27318,192.168.112.20:27418", "state" : 1 }

most recently active mongoses:

"4.4.6" : 1

autosplit:

Currently enabled: yes

balancer:

Currently enabled: yes

Currently running: no

Failed balancer rounds in last 5 attempts: 0

Migration Results for the last 24 hours:

512 : Success

databases:

{ "_id" : "config", "primary" : "config", "partitioned" : true }

config.system.sessions

shard key: { "_id" : 1 }

unique: false

balancing: true

chunks:

myshardrs01 512

myshardrs02 512

too many chunks to print, use verbose if you want to force print

{ "_id" : "test", "primary" : "myshardrs02", "partitioned" : true, "version" : { "uuid" : UUID("7f240c27-83dd-48eb-be8c-2e1336ec9190"), "lastMod" : 1 } }

test.comment

shard key: { "nickname" : "hashed" }

unique: false

balancing: true

chunks:

myshardrs01 2

myshardrs02 2

{ "nickname" : { "$minKey" : 1 } } -->> { "nickname" : NumberLong("-4611686018427387902") } on : myshardrs01 Timestamp(1, 0)

{ "nickname" : NumberLong("-4611686018427387902") } -->> { "nickname" : NumberLong(0) } on : myshardrs01 Timestamp(1, 1)

{ "nickname" : NumberLong(0) } -->> { "nickname" : NumberLong("4611686018427387902") } on : myshardrs02 Timestamp(1, 2)

{ "nickname" : NumberLong("4611686018427387902") } -->> { "nickname" : { "$maxKey" : 1 } } on : myshardrs02 Timestamp(1, 3)
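You can check the four initial chunks above with a little arithmetic. Hashed shard key values are signed 64-bit integers (NumberLong), and the initial chunks split that range into roughly equal parts. Assuming four equal chunks:

$$
\text{hash range} = [-2^{63},\ 2^{63}-1], \qquad
\text{chunk width} \approx \frac{2^{64}}{4} = 2^{62} = 4611686018427387904
$$

$$
\text{split points} \approx -2^{62},\ 0,\ 2^{62}
$$

The observed boundaries (±4611686018427387902 and 0) are within 2 of these values. The small difference comes from how MongoDB computes the split points, which is not derived here.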

5.5.1 Insert test data

Hashed sharding: insert 1000 test documents into the comment collection in a loop.

mongos> use test

switched to db test

mongos> for(var i=1;i<=1000;i++){db.comment.insert({_id:i+"",nickname:"Test"+i})}

WriteResult({ "nInserted" : 1 })

mongos> db.comment.count()

1000

5.5.2 Log in to the primary of each shard and count the documents

- myshardrs01:

mongo --host 192.168.112.10 --port 27018
myshardrs01:PRIMARY> show dbs
admin   0.000GB
config  0.001GB
local   0.001GB
test    0.000GB
myshardrs01:PRIMARY> use test
switched to db test
myshardrs01:PRIMARY> db.comment.count()
505
myshardrs01:PRIMARY> db.comment.find()
{ "_id" : "2", "nickname" : "Test2" }
{ "_id" : "4", "nickname" : "Test4" }
{ "_id" : "8", "nickname" : "Test8" }
{ "_id" : "9", "nickname" : "Test9" }
{ "_id" : "13", "nickname" : "Test13" }
{ "_id" : "15", "nickname" : "Test15" }
{ "_id" : "16", "nickname" : "Test16" }
{ "_id" : "18", "nickname" : "Test18" }
{ "_id" : "19", "nickname" : "Test19" }
{ "_id" : "20", "nickname" : "Test20" }
{ "_id" : "21", "nickname" : "Test21" }
{ "_id" : "25", "nickname" : "Test25" }
{ "_id" : "26", "nickname" : "Test26" }
{ "_id" : "27", "nickname" : "Test27" }
{ "_id" : "31", "nickname" : "Test31" }
{ "_id" : "32", "nickname" : "Test32" }
{ "_id" : "33", "nickname" : "Test33" }
{ "_id" : "35", "nickname" : "Test35" }
{ "_id" : "36", "nickname" : "Test36" }
{ "_id" : "38", "nickname" : "Test38" }
Type "it" for more

- myshardrs02:

mongo --port 27318
myshardrs02:PRIMARY> show dbs
admin   0.000GB
config  0.001GB
local   0.001GB
test    0.000GB
myshardrs02:PRIMARY> use test
switched to db test
myshardrs02:PRIMARY> db.comment.count()
495
myshardrs02:PRIMARY> db.comment.find()
{ "_id" : "1", "nickname" : "Test1" }
{ "_id" : "3", "nickname" : "Test3" }
{ "_id" : "5", "nickname" : "Test5" }
{ "_id" : "6", "nickname" : "Test6" }
{ "_id" : "7", "nickname" : "Test7" }
{ "_id" : "10", "nickname" : "Test10" }
{ "_id" : "11", "nickname" : "Test11" }
{ "_id" : "12", "nickname" : "Test12" }
{ "_id" : "14", "nickname" : "Test14" }
{ "_id" : "17", "nickname" : "Test17" }
{ "_id" : "22", "nickname" : "Test22" }
{ "_id" : "23", "nickname" : "Test23" }
{ "_id" : "24", "nickname" : "Test24" }
{ "_id" : "28", "nickname" : "Test28" }
{ "_id" : "29", "nickname" : "Test29" }
{ "_id" : "30", "nickname" : "Test30" }
{ "_id" : "34", "nickname" : "Test34" }
{ "_id" : "37", "nickname" : "Test37" }
{ "_id" : "39", "nickname" : "Test39" }
{ "_id" : "44", "nickname" : "Test44" }
Type "it" for more

The documents are distributed by hash value: 505 on myshardrs01 and 495 on myshardrs02, 1000 in total. Consecutive nicknames (Test1, Test2, ...) land on different shards.

5.6 Sharding strategy: ranged sharding

Run on host router.

Use the author's age field as the shard key, so documents are distributed by age range:

mongos> sh.shardCollection("test.author",{"age":1})

{

"collectionsharded" : "test.author",

"collectionUUID" : UUID("9b8055c8-7a35-49d5-9e8f-07d6004b19b3"),

"ok" : 1,

"operationTime" : Timestamp(1714709641, 12),

"$clusterTime" : {

"clusterTime" : Timestamp(1714709641, 12),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

}

}

5.6.1 Insert test data

Ranged sharding: insert 2000 test documents into the author collection in a loop.

mongos> use test

switched to db test

mongos> for (var i=1;i<=2000;i++){db.author.save({"name":"test"+i,"age":NumberInt(i%120)})}

WriteResult({ "nInserted" : 1 })

mongos> db.author.count()

2000

5.6.2 Log in to the primary of each shard and count the documents

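- myshardrs02:

myshardrs02:PRIMARY> show dbs
admin   0.000GB
config  0.001GB
local   0.001GB
test    0.000GB
myshardrs02:PRIMARY> use test
switched to db test
myshardrs02:PRIMARY> show collections
author
comment
myshardrs02:PRIMARY> db.author.count()
2000

- myshardrs01:

myshardrs01:PRIMARY> db.author.count()
0

All of the data ended up on a single shard.

If the data has not been distributed across the shards, possible reasons are:

- The cluster is busy and the balancer is still migrating chunks.

- The chunk is not full yet. The default chunk size is 64 MB. A chunk is split only after it grows beyond that size, and only then can the balancer move chunks to other shards. For testing you can reduce the chunk size, but do not change it in production:

use config

db.settings.save({_id:"chunksize",value:1})    // set the chunk size to 1 MB (testing only)

db.settings.save({_id:"chunksize",value:64})   // restore the 64 MB default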

6. Add Another Router

Run on host router.

6.1 Prepare the log directory

mkdir -p /usr/local/mongodb/sharded_cluster/mymongos_27117/log

6.2 Create the configuration file

vim /usr/local/mongodb/sharded_cluster/mymongos_27117/mongos.conf

systemLog:
  destination: file
  path: "/usr/local/mongodb/sharded_cluster/mymongos_27117/log/mongod.log"
  logAppend: true
processManagement:
  fork: true
  pidFilePath: "/usr/local/mongodb/sharded_cluster/mymongos_27117/log/mongod.pid"
net:
  bindIp: localhost,192.168.112.40
  port: 27117
sharding:
  configDB: myconfigr/192.168.112.30:27019,192.168.112.30:27119,192.168.112.30:27219

6.3 Start mongos

/usr/local/mongodb/bin/mongos -f /usr/local/mongodb/sharded_cluster/mymongos_27117/mongos.conf

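6.4 Log in to mongos with the client

Log in to port 27117 with the mongo client.

The second router needs no further configuration, because all sharding configuration is stored on the config servers.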

mongo --port 27117

mongos> sh.status()

--- Sharding Status ---

sharding version: {

"_id" : 1,

"minCompatibleVersion" : 5,

"currentVersion" : 6,

"clusterId" : ObjectId("6634477c7677719ed2388706")

}

shards:

{ "_id" : "myshardrs01", "host" : "myshardrs01/192.168.112.10:27018,192.168.112.10:27118", "state" : 1 }

{ "_id" : "myshardrs02", "host" : "myshardrs02/192.168.112.20:27318,192.168.112.20:27418", "state" : 1 }

active mongoses:

"4.4.6" : 2

autosplit:

Currently enabled: yes

balancer:

Currently enabled: yes

Currently running: no

Failed balancer rounds in last 5 attempts: 0

Migration Results for the last 24 hours:

512 : Success

databases:

{ "_id" : "config", "primary" : "config", "partitioned" : true }

config.system.sessions

shard key: { "_id" : 1 }

unique: false

balancing: true

chunks:

myshardrs01 512

myshardrs02 512

too many chunks to print, use verbose if you want to force print

{ "_id" : "test", "primary" : "myshardrs02", "partitioned" : true, "version" : { "uuid" : UUID("7f240c27-83dd-48eb-be8c-2e1336ec9190"), "lastMod" : 1 } }

test.author

shard key: { "age" : 1 }

unique: false

balancing: true

chunks:

myshardrs02 1

{ "age" : { "$minKey" : 1 } } -->> { "age" : { "$maxKey" : 1 } } on : myshardrs02 Timestamp(1, 0)

test.comment

shard key: { "nickname" : "hashed" }

unique: false

balancing: true

chunks:

myshardrs01 2

myshardrs02 2

{ "nickname" : { "$minKey" : 1 } } -->> { "nickname" : NumberLong("-4611686018427387902") } on : myshardrs01 Timestamp(1, 0)

{ "nickname" : NumberLong("-4611686018427387902") } -->> { "nickname" : NumberLong(0) } on : myshardrs01 Timestamp(1, 1)

{ "nickname" : NumberLong(0) } -->> { "nickname" : NumberLong("4611686018427387902") } on : myshardrs02 Timestamp(1, 2)

{ "nickname" : NumberLong("4611686018427387902") } -->> { "nickname" : { "$maxKey" : 1 } } on : myshardrs02 Timestamp(1, 3)

6.5 View the earlier test data

mongos> show dbs

admin 0.000GB

config 0.003GB

test 0.000GB

mongos> use test

switched to db test

mongos> db.comment.count()

1000

mongos> db.author.count()

2000

This completes the sharded cluster: two shard replica sets (3 + 3), one config server replica set (3), and two routers (2).

浙公网安备 33010602011771号
