自定义 hadoop MapReduce InputFormat 切分输入文件

转自 http://my.oschina.net/leejun2005/blog/133424

在上一篇中,我们实现了按 cookieId 和 time 进行二次排序,现在又有新问题:假如我需要按 cookieId 和 cookieId&time 的组合进行分析呢?此时最好的办法是自定义 InputFormat,让 mapreduce 一次读取一个 cookieId 下的所有记录,然后再按 time 进行切分 session,逻辑伪码如下:

for OneSplit in MyInputFormat.getSplit() // OneSplit 是某个 cookieId 下的所有记录

for session in OneSplit // session 是按 time 把 OneSplit 进行了二次分割

for line in session // line 是 session 中的每条记录,对应原始日志的某条记录

1、原理:

InputFormat是MapReduce中一个很常用的概念,它在程序的运行中到底起到了什么作用呢?

InputFormat其实是一个接口,包含了两个方法:

public interface InputFormat<K, V> {

InputSplit[] getSplits(JobConf job, int numSplits) throws IOException;

RecordReader<K, V> getRecordReader(InputSplit split,

JobConf job,

Reporter reporter) throws IOException;

}

InputSplit[] getSplits(JobConf job, int numSplits) throws IOException;

RecordReader<K, V> getRecordReader(InputSplit split,

JobConf job,

Reporter reporter) throws IOException;

}

这两个方法有分别完成着以下工作:

方法 getSplits 将输入数据切分成splits,splits的个数即为map tasks的个数,splits的大小默认为块大小,即64M

方法 getRecordReader 将每个 split 解析成records, 再依次将record解析成<K,V>对

也就是说 InputFormat完成以下工作: InputFile --> splits --> <K,V>

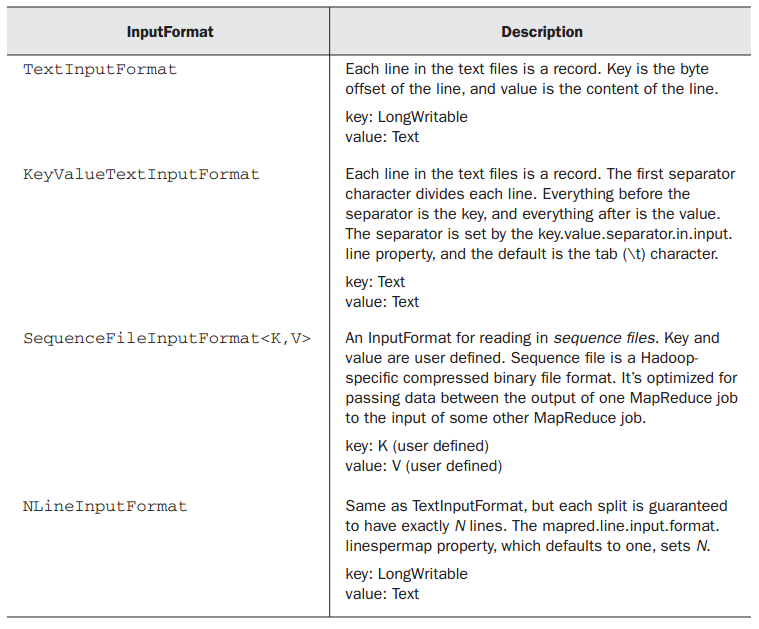

系统常用的 InputFormat 又有哪些呢?

其中Text InputFormat便是最常用的,它的 <K,V>就代表 <行偏移,该行内容>

然而系统所提供的这几种固定的将 InputFile转换为 <K,V>的方式有时候并不能满足我们的需求:

此时需要我们自定义 InputFormat ,从而使Hadoop框架按照我们预设的方式来将

InputFile解析为<K,V>

在领会自定义 InputFormat 之前,需要弄懂一下几个抽象类、接口及其之间的关系:

InputFormat(interface), FileInputFormat(abstract class), TextInputFormat(class),

RecordReader (interface), Line RecordReader(class)的关系

FileInputFormat implements InputFormat

TextInputFormat extends FileInputFormat

TextInputFormat.get RecordReader calls Line RecordReader

Line RecordReader implements RecordReader

对于InputFormat接口,上面已经有详细的描述

再看看 FileInputFormat,它实现了 InputFormat接口中的 getSplits方法,而将 getRecordReader与isSplitable留给具体类(如 TextInputFormat )实现, isSplitable方法通常不用修改,所以只需要在自定义的 InputFormat中实现

getRecordReader方法即可,而该方法的核心是调用 Line RecordReader(即由LineRecorderReader类来实现 " 将每个s plit解析成records, 再依次将record解析成<K,V>对" ),该方法实现了接口RecordReader

public interface RecordReader<K, V> {

boolean next(K key, V value) throws IOException; K createKey();

V createValue();

long getPos() throws IOException;

public void close() throws IOException;

float getProgress() throws IOException;

}

因此自定义InputFormat的核心是自定义一个实现接口RecordReader类似于LineRecordReader的类,该类的核心也正是重写接口RecordReader中的几大方法,

定义一个InputFormat的核心是定义一个类似于LineRecordReader的,自己的RecordReader

2、代码:

package MyInputFormat; import org.apache.hadoop.fs.Path; import org.apache.hadoop.io.LongWritable; import org.apache.hadoop.io.Text; import org.apache.hadoop.io.compress.CompressionCodec; import org.apache.hadoop.io.compress.CompressionCodecFactory; import org.apache.hadoop.mapreduce.InputSplit; import org.apache.hadoop.mapreduce.JobContext; import org.apache.hadoop.mapreduce.RecordReader; import org.apache.hadoop.mapreduce.TaskAttemptContext; import org.apache.hadoop.mapreduce.lib.input.FileInputFormat; public class TrackInputFormat extends FileInputFormat<LongWritable, Text> { @SuppressWarnings("deprecation") @Override public RecordReader<LongWritable, Text> createRecordReader( InputSplit split, TaskAttemptContext context) { return new TrackRecordReader(); } @Override protected boolean isSplitable(JobContext context, Path file) { CompressionCodec codec = new CompressionCodecFactory( context.getConfiguration()).getCodec(file); return codec == null; } }

1 package MyInputFormat; 2 3 import java.io.IOException; 4 import java.io.InputStream; 5 6 import org.apache.commons.logging.Log; 7 import org.apache.commons.logging.LogFactory; 8 import org.apache.hadoop.conf.Configuration; 9 import org.apache.hadoop.fs.FSDataInputStream; 10 import org.apache.hadoop.fs.FileSystem; 11 import org.apache.hadoop.fs.Path; 12 import org.apache.hadoop.io.LongWritable; 13 import org.apache.hadoop.io.Text; 14 import org.apache.hadoop.io.compress.CompressionCodec; 15 import org.apache.hadoop.io.compress.CompressionCodecFactory; 16 import org.apache.hadoop.mapreduce.InputSplit; 17 import org.apache.hadoop.mapreduce.RecordReader; 18 import org.apache.hadoop.mapreduce.TaskAttemptContext; 19 import org.apache.hadoop.mapreduce.lib.input.FileSplit; 20 21 /** 22 * Treats keys as offset in file and value as line. 23 * 24 * @deprecated Use 25 * {@link org.apache.hadoop.mapreduce.lib.input.LineRecordReader} 26 * instead. 27 */ 28 public class TrackRecordReader extends RecordReader<LongWritable, Text> { 29 private static final Log LOG = LogFactory.getLog(TrackRecordReader.class); 30 31 private CompressionCodecFactory compressionCodecs = null; 32 private long start; 33 private long pos; 34 private long end; 35 private NewLineReader in; 36 private int maxLineLength; 37 private LongWritable key = null; 38 private Text value = null; 39 // ---------------------- 40 // 行分隔符,即一条记录的分隔符 41 private byte[] separator = "END\n".getBytes(); 42 43 // -------------------- 44 45 public void initialize(InputSplit genericSplit, TaskAttemptContext context) 46 throws IOException { 47 FileSplit split = (FileSplit) genericSplit; 48 Configuration job = context.getConfiguration(); 49 this.maxLineLength = job.getInt("mapred.linerecordreader.maxlength", 50 Integer.MAX_VALUE); 51 start = split.getStart(); 52 end = start + split.getLength(); 53 final Path file = split.getPath(); 54 compressionCodecs = new CompressionCodecFactory(job); 55 final CompressionCodec codec = compressionCodecs.getCodec(file); 56 57 FileSystem fs = file.getFileSystem(job); 58 FSDataInputStream fileIn = fs.open(split.getPath()); 59 boolean skipFirstLine = false; 60 if (codec != null) { 61 in = new NewLineReader(codec.createInputStream(fileIn), job); 62 end = Long.MAX_VALUE; 63 } else { 64 if (start != 0) { 65 skipFirstLine = true; 66 this.start -= separator.length;// 67 // --start; 68 fileIn.seek(start); 69 } 70 in = new NewLineReader(fileIn, job); 71 } 72 if (skipFirstLine) { // skip first line and re-establish "start". 73 start += in.readLine(new Text(), 0, 74 (int) Math.min((long) Integer.MAX_VALUE, end - start)); 75 } 76 this.pos = start; 77 } 78 79 public boolean nextKeyValue() throws IOException { 80 if (key == null) { 81 key = new LongWritable(); 82 } 83 key.set(pos); 84 if (value == null) { 85 value = new Text(); 86 } 87 int newSize = 0; 88 while (pos < end) { 89 newSize = in.readLine(value, maxLineLength, 90 Math.max((int) Math.min(Integer.MAX_VALUE, end - pos), 91 maxLineLength)); 92 if (newSize == 0) { 93 break; 94 } 95 pos += newSize; 96 if (newSize < maxLineLength) { 97 break; 98 } 99 100 LOG.info("Skipped line of size " + newSize + " at pos " 101 + (pos - newSize)); 102 } 103 if (newSize == 0) { 104 key = null; 105 value = null; 106 return false; 107 } else { 108 return true; 109 } 110 } 111 112 @Override 113 public LongWritable getCurrentKey() { 114 return key; 115 } 116 117 @Override 118 public Text getCurrentValue() { 119 return value; 120 } 121 122 /** 123 * Get the progress within the split 124 */ 125 public float getProgress() { 126 if (start == end) { 127 return 0.0f; 128 } else { 129 return Math.min(1.0f, (pos - start) / (float) (end - start)); 130 } 131 } 132 133 public synchronized void close() throws IOException { 134 if (in != null) { 135 in.close(); 136 } 137 } 138 139 public class NewLineReader { 140 private static final int DEFAULT_BUFFER_SIZE = 64 * 1024; 141 private int bufferSize = DEFAULT_BUFFER_SIZE; 142 private InputStream in; 143 private byte[] buffer; 144 private int bufferLength = 0; 145 private int bufferPosn = 0; 146 147 public NewLineReader(InputStream in) { 148 this(in, DEFAULT_BUFFER_SIZE); 149 } 150 151 public NewLineReader(InputStream in, int bufferSize) { 152 this.in = in; 153 this.bufferSize = bufferSize; 154 this.buffer = new byte[this.bufferSize]; 155 } 156 157 public NewLineReader(InputStream in, Configuration conf) 158 throws IOException { 159 this(in, conf.getInt("io.file.buffer.size", DEFAULT_BUFFER_SIZE)); 160 } 161 162 public void close() throws IOException { 163 in.close(); 164 } 165 166 public int readLine(Text str, int maxLineLength, int maxBytesToConsume) 167 throws IOException { 168 str.clear(); 169 Text record = new Text(); 170 int txtLength = 0; 171 long bytesConsumed = 0L; 172 boolean newline = false; 173 int sepPosn = 0; 174 do { 175 // 已经读到buffer的末尾了,读下一个buffer 176 if (this.bufferPosn >= this.bufferLength) { 177 bufferPosn = 0; 178 bufferLength = in.read(buffer); 179 // 读到文件末尾了,则跳出,进行下一个文件的读取 180 if (bufferLength <= 0) { 181 break; 182 } 183 } 184 int startPosn = this.bufferPosn; 185 for (; bufferPosn < bufferLength; bufferPosn++) { 186 // 处理上一个buffer的尾巴被切成了两半的分隔符(如果分隔符中重复字符过多在这里会有问题) 187 if (sepPosn > 0 && buffer[bufferPosn] != separator[sepPosn]) { 188 sepPosn = 0; 189 } 190 // 遇到行分隔符的第一个字符 191 if (buffer[bufferPosn] == separator[sepPosn]) { 192 bufferPosn++; 193 int i = 0; 194 // 判断接下来的字符是否也是行分隔符中的字符 195 for (++sepPosn; sepPosn < separator.length; i++, sepPosn++) { 196 // buffer的最后刚好是分隔符,且分隔符被不幸地切成了两半 197 if (bufferPosn + i >= bufferLength) { 198 bufferPosn += i - 1; 199 break; 200 } 201 // 一旦其中有一个字符不相同,就判定为不是分隔符 202 if (this.buffer[this.bufferPosn + i] != separator[sepPosn]) { 203 sepPosn = 0; 204 break; 205 } 206 } 207 // 的确遇到了行分隔符 208 if (sepPosn == separator.length) { 209 bufferPosn += i; 210 newline = true; 211 sepPosn = 0; 212 break; 213 } 214 } 215 } 216 int readLength = this.bufferPosn - startPosn; 217 bytesConsumed += readLength; 218 // 行分隔符不放入块中 219 if (readLength > maxLineLength - txtLength) { 220 readLength = maxLineLength - txtLength; 221 } 222 if (readLength > 0) { 223 record.append(this.buffer, startPosn, readLength); 224 txtLength += readLength; 225 // 去掉记录的分隔符 226 if (newline) { 227 str.set(record.getBytes(), 0, record.getLength() 228 - separator.length); 229 } 230 } 231 } while (!newline && (bytesConsumed < maxBytesToConsume)); 232 if (bytesConsumed > (long) Integer.MAX_VALUE) { 233 throw new IOException("Too many bytes before newline: " 234 + bytesConsumed); 235 } 236 237 return (int) bytesConsumed; 238 } 239 240 public int readLine(Text str, int maxLineLength) throws IOException { 241 return readLine(str, maxLineLength, Integer.MAX_VALUE); 242 } 243 244 public int readLine(Text str) throws IOException { 245 return readLine(str, Integer.MAX_VALUE, Integer.MAX_VALUE); 246 } 247 } 248 }

package MyInputFormat; import java.io.IOException; import org.apache.hadoop.conf.Configuration; import org.apache.hadoop.fs.FileSystem; import org.apache.hadoop.fs.Path; import org.apache.hadoop.io.LongWritable; import org.apache.hadoop.io.Text; import org.apache.hadoop.mapreduce.Job; import org.apache.hadoop.mapreduce.Mapper; import org.apache.hadoop.mapreduce.lib.input.FileInputFormat; public class TestMyInputFormat { public static class MapperClass extends Mapper<LongWritable, Text, Text, Text> { public void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException { System.out.println("key:\t " + key); System.out.println("value:\t " + value); System.out.println("-------------------------"); } } public static void main(String[] args) throws IOException, InterruptedException, ClassNotFoundException { Configuration conf = new Configuration(); Path outPath = new Path("/hive/11"); FileSystem.get(conf).delete(outPath, true); Job job = new Job(conf, "TestMyInputFormat"); job.setInputFormatClass(TrackInputFormat.class); job.setJarByClass(TestMyInputFormat.class); job.setMapperClass(TestMyInputFormat.MapperClass.class); job.setNumReduceTasks(0); job.setMapOutputKeyClass(Text.class); job.setMapOutputValueClass(Text.class); FileInputFormat.addInputPath(job, new Path(args[0])); org.apache.hadoop.mapreduce.lib.output.FileOutputFormat.setOutputPath(job, outPath); System.exit(job.waitForCompletion(true) ? 0 : 1); } }

3、测试数据:

1 a 1_hao123 1 a 1_baidu 1 b 1_google 2END 2 c 2_google 2 c 2_hao123 2 c 2_google 1END 3 a 3_baidu 3 a 3_sougou 3 b 3_soso 2END

4、结果:

key: 0 value: 1 a 1_hao123 1 a 1_baidu 1 b 1_google 2 ------------------------- key: 47 value: 2 c 2_google 2 c 2_hao123 2 c 2_google 1 ------------------------- key: 96 value: 3 a 3_baidu 3 a 3_sougou 3 b 3_soso 2 -------------------------

REF:

自定义hadoop map/reduce输入文件切割InputFormat

http://hi.baidu.com/lzpsky/item/0d9d84c05afb43ba0c0a7b27

MapReduce高级编程之自定义InputFormat

http://datamining.xmu.edu.cn/bbs/home.php?mod=space&uid=91&do=blog&id=190

浙公网安备 33010602011771号

浙公网安备 33010602011771号