Dubbo + Nacos这么玩就失去高可用的能力了

酱香配拿铁喝了伤头,Dubbo配Nacos这么玩也会伤头。本文介绍Dubbo配合Nacos搭建的微服务系统,在Nacos-Server集群重启时出现的问题。过程中通过种种现象、猜测、翻看源码、实践,最终让Nacos-Server平滑重启。

我们常用的微服务框架是SpringCloud那一套,在服务远程调用和注册中心的选型上也有不少方案。在服务远程调用上常用的有:Feign、Dubbo等,在注册中心上常用的有:Nacos、Zookeeper、Consul、Eureka等。我们项目这两块的选型是这样的:RPC调用-Dubbo、注册中心和配置中心-Nacos。

1、故障开端

项目平稳运行了好几年,有一天发现Nacos集群的Server内存有点高,所以想升级下机器配置,然后重启。说干就干,立马在测试环境的3台Nacos-Server集群中,任意选了一台进行停机,暂且叫它Nacos-Server-1吧。接下来就是故障了开端了。

停机之后,测试环境立马有许多服务的接口调不通,等待许久,故障一直没恢复。所以又赶紧把Nacos-Server-1启动起来。要找找原因,否则无法在生产环境重启Nacos-Server。

我一直的观点是:**出现疑难问题时,首先看异常信息,然后猜测原因,再通过实践去验证,最终可以通过源码再去证实。**而不是一上来就看源码,那样比酱香配拿铁更伤头。

2、异常信息

当Nacos-server-1停机时,首先在Nacos-Client(即某个微服务应用)看到异常,主要有2个:

- nacos-client与nacos-server心跳异常

- dubbo微服务调用异常

1、nacos-client与nacos-server心跳异常:

2023-09-06 08:10:09|ERROR|com.alibaba.nacos.client.naming.net.NamingProxy:reqApi|548|com.alibaba.nacos.naming.beat.sender|"request: /nacos/v1/ns/instance/beat failed, servers: [10.20.1.13:8848, 10.20.1.14:8848, 10.20.1.15:8848], code: 500, msg: java.net.SocketTimeoutException: Read timed out"|""

2023-09-06 08:10:09|ERROR|com.alibaba.nacos.client.naming.beat.BeatReactor$BeatTask:run|198|com.alibaba.nacos.naming.beat.sender|"[CLIENT-BEAT] failed to send beat: {"port":0,"ip":"10.21.230.14","weight":1.0,"serviceName":"DEFAULT_GROUP@@consumers:com.cloud.usercenter.api.PartyCompanyMemberApi:1.0:","metadata":{"owner":"ehome-cloud-owner","init":"false","side":"consumer","application.version":"1.0","methods":"queryGroupMemberCount,queryWithValid,query,queryOne,update,insert,queryCount,queryPage,delete,queryList","release":"2.7.8","dubbo":"2.0.2","pid":"6","check":"false","interface":"com.bm001.ehome.cloud.usercenter.api.PartyCompanyMemberApi","version":"1.0","qos.enable":"false","timeout":"20000","revision":"1.2.38-SNAPSHOT","retries":"0","path":"com.bm001.ehome.cloud.usercenter.api.PartyCompanyMemberApi","protocol":"consumer","metadata-type":"remote","application":"xxxx-cloud","sticky":"false","category":"consumers","timestamp":"1693917779436"},"scheduled":false,"period":5000,"stopped":false}, code: 500, msg: failed to req API:/nacos/v1/ns/instance/beat after all servers([10.20.1.13:8848, 10.20.1.14:8848, 10.20.1.15:8848])"|""

2023-09-06 08:10:10|ERROR|com.alibaba.nacos.client.naming.net.NamingProxy:callServer|613|com.alibaba.nacos.naming.beat.sender|"[NA] failed to request"|"com.alibaba.nacos.api.exception.NacosException: java.net.ConnectException: 拒绝连接 (Connection refused)

at com.alibaba.nacos.client.naming.net.NamingProxy.callServer(NamingProxy.java:611)

at com.alibaba.nacos.client.naming.net.NamingProxy.reqApi(NamingProxy.java:524)

at com.alibaba.nacos.client.naming.net.NamingProxy.reqApi(NamingProxy.java:491)

at com.alibaba.nacos.client.naming.net.NamingProxy.sendBeat(NamingProxy.java:426)

at com.alibaba.nacos.client.naming.beat.BeatReactor$BeatTask.run(BeatReactor.java:167)

Caused by: java.io.IOException: Server returned HTTP response code: 502 for URL: http://10.20.1.14:8848/nacos/v1/ns/instance/beat?app=unknown&serviceName=DEFAULT_GROUP%40%40providers%3AChannelOrderExpressApi%3A1.0%3A&namespaceId=dev&port=20880&ip=10.20.0.200

at sun.net.www.protocol.http.HttpURLConnection.getInputStream0(HttpURLConnection.java:1914)

at sun.net.www.protocol.http.HttpURLConnection.getInputStream(HttpURLConnection.java:1512)

at java.net.HttpURLConnection.getResponseCode(HttpURLConnection.java:480)

at com.alibaba.nacos.common.http.client.response.JdkHttpClientResponse.getStatusCode(JdkHttpClientResponse.java:75)

at com.alibaba.nacos.common.http.client.handler.AbstractResponseHandler.handle(AbstractResponseHandler.java:43)

"

2、dubbo微服务调用异常:

2023-09-06 08:09:38|ERROR|runtimeExceptionHandler|135|http-nio-8080-exec-5|"发生系统异常"|"org.apache.dubbo.rpc.RpcException: No provider available from registry 10.20.1.13:8848,10.20.1.14:8848,10.20.1.15:8848 for service ClueAuntMatchApi:1.0 on consumer 10.21.230.14 use dubbo version 2.7.8, please check status of providers(disabled, not registered or in blacklist).

at org.apache.dubbo.registry.integration.RegistryDirectory.doList(RegistryDirectory.java:599)

at org.apache.dubbo.rpc.cluster.directory.AbstractDirectory.list(AbstractDirectory.java:74)

at org.apache.dubbo.rpc.cluster.support.AbstractClusterInvoker.list(AbstractClusterInvoker.java:292)

at org.apache.dubbo.rpc.cluster.support.AbstractClusterInvoker.invoke(AbstractClusterInvoker.java:257)

at org.apache.dubbo.rpc.cluster.interceptor.ClusterInterceptor.intercept(ClusterInterceptor.java:47)

3、根据异常进行猜测

熟悉Dubbo的朋友肯定知道这个错误please check status of providers(disabled, not registered or in blacklist).,基本上是代表:Provider下线了 或者 Consumer没找到Provider。

根据以往使用dubbo + zookeeper的经验,客户端应该会拉取注册中心的Provider的信息,然后本地缓存一份,即使注册中心挂了,应该也能调用到别的服务。不至于出现完全找不到服务提供者的信息。

当思考不出来时,只能靠异常去猜测原因了。根据以上2个异常开始猜测。

3.1、猜测1

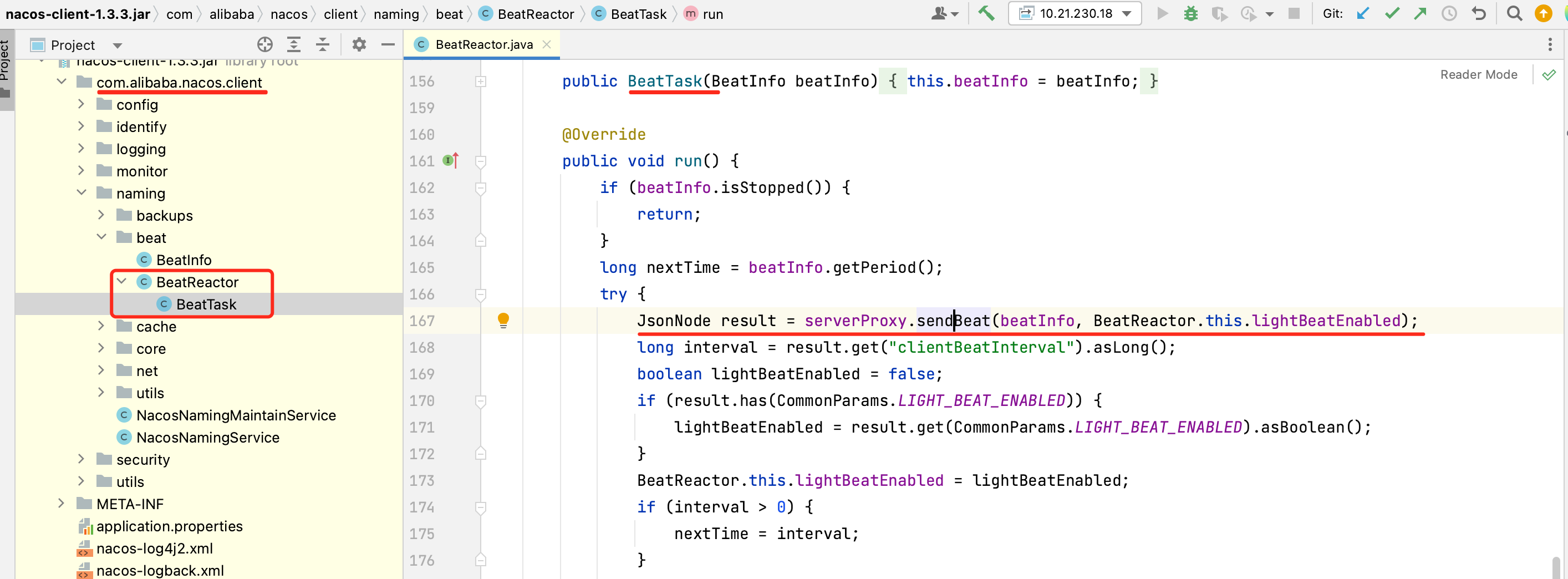

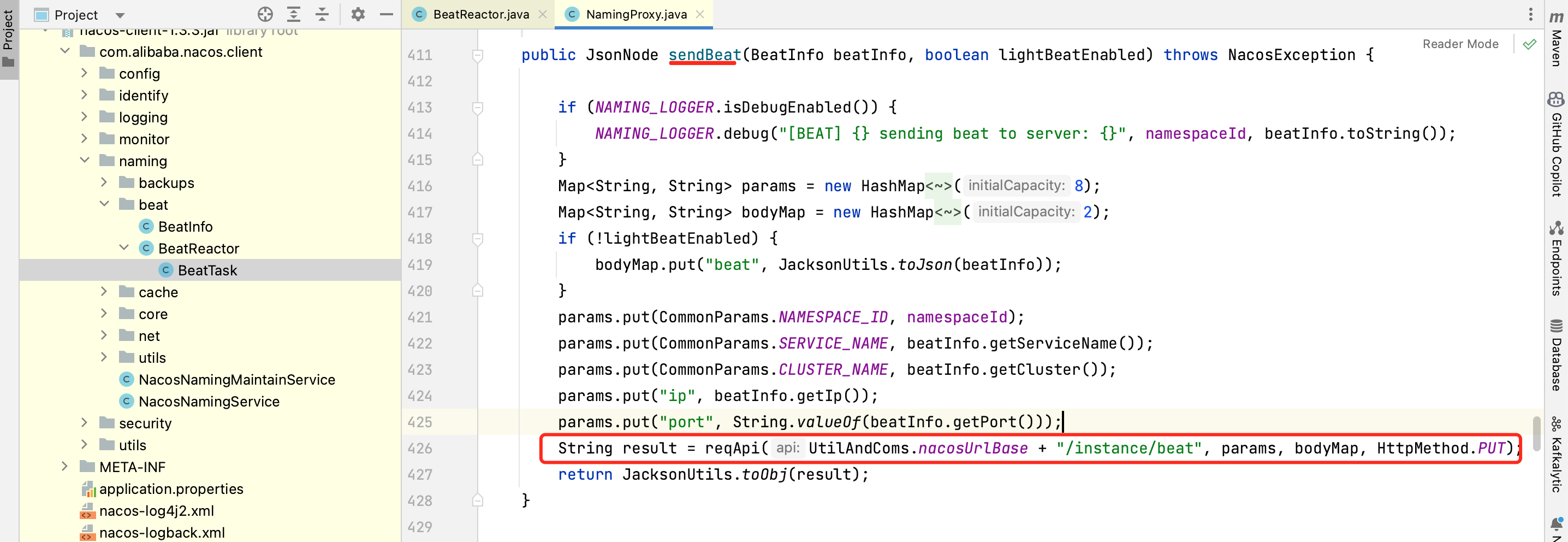

由于nacos-server-1挂了,导致nacos-client与server的心跳异常,导致本地缓存的provider的元数据被清掉了。有了猜测,赶紧查看nacos-client的源代码,找到nacos-client 与 nacos-server 心跳的那一段:

继续往下跟,可以看到这段核心代码:

public String reqApi(String api, Map<String, String> params, Map<String, String> body, List<String> servers,

String method) throws NacosException {

params.put(CommonParams.NAMESPACE_ID, getNamespaceId());

if (CollectionUtils.isEmpty(servers) && StringUtils.isEmpty(nacosDomain)) {

throw new NacosException(NacosException.INVALID_PARAM, "no server available");

}

NacosException exception = new NacosException();

if (servers != null && !servers.isEmpty()) {

Random random = new Random(System.currentTimeMillis());

int index = random.nextInt(servers.size());

for (int i = 0; i < servers.size(); i++) {

String server = servers.get(index);

try {

return callServer(api, params, body, server, method);

} catch (NacosException e) {

exception = e;

if (NAMING_LOGGER.isDebugEnabled()) {

NAMING_LOGGER.debug("request {} failed.", server, e);

}

}

index = (index + 1) % servers.size();

}

}

if (StringUtils.isNotBlank(nacosDomain)) {

for (int i = 0; i < UtilAndComs.REQUEST_DOMAIN_RETRY_COUNT; i++) {

try {

return callServer(api, params, body, nacosDomain, method);

} catch (NacosException e) {

exception = e;

if (NAMING_LOGGER.isDebugEnabled()) {

NAMING_LOGGER.debug("request {} failed.", nacosDomain, e);

}

}

}

}

NAMING_LOGGER.error("request: {} failed, servers: {}, code: {}, msg: {}", api, servers, exception.getErrCode(),

exception.getErrMsg());

throw new NacosException(exception.getErrCode(),

"failed to req API:" + api + " after all servers(" + servers + ") tried: " + exception.getMessage());

}

注意上面的这段代码:

for (int i = 0; i < servers.size(); i++) {

String server = servers.get(index);

try {

return callServer(api, params, body, server, method);

} catch (NacosException e) {

exception = e;

if (NAMING_LOGGER.isDebugEnabled()) {

NAMING_LOGGER.debug("request {} failed.", server, e);

}

}

index = (index + 1) % servers.size();

}

通过以上这一段代码可以知道,nacos-client与nacos-server集群里的随机一台通信,感兴趣的朋友可以继续阅读源代码,跟到最后会发现,只要有一次心跳是正常的,那就认为心跳正常。因为我只停了一台nacos-server,但是与其他两台server依旧可以保持心跳,所以整个心跳过程虽然报错,但是仍然是正常的,所以这个猜测放弃了,继续猜测。

3.2、猜测2

既然dubbo与zookeeper是建立长连接进行socket通信,那dubbo与nacos-server可能也是建立了长连接进行socket通信,某个nacos-server挂了之后,可能因为nacos-server没有zookeeper的选主机制,所以不会自动切换到别的可用的nacos-server去调用。

或者是nacos-server集群选主问题,选主后没有及时通知到consumer,或者consumer与nacos本身通信机制有问题。总之就是因为某种机制,导致没有自动切换到可用的nacos-server上,导致获取不到provider元数据,自然就无法发起调用。

既然有了这个猜想,那就赶紧去证实:

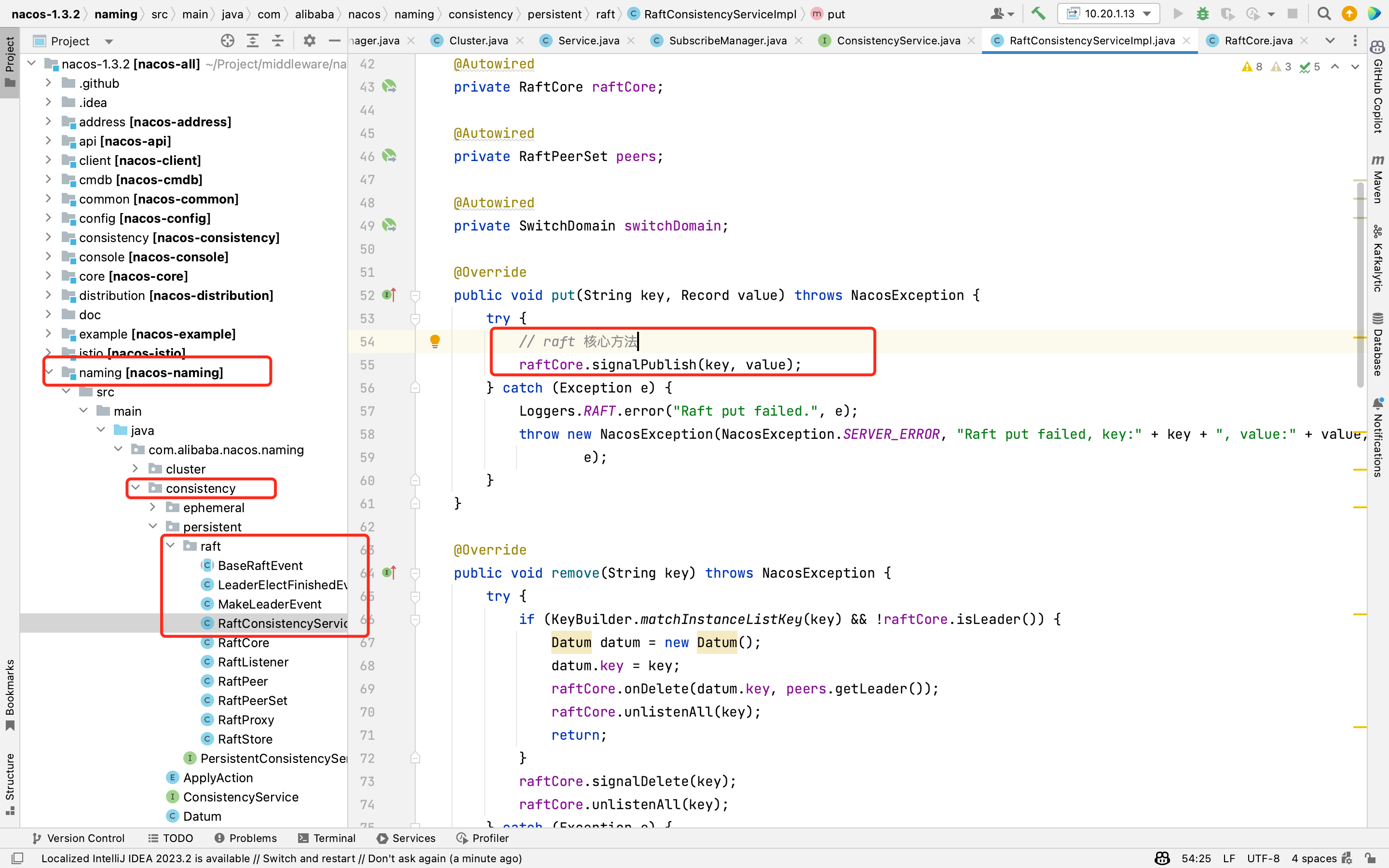

继续翻看nacos源码,发现nacos提供了集群节点之间数据一致性保障,使用的是Raft协议(一致性的选主协议,最后在简单介绍),源代码如下:

既然有选主协议,那就看看为什么通信还是失败了呢?继续从nacos-server的异常信息入手,在nacos-server-1停机时,看到nacos-server的logs下多种异常信息:

在naming-raft.log里,如下异常信息:

java.lang.NullPointerException: null

at com.alibaba.nacos.naming.consistency.persistent.raft.RaftCore.signalDelete(RaftCore.java:275)

at com.alibaba.nacos.naming.consistency.persistent.raft.RaftConsistencyServiceImpl.remove(RaftConsistencyServiceImpl.java:72)

at com.alibaba.nacos.naming.consistency.DelegateConsistencyServiceImpl.remove(DelegateConsistencyServiceImpl.java:53)

at com.alibaba.nacos.naming.core.ServiceManager.easyRemoveService(ServiceManager.java:434)

at com.alibaba.nacos.naming.core.ServiceManager$EmptyServiceAutoClean.lambda$null$1(ServiceManager.java:902)

at java.util.concurrent.ConcurrentHashMap.computeIfPresent(ConcurrentHashMap.java:1769)

at com.alibaba.nacos.naming.core.ServiceManager$EmptyServiceAutoClean.lambda$null$2(ServiceManager.java:891)

at java.util.stream.ForEachOps$ForEachOp$OfRef.accept(ForEachOps.java:183)

at java.util.stream.ReferencePipeline$2$1.accept(ReferencePipeline.java:175)

at java.util.concurrent.ConcurrentHashMap$EntrySpliterator.forEachRemaining(ConcurrentHashMap.java:3606)

at java.util.stream.AbstractPipeline.copyInto(AbstractPipeline.java:482)

at java.util.stream.ForEachOps$ForEachTask.compute(ForEachOps.java:290)

at java.util.concurrent.CountedCompleter.exec(CountedCompleter.java:731)

at java.util.concurrent.ForkJoinTask.doExec(ForkJoinTask.java:289)

at java.util.concurrent.ForkJoinPool.helpComplete(ForkJoinPool.java:1870)

at java.util.concurrent.ForkJoinPool.externalHelpComplete(ForkJoinPool.java:2467)

at java.util.concurrent.ForkJoinTask.externalAwaitDone(ForkJoinTask.java:324)

at java.util.concurrent.ForkJoinTask.doInvoke(ForkJoinTask.java:405)

at java.util.concurrent.ForkJoinTask.invoke(ForkJoinTask.java:734)

at java.util.stream.ForEachOps$ForEachOp.evaluateParallel(ForEachOps.java:159)

at java.util.stream.ForEachOps$ForEachOp$OfRef.evaluateParallel(ForEachOps.java:173)

at java.util.stream.AbstractPipeline.evaluate(AbstractPipeline.java:233)

at java.util.stream.ReferencePipeline.forEach(ReferencePipeline.java:485)

at com.alibaba.nacos.naming.core.ServiceManager$EmptyServiceAutoClean.lambda$run$3(ServiceManager.java:891)

at java.util.concurrent.ConcurrentHashMap.forEach(ConcurrentHashMap.java:1597)

at com.alibaba.nacos.naming.core.ServiceManager$EmptyServiceAutoClean.run(ServiceManager.java:881)

at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511)

at java.util.concurrent.FutureTask.runAndReset(FutureTask.java:308)

at java.util.concurrent.ScheduledThreadPoolExecutor$ScheduledFutureTask.access$301(ScheduledThreadPoolExecutor.java:180)

at java.util.concurrent.ScheduledThreadPoolExecutor$ScheduledFutureTask.run(ScheduledThreadPoolExecutor.java:294)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)

2023-09-07 08:19:25,262 ERROR Raft remove failed.

naming-push.log里,如下异常信息:

java.lang.IllegalStateException: unable to find ackEntry for key: 10.21.140.23,43998,31247629183519634, ack json: {"type": "push-ack", "lastRefTime":"31247629183519634", "data":""}

at com.alibaba.nacos.naming.push.PushService$Receiver.run(PushService.java:677)

at java.lang.Thread.run(Thread.java:748)

2023-09-07 08:17:38,533 ERROR [NACOS-PUSH] error while receiving ack data

naming-distro.log里,如下异常信息:

2023-09-07 08:19:39,904 ERROR receive responsible key timestamp of com.alibaba.nacos.naming.iplist.ephemeral.dev-jzj##DEFAULT_GROUP@@providers:com.bm001.league.ordercenter.api.AdCluePoolApi:1.0: from 10.20.1.13:8848

将这些异常信息结合起来可以推断出,在nacos-server-1停机时,nacos-server集群只剩余2台机器,它们在利用Raft协议进行选主时,出现了异常。导致consumer没有找到主节点,无法建立正确的通信,所以consumer获取不到provider的元数据。

继续证实这个推断吧!

此时同时把nacos-server-1和nacos-server-2同时停机,只保留1台nacos-server时,微服务之间调用就正常了。因为单个节点时,选主正常,consumer很快与nacos-server建立了通信。此时再把3台全部启动后,也是一切正常。至此可以证实2台nacos-server确实存在选主问题。

至此问题解决,安心干活儿去了,哈哈!

3.3、Raft协议

简单讲下Raft协议,Raft协议主要用来满足微服务CAP理论中的CP,保障集群环境下的数据一致性。在Raft理论中,把每一个集群节点定义了三种状态,跟zookeeper的ZAB 协议类似:

- Follower 追随者:集群所有节点一开始都是 Follower。

- Candidate 候选者:当集群的某个节点开始发起投票选举 Leader 的时,先给自己投一票,这时就会从 Follower 变成 Candidate。

- Leader 领导者:当集群的某个节点获得大多数节点(超过一半)的投票,那么就会变成 Leader。

4、总结

经过以上的过程,有3点注意:

1、nacos-client和nacos-server的心跳只是告诉服务器,我这个客户端的服务是正常的,同时nacos-server集群之间会异步同步服务信息。但是具体调用时依赖dubbo,dubbo在调用时,是单独的通道从nacos-server拉取provider的元数据。

2、nacos-server重启时,一定要选在深夜,避开正常流量时间。同时为了保障集群持续可用,集群节点数保持奇数,偶数时会出现选主问题,导致客户端与服务端无法正常通信,无法发起微服务调用。也就失去了nacos集群的能力了。

3、出现疑难问题时,首先看异常信息,然后猜测原因,再通过实践去验证,最终可以通过源码再去证实。

本篇完结!感谢你的阅读,欢迎点赞 关注 收藏 私信!!!

原文链接:http://www.mangod.top/articles/2023/09/07/1694069167853.html、https://mp.weixin.qq.com/s/x0K3mpG3xosstAe5RgNBJQ

浙公网安备 33010602011771号

浙公网安备 33010602011771号