kubeadm deployment and installation + dashboard + harbor


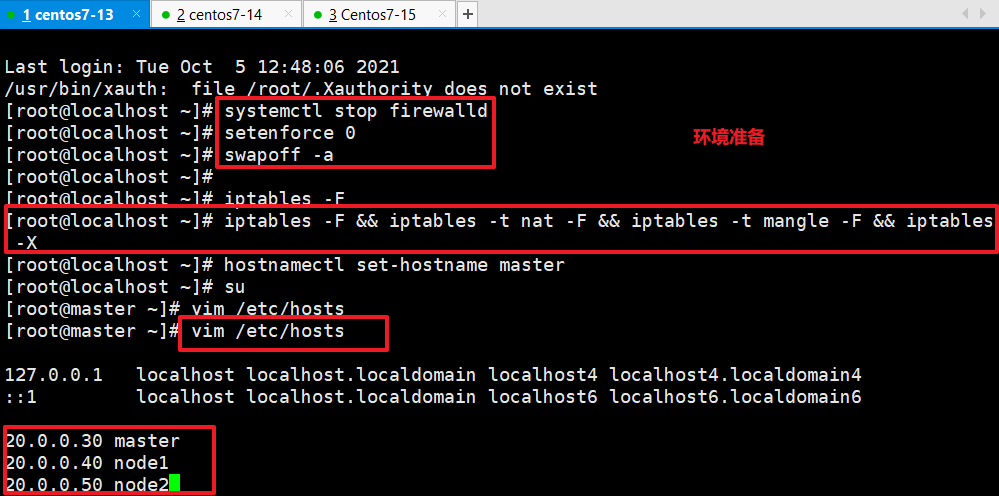

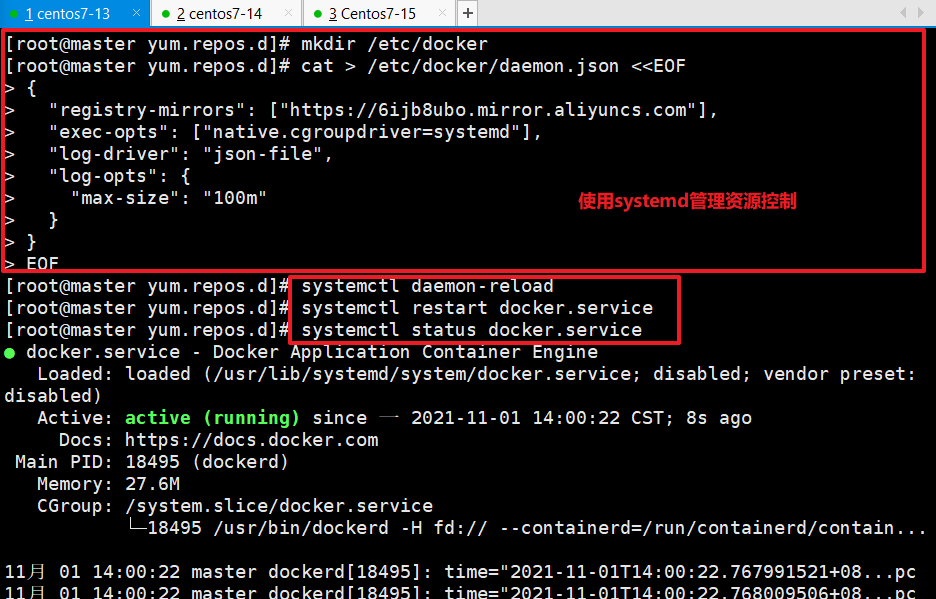

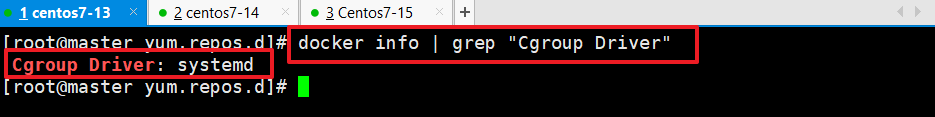

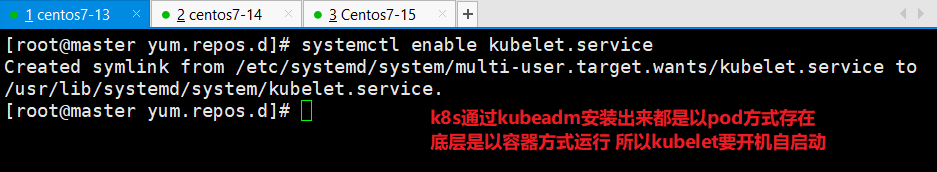

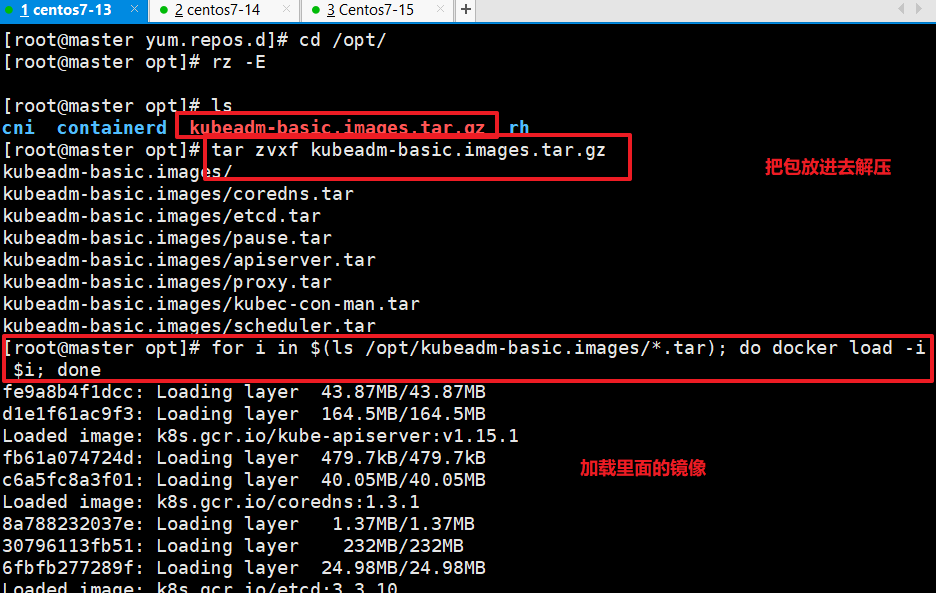

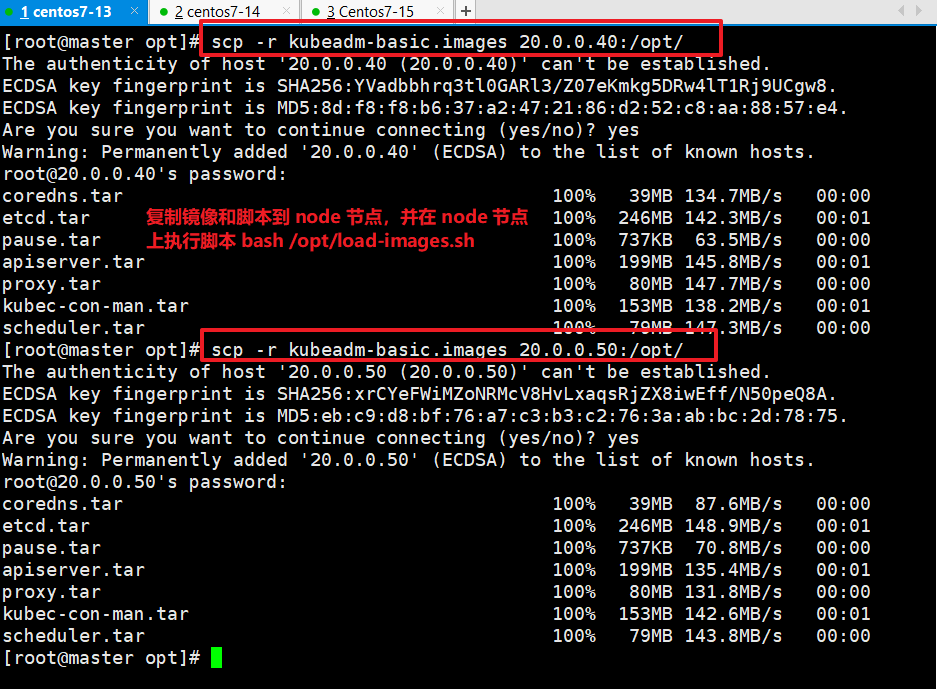

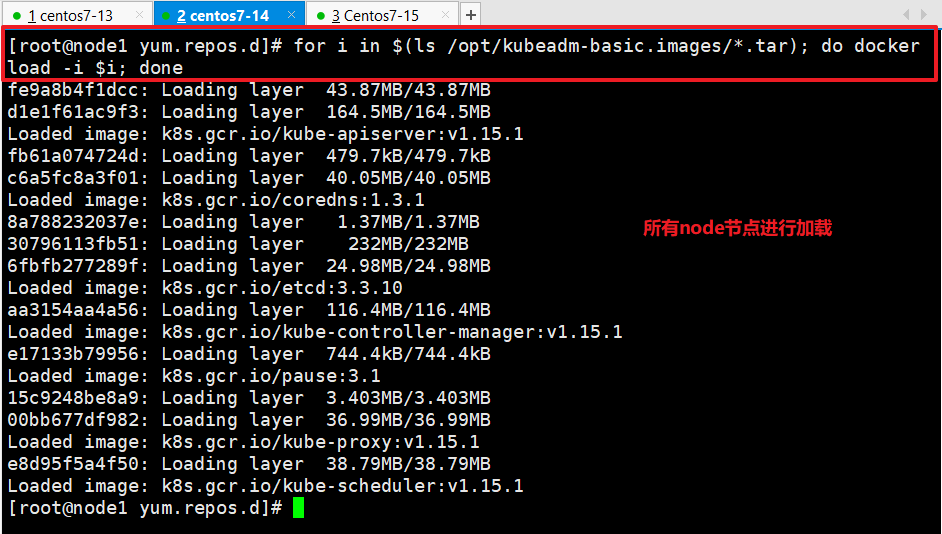

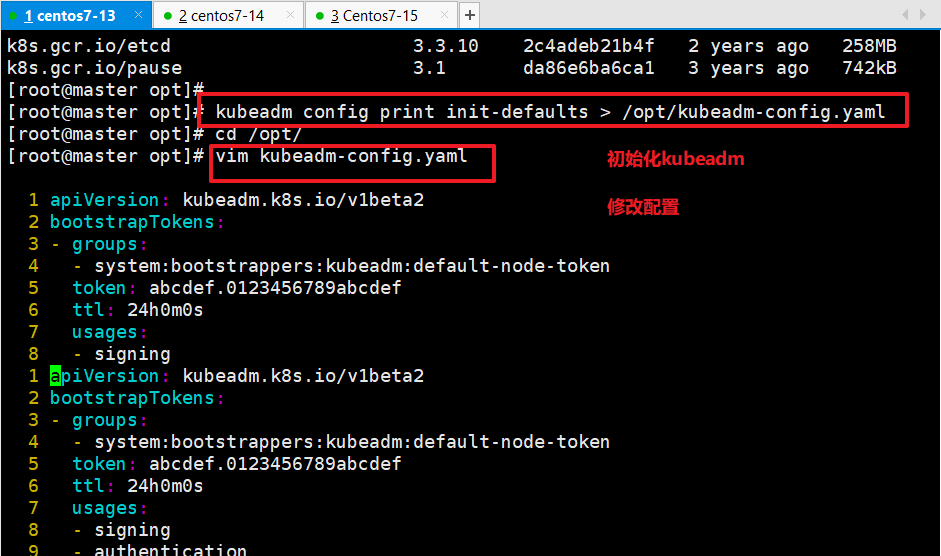

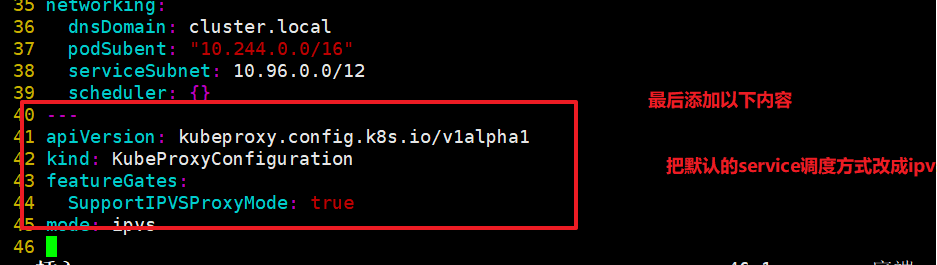

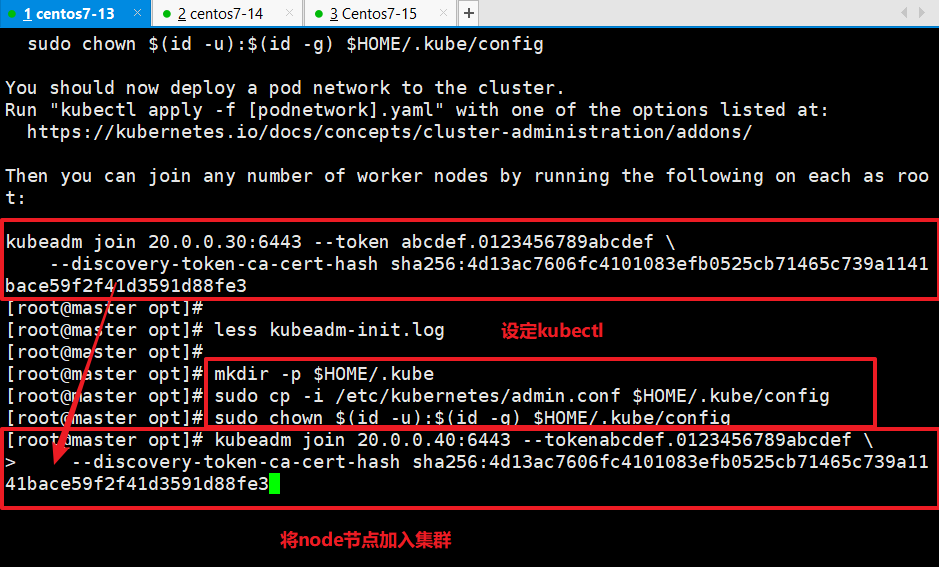

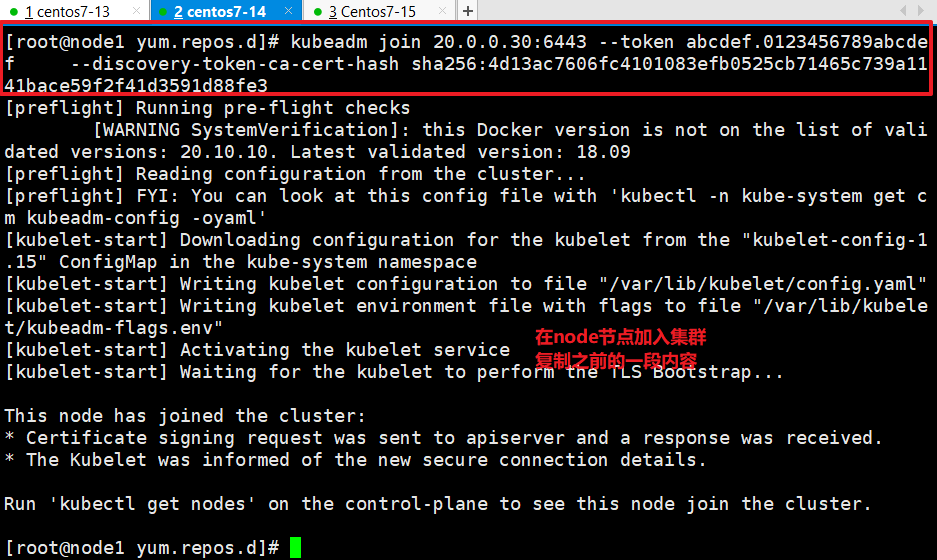

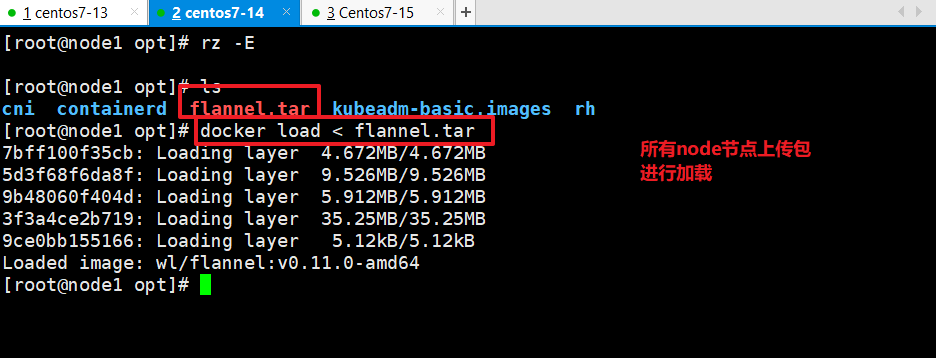

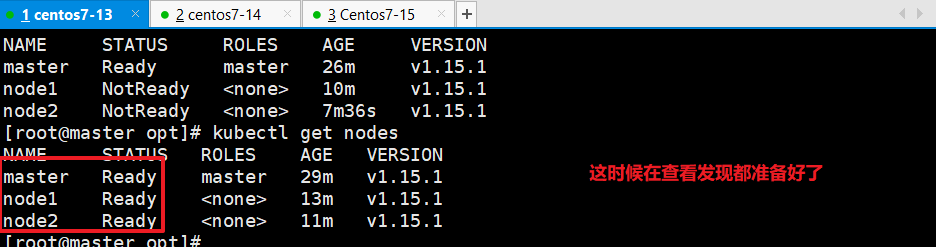

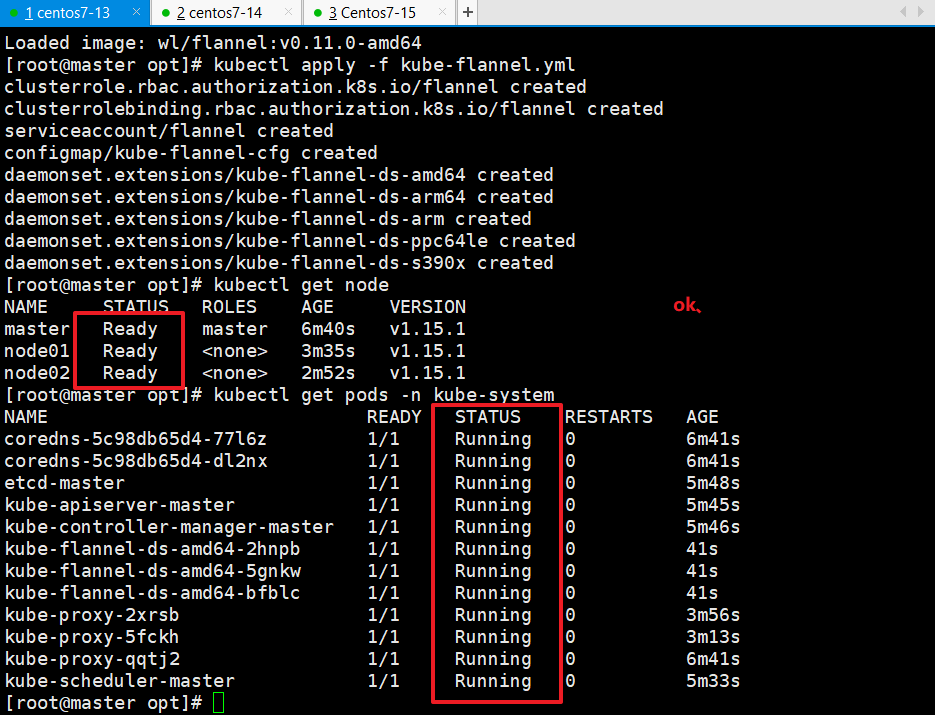

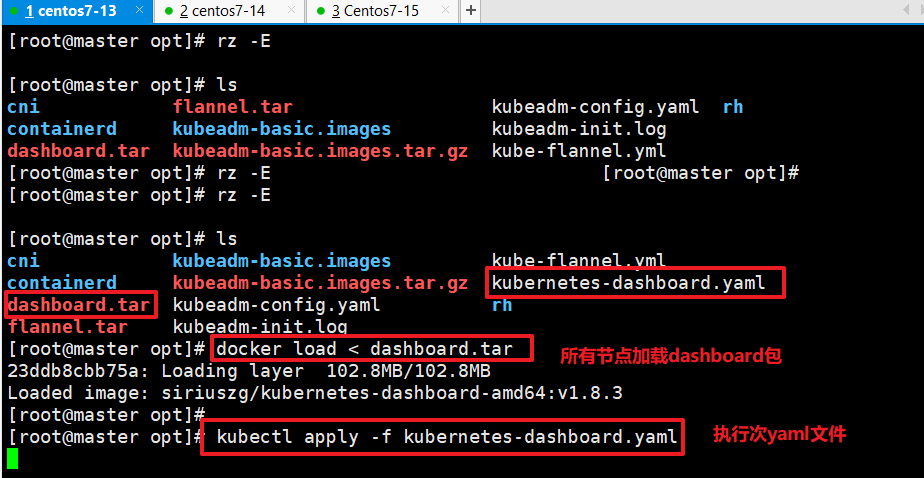

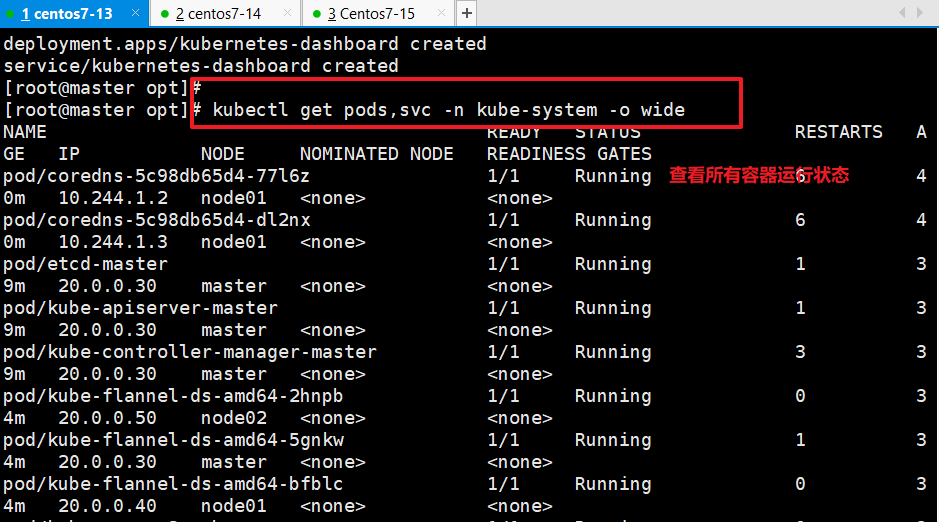

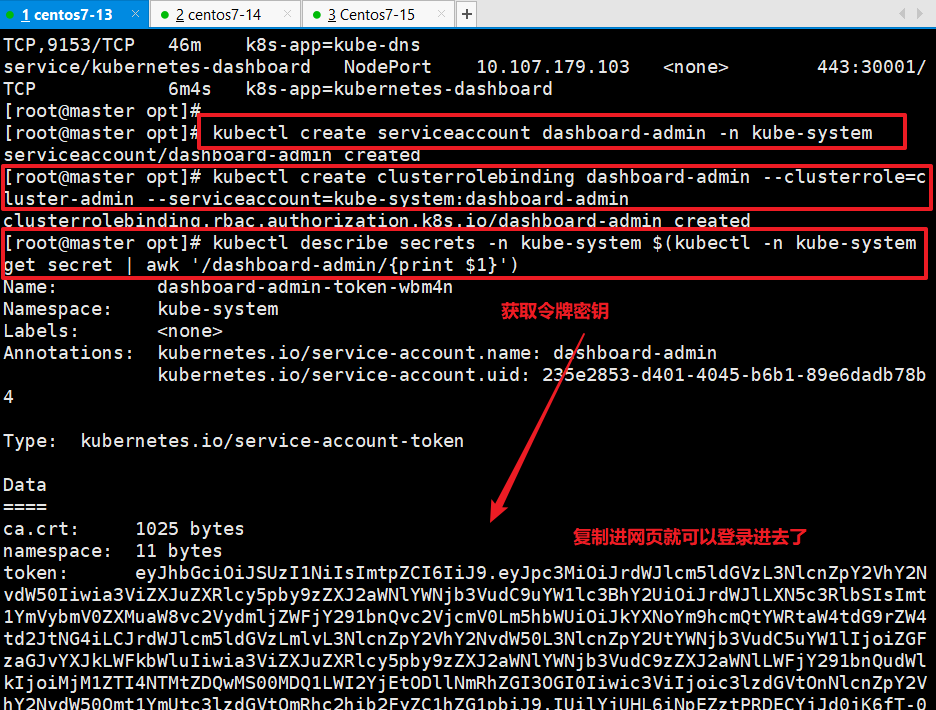

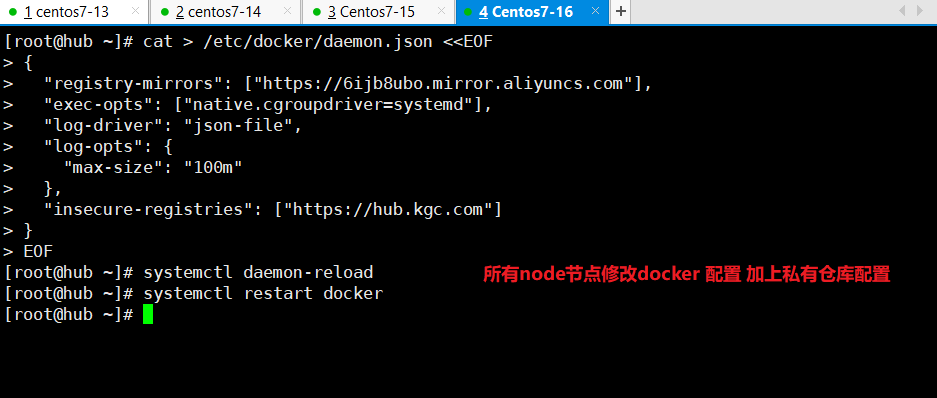

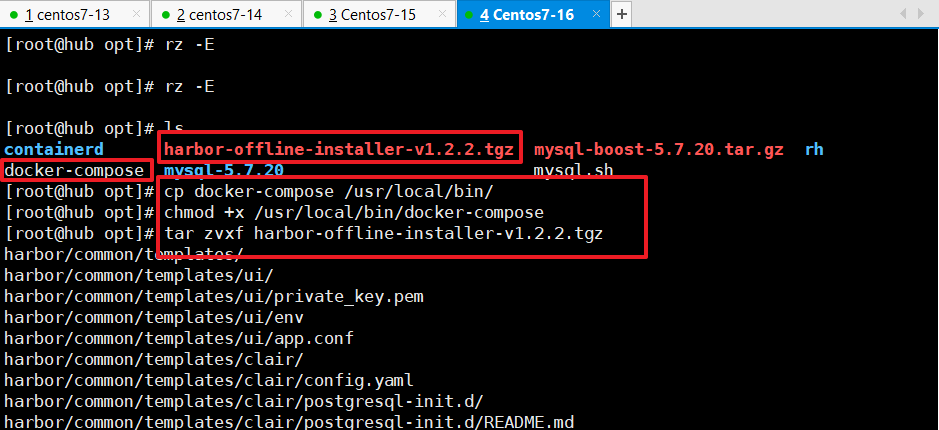

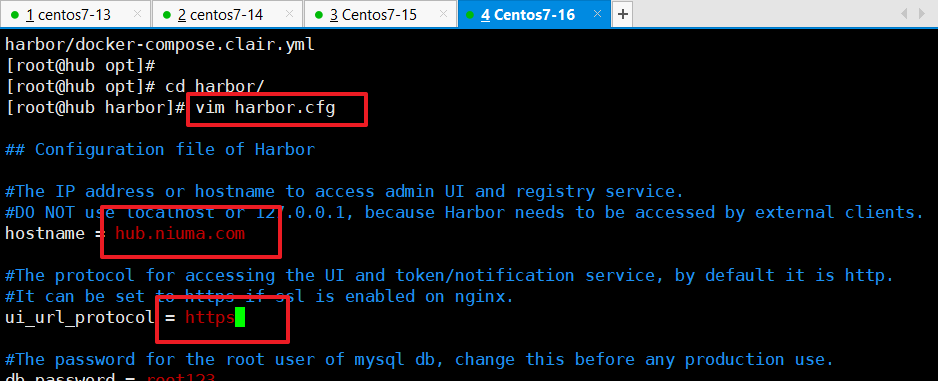

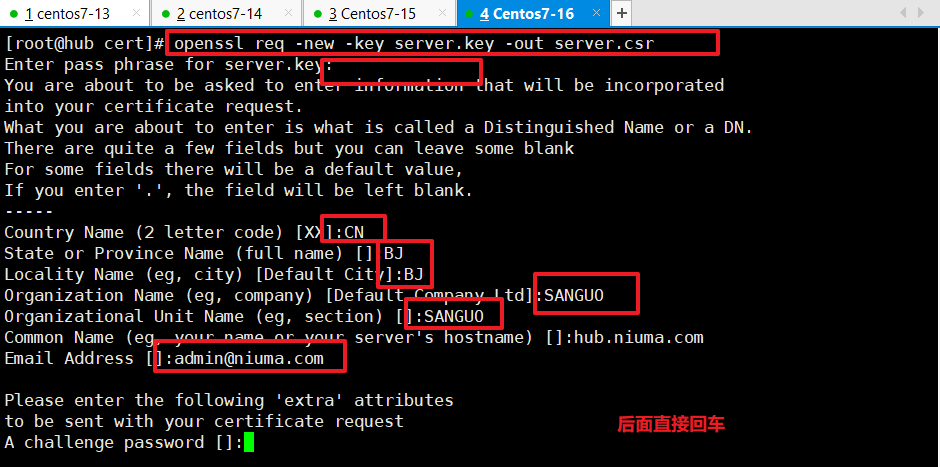

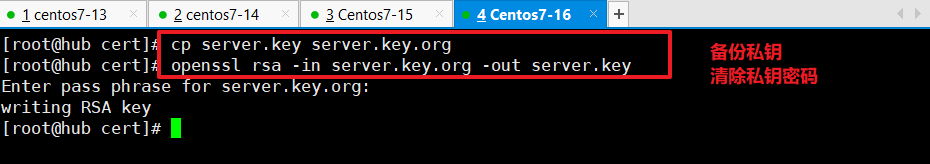

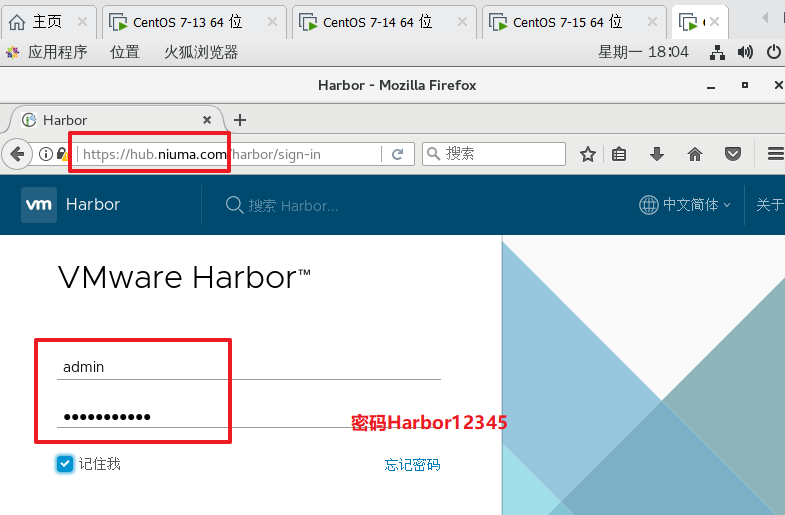

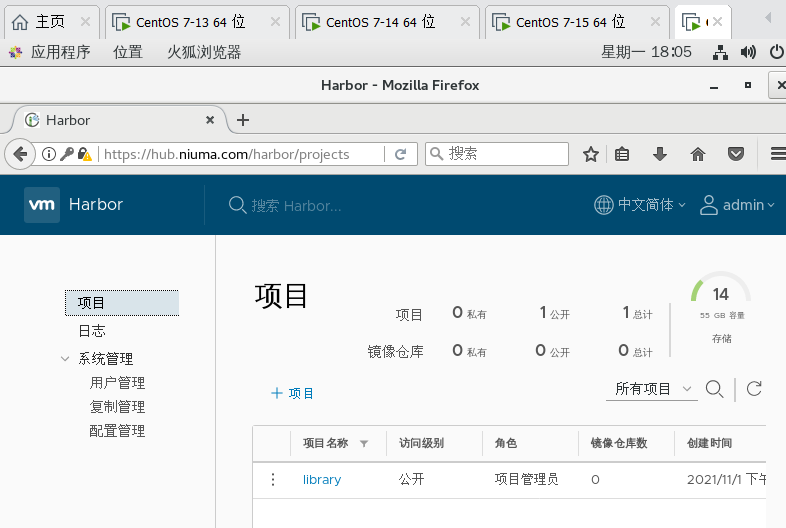

| Node | IP address | Components |
|---|---|---|
| master (2C/4G; at least 2 CPU cores required) | 192.168.80.10 | docker, kubeadm, kubelet, kubectl, flannel |
| node01 (2C/2G) | 192.168.80.11 | docker, kubeadm, kubelet, kubectl, flannel |
| node02 (2C/2G) | 192.168.80.12 | docker, kubeadm, kubelet, kubectl, flannel |
| Harbor node (hub.kgc.com) | 192.168.80.13 | docker, docker-compose, harbor-offline-v1.2.2 |

1. Install Docker and kubeadm on all nodes
2. Deploy the Kubernetes master
3. Deploy the container network plugin
4. Deploy the Kubernetes nodes and join them to the cluster
5. Deploy the Dashboard web UI to view Kubernetes resources visually
6. Deploy the Harbor private registry to store images

------------------------------ Environment preparation ------------------------------

```shell
# On all nodes: stop the firewall, flush iptables rules, disable SELinux, turn off swap
systemctl stop firewalld
systemctl disable firewalld
setenforce 0
iptables -F && iptables -t nat -F && iptables -t mangle -F && iptables -X
swapoff -a                            # swap must be turned off
sed -ri 's/.*swap.*/#&/' /etc/fstab   # disable swap permanently; in sed, & stands for the matched text

# Load the ip_vs modules (module name = file name up to the first '.')
for i in $(ls /usr/lib/modules/$(uname -r)/kernel/net/netfilter/ipvs | grep -o "^[^.]*"); do
  echo $i
  /sbin/modinfo -F filename $i >/dev/null 2>&1 && /sbin/modprobe $i
done

# Set the hostname (run the matching command on each node)
hostnamectl set-hostname master
hostnamectl set-hostname node01
hostnamectl set-hostname node02

# On all nodes, add the host entries to /etc/hosts (or edit it with vim /etc/hosts)
cat >> /etc/hosts << EOF
192.168.80.10 master
192.168.80.11 node01
192.168.80.12 node02
EOF

# Tune kernel parameters
modprobe br_netfilter   # the net.bridge.* keys below only exist once this module is loaded
cat > /etc/sysctl.d/kubernetes.conf << EOF
# Enable bridge mode so that bridged traffic is passed to the iptables chains
net.bridge.bridge-nf-call-ip6tables=1
net.bridge.bridge-nf-call-iptables=1
# Disable IPv6
net.ipv6.conf.all.disable_ipv6=1
net.ipv4.ip_forward=1
EOF

# Apply the parameters
sysctl --system
```

-------------------- Install Docker on all nodes --------------------

```shell
yum install -y yum-utils device-mapper-persistent-data lvm2
yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo
yum install -y docker-ce docker-ce-cli containerd.io

mkdir /etc/docker
cat > /etc/docker/daemon.json <<EOF
{
  "registry-mirrors": ["https://6ijb8ubo.mirror.aliyuncs.com"],
  "exec-opts": ["native.cgroupdriver=systemd"],
  "log-driver": "json-file",
  "log-opts": {
    "max-size": "100m"
  }
}
EOF
# Use the systemd cgroup driver for resource control: compared with cgroupfs, systemd's CPU and memory limiting is simpler, more mature and more stable.
# Container logs use the json-file driver, capped at 100 MB per log file. Docker keeps them under /var/lib/docker/containers and kubelet links them into /var/log/containers, where ELK-style systems can collect them.

systemctl daemon-reload
systemctl restart docker.service
systemctl enable docker.service

docker info | grep "Cgroup Driver"
# Cgroup Driver: systemd
```

-------------------- Install kubeadm, kubelet and kubectl on all nodes --------------------

```shell
# Define the Kubernetes yum repository
cat > /etc/yum.repos.d/kubernetes.repo << EOF
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

yum install -y kubelet-1.15.1 kubeadm-1.15.1 kubectl-1.15.1

# Start kubelet at boot
systemctl enable kubelet.service
# After a kubeadm install, the K8S components all run as Pods (containers underneath), so kubelet must be enabled at boot
```

-------------------- Deploy the K8S cluster --------------------

```shell
# List the images needed for initialization
kubeadm config images list

# On the master node, upload kubeadm-basic.images.tar.gz to /opt
cd /opt
tar zxvf kubeadm-basic.images.tar.gz
for i in $(ls /opt/kubeadm-basic.images/*.tar); do docker load -i $i; done

# Copy the images to the worker nodes, then load them on each node
# (the author runs bash /opt/load-images.sh there, which is not shown; the docker load loop above does the same job)
scp -r kubeadm-basic.images root@node01:/opt
scp -r kubeadm-basic.images root@node02:/opt

# Initialize with kubeadm
# Method 1:
kubeadm config print init-defaults > /opt/kubeadm-config.yaml
cd /opt/
vim kubeadm-config.yaml
```

```yaml
......
localAPIEndpoint:
  advertiseAddress: 192.168.80.10   # IP address of the master node
  bindPort: 6443
......
kubernetesVersion: v1.15.1          # Kubernetes version
networking:
  dnsDomain: cluster.local
  podSubnet: "10.244.0.0/16"        # Pod CIDR; 10.244.0.0/16 matches flannel's default network
  serviceSubnet: 10.96.0.0/16       # Service CIDR
scheduler: {}
# Append the following at the end of the file
---
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
mode: ipvs                          # switch the default Service proxy mode to ipvs
```

```shell
kubeadm init --config=kubeadm-config.yaml --experimental-upload-certs | tee kubeadm-init.log
# --experimental-upload-certs uploads the control-plane certificates to the cluster so they are distributed automatically when further control-plane nodes join; from K8S v1.16 it is replaced by --upload-certs
# tee kubeadm-init.log keeps a copy of the output in a log file

# View the kubeadm-init log
less kubeadm-init.log

# Kubernetes configuration directory
ls /etc/kubernetes/

# Directory holding the CA and other certificates and private keys
ls /etc/kubernetes/pki

# Method 2:
kubeadm init \
  --apiserver-advertise-address=0.0.0.0 \
  --image-repository registry.aliyuncs.com/google_containers \
  --kubernetes-version=v1.15.1 \
  --service-cidr=10.1.0.0/16 \
  --pod-network-cidr=10.244.0.0/16
```

The cluster is initialized with the kubeadm init command, either with explicit flags or from a configuration file. Options:

- `--apiserver-advertise-address`: the IP address the apiserver advertises to other components, normally the master's IP used for in-cluster communication; with 0.0.0.0, kubeadm uses the address of the node's default network interface
- `--apiserver-bind-port`: the apiserver listen port, default 6443
- `--cert-dir`: directory for the SSL certificates used for communication, default /etc/kubernetes/pki
- `--control-plane-endpoint`: a shared endpoint for the control plane, either a load balancer IP address or a DNS name; needed for highly available clusters
- `--image-repository`: the registry to pull images from, default k8s.gcr.io
- `--kubernetes-version`: the Kubernetes version
- `--pod-network-cidr`: the Pod CIDR, which must match the pod network plugin's setting. Flannel defaults to 10.244.0.0/16 and Calico to 192.168.0.0/16
- `--service-cidr`: the Service CIDR
- `--service-dns-domain`: the suffix for Service FQDNs, default cluster.local

```shell
# With method 2, edit the kube-proxy ConfigMap afterwards to enable ipvs
kubectl edit cm kube-proxy -n=kube-system
# change to: mode: "ipvs"
# kube-proxy only reads this at startup, so recreate its Pods:
kubectl delete pod -n kube-system -l k8s-app=kube-proxy
```

Output:

```text
......
Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

  mkdir -p $HOME/.kube
  sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
  sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.
Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
  https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.80.10:6443 --token rc0kfs.a1sfe3gl4dvopck5 \
    --discovery-token-ca-cert-hash sha256:864fe553c812df2af262b406b707db68b0fd450dc08b34efb73dd5a4771d37a2
```

Set up kubectl: kubectl can only perform management operations after the API server has authenticated and authorized it. A kubeadm-deployed cluster generates a kubeconfig with administrator rights, /etc/kubernetes/admin.conf, which kubectl loads from its default path "$HOME/.kube/config".

```shell
mkdir -p $HOME/.kube
cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
chown $(id -u):$(id -g) $HOME/.kube/config

# On each worker node, run kubeadm join to join the cluster
kubeadm join 192.168.80.10:6443 --token rc0kfs.a1sfe3gl4dvopck5 \
    --discovery-token-ca-cert-hash sha256:864fe553c812df2af262b406b707db68b0fd450dc08b34efb73dd5a4771d37a2

# Deploy the flannel network plugin on all nodes
# Method 1:
# Upload the flannel image flannel.tar to /opt on all nodes, and kube-flannel.yml to the master
cd /opt
docker load < flannel.tar
# Create the flannel resources on the master
kubectl apply -f kube-flannel.yml

# Method 2:
kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

# Check the node status on the master (this takes a few minutes)
kubectl get nodes
```

```text
NAME     STATUS   ROLES    AGE   VERSION
master   Ready    master   71m   v1.15.1
node01   Ready    <none>   99s   v1.15.1
node02   Ready    <none>   96s   v1.15.1
```

```shell
kubectl get pods -n kube-system
```

```text
NAME                             READY   STATUS    RESTARTS   AGE
coredns-bccdc95cf-c9w6l          1/1     Running   0          71m
coredns-bccdc95cf-nql5j          1/1     Running   0          71m
etcd-master                      1/1     Running   0          71m
kube-apiserver-master            1/1     Running   0          70m
kube-controller-manager-master   1/1     Running   0          70m
kube-flannel-ds-amd64-kfhwf      1/1     Running   0          2m53s
kube-flannel-ds-amd64-qkdfh      1/1     Running   0          46m
kube-flannel-ds-amd64-vffxv      1/1     Running   0          2m56s
kube-proxy-558p8                 1/1     Running   0          2m53s
kube-proxy-nwd7g                 1/1     Running   0          2m56s
kube-proxy-qpz8t                 1/1     Running   0          71m
kube-scheduler-master            1/1     Running   0          70m
```

```shell
# Test creating a pod
kubectl create deployment nginx --image=nginx
kubectl get pods -o wide
```

```text
NAME                     READY   STATUS    RESTARTS   AGE   IP           NODE     NOMINATED NODE   READINESS GATES
nginx-554b9c67f9-zr2xs   1/1     Running   0          14m   10.244.1.2   node01   <none>           <none>
```

```shell
# Expose a port to provide the service
kubectl expose deployment nginx --port=80 --type=NodePort
kubectl get svc
```

```text
NAME         TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)        AGE
kubernetes   ClusterIP   10.96.0.1      <none>        443/TCP        25h
nginx        NodePort    10.96.15.132   <none>        80:32698/TCP   4s
```

```shell
# Test access
curl http://node01:32698

# Scale out to 3 replicas
kubectl scale deployment nginx --replicas=3
kubectl get pods -o wide
```

```text
NAME                     READY   STATUS    RESTARTS   AGE   IP           NODE     NOMINATED NODE   READINESS GATES
nginx-554b9c67f9-9kh4s   1/1     Running   0          66s   10.244.1.3   node01   <none>           <none>
nginx-554b9c67f9-rv77q   1/1     Running   0          66s   10.244.2.2   node02   <none>           <none>
nginx-554b9c67f9-zr2xs   1/1     Running   0          17m   10.244.1.2   node01   <none>           <none>
```
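The `--discovery-token-ca-cert-hash` in the join command is the SHA-256 of the cluster CA's public key, taken over its DER-encoded SubjectPublicKeyInfo. If the init output has been lost, the value can be recomputed on the master from `/etc/kubernetes/pki/ca.crt`. The openssl pipeline below is the one given in the kubeadm documentation. Wrapping it in a function named `ca_cert_hash` is just an illustration, not part of kubeadm, and the pipeline assumes an RSA CA key, which is what kubeadm generates by default. Alternatively, `kubeadm token create --print-join-command` on the master prints a complete join command with a fresh token.

```shell
# ca_cert_hash [CERT]: print the hash of a CA certificate's public key in the
# "sha256:<hex>" form expected by kubeadm join --discovery-token-ca-cert-hash.
# Illustrative helper; assumes an RSA key (kubeadm's default CA key type).
ca_cert_hash() {
  cert="${1:-/etc/kubernetes/pki/ca.crt}"
  # 1) extract the public key from the certificate
  # 2) re-encode it as DER SubjectPublicKeyInfo
  # 3) hash it with SHA-256
  # 4) keep only the hex digest (the last field of the openssl dgst output)
  hex=$(openssl x509 -pubkey -noout -in "$cert" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex \
    | sed 's/^.* //')
  printf 'sha256:%s\n' "$hex"
}
```

Running `ca_cert_hash` on the master with no argument should print the same `sha256:...` string as the one in the join command above.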
-------------------- Install the Dashboard --------------------

```shell
# Install the dashboard on all nodes
# Method 1:
# Upload the dashboard image dashboard.tar to /opt on all nodes, and kubernetes-dashboard.yaml to the master
cd /opt/
docker load < dashboard.tar
kubectl apply -f kubernetes-dashboard.yaml

# Method 2:
kubectl apply -f https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.0/aio/deploy/recommended.yaml
# Note: this manifest installs into the kubernetes-dashboard namespace with a ClusterIP Service,
# so the kube-system / NodePort 30001 output below comes from method 1's yaml

# Check the status of all containers
kubectl get pods,svc -n kube-system -o wide
```

```text
NAME                                        READY   STATUS    RESTARTS   AGE   IP              NODE     NOMINATED NODE   READINESS GATES
pod/coredns-bccdc95cf-ftbpq                 1/1     Running   0          26h   10.244.0.2      master   <none>           <none>
pod/coredns-bccdc95cf-wgs28                 1/1     Running   0          26h   10.244.0.3      master   <none>           <none>
pod/etcd-master                             1/1     Running   0          26h   192.168.80.10   master   <none>           <none>
pod/kube-apiserver-master                   1/1     Running   0          26h   192.168.80.10   master   <none>           <none>
pod/kube-controller-manager-master          1/1     Running   3          26h   192.168.80.10   master   <none>           <none>
pod/kube-flannel-ds-amd64-gkkc5             1/1     Running   0          26h   192.168.80.10   master   <none>           <none>
pod/kube-flannel-ds-amd64-p9fwb             1/1     Running   0          26h   192.168.80.11   node01   <none>           <none>
pod/kube-flannel-ds-amd64-xr2db             1/1     Running   0          26h   192.168.80.12   node02   <none>           <none>
pod/kube-proxy-cfx7j                        1/1     Running   0          26h   192.168.80.12   node02   <none>           <none>
pod/kube-proxy-g9qjm                        1/1     Running   0          26h   192.168.80.11   node01   <none>           <none>
pod/kube-proxy-mh8sf                        1/1     Running   0          26h   192.168.80.10   master   <none>           <none>
pod/kube-scheduler-master                   1/1     Running   2          26h   192.168.80.10   master   <none>           <none>
pod/kubernetes-dashboard-68cbfbd778-ks7dz   1/1     Running   0          12s   10.244.2.3      node02   <none>           <none>

NAME                           TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)                  AGE   SELECTOR
service/kube-dns               ClusterIP   10.96.0.10     <none>        53/UDP,53/TCP,9153/TCP   47h   k8s-app=kube-dns
service/kubernetes-dashboard   NodePort    10.96.148.45   <none>        443:30001/TCP            20h   k8s-app=kubernetes-dashboard
```

Open the dashboard in Firefox or 360 Browser: https://node02:30001/ or https://192.168.80.12:30001/

```shell
# Create a service account and bind it to the built-in cluster-admin ClusterRole
kubectl create serviceaccount dashboard-admin -n kube-system
kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kube-system:dashboard-admin

# Get the token
kubectl describe secrets -n kube-system $(kubectl -n kube-system get secret | awk '/dashboard-admin/{print $1}')
```

```text
Name:         dashboard-admin-token-xf4dk
Namespace:    kube-system
Labels:       <none>
Annotations:  kubernetes.io/service-account.name: dashboard-admin
              kubernetes.io/service-account.uid: 736a7c1e-0fa1-430a-9244-71cda7899293

Type:  kubernetes.io/service-account-token

Data
====
token:      eyJhbGciOiJSUzI1NiIsImtpZCI6IiJ9.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJkYXNoYm9hcmQtYWRtaW4tdG9rZW4teGY0ZGsiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC5uYW1lIjoiZGFzaGJvYXJkLWFkbWluIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQudWlkIjoiNzM2YTdjMWUtMGZhMS00MzBhLTkyNDQtNzFjZGE3ODk5MjkzIiwic3ViIjoic3lzdGVtOnNlcnZpY2VhY2NvdW50Omt1YmUtc3lzdGVtOmRhc2hib2FyZC1hZG1pbiJ9.uNyAUOqejg7UOVCYkP0evQzG9_h-vAReaDtmYuCPdnvAf150eBsfpRPL1QmsDRsWF0xbI2Yb9m1VajMgKGneHCYFBqD-bsw0ffvbYRwM-roRnLtX-qN1kGMUyMU3iB8y_L6x-ZhiLXwjxUYZzO4WurY-e0h3yI0O2n9qQQmencEoz4snUKK4p_nBIcQrexMzO-aqhuQU_6JJQlN0q5jKHqnB11TfNQX1CNmTqN_dpZy0Wm1JzujVEd-6GQg7xawJkoSZjPYKgmN89z3o2o4cRydshUyLlb6Rmw_FSRvRWiobzL6xhWeGND4i7LgDCAr9YPRJ8LMjJYh_dPbN2Dnpxg
ca.crt:     1025 bytes
namespace:  11 bytes
```

Copy the token and use it to log in to the dashboard directly.

-------------------- Install the Harbor private registry --------------------

```shell
# Set the hostname (on the Harbor node)
hostnamectl set-hostname hub.kgc.com

# Add the hostname mapping on all nodes
echo '192.168.80.13 hub.kgc.com' >> /etc/hosts

# Install docker
yum install -y yum-utils device-mapper-persistent-data lvm2
yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo
yum install -y docker-ce docker-ce-cli containerd.io

mkdir /etc/docker
cat > /etc/docker/daemon.json <<EOF
{
  "registry-mirrors": ["https://6ijb8ubo.mirror.aliyuncs.com"],
  "exec-opts": ["native.cgroupdriver=systemd"],
  "log-driver": "json-file",
  "log-opts": {
    "max-size": "100m"
  },
  "insecure-registries": ["https://hub.kgc.com"]
}
EOF
systemctl start docker
systemctl enable docker

# On all worker nodes, rewrite the docker configuration to add the private registry
cat > /etc/docker/daemon.json <<EOF
{
  "registry-mirrors": ["https://6ijb8ubo.mirror.aliyuncs.com"],
  "exec-opts": ["native.cgroupdriver=systemd"],
  "log-driver": "json-file",
  "log-opts": {
    "max-size": "100m"
  },
  "insecure-registries": ["https://hub.kgc.com"]
}
EOF
systemctl daemon-reload
systemctl restart docker

# Install Harbor
# Upload harbor-offline-installer-v1.2.2.tgz and the docker-compose binary to /opt
cd /opt
cp docker-compose /usr/local/bin/
chmod +x /usr/local/bin/docker-compose

tar zxvf harbor-offline-installer-v1.2.2.tgz
cd harbor/
vim harbor.cfg
```

```text
hostname = hub.kgc.com
ui_url_protocol = https
ssl_cert = /data/cert/server.crt
ssl_cert_key = /data/cert/server.key
harbor_admin_password = Harbor12345
```

```shell
# Generate the certificate
mkdir -p /data/cert
cd /data/cert
# Generate the private key (enter the passphrase twice: 123456)
openssl genrsa -des3 -out server.key 2048
# Generate the certificate signing request
openssl req -new -key server.key -out server.csr
#   private key passphrase:    123456
#   Country Name:              CN
#   State or Province Name:    BJ
#   Locality (city) Name:      BJ
#   Organization Name:         KGC
#   Organizational Unit Name:  KGC
#   Common Name (domain):      hub.kgc.com
#   Email Address:             admin@kgc.com
#   press Enter for everything else
# Back up the private key
cp server.key server.key.org
# Remove the passphrase from the private key (enter 123456)
openssl rsa -in server.key.org -out server.key
# Sign the certificate
openssl x509 -req -days 1000 -in server.csr -signkey server.key -out server.crt

chmod +x /data/cert/*
cd /opt/harbor/
./install.sh
```

Open https://hub.kgc.com in a browser and log in with user name admin, password Harbor12345.

```shell
# Log in to Harbor from one of the worker nodes
docker login -u admin -p Harbor12345 https://hub.kgc.com

# Push an image
docker tag nginx:latest hub.kgc.com/library/nginx:v1
docker push hub.kgc.com/library/nginx:v1

# On the master, delete the nginx resources created earlier
kubectl delete deployment nginx

# In v1.15, kubectl run creates a Deployment; later kubectl releases removed this behaviour and the --replicas flag
kubectl run nginx-deployment --image=hub.kgc.com/library/nginx:v1 --port=80 --replicas=3
kubectl expose deployment nginx-deployment --port=30000 --target-port=80
kubectl get svc,pods
```

```text
NAME                       TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)     AGE
service/kubernetes         ClusterIP   10.96.0.1       <none>        443/TCP     10m
service/nginx-deployment   ClusterIP   10.96.222.161   <none>        30000/TCP   3m15s

NAME                                    READY   STATUS    RESTARTS   AGE
pod/nginx-deployment-77bcbfbfdc-bv5bz   1/1     Running   0          16s
pod/nginx-deployment-77bcbfbfdc-fq8wr   1/1     Running   0          16s
pod/nginx-deployment-77bcbfbfdc-xrg45   1/1     Running   0          3m39s
```

```shell
yum install ipvsadm -y
ipvsadm -Ln
curl 10.96.222.161:30000

kubectl edit svc nginx-deployment
# in the editor, change the Service type to: type: NodePort
kubectl get svc
```

```text
NAME                       TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)           AGE
service/kubernetes         ClusterIP   10.96.0.1       <none>        443/TCP           29m
service/nginx-deployment   NodePort    10.96.222.161   <none>        30000:32340/TCP   22m
```

Open in a browser: 192.168.80.10:32340, 192.168.80.11:32340 or 192.168.80.12:32340
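In the `kubectl get svc` output, the PORT(S) column of a NodePort Service reads `port:nodePort/protocol`. For example, `30000:32340/TCP` means the Service port is 30000 and 32340 is the port opened on every node, which is why the browser URLs use 32340. The function below, `node_port`, is a hypothetical helper that pulls the node port out of such a string. Against a live cluster, `kubectl get svc nginx-deployment -o jsonpath='{.spec.ports[0].nodePort}'` reads the same field directly.

```shell
# node_port PORTS: given a PORT(S) entry such as "30000:32340/TCP", print the
# node port (the number between the first ':' and the following '/').
# Prints nothing for a plain ClusterIP entry like "443/TCP".
# Only the first port is handled for multi-port entries.
node_port() {
  case "$1" in
    *:*/*)
      p=${1#*:}                  # "30000:32340/TCP" -> "32340/TCP"
      printf '%s\n' "${p%%/*}"   # "32340/TCP"       -> "32340"
      ;;
  esac
}
```

For example, `curl http://192.168.80.10:$(node_port 30000:32340/TCP)` hits the nginx-deployment Service through the master's node port.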

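The Harbor section above creates its certificate interactively. It generates an encrypted key, writes a CSR, strips the passphrase and then self-signs. The same end result is an unencrypted `server.key` plus a self-signed `server.crt` for hub.kgc.com valid for 1000 days. Assuming the same subject answers, that result can likely be produced in one non-interactive `openssl req -x509` call. The function name `make_harbor_cert` and its directory argument are illustrative. One difference: `-nodes` writes the key in PKCS#8 form (`BEGIN PRIVATE KEY`) rather than the traditional RSA form. nginx, which Harbor uses for TLS, reads both, but this is worth confirming on your Harbor version.

```shell
# make_harbor_cert [DIR]: non-interactive equivalent of the
# genrsa / req / rsa / x509 -signkey sequence. Writes an unencrypted
# server.key and a self-signed server.crt (valid 1000 days) into DIR
# (default /data/cert). Subject fields mirror the answers used above.
make_harbor_cert() {
  dir="${1:-/data/cert}"
  mkdir -p "$dir"
  # -x509: self-sign directly instead of writing a CSR
  # -nodes: leave the private key unencrypted (no passphrase to strip later)
  openssl req -x509 -newkey rsa:2048 -nodes -days 1000 \
    -keyout "$dir/server.key" -out "$dir/server.crt" \
    -subj "/C=CN/ST=BJ/L=BJ/O=KGC/OU=KGC/CN=hub.kgc.com/emailAddress=admin@kgc.com" \
    2>/dev/null
}
```

After `make_harbor_cert`, `harbor.cfg` can point `ssl_cert` and `ssl_cert_key` at the two files exactly as before.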
Kernel parameter tuning:

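```shell
# Comments go on their own lines: sysctl does not strip text written after a value
cat > /etc/sysctl.d/kubernetes.conf <<EOF
net.bridge.bridge-nf-call-iptables=1
net.bridge.bridge-nf-call-ip6tables=1
net.ipv4.ip_forward=1
# tcp_tw_recycle was removed in Linux 4.12; drop this line on newer kernels
net.ipv4.tcp_tw_recycle=0
# Avoid swap; only fall back to it when the system is about to run out of memory (OOM)
vm.swappiness=0
# Always allow memory overcommit, without checking whether enough physical memory is available
vm.overcommit_memory=1
# Do not panic on OOM; let the OOM killer handle it
vm.panic_on_oom=0
fs.inotify.max_user_instances=8192
fs.inotify.max_user_watches=1048576
# System-wide maximum number of file handles
fs.file-max=52706963
# Per-process maximum number of open files
fs.nr_open=52706963
net.ipv6.conf.all.disable_ipv6=1
net.netfilter.nf_conntrack_max=2310720
EOF
```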
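`sysctl --system` treats a line as a comment only when its first non-blank character is `#` or `;`. Text after a value on the same line is therefore passed to the kernel as part of that value and may be rejected. A small check like the following can flag such trailing comments. It also confirms a few keys before the file is applied. This is a sketch: `check_sysctl_conf` is a hypothetical helper, it assumes the plain `key=value` layout used above, and the three required keys are an illustrative selection (the first two are the ones kubeadm's preflight checks look at).

```shell
# check_sysctl_conf FILE: return non-zero if FILE has a trailing comment on a
# key=value line, or if a required key is missing or has the wrong value.
check_sysctl_conf() {
  f="$1"
  status=0
  # Drop comment and blank lines; anything left that still contains '#'
  # would have the comment applied as part of the value.
  if grep -Ev '^[[:space:]]*([#;]|$)' "$f" | grep -q '#'; then
    echo "trailing comment found in $f" >&2
    status=1
  fi
  for kv in net.bridge.bridge-nf-call-iptables=1 net.ipv4.ip_forward=1 vm.swappiness=0; do
    key=${kv%%=*}
    want=${kv#*=}
    key_re=$(printf '%s' "$key" | sed 's/\./\\./g')   # match dots literally
    # The last assignment wins, as when sysctl applies the file top to bottom
    got=$(grep -E "^[[:space:]]*$key_re[[:space:]]*=" "$f" | tail -n 1 | cut -d= -f2- | tr -d '[:space:]')
    if [ "$got" != "$want" ]; then
      echo "$key: expected $want, got '${got:-<unset>}'" >&2
      status=1
    fi
  done
  return $status
}
```

Running `check_sysctl_conf /etc/sysctl.d/kubernetes.conf && sysctl --system` applies the file only when the check passes.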

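Setting `vm.swappiness=0` in the tuning above does not turn swap off. kubelet refuses to start while swap is active, because its `--fail-swap-on` flag defaults to true. That is why the environment preparation runs `swapoff -a` and comments out the swap entry in `/etc/fstab`. A quick confirmation is `/proc/swaps`, which holds one header line plus one line per active swap area. The helper name `swap_active` is illustrative, and its optional file argument exists only so it can be tried against a sample file.

```shell
# swap_active [FILE]: succeed (return 0) if any swap area is active.
# /proc/swaps has one header line, then one line per active swap device/file.
swap_active() {
  f="${1:-/proc/swaps}"
  [ "$(tail -n +2 "$f" 2>/dev/null | grep -c .)" -gt 0 ]
}

# Example: warn before running kubeadm init / kubeadm join
if swap_active; then
  echo "swap is still on: run 'swapoff -a' and comment out swap in /etc/fstab" >&2
fi
```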