[Project Practice] Building a More Complex CNN Model for Handwritten Digit Recognition

Thanks to the video tutorial: https://www.bilibili.com/video/BV1Y7411d7Ys?p=11

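I am writing a separate post for this, not only because the tutorial devotes a whole episode to it, but also because it is a reusable pattern for writing model code, so I want to record it here.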

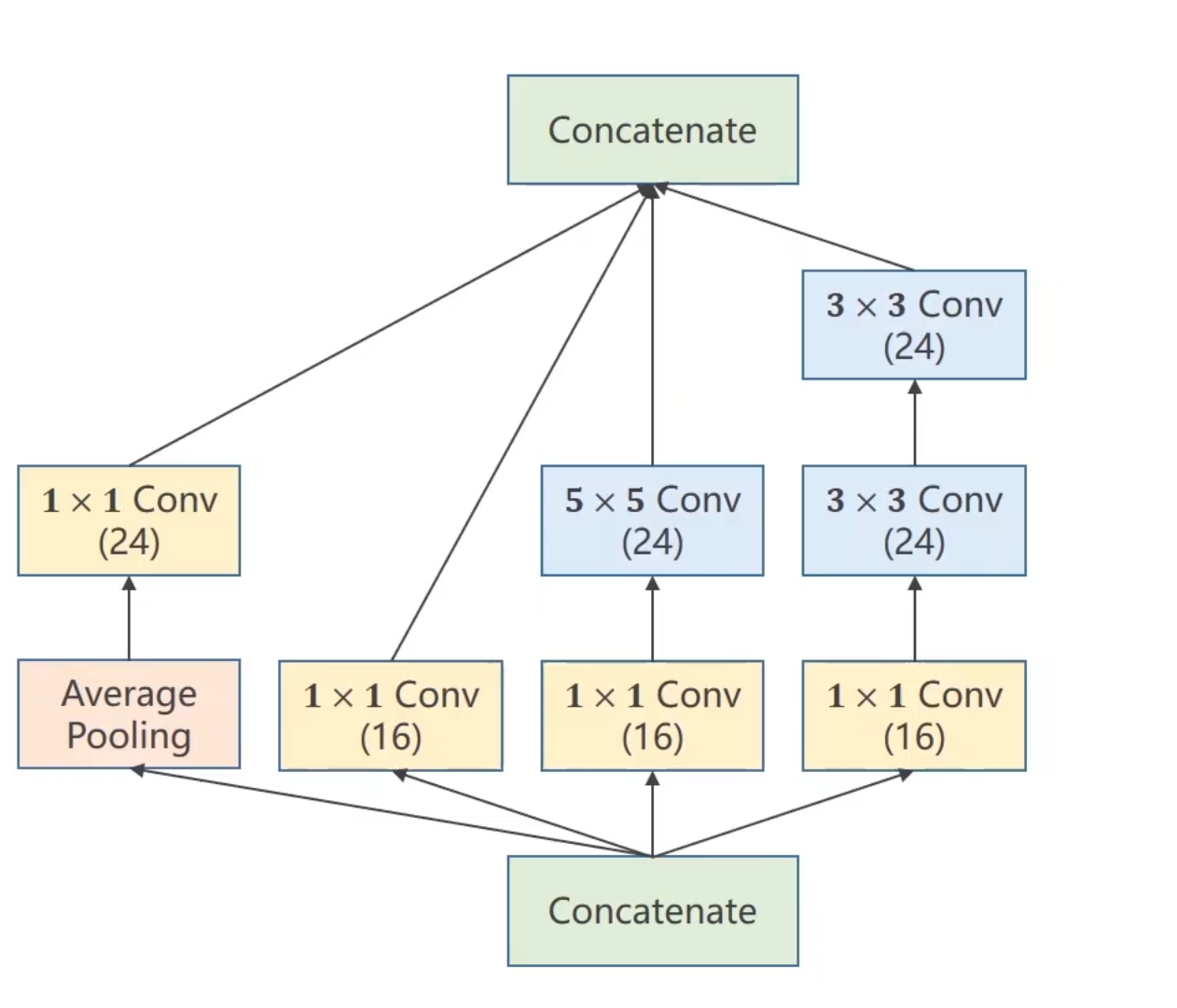

The model is built according to the structure shown in the figure.

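The block consists of four parallel branches: a 3x3 average pooling followed by a 1x1 convolution with 24 output channels; a single 1x1 convolution with 16 output channels; a 1x1 convolution followed by a 5x5 convolution, with 24 output channels; and a 1x1 convolution followed by two 3x3 convolutions, with 24 output channels. The outputs of the four branches are concatenated along the channel dimension (16 + 24 x 3 = 88 channels). The 1x1 convolutions are there to change the number of channels cheaply: shrinking the channel count before an expensive 5x5 or 3x3 convolution greatly reduces the number of multiplications, and therefore the running time.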
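To make the savings concrete, here is a rough multiplication count. The sizes (a 192-channel 28x28 feature map mapped to 32 channels) are illustrative assumptions, not the sizes used in this model, and the count ignores biases and additions.

```python
def conv_mults(in_ch, out_ch, kernel, out_h, out_w):
    """Multiplications for a conv layer producing an out_h x out_w output (biases ignored)."""
    return kernel * kernel * out_h * out_w * in_ch * out_ch

# Hypothetical sizes: 192-channel 28x28 input, 32 output channels.
direct = conv_mults(192, 32, 5, 28, 28)          # one 5x5 conv straight to 32 channels
bottleneck = (conv_mults(192, 16, 1, 28, 28)     # 1x1 conv first shrinks to 16 channels
              + conv_mults(16, 32, 5, 28, 28))   # then the 5x5 conv works on only 16 channels

print(direct, bottleneck, round(direct / bottleneck, 1))  # 120422400 12443648 9.7
```

Under these assumptions the 1x1 "bottleneck" cuts the work by almost a factor of 10, which is the reason the 5x5 and 3x3 branches start with a 1x1 convolution.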

The concrete code is as follows.

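Building on yesterday's code: because the model is now more complex, we first write the Inception block as a separate class to avoid repeating the same code.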

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class InceptionA(nn.Module):

    def __init__(self, in_channels):  # each branch is defined separately
        super(InceptionA, self).__init__()
        # Branch: a single 1x1 conv -> 16 channels
        self.branch1x1 = nn.Conv2d(in_channels, 16, kernel_size=1)

        # Branch: 1x1 conv -> 16 channels, then 5x5 conv -> 24 channels
        self.branch5x5_1 = nn.Conv2d(in_channels, 16, kernel_size=1)
        self.branch5x5_2 = nn.Conv2d(16, 24, kernel_size=5, padding=2)  # zero padding keeps H and W unchanged

        # Branch: 1x1 conv -> 16 channels, then two 3x3 convs -> 24 channels
        self.branch3x3_1 = nn.Conv2d(in_channels, 16, kernel_size=1)
        self.branch3x3_2 = nn.Conv2d(16, 24, kernel_size=3, padding=1)
        self.branch3x3_3 = nn.Conv2d(24, 24, kernel_size=3, padding=1)

        # Branch: 3x3 average pooling (applied in forward), then 1x1 conv -> 24 channels
        self.branch_pool = nn.Conv2d(in_channels, 24, kernel_size=1)

    def forward(self, x):
        branch1x1 = self.branch1x1(x)

        branch5x5 = self.branch5x5_1(x)
        branch5x5 = self.branch5x5_2(branch5x5)

        branch3x3 = self.branch3x3_1(x)
        branch3x3 = self.branch3x3_2(branch3x3)
        branch3x3 = self.branch3x3_3(branch3x3)

        # stride=1 and padding=1 keep H and W unchanged
        branch_pool = F.avg_pool2d(x, kernel_size=3, stride=1, padding=1)
        branch_pool = self.branch_pool(branch_pool)

        outputs = [branch1x1, branch5x5, branch3x3, branch_pool]
        # Concatenate along dim=1, the channel axis of (N, C, H, W): 16 + 24 + 24 + 24 = 88 channels
        return torch.cat(outputs, dim=1)
```
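`torch.cat(..., dim=1)` only works if every branch produces the same height and width. As a quick check on the padding choices, the sketch below applies the standard output-size formula (assuming no dilation) to each branch for a hypothetical 12x12 input, then sums the branch channels. Note that `F.avg_pool2d` defaults its stride to the kernel size, which is why the block passes `stride=1` explicitly.

```python
def conv_out_size(size, kernel, padding=0, stride=1):
    """Output size of a conv/pool layer along one spatial axis (dilation 1)."""
    return (size + 2 * padding - kernel) // stride + 1

h = 12  # hypothetical input height (and width)

# Spatial size after the size-changing layer of each branch
branches = {
    "branch1x1": conv_out_size(h, 1),
    "branch5x5_2": conv_out_size(h, 5, padding=2),
    "branch3x3_2": conv_out_size(h, 3, padding=1),
    "avg_pool": conv_out_size(h, 3, padding=1, stride=1),
}
print(branches)

# Channels contributed by each branch, stacked along dim=1
out_channels = 16 + 24 + 24 + 24
print(out_channels)  # 88
```

In general, for stride 1 and an odd kernel size `k`, `padding=(k - 1) // 2` keeps the size unchanged, which is where `padding=2` for 5x5 and `padding=1` for 3x3 come from.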

Then we simply build the model from these blocks.

```python
import torch.nn as nn
import torch.nn.functional as F


class Net(nn.Module):

    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = nn.Conv2d(1, 10, kernel_size=5)
        self.conv2 = nn.Conv2d(88, 20, kernel_size=5)  # 88 = 24x3 + 16, the InceptionA output channels

        self.incep1 = InceptionA(in_channels=10)
        self.incep2 = InceptionA(in_channels=20)

        self.mp = nn.MaxPool2d(2)  # 2x2 max pooling (stride defaults to 2), halves H and W
        # 1408 = 88 x 4 x 4 for a 28x28 input; in practice read it off by printing x.shape before this layer
        self.fc = nn.Linear(1408, 10)

    def forward(self, x):
        in_size = x.size(0)  # batch size
        x = F.relu(self.mp(self.conv1(x)))
        x = self.incep1(x)
        x = F.relu(self.mp(self.conv2(x)))
        x = self.incep2(x)
        x = x.view(in_size, -1)  # flatten to (batch, 1408)
        x = self.fc(x)
        return x
```
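Where does 1408 come from? A minimal sketch that traces the feature-map shape through `Net`, assuming the usual 1x28x28 MNIST input (the 28x28 size is an assumption; the post does not state it):

```python
def conv_out_size(size, kernel, padding=0, stride=1):
    """Output size of a conv/pool layer along one spatial axis (dilation 1)."""
    return (size + 2 * padding - kernel) // stride + 1

channels, size = 1, 28                   # assumed MNIST input: 1 x 28 x 28

size = conv_out_size(size, 5)            # conv1 (5x5, no padding): 28 -> 24
channels = 10
size = conv_out_size(size, 2, stride=2)  # MaxPool2d(2): 24 -> 12
channels = 88                            # incep1 keeps H and W, outputs 88 channels

size = conv_out_size(size, 5)            # conv2 (5x5, no padding): 12 -> 8
channels = 20
size = conv_out_size(size, 2, stride=2)  # MaxPool2d(2): 8 -> 4
channels = 88                            # incep2 keeps H and W, outputs 88 channels

flat = channels * size * size            # input features of self.fc
print(channels, size, flat)  # 88 4 1408
```

The same number can be obtained empirically by temporarily adding `print(x.shape)` just before `self.fc` and running a single dummy batch such as `torch.zeros(1, 1, 28, 28)` through the model.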

This article is from cnblogs (博客园), author: Lugendary. Please credit the original link when reposting: https://www.cnblogs.com/lugendary/p/16156358.html

Zhejiang Public Security filing (浙公网安备) No. 33010602011771
