二进制部署kubernetes集群-v1.15.2

实验环境说明

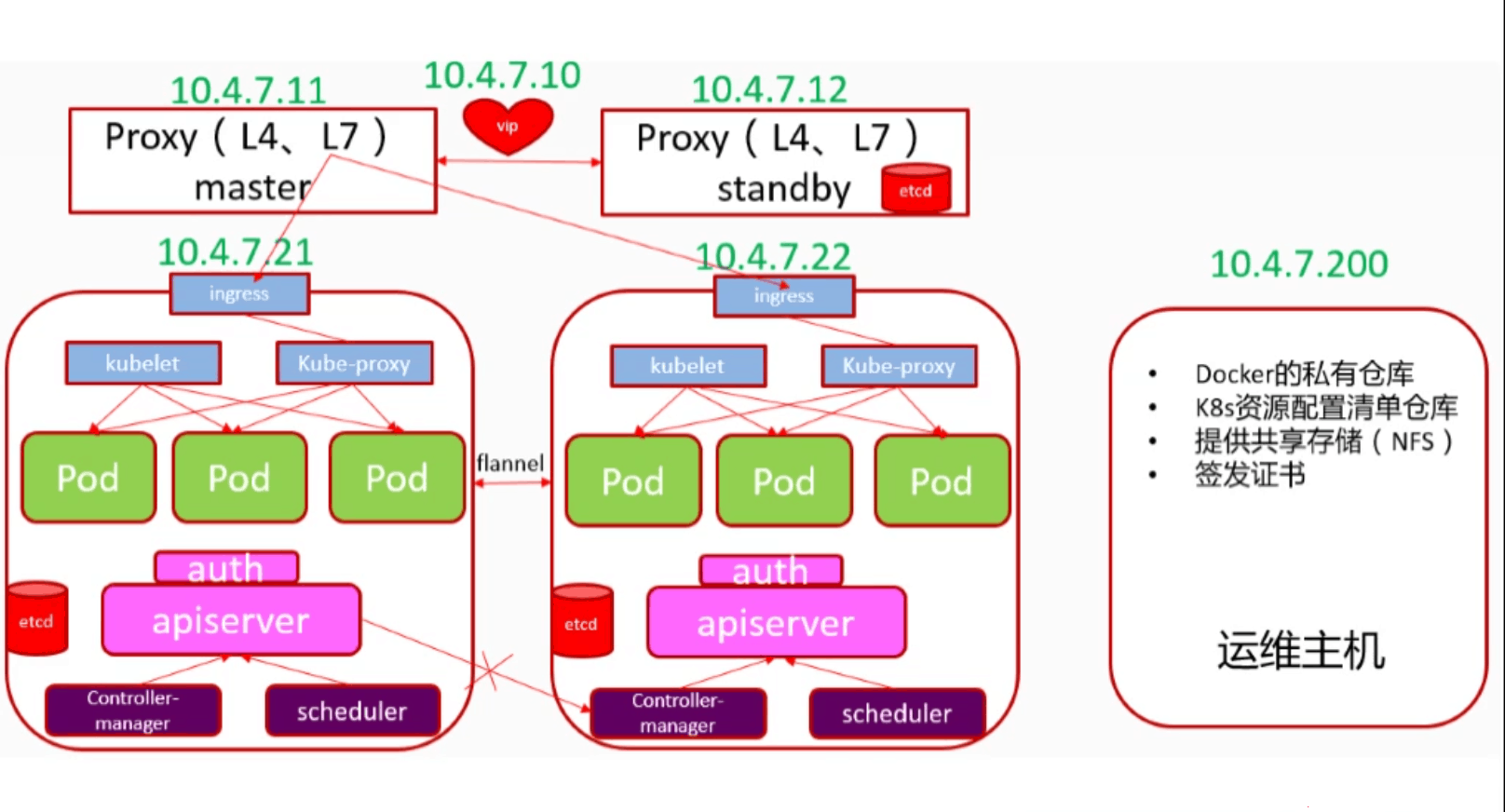

实验架构图

实验主机说明

| 主机名 | IP地址 | 角色 | 硬件配置 |

|---|---|---|---|

| zzgw7-200.host.com | 10.4.7.200 | k8s运维节点 | 2c2g |

| zzgw7-11.host.com | 10.4.7.11 | k8s代理节点 | 2c2g |

| zzgw7-12.host.com | 10.4.7.12 | k8s代理节点 | 2c2g |

| zzgw7-21.host.com | 10.4.7.21 | k8s计算节点 | 2c2g |

| zzgw7-22.host.com | 10.4.7.22 | k8s计算节点 | 2c2g |

System preparation

# Configure the YUM repositories

curl -o /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo

curl -o /etc/yum.repos.d/epel.repo http://mirrors.aliyun.com/repo/epel-7.repo

# Disable SELinux

sed -i 's/SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config

setenforce 0

# Disable firewalld

systemctl disable firewalld.service

systemctl stop firewalld.service

# Install the required tools

yum install -y wget net-tools telnet tree nmap sysstat lrzsz dos2unix bind-utils

Synchronize the clocks

ntpdate -u ntp.api.bz

Preparing the environment

Deploying the DNS service

Deployment node: zzgw7-11

Install the bind9 service

[root@zzgw7-11 ~]# yum install -y bind

Edit the configuration file

[root@zzgw7-11 ~]# vim /etc/named.conf

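# Only the lines that change inside the options block are shown

options {

listen-on port 53 { 10.4.7.11; }; # listen address

//listen-on-v6 port 53 { ::1; }; # commented out: do not listen on IPv6

allow-query { any; }; # who may query: everyone

forwarders { 10.4.7.254; }; # upstream DNS server (added line)

recursion yes;

dnssec-enable no;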

Check the configuration file

[root@zzgw7-11 ~]# named-checkconf

# No output means the configuration file is valid

Edit the zone configuration file

Add the host domain host.com and the business domain od.com

[root@zzgw7-11 ~]# vim /etc/named.rfc1912.zones

# Append the following to the end of the file

zone "host.com" IN {

type master;

file "host.com.zone";

allow-update { 10.4.7.11; };

};

zone "od.com" IN {

type master;

file "od.com.zone";

allow-update { 10.4.7.11; };

};

Add the zone data files

Host domain zone file

[root@zzgw7-11 ~]# vim /var/named/host.com.zone

$ORIGIN host.com.

$TTL 600 ; 10minutes

@ IN SOA dns.host.com. dnsadmin.host.com. (

        2019122301 ; serial

        10800 ; refresh (3 hours)

        900 ; retry (15 minutes)

        604800 ; expire (1 week)

        86400 ; minimum (1 day)

        )

        NS dns.host.com.

$TTL 60 ; 1 minute

dns A 10.4.7.11

zzgw7-11 A 10.4.7.11

zzgw7-12 A 10.4.7.12

zzgw7-21 A 10.4.7.21

zzgw7-22 A 10.4.7.22

zzgw7-200 A 10.4.7.200

Business domain zone file

[root@zzgw7-11 ~]# cat /var/named/od.com.zone

$ORIGIN od.com.

$TTL 600 ; 10minutes

@ IN SOA dns.od.com. dnsadmin.od.com. (

        2019122301 ; serial

        10800 ; refresh (3 hours)

        900 ; retry (15 minutes)

        604800 ; expire (1 week)

        86400 ; minimum (1 day)

        )

        NS dns.od.com.

$TTL 60 ; 1 minute

dns A 10.4.7.11

Start the bind9 service

[root@zzgw7-11 ~]# systemctl start named

[root@zzgw7-11 ~]# systemctl enable named

[root@zzgw7-11 ~]# netstat -lntup|grep -w 53

tcp 0 0 10.4.7.11:53 0.0.0.0:* LISTEN 2510/named

tcp6 0 0 :::53 :::* LISTEN 2510/named

udp 0 0 10.4.7.11:53 0.0.0.0:* 2510/named

udp6 0 0 :::53 :::* 2510/named

Check that DNS works

[root@zzgw7-11 ~]# yum install -y bind-utils

[root@zzgw7-11 ~]# dig -t A zzgw7-21.host.com @10.4.7.11 +short

10.4.7.21

[root@zzgw7-11 ~]# dig -t A zzgw7-200.host.com @10.4.7.11 +short

10.4.7.200
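The two lookups above can be extended to every host in the table. A minimal sketch, assuming the naming convention from the host table (zzgw7-NN.host.com resolves to 10.4.7.NN); the helper name `host_to_ip` is hypothetical and not part of the original setup:

```shell
# Hypothetical helper: derive the expected IP from the hostname convention
# used in this guide (zzgw7-NN.host.com -> 10.4.7.NN).
host_to_ip() {
    short="${1%%.*}"      # strip the domain part: zzgw7-21.host.com -> zzgw7-21
    last="${short##*-}"   # keep the text after the last dash: 21
    echo "10.4.7.${last}"
}

# Print the expected name/address pair for every host in the table.
for h in zzgw7-11 zzgw7-12 zzgw7-21 zzgw7-22 zzgw7-200; do
    printf '%s.host.com %s\n' "$h" "$(host_to_ip "$h")"
done
```

On the DNS host, each printed address can then be compared with the result of `dig -t A <name> @10.4.7.11 +short`.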

Configure the DNS clients

Every host needs this change

[root@zzgw7-200 ~]# vim /etc/sysconfig/network-scripts/ifcfg-eth0

DNS1="10.4.7.11" # point DNS1 at the new DNS server

[root@zzgw7-200 ~]# systemctl restart network

[root@zzgw7-200 ~]# cat /etc/resolv.conf

# Generated by NetworkManager

search host.com

nameserver 10.4.7.11

Test access to the external network

[root@zzgw7-11 ~]# ping -w1 baidu.com

PING baidu.com (220.181.38.148) 56(84) bytes of data.

Test access to the internal network

[root@zzgw7-11 ~]# ping -w1 zzgw7-200

PING zzgw7-200.host.com (10.4.7.200) 56(84) bytes of data.

64 bytes from 10.4.7.200 (10.4.7.200): icmp_seq=1 ttl=64 time=0.895 ms

Setting up the certificate-signing environment

Deployment host: zzgw7-200

Install CFSSL

Certificate signing tool CFSSL: R1.2

wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64 -O /usr/bin/cfssl

wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64 -O /usr/bin/cfssl-json

wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64 -O /usr/bin/cfssl-certinfo

chmod +x /usr/bin/cfssl*

Create the JSON configuration file for the CA certificate signing request (CSR)

[root@zzgw7-200 ~]# cat /opt/certs/ca-csr.json

{

"CN": "kubernetes-ca",

"hosts": [

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "beijing",

"L": "beijing",

"O": "od",

"OU": "ops"

}

],

"ca": {

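"expiry": "175200h"

}

}

expiry: validity period of the CA certificate. JSON does not allow comments, so this note is kept outside the file.

CN: Common Name. Browsers use this field to verify that a site is legitimate, so it usually holds the domain name. This field is critical.

C: Country

ST: State or province

L: Locality or city

O: Organization Name, the company name

OU: Organizational Unit Name, the department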
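For reference, the expiry of 175200h in ca-csr.json can be converted into more familiar units. A small sketch using shell arithmetic, ignoring leap days:

```shell
# Convert the CA expiry from hours to days and approximate years.
expiry_hours=175200
expiry_days=$((expiry_hours / 24))
expiry_years=$((expiry_days / 365))
echo "${expiry_hours}h = ${expiry_days} days = about ${expiry_years} years"
```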

Generate the CA certificate and private key

[root@zzgw7-200 ~]# cd /opt/certs/

[root@zzgw7-200 certs]# cfssl gencert -initca ca-csr.json |cfssl-json -bare ca

[root@zzgw7-200 certs]# ll

total 16

-rw-r--r-- 1 root root 1001 Mar 28 20:39 ca.csr

-rw-r--r-- 1 root root 332 Mar 28 20:36 ca-csr.json

-rw------- 1 root root 1675 Mar 28 20:39 ca-key.pem # root CA private key

-rw-r--r-- 1 root root 1354 Mar 28 20:39 ca.pem # root CA certificate

Deploying the docker environment

Deployment hosts: zzgw7-200, zzgw7-21, zzgw7-22.

zzgw7-200 is used as the example here

Install docker

[root@zzgw7-200 certs]# curl -fsSL https://get.docker.com|bash -s docker --mirror Aliyun

Configure docker

[root@zzgw7-200 certs]# mkdir -p /etc/docker /data/docker

[root@zzgw7-200 certs]# cat /etc/docker/daemon.json

{

"graph": "/data/docker",

"storage-driver": "overlay",

"insecure-registries": ["registry.access.redhat.com","quay.io","harbor.od.com"],

"bip": "172.7.200.1/24",

"exec-opts": ["native.cgroupdriver=systemd"],

"live-restore": true

}

################## Configuration notes ##################

# bip must follow the IP of the host.

# zzgw7-21.host.com bip 172.7.21.1/24

# zzgw7-22.host.com bip 172.7.22.1/24

# zzgw7-200.host.com bip 172.7.200.1/24

###########################################
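The bip values in the note follow a simple pattern: the last octet of the host IP becomes the third octet of the bridge network. A minimal sketch of that mapping, assuming the 10.4.7.X to 172.7.X.1/24 convention shown above; `bip_for_host` is a hypothetical helper name:

```shell
# Hypothetical helper: build the docker bridge address from the host IP
# (10.4.7.X -> 172.7.X.1/24).
bip_for_host() {
    rest="${1#*.*.}"        # drop the first two octets: 10.4.7.21 -> 7.21
    third="${rest%%.*}"     # 7
    fourth="${rest#*.}"     # 21
    echo "172.${third}.${fourth}.1/24"
}

for ip in 10.4.7.21 10.4.7.22 10.4.7.200; do
    echo "$ip -> $(bip_for_host "$ip")"
done
```

Keeping the host octet visible in the container network makes it easy to tell from a container IP which host it runs on.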

Start docker

[root@zzgw7-200 certs]# systemctl enable docker

[root@zzgw7-200 certs]# systemctl start docker

[root@zzgw7-200 certs]# systemctl status docker

Deploying the private docker registry harbor

Deployment host: zzgw7-200

Download and unpack the software

harbor GitHub repository: https://github.com/goharbor/harbor

[root@zzgw7-200 sotf]# tar xf harbor-offline-installer-v1.8.3.tgz -C /opt/

[root@zzgw7-200 sotf]# mv /opt/harbor/ /opt/harbor-v1.8.3

[root@zzgw7-200 sotf]# ln -s /opt/harbor-v1.8.3/ /opt/harbor

Edit the harbor configuration file

[root@zzgw7-200 ~]# egrep -nv '^$|#' /opt/harbor/harbor.yml

5:hostname: harbor.od.com # access harbor by domain name

10: port: 180 # service port changed to avoid a conflict

27:harbor_admin_password: Harbor12345 # harbor login password

35:data_volume: /data/harbor # harbor data directory

82: location: /data/harbor/logs # harbor log directory

Install docker-compose

[root@zzgw7-200 ~]# yum install -y python3-pip

[root@zzgw7-200 ~]# pip3 install -i https://pypi.tuna.tsinghua.edu.cn/simple pip -U

[root@zzgw7-200 ~]# pip3 install docker-compose -i https://pypi.tuna.tsinghua.edu.cn/simple

[root@zzgw7-200 sotf]# docker-compose --version

docker-compose version 1.25.4, build unknown

Install Harbor

[root@zzgw7-200 sotf]# cd /opt/harbor

[root@zzgw7-200 harbor]# ./install.sh

Check the Harbor startup status

[root@zzgw7-200 harbor]# docker-compose ps

Name Command State Ports

------------------------------------------------------------------------------------------------------

harbor-core /harbor/start.sh Up (health: starting)

harbor-db /entrypoint.sh postgres Up (health: starting) 5432/tcp

harbor-jobservice /harbor/start.sh Up

harbor-log /bin/sh -c /usr/local/bin/ ... Up (health: starting) 127.0.0.1:1514->10514/tcp

harbor-portal nginx -g daemon off; Up (health: starting) 80/tcp

nginx nginx -g daemon off; Up (health: starting) 0.0.0.0:180->80/tcp

redis docker-entrypoint.sh redis ... Up 6379/tcp

registry /entrypoint.sh /etc/regist ... Up (health: starting) 5000/tcp

registryctl /harbor/start.sh Up (health: starting)

Configure internal DNS resolution for harbor

DNS host: zzgw7-11

[root@zzgw7-11 ~]# vim /var/named/od.com.zone

$ORIGIN od.com.

$TTL 600 ; 10minutes

@ IN SOA dns.od.com. dnsadmin.od.com. (

        2019122302 ; serial (incremented: the serial must increase on every change)

        10800 ; refresh (3 hours)

        900 ; retry (15 minutes)

        604800 ; expire (1 week)

        86400 ; minimum (1 day)

        )

        NS dns.od.com.

$TTL 60 ; 1 minute

dns A 10.4.7.11

harbor A 10.4.7.200 ; A record added for harbor

Check DNS resolution

[root@zzgw7-11 ~]# systemctl restart named

[root@zzgw7-11 ~]# dig -t A harbor.od.com +short

10.4.7.200
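Since the serial has to increase with every edit of a zone file, the next value can be computed rather than typed by hand. A minimal sketch, assuming the YYYYMMDDNN serial format used in this guide; the function name `next_serial` is hypothetical:

```shell
# Hypothetical helper: compute the next zone serial in YYYYMMDDNN form.
# $1 = current serial, $2 = today's date as YYYYMMDD.
# A serial from an earlier day restarts at <today>01, otherwise it is
# incremented by one so that it always moves forward.
next_serial() {
    current_day="${1%??}"     # drop the two-digit counter: 2019122301 -> 20191223
    if [ "$current_day" -lt "$2" ]; then
        echo "${2}01"
    else
        echo $(($1 + 1))
    fi
}

next_serial 2019122301 20191223    # same day: prints 2019122302
next_serial 2019122301 20191224    # new day:  prints 2019122401
```

After changing the serial, named still has to be restarted or reloaded as shown above for the new record to be served.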

Configure nginx as a proxy for harbor

Install nginx

[root@zzgw7-200 harbor]# yum install -y nginx

Configure nginx

[root@zzgw7-200 harbor]# vim /etc/nginx/conf.d/harbor.od.com.conf

server {

listen 80;

server_name harbor.od.com;

client_max_body_size 1000m;

location / {

proxy_pass http://127.0.0.1:180;

}

}

Start nginx

[root@zzgw7-200 harbor]# nginx -t

nginx: the configuration file /etc/nginx/nginx.conf syntax is ok

nginx: configuration file /etc/nginx/nginx.conf test is successful

[root@zzgw7-200 harbor]# systemctl start nginx

[root@zzgw7-200 harbor]# systemctl enable nginx

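Log in through the web UI

Default user: admin. Default password: Harbor12345

The password can be changed in harbor.yml

Create a new project

Test an image upload

1. Pull a test image and tag it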

[root@zzgw7-200 ~]# docker pull nginx:1.7.9

[root@zzgw7-200 ~]# docker images |grep '^nginx'

nginx 1.7.9 84581e99d807 5 years ago 91.6MB

[root@zzgw7-200 harbor]# docker tag 84581e99d807 harbor.od.com/public/nginx:v1.7.9

2. Log in to the harbor registry

[root@zzgw7-200 harbor]# docker login harbor.od.com

Username: admin # user name

Password: # login password

WARNING! Your password will be stored unencrypted in /root/.docker/config.json.

Configure a credential helper to remove this warning. See

https://docs.docker.com/engine/reference/commandline/login/#credentials-store

Login Succeeded

3. Push the image

[root@zzgw7-200 harbor]# docker push harbor.od.com/public/nginx:v1.7.9

The push refers to repository [harbor.od.com/public/nginx]

5f70bf18a086: Pushed

4b26ab29a475: Pushed

ccb1d68e3fb7: Pushed

e387107e2065: Pushed

63bf84221cce: Pushed

e02dce553481: Pushed

dea2e4984e29: Pushed

v1.7.9: digest: sha256:b1f5935eb2e9e2ae89c0b3e2e148c19068d91ca502e857052f14db230443e4c2 size: 3012

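4. Check the result in the web UI

Deploying the Master components

Deploying the etcd service

etcd cluster plan

| Hostname | IP address | Role |
|---|---|---|
| zzgw7-12.host.com | 10.4.7.12 | etcd leader |
| zzgw7-21.host.com | 10.4.7.21 | etcd follower |
| zzgw7-22.host.com | 10.4.7.22 | etcd follower |

Note: this guide uses zzgw7-12.host.com as the example; the other two hosts are deployed the same way.

Issue the etcd certificate

Create it on host zzgw7-200

Create the signing config file based on the root certificate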

[root@zzgw7-200 certs]# cat ca-config.json

{

"signing": {

"default": {

"expiry": "175200h"

},

"profiles": {

"server": {

"expiry": "175200h",

"usages": [

"signing",

"key encipherment",

"server auth"

]

},

"client": {

"expiry": "175200h",

"usages": [

"signing",

"key encipherment",

"client auth"

]

},

"peer": {

"expiry": "175200h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

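Certificate types

client certificate: used by a client so that the server can authenticate it, for example etcdctl, etcd proxy, fleetctl, or the docker client

server certificate: used by a server so that clients can verify its identity, for example the docker daemon or kube-apiserver

peer certificate: used in both directions, for communication between etcd cluster members

Create the JSON configuration file for the certificate signing request (CSR). The hosts section should list every host that might become an etcd node.

[root@zzgw7-200 certs]# cat etcd-peer-csr.json

{

"CN": "k8s-etcd",

"hosts": [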

"10.4.7.11",

"10.4.7.12",

"10.4.7.21",

"10.4.7.22"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "beijing",

"L": "beijing",

"O": "od",

"OU": "ops"

}

]

}

Generate the certificate

[root@zzgw7-200 certs]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=peer etcd-peer-csr.json|cfssl-json -bare etcd-peer

[root@zzgw7-200 certs]# ls |grep etcd

etcd-peer.csr

etcd-peer-csr.json

etcd-peer-key.pem

etcd-peer.pem

Create the etcd user

[root@zzgw7-12 ~]# useradd -M -s /sbin/nologin etcd

[root@zzgw7-12 ~]# id etcd

uid=1000(etcd) gid=1000(etcd) groups=1000(etcd)

Install the etcd service

[root@zzgw7-12 ~]# mkdir -p /data/sotf/

[root@zzgw7-12 ~]# cd /data/sotf/

[root@zzgw7-12 sotf]# wget https://github.com/etcd-io/etcd/releases/download/v3.1.20/etcd-v3.1.20-linux-amd64.tar.gz

[root@zzgw7-12 sotf]# tar xf etcd-v3.1.20-linux-amd64.tar.gz -C /opt/

[root@zzgw7-12 sotf]# mv /opt/etcd-v3.1.20-linux-amd64/ /opt/etcd-v3.1.20

[root@zzgw7-12 sotf]# ln -s /opt/etcd-v3.1.20/ /opt/etcd

Create the required directories

[root@zzgw7-12 ~]# mkdir -p /opt/etcd/certs # etcd certificates

[root@zzgw7-12 ~]# mkdir -p /data/etcd # etcd data

[root@zzgw7-12 ~]# mkdir -p /data/logs/etcd-server # etcd logs

[root@zzgw7-12 ~]# chown -R etcd:etcd /data/etcd /data/logs/etcd-server/

Copy the certificate and private key files

[root@zzgw7-12 ~]# cd /opt/etcd/certs/

[root@zzgw7-12 certs]# scp zzgw7-200:/opt/certs/ca.pem .

[root@zzgw7-12 certs]# scp zzgw7-200:/opt/certs/etcd-peer.pem .

[root@zzgw7-12 certs]# scp zzgw7-200:/opt/certs/etcd-peer-key.pem .

[root@zzgw7-12 certs]# ls

ca.pem etcd-peer-key.pem etcd-peer.pem

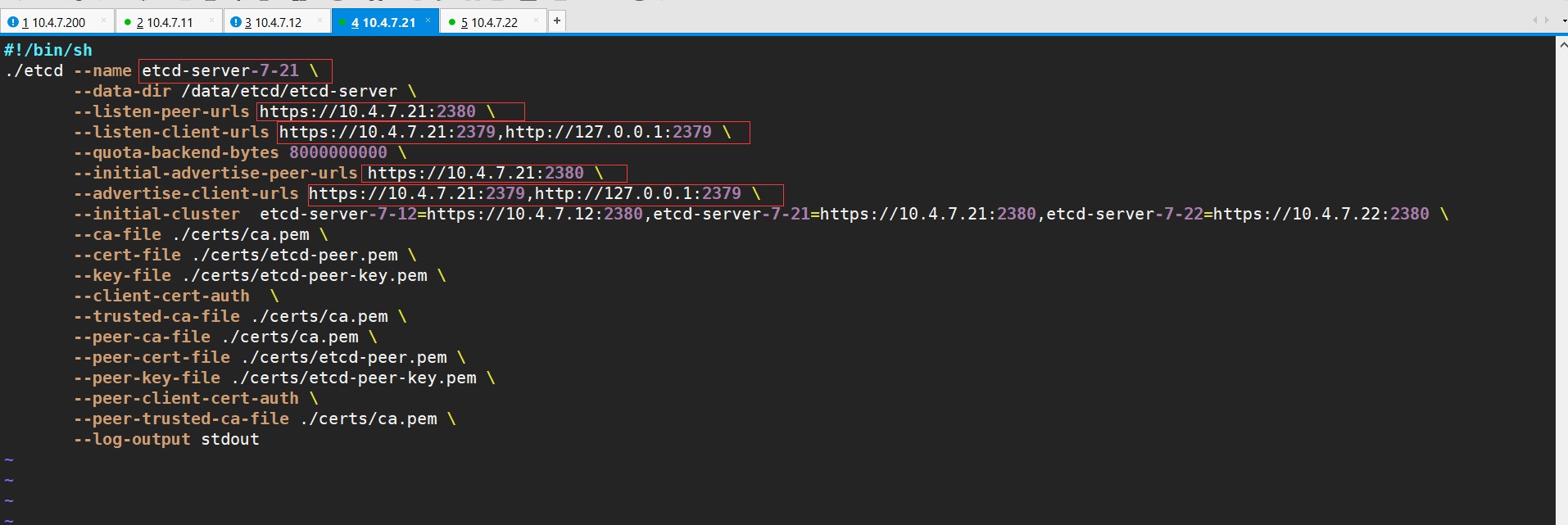

Create the etcd startup script

[root@zzgw7-12 certs]# cat /opt/etcd/etcd-server-startup.sh

#!/bin/sh

./etcd --name etcd-server-7-12 \

--data-dir /data/etcd/etcd-server \

--listen-peer-urls https://10.4.7.12:2380 \

--listen-client-urls https://10.4.7.12:2379,http://127.0.0.1:2379 \

--quota-backend-bytes 8000000000 \

--initial-advertise-peer-urls https://10.4.7.12:2380 \

--advertise-client-urls https://10.4.7.12:2379,http://127.0.0.1:2379 \

--initial-cluster etcd-server-7-12=https://10.4.7.12:2380,etcd-server-7-21=https://10.4.7.21:2380,etcd-server-7-22=https://10.4.7.22:2380 \

--ca-file ./certs/ca.pem \

--cert-file ./certs/etcd-peer.pem \

--key-file ./certs/etcd-peer-key.pem \

--client-cert-auth \

--trusted-ca-file ./certs/ca.pem \

--peer-ca-file ./certs/ca.pem \

--peer-cert-file ./certs/etcd-peer.pem \

--peer-key-file ./certs/etcd-peer-key.pem \

--peer-client-cert-auth \

--peer-trusted-ca-file ./certs/ca.pem \

--log-output stdout

######################################

# Flags that differ on each etcd node (they follow the host IP):

# --name etcd-server-7-12

# --listen-peer-urls

# --listen-client-urls

# --initial-advertise-peer-urls

# --advertise-client-urls

######################################
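The per-node flags listed above all derive from the node's IP address. A minimal sketch that prints them for a given IP, assuming the etcd-server-7-NN naming used in this guide; `etcd_node_flags` is a hypothetical helper and the output still has to be merged into the startup script by hand:

```shell
# Hypothetical helper: print the etcd flags that change per node.
# $1 = IP address of the node, for example 10.4.7.21.
etcd_node_flags() {
    node_ip="$1"
    last_octet="${node_ip##*.}"    # 10.4.7.21 -> 21
    cat <<EOF
--name etcd-server-7-${last_octet}
--listen-peer-urls https://${node_ip}:2380
--listen-client-urls https://${node_ip}:2379,http://127.0.0.1:2379
--initial-advertise-peer-urls https://${node_ip}:2380
--advertise-client-urls https://${node_ip}:2379,http://127.0.0.1:2379
EOF
}

etcd_node_flags 10.4.7.21
```

The `--initial-cluster` flag is deliberately left out because it is identical on all three nodes.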

Adjust permissions

[root@zzgw7-12 certs]# chmod +x /opt/etcd/etcd-server-startup.sh

[root@zzgw7-12 certs]# chown etcd:etcd /opt/etcd-v3.1.20/ -R

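Install supervisor

Supervisor is a client/server process manager written in Python for Linux/Unix systems; it does not support Windows. It makes it easy to monitor, start, stop, and restart one or more processes. When a process managed by Supervisor is killed unexpectedly, supervisord notices and restarts it automatically, which gives simple automatic process recovery.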

[root@zzgw7-12 certs]# yum install -y supervisor

[root@zzgw7-12 certs]# systemctl start supervisord.service

[root@zzgw7-12 certs]# systemctl enable supervisord.service

Create the startup configuration for etcd-server

[root@zzgw7-12 certs]# cat /etc/supervisord.d/etcd-server.ini

[program:etcd-server-7-12] ; change per host

command=/opt/etcd/etcd-server-startup.sh ; the program (relative uses PATH, can take args)

numprocs=1 ; number of processes copies to start (def 1)

directory=/opt/etcd ; directory to cwd to before exec (def no cwd)

autostart=true ; start at supervisord start (default: true)

autorestart=true ; restart at unexpected quit (default: true)

startsecs=30 ; number of secs prog must stay running (def. 1)

startretries=3 ; max # of serial start failures (default 3)

exitcodes=0,2 ; 'expected' exit codes for process (default 0,2)

stopsignal=QUIT ; signal used to kill process (default TERM)

stopwaitsecs=10 ; max num secs to wait b4 SIGKILL (default 10)

user=etcd ; setuid to this UNIX account to run the program

redirect_stderr=true ; redirect proc stderr to stdout (default false)

stdout_logfile=/data/logs/etcd-server/etcd.stdout.log ; stdout log path, NONE for none; default AUTO

stdout_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stdout_logfile_backups=4 ; # of stdout logfile backups (default 10)

stdout_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stdout_events_enabled=false ; emit events on stdout writes (default false)

stderr_logfile=/data/logs/etcd-server/etcd.stderr.log ; stderr log path, NONE for none; default AUTO

stderr_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stderr_logfile_backups=4 ; # of stderr logfile backups (default 10)

stderr_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stderr_events_enabled=false ; emit events on stderr writes (default false)

Start etcd

[root@zzgw7-12 certs]# supervisorctl update

etcd-server-7-12: added process group

[root@zzgw7-12 certs]# supervisorctl status

etcd-server-7-12 RUNNING pid 17477, uptime 0:01:54

[root@zzgw7-12 opt]# netstat -luntp|grep etcd

tcp 0 0 10.4.7.12:2379 0.0.0.0:* LISTEN 17478/./etcd

tcp 0 0 127.0.0.1:2379 0.0.0.0:* LISTEN 17478/./etcd

tcp 0 0 10.4.7.12:2380 0.0.0.0:* LISTEN 17478/./etcd

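Install, start, and check the remaining cluster nodes

The steps are the same as above; the main difference is in two files:

1. The IP addresses in /opt/etcd/etcd-server-startup.sh

2. /etc/supervisord.d/etcd-server.ini

Note: changing the program label in the supervisord ini file only makes the hosts easier to tell apart, which is good practice in production. Leaving it unchanged does not prevent startup.

Check the cluster status

On any node

# Check cluster health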

[root@zzgw7-22 opt]# /opt/etcd/etcdctl cluster-health

member 988139385f78284 is healthy: got healthy result from http://127.0.0.1:2379

member 5a0ef2a004fc4349 is healthy: got healthy result from http://127.0.0.1:2379

member f4a0cb0a765574a8 is healthy: got healthy result from http://127.0.0.1:2379

cluster is healthy

# List the etcd cluster members

[root@zzgw7-22 opt]# /opt/etcd/etcdctl member list

988139385f78284: name=etcd-server-7-22 peerURLs=https://10.4.7.22:2380 clientURLs=http://127.0.0.1:2379,https://10.4.7.22:2379 isLeader=false

5a0ef2a004fc4349: name=etcd-server-7-21 peerURLs=https://10.4.7.21:2380 clientURLs=http://127.0.0.1:2379,https://10.4.7.21:2379 isLeader=false

f4a0cb0a765574a8: name=etcd-server-7-12 peerURLs=https://10.4.7.12:2380 clientURLs=http://127.0.0.1:2379,https://10.4.7.12:2379 isLeader=true
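In the member list, the current leader is the line that carries isLeader=true. A minimal sketch that extracts the leader's name from output in the format shown above; `find_leader` is a hypothetical helper, and the sample lines are copied from this guide:

```shell
# Hypothetical helper: read `etcdctl member list` output on stdin and
# print the name of the member marked isLeader=true.
find_leader() {
    awk '/isLeader=true/ {
        for (i = 1; i <= NF; i++)
            if ($i ~ /^name=/) { sub(/^name=/, "", $i); print $i }
    }'
}

find_leader <<'EOF'
988139385f78284: name=etcd-server-7-22 peerURLs=https://10.4.7.22:2380 clientURLs=http://127.0.0.1:2379,https://10.4.7.22:2379 isLeader=false
5a0ef2a004fc4349: name=etcd-server-7-21 peerURLs=https://10.4.7.21:2380 clientURLs=http://127.0.0.1:2379,https://10.4.7.21:2379 isLeader=false
f4a0cb0a765574a8: name=etcd-server-7-12 peerURLs=https://10.4.7.12:2380 clientURLs=http://127.0.0.1:2379,https://10.4.7.12:2379 isLeader=true
EOF
```

On a cluster node the same function can be fed directly: `/opt/etcd/etcdctl member list | find_leader`.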

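Deploying the kube-apiserver service

Cluster plan

| Hostname | IP address | Role |
|---|---|---|
| zzgw7-21.host.com | 10.4.7.21 | kube-apiserver |
| zzgw7-22.host.com | 10.4.7.22 | kube-apiserver |
| zzgw7-11.host.com | 10.4.7.11 | Layer 4 load balancer |
| zzgw7-12.host.com | 10.4.7.12 | Layer 4 load balancer |

Here 10.4.7.11 and 10.4.7.12 run nginx as layer 4 load balancers, and keepalived provides the VIP 10.4.7.10 in front of the two kube-apiserver instances for high availability.

zzgw7-21 is used as the example here; the other compute node is deployed the same way.

Issue the client certificate

Operating host: zzgw7-200

1. Create the JSON configuration file for the certificate signing request (CSR)

[root@zzgw7-200 certs]# cat /opt/certs/client-csr.json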

{

"CN": "k8s-node",

"hosts": [

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "beijing",

"L": "beijing",

"O": "od",

"OU": "ops"

}

]

}

2. Generate the client certificate and private key

[root@zzgw7-200 certs]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=client client-csr.json |cfssl-json -bare client

3. Check the client certificate and private key

[root@zzgw7-200 certs]# ls |grep client

client.csr

client-csr.json

client-key.pem

client.pem

Issue the apiserver certificate

1. Create the JSON configuration file for the certificate signing request (CSR)

[root@zzgw7-200 certs]# vim /opt/certs/apiserver-csr.json

{

"CN": "apiserver",

"hosts": [

"127.0.0.1",

"192.168.0.1",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local",

"10.4.7.10",

"10.4.7.21",

"10.4.7.22",

"10.4.7.23"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "beijing",

"L": "beijing",

"O": "od",

"OU": "ops"

}

]

}

2. Generate the kube-apiserver certificate and private key

[root@zzgw7-200 certs]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=server apiserver-csr.json |cfssl-json -bare apiserver

3. Check the kube-apiserver certificate and private key

[root@zzgw7-200 certs]# ls |grep apiserver

apiserver.csr

apiserver-csr.json

apiserver-key.pem

apiserver.pem

Download the software, unpack it, and create a symlink

Download page: https://github.com/kubernetes/kubernetes/releases

[root@zzgw7-21 sotf]# tar xf kubernetes-server-linux-amd64.tar.gz -C /opt/

[root@zzgw7-21 sotf]# mv /opt/kubernetes/ /opt/kubernetes-v1.15.2

[root@zzgw7-21 sotf]# ln -s /opt/kubernetes-v1.15.2/ /opt/kubernetes

# Remove the unneeded source archive and the *_tag and *.tar files under bin. This guide does not deploy with kubeadm, so these files are not required.

[root@zzgw7-21 sotf]# cd /opt/kubernetes

[root@zzgw7-21 kubernetes]# rm -rf kubernetes-src.tar.gz

[root@zzgw7-21 kubernetes]# cd server/bin/

[root@zzgw7-21 bin]# rm -rf *.tar

[root@zzgw7-21 bin]# rm -rf *_tag

# Only the following files need to remain

[root@zzgw7-21 kubernetes]# tree

.

├── addons

├── LICENSES

└── server

    └── bin

        ├── apiextensions-apiserver

        ├── cloud-controller-manager

        ├── hyperkube

        ├── kubeadm

        ├── kube-apiserver

        ├── kube-controller-manager

        ├── kubectl

        ├── kubelet

        ├── kube-proxy

        ├── kube-scheduler

        └── mounter

Copy the certificates

[root@zzgw7-21 kubernetes]# mkdir -p /opt/kubernetes/server/bin/certs/

[root@zzgw7-21 kubernetes]# cd /opt/kubernetes/server/bin/certs/

[root@zzgw7-21 certs]# scp zzgw7-200:/opt/certs/apiserver.pem .

[root@zzgw7-21 certs]# scp zzgw7-200:/opt/certs/apiserver-key.pem .

[root@zzgw7-21 certs]# scp zzgw7-200:/opt/certs/client-key.pem .

[root@zzgw7-21 certs]# scp zzgw7-200:/opt/certs/client.pem .

[root@zzgw7-21 certs]# scp zzgw7-200:/opt/certs/ca.pem .

[root@zzgw7-21 certs]# scp zzgw7-200:/opt/certs/ca-key.pem .

[root@zzgw7-21 certs]# ll

total 24

-rw------- 1 root root 1675 Mar 29 01:08 apiserver-key.pem

-rw-r--r-- 1 root root 1598 Mar 29 01:07 apiserver.pem

-rw------- 1 root root 1675 Mar 29 01:37 ca-key.pem

-rw-r--r-- 1 root root 1354 Mar 29 01:36 ca.pem

-rw------- 1 root root 1675 Mar 29 01:08 client-key.pem

-rw-r--r-- 1 root root 1371 Mar 29 01:09 client.pem

Create the configuration

This is the Kubernetes audit policy file, used for audit logging of API requests

[root@zzgw7-21 certs]# mkdir /opt/kubernetes/server/bin/conf

[root@zzgw7-21 certs]# cat /opt/kubernetes/server/bin/conf/audit.yaml

apiVersion: audit.k8s.io/v1beta1 # This is required.

kind: Policy

# Don't generate audit events for all requests in RequestReceived stage.

omitStages:

  - "RequestReceived"

rules:

  # Log pod changes at RequestResponse level

  - level: RequestResponse

    resources:

    - group: ""

      # Resource "pods" doesn't match requests to any subresource of pods,

      # which is consistent with the RBAC policy.

      resources: ["pods"]

  # Log "pods/log", "pods/status" at Metadata level

  - level: Metadata

    resources:

    - group: ""

      resources: ["pods/log", "pods/status"]

  # Don't log requests to a configmap called "controller-leader"

  - level: None

    resources:

    - group: ""

      resources: ["configmaps"]

      resourceNames: ["controller-leader"]

  # Don't log watch requests by the "system:kube-proxy" on endpoints or services

  - level: None

    users: ["system:kube-proxy"]

    verbs: ["watch"]

    resources:

    - group: "" # core API group

      resources: ["endpoints", "services"]

  # Don't log authenticated requests to certain non-resource URL paths.

  - level: None

    userGroups: ["system:authenticated"]

    nonResourceURLs:

    - "/api*" # Wildcard matching.

    - "/version"

  # Log the request body of configmap changes in kube-system.

  - level: Request

    resources:

    - group: "" # core API group

      resources: ["configmaps"]

    # This rule only applies to resources in the "kube-system" namespace.

    # The empty string "" can be used to select non-namespaced resources.

    namespaces: ["kube-system"]

  # Log configmap and secret changes in all other namespaces at the Metadata level.

  - level: Metadata

    resources:

    - group: "" # core API group

      resources: ["secrets", "configmaps"]

  # Log all other resources in core and extensions at the Request level.

  - level: Request

    resources:

    - group: "" # core API group

    - group: "extensions" # Version of group should NOT be included.

  # A catch-all rule to log all other requests at the Metadata level.

  - level: Metadata

    # Long-running requests like watches that fall under this rule will not

    # generate an audit event in RequestReceived.

    omitStages:

      - "RequestReceived"

Create the startup script

[root@zzgw7-21 bin]# vim /opt/kubernetes/server/bin/kube-apiserver.sh

#!/bin/bash

./kube-apiserver \

--apiserver-count 2 \

--audit-log-path /data/logs/kubernetes/kube-apiserver/audit-log \

--audit-policy-file ./conf/audit.yaml \

--authorization-mode RBAC \

--client-ca-file ./certs/ca.pem \

--requestheader-client-ca-file ./certs/ca.pem \

--enable-admission-plugins NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,MutatingAdmissionWebhook,ValidatingAdmissionWebhook,ResourceQuota \

--etcd-cafile ./certs/ca.pem \

--etcd-certfile ./certs/client.pem \

--etcd-keyfile ./certs/client-key.pem \

--etcd-servers https://10.4.7.12:2379,https://10.4.7.21:2379,https://10.4.7.22:2379 \

--service-account-key-file ./certs/ca-key.pem \

--service-cluster-ip-range 192.168.0.0/16 \

--service-node-port-range 3000-29999 \

--target-ram-mb=1024 \

--kubelet-client-certificate ./certs/client.pem \

--kubelet-client-key ./certs/client-key.pem \

--log-dir /data/logs/kubernetes/kube-apiserver \

--tls-cert-file ./certs/apiserver.pem \

--tls-private-key-file ./certs/apiserver-key.pem \

--v 2

####################### Flag notes #######################

# --apiserver-count 2 : number of apiserver instances

# --audit-log-path : where the audit log is written

# --audit-policy-file : audit policy file

# --authorization-mode RBAC : role-based access control

# Full flag reference: ./kube-apiserver --help

#######################################################
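The apiserver certificate issued earlier lists 192.168.0.1 in its hosts because the built-in `kubernetes` Service receives the first address of `--service-cluster-ip-range`. A minimal sketch of that derivation, assuming a range whose network address ends in an octet below 255; `first_service_ip` is a hypothetical helper:

```shell
# Hypothetical helper: print the first usable address of a service CIDR.
# Simplification: only the last octet of the network address is incremented.
first_service_ip() {
    network="${1%/*}"          # 192.168.0.0/16 -> 192.168.0.0
    prefix="${network%.*}"     # 192.168.0
    last="${network##*.}"      # 0
    echo "${prefix}.$((last + 1))"
}

first_service_ip 192.168.0.0/16
```

If the service range is ever changed, the matching address has to be present in the hosts list of apiserver-csr.json before the certificate is issued.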

Adjust permissions and create directories

[root@zzgw7-21 bin]# chmod +x kube-apiserver.sh

[root@zzgw7-21 bin]# mkdir -p /data/logs/kubernetes/kube-apiserver

Create the supervisor configuration

[root@zzgw7-21 bin]# vi /etc/supervisord.d/kube-apiserver.ini

[program:kube-apiserver-7-21]

command=/opt/kubernetes/server/bin/kube-apiserver.sh ; the program (relative uses PATH, can take args)

numprocs=1 ; number of processes copies to start (def 1)

directory=/opt/kubernetes/server/bin ; directory to cwd to before exec (def no cwd)

autostart=true ; start at supervisord start (default: true)

autorestart=true ; restart at unexpected quit (default: true)

startsecs=30 ; number of secs prog must stay running (def. 1)

startretries=3 ; max # of serial start failures (default 3)

exitcodes=0,2 ; 'expected' exit codes for process (default 0,2)

stopsignal=QUIT ; signal used to kill process (default TERM)

stopwaitsecs=10 ; max num secs to wait b4 SIGKILL (default 10)

user=root ; setuid to this UNIX account to run the program

redirect_stderr=true ; redirect proc stderr to stdout (default false)

stdout_logfile=/data/logs/kubernetes/kube-apiserver/apiserver.stdout.log ; stdout log path, NONE for none; default AUTO

stdout_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stdout_logfile_backups=4 ; # of stdout logfile backups (default 10)

stdout_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stdout_events_enabled=false ; emit events on stdout writes (default false)

Start the service and check it

[root@zzgw7-21 bin]# supervisorctl update

kube-apiserver-7-21: added process group

[root@zzgw7-21 certs]# supervisorctl status

etcd-server-7-21 RUNNING pid 17862, uptime 1:32:00

kube-apiserver-7-21 RUNNING pid 18689, uptime 0:02:38

[root@zzgw7-21 certs]# netstat -lntup|grep kube-api

tcp 0 0 127.0.0.1:8080 0.0.0.0:* LISTEN 18690/./kube-apiser

tcp6 0 0 :::6443 :::* LISTEN 18690/./kube-apiser

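Install, start, and check all nodes

zzgw7-22 is set up almost identically

In /etc/supervisord.d/kube-apiserver.ini

change the label to [program:kube-apiserver-7-22]

Configure the layer 4 reverse proxy

Operating hosts: zzgw7-11, zzgw7-12.

The two nodes are configured identically except for keepalived.conf.

Deploy nginx

Install nginx

yum install -y nginx

Configure nginx

[root@zzgw7-11 ~]# vim /etc/nginx/nginx.conf

# Append to the end of the file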

stream {

upstream kube-apiserver {

server 10.4.7.21:6443 max_fails=3 fail_timeout=30s;

server 10.4.7.22:6443 max_fails=3 fail_timeout=30s;

}

server {

listen 7443;

proxy_connect_timeout 2s;

proxy_timeout 900s;

proxy_pass kube-apiserver;

}

}

Start nginx

nginx -t

systemctl start nginx

systemctl enable nginx
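When more kube-apiserver nodes are added later, the upstream section of the stream block grows by one server line per node. A minimal sketch that prints this section for a list of IPs, reusing the port and failure settings from the configuration above; `render_upstream` is a hypothetical helper and its output still has to be pasted into nginx.conf by hand:

```shell
# Hypothetical helper: print an nginx upstream block for the given apiserver IPs.
render_upstream() {
    echo "upstream kube-apiserver {"
    for ip in "$@"; do
        echo "    server ${ip}:6443 max_fails=3 fail_timeout=30s;"
    done
    echo "}"
}

render_upstream 10.4.7.21 10.4.7.22
```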

Deploy keepalived

Install keepalived

[root@zzgw7-11 ~]# yum install -y keepalived

Write the monitoring script

[root@zzgw7-11 ~]# cat /etc/keepalived/check_port.sh

#!/bin/bash

# keepalived port check script

# Usage, in the keepalived configuration file:

# vrrp_script check_port { # define a vrrp_script check

# script "/etc/keepalived/check_port.sh 6379" # port to check

# interval 2 # how often the check runs, in seconds

# }

CHK_PORT=$1

if [ -n "$CHK_PORT" ]; then

# Count listening TCP sockets whose local address ends with :PORT

PORT_PROCESS=$(ss -lnt | awk -v port="$CHK_PORT" '$4 ~ (":" port "$")' | wc -l)

if [ "$PORT_PROCESS" -eq 0 ]; then

echo "Port $CHK_PORT Is Not Used,End."

exit 1

fi

else

echo "Check Port Cant Be Empty!"

exit 1

fi

Make the script executable

[root@zzgw7-11 ~]# chmod +x /etc/keepalived/check_port.sh
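A port check based on a plain substring search can report a false positive, for example port 17443 when 7443 is asked for. A minimal sketch of an exact match on the local address column, run against sample `ss -lnt` style text so it can be tried without any listener; `port_is_listening` is a hypothetical helper and the sample lines are made up for illustration:

```shell
# Hypothetical helper: read `ss -lnt` style output on stdin and succeed
# only if column 4 (Local Address:Port) ends with ":<port>".
port_is_listening() {
    awk -v port="$1" '$4 ~ (":" port "$") { found = 1 } END { exit !found }'
}

# Illustrative sample output, not taken from a real host.
sample='State      Recv-Q Send-Q Local Address:Port   Peer Address:Port
LISTEN     0      128          *:17443              *:*
LISTEN     0      128          *:22                 *:*'

for port in 7443 17443; do
    if printf '%s\n' "$sample" | port_is_listening "$port"; then
        echo "port $port is listening"
    else
        echo "port $port is not listening"
    fi
done
```

On a real host the function would be fed from `ss -lnt` directly.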

Configure keepalived (master)

[root@zzgw7-11 ~]# vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id 10.4.7.11

}

vrrp_script chk_nginx {

script "/etc/keepalived/check_port.sh 7443"

interval 2

weight -20

}

vrrp_instance VI_1 {

state MASTER

interface eth0

virtual_router_id 251

priority 100

advert_int 1

mcast_src_ip 10.4.7.11

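nopreempt # non-preemptive: once the VIP has moved away, it does not move back even after the master recovers. Per the keepalived documentation, nopreempt only takes effect when the initial state is BACKUP.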

authentication {

auth_type PASS

auth_pass 11111111

}

track_script {

chk_nginx

}

virtual_ipaddress {

10.4.7.10/24 dev eth0

}

}

Configure keepalived (backup)

[root@zzgw7-12 opt]# vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id 10.4.7.12

}

vrrp_script chk_nginx {

script "/etc/keepalived/check_port.sh 7443"

interval 2

weight -20

}

vrrp_instance VI_1 {

state BACKUP

interface eth0

virtual_router_id 251

mcast_src_ip 10.4.7.12

priority 90

advert_int 1

authentication {

auth_type PASS

auth_pass 11111111

}

track_script {

chk_nginx

}

virtual_ipaddress {

10.4.7.10/24 dev eth0

}

}

Start keepalived

systemctl start keepalived.service

systemctl enable keepalived.service
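The failover behavior follows from the priority and weight values in the two configurations above: when check_port.sh fails, keepalived lowers the node's priority by the (negative) weight. A small sketch of the arithmetic, using the values from this guide:

```shell
# Values taken from the master and backup keepalived.conf in this guide.
master_priority=100
backup_priority=90
weight=-20

# Effective priority of the master while its nginx check is failing.
effective=$((master_priority + weight))
echo "master priority with failed check: $effective"

if [ "$effective" -lt "$backup_priority" ]; then
    echo "backup ($backup_priority) outranks master ($effective): VIP 10.4.7.10 moves to the backup"
else
    echo "master still outranks backup: VIP stays"
fi
```

A weight whose absolute value is smaller than the priority gap (10 here) would never trigger a failover, so the two settings have to be chosen together.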

Deploying the kube-controller-manager service

Deployment hosts

| Hostname | IP address | Role |
|---|---|---|
| zzgw7-21.host.com | 10.4.7.21 | controller-manager |
| zzgw7-22.host.com | 10.4.7.22 | controller-manager |

Note: this guide uses zzgw7-21.host.com as the example; the other compute node is deployed the same way.

Create the startup script

[root@zzgw7-21 ~]# vim /opt/kubernetes/server/bin/kube-controller-manager.sh

#!/bin/sh

./kube-controller-manager \

--cluster-cidr 172.7.0.0/16 \

--leader-elect true \

--log-dir /data/logs/kubernetes/kube-controller-manager \

--master http://127.0.0.1:8080 \

--service-account-private-key-file ./certs/ca-key.pem \

--service-cluster-ip-range 192.168.0.0/16 \

--root-ca-file ./certs/ca.pem \

--v 2

Set permissions and create directories

[root@zzgw7-21 ~]# chmod +x /opt/kubernetes/server/bin/kube-controller-manager.sh

[root@zzgw7-21 ~]# mkdir -p /data/logs/kubernetes/kube-controller-manager

Create the supervisor configuration

[root@zzgw7-21 ~]# cat /etc/supervisord.d/kube-controller-manager.ini

[program:kube-controller-manager-7-21]

command=/opt/kubernetes/server/bin/kube-controller-manager.sh ; the program (relative uses PATH, can take args)

numprocs=1 ; number of processes copies to start (def 1)

directory=/opt/kubernetes/server/bin ; directory to cwd to before exec (def no cwd)

autostart=true ; start at supervisord start (default: true)

autorestart=true ; restart at unexpected quit (default: true)

startsecs=22 ; number of secs prog must stay running (def. 1)

startretries=3 ; max # of serial start failures (default 3)

exitcodes=0,2 ; 'expected' exit codes for process (default 0,2)

stopsignal=QUIT ; signal used to kill process (default TERM)

stopwaitsecs=10 ; max num secs to wait b4 SIGKILL (default 10)

user=root ; setuid to this UNIX account to run the program

redirect_stderr=false ; redirect proc stderr to stdout (default false)

stdout_logfile=/data/logs/kubernetes/kube-controller-manager/controll.stdout.log ; stdout log path, NONE for none; default AUTO

stdout_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stdout_logfile_backups=4 ; # of stdout logfile backups (default 10)

stdout_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stdout_events_enabled=false ; emit events on stdout writes (default false)

stderr_logfile=/data/logs/kubernetes/kube-controller-manager/controll.stderr.log ; stderr log path, NONE for none; default AUTO

stderr_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stderr_logfile_backups=4 ; # of stderr logfile backups (default 10)

stderr_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stderr_events_enabled=false ; emit events on stderr writes (default false)

Start the service and check it

[root@zzgw7-21 ~]# supervisorctl update

kube-controller-manager-7-21: added process group

[root@zzgw7-21 ~]# supervisorctl status

etcd-server-7-21 RUNNING pid 1456, uptime 0:01:13

kube-apiserver-7-21 RUNNING pid 1458, uptime 0:01:13

kube-controller-manager-7-21 RUNNING pid 1455, uptime 0:01:13

Install, start, and check all planned hosts

zzgw7-22 is set up almost identically

In /etc/supervisord.d/kube-controller-manager.ini

change the label to [program:kube-controller-manager-7-22]

Deploying the kube-scheduler service

Deployment hosts

| Hostname | IP address | Role |
|---|---|---|
| zzgw7-21.host.com | 10.4.7.21 | kube-scheduler |
| zzgw7-22.host.com | 10.4.7.22 | kube-scheduler |

Note: this guide uses zzgw7-21 as the example; the other compute node is deployed the same way.

Create the startup script

[root@zzgw7-21 ~]# vi /opt/kubernetes/server/bin/kube-scheduler.sh

#!/bin/sh

./kube-scheduler \

--leader-elect \

--log-dir /data/logs/kubernetes/kube-scheduler \

--master http://127.0.0.1:8080 \

--v 2

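If the control plane components ran on different hosts, they would need certificates (client-key.pem and client.pem) to authenticate. In this lab they run on the same host and reach the apiserver at http://127.0.0.1:8080, so no certificate is needed here.

Set permissions and create directories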

[root@zzgw7-21 ~]# chmod +x /opt/kubernetes/server/bin/kube-scheduler.sh

[root@zzgw7-21 ~]# mkdir -p /data/logs/kubernetes/kube-scheduler

Create the supervisor configuration

[root@zzgw7-21 ~]# vim /etc/supervisord.d/kube-scheduler.ini

[program:kube-scheduler-7-21]

command=/opt/kubernetes/server/bin/kube-scheduler.sh ; the program (relative uses PATH, can take args)

numprocs=1 ; number of processes copies to start (def 1)

directory=/opt/kubernetes/server/bin ; directory to cwd to before exec (def no cwd)

autostart=true ; start at supervisord start (default: true)

autorestart=true ; restart at unexpected quit (default: true)

startsecs=22 ; number of secs prog must stay running (def. 1)

startretries=3 ; max # of serial start failures (default 3)

exitcodes=0,2 ; 'expected' exit codes for process (default 0,2)

stopsignal=QUIT ; signal used to kill process (default TERM)

stopwaitsecs=10 ; max num secs to wait b4 SIGKILL (default 10)

user=root ; setuid to this UNIX account to run the program

redirect_stderr=false ; redirect proc stderr to stdout (default false)

stdout_logfile=/data/logs/kubernetes/kube-scheduler/scheduler.stdout.log ; stdout log path, NONE for none; default AUTO

stdout_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stdout_logfile_backups=4 ; # of stdout logfile backups (default 10)

stdout_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stdout_events_enabled=false ; emit events on stdout writes (default false)

stderr_logfile=/data/logs/kubernetes/kube-scheduler/scheduler.stderr.log ; stderr log path, NONE for none; default AUTO

stderr_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stderr_logfile_backups=4 ; # of stderr logfile backups (default 10)

stderr_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stderr_events_enabled=false ; emit events on stderr writes (default false)

Start the service and check its status

[root@zzgw7-21 ~]# supervisorctl update

kube-scheduler-7-21: added process group

[root@zzgw7-21 ~]# supervisorctl status

etcd-server-7-21 RUNNING pid 1456, uptime 0:14:56

kube-apiserver-7-21 RUNNING pid 1458, uptime 0:14:56

kube-controller-manager-7-21 RUNNING pid 1455, uptime 0:14:56

kube-scheduler-7-21 RUNNING pid 1741, uptime 0:00:45

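Deploy, start, and check the service on every planned cluster host

zzgw7-22 follows essentially the same steps as above.

/etc/supervisord.d/kube-scheduler.ini

Change the program tag to [program:kube-scheduler-7-22]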
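The only per-host difference in the supervisor file is the node suffix in the program tag. A minimal sketch of that substitution follows; `retag_program` is a hypothetical helper name, not part of supervisor, and it only rewrites the section header line.

```shell
#!/bin/sh
# retag_program OLD NEW
# Reads a supervisor .ini on stdin and rewrites the node suffix in the
# [program:<name>-OLD] header to NEW. All other lines pass through unchanged.
# Hypothetical helper for illustration only.
retag_program() {
    old="$1"
    new="$2"
    sed "s/^\[program:\(.*\)-${old}\]\$/[program:\1-${new}]/"
}

# Preview the header that zzgw7-22 needs, starting from the zzgw7-21 header.
printf '%s\n' '[program:kube-scheduler-7-21]' 'user=root' | retag_program 7-21 7-22
```

On a real host the same filter could be applied to a copy of the .ini file taken from zzgw7-21 before running `supervisorctl update`.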

Check the health of the control-plane components

# Create a symlink

[root@zzgw7-21 ~]# ln -s /opt/kubernetes/server/bin/kubectl /usr/bin/kubectl

# Check the status

[root@zzgw7-21 ~]# kubectl get cs

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-2 Healthy {"health": "true"}

etcd-1 Healthy {"health": "true"}

etcd-0 Healthy {"health": "true"}
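When this check is repeated often, it can help to print only the components that are not healthy. The sketch below assumes the column layout shown above (NAME first, STATUS second); `unhealthy_components` is a hypothetical helper name and the sample rows are made up for illustration.

```shell
#!/bin/sh
# unhealthy_components
# Reads `kubectl get cs` style output on stdin and prints the NAME of every
# row whose STATUS column is not "Healthy". The header row is skipped.
unhealthy_components() {
    awk 'NR > 1 && $2 != "Healthy" { print $1 }'
}

# Illustrative sample input; on a node you would instead run:
#   kubectl get cs | unhealthy_components
unhealthy_components <<'EOF'
NAME                 STATUS      MESSAGE   ERROR
scheduler            Healthy     ok
controller-manager   Unhealthy
etcd-0               Healthy     ok
EOF
```

An empty result means every listed component reported Healthy.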

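Deploy the Node components

Deploy the kubelet service

Deployment hosts

| Hostname | IP | Role |

|---|---|---|

| zzgw7-21.host.com | 10.4.7.21 | kubelet |

| zzgw7-22.host.com | 10.4.7.22 | kubelet |

Note: this guide uses zzgw7-21 as the example; the other compute node is installed and deployed the same way.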

Issue the kubelet certificate

Run on the ops host zzgw7-200

Create the JSON configuration file for the certificate signing request (CSR)

[root@zzgw7-200 ~]# cd /opt/certs/

[root@zzgw7-200 certs]# cat /opt/certs/kubelet-csr.json

{

"CN": "k8s-kubelet",

"hosts": [

"127.0.0.1",

"10.4.7.10",

"10.4.7.21",

"10.4.7.22",

"10.4.7.23",

"10.4.7.24",

"10.4.7.25",

"10.4.7.26",

"10.4.7.27",

"10.4.7.28"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "beijing",

"L": "beijing",

"O": "od",

"OU": "ops"

}

]

}
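The `hosts` list above names the addresses the kubelet certificate is issued for, so a node whose IP is missing from it would need a re-issued certificate. A small sketch for checking a CSR file before signing follows; `csr_lists_host` is a hypothetical helper and it does a plain text match on the quoted IP rather than parsing the JSON.

```shell
#!/bin/sh
# csr_lists_host FILE IP
# Succeeds (exit 0) when the quoted IP appears in FILE, e.g. in the "hosts"
# array of a cfssl CSR JSON. Matching the surrounding quotes prevents
# 10.4.7.2 from matching 10.4.7.21. Hypothetical helper for illustration.
csr_lists_host() {
    grep -Fq "\"$2\"" "$1"
}

# Self-contained example using a temporary file with a shortened hosts list.
tmp="$(mktemp)"
printf '%s\n' '{ "hosts": [ "127.0.0.1", "10.4.7.21", "10.4.7.22" ] }' > "$tmp"
if csr_lists_host "$tmp" 10.4.7.22; then
    echo "10.4.7.22 is listed"
fi
if ! csr_lists_host "$tmp" 10.4.7.2; then
    echo "10.4.7.2 is not listed"
fi
rm -f "$tmp"
```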

Generate the certificate and private key

[root@zzgw7-200 certs]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=server kubelet-csr.json | cfssl-json -bare kubelet

[root@zzgw7-200 certs]# ls |grep kubelet

kubelet.csr

kubelet-csr.json

kubelet-key.pem

kubelet.pem

Copy the kubelet certificate to each node

[root@zzgw7-21 ~]# cd /opt/kubernetes/server/bin/certs/

[root@zzgw7-21 certs]# scp zzgw7-200:/opt/certs/kubelet.pem .

[root@zzgw7-21 certs]# scp zzgw7-200:/opt/certs/kubelet-key.pem .

Create the configuration

This only needs to be done on one node.

set-cluster

[root@zzgw7-21 certs]# cd /opt/kubernetes/server/bin/conf/

[root@zzgw7-21 conf]# kubectl config set-cluster myk8s \

--certificate-authority=/opt/kubernetes/server/bin/certs/ca.pem \

--embed-certs=true \

--server=https://10.4.7.10:7443 \

--kubeconfig=kubelet.kubeconfig

# Output:

Cluster "myk8s" set.

set-credentials

[root@zzgw7-21 conf]# kubectl config set-credentials k8s-node \

--client-certificate=/opt/kubernetes/server/bin/certs/client.pem \

--client-key=/opt/kubernetes/server/bin/certs/client-key.pem \

--embed-certs=true \

--kubeconfig=kubelet.kubeconfig

# Output:

User "k8s-node" set.

set-context

[root@zzgw7-21 conf]# kubectl config set-context myk8s-context \

--cluster=myk8s \

--user=k8s-node \

--kubeconfig=kubelet.kubeconfig

# Output:

Context "myk8s-context" modified.

use-context

[root@zzgw7-21 conf]# kubectl config use-context myk8s-context --kubeconfig=kubelet.kubeconfig

# Output:

Switched to context "myk8s-context".

List the generated file

[root@zzgw7-21 conf]# ll

total 12

-rw-r--r-- 1 root root 2216 Mar 29 01:14 audit.yaml

-rw------- 1 root root 6215 Mar 29 16:45 kubelet.kubeconfig

Bind the cluster role to the user

This only needs to be done on one node.

1. Create the resource manifest

[root@zzgw7-21 conf]# cat k8s-node.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

  name: k8s-node

roleRef:

  apiGroup: rbac.authorization.k8s.io

  kind: ClusterRole

  name: system:node

subjects:

- apiGroup: rbac.authorization.k8s.io

  kind: User

  name: k8s-node

2. Create the ClusterRoleBinding from the manifest

[root@zzgw7-21 conf]# kubectl create -f k8s-node.yaml

// Output. Once created, the binding is stored in etcd.

clusterrolebinding.rbac.authorization.k8s.io/k8s-node created

3. Query the ClusterRoleBinding

[root@zzgw7-21 conf]# kubectl get clusterrolebinding k8s-node

NAME AGE

k8s-node 80s

4. Copy kubelet.kubeconfig to zzgw7-22

[root@zzgw7-21 conf]# scp kubelet.kubeconfig zzgw7-22:`pwd`

root@zzgw7-22's password:

kubelet.kubeconfig 100% 6215 5.8MB/s 00:00

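Prepare the pause base image

Host: zzgw7-200

1. Why is the pause base image needed?

Reason: kubelet uses the pause base image to bring up every pod on this machine. kubelet runs on the worker node and drives the docker engine on our behalf. Much like a sidecar, kubelet starts one small container before the business containers, and that container sets up the UTS, NET, and IPC namespaces for them. Because it claims these namespaces first, the pod's IP is already assigned before the business containers start.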
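The namespace sharing described above can be pictured with plain docker commands. The sketch below only prints the commands (a dry run); `pod_sandbox_cmds` and the container names are hypothetical, and kubelet does not literally run these lines. It assumes the pause image has already been pushed to harbor.od.com/public/pause:latest as done later in this section.

```shell
#!/bin/sh
# pod_sandbox_cmds POD IMAGE
# Prints (does not execute) two docker commands that mimic how a pod is
# assembled: first a pause container that owns the namespaces, then an
# application container that joins its network and IPC namespaces.
# Hypothetical illustration only.
pod_sandbox_cmds() {
    pod="$1"
    image="$2"
    echo "docker run -d --name ${pod}-pause harbor.od.com/public/pause:latest"
    echo "docker run -d --name ${pod}-app --net=container:${pod}-pause --ipc=container:${pod}-pause ${image}"
}

pod_sandbox_cmds demo harbor.od.com/public/nginx:v1.7.9
```

Because the application container joins the pause container's network namespace, both share one IP address, which is why the pod IP exists before the business container starts.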

2. Pull the pause image

[root@zzgw7-200 certs]# docker pull kubernetes/pause

3. Push it to the private docker registry (harbor)

# Look up the image ID

[root@zzgw7-200 certs]# docker images|grep pause

kubernetes/pause latest f9d5de079539 5 years ago 240kB

# Tag the image

[root@zzgw7-200 certs]# docker tag f9d5de079539 harbor.od.com/public/pause:latest

# Push to the harbor registry

[root@zzgw7-200 certs]# docker push harbor.od.com/public/pause:latest

Create the kubelet startup script

[root@zzgw7-21 conf]# vim /opt/kubernetes/server/bin/kubelet.sh

#!/bin/sh

# --anonymous-auth=false: anonymous access is not allowed.

# --cgroup-driver: must match the cgroup driver set in docker's daemon.json.

# --fail-swap-on=false: lets kubelet start even if swap is on; normally swap should be turned off.

# --hostname-override: this node's hostname.

# Comments are kept above the command because a comment line between backslash continuations would end the command early.

./kubelet \

--anonymous-auth=false \

--cgroup-driver systemd \

--cluster-dns 192.168.0.2 \

--cluster-domain cluster.local \

--runtime-cgroups=/systemd/system.slice \

--kubelet-cgroups=/systemd/system.slice \

--fail-swap-on="false" \

--client-ca-file ./certs/ca.pem \

--tls-cert-file ./certs/kubelet.pem \

--tls-private-key-file ./certs/kubelet-key.pem \

--hostname-override zzgw7-21.host.com \

--image-gc-high-threshold 20 \

--image-gc-low-threshold 10 \

--kubeconfig ./conf/kubelet.kubeconfig \

--log-dir /data/logs/kubernetes/kube-kubelet \

--pod-infra-container-image harbor.od.com/public/pause:latest \

--root-dir /data/kubelet

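Note: the kubelet startup script differs slightly per host; on the other nodes, change --hostname-override.

Make the script executable and create the log directory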
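Since `--hostname-override` is the only line that changes between nodes, the per-node script can be derived from the zzgw7-21 copy. The sketch below is one way to do that with `sed`; `set_hostname_override` is a hypothetical helper, and it assumes the flag and its value sit on one line separated by a space or `=`.

```shell
#!/bin/sh
# set_hostname_override NEW_HOSTNAME
# Reads a startup script on stdin and replaces the value that follows
# --hostname-override with NEW_HOSTNAME. Other lines pass through unchanged.
# Hypothetical helper for illustration only.
set_hostname_override() {
    sed "s/\(--hostname-override\)[ =][^ ]*/\1 $1/"
}

# Preview the line as it should look on zzgw7-22.
printf '%s\n' '--hostname-override zzgw7-21.host.com \' | set_hostname_override zzgw7-22.host.com
```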

[root@zzgw7-21 bin]# chmod +x /opt/kubernetes/server/bin/kubelet.sh

[root@zzgw7-21 bin]# mkdir -p /data/logs/kubernetes/kube-kubelet /data/kubelet

Create the supervisor configuration

[root@zzgw7-21 bin]# cat /etc/supervisord.d/kube-kubelet.ini

[program:kube-kubelet-7-21]

command=/opt/kubernetes/server/bin/kubelet.sh ; the program (relative uses PATH, can take args)

numprocs=1 ; number of processes copies to start (def 1)

directory=/opt/kubernetes/server/bin ; directory to cwd to before exec (def no cwd)

autostart=true ; start at supervisord start (default: true)

autorestart=true ; restart at unexpected quit (default: true)

startsecs=22 ; number of secs prog must stay running (def. 1)

startretries=3 ; max # of serial start failures (default 3)

exitcodes=0,2 ; 'expected' exit codes for process (default 0,2)

stopsignal=QUIT ; signal used to kill process (default TERM)

stopwaitsecs=10 ; max num secs to wait b4 SIGKILL (default 10)

user=root ; setuid to this UNIX account to run the program

redirect_stderr=false ; redirect proc stderr to stdout (default false)

stdout_logfile=/data/logs/kubernetes/kube-kubelet/kubelet.stdout.log ; stdout log path, NONE for none; default AUTO

stdout_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stdout_logfile_backups=4 ; # of stdout logfile backups (default 10)

stdout_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stdout_events_enabled=false ; emit events on stdout writes (default false)

stderr_logfile=/data/logs/kubernetes/kube-kubelet/kubelet.stderr.log ; stderr log path, NONE for none; default AUTO

stderr_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stderr_logfile_backups=4 ; # of stderr logfile backups (default 10)

stderr_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stderr_events_enabled=false ; emit events on stderr writes (default false)

Note: when deploying on the other hosts, remember to change the program tag.

Start the service and check its status

[root@zzgw7-21 bin]# supervisorctl update

kube-kubelet-7-21: added process group

[root@zzgw7-21 bin]# supervisorctl status

etcd-server-7-21 RUNNING pid 1456, uptime 1:24:18

kube-apiserver-7-21 RUNNING pid 1458, uptime 1:24:18

kube-controller-manager-7-21 RUNNING pid 1455, uptime 1:24:18

kube-kubelet-7-21 RUNNING pid 2116, uptime 0:01:38

kube-scheduler-7-21 RUNNING pid 2078, uptime 0:03:55

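Deploy, start, and check the service on every planned cluster host

The other nodes are similar; these files need small adjustments:

/opt/kubernetes/server/bin/kubelet.sh

/etc/supervisord.d/kube-kubelet.ini

Check the compute nodes in the cluster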

[root@zzgw7-22 certs]# kubectl get node

NAME STATUS ROLES AGE VERSION

zzgw7-21.host.com Ready <none> 9m v1.15.2

zzgw7-22.host.com Ready <none> 59s v1.15.2

Label the hosts with roles

Labels are one of Kubernetes' distinctive management features.

[root@zzgw7-22 certs]# kubectl label node zzgw7-21.host.com node-role.kubernetes.io/node=

// Output

node/zzgw7-21.host.com labeled

[root@zzgw7-22 certs]# kubectl get node

NAME STATUS ROLES AGE VERSION

zzgw7-21.host.com Ready node 11m v1.15.2

zzgw7-22.host.com Ready <none> 3m21s v1.15.2

[root@zzgw7-22 certs]# kubectl label node zzgw7-21.host.com node-role.kubernetes.io/master=

// Output

node/zzgw7-21.host.com labeled

[root@zzgw7-22 certs]# kubectl get node

NAME STATUS ROLES AGE VERSION

zzgw7-21.host.com Ready master,node 11m v1.15.2

zzgw7-22.host.com Ready <none> 3m51s v1.15.2

Deploy the kube-proxy service

Deployment hosts

| Hostname | Role | IP |

|---|---|---|

| zzgw7-21.host.com | kube-proxy | 10.4.7.21 |

| zzgw7-22.host.com | kube-proxy | 10.4.7.22 |

Note: this guide uses zzgw7-21 as the example; the other compute nodes are similar.

Issue the kube-proxy certificate

Run on the ops host zzgw7-200

Create the JSON configuration file for the certificate signing request (CSR)

[root@zzgw7-200 certs]# cd /opt/certs/

[root@zzgw7-200 certs]# vi kube-proxy-csr.json

{

"CN": "system:kube-proxy",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "beijing",

"L": "beijing",

"O": "od",

"OU": "ops"

}

]

}

Generate the kube-proxy certificate and private key

[root@zzgw7-200 certs]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=client kube-proxy-csr.json |cfssl-json -bare kube-proxy-client

Check the certificate and private key

[root@zzgw7-200 certs]# ll |grep proxy

-rw-r--r-- 1 root root 1005 Mar 29 17:46 kube-proxy-client.csr

-rw------- 1 root root 1675 Mar 29 17:46 kube-proxy-client-key.pem

-rw-r--r-- 1 root root 1383 Mar 29 17:46 kube-proxy-client.pem

-rw-r--r-- 1 root root 267 Mar 29 17:43 kube-proxy-csr.json

Copy the kube-proxy certificate to each node

[root@zzgw7-21 ~]# cd /opt/kubernetes/server/bin/certs/

[root@zzgw7-21 certs]# scp zzgw7-200:/opt/certs/kube-proxy-client.pem .

[root@zzgw7-21 certs]# scp zzgw7-200:/opt/certs/kube-proxy-client-key.pem .

Create the configuration

Do this on zzgw7-21 only, then copy the generated configuration file to each planned node.

1. Switch to the conf directory

[root@zzgw7-21 certs]# cd ../conf/

[root@zzgw7-21 conf]# pwd

/opt/kubernetes/server/bin/conf

set-cluster

kubectl config set-cluster myk8s \

--certificate-authority=/opt/kubernetes/server/bin/certs/ca.pem \

--embed-certs=true \

--server=https://10.4.7.10:7443 \

--kubeconfig=kube-proxy.kubeconfig

// Output

Cluster "myk8s" set.

set-credentials

kubectl config set-credentials kube-proxy \

--client-certificate=/opt/kubernetes/server/bin/certs/kube-proxy-client.pem \

--client-key=/opt/kubernetes/server/bin/certs/kube-proxy-client-key.pem \

--embed-certs=true \

--kubeconfig=kube-proxy.kubeconfig

// Output

User "kube-proxy" set.

set-context

kubectl config set-context myk8s-context \

--cluster=myk8s \

--user=kube-proxy \

--kubeconfig=kube-proxy.kubeconfig

// Output

Context "myk8s-context" created.

use-context

kubectl config use-context myk8s-context --kubeconfig=kube-proxy.kubeconfig

// Output

Switched to context "myk8s-context".

List the generated configuration file

[root@zzgw7-21 conf]# ll

total 24

-rw-r--r-- 1 root root 2216 Mar 29 01:14 audit.yaml

-rw-r--r-- 1 root root 258 Mar 29 16:52 k8s-node.yaml

-rw------- 1 root root 6215 Mar 29 16:45 kubelet.kubeconfig

-rw------- 1 root root 6235 Mar 29 17:57 kube-proxy.kubeconfig ## the newly generated file

Copy kube-proxy.kubeconfig to zzgw7-22

[root@zzgw7-21 conf]# scp kube-proxy.kubeconfig zzgw7-22:/opt/kubernetes/server/bin/conf/

root@zzgw7-22's password:

kube-proxy.kubeconfig 100% 6235 5.0MB/s 00:00

Configure ipvs forwarding

Check whether the ipvs modules are loaded

[root@zzgw7-21 conf]# lsmod |grep ip_vs # no output means the modules are not loaded

Load the ipvs modules

[root@zzgw7-21 conf]# vi /root/ipvs.sh

#!/bin/bash

ipvs_mods_dir="/usr/lib/modules/$(uname -r)/kernel/net/netfilter/ipvs"

for i in $(ls $ipvs_mods_dir|grep -o "^[^.]*")

do

/sbin/modinfo -F filename $i &>/dev/null

if [ $? -eq 0 ];then

/sbin/modprobe $i

fi

done

[root@zzgw7-21 conf]# sh /root/ipvs.sh

Verify that the ipvs modules are loaded

[root@zzgw7-21 conf]# lsmod |grep ip_vs

ip_vs_wrr 12697 0

ip_vs_wlc 12519 0

ip_vs_sh 12688 0

ip_vs_sed 12519 0

ip_vs_rr 12600 0

ip_vs_pe_sip 12740 0

nf_conntrack_sip 33780 1 ip_vs_pe_sip

ip_vs_nq 12516 0

ip_vs_lc 12516 0

ip_vs_lblcr 12922 0

ip_vs_lblc 12819 0

ip_vs_ftp 13079 0

ip_vs_dh 12688 0

ip_vs 145497 24 ip_vs_dh,ip_vs_lc,ip_vs_nq,ip_vs_rr,ip_vs_sh,ip_vs_ftp,ip_vs_sed,ip_vs_wlc,ip_vs_wrr,ip_vs_pe_sip,ip_vs_lblcr,ip_vs_lblc

nf_nat 26583 3 ip_vs_ftp,nf_nat_ipv4,nf_nat_masquerade_ipv4

nf_conntrack 139224 8 ip_vs,nf_nat,nf_nat_ipv4,xt_conntrack,nf_nat_masquerade_ipv4,nf_conntrack_netlink,nf_conntrack_sip,nf_conntrack_ipv4

libcrc32c 12644 4 xfs,ip_vs,nf_nat,nf_conntrack
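Reading the full `lsmod` listing by eye is error-prone, so a short filter that reports only missing modules can be handy. In the sketch below, `missing_ipvs_mods` is a hypothetical helper; which modules to require is an assumption (here `ip_vs`, plus `ip_vs_nq` because kube-proxy is started with `--ipvs-scheduler=nq` later in this section).

```shell
#!/bin/sh
# missing_ipvs_mods MODULE...
# Reads `lsmod` style output on stdin (module name in the first column) and
# prints every requested MODULE that does not appear in it.
# Hypothetical helper for illustration only.
missing_ipvs_mods() {
    loaded="$(awk '{ print $1 }')"
    for mod in "$@"; do
        printf '%s\n' "$loaded" | grep -Fqx "$mod" || echo "$mod"
    done
}

# Illustrative sample input; on a node you would instead run:
#   lsmod | missing_ipvs_mods ip_vs ip_vs_nq nf_conntrack
missing_ipvs_mods ip_vs ip_vs_nq nf_conntrack <<'EOF'
ip_vs_rr 12600 0
ip_vs 145497 2 ip_vs_rr
nf_conntrack 139224 1 ip_vs
EOF
```

With the sample input the helper reports `ip_vs_nq`, since that scheduler module is absent from the listing.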

Create the kube-proxy startup script

[root@zzgw7-21 conf]# cd /opt/kubernetes/server/bin/

[root@zzgw7-21 bin]# vim kube-proxy.sh

#!/bin/bash

./kube-proxy \

--cluster-cidr 172.7.0.0/16 \

--hostname-override zzgw7-21.host.com \

--proxy-mode=ipvs \

--ipvs-scheduler=nq \

--kubeconfig ./conf/kube-proxy.kubeconfig

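Note: when deploying on the other hosts, set --hostname-override to that host's hostname.

Check the configuration, make the script executable, and create the log directory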

[root@zzgw7-21 bin]# ll conf/|grep kube-proxy

-rw------- 1 root root 6235 Mar 29 17:57 kube-proxy.kubeconfig

[root@zzgw7-21 bin]# chmod +x kube-proxy.sh

[root@zzgw7-21 bin]# mkdir -p /data/logs/kubernetes/kube-proxy

Create the supervisor configuration

[root@zzgw7-21 bin]# cat /etc/supervisord.d/kube-proxy.ini

[program:kube-proxy-7-21]

command=/opt/kubernetes/server/bin/kube-proxy.sh ; the program (relative uses PATH, can take args)

numprocs=1 ; number of processes copies to start (def 1)

directory=/opt/kubernetes/server/bin ; directory to cwd to before exec (def no cwd)

autostart=true ; start at supervisord start (default: true)

autorestart=true ; restart at unexpected quit (default: true)

startsecs=22 ; number of secs prog must stay running (def. 1)

startretries=3 ; max # of serial start failures (default 3)

exitcodes=0,2 ; 'expected' exit codes for process (default 0,2)

stopsignal=QUIT ; signal used to kill process (default TERM)

stopwaitsecs=10 ; max num secs to wait b4 SIGKILL (default 10)

user=root ; setuid to this UNIX account to run the program

redirect_stderr=false ; redirect proc stderr to stdout (default false)

stdout_logfile=/data/logs/kubernetes/kube-proxy/proxy.stdout.log ; stdout log path, NONE for none; default AUTO

stdout_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stdout_logfile_backups=4 ; # of stdout logfile backups (default 10)

stdout_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stdout_events_enabled=false ; emit events on stdout writes (default false)

stderr_logfile=/data/logs/kubernetes/kube-proxy/proxy.stderr.log ; stderr log path, NONE for none; default AUTO

stderr_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stderr_logfile_backups=4 ; # of stderr logfile backups (default 10)

stderr_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stderr_events_enabled=false ; emit events on stderr writes (default false)

Note: when deploying on the other hosts, remember to change the program tag.

Start the service and check its status

[root@zzgw7-21 bin]# supervisorctl update

kube-proxy-7-21: added process group

[root@zzgw7-21 bin]# supervisorctl status

etcd-server-7-21 RUNNING pid 1456, uptime 2:10:21

kube-apiserver-7-21 RUNNING pid 1458, uptime 2:10:21

kube-controller-manager-7-21 RUNNING pid 1455, uptime 2:10:21

kube-kubelet-7-21 RUNNING pid 2116, uptime 0:47:41

kube-proxy-7-21 RUNNING pid 12875, uptime 0:00:33

kube-scheduler-7-21 RUNNING pid 2078, uptime 0:49:58

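Deploy, start, and check the service on every planned cluster host

zzgw7-22 follows essentially the same steps as above.

/etc/supervisord.d/kube-proxy.ini

Change the program tag to [program:kube-proxy-7-22]

/opt/kubernetes/server/bin/kube-proxy.sh

Change the flag to --hostname-override zzgw7-22.host.com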

Verify the kubernetes cluster

Create a resource manifest

[root@zzgw7-22 certs]# vim /root/nginx-ds.yml

apiVersion: extensions/v1beta1

kind: DaemonSet

metadata:

  name: nginx-ds

spec:

  template:

    metadata:

      labels:

        app: nginx-ds

    spec:

      containers:

      - name: my-nginx

        image: harbor.od.com/public/nginx:v1.7.9

        ports:

        - containerPort: 80

Apply the manifest and check the result

[root@zzgw7-22 certs]# kubectl create -f /root/nginx-ds.yml

daemonset.extensions/nginx-ds created

[root@zzgw7-22 certs]# kubectl get pod

NAME READY STATUS RESTARTS AGE

nginx-ds-5jsnc 1/1 Running 0 55s

nginx-ds-rtq7d 1/1 Running 0 55s

[root@zzgw7-22 certs]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-ds-5jsnc 1/1 Running 0 27m 172.7.22.2 zzgw7-22.host.com <none> <none>

nginx-ds-rtq7d 1/1 Running 0 27m 172.7.21.2 zzgw7-21.host.com <none> <none>

Test access to the pods

[root@zzgw7-22 certs]# curl -I 172.7.22.2

HTTP/1.1 200 OK

Server: nginx/1.7.9

Date: Sun, 29 Mar 2020 10:55:11 GMT

Content-Type: text/html

Content-Length: 612

Last-Modified: Tue, 23 Dec 2014 16:25:09 GMT

Connection: keep-alive

ETag: "54999765-264"

Accept-Ranges: bytes

[root@zzgw7-22 certs]# curl -I 172.7.21.2

curl: (7) Failed connect to 172.7.21.2:80; Connection timed out

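Problem: pods running on a different host cannot be reached.

Solution: use a CNI network plugin so that pods can communicate across hosts.

Deploy the add-ons

The Kubernetes CNI network plugin: Flannel

Deployment hosts

| Hostname | Service | IP |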

|---|---|---|

| zzgw7-21.host.com | flannel | 10.4.7.21 |

| zzgw7-22.host.com | flannel | 10.4.7.22 |

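Note: this guide uses zzgw7-21.host.com as the example; the other compute node is installed and deployed the same way.

Download the software, unpack it, and create a symlink

[root@zzgw7-21 ~]# wget https://github.com/coreos/flannel/releases/download/v0.11.0/flannel-v0.11.0-linux-amd64.tar.gz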

[root@zzgw7-21 ~]# mkdir /opt/flannel-v0.11.0

[root@zzgw7-21 ~]# tar xf flannel-v0.11.0-linux-amd64.tar.gz -C /opt/flannel-v0.11.0/

[root@zzgw7-21 ~]# ln -s /opt/flannel-v0.11.0/ /opt/flannel

Copy the certificates

[root@zzgw7-21 ~]# mkdir /opt/flannel/cert

[root@zzgw7-21 ~]# cd /opt/flannel/cert/

[root@zzgw7-21 cert]# scp zzgw7-200:/opt/certs/ca.pem .

[root@zzgw7-21 cert]# scp zzgw7-200:/opt/certs/client.pem .

[root@zzgw7-21 cert]# scp zzgw7-200:/opt/certs/client-key.pem .

[root@zzgw7-21 cert]# ll

total 12

-rw-r--r-- 1 root root 1354 Mar 29 19:39 ca.pem

-rw------- 1 root root 1675 Mar 29 19:40 client-key.pem

-rw-r--r-- 1 root root 1371 Mar 29 19:39 client.pem

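Create the configuration file

[root@zzgw7-21 cert]# cat /opt/flannel/subnet.env

FLANNEL_NETWORK=172.7.0.0/16 # IP range for pods

FLANNEL_SUBNET=172.7.21.1/24 # this host's pod subnet

FLANNEL_MTU=1500

FLANNEL_IPMASQ=false

Note: the configuration differs slightly on each host in the flannel cluster; FLANNEL_SUBNET must be changed per host. The file lives in /opt/flannel because flanneld.sh reads it as ./subnet.env from that directory.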
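In this lab the per-host subnet follows a simple pattern: host 10.4.7.21 uses 172.7.21.1/24 and host 10.4.7.22 uses 172.7.22.1/24. The sketch below derives the value from the host IP under that assumption; `flannel_subnet_for` and `render_subnet_env` are hypothetical helper names.

```shell
#!/bin/sh
# flannel_subnet_for HOST_IP
# Maps a host IP such as 10.4.7.22 to the pod subnet used in this lab
# (172.7.<last octet>.1/24). This naming pattern is specific to this setup.
flannel_subnet_for() {
    last_octet="${1##*.}"
    echo "172.7.${last_octet}.1/24"
}

# render_subnet_env HOST_IP
# Prints the four subnet.env lines for the given host.
render_subnet_env() {
    echo "FLANNEL_NETWORK=172.7.0.0/16"
    echo "FLANNEL_SUBNET=$(flannel_subnet_for "$1")"
    echo "FLANNEL_MTU=1500"
    echo "FLANNEL_IPMASQ=false"
}

# Preview the file content for zzgw7-22.
render_subnet_env 10.4.7.22
```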

Create the flanneld startup script

[root@zzgw7-21 cert]# vim /opt/flannel/flanneld.sh

#!/bin/sh

./flanneld \

--public-ip=10.4.7.21 \

--etcd-endpoints=https://10.4.7.12:2379,https://10.4.7.21:2379,https://10.4.7.22:2379 \

--etcd-keyfile=./cert/client-key.pem \

--etcd-certfile=./cert/client.pem \

--etcd-cafile=./cert/ca.pem \

--iface=eth0 \

--subnet-file=./subnet.env \

--healthz-port=2401

Make the script executable and create the log directory

[root@zzgw7-21 cert]# chmod +x /opt/flannel/flanneld.sh

[root@zzgw7-21 cert]# mkdir -p /data/logs/flanneld

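Configure etcd and add the host-gw backend

Before starting flannel, add the network configuration record to etcd.

# Create a symlink for etcdctl

[root@zzgw7-21 cert]# ln -s /opt/etcd/etcdctl /usr/bin/

# Write the record to etcd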

[root@zzgw7-21 cert]# etcdctl set /coreos.com/network/config '{"Network": "172.7.0.0/16", "Backend": {"Type": "host-gw"}}'

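// Output

{"Network": "172.7.0.0/16", "Backend": {"Type": "host-gw"}}

# Read it back

[root@zzgw7-21 cert]# etcdctl get /coreos.com/network/config

// Output

{"Network": "172.7.0.0/16", "Backend": {"Type": "host-gw"}}

host-gw: direct routing. Routes for the container network are written straight into each host's routing table. It only works when the hosts can reach each other directly at layer 2.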
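To make the host-gw idea concrete, the sketch below prints the kind of route a host would hold for a peer's pod subnet, with the peer's host IP as the gateway. `hostgw_route` is a hypothetical helper; it assumes this lab's subnet pattern (172.7.<last octet of host IP>.0/24) and the `eth0` interface used in flanneld.sh. It only prints text and does not change the routing table.

```shell
#!/bin/sh
# hostgw_route PEER_HOST_IP [DEV]
# Prints the route entry that sends traffic for the peer's pod subnet to the
# peer host itself. DEV defaults to eth0. Illustration only; nothing is applied.
hostgw_route() {
    peer_ip="$1"
    dev="${2:-eth0}"
    echo "172.7.${peer_ip##*.}.0/24 via ${peer_ip} dev ${dev}"
}

# On zzgw7-21, the route towards pods hosted on zzgw7-22 would look like:
hostgw_route 10.4.7.22
```

After flanneld is running, comparing this line with the output of `ip route` on the node is one way to confirm the backend took effect.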

Create the supervisor configuration

[root@zzgw7-21 cert]# vim /etc/supervisord.d/flannel.ini

[program:flanneld-7-21]

command=/opt/flannel/flanneld.sh ; the program (relative uses PATH, can take args)

numprocs=1 ; number of processes copies to start (def 1)

directory=/opt/flannel ; directory to cwd to before exec (def no cwd)

autostart=true ; start at supervisord start (default: true)

autorestart=true ; restart at unexpected quit (default: true)

startsecs=30 ; number of secs prog must stay running (def. 1)

startretries=3 ; max # of serial start failures (default 3)

exitcodes=0,2 ; 'expected' exit codes for process (default 0,2)

stopsignal=QUIT ; signal used to kill process (default TERM)

stopwaitsecs=10 ; max num secs to wait b4 SIGKILL (default 10)

user=root ; setuid to this UNIX account to run the program

redirect_stderr=true ; redirect proc stderr to stdout (default false)

stdout_logfile=/data/logs/flanneld/flanneld.stdout.log ; stdout log path, NONE for none; default AUTO

stdout_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stdout_logfile_backups=4 ; # of stdout logfile backups (default 10)

stdout_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stdout_events_enabled=false ; emit events on stdout writes (default false)

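stopasgroup=true ; default false; send the stop signal to the whole process group, including child processes

killasgroup=true ; default false; send the kill signal to the whole process group, including child processes

Start the service and check its status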

[root@zzgw7-21 cert]# supervisorctl update

flanneld-7-21: added process group

[root@zzgw7-21 cert]# supervisorctl status

etcd-server-7-21 RUNNING pid 1456, uptime 3:50:11

flanneld-7-21 RUNNING pid 41119, uptime 0:00:33

kube-apiserver-7-21 RUNNING pid 1458, uptime 3:50:11

kube-controller-manager-7-21 RUNNING pid 1455, uptime 3:50:11

kube-kubelet-7-21 RUNNING pid 2116, uptime 2:27:31

kube-proxy-7-21 RUNNING pid 12875, uptime 1:40:23

kube-scheduler-7-21 RUNNING pid 2078, uptime 2:29:48

Deploy, start, and check the service on every planned cluster node

The other nodes are set up much like zzgw7-21; adjust the following files:

# subnet.env

FLANNEL_SUBNET=172.7.22.1/24

# flanneld.sh

--public-ip=10.4.7.22

# /etc/supervisord.d/flannel.ini

[program:flanneld-7-22]

Verify network connectivity between pods in the cluster

[root@zzgw7-21 ~]# ping 172.7.21.2

PING 172.7.21.2 (172.7.21.2) 56(84) bytes of data.

64 bytes from 172.7.21.2: icmp_seq=1 ttl=64 time=2.00 ms

64 bytes from 172.7.21.2: icmp_seq=2 ttl=64 time=0.036 ms

[root@zzgw7-21 ~]# ping 172.7.22.2

PING 172.7.22.2 (172.7.22.2) 56(84) bytes of data.

64 bytes from 172.7.22.2: icmp_seq=1 ttl=63 time=0.301 ms

64 bytes from 172.7.22.2: icmp_seq=2 ttl=63 time=0.367 ms

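Optimize the iptables rules on each compute node

Note: the iptables rules differ slightly per host; adjust them when running on the other compute nodes.

Install iptables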

[root@zzgw7-21 ~]# yum install -y iptables-services

[root@zzgw7-21 ~]# systemctl start iptables

[root@zzgw7-21 ~]# systemctl enable iptables

Optimize the SNAT rule so that pod-to-pod traffic between compute nodes is no longer masqueraded

[root@zzgw7-21 ~]# iptables -t nat -D POSTROUTING -s 172.7.21.0/24 ! -o docker0 -j MASQUERADE

[root@zzgw7-21 ~]# iptables -t nat -I POSTROUTING -s 172.7.21.0/24 ! -d 172.7.0.0/16 ! -o docker0 -j MASQUERADE

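On host 10.4.7.21, SNAT is applied only to packets whose source is a docker IP in 172.7.21.0/24, whose destination is not in 172.7.0.0/16, and which do not leave through the docker0 bridge.

Delete the reject-all rules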
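Because the source subnet in these rules changes per node, it can be useful to generate the command pair rather than edit it by hand. The sketch below prints the two commands for a given node number without executing them; `snat_rules` is a hypothetical helper and it assumes the lab's subnet pattern 172.7.<node>.0/24.

```shell
#!/bin/sh
# snat_rules NODE
# Prints (does not run) the iptables command pair for the node whose pod
# subnet is 172.7.NODE.0/24: first delete the broad MASQUERADE rule, then
# insert the narrower rule that excludes destinations inside 172.7.0.0/16.
snat_rules() {
    node="$1"
    echo "iptables -t nat -D POSTROUTING -s 172.7.${node}.0/24 ! -o docker0 -j MASQUERADE"
    echo "iptables -t nat -I POSTROUTING -s 172.7.${node}.0/24 ! -d 172.7.0.0/16 ! -o docker0 -j MASQUERADE"
}

# Commands for zzgw7-22; review them before running anything on a node.
snat_rules 22
```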

[root@zzgw7-21 ~]# iptables-save|grep -i reject

-A INPUT -j REJECT --reject-with icmp-host-prohibited

-A FORWARD -j REJECT --reject-with icmp-host-prohibited

[root@zzgw7-21 ~]# iptables -t filter -D INPUT -j REJECT --reject-with icmp-host-prohibited

[root@zzgw7-21 ~]# iptables -t filter -D FORWARD -j REJECT --reject-with icmp-host-prohibited

Save the iptables rules on each compute node

[root@zzgw7-21 ~]# iptables-save >/etc/sysconfig/iptables

[root@zzgw7-21 ~]# service iptables save

iptables: Saving firewall rules to /etc/sysconfig/iptables:[ OK ]

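[root@zzgw7-21 ~]# systemctl restart docker

Inside the container network, pods talk to each other openly; no masquerading is needed.

With direct container-to-container access, the receiving side records the container's IP address rather than the host's IP address.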

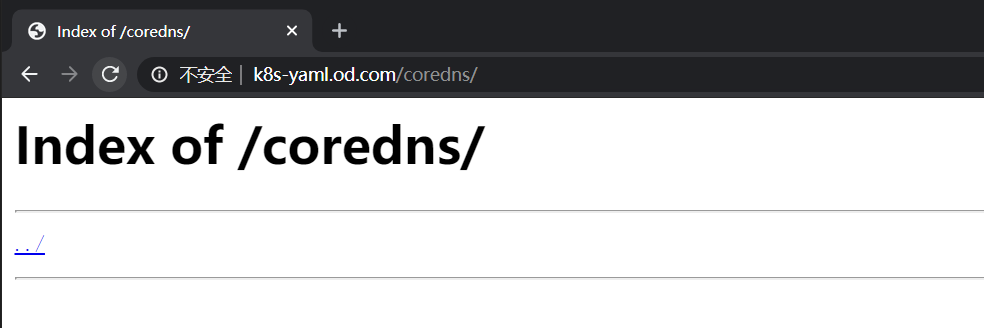

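Internal HTTP service for Kubernetes resource manifests

On the ops host zzgw7-200.host.com, configure an nginx virtual host that serves as the single access point for Kubernetes resource manifests.

Configure nginx (zzgw7-200)

[root@zzgw7-200 ~]# vim /etc/nginx/conf.d/k8s-yaml.od.com.conf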

server {

listen 80;

server_name k8s-yaml.od.com;

location / {

autoindex on;

default_type text/plain;

root /data/k8s-yaml;

}

}

# Check the configuration and reload

[root@zzgw7-200 html]# nginx -t

[root@zzgw7-200 html]# nginx -s reload

# Create the yaml directory and the coredns yaml subdirectory

[root@zzgw7-200 ~]# mkdir -p /data/k8s-yaml/coredns

Add the A record (zzgw7-11)

[root@zzgw7-11 ~]# vim /var/named/od.com.zone

$ORIGIN od.com.

$TTL 600 ; 10minutes

@ IN SOA dns.od.com. dnsadmin.od.com. (

2019122303 ; serial

10800 ; refresh (3 hours)

900 ; retry (15 minutes)

604800 ; expire (1 week)

86400 ; minimum (1 day)

)

NS dns.od.com.

$TTL 60 ; 1 minute

dns A 10.4.7.11

harbor A 10.4.7.200

k8s-yaml A 10.4.7.200

[root@zzgw7-11 ~]# systemctl restart named

[root@zzgw7-11 ~]# dig -t A k8s-yaml.od.com @10.4.7.11 +short

10.4.7.200

Test access from a browser

The Kubernetes service discovery add-on: CoreDNS

Prepare the coredns image

[root@zzgw7-200 ~]# docker pull coredns/coredns:1.6.5

[root@zzgw7-200 ~]# docker tag 70f311871ae1 harbor.od.com/public/coredns:v1.6.5

[root@zzgw7-200 ~]# docker push harbor.od.com/public/coredns:v1.6.5

Prepare the resource manifests

Run on zzgw7-200

Change to the directory that holds the manifests

[root@zzgw7-200 ~]# cd /data/k8s-yaml/coredns/

rbac.yaml

apiVersion: v1

kind: ServiceAccount

metadata:

  name: coredns

  namespace: kube-system

  labels:

    kubernetes.io/cluster-service: "true"

    addonmanager.kubernetes.io/mode: Reconcile

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

  labels:

    kubernetes.io/bootstrapping: rbac-defaults

    addonmanager.kubernetes.io/mode: Reconcile

  name: system:coredns

rules:

- apiGroups:

  - ""

  resources:

  - endpoints

  - services

  - pods

  - namespaces

  verbs:

  - list

  - watch

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

  annotations:

    rbac.authorization.kubernetes.io/autoupdate: "true"

  labels:

    kubernetes.io/bootstrapping: rbac-defaults

    addonmanager.kubernetes.io/mode: EnsureExists

  name: system:coredns

roleRef:

  apiGroup: rbac.authorization.k8s.io

  kind: ClusterRole

  name: system:coredns

subjects:

- kind: ServiceAccount

  name: coredns

  namespace: kube-system

configmap.yaml

apiVersion: v1

kind: ConfigMap

metadata:

  name: coredns

  namespace: kube-system

data:

  Corefile: |

    .:53 {

        errors

        log

        health

        ready

        kubernetes cluster.local 192.168.0.0/16

        forward . 10.4.7.11

        cache 30

        loop

        reload

        loadbalance

    }

deployment.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

  name: coredns

  namespace: kube-system

  labels:

    k8s-app: coredns

    kubernetes.io/name: "CoreDNS"

spec:

  replicas: 1

  selector:

    matchLabels:

      k8s-app: coredns

  template:

    metadata:

      labels:

        k8s-app: coredns

    spec:

      priorityClassName: system-cluster-critical

      serviceAccountName: coredns

      containers:

      - name: coredns

        image: harbor.od.com/public/coredns:v1.6.5

        args:

        - -conf

        - /etc/coredns/Corefile

        volumeMounts:

        - name: config-volume

          mountPath: /etc/coredns

        ports:

        - containerPort: 53

          name: dns

          protocol: UDP

        - containerPort: 53

          name: dns-tcp

          protocol: TCP

        - containerPort: 9153

          name: metrics

          protocol: TCP

        livenessProbe:

          httpGet:

            path: /health

            port: 8080

            scheme: HTTP

          initialDelaySeconds: 60

          timeoutSeconds: 5

          successThreshold: 1

          failureThreshold: 5

      dnsPolicy: Default

      volumes:

      - name: config-volume

        configMap:

          name: coredns

          items:

          - key: Corefile

            path: Corefile

service.yaml

apiVersion: v1

kind: Service

metadata:

  name: coredns

  namespace: kube-system

  labels:

    k8s-app: coredns

    kubernetes.io/cluster-service: "true"

    kubernetes.io/name: "CoreDNS"

spec:

  selector:

    k8s-app: coredns

  clusterIP: 192.168.0.2

  ports:

  - name: dns

    port: 53

    protocol: UDP

  - name: dns-tcp

    port: 53

  - name: metrics

    port: 9153

    protocol: TCP

Check the resource manifests

[root@zzgw7-200 coredns]# ll

total 16

-rw-r--r-- 1 root root 319 Mar 29 23:34 configmap.yaml

-rw-r--r-- 1 root root 1294 Mar 29 23:35 deployment.yaml

-rw-r--r-- 1 root root 954 Mar 29 23:32 rbac.yaml

-rw-r--r-- 1 root root 387 Mar 29 23:39 service.yaml

Apply the resource manifests

On any compute node (zzgw7-21 or zzgw7-22)

[root@zzgw7-21 ~]# kubectl apply -f http://k8s-yaml.od.com/coredns/rbac.yaml

serviceaccount/coredns created

clusterrole.rbac.authorization.k8s.io/system:coredns created

clusterrolebinding.rbac.authorization.k8s.io/system:coredns created

[root@zzgw7-21 ~]# kubectl apply -f http://k8s-yaml.od.com/coredns/configmap.yaml

configmap/coredns created

[root@zzgw7-21 ~]# kubectl apply -f http://k8s-yaml.od.com/coredns/deployment.yaml

deployment.apps/coredns created

[root@zzgw7-21 ~]# kubectl apply -f http://k8s-yaml.od.com/coredns/service.yaml

service/coredns created

List the created resources

[root@zzgw7-21 ~]# kubectl get all -n kube-system

NAME READY STATUS RESTARTS AGE

pod/coredns-9bc44c684-9m9mb 1/1 Running 0 34m

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/coredns ClusterIP 192.168.0.2 <none> 53/UDP,53/TCP,9153/TCP 34m

NAME READY UP-TO-DATE AVAILABLE AGE

deployment.apps/coredns 1/1 1 1 34m

NAME DESIRED CURRENT READY AGE

replicaset.apps/coredns-9bc44c684 1 1 1 34m

Verify coredns

[root@zzgw7-21 ~]# dig -t A www.baidu.com @192.168.0.2 +short

www.a.shifen.com.

36.152.44.95

36.152.44.96

[root@zzgw7-21 ~]# dig -t A zzgw7-21.host.com @192.168.0.2 +short

10.4.7.21 // the self-hosted DNS is CoreDNS's upstream, so the host record resolves

Resolution test

# Create a Deployment

[root@zzgw7-21 ~]# kubectl create deployment nginx-dp --image=harbor.od.com/public/nginx:v1.7.9 -n kube-public

deployment.apps/nginx-dp created

[root@zzgw7-21 ~]# kubectl get pods -n kube-public

NAME READY STATUS RESTARTS AGE

nginx-dp-5dfc689474-m2bq5 1/1 Running 0 5m21s

# Expose the Deployment as a Service

[root@zzgw7-21 ~]# kubectl expose deployment nginx-dp --port=80 -n kube-public

service/nginx-dp exposed

[root@zzgw7-21 ~]# kubectl get svc -n kube-public

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

nginx-dp ClusterIP 192.168.157.217 <none> 80/TCP 2s

# Resolution test with dig

[root@zzgw7-22 ~]# dig -t A nginx-dp.kube-public.svc.cluster.local. @192.168.0.2 +short

192.168.197.109
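The name queried above follows the pattern `<service>.<namespace>.svc.<cluster domain>.`, where the cluster domain is the `cluster.local` value passed to kubelet and configured in the Corefile. A small sketch for building such names follows; `svc_fqdn` is a hypothetical helper name.

```shell
#!/bin/sh
# svc_fqdn SERVICE NAMESPACE [CLUSTER_DOMAIN]
# Prints the fully qualified DNS name of a Service, with a trailing dot.
# CLUSTER_DOMAIN defaults to cluster.local, the value used in this guide.
svc_fqdn() {
    echo "$1.$2.svc.${3:-cluster.local}."
}

# Name for the nginx-dp Service in the kube-public namespace.
svc_fqdn nginx-dp kube-public
```

The result can be passed straight to dig, for example `dig -t A "$(svc_fqdn nginx-dp kube-public)" @192.168.0.2 +short`.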

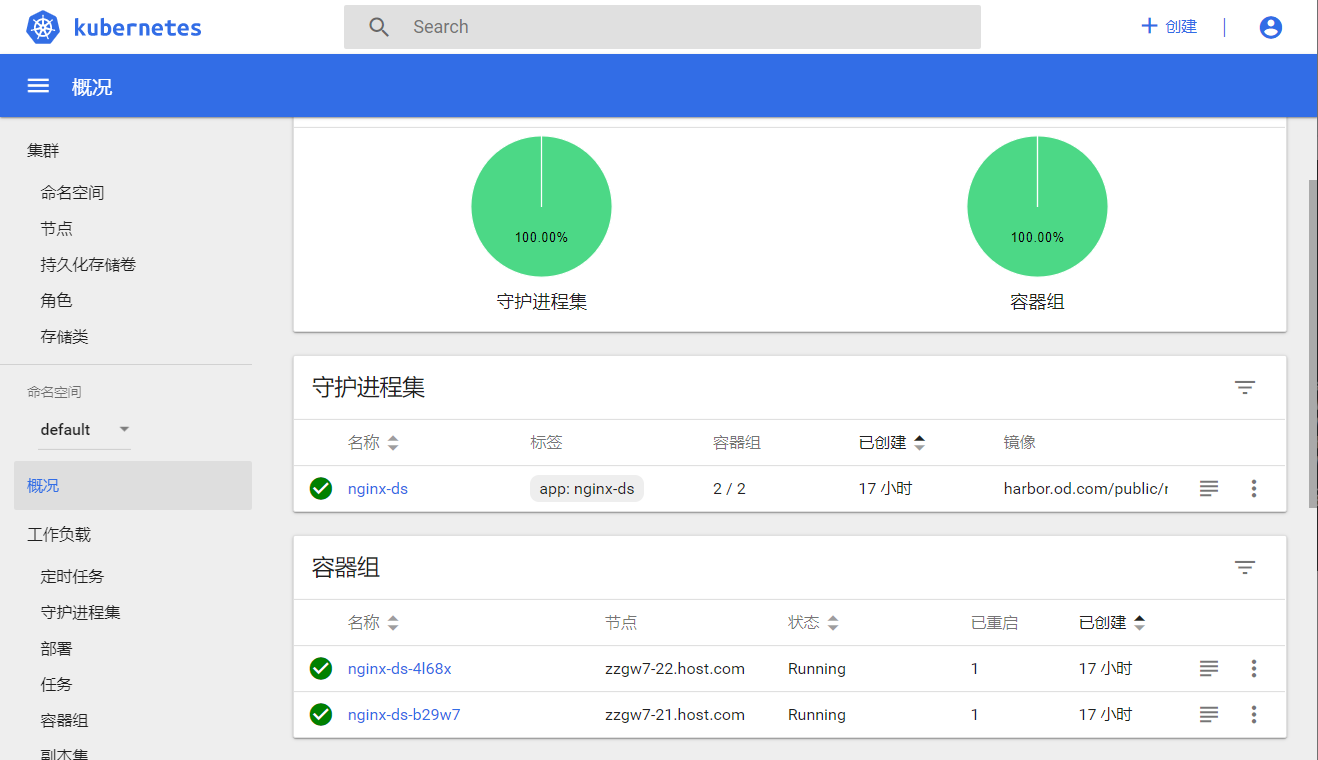

Test from inside a container

[root@zzgw7-22 ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-ds-4l68x 1/1 Running 1 15h 172.7.22.3 zzgw7-22.host.com <none> <none>

nginx-ds-b29w7 1/1 Running 1 15h 172.7.21.2 zzgw7-21.host.com <none> <none>

[root@zzgw7-22 ~]# kubectl exec -it nginx-ds-4l68x bash

root@nginx-ds-4l68x:/# ping 192.168.197.109

PING 192.168.197.109 (192.168.197.109): 48 data bytes

56 bytes from 192.168.197.109: icmp_seq=0 ttl=64 time=0.112 ms

56 bytes from 192.168.197.109: icmp_seq=1 ttl=64 time=0.048 ms

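The Kubernetes service exposure add-on: Traefik

Ingress controller

Traefik is a lightweight HTTP reverse proxy and load balancer written in Go. Because it can configure and refresh backend nodes automatically, it is supported by most container platforms, such as Kubernetes, Swarm, and Rancher. Traefik interacts with the Kubernetes API in real time, so it reacts quickly when the endpoints behind a Service change. Overall, Traefik runs well on Kubernetes.

Prepare the traefik image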

[root@zzgw7-200 ~]# docker pull traefik:v1.7.2

[root@zzgw7-200 ~]# docker tag traefik:v1.7.2 harbor.od.com/public/traefik:v1.7.2

[root@zzgw7-200 harbor]# docker push harbor.od.com/public/traefik:v1.7.2

Prepare the resource manifests

Official yaml files: https://github.com/containous/traefik/tree/v1.7/examples/k8s

Create the manifest directory and change into it

[root@zzgw7-200 ~]# mkdir -p /data/k8s-yaml/traefik

[root@zzgw7-200 ~]# cd /data/k8s-yaml/traefik

[root@zzgw7-200 traefik]#

rbac.yaml

apiVersion: v1

kind: ServiceAccount

metadata:

  name: traefik-ingress-controller

  namespace: kube-system

---

apiVersion: rbac.authorization.k8s.io/v1beta1

kind: ClusterRole

metadata:

  name: traefik-ingress-controller

rules:

  - apiGroups:

      - ""

    resources:

      - services

      - endpoints

      - secrets

    verbs:

      - get

      - list

      - watch

  - apiGroups:

      - extensions

    resources:

      - ingresses

    verbs:

      - get

      - list

      - watch

---

kind: ClusterRoleBinding

apiVersion: rbac.authorization.k8s.io/v1beta1

metadata:

  name: traefik-ingress-controller

roleRef:

  apiGroup: rbac.authorization.k8s.io

  kind: ClusterRole

  name: traefik-ingress-controller

subjects:

- kind: ServiceAccount

  name: traefik-ingress-controller

  namespace: kube-system

daemonset.yaml

apiVersion: extensions/v1beta1
kind: DaemonSet
metadata:
  name: traefik-ingress
  namespace: kube-system
  labels:
    k8s-app: traefik-ingress
spec:
  template:
    metadata:
      labels:
        k8s-app: traefik-ingress
        name: traefik-ingress
    spec:
      serviceAccountName: traefik-ingress-controller
      terminationGracePeriodSeconds: 60
      containers:
        - image: harbor.od.com/public/traefik:v1.7.2
          name: traefik-ingress
          ports:
            - name: controller
              containerPort: 80
              hostPort: 81
            - name: admin-web
              containerPort: 8080
          securityContext:
            capabilities:
              drop:
                - ALL
              add:
                - NET_BIND_SERVICE
          args:
            - --api
            - --kubernetes
            - --logLevel=INFO
            - --insecureskipverify=true
            - --kubernetes.endpoint=https://10.4.7.10:7443
            - --accesslog
            - --accesslog.filepath=/var/log/traefik_access.log
            - --traefiklog
            - --traefiklog.filepath=/var/log/traefik.log
            - --metrics.prometheus

service.yaml

kind: Service
apiVersion: v1
metadata:
  name: traefik-ingress-service
  namespace: kube-system
spec:
  selector:
    k8s-app: traefik-ingress
  ports:
    - protocol: TCP
      port: 80
      name: controller
    - protocol: TCP
      port: 8080
      name: admin-web

ingress.yaml

apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: traefik-web-ui
  namespace: kube-system
  annotations:
    kubernetes.io/ingress.class: traefik
spec:
  rules:
    - host: traefik.od.com
      http:
        paths:
          - path: /
            backend:
              serviceName: traefik-ingress-service
              servicePort: 8080

Apply the resource manifests

[root@zzgw7-21 ~]# kubectl apply -f http://k8s-yaml.od.com/traefik/rbac.yaml

serviceaccount/traefik-ingress-controller created

clusterrole.rbac.authorization.k8s.io/traefik-ingress-controller created

clusterrolebinding.rbac.authorization.k8s.io/traefik-ingress-controller created

[root@zzgw7-21 ~]# kubectl apply -f http://k8s-yaml.od.com/traefik/daemonset.yaml

daemonset.extensions/traefik-ingress created

[root@zzgw7-21 ~]# kubectl apply -f http://k8s-yaml.od.com/traefik/service.yaml

service/traefik-ingress-service created

[root@zzgw7-21 ~]# kubectl apply -f http://k8s-yaml.od.com/traefik/ingress.yaml

ingress.extensions/traefik-web-ui created
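The four manifests above are applied in a fixed order, with the RBAC objects first because the DaemonSet refers to the ServiceAccount they create. A small sketch that generates the URLs in that order; the helper name `manifest_urls` is hypothetical.

```shell
# Hypothetical helper: print manifest URLs in the order they should be applied.
# Arguments: base URL, then manifest names without the .yaml suffix.
manifest_urls() {
    base=$1
    shift
    for name in "$@"; do
        printf '%s/%s.yaml\n' "$base" "$name"
    done
}

# Usage on a node with kubectl configured:
#   manifest_urls http://k8s-yaml.od.com/traefik rbac daemonset service ingress |
#       while read -r url; do kubectl apply -f "$url" || break; done
```

Stopping at the first failure keeps later objects from being created against a missing dependency.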

Check the created resources

[root@zzgw7-21 ~]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-9bc44c684-rb99c 1/1 Running 0 33m

traefik-ingress-fksht 1/1 Running 0 73s

traefik-ingress-mlzzg 1/1 Running 0 73s
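Since Traefik runs as a DaemonSet, the listing above should show one Running pod per compute node. A minimal sketch for counting them from the `kubectl get pods` table; the helper name `count_running` is hypothetical, and it assumes the default column layout shown above (NAME first, STATUS third).

```shell
# Hypothetical helper: count pods whose name starts with a prefix and whose
# STATUS column is Running. Reads `kubectl get pods` table output on stdin.
count_running() {
    awk -v prefix="$1" '
        NR > 1 && index($1, prefix) == 1 && $3 == "Running" { n++ }
        END { print n + 0 }
    '
}

# Usage:
#   kubectl get pods -n kube-system | count_running traefik-ingress-
```

In this lab the count should match the number of compute nodes (zzgw7-21 and zzgw7-22).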

Add the A record

Host: zzgw7-11

[root@zzgw7-11 ~]# cat /var/named/od.com.zone

$ORIGIN od.com.
$TTL 600 ; 10 minutes
@ IN SOA dns.od.com. dnsadmin.od.com. (
        2019122304 ; serial (incremented for this change)
        10800      ; refresh (3 hours)
        900        ; retry (15 minutes)
        604800     ; expire (1 week)
        86400      ; minimum (1 day)
        )
        NS dns.od.com.
$TTL 60 ; 1 minute
dns      A 10.4.7.11
harbor   A 10.4.7.200
k8s-yaml A 10.4.7.200
traefik  A 10.4.7.10 ; newly added record

[root@zzgw7-11 ~]# systemctl restart named
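Every change to the zone file needs a higher serial, otherwise the change may not be picked up. The sketch below computes the next serial in the `YYYYMMDDNN` form used in this zone; the helper name `next_serial` is hypothetical, and the starting value 2019122303 in the usage line is an assumed previous serial, not one shown in the original.

```shell
# Hypothetical helper: compute the next zone serial in YYYYMMDDNN form.
# Arguments: current serial, today's date as YYYYMMDD (defaults to `date +%Y%m%d`).
next_serial() {
    current=$1
    today=${2:-$(date +%Y%m%d)}
    # Strip the two-digit counter to compare the date part with today.
    if [ "${current%??}" -lt "$today" ]; then
        echo "${today}01"
    else
        echo $((current + 1))
    fi
}

# Usage (assumed previous serial, edited on the same day):
#   next_serial 2019122303 20191223
```

After restarting named, the new record can be checked with `dig -t A traefik.od.com @10.4.7.11 +short`, which should return the address from the added A record.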

Configure the Nginx reverse proxy

Apply this configuration to nginx on both zzgw7-11 and zzgw7-12.

[root@zzgw7-11 ~]# vim /etc/nginx/conf.d/od.com.conf

upstream default_backend_traefik {
    server 10.4.7.21:81 max_fails=3 fail_timeout=10s;
    server 10.4.7.22:81 max_fails=3 fail_timeout=10s;
}

server {
    server_name *.od.com;
    location / {
        proxy_pass http://default_backend_traefik;
        proxy_set_header Host $http_host;
        proxy_set_header x-forwarded-for $proxy_add_x_forwarded_for;
    }
}

[root@zzgw7-11 ~]# nginx -t

nginx: the configuration file /etc/nginx/nginx.conf syntax is ok

nginx: configuration file /etc/nginx/nginx.conf test is successful

[root@zzgw7-11 ~]# nginx -s reload

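Note: because server_name is a wildcard, a request for any business domain under od.com reaches the VIP, and nginx forwards it to Traefik on port 81 of the compute nodes.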
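The upstream block lists every compute node on the Traefik hostPort, so it has to grow whenever a compute node is added. One possible way to keep it in sync is to generate it from the node list; the helper name `render_upstream` is hypothetical, and the `max_fails` and `fail_timeout` values are copied from the configuration above.

```shell
# Hypothetical helper: render an nginx upstream block from a list of node IPs.
# Arguments: upstream name, backend port, then one IP per compute node.
render_upstream() {
    name=$1
    port=$2
    shift 2
    printf 'upstream %s {\n' "$name"
    for ip in "$@"; do
        printf '    server %s:%s max_fails=3 fail_timeout=10s;\n' "$ip" "$port"
    done
    printf '}\n'
}

# Usage:
#   render_upstream default_backend_traefik 81 10.4.7.21 10.4.7.22
```

The output can be compared with the upstream section of /etc/nginx/conf.d/od.com.conf on both proxy nodes before running `nginx -t`.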

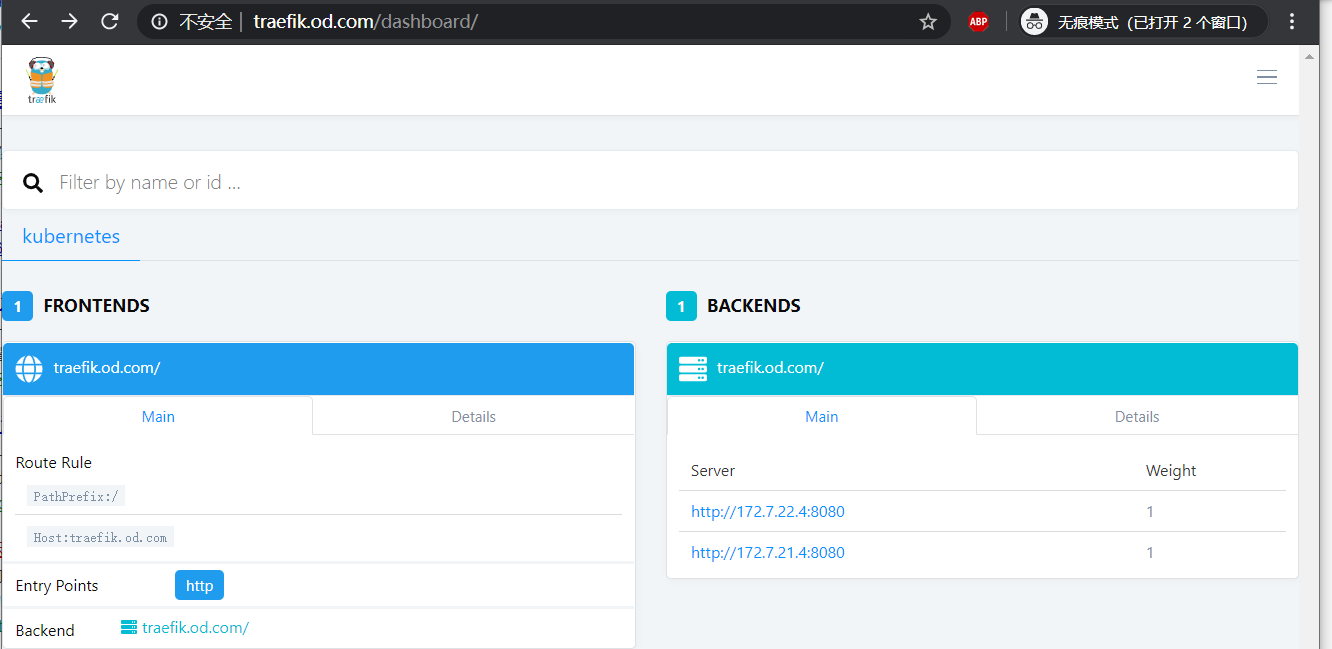

Access from a browser

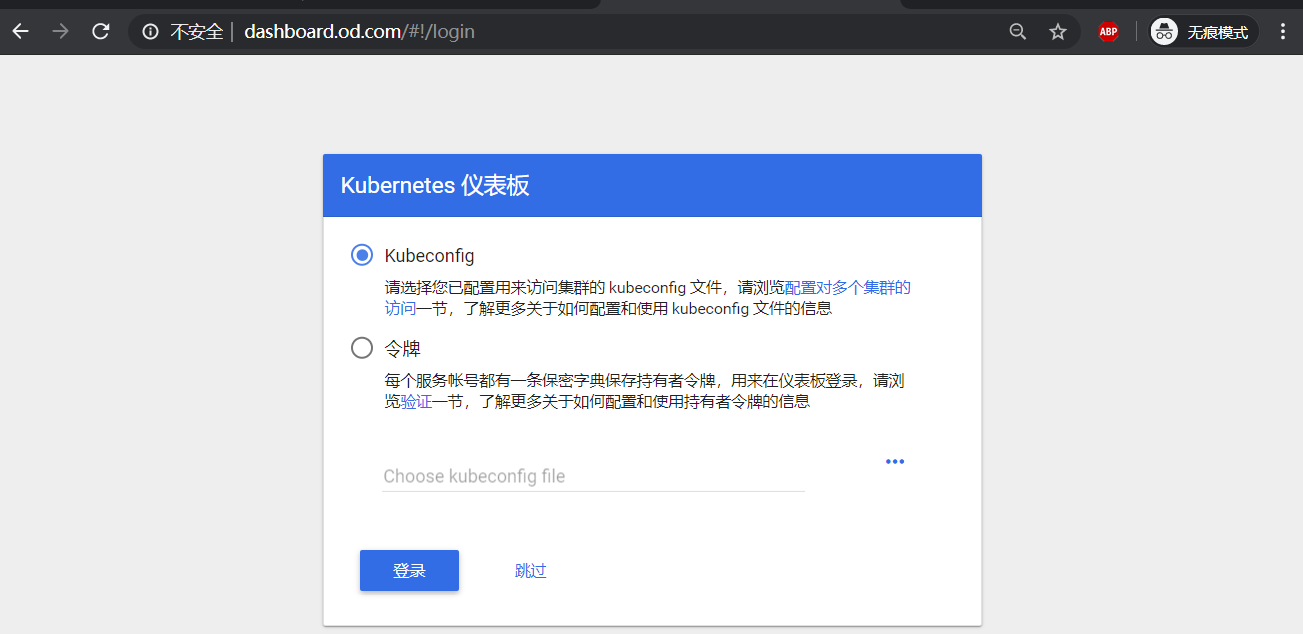

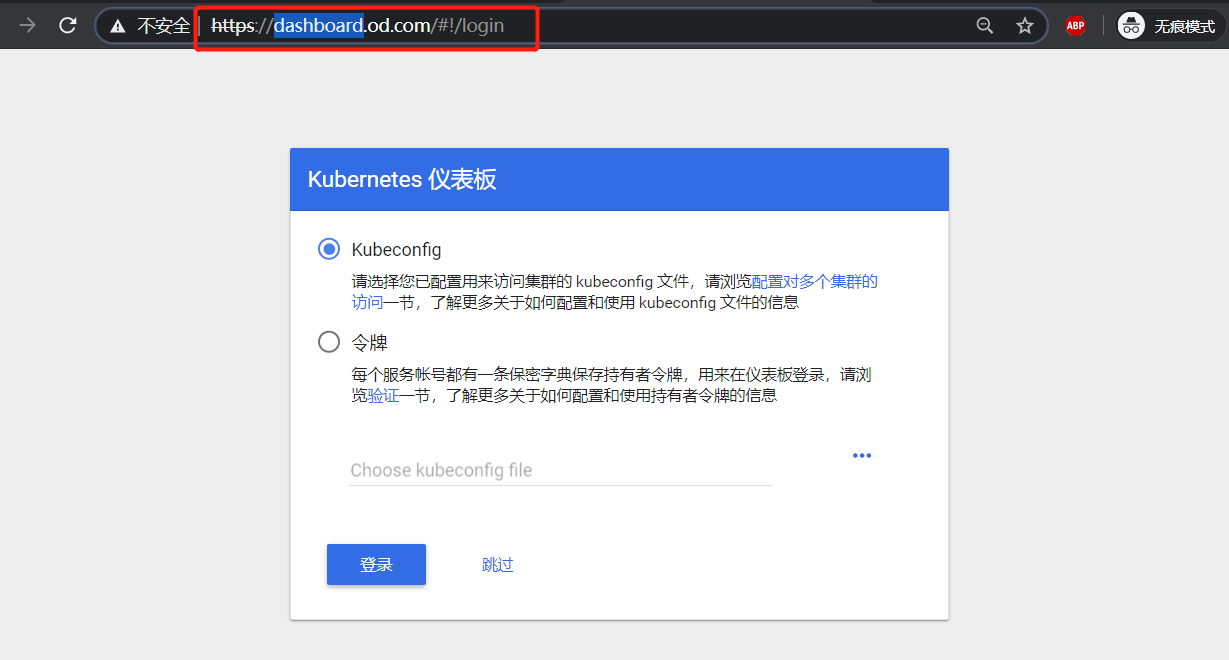

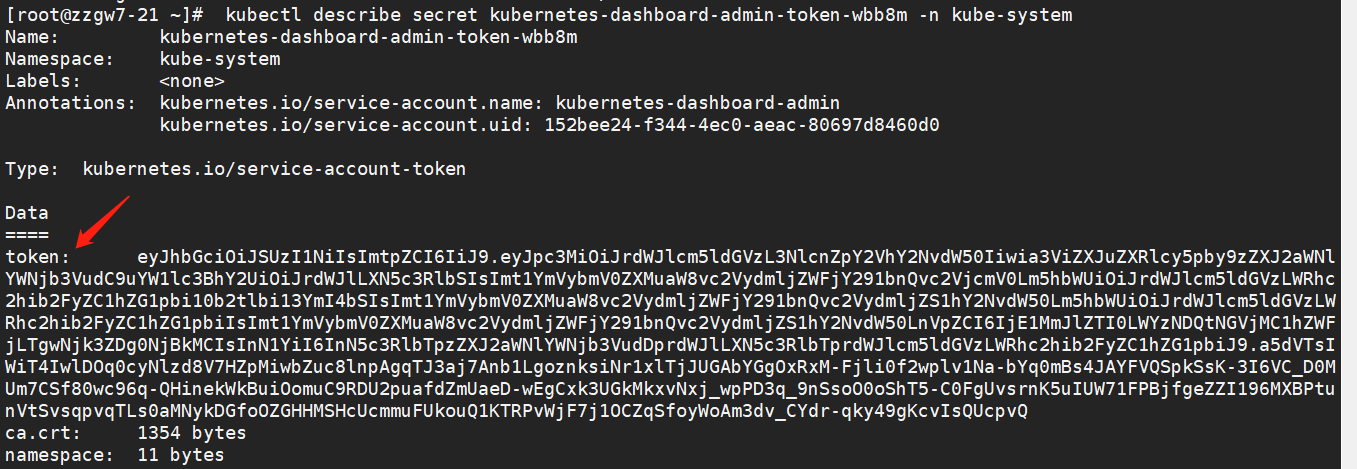

Kubernetes GUI resource management add-on: dashboard

Prepare the dashboard image

# Pull the image

[root@zzgw7-200 ~]# docker pull k8scn/kubernetes-dashboard-amd64:v1.8.3

# Tag the image

[root@zzgw7-200 ~]# docker tag k8scn/kubernetes-dashboard-amd64:v1.8.3 harbor.od.com/public/kubernetes-dashboard:v1.8.3

# Push to the Harbor registry

[root@zzgw7-200 ~]# docker push harbor.od.com/public/kubernetes-dashboard:v1.8.3

Prepare the resource manifests

Create and enter the dashboard manifest directory

[root@zzgw7-200 ~]# mkdir -p /data/k8s-yaml/dashboard

[root@zzgw7-200 ~]# cd /data/k8s-yaml/dashboard

[root@zzgw7-200 dashboard]#

rbac.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: kubernetes-dashboard
    addonmanager.kubernetes.io/mode: Reconcile
  name: kubernetes-dashboard-admin
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: kubernetes-dashboard-admin
  namespace: kube-system
  labels:
    k8s-app: kubernetes-dashboard
    addonmanager.kubernetes.io/mode: Reconcile
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects: