爬虫实战——起点中文网小说的爬取

首先打开起点中文网,网址为:https://www.qidian.com/

本次实战目标是爬取一本名叫《大千界域》的小说,本次实战仅供交流学习,支持作者,请上起点中文网订阅观看。

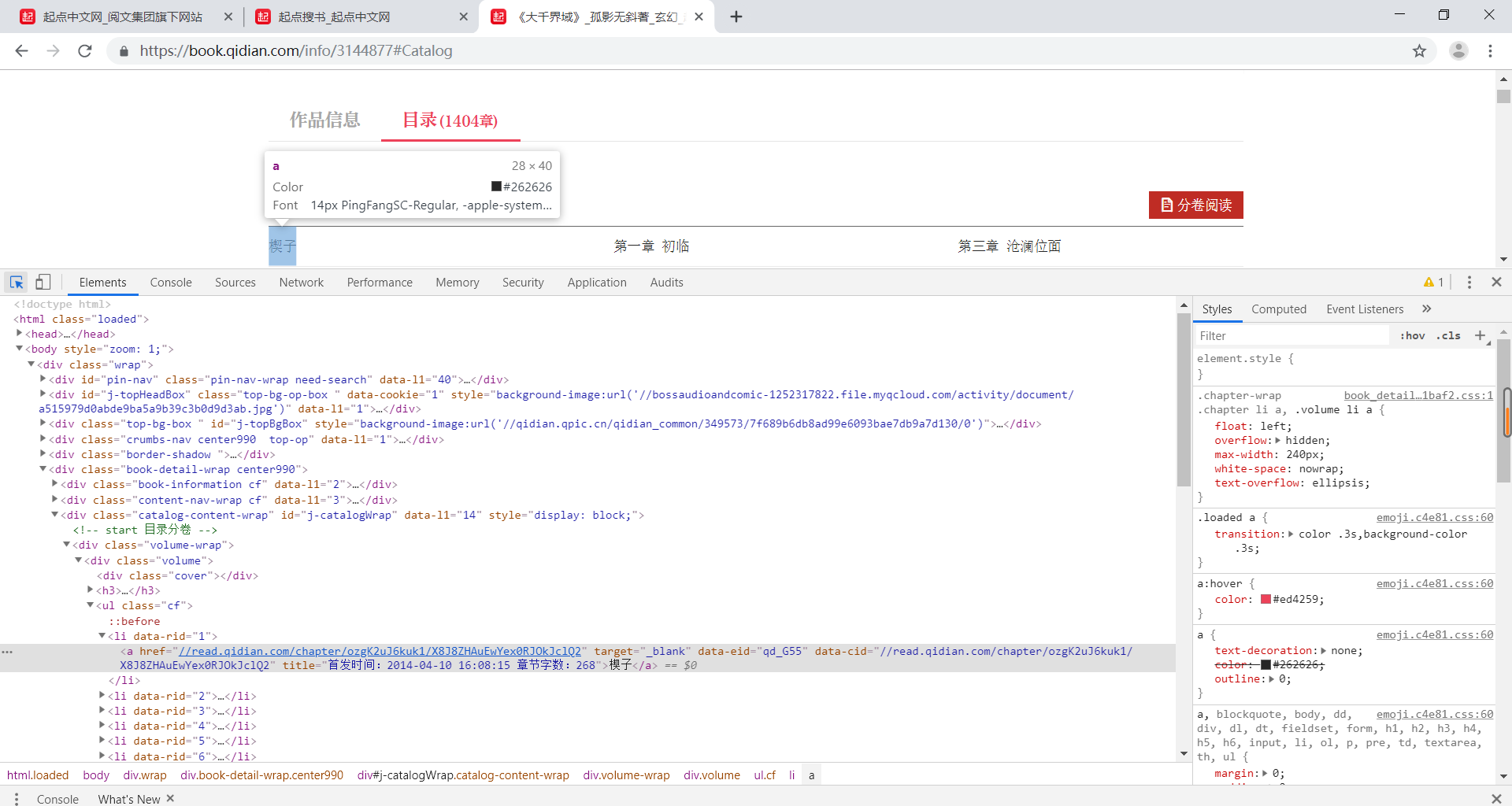

我们首先找到该小说的章节信息页面,网址为:https://book.qidian.com/info/3144877#Catalog

点击检查,获取页面的html信息,我发现每一章都对应一个url链接,故我们只要得到本页面html信息,然后通过Beautifulsoup,re等工具,就可将所有章节的url全部得到存成一个url列表然后挨个访问便可获取到所有章节内容,本次爬虫也就大功告成了!

按照我的想法,我用如下代码获取了页面html,并在后端输出显示,结果发现返回的html信息不全,包含章节链接的body标签没有被爬取到,就算补全了headers信息,还是无法获取到body标签里的内容,看来起点对反爬做的措施不错嘛,这条道走不通,咱们换一条。

import requests

def get():

url = 'https://book.qidian.com/info/3144877#Catalog'

req = requests.get(url)

print(req.text)

if __name__ == '__main__':

get()

既然这个页面是动态加载的,故可能应用ajax与后端数据库进行了数据交互,然后渲染到了页面上,我们只需拦截这次交互请求,获取到交互的数据即可。

打开网页https://book.qidian.com/info/3144877#Catalog,再次右键点击检查即审查元素,因为是要找到数据交互,故点击network里的XHR请求,精确捕获XHR对象,我们发现一个url为https://book.qidian.com/ajax/book/category?_csrfToken=1iiVodIPe2qL9Z53jFDIcXlmVghqnB6jSwPP5XKF&bookId=3144877的请求返回的response是一个包含所有卷id和章节id的json对象,这就是我们要寻找的交互数据。

通过如下代码,便可获取到该json对象

import requests

import random

def random_user_agent():

list = ['Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.77 Safari/537.36',

'Mozilla/5.0 (Windows NT 6.2; WOW64) AppleWebKit/537.36 (KHTML like Gecko) Chrome/44.0.2403.155 Safari/537.36',

'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/41.0.2227.0 Safari/537.36',

'Mozilla/5.0 (Windows NT 6.3; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/41.0.2226.0 Safari/537.36',

'Mozilla/5.0 (Windows NT 6.4; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/41.0.2225.0 Safari/537.36',

'Mozilla/5.0 (Windows NT 6.3; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/41.0.2225.0 Safari/537.36']

seed = random.randint(0, len(list)-1)

return list[seed]

def getJson():

url = 'https://book.qidian.com/ajax/book/category?_csrfToken=BXnzDKmnJamNAgLu4O3GknYVL2YuNX5EE86tTBAm&bookId=3144877'

headers = {'User-Agent': random_user_agent(),

'Referer': 'https://book.qidian.com/info/3144877',

'Cookie': '_csrfToken=BXnzDKmnJamNAgLu4O3GknYVL2YuNX5EE86tTBAm; newstatisticUUID=1564467217_1193332262; qdrs=0%7C3%7C0%7C0%7C1; showSectionCommentGuide=1; qdgd=1; lrbc=1013637116%7C436231358%7C0%2C1003541158%7C309402995%7C0; rcr=1013637116%2C1003541158; bc=1003541158%2C1013637116; e1=%7B%22pid%22%3A%22qd_P_limitfree%22%2C%22eid%22%3A%22qd_E01%22%2C%22l1%22%3A4%7D; e2=%7B%22pid%22%3A%22qd_P_free%22%2C%22eid%22%3A%22qd_A18%22%2C%22l1%22%3A3%7D'

}

res = requests.get(url=url, params=headers)

json_str = res.text

print(json_str)

if __name__ == '__main__':

getJson()

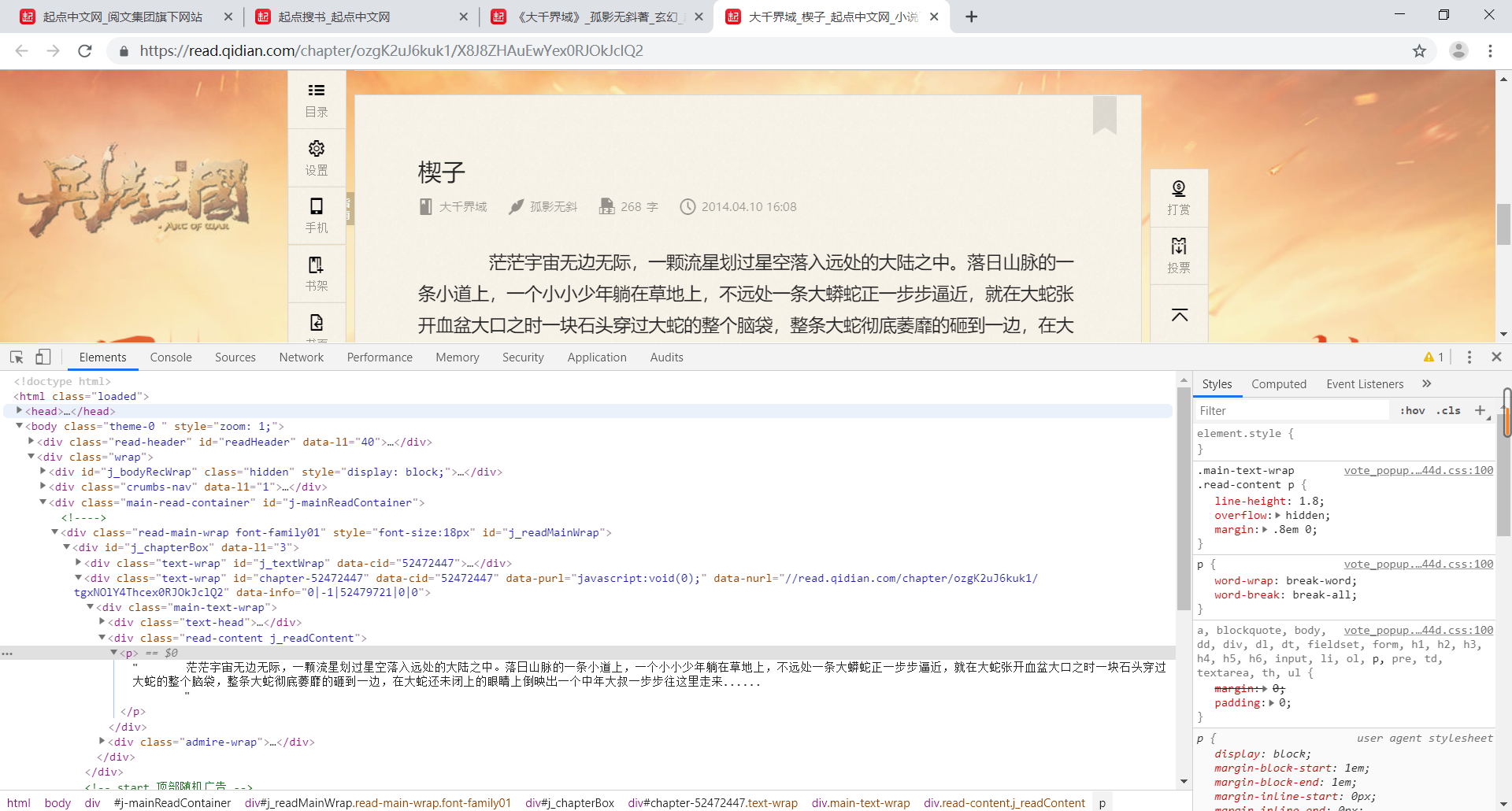

在小说的章节信息页面里我发现有分卷阅读,点击进入后发现该页面包含该卷的所有章节内容,且每一个分卷阅读的前半段url都是https://read.qidian.com/hankread/3144877/,变得只是该卷的id号,例如第一卷初来乍到的id为8478272,故阅读整个第一卷内容的链接为https://read.qidian.com/hankread/3144877/8478272。故我们只需要在上述json对象里截取所有卷id,便可以爬取整本了!

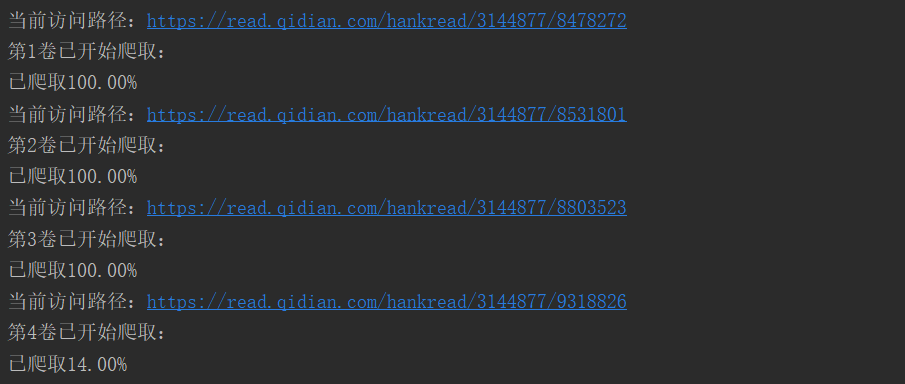

爬取效果如下:

完整代码如下:

import requests

import re

from bs4 import BeautifulSoup

from requests.exceptions import *

import random

import json

import time

import os

import sys

def random_user_agent():

list = ['Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.77 Safari/537.36',

'Mozilla/5.0 (Windows NT 6.2; WOW64) AppleWebKit/537.36 (KHTML like Gecko) Chrome/44.0.2403.155 Safari/537.36',

'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/41.0.2227.0 Safari/537.36',

'Mozilla/5.0 (Windows NT 6.3; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/41.0.2226.0 Safari/537.36',

'Mozilla/5.0 (Windows NT 6.4; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/41.0.2225.0 Safari/537.36',

'Mozilla/5.0 (Windows NT 6.3; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/41.0.2225.0 Safari/537.36']

seed = random.randint(0, len(list)-1)

return list[seed]

def getJson():

url = 'https://book.qidian.com/ajax/book/category?_csrfToken=BXnzDKmnJamNAgLu4O3GknYVL2YuNX5EE86tTBAm&bookId=3144877'

headers = {'User-Agent': random_user_agent(),

'Referer': 'https://book.qidian.com/info/3144877',

'Cookie': '_csrfToken=BXnzDKmnJamNAgLu4O3GknYVL2YuNX5EE86tTBAm; newstatisticUUID=1564467217_1193332262; qdrs=0%7C3%7C0%7C0%7C1; showSectionCommentGuide=1; qdgd=1; lrbc=1013637116%7C436231358%7C0%2C1003541158%7C309402995%7C0; rcr=1013637116%2C1003541158; bc=1003541158%2C1013637116; e1=%7B%22pid%22%3A%22qd_P_limitfree%22%2C%22eid%22%3A%22qd_E01%22%2C%22l1%22%3A4%7D; e2=%7B%22pid%22%3A%22qd_P_free%22%2C%22eid%22%3A%22qd_A18%22%2C%22l1%22%3A3%7D'

}

try:

res = requests.get(url=url, params=headers)

if res.status_code == 200:

json_str = res.text

list = json.loads(json_str)['data']['vs']

response = {

'VolumeId_List': [],

'VolumeNum_List': []

}

for i in range(len(list)):

json_str = json.dumps(list[i]).replace(" ", "")

volume_id = re.search('.*?"vId":(.*?),', json_str, re.S).group(1)

volume_num = re.search('.*?"cCnt":(.*?),', json_str, re.S).group(1)

response['VolumeId_List'].append(volume_id)

response['VolumeNum_List'].append(volume_num)

return response

else:

print('No response')

return None

except ReadTimeout:

print("ReadTimeout!")

return None

except RequestException:

print("请求页面出错!")

return None

def getPage(VolId_List, VolNum_List):

'''

通过卷章Id找到要爬取的页面,并返回页面html信息

:param VolId_List: 卷章Id列表

:param VolNum_List: 每一卷含有的章节数量列表

:return:

'''

size = len(VolId_List)

for i in range(size):

path = 'C://Users//49881//Projects//PycharmProjects//Spider2起点中文网//大千界域//卷' + str(i + 1)

mkdir(path)

url = 'https://read.qidian.com/hankread/3144877/'+VolId_List[i]

print('\n当前访问路径:'+url)

headers = {

'User-Agent': random_user_agent(),

'Referer': 'https://book.qidian.com/info/3144877',

'Cookie': 'e1=%7B%22pid%22%3A%22qd_P_hankRead%22%2C%22eid%22%3A%22%22%2C%22l1%22%3A3%7D; e2=%7B%22pid%22%3A%22qd_P_hankRead%22%2C%22eid%22%3A%22%22%2C%22l1%22%3A2%7D; _csrfToken=BXnzDKmnJamNAgLu4O3GknYVL2YuNX5EE86tTBAm; newstatisticUUID=1564467217_1193332262; qdrs=0%7C3%7C0%7C0%7C1; showSectionCommentGuide=1; qdgd=1; e1=%7B%22pid%22%3A%22qd_P_limitfree%22%2C%22eid%22%3A%22qd_E01%22%2C%22l1%22%3A4%7D; e2=%7B%22pid%22%3A%22qd_P_free%22%2C%22eid%22%3A%22qd_A18%22%2C%22l1%22%3A3%7D; rcr=3144877%2C1013637116%2C1003541158; lrbc=3144877%7C52472447%7C0%2C1013637116%7C436231358%7C0%2C1003541158%7C309402995%7C0; bc=3144877'

}

try:

res = requests.get(url=url, params=headers)

if res.status_code == 200:

print('第'+str(i+1)+'卷已开始爬取:')

parsePage(res.text, url, path, int(VolNum_List[i]))

else:

print('No response')

return None

except ReadTimeout:

print("ReadTimeout!")

return None

except RequestException:

print("请求页面出错!")

return None

time.sleep(3)

def parsePage(html, url, path, chapNum):

'''

解析小说内容页面,将每章内容写入txt文件,并存储到相应的卷目录下

:param html: 小说内容页面

:param url: 访问路径

:param path: 卷目录路径

:return: None

'''

if html == None:

print('访问路径为'+url+'的页面为空')

return

soup = BeautifulSoup(html, 'lxml')

ChapInfoList = soup.find_all('div', attrs={'class': 'main-text-wrap'})

alreadySpiderNum = 0.0

for i in range(len(ChapInfoList)):

sys.stdout.write('\r已爬取{0}'.format('%.2f%%' % float(alreadySpiderNum/chapNum*100)))

sys.stdout.flush()

time.sleep(0.5)

soup1 = BeautifulSoup(str(ChapInfoList[i]), 'lxml')

ChapName = soup1.find('h3', attrs={'class': 'j_chapterName'}).span.string

ChapName = re.sub('[\/:*?"<>|]', '', ChapName)

if ChapName == '无题':

ChapName = '第'+str(i+1)+'章 无题'

filename = path+'//'+ChapName+'.txt'

readContent = soup1.find('div', attrs={'class': 'read-content j_readContent'}).find_all('p')

for item in readContent:

paragraph = re.search('.*?<p>(.*?)</p>', str(item), re.S).group(1)

save2file(filename, paragraph)

alreadySpiderNum += 1.0

sys.stdout.write('\r已爬取{0}'.format('%.2f%%' % float(alreadySpiderNum / chapNum * 100)))

def save2file(filename, content):

with open(r''+filename, 'a', encoding='utf-8') as f:

f.write(content+'\n')

f.close()

def mkdir(path):

'''

创建卷目录文件夹

:param path: 创建路径

:return: None

'''

folder = os.path.exists(path)

if not folder:

os.makedirs(path)

else:

print('路径'+path+'已存在')

def main():

response = getJson()

if response != None:

VolId_List = response['VolumeId_List']

VolNum_List = response['VolumeNum_List']

getPage(VolId_List, VolNum_List)

else:

print('无法爬取该小说!')

print("小说爬取完毕!")

if __name__ == '__main__':

main()