PyTorch Study Notes

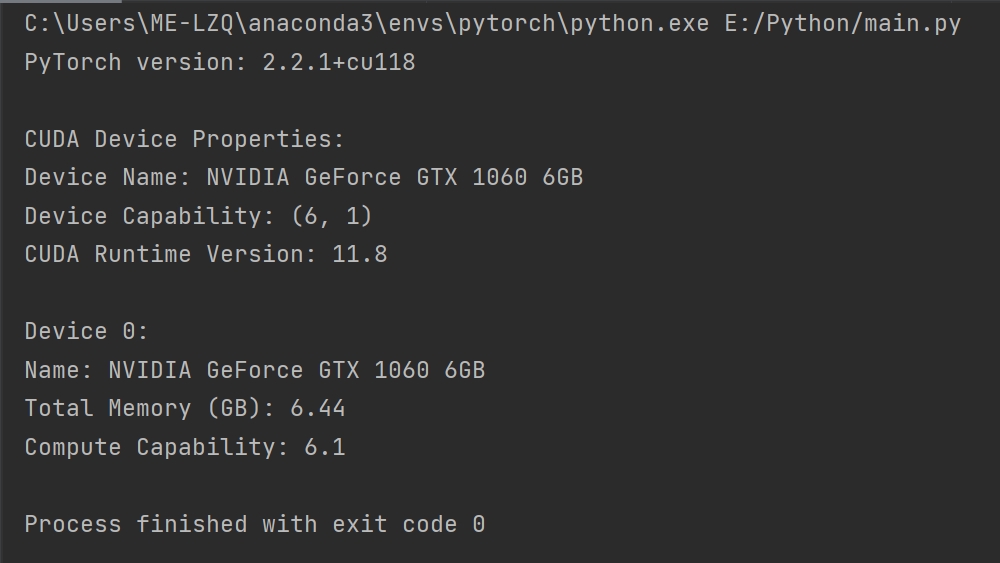

Printing information about PyTorch, the CUDA devices, and the CUDA runtime

    import torch


    def check_torch_and_cuda_details():
        # Check the PyTorch version
        print("PyTorch version:", torch.__version__)

        # Check whether CUDA is available
        if torch.cuda.is_available():
            # Properties of the first CUDA device
            print("\nCUDA Device Properties:")
            print(f"Device Name: {torch.cuda.get_device_name(0)}")
            print(f"Device Capability: {torch.cuda.get_device_capability(0)}")

            # CUDA version this PyTorch build was compiled against
            # (this is not the installed driver version)
            runtime_version = torch.version.cuda
            print(f"CUDA Runtime Version: {runtime_version}")

            # Print information for every visible device
            for i in range(torch.cuda.device_count()):
                device_info = torch.cuda.get_device_properties(i)
                print(f"\nDevice {i}:")
                print(f"Name: {device_info.name}")
                print(f"Total Memory (GB): {device_info.total_memory / 1e9:.2f}")
                print(f"Compute Capability: {device_info.major}.{device_info.minor}")
        else:
            print("CUDA is not available")


    if __name__ == '__main__':
        check_torch_and_cuda_details()

Output

Creating tensors

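    import torch
    import numpy as np

    # Create an uninitialized 5x3 matrix (its contents are arbitrary)
    x = torch.empty(5, 3)
    print("Uninitialized matrix:", x)

    # Create a 5x3 float matrix with values drawn uniformly from [0, 1)
    x = torch.rand(5, 3)
    print("Randomly initialized matrix:", x)

    # Create a 5x3 matrix of zeros with dtype long (int64)
    x = torch.zeros(5, 3, dtype=torch.long)
    print("All-zeros matrix:", x)

    # Create a 1-D tensor holding 0 through 9 (similar to numpy.arange)
    x = torch.arange(10)
    print("1-D tensor from 0 to 9:", x)

    # Create a tensor filled with a constant value
    x = torch.full((2, 2), 7.0)
    print("2x2 matrix filled with a constant:", x)

    # Create a tensor from a NumPy array
    np_array = np.random.randn(2, 2)
    x = torch.from_numpy(np_array)
    print("Tensor converted from a NumPy array:", x)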
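One detail of the last example is easy to miss: `torch.from_numpy` does not copy the array. The resulting tensor shares memory with the NumPy array and keeps its dtype (`float64` for NumPy's default floats), whereas `torch.tensor` makes an independent copy. The sketch below illustrates the difference; the variable names `shared` and `copied` are chosen here for illustration only.

```python
import numpy as np
import torch

# from_numpy shares memory with the source array and keeps its dtype.
np_array = np.zeros((2, 2))          # float64 by default
shared = torch.from_numpy(np_array)  # no copy is made
np_array[0, 0] = 5.0                 # in-place change is visible through the tensor

# torch.tensor copies the data, so later changes to the array do not propagate.
copied = torch.tensor(np_array)
np_array[1, 1] = 9.0

print(shared.dtype)                              # torch.float64
print(shared[0, 0].item(), shared[1, 1].item())  # 5.0 9.0
print(copied[1, 1].item())                       # 0.0
```

If the rest of a model uses `float32`, a conversion such as `shared.float()` is usually needed, and that call returns a new tensor that no longer shares memory with the array.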

A simple neural network example - linear regression model

    import torch
    import torch.nn as nn
    import torch.optim as optim


    # Define the linear regression model
    class LinearRegression(nn.Module):
        def __init__(self, input_size, output_size):
            super().__init__()
            self.linear = nn.Linear(input_size, output_size)

        def forward(self, x):
            return self.linear(x)


    # Instantiate the model
    model = LinearRegression(input_size=1, output_size=1)

    # Define the loss function and the optimizer
    criterion = nn.MSELoss()
    optimizer = optim.SGD(model.parameters(), lr=0.01)

    # Assume we have some inputs and target outputs (random placeholders here)
    inputs = torch.randn(100, 1)
    targets = torch.randn(100, 1)

    # Training loop (10 epochs here; adjust the count as needed)
    for epoch in range(10):
        # Forward pass and loss computation
        outputs = model(inputs)
        loss = criterion(outputs, targets)

        # Print the loss for the current epoch
        print(f'Epoch: {epoch + 1}, Loss: {loss.item():.4f}')

        # Backward pass and optimization
        optimizer.zero_grad()  # clear accumulated gradients
        loss.backward()        # backpropagate to compute gradients
        optimizer.step()       # update the weights
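Because the inputs and targets above are independent random values, the loss has no real relationship to learn. As a minimal sketch of how to check that the same training loop works, the variant below generates targets from an assumed ground truth `y = 2x + 1` and then compares the learned parameters against it. The values `true_w` and `true_b`, the learning rate, and the epoch count are illustrative choices, not part of the original notes. It also picks the CUDA device when one is available, tying in the availability check from the first section.

```python
import torch
import torch.nn as nn
import torch.optim as optim

torch.manual_seed(0)  # fixed seed so repeated runs behave the same

# Use the GPU when one is available, otherwise fall back to the CPU.
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Synthetic, noise-free data from an assumed ground truth y = 2x + 1.
true_w, true_b = 2.0, 1.0
inputs = torch.randn(100, 1, device=device)
targets = true_w * inputs + true_b

# A single linear layer is equivalent to the LinearRegression module above.
model = nn.Linear(1, 1).to(device)
criterion = nn.MSELoss()
optimizer = optim.SGD(model.parameters(), lr=0.1)

for epoch in range(200):
    optimizer.zero_grad()                     # clear accumulated gradients
    loss = criterion(model(inputs), targets)  # forward pass and loss
    loss.backward()                           # compute gradients
    optimizer.step()                          # update weight and bias

learned_w = model.weight.item()
learned_b = model.bias.item()
print(f"learned w = {learned_w:.3f}, b = {learned_b:.3f}, "
      f"final loss = {loss.item():.6f}")
```

With data that follows a known rule, the learned weight and bias should end up close to 2 and 1, which gives a quick sanity check that is not possible with purely random targets.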