K8S Cluster: Binary Installation

1. Environment preparation

1.1 Installation plan

Servers

| Server | IP | Components |
| --- | --- | --- |
| k8s-master1 | 192.168.1.11 | etcd, kube-apiserver, kube-controller-manager, kube-scheduler, docker |
| k8s-node01 | 192.168.1.12 | etcd, kubelet, kube-proxy, docker |
| k8s-node02 | 192.168.1.13 | etcd, kubelet, kube-proxy, docker |

Software versions

| Software | Version | Notes |
| --- | --- | --- |
| OS | CentOS 7 | |
| kubernetes | 1.19.11 | |
| etcd | v3.4.15 | |
| docker | 19.03.9 | |
| cfssl, cfssljson, cfssl-certinfo | 1.5.0 | Cloudflare tools for self-signing certificates |

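1.2 System settings

# Run the following steps on all three hosts

# 1. Set the hostname (on each host, run only the command that matches it)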

hostnamectl set-hostname k8s-master1

hostnamectl set-hostname k8s-node01

hostnamectl set-hostname k8s-node02

# 2. Hostname resolution

cat >> /etc/hosts <<EOF

192.168.1.11 k8s-master1

192.168.1.12 k8s-node01

192.168.1.13 k8s-node02

EOF
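Because `cat >> /etc/hosts` appends unconditionally, running the step twice duplicates the entries. A minimal sketch of an idempotent variant follows; the function name `add_host_entry` and the variable `HOSTS_FILE` are illustrative assumptions, and the sketch writes to a temporary file unless `HOSTS_FILE` is set.

```shell
# add_host_entry FILE IP NAME
# Append "IP NAME" to FILE only if that exact line is not already present.
add_host_entry() {
  if ! grep -qxF "$2 $3" "$1" 2>/dev/null; then
    printf '%s %s\n' "$2" "$3" >> "$1"
  fi
}

# HOSTS_FILE is a hypothetical variable; set it to /etc/hosts on a real node.
HOSTS_FILE=${HOSTS_FILE:-$(mktemp)}

# Running the loop twice still leaves exactly one line per host.
for run in 1 2; do
  add_host_entry "$HOSTS_FILE" 192.168.1.11 k8s-master1
  add_host_entry "$HOSTS_FILE" 192.168.1.12 k8s-node01
  add_host_entry "$HOSTS_FILE" 192.168.1.13 k8s-node02
done
cat "$HOSTS_FILE"
```

On a real node this would be run as root with `HOSTS_FILE=/etc/hosts`.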

# 3. Disable swap

swapoff -a && sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab
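To see what the `sed` expression does before touching the real `/etc/fstab`, it can be tried on a copy. The sketch below uses a made-up two-line fstab (the device names are assumptions, not read from any system) and GNU `sed -i` as on CentOS.

```shell
# Illustrative fstab content in a temporary file.
sample=$(mktemp)
cat > "$sample" <<'EOF'
/dev/mapper/centos-root /      xfs   defaults  0 0
/dev/mapper/centos-swap swap   swap  defaults  0 0
EOF

# Same expression as in the step above: comment out every line containing " swap ".
sed -i '/ swap / s/^\(.*\)$/#\1/g' "$sample"
cat "$sample"
```

Only the swap line gains a leading `#`. The pattern also matches a line that is already commented out, so running the command a second time adds another `#`; that is harmless but worth knowing.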

# 4. Pass bridged IPv4 and IPv6 traffic to the iptables chains

cat > /etc/sysctl.d/k8s.conf << EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF

sysctl --system
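The `net.bridge.*` keys exist only while the `br_netfilter` kernel module is loaded; without it, `sysctl --system` reports that the keys cannot be found. A minimal sketch for loading the module at boot follows. The helper name `write_bridge_module_conf` is an assumption, and the commands that need root are left as comments.

```shell
# write_bridge_module_conf DIR
# Write DIR/k8s.conf so that systemd-modules-load loads br_netfilter at boot.
write_bridge_module_conf() {
  mkdir -p "$1" && printf 'br_netfilter\n' > "$1/k8s.conf"
}

# On a real node, as root, the sequence would be:
#   write_bridge_module_conf /etc/modules-load.d
#   modprobe br_netfilter      # load the module now
#   sysctl --system            # re-apply /etc/sysctl.d/k8s.conf
```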

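# 5. DNS resolution (adds a nameserver, equivalent to configuring DNS on the network interface)

echo "nameserver 8.8.8.8" >> /etc/resolv.conf

# 6. Time synchronization (chrony also works)

yum install -y ntpdate

ntpdate ntp1.aliyun.com

# 7. Add a cron job to keep the clock in sync

crontab -e

- Add this line: */30 * * * * /usr/sbin/ntpdate -u ntp1.aliyun.com >> /var/log/ntpdate.log 2>&1

# 8. Create the log directory

mkdir -p /var/log/kubernetes

# 9. Disable the firewall (same for iptables)

systemctl stop firewalld

systemctl disable firewalld

2. Docker installation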

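# Install on every node in the cluster

# 1. Create a directory for downloaded packages

mkdir -p $HOME/k8s-install && cd $HOME/k8s-install

# 2. Download the Docker binary package

Download: wget https://download.docker.com/linux/static/stable/x86_64/docker-19.03.9.tgz

- Note: if wget cannot connect, paste the link into a browser and download it there

Extract: tar zxvf docker-19.03.9.tgz

Move the binaries: mv docker/* /usr/local/bin/

Check the version: docker -v

# 3. Configure start on boot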

-------------------------------------------------

cat > /lib/systemd/system/docker.service << EOF

[Unit]

Description=Docker Application Container Engine

Documentation=https://docs.docker.com

After=network-online.target firewalld.service

Wants=network-online.target

[Service]

Type=notify

ExecStart=/usr/local/bin/dockerd

ExecReload=/bin/kill -s HUP \$MAINPID

LimitNOFILE=infinity

LimitNPROC=infinity

LimitCORE=infinity

TimeoutStartSec=0

Delegate=yes

KillMode=process

Restart=on-failure

StartLimitBurst=3

StartLimitInterval=60s

[Install]

WantedBy=multi-user.target

EOF

-----------------------------------------------

# 4. Start Docker

systemctl daemon-reload

systemctl enable docker

systemctl start docker

3. TLS certificates

Certificate tools

# Cloudflare's cfssl tools are used here to self-sign the certificates

# 1. Go to the download directory

cd $HOME/k8s-install

# 2. Download the tools

- If wget cannot connect, download from the URLs in a browser

wget https://github.com/cloudflare/cfssl/releases/download/v1.5.0/cfssl_1.5.0_linux_amd64

wget https://github.com/cloudflare/cfssl/releases/download/v1.5.0/cfssljson_1.5.0_linux_amd64

wget https://github.com/cloudflare/cfssl/releases/download/v1.5.0/cfssl-certinfo_1.5.0_linux_amd64

# 3. Move them onto the PATH and make them executable

mv cfssl_1.5.0_linux_amd64 /usr/local/bin/cfssl

mv cfssljson_1.5.0_linux_amd64 /usr/local/bin/cfssljson

mv cfssl-certinfo_1.5.0_linux_amd64 /usr/local/bin/cfssl-certinfo

chmod +x /usr/local/bin/cfssl /usr/local/bin/cfssljson /usr/local/bin/cfssl-certinfo

Certificate overview

The certificates and keys to generate are as follows:

| Component | Certificates | Keys | Notes |
| --- | --- | --- | --- |
| etcd | ca.pem, etcd.pem | etcd-key.pem | |
| apiserver | ca.pem, apiserver.pem | apiserver-key.pem | |
| controller-manager | ca.pem, kube-controller-manager.pem | ca-key.pem, kube-controller-manager-key.pem | kubeconfig |
| scheduler | ca.pem, kube-scheduler.pem | kube-scheduler-key.pem | kubeconfig |
| kubelet | ca.pem | | kubeconfig + token |
| kube-proxy | ca.pem, kube-proxy.pem | kube-proxy-key.pem | kubeconfig |
| kubectl | ca.pem, admin.pem | admin-key.pem | |

CA certificate

CA: Certificate Authority

# 1. Create a working directory for the certificates

mkdir -p /root/ssl && cd /root/ssl

# 2. CA configuration file

----------------------------------------------------------------------

cat > ca-config.json <<EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "87600h"

}

}

}

}

EOF

-------------------------------------------------------------

# 3. CA certificate signing request file

---------------------------------------------------------------

cat > ca-csr.json <<EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}

],

"ca": {

"expiry": "87600h"

}

}

EOF

----------------------------------------------------

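# 4. Generate the CA certificate and key (ca-csr.json above describes the CA; ca-config.json is used later, when the other certificates are signed)

cfssl gencert -initca ca-csr.json | cfssljson -bare ca

# 5. Three files are generated

- ca.csr

- ca-key.pem

- ca.pem

etcd certificate

# Note: the IP addresses in hosts are the IPs of the etcd cluster hosts (adjust them to your environment)

# 1. Certificate signing request file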

-------------------------------------------------

cat > etcd-csr.json <<EOF

{

"CN": "etcd",

"hosts": [

"127.0.0.1",

"localhost",

"192.168.1.11",

"192.168.1.12",

"192.168.1.13"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "etcd",

"OU": "System"

}

]

}

EOF

---------------------------------------------------------

# 2. Generate the certificate

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes etcd-csr.json | cfssljson -bare etcd

# 3. Three files are generated

- etcd.csr

- etcd-key.pem

- etcd.pem

kube-apiserver certificate

# Note: the hosts list contains the IPs of the Kubernetes master hosts and the IP of the kubernetes Service (normally the first IP of the range given by kube-apiserver's --service-cluster-ip-range, for example 10.254.0.1)
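The "first IP of the service range" can be computed rather than typed by hand, which helps when the range is changed. The sketch below is plain POSIX shell arithmetic; the function name `service_ip` is an assumption made for illustration.

```shell
# service_ip CIDR OFFSET
# Print the address OFFSET positions after the network address of CIDR.
service_ip() {
  cidr=$1; offset=$2
  ip=${cidr%/*}; prefix=${cidr#*/}
  old_ifs=$IFS; IFS=.
  set -- $ip                      # split the dotted quad into $1..$4
  IFS=$old_ifs
  n=$(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
  mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  n=$(( (n & mask) + offset ))
  echo "$(( (n >> 24) & 255 )).$(( (n >> 16) & 255 )).$(( (n >> 8) & 255 )).$(( n & 255 ))"
}

service_ip 10.254.0.0/16 1   # prints 10.254.0.1, the kubernetes Service IP
```

The printed address is the one that has to appear in the hosts list of apiserver-csr.json.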

# 1. Certificate signing request file

-----------------------------------------------------------

cat > apiserver-csr.json <<EOF

{

"CN": "kubernetes",

"hosts": [

"127.0.0.1",

"localhost",

"192.168.1.1",

"192.168.1.2",

"192.168.1.11",

"192.168.1.12",

"192.168.1.13",

"10.254.0.1",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

----------------------------------------------------------------

# 2. Generate the certificate

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes apiserver-csr.json | cfssljson -bare apiserver

# 3. Three files are generated

- apiserver.pem

- apiserver-key.pem

- apiserver.csr

kube-controller-manager certificate

# 1. Certificate signing request file

-----------------------------------------------

cat > kube-controller-manager-csr.json <<EOF

{

"CN": "system:kube-controller-manager",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "system:masters",

"OU": "System"

}

]

}

EOF

------------------------------------------------------

# 2. Generate the certificate

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager

# 3. Three files are generated

- kube-controller-manager.pem

- kube-controller-manager-key.pem

- kube-controller-manager.csr

kube-scheduler certificate

# 1. Certificate signing request file

--------------------------------------------------------

cat > kube-scheduler-csr.json << EOF

{

"CN": "system:kube-scheduler",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "system:masters",

"OU": "System"

}

]

}

EOF

---------------------------------------------------------------------

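# 2. Generate the certificate

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-scheduler-csr.json | cfssljson -bare kube-scheduler

# 3. Three files are generated

- kube-scheduler.pem

- kube-scheduler-key.pem

- kube-scheduler.csr

admin certificate

# kube-apiserver later uses RBAC to authorize requests from clients (such as kubelet, kube-proxy, and pods)

# kube-apiserver predefines some RBAC bindings. For example, the ClusterRoleBinding cluster-admin binds the group system:masters to the ClusterRole cluster-admin, which grants access to every kube-apiserver API

# The O field in the file sets the certificate's group to system:masters. When kubectl uses this certificate to access kube-apiserver, authentication succeeds because the certificate is signed by the CA, and because the group system:masters is pre-authorized, the client is granted access to all APIs

# 1. Certificate signing request file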

-------------------------------------------------------

cat > admin-csr.json <<EOF

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "system:masters",

"OU": "System"

}

]

}

EOF

----------------------------------------------------------------

# 2. Generate the certificate

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

- admin.pem

- admin-key.pem

- admin.csr

Once the cluster is up, run kubectl get clusterrolebinding cluster-admin -o yaml. The output shows that the subject of the ClusterRoleBinding cluster-admin has kind Group and name system:masters, and that roleRef points to the ClusterRole cluster-admin. In other words, every user or ServiceAccount in the group system:masters holds the cluster-admin role, which is why kubectl has administrative rights over the whole cluster when it uses this certificate.

kube-proxy certificate

- CN sets the certificate's user to system:kube-proxy;

- The ClusterRoleBinding system:node-proxier, predefined by kube-apiserver, binds the user system:kube-proxy to the ClusterRole system:node-proxier, which grants access to the proxy-related kube-apiserver APIs

# 1. Certificate signing request file

---------------------------------------------------------------

cat > kube-proxy-csr.json <<EOF

{

"CN": "system:kube-proxy",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

---------------------------------------------------------------------------------------------

# 2. Generate the certificate

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

- kube-proxy.pem

- kube-proxy-key.pem

- kube-proxy.csr

View certificate information

Run: cfssl-certinfo -cert apiserver.pem

----------------------------------------------------------------------

{

"subject": {

"common_name": "kubernetes",

"country": "CN",

"organization": "k8s",

"organizational_unit": "System",

"locality": "BeiJing",

"province": "BeiJing",

"names": [

"CN",

"BeiJing",

"BeiJing",

"k8s",

"System",

"kubernetes"

]

},

"issuer": {

"common_name": "kubernetes",

"country": "CN",

"organization": "k8s",

"organizational_unit": "System",

"locality": "BeiJing",

"province": "BeiJing",

"names": [

"CN",

"BeiJing",

"BeiJing",

"k8s",

"System",

"kubernetes"

]

},

"serial_number": "318482383509981191015409049295954077632898095735",

"sans": [

"localhost",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local",

"127.0.0.1",

"192.168.1.1",

"192.168.1.2",

"192.168.1.11",

"192.168.1.12",

"192.168.1.13",

"10.254.0.1"

],

"not_before": "2024-06-30T05:45:00Z",

"not_after": "2034-06-28T05:45:00Z",

"sigalg": "SHA256WithRSA",

"authority_key_id": "",

"subject_key_id": "F5:45:42:30:C4:73:05:19:DA:1B:0D:87:30:23:C2:BD:F2:54:D3:C1",

"pem": "-----BEGIN CERTIFICATE-----\nMIIEezCCA2OgAwIBAgIUN8k+dqGJPVcuJCFDutBbEm7lWncwDQYJKoZIhvcNAQEL\nBQAwZTELMAkGA1UEBhMCQ04xEDAOBgNVBAgTB0JlaUppbmcxEDAOBgNVBAcTB0Jl\naUppbmcxDDAKBgNVBAoTA2s4czEPMA0GA1UECxMGU3lzdGVtMRMwEQYDVQQDEwpr\ndWJlcm5ldGVzMB4XDTI0MDYzMDA1NDUwMFoXDTM0MDYyODA1NDUwMFowZTELMAkG\nA1UEBhMCQ04xEDAOBgNVBAgTB0JlaUppbmcxEDAOBgNVBAcTB0JlaUppbmcxDDAK\nBgNVBAoTA2s4czEPMA0GA1UECxMGU3lzdGVtMRMwEQYDVQQDEwprdWJlcm5ldGVz\nMIIBIjANBgkqhkiG9w0BAQEFAAOCAQ8AMIIBCgKCAQEAtTW9yttaA+hbiOu6FJhn\nuiBAaf6lMnJMOzc7hWDVdRgMs18Fq0o8rU9ZEpDO+Wh78o4VZlMGjW3fplQEg34R\nwpND7/VdFkIHLKJTU+QhPVsxXbBoPGmIQ8EEf/PEFWZmg3fWGIBYnEfTwo0Bd+1f\njnjeYPnwxrU952KTKqsTnPRIH1GtoCbVNXvIZcFiViAwtoUENi7eBSzS4vRrYn25\nVqe9QYsUlkgfElyDQyg5Cuj8WsfILopS9KwoV2Bsfny5ZkXR8FehMsQlPcBoIN/b\nn66DqNk2IqinbHusGzxqLoclcyUbmAo5ck69cGufqxnPwimfwR+pZxPI4OzPVnfg\nPQIDAQABo4IBITCCAR0wDgYDVR0PAQH/BAQDAgWgMB0GA1UdJQQWMBQGCCsGAQUF\nBwMBBggrBgEFBQcDAjAMBgNVHRMBAf8EAjAAMB0GA1UdDgQWBBT1RUIwxHMFGdob\nDYcwI8K98lTTwTCBvgYDVR0RBIG2MIGzgglsb2NhbGhvc3SCCmt1YmVybmV0ZXOC\nEmt1YmVybmV0ZXMuZGVmYXVsdIIWa3ViZXJuZXRlcy5kZWZhdWx0LnN2Y4Iea3Vi\nZXJuZXRlcy5kZWZhdWx0LnN2Yy5jbHVzdGVygiRrdWJlcm5ldGVzLmRlZmF1bHQu\nc3ZjLmNsdXN0ZXIubG9jYWyHBH8AAAGHBMCoAQGHBMCoAQKHBMCoAQuHBMCoAQyH\nBMCoAQ2HBAr+AAEwDQYJKoZIhvcNAQELBQADggEBACCNDrq2lo5OrsgcEpPRJeKx\nNBvdHw+wymw06ER6PUjGzEp3I1XGNrk/O72gh7OrRvuFp3grdjUJ2zE9z3/GT36g\n3m0Zjsga9zTJTurYfYaCxBwTKk0q6pU52au+7wbEs8JNOWG3qJM+5eaSgxzKJW5A\n1euuNk9RwPZq0sPtIZNK+y0fgBlUq6bZArySSEEkcJfsGqaEI92nIMD/euZMg/8n\nbossIwqydyA35cy5gVIMONhQb/SvltpuizMVelIoKjc0DmJAL/14+fKGY4HRaa9c\nUSDw9tID1KjFXRn3bdQwyj7ATGrNg4xreS1e2t8+JH/EPp5IXqPAagJE4OFCEE4=\n-----END CERTIFICATE-----\n"

}

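--------------------------------------------------------------------------------------------------

Distribute the certificates to all nodes

# On the master node

mkdir -p /etc/kubernetes/pki

cp *.pem /etc/kubernetes/pki/

# Note: the target directory must already exist on the other hosts (with the rsync-based script below, it does not have to be created node by node)

scp /etc/kubernetes/pki/* root@192.168.1.12:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/* root@192.168.1.13:/etc/kubernetes/pki/

# Distribution script: vi /usr/local/bin/xsync (then make it executable with chmod +x /usr/local/bin/xsync)

Usage: xsync <file to distribute>

# Note: rsync must be installed on every node: yum install rsync -y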

--------------------------------------------------------------------------------

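#!/bin/bash
# xsync: copy files or directories to the same path on every host listed below
pcount=$#
user="root"
# Target hosts
hosts=(k8s-node01 k8s-node02)

# 1. Require at least one argument
if [ "$pcount" -lt 1 ]
then
    echo "Not enough arguments!"
    exit 1
fi

# 2. Loop over all target hosts
for host in "${hosts[@]}"
do
    echo "==================== $host ===================="
    # 3. Loop over every file or directory given on the command line
    for file in "$@"
    do
        # 4. Check that the file exists
        if [ -e "$file" ]
        then
            # 5. Resolve the absolute parent directory of the given path, e.g. xsync /opt/module/xx.txt
            pdir=$(cd -P "$(dirname "$file")" && pwd)
            echo "pdir=$pdir"
            # 6. Get the file name
            fname=$(basename "$file")
            echo "fname=$fname"
            # 7. Create the parent directory on $host over ssh (no effect if it already exists)
            /usr/bin/ssh "$user@$host" "mkdir -p '$pdir'"
            # 8. Sync the file into $pdir on $host as $user
            /usr/bin/rsync -av "$pdir/$fname" "$user@$host:$pdir"
        else
            echo "$file does not exist!"
        fi
    done
done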

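--------------------------------------------------------------------------------------------------

Install etcd

Node 1: etcd-1 192.168.1.11

Node 2: etcd-2 192.168.1.12

Node 3: etcd-3 192.168.1.13

# Note: etcd can run as its own cluster on dedicated hosts; it does not have to share servers with kubelet and the other components

# Here it is installed on master1, node1, and node2

- Perform these steps on master-1 (192.168.1.11), then distribute the results to the other nodes

# 1. Go to the download directory

cd /root/k8s-install/

# 2. Download and install

Download: wget https://github.com/etcd-io/etcd/releases/download/v3.4.15/etcd-v3.4.15-linux-amd64.tar.gz

Extract: tar zxvf etcd-v3.4.15-linux-amd64.tar.gz

Move: mv etcd-v3.4.15-linux-amd64/{etcd,etcdctl} /usr/bin/

# 3. Configuration file (check it repeatedly: a mistake here prevents etcd members from joining the cluster later). Pay particular attention to:

- ETCD_NAME

- every field that contains an IP

mkdir -p /etc/etcd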

----------------------------------------------------------------------------

cat > /etc/etcd/etcd.conf << EOF

#[Member]

ETCD_NAME="etcd-1"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.1.11:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.1.11:2379,https://127.0.0.1:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.1.11:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.1.11:2379"

ETCD_INITIAL_CLUSTER="etcd-1=https://192.168.1.11:2380,etcd-2=https://192.168.1.12:2380,etcd-3=https://192.168.1.13:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

EOF

------------------------------------------------------------------------------------------------------

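# Configuration reference:

[Basic settings]

ETCD_NAME="etcd-1": node name; must be unique within the cluster.

ETCD_DATA_DIR: directory where etcd stores its data, snapshots, and log files.

ETCD_LISTEN_PEER_URLS: URL on which this node listens for connections from other etcd nodes. Members of the cluster communicate with each other through it.

ETCD_LISTEN_CLIENT_URLS: URLs on which this node listens for client connections. Clients (for example etcdctl) talk to etcd through them.

[Cluster settings]

ETCD_INITIAL_ADVERTISE_PEER_URLS: address this node advertises to the other members. The other etcd nodes use it to reach this node.

ETCD_ADVERTISE_CLIENT_URLS: address this node advertises to clients. Clients (for example etcdctl) use it to reach this node.

ETCD_INITIAL_CLUSTER: names and peer URLs of all etcd nodes in the cluster. This is the initial member list.

ETCD_INITIAL_CLUSTER_TOKEN: unique token that identifies the etcd cluster. It prevents nodes of one cluster from joining another by mistake.

ETCD_INITIAL_CLUSTER_STATE: initial state of the cluster. Use new when creating a cluster and existing when adding a node to a running cluster.

# 4. Configure start on boot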

----------------------------------------------------------------------------------------

cat > /lib/systemd/system/etcd.service << EOF

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=-/etc/etcd/etcd.conf

ExecStart=/usr/bin/etcd \

--cert-file=/etc/kubernetes/pki/etcd.pem \

--key-file=/etc/kubernetes/pki/etcd-key.pem \

--peer-cert-file=/etc/kubernetes/pki/etcd.pem \

--peer-key-file=/etc/kubernetes/pki/etcd-key.pem \

--trusted-ca-file=/etc/kubernetes/pki/ca.pem \

--peer-trusted-ca-file=/etc/kubernetes/pki/ca.pem \

--logger=zap

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

----------------------------------------------------------------------------------------------------------------

# 5. Distribute the files above to the cluster

- /usr/bin/etcd

- /usr/bin/etcdctl

- /etc/etcd/etcd.conf

- /lib/systemd/system/etcd.service

# Use the xsync distribution script

------------------------------------------

xsync /usr/bin/etcd

xsync /usr/bin/etcdctl

xsync /etc/etcd/etcd.conf

xsync /lib/systemd/system/etcd.service

------------------------------------------

# 6. On the other hosts, edit the distributed configuration file /etc/etcd/etcd.conf

- For example, etcd-2 (node1)

vim /etc/etcd/etcd.conf

-----------------------------------------------------------------------

#[Member]
# Change the node name
ETCD_NAME="etcd-2"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
# Change the IP in the next two lines
ETCD_LISTEN_PEER_URLS="https://192.168.1.12:2380"
ETCD_LISTEN_CLIENT_URLS="https://192.168.1.12:2379,https://127.0.0.1:2379"
#[Clustering]
# Change the IP in the next two lines
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.1.12:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://192.168.1.12:2379"
ETCD_INITIAL_CLUSTER="etcd-1=https://192.168.1.11:2380,etcd-2=https://192.168.1.12:2380,etcd-3=https://192.168.1.13:2380"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"

---------------------------------------------------------------------------------------------------------------------------

- For example, etcd-3 (node2)

vim /etc/etcd/etcd.conf

----------------------------------------------------------------------------------------

#[Member]

ETCD_NAME="etcd-3"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.1.13:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.1.13:2379,https://127.0.0.1:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.1.13:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.1.13:2379"

ETCD_INITIAL_CLUSTER="etcd-1=https://192.168.1.11:2380,etcd-2=https://192.168.1.12:2380,etcd-3=https://192.168.1.13:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

---------------------------------------------------------------------------------------------------------
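Since the three files differ only in the node name and the node IP, they can be generated from one template instead of being edited by hand on each host, which removes the most common source of typos. This is a minimal sketch: the function name `render_etcd_conf` is an assumption, and the values are copied from the configuration shown above.

```shell
# Cluster membership, identical on every node.
INITIAL_CLUSTER="etcd-1=https://192.168.1.11:2380,etcd-2=https://192.168.1.12:2380,etcd-3=https://192.168.1.13:2380"

# render_etcd_conf NAME IP
# Print the etcd.conf content for one member to standard output.
render_etcd_conf() {
  cat <<EOF
#[Member]
ETCD_NAME="$1"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://$2:2380"
ETCD_LISTEN_CLIENT_URLS="https://$2:2379,https://127.0.0.1:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://$2:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://$2:2379"
ETCD_INITIAL_CLUSTER="$INITIAL_CLUSTER"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
}

# Print the file for etcd-2; on the node itself, redirect to /etc/etcd/etcd.conf.
render_etcd_conf etcd-2 192.168.1.12
```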

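# 7. Start etcd

# Note: check the configuration files carefully before starting the nodes one by one. After starting the first node, start etcd on the other nodes promptly; otherwise the first start hangs because it cannot reach its peers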

systemctl daemon-reload

systemctl start etcd

systemctl enable etcd

# 8. Check the member list

etcdctl member list --cacert=/etc/kubernetes/pki/ca.pem --cert=/etc/kubernetes/pki/etcd.pem --key=/etc/kubernetes/pki/etcd-key.pem --write-out=table

+------------------+---------+--------+---------------------------+---------------------------+------------+

| ID | STATUS | NAME | PEER ADDRS | CLIENT ADDRS | IS LEARNER |

+------------------+---------+--------+---------------------------+---------------------------+------------+

| aa869cb0f2e7ed31 | started | etcd-1 | https://192.168.1.11:2380 | https://192.168.1.11:2379 | false |

| b08a644fd7247c5e | started | etcd-3 | https://192.168.1.13:2380 | https://192.168.1.13:2379 | false |

| bb9bd2baaebf7d95 | started | etcd-2 | https://192.168.1.12:2380 | https://192.168.1.12:2379 | false |

+------------------+---------+--------+---------------------------+---------------------------+------------+

# 9. Check the health status

etcdctl endpoint health --cacert=/etc/kubernetes/pki/ca.pem --cert=/etc/kubernetes/pki/etcd.pem --key=/etc/kubernetes/pki/etcd-key.pem --cluster --write-out=table

+---------------------------+--------+-------------+-------+

| ENDPOINT | HEALTH | TOOK | ERROR |

+---------------------------+--------+-------------+-------+

| https://192.168.1.13:2379 | true | 22.185914ms | |

| https://192.168.1.11:2379 | true | 29.667937ms | |

| https://192.168.1.12:2379 | true | 31.239728ms | |

+---------------------------+--------+-------------+-------+

==================================================================================================================================

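# If one node has a wrong IP or port, the other nodes start normally and only that node fails to start. This points to a problem in the configuration concerning this node, and not necessarily in the failing node's own file: check every node's configuration for the entries that mention it (IP, port).

Example error:

- {"level":"fatal","ts":"2024-06-03T22:23:52.367+0800","caller":"etcdmain/etcd.go:271","msg":"discovery failed","error":"couldn't find local name ......} (in this case my IP was misconfigured)

- After correcting the cluster IPs (the file was distributed, so all three nodes must be changed), run daemon-reload again

- ETCD_INITIAL_CLUSTER="etcd-1=https://192.168.177.15:2380,etcd-2=https://192.168.177.16:2380,etcd-3=https://192.168.177.17:2380"

- Start again; a new error appears:

- request sent was ignored (cluster ID mismatch: remote[d1c51ceb92fa1681]=fa335e6f19dcefbc, local=403adee217790c7b)

- This happens because the cluster ID created under the wrong configuration has already been recorded, so the node cannot start

- Check the member list:

- etcdctl member list --cacert=/etc/kubernetes/pki/ca.pem --cert=/etc/kubernetes/pki/etcd.pem --key=/etc/kubernetes/pki/etcd-key.pem --write-out=table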

+------------------+---------+--------+------------------------------+-----------------------------+------------+

| ID | STATUS | NAME | PEER ADDRS | CLIENT ADDRS | IS LEARNER |

+------------------+---------+--------+------------------------------+-----------------------------+------------+

| 107de17488d67a29 | started | etcd-1 | https://192.168.177.15:2380 | https://192.168.177.15:2379 | false |

| d1c51ceb92fa1681 | started | etcd-3 | https://192.168.177.17:2380 | https://192.168.177.17:2379 | false |

| fe8a0b5298c256c3 | started | etcd-2 | https://1192.168.177.16:2380 | | false |

+------------------+---------+--------+------------------------------+-----------------------------+------------+

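- Run this on a healthy node. It shows that etcd-2 was registered with the wrong IP; remove that member and start it again

- Remove the member:

- etcdctl member remove fe8a0b5298c256c3 --cacert=/etc/kubernetes/pki/ca.pem --cert=/etc/kubernetes/pki/etcd.pem --key=/etc/kubernetes/pki/etcd-key.pem

- Delete the data: rm -rf /var/lib/etcd/*

- The data under /var/lib/etcd/ has to be deleted on the master and on every node before etcd is restarted (acceptable in a fresh environment; not recommended for a cluster that already holds data)

- Set ETCD_INITIAL_CLUSTER_STATE (initial-cluster-state) in the configuration file to new (change it if it is existing)

- Start the etcd service again

- systemctl start etcd

- Check the member list: etcdctl member list --cacert=/etc/kubernetes/pki/ca.pem --cert=/etc/kubernetes/pki/etcd.pem --key=/etc/kubernetes/pki/etcd-key.pem --write-out=table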

+------------------+---------+--------+-----------------------------+-----------------------------+------------+

| ID | STATUS | NAME | PEER ADDRS | CLIENT ADDRS | IS LEARNER |

+------------------+---------+--------+-----------------------------+-----------------------------+------------+

| 107de17488d67a29 | started | etcd-1 | https://192.168.177.15:2380 | https://192.168.177.15:2379 | false |

| cdfed09f52b6bf94 | started | etcd-2 | https://192.168.177.16:2380 | https://192.168.177.16:2379 | false |

| d1c51ceb92fa1681 | started | etcd-3 | https://192.168.177.17:2380 | https://192.168.177.17:2379 | false |

+------------------+---------+--------+-----------------------------+-----------------------------+------------+

- The cluster is now healthy

- Check the health of the nodes

etcdctl endpoint health --cacert=/etc/kubernetes/pki/ca.pem --cert=/etc/kubernetes/pki/etcd.pem --key=/etc/kubernetes/pki/etcd-key.pem --cluster --write-out=table

+-----------------------------+--------+-------------+-------+

| ENDPOINT | HEALTH | TOOK | ERROR |

+-----------------------------+--------+-------------+-------+

| https://192.168.177.15:2379 | true | 8.719025ms | |

| https://192.168.177.16:2379 | true | 12.132317ms | |

| https://192.168.177.17:2379 | true | 13.110553ms | |

+-----------------------------+--------+-------------+-------+

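# All of the above happened because an IP was mistyped in /etc/etcd/etcd.conf, which broke the cluster and forced a restart from scratch

In short: after a node's configuration has been wrong once, correcting it is not enough for the node to join, because the recorded cluster ID no longer matches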
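A mistyped address such as `1192.168.177.16` can be caught before etcd is started for the first time. The sketch below checks every peer in an ETCD_INITIAL_CLUSTER string; the function names `valid_ipv4` and `check_initial_cluster` are assumptions, and the check presumes that peers are given as IPv4 literals, as in this guide (host names would be reported as invalid).

```shell
# valid_ipv4 ADDR: succeed only if ADDR is four dot-separated numbers in 0..255.
valid_ipv4() {
  case $1 in
    *[!0-9.]*|*..*|.*|*.) return 1 ;;
  esac
  old_ifs=$IFS; IFS=.
  set -- $1
  IFS=$old_ifs
  [ $# -eq 4 ] || return 1
  for octet in "$@"; do
    [ "$octet" -le 255 ] || return 1
  done
}

# check_initial_cluster "name=https://ip:port,..."
# Print every entry whose host part is not a valid IPv4 address; fail if any is found.
check_initial_cluster() {
  status=0
  for entry in $(printf '%s' "$1" | tr ',' ' '); do
    url=${entry#*=}        # https://ip:2380
    host=${url#*://}       # ip:2380
    host=${host%%:*}       # ip
    if ! valid_ipv4 "$host"; then
      echo "invalid peer address: $entry"
      status=1
    fi
  done
  return $status
}

check_initial_cluster "etcd-1=https://192.168.177.15:2380,etcd-2=https://1192.168.177.16:2380,etcd-3=https://192.168.177.17:2380" || true
```

Running it against the value from each node's /etc/etcd/etcd.conf before `systemctl start etcd` avoids having to wipe the data directories afterwards.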

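Kubernetes master node installation

Kubernetes master node components

- kube-apiserver

- kube-scheduler

- kube-controller-manager

- kubelet (not strictly required on a master, but needed in practice)

- kube-proxy (not strictly required on a master, but needed in practice)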

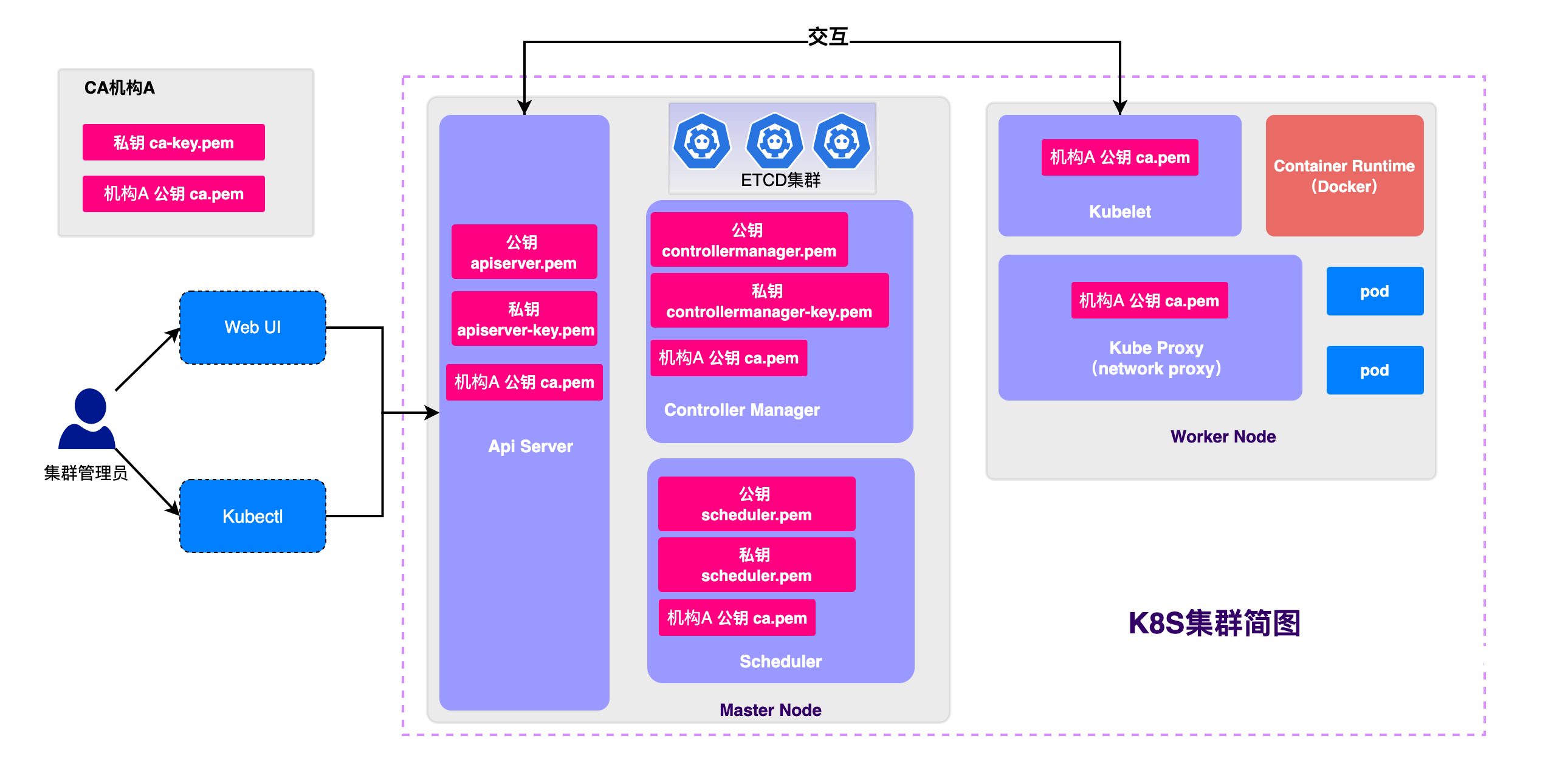

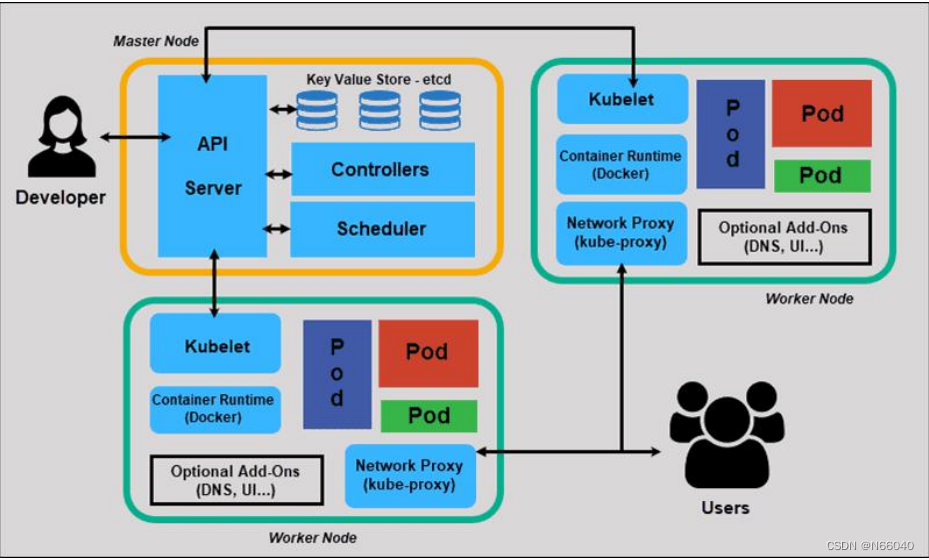

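# The diagrams show that:

All components interact through the API server.

kube-controller-manager and kube-scheduler: they interact mainly indirectly, through the API server.

The controllers (kube-controller-manager) and the scheduler (kube-scheduler) both talk to the API server, not directly to kubelet.

kubelet: talks to the API server directly to fetch Pod information and report node status.

| Component | Role | Communication |
| --- | --- | --- |
| kube-apiserver | API service: the core component of Kubernetes. It receives client requests and authenticates, authorizes, and processes them. It is also the single source of cluster state and is responsible for writing state updates to etcd. | Communicates with all other components |
| kube-scheduler | Scheduler: assigns newly created Pods to suitable nodes, working mainly through the API server. It fetches unscheduled Pods from the API server, picks the most suitable node according to predefined policies (resource requests, affinity, anti-affinity, and so on), and writes the scheduling decision back to the API server. | Direct with kube-apiserver; indirect with kube-controller-manager |
| kube-controller-manager | Controller manager: runs the cluster's controllers (for example the node controller, replication controller, and service controller). The controllers do their work mainly through the API server: they read cluster state from it and submit update requests to it. | Direct with kube-apiserver; indirect with kube-scheduler |
| kubelet | Node agent: runs on every node and manages the containers on that node. It fetches scheduled Pods from the API server and creates, starts, stops, and health-checks their containers. | Direct with kube-apiserver; indirect with kube-proxy |
| kube-proxy | Proxy: routes network traffic for Services to Pods on the worker nodes. It maintains the list of backend endpoints of each Service and uses these lists to route traffic to the right Pods. | Direct with kube-apiserver; indirect with kubelet |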

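Installation preparation

# 1. Go to the download directory

cd /root/k8s-install

# 2. Download the package kubernetes-server-linux-amd64.tar.gz

Download: wget https://dl.k8s.io/v1.19.11/kubernetes-server-linux-amd64.tar.gz

Extract: tar zxvf kubernetes-server-linux-amd64.tar.gz

Change directory: cd kubernetes/server/bin

Copy: cp kube-apiserver kube-scheduler kube-controller-manager kubectl kubelet kube-proxy /usr/bin/

api-server

TLS Bootstrapping Token

Enabling TLS bootstrapping

TLS bootstrapping: once the master's apiserver enables TLS authentication, kubelet and kube-proxy on the nodes must present valid certificates signed by the CA in order to communicate with kube-apiserver.

With many nodes, issuing these client certificates by hand is a lot of work and makes scaling the cluster more complex.

To simplify this, Kubernetes introduced TLS bootstrapping, which issues client certificates automatically: kubelet requests a certificate from the apiserver as a low-privilege user, and the kubelet certificate is signed dynamically through the apiserver.

This approach is therefore strongly recommended on nodes. It is currently used mainly for kubelet;

kube-proxy still uses a single certificate that we issue ourselves.

TLS bootstrapping workflow

# 1. Generate the token

BOOTSTRAP_TOKEN=$(head -c 16 /dev/urandom | od -An -t x | tr -d ' ')

# 2. Create the token file that kube-apiserver reads at startup

- Format: token,user name,UID,group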

cat > /etc/kubernetes/token.csv <<EOF

${BOOTSTRAP_TOKEN},kubelet-bootstrap,10001,"system:node-bootstrapper"

EOF
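The heredoc expands `${BOOTSTRAP_TOKEN}` at the moment the file is written, so both commands have to run in the same shell session; in a new shell the variable is empty and the first field of token.csv is left blank. A minimal sketch of a sanity check follows; `TOKEN_FILE` is an illustrative variable that defaults to a temporary file instead of /etc/kubernetes/token.csv.

```shell
# TOKEN_FILE is hypothetical; the guide writes /etc/kubernetes/token.csv.
TOKEN_FILE=${TOKEN_FILE:-$(mktemp)}

# 16 random bytes printed as hex give a 32-character token.
BOOTSTRAP_TOKEN=$(head -c 16 /dev/urandom | od -An -t x | tr -d ' ')
cat > "$TOKEN_FILE" <<EOF
${BOOTSTRAP_TOKEN},kubelet-bootstrap,10001,"system:node-bootstrapper"
EOF

# Read the token back and verify its shape.
token_in_file=$(awk -F, '{print $1}' "$TOKEN_FILE")
case $token_in_file in
  ""|*[!0-9a-f]*) echo "token missing or malformed" ;;
  *) [ ${#token_in_file} -eq 32 ] && echo "token ok" ;;
esac
```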

kube-apiserver configuration file

--service-cluster-ip-range=10.254.0.0/16: Service IP range

Note: adjust the IP addresses to your environment, and check them repeatedly

cat > /etc/kubernetes/kube-apiserver.conf << EOF

KUBE_APISERVER_OPTS="--logtostderr=false \\

--v=2 \\

--log-dir=/var/log/kubernetes \\

--etcd-servers=https://192.168.1.11:2379,https://192.168.1.12:2379,https://192.168.1.13:2379 \\

--bind-address=192.168.1.11 \\

--secure-port=6443 \\

--advertise-address=192.168.1.11 \\

--allow-privileged=true \\

--service-cluster-ip-range=10.254.0.0/16 \\

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \\

--authorization-mode=RBAC,Node \\

--enable-bootstrap-token-auth=true \\

--token-auth-file=/etc/kubernetes/token.csv \\

--service-node-port-range=30000-32767 \\

--kubelet-client-certificate=/etc/kubernetes/pki/apiserver.pem \\

--kubelet-client-key=/etc/kubernetes/pki/apiserver-key.pem \\

--tls-cert-file=/etc/kubernetes/pki/apiserver.pem \\

--tls-private-key-file=/etc/kubernetes/pki/apiserver-key.pem \\

--client-ca-file=/etc/kubernetes/pki/ca.pem \\

--service-account-key-file=/etc/kubernetes/pki/ca-key.pem \\

--service-account-issuer=api \\

--service-account-signing-key-file=/etc/kubernetes/pki/ca-key.pem \\

--etcd-cafile=/etc/kubernetes/pki/ca.pem \\

--etcd-certfile=/etc/kubernetes/pki/etcd.pem \\

--etcd-keyfile=/etc/kubernetes/pki/etcd-key.pem \\

--requestheader-client-ca-file=/etc/kubernetes/pki/ca.pem \\

--proxy-client-cert-file=/etc/kubernetes/pki/apiserver.pem \\

--proxy-client-key-file=/etc/kubernetes/pki/apiserver-key.pem \\

--requestheader-allowed-names=kubernetes \\

--requestheader-extra-headers-prefix=X-Remote-Extra- \\

--requestheader-group-headers=X-Remote-Group \\

--requestheader-username-headers=X-Remote-User \\

--enable-aggregator-routing=true \\

--audit-log-maxage=30 \\

--audit-log-maxbackup=3 \\

--audit-log-maxsize=100 \\

--audit-log-path=/var/log/kubernetes/k8s-audit.log"

EOF

Configure kube-apiserver to start on boot

# 1. Create the service unit file

---------------------------------------------------------------------

cat > /lib/systemd/system/kube-apiserver.service << EOF

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/etc/kubernetes/kube-apiserver.conf

ExecStart=/usr/bin/kube-apiserver \$KUBE_APISERVER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

---------------------------------------------------------------------
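The backslashes in these heredocs matter: with an unquoted `<< EOF`, the shell expands any `$VAR` while writing the file, so `\$KUBE_APISERVER_OPTS` is needed to keep the variable reference for systemd, and `\\` is needed to leave a single trailing `\` in the file. The demonstration below writes to a temporary file; the variable value is set only to make the difference visible.

```shell
demo=$(mktemp)
KUBE_APISERVER_OPTS="expanded-by-the-shell"   # illustrative value only

cat > "$demo" <<EOF
unescaped: $KUBE_APISERVER_OPTS
escaped:   \$KUBE_APISERVER_OPTS
continued: --v=2 \\
EOF

cat "$demo"
# unescaped: expanded-by-the-shell
# escaped:   $KUBE_APISERVER_OPTS
# continued: --v=2 \
```

The same applies to every unit file and options file written with `cat > ... << EOF` in this guide: any variable that systemd should resolve at run time has to be written with a leading backslash.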

# 2. Start

systemctl daemon-reload

systemctl start kube-apiserver

systemctl status kube-apiserver

systemctl enable kube-apiserver

kube-controller-manager

kubeconfig file

# Run these commands directly; adjust the IP to your environment

KUBE_CONFIG="/etc/kubernetes/kube-controller-manager.kubeconfig"

KUBE_APISERVER="https://192.168.1.11:6443"

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/pki/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=${KUBE_CONFIG}

kubectl config set-credentials kube-controller-manager \

--client-certificate=/etc/kubernetes/pki/kube-controller-manager.pem \

--client-key=/etc/kubernetes/pki/kube-controller-manager-key.pem \

--embed-certs=true \

--kubeconfig=${KUBE_CONFIG}

kubectl config set-context default \

--cluster=kubernetes \

--user=kube-controller-manager \

--kubeconfig=${KUBE_CONFIG}

kubectl config use-context default --kubeconfig=${KUBE_CONFIG}

kube-controller-manager configuration file

--cluster-cidr=10.244.0.0/16: Pod IP range

--service-cluster-ip-range=10.254.0.0/16: Service IP range
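The Pod range and the Service range should not overlap each other. When either value is changed from the ones in this guide, a quick arithmetic check helps; the sketch below is plain POSIX shell, and the function names `cidr_to_range` and `cidrs_overlap` are assumptions made for illustration.

```shell
# cidr_to_range CIDR: print the first and last address of CIDR as integers.
cidr_to_range() {
  ip=${1%/*}; prefix=${1#*/}
  old_ifs=$IFS; IFS=.
  set -- $ip                      # split the dotted quad into $1..$4
  IFS=$old_ifs
  n=$(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
  mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  first=$(( n & mask ))
  last=$(( first | (~mask & 0xFFFFFFFF) ))
  echo "$first $last"
}

# cidrs_overlap CIDR_A CIDR_B: succeed if the two ranges share any address.
cidrs_overlap() {
  set -- $(cidr_to_range "$1") $(cidr_to_range "$2")
  [ "$1" -le "$4" ] && [ "$3" -le "$2" ]
}

if cidrs_overlap 10.244.0.0/16 10.254.0.0/16; then
  echo "Pod and Service ranges overlap"
else
  echo "Pod and Service ranges are disjoint"
fi
```

The same function can be used to compare either range with the host network, for example 192.168.1.0/24.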

cat > /etc/kubernetes/kube-controller-manager.conf << EOF

KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=false \\

--v=2 \\

--log-dir=/var/log/kubernetes \\

--leader-elect=true \\

--kubeconfig=/etc/kubernetes/kube-controller-manager.kubeconfig \\

--bind-address=127.0.0.1 \\

--allocate-node-cidrs=true \\

--cluster-cidr=10.244.0.0/16 \\

--service-cluster-ip-range=10.254.0.0/16 \\

--cluster-signing-cert-file=/etc/kubernetes/pki/ca.pem \\

--cluster-signing-key-file=/etc/kubernetes/pki/ca-key.pem \\

--root-ca-file=/etc/kubernetes/pki/ca.pem \\

--service-account-private-key-file=/etc/kubernetes/pki/ca-key.pem \\

--cluster-signing-duration=87600h0m0s"

EOF

Configure start on boot

# Create the kube-controller-manager service unit file

------------------------------------------------------------------------

cat > /lib/systemd/system/kube-controller-manager.service << EOF

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/etc/kubernetes/kube-controller-manager.conf

ExecStart=/usr/bin/kube-controller-manager \$KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

------------------------------------------------------------------------

# Start

systemctl daemon-reload

systemctl start kube-controller-manager

systemctl status kube-controller-manager

systemctl enable kube-controller-manager

scheduler

kubeconfig file

# Adjust the IP to your environment
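The kubeconfig of each control-plane component is built with the same four `kubectl config` calls; only the user name, the certificate paths, and the output file differ. They can be wrapped in one function, as in this minimal sketch. The function name `write_component_kubeconfig` is an assumption; the CA path and the default API server URL are the ones used in this guide.

```shell
# write_component_kubeconfig USER CERT KEY KUBECONFIG [APISERVER]
# Build a kubeconfig that embeds the CA and the client certificate of USER.
write_component_kubeconfig() {
  user=$1; cert=$2; key=$3; kubeconfig=$4
  apiserver=${5:-https://192.168.1.11:6443}
  kubectl config set-cluster kubernetes \
    --certificate-authority=/etc/kubernetes/pki/ca.pem \
    --embed-certs=true \
    --server="$apiserver" \
    --kubeconfig="$kubeconfig" &&
  kubectl config set-credentials "$user" \
    --client-certificate="$cert" \
    --client-key="$key" \
    --embed-certs=true \
    --kubeconfig="$kubeconfig" &&
  kubectl config set-context default \
    --cluster=kubernetes \
    --user="$user" \
    --kubeconfig="$kubeconfig" &&
  kubectl config use-context default --kubeconfig="$kubeconfig"
}

# Example call for the scheduler (run on the master, where kubectl is installed):
#   write_component_kubeconfig kube-scheduler \
#     /etc/kubernetes/pki/kube-scheduler.pem \
#     /etc/kubernetes/pki/kube-scheduler-key.pem \
#     /etc/kubernetes/kube-scheduler.kubeconfig
```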

KUBE_CONFIG="/etc/kubernetes/kube-scheduler.kubeconfig"

KUBE_APISERVER="https://192.168.1.11:6443"

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/pki/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=${KUBE_CONFIG}

kubectl config set-credentials kube-scheduler \

--client-certificate=/etc/kubernetes/pki/kube-scheduler.pem \

--client-key=/etc/kubernetes/pki/kube-scheduler-key.pem \

--embed-certs=true \

--kubeconfig=${KUBE_CONFIG}

kubectl config set-context default \

--cluster=kubernetes \

--user=kube-scheduler \

--kubeconfig=${KUBE_CONFIG}

kubectl config use-context default --kubeconfig=${KUBE_CONFIG}

kube-scheduler configuration file

cat > /etc/kubernetes/kube-scheduler.conf << EOF
KUBE_SCHEDULER_OPTS="--logtostderr=false \\
--v=2 \\
--log-dir=/var/log/kubernetes \\
--leader-elect \\
--kubeconfig=/etc/kubernetes/kube-scheduler.kubeconfig \\
--bind-address=127.0.0.1"
EOF

Configure start on boot

cat > /lib/systemd/system/kube-scheduler.service << EOF

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/etc/kubernetes/kube-scheduler.conf

ExecStart=/usr/bin/kube-scheduler \$KUBE_SCHEDULER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl start kube-scheduler

systemctl status kube-scheduler

systemctl enable kube-scheduler

kubelet

Parameter configuration file

cat > /etc/kubernetes/kubelet-config.yml << EOF
kind: KubeletConfiguration
apiVersion: kubelet.config.k8s.io/v1beta1
address: 0.0.0.0
port: 10250
readOnlyPort: 10255
cgroupDriver: cgroupfs
clusterDNS:
- 10.254.0.2
clusterDomain: cluster.local
failSwapOn: false
authentication:
  anonymous:
    enabled: false
  webhook:
    cacheTTL: 2m0s
    enabled: true
  x509:
    clientCAFile: /etc/kubernetes/pki/ca.pem
authorization:
  mode: Webhook
  webhook:
    cacheAuthorizedTTL: 5m0s
    cacheUnauthorizedTTL: 30s
evictionHard:
  imagefs.available: 15%
  memory.available: 100Mi
  nodefs.available: 10%
  nodefs.inodesFree: 5%
maxOpenFiles: 1000000
maxPods: 110
EOF

kubeconfig file

# Change the IP address to match your environment

-------------------------------------------------------------

BOOTSTRAP_TOKEN=$(cat /etc/kubernetes/token.csv | awk -F, '{print $1}')

KUBE_CONFIG="/etc/kubernetes/bootstrap.kubeconfig"

KUBE_APISERVER="https://192.168.1.11:6443"

# Generate the kubelet bootstrap kubeconfig file

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/pki/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=${KUBE_CONFIG}

kubectl config set-credentials "kubelet-bootstrap" \

--token=${BOOTSTRAP_TOKEN} \

--kubeconfig=${KUBE_CONFIG}

kubectl config set-context default \

--cluster=kubernetes \

--user="kubelet-bootstrap" \

--kubeconfig=${KUBE_CONFIG}

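kubectl config use-context default --kubeconfig=${KUBE_CONFIG}

kubelet configuration file

Note: the file named by --kubeconfig=/etc/kubernetes/kubelet.kubeconfig is generated automatically when the node joins the cluster.

Note: change --hostname-override=k8s-master1 to the actual node name.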

cat > /etc/kubernetes/kubelet.conf << EOF

KUBELET_OPTS="--logtostderr=false \\

--v=2 \\

--log-dir=/var/log/kubernetes \\

--hostname-override=k8s-master1 \\

--network-plugin=cni \\

--kubeconfig=/etc/kubernetes/kubelet.kubeconfig \\

--bootstrap-kubeconfig=/etc/kubernetes/bootstrap.kubeconfig \\

--config=/etc/kubernetes/kubelet-config.yml \\

--cert-dir=/etc/kubernetes/pki \\

--pod-infra-container-image=mirrorgooglecontainers/pause-amd64:3.1"

EOF

Authorize the kubelet-bootstrap user to request certificates

This prevents the error: failed to run Kubelet: cannot create certificate signing request: certificatesigningrequests.certificates.k8s.io is forbidden: User "kubelet-bootstrap" cannot create resource "certificatesigningrequests" in API group "certificates.k8s.io" at the cluster scope

kubectl create clusterrolebinding kubelet-bootstrap \

--clusterrole=system:node-bootstrapper \

--user=kubelet-bootstrap

# Output: clusterrolebinding.rbac.authorization.k8s.io/kubelet-bootstrap created

# To undo (do not run this now): kubectl delete clusterrolebinding kubelet-bootstrap

Enable start on boot

cat > /lib/systemd/system/kubelet.service << EOF

[Unit]

Description=Kubernetes Kubelet

After=docker.service

[Service]

EnvironmentFile=/etc/kubernetes/kubelet.conf

ExecStart=/usr/bin/kubelet \$KUBELET_OPTS

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl start kubelet

systemctl status kubelet

systemctl enable kubelet

Join the cluster

# 1. View the kubelet certificate signing request (CSR)

Run: kubectl get csr

# Output

NAME AGE SIGNERNAME REQUESTOR CONDITION

node-csr-sg6whHULnO44zm2oK7qf_S9jJUIfOMub93hqTk-0whY 44s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending

# 2. Approve the request

# Use the CSR NAME returned by the previous command

Run: kubectl certificate approve node-csr-sg6whHULnO44zm2oK7qf_S9jJUIfOMub93hqTk-0whY

Output: certificatesigningrequest.certificates.k8s.io/node-csr-sg6whHULnO44zm2oK7qf_S9jJUIfOMub93hqTk-0whY approved

# 3. View the CSR again

Run: kubectl get csr

# Output: the CONDITION column has now changed

NAME AGE SIGNERNAME REQUESTOR CONDITION

node-csr-HqitHgM-yDKeM6-7u1xWh-9CvhKEmkoC5dUyLaNglnM 4m46s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Approved,Issued

# 4. View the nodes (the node stays NotReady because the network plugin has not been deployed yet)

kubectl get node

NAME STATUS ROLES AGE VERSION

k8s-master1 NotReady <none> 55s v1.19.11

kube-proxy

Parameter configuration file

clusterCIDR is the Pod IP range and must match --cluster-cidr of kube-controller-manager (10.244.0.0/16 in this guide). It is not the Service IP range set by --service-cluster-ip-range (10.254.0.0/16).

Note: change hostnameOverride: k8s-master1 to the actual node hostname.

cat > /etc/kubernetes/kube-proxy-config.yml << EOF
kind: KubeProxyConfiguration
apiVersion: kubeproxy.config.k8s.io/v1alpha1
bindAddress: 0.0.0.0
metricsBindAddress: 0.0.0.0:10249
clientConnection:
  kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig
hostnameOverride: k8s-master1
clusterCIDR: 10.244.0.0/16
EOF

kubeconfig file

Change the IP address to match your environment

KUBE_CONFIG="/etc/kubernetes/kube-proxy.kubeconfig"

KUBE_APISERVER="https://192.168.1.11:6443"

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/pki/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=${KUBE_CONFIG}

kubectl config set-credentials kube-proxy \

--client-certificate=/etc/kubernetes/pki/kube-proxy.pem \

--client-key=/etc/kubernetes/pki/kube-proxy-key.pem \

--embed-certs=true \

--kubeconfig=${KUBE_CONFIG}

kubectl config set-context default \

--cluster=kubernetes \

--user=kube-proxy \

--kubeconfig=${KUBE_CONFIG}

kubectl config use-context default --kubeconfig=${KUBE_CONFIG}

kube-proxy configuration file

cat > /etc/kubernetes/kube-proxy.conf << EOF

KUBE_PROXY_OPTS="--logtostderr=false \

--v=2 \

--log-dir=/var/log/kubernetes \

--config=/etc/kubernetes/kube-proxy-config.yml"

EOF

Enable start on boot

cat > /lib/systemd/system/kube-proxy.service << EOF

[Unit]

Description=Kubernetes Proxy

After=network.target

[Service]

EnvironmentFile=/etc/kubernetes/kube-proxy.conf

ExecStart=/usr/bin/kube-proxy \$KUBE_PROXY_OPTS

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl start kube-proxy

systemctl status kube-proxy

systemctl enable kube-proxy

Authorize the apiserver to access the kubelet

mkdir -p $HOME/k8s-install && cd $HOME/k8s-install

cat > apiserver-to-kubelet-rbac.yaml << EOF
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:kube-apiserver-to-kubelet
rules:
  - apiGroups:
      - ""
    resources:
      - nodes/proxy
      - nodes/stats
      - nodes/log
      - nodes/spec
      - nodes/metrics
      - pods/log
    verbs:
      - "*"
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: system:kube-apiserver
  namespace: ""
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:kube-apiserver-to-kubelet
subjects:
  - apiGroup: rbac.authorization.k8s.io
    kind: User
    name: kubernetes
EOF

kubectl apply -f apiserver-to-kubelet-rbac.yaml

# Output:

clusterrole.rbac.authorization.k8s.io/system:kube-apiserver-to-kubelet created

clusterrolebinding.rbac.authorization.k8s.io/system:kube-apiserver created

Cluster management

kubeconfig file

Change the IP address to match your environment

mkdir -p /root/.kube

KUBE_CONFIG=/root/.kube/config

KUBE_APISERVER="https://192.168.1.11:6443"

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/pki/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=${KUBE_CONFIG}

kubectl config set-credentials cluster-admin \

--client-certificate=/etc/kubernetes/pki/admin.pem \

--client-key=/etc/kubernetes/pki/admin-key.pem \

--embed-certs=true \

--kubeconfig=${KUBE_CONFIG}

kubectl config set-context default \

--cluster=kubernetes \

--user=cluster-admin \

--kubeconfig=${KUBE_CONFIG}

kubectl config use-context default --kubeconfig=${KUBE_CONFIG}

View the cluster configuration

# Run: kubectl config view

# Output:

----------------------------------------------

apiVersion: v1
clusters:
- cluster:
    certificate-authority-data: DATA+OMITTED
    server: https://192.168.1.11:6443
  name: kubernetes
contexts:
- context:
    cluster: kubernetes
    user: cluster-admin
  name: default
current-context: default
kind: Config
preferences: {}
users:
- name: cluster-admin
  user:
    client-certificate-data: REDACTED
    client-key-data: REDACTED

Check the cluster status

kubectl get cs

# Output

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-0 Healthy {"health":"true"}

etcd-2 Healthy {"health":"true"}

etcd-1 Healthy {"health":"true"}

Command completion

yum install -y bash-completion

source /usr/share/bash-completion/bash_completion

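# <(...) is Bash process substitution: it exposes the output of a command as a file that another command can read.

# Here, <(kubectl completion bash) makes the output of kubectl completion bash available to source as a temporary file.

source <(kubectl completion bash)

echo "source <(kubectl completion bash)" >> ~/.bashrc

Worker nodes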

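Components to install

| Component | Role | Communicates with |

| kubelet | Node agent: runs on every node and manages the containers on it. The kubelet fetches the Pods scheduled to its node from the API server and creates, starts, stops, and health-checks their containers. | kube-apiserver and kube-proxy |

| kube-proxy | Network proxy: runs on every node and routes traffic addressed to a Service to the Pods behind it. It maintains the list of backend endpoints for each Service and uses that list to send traffic to the correct Pod. | kube-apiserver and kubelet |

Copy the kubelet and kube-proxy files from the master node to the worker nodes

# A distribution script (xsync) is used here

# Run on k8s-master1

# 1. Copy the files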

xsync /usr/bin/kubelet

xsync /usr/bin/kube-proxy

xsync /lib/systemd/system/kubelet.service

xsync /lib/systemd/system/kube-proxy.service

xsync /etc/kubernetes/kubelet* #kubelet.conf kubelet-config.yml kubelet.kubeconfig

xsync /etc/kubernetes/kube-proxy* #kube-proxy.conf kube-proxy-config.yml kube-proxy.kubeconfig

xsync /etc/kubernetes/pki/

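xsync /etc/kubernetes/bootstrap.kubeconfig

Modify the configuration

Run on k8s-node01 and k8s-node02

# 1. Delete the files that were generated automatically when the master's CSR was approved; they are regenerated when each node joins the cluster (delete them on both k8s-node01 and k8s-node02)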

rm /etc/kubernetes/kubelet.kubeconfig

rm /etc/kubernetes/pki/kubelet*

# Create the log directory

mkdir -p /var/log/kubernetes

# Set the actual node name in the files below

# On k8s-node01

# kubelet

vi /etc/kubernetes/kubelet.conf

--hostname-override=k8s-node01

# kube-proxy

vi /etc/kubernetes/kube-proxy-config.yml

hostnameOverride: k8s-node01

# On k8s-node02

# kubelet

vi /etc/kubernetes/kubelet.conf

--hostname-override=k8s-node02

# kube-proxy

vi /etc/kubernetes/kube-proxy-config.yml

hostnameOverride: k8s-node02

Start the services and enable them at boot

systemctl daemon-reload

systemctl start kubelet kube-proxy

systemctl status kubelet kube-proxy

systemctl enable kubelet kube-proxy

# At this point systemctl status kube-proxy reports that the node cannot be found; this is expected until the node's CSR has been approved

6月 05 21:26:05 node-2 systemd[1]: Started Kubernetes Proxy.

6月 05 21:26:05 node-2 kube-proxy[2819]: E0605 21:26:05.490756 2819 node.go:125] Failed to retrieve node info: nodes "node-2" not found

6月 05 21:26:06 node-2 kube-proxy[2819]: E0605 21:26:06.518320 2819 node.go:125] Failed to retrieve node info: nodes "node-2" not found

6月 05 21:26:08 node-2 kube-proxy[2819]: E0605 21:26:08.642706 2819 node.go:125] Failed to retrieve node info: nodes "node-2" not found

Join the cluster (run on the master node)

# 1. List the CSRs (these are the API requests from the nodes asking to join the cluster)

kubectl get csr

NAME AGE SIGNERNAME REQUESTOR CONDITION

node-csr-IO9gpTw-HqotJm7ypcjZVJSBnXvXQEqOrDQx4XqwBhU 3s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending

node-csr-Wdwu_hc8ztpUl1iVx9_6I-4HYlRXOg5kHsPaIouOiPs 87s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending

node-csr-sg6whHULnO44zm2oK7qf_S9jJUIfOMub93hqTk-0whY 57m kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Approved,Issued

# 2. Approve the requests (this adds the worker nodes to the cluster)

[root@master-1 ~]# kubectl certificate approve node-csr-IO9gpTw-HqotJm7ypcjZVJSBnXvXQEqOrDQx4XqwBhU

certificatesigningrequest.certificates.k8s.io/node-csr-FKBpaWpZV9gcxF4danKlK76iNdMLTNm0S0c84PlzxuY approved

[root@master-1 ~]# kubectl certificate approve node-csr-Wdwu_hc8ztpUl1iVx9_6I-4HYlRXOg5kHsPaIouOiPs

certificatesigningrequest.certificates.k8s.io/node-csr-YHa0S8tzHw5pZpX98AYsziv9aGO0ZqRG-Y962IF-hVs approved
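Copying each CSR name by hand becomes tedious when several nodes join at once. The sketch below filters the `kubectl get csr` output for rows whose last column is exactly `Pending`. The function name `pending_csrs` is hypothetical, and the sketch assumes the column layout shown above (NAME first, CONDITION last).

```shell
#!/usr/bin/env bash
# Print the NAME of every CSR whose CONDITION (last column) is exactly "Pending".
# Reads `kubectl get csr` output on stdin and skips the header row.
pending_csrs() {
  awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Hypothetical usage on the master (not executed here):
#   kubectl get csr | pending_csrs | xargs -r kubectl certificate approve
```

Review the list printed by `pending_csrs` before piping it into `kubectl certificate approve`, since approving a CSR grants the requester a client certificate.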

# 3. View the cluster nodes

# STATUS NotReady is expected here; it clears once the network plugin is installed

[root@master-1 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master1 NotReady <none> 59m v1.19.11

k8s-node01 NotReady <none> 7s v1.19.11

k8s-node02 NotReady <none> 28s v1.19.11

# 4. Set labels, which changes the node roles

kubectl label node k8s-master1 node-role.kubernetes.io/master=

kubectl label node k8s-node01 node-role.kubernetes.io/node=

kubectl label node k8s-node02 node-role.kubernetes.io/node=

# 5. View the nodes again: kubectl get node

# The ROLES column has now changed

NAME STATUS ROLES AGE VERSION

k8s-master1 NotReady master 63m v1.19.11

k8s-node01 NotReady node 3m44s v1.19.11

k8s-node02 NotReady node 4m5s v1.19.11

# 6. Set a taint so that ordinary Pods are not scheduled onto the master node

kubectl taint nodes k8s-master1 node-role.kubernetes.io/master=:NoSchedule

# Output: node/k8s-master1 tainted

# 7. Check Taints in the details of the k8s-master1 node:

kubectl describe node k8s-master1

--------------------------------------------------------------

Taints: node-role.kubernetes.io/master:NoSchedule # keeps ordinary Pods off the master so that its resources stay reserved for cluster management

node.kubernetes.io/not-ready:NoSchedule # present while the node is not Ready, for example because it has not finished starting or has a problem that prevents scheduling

# 8. Remove a taint:

- kubectl edit node k8s-master1

- Find the taints list and delete the entries you no longer want
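A taint can also be removed without opening an editor: `kubectl taint` removes a taint when the taint spec ends with a minus sign. The sketch below only prints the command so that it can be reviewed before it is run; `untaint_cmd` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Print the kubectl command that removes one taint from a node.
# A trailing "-" after key:effect tells kubectl taint to remove the taint.
untaint_cmd() {
  local node="$1" key="$2" effect="$3"
  printf 'kubectl taint nodes %s %s:%s-\n' "$node" "$key" "$effect"
}

# Example: the command that would undo the taint set in step 6.
untaint_cmd k8s-master1 node-role.kubernetes.io/master NoSchedule
```

The `node.kubernetes.io/not-ready` taint is managed by the cluster itself and goes away once the node becomes Ready, so it should not need manual removal.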

CNI network

# Node status

kubectl get node

NAME STATUS ROLES AGE VERSION

k8s-master1 NotReady master 49m v1.19.11

k8s-node01 NotReady node 3m45s v1.19.11

k8s-node02 NotReady node 3m49s v1.19.11

# Check the logs: the network plugin is not installed

journalctl -u kubelet -f

Jun 02 14:24:29 k8s-master1 kubelet[75636]: W0602 14:24:29.172144 75636 cni.go:239] Unable to update cni config: no networks found in /etc/cni/net.d

Jun 02 14:24:32 k8s-master1 kubelet[75636]: E0602 14:24:32.958021 75636 kubelet.go:2129] Container runtime network not ready: NetworkReady=false reason:NetworkPluginNotReady message:docker: network plugin is not ready: cni config uninitialized

The IP range used by the network plugin must match --cluster-cidr of kube-controller-manager

Install the CNI network plugins

Note: run on all nodes

mkdir -p $HOME/k8s-install/network && cd $_

wget https://github.com/containernetworking/plugins/releases/download/v0.9.1/cni-plugins-linux-amd64-v0.9.1.tgz

mkdir -p /opt/cni/bin

tar zxvf cni-plugins-linux-amd64-v0.9.1.tgz -C /opt/cni/bin
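After extracting the archive it can be worth confirming that the binaries landed in /opt/cni/bin on every node. The sketch below reports missing plugins; the function name `missing_cni_plugins` is hypothetical, and the four plugin names checked are an assumption about which binaries matter most, not a list taken from this guide.

```shell
#!/usr/bin/env bash
# Print the name of each expected CNI plugin binary that is missing
# (or not executable) in the given directory; prints nothing if all exist.
missing_cni_plugins() {
  local dir="${1:-/opt/cni/bin}" plugin
  # Assumed minimal set; adjust to the plugins your network add-on needs.
  for plugin in bridge host-local loopback portmap; do
    [ -x "$dir/$plugin" ] || echo "$plugin"
  done
}

# Hypothetical usage (not executed here):
#   missing_cni_plugins /opt/cni/bin
```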

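# Disable SELinux

- Temporarily: sudo setenforce 0

- To disable SELinux permanently, edit /etc/selinux/config:

- Find the line SELINUX=enforcing or SELINUX=permissive and change it to SELINUX=disabled

Option 1: Calico

Calico is a pure layer-3 data center networking solution and one of the mainstream network plugins for Kubernetes.

Run on the k8s-master1 node only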

mkdir -p $HOME/k8s-install/network && cd $HOME/k8s-install/network

# 1. Download the manifest

curl https://docs.projectcalico.org/v3.20/manifests/calico.yaml -O

# Note: the calico.yaml fetched with wget https://docs.projectcalico.org/manifests/calico.yaml uses policy/v1, which does not match this Kubernetes version (policy/v1beta1), so applying it kept failing

# Reference: https://blog.csdn.net/qq_46237746/article/details/125453966

# The CIDR must match --cluster-cidr=10.244.0.0/16 of kube-controller-manager

# 2. Edit the manifest

vi calico.yaml

----------------------------------------------------------------------------------------------

# The default IPv4 pool to create on startup if none exists. Pod IPs will be
# chosen from this range. Changing this value after installation will have
# no effect. This should fall within `--cluster-cidr`.
- name: CALICO_IPV4POOL_CIDR
  value: "10.244.0.0/16"

# 3. Install the network plugin Pods

kubectl apply -f calico.yaml

# 4. Check the Pods (this takes a while)

# The plugin is working once every Pod shows READY 1/1

- kubectl get pods -n kube-system -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

calico-kube-controllers-577f77cb5c-b9hbx 1/1 Running 0 3m46s 10.244.58.193 k8s-node02 <none> <none>

calico-node-n9945 1/1 Running 0 3m46s 192.168.1.12 k8s-node01 <none> <none>

calico-node-qcjq8 1/1 Running 0 3m46s 192.168.1.13 k8s-node02 <none> <none>

calico-node-t56j9 1/1 Running 0 3m46s 192.168.1.11 k8s-master1 <none> <none>

# 5. Check the nodes

- kubectl get node

# STATUS is now Ready for all nodes

NAME STATUS ROLES AGE VERSION

k8s-master1 Ready master 3h23m v1.19.11

k8s-node01 Ready node 143m v1.19.11

k8s-node02 Ready node 143m v1.19.11

Option 2: flannel

Run on the k8s-master1 node only

mkdir -p $HOME/k8s-install/network && cd $HOME/k8s-install/network

wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

# Before applying, check that "Network" matches --cluster-cidr of kube-controller-manager

vi kube-flannel.yml

"Network": "10.244.0.0/16",

kubectl apply -f kube-flannel.yml

kubectl get pod -n kube-system

NAME READY STATUS RESTARTS AGE

kube-flannel-ds-8qnnx 1/1 Running 0 10s

kube-flannel-ds-979lc 1/1 Running 0 16m

kube-flannel-ds-kgmgg 1/1 Running 0 16m

kubectl get node

NAME STATUS ROLES AGE VERSION

k8s-master1 Ready master 85m v1.19.11

k8s-node01 Ready node 40m v1.19.11

k8s-node02 Ready node 40m v1.19.11

Addons

CoreDNS

CoreDNS resolves Service names inside the cluster

mkdir -p $HOME/k8s-install/coredns && cd $HOME/k8s-install/coredns

wget https://raw.githubusercontent.com/coredns/deployment/master/kubernetes/coredns.yaml.sed

wget https://raw.githubusercontent.com/coredns/deployment/master/kubernetes/deploy.sh

chmod +x deploy.sh

export CLUSTER_DNS_SVC_IP="10.254.0.2"

export CLUSTER_DNS_DOMAIN="cluster.local"

./deploy.sh -i ${CLUSTER_DNS_SVC_IP} -d ${CLUSTER_DNS_DOMAIN} | kubectl apply -f -

# Check the status

kubectl get pods -n kube-system | grep coredns

coredns-746fcb4bc5-nts2k 1/1 Running 0 6m2s

# Verify with busybox 1.28.4 (busybox 1.33.1 has problems with this test)

kubectl run -it --rm dns-test --image=busybox:1.28.4 /bin/sh

If you don't see a command prompt, try pressing enter.

/ # nslookup kubernetes # typed inside the container: nslookup kubernetes

Server: 10.254.0.2

Address 1: 10.254.0.2 kube-dns.kube-system.svc.cluster.local

Name: kubernetes

Address 1: 10.254.0.1 kubernetes.default.svc.cluster.local

DNS troubleshooting

# dns service

kubectl get svc -n kube-system

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kube-dns ClusterIP 10.254.0.2 <none> 53/UDP,53/TCP,9153/TCP 4m52s

# Check that the endpoints are healthy

kubectl get endpoints kube-dns -n kube-system

NAME ENDPOINTS AGE

kube-dns 10.244.58.194:53,10.244.58.194:53,10.244.58.194:9153 5m5s

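# Add query logging to CoreDNS

CoreDNS configuration parameters:

errors: prints errors to standard output.

health: health endpoint for CoreDNS, served at http://localhost:8080/health; the Pod is restarted if it reports unhealthy.

ready: once all plugins that can signal readiness have done so, an endpoint on port 8181 returns HTTP 200.

kubernetes: CoreDNS answers DNS queries based on the IPs of Kubernetes Services and Pods.

prometheus: enables the CoreDNS metrics endpoint when configured; it is served at http://localhost:9153/metrics

forward: queries for names outside the Kubernetes cluster domain are forwarded to the predefined resolvers (/etc/resolv.conf).

cache: enables caching with a 30-second TTL.

loop: detects simple forwarding loops and stops the CoreDNS process if one is found.

reload: watches the CoreDNS configuration and reloads it when it changes.

loadbalance: DNS load balancer; the default policy is round_robin.

# Edit the CoreDNS configuration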

kubectl edit configmap coredns -n kube-system

-----------------------------------------------------

# Please edit the object below. Lines beginning with a '#' will be ignored,

# and an empty file will abort the edit. If an error occurs while saving this file will be

# reopened with the relevant failures.

#

apiVersion: v1
data:
  Corefile: |
    .:53 {
        log # added
        errors
        health {
          lameduck 5s
        }
        ready
        kubernetes cluster.local in-addr.arpa ip6.arpa {
          fallthrough in-addr.arpa ip6.arpa
        }
        prometheus :9153
        forward . /etc/resolv.conf {
          max_concurrent 1000
        }
        cache 30
        loop
        reload
        loadbalance
    }

kind: ConfigMap

metadata:

annotations:

kubectl.kubernetes.io/last-applied-configuration: |

{"apiVersion":"v1","data":{"Corefile":".:53 {\n errors\n health {\n lameduck 5s\n }\n ready\n kubernetes cluster.local in-addr.arpa ip6.arpa {\n fallthrough in-addr.arpa ip6.arpa\n }\n prometheus :9153\n forward . /etc/resolv.conf {\n max_concurrent 1000\n }\n cache 30\n loop\n reload\n loadbalance\n}\n"},"kind":"ConfigMap","metadata":{"annotations":{},"name":"coredns","namespace":"kube-system"}}

creationTimestamp: "2024-06-30T10:39:52Z"

name: coredns

namespace: kube-system

resourceVersion: "33556"

selfLink: /api/v1/namespaces/kube-system/configmaps/coredns

uid: 56e2cf15-0341-4aa6-9abe-2325567f46f2

Rollback:

wget https://raw.githubusercontent.com/coredns/deployment/master/kubernetes/rollback.sh

chmod +x rollback.sh

export CLUSTER_DNS_SVC_IP="10.254.0.2"

export CLUSTER_DNS_DOMAIN="cluster.local"

./rollback.sh -i ${CLUSTER_DNS_SVC_IP} -d ${CLUSTER_DNS_DOMAIN} | kubectl apply -f -

kubectl delete --namespace=kube-system deployment coredns

Dashboard

This is the Kubernetes web management UI

mkdir -p $HOME/k8s-install/dashboard && cd $HOME/k8s-install/dashboard

# 1. Download and install

curl https://raw.githubusercontent.com/kubernetes/dashboard/v2.2.0/aio/deploy/recommended.yaml -o dashboard.yaml

kubectl apply -f dashboard.yaml

# 2. Check the Pod status

kubectl get pods -n kubernetes-dashboard -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

dashboard-metrics-scraper-79c5968bdc-bcl8x 1/1 Running 0 24s 10.244.58.196 k8s-node02 <none> <none>

kubernetes-dashboard-9f9799597-9vc5m 1/1 Running 0 24s 10.244.159.129 k8s-master1 <none> <none>

# 3. Check the Service status

kubectl get svc -n kubernetes-dashboard -o wide

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR

dashboard-metrics-scraper ClusterIP 10.254.95.79 <none> 8000/TCP 69s k8s-app=dashboard-metrics-scraper

kubernetes-dashboard ClusterIP 10.254.100.239 <none> 443/TCP 69s k8s-app=kubernetes-dashboard

# 4. Change the Service type to NodePort

kubectl edit svc kubernetes-dashboard -n kubernetes-dashboard

type: ClusterIP => type: NodePort # lets external clients reach the dashboard at <node IP>:<node port>

# 5. Check again

[root@k8s-master1 dashboard]# kubectl get svc -n kubernetes-dashboard -o wide

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR

dashboard-metrics-scraper ClusterIP 10.254.95.79 <none> 8000/TCP 2m35s k8s-app=dashboard-metrics-scraper

kubernetes-dashboard NodePort 10.254.100.239 <none> 443:31099/TCP 2m35s k8s-app=kubernetes-dashboard

# 6. Create a service account and bind it to the built-in cluster-admin cluster role:

kubectl create serviceaccount dashboard-admin -n kube-system # create the service account

kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kube-system:dashboard-admin # bind the role

# 7. Get the access token

kubectl describe secrets -n kube-system $(kubectl -n kube-system get secret | awk '/dashboard-admin/{print $1}')

Name: dashboard-admin-token-ggx45

Namespace: kube-system

Labels: <none>

Annotations: kubernetes.io/service-account.name: dashboard-admin

kubernetes.io/service-account.uid: f4bbc842-c178-4dc5-a37f-2e98d58bc756

Type: kubernetes.io/service-account-token

Data

====

ca.crt: 1310 bytes

namespace: 11 bytes

token: eyJhbGciOiJSUzI1NiIsImtpZCI6IlJqcGoyRGNSVlpPeEw5QzdJdllLZ1NRY2tMbThyN1ZyNzJIOFVfS3B3NjgifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJkYXNoYm9hcmQtYWRtaW4tdG9rZW4tZ2d4NDUiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC5uYW1lIjoiZGFzaGJvYXJkLWFkbWluIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQudWlkIjoiZjRiYmM4NDItYzE3OC00ZGM1LWEzN2YtMmU5OGQ1OGJjNzU2Iiwic3ViIjoic3lzdGVtOnNlcnZpY2VhY2NvdW50Omt1YmUtc3lzdGVtOmRhc2hib2FyZC1hZG1pbiJ9.D1Zxhsky7h_k0cGxK9cHAd-YC4hlUlQ7j5s6oAW7ztEUeGi8GG4kSBslc1DTcvmkzA_0kVUkIy38Oi9arQ75HOhub9LIF-pzH8aGor0c9CMNR_XQi-l288Q8_u9_CBcR9eNtITSAmqmuaKCrTw2Y5hzVk_s59M6Tq5QaQ-hth--3U0CtBUEdX6JNRwhr1RX0LkQRY4E6veawUVS1QdVJ3-VVPRUU2A15vkNASwcJSpbzdywOpjBpRQv6NSpXAyIeq2BvyJfAaXax3Wfq7wOnMx4mzVaQXHkRCyJEd_6P73hFxdYnxkLbUFkj02Rt8VcRZfEeC2cO1M_-wrOUon_-Zw

# 8. Open the web UI

https://192.168.1.11:31099

High availability: adding a master node

| Role | IP | Components | Notes |

| k8s-master1 | 192.168.1.11 | etcd, api-server, controller-manager, scheduler, kubelet, kube-proxy, docker | |

| k8s-node01 | 192.168.1.12 | etcd, kubelet, kube-proxy, docker | |

| k8s-node02 | 192.168.1.13 | etcd, kubelet, kube-proxy, docker | |

| k8s-master2 | 192.168.1.14 | etcd, api-server, controller-manager, scheduler, kubelet, kube-proxy, docker | new node |

Preparation (on k8s-master1)

Update the kube-apiserver certificate

The following steps are required when the IP of the new node is not yet listed in the certificate:

mkdir -p /root/ssl && cd /root/ssl

# 1. Certificate signing request file

cat > apiserver-csr.json <<EOF

{

"CN": "kubernetes",

"hosts": [

"127.0.0.1",

"localhost",

"192.168.1.1",

"192.168.1.2",

"192.168.1.3",

"192.168.1.11",

"192.168.1.12",

"192.168.1.13",

"192.168.1.14",

"192.168.1.15",

"192.168.1.250",

"10.254.0.1",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

# 2. Generate the certificate

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes apiserver-csr.json | cfssljson -bare apiserver

-----------------------

apiserver.csr

apiserver-key.pem

apiserver.pem

-----------------------
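Before the new certificate replaces the old one, it can be checked that the address of the new master really appears among its Subject Alternative Names. The sketch below scans the text form of a certificate for an exact `IP Address:` entry; `san_has_ip` is a hypothetical helper name, and the sketch assumes the usual `openssl x509 -text` layout in which IP SANs are printed as `IP Address:<addr>`.

```shell
#!/usr/bin/env bash
# Succeed (exit 0) if the given IP is listed as an "IP Address:" SAN entry
# in the certificate text read from stdin; fail otherwise.
san_has_ip() {
  local ip="$1"
  # Extract each "IP Address:<addr>" token, then require an exact match
  # so that 192.168.1.1 does not match 192.168.1.14.
  grep -o 'IP Address:[0-9a-fA-F.:]*' | grep -qxF "IP Address:${ip}"
}

# Hypothetical usage (not executed here):
#   openssl x509 -in apiserver.pem -noout -text | san_has_ip 192.168.1.14 && echo "SAN present"
```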

# 3. Replace the certificate (k8s-master1)

cp apiserver*.pem /etc/kubernetes/pki # overwrites the existing certificate

# Copy the certificate to the worker nodes

scp apiserver*.pem root@k8s-node01:/etc/kubernetes/pki

scp apiserver*.pem root@k8s-node02:/etc/kubernetes/pki

# Fix the ownership (run on the worker nodes)

chown root:root /etc/kubernetes/pki/apiserver*.pem

# 4. Restart the apiserver (on k8s-master1)

systemctl restart kube-apiserver

systemctl status kube-apiserver

Add the host entry

Run on k8s-master1, k8s-node01, and k8s-node02

echo '192.168.1.14 k8s-master2' >> /etc/hosts
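Appending with `>>` adds a duplicate line every time the step is repeated. The sketch below appends the entry only when the hostname is not already present; `ensure_hosts_entry` is a hypothetical helper name, and the optional third argument exists only so the function can be tried on a scratch file instead of /etc/hosts.

```shell
#!/usr/bin/env bash
# Append "IP NAME" to a hosts file unless NAME already appears as a
# hostname (any field after the address) on a non-comment line.
ensure_hosts_entry() {
  local ip="$1" name="$2" file="${3:-/etc/hosts}"
  if ! awk -v n="$name" '
        $1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit !found }' "$file"; then
    echo "$ip $name" >> "$file"
  fi
}

# Hypothetical usage (not executed here):
#   ensure_hosts_entry 192.168.1.14 k8s-master2
```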

Scale out the master

Initialization

# 1. Set the hostname

hostnamectl set-hostname k8s-master2

# 2. Hostname resolution

cat >> /etc/hosts <<EOF

192.168.1.11 k8s-master1

192.168.1.12 k8s-node01

192.168.1.13 k8s-node02

192.168.1.14 k8s-master2

EOF

# 3. Disable swap

swapoff -a && sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab

# 4. Pass bridged IPv4 traffic to the iptables chains

cat > /etc/sysctl.d/k8s.conf << EOF

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

sysctl --system

# 5. DNS resolution

echo "nameserver 8.8.8.8" >> /etc/resolv.conf

# 6. Time synchronization

yum install ntpdate -y

ntpdate ntp1.aliyun.com

crontab -e

*/30 * * * * /usr/sbin/ntpdate -u ntp1.aliyun.com >> /var/log/ntpdate.log 2>&1

# 7. Log directory

mkdir -p /var/log/kubernetes

# 8. Disable SELinux

Temporarily: sudo setenforce 0

To disable SELinux permanently, edit /etc/selinux/config: find the line SELINUX=enforcing or SELINUX=permissive and change it to SELINUX=disabled

Clone

# Run on k8s-master1

# 1. Distribute the following files to k8s-master2 (requires rsync: yum install -y rsync)

# Important: if you use the distribution script, change its host list first so that it does not also push to the existing worker nodes and overwrite their files

xsync /usr/bin/kube*

xsync /lib/systemd/system/kube*.service

xsync /etc/kubernetes

xsync /root/.kube/config

xsync /usr/local/bin/docker*

xsync /usr/local/bin/runc

xsync /usr/local/bin/containerd*

xsync /usr/local/bin/ctr

xsync /etc/docker

xsync /lib/systemd/system/docker.service

# Alternatively, copy with scp (copy the files from master1 to the same paths on master2)

- Create the archive first

tar zcvf master-node-clone.tar.gz /usr/bin/kube* /lib/systemd/system/kube*.service /etc/kubernetes /root/.kube/config /usr/local/bin/docker* /usr/local/bin/runc /usr/local/bin/containerd* /usr/local/bin/ctr /etc/docker /lib/systemd/system/docker.service

- Then scp it to k8s-master2

scp master-node-clone.tar.gz root@192.168.1.14:/root

----------------------------------------------------------------------------------------

# 2. Run on k8s-master2

rm -f /etc/kubernetes/kubelet.kubeconfig

rm -f /etc/kubernetes/pki/kubelet*

Update the configuration

Run on k8s-master2

# 1. Edit the apiserver addresses

vi /etc/kubernetes/kube-apiserver.conf

--bind-address=192.168.1.14 \

--advertise-address=192.168.1.14 \

# 2. Update the configuration files

sed -i 's#k8s-master1#k8s-master2#' /etc/kubernetes/*

sed -i 's#192.168.1.11:6443#192.168.1.14:6443#' /etc/kubernetes/*

# sed reports that it cannot edit /etc/kubernetes/pki because it is not a regular file; ignore this, pki is a directory
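The sed message about pki appears because the glob also matches the directory. The sketch below applies the same substitution to regular files only; `rewrite_in_files` is a hypothetical helper name, and the sketch assumes GNU find and GNU sed as shipped with CentOS 7.

```shell
#!/usr/bin/env bash
# Replace OLD with NEW in every regular file directly under DIR.
# Subdirectories such as pki/ are skipped, so sed never sees a directory.
rewrite_in_files() {
  local dir="$1" old="$2" new="$3"
  find "$dir" -maxdepth 1 -type f -exec sed -i "s#${old}#${new}#g" {} +
}

# Hypothetical usage on k8s-master2 (not executed here):
#   rewrite_in_files /etc/kubernetes 'k8s-master1' 'k8s-master2'
#   rewrite_in_files /etc/kubernetes '192.168.1.11:6443' '192.168.1.14:6443'
```

As in the original commands, OLD is treated as a regular expression, so the dots in the address match any character; for these fixed addresses that makes no practical difference.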

# 3. Edit the kubectl kubeconfig

vi /root/.kube/config

server: https://192.168.1.14:6443

# 4. Start the services and enable them at boot

systemctl daemon-reload

systemctl start docker kube-apiserver kube-controller-manager kube-scheduler kubelet kube-proxy

systemctl status docker kube-apiserver kube-controller-manager kube-scheduler kubelet kube-proxy

systemctl enable docker kube-apiserver kube-controller-manager kube-scheduler kubelet kube-proxy

Check the cluster status

kubectl get cs

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-1 Healthy {"health":"true"}

etcd-2 Healthy {"health":"true"}

etcd-0 Healthy {"health":"true"}

Join the cluster

# 1. View the CSRs

kubectl get csr

NAME AGE SIGNERNAME

node-csr-nohunS7Jmlb9Qu3ncV5TszVdoETyt1QiW440rAuWrec 2m20s kubernetes.io/kube-apiserver-client-kubel

# Approve the request to join the cluster

- kubectl certificate approve node-csr-nohunS7Jmlb9Qu3ncV5TszVdoETyt1QiW440rAuWrec

# View the nodes

kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master1 Ready master 6h3m v1.19.11

k8s-master2 NotReady <none> 21s v1.19.11

k8s-node01 Ready node 5h3m v1.19.11

k8s-node02 Ready node 5h3m v1.19.11

Set the label and the taint

# Set the label

kubectl label node k8s-master2 node-role.kubernetes.io/master=

# Output: node/k8s-master2 labeled

# Check

kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master1 Ready master 6h6m v1.19.11

k8s-master2 NotReady master 3m9s v1.19.11

k8s-node01 Ready node 5h6m v1.19.11

k8s-node02 Ready node 5h6m v1.19.11

# Set the taint

kubectl taint nodes k8s-master2 node-role.kubernetes.io/master=:NoSchedule

# Output: node/k8s-master2 tainted

# Node information

kubectl get nodes --show-labels

NAME STATUS ROLES AGE VERSION LABELS

k8s-master1 Ready master 6h8m v1.19.11 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s-master1,kubernetes.io/os=linux,node-role.kubernetes.io/master=

k8s-master2 NotReady master 5m51s v1.19.11 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s-master2,kubernetes.io/os=linux,node-role.kubernetes.io/master=

k8s-node01 Ready node 5h9m v1.19.11 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s-node01,kubernetes.io/os=linux,node-role.kubernetes.io/node=

k8s-node02 Ready node 5h9m v1.19.11 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s-node02,kubernetes.io/os=linux,node-role.kubernetes.io/node=

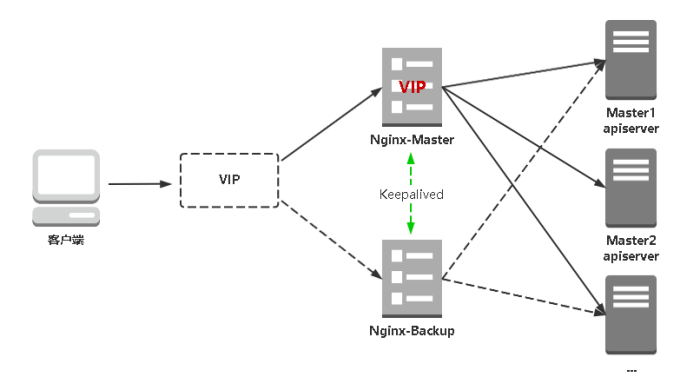
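In the listings above k8s-master2 is still NotReady shortly after joining. The sketch below extracts the nodes that are not yet Ready from `kubectl get nodes` output, which is handy when waiting for a new node to settle; `not_ready_nodes` is a hypothetical helper name, and the sketch assumes STATUS is the second column as shown above.

```shell
#!/usr/bin/env bash
# Print the names of nodes whose STATUS does not start with "Ready".
# "Ready,SchedulingDisabled" counts as Ready; "NotReady" does not.
# Reads `kubectl get nodes` output on stdin and skips the header row.
not_ready_nodes() {
  awk 'NR > 1 && $2 !~ /^Ready/ { print $1 }'
}

# Hypothetical usage on a master (not executed here):
#   kubectl get nodes | not_ready_nodes
```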

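Load balancing for high availability

Nginx: a mainstream web server and reverse proxy; here it load-balances the apiservers at layer 4.

Keepalived: a mainstream high-availability tool that provides active/standby failover by binding a VIP. Keepalived decides whether to fail over (move the VIP) based on the state of Nginx: for example, when the primary Nginx node goes down, the VIP is automatically bound to the backup Nginx node, so the VIP stays reachable and Nginx remains highly available.

Server plan:

| Role | IP | Components |

| k8s-master1 | 192.168.1.11 | kube-apiserver |

| k8s-master2 | 192.168.1.14 | kube-apiserver |

| k8s-loadbalancer1 | 192.168.1.21 | nginx, keepalived |

| k8s-loadbalancer2 | 192.168.1.22 | nginx, keepalived |

| VIP | 192.168.1.250 | virtual IP (not a host) |

Install the software

# Set the hostnames:

hostnamectl set-hostname k8s-loadbalancer1

hostnamectl set-hostname k8s-loadbalancer2

# Install the packages (on both machines)

yum install nginx keepalived -y

Configure nginx

user: may be changed to root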

cat > /etc/nginx/nginx.conf << "EOF"

user nginx;

worker_processes auto;

error_log /var/log/nginx/error.log;

pid /run/nginx.pid;

include /usr/share/nginx/modules/*.conf;

events {

worker_connections 1024;

}

http {

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

upstream k8s-apiserver{

server 192.168.1.11:6443; # Master1 APISERVER IP:PORT

server 192.168.1.14:6443; # Master2 APISERVER IP:PORT

}

access_log /var/log/nginx/access.log main;

sendfile on;

tcp_nopush on;

tcp_nodelay on;

keepalive_timeout 65;

types_hash_max_size 2048;

include /etc/nginx/mime.types;

include /etc/nginx/conf.d/*.conf;

default_type application/octet-stream;

server {

listen 16443;

server_name localhost;

location / {

proxy_pass http://k8s-apiserver; #如果这里需要https就加上证书即可

}

}

}keepalived 配置 (master)

cat > /etc/keepalived/keepalived.conf << EOF

! Configuration File for keepalived

global_defs {

#keepalived机器标识,无特殊作用,一般为机器名

router_id LVS_DEVEL

}

# 检查nginx状态的脚本,健康监测脚本、chk_nginx为脚本名

vrrp_script chk_nginx {

script "/etc/keepalived/nginx_check.sh" # 脚本路径

interval 2 # 脚本执行间隔时间

weight -20

}

vrrp_instance VI_1 {

state BACKUP

interface ens160 # 当前进行vrrp通讯的网络接口卡(当前centos的网卡) 用ifconfig查看你具体的网卡

virtual_router_id 100 # 虚拟路由编号,主从要一至

priority 100 # 优先级,数值越大,获取处理请求的优先级越高 master要大于slave

advert_int 1 ##主备之间通信检查的时间间隔,单位秒

unicast_src_ip 192.168.1.21 # 本机ip

#检查脚本,与vrrp_script对应

track_script {

chk_nginx

}

##keepalived之间认证类型为密码

authentication {

auth_type PASS # 指定认证方式。PASS简单密码认证(推荐),AH:IPSEC认证(不推荐)

auth_pass 1111 # 指定认证所使用的密码。最多8位

}

##虚拟IP池

virtual_ipaddress {

# 指定VIP地址、访问地址、虚拟ip随意定义

192.168.1.250/24

}

}

EOFkeepalived 配置 (slave)

cat > /etc/keepalived/keepalived.conf << EOF

! Configuration File for keepalived

global_defs {

#keepalived机器标识,无特殊作用,一般为机器名

router_id LVS_DEVEL

}

# 检查nginx状态的脚本,健康监测脚本、chk_nginx为脚本名

vrrp_script chk_nginx {

script "/etc/keepalived/nginx_check.sh" # 脚本路径

interval 2 # 脚本执行间隔时间

weight -20

}

vrrp_instance VI_1 {

state BACKUP

interface ens160 # 当前进行vrrp通讯的网络接口卡(当前centos的网卡) 用ifconfig查看你具体的网卡

virtual_router_id 100 # 虚拟路由编号,主从要一至

priority 90 # 优先级,数值越大,获取处理请求的优先级越高 master要大于slave

advert_int 1 ##主备之间通信检查的时间间隔,单位秒

unicast_src_ip 192.168.1.22 # 本机ip

#检查脚本,与vrrp_script对应

track_script {

chk_nginx

}

##keepalived之间认证类型为密码

authentication {

auth_type PASS # 指定认证方式。PASS简单密码认证(推荐),AH:IPSEC认证(不推荐)

auth_pass 1111 # 指定认证所使用的密码。最多8位

}

# Virtual IP pool

virtual_ipaddress {

# The VIP that clients connect to; choose any free address

192.168.1.250/24

}

}

EOF

keepalived check script

cat > /etc/keepalived/check_nginx.sh << "EOF"

#!/bin/bash

count=$(ss -antp |grep 16443 |egrep -cv "grep|$$")

if [ "$count" -eq 0 ];then

exit 1

else

exit 0

fi

EOF

chmod +x /etc/keepalived/check_nginx.sh

Start the services

systemctl daemon-reload

systemctl start nginx keepalived

systemctl enable nginx keepalived
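Once both services are running, which node holds the VIP follows from the priority and weight values configured above. The sketch below works through that arithmetic. It assumes that a failing chk_nginx script adds the negative weight to the node's priority; the helper names effective_priority and vip_holder are hypothetical and are not part of keepalived.

```shell
#!/bin/bash
# effective_priority BASE WEIGHT CHECK_OK
# Prints the priority a node advertises: BASE while the check passes (CHECK_OK=1),
# BASE + WEIGHT (WEIGHT is negative here) while the check fails (CHECK_OK=0).
effective_priority() {
  local base=$1 weight=$2 check_ok=$3
  if [ "$check_ok" -eq 1 ]; then
    echo "$base"
  else
    echo $(( base + weight ))
  fi
}

# vip_holder MASTER_PRIO SLAVE_PRIO
# Prints which node should hold the VIP. Tie-breaking is not modeled here.
vip_holder() {
  if [ "$1" -gt "$2" ]; then
    echo master
  elif [ "$1" -lt "$2" ]; then
    echo slave
  else
    echo tie
  fi
}

# nginx is down on the master (check fails), healthy on the slave
m=$(effective_priority 100 -20 0)
s=$(effective_priority 90 -20 1)
echo "master=$m slave=$s holder=$(vip_holder "$m" "$s")"
# prints: master=80 slave=90 holder=slave
```

With the values from the two configurations, a failed check drops the master from 100 to 80, below the slave's 90, so the VIP 192.168.1.250 should move to the slave. A weight smaller in magnitude than the priority gap (10) would not trigger a failover.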

Status check

curl -k http://192.168.1.250:16443/version

{

"major": "1",

"minor": "19",

"gitVersion": "v1.19.11",

"gitCommit": "c6a2f08fc4378c5381dd948d9ad9d1080e3e6b33",

"gitTreeState": "clean",

"buildDate": "2021-05-12T12:19:22Z",

"goVersion": "go1.15.12",

"compiler": "gc",

"platform": "linux/amd64"

}

Pointing worker nodes at the LB VIP

sed -i 's#192.168.1.11:6443#192.168.1.250:16443#' /etc/kubernetes/*

systemctl restart kubelet kube-proxy
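The substitution above can be rehearsed on a throwaway copy before it touches /etc/kubernetes. The following is a minimal sketch: switch_endpoint is a hypothetical helper, and the sample file name and the sub directory are assumptions made for the demo. It only rewrites regular files, so subdirectories are skipped instead of producing sed errors.

```shell
#!/bin/bash
# switch_endpoint DIR OLD NEW
# Replaces OLD with NEW in every regular file directly under DIR.
switch_endpoint() {
  local dir=$1 old=$2 new=$3 f
  for f in "$dir"/*; do
    [ -f "$f" ] || continue            # skip subdirectories
    sed -i "s#${old}#${new}#g" "$f"
  done
}

# Demo on a temporary directory instead of /etc/kubernetes
demo=$(mktemp -d)
mkdir "$demo/sub"
printf 'server: https://192.168.1.11:6443\n' > "$demo/kubelet.kubeconfig"
switch_endpoint "$demo" 192.168.1.11:6443 192.168.1.250:16443
cat "$demo/kubelet.kubeconfig"
# prints: server: https://192.168.1.250:16443
rm -rf "$demo"
```

After the real change, running grep -r 192.168.1.11:6443 /etc/kubernetes should return nothing if every file was updated.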

Removing a node

# 1. On k8s-master2, stop the kubelet process

systemctl stop kubelet

# 2. Check whether k8s-master2 has gone offline

kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master1 Ready master 40h v1.19.11

k8s-master2 NotReady master 12h v1.19.11

k8s-node01 Ready node 40h v1.19.11

k8s-node02 Ready node 40h v1.19.11

# 3. Drain the node

kubectl drain k8s-master2

node/k8s-master2 cordoned

error: unable to drain node "k8s-master2", aborting command...

There are pending nodes to be drained:

k8s-master2

error: cannot delete DaemonSet-managed Pods (use --ignore-daemonsets to ignore): kube-system/calico-node-lwj2r

# 4. Drain again, ignoring DaemonSet-managed Pods

kubectl drain k8s-master2 --ignore-daemonsets

node/k8s-master2 already cordoned

WARNING: ignoring DaemonSet-managed Pods: kube-system/calico-node-lwj2r

node/k8s-master2 drained

# 5. Status after draining

kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master1 Ready master 40h v1.19.11

k8s-master2 Ready,SchedulingDisabled master 12h v1.19.11

k8s-node01 Ready node 39h v1.19.11

k8s-node02 Ready node 39h v1.19.11

# 6. Restore the node (if necessary)

kubectl uncordon k8s-master2

node/k8s-master2 uncordoned

kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master1 Ready master 40h v1.19.11

k8s-master2 Ready master 12h v1.19.11

k8s-node01 Ready node 39h v1.19.11

k8s-node02 Ready node 39h v1.19.11

# 7. Remove the node completely

kubectl delete node k8s-master2

kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master1 Ready master 41h v1.19.11

k8s-node01 Ready node 40h v1.19.11

k8s-node02 Ready node 40h v1.19.11
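The cluster-side part of the removal (steps 4 and 7) can be collected into a small dry-run helper so the commands are reviewed before anything runs. This is only a sketch: remove_node_cmds is a hypothetical function name, and it prints the kubectl commands shown above without executing them. Stopping kubelet (step 1) still has to be done on the node itself.

```shell
#!/bin/bash
# remove_node_cmds NODE
# Prints, in order, the kubectl commands used above to retire NODE.
# Nothing is executed; the output is meant to be reviewed first.
remove_node_cmds() {
  local node=$1
  if [ -z "$node" ]; then
    echo "usage: remove_node_cmds NODE" >&2
    return 1
  fi
  echo "kubectl drain ${node} --ignore-daemonsets"
  echo "kubectl delete node ${node}"
}

remove_node_cmds k8s-master2
# prints:
# kubectl drain k8s-master2 --ignore-daemonsets
# kubectl delete node k8s-master2
```

Printing instead of executing keeps the irreversible delete step under manual control; the two lines can be pasted into a shell on a host with kubectl access once they look right.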
