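MongoDB Cluster Configuration

1 MongoDB Sharding (High Availability)

1.1 Prerequisites

- Three virtual machines
- MongoDB installed on each of them
- The virtual machines can communicate with each other
- The virtual machines and the host machine can communicate with each other

1.2 Installing MongoDB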

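Install MongoDB on Ubuntu 16.04 by following the installation steps on the official MongoDB website.

The installation may fail with this error:

```
E: The method driver /usr/lib/apt/methods/https could not be found.
N: Is the package apt-transport-https installed?
```

It means the apt-transport-https package has to be installed first:

```shell
sudo apt-get install -y apt-transport-https
```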

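1.3 Starting MongoDB

```shell
sudo service mongod start
```

Check that it started successfully:

```shell
sudo cat /var/log/mongodb/mongod.log
```

A line like the following shows that mongod is listening:

```
2019-04-19T15:40:52.808+0800 I NETWORK [initandlisten] waiting for connections on port 27017
```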
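You can script this check instead of reading the whole log. The following minimal sketch scans the log text for the startup line shown above.

It makes two assumptions:

- The log uses the plain-text format of MongoDB 4.2 and earlier. Version 4.4 and later write structured JSON logs, where this regex would not match.
- `findListeningPort` is a hypothetical name, not part of any MongoDB tool.

```javascript
// Hypothetical helper: return the port from the first
// "waiting for connections on port N" line, or null if mongod
// has not (yet) reported that it is listening.
function findListeningPort(logText) {
  const match = /waiting for connections on port (\d+)/.exec(logText);
  return match ? Number(match[1]) : null;
}

// Usage in Node (the log is usually readable only by root/mongodb):
// const fs = require("fs");
// console.log(findListeningPort(fs.readFileSync("/var/log/mongodb/mongod.log", "utf8")));
```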

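2 MongoDB Sharding

Sharding is the process of splitting data and spreading it across different machines.

MongoDB supports automatic sharding, which hides the database architecture from the application. To the application, it looks as if it were always talking to a single standalone MongoDB server. Meanwhile, MongoDB handles how data is distributed across the shards and makes it easier to add and remove shards.

It is comparable to database sharding (splitting one database across several servers) in MySQL.

2.1 Core Components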

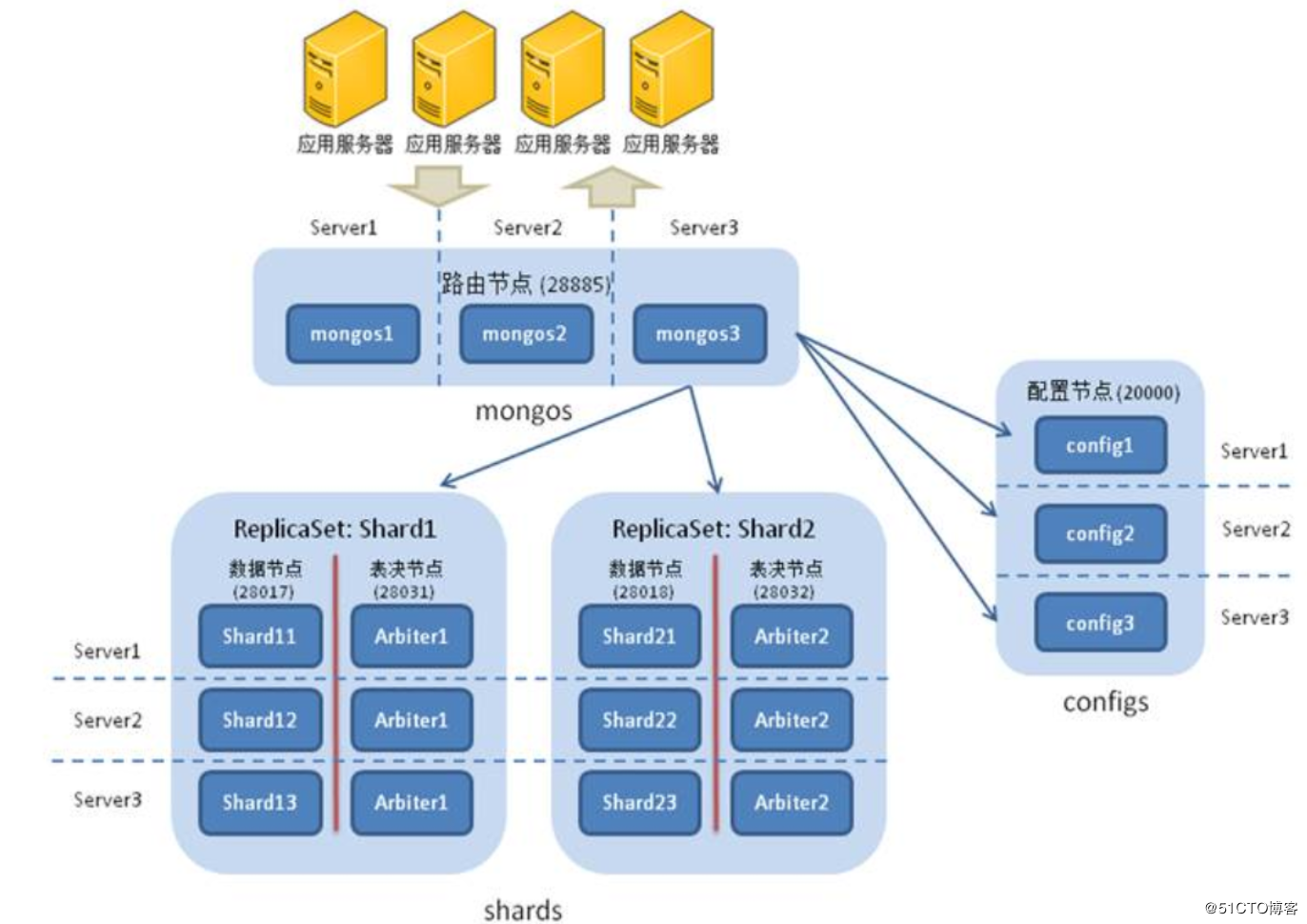

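A sharded cluster uses four components: mongos, config server, shard, and replica set.

mongos: the entry point for all requests to the cluster.

- All requests are coordinated by mongos, so the application does not need its own routing logic.
- mongos is a request dispatcher: it forwards each external request to the appropriate shard servers.
- Because it is the single entry point, mongos is usually deployed in a highly available (HA) configuration to avoid a single point of failure.

It plays a role similar to the router/gateway in a microservice architecture.

config server: the configuration servers store the metadata for all databases (shard and routing configuration).

- mongos does not persist shard or routing information itself. It only caches this information in memory.
- When mongos starts for the first time or restarts, it loads the configuration from the config servers.
- When the configuration changes, the mongos instances pick up the change, so requests keep being routed correctly.
- Production deployments also run multiple config servers, so the metadata is not kept on a single node that could be lost.

Think of it as a configuration center.

shard: where the data is actually stored. Storing 1 TB on a single server puts heavy pressure on disk, network I/O, CPU, and memory. Spread across several machines, each one holds a much more manageable share. Once the sharding rules are defined and all operations go through mongos, each request is forwarded automatically to the right backend shard server.

replica set: in the overall cluster, if a machine hosting a shard goes offline, part of the cluster's data becomes unavailable, which must not happen. Each shard therefore runs as a replica set to keep its data reliable. A typical production setup is two data-bearing replicas plus one arbiter. Replication keeps the data highly available.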
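The following toy model makes the mongos/config server split concrete. It is not MongoDB's implementation; it only shows what a router does with the routing table it caches.

- The metadata is modeled as a sorted list of half-open key ranges ("chunks"), each owned by one shard.
- A lookup finds the shard that owns a given key.

The chunk boundaries and the names `chunkTable` and `routeKey` are made up for illustration. In a real cluster, chunk metadata lives in the `config.chunks` collection on the config servers.

```javascript
// Toy routing table: sorted, non-overlapping [min, max) ranges over a
// numeric shard key, each owned by one shard (boundaries are invented).
const chunkTable = [
  { min: -Infinity, max: 1000, shard: "shard1" },
  { min: 1000, max: 5000, shard: "shard2" },
  { min: 5000, max: Infinity, shard: "shard3" },
];

// Binary search for the chunk whose range contains `key`.
function routeKey(chunks, key) {
  let lo = 0;
  let hi = chunks.length - 1;
  while (lo <= hi) {
    const mid = (lo + hi) >> 1;
    const c = chunks[mid];
    if (key < c.min) hi = mid - 1;
    else if (key >= c.max) lo = mid + 1;
    else return c.shard;
  }
  throw new Error("key not covered by any chunk: " + key);
}

console.log(routeKey(chunkTable, 42));   // shard1
console.log(routeKey(chunkTable, 1000)); // shard2
console.log(routeKey(chunkTable, 9999)); // shard3
```

Because the table is only a cache, a router in this model would need to refresh it from the config servers whenever chunks move. That is why the config servers hold the authoritative copy.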

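2.2 Architecture Diagram

3 Installation and Deployment

To save servers, several instances run on each machine:

- three mongos instances,
- three config servers,
- on each server, shard instances that play different roles. Each of the three shards takes a different role on each server, so that data is spread evenly later.

Within each shard, a replica set provides high availability. The hosts and ports are as follows: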

| Hostname | IP address | mongos | config server | shard |
|---|---|---|---|---|
| mongodb-1 | 192.168.90.130 | port 20000 | port 21000 | primary: 22001; secondary: 22002; arbiter: 22003 |
| mongodb-2 | 192.168.90.131 | port 20000 | port 21000 | primary: 22002; secondary: 22001; arbiter: 22003 |
| mongodb-3 | 192.168.90.132 | port 20000 | port 21000 | primary: 22003; secondary: 22001; arbiter: 22002 |

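3.1 Deploying the Config Server Replica Set

3.1.1 Create the directories

```shell
mkdir -p /usr/local/mongo/mongoconf/{data,log,conf}
touch /usr/local/mongo/mongoconf/conf/mongoconf.conf
touch /usr/local/mongo/mongoconf/log/mongoconf.log
```

3.1.2 Write the configuration file (/usr/local/mongo/mongoconf/conf/mongoconf.conf)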

```yaml
# mongod.conf

# for documentation of all options, see:
#   http://docs.mongodb.org/manual/reference/configuration-options/

# Where and how to store data.
storage:
  dbPath: /usr/local/mongo/mongoconf/data
  journal:
    enabled: true
    commitIntervalMs: 200
#  engine:
#  mmapv1:
#  wiredTiger:

# where to write logging data.
systemLog:
  destination: file
  logAppend: true
  path: /usr/local/mongo/mongoconf/log/mongoconf.log

# network interfaces
net:
  port: 21000
  bindIp: 0.0.0.0
  maxIncomingConnections: 1000

# how the process runs
processManagement:
  fork: true

#security:

#operationProfiling:

replication:
  replSetName: replconf

sharding:
  clusterRole: configsvr

## Enterprise-Only Options:

#auditLog:

#snmp:
```

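The config server configuration must specify the data directory, the log path, the replica set name, and the sharding role (clusterRole: configsvr).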
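Before starting mongod, it can help to sanity-check a config against the few rules this setup depends on:

- A config server must run as a replica set (MongoDB 3.4+).
- Shards must also be replica sets (3.6+).
- `fork: true` requires log output to go to a file or to syslog.

The sketch below checks these rules. Two caveats:

- `checkMongodConfig` is hypothetical; it is not part of any MongoDB tool.
- It works on a plain JavaScript object that mirrors the YAML, because parsing YAML would need a third-party library.

```javascript
// Hypothetical pre-flight check; covers only the rules listed above and is
// not a full validator of MongoDB configuration files.
function checkMongodConfig(cfg) {
  const problems = [];
  const role = cfg.sharding && cfg.sharding.clusterRole;
  if (role !== "configsvr" && role !== "shardsvr") {
    problems.push("sharding.clusterRole should be configsvr or shardsvr");
  }
  // Both config servers and shards run as replica sets in this deployment.
  if (!(cfg.replication && cfg.replication.replSetName)) {
    problems.push("replication.replSetName is missing");
  }
  // A forked mongod has no terminal, so it must log to a file or syslog.
  const fork = cfg.processManagement && cfg.processManagement.fork;
  const dest = cfg.systemLog && cfg.systemLog.destination;
  if (fork && dest !== "file" && dest !== "syslog") {
    problems.push("fork: true requires systemLog.destination file or syslog");
  }
  if (dest === "file" && !cfg.systemLog.path) {
    problems.push("systemLog.path is required when destination is file");
  }
  return problems;
}

// The config server settings from the YAML above, as a JS object.
const configServer = {
  storage: { dbPath: "/usr/local/mongo/mongoconf/data" },
  systemLog: { destination: "file", logAppend: true, path: "/usr/local/mongo/mongoconf/log/mongoconf.log" },
  net: { port: 21000, bindIp: "0.0.0.0" },
  processManagement: { fork: true },
  replication: { replSetName: "replconf" },
  sharding: { clusterRole: "configsvr" },
};

console.log(checkMongodConfig(configServer)); // []
```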

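3.1.3 Start the config server replica set

Once the configuration file is in place on all three machines, start the config server on each of them:

```shell
mongod -f /usr/local/mongo/mongoconf/conf/mongoconf.conf
```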

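3.1.4 Initialize the replica set

Log in to any one of the machines (130 in this example) and initialize the replica set. Include the port: without it, the mongo shell connects to the default port 27017 instead of the config server.

```shell
mongo 192.168.90.130:21000
```

```javascript
config = {
  _id: "replconf",
  configsvr: true,   // marks this replica set as the cluster's config servers
  members: [
    { _id: 0, host: "192.168.90.130:21000" },
    { _id: 1, host: "192.168.90.131:21000" },
    { _id: 2, host: "192.168.90.132:21000" }
  ]
}
rs.initiate(config)

// check the replica set status
rs.status()
```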

The output looks like this:

```
{
  "set" : "replconf",
  "date" : ISODate("2019-04-21T06:38:50.164Z"),
  "myState" : 2,
  "term" : NumberLong(3),
  "syncingTo" : "192.168.90.131:21000",
  "syncSourceHost" : "192.168.90.131:21000",
  ...
  "members" : [
    {
      "_id" : 0,
      "name" : "192.168.90.130:21000",
      "health" : 1,
      "state" : 2,
      "stateStr" : "SECONDARY",
      "uptime" : 305,
      "optime" : {
        "ts" : Timestamp(1555828718, 1),
        "t" : NumberLong(3)
      },
      "optimeDurable" : {
        "ts" : Timestamp(1555828718, 1),
        "t" : NumberLong(3)
      },
      "optimeDate" : ISODate("2019-04-21T06:38:38Z"),
      "optimeDurableDate" : ISODate("2019-04-21T06:38:38Z"),
      "lastHeartbeat" : ISODate("2019-04-21T06:38:49.409Z"),
      "lastHeartbeatRecv" : ISODate("2019-04-21T06:38:49.408Z"),
      "pingMs" : NumberLong(0),
      "lastHeartbeatMessage" : "",
      "syncingTo" : "192.168.90.131:21000",
      "syncSourceHost" : "192.168.90.131:21000",
      "syncSourceId" : 1,
      "infoMessage" : "",
      "configVersion" : 1
    },
    {
      "_id" : 1,
      "name" : "192.168.90.131:21000",
      "health" : 1,
      "state" : 1,
      "stateStr" : "PRIMARY",
      "uptime" : 307,
      "optime" : {
        "ts" : Timestamp(1555828718, 1),
        "t" : NumberLong(3)
      },
      "optimeDurable" : {
        "ts" : Timestamp(1555828718, 1),
        "t" : NumberLong(3)
      },
      "optimeDate" : ISODate("2019-04-21T06:38:38Z"),
      "optimeDurableDate" : ISODate("2019-04-21T06:38:38Z"),
      "lastHeartbeat" : ISODate("2019-04-21T06:38:49.380Z"),
      "lastHeartbeatRecv" : ISODate("2019-04-21T06:38:49.635Z"),
      "pingMs" : NumberLong(0),
      "lastHeartbeatMessage" : "",
      "syncingTo" : "",
      "syncSourceHost" : "",
      "syncSourceId" : -1,
      "infoMessage" : "",
      "electionTime" : Timestamp(1555828429, 1),
      "electionDate" : ISODate("2019-04-21T06:33:49Z"),
      "configVersion" : 1
    },
    {
      "_id" : 2,
      "name" : "192.168.90.132:21000",
      "health" : 1,
      "state" : 2,
      "stateStr" : "SECONDARY",
      "uptime" : 319,
      "optime" : {
        "ts" : Timestamp(1555828718, 1),
        "t" : NumberLong(3)
      },
      "optimeDate" : ISODate("2019-04-21T06:38:38Z"),
      "syncingTo" : "192.168.90.131:21000",
      "syncSourceHost" : "192.168.90.131:21000",
      "syncSourceId" : 1,
      "infoMessage" : "",
      "configVersion" : 1,
      "self" : true,
      "lastHeartbeatMessage" : ""
    }
  ],
  "ok" : 1,
  ...
}
```

The config server replica set has been deployed successfully, and the election made 131 the primary.
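Reading the primary off the full rs.status() document by eye gets tedious once there are four replica sets (replconf plus three shards). A small helper can pull out the same facts.

- `summarizeReplSet` is hypothetical.
- It relies only on the `set`, `members[].name`, `members[].health` and `members[].stateStr` fields visible in the output above.
- The sample object is trimmed from that output.

In the mongo shell you could call it as `summarizeReplSet(rs.status())`.

```javascript
// Hypothetical helper: condense an rs.status() document into the
// primary, the secondaries, and any members that are not healthy.
function summarizeReplSet(status) {
  const primary = status.members.find((m) => m.stateStr === "PRIMARY");
  return {
    set: status.set,
    primary: primary ? primary.name : null,
    secondaries: status.members
      .filter((m) => m.stateStr === "SECONDARY")
      .map((m) => m.name),
    // health is 1 for reachable members and 0 for unreachable ones
    unhealthy: status.members.filter((m) => m.health !== 1).map((m) => m.name),
  };
}

// Trimmed from the rs.status() output above.
const sample = {
  set: "replconf",
  members: [
    { name: "192.168.90.130:21000", health: 1, stateStr: "SECONDARY" },
    { name: "192.168.90.131:21000", health: 1, stateStr: "PRIMARY" },
    { name: "192.168.90.132:21000", health: 1, stateStr: "SECONDARY" },
  ],
};

console.log(summarizeReplSet(sample).primary); // 192.168.90.131:21000
```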

3.2 Deploying the Shard Replica Sets

3.2.1 Create the directories for the three shards

```shell
cd /usr/local/mongo
mkdir -p shard1/{data,conf,log}
mkdir -p shard2/{data,conf,log}
mkdir -p shard3/{data,conf,log}
touch shard1/log/shard1.log
touch shard2/log/shard2.log
touch shard3/log/shard3.log
```

3.2.2 Create the configuration files

The configuration for shard1 (/usr/local/mongo/shard1/conf/shard1.conf):

```yaml
# mongod.conf

# for documentation of all options, see:
#   http://docs.mongodb.org/manual/reference/configuration-options/

# Where and how to store data.
storage:
  dbPath: /usr/local/mongo/shard1/data
  journal:
    enabled: true
    commitIntervalMs: 200
  # mmapv1 options apply only to the MMAPv1 storage engine (removed in MongoDB 4.2)
  mmapv1:
    smallFiles: true
#  wiredTiger:

# where to write logging data.
systemLog:
  destination: file
  logAppend: true
  path: /usr/local/mongo/shard1/log/shard1.log

# network interfaces
net:
  port: 22001
  bindIp: 0.0.0.0
  maxIncomingConnections: 1000

# how the process runs
processManagement:
  fork: true

#security:

#operationProfiling:

replication:
  replSetName: shard1
  oplogSizeMB: 4096

sharding:
  clusterRole: shardsvr

## Enterprise-Only Options:

#auditLog:

#snmp:
```

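Create the configuration files for shard1, shard2, and shard3 the same way. For each shard, change dbPath, the log path, net.port (22001, 22002, 22003), and replSetName (shard1, shard2, shard3).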
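The three shard files differ only in their paths, port, and replica set name, so they could also be generated. This is a minimal sketch under the directory layout used above.

- `shardConf` is a hypothetical name.
- It leaves out the commented template lines and the mmapv1 block.

```javascript
// Hypothetical generator for shardN.conf, assuming the layout
// /usr/local/mongo/shardN/{data,log,conf}, port 2200N, replica set shardN.
function shardConf(n) {
  const base = `/usr/local/mongo/shard${n}`;
  return [
    "storage:",
    `  dbPath: ${base}/data`,
    "  journal:",
    "    enabled: true",
    "    commitIntervalMs: 200",
    "systemLog:",
    "  destination: file",
    "  logAppend: true",
    `  path: ${base}/log/shard${n}.log`,
    "net:",
    `  port: ${22000 + n}`,
    "  bindIp: 0.0.0.0",
    "  maxIncomingConnections: 1000",
    "processManagement:",
    "  fork: true",
    "replication:",
    `  replSetName: shard${n}`,
    "  oplogSizeMB: 4096",
    "sharding:",
    "  clusterRole: shardsvr",
    "",
  ].join("\n");
}

// To write the files with Node on each host:
// const fs = require("fs");
// for (const n of [1, 2, 3]) {
//   fs.writeFileSync(`/usr/local/mongo/shard${n}/conf/shard${n}.conf`, shardConf(n));
// }
console.log(shardConf(2));
```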

3.2.3 Start the shards

```shell
mongod -f /usr/local/mongo/shard1/conf/shard1.conf
mongod -f /usr/local/mongo/shard2/conf/shard2.conf
mongod -f /usr/local/mongo/shard3/conf/shard3.conf
```

Check the listening processes with:

```shell
netstat -lntup
```

```
Active Internet connections (only servers)
Proto Recv-Q Send-Q Local Address Foreign Address State PID/Program name
tcp 0 0 0.0.0.0:22001 0.0.0.0:* LISTEN 1891/mongod
tcp 0 0 0.0.0.0:22002 0.0.0.0:* LISTEN 1974/mongod
tcp 0 0 0.0.0.0:22003 0.0.0.0:* LISTEN 2026/mongod
tcp 0 0 0.0.0.0:22 0.0.0.0:* LISTEN -
tcp 0 0 127.0.0.1:6010 0.0.0.0:* LISTEN -
tcp 0 0 0.0.0.0:21000 0.0.0.0:* LISTEN 1779/mongod
```

All the processes are up: the config server on 21000 and the three shard instances on 22001 to 22003.

3.2.4 Configure the replica set for each shard

Log in on 130:

```shell
mongo 192.168.90.130:22001
```

Define the replica set, including its arbiter node:

```javascript
config = {
  _id: "shard1",
  members: [
    { _id: 0, host: "192.168.90.130:22001" },
    { _id: 1, host: "192.168.90.131:22001" },
    { _id: 2, host: "192.168.90.132:22001", arbiterOnly: true }
  ]
}
rs.initiate(config)
rs.status()
```

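Then initialize shard2 and shard3 the same way, logging in on 131 (port 22002) and 132 (port 22003) respectively. The node on which rs.initiate() is run normally becomes the first primary of that replica set.

```javascript
config = {
  _id: "shard2",
  members: [
    { _id: 0, host: "192.168.90.130:22002", arbiterOnly: true },
    { _id: 1, host: "192.168.90.131:22002" },
    { _id: 2, host: "192.168.90.132:22002" }
  ]
}
rs.initiate(config)
rs.status()
```

```javascript
config = {
  _id: "shard3",
  members: [
    { _id: 0, host: "192.168.90.130:22003" },
    { _id: 1, host: "192.168.90.131:22003", arbiterOnly: true },
    { _id: 2, host: "192.168.90.132:22003" }
  ]
}
rs.initiate(config)
rs.status()
```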
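Two things are easy to get wrong in these documents:

- Each member needs its own `_id`; rs.initiate() rejects duplicates.
- Every host in one replica set must use that set's port.

The helper below generates the documents from the three IPs used in this deployment. It reproduces the member layout of the configs above, with shard3 on port 22003 to match the addShard step later.

- `buildReplSetConfig` is hypothetical; treat it as a sketch, not a tool.
- The `configsvr: true` flag for the config server set follows the official sharded-cluster deployment tutorial.

```javascript
// The three hosts of this deployment.
const HOSTS = ["192.168.90.130", "192.168.90.131", "192.168.90.132"];

// Hypothetical helper: build an rs.initiate() document with member _id
// values 0..2, all on `port`, optionally marking one host as arbiter.
function buildReplSetConfig(name, port, arbiterIp = null, isConfigServer = false) {
  const config = {
    _id: name,
    members: HOSTS.map((ip, i) => {
      const member = { _id: i, host: `${ip}:${port}` };
      if (ip === arbiterIp) member.arbiterOnly = true;
      return member;
    }),
  };
  if (isConfigServer) config.configsvr = true;
  return config;
}

const configServers = buildReplSetConfig("replconf", 21000, null, true);
const shard1 = buildReplSetConfig("shard1", 22001, "192.168.90.132");
const shard2 = buildReplSetConfig("shard2", 22002, "192.168.90.130");
const shard3 = buildReplSetConfig("shard3", 22003, "192.168.90.131");

// In the mongo shell, connected to a data-bearing member of the set:
// rs.initiate(shard3)
```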

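3.3 Configuring the mongos Routers

3.3.1 Create the directories

```shell
mkdir -p /usr/local/mongo/mongos/{conf,log}
```

mongos stores neither data nor metadata. It only dispatches requests, and at startup it loads the metadata from the config server replica set into memory, so it needs no data directory.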

3.3.2 Configuration file (/usr/local/mongo/mongos/conf/mongos.conf)

```yaml
# mongos.conf

# for documentation of all options, see:
#   http://docs.mongodb.org/manual/reference/configuration-options/

# mongos stores no data, so there is no storage section.

# where to write logging data.
systemLog:
  destination: file
  logAppend: true
  path: /usr/local/mongo/mongos/log/mongos.log

# network interfaces
net:
  port: 20000
  bindIp: 0.0.0.0
  maxIncomingConnections: 1000

# how the process runs
processManagement:
  fork: true

sharding:
  # <config server replica set name>/<member list>
  configDB: replconf/192.168.90.130:21000,192.168.90.131:21000,192.168.90.132:21000

## Enterprise-Only Options:

#auditLog:
```

3.3.3 Start mongos

```shell
mongos -f /usr/local/mongo/mongos/conf/mongos.conf
```

Check the processes again with netstat -lntup:

```
Active Internet connections (only servers)
Proto Recv-Q Send-Q Local Address Foreign Address State PID/Program name
tcp 0 0 0.0.0.0:22001 0.0.0.0:* LISTEN 1891/mongod
tcp 0 0 0.0.0.0:22002 0.0.0.0:* LISTEN 1974/mongod
tcp 0 0 0.0.0.0:22003 0.0.0.0:* LISTEN 2026/mongod
tcp 0 0 0.0.0.0:22 0.0.0.0:* LISTEN -
tcp 0 0 127.0.0.1:6010 0.0.0.0:* LISTEN -
tcp 0 0 0.0.0.0:20000 0.0.0.0:* LISTEN 2174/mongos
tcp 0 0 0.0.0.0:21000 0.0.0.0:* LISTEN 1779/mongod
```
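To check the listening ports without scanning the table by eye, a helper can parse the netstat text.

- `missingPorts` is hypothetical.
- It assumes the Linux `netstat -lntup` column layout shown above: local address in the 4th column, state in the 6th.
- To run it against the live machine, you could feed it the output of `require("child_process").execSync("netstat -lntup").toString()`. The sketch uses the sample text instead, so it stays self-contained.

```javascript
// Hypothetical check: which expected ports have no LISTEN socket?
function missingPorts(netstatText, expectedPorts) {
  const listening = new Set();
  for (const line of netstatText.split("\n")) {
    const cols = line.trim().split(/\s+/);
    // e.g. "tcp 0 0 0.0.0.0:22001 0.0.0.0:* LISTEN 1891/mongod"
    if (cols[0].startsWith("tcp") && cols[5] === "LISTEN") {
      // the port is whatever follows the last ":" (works for tcp6 ":::22" too)
      listening.add(Number(cols[3].split(":").pop()));
    }
  }
  return expectedPorts.filter((p) => !listening.has(p));
}

const sampleNetstat = `Active Internet connections (only servers)
Proto Recv-Q Send-Q Local Address Foreign Address State PID/Program name
tcp 0 0 0.0.0.0:22001 0.0.0.0:* LISTEN 1891/mongod
tcp 0 0 0.0.0.0:22002 0.0.0.0:* LISTEN 1974/mongod
tcp 0 0 0.0.0.0:22003 0.0.0.0:* LISTEN 2026/mongod
tcp 0 0 0.0.0.0:20000 0.0.0.0:* LISTEN 2174/mongos
tcp 0 0 0.0.0.0:21000 0.0.0.0:* LISTEN 1779/mongod`;

console.log(missingPorts(sampleNetstat, [20000, 21000, 22001, 22002, 22003])); // []
```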

3.3.4 Register the shards in the admin database

Log in to any mongos (port 20000) and add the shards from the admin database:

```javascript
use admin
db.runCommand({
  addShard: "shard1/192.168.90.130:22001,192.168.90.131:22001,192.168.90.132:22001"
})
db.runCommand({
  addShard: "shard2/192.168.90.130:22002,192.168.90.131:22002,192.168.90.132:22002"
})
db.runCommand({
  addShard: "shard3/192.168.90.130:22003,192.168.90.131:22003,192.168.90.132:22003"
})
sh.status()
```

Output:

```
--- Sharding Status ---
  sharding version: {
    "_id" : 1,
    "minCompatibleVersion" : 5,
    "currentVersion" : 6,
    "clusterId" : ObjectId("5cba82d33290d8f4fb3ac8f7")
  }
  shards:
    { "_id" : "shard1", "host" : "shard1/192.168.90.130:22001,192.168.90.132:22001", "state" : 1 }
    { "_id" : "shard2", "host" : "shard2/192.168.90.130:22002,192.168.90.131:22002", "state" : 1 }
    { "_id" : "shard3", "host" : "shard3/192.168.90.131:22003,192.168.90.132:22003", "state" : 1 }
  active mongoses:
    ....
```
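In the output above, each shard lists two hosts rather than the three passed to addShard. This is consistent with arbiters, which hold no data, being left out of the shard's host string.

A helper can split such a string into the replica set name and the member list, which makes it easy to compare against the intended layout.

- `parseShardHost` is hypothetical.
- In the mongo shell you could apply it to the `host` field of documents in the `config.shards` collection, for example `db.getSiblingDB("config").shards.find().forEach((s) => printjson(parseShardHost(s.host)))`.

```javascript
// Hypothetical helper: split "<replSetName>/<host:port>,<host:port>..."
// into its parts; a bare "host:port" (no replica set) is also accepted.
function parseShardHost(hostString) {
  const slash = hostString.indexOf("/");
  if (slash === -1) return { replSet: null, members: [hostString] };
  return {
    replSet: hostString.slice(0, slash),
    members: hostString.slice(slash + 1).split(","),
  };
}

console.log(parseShardHost("shard1/192.168.90.130:22001,192.168.90.132:22001"));
```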

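3.3.5 Shard the collection

```javascript
use admin
// enable sharding on the database first (required before MongoDB 6.0)
db.runCommand({ enableSharding: "lishubindb" })
// shard table1 on a hashed _id key
db.runCommand({
  shardCollection: "lishubindb.table1", key: { _id: "hashed" }
})
db.runCommand({ listShards: 1 })
```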
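A hashed key spreads data evenly for a simple reason. Under range sharding, sequential `_id` values (like the default ObjectId, which grows over time) all fall at the top of the key range, so new inserts pile onto one chunk. Hashing scatters neighboring keys across the whole range instead.

The sketch below illustrates only that effect, under two assumptions:

- `toyHash` is an MD5-based stand-in, not MongoDB's actual hashed-index function.
- The three equal ranges stand in for the real chunk layout.

```javascript
const crypto = require("crypto");

// Stand-in hash (assumption): first 4 bytes of MD5(key) as an unsigned
// 32-bit integer. MongoDB's hashed index produces a 64-bit value instead.
function toyHash(key) {
  return crypto.createHash("md5").update(String(key)).digest().readUInt32BE(0);
}

// Split the 32-bit hash space into `shards` equal ranges and count how many
// of the sequential keys 0..n-1 land in each range.
function distribute(n, shards) {
  const counts = new Array(shards).fill(0);
  const width = 2 ** 32 / shards;
  for (let i = 0; i < n; i++) {
    counts[Math.min(shards - 1, Math.floor(toyHash(i) / width))]++;
  }
  return counts;
}

console.log(distribute(100000, 3)); // three counts, each close to 33,333
```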

3.4 Testing

Through mongos, insert 100,000 documents into the table1 collection:

```javascript
use lishubindb
for (var i = 0; i < 100000; i++) {
  db.table1.insert({
    "name": "lishubin" + i, "num": i
  })
}
```
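The loop above makes one round trip per document. A common alternative is to send batches with `insertMany()`, available in the mongo shell since 3.2.

- `makeBatches` is a hypothetical helper that builds the same documents.
- The batch size of 1000 is an arbitrary choice.

```javascript
// Hypothetical helper: the same {name, num} documents as the loop above,
// grouped into arrays of at most `batchSize` documents.
function makeBatches(total, batchSize) {
  const batches = [];
  for (let start = 0; start < total; start += batchSize) {
    const batch = [];
    for (let i = start; i < Math.min(start + batchSize, total); i++) {
      batch.push({ name: "lishubin" + i, num: i });
    }
    batches.push(batch);
  }
  return batches;
}

// In the mongo shell (3.2+), connected to mongos, after `use lishubindb`:
// makeBatches(100000, 1000).forEach((batch) => db.table1.insertMany(batch));
```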

Check how the data is distributed across the shards:

```javascript
db.table1.stats()
```

```
"ns" : "lishubindb.table1",
"count" : 100000,
"size" : 5888890,
"storageSize" : 2072576,
"totalIndexSize" : 4694016,
"indexSizes" : {
  "_id_" : 1060864,
  "_id_hashed" : 3633152
},
"avgObjSize" : 58,
"maxSize" : NumberLong(0),
"nindexes" : 2,
"nchunks" : 6,
"shards" : {
  "shard3" : {
    "ns" : "lishubindb.table1",
    "size" : 1969256,
    "count" : 33440,
    "avgObjSize" : 58,
    "storageSize" : 712704,
    "capped" : false,
    ...
  },
  "shard2" : {
    "ns" : "lishubindb.table1",
    "size" : 1973048,
    "count" : 33505,
    "avgObjSize" : 58,
    "storageSize" : 708608,
    "capped" : false,
    ...
  },
  "shard1" : {
    "ns" : "lishubindb.table1",
    "size" : 1946586,
    "count" : 33055,
    "avgObjSize" : 58,
    "storageSize" : 651264,
    "capped" : false,
    ...
  },
```