Kafka: Concepts, Basic Usage, and Caveats

Use Cases

Large data volumes, low concurrency, high availability, and subscription-based (publish/subscribe) consumption

Understanding the Concepts

Partition Count vs. Consumer Count

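Partitions = consumers: the ideal case, each consumer reads exactly one partition

Partitions > consumers: some consumers have to read more than one partition

Partitions < consumers: resources are wasted, because the surplus consumers sit idle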
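
The three cases above can be illustrated with a small simulation. This is a minimal sketch, assuming a simple range-style split of partitions over the consumers of one group; the class name `PartitionAssignmentSketch` and the method `assign` are hypothetical and are not part of the Kafka API, and real assignment is carried out by Kafka's rebalance logic.

```java
import java.util.ArrayList;
import java.util.List;

public class PartitionAssignmentSketch {

    // Distribute partition ids 0..partitions-1 over the consumers of one group.
    // The first (partitions % consumers) consumers receive one extra partition.
    static List<List<Integer>> assign(int partitions, int consumers) {
        List<List<Integer>> result = new ArrayList<>();
        int base = partitions / consumers;
        int extra = partitions % consumers;
        int next = 0;
        for (int c = 0; c < consumers; c++) {
            int count = base + (c < extra ? 1 : 0);
            List<Integer> owned = new ArrayList<>();
            for (int i = 0; i < count; i++) {
                owned.add(next++);
            }
            result.add(owned);
        }
        return result;
    }

    public static void main(String[] args) {
        System.out.println(assign(3, 3)); // [[0], [1], [2]]      one partition each
        System.out.println(assign(4, 3)); // [[0, 1], [2], [3]]   first consumer reads two
        System.out.println(assign(2, 3)); // [[0], [1], []]       third consumer is idle
    }
}
```

Each inner list holds the partitions owned by one consumer; an empty list corresponds to an idle consumer.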

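When some consumers have to read more than one partition, the messages such a consumer receives are not guaranteed to be globally ordered; ordering is guaranteed only within a single partition.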
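
A small model may make the ordering guarantee concrete. This is a sketch, assuming a hypothetical partitioner that takes the non-negative hash of the key modulo the partition count; `PartitionOrderSketch`, `partitionFor`, and `route` are illustrative names, not Kafka classes. Each message is labeled `key:sequence` in the order it was sent.

```java
import java.util.ArrayList;
import java.util.List;

public class PartitionOrderSketch {

    // Hypothetical partitioner: non-negative hash of the key modulo the partition count.
    static int partitionFor(String key, int numPartitions) {
        return (key.hashCode() & 0x7fffffff) % numPartitions;
    }

    // Append each message, labeled "key:sequence", to the partition chosen for its key.
    static List<List<String>> route(String[] keys, int numPartitions) {
        List<List<String>> logs = new ArrayList<>();
        for (int p = 0; p < numPartitions; p++) {
            logs.add(new ArrayList<>());
        }
        for (int seq = 0; seq < keys.length; seq++) {
            logs.get(partitionFor(keys[seq], numPartitions)).add(keys[seq] + ":" + seq);
        }
        return logs;
    }

    public static void main(String[] args) {
        String[] sendOrder = {"a", "b", "a", "b", "a"};
        // Prints [[b:1, b:3], [a:0, a:2, a:4]]
        System.out.println(route(sendOrder, 2));
    }
}
```

Within each partition the sequence numbers increase, but a consumer that reads partition 0 and then partition 1 sees `b:1, b:3, a:0, a:2, a:4`, which is not the order in which the messages were sent.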

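The Role of groupId

Same groupId, partitions >= consumers: every consumer receives data

Same groupId, partitions < consumers: some consumers receive no data

Different groupIds: the consumers of each group do not affect one another, and each group independently receives every message of the topic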
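
The independence of groups can be modeled by keeping a separate read position per groupId. The following is a simplified in-memory sketch of a single partition, not Kafka code; `ConsumerGroupSketch`, `send`, and `poll` are hypothetical names chosen for illustration.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

public class ConsumerGroupSketch {
    // Messages of a single partition, in append order.
    private final List<String> log = new ArrayList<>();
    // Next offset to read, tracked separately for every groupId.
    private final Map<String, Integer> offsets = new HashMap<>();

    void send(String message) {
        log.add(message);
    }

    // Return the messages this group has not read yet and advance only that group's offset.
    List<String> poll(String groupId) {
        int from = offsets.getOrDefault(groupId, 0);
        List<String> batch = new ArrayList<>(log.subList(from, log.size()));
        offsets.put(groupId, log.size());
        return batch;
    }

    public static void main(String[] args) {
        ConsumerGroupSketch topic = new ConsumerGroupSketch();
        topic.send("m0");
        topic.send("m1");
        System.out.println(topic.poll("group-a")); // [m0, m1]
        System.out.println(topic.poll("group-b")); // [m0, m1]  group-b is unaffected by group-a
        topic.send("m2");
        System.out.println(topic.poll("group-a")); // [m2]
        System.out.println(topic.poll("group-a")); // []
    }
}
```

Because the offset is stored per group, reading in one group never changes what another group will receive.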

Command-Line Usage

Start the broker (ZooKeeper must already be running): .\bin\windows\kafka-server-start.bat .\config\server.properties

Create a topic: .\bin\windows\kafka-topics.bat --create --zookeeper localhost:2181 --replication-factor 1 --partitions 1 --topic lilei

Start a console producer: .\bin\windows\kafka-console-producer.bat --broker-list localhost:9092 --topic lilei

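Start a console consumer: .\bin\windows\kafka-console-consumer.bat --zookeeper localhost:2181 --topic lilei (this form uses the old ZooKeeper-based consumer; Kafka 2.0 and later accept only --bootstrap-server localhost:9092 here)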

Java API Usage

API Dependency

    <dependency>
        <groupId>org.apache.kafka</groupId>
        <artifactId>kafka_2.11</artifactId>
        <version>1.0.0</version>
    </dependency>

Producer

    package com.lilei.kafka.liei_kafka;

    import java.util.Properties;

    import kafka.javaapi.producer.Producer;
    import kafka.producer.KeyedMessage;
    import kafka.producer.ProducerConfig;

    public class KafkaProducer {
        private final Producer<String, String> producer;
        public final static String TOPIC = "topic3";

        private KafkaProducer() {
            Properties props = new Properties();
            // Kafka broker address (host:port)
            props.put("metadata.broker.list", "127.0.0.1:9092");
            // ZooKeeper address (the producer itself connects through metadata.broker.list)
            props.put("zk.connect", "127.0.0.1:2181");
            // Serializer class for message values
            props.put("serializer.class", "kafka.serializer.StringEncoder");
            // Serializer class for message keys
            props.put("key.serializer.class", "kafka.serializer.StringEncoder");
            // -1: wait for acknowledgement from all in-sync replicas
            props.put("request.required.acks", "-1");
            producer = new Producer<String, String>(new ProducerConfig(props));
        }

        void produce() {
            int messageNo = 0;
            final int COUNT = Integer.MAX_VALUE;
            while (messageNo < COUNT) {
                String key = String.valueOf(messageNo);
                try {
                    // Throttle to roughly three messages per second
                    Thread.sleep(300);
                } catch (InterruptedException e) {
                    // Restore the interrupt flag and stop producing
                    Thread.currentThread().interrupt();
                    return;
                }
                String data = "hello kafka message " + key;
                producer.send(new KeyedMessage<String, String>(TOPIC, key, data));
                System.out.println(data);
                messageNo++;
            }
        }

        public static void main(String[] args) {
            new KafkaProducer().produce();
        }
    }

Consumer

    package com.lilei.kafka.liei_kafka;

    import java.util.HashMap;
    import java.util.List;
    import java.util.Map;
    import java.util.Properties;

    import kafka.consumer.ConsumerConfig;
    import kafka.consumer.ConsumerIterator;
    import kafka.consumer.KafkaStream;
    import kafka.javaapi.consumer.ConsumerConnector;
    import kafka.message.MessageAndMetadata;
    import kafka.serializer.StringDecoder;
    import kafka.utils.VerifiableProperties;

    public class KafkaConsumer {
        private final ConsumerConnector consumer;

        private KafkaConsumer() {
            Properties props = new Properties();
            // ZooKeeper address
            props.put("zookeeper.connect", "localhost:2181");
            // group.id identifies the consumer group
            props.put("group.id", "vvvxyzv");
            // ZooKeeper session timeout
            props.put("zookeeper.session.timeout.ms", "5000");
            props.put("zookeeper.sync.time.ms", "10000");
            props.put("rebalance.max.retries", "10");
            props.put("rebalance.backoff.ms", "2000");
            props.put("auto.commit.interval.ms", "1000");
            // Start from the earliest offset when the group has no committed offset
            props.put("auto.offset.reset", "smallest");
            // Serializer class (decoding is done by the StringDecoder instances passed below)
            props.put("serializer.class", "kafka.serializer.StringEncoder");
            ConsumerConfig config = new ConsumerConfig(props);
            consumer = kafka.consumer.Consumer.createJavaConsumerConnector(config);
        }

        void consume() {
            String topic = "topic3";
            // Request a single stream (one consuming thread) for the topic
            Map<String, Integer> topicCountMap = new HashMap<String, Integer>();
            topicCountMap.put(topic, 1);
            StringDecoder keyDecoder = new StringDecoder(new VerifiableProperties());
            StringDecoder valueDecoder = new StringDecoder(new VerifiableProperties());
            Map<String, List<KafkaStream<String, String>>> consumerMap =
                    consumer.createMessageStreams(topicCountMap, keyDecoder, valueDecoder);
            KafkaStream<String, String> stream = consumerMap.get(topic).get(0);
            ConsumerIterator<String, String> it = stream.iterator();
            // hasNext() blocks until the next message arrives
            while (it.hasNext()) {
                MessageAndMetadata<String, String> mam = it.next();
                System.out.println(mam.key() + "---" + mam.message());
            }
        }

        public static void main(String[] args) {
            new KafkaConsumer().consume();
        }
    }

Caveats

Pay attention to the version of the Kafka API you use. With a mismatched or buggy version, the consumer fails with: kafka.common.ConsumerRebalanceFailedException

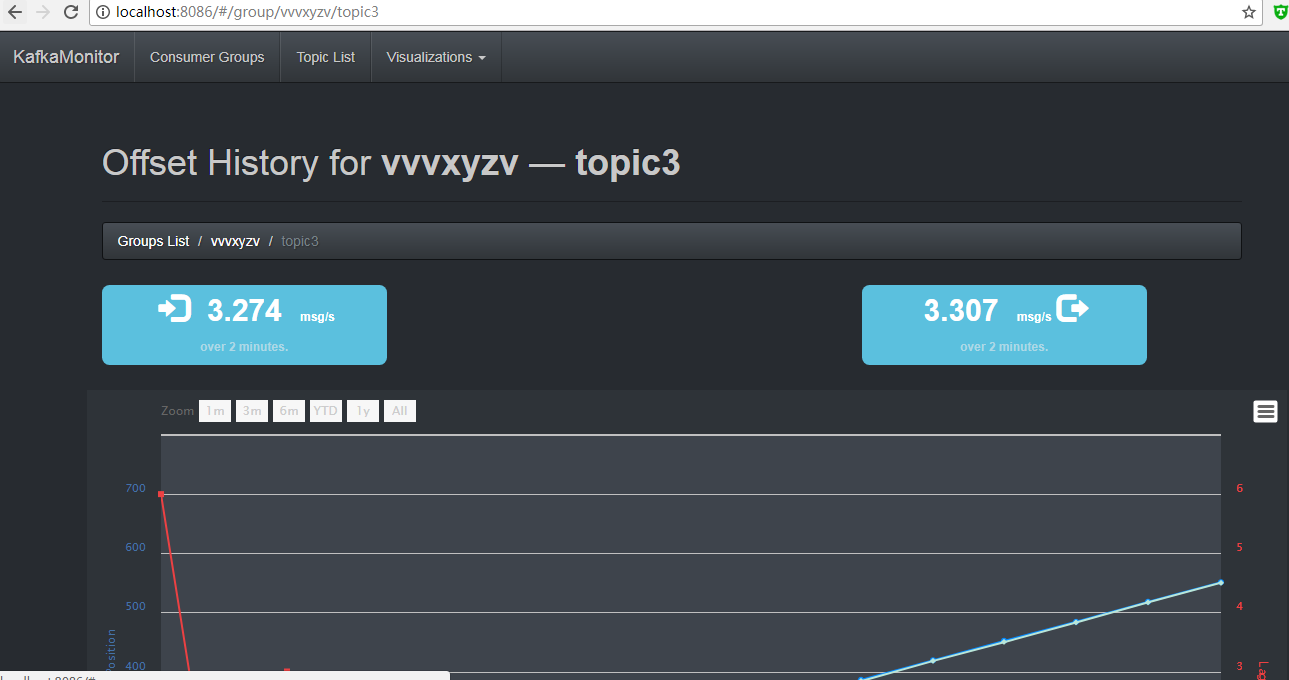

Monitoring

java -cp KafkaOffsetMonitor-assembly-0.2.0.jar com.quantifind.kafka.offsetapp.OffsetGetterWeb --zk localhost:2181 --port 8086 --refresh 10.seconds --retain 2.days