Oracle 12cR1 RAC Cluster Installation (Part 1): Environment Preparation

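Oracle 12cR1 RAC cluster installation series:

Oracle 12cR1 RAC Cluster Installation (Part 1): Environment Preparation

Oracle 12cR1 RAC Cluster Installation (Part 2): Installation Using the Graphical Interface

Oracle 12cR1 RAC Cluster Installation (Part 3): Silent Installation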

------------------------------------------------------------------------------------------------------------

Basic Environment

| Item | Value |
| --- | --- |
| Operating system version | RedHat 6.7 |
| Database version | 12.1.0.2 |
| Database name | testdb |
| Database instances | testdb1, testdb2 |

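(I) Server Hardware Requirements

| Item | Requirement |
| --- | --- |
| Network interfaces | At least 2 NICs per server. Public NIC: at least 1 Gbps. Private NIC: at least 1 Gbps, 10 Gbps recommended; it carries the internal traffic between cluster nodes. Note: the interface names must be identical on all nodes. For example, if node 1 uses eth0 as its public NIC, node 2 must also use eth0 as its public NIC. |
| Memory | Depends on whether GI (Grid Infrastructure) is installed. Single-node database only: at least 1 GB. With GI: at least 4 GB. |
| Temporary disk space | At least 1 GB of /tmp space |
| Local disk space | At least 8 GB for the Grid home; Oracle recommends 100 GB to leave room for later patching. At least 12 GB for the Grid base, which mainly holds the Oracle Clusterware and Oracle ASM log files. An additional 10 GB under the Grid base for TFA data. A further 6.4 GB if the Oracle database software is installed. Recommendation: if disk space is plentiful, give GI and Oracle 100 GB each. |
| Swap space | Swap space requirements are as follows: |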

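(II) Server IP Address Planning

| Server name | Public IP | Virtual IP (VIP) | SCAN IP | Private IP |
| --- | --- | --- | --- | --- |
| node1 | eth0: 192.168.10.11 | 192.168.10.13 | 192.168.10.10 | eth1: 10.10.10.11 |
| node2 | eth0: 192.168.10.12 | 192.168.10.14 | 192.168.10.10 (same) | eth1: 10.10.10.12 |

Notes:

1. Two IP subnets are used in total. The public, virtual, and SCAN addresses must be in the same subnet (192.168.10.0/24); the private addresses are in a separate subnet (10.10.10.0/24).

2. Host names must not contain an underscore ("_"); for example, host_node1 is invalid. A hyphen ("-") is allowed.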
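The host name rule in note 2 can be checked before any installer is run. The function below is only a sketch: `is_valid_rac_hostname` is a hypothetical helper name, and it additionally rejects names that start or end with a hyphen, which is an assumption based on general host name conventions rather than something stated above.

```shell
#!/bin/sh
# Hypothetical helper (not part of Oracle's tooling): succeeds only if the
# name consists of letters, digits and hyphens, and does not start or end
# with a hyphen. An underscore therefore makes the check fail.
is_valid_rac_hostname() {
    case "$1" in
        ""|-*|*-) return 1 ;;          # empty, or leading/trailing hyphen
        *[!A-Za-z0-9-]*) return 1 ;;   # any character outside the allowed set
        *) return 0 ;;
    esac
}
```

For example, `is_valid_rac_hostname host_node1 || echo "invalid host name"` prints the warning, while `node1` and `node1-vip` are accepted.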

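(III) Configure the Host Network

(1) Change the host name

Node 1 is used as the example. The change takes effect after the host is rebooted.

    [root@template ~]# vim /etc/sysconfig/network
    NETWORKING=yes
    HOSTNAME=node1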

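(2) Change the IP addresses

The public and private IP addresses must be configured on every server; the VIPs and the SCAN IP are brought online by Grid Infrastructure and must not be assigned to a NIC beforehand. The public IP of node 1 is used as the example here. The public NIC is eth0, and its configuration is edited as follows:

    # Go to the NIC configuration directory
    cd /etc/sysconfig/network-scripts/
    # Edit the eth0 configuration
    vim ifcfg-eth0
    DEVICE=eth0
    HWADDR=00:0c:29:f8:80:bb
    TYPE=Ethernet
    ONBOOT=yes
    IPADDR=192.168.10.11
    NETMASK=255.255.255.0

The other NICs are configured in the same way. Restart the network service after the changes:

    [root@node1 ~]# service network restart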

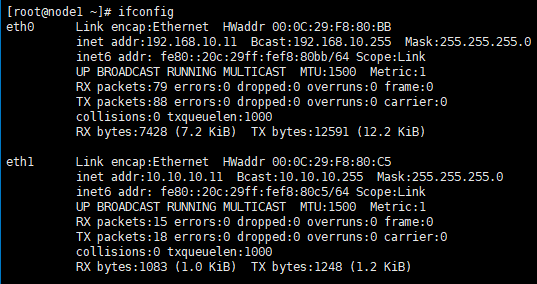

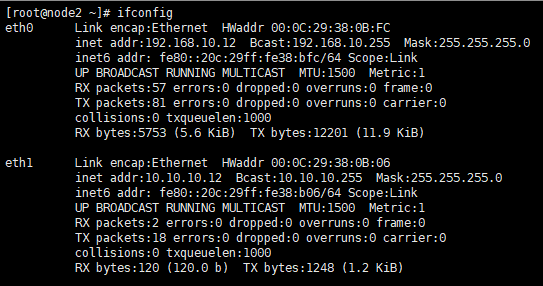

最终2台服务器的网卡配置信息如下图

node1:

node2:

(3) Edit /etc/hosts; make the same changes on both nodes

    [root@node1 ~]# vim /etc/hosts
    127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4
    ::1 localhost localhost.localdomain localhost6 localhost6.localdomain6
    192.168.10.11 node1
    192.168.10.12 node2
    192.168.10.13 node1-vip
    192.168.10.14 node2-vip
    192.168.10.10 node-scan
    10.10.10.11 node1-priv
    10.10.10.12 node2-priv
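Since both nodes need identical entries, a quick check that no name was forgotten can save a failed pre-check later. The following is a minimal sketch; `missing_host_entries` is a hypothetical helper, and it only looks at the file it is given, not at DNS.

```shell
#!/bin/sh
# Hypothetical helper: print every required name that has no entry in the
# given hosts file. Prints nothing when all names are present.
missing_host_entries() {
    hosts_file=$1
    shift
    for name in "$@"; do
        # Ignore comment lines, then look for the name in any column
        # after the address column.
        awk -v n="$name" '
            /^[ \t]*#/ { next }
            { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
            END { exit found ? 0 : 1 }
        ' "$hosts_file" || echo "$name"
    done
}
```

On either node it could be called as `missing_host_entries /etc/hosts node1 node2 node1-vip node2-vip node-scan node1-priv node2-priv`; empty output means every name from the file above is present.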

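(4) Disable the firewall

    # Stop it temporarily; the previous state returns after a reboot
    service iptables stop
    # Disable it permanently; takes effect at the next boot
    chkconfig iptables off

(5) Disable SELinux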

Change the parameter SELINUX=enforcing to SELINUX=disabled:

    [root@node1 ~]# vim /etc/selinux/config
    # This file controls the state of SELinux on the system.
    # SELINUX= can take one of these three values:
    #     enforcing - SELinux security policy is enforced.
    #     permissive - SELinux prints warnings instead of enforcing.
    #     disabled - No SELinux policy is loaded.
    SELINUX=disabled
    # SELINUXTYPE= can take one of these two values:
    #     targeted - Targeted processes are protected,
    #     mls - Multi Level Security protection.
    SELINUXTYPE=targeted

The change takes effect after the server is rebooted.
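Because the setting only applies after a reboot, it can help to confirm what the configuration file actually says on each node. The sketch below reads the value back; `selinux_config_mode` is a hypothetical helper name, not an existing command.

```shell
#!/bin/sh
# Hypothetical helper: print the value of the last uncommented SELINUX= line
# in the given file. SELINUXTYPE= lines and comment lines do not match.
selinux_config_mode() {
    sed -n 's/^[[:space:]]*SELINUX=\([a-z]*\).*$/\1/p' "$1" | tail -n 1
}
```

A call such as `selinux_config_mode /etc/selinux/config` should print `disabled` after the edit above; the running mode can be compared with the output of `getenforce` once the node has been rebooted.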

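(IV) Create Users and Groups, Create the Software Installation Directories, and Configure the User Environment Variables

(1) Create the oracle and grid users and the related groups

    /usr/sbin/groupadd -g 1000 oinstall
    /usr/sbin/groupadd -g 1020 asmadmin
    /usr/sbin/groupadd -g 1021 asmdba
    /usr/sbin/groupadd -g 1022 asmoper
    /usr/sbin/groupadd -g 1031 dba
    /usr/sbin/groupadd -g 1032 oper
    useradd -u 1100 -g oinstall -G asmadmin,asmdba,asmoper,oper,dba grid
    useradd -u 1101 -g oinstall -G dba,asmdba,oper oracle

(2) Create the GI and Oracle software installation directories and set their ownership and permissions

    mkdir -p /u01/app/12.1.0/grid
    mkdir -p /u01/app/grid
    mkdir /u01/app/oracle
    chown -R grid:oinstall /u01
    chown oracle:oinstall /u01/app/oracle
    chmod -R 775 /u01/

The software installation directory structure is as follows:

    /u01/app/12.1.0/grid    Grid home (ORACLE_HOME of the grid user)
    /u01/app/grid           Grid base (ORACLE_BASE of the grid user)
    /u01/app/oracle         Oracle base (ORACLE_BASE of the oracle user)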

(3) Configure the environment variables for grid

    [grid@node1 ~]$ vi .bash_profile
    # Append the following at the end of the file
    export TMP=/tmp
    export TMPDIR=$TMP
    export ORACLE_SID=+ASM1
    export ORACLE_BASE=/u01/app/grid
    export ORACLE_HOME=/u01/app/12.1.0/grid
    export PATH=/usr/sbin:$PATH
    export PATH=$ORACLE_HOME/bin:$PATH
    export LD_LIBRARY_PATH=$ORACLE_HOME/lib:/lib:/usr/lib
    export CLASSPATH=$ORACLE_HOME/JRE:$ORACLE_HOME/jlib:$ORACLE_HOME/rdbms/jlib
    umask 022

Run "source .bash_profile" to apply the environment variables.

Note: on node 2, set ORACLE_SID=+ASM2 instead.

(4) Configure the environment variables for oracle

    [oracle@node1 ~]$ vim .bash_profile
    # Append the following at the end of the file
    export TMP=/tmp
    export TMPDIR=$TMP
    export ORACLE_SID=testdb1
    export ORACLE_BASE=/u01/app/oracle
    export ORACLE_HOME=$ORACLE_BASE/product/12.1.0/db_1
    export TNS_ADMIN=$ORACLE_HOME/network/admin
    export PATH=/usr/sbin:$PATH
    export PATH=$ORACLE_HOME/bin:$PATH
    export LD_LIBRARY_PATH=$ORACLE_HOME/lib:/lib:/usr/lib
    export CLASSPATH=$ORACLE_HOME/JRE:$ORACLE_HOME/jlib:$ORACLE_HOME/rdbms/jlib
    umask 022

Run "source .bash_profile" to apply the environment variables.

Note: on node 2, set ORACLE_SID=testdb2 instead.
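The only per-node difference in the two profiles is the numeric suffix of ORACLE_SID. As an illustration, the suffix could be derived from the host name so that the same profile works on both nodes. This is a sketch under the assumption that host names end in the node number (node1, node2); `sid_for_node` is a hypothetical helper.

```shell
#!/bin/sh
# Hypothetical helper: build the per-node SID by appending the trailing
# digits of the host name (node1 -> 1, node2 -> 2) to a prefix such as
# "+ASM" or "testdb". Fails if the host name has no trailing digits.
sid_for_node() {
    prefix=$1
    host=$2
    # Keep only the digits at the end of the host name.
    num=$(printf '%s\n' "$host" | sed -n 's/^.*[^0-9]\([0-9][0-9]*\)$/\1/p')
    [ -n "$num" ] || return 1
    printf '%s%s\n' "$prefix" "$num"
}
```

In a profile this might be used as `export ORACLE_SID=$(sid_for_node testdb "$(hostname -s)")`, which yields testdb1 on node1 and testdb2 on node2.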

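(V) Configure Kernel Parameters and Resource Limits

(1) Configure the operating system kernel parameters

Append the following parameters to the end of /etc/sysctl.conf:

    kernel.msgmnb = 65536
    kernel.msgmax = 65536
    kernel.shmmax = 68719476736
    kernel.shmall = 4294967296
    fs.aio-max-nr = 1048576
    fs.file-max = 6815744
    kernel.shmall = 2097152
    kernel.shmmax = 2002012160
    kernel.shmmni = 4096
    kernel.sem = 250 32000 100 128
    net.ipv4.ip_local_port_range = 9000 65500
    net.core.rmem_default = 262144
    net.core.rmem_max = 4194304
    net.core.wmem_default = 262144
    net.core.wmem_max = 1048576
    net.ipv4.tcp_wmem = 262144 262144 262144
    net.ipv4.tcp_rmem = 4194304 4194304 4194304
    kernel.panic_on_oops = 1

Run sysctl -p to apply the settings. Note that kernel.shmmax and kernel.shmall each appear twice in the list above; sysctl -p applies the entries in file order, so the later values (2002012160 and 2097152) are the ones that take effect.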
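When parameters are appended to an existing /etc/sysctl.conf, it is easy to end up with the same key assigned twice, and only the last assignment wins. The sketch below lists such keys; `duplicate_sysctl_keys` is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper: print each parameter name that is assigned more than
# once in a sysctl-style file. Each duplicated name is printed once, at its
# second occurrence.
duplicate_sysctl_keys() {
    awk -F= '
        /^[ \t]*[#;]/ { next }               # skip comment lines
        {
            key = $1
            gsub(/[ \t]/, "", key)           # strip whitespace around the name
            if (key == "") next              # skip blank lines
            if (++count[key] == 2) print key
        }
    ' "$1"
}
```

Running `duplicate_sysctl_keys /etc/sysctl.conf` before `sysctl -p` shows which keys need a closer look.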

(2) Configure the resource limits for the oracle and grid users

Append the following to the end of /etc/security/limits.conf:

    grid soft nproc 2047
    grid hard nproc 16384
    grid soft nofile 1024
    grid hard nofile 65536
    oracle soft nproc 2047
    oracle hard nproc 16384
    oracle soft nofile 1024
    oracle hard nofile 65536

(3) Edit /etc/pam.d/login and append the following at the end:

    session required pam_limits.so

(VI) Install Software Packages

Use yum to install any missing packages. The required packages are:

    binutils-2.20.51.0.2-5.11.el6 (x86_64)
    compat-libcap1-1.10-1 (x86_64)
    compat-libstdc++-33-3.2.3-69.el6 (x86_64)
    compat-libstdc++-33-3.2.3-69.el6 (i686)
    gcc-4.4.4-13.el6 (x86_64)
    gcc-c++-4.4.4-13.el6 (x86_64)
    glibc-2.12-1.7.el6 (i686)
    glibc-2.12-1.7.el6 (x86_64)
    glibc-devel-2.12-1.7.el6 (x86_64)
    glibc-devel-2.12-1.7.el6 (i686)
    ksh
    libgcc-4.4.4-13.el6 (i686)
    libgcc-4.4.4-13.el6 (x86_64)
    libstdc++-4.4.4-13.el6 (x86_64)
    libstdc++-4.4.4-13.el6 (i686)
    libstdc++-devel-4.4.4-13.el6 (x86_64)
    libstdc++-devel-4.4.4-13.el6 (i686)
    libaio-0.3.107-10.el6 (x86_64)
    libaio-0.3.107-10.el6 (i686)
    libaio-devel-0.3.107-10.el6 (x86_64)
    libaio-devel-0.3.107-10.el6 (i686)
    libXext-1.1 (x86_64)
    libXext-1.1 (i686)
    libXtst-1.0.99.2 (x86_64)
    libXtst-1.0.99.2 (i686)
    libX11-1.3 (x86_64)
    libX11-1.3 (i686)
    libXau-1.0.5 (x86_64)
    libXau-1.0.5 (i686)
    libxcb-1.5 (x86_64)
    libxcb-1.5 (i686)
    libXi-1.3 (x86_64)
    libXi-1.3 (i686)
    make-3.81-19.el6
    sysstat-9.0.4-11.el6 (x86_64)

Here x86_64 denotes the 64-bit build of a package and i686 the 32-bit build; install the builds that match your system.

Install them with the following commands:

    yum install -y binutils*
    yum install -y compat-libcap1*
    yum install -y compat-libstdc++*
    yum install -y gcc*
    yum install -y gcc-c++*
    yum install -y glibc*
    yum install -y glibc-devel*
    yum install -y ksh
    yum install -y libgcc*
    yum install -y libstdc++*
    yum install -y libstdc++-devel*
    yum install -y libaio*
    yum install -y libaio-devel*
    yum install -y libXext*
    yum install -y libXtst*
    yum install -y libX11*
    yum install -y libXau*
    yum install -y libxcb*
    yum install -y libXi*
    yum install -y make*
    yum install -y sysstat*
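To see which packages are actually missing before or after running yum, the package names can be queried with rpm. This is a minimal sketch; `missing_packages` is a hypothetical helper, and it checks names only, not versions or architectures.

```shell
#!/bin/sh
# Hypothetical helper: print the names from the argument list that rpm does
# not report as installed. "rpm -q NAME" exits non-zero when NAME is not
# installed.
missing_packages() {
    for pkg in "$@"; do
        rpm -q "$pkg" >/dev/null 2>&1 || echo "$pkg"
    done
}

# Example call (left commented out so that sourcing this file changes nothing):
# missing_packages binutils compat-libcap1 compat-libstdc++-33 gcc gcc-c++ \
#     glibc glibc-devel ksh libgcc libstdc++ libstdc++-devel libaio \
#     libaio-devel libXext libXtst libX11 libXau libxcb libXi make sysstat
```

Empty output means every listed name is installed on that node; the check should be repeated on both nodes.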

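(VII) Configure Shared Disks

Oracle's size requirements for the disk group that stores the OCR are as follows:

| File types stored | Number of volumes (disks) | Volume size |
| --- | --- | --- |
| Voting files with external redundancy | 1 | At least 300 MB for each voting file volume |
| Oracle Cluster Registry (OCR) and Grid Infrastructure Management Repository with external redundancy | 1 | At least 5.9 GB for the OCR volume that contains the Grid Infrastructure Management Repository (5.2 GB + 300 MB voting files + 400 MB OCR); for clusters with more than four nodes, add 500 MB for each additional node. For example, a six-node cluster needs 6.9 GB. |
| Oracle Clusterware files (OCR and voting files) and Grid Infrastructure Management Repository with redundancy provided by Oracle software | 3 | At least 400 MB for each OCR volume. For example, a 6-node cluster needs 14.1 GB. |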
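The external-redundancy figure in the table above is simple arithmetic, and a small function makes it easy to recompute for other cluster sizes. This is a sketch only: `ocr_volume_mb_external` is a hypothetical name, and it treats 1 GB as 1000 MB because that is how the table's own totals add up (5200 + 300 + 400 = 5900).

```shell
#!/bin/sh
# Sketch of the external-redundancy rule from the table above:
# 5.2 GB repository + 300 MB voting files + 400 MB OCR, plus 500 MB for
# every node beyond the fourth. The result is printed in MB.
ocr_volume_mb_external() {
    nodes=$1
    size=$((5200 + 300 + 400))
    if [ "$nodes" -gt 4 ]; then
        size=$((size + (nodes - 4) * 500))
    fi
    echo "$size"
}
```

For the two-node cluster in this article `ocr_volume_mb_external 2` gives 5900, and for the six-node example it gives 6900, matching the 5.9 GB and 6.9 GB figures.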

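The disk layout for this installation is as follows:

| Disk group | Number of disks | Size per disk | Purpose |
| --- | --- | --- | --- |
| OCR | 3 | 10 GB | Stores the OCR and the GI Management Repository |
| DATA | 2 | 10 GB | Stores the database data |
| ARCH | 1 | 10 GB | Stores the archived logs |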

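(1) Methods for configuring the shared disks

There are two ways to configure the disks with udev. The first is to partition each disk with fdisk to obtain a /dev/sd*1 partition and then bind it to a raw device with udev. The second is to bind the devices by their WWID. Production systems usually use the second method.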

(2) Method 1: use raw devices directly

(2.1) Partition the disks; run on one node only

    # Partition on node 1, using /dev/sdb as the example:
    [root@node1 ~]# fdisk /dev/sdb
    The number of cylinders for this disk is set to 3824.
    There is nothing wrong with that, but this is larger than 1024,
    and could in certain setups cause problems with:
    1) software that runs at boot time (e.g., old versions of LILO)
    2) booting and partitioning software from other OSs
       (e.g., DOS FDISK, OS/2 FDISK)

    Command (m for help): n
    Command action
       e   extended
       p   primary partition (1-4)
    p
    Partition number (1-4): 1
    First cylinder (1-3824, default 1):
    Using default value 1
    Last cylinder or +size or +sizeM or +sizeK (1-3824, default 3824):

    Command (m for help): w
    The partition table has been altered!

    Calling ioctl() to re-read partition table.
    Syncing disks.

(2.2) Configure the raw devices on both nodes

    [root@node1 ~]# vi /etc/udev/rules.d/60-raw.rules
    # Append the following
    ACTION=="add", KERNEL=="sdb1", RUN+="/bin/raw /dev/raw/raw1 %N"
    ACTION=="add", KERNEL=="sdc1", RUN+="/bin/raw /dev/raw/raw2 %N"
    ACTION=="add", KERNEL=="sdd1", RUN+="/bin/raw /dev/raw/raw3 %N"
    ACTION=="add", KERNEL=="sde1", RUN+="/bin/raw /dev/raw/raw4 %N"
    ACTION=="add", KERNEL=="sdf1", RUN+="/bin/raw /dev/raw/raw5 %N"
    ACTION=="add", KERNEL=="sdg1", RUN+="/bin/raw /dev/raw/raw6 %N"
    KERNEL=="raw[1]", MODE="0660", OWNER="grid", GROUP="asmadmin"
    KERNEL=="raw[2]", MODE="0660", OWNER="grid", GROUP="asmadmin"
    KERNEL=="raw[3]", MODE="0660", OWNER="grid", GROUP="asmadmin"
    KERNEL=="raw[4]", MODE="0660", OWNER="grid", GROUP="asmadmin"
    KERNEL=="raw[5]", MODE="0660", OWNER="grid", GROUP="asmadmin"
    KERNEL=="raw[6]", MODE="0660", OWNER="grid", GROUP="asmadmin"

(2.3) Start the raw devices; run on both nodes

    [root@node1 ~]# start_udev

(2.4) Check the raw devices on both nodes; if a node does not show the raw device information below, reboot that node

    [root@node1 ~]# raw -qa
    /dev/raw/raw1: bound to major 8, minor 17
    /dev/raw/raw2: bound to major 8, minor 33
    /dev/raw/raw3: bound to major 8, minor 49
    /dev/raw/raw4: bound to major 8, minor 65
    /dev/raw/raw5: bound to major 8, minor 81
    /dev/raw/raw6: bound to major 8, minor 97

(3) Method 2: bind the devices by WWID

(3.1) Edit /etc/scsi_id.config on both nodes

    [root@node1 ~]# echo "options=--whitelisted --replace-whitespace" >> /etc/scsi_id.config

(3.2) Write the disk WWID information to 99-oracle-asmdevices.rules on both nodes

    [root@node1 ~]# for i in b c d e f g ;
    > do
    > echo "KERNEL==\"sd*\", BUS==\"scsi\", PROGRAM==\"/sbin/scsi_id --whitelisted --replace-whitespace --device=/dev/\$name\", RESULT==\"`/sbin/scsi_id --whitelisted --replace-whitespace --device=/dev/sd$i`\", NAME=\"asm-disk$i\", OWNER=\"grid\", GROUP=\"asmadmin\", MODE=\"0660\"" >> /etc/udev/rules.d/99-oracle-asmdevices.rules
    > done
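The heavily escaped echo in the loop above is easy to mistype. As an alternative sketch, the same rule text can be produced with printf and a single-quoted format string, which needs no backslash escapes. `asm_udev_rule` is a hypothetical helper, and the WWID is passed in by the caller.

```shell
#!/bin/sh
# Hypothetical helper: print one udev rule in the same form as the loop
# above, for a device letter (b, c, ...) and a WWID the caller has read.
asm_udev_rule() {
    letter=$1
    wwid=$2
    # The format string is single-quoted, so $name stays literal and is
    # expanded later by udev rather than by the shell.
    printf 'KERNEL=="sd*", BUS=="scsi", PROGRAM=="/sbin/scsi_id --whitelisted --replace-whitespace --device=/dev/$name", RESULT=="%s", NAME="asm-disk%s", OWNER="grid", GROUP="asmadmin", MODE="0660"\n' "$wwid" "$letter"
}

# Example call (left commented out so that sourcing this file changes nothing):
# for i in b c d e f g; do
#     asm_udev_rule "$i" "$(/sbin/scsi_id --whitelisted --replace-whitespace --device=/dev/sd$i)"
# done >> /etc/udev/rules.d/99-oracle-asmdevices.rules
```

Keeping the rule text in one function also makes it possible to preview the rules on screen before appending them to the rules file.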

(3.3) Check the 99-oracle-asmdevices.rules file on both nodes

    [root@node1 ~]# cd /etc/udev/rules.d/
    [root@node1 rules.d]# more 99-oracle-asmdevices.rules
    KERNEL=="sd*", BUS=="scsi", PROGRAM=="/sbin/scsi_id --whitelisted --replace-whitespace --device=/dev/$name", RESULT=="36000c293f718a0dcf1f7b410fb9fd1d9", NAME="asm-diskb", OWNER="grid", GROUP="asmadmin", MODE="0660"
    KERNEL=="sd*", BUS=="scsi", PROGRAM=="/sbin/scsi_id --whitelisted --replace-whitespace --device=/dev/$name", RESULT=="36000c296f46877bf6cff9febd7700fb9", NAME="asm-diskc", OWNER="grid", GROUP="asmadmin", MODE="0660"
    KERNEL=="sd*", BUS=="scsi", PROGRAM=="/sbin/scsi_id --whitelisted --replace-whitespace --device=/dev/$name", RESULT=="36000c2902c030ca8a0b0a4a32ab547c7", NAME="asm-diskd", OWNER="grid", GROUP="asmadmin", MODE="0660"
    KERNEL=="sd*", BUS=="scsi", PROGRAM=="/sbin/scsi_id --whitelisted --replace-whitespace --device=/dev/$name", RESULT=="36000c2982ad4757618bd0d06d54d04b8", NAME="asm-diske", OWNER="grid", GROUP="asmadmin", MODE="0660"
    KERNEL=="sd*", BUS=="scsi", PROGRAM=="/sbin/scsi_id --whitelisted --replace-whitespace --device=/dev/$name", RESULT=="36000c29872b79e70266a992e788836b6", NAME="asm-diskf", OWNER="grid", GROUP="asmadmin", MODE="0660"
    KERNEL=="sd*", BUS=="scsi", PROGRAM=="/sbin/scsi_id --whitelisted --replace-whitespace --device=/dev/$name", RESULT=="36000c29b1260d00b8faeb3786092143a", NAME="asm-diskg", OWNER="grid", GROUP="asmadmin", MODE="0660"

(3.4) Start udev; run on both nodes

    [root@node1 rules.d]# start_udev
    Starting udev: [ OK ]

(3.5) Confirm that the disks have been added

    [root@node1 rules.d]# cd /dev
    [root@node1 dev]# ls -l asm*
    brw-rw---- 1 grid asmadmin 8, 16 Aug 13 05:31 asm-diskb
    brw-rw---- 1 grid asmadmin 8, 32 Aug 13 05:31 asm-diskc
    brw-rw---- 1 grid asmadmin 8, 48 Aug 13 05:31 asm-diskd
    brw-rw---- 1 grid asmadmin 8, 64 Aug 13 05:31 asm-diske
    brw-rw---- 1 grid asmadmin 8, 80 Aug 13 05:31 asm-diskf
    brw-rw---- 1 grid asmadmin 8, 96 Aug 13 05:31 asm-diskg

(VIII) Configure User Equivalence

The Oracle installation media already include a tool for setting up SSH trust between the nodes for the grid and oracle users, and using it directly is very convenient.

(1) Configure user equivalence for grid

(1.1) Unzip the grid installation package on node 1

    [grid@node1 ~]$ ls
    linuxamd64_12102_grid_1of2.zip linuxamd64_12102_grid_2of2.zip
    [grid@node1 ~]$ unzip -q linuxamd64_12102_grid_1of2.zip
    [grid@node1 ~]$ unzip -q linuxamd64_12102_grid_2of2.zip
    [grid@node1 ~]$ ls
    grid linuxamd64_12102_grid_1of2.zip linuxamd64_12102_grid_2of2.zip

(1.2) Configure trust between the nodes

    [grid@node1 sshsetup]$ pwd
    /home/grid/grid/sshsetup
    [grid@node1 sshsetup]$ ls
    sshUserSetup.sh
    [grid@node1 sshsetup]$ ./sshUserSetup.sh -hosts "node1 node2" -user grid -advanced

The session log is as follows:

    The output of this script is also logged into /tmp/sshUserSetup_2019-08-13-06-12-30.log
    Hosts are node1 node2
    user is grid
    Platform:- Linux
    Checking if the remote hosts are reachable
    PING node1 (192.168.10.11) 56(84) bytes of data.
    64 bytes from node1 (192.168.10.11): icmp_seq=1 ttl=64 time=0.014 ms
    64 bytes from node1 (192.168.10.11): icmp_seq=2 ttl=64 time=0.043 ms
    64 bytes from node1 (192.168.10.11): icmp_seq=3 ttl=64 time=0.032 ms
    64 bytes from node1 (192.168.10.11): icmp_seq=4 ttl=64 time=0.040 ms
    64 bytes from node1 (192.168.10.11): icmp_seq=5 ttl=64 time=0.042 ms

    --- node1 ping statistics ---
    5 packets transmitted, 5 received, 0% packet loss, time 3999ms
    rtt min/avg/max/mdev = 0.014/0.034/0.043/0.011 ms
    PING node2 (192.168.10.12) 56(84) bytes of data.
    64 bytes from node2 (192.168.10.12): icmp_seq=1 ttl=64 time=3.05 ms
    64 bytes from node2 (192.168.10.12): icmp_seq=2 ttl=64 time=0.716 ms
    64 bytes from node2 (192.168.10.12): icmp_seq=3 ttl=64 time=0.807 ms
    64 bytes from node2 (192.168.10.12): icmp_seq=4 ttl=64 time=1.37 ms
    64 bytes from node2 (192.168.10.12): icmp_seq=5 ttl=64 time=0.704 ms

    --- node2 ping statistics ---
    5 packets transmitted, 5 received, 0% packet loss, time 4007ms
    rtt min/avg/max/mdev = 0.704/1.331/3.053/0.896 ms
    Remote host reachability check succeeded.
    The following hosts are reachable: node1 node2.
    The following hosts are not reachable: .
    All hosts are reachable. Proceeding further...
    firsthost node1
    numhosts 2
    The script will setup SSH connectivity from the host node1 to all
    the remote hosts. After the script is executed, the user can use SSH to run
    commands on the remote hosts or copy files between this host node1
    and the remote hosts without being prompted for passwords or confirmations.

    NOTE 1:
    As part of the setup procedure, this script will use ssh and scp to copy
    files between the local host and the remote hosts. Since the script does not
    store passwords, you may be prompted for the passwords during the execution of
    the script whenever ssh or scp is invoked.

    NOTE 2:
    AS PER SSH REQUIREMENTS, THIS SCRIPT WILL SECURE THE USER HOME DIRECTORY
    AND THE .ssh DIRECTORY BY REVOKING GROUP AND WORLD WRITE PRIVILEDGES TO THESE
    directories.

    Do you want to continue and let the script make the above mentioned changes (yes/no)?
    yes

    The user chose yes
    Please specify if you want to specify a passphrase for the private key this script will create for the local host. Passphrase is used to encrypt the private key and makes SSH much more secure. Type 'yes' or 'no' and then press enter. In case you press 'yes', you would need to enter the passphrase whenever the script executes ssh or scp.
    The estimated number of times the user would be prompted for a passphrase is 4. In addition, if the private-public files are also newly created, the user would have to specify the passphrase on one additional occasion.
    Enter 'yes' or 'no'.
    yes

    The user chose yes
    Creating .ssh directory on local host, if not present already
    Creating authorized_keys file on local host
    Changing permissions on authorized_keys to 644 on local host
    Creating known_hosts file on local host
    Changing permissions on known_hosts to 644 on local host
    Creating config file on local host
    If a config file exists already at /home/grid/.ssh/config, it would be backed up to /home/grid/.ssh/config.backup.
    Removing old private/public keys on local host
    Running SSH keygen on local host
    Enter passphrase (empty for no passphrase): (note: press Enter)
    Enter same passphrase again: (note: press Enter)
    Generating public/private rsa key pair.
    Your identification has been saved in /home/grid/.ssh/id_rsa.
    Your public key has been saved in /home/grid/.ssh/id_rsa.pub.
    The key fingerprint is:
    a0:48:eb:ab:7d:39:0d:cf:d2:29:49:cd:f0:a0:85:9d grid@node1
    The key's randomart image is:
    +--[ RSA 1024]----+
    | |
    | |
    | .o .. |
    | ..oE. . |
    | oo.* S |
    | .. o + |
    | .. O . |
    | . .B * |
    |..o. + |
    +-----------------+
    Creating .ssh directory and setting permissions on remote host node1
    THE SCRIPT WOULD ALSO BE REVOKING WRITE PERMISSIONS FOR group AND others ON THE HOME DIRECTORY FOR grid. THIS IS AN SSH REQUIREMENT.
    The script would create ~grid/.ssh/config file on remote host node1. If a config file exists already at ~grid/.ssh/config, it would be backed up to ~grid/.ssh/config.backup.
    The user may be prompted for a password here since the script would be running SSH on host node1.
    Warning: Permanently added 'node1,192.168.10.11' (RSA) to the list of known hosts.
    grid@node1's password: (note: enter the grid user's password on node 1)
    Done with creating .ssh directory and setting permissions on remote host node1.
    Creating .ssh directory and setting permissions on remote host node2
    THE SCRIPT WOULD ALSO BE REVOKING WRITE PERMISSIONS FOR group AND others ON THE HOME DIRECTORY FOR grid. THIS IS AN SSH REQUIREMENT.
    The script would create ~grid/.ssh/config file on remote host node2. If a config file exists already at ~grid/.ssh/config, it would be backed up to ~grid/.ssh/config.backup.
    The user may be prompted for a password here since the script would be running SSH on host node2.
    Warning: Permanently added 'node2,192.168.10.12' (RSA) to the list of known hosts.
    grid@node2's password: (note: enter the grid user's password on node 2)
    Done with creating .ssh directory and setting permissions on remote host node2.
    Copying local host public key to the remote host node1
    The user may be prompted for a password or passphrase here since the script would be using SCP for host node1.
    grid@node1's password: (note: enter the grid user's password on node 1)
    Done copying local host public key to the remote host node1
    Copying local host public key to the remote host node2
    The user may be prompted for a password or passphrase here since the script would be using SCP for host node2.
    grid@node2's password: (note: enter the grid user's password on node 2)
    Done copying local host public key to the remote host node2
    Creating keys on remote host node1 if they do not exist already. This is required to setup SSH on host node1.

    Creating keys on remote host node2 if they do not exist already. This is required to setup SSH on host node2.
    Generating public/private rsa key pair.
    Your identification has been saved in .ssh/id_rsa.
    Your public key has been saved in .ssh/id_rsa.pub.
    The key fingerprint is:
    3e:55:4b:9f:63:a3:6c:1f:4c:ca:aa:d2:d1:93:97:46 grid@node2
    The key's randomart image is:
    +--[ RSA 1024]----+
    | |
    | |
    | o |
    | oEo . |
    | S..o..B |
    | ...+o+* o |
    | .o. +* o |
    | . .. o . . |
    | .... . |
    +-----------------+
    Updating authorized_keys file on remote host node1
    Updating known_hosts file on remote host node1
    The script will run SSH on the remote machine node1. The user may be prompted for a passphrase here in case the private key has been encrypted with a passphrase.
    Updating authorized_keys file on remote host node2
    Updating known_hosts file on remote host node2
    The script will run SSH on the remote machine node2. The user may be prompted for a passphrase here in case the private key has been encrypted with a passphrase.
    cat: /home/grid/.ssh/known_hosts.tmp: No such file or directory
    cat: /home/grid/.ssh/authorized_keys.tmp: No such file or directory
    SSH setup is complete.

    ------------------------------------------------------------------------
    Verifying SSH setup
    ===================
    The script will now run the date command on the remote nodes using ssh
    to verify if ssh is setup correctly. IF THE SETUP IS CORRECTLY SETUP,
    THERE SHOULD BE NO OUTPUT OTHER THAN THE DATE AND SSH SHOULD NOT ASK FOR
    PASSWORDS. If you see any output other than date or are prompted for the
    password, ssh is not setup correctly and you will need to resolve the
    issue and set up ssh again.
    The possible causes for failure could be:
    1. The server settings in /etc/ssh/sshd_config file do not allow ssh
    for user grid.
    2. The server may have disabled public key based authentication.
    3. The client public key on the server may be outdated.
    4. ~grid or ~grid/.ssh on the remote host may not be owned by grid.
    5. User may not have passed -shared option for shared remote users or
    may be passing the -shared option for non-shared remote users.
    6. If there is output in addition to the date, but no password is asked,
    it may be a security alert shown as part of company policy. Append the
    additional text to the <OMS HOME>/sysman/prov/resources/ignoreMessages.txt file.
    ------------------------------------------------------------------------
    --node1:--
    Running /usr/bin/ssh -x -l grid node1 date to verify SSH connectivity has been setup from local host to node1.
    IF YOU SEE ANY OTHER OUTPUT BESIDES THE OUTPUT OF THE DATE COMMAND OR IF YOU ARE PROMPTED FOR A PASSWORD HERE, IT MEANS SSH SETUP HAS NOT BEEN SUCCESSFUL. Please note that being prompted for a passphrase may be OK but being prompted for a password is ERROR.
    The script will run SSH on the remote machine node1. The user may be prompted for a passphrase here in case the private key has been encrypted with a passphrase.
    Tue Aug 13 06:12:55 HKT 2019
    ------------------------------------------------------------------------
    --node2:--
    Running /usr/bin/ssh -x -l grid node2 date to verify SSH connectivity has been setup from local host to node2.
    IF YOU SEE ANY OTHER OUTPUT BESIDES THE OUTPUT OF THE DATE COMMAND OR IF YOU ARE PROMPTED FOR A PASSWORD HERE, IT MEANS SSH SETUP HAS NOT BEEN SUCCESSFUL. Please note that being prompted for a passphrase may be OK but being prompted for a password is ERROR.
    The script will run SSH on the remote machine node2. The user may be prompted for a passphrase here in case the private key has been encrypted with a passphrase.
    Tue Aug 13 06:12:58 HKT 2019
    ------------------------------------------------------------------------
    ------------------------------------------------------------------------
    Verifying SSH connectivity has been setup from node1 to node1
    IF YOU SEE ANY OTHER OUTPUT BESIDES THE OUTPUT OF THE DATE COMMAND OR IF YOU ARE PROMPTED FOR A PASSWORD HERE, IT MEANS SSH SETUP HAS NOT BEEN SUCCESSFUL.
    Tue Aug 13 06:12:55 HKT 2019
    ------------------------------------------------------------------------
    ------------------------------------------------------------------------
    Verifying SSH connectivity has been setup from node1 to node2
    IF YOU SEE ANY OTHER OUTPUT BESIDES THE OUTPUT OF THE DATE COMMAND OR IF YOU ARE PROMPTED FOR A PASSWORD HERE, IT MEANS SSH SETUP HAS NOT BEEN SUCCESSFUL.
    Tue Aug 13 06:12:59 HKT 2019
    ------------------------------------------------------------------------
    -Verification from complete-
    SSH verification complete.

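(2) Configure user equivalence for oracle

SSH trust for the oracle user is set up in the same way as for grid; the sshUserSetup.sh tool is located in the Oracle database installation package.

    [oracle@node1 sshsetup]$ ./sshUserSetup.sh -hosts "node1 node2" -user oracle -advanced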
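After the script finishes, equivalence can be re-checked at any time by running a harmless command on each node without allowing a password prompt. The function below is a sketch of that check, in the spirit of the date test the script itself performs; `check_ssh_equivalence` is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper: run "date" on each host over SSH and report the hosts
# where that fails. Returns non-zero if any host failed.
check_ssh_equivalence() {
    rc=0
    for host in "$@"; do
        # BatchMode=yes makes ssh fail instead of asking for a password.
        if ssh -o BatchMode=yes -o ConnectTimeout=5 "$host" date >/dev/null 2>&1; then
            echo "$host: ok"
        else
            echo "$host: FAILED"
            rc=1
        fi
    done
    return "$rc"
}
```

It would be run as each of the grid and oracle users, on each node, for example `check_ssh_equivalence node1 node2`.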

(IX) Pre-Installation Checks

(1) Install the cvuqdisk package; run on both nodes

    [root@node1 grid]# cd grid/rpm/
    [root@node1 rpm]# ls
    cvuqdisk-1.0.9-1.rpm
    [root@node1 rpm]# rpm -ivh cvuqdisk-1.0.9-1.rpm
    Preparing... ########################################### [100%]
    Using default group oinstall to install package
    1:cvuqdisk ########################################### [100%]

Copy the cvuqdisk package from node 1 to node 2 with scp and install it there in the same way.

(2) Run the pre-check; running it on node 1 is sufficient

    [root@node1 grid]# su - grid
    [grid@node1 ~]$ cd grid/
    [grid@node1 grid]$ ls
    install response rpm runcluvfy.sh runInstaller sshsetup stage welcome.html
    [grid@node1 grid]$ ./runcluvfy.sh stage -pre crsinst -n node1,node2 -fixup -verbose>check.log
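The verbose output redirected to check.log is long, so it can be convenient to pull out only the summary lines that report a failure. The following is a minimal sketch that relies on the "Result: ... failed" wording seen in this log; `failed_cluvfy_results` is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper: print the "Result:" lines of a cluvfy log that report
# a failure, and return non-zero if there is at least one such line.
failed_cluvfy_results() {
    if grep -i '^[[:space:]]*Result:.*failed' "$1"; then
        return 1
    fi
    return 0
}
```

Calling `failed_cluvfy_results check.log` prints nothing and returns zero when every summary line passed.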

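Make sure every check passes. The results are shown below; in this test environment the Total memory check fails because each node has 3.729 GB of RAM against the required 4 GB.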

    Performing pre-checks for cluster services setup

    Checking node reachability...

    Check: Node reachability from node "node1"
    Destination Node Reachable?
    ------------------------------------ ------------------------
    node1 yes
    node2 yes
    Result: Node reachability check passed from node "node1"


    Checking user equivalence...

    Check: User equivalence for user "grid"
    Node Name Status
    ------------------------------------ ------------------------
    node2 passed
    node1 passed
    Result: User equivalence check passed for user "grid"

    Checking node connectivity...

    Checking hosts config file...
    Node Name Status
    ------------------------------------ ------------------------
    node1 passed
    node2 passed

    Verification of the hosts config file successful


    Interface information for node "node1"
    Name IP Address Subnet Gateway Def. Gateway HW Address MTU
    ------ --------------- --------------- --------------- --------------- ----------------- ------
    eth0 192.168.10.11 192.168.10.0 0.0.0.0 192.168.0.1 00:0C:29:F8:80:BB 1500
    eth1 10.10.10.11 10.10.10.0 0.0.0.0 192.168.0.1 00:0C:29:F8:80:C5 1500
    eth2 192.168.0.109 192.168.0.0 0.0.0.0 192.168.0.1 00:0C:29:F8:80:CF 1500


    Interface information for node "node2"
    Name IP Address Subnet Gateway Def. Gateway HW Address MTU
    ------ --------------- --------------- --------------- --------------- ----------------- ------
    eth0 192.168.10.12 192.168.10.0 0.0.0.0 192.168.0.1 00:0C:29:38:0B:FC 1500
    eth1 10.10.10.12 10.10.10.0 0.0.0.0 192.168.0.1 00:0C:29:38:0B:06 1500
    eth2 192.168.0.108 192.168.0.0 0.0.0.0 192.168.0.1 00:0C:29:38:0B:10 1500


    Check: Node connectivity of subnet "192.168.10.0"
    Source Destination Connected?
    ------------------------------ ------------------------------ ----------------
    node1[192.168.10.11] node2[192.168.10.12] yes
    Result: Node connectivity passed for subnet "192.168.10.0" with node(s) node1,node2


    Check: TCP connectivity of subnet "192.168.10.0"
    Source Destination Connected?
    ------------------------------ ------------------------------ ----------------
    node1 : 192.168.10.11 node1 : 192.168.10.11 passed
    node2 : 192.168.10.12 node1 : 192.168.10.11 passed
    node1 : 192.168.10.11 node2 : 192.168.10.12 passed
    node2 : 192.168.10.12 node2 : 192.168.10.12 passed
    Result: TCP connectivity check passed for subnet "192.168.10.0"


    Check: Node connectivity of subnet "10.10.10.0"
    Source Destination Connected?
    ------------------------------ ------------------------------ ----------------
    node1[10.10.10.11] node2[10.10.10.12] yes
    Result: Node connectivity passed for subnet "10.10.10.0" with node(s) node1,node2


    Check: TCP connectivity of subnet "10.10.10.0"
    Source Destination Connected?
    ------------------------------ ------------------------------ ----------------
    node1 : 10.10.10.11 node1 : 10.10.10.11 passed
    node2 : 10.10.10.12 node1 : 10.10.10.11 passed
    node1 : 10.10.10.11 node2 : 10.10.10.12 passed
    node2 : 10.10.10.12 node2 : 10.10.10.12 passed
    Result: TCP connectivity check passed for subnet "10.10.10.0"


    Check: Node connectivity of subnet "192.168.0.0"
    Source Destination Connected?
    ------------------------------ ------------------------------ ----------------
    node1[192.168.0.109] node2[192.168.0.108] yes
    Result: Node connectivity passed for subnet "192.168.0.0" with node(s) node1,node2


    Check: TCP connectivity of subnet "192.168.0.0"
    Source Destination Connected?
    ------------------------------ ------------------------------ ----------------
    node1 : 192.168.0.109 node1 : 192.168.0.109 passed
    node2 : 192.168.0.108 node1 : 192.168.0.109 passed
    node1 : 192.168.0.109 node2 : 192.168.0.108 passed
    node2 : 192.168.0.108 node2 : 192.168.0.108 passed
    Result: TCP connectivity check passed for subnet "192.168.0.0"


    Interfaces found on subnet "192.168.0.0" that are likely candidates for VIP are:
    node1 eth2:192.168.0.109
    node2 eth2:192.168.0.108

    Interfaces found on subnet "192.168.10.0" that are likely candidates for a private interconnect are:
    node1 eth0:192.168.10.11
    node2 eth0:192.168.10.12

    Interfaces found on subnet "10.10.10.0" that are likely candidates for a private interconnect are:
    node1 eth1:10.10.10.11
    node2 eth1:10.10.10.12
    Checking subnet mask consistency...
    Subnet mask consistency check passed for subnet "192.168.10.0".
    Subnet mask consistency check passed for subnet "10.10.10.0".
    Subnet mask consistency check passed for subnet "192.168.0.0".
    Subnet mask consistency check passed.

    Result: Node connectivity check passed

    Checking multicast communication...

    Checking subnet "192.168.10.0" for multicast communication with multicast group "224.0.0.251"...
    Check of subnet "192.168.10.0" for multicast communication with multicast group "224.0.0.251" passed.

    Check of multicast communication passed.

    Check: Total memory
    Node Name Available Required Status
    ------------ ------------------------ ------------------------ ----------
    node2 3.729GB (3910180.0KB) 4GB (4194304.0KB) failed
    node1 3.729GB (3910180.0KB) 4GB (4194304.0KB) failed
    Result: Total memory check failed

    Check: Available memory
    Node Name Available Required Status
    ------------ ------------------------ ------------------------ ----------
    node2 3.4397GB (3606804.0KB) 50MB (51200.0KB) passed
    node1 3.1282GB (3280116.0KB) 50MB (51200.0KB) passed
    Result: Available memory check passed

    Check: Swap space
    Node Name Available Required Status
    ------------ ------------------------ ------------------------ ----------
    node2 3.8594GB (4046844.0KB) 3.729GB (3910180.0KB) passed
    node1 3.8594GB (4046844.0KB) 3.729GB (3910180.0KB) passed
    Result: Swap space check passed

    Check: Free disk space for "node2:/usr,node2:/var,node2:/etc,node2:/sbin"
    Path Node Name Mount point Available Required Status
    ---------------- ------------ ------------ ------------ ------------ ------------
    /usr node2 / 44.1611GB 65MB passed
    /var node2 / 44.1611GB 65MB passed
    /etc node2 / 44.1611GB 65MB passed
    /sbin node2 / 44.1611GB 65MB passed
    Result: Free disk space check passed for "node2:/usr,node2:/var,node2:/etc,node2:/sbin"

    Check: Free disk space for "node1:/usr,node1:/var,node1:/etc,node1:/sbin"
    Path Node Name Mount point Available Required Status
    ---------------- ------------ ------------ ------------ ------------ ------------
    /usr node1 / 43.4079GB 65MB passed
    /var node1 / 43.4079GB 65MB passed
    /etc node1 / 43.4079GB 65MB passed
    /sbin node1 / 43.4079GB 65MB passed
    Result: Free disk space check passed for "node1:/usr,node1:/var,node1:/etc,node1:/sbin"

    Check: Free disk space for "node2:/tmp"
    Path Node Name Mount point Available Required Status
    ---------------- ------------ ------------ ------------ ------------ ------------
    /tmp node2 /tmp 44.1611GB 1GB passed
    Result: Free disk space check passed for "node2:/tmp"

    Check: Free disk space for "node1:/tmp"
    Path Node Name Mount point Available Required Status
    ---------------- ------------ ------------ ------------ ------------ ------------
    /tmp node1 /tmp 43.4062GB 1GB passed
    Result: Free disk space check passed for "node1:/tmp"

    Check: User existence for "grid"
    Node Name Status Comment
    ------------ ------------------------ ------------------------
    node2 passed exists(1100)
    node1 passed exists(1100)

    Checking for multiple users with UID value 1100
    Result: Check for multiple users with UID value 1100 passed
    Result: User existence check passed for "grid"

    Check: Group existence for "oinstall"
    Node Name Status Comment
    ------------ ------------------------ ------------------------
    node2 passed exists
    node1 passed exists
    Result: Group existence check passed for "oinstall"

    Check: Group existence for "dba"
    Node Name Status Comment
    ------------ ------------------------ ------------------------
    node2 passed exists
    node1 passed exists
    Result: Group existence check passed for "dba"

    Check: Membership of user "grid" in group "oinstall" [as Primary]
    Node Name User Exists Group Exists User in Group Primary Status
    ---------------- ------------ ------------ ------------ ------------ ------------
    node2 yes yes yes yes passed
    node1 yes yes yes yes passed
    Result: Membership check for user "grid" in group "oinstall" [as Primary] passed

    Check: Membership of user "grid" in group "dba"
    Node Name User Exists Group Exists User in Group Status
    ---------------- ------------ ------------ ------------ ----------------
    node2 yes yes yes passed
    node1 yes yes yes passed
    Result: Membership check for user "grid" in group "dba" passed

    Check: Run level
    Node Name run level Required Status
    ------------ ------------------------ ------------------------ ----------
    node2 5 3,5 passed
    node1 5 3,5 passed
    Result: Run level check passed

    Check: Hard limits for "maximum open file descriptors"
    Node Name Type Available Required Status
    ---------------- ------------ ------------ ------------ ----------------
    node2 hard 65536 65536 passed
    node1 hard 65536 65536 passed
    Result: Hard limits check passed for "maximum open file descriptors"

    Check: Soft limits for "maximum open file descriptors"
    Node Name Type Available Required Status
    ---------------- ------------ ------------ ------------ ----------------
    node2 soft 1024 1024 passed
    node1 soft 1024 1024 passed
    Result: Soft limits check passed for "maximum open file descriptors"

    Check: Hard limits for "maximum user processes"
    Node Name Type Available Required Status
    ---------------- ------------ ------------ ------------ ----------------
    node2 hard 16384 16384 passed
    node1 hard 16384 16384 passed
    Result: Hard limits check passed for "maximum user processes"

    Check: Soft limits for "maximum user processes"
    Node Name Type Available Required Status
    ---------------- ------------ ------------ ------------ ----------------
    node2 soft 2047 2047 passed
    node1 soft 2047 2047 passed
    Result: Soft limits check passed for "maximum user processes"

    Check: System architecture
    Node Name Available Required Status
    ------------ ------------------------ ------------------------ ----------
    node2 x86_64 x86_64 passed
    node1 x86_64 x86_64 passed
    Result: System architecture check passed

    Check: Kernel version
    Node Name Available Required Status
    ------------ ------------------------ ------------------------ ----------
    node2 2.6.32-573.el6.x86_64 2.6.32 passed
    node1 2.6.32-573.el6.x86_64 2.6.32 passed
    Result: Kernel version check passed

    Check: Kernel parameter for "semmsl"
    Node Name Current Configured Required Status Comment
    ---------------- ------------ ------------ ------------ ------------ ------------
    node1 250 250 250 passed
    node2 250 250 250 passed
    Result: Kernel parameter check passed for "semmsl"

    Check: Kernel parameter for "semmns"
    Node Name Current Configured Required Status Comment
    ---------------- ------------ ------------ ------------ ------------ ------------
    node1 32000 32000 32000 passed
    node2 32000 32000 32000 passed
    Result: Kernel parameter check passed for "semmns"

    Check: Kernel parameter for "semopm"
    Node Name Current Configured Required Status Comment
    ---------------- ------------ ------------ ------------ ------------ ------------
    node1 100 100 100 passed
    node2 100 100 100 passed
    Result: Kernel parameter check passed for "semopm"

    Check: Kernel parameter for "semmni"
    Node Name Current Configured Required Status Comment
    ---------------- ------------ ------------ ------------ ------------ ------------
    node1 128 128 128 passed
    node2 128 128 128 passed
    Result: Kernel parameter check passed for "semmni"

    Check: Kernel parameter for "shmmax"
    Node Name Current Configured Required Status Comment
    ---------------- ------------ ------------ ------------ ------------ ------------
    node1 2002012160 2002012160 2002012160 passed
    node2 2002012160 2002012160 2002012160 passed
    Result: Kernel parameter check passed for "shmmax"

    Check: Kernel parameter for "shmmni"
    Node Name Current Configured Required Status Comment
    ---------------- ------------ ------------ ------------ ------------ ------------
    node1 4096 4096 4096 passed
    node2 4096 4096 4096 passed
    Result: Kernel parameter check passed for "shmmni"

    Check: Kernel parameter for "shmall"
    Node Name Current Configured Required Status Comment
---------------- ------------ ------------ ------------ ------------ ------------ 309 node1 2097152 2097152 391018 passed 310 node2 2097152 2097152 391018 passed 311 Result: Kernel parameter check passed for "shmall" 312 313 Check: Kernel parameter for "file-max" 314 Node Name Current Configured Required Status Comment 315 ---------------- ------------ ------------ ------------ ------------ ------------ 316 node1 6815744 6815744 6815744 passed 317 node2 6815744 6815744 6815744 passed 318 Result: Kernel parameter check passed for "file-max" 319 320 Check: Kernel parameter for "ip_local_port_range" 321 Node Name Current Configured Required Status Comment 322 ---------------- ------------ ------------ ------------ ------------ ------------ 323 node1 between 9000 & 65500 between 9000 & 65500 between 9000 & 65535 passed 324 node2 between 9000 & 65500 between 9000 & 65500 between 9000 & 65535 passed 325 Result: Kernel parameter check passed for "ip_local_port_range" 326 327 Check: Kernel parameter for "rmem_default" 328 Node Name Current Configured Required Status Comment 329 ---------------- ------------ ------------ ------------ ------------ ------------ 330 node1 262144 262144 262144 passed 331 node2 262144 262144 262144 passed 332 Result: Kernel parameter check passed for "rmem_default" 333 334 Check: Kernel parameter for "rmem_max" 335 Node Name Current Configured Required Status Comment 336 ---------------- ------------ ------------ ------------ ------------ ------------ 337 node1 4194304 4194304 4194304 passed 338 node2 4194304 4194304 4194304 passed 339 Result: Kernel parameter check passed for "rmem_max" 340 341 Check: Kernel parameter for "wmem_default" 342 Node Name Current Configured Required Status Comment 343 ---------------- ------------ ------------ ------------ ------------ ------------ 344 node1 262144 262144 262144 passed 345 node2 262144 262144 262144 passed 346 Result: Kernel parameter check passed for "wmem_default" 347 348 Check: Kernel parameter 
for "wmem_max" 349 Node Name Current Configured Required Status Comment 350 ---------------- ------------ ------------ ------------ ------------ ------------ 351 node1 1048586 1048586 1048576 passed 352 node2 1048586 1048586 1048576 passed 353 Result: Kernel parameter check passed for "wmem_max" 354 355 Check: Kernel parameter for "aio-max-nr" 356 Node Name Current Configured Required Status Comment 357 ---------------- ------------ ------------ ------------ ------------ ------------ 358 node1 1048576 1048576 1048576 passed 359 node2 1048576 1048576 1048576 passed 360 Result: Kernel parameter check passed for "aio-max-nr" 361 362 Check: Kernel parameter for "panic_on_oops" 363 Node Name Current Configured Required Status Comment 364 ---------------- ------------ ------------ ------------ ------------ ------------ 365 node1 1 1 1 passed 366 node2 1 1 1 passed 367 Result: Kernel parameter check passed for "panic_on_oops" 368 369 Check: Package existence for "binutils" 370 Node Name Available Required Status 371 ------------ ------------------------ ------------------------ ---------- 372 node2 binutils-2.20.51.0.2-5.43.el6 binutils-2.20.51.0.2 passed 373 node1 binutils-2.20.51.0.2-5.43.el6 binutils-2.20.51.0.2 passed 374 Result: Package existence check passed for "binutils" 375 376 Check: Package existence for "compat-libcap1" 377 Node Name Available Required Status 378 ------------ ------------------------ ------------------------ ---------- 379 node2 compat-libcap1-1.10-1 compat-libcap1-1.10 passed 380 node1 compat-libcap1-1.10-1 compat-libcap1-1.10 passed 381 Result: Package existence check passed for "compat-libcap1" 382 383 Check: Package existence for "compat-libstdc++-33(x86_64)" 384 Node Name Available Required Status 385 ------------ ------------------------ ------------------------ ---------- 386 node2 compat-libstdc++-33(x86_64)-3.2.3-69.el6 compat-libstdc++-33(x86_64)-3.2.3 passed 387 node1 compat-libstdc++-33(x86_64)-3.2.3-69.el6 
compat-libstdc++-33(x86_64)-3.2.3 passed 388 Result: Package existence check passed for "compat-libstdc++-33(x86_64)" 389 390 Check: Package existence for "libgcc(x86_64)" 391 Node Name Available Required Status 392 ------------ ------------------------ ------------------------ ---------- 393 node2 libgcc(x86_64)-4.4.7-23.el6 libgcc(x86_64)-4.4.4 passed 394 node1 libgcc(x86_64)-4.4.7-23.el6 libgcc(x86_64)-4.4.4 passed 395 Result: Package existence check passed for "libgcc(x86_64)" 396 397 Check: Package existence for "libstdc++(x86_64)" 398 Node Name Available Required Status 399 ------------ ------------------------ ------------------------ ---------- 400 node2 libstdc++(x86_64)-4.4.7-23.el6 libstdc++(x86_64)-4.4.4 passed 401 node1 libstdc++(x86_64)-4.4.7-23.el6 libstdc++(x86_64)-4.4.4 passed 402 Result: Package existence check passed for "libstdc++(x86_64)" 403 404 Check: Package existence for "libstdc++-devel(x86_64)" 405 Node Name Available Required Status 406 ------------ ------------------------ ------------------------ ---------- 407 node2 libstdc++-devel(x86_64)-4.4.7-23.el6 libstdc++-devel(x86_64)-4.4.4 passed 408 node1 libstdc++-devel(x86_64)-4.4.7-23.el6 libstdc++-devel(x86_64)-4.4.4 passed 409 Result: Package existence check passed for "libstdc++-devel(x86_64)" 410 411 Check: Package existence for "sysstat" 412 Node Name Available Required Status 413 ------------ ------------------------ ------------------------ ---------- 414 node2 sysstat-9.0.4-27.el6 sysstat-9.0.4 passed 415 node1 sysstat-9.0.4-27.el6 sysstat-9.0.4 passed 416 Result: Package existence check passed for "sysstat" 417 418 Check: Package existence for "gcc" 419 Node Name Available Required Status 420 ------------ ------------------------ ------------------------ ---------- 421 node2 gcc-4.4.7-23.el6 gcc-4.4.4 passed 422 node1 gcc-4.4.7-23.el6 gcc-4.4.4 passed 423 Result: Package existence check passed for "gcc" 424 425 Check: Package existence for "gcc-c++" 426 Node Name Available 
Required Status 427 ------------ ------------------------ ------------------------ ---------- 428 node2 gcc-c++-4.4.7-23.el6 gcc-c++-4.4.4 passed 429 node1 gcc-c++-4.4.7-23.el6 gcc-c++-4.4.4 passed 430 Result: Package existence check passed for "gcc-c++" 431 432 Check: Package existence for "ksh" 433 Node Name Available Required Status 434 ------------ ------------------------ ------------------------ ---------- 435 node2 ksh ksh passed 436 node1 ksh ksh passed 437 Result: Package existence check passed for "ksh" 438 439 Check: Package existence for "make" 440 Node Name Available Required Status 441 ------------ ------------------------ ------------------------ ---------- 442 node2 make-3.81-20.el6 make-3.81 passed 443 node1 make-3.81-20.el6 make-3.81 passed 444 Result: Package existence check passed for "make" 445 446 Check: Package existence for "glibc(x86_64)" 447 Node Name Available Required Status 448 ------------ ------------------------ ------------------------ ---------- 449 node2 glibc(x86_64)-2.12-1.212.el6_10.3 glibc(x86_64)-2.12 passed 450 node1 glibc(x86_64)-2.12-1.212.el6_10.3 glibc(x86_64)-2.12 passed 451 Result: Package existence check passed for "glibc(x86_64)" 452 453 Check: Package existence for "glibc-devel(x86_64)" 454 Node Name Available Required Status 455 ------------ ------------------------ ------------------------ ---------- 456 node2 glibc-devel(x86_64)-2.12-1.212.el6_10.3 glibc-devel(x86_64)-2.12 passed 457 node1 glibc-devel(x86_64)-2.12-1.212.el6_10.3 glibc-devel(x86_64)-2.12 passed 458 Result: Package existence check passed for "glibc-devel(x86_64)" 459 460 Check: Package existence for "libaio(x86_64)" 461 Node Name Available Required Status 462 ------------ ------------------------ ------------------------ ---------- 463 node2 libaio(x86_64)-0.3.107-10.el6 libaio(x86_64)-0.3.107 passed 464 node1 libaio(x86_64)-0.3.107-10.el6 libaio(x86_64)-0.3.107 passed 465 Result: Package existence check passed for "libaio(x86_64)" 466 467 Check: 
Package existence for "libaio-devel(x86_64)" 468 Node Name Available Required Status 469 ------------ ------------------------ ------------------------ ---------- 470 node2 libaio-devel(x86_64)-0.3.107-10.el6 libaio-devel(x86_64)-0.3.107 passed 471 node1 libaio-devel(x86_64)-0.3.107-10.el6 libaio-devel(x86_64)-0.3.107 passed 472 Result: Package existence check passed for "libaio-devel(x86_64)" 473 474 Check: Package existence for "nfs-utils" 475 Node Name Available Required Status 476 ------------ ------------------------ ------------------------ ---------- 477 node2 nfs-utils-1.2.3-64.el6 nfs-utils-1.2.3-15 passed 478 node1 nfs-utils-1.2.3-64.el6 nfs-utils-1.2.3-15 passed 479 Result: Package existence check passed for "nfs-utils" 480 481 Checking availability of ports "6200,6100" required for component "Oracle Notification Service (ONS)" 482 Node Name Port Number Protocol Available Status 483 ---------------- ------------ ------------ ------------ ---------------- 484 node2 6200 TCP yes successful 485 node1 6200 TCP yes successful 486 node2 6100 TCP yes successful 487 node1 6100 TCP yes successful 488 Result: Port availability check passed for ports "6200,6100" 489 490 Checking availability of ports "42424" required for component "Oracle Cluster Synchronization Services (CSSD)" 491 Node Name Port Number Protocol Available Status 492 ---------------- ------------ ------------ ------------ ---------------- 493 node2 42424 TCP yes successful 494 node1 42424 TCP yes successful 495 Result: Port availability check passed for ports "42424" 496 497 Checking for multiple users with UID value 0 498 Result: Check for multiple users with UID value 0 passed 499 500 Check: Current group ID 501 Result: Current group ID check passed 502 503 Starting check for consistency of primary group of root user 504 Node Name Status 505 ------------------------------------ ------------------------ 506 node2 passed 507 node1 passed 508 509 Check for consistency of root user's primary group 
passed 510 511 Starting Clock synchronization checks using Network Time Protocol(NTP)... 512 513 Checking existence of NTP configuration file "/etc/ntp.conf" across nodes 514 Node Name File exists? 515 ------------------------------------ ------------------------ 516 node2 yes 517 node1 yes 518 The NTP configuration file "/etc/ntp.conf" is available on all nodes 519 NTP configuration file "/etc/ntp.conf" existence check passed 520 No NTP Daemons or Services were found to be running 521 PRVF-5507 : NTP daemon or service is not running on any node but NTP configuration file exists on the following node(s): 522 node2,node1 523 Result: Clock synchronization check using Network Time Protocol(NTP) failed 524 525 Checking Core file name pattern consistency... 526 Core file name pattern consistency check passed. 527 528 Checking to make sure user "grid" is not in "root" group 529 Node Name Status Comment 530 ------------ ------------------------ ------------------------ 531 node2 passed does not exist 532 node1 passed does not exist 533 Result: User "grid" is not part of "root" group. Check passed 534 535 Check default user file creation mask 536 Node Name Available Required Comment 537 ------------ ------------------------ ------------------------ ---------- 538 node2 0022 0022 passed 539 node1 0022 0022 passed 540 Result: Default user file creation mask check passed 541 Checking integrity of file "/etc/resolv.conf" across nodes 542 543 Checking the file "/etc/resolv.conf" to make sure only one of 'domain' and 'search' entries is defined 544 545 WARNING: 546 PRVF-5640 : Both 'search' and 'domain' entries are present in file "/etc/resolv.conf" on the following nodes: node1,node2 547 548 Check for integrity of file "/etc/resolv.conf" passed 549 550 Check: Time zone consistency 551 Result: Time zone consistency check passed 552 553 Checking integrity of name service switch configuration file "/etc/nsswitch.conf" ... 
554 Checking if "hosts" entry in file "/etc/nsswitch.conf" is consistent across nodes... 555 Checking file "/etc/nsswitch.conf" to make sure that only one "hosts" entry is defined 556 More than one "hosts" entry does not exist in any "/etc/nsswitch.conf" file 557 All nodes have same "hosts" entry defined in file "/etc/nsswitch.conf" 558 Check for integrity of name service switch configuration file "/etc/nsswitch.conf" passed 559 560 561 Checking daemon "avahi-daemon" is not configured and running 562 563 Check: Daemon "avahi-daemon" not configured 564 Node Name Configured Status 565 ------------ ------------------------ ------------------------ 566 node2 no passed 567 node1 no passed 568 Daemon not configured check passed for process "avahi-daemon" 569 570 Check: Daemon "avahi-daemon" not running 571 Node Name Running? Status 572 ------------ ------------------------ ------------------------ 573 node2 no passed 574 node1 no passed 575 Daemon not running check passed for process "avahi-daemon" 576 577 Starting check for /dev/shm mounted as temporary file system ... 578 579 Check for /dev/shm mounted as temporary file system passed 580 581 Starting check for /boot mount ... 582 583 Check for /boot mount passed 584 585 Starting check for zeroconf check ... 586 587 ERROR: 588 589 PRVE-10077 : NOZEROCONF parameter was not specified or was not set to 'yes' in file "/etc/sysconfig/network" on node "node2" 590 PRVE-10077 : NOZEROCONF parameter was not specified or was not set to 'yes' in file "/etc/sysconfig/network" on node "node1" 591 592 Check for zeroconf check failed 593 594 Pre-check for cluster services setup was unsuccessful on all the nodes. 
595 596 ****************************************************************************************** 597 Following is the list of fixable prerequisites selected to fix in this session 598 ****************************************************************************************** 599 600 -------------- --------------- ---------------- 601 Check failed. Failed on nodes Reboot required? 602 -------------- --------------- ---------------- 603 zeroconf check node2,node1 no 604 605 606 607 Execute "/tmp/CVU_12.1.0.2.0_grid/runfixup.sh" as root user on nodes "node1,node2" to perform the fix up operations manually 608 609 Press ENTER key to continue after execution of "/tmp/CVU_12.1.0.2.0_grid/runfixup.sh" has completed on nodes "node1,node2" 610 611 Fix: zeroconf check 612 Node Name Status 613 ------------------------------------ ------------------------ 614 node2 failed 615 node1 failed 616 617 ERROR: 618 PRVG-9023 : Manual fix up command "/tmp/CVU_12.1.0.2.0_grid/runfixup.sh" was not issued by root user on node "node2" 619 620 PRVG-9023 : Manual fix up command "/tmp/CVU_12.1.0.2.0_grid/runfixup.sh" was not issued by root user on node "node1" 621 622 Result: "zeroconf check" could not be fixed on nodes "node2,node1" 623 Fix up operations for selected fixable prerequisites were unsuccessful on nodes "node2,node1"
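
The pre-check output above is long, and the items that actually need attention are easy to miss: total memory below 4GB on both nodes, NTP configured but not running (PRVF-5507), the resolv.conf warning (PRVF-5640), and the zeroconf check (PRVE-10077), whose fix-up failed because runfixup.sh was not run as root (PRVG-9023). The sketch below is not part of the original procedure; it assumes the cluvfy output was saved to a text file, and the function names `list_cluvfy_failures` and `ensure_nozeroconf` are made up for illustration. The second function only writes the `NOZEROCONF=yes` setting that the PRVE-10077 message asks for, as a manual alternative to running runfixup.sh as root.

```shell
#!/bin/bash
# Hypothetical helpers for reviewing the pre-check result.
# Neither function is part of cluvfy; both names are illustrative.

# Print (with line numbers) the lines of a saved cluvfy log that report
# a problem: PRVx-nnnn message codes and failed/unsuccessful results.
list_cluvfy_failures() {
    local log="$1"
    grep -nE 'PRV[A-Z]+-[0-9]+|failed|unsuccessful|could not be fixed' "$log"
}

# Make sure NOZEROCONF=yes is set in a network config file.
# Defaults to /etc/sysconfig/network, the file named in PRVE-10077.
# Safe to run more than once: an existing setting is rewritten in place.
ensure_nozeroconf() {
    local file="${1:-/etc/sysconfig/network}"
    if grep -q '^NOZEROCONF=' "$file"; then
        sed -i 's/^NOZEROCONF=.*/NOZEROCONF=yes/' "$file"
    else
        # Add a newline first if the file does not end with one.
        if [ -s "$file" ] && [ -n "$(tail -c1 "$file")" ]; then
            echo >> "$file"
        fi
        echo 'NOZEROCONF=yes' >> "$file"
    fi
}
```

For example, after saving the output to a file of your choosing, `list_cluvfy_failures precheck.log` shows only the problem lines, and running `ensure_nozeroconf` as root on both node1 and node2 sets the parameter. Rerun the pre-check afterwards to confirm that the zeroconf check passes.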

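Next steps: installing Grid Infrastructure and the RDBMS, and creating the database, are covered in the follow-up articles of this series, "Oracle 12cR1 RAC集群安装(二)--使用图形界面安装" (installation using the graphical interface) and "Oracle 12cR1 RAC集群安装(三)--静默安装" (silent installation).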
