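9 Cloud Computing Series: Installing Cinder and Using NFS as the Cinder Storage Backend

Preface

In the earlier posts we set up the OpenStack keystone, glance, nova, neutron, and horizon services. The one piece still missing is storage, so this post covers the Block Storage service.

The Cinder Block Storage Service

The Block Storage service (cinder) provides block storage to instances. How storage is provisioned and consumed is determined by the Block Storage driver, or by the drivers in a multi-backend configuration. Many drivers are available: NAS/SAN, NFS, iSCSI, Ceph, and others. Typically the Block Storage API and scheduler services run on the controller node. Depending on the driver in use, the volume service can run on a controller node, a compute node, or a standalone storage node.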

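It consists of the following components:

1. cinder-api

Accepts API requests and routes them to cinder-volume for execution.

2. cinder-volume

Interacts directly with the Block Storage service and with processes such as cinder-scheduler; it can also interact with those processes through a message queue. The cinder-volume service responds to read and write requests sent to the Block Storage service in order to maintain state, and it can work with a variety of storage providers through a driver architecture.

3. cinder-scheduler daemon

Selects the optimal storage provider node on which to create a volume. It is similar to the nova-scheduler component.

4. cinder-backup daemon

The cinder-backup service backs up volumes of any type to a backup storage provider. Like the cinder-volume service, it can work with a variety of storage providers through a driver architecture.

5. Message queue

Routes information between the Block Storage processes.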

Without the cinder service, an instance's disk lives under /var/lib/nova/instances/<instance ID>, as shown below:

[root@linux-node2 instances]# ll -rt /var/lib/nova/instances/

total 8

drwxr-xr-x. 2 nova nova 69 Feb 8 20:26 afda0b61-a8f8-4e27-bf42-b20503496fe1 # by default the instance disk is stored on the local disk

-rw-r--r--. 1 nova nova 45 Feb 8 21:36 compute_nodes

drwxr-xr-x. 2 nova nova 100 Feb 8 21:36 _base

drwxr-xr-x. 2 nova nova 4096 Feb 8 21:36 locks

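Deploying and Installing Cinder

We can follow the official install guide: http://docs.openstack.org/newton/install-guide-rdo/cinder-controller-install.html

We install it on the linux-node1 node.

1. Create the database and the database user

We already did this step when installing keystone, but here it is again for reference: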

mysql> CREATE DATABASE cinder;

mysql> GRANT ALL PRIVILEGES ON cinder.* TO 'cinder'@'localhost' \

IDENTIFIED BY 'cinder';

mysql> GRANT ALL PRIVILEGES ON cinder.* TO 'cinder'@'%' \

IDENTIFIED BY 'cinder';

2. Create the OpenStack user

The OpenStack user was also created when we installed keystone, but here is how to create it:

[root@linux-node1 ~]# source admin_openrc

[root@linux-node1 ~]# openstack user create --domain default --password-prompt cinder

[root@linux-node1 ~]# openstack role add --project service --user cinder admin

3. Install the cinder service

[root@linux-node1 ~]# yum install openstack-cinder

4. Configure cinder

[root@linux-node1 ~]# vim /etc/cinder/cinder.conf

[DEFAULT]

transport_url = rabbit://openstack:openstack@192.168.56.11

auth_strategy = keystone

[database]

connection = mysql+pymysql://cinder:cinder@192.168.56.11/cinder

[keystone_authtoken]

auth_uri = http://192.168.56.11:5000

auth_url = http://192.168.56.11:35357

memcached_servers = 192.168.56.11:11211

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = cinder

password = cinder

[oslo_concurrency]

lock_path = /var/lib/cinder/tmp

5. Sync the database

[root@linux-node1 ~]# su -s /bin/sh -c "cinder-manage db sync" cinder

[root@linux-node1 ~]# mysql -h 192.168.56.11 -ucinder -pcinder

MariaDB [(none)]> use cinder;

MariaDB [cinder]> show tables;

[root@linux-node1 ~]# mysql -h 192.168.56.11 -ucinder -pcinder -e "use cinder;show tables" |wc -l # expect 34 lines for this release (the count includes the column header line)

34

6. Configure the Compute service

[root@linux-node1 ~]# vim /etc/nova/nova.conf

[cinder]

os_region_name = RegionOne

7. Restart the Compute service, then enable and start the cinder services

[root@linux-node1 ~]# systemctl restart openstack-nova-api.service

[root@linux-node1 ~]# systemctl enable openstack-cinder-api.service openstack-cinder-scheduler.service

[root@linux-node1 ~]# systemctl start openstack-cinder-api.service openstack-cinder-scheduler.service

8. Check that the port is listening and that the log shows no errors:

[root@linux-node1 ~]# netstat -lnpt |grep 8776

tcp 0 0 0.0.0.0:8776 0.0.0.0:* LISTEN 6256/python2

[root@linux-node1 ~]# tail /var/log/cinder/api.log

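9. Register the service

The endpoints below attach to the cinder (volume) and cinderv2 (volumev2) service entities, which must already exist in keystone. The six commands are listed first and then shown again with their output.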

openstack endpoint create --region RegionOne volume public http://192.168.56.11:8776/v1/%\(tenant_id\)s

openstack endpoint create --region RegionOne volume internal http://192.168.56.11:8776/v1/%\(tenant_id\)s

openstack endpoint create --region RegionOne volume admin http://192.168.56.11:8776/v1/%\(tenant_id\)s

openstack endpoint create --region RegionOne volumev2 public http://192.168.56.11:8776/v2/%\(tenant_id\)s

openstack endpoint create --region RegionOne volumev2 internal http://192.168.56.11:8776/v2/%\(tenant_id\)s

openstack endpoint create --region RegionOne volumev2 admin http://192.168.56.11:8776/v2/%\(tenant_id\)s

[root@linux-node1 ~]# openstack endpoint create --region RegionOne volume public http://192.168.56.11:8776/v1/%\(tenant_id\)s

+--------------+--------------------------------------------+

| Field | Value |

+--------------+--------------------------------------------+

| enabled | True |

| id | 69fa1e44b92a4511b87e6bba900a9d7a |

| interface | public |

| region | RegionOne |

| region_id | RegionOne |

| service_id | 79eaa15817444e518f08a31555a1cb36 |

| service_name | cinder |

| service_type | volume |

| url | http://192.168.56.11:8776/v1/%(tenant_id)s |

+--------------+--------------------------------------------+

[root@linux-node1 ~]# openstack endpoint create --region RegionOne volume internal http://192.168.56.11:8776/v1/%\(tenant_id\)s

+--------------+--------------------------------------------+

| Field | Value |

+--------------+--------------------------------------------+

| enabled | True |

| id | 4cc826bb78f848979303b478d7bb66ab |

| interface | internal |

| region | RegionOne |

| region_id | RegionOne |

| service_id | 79eaa15817444e518f08a31555a1cb36 |

| service_name | cinder |

| service_type | volume |

| url | http://192.168.56.11:8776/v1/%(tenant_id)s |

+--------------+--------------------------------------------+

[root@linux-node1 ~]# openstack endpoint create --region RegionOne volume admin http://192.168.56.11:8776/v1/%\(tenant_id\)s

+--------------+--------------------------------------------+

| Field | Value |

+--------------+--------------------------------------------+

| enabled | True |

| id | c70163f3372449ef8978514aa19d5cad |

| interface | admin |

| region | RegionOne |

| region_id | RegionOne |

| service_id | 79eaa15817444e518f08a31555a1cb36 |

| service_name | cinder |

| service_type | volume |

| url | http://192.168.56.11:8776/v1/%(tenant_id)s |

+--------------+--------------------------------------------+

[root@linux-node1 ~]# openstack endpoint create --region RegionOne volumev2 public http://192.168.56.11:8776/v2/%\(tenant_id\)s

+--------------+--------------------------------------------+

| Field | Value |

+--------------+--------------------------------------------+

| enabled | True |

| id | 028b68c6a48a49be81760c3359c3be3f |

| interface | public |

| region | RegionOne |

| region_id | RegionOne |

| service_id | 5452eb159d5a420187697669fbb0fb31 |

| service_name | cinderv2 |

| service_type | volumev2 |

| url | http://192.168.56.11:8776/v2/%(tenant_id)s |

+--------------+--------------------------------------------+

[root@linux-node1 ~]# openstack endpoint create --region RegionOne volumev2 internal http://192.168.56.11:8776/v2/%\(tenant_id\)s

+--------------+--------------------------------------------+

| Field | Value |

+--------------+--------------------------------------------+

| enabled | True |

| id | 96aaa6d2023e457bafce320a3116fafa |

| interface | internal |

| region | RegionOne |

| region_id | RegionOne |

| service_id | 5452eb159d5a420187697669fbb0fb31 |

| service_name | cinderv2 |

| service_type | volumev2 |

| url | http://192.168.56.11:8776/v2/%(tenant_id)s |

+--------------+--------------------------------------------+

[root@linux-node1 ~]# openstack endpoint create --region RegionOne volumev2 admin http://192.168.56.11:8776/v2/%\(tenant_id\)s

+--------------+--------------------------------------------+

| Field | Value |

+--------------+--------------------------------------------+

| enabled | True |

| id | d1cfc448bbad4d6db86e5bf79da4fb29 |

| interface | admin |

| region | RegionOne |

| region_id | RegionOne |

| service_id | 5452eb159d5a420187697669fbb0fb31 |

| service_name | cinderv2 |

| service_type | volumev2 |

| url | http://192.168.56.11:8776/v2/%(tenant_id)s |

+--------------+--------------------------------------------+

[root@linux-node1 ~]# openstack service list # check that the services were registered

+----------------------------------+----------+----------+

| ID | Name | Type |

+----------------------------------+----------+----------+

| 5452eb159d5a420187697669fbb0fb31 | cinderv2 | volumev2 |

| 75791c905b92412ca4390b3970726f75 | glance | image |

| 79eaa15817444e518f08a31555a1cb36 | cinder | volume |

| 84f33de0de8c4da18cfb7f213b63a638 | nova | compute |

| c4dadf8bf2f74561b7408a5089541432 | neutron | network |

| d24e9eacb30a4c9fa6d1109c856f6b11 | keystone | identity |

+----------------------------------+----------+----------+

[root@linux-node1 ~]# openstack endpoint list |grep cinder # check that the endpoints were registered

| 028b68c6a48a49be81760c3359c3be3f | RegionOne | cinderv2 | volumev2 | True | public | http://192.168.56.11:8776/v2/%(tenant_id)s |

| 4cc826bb78f848979303b478d7bb66ab | RegionOne | cinder | volume | True | internal | http://192.168.56.11:8776/v1/%(tenant_id)s |

| 69fa1e44b92a4511b87e6bba900a9d7a | RegionOne | cinder | volume | True | public | http://192.168.56.11:8776/v1/%(tenant_id)s |

| 96aaa6d2023e457bafce320a3116fafa | RegionOne | cinderv2 | volumev2 | True | internal | http://192.168.56.11:8776/v2/%(tenant_id)s |

| c70163f3372449ef8978514aa19d5cad | RegionOne | cinder | volume | True | admin | http://192.168.56.11:8776/v1/%(tenant_id)s |

| d1cfc448bbad4d6db86e5bf79da4fb29 | RegionOne | cinderv2 | volumev2 | True | admin | http://192.168.56.11:8776/v2/%(tenant_id)s |
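The `%\(tenant_id\)s` part of each endpoint URL is a placeholder that gets filled in with a project ID when the endpoint is looked up; the backslashes only keep the shell from interpreting the parentheses. The following is a minimal sketch of that substitution, assuming plain Python %-style formatting. The function name and the project ID are made up for illustration and are not taken from this deployment.

```python
# Sketch: expanding a catalog URL template that contains %(tenant_id)s.
# expand_endpoint and the sample project ID are hypothetical.

V2_TEMPLATE = "http://192.168.56.11:8776/v2/%(tenant_id)s"


def expand_endpoint(template: str, tenant_id: str) -> str:
    """Fill the %(tenant_id)s placeholder using %-style string formatting."""
    return template % {"tenant_id": tenant_id}


if __name__ == "__main__":
    print(expand_endpoint(V2_TEMPLATE, "0123456789abcdef"))
```

This is why the URL stored in the catalog (see the `url` field in the output above) contains the literal text `%(tenant_id)s` rather than a concrete ID.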

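Installing the Storage Node

Before installing the storage node, note how it works: on the storage node we use LVM to create a volume group that backs the volumes, and the volumes in it are then exported over iSCSI for instances to use.

I install the storage node on linux-node2. The steps are as follows:

- Install LVM and enable it at boot. Most CentOS installations already ship with the LVM tools.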

[root@linux-node2 ~]# yum install lvm2

[root@linux-node2 ~]# systemctl enable lvm2-lvmetad.service

[root@linux-node2 ~]# systemctl start lvm2-lvmetad.service

- Create the LVM physical volume and volume group

[root@linux-node2 ~]# pvcreate /dev/sdb

[root@linux-node2 ~]# vgcreate cinder-volumes /dev/sdb

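Add a filter to the devices section of /etc/lvm/lvm.conf so that LVM scans only the devices it needs and rejects everything else. The filter here accepts /dev/sdb, which backs cinder-volumes, and /dev/sda (included on the assumption that the operating system disk also uses LVM). Each element of the filter array starts with a for accept or r for reject, followed by a regular expression for the device name. The array must end with r/.*/ to reject all remaining devices. You can test the filter with the command vgs -vvvv.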

[root@linux-node2 ~]# vim /etc/lvm/lvm.conf

devices { # important: the filter must go inside the devices section

filter = [ "a/sda/", "a/sdb/", "r/.*/"]

}
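To make the accept/reject rules concrete, here is a small sketch that mimics how such a filter list is evaluated: rules are tried in order and the first regular expression that matches the device path decides the outcome. It is a simplified model written for this post, not LVM's own code; `parse_rule` and `is_accepted` are hypothetical names.

```python
import re

# Filter from the lvm.conf snippet above: "a" = accept, "r" = reject.
FILTER = ["a/sda/", "a/sdb/", "r/.*/"]


def parse_rule(rule: str):
    """Split a rule such as 'a/sdb/' into (accept?, compiled regex)."""
    action, delimiter = rule[0], rule[1]
    pattern = rule[2:rule.rindex(delimiter)]
    return action == "a", re.compile(pattern)


def is_accepted(device: str, rules=FILTER) -> bool:
    """Return the verdict of the first rule whose regex matches the device path."""
    for accept, regex in map(parse_rule, rules):
        if regex.search(device):
            return accept
    return True  # assumption: a device that matches no rule is accepted


if __name__ == "__main__":
    for dev in ("/dev/sda1", "/dev/sdb", "/dev/sdc"):
        print(dev, "accepted" if is_accepted(dev) else "rejected")
```

With the filter above, /dev/sdc is rejected only because of the trailing r/.*/ rule, which is why that last element matters.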

- Install and configure Cinder

[root@linux-node2 ~]# yum install openstack-cinder targetcli python-keystone

Once the packages are installed, we set up the cinder configuration file. For convenience, we copy the configuration file from linux-node1 to linux-node2.

[root@linux-node1 ~]# scp /etc/cinder/cinder.conf root@192.168.56.12:/etc/cinder/

On top of that file we only need to add a few settings.

[root@linux-node2 ~]# vim /etc/cinder/cinder.conf

[DEFAULT]

enabled_backends = lvm

glance_api_servers = http://192.168.56.11:9292

# set this to the storage node's own IP address
iscsi_ip_address = 192.168.56.12

[lvm]

volume_driver = cinder.volume.drivers.lvm.LVMVolumeDriver

volume_group = cinder-volumes

iscsi_protocol = iscsi

iscsi_helper = lioadm

With that done, let's look at the effective settings in cinder.conf as a whole:

[root@linux-node2 ~]# egrep "^([a-zA-Z]|\[)" /etc/cinder/cinder.conf

[DEFAULT]

transport_url = rabbit://openstack:openstack@192.168.56.11

glance_api_servers = http://192.168.56.11:9292

auth_strategy = keystone

enabled_backends = lvm

iscsi_ip_address = 192.168.56.12

[database]

connection = mysql+pymysql://cinder:cinder@192.168.56.11/cinder

[keystone_authtoken]

auth_uri = http://192.168.56.11:5000

auth_url = http://192.168.56.11:35357

memcached_servers = 192.168.56.11:11211

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = cinder

password = cinder

[oslo_concurrency]

lock_path = /var/lib/cinder/tmp

[lvm]

volume_driver = cinder.volume.drivers.lvm.LVMVolumeDriver

volume_group = cinder-volumes

iscsi_protocol = iscsi

iscsi_helper = lioadm
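One easy mistake in this file is listing a backend in enabled_backends without giving it a section of the same name. The sketch below checks for that. It uses the standard-library configparser as a stand-in for the oslo.config parser that Cinder actually uses, and the sample text is a shortened copy of the file above; `missing_backend_sections` is a hypothetical helper name.

```python
import configparser

# Shortened copy of the cinder.conf shown above.
SAMPLE = """
[DEFAULT]
enabled_backends = lvm
iscsi_ip_address = 192.168.56.12

[lvm]
volume_driver = cinder.volume.drivers.lvm.LVMVolumeDriver
volume_group = cinder-volumes
"""


def missing_backend_sections(conf_text: str) -> list:
    """Return the names in enabled_backends that have no [section] of their own."""
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(conf_text)
    raw = parser.get("DEFAULT", "enabled_backends", fallback="")
    names = [name.strip() for name in raw.split(",") if name.strip()]
    return [name for name in names if not parser.has_section(name)]


if __name__ == "__main__":
    print(missing_backend_sections(SAMPLE) or "every enabled backend has a section")
```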

4. Start the services

After checking the configuration, start the cinder services:

[root@linux-node2 ~]# systemctl enable openstack-cinder-volume.service target.service

[root@linux-node2 ~]# systemctl start openstack-cinder-volume.service target.service

5. Verify that the storage service works

Run this on linux-node1:

[root@linux-node1 ~]# source /root/admin_openrc

[root@linux-node1 ~]# openstack volume service list

+------------------+-----------------------------+------+---------+-------+----------------------------+

| Binary | Host | Zone | Status | State | Updated At |

+------------------+-----------------------------+------+---------+-------+----------------------------+

| cinder-scheduler | linux-node1.example.com | nova | enabled | up | 2017-02-09T13:22:53.000000 |

| cinder-volume | linux-node2.example.com@lvm | nova | enabled | up | 2017-02-09T13:22:51.000000 |

+------------------+-----------------------------+------+---------+-------+----------------------------+

If the host is recognized and its state is up, the setup succeeded.
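If you want to automate this check, one option is to parse the table and flag any service whose State is not up. The sketch below works on a pasted copy of the output (the Updated At column is dropped to keep the sample short); `parse_table` and `down_services` are hypothetical helpers, and in a real script the CLI's machine-readable output formats are likely a better fit than parsing the ASCII table.

```python
SAMPLE = """\
+------------------+-----------------------------+------+---------+-------+
| Binary           | Host                        | Zone | Status  | State |
+------------------+-----------------------------+------+---------+-------+
| cinder-scheduler | linux-node1.example.com     | nova | enabled | up    |
| cinder-volume    | linux-node2.example.com@lvm | nova | enabled | up    |
+------------------+-----------------------------+------+---------+-------+
"""


def parse_table(text: str) -> list:
    """Turn an ASCII table printed by the CLI into a list of row dicts."""
    rows = [
        [cell.strip() for cell in line.strip().strip("|").split("|")]
        for line in text.splitlines()
        if line.strip().startswith("|")
    ]
    header, body = rows[0], rows[1:]
    return [dict(zip(header, row)) for row in body]


def down_services(text: str) -> list:
    """Return a 'binary on host' label for every service whose State is not 'up'."""
    return [
        f"{row['Binary']} on {row['Host']}"
        for row in parse_table(text)
        if row["State"] != "up"
    ]


if __name__ == "__main__":
    print(down_services(SAMPLE) or "all services are up")
```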

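Creating a Volume

Once the steps above are complete, volumes can be viewed in OpenStack Horizon. Log in as the demo user.

Click Create Volume on the right. Once the volume has been created, it can be given to a chosen instance:

Attach it to the instance you want.

Using the Volume

After attaching the volume to an instance we can use it. Log in to that instance and run the following commands to format the disk and put it to use: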

[root@host-192-168-56-101 ~]# fdisk -l

Disk /dev/vda: 3221 MB, 3221225472 bytes, 6291456 sectors

Units = sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk label type: dos

Disk identifier: 0x00067c89

Device Boot Start End Blocks Id System

/dev/vda1 2048 6291455 3144704 8e Linux LVM

Disk /dev/vdb: 1073 MB, 1073741824 bytes, 2097152 sectors # the 1 GB volume we just attached

Units = sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/mapper/centos-root: 3217 MB, 3217031168 bytes, 6283264 sectors

Units = sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Format and mount

[root@host-192-168-56-101 ~]# mkfs.ext4 /dev/vdb

[root@host-192-168-56-101 ~]# mount /dev/vdb /mnt/

[root@host-192-168-56-101 ~]# df -hT

Filesystem Type Size Used Avail Use% Mounted on

/dev/mapper/centos-root xfs 3.0G 1004M 2.1G 33% /

devtmpfs devtmpfs 235M 0 235M 0% /dev

tmpfs tmpfs 245M 0 245M 0% /dev/shm

tmpfs tmpfs 245M 4.3M 241M 2% /run

tmpfs tmpfs 245M 0 245M 0% /sys/fs/cgroup

tmpfs tmpfs 49M 0 49M 0% /run/user/0

/dev/vdb ext4 976M 2.6M 907M 1% /mnt # mounted and ready to use

[root@host-192-168-56-101 ~]#

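A volume that is in use cannot be deleted. What you can do is detach the volume and attach it to another instance, which can then use the data on it.

Back on linux-node2, look at the LVM usage: a volume created in OpenStack is simply an LVM logical volume of the same size on the cinder storage node, as shown below: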

[root@linux-node2 ~]# lvdisplay

--- Logical volume ---

LV Path /dev/cinder-volumes/volume-c5bbd596-0dab-408f-885f-941fc83e51df

LV Name volume-c5bbd596-0dab-408f-885f-941fc83e51df

VG Name cinder-volumes

LV UUID w3sQDU-MGsW-nJbB-zUxX-HXyH-0wXC-y9z9aH

LV Write Access read/write

LV Creation host, time linux-node2.example.com, 2017-02-09 21:31:57 +0800

LV Status available

# open 0

LV Size 1.00 GiB

Current LE 256

Segments 1

Allocation inherit

Read ahead sectors auto

- currently set to 8192

Block device 253:0
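The Current LE and LV Size fields in this output agree with each other: a logical volume's size is its number of logical extents multiplied by the extent size. A quick check, assuming the volume group uses LVM's default extent size of 4 MiB (the output above does not show the extent size, so that value is an assumption):

```python
EXTENT_MIB = 4  # assumption: the volume group uses the default 4 MiB extent size


def lv_size_gib(logical_extents: int, extent_mib: int = EXTENT_MIB) -> float:
    """Convert a logical-extent count into a size in GiB."""
    return logical_extents * extent_mib / 1024


if __name__ == "__main__":
    print(lv_size_gib(256))  # 256 extents, as reported by lvdisplay above
```

256 extents of 4 MiB come to 1024 MiB, which matches the 1.00 GiB reported for the volume.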

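Using NFS as the Cinder Storage Backend

For in-house development or functional testing, NFS is a simple option for cinder storage. Such workloads place low demands on disk I/O, and most office switches and networks are gigabit, which works out to a theoretical maximum of about 125 MB/s, enough for most day-to-day use. So here we look at using NFS as the cinder storage backend. In production, GlusterFS and Ceph are the more common choices for cinder backends. We cover the NFS backend first and will discuss using Ceph as a Cinder backend later.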
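The throughput figure for a gigabit link is simple arithmetic: divide the link speed in bits by 8. With decimal units, 1000 Mbit/s gives 125 MB/s; the figure of 128 only appears if the link is counted as 1024 Mbit/s. Both are theoretical ceilings that ignore protocol overhead. A small sketch, with a hypothetical function name:

```python
def link_throughput_mb_per_s(link_mbit_per_s: float) -> float:
    """Convert a link speed in Mbit/s into MB/s (8 bits per byte, no overhead)."""
    return link_mbit_per_s / 8


if __name__ == "__main__":
    print(link_throughput_mb_per_s(1000))  # gigabit Ethernet, decimal units
    print(link_throughput_mb_per_s(1024))  # the same link counted as 1024 Mbit/s
```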

We can follow the OpenStack wiki for this: https://wiki.openstack.org/wiki/How_to_deploy_cinder_with_NFS

We continue on linux-node2 and install NFS there.

1. Install and configure NFS

[root@linux-node2 ~]# yum -y install nfs-utils rpcbind

[root@linux-node2 ~]# cat /etc/exports

/data/nfs *(rw,no_root_squash) # export /data/nfs

[root@linux-node2 ~]# mkfs.ext4 /dev/sdc

[root@linux-node2 ~]# mount /dev/sdc /data/nfs/

[root@linux-node2 ~]# systemctl restart nfs

[root@linux-node2 ~]# systemctl enable nfs

[root@linux-node2 ~]# systemctl restart rpcbind

[root@linux-node2 ~]# systemctl enable rpcbind

[root@linux-node2 ~]# showmount -e localhost

Export list for localhost:

/data/nfs *

2. Configure cinder

Cinder selects its storage backend through the driver configured in cinder.conf, so to use NFS we need to configure the NFS driver. How do we find that driver? As follows:

[root@linux-node2 ~]# cd /usr/lib/python2.7/site-packages/cinder # change into the cinder Python package

[root@linux-node2 cinder]# cd volume/drivers/ # the volume drivers live here

[root@linux-node2 drivers]# grep Nfs nfs.py # grepping for Nfs finds it

class NfsDriver(driver.ExtendVD, remotefs.RemoteFSDriver): # this class is the NFS driver

Having found the driver name, we can configure cinder.conf:

[root@linux-node2 drivers]# tail /etc/cinder/cinder.conf

[DEFAULT]

# use NFS as the storage backend
enabled_backends = nfs

[nfs]

# dotted path of the NFS driver class
volume_driver = cinder.volume.drivers.nfs.NfsDriver

# this shares file is created in the next step
nfs_shares_config = /etc/cinder/nfs_shares

nfs_mount_point_base = $state_path/mnt

Create the NFS shares file

[root@linux-node2 drivers]# cat /etc/cinder/nfs_shares

192.168.56.12:/data/nfs

[root@linux-node2 drivers]# chown root:cinder /etc/cinder/nfs_shares

[root@linux-node2 drivers]# chmod 640 /etc/cinder/nfs_shares

[root@linux-node2 drivers]# ll /etc/cinder/nfs_shares # confirm the ownership and permissions

-rw-r-----. 1 root cinder 24 Feb 10 21:57 /etc/cinder/nfs_shares
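Each line of the shares file names one export in host:/path form. Before restarting the volume service it can be worth checking that the file is well formed. The sketch below does that on the file's text; `parse_shares` is a hypothetical helper, it treats anything after the first whitespace as mount options (an assumption), and it does not handle IPv6 addresses.

```python
def parse_shares(text: str) -> list:
    """Return (host, export_path) pairs from the contents of a shares file."""
    shares = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        share = line.split()[0]  # assumption: the rest of the line is mount options
        host, sep, path = share.partition(":")
        if not sep or not host or not path.startswith("/"):
            raise ValueError(f"not in host:/export form: {line!r}")
        shares.append((host, path))
    return shares


if __name__ == "__main__":
    print(parse_shares("192.168.56.12:/data/nfs\n"))
```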

3. Restart the cinder service

[root@linux-node2 drivers]# systemctl restart openstack-cinder-volume

4. On the controller node (linux-node1), check that the cinder service has registered

[root@linux-node1 ~]# openstack volume service list

+------------------+-----------------------------+------+---------+-------+----------------------------+

| Binary | Host | Zone | Status | State | Updated At |

+------------------+-----------------------------+------+---------+-------+----------------------------+

| cinder-scheduler | linux-node1.example.com | nova | enabled | up | 2017-02-10T14:01:38.000000 |

| cinder-volume | linux-node2.example.com@lvm | nova | enabled | down | 2017-02-10T14:00:32.000000 | # down is expected here, because we switched the backend from LVM to NFS

| cinder-volume | linux-node2.example.com@nfs | nova | enabled | up | 2017-02-10T14:00:51.000000 |

+------------------+-----------------------------+------+---------+-------+----------------------------+

5. On the controller node, create an NFS volume type and bind it to linux-node2.example.com@nfs

[root@linux-node1 ~]# source admin_openrc

[root@linux-node1 ~]# cinder type-create NFS

+--------------------------------------+------+-------------+-----------+

| ID | Name | Description | Is_Public |

+--------------------------------------+------+-------------+-----------+

| e7c50520-6d21-4314-a802-09ae8d799252 | NFS | - | True |

+--------------------------------------+------+-------------+-----------+

[root@linux-node1 ~]# cinder type-create ISCSI # create this type as well if you use both LVM and NFS

+--------------------------------------+-------+-------------+-----------+

| ID | Name | Description | Is_Public |

+--------------------------------------+-------+-------------+-----------+

| 80980708-8247-45f5-b8a4-072efb6d5054 | ISCSI | - | True |

+--------------------------------------+-------+-------------+-----------+

Next, bind the volume type to the backend.

First give the NFS backend on the volume node a name:

[root@linux-node2 ~]# vim /etc/cinder/cinder.conf

[nfs]

volume_driver = cinder.volume.drivers.nfs.NfsDriver

nfs_shares_config = /etc/cinder/nfs_shares

nfs_mount_point_base = $state_path/mnt

# this is the only line to add: it gives the backend a name
volume_backend_name = NFS-Storage

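After restarting openstack-cinder-volume on linux-node2 so that the new name takes effect, bind the type: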

[root@linux-node1 ~]# cinder type-key NFS set volume_backend_name=NFS-Storage

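About the arguments:

- The first argument after type-key (NFS here) is the name of the volume type created above.

- volume_backend_name is the name we set with volume_backend_name in cinder.conf on the volume node.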
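Conceptually, the binding works by comparison: the volume type carries volume_backend_name as an extra spec, each backend reports its own volume_backend_name, and a volume of that type can only be placed on a backend where the two match. The sketch below is a toy model of that matching, not the scheduler's real code. The dictionaries and `candidate_backends` are hypothetical, and the name given to the lvm backend is made up.

```python
# Hypothetical, simplified view of what each backend reports.
BACKENDS = {
    "linux-node2.example.com@nfs": {"volume_backend_name": "NFS-Storage"},
    "linux-node2.example.com@lvm": {"volume_backend_name": "LVM-Storage"},  # made-up name
}

# Extra specs set with: cinder type-key NFS set volume_backend_name=NFS-Storage
VOLUME_TYPES = {"NFS": {"volume_backend_name": "NFS-Storage"}}


def candidate_backends(volume_type: str) -> list:
    """Return the backends whose reported properties satisfy the type's extra specs."""
    wanted = VOLUME_TYPES[volume_type]
    return sorted(
        host
        for host, reported in BACKENDS.items()
        if all(reported.get(key) == value for key, value in wanted.items())
    )


if __name__ == "__main__":
    print(candidate_backends("NFS"))
```

If the name in the type's extra spec and the name in cinder.conf differ even slightly, no backend matches and volumes of that type cannot be scheduled.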

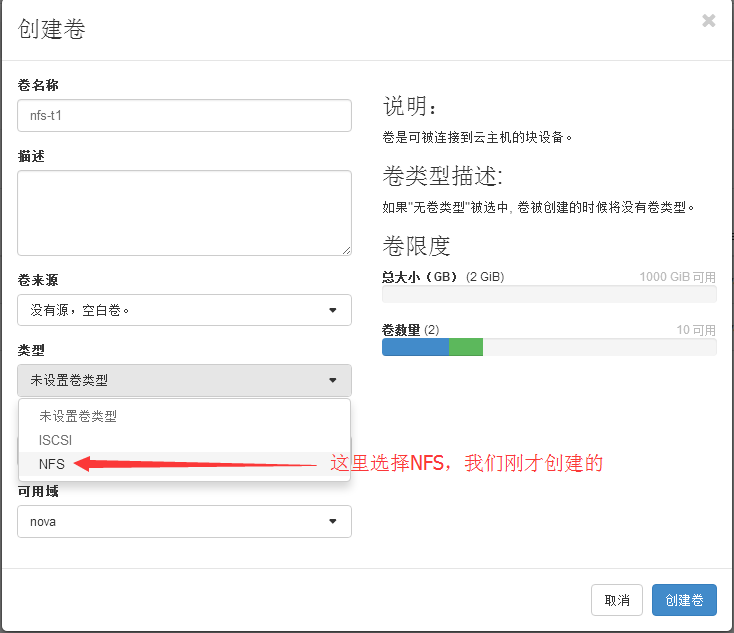

6. Create a volume and choose NFS as the volume type.

7. Attach it to the chosen instance, and the disk is ready to use.
