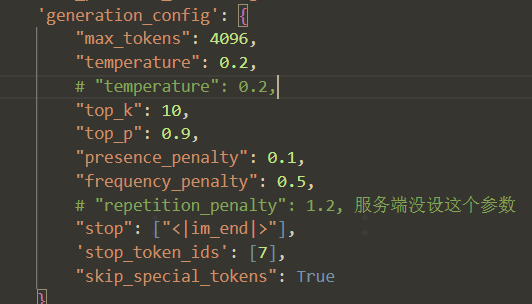

vLLM serving inference parameters

stop: List of strings. When the generated text contains one of these strings, generation stops, but the returned result does not include the stop string.

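stop_token_ids: List of ints (token IDs), e.g. tokenizer.eos_token_id or the ID of [im_end]. When one of these IDs is generated, generation stops, and the stop token itself is kept in the returned output (per the vLLM docs, unless it is a special token).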

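The final stop decision takes the union of the two: both are checked, and generation ends as soon as either condition is met.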
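To make the two rules and their union concrete, here is a toy Python simulation. It is a sketch under stated assumptions, not vLLM's implementation: TOY_VOCAB, ToyStopParams and toy_generate are hypothetical names, the "generated" tokens come from a fixed list instead of a model, and default EOS handling and special-token skipping during detokenization are not modeled.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

# Hypothetical toy vocabulary: token id -> text piece (not a real tokenizer).
TOY_VOCAB = {0: "Hello", 1: ",", 2: " world", 3: "!", 4: "\n", 5: "<|im_end|>", 6: " again"}


@dataclass
class ToyStopParams:
    # Mirrors the two SamplingParams fields discussed above (toy stand-in only).
    stop: List[str] = field(default_factory=list)            # stop strings
    stop_token_ids: List[int] = field(default_factory=list)  # stop token ids


def toy_generate(
    stream: List[int], params: ToyStopParams
) -> Tuple[List[int], str, Optional[Tuple[str, object]]]:
    """Consume pre-chosen token ids and apply both stop rules; either one ends generation.

    Returns (consumed token ids, output text, stop reason or None).
    """
    ids: List[int] = []
    text = ""
    for tok in stream:
        ids.append(tok)
        text += TOY_VOCAB[tok]
        # Rule 1: a stop token id ends generation; the token itself stays in the output.
        if tok in params.stop_token_ids:
            return ids, text, ("stop_token_id", tok)
        # Rule 2: a stop string is matched on the decoded text (so it may span tokens);
        # the text is cut just before the match, so the stop string is not returned.
        for s in params.stop:
            pos = text.find(s)
            if pos != -1:
                return ids, text[:pos], ("stop", s)
    return ids, text, None  # stream exhausted without hitting any stop condition


stream = [0, 1, 2, 4, 6, 5, 6]
print(toy_generate(stream, ToyStopParams(stop=["\n"], stop_token_ids=[5])))
# ([0, 1, 2, 4], 'Hello, world', ('stop', '\n'))
print(toy_generate(stream, ToyStopParams(stop_token_ids=[5])))
# ([0, 1, 2, 4, 6, 5], 'Hello, world\n again<|im_end|>', ('stop_token_id', 5))
print(toy_generate(stream, ToyStopParams(stop=["o, w"])))
# ([0, 1, 2], 'Hell', ('stop', 'o, w'))
```

The first call stops on the stop string "\n" before the stop token 5 is ever reached, which is the union at work. The second keeps the stop token in the output, while the first and third drop the stop string. In this toy, stop strings are matched against the decoded text, so the third call shows one spanning a token boundary.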

Reference:

https://docs.vllm.ai/en/latest/offline_inference/sampling_params.html

浙公网安备 33010602011771号