1. To deploy the Kubernetes cloud platform, prepare at least two servers; this guide uses three.

Kubernetes Master: 192.168.0.111; Kubernetes Node1: 192.168.0.112; Kubernetes Node2: 192.168.0.113

2. Run the following commands on every server:

    systemctl stop firewalld
    systemctl disable firewalld
    yum -y install ntp
    # Keep the clocks on all servers consistent
    ntpdate pool.ntp.org
    systemctl start ntpd
    systemctl enable ntpd

3. Install and configure the Kubernetes Master

Install etcd, Kubernetes and the flannel network on the Kubernetes Master node with the following command:

    yum install kubernetes-master etcd flannel -y

Configure /etc/etcd/etcd.conf on the Master as follows:

    cat>/etc/etcd/etcd.conf<<EOF
    # [member]
    ETCD_NAME=etcd1
    ETCD_DATA_DIR="/data/etcd"
    #ETCD_WAL_DIR=""
    #ETCD_SNAPSHOT_COUNT="10000"
    #ETCD_HEARTBEAT_INTERVAL="100"
    #ETCD_ELECTION_TIMEOUT="1000"
    ETCD_LISTEN_PEER_URLS="http://192.168.0.111:2380"
    ETCD_LISTEN_CLIENT_URLS="http://192.168.0.111:2379,http://127.0.0.1:2379"
    ETCD_MAX_SNAPSHOTS="5"
    #ETCD_MAX_WALS="5"
    #ETCD_CORS=""
    #
    #[cluster]
    ETCD_INITIAL_ADVERTISE_PEER_URLS="http://192.168.0.111:2380"
    # if you use different ETCD_NAME (e.g. test), set ETCD_INITIAL_CLUSTER value for this name, i.e. "test=http://..."
    ETCD_INITIAL_CLUSTER="etcd1=http://192.168.0.111:2380,etcd2=http://192.168.0.112:2380,etcd3=http://192.168.0.113:2380"
    #ETCD_INITIAL_CLUSTER_STATE="new"
    #ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
    ETCD_ADVERTISE_CLIENT_URLS="http://192.168.0.111:2379"
    #ETCD_DISCOVERY=""
    #ETCD_DISCOVERY_SRV=""
    #ETCD_DISCOVERY_FALLBACK="proxy"
    #ETCD_DISCOVERY_PROXY=""
    #
    #[proxy]
    #ETCD_PROXY="off"
    #ETCD_PROXY_FAILURE_WAIT="5000"
    #ETCD_PROXY_REFRESH_INTERVAL="30000"
    #ETCD_PROXY_DIAL_TIMEOUT="1000"
    #ETCD_PROXY_WRITE_TIMEOUT="5000"
    #ETCD_PROXY_READ_TIMEOUT="0"
    #
    #[security]
    #ETCD_CERT_FILE=""
    #ETCD_KEY_FILE=""
    #ETCD_CLIENT_CERT_AUTH="false"
    #ETCD_TRUSTED_CA_FILE=""
    #ETCD_PEER_CERT_FILE=""
    #ETCD_PEER_KEY_FILE=""
    #ETCD_PEER_CLIENT_CERT_AUTH="false"
    #ETCD_PEER_TRUSTED_CA_FILE=""
    #
    #[logging]
    #ETCD_DEBUG="false"
    # examples for -log-package-levels etcdserver=WARNING,security=DEBUG
    #ETCD_LOG_PACKAGE_LEVELS=""
    EOF
    mkdir -p /data/etcd/;chmod 757 -R /data/etcd/
    systemctl restart etcd.service

Configure /etc/kubernetes/config on the Master with the following command:

    cat>/etc/kubernetes/config<<EOF
    # kubernetes system config
    # The following values are used to configure various aspects of all
    # kubernetes services, including
    # kube-apiserver.service
    # kube-controller-manager.service
    # kube-scheduler.service
    # kubelet.service
    # kube-proxy.service
    # logging to stderr means we get it in the systemd journal
    KUBE_LOGTOSTDERR="--logtostderr=true"
    # journal message level, 0 is debug
    KUBE_LOG_LEVEL="--v=0"
    # Should this cluster be allowed to run privileged docker containers
    KUBE_ALLOW_PRIV="--allow-privileged=false"
    # How the controller-manager, scheduler, and proxy find the apiserver
    KUBE_MASTER="--master=http://192.168.0.111:8080"
    EOF

This tells the Kubernetes controller-manager, scheduler and proxy processes the service address of the apiserver process.

Configure /etc/kubernetes/apiserver on the Master as follows:

    cat>/etc/kubernetes/apiserver<<EOF
    # kubernetes system config
    # The following values are used to configure the kube-apiserver
    # The address on the local server to listen to.
    KUBE_API_ADDRESS="--insecure-bind-address=0.0.0.0"
    # The port on the local server to listen on.
    KUBE_API_PORT="--port=8080"
    # Port minions listen on
    KUBELET_PORT="--kubelet-port=10250"
    # Comma separated list of nodes in the etcd cluster
    KUBE_ETCD_SERVERS="--etcd-servers=http://192.168.0.111:2379,http://192.168.0.112:2379,http://192.168.0.113:2379"
    # Address range to use for services
    KUBE_SERVICE_ADDRESSES="--service-cluster-ip-range=10.254.0.0/16"
    # default admission control policies
    #KUBE_ADMISSION_CONTROL="--admission-control=NamespaceLifecycle,NamespaceExists,LimitRanger,SecurityContextDeny,ServiceAccount,ResourceQuota"
    KUBE_ADMISSION_CONTROL="--admission_control=NamespaceLifecycle,NamespaceExists,LimitRanger,ResourceQuota"
    # Add your own!
    KUBE_API_ARGS=""
    EOF
    for i in etcd kube-apiserver kube-controller-manager kube-scheduler ;do systemctl restart $i ;systemctl enable $i ;systemctl status $i;done

The loop above starts the etcd, apiserver, controller-manager and scheduler processes on the Kubernetes Master node and shows their status.

4. Install and configure Kubernetes Node1

Install flannel, docker and Kubernetes on the Kubernetes Node1 node:

    yum install kubernetes-node etcd docker flannel *rhsm* -y

On Node1, configure /etc/etcd/etcd.conf as follows:

    cat>/etc/etcd/etcd.conf<<EOF
    ##########
    # [member]
    ETCD_NAME=etcd2
    ETCD_DATA_DIR="/data/etcd"
    #ETCD_WAL_DIR=""
    #ETCD_SNAPSHOT_COUNT="10000"
    #ETCD_HEARTBEAT_INTERVAL="100"
    #ETCD_ELECTION_TIMEOUT="1000"
    ETCD_LISTEN_PEER_URLS="http://192.168.0.112:2380"
    ETCD_LISTEN_CLIENT_URLS="http://192.168.0.112:2379,http://127.0.0.1:2379"
    ETCD_MAX_SNAPSHOTS="5"
    #ETCD_MAX_WALS="5"
    #ETCD_CORS=""
    #[cluster]
    ETCD_INITIAL_ADVERTISE_PEER_URLS="http://192.168.0.112:2380"
    # if you use different ETCD_NAME (e.g. test), set ETCD_INITIAL_CLUSTER value for this name, i.e. "test=http://..."
    ETCD_INITIAL_CLUSTER="etcd1=http://192.168.0.111:2380,etcd2=http://192.168.0.112:2380,etcd3=http://192.168.0.113:2380"
    #ETCD_INITIAL_CLUSTER_STATE="new"
    #ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
    ETCD_ADVERTISE_CLIENT_URLS="http://192.168.0.112:2379"
    #ETCD_DISCOVERY=""
    #ETCD_DISCOVERY_SRV=""
    #ETCD_DISCOVERY_FALLBACK="proxy"
    #ETCD_DISCOVERY_PROXY=""
    #[proxy]
    #ETCD_PROXY="off"
    #ETCD_PROXY_FAILURE_WAIT="5000"
    #ETCD_PROXY_REFRESH_INTERVAL="30000"
    #ETCD_PROXY_DIAL_TIMEOUT="1000"
    #ETCD_PROXY_WRITE_TIMEOUT="5000"
    #ETCD_PROXY_READ_TIMEOUT="0"
    #
    #[security]
    #ETCD_CERT_FILE=""
    #ETCD_KEY_FILE=""
    #ETCD_CLIENT_CERT_AUTH="false"
    #ETCD_TRUSTED_CA_FILE=""
    #ETCD_PEER_CERT_FILE=""
    #ETCD_PEER_KEY_FILE=""
    #ETCD_PEER_CLIENT_CERT_AUTH="false"
    #ETCD_PEER_TRUSTED_CA_FILE=""
    #
    #[logging]
    #ETCD_DEBUG="false"
    # examples for -log-package-levels etcdserver=WARNING,security=DEBUG
    #ETCD_LOG_PACKAGE_LEVELS=""
    EOF
    mkdir -p /data/etcd/;chmod 757 -R /data/etcd/;service etcd restart

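The flannel configuration tells the flannel process where the etcd service is located and under which etcd key the network configuration is stored.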

Node1 Kubernetes configuration: set /etc/kubernetes/config as follows:

    cat>/etc/kubernetes/config<<EOF
    # kubernetes system config
    # The following values are used to configure various aspects of all
    # kubernetes services, including
    # kube-apiserver.service
    # kube-controller-manager.service
    # kube-scheduler.service
    # kubelet.service
    # kube-proxy.service
    # logging to stderr means we get it in the systemd journal
    KUBE_LOGTOSTDERR="--logtostderr=true"
    # journal message level, 0 is debug
    KUBE_LOG_LEVEL="--v=0"
    # Should this cluster be allowed to run privileged docker containers
    KUBE_ALLOW_PRIV="--allow-privileged=false"
    # How the controller-manager, scheduler, and proxy find the apiserver
    KUBE_MASTER="--master=http://192.168.0.111:8080"
    EOF

Configure /etc/kubernetes/kubelet as follows:

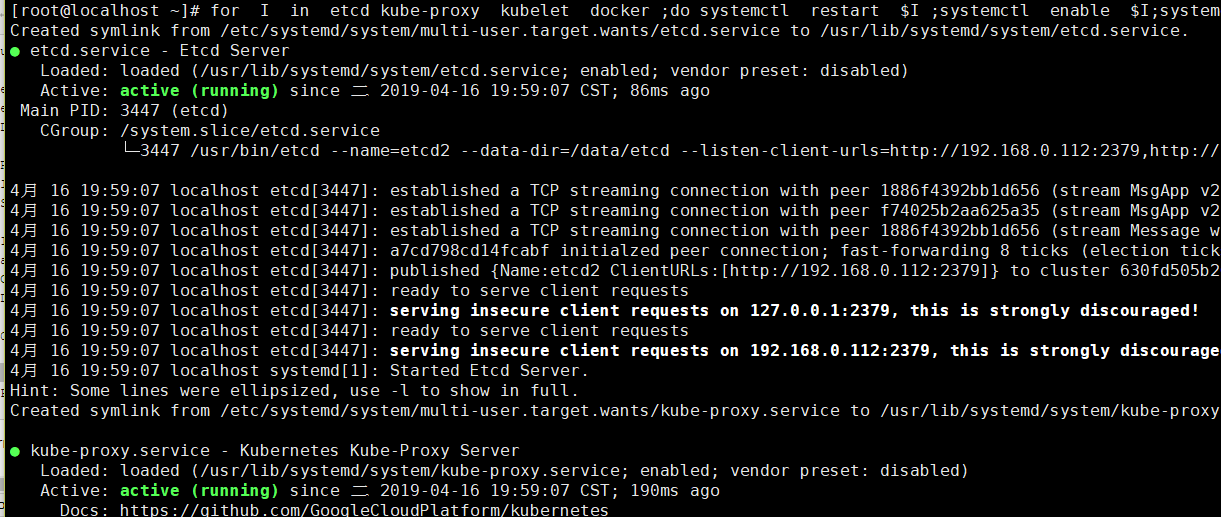

    cat>/etc/kubernetes/kubelet<<EOF
    ###
    # kubernetes kubelet (minion) config
    # The address for the info server to serve on (set to 0.0.0.0 or "" for all interfaces)
    KUBELET_ADDRESS="--address=0.0.0.0"
    # The port for the info server to serve on
    KUBELET_PORT="--port=10250"
    # You may leave this blank to use the actual hostname
    KUBELET_HOSTNAME="--hostname-override=192.168.0.112"
    # location of the api-server
    KUBELET_API_SERVER="--api-servers=http://192.168.0.111:8080"
    # pod infrastructure container
    #KUBELET_POD_INFRA_CONTAINER="--pod-infra-container-image=192.168.0.123:5000/centos68"
    KUBELET_POD_INFRA_CONTAINER="--pod-infra-container-image=registry.access.redhat.com/rhel7/pod-infrastructure:latest"
    # Add your own!
    KUBELET_ARGS=""
    EOF
    for I in etcd kube-proxy kubelet docker ;do systemctl restart $I ;systemctl enable $I;systemctl status $I ;done
    iptables -P FORWARD ACCEPT

The loop above starts etcd, kube-proxy, kubelet and docker on the Kubernetes Node and shows their status; flanneld is started later, in step 5.

4. Install flannel, docker and Kubernetes on the Kubernetes Node2 node

    yum install kubernetes-node etcd docker flannel *rhsm* -y

Configure etcd on Node2. Set /etc/etcd/etcd.conf on Node2 as follows:

    cat>/etc/etcd/etcd.conf<<EOF
    ##########
    # [member]
    ETCD_NAME=etcd3
    ETCD_DATA_DIR="/data/etcd"
    #ETCD_WAL_DIR=""
    #ETCD_SNAPSHOT_COUNT="10000"
    #ETCD_HEARTBEAT_INTERVAL="100"
    #ETCD_ELECTION_TIMEOUT="1000"
    ETCD_LISTEN_PEER_URLS="http://192.168.0.113:2380"
    ETCD_LISTEN_CLIENT_URLS="http://192.168.0.113:2379,http://127.0.0.1:2379"
    ETCD_MAX_SNAPSHOTS="5"
    #ETCD_MAX_WALS="5"
    #ETCD_CORS=""
    #[cluster]
    ETCD_INITIAL_ADVERTISE_PEER_URLS="http://192.168.0.113:2380"
    # if you use different ETCD_NAME (e.g. test), set ETCD_INITIAL_CLUSTER value for this name, i.e. "test=http://..."
    ETCD_INITIAL_CLUSTER="etcd1=http://192.168.0.111:2380,etcd2=http://192.168.0.112:2380,etcd3=http://192.168.0.113:2380"
    #ETCD_INITIAL_CLUSTER_STATE="new"
    #ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
    ETCD_ADVERTISE_CLIENT_URLS="http://192.168.0.113:2379"
    #ETCD_DISCOVERY=""
    #ETCD_DISCOVERY_SRV=""
    #ETCD_DISCOVERY_FALLBACK="proxy"
    #ETCD_DISCOVERY_PROXY=""
    #[proxy]
    #ETCD_PROXY="off"
    #ETCD_PROXY_FAILURE_WAIT="5000"
    #ETCD_PROXY_REFRESH_INTERVAL="30000"
    #ETCD_PROXY_DIAL_TIMEOUT="1000"
    #ETCD_PROXY_WRITE_TIMEOUT="5000"
    #ETCD_PROXY_READ_TIMEOUT="0"
    #
    #[security]
    #ETCD_CERT_FILE=""
    #ETCD_KEY_FILE=""
    #ETCD_CLIENT_CERT_AUTH="false"
    #ETCD_TRUSTED_CA_FILE=""
    #ETCD_PEER_CERT_FILE=""
    #ETCD_PEER_KEY_FILE=""
    #ETCD_PEER_CLIENT_CERT_AUTH="false"
    #ETCD_PEER_TRUSTED_CA_FILE=""
    #
    #[logging]
    #ETCD_DEBUG="false"
    # examples for -log-package-levels etcdserver=WARNING,security=DEBUG
    #ETCD_LOG_PACKAGE_LEVELS=""
    EOF
    mkdir -p /data/etcd/;chmod 757 -R /data/etcd/;service etcd restart
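The three etcd.conf files in this guide differ only in the member name and the node's own IP address. As a minimal sketch of that pattern, the hypothetical helper below (`render_etcd_conf` is not part of the original guide) prints just the active, uncommented settings for one member; the commented-out defaults are omitted for brevity.

```shell
# Hypothetical helper: print the active etcd.conf settings for one member.
# $1 = member name (etcd1, etcd2 or etcd3), $2 = that member's IP address.
render_etcd_conf() {
  cat <<EOF
# [member]
ETCD_NAME=$1
ETCD_DATA_DIR="/data/etcd"
ETCD_LISTEN_PEER_URLS="http://$2:2380"
ETCD_LISTEN_CLIENT_URLS="http://$2:2379,http://127.0.0.1:2379"
ETCD_MAX_SNAPSHOTS="5"
#[cluster]
ETCD_INITIAL_ADVERTISE_PEER_URLS="http://$2:2380"
ETCD_INITIAL_CLUSTER="etcd1=http://192.168.0.111:2380,etcd2=http://192.168.0.112:2380,etcd3=http://192.168.0.113:2380"
ETCD_ADVERTISE_CLIENT_URLS="http://$2:2379"
EOF
}

# Preview the settings for Node2; nothing is written to disk here.
render_etcd_conf etcd3 192.168.0.113
```

To apply the result on a node, the output could be redirected to /etc/etcd/etcd.conf before restarting etcd, in the same way as the heredoc commands in this guide.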

Node2 Kubernetes configuration: set /etc/kubernetes/config as follows:

    cat>/etc/kubernetes/config<<EOF
    # kubernetes system config
    # The following values are used to configure various aspects of all
    # kubernetes services, including
    # kube-apiserver.service
    # kube-controller-manager.service
    # kube-scheduler.service
    # kubelet.service
    # kube-proxy.service
    # logging to stderr means we get it in the systemd journal
    KUBE_LOGTOSTDERR="--logtostderr=true"
    # journal message level, 0 is debug
    KUBE_LOG_LEVEL="--v=0"
    # Should this cluster be allowed to run privileged docker containers
    KUBE_ALLOW_PRIV="--allow-privileged=false"
    # How the controller-manager, scheduler, and proxy find the apiserver
    KUBE_MASTER="--master=http://192.168.0.111:8080"
    EOF

Configure /etc/kubernetes/kubelet as follows:

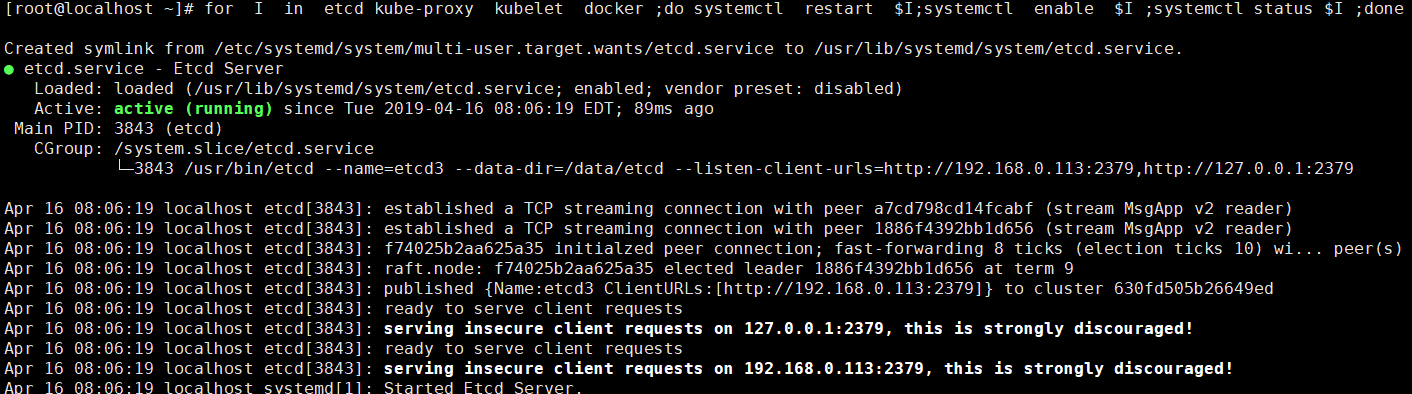

    cat>/etc/kubernetes/kubelet<<EOF
    ###
    # kubernetes kubelet (minion) config
    # The address for the info server to serve on (set to 0.0.0.0 or "" for all interfaces)
    KUBELET_ADDRESS="--address=0.0.0.0"
    # The port for the info server to serve on
    KUBELET_PORT="--port=10250"
    # You may leave this blank to use the actual hostname
    KUBELET_HOSTNAME="--hostname-override=192.168.0.113"
    # location of the api-server
    KUBELET_API_SERVER="--api-servers=http://192.168.0.111:8080"
    # pod infrastructure container
    #KUBELET_POD_INFRA_CONTAINER="--pod-infra-container-image=192.168.0.123:5000/centos68"
    KUBELET_POD_INFRA_CONTAINER="--pod-infra-container-image=registry.access.redhat.com/rhel7/pod-infrastructure:latest"
    # Add your own!
    KUBELET_ARGS=""
    EOF
    for I in etcd kube-proxy kubelet docker ;do systemctl restart $I;systemctl enable $I ;systemctl status $I ;done
    iptables -P FORWARD ACCEPT

At this point, running kubectl get nodes on the Master node shows the two Nodes that have joined the Kubernetes cluster. The Kubernetes cluster environment is now set up.
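As a rough way to confirm that both Nodes registered, the sketch below counts the rows of `kubectl get nodes` whose STATUS column is exactly `Ready`. The helper name `count_ready_nodes` is hypothetical, and the column layout (NAME, STATUS, ...) is assumed from the plain-text output of this Kubernetes generation; a node reported as `Ready,SchedulingDisabled` would not be counted.

```shell
# Hypothetical helper: read `kubectl get nodes` output on stdin and print
# how many nodes have STATUS exactly equal to "Ready" (header row skipped).
count_ready_nodes() {
  awk 'NR > 1 && $2 == "Ready" { n++ } END { print n + 0 }'
}

# Only query the cluster when kubectl is available on this machine.
if command -v kubectl >/dev/null 2>&1; then
  ready="$(kubectl get nodes 2>/dev/null | count_ready_nodes)"
  echo "Ready nodes: ${ready} (this guide expects 2)"
fi
```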

5. Kubernetes flanneld network configuration

Configure flanneld on every server in the Kubernetes cluster (Master and Nodes). Set /etc/sysconfig/flanneld as follows:

    cat>/etc/sysconfig/flanneld<<EOF
    # Flanneld configuration options
    # etcd url location. Point this to the server where etcd runs
    FLANNEL_ETCD_ENDPOINTS="http://192.168.0.111:2379"
    # etcd config key. This is the configuration key that flannel queries
    # For address range assignment
    FLANNEL_ETCD_PREFIX="/atomic.io/network"
    # Any additional options that you want to pass
    #FLANNEL_OPTIONS=""
    EOF
    service flanneld restart

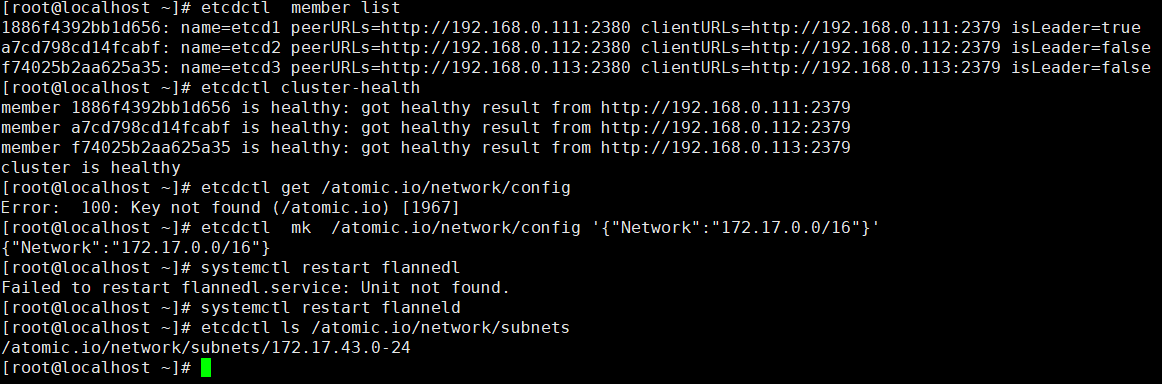

On the Master server, test whether the etcd cluster is healthy, and create the flannel network configuration in etcd:

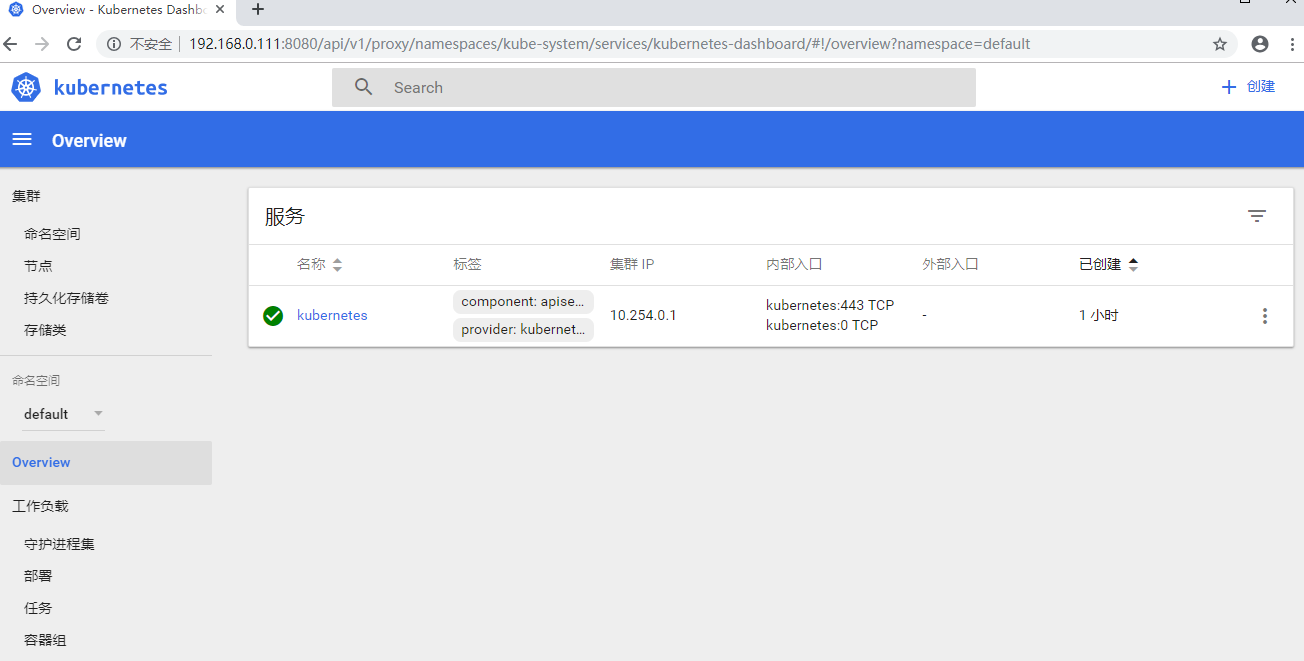
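The commands for this step appear only in the screenshot, so the following is a sketch rather than a transcription. It assumes the etcdctl v2-style commands (`cluster-health`, `member list`, `mk`, `get`) and an example network range of 172.17.0.0/16; the range is an assumption and should not overlap the service range 10.254.0.0/16 or the host network. The key is placed under the FLANNEL_ETCD_PREFIX configured above, /atomic.io/network.

```shell
# Assumption: example pod network range, not taken from the original text.
FLANNEL_NETWORK="172.17.0.0/16"

# Build the JSON document that flanneld reads from etcd.
flannel_network_json() {
  printf '{"Network":"%s"}\n' "$1"
}

# Run on the Master: check cluster health, then store the network
# configuration under /atomic.io/network/config and read it back.
configure_flannel_network() {
  etcdctl cluster-health &&
    etcdctl member list &&
    etcdctl mk /atomic.io/network/config "$(flannel_network_json "$FLANNEL_NETWORK")" &&
    etcdctl get /atomic.io/network/config
}

# Preview the value that would be written; call configure_flannel_network
# on the Master to actually apply it.
flannel_network_json "$FLANNEL_NETWORK"
```

After the key exists, flanneld needs to be restarted on each server so that it picks up a subnet from this range.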

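6. Kubernetes Dashboard UI

The most important job Kubernetes does is the unified management and scheduling of Docker container clusters. The cluster and its nodes are usually operated from the command line, which is inconvenient; a UI that allows visual operation makes management and maintenance easier.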

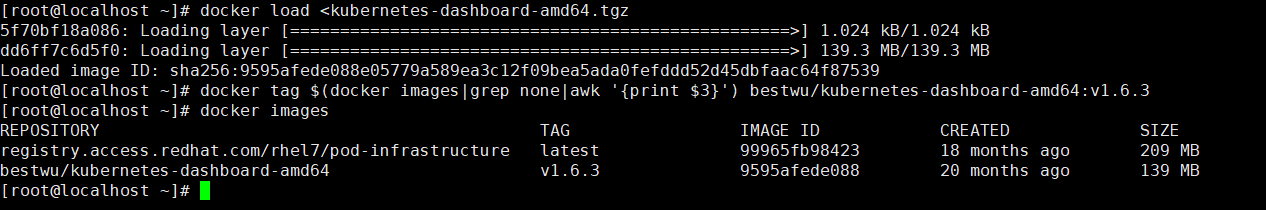

Import the following two images on the Node machines in advance. The complete process for setting up the Kubernetes Dashboard is as follows.

1) Run docker load <pod-infrastructure.tgz, then rename the imported pod image with the following command:

    docker tag $(docker images|grep none|awk '{print $3}') registry.access.redhat.com/rhel7/pod-infrastructure

2) Run docker load <kubernetes-dashboard-amd64.tgz, then rename the imported dashboard image with the following command:

    docker tag $(docker images|grep none|awk '{print $3}') bestwu/kubernetes-dashboard-amd64:v1.6.3
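The `grep none` pipeline works only when exactly one untagged image is present. A slightly more defensive sketch is shown below; the helper names `untagged_image_ids` and `tag_untagged_image` are hypothetical, and the sketch assumes the default `docker images` column order (REPOSITORY, TAG, IMAGE ID, ...).

```shell
# Hypothetical helper: read `docker images` output on stdin and print the
# IMAGE ID of every row whose REPOSITORY column is "<none>".
untagged_image_ids() {
  awk 'NR > 1 && $1 == "<none>" { print $3 }'
}

# Hypothetical helper: tag the untagged image as $1, but refuse to act
# unless exactly one untagged image exists.
tag_untagged_image() {
  ids="$(docker images | untagged_image_ids)"
  count="$(printf '%s\n' "$ids" | grep -c . || true)"
  if [ "$count" -ne 1 ]; then
    echo "expected exactly 1 untagged image, found ${count}" >&2
    return 1
  fi
  docker tag "$ids" "$1"
}
```

For example, after `docker load <kubernetes-dashboard-amd64.tgz`, one could run `tag_untagged_image bestwu/kubernetes-dashboard-amd64:v1.6.3`.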

Then, on the Master, create dashboard-controller.yaml with the following content:

    apiVersion: extensions/v1beta1
    kind: Deployment
    metadata:
      name: kubernetes-dashboard
      namespace: kube-system
      labels:
        k8s-app: kubernetes-dashboard
        kubernetes.io/cluster-service: "true"
    spec:
      selector:
        matchLabels:
          k8s-app: kubernetes-dashboard
      template:
        metadata:
          labels:
            k8s-app: kubernetes-dashboard
          annotations:
            scheduler.alpha.kubernetes.io/critical-pod: ''
            scheduler.alpha.kubernetes.io/tolerations: '[{"key":"CriticalAddonsOnly", "operator":"Exists"}]'
        spec:
          containers:
          - name: kubernetes-dashboard
            image: bestwu/kubernetes-dashboard-amd64:v1.6.3
            resources:
              # keep request = limit to keep this container in guaranteed class
              limits:
                cpu: 100m
                memory: 50Mi
              requests:
                cpu: 100m
                memory: 50Mi
            ports:
            - containerPort: 9090
            args:
            - --apiserver-host=http://192.168.0.111:8080
            livenessProbe:
              httpGet:
                path: /
                port: 9090
              initialDelaySeconds: 30
              timeoutSeconds: 30

Create dashboard-service.yaml with the following content:

    apiVersion: v1
    kind: Service
    metadata:
      name: kubernetes-dashboard
      namespace: kube-system
      labels:
        k8s-app: kubernetes-dashboard
        kubernetes.io/cluster-service: "true"
    spec:
      selector:
        k8s-app: kubernetes-dashboard
      ports:
      - port: 80
        targetPort: 9090

Create the dashboard Deployment and Service:

    kubectl create -f dashboard-controller.yaml
    kubectl create -f dashboard-service.yaml

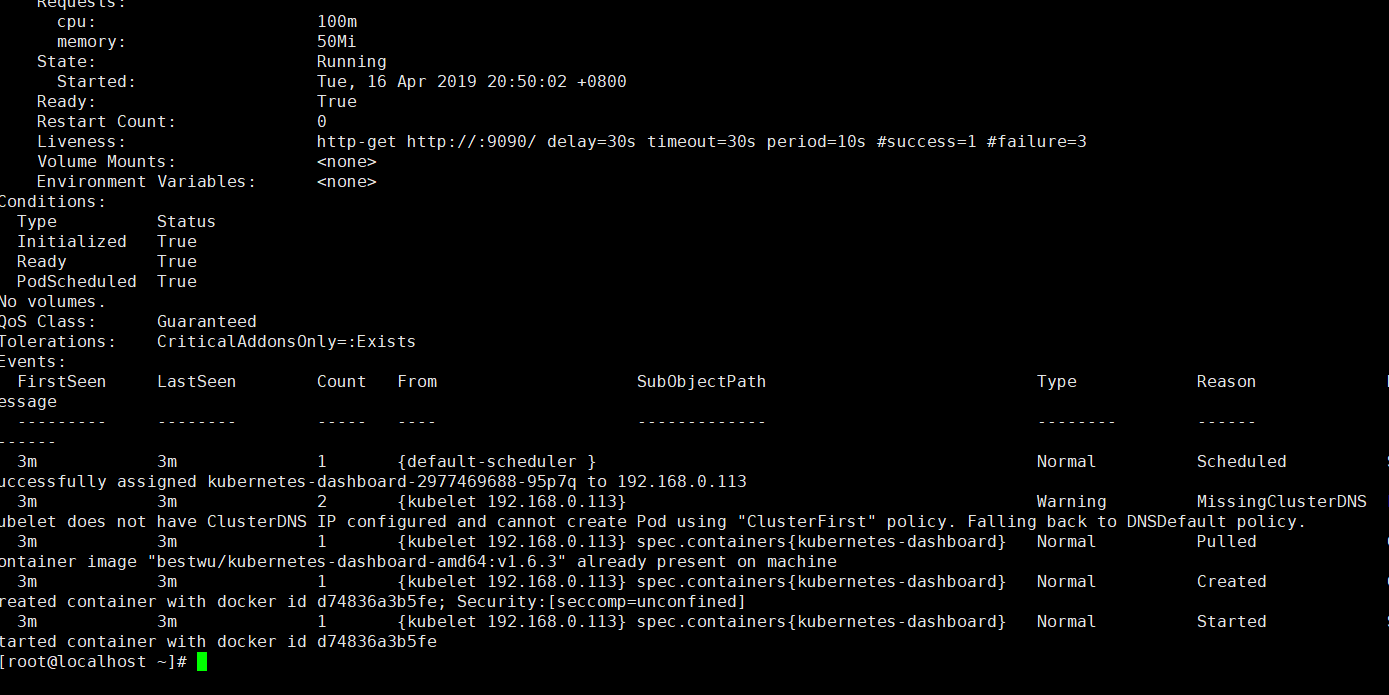

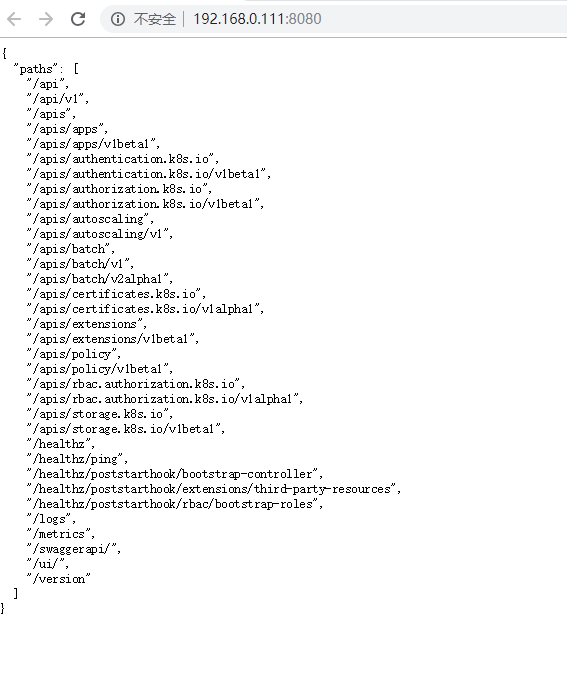

After they are created, view the details of the Pods and the Service:

    kubectl get namespace
    kubectl get deployment --all-namespaces
    kubectl get svc --all-namespaces
    kubectl get pods --all-namespaces
    kubectl get pod -o wide --all-namespaces
    kubectl describe service/kubernetes-dashboard --namespace="kube-system"
    kubectl describe pod/kubernetes-dashboard-468712587-754dc --namespace="kube-system"
    kubectl delete pod/kubernetes-dashboard-468712587-754dc --namespace="kube-system" --grace-period=0 --force
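The pod name kubernetes-dashboard-468712587-754dc is specific to one deployment and will differ on other clusters. One way to avoid hard-coding it, sketched here with a hypothetical helper `dashboard_pod_name`, is to look the pod up by the `k8s-app=kubernetes-dashboard` label set in dashboard-controller.yaml.

```shell
# Hypothetical helper: print the name of the first pod in kube-system that
# carries the label k8s-app=kubernetes-dashboard.
dashboard_pod_name() {
  kubectl get pods --namespace=kube-system \
    --selector=k8s-app=kubernetes-dashboard \
    --output=jsonpath='{.items[0].metadata.name}'
}

# Only query the cluster when kubectl is available on this machine.
if command -v kubectl >/dev/null 2>&1; then
  if pod="$(dashboard_pod_name 2>/dev/null)" && [ -n "$pod" ]; then
    kubectl describe "pod/${pod}" --namespace=kube-system
  else
    echo "dashboard pod not found" >&2
  fi
fi
```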

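If pulling the pod-infrastructure image from registry.access.redhat.com fails because /etc/rhsm/ca/redhat-uep.pem is missing, the certificate can be extracted from the python-rhsm-certificates package:

    wget http://mirror.centos.org/centos/7/os/x86_64/Packages/python-rhsm-certificates-1.19.10-1.el7_4.x86_64.rpm
    rpm2cpio python-rhsm-certificates-1.19.10-1.el7_4.x86_64.rpm | cpio -iv --to-stdout ./etc/rhsm/ca/redhat-uep.pem | tee /etc/rhsm/ca/redhat-uep.pem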

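Note: the rpm2cpio command converts an RPM package into a cpio archive.

The cpio command is a tool for creating and restoring archives; it can copy files into an archive or copy files out of one.

-i restore (extract) files from the archive

-v show the details of what the command is doing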