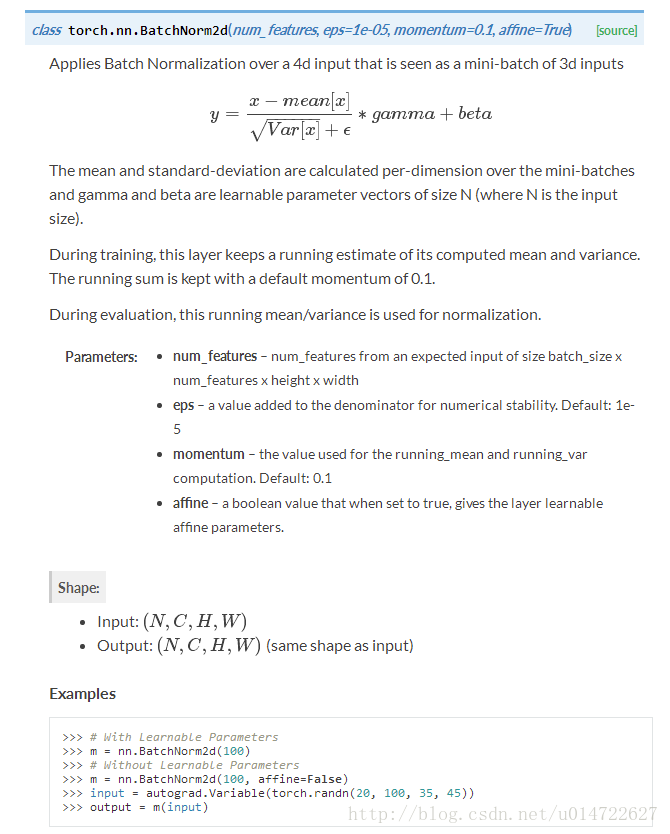

Pytorch-nn.BatchNorm2d()

Pytorch官方文档:

测试代码:

转自:https://blog.csdn.net/tmk_01/article/details/80679549

import torch

import torch.nn as nn

m = nn.BatchNorm2d(2,affine=True) #weight(gamma)和bias(beta)将被使用

input = torch.randn(1,2,3,4)

output = m(input)

print("输入图片:")

print(input)

print("归一化权重(公式中的gamma):")

print(m.weight)

print("归一化偏置(公式中的beta):")

print(m.bias)

print("归一化的输出:")

print(output)

print("输出的尺度:")

print(output.size())

# i = torch.randn(1,1,2)

print("输入的第一个维度:")

print(input[0][0])

firstDimenMean = torch.Tensor.mean(input[0][0])

firstDimenVar= torch.Tensor.var(input[0][0],False) #Bessel's Correction贝塞尔校正不会被使用

print(m.eps)

print("输入的第一个维度平均值:")

print(firstDimenMean)

print("输入的第一个维度方差:")

print(firstDimenVar)

bacthnormone = \

((input[0][0][0][0] - firstDimenMean)/(torch.pow(firstDimenVar+m.eps,0.5) ))\

* m.weight[0] + m.bias[0]

print(bacthnormone)

代码运行结果:

输入图片:

tensor([[[[-1.1622, -0.9170, -0.6798, -0.0270],

[ 0.2687, -1.6046, -0.2142, -0.3561],

[ 0.2908, -0.1012, 1.3594, 1.1316]],

[[ 0.4689, 1.4049, 1.2324, -1.3721],

[-0.1498, -0.3207, 0.5072, -1.2563],

[ 1.5934, -0.8010, 0.1270, 0.5993]]]])

归一化权重(公式中的gamma):

Parameter containing:

tensor([0.8681, 0.7207], requires_grad=True)

归一化偏置(公式中的beta):

Parameter containing:

tensor([0., 0.], requires_grad=True)

归一化的输出:

tensor([[[[-1.0344, -0.7794, -0.5326, 0.1463],

[ 0.4538, -1.4945, -0.0484, -0.1960],

[ 0.4767, 0.0691, 1.5881, 1.3512]],

[[ 0.2279, 0.9400, 0.8088, -1.1729],

[-0.2429, -0.3729, 0.2570, -1.0848],

[ 1.0834, -0.7384, -0.0323, 0.3271]]]],

grad_fn=<ThnnBatchNormBackward>)

输出的尺度:

torch.Size([1, 2, 3, 4])

输入的第一个维度:

tensor([[-1.1622, -0.9170, -0.6798, -0.0270],

[ 0.2687, -1.6046, -0.2142, -0.3561],

[ 0.2908, -0.1012, 1.3594, 1.1316]])

1e-05

输入的第一个维度平均值:

tensor(-0.1676)

输入的第一个维度方差:

tensor(0.6967)

tensor(-1.0344, grad_fn=<ThAddBackward>)

BatchNorm深度理解可参考:

https://zhuanlan.zhihu.com/p/30922689

4.Normalizing activations in a network

5.Fitting Batch Norm into a neural network

6.Why does Batch Norm work?

7. Batch Norm at test time

浙公网安备 33010602011771号

浙公网安备 33010602011771号