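Jenkins Study Notes

1:Jenkins Administration

1:Introduction to and Deployment of Jenkins

Jenkins is an automation server that has been developed for more than 15 years, which makes it a mature CI/CD tool. It makes automated integration and release easy: once a pipeline is set up, no specialist has to step in, and developers can release whenever they need to.

Some typical use cases:

1:Integrate SVN/Git clients to download and check out source code

2:Integrate build tools such as Maven/Ant/Gradle/npm to compile, package and unit-test the source

3:Integrate SonarQube for code-quality checks (code smells, complexity, new bugs, etc.)

4:Integrate SaltStack/Ansible for automated deployment and release

5:Integrate JMeter/Soar/Kubernetes/......

6:Run custom plugins or scripts with parameters passed in by Jenkins

7:In short, Jenkins is flexible and has a rich plugin ecosystem, so most day-to-day operations work can be automated with it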

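# Deploying Jenkins

1:Install the JDK (Jenkins 2.361.1 LTS and later require Java 11 or 17; Java 8 only works with older releases, so Java 11 is used here)

[root@cdk-server ~]# yum install -y java-11-openjdk java-11-openjdk-devel.x86_64

2:Install Jenkins

[root@cdk-server ~]# yum install -y https://mirror.tuna.tsinghua.edu.cn/jenkins/redhat-stable/jenkins-2.361.4-1.1.noarch.rpm

3:Summary of installation methods

---:From the WAR file (needs a Java servlet container such as Tomcat, or simply run java -jar jenkins.war)

---:With Docker (the quickest and most convenient way: a single docker command starts a Jenkins container)

---:With the package manager or installer of Linux, macOS or Windows

4:Start Jenkins (because it was installed with yum, the package already ships a systemd unit)

# Under the hood it is still started with java -jar

# Older packages read their startup settings from /etc/sysconfig/jenkins; packages from 2.332.1 LTS onward use the systemd unit instead, so overrides go through `systemctl edit jenkins`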

[root@cdk-server ~]# systemctl enable jenkins.service --now

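5:Change the configuration (plugin update mirror)

# The plugin update data lives in /var/lib/jenkins/updates/default.json (the file appears once Jenkins has started); point its download URLs at a mirror in China

[root@cdk-server ~]# cd /var/lib/jenkins/updates

[root@cdk-server updates]# cp default.json default.json-bak

[root@cdk-server updates]# sed -i 's#https://updates.jenkins.io/download#https://mirrors.tuna.tsinghua.edu.cn/jenkins#g' default.json

# Also replace the connectivity-check URL, since google.com is not reachable from mainland China

[root@cdk-server updates]# sed -i 's#http://www.google.com#https://www.baidu.com#g' default.json

# Restart Jenkins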

[root@cdk-server updates]# systemctl restart jenkins.service

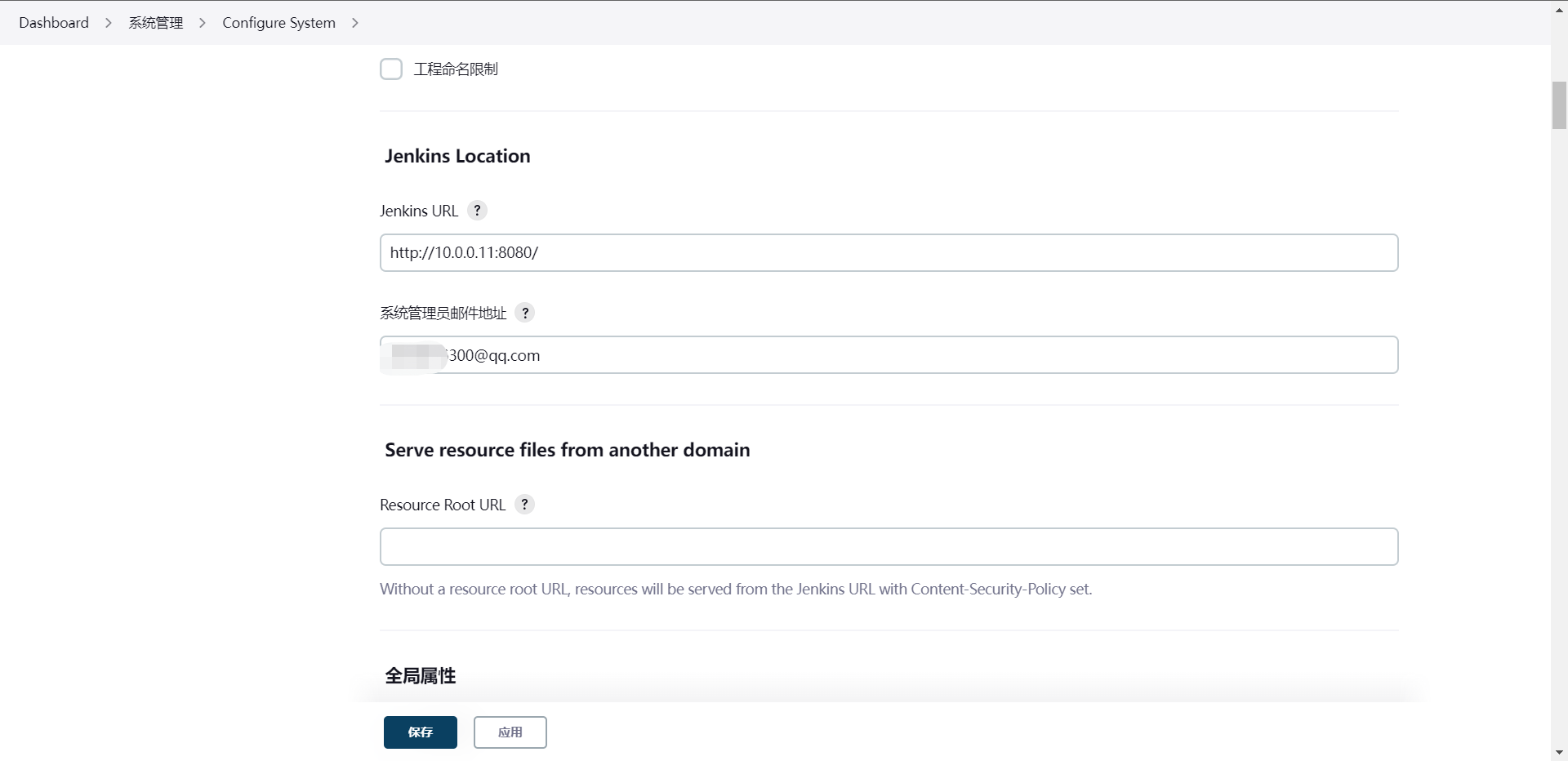

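6:Access Jenkins (on the public internet this may involve a reverse proxy, load balancer and so on; this is a local setup, so I access it directly by IP)

http://10.0.0.11:8080

The page tells us where the initial admin password is stored, so let's read it:

[root@cdk-server updates]# cat /var/lib/jenkins/secrets/initialAdminPassword

910355f3a0474f3abdc313c00a93570a

With this password we can now initialize Jenkins.

7:Initialize Jenkins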

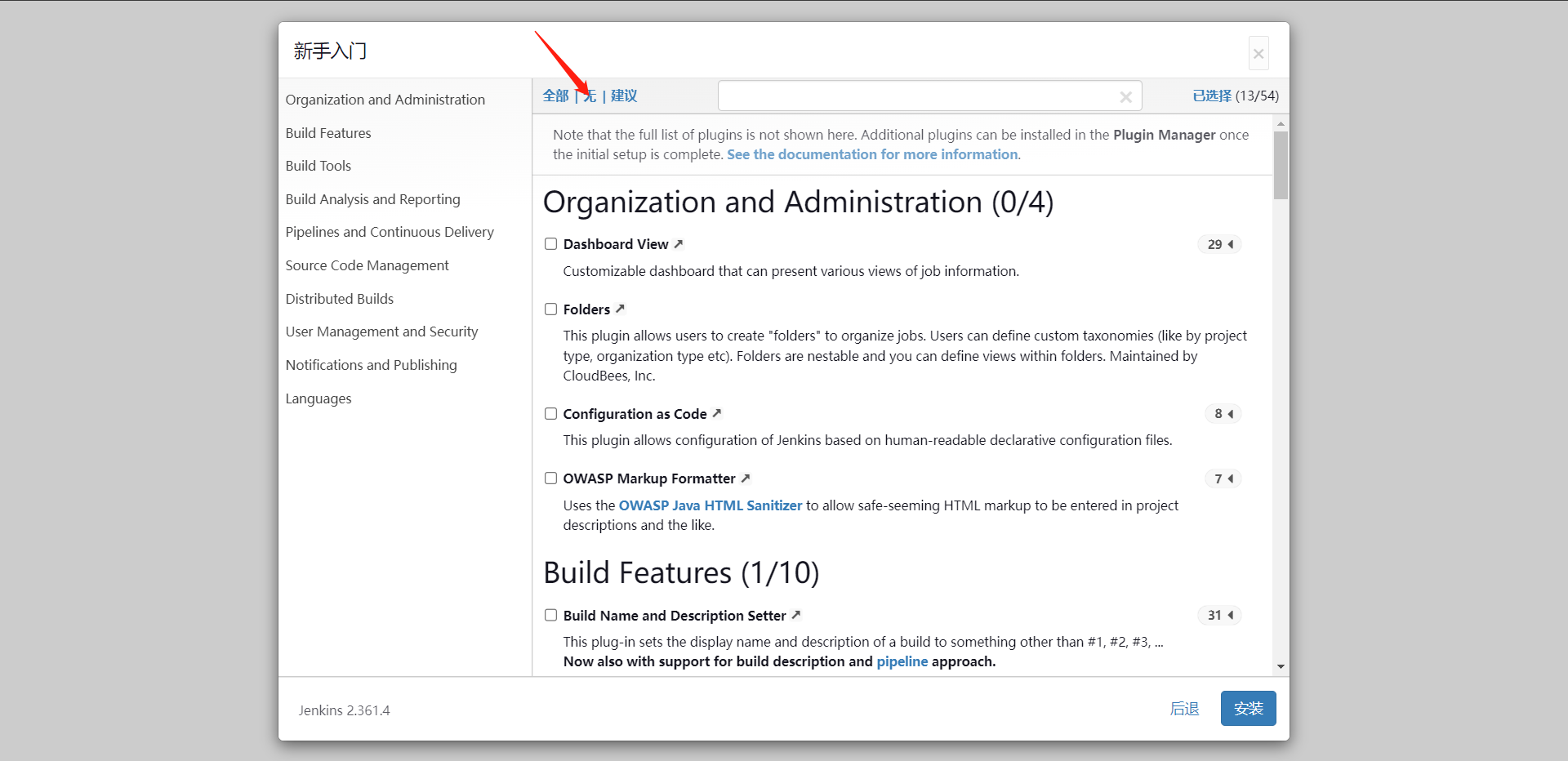

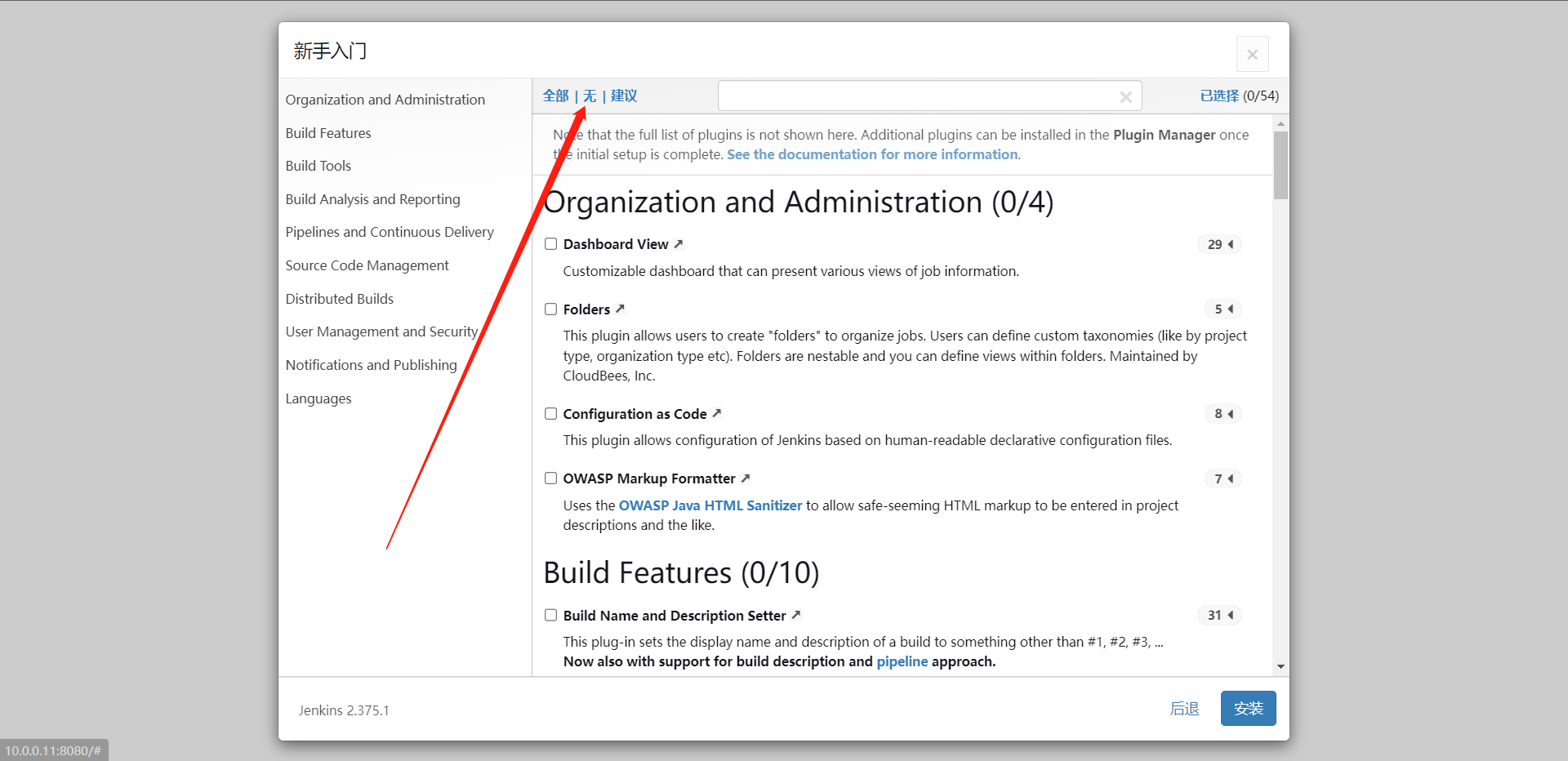

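Here choose the `Select plugins to install` option,

then click `None` so that no plugins are installed (installing them takes a long time), and click Install.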

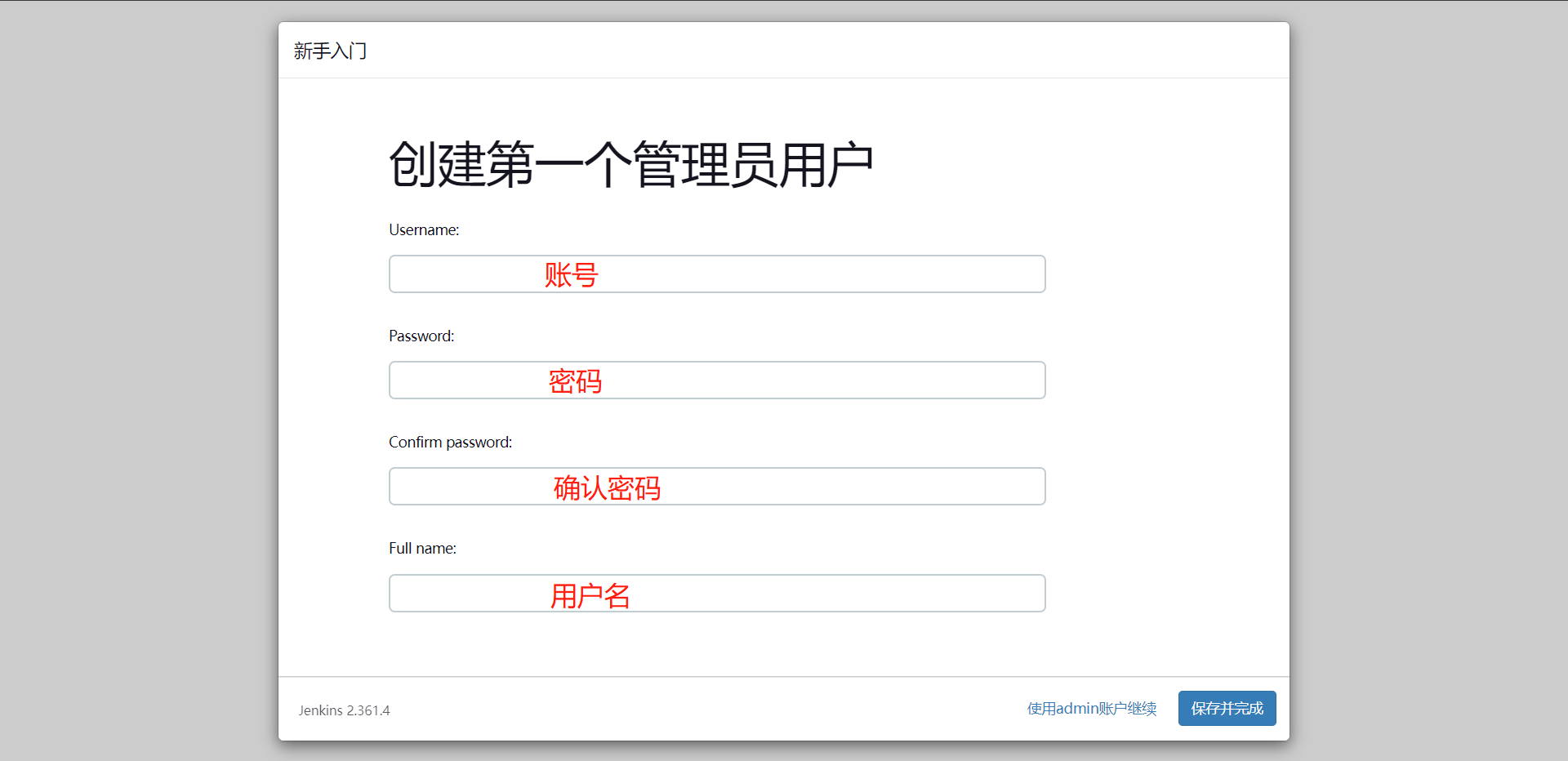

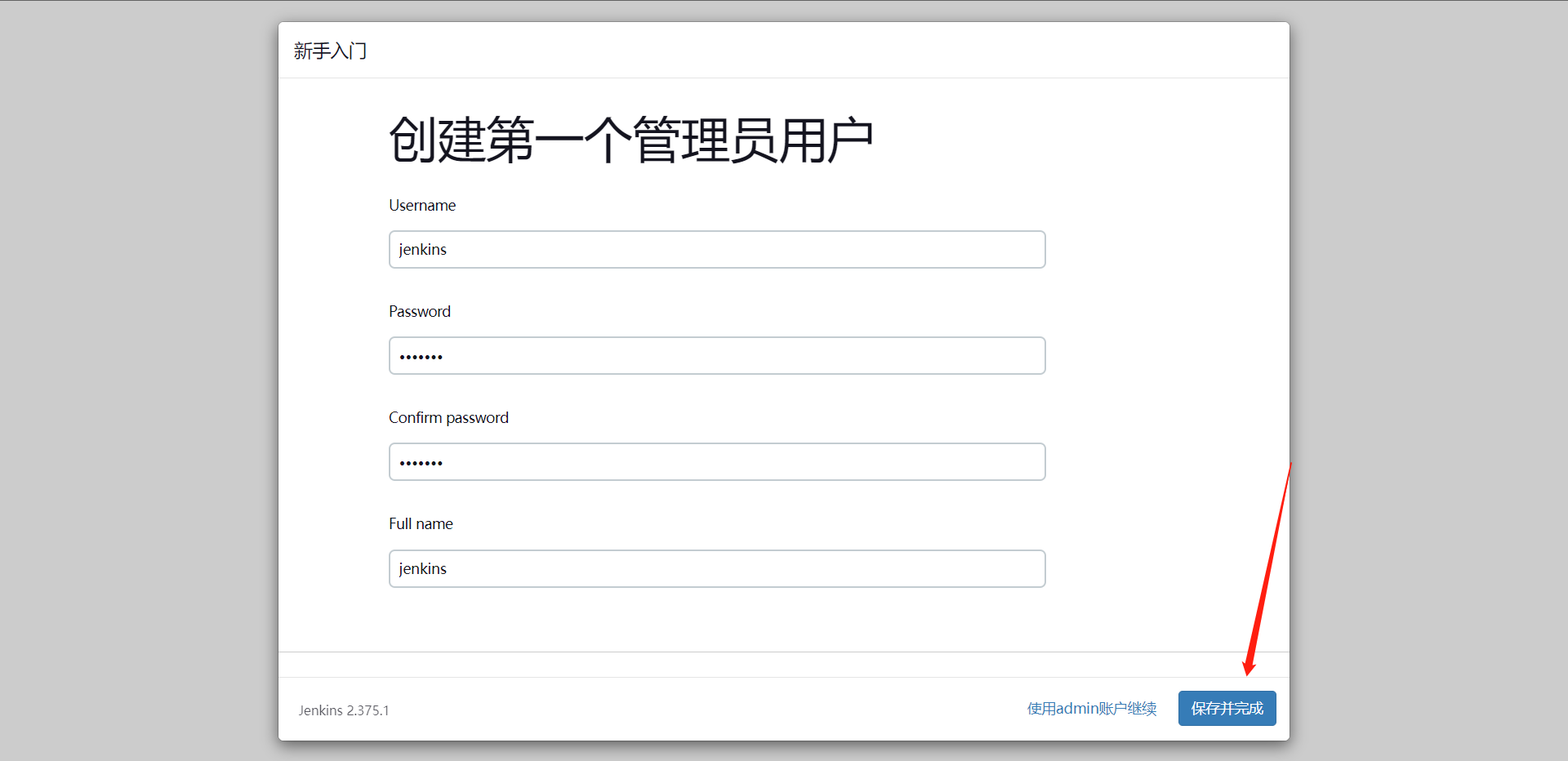

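After filling in the admin user details, click Save and Continue.

This page sets the Jenkins URL (if you access Jenkins through a domain name, the domain is shown here); click Save and Finish.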

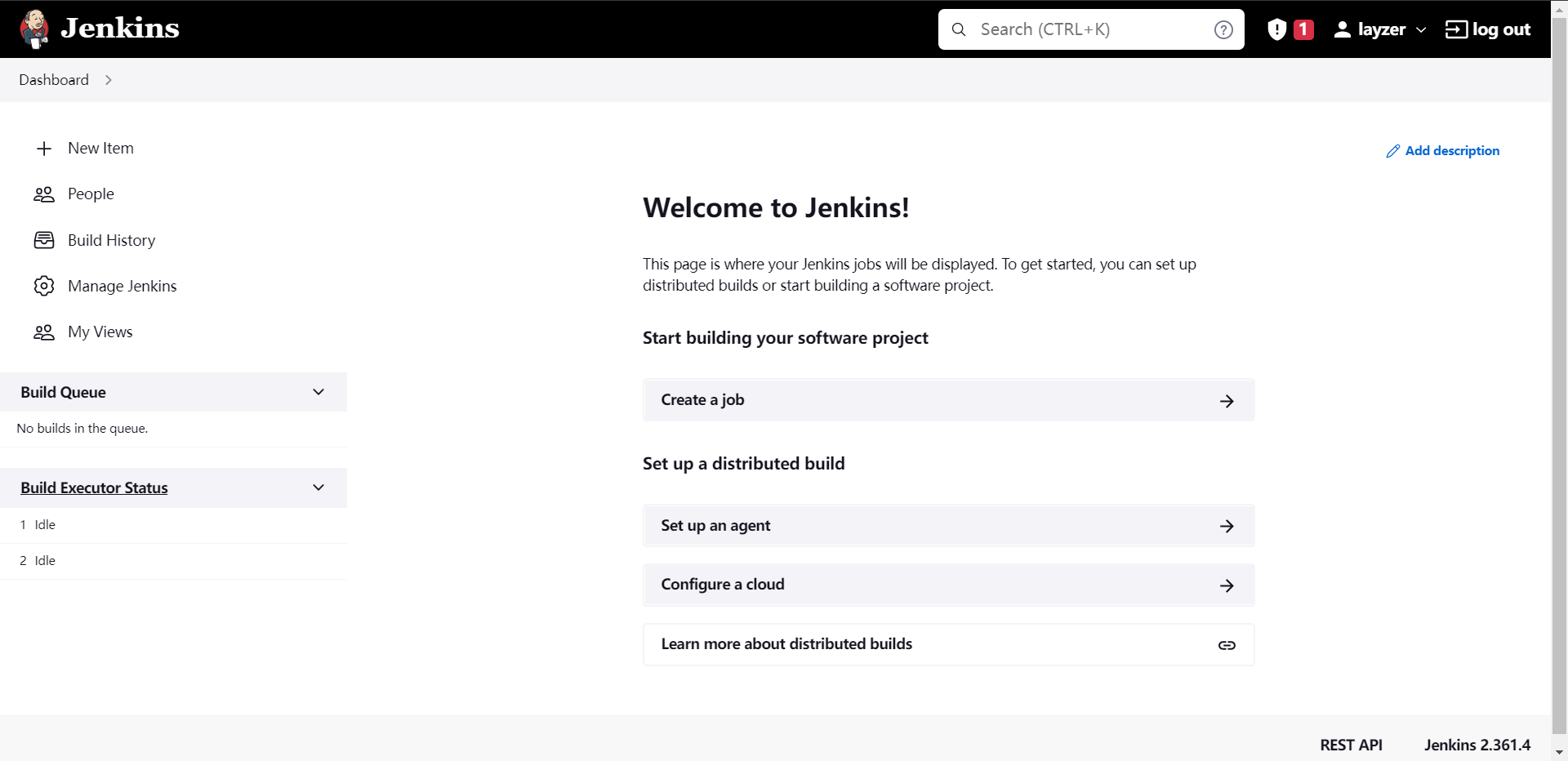

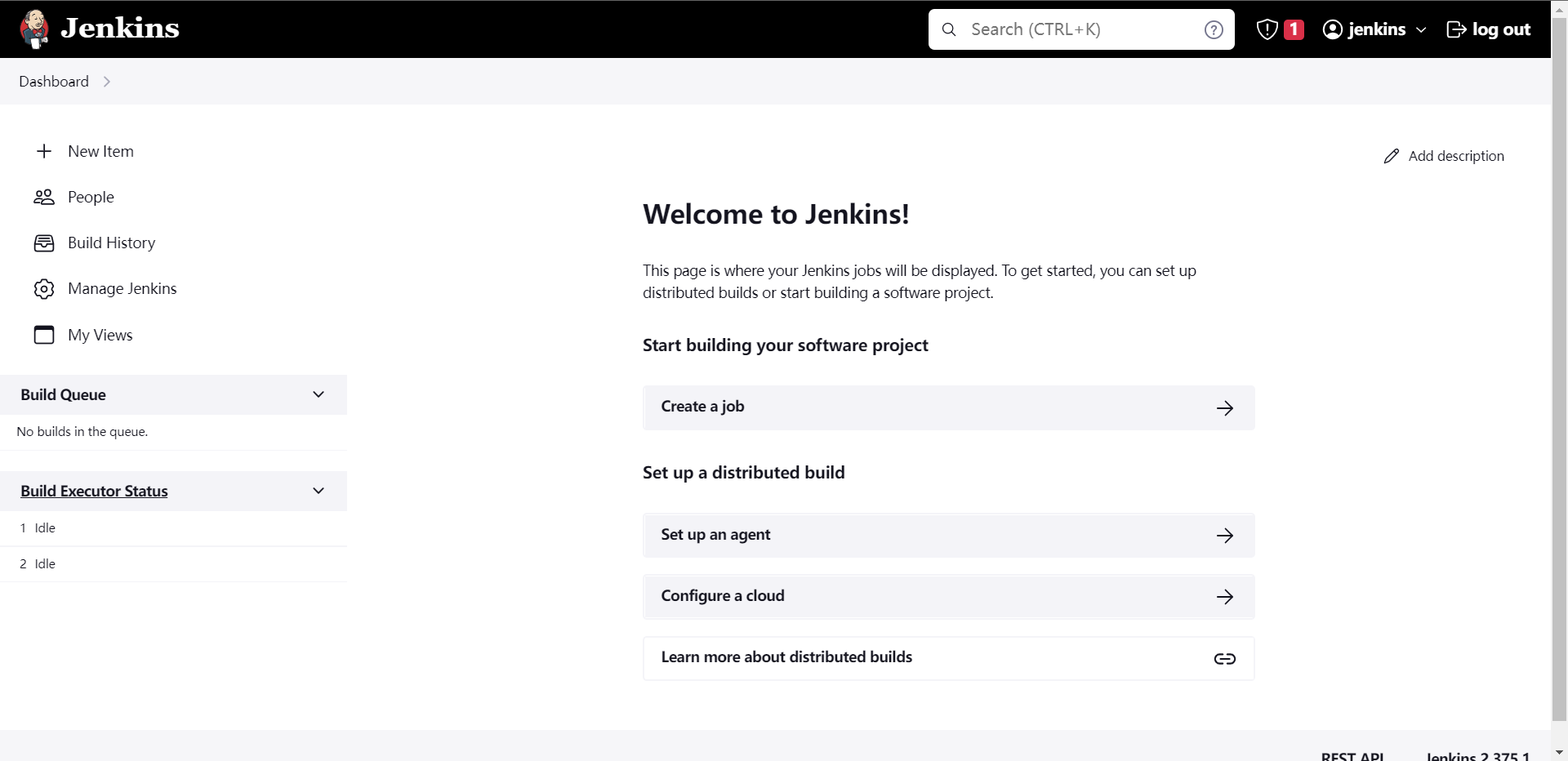

Jenkins is now initialized; click `Start using Jenkins`.

This is the Jenkins dashboard.

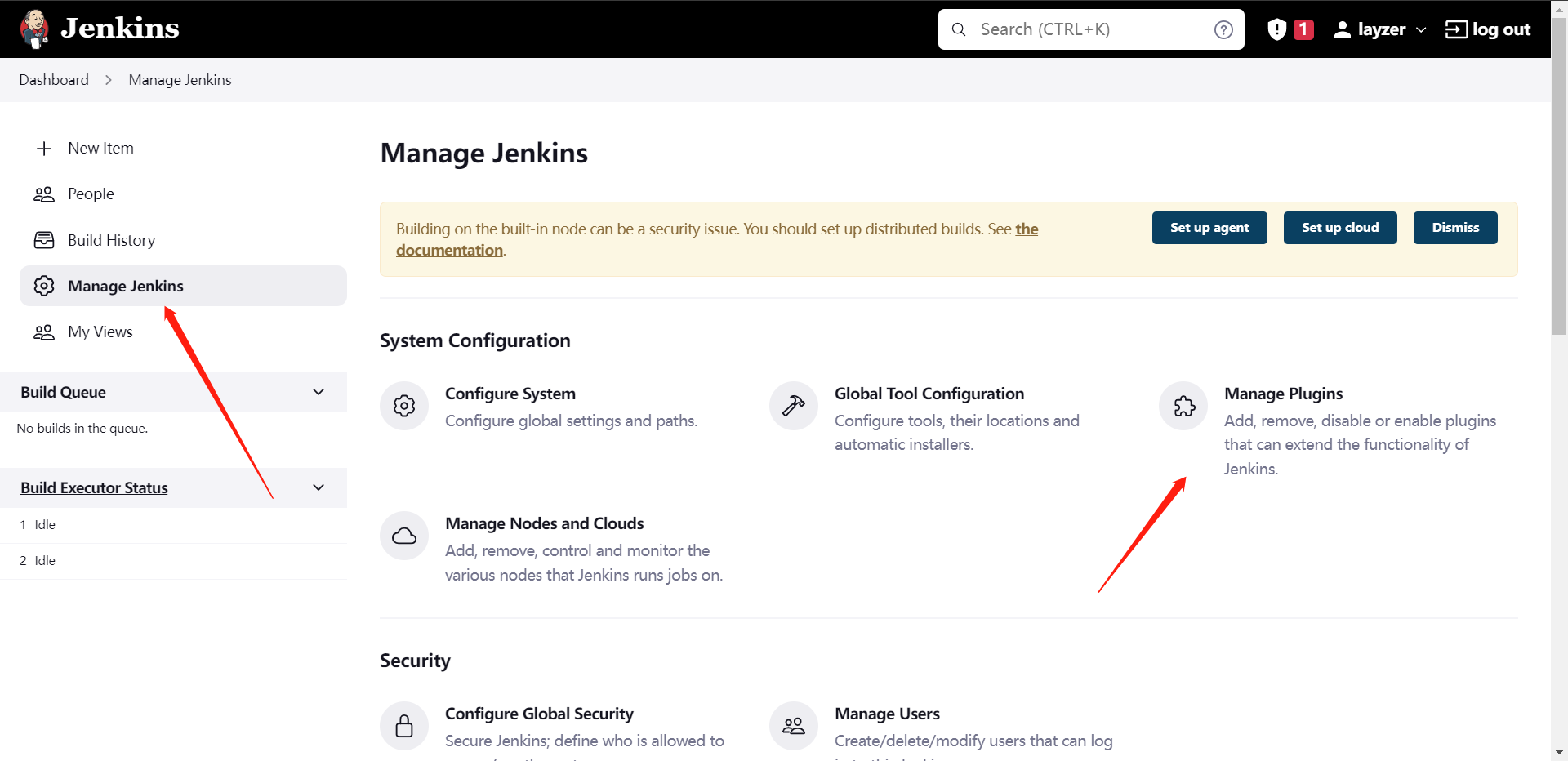

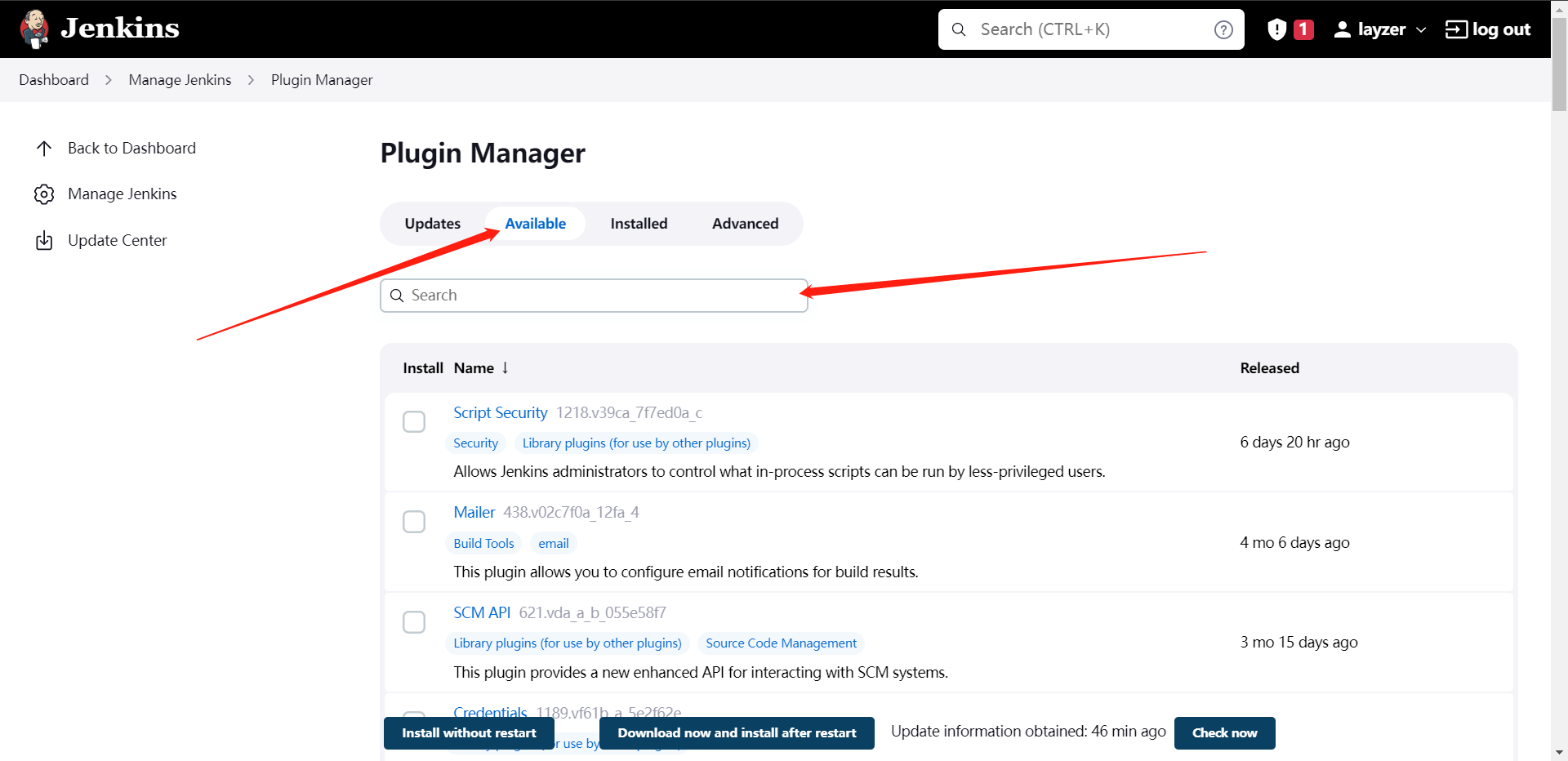

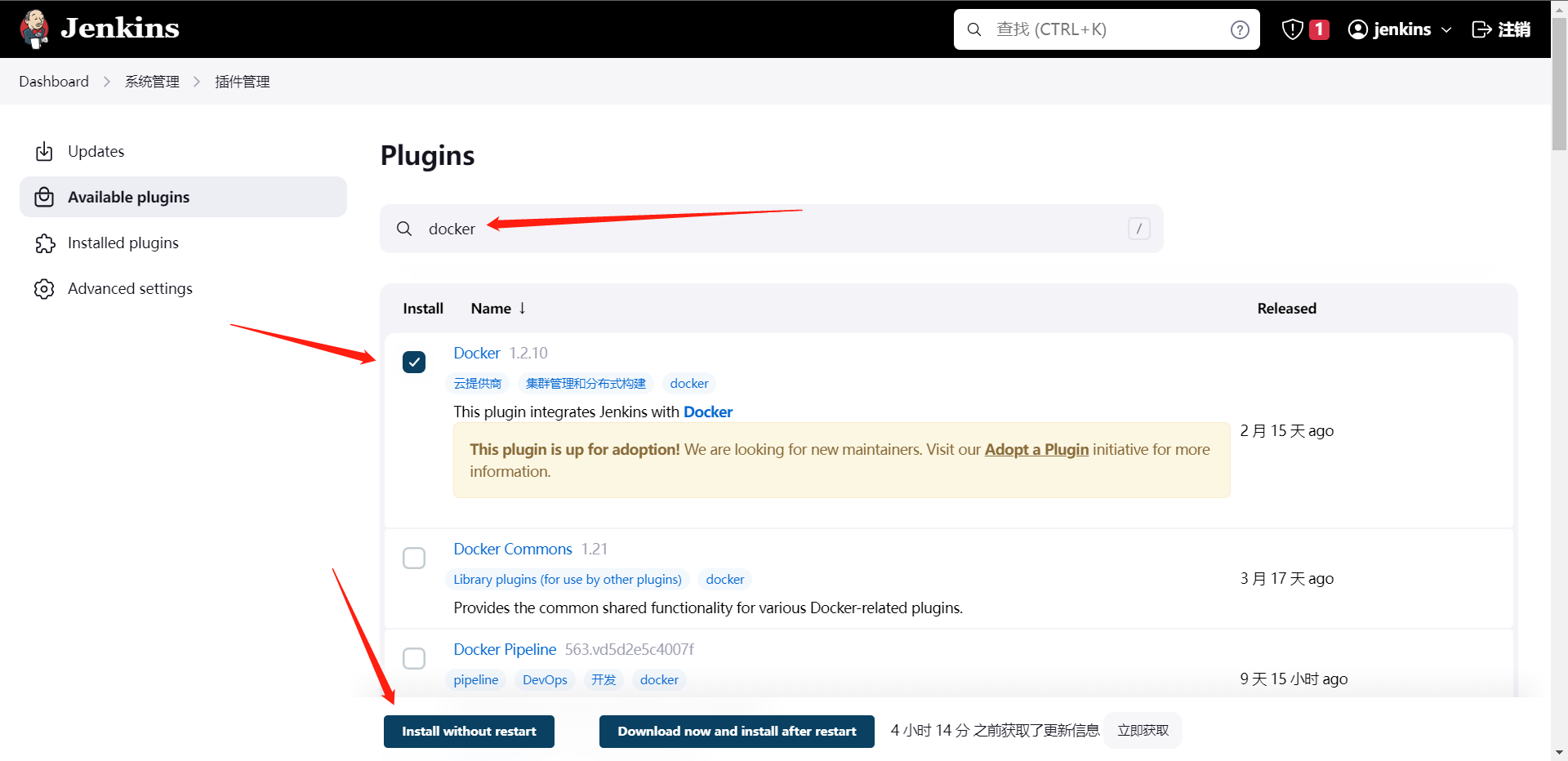

8:Install the required plugins

1:Pipeline

2:Git

3:Blue Ocean

4:Git Parameter

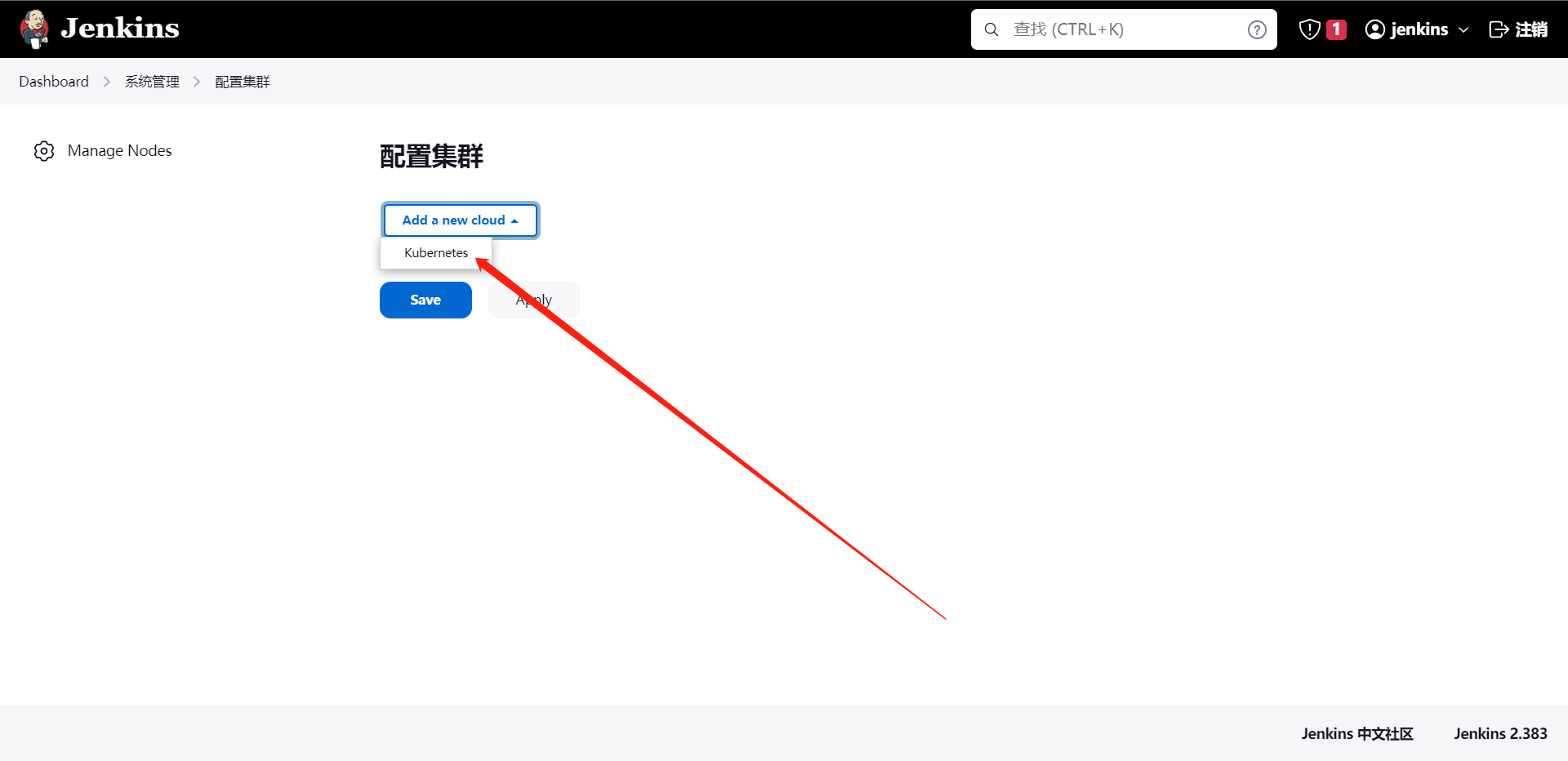

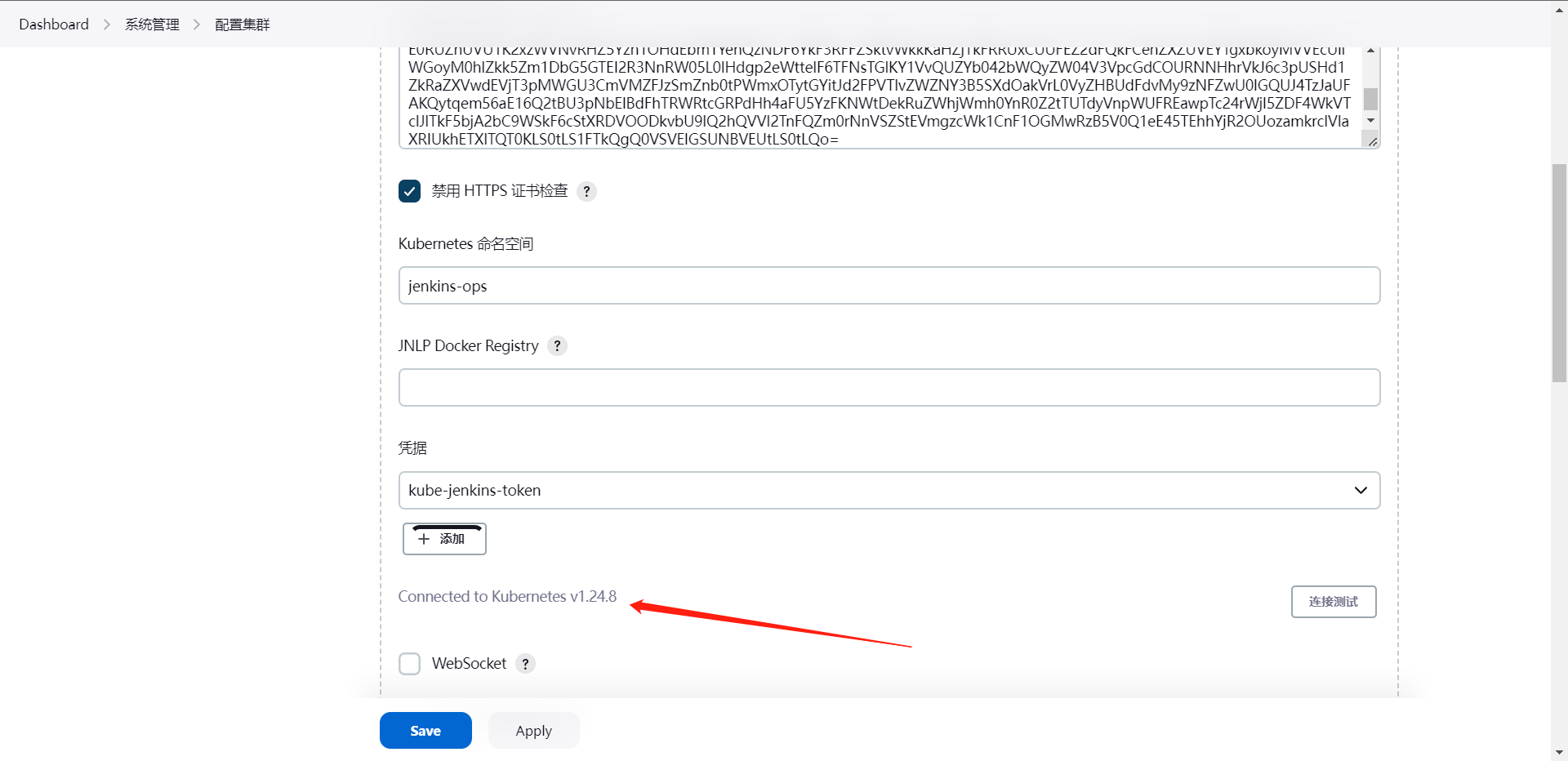

5:kubernetes

6:Config File Provider

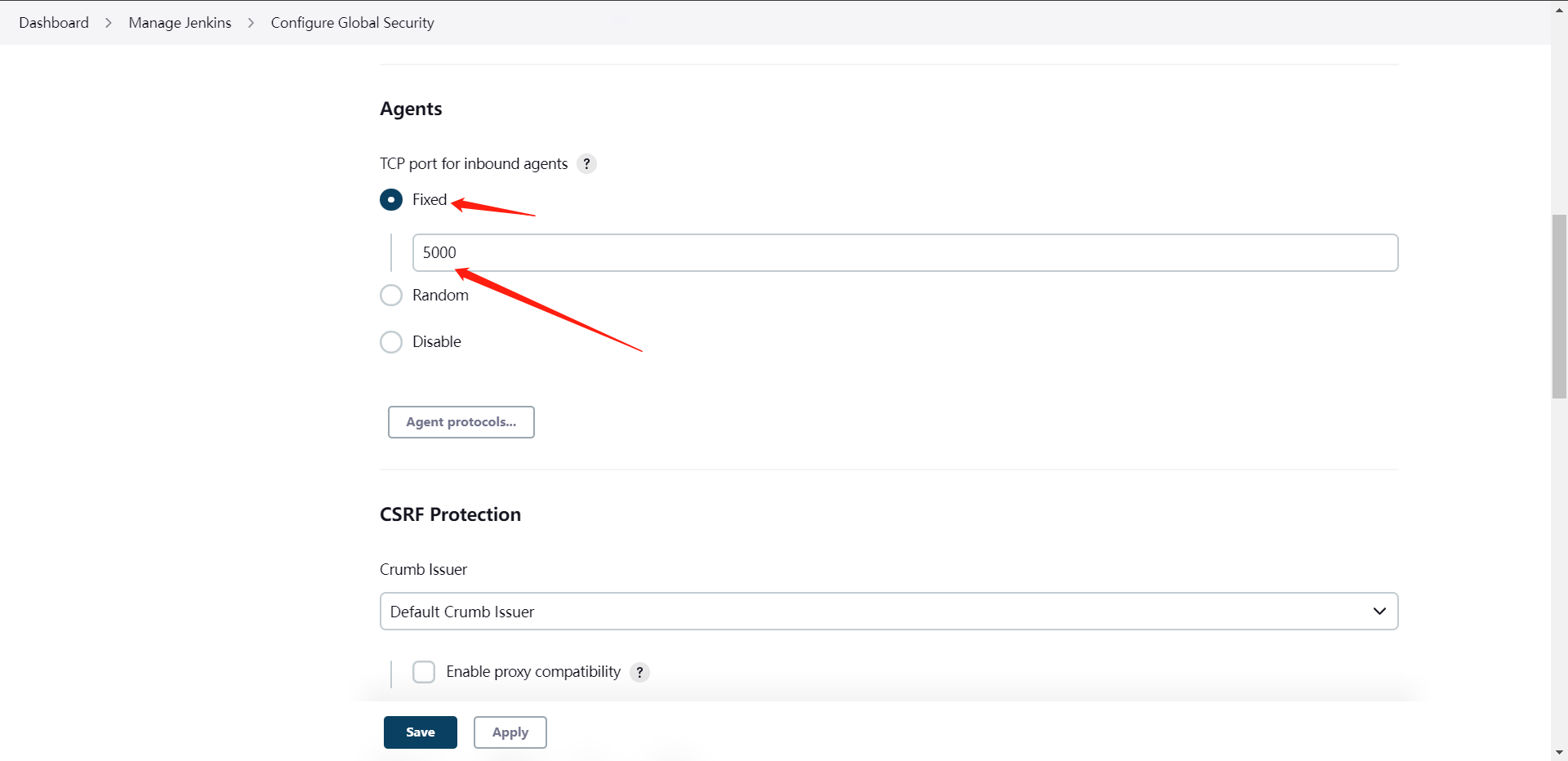

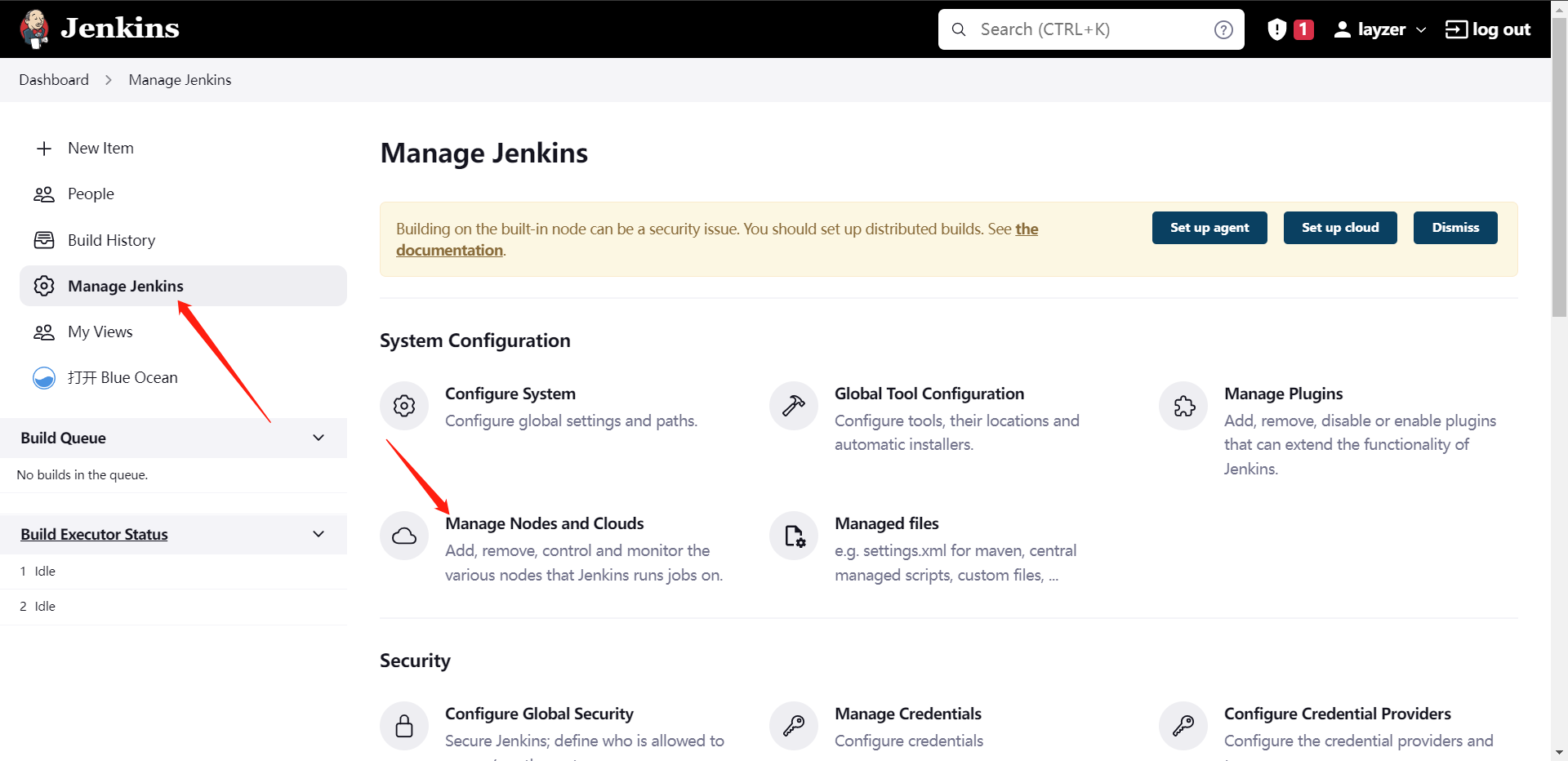

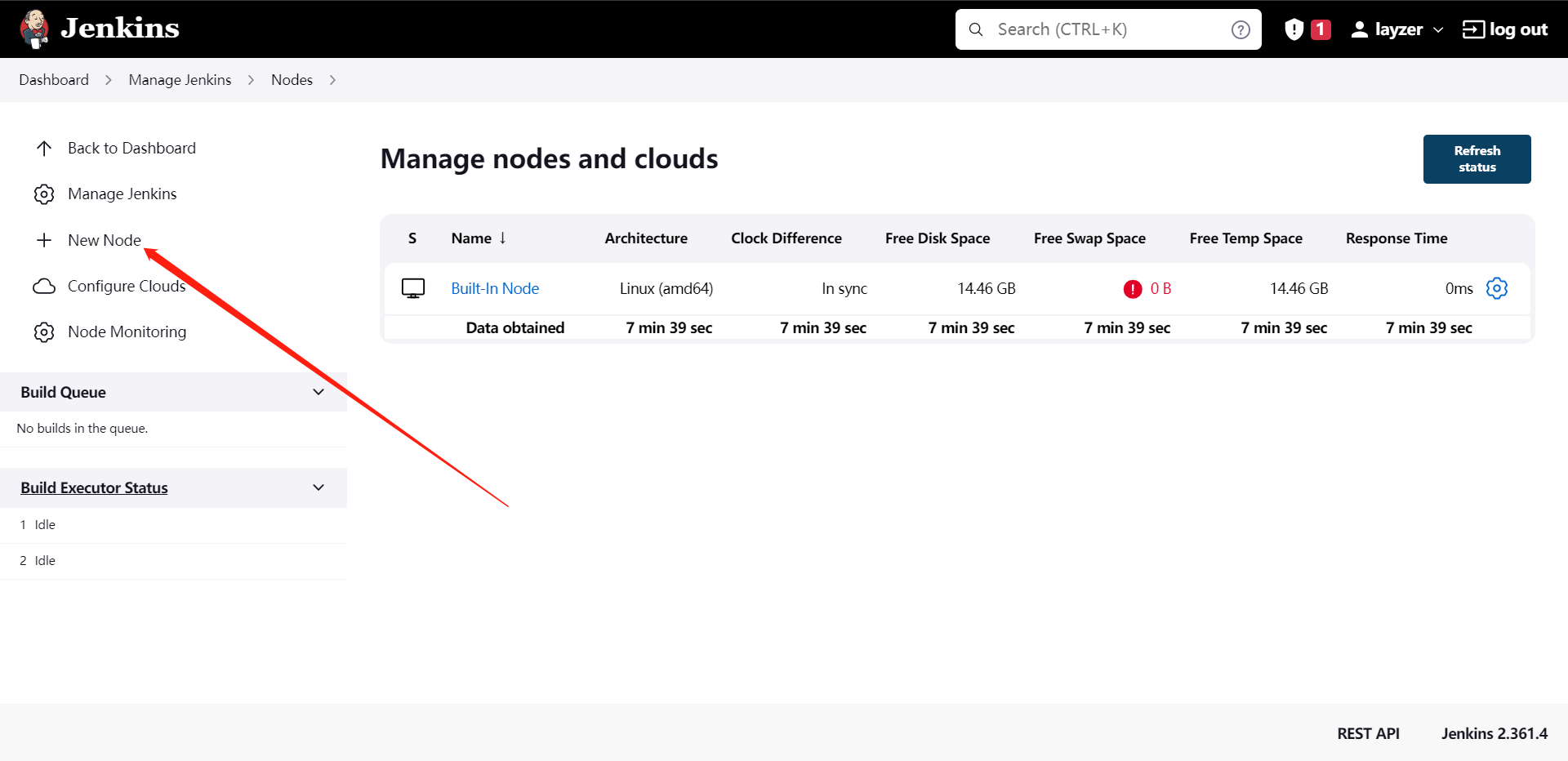

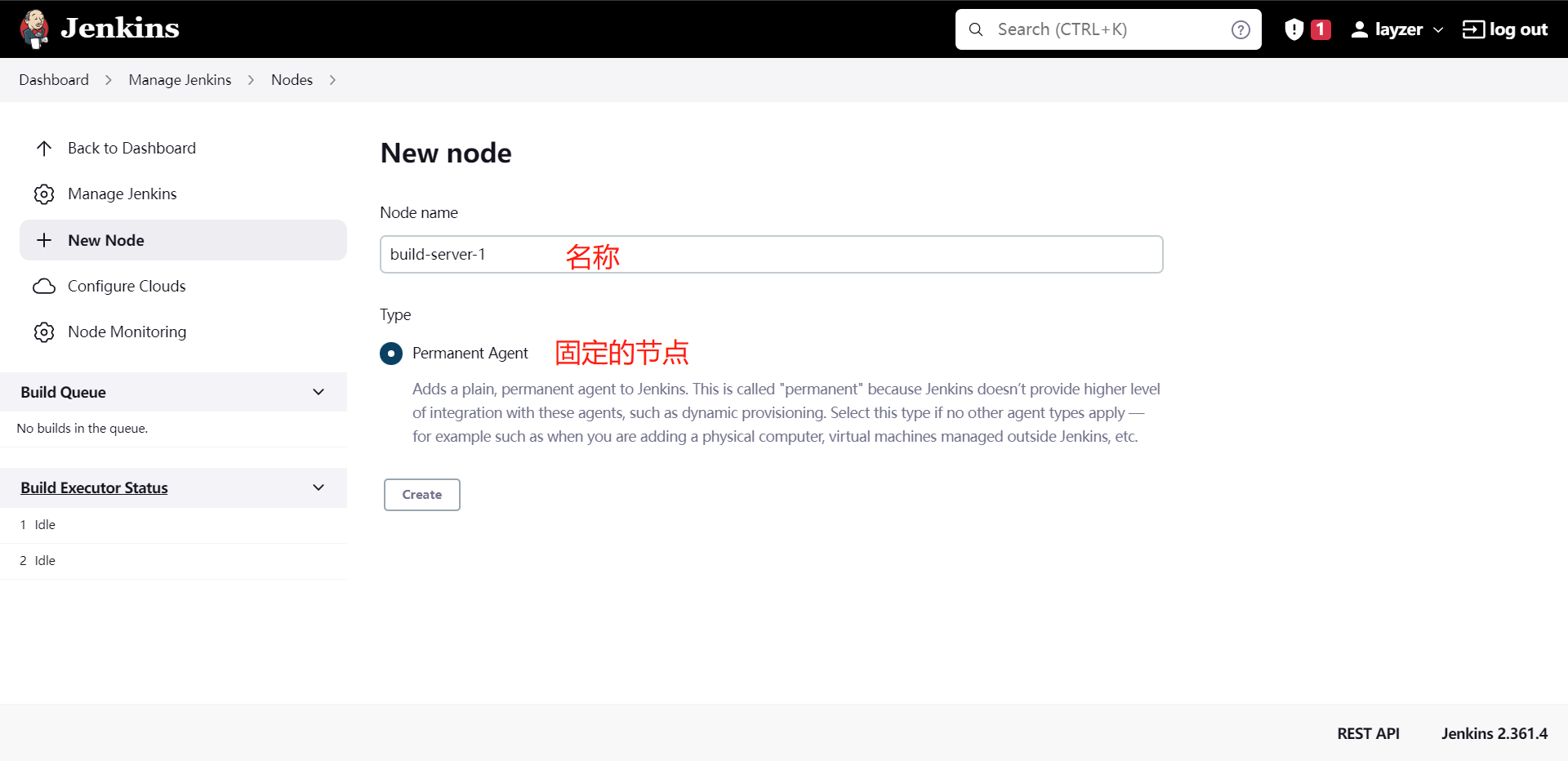

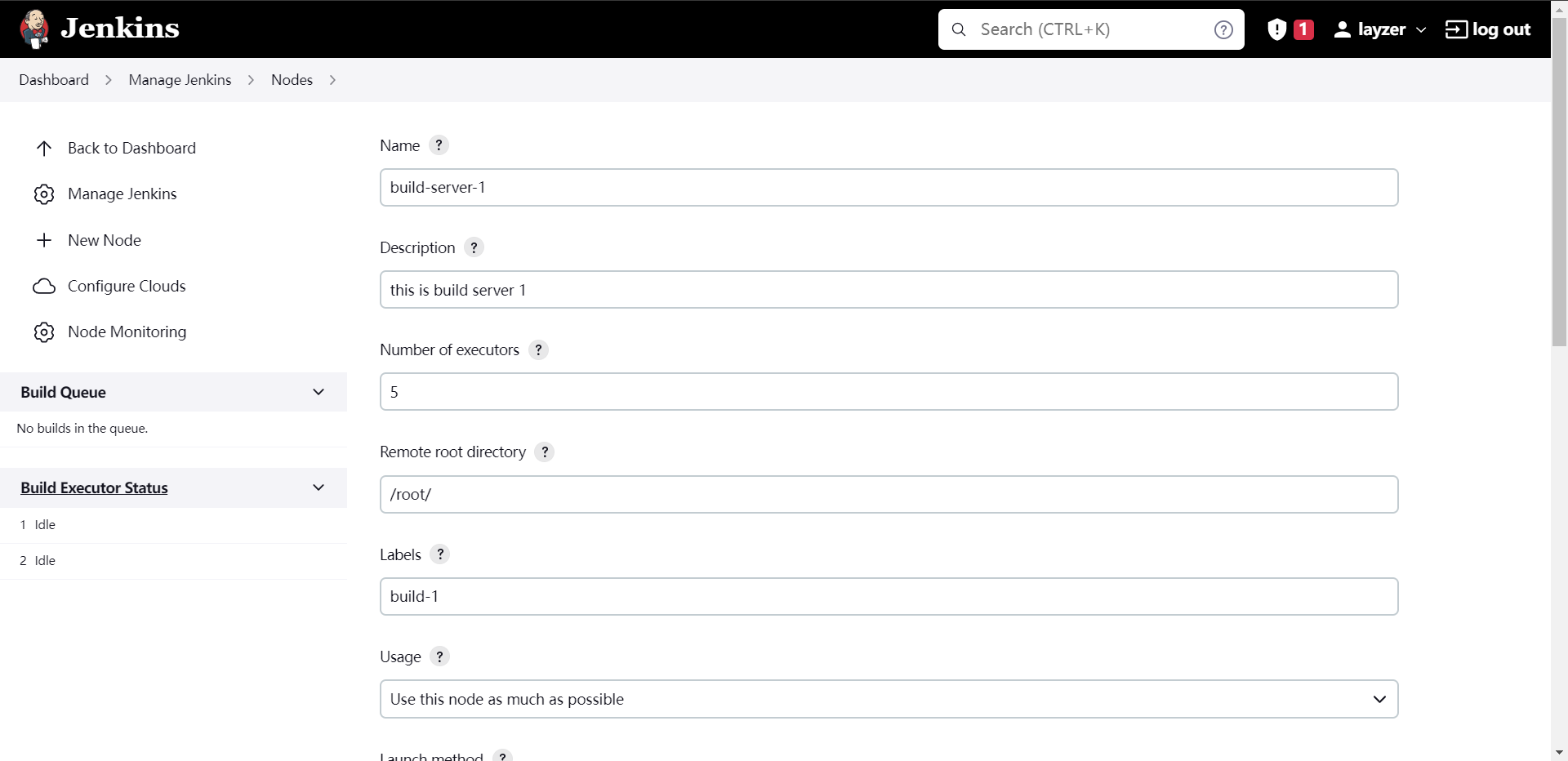

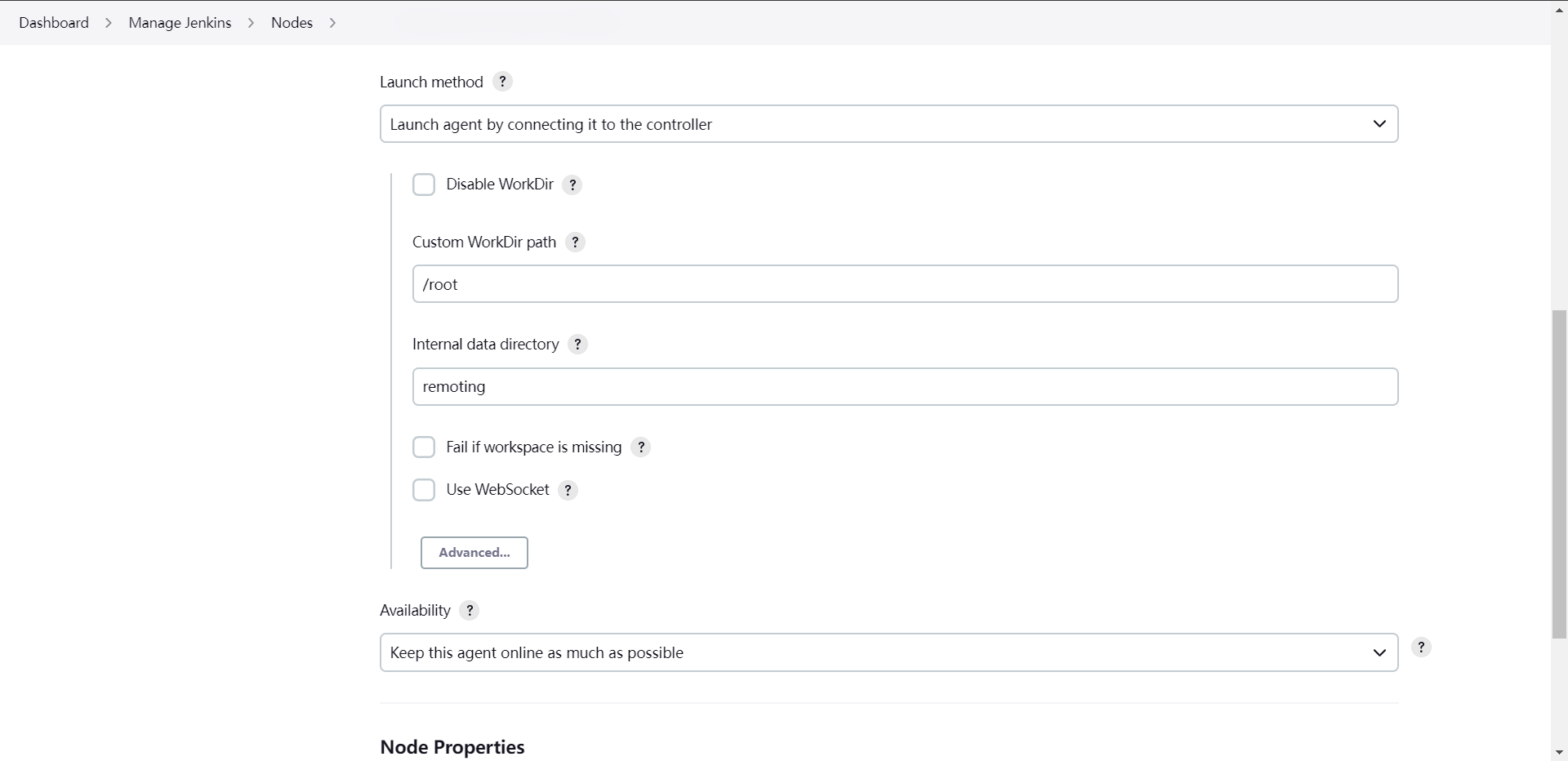

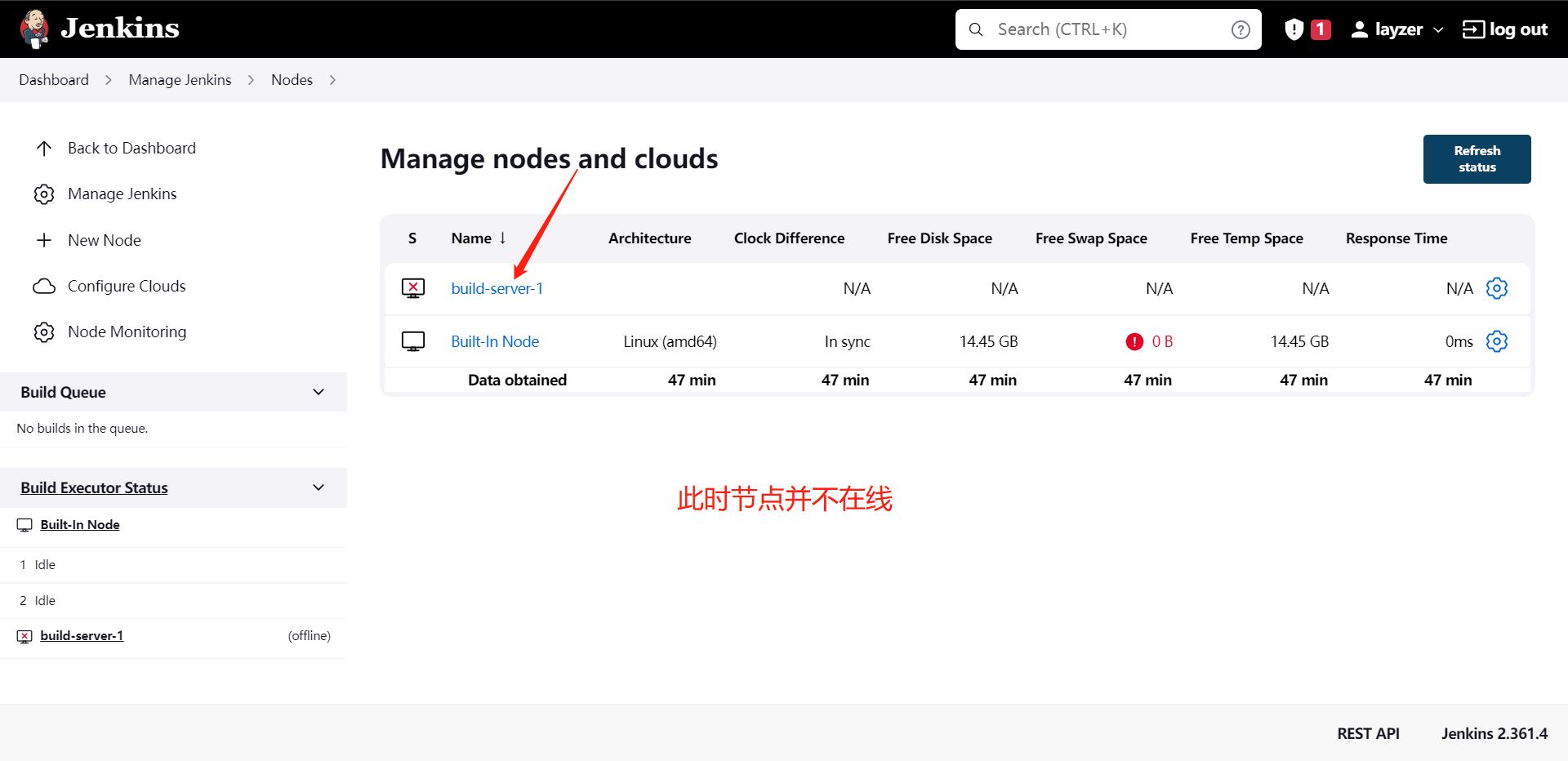

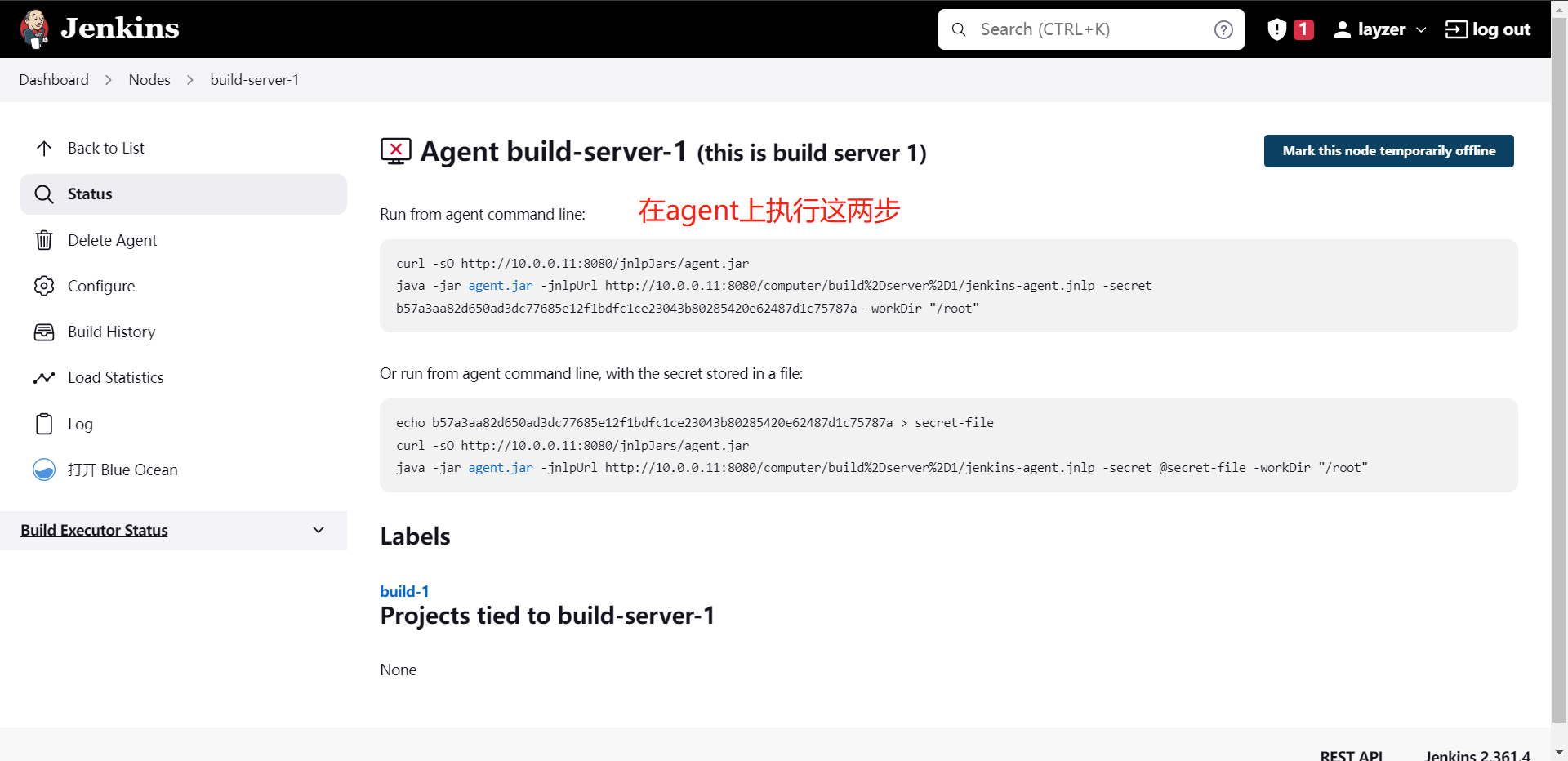

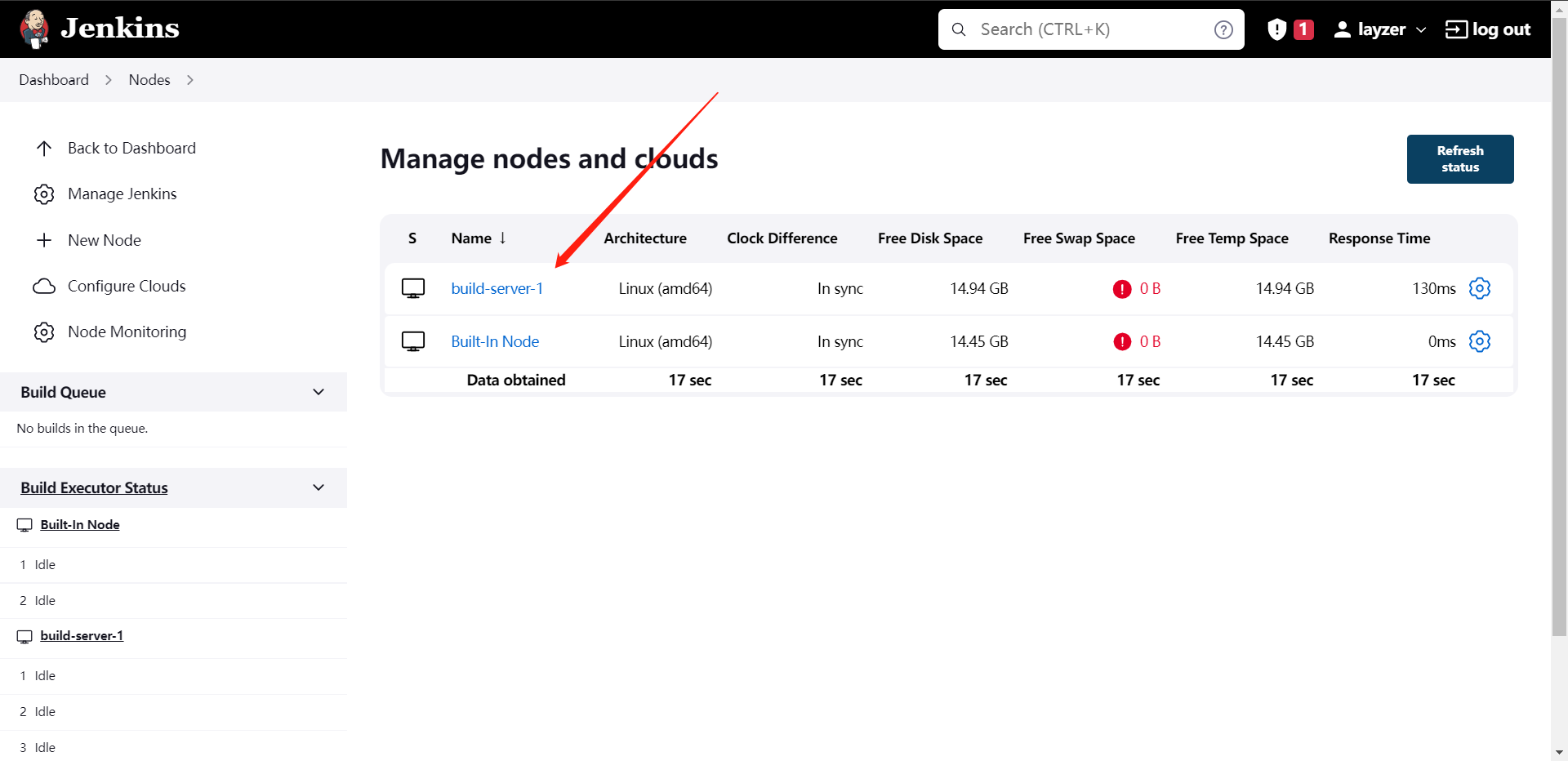

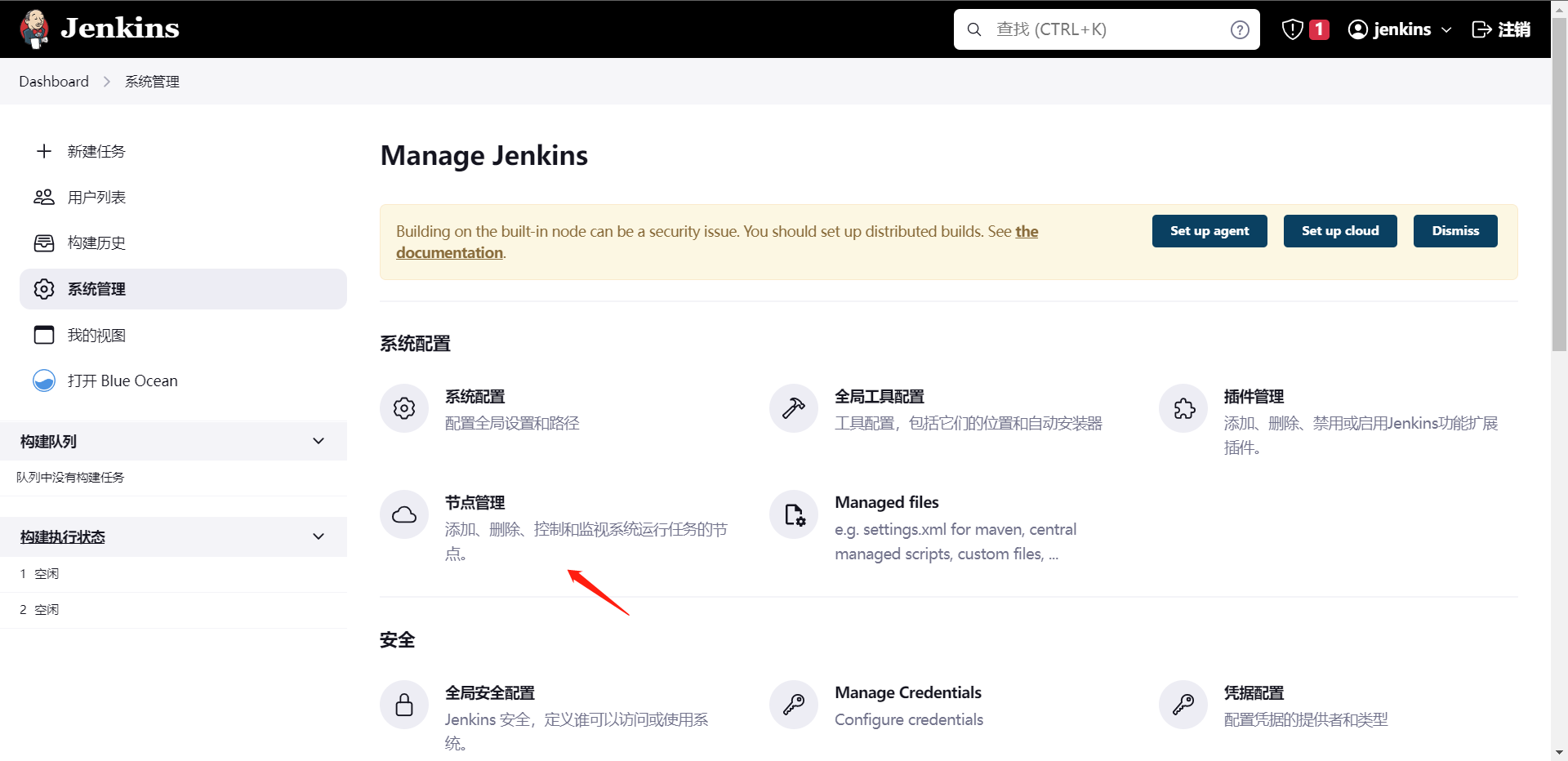

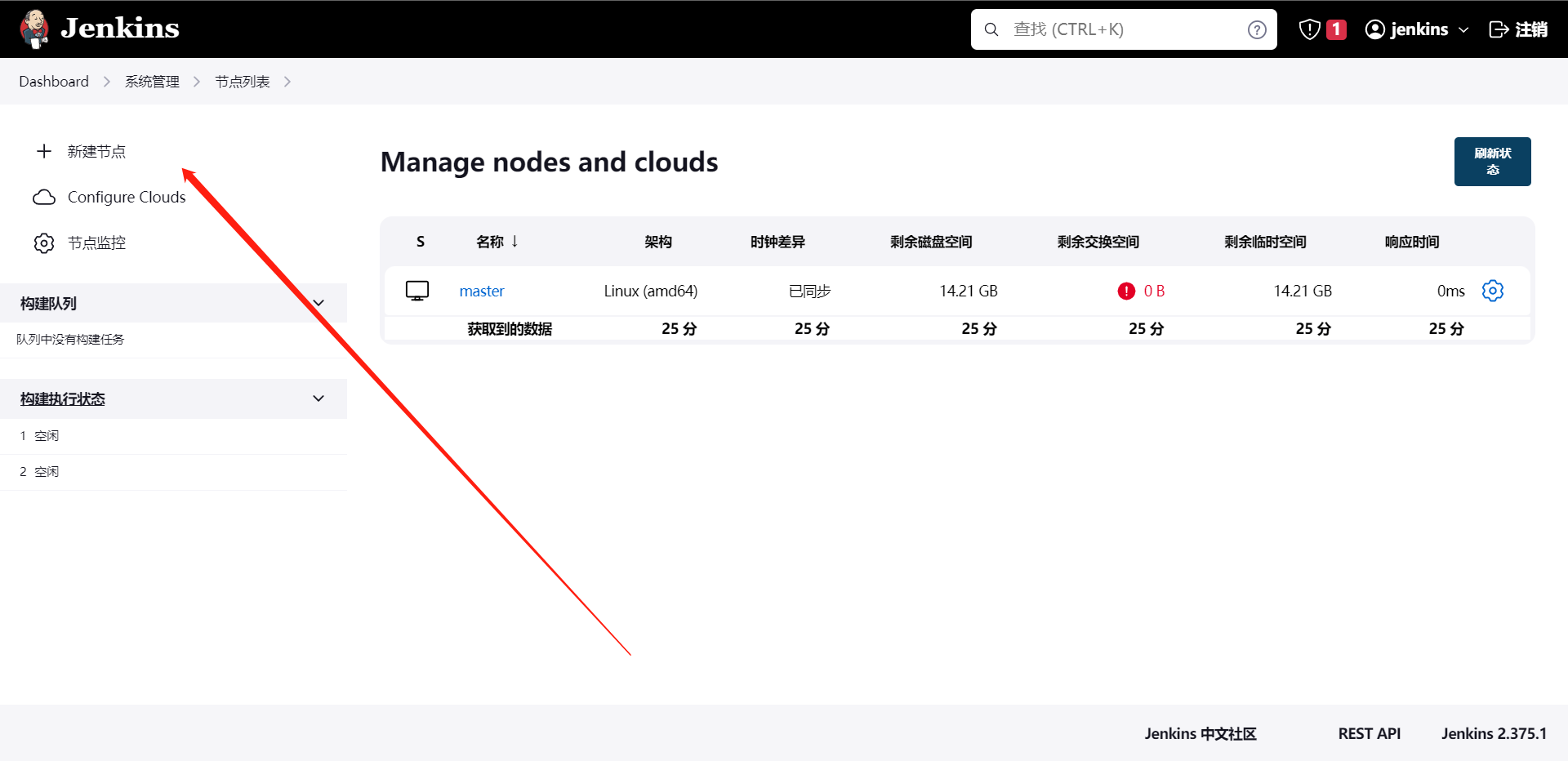

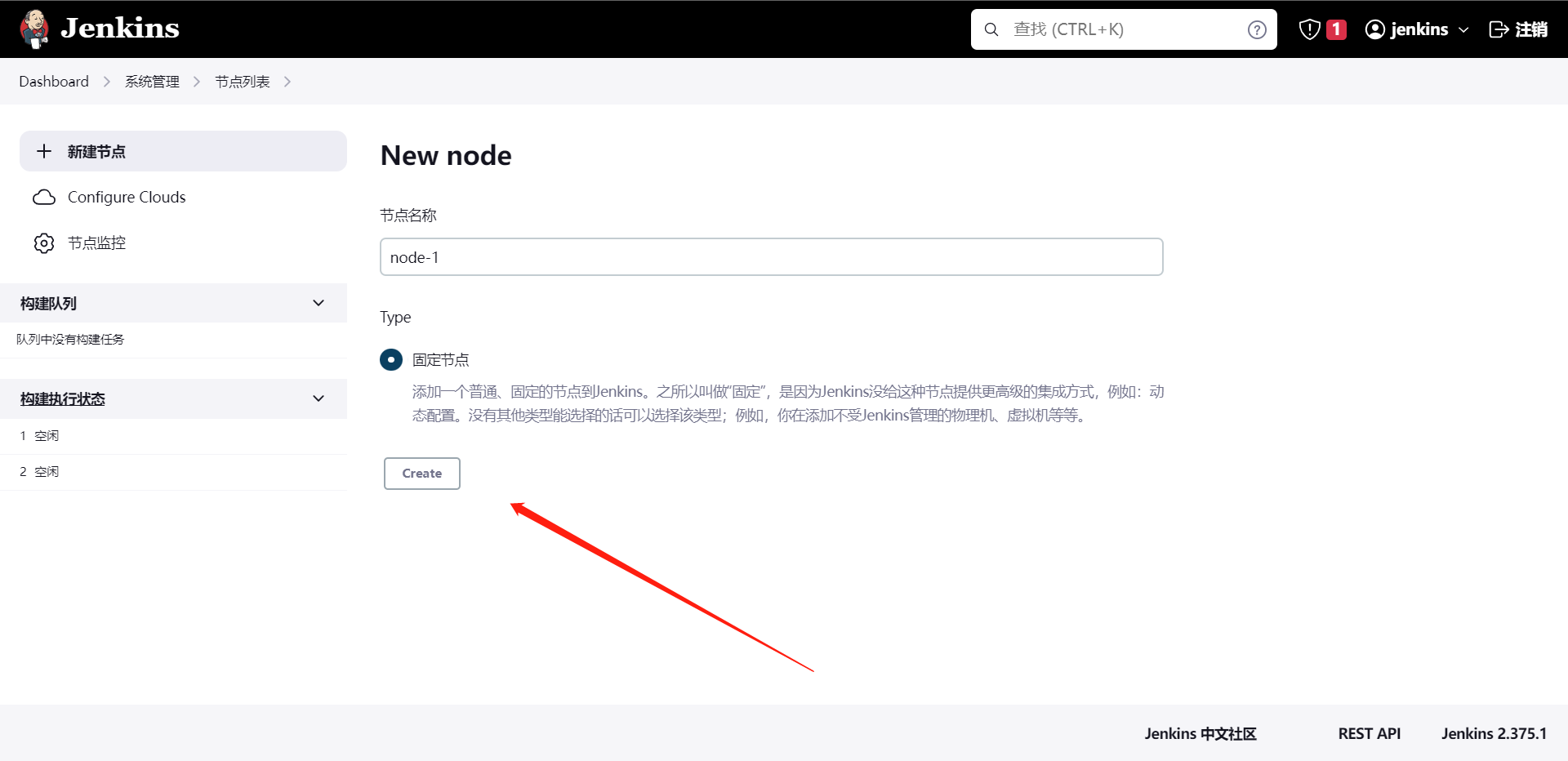

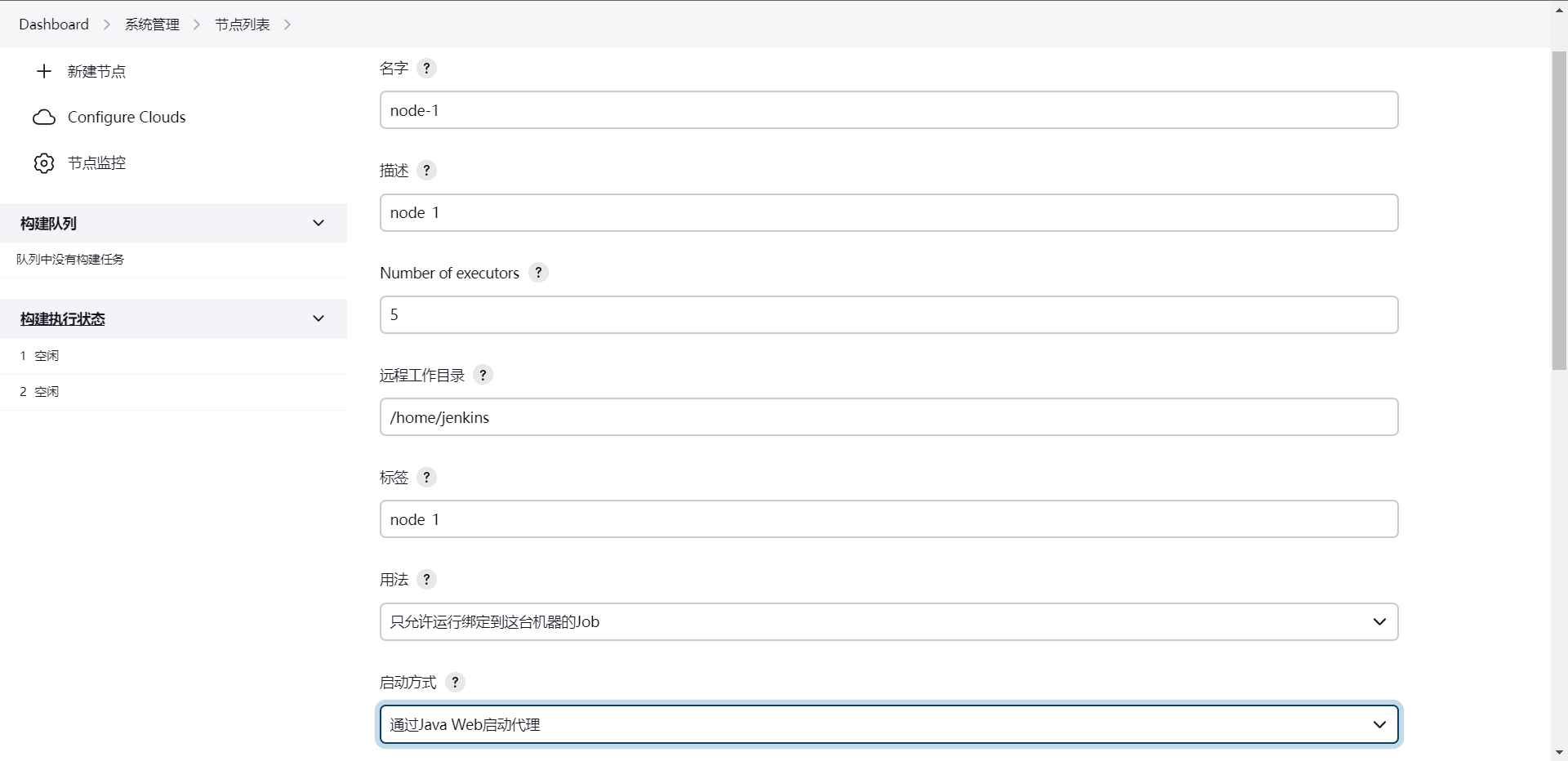

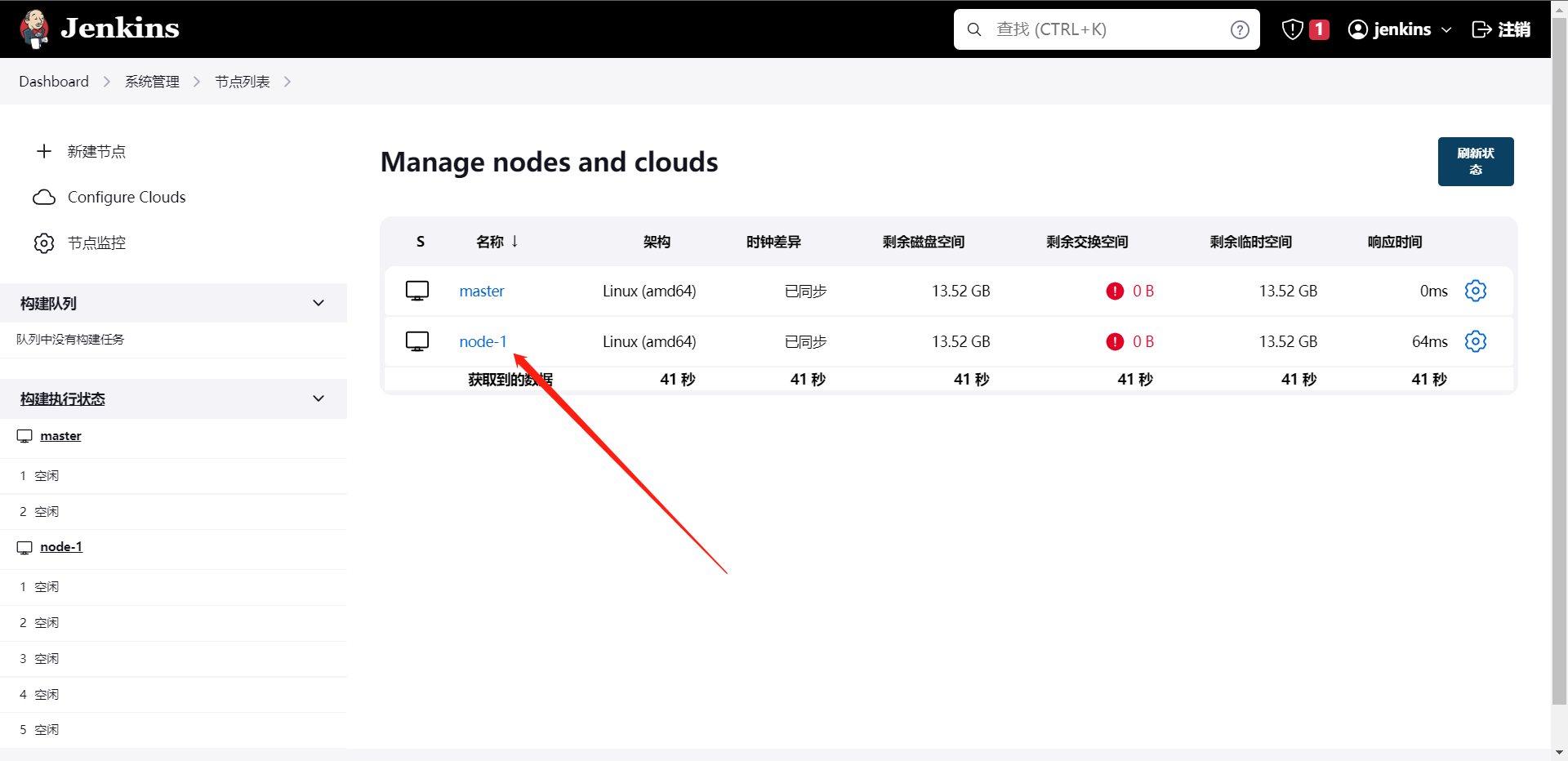

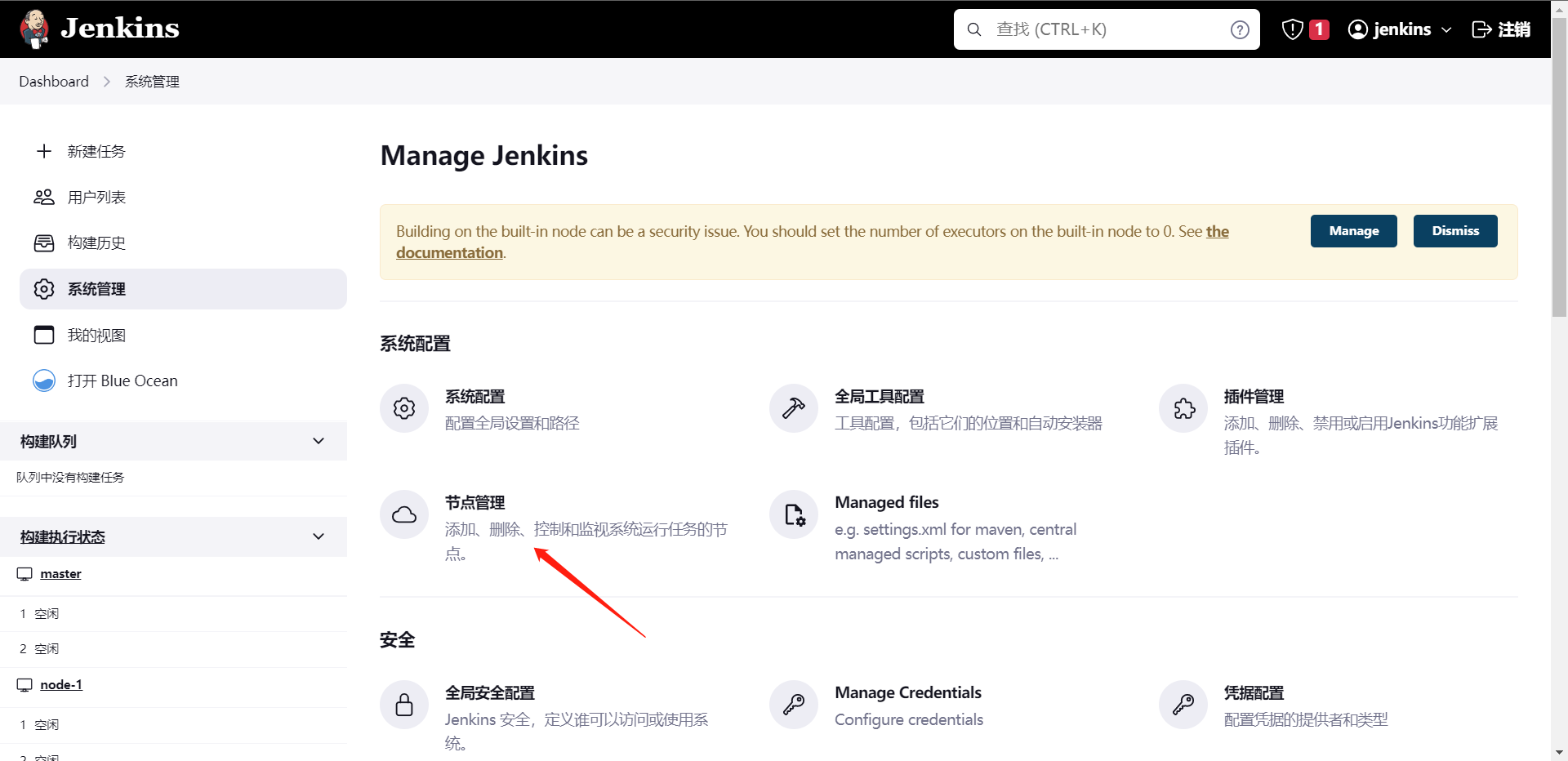

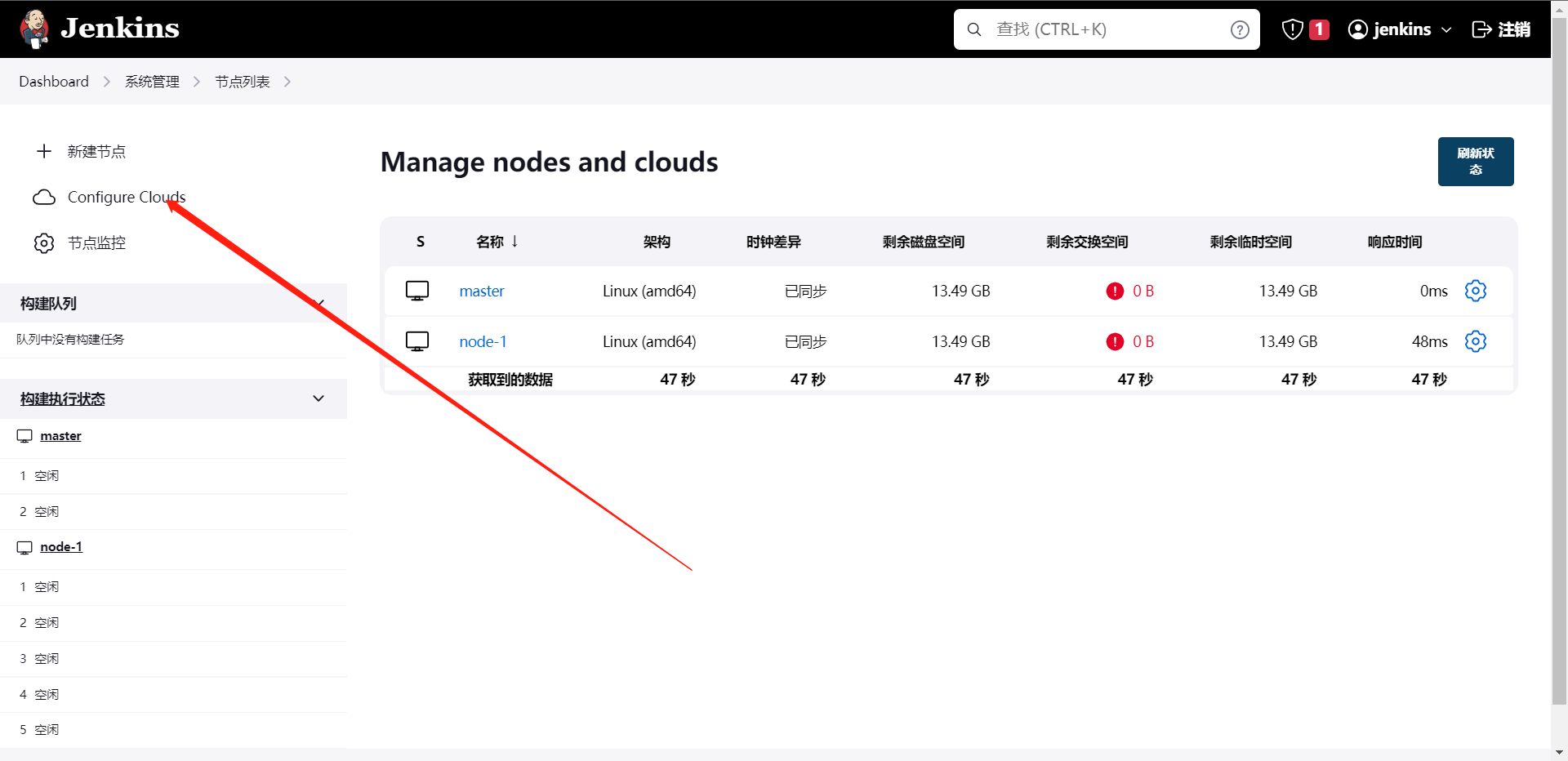

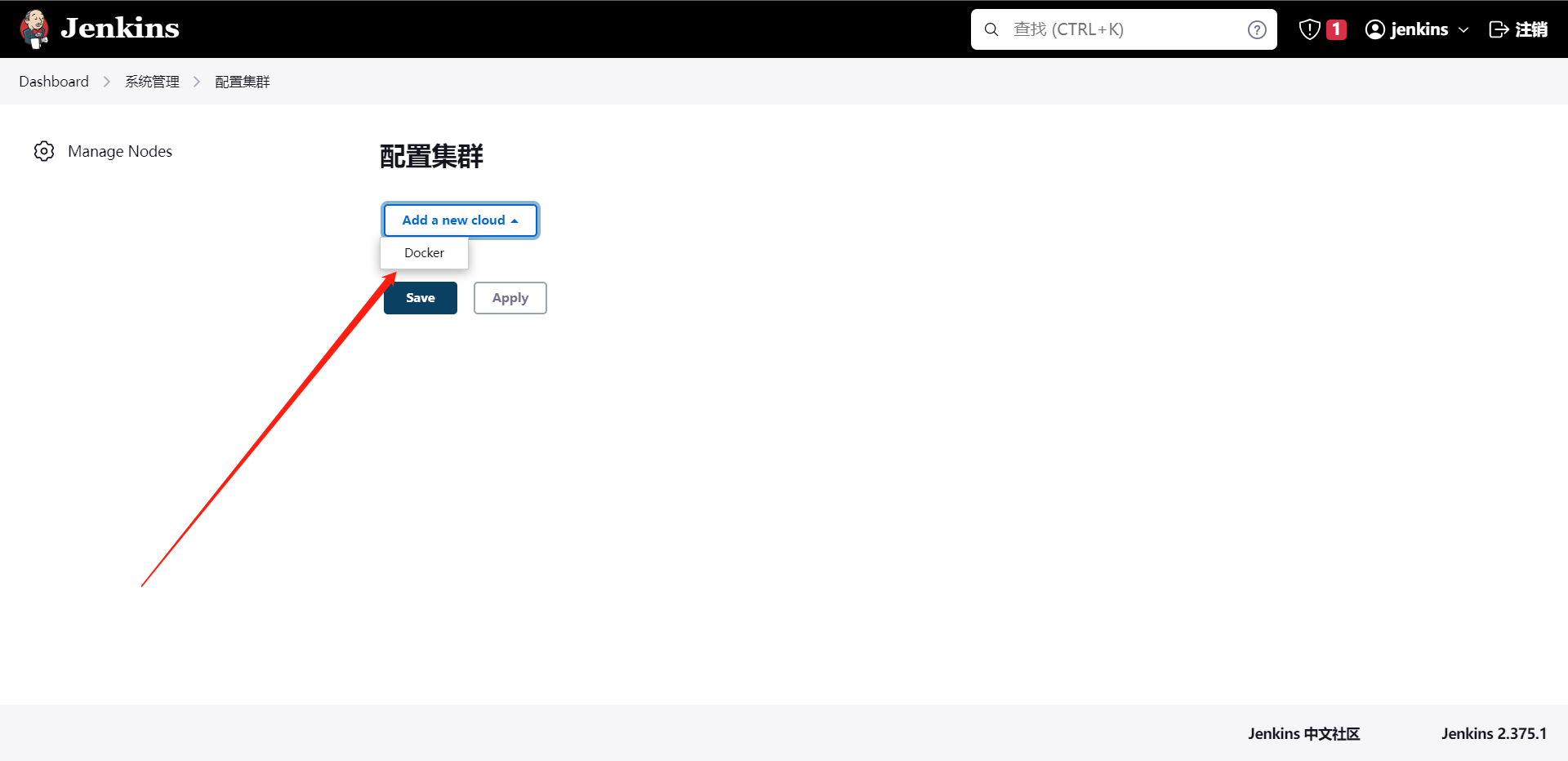

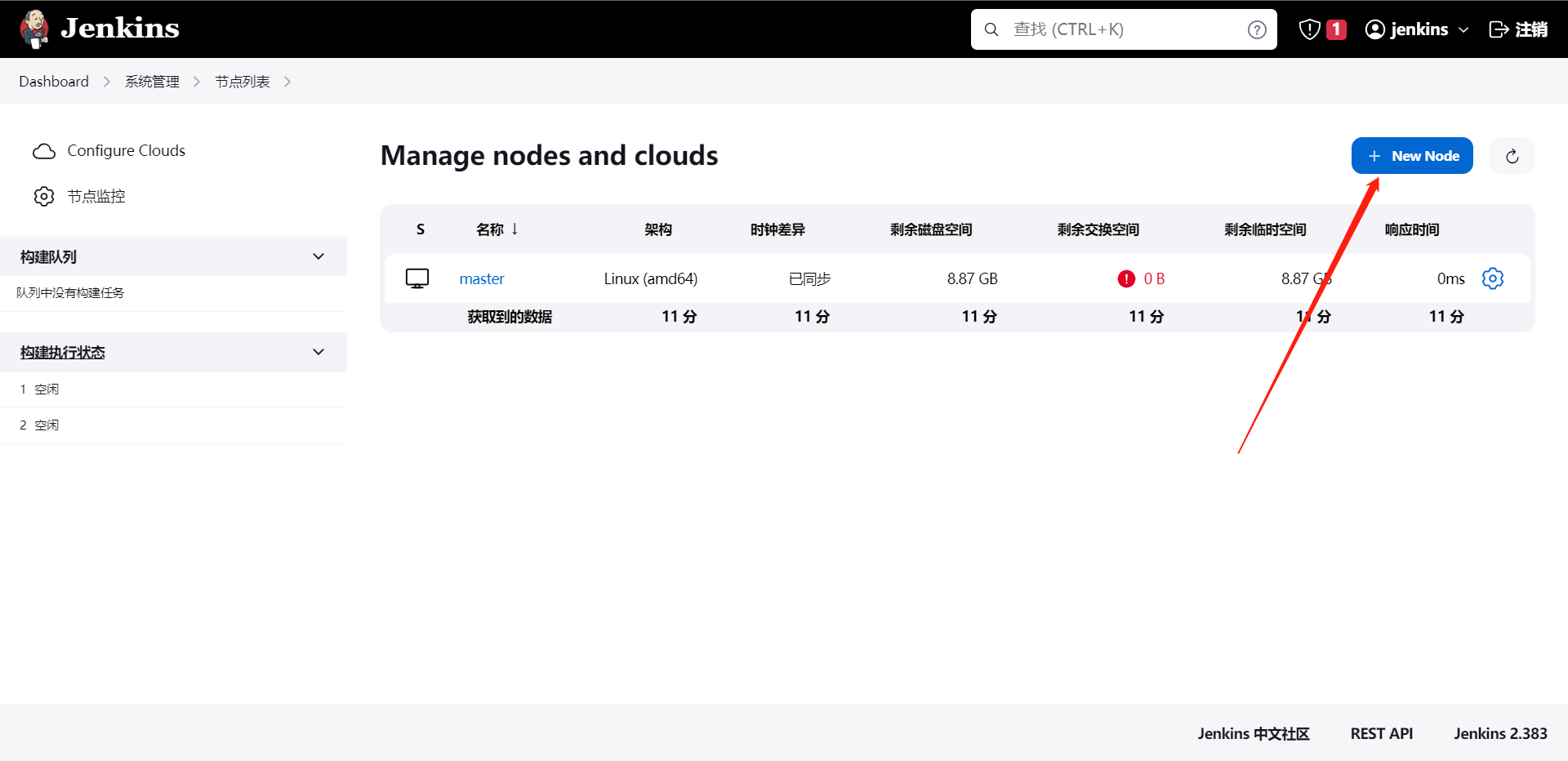

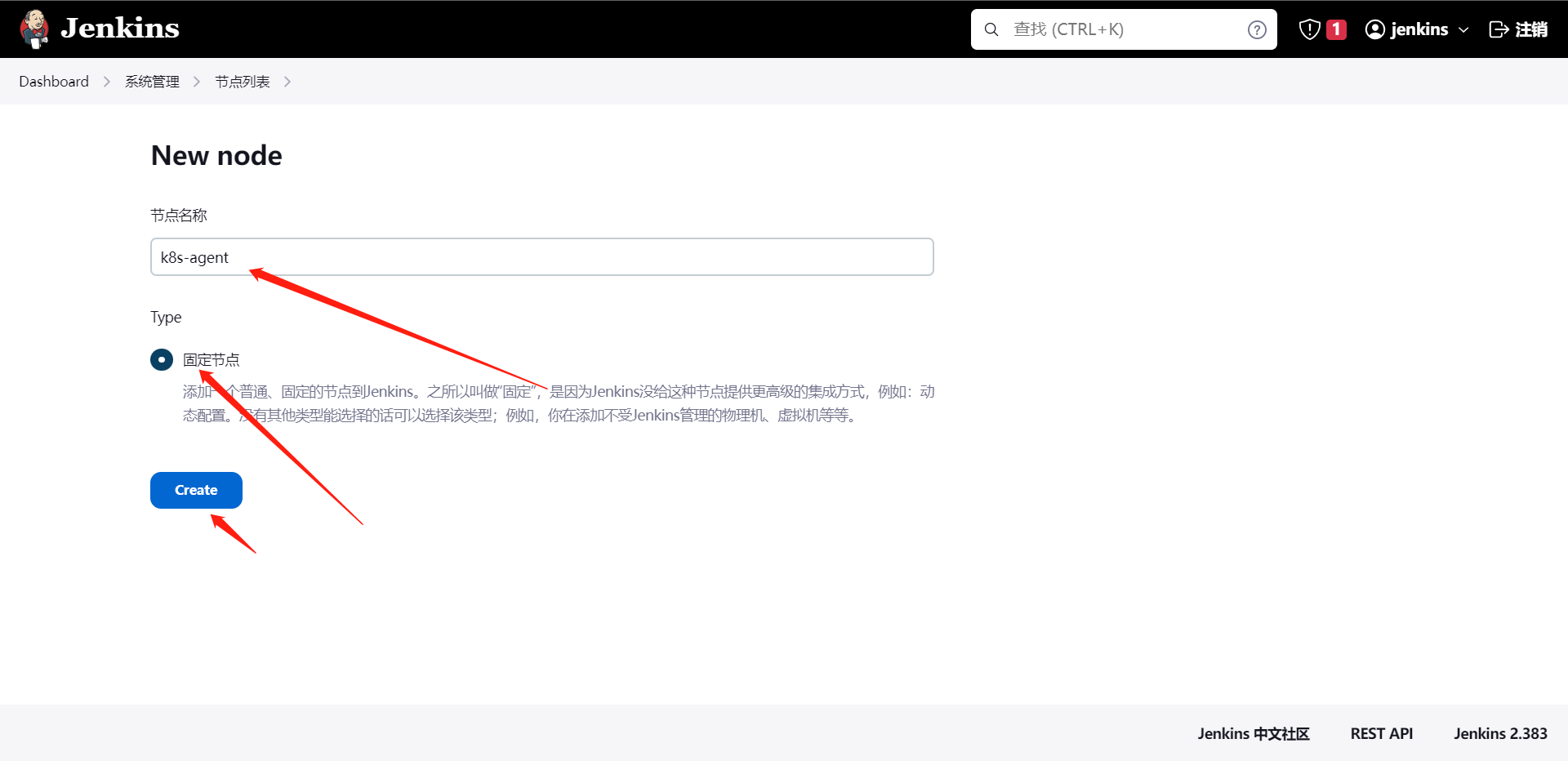

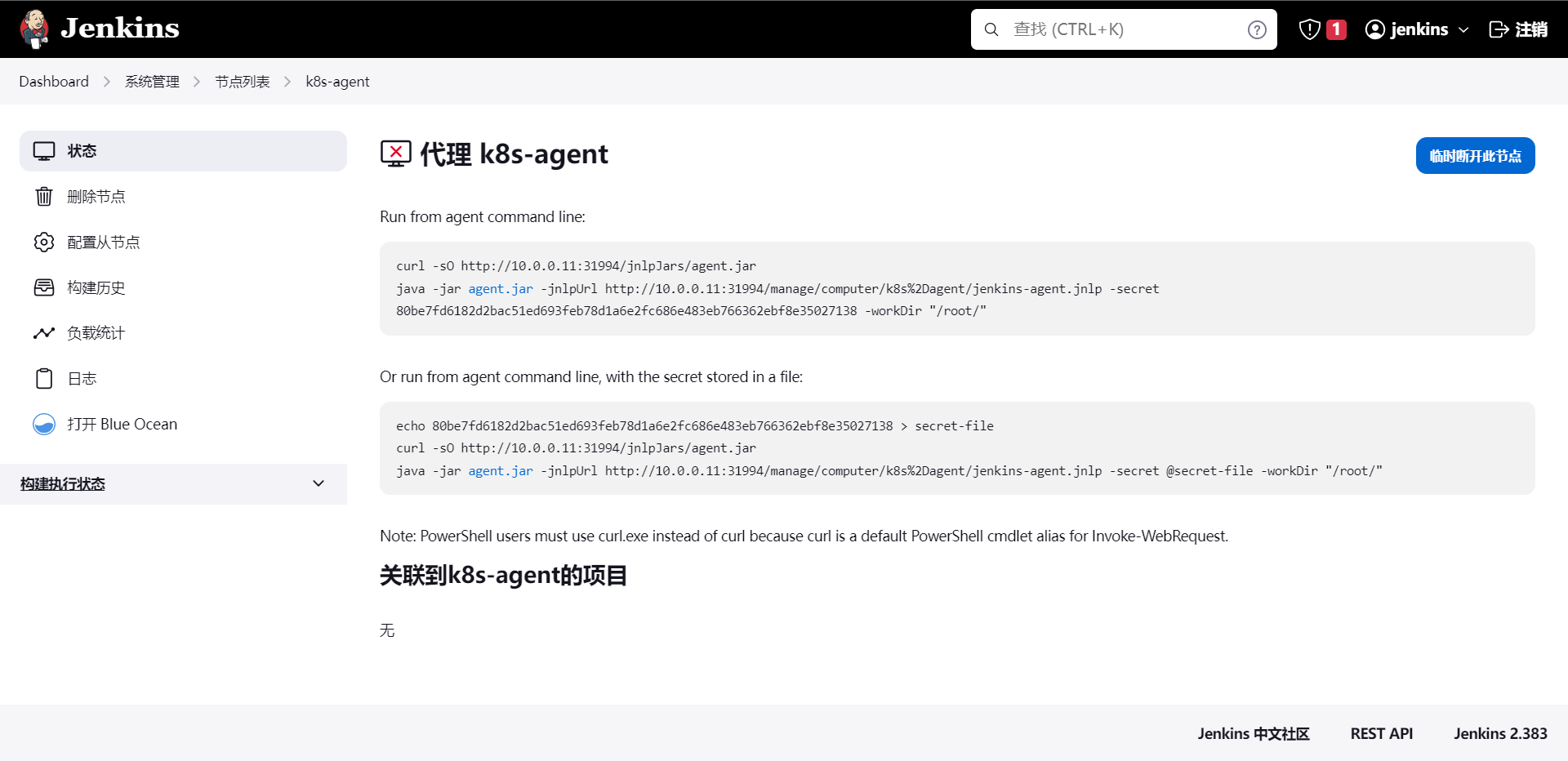

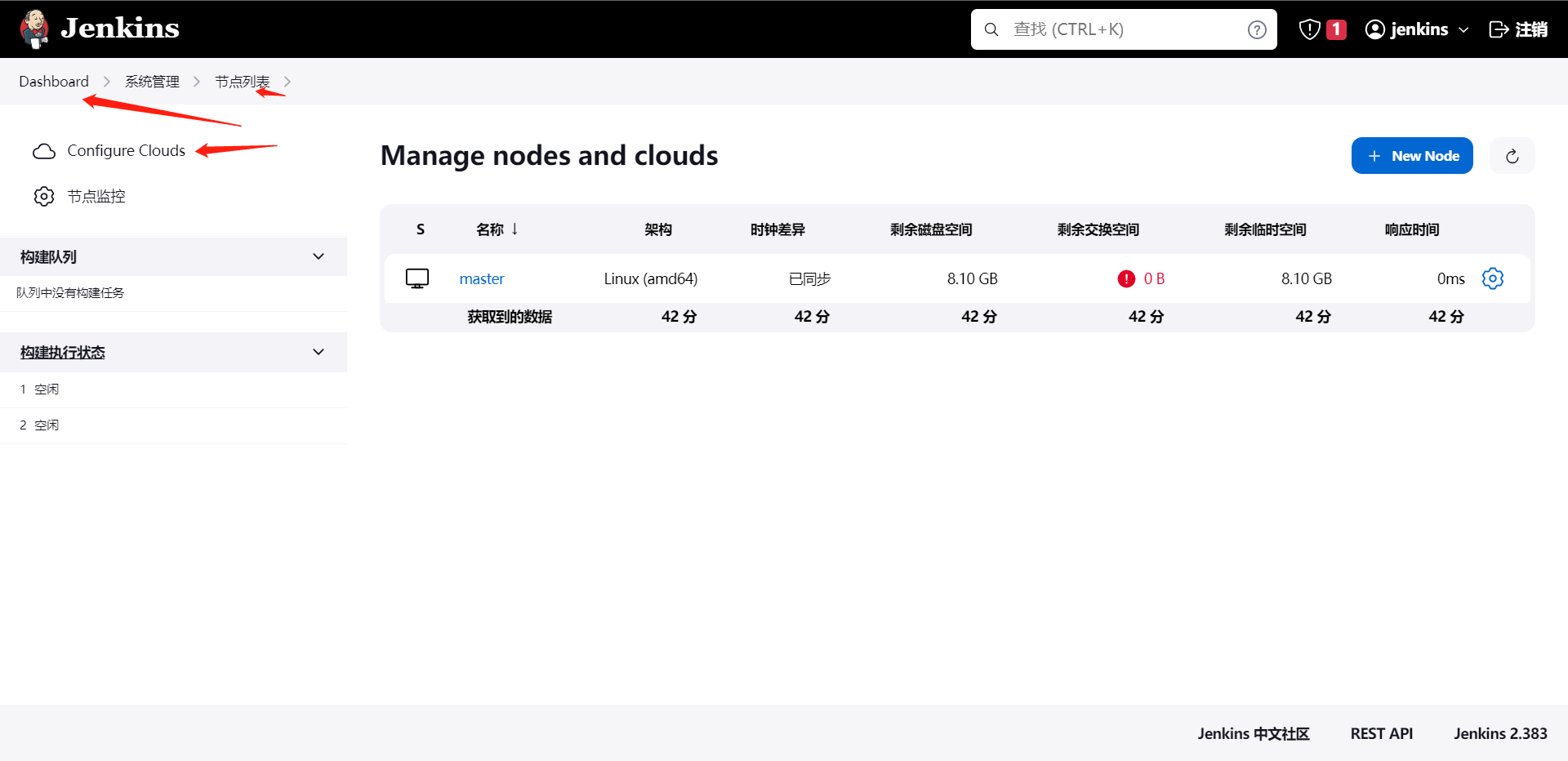

2:Adding an agent node to Jenkins

Whatever job we run, it needs a node to run on, so we add a node (agent) to Jenkins.

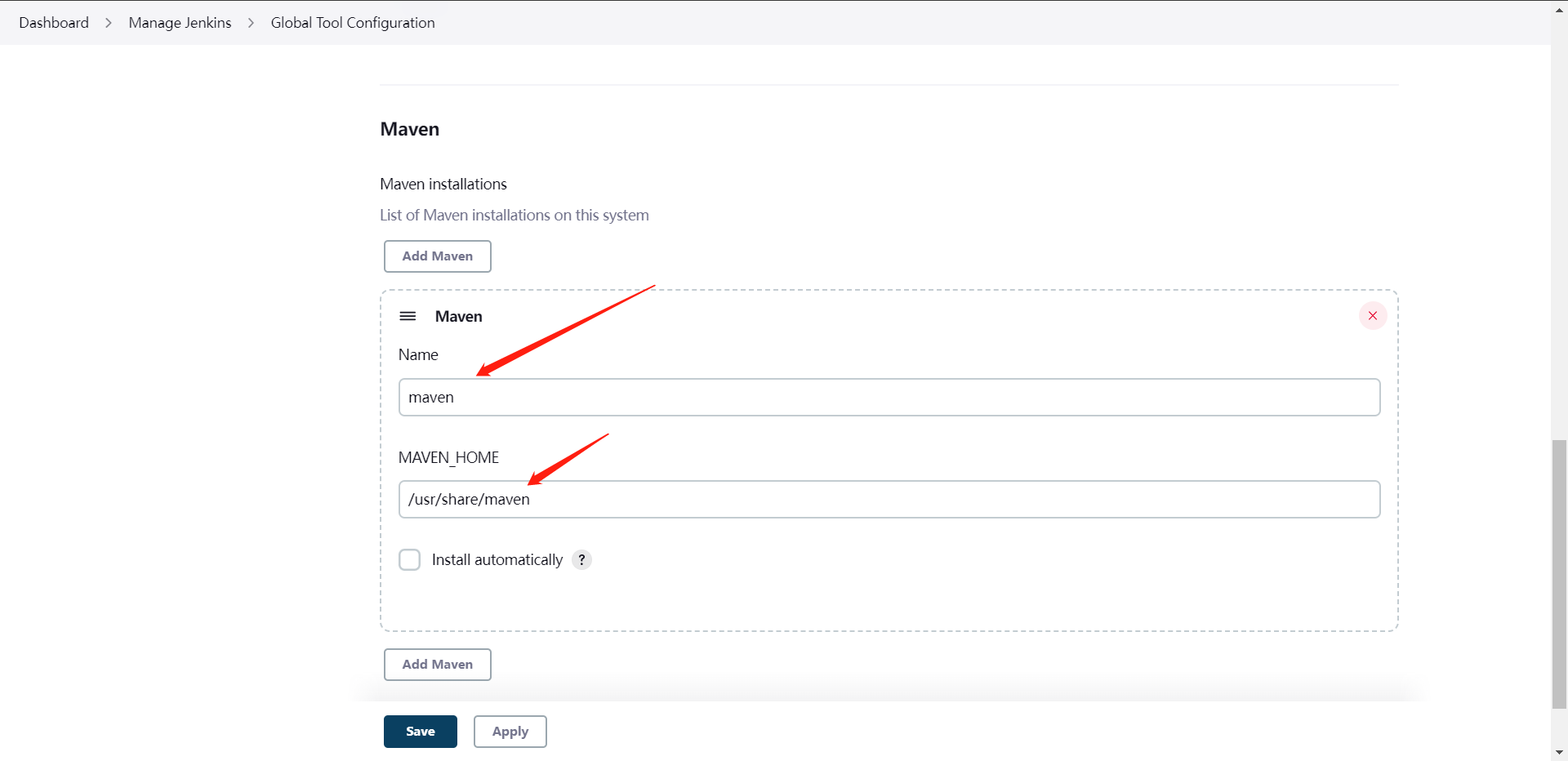

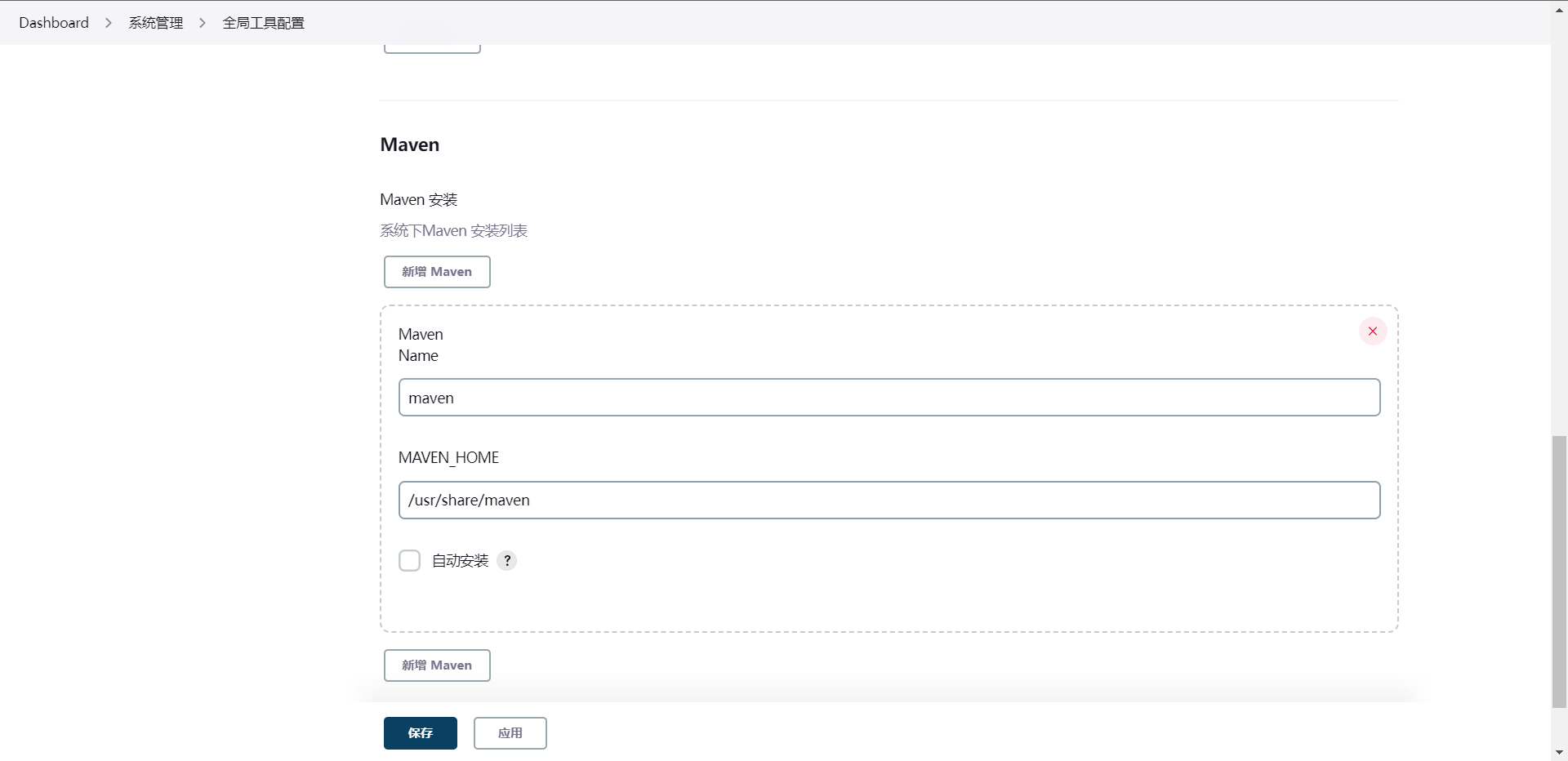

Maven can be installed from the binary tarball or with yum; either way, you have to fill in the Maven directory here. I used yum:

[root@cdk-server updates]# yum install -y maven

[root@cdk-server updates]# mvn --version

Apache Maven 3.6.3 (Red Hat 3.6.3-14)

Maven home: /usr/share/maven

Java version: 11.0.17, vendor: Red Hat, Inc., runtime: /usr/lib/jvm/java-11-openjdk-11.0.17.0.8-2.el9_0.x86_64

Default locale: en_US, platform encoding: UTF-8

OS name: "linux", version: "5.14.0-70.13.1.el9_0.x86_64", arch: "amd64", family: "unix"

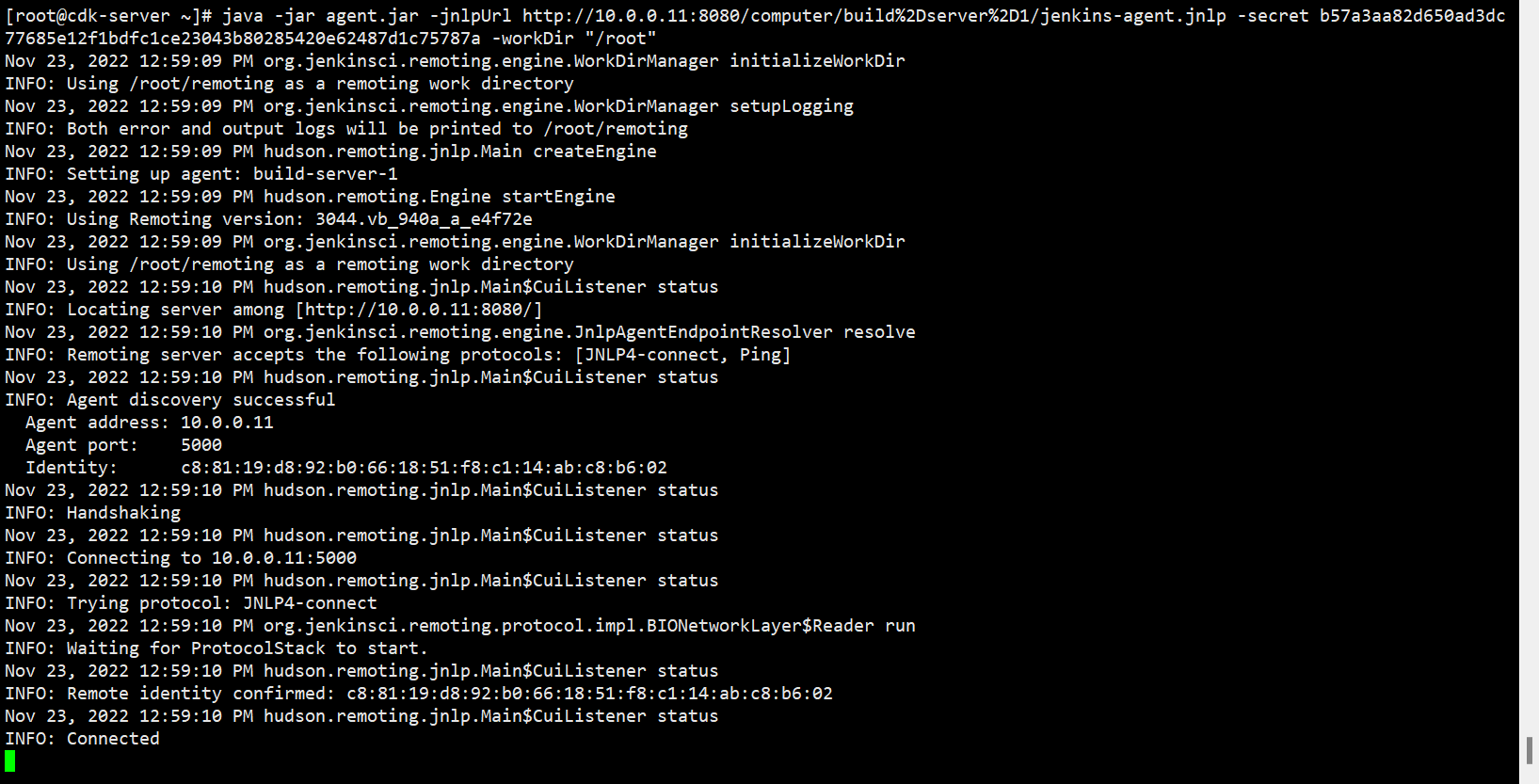

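The agent node also needs a Java runtime, installed the same way as on the Jenkins server; then run the command shown above on the agent.

We could also keep this process running with nohup, or manage it with systemd or another process supervisor; try that on your own.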

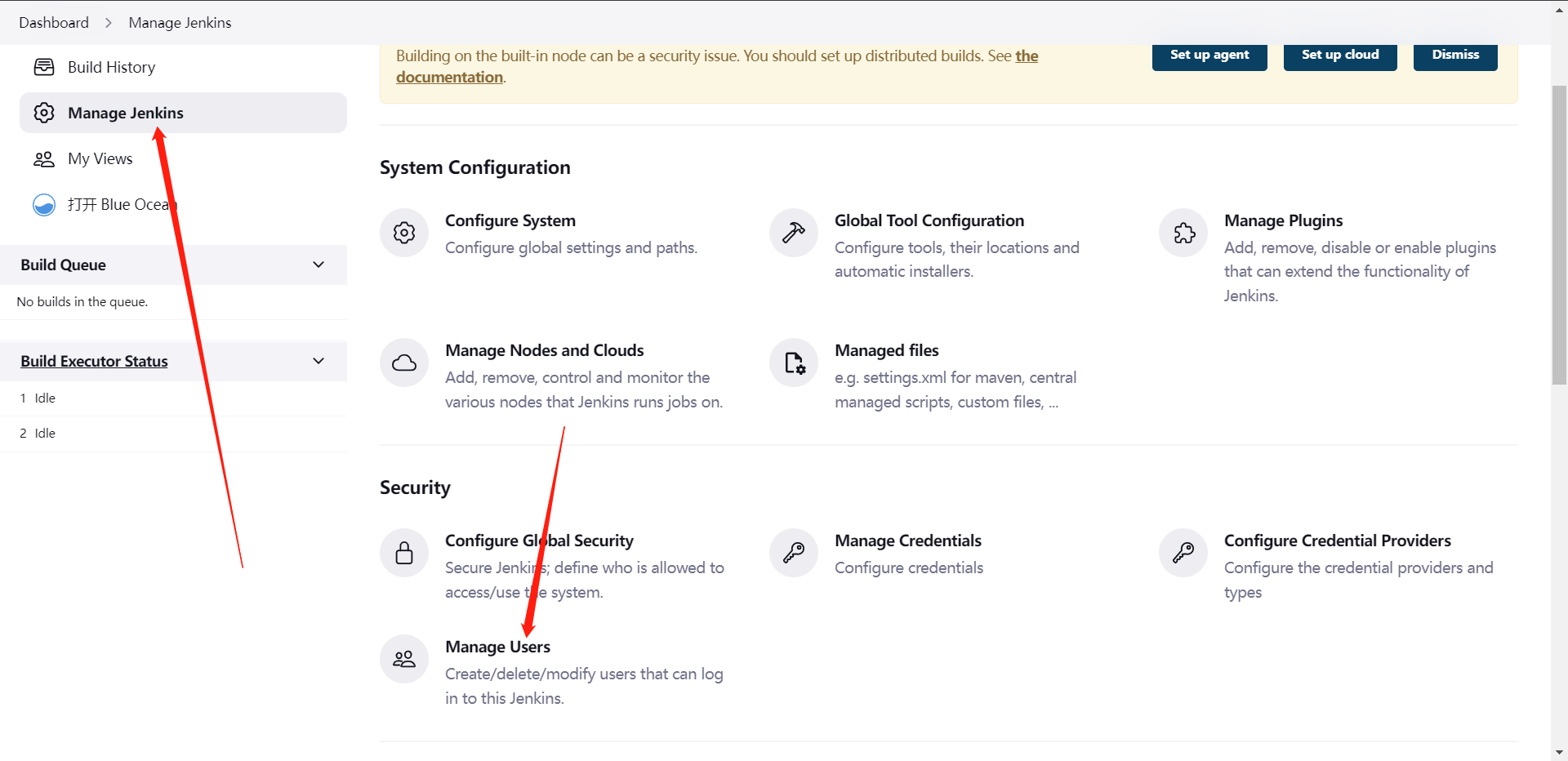

3:Jenkins User Management

Authentication methods:

1:Jenkins' own user database (the default)

2:LDAP

3:Active Directory (AD domain)

4:GitLab/GitHub

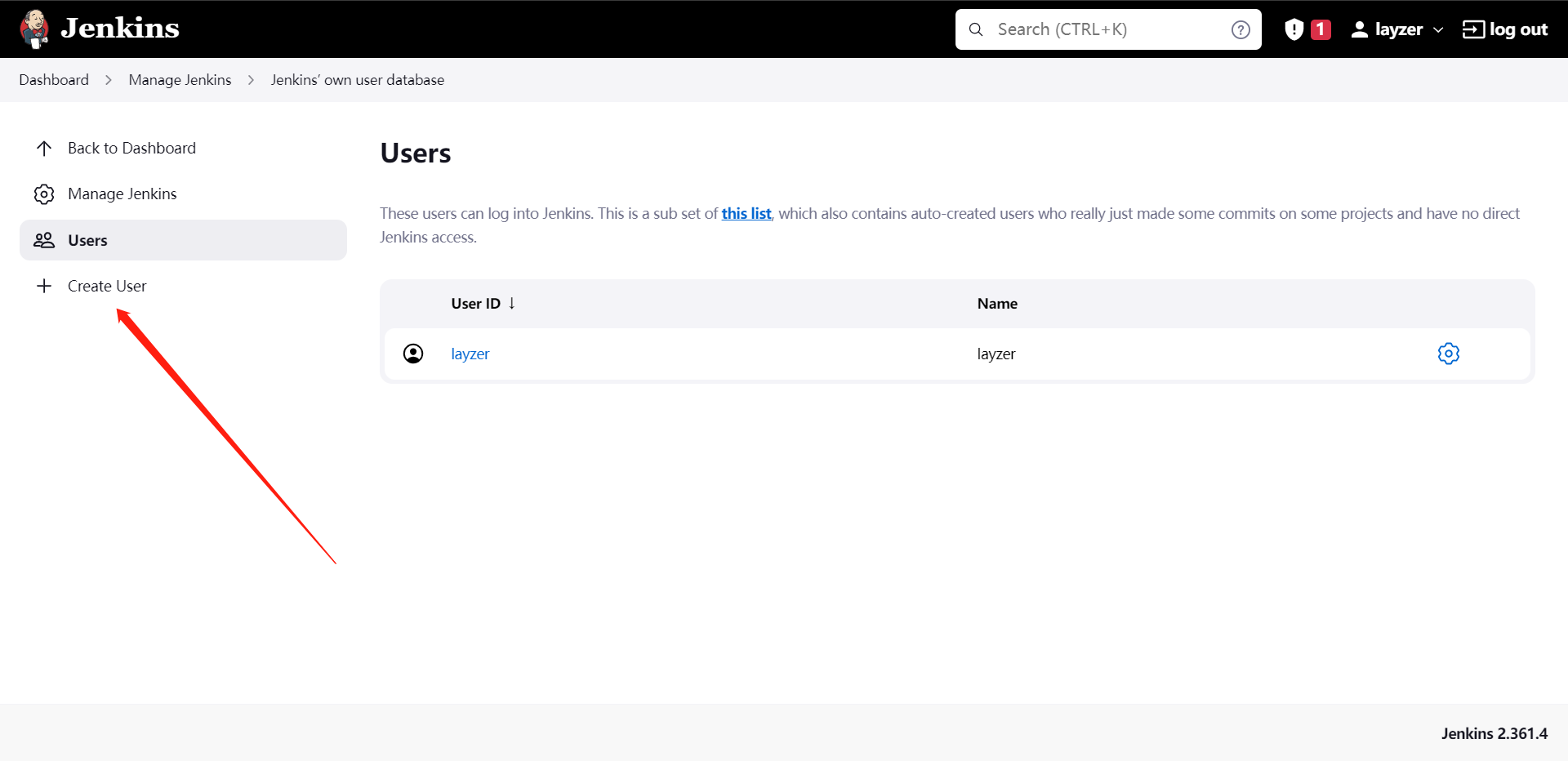

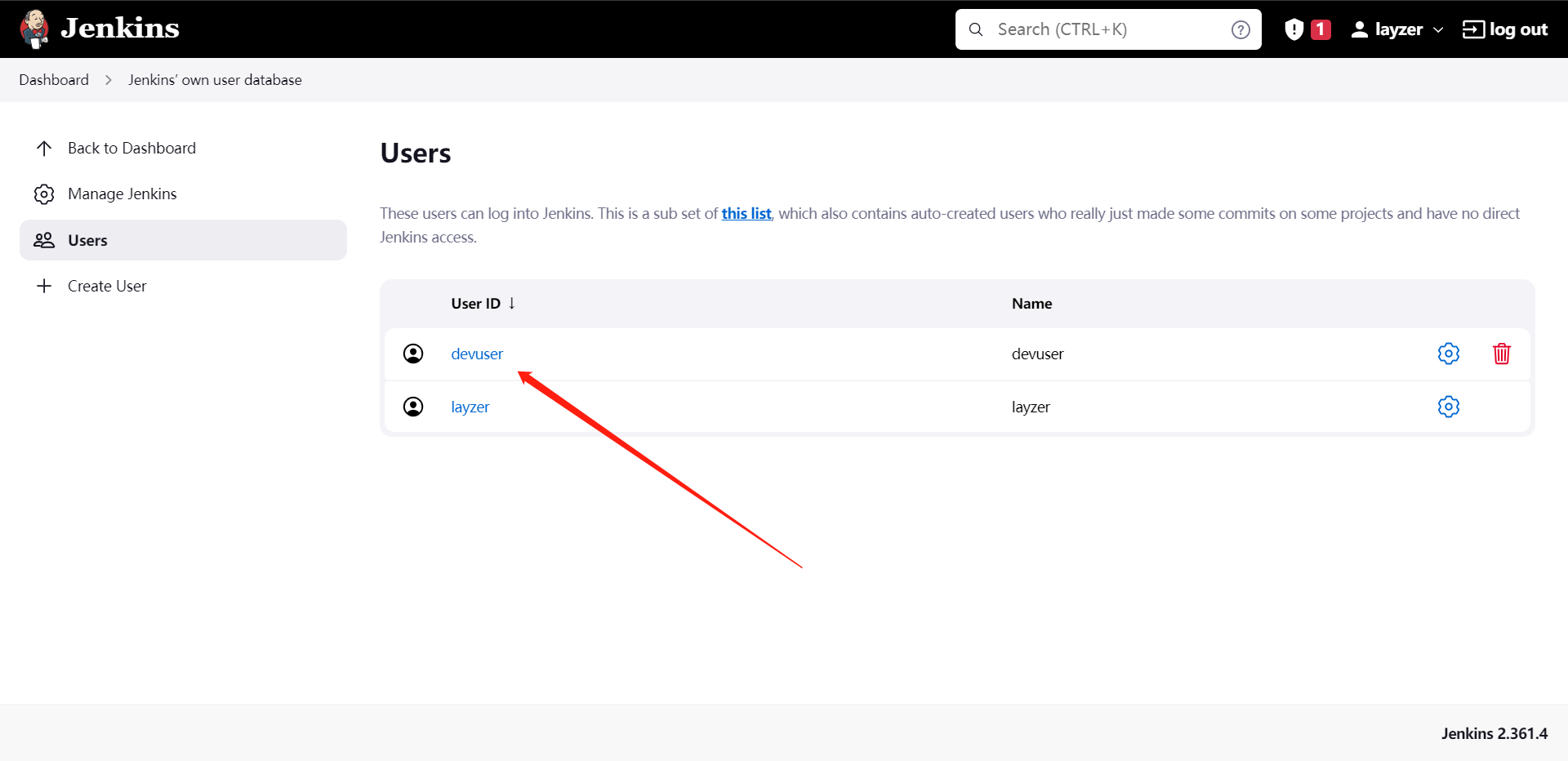

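User management:

1:Add users

2:Delete users

I won't demonstrate these here. What matters more is how to authorize users, for example granting a user permissions on certain pipelines.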

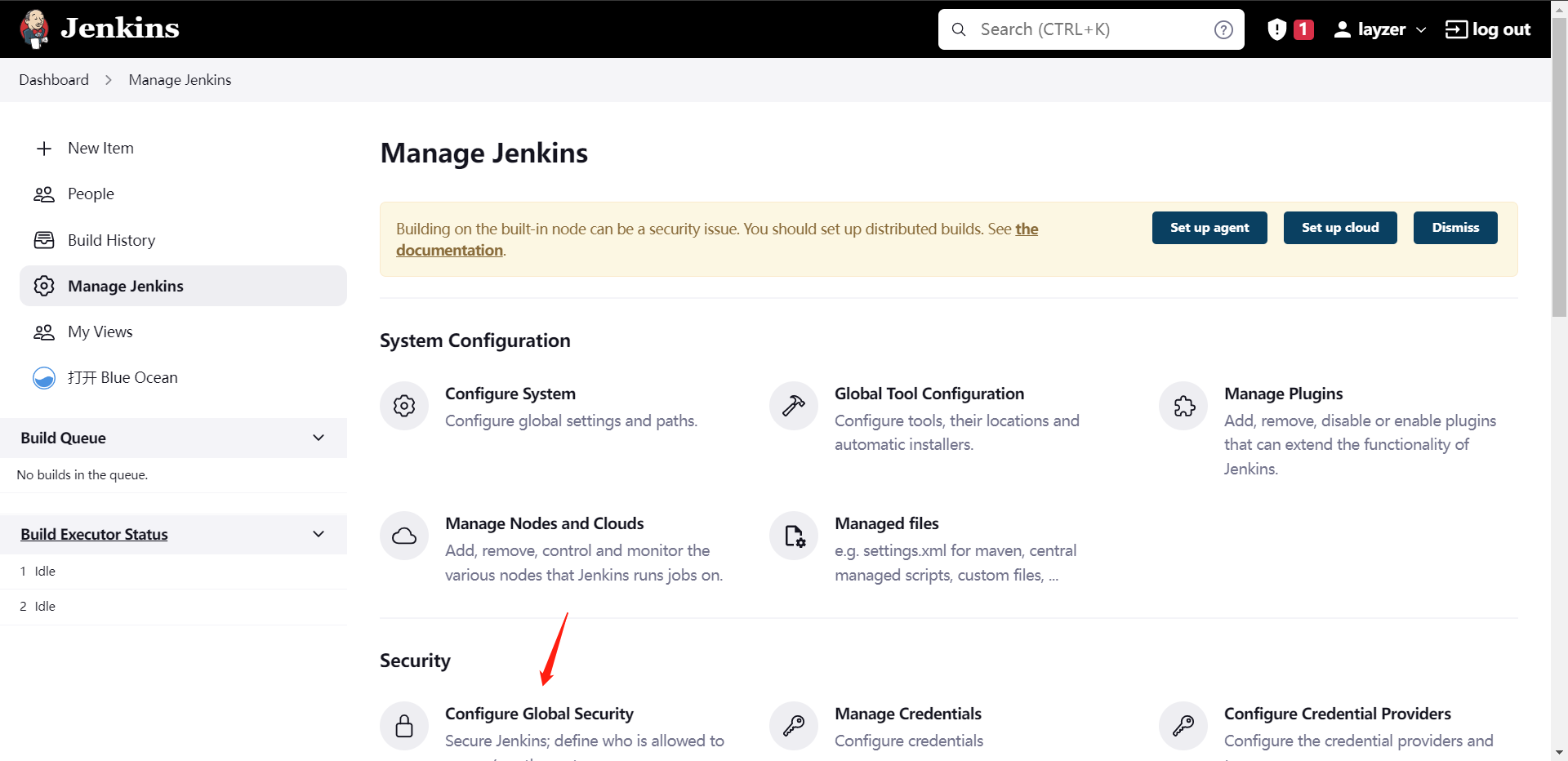

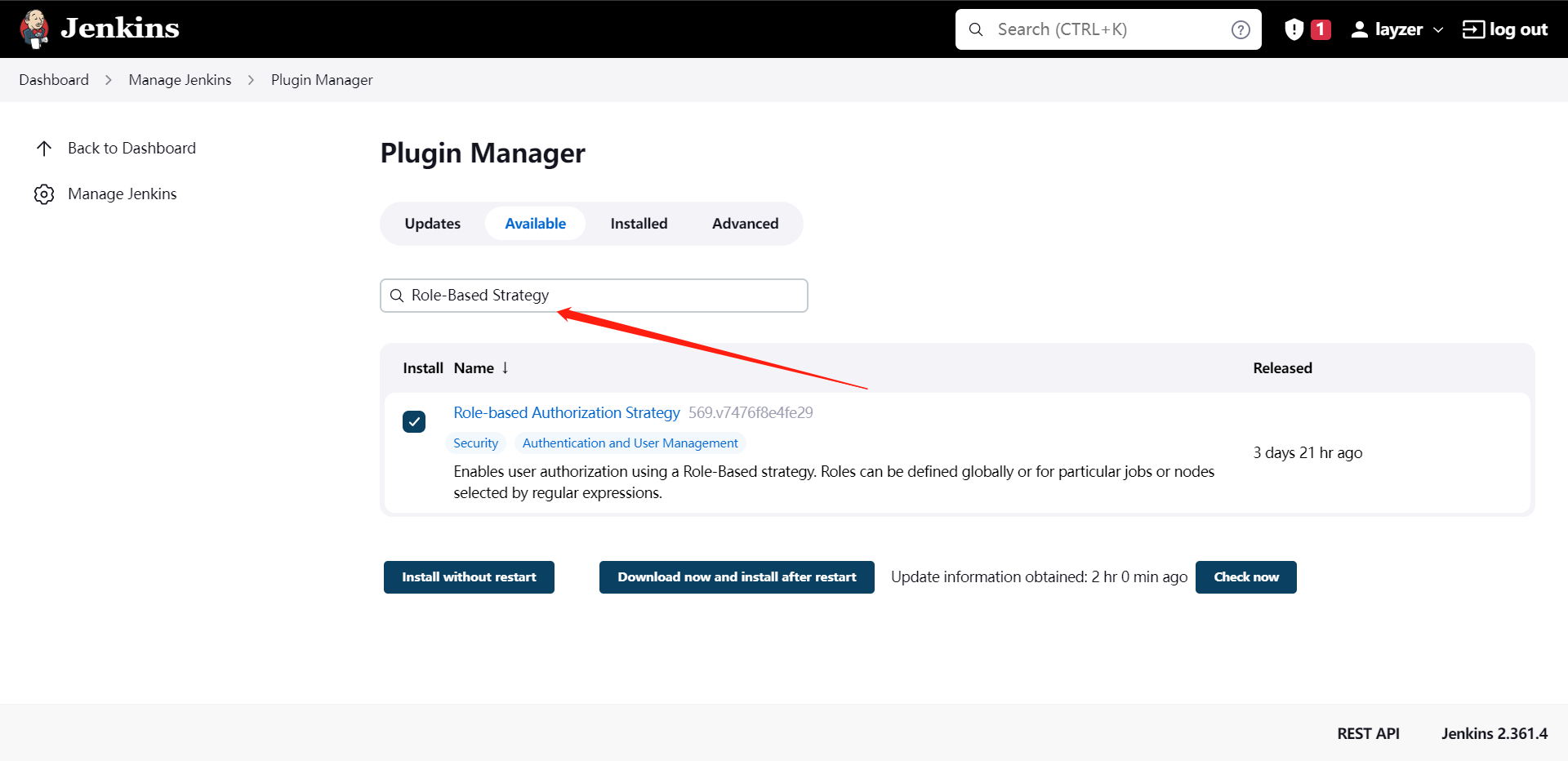

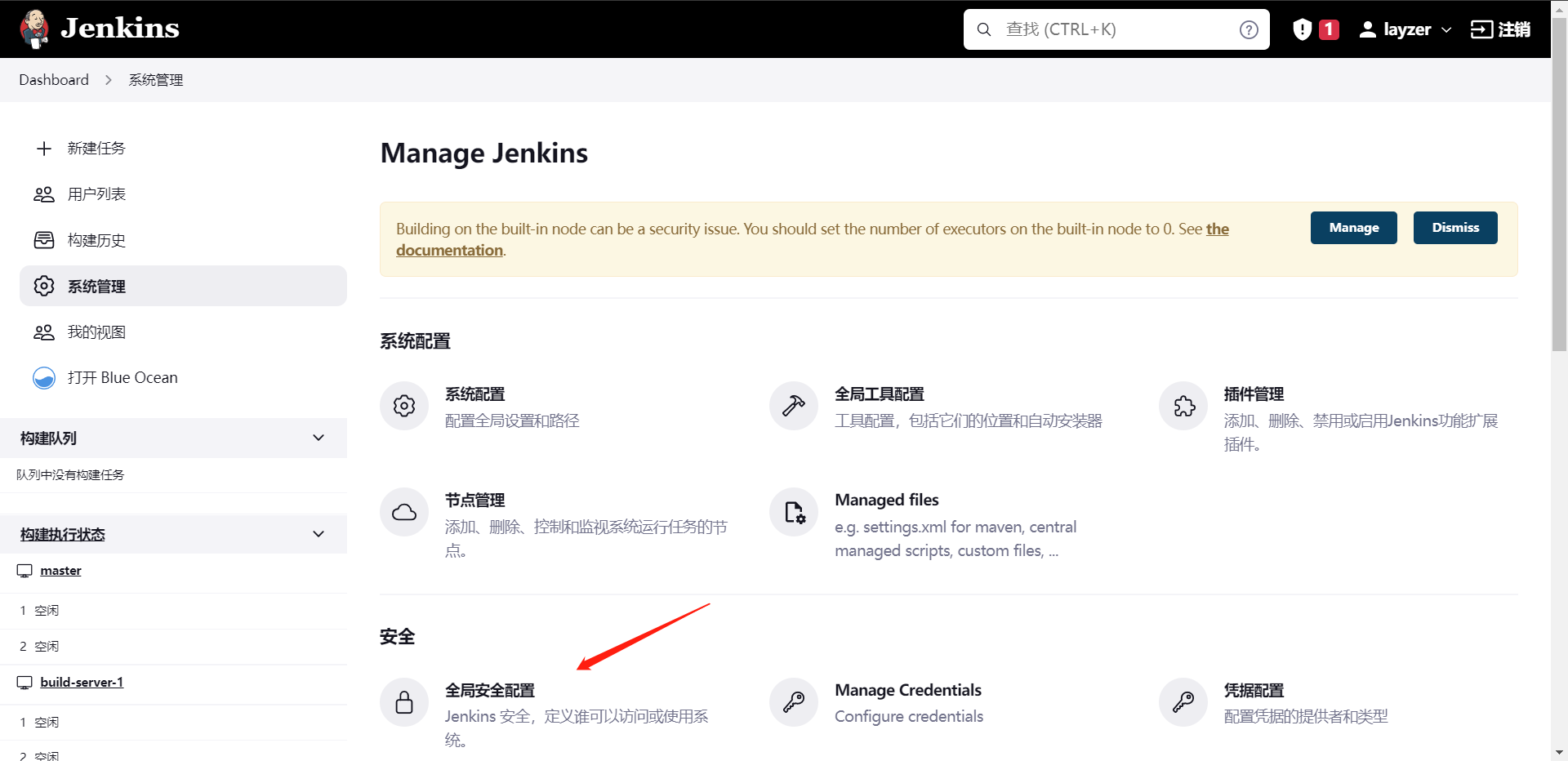

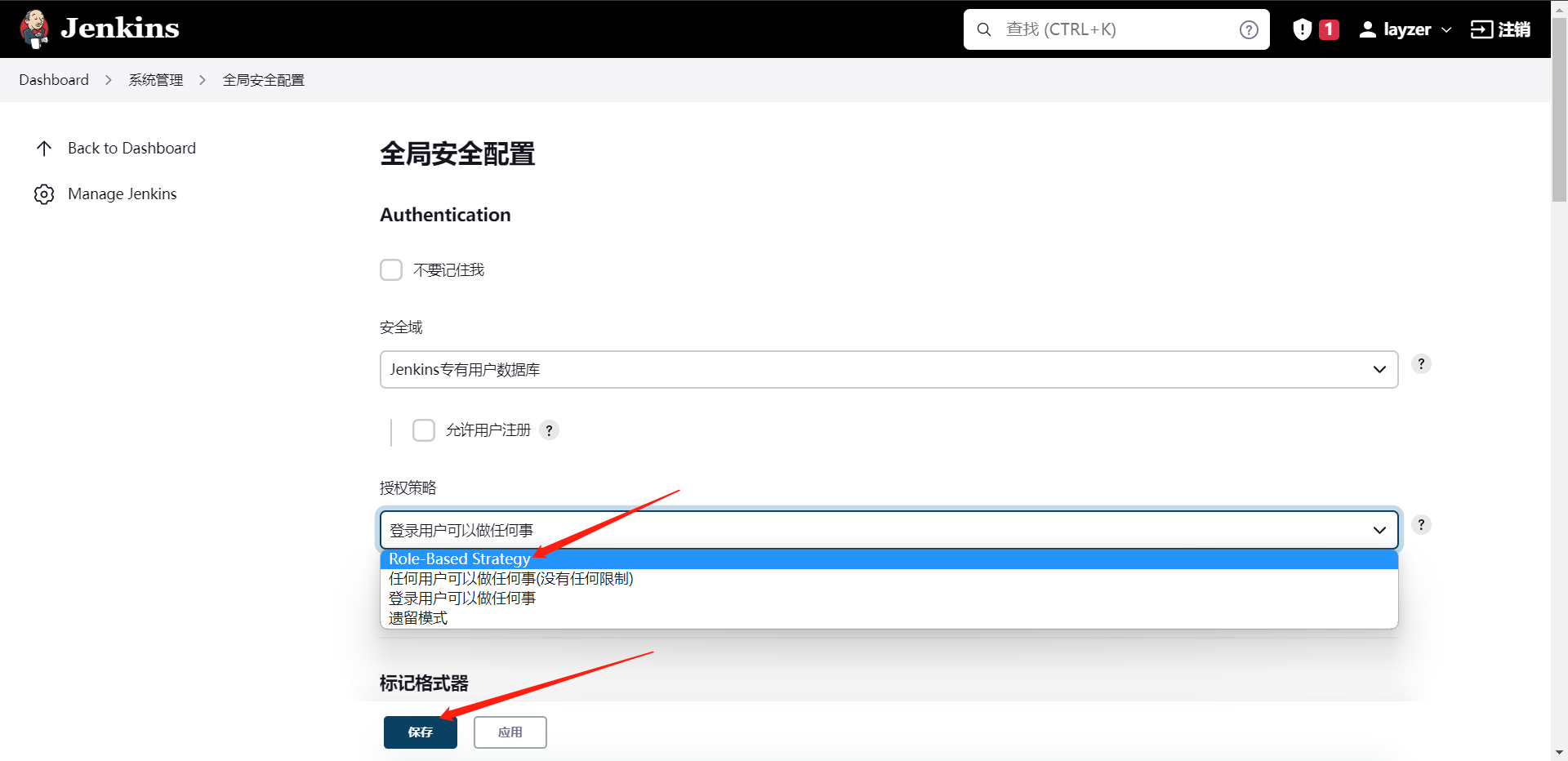

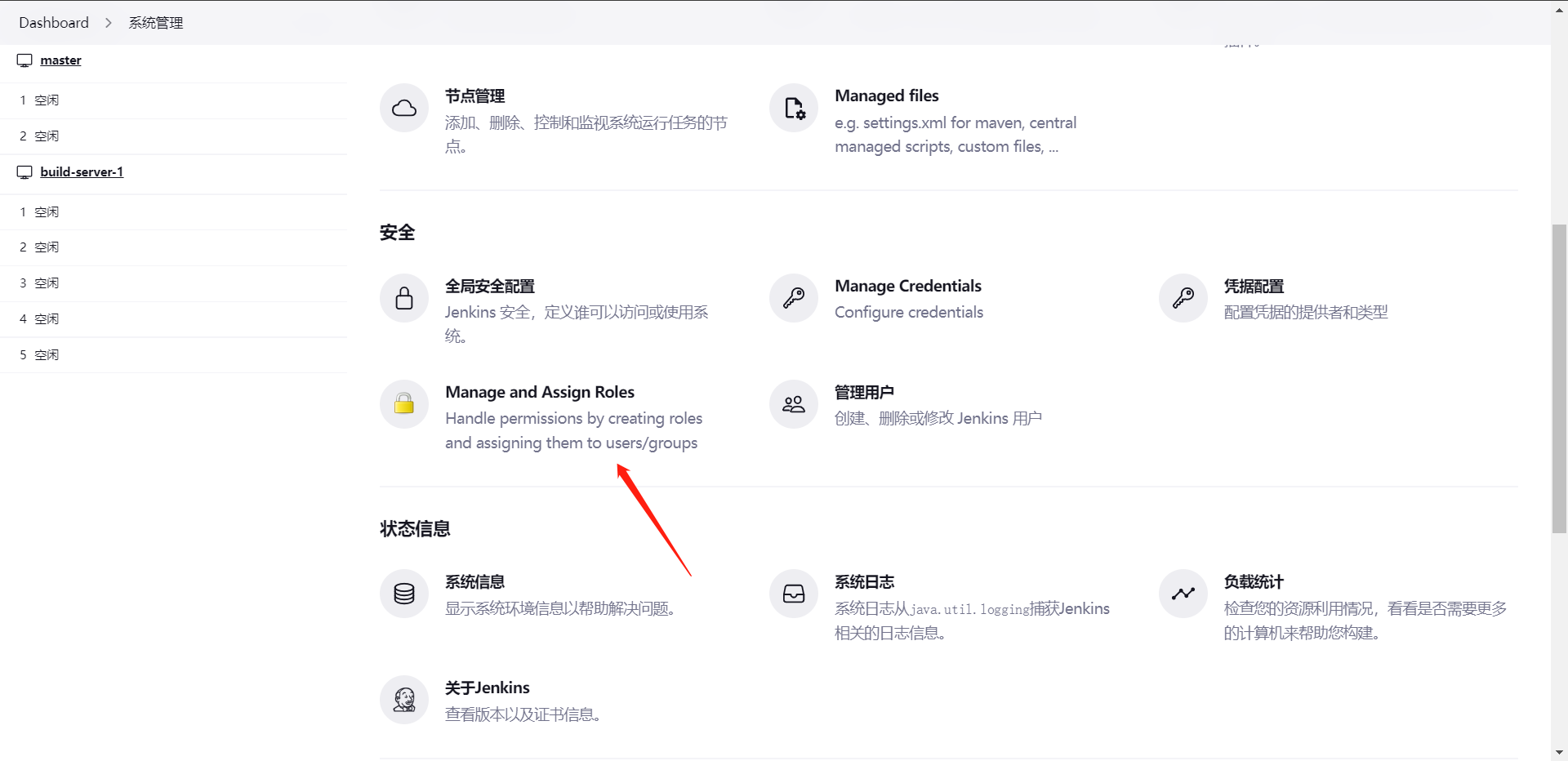

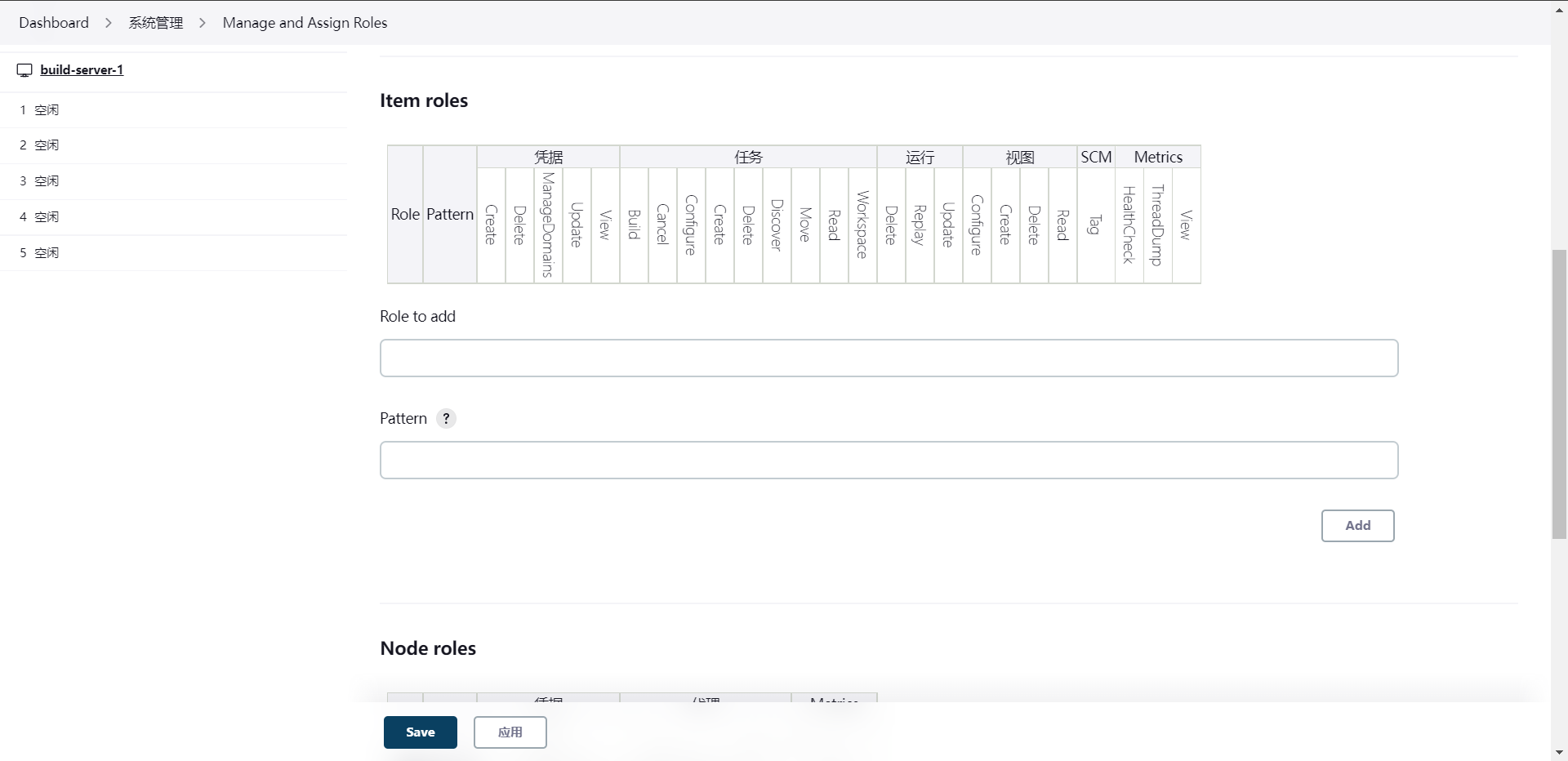

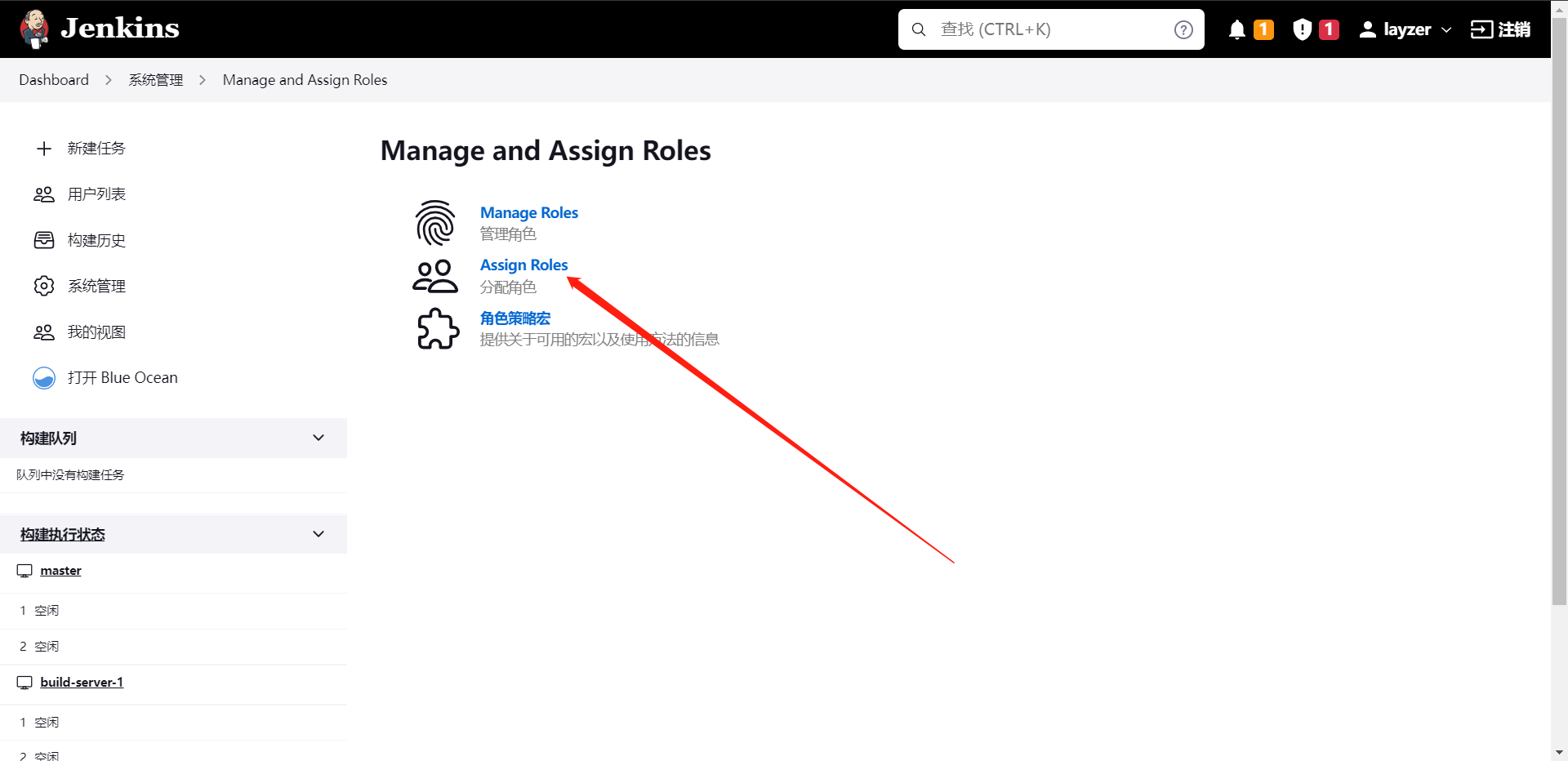

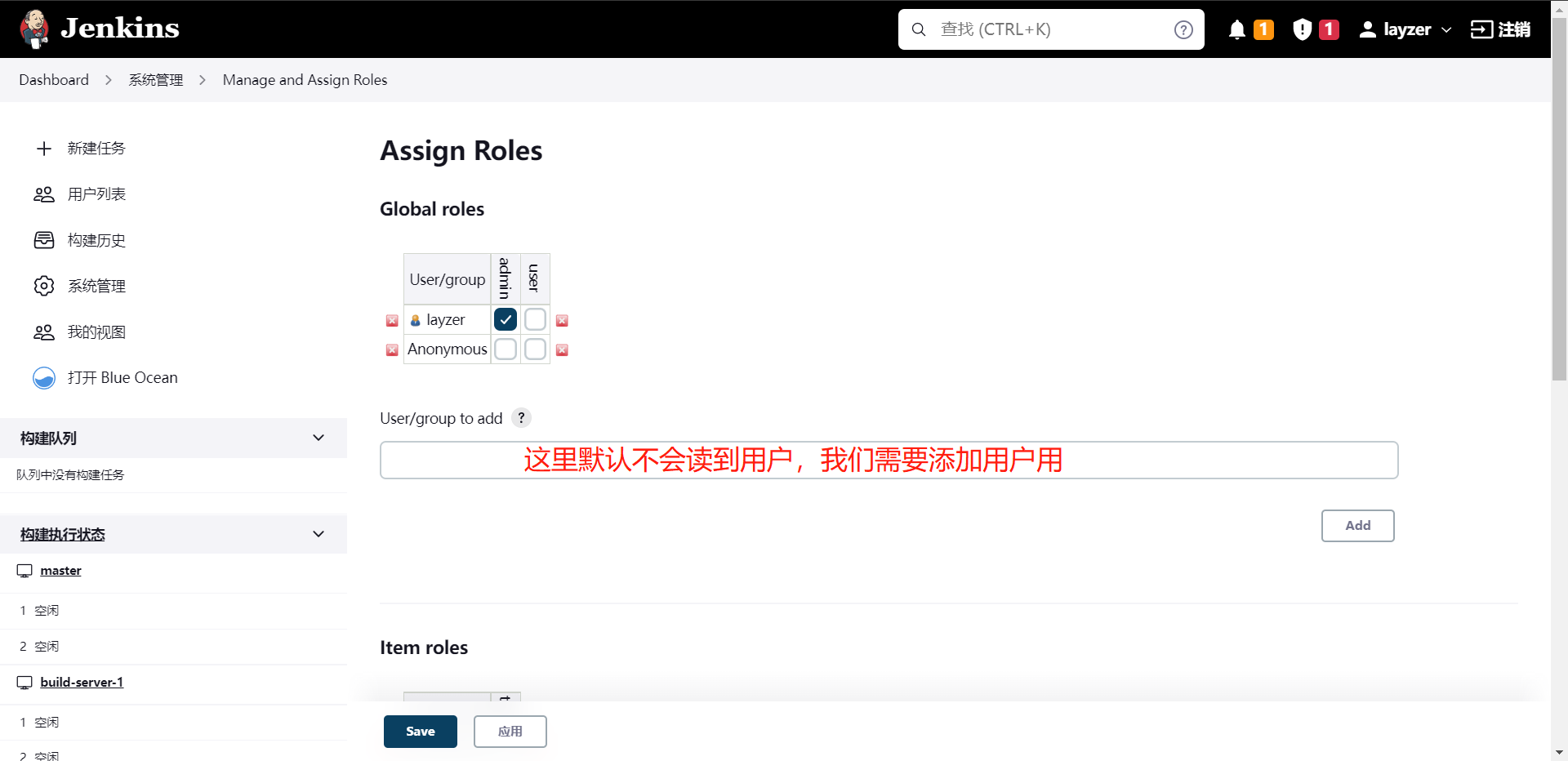

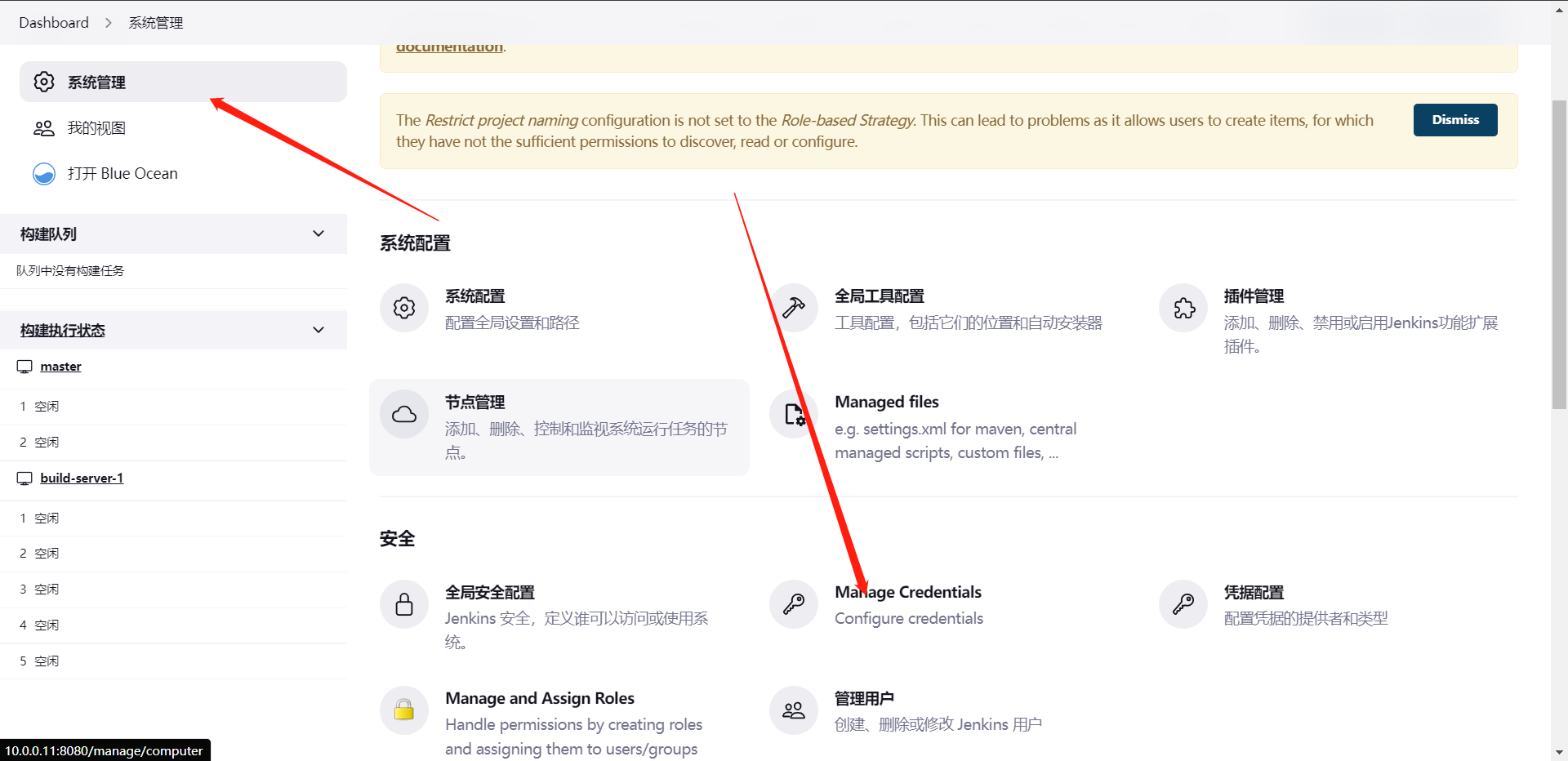

4:Jenkins权限管理

上面我们看到了用户管理,那么既然有用户,那么铁定少不了权限,所以,我们需要针对某些用户设置某些权限,那么下面我们就来学学权限是如何管理的

1:安装插件

Role-Based Strategy

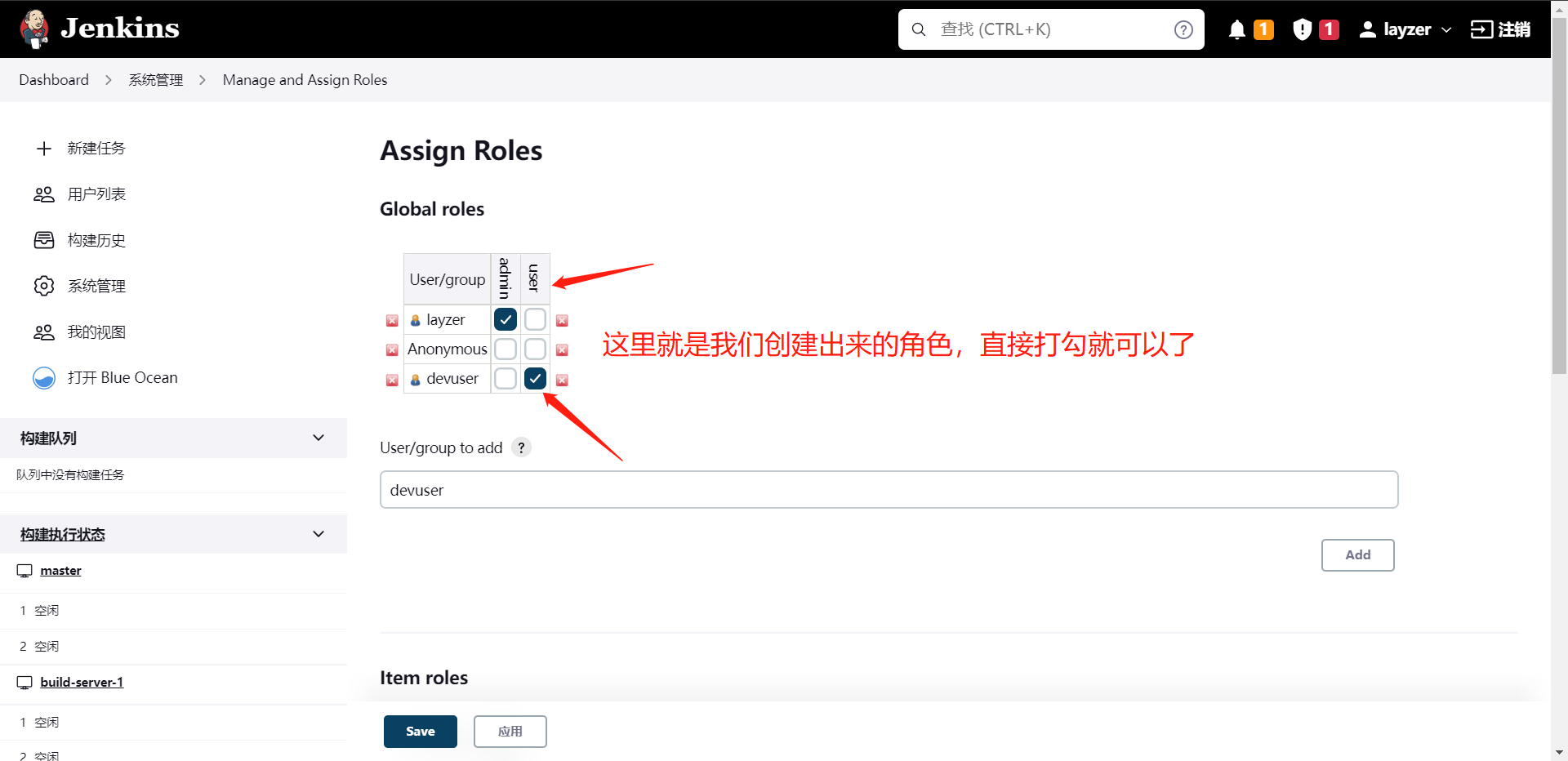

随后这个时候我们就不得不去创建一个测试帐号了(前面有点坑,忘了创建了)

Jenkins权限这一块分为

1:项目权限

2:用户权限

# 我这里还装了一个中文插件,大家有需要的可以自己去装一下,直接搜Chinese就搜到了。

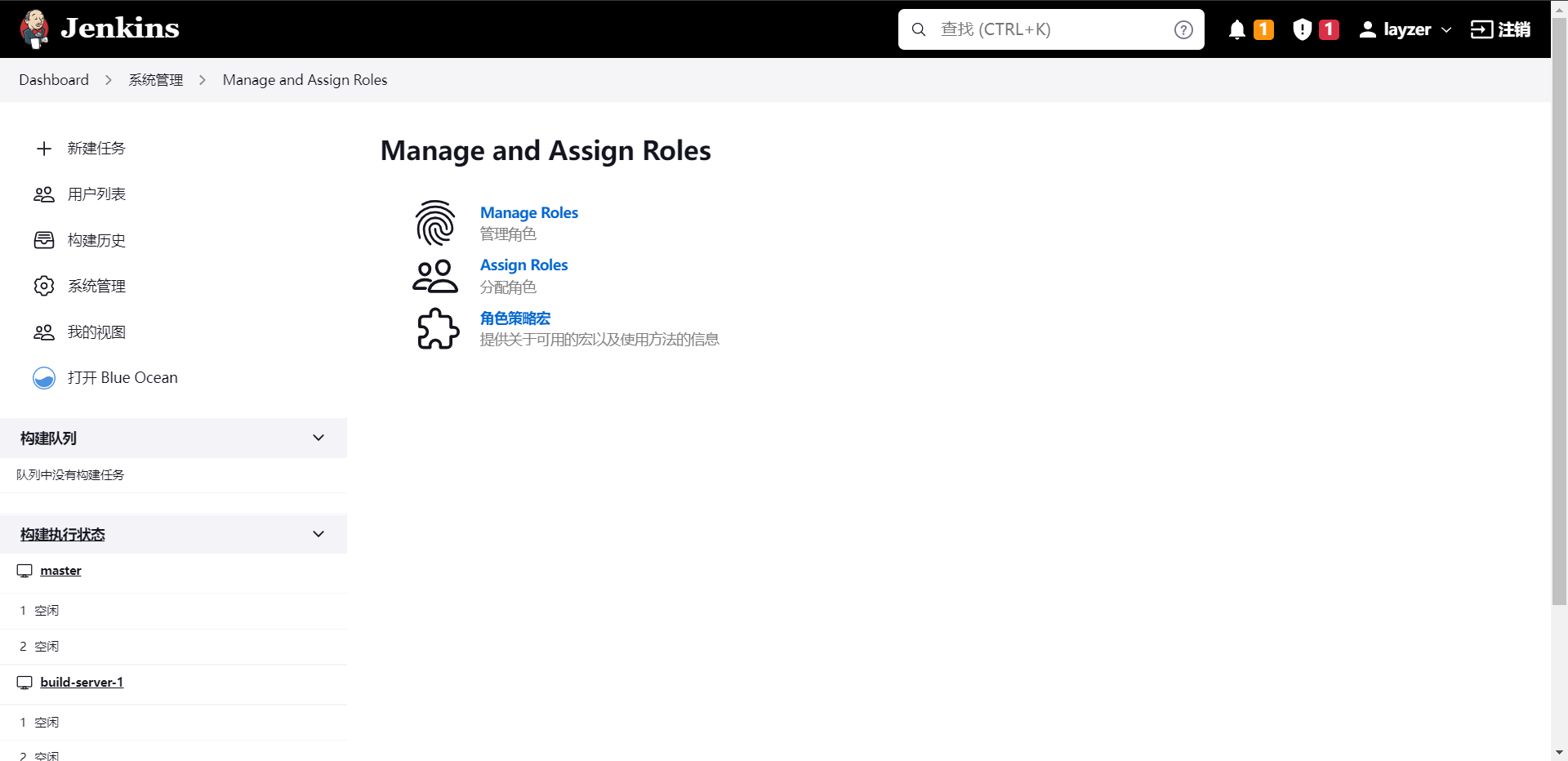

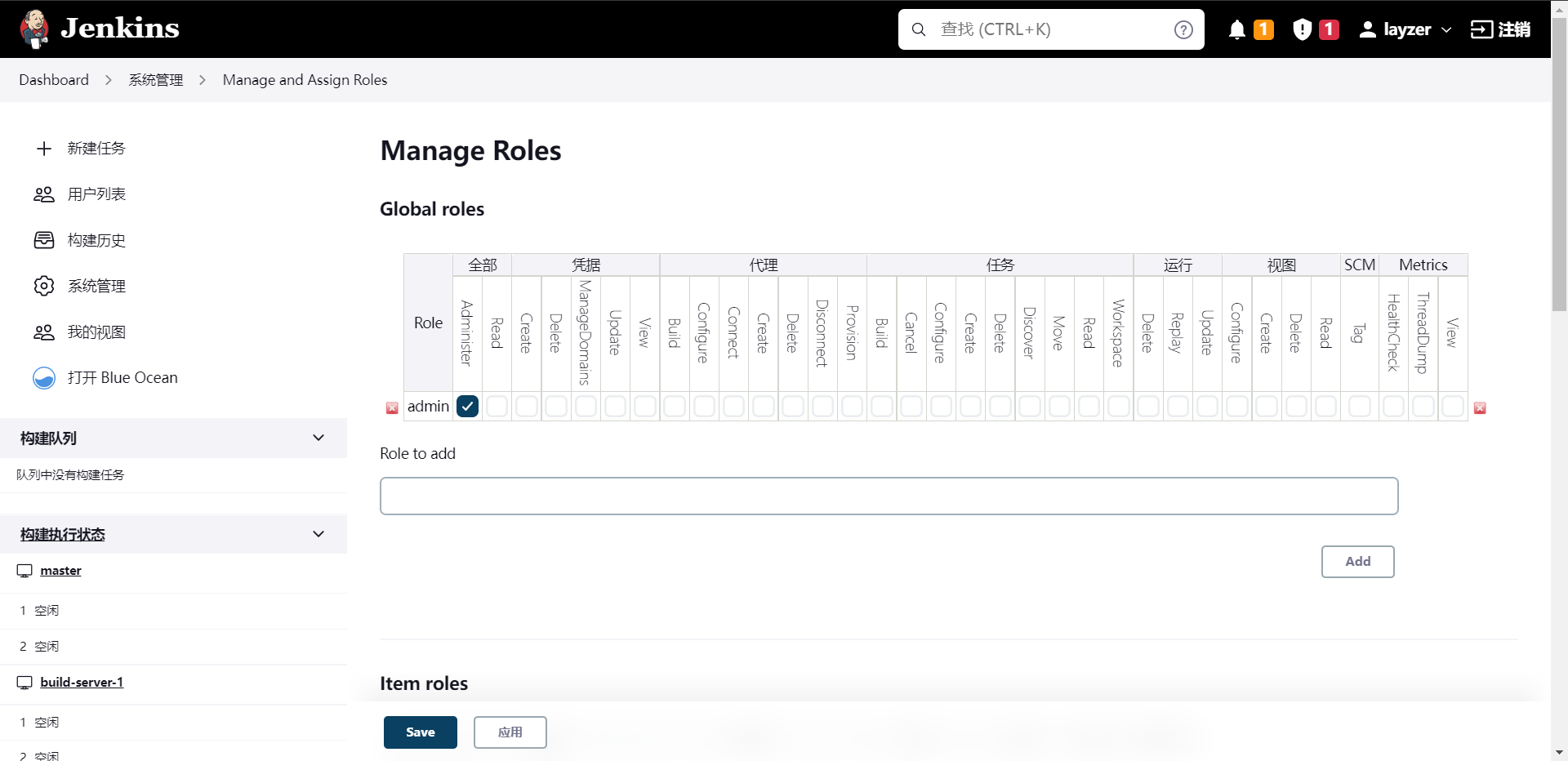

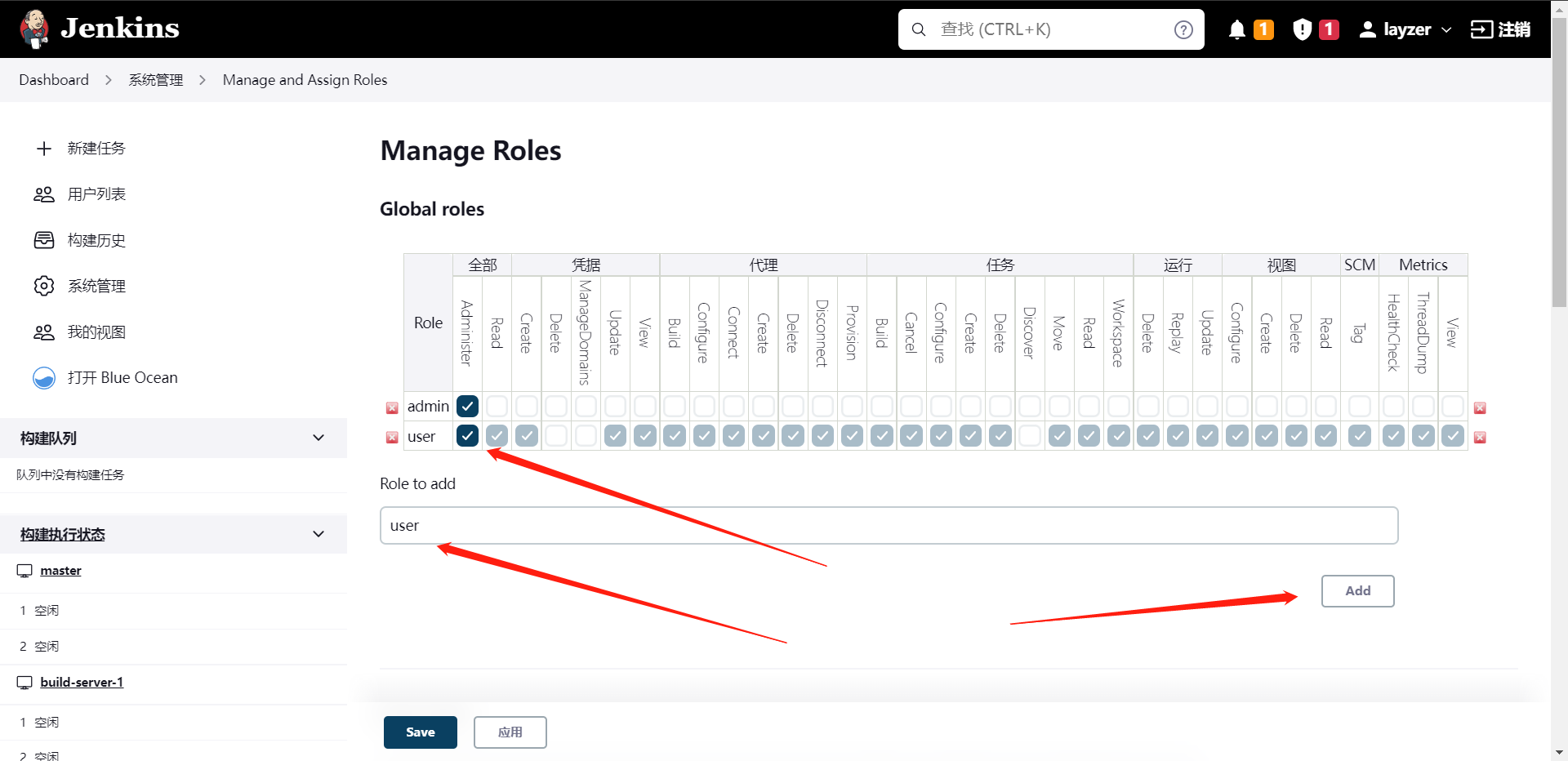

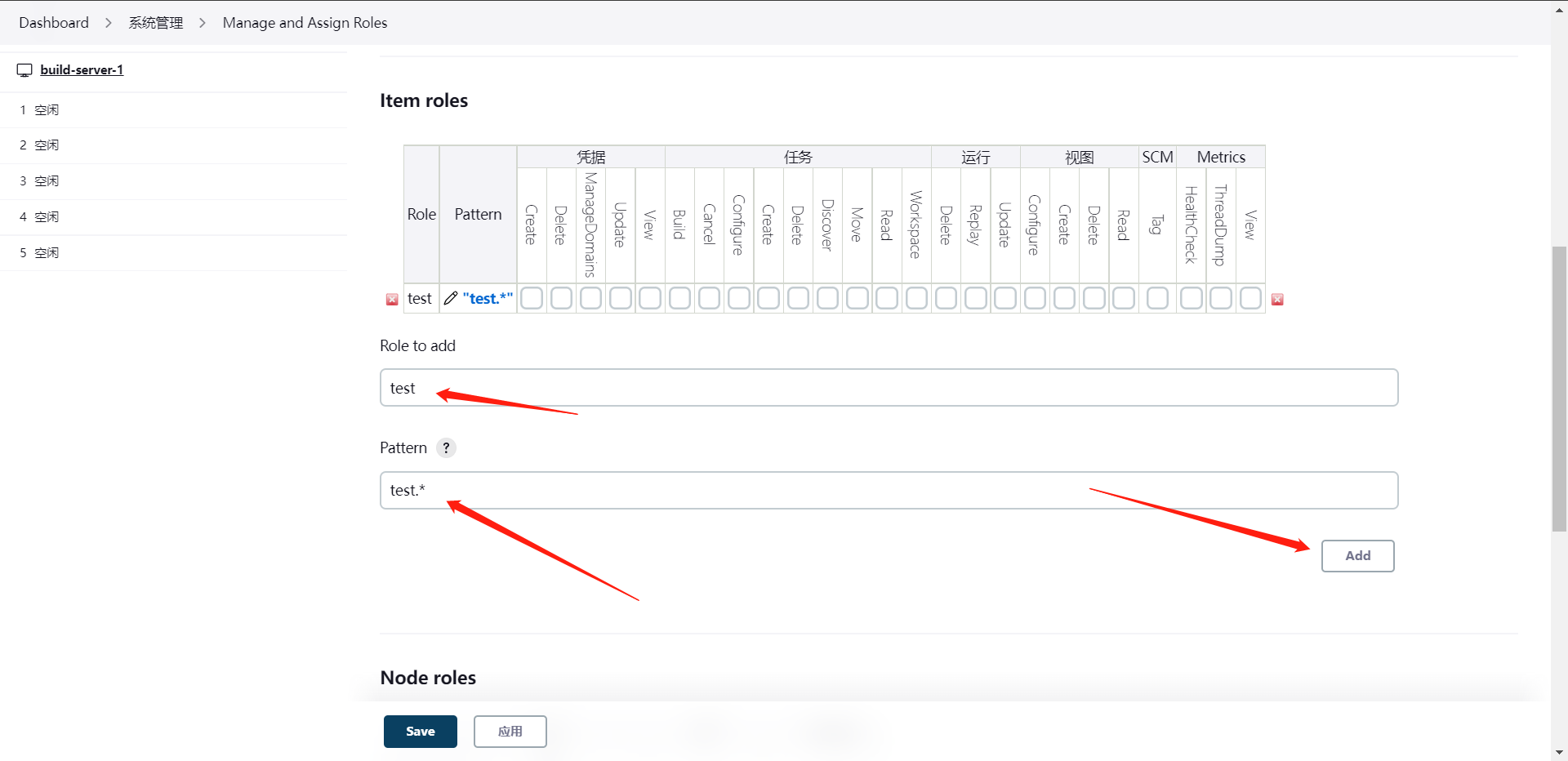

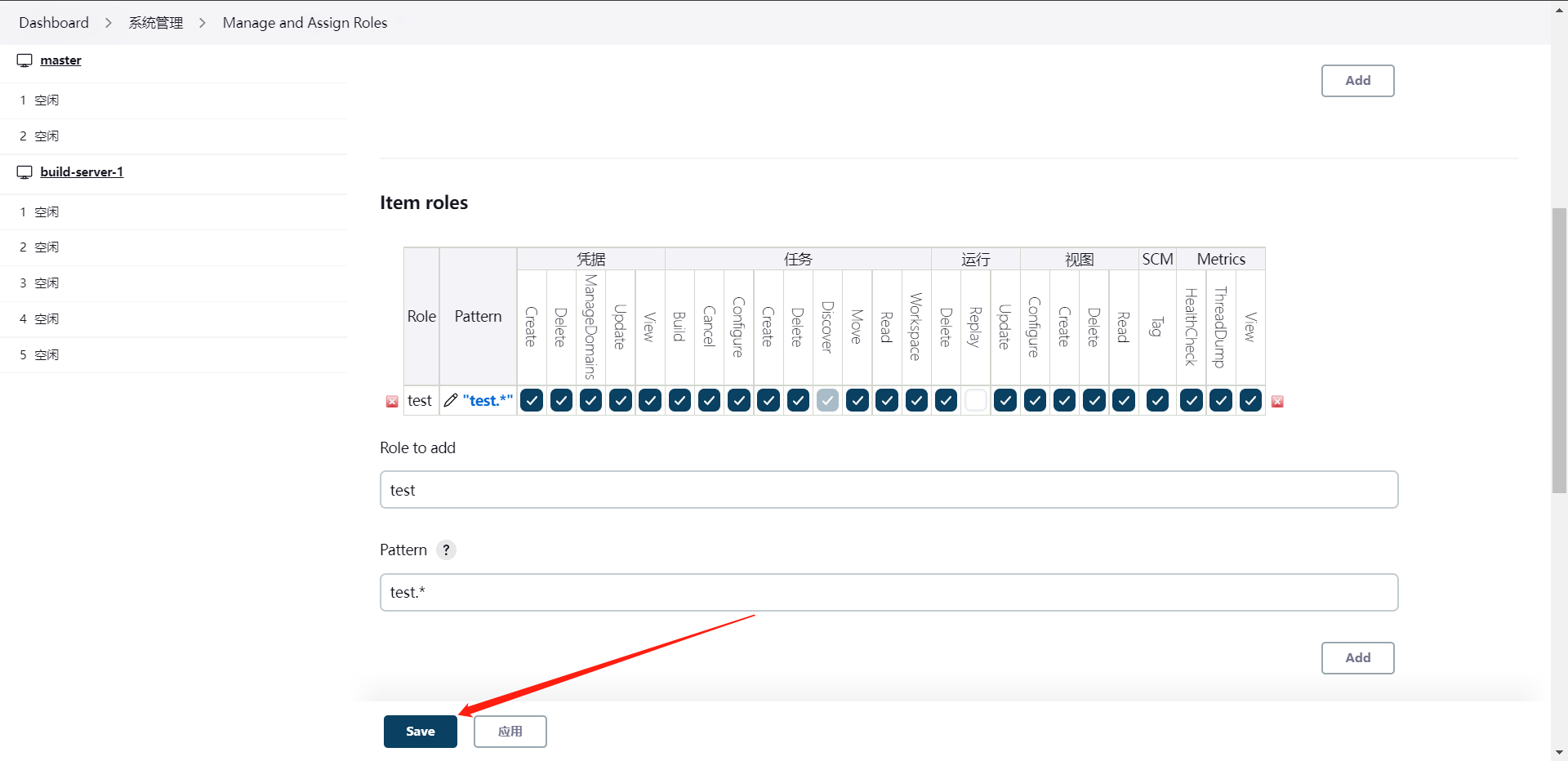

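First, look at `Manage Roles`: it lets us group a set of permissions into a role and then assign that role to one or more users, which gives those users all the permissions of the role.

Note that the permissions here are quite fine-grained: we can grant specific permissions directly to a user, or create a role, grant permissions to the role, and then assign the role to users.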

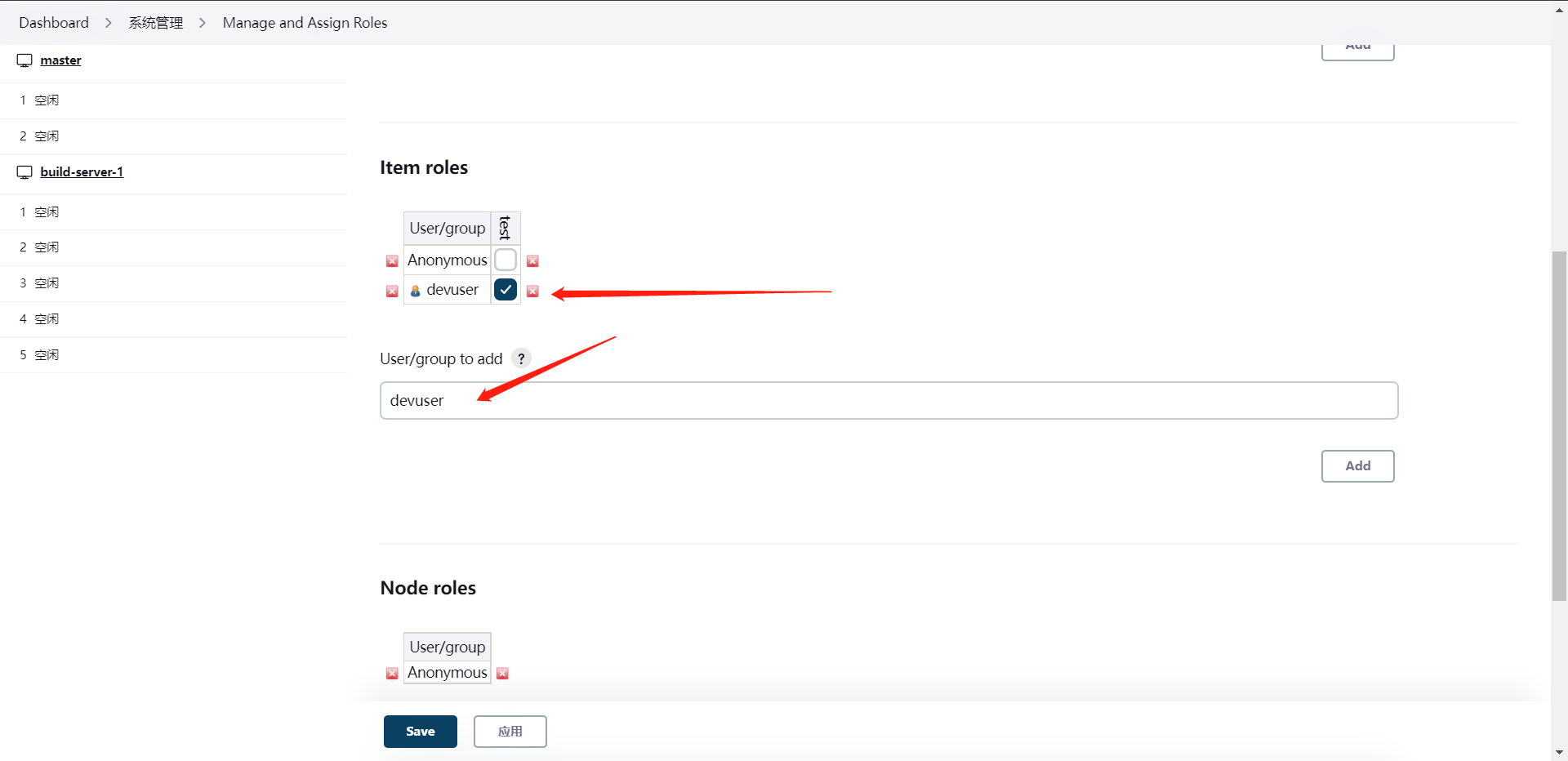

这里是基于项目,我们来做一下。

这样我们两个角色的权限就创建好了,然后我们就可以去分配角色了

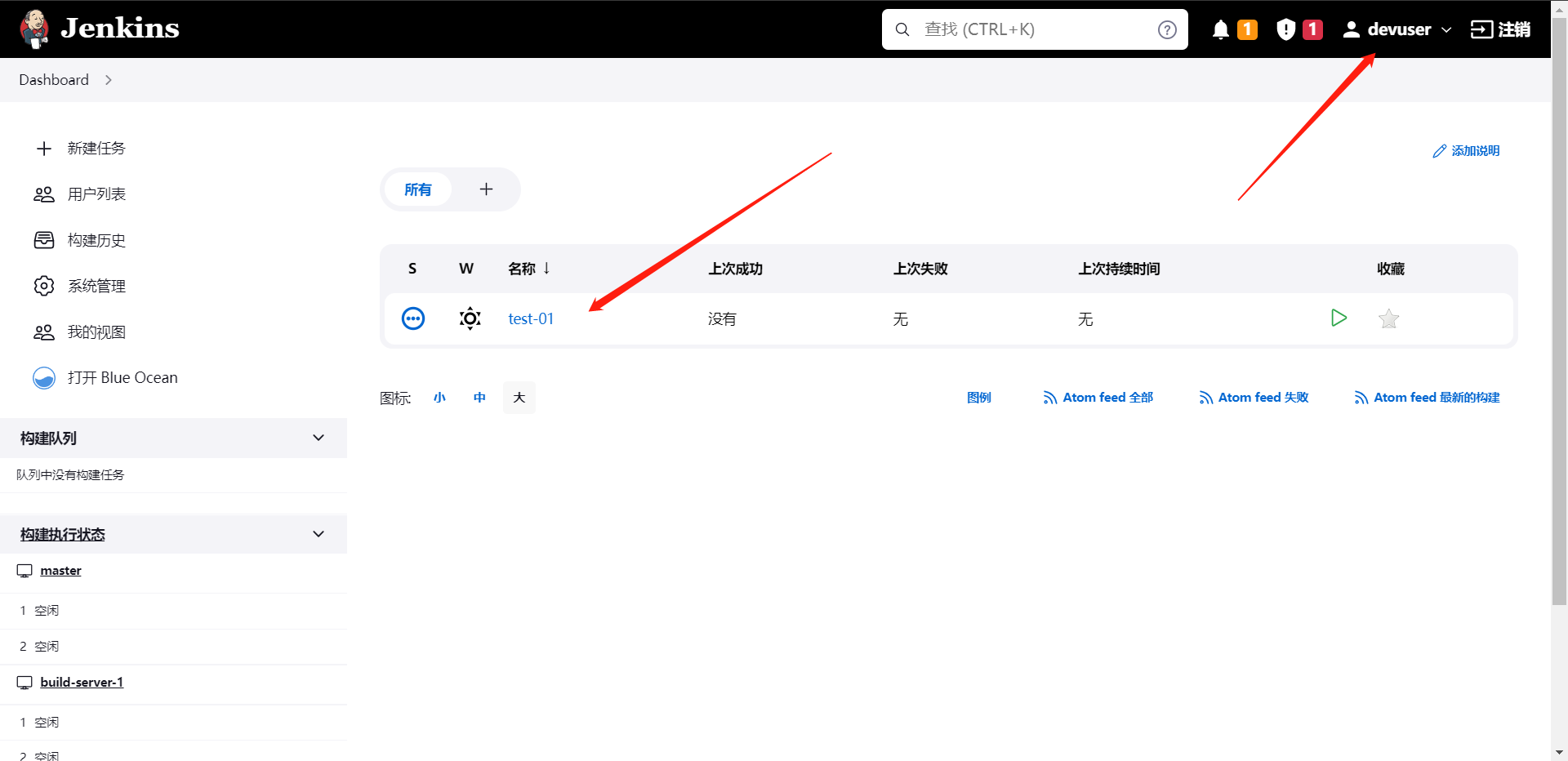

这样完事儿之后我们就可以尝试登录一下那个用户了,但是在登录前我们需要去创建一个项目符合我们的项目权限规则

且混账户查看权限是否生效

我这里给的权限是比较高的,大家有兴趣的可以去根据用户分配一下,比如说开发,不能更改流水线,不能删除流水线,不能创建流水线等操作,这个都可以实现

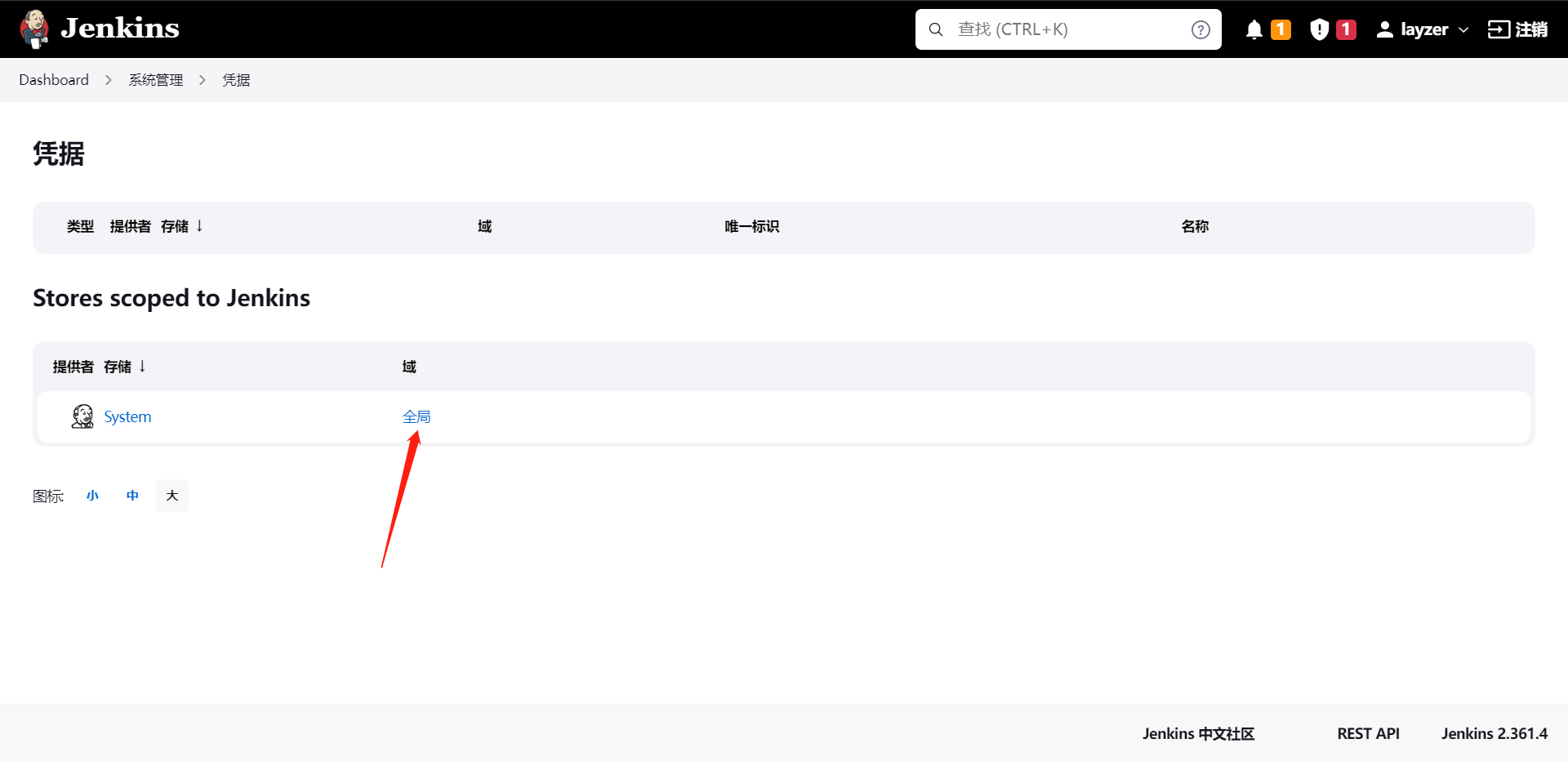

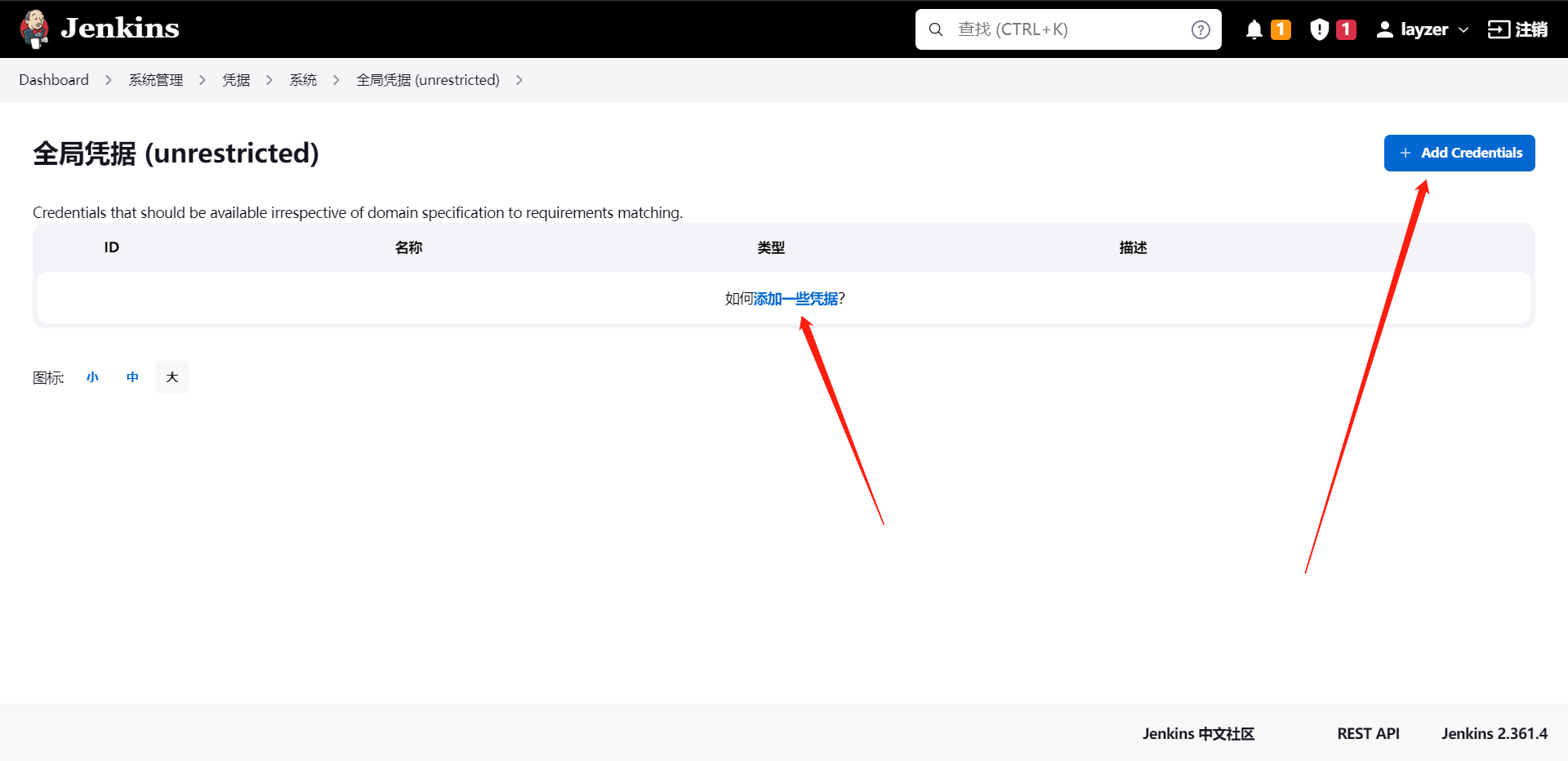

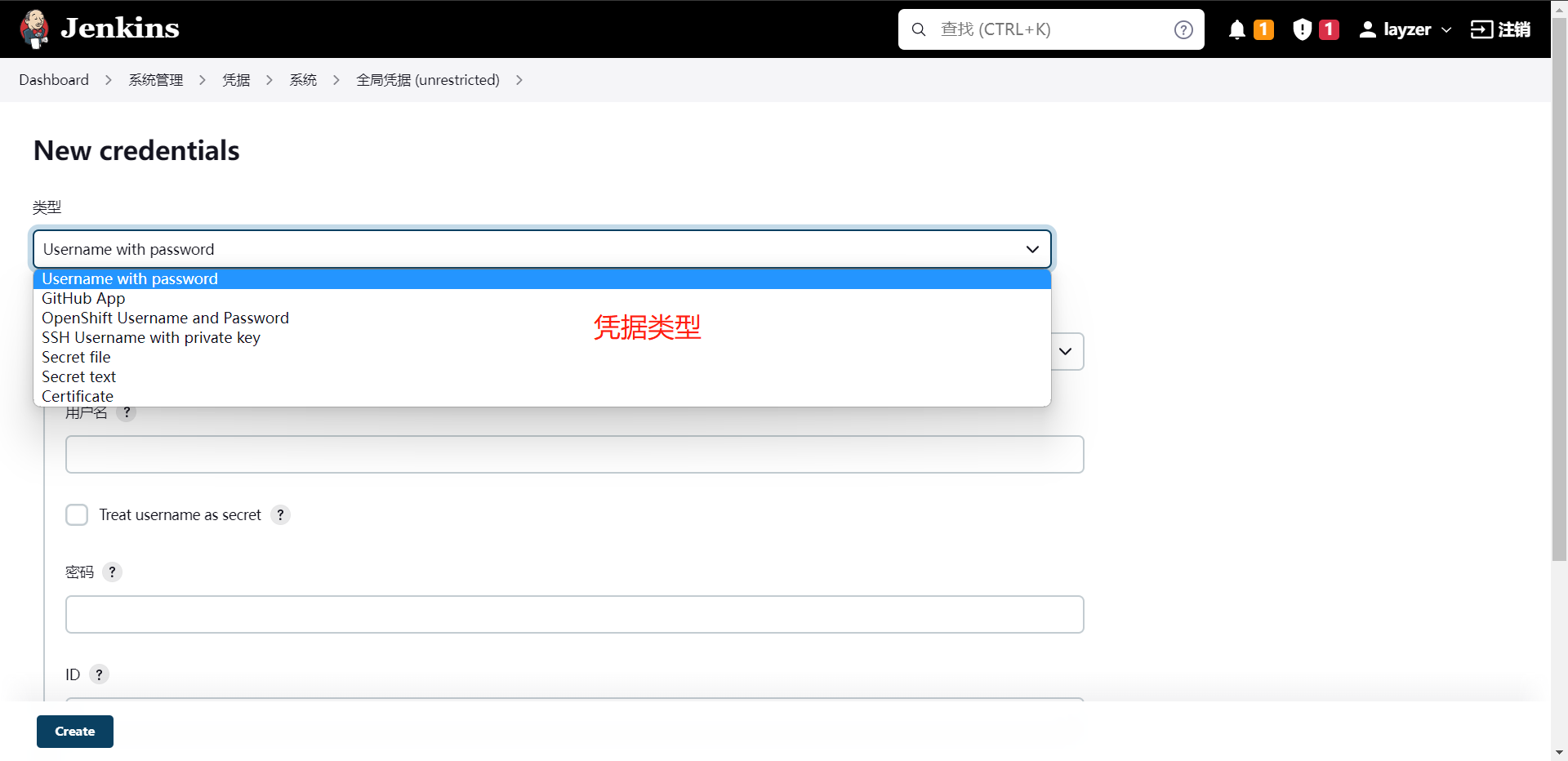

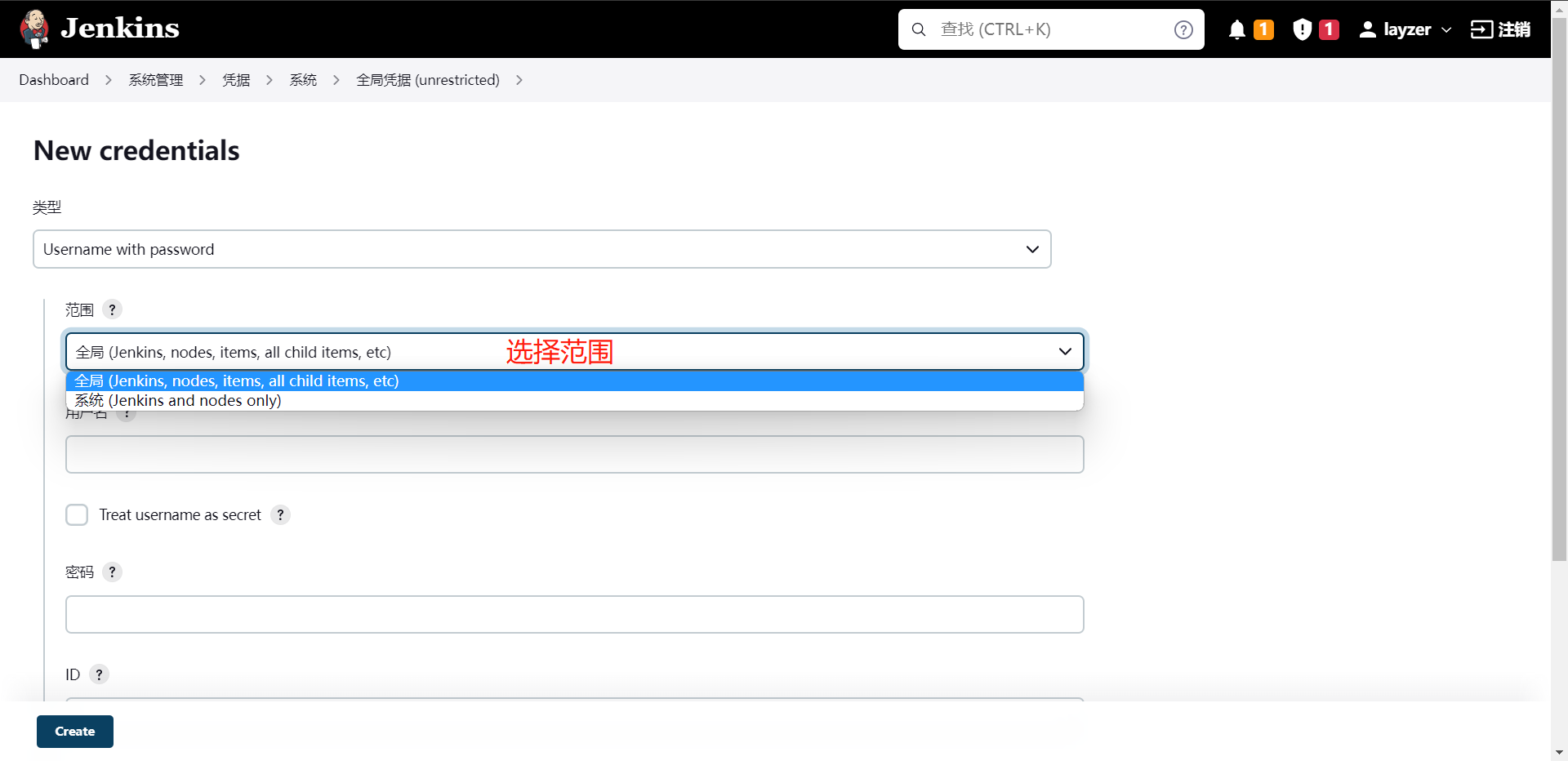

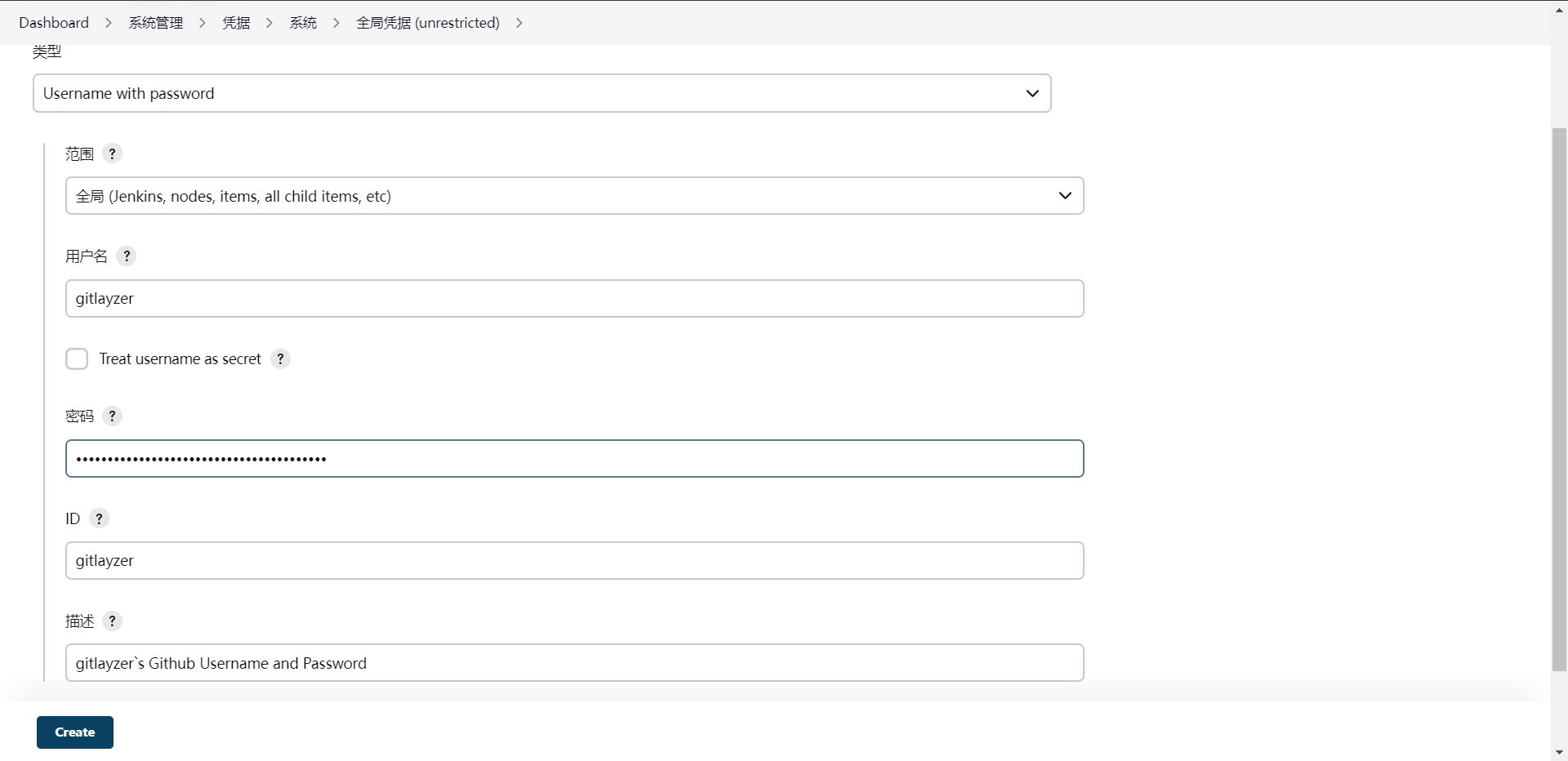

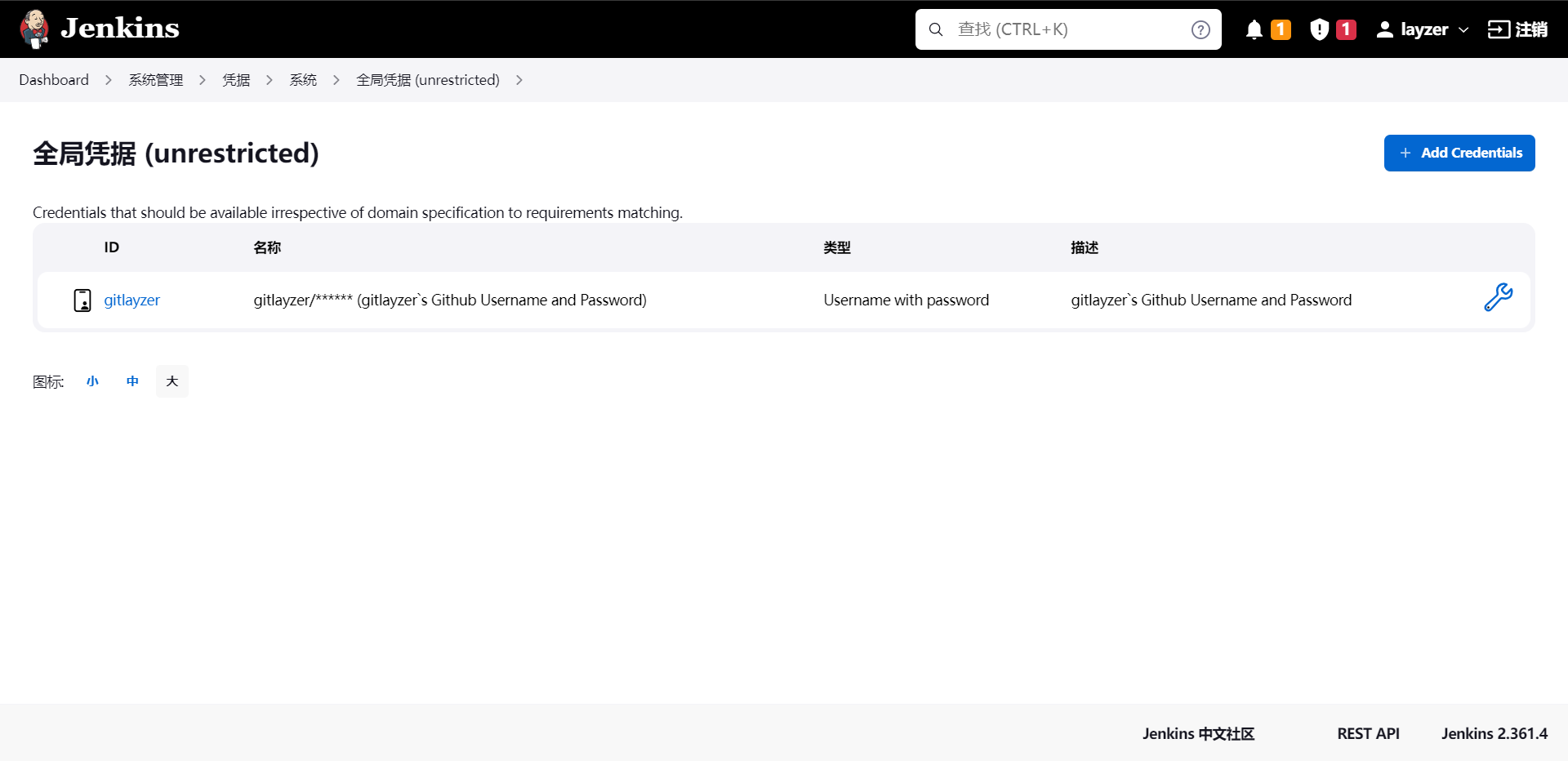

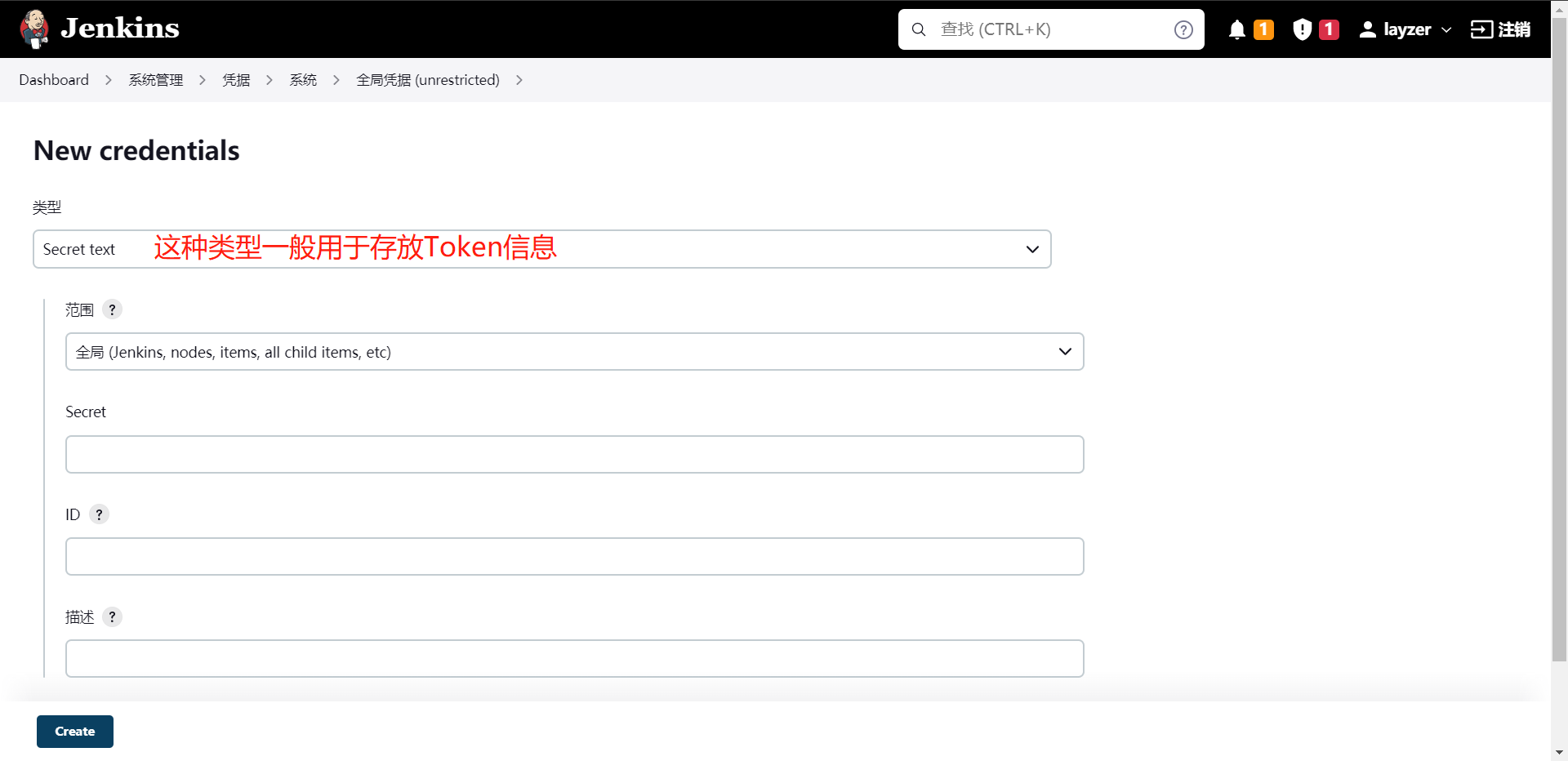

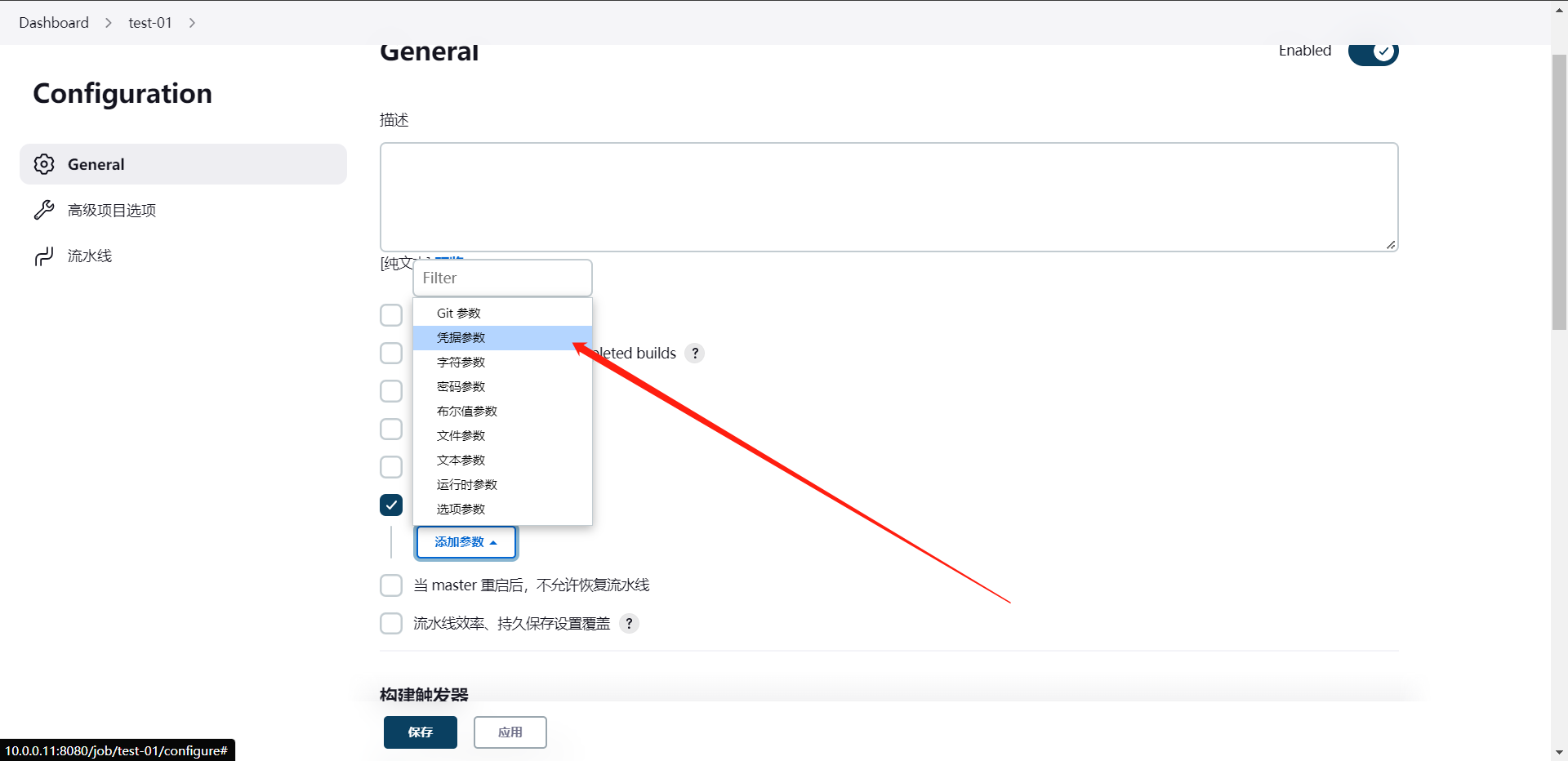

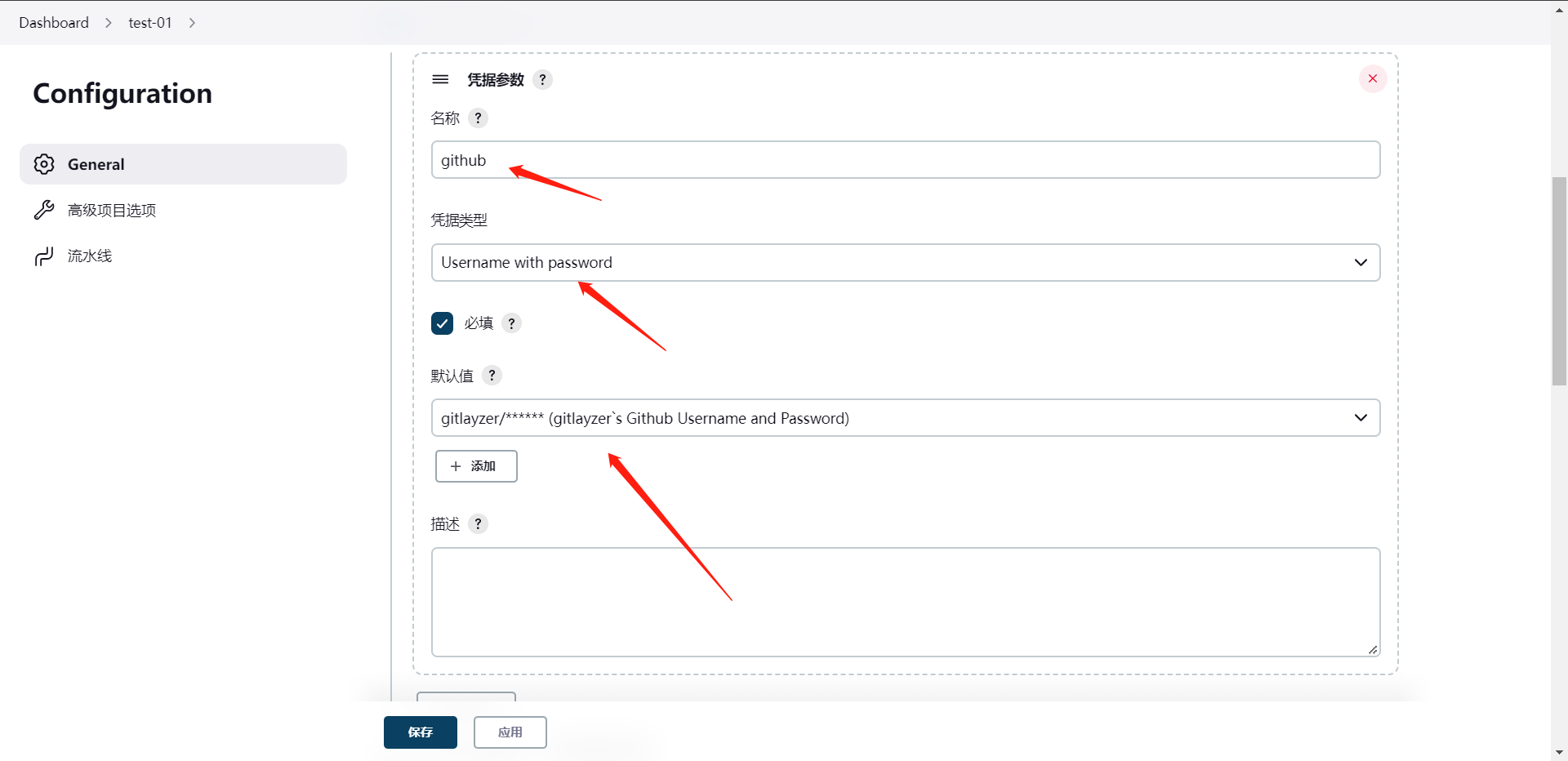

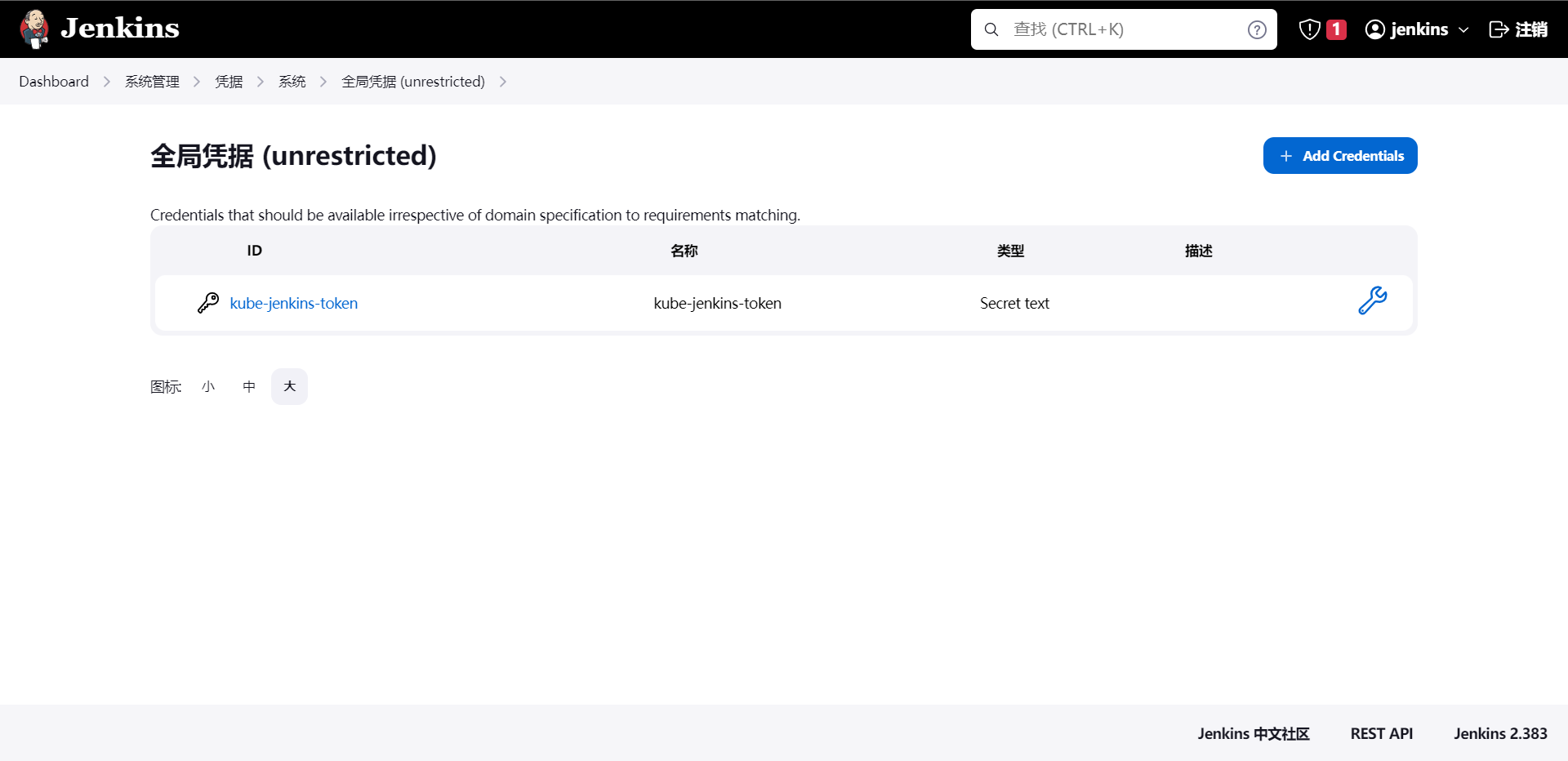

5:Jenkins凭据管理与应用

这个凭据我们使用起来是非常的多的,比如我们会用到的Gitlab/Github/BitBucket,Harbor/Jfrog/Nexus/等验证的账号密码需要存储,那么就可以使用凭据管理来存储这些数据。

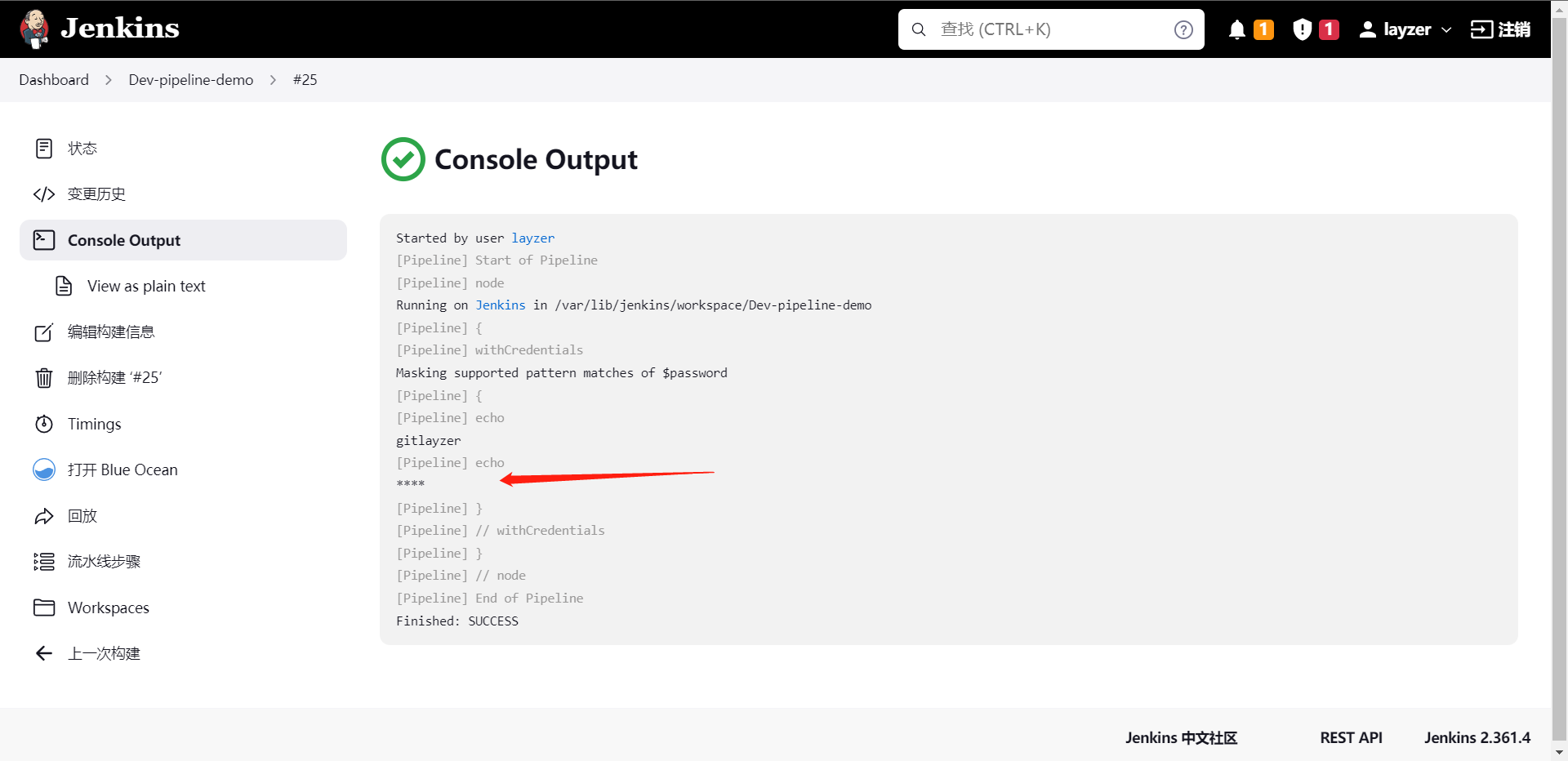

那么既然我们配置了凭据,那么我们什么时候才会去用凭据呢?那么我们就使用上面创建好的项目来操作使用一下流水线

当我们配置到这里之后,我们的自动化过程中就可以使用变量来引用这些配置,然后自动的填入我们想要使用的地方,那么后面实战我们会来应用的。

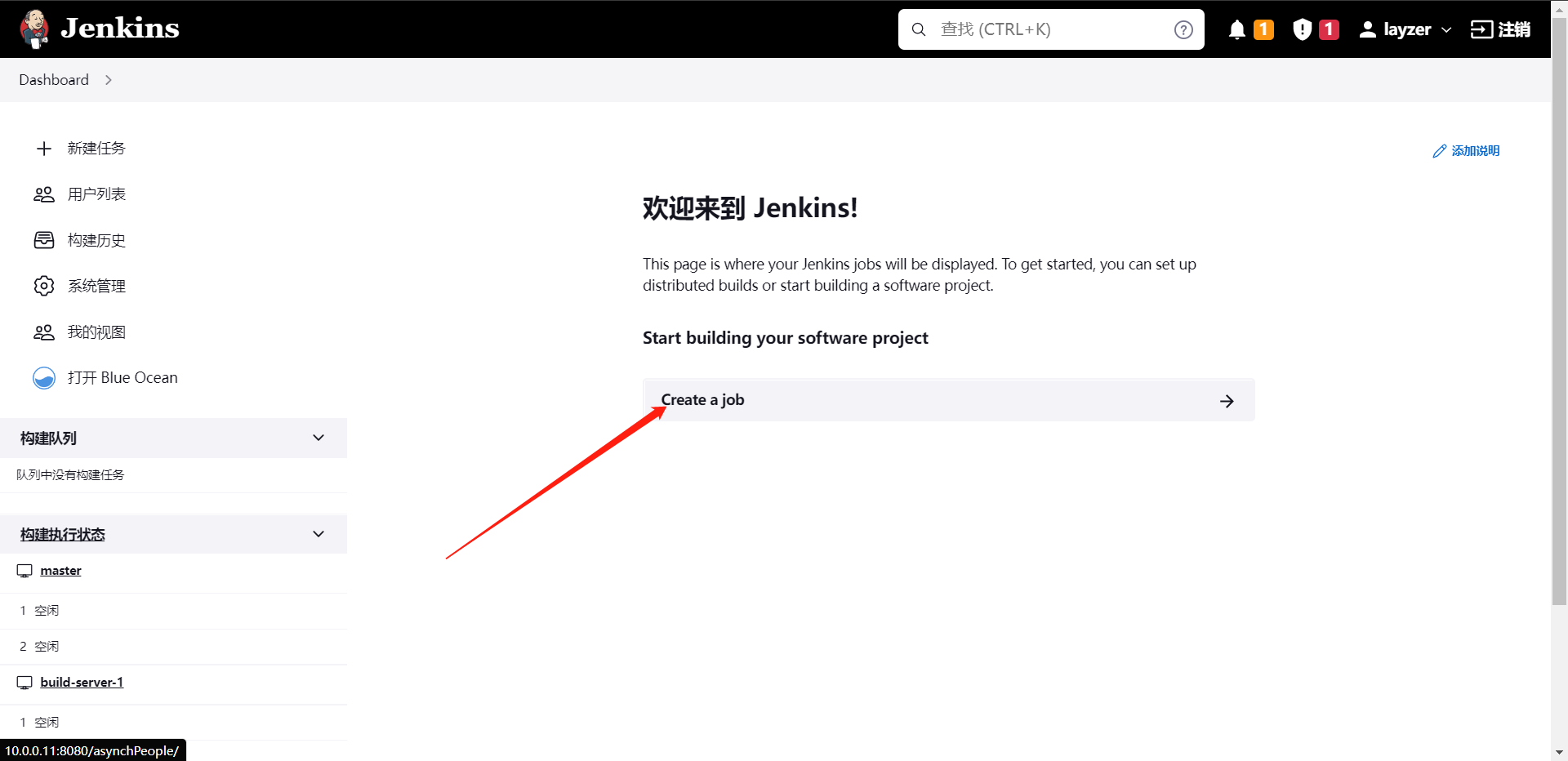

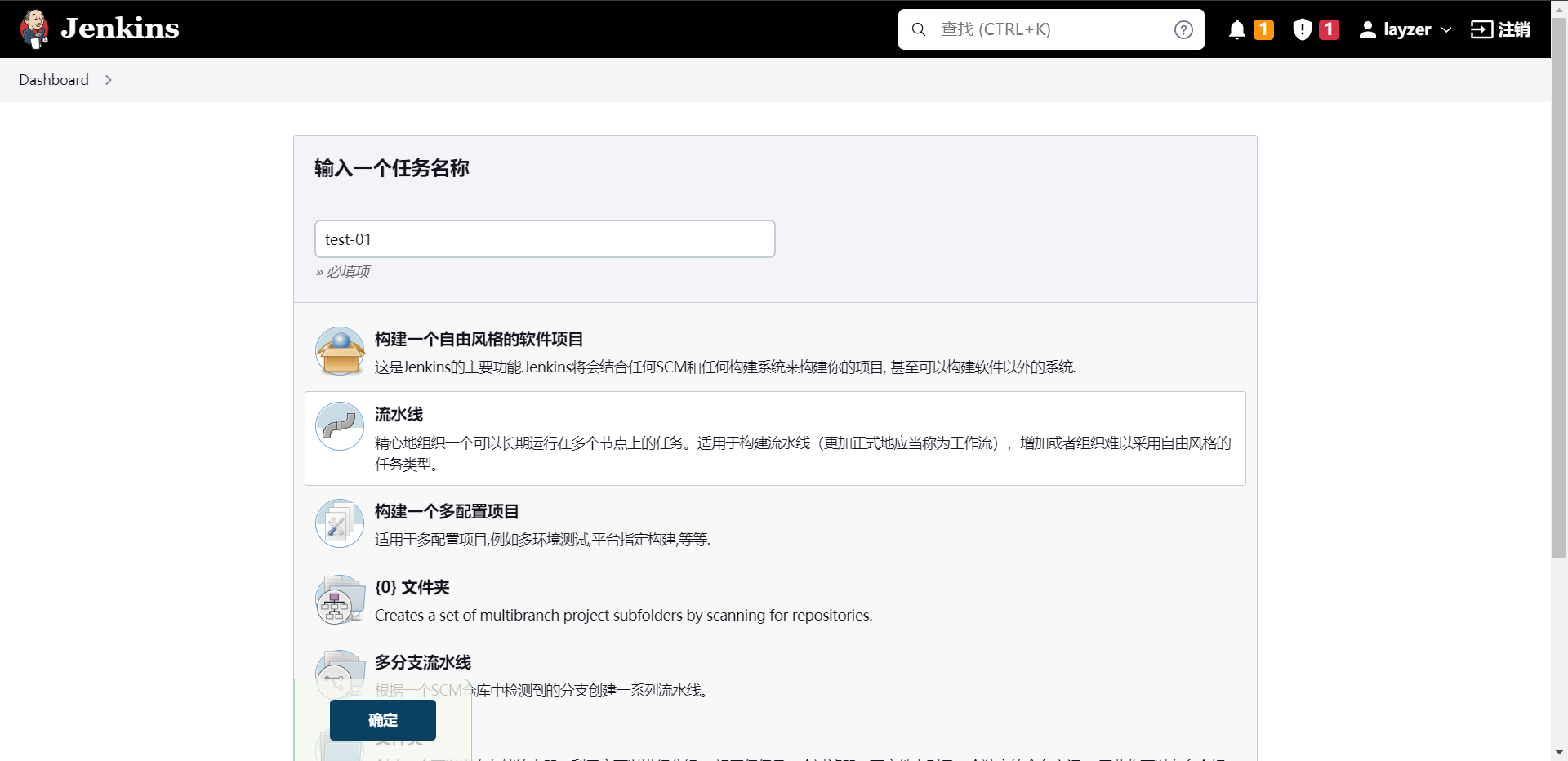

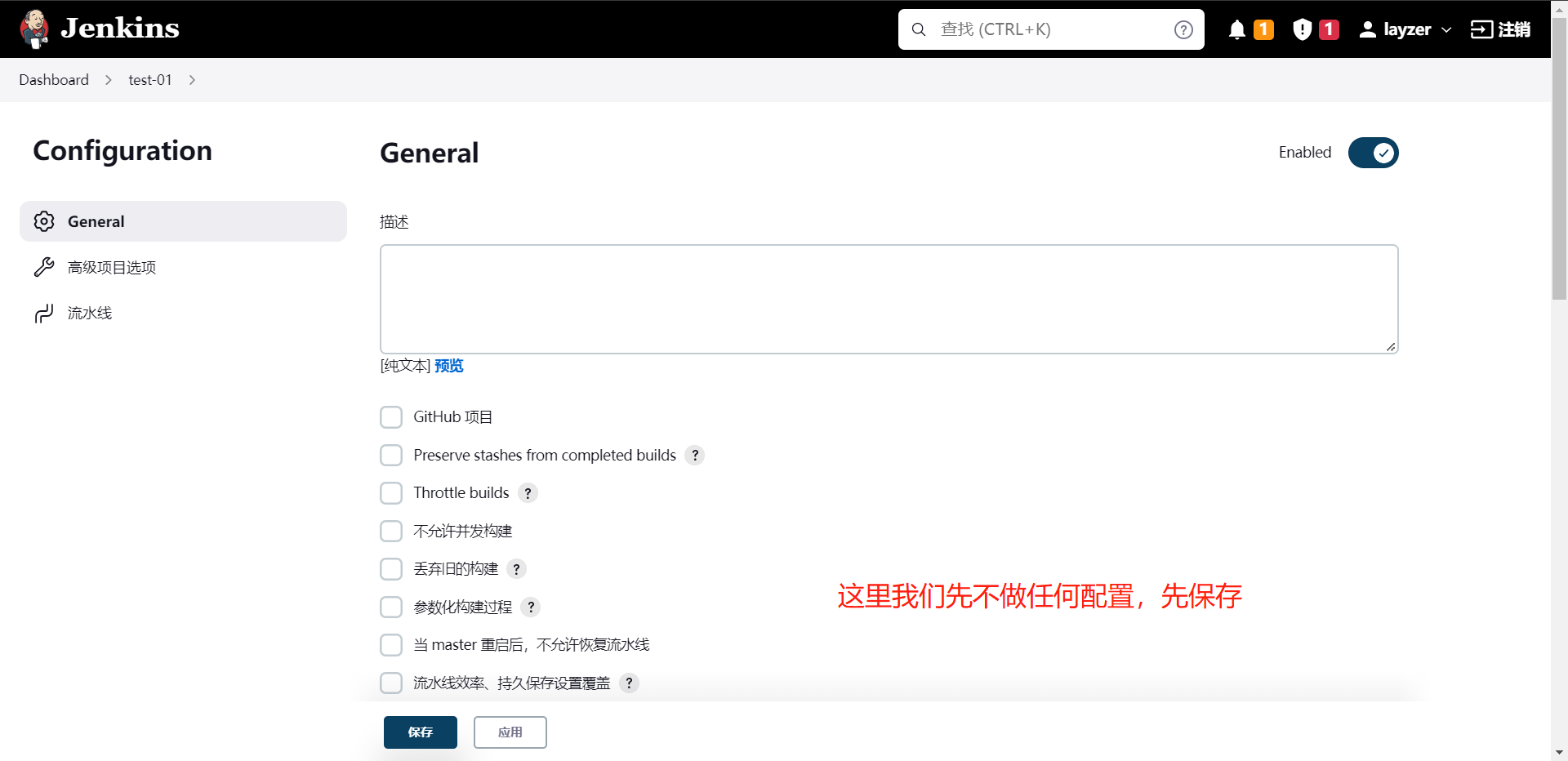

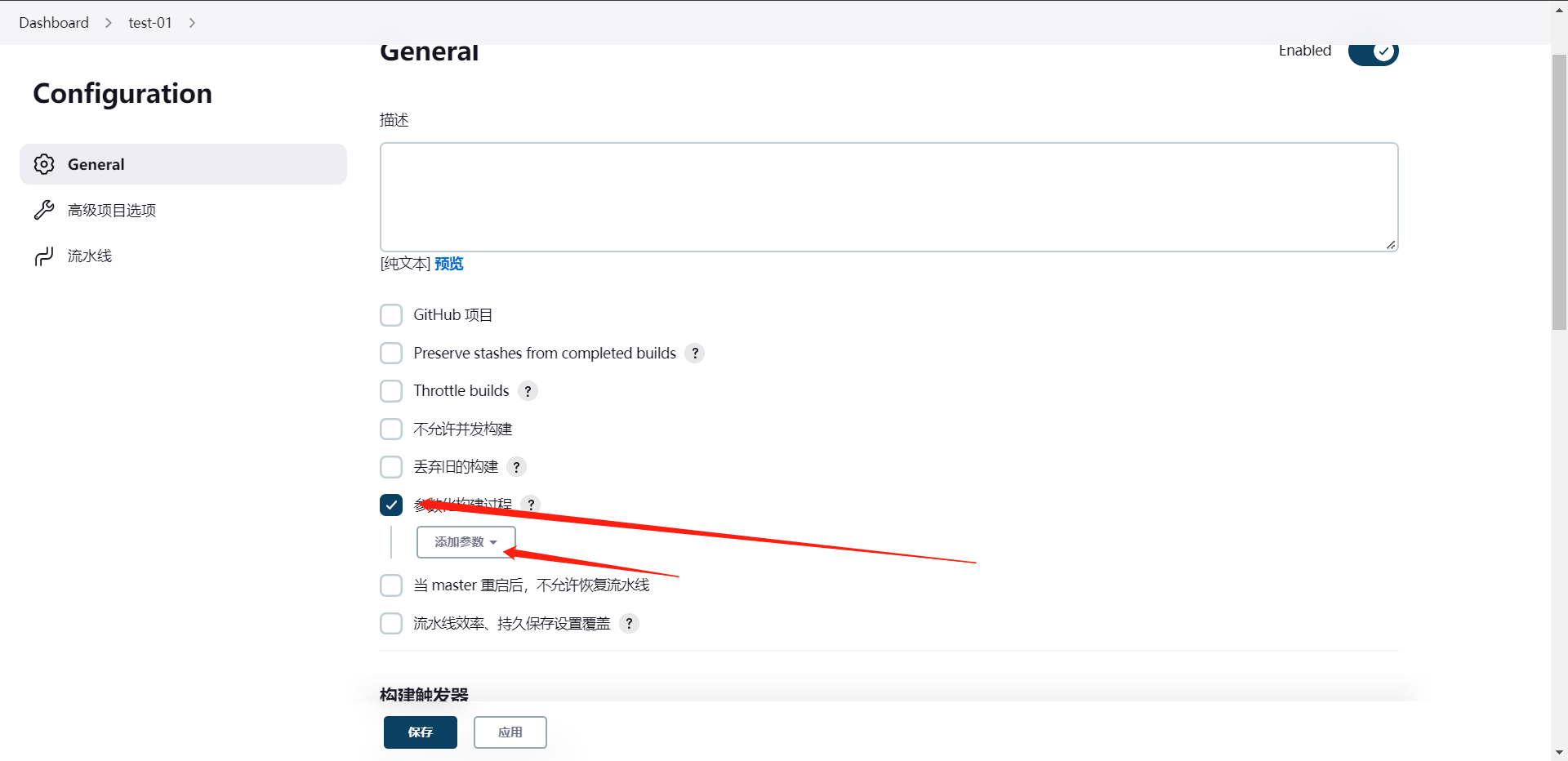

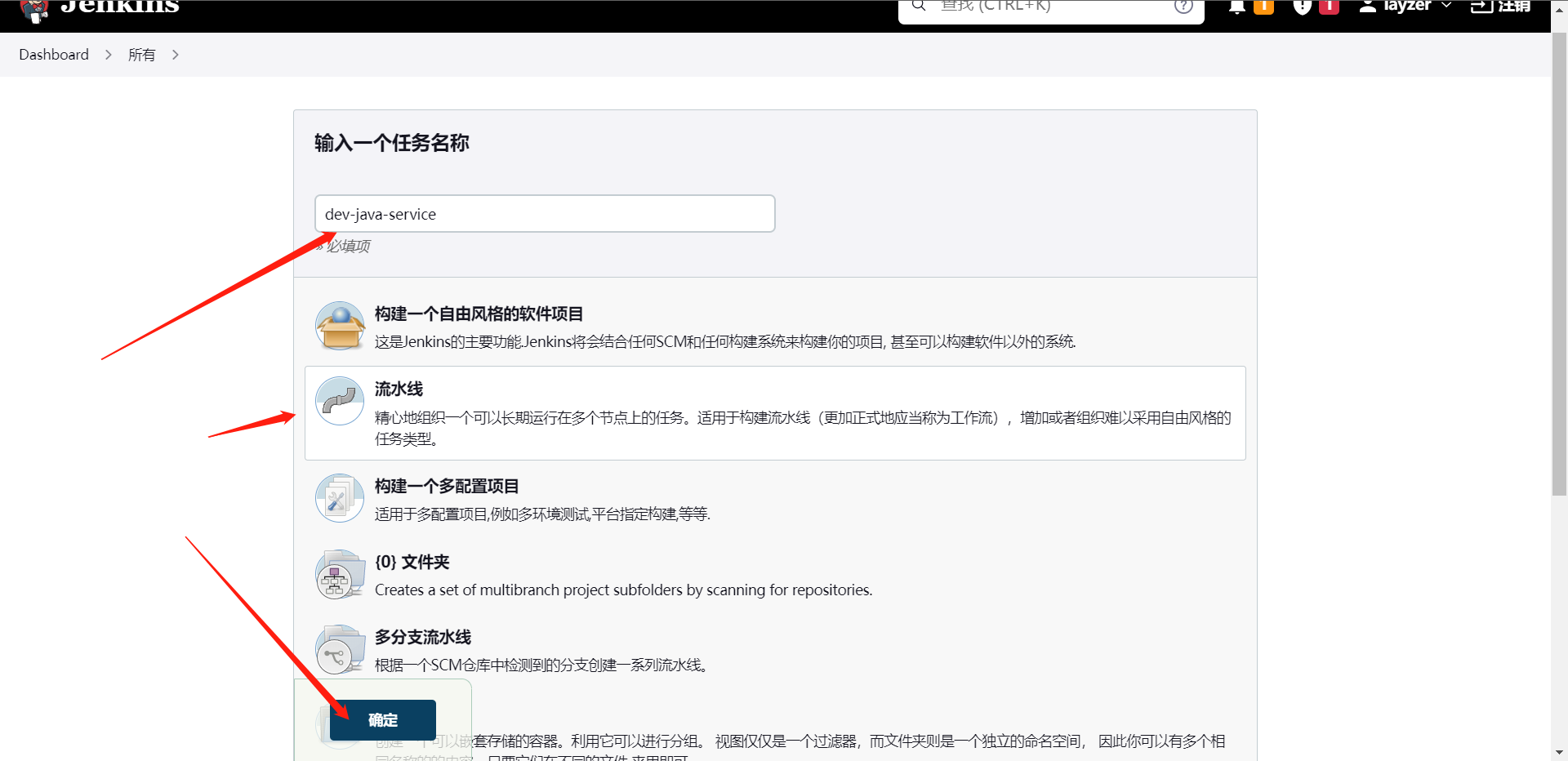

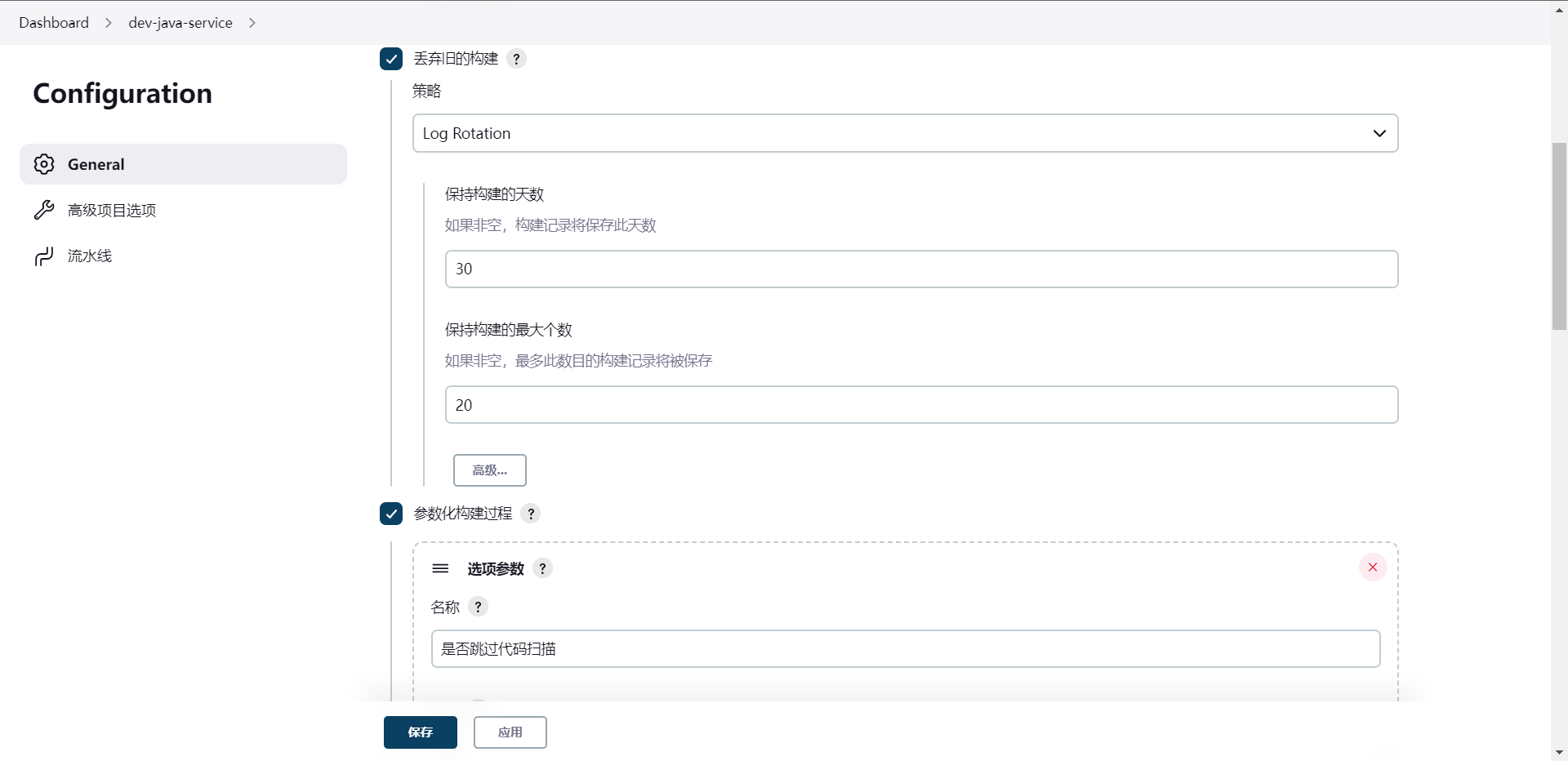

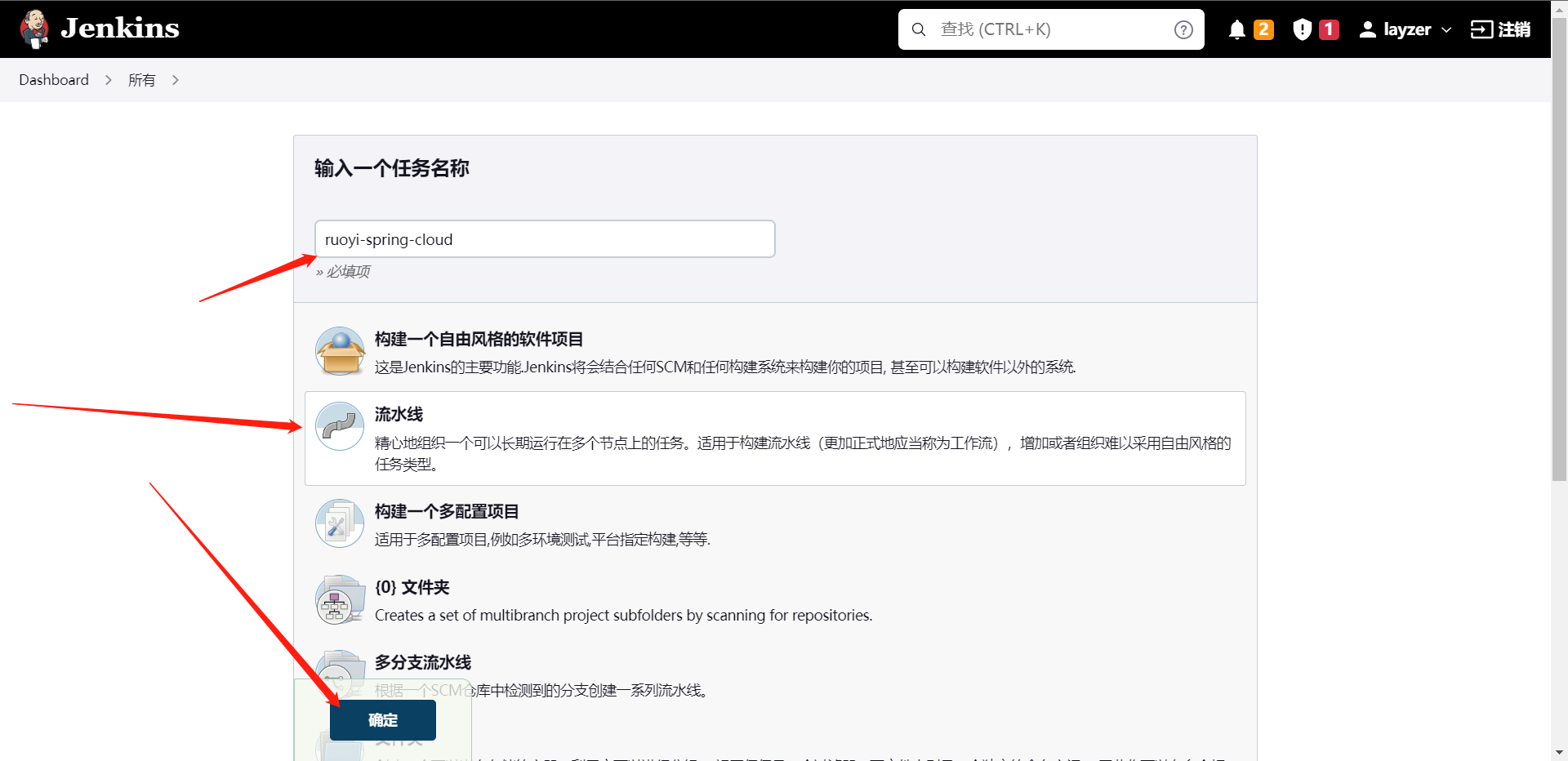
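As a preview, here is a minimal sketch of how a pipeline can consume a stored credential. It assumes a hypothetical "Username with password" credential whose ID is `git-account`; use whatever ID you created. `withCredentials` binds the values to environment variables only inside its block, and Jenkins masks them in the console log. The single-quoted `sh` string lets the shell, not Groovy, expand the variables, so the secret is never interpolated into a Groovy string.

```groovy
pipeline {
    agent any
    stages {
        stage('UseCredential') {
            steps {
                // 'git-account' is a hypothetical credential ID; replace it with your own
                withCredentials([usernamePassword(credentialsId: 'git-account',
                                                  usernameVariable: 'GIT_USER',
                                                  passwordVariable: 'GIT_PASS')]) {
                    // single quotes: the shell expands $GIT_USER, Groovy never sees the value
                    sh 'echo "logged in as $GIT_USER"'
                }
            }
        }
    }
}
```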

6:Jenkins Project Management

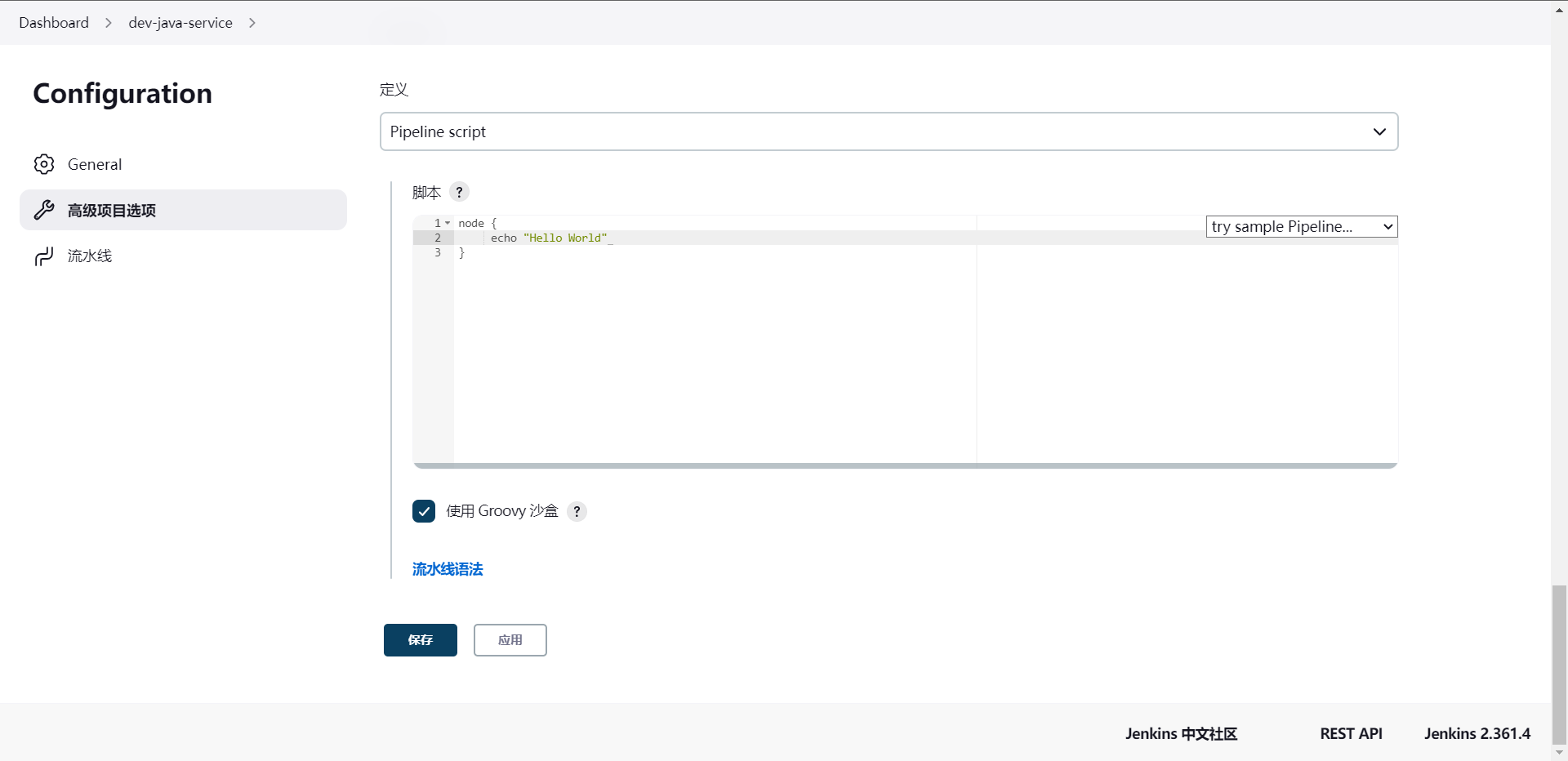

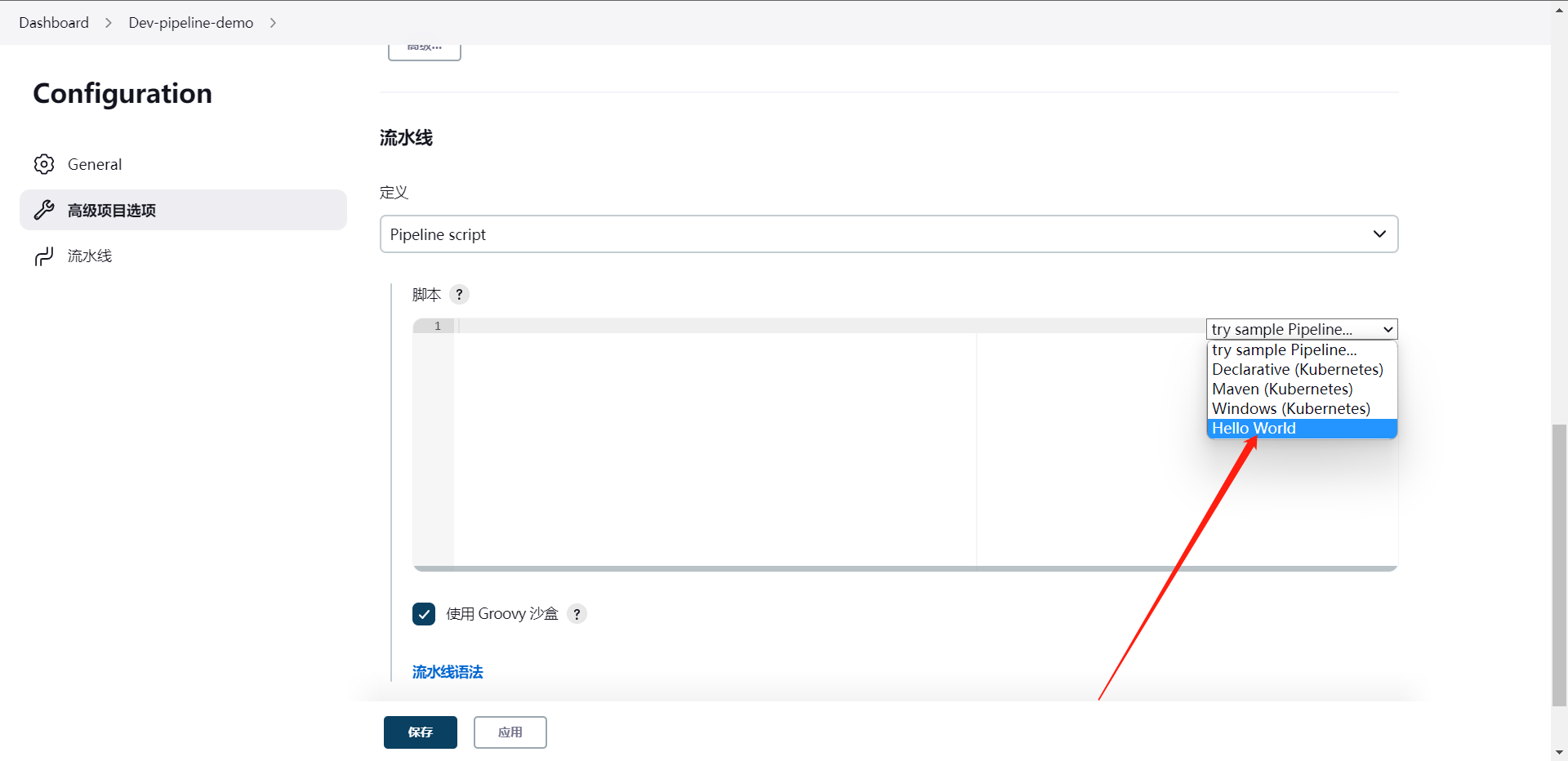

To create a pipeline project, simply choose the Pipeline job type.

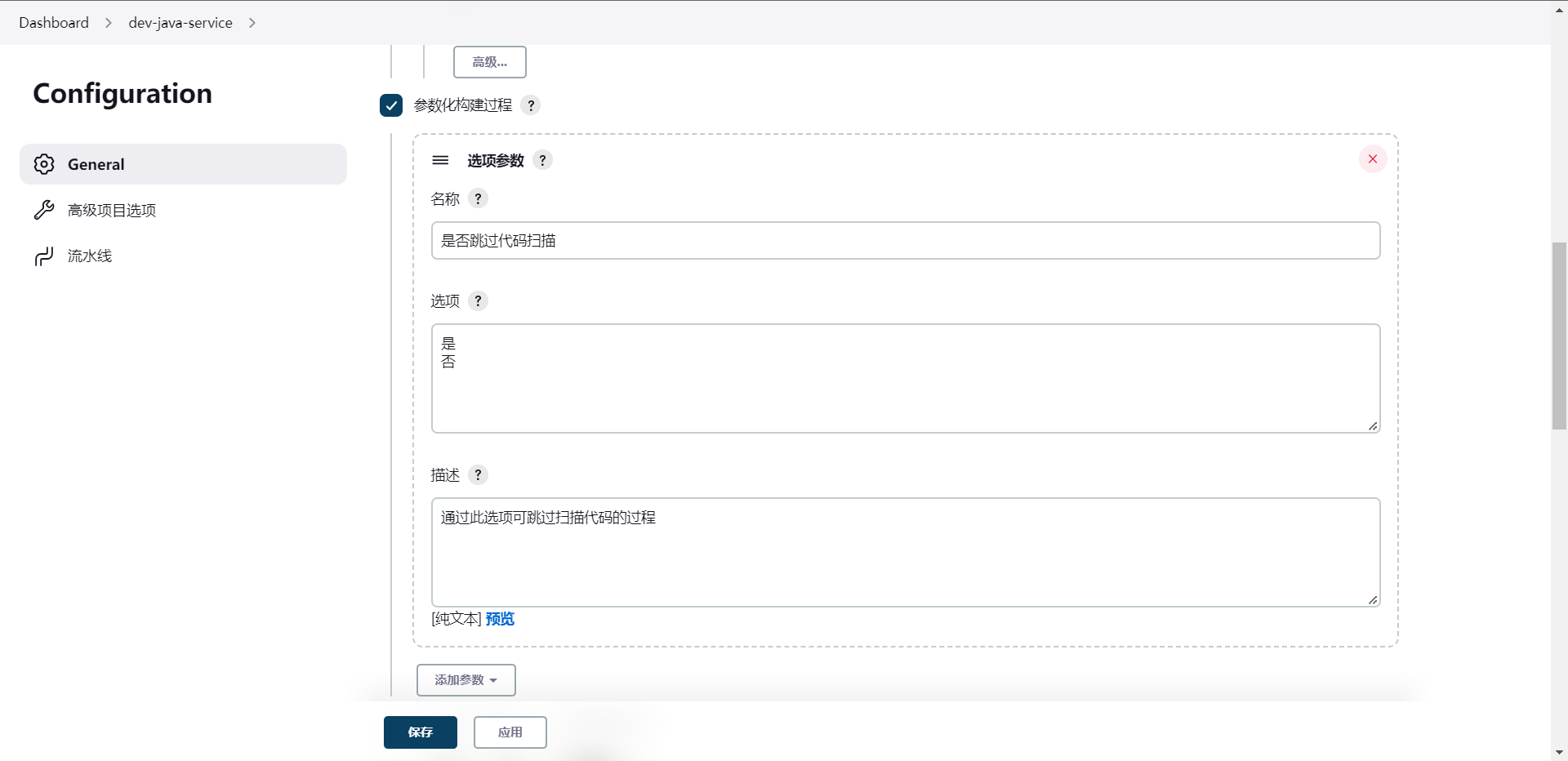

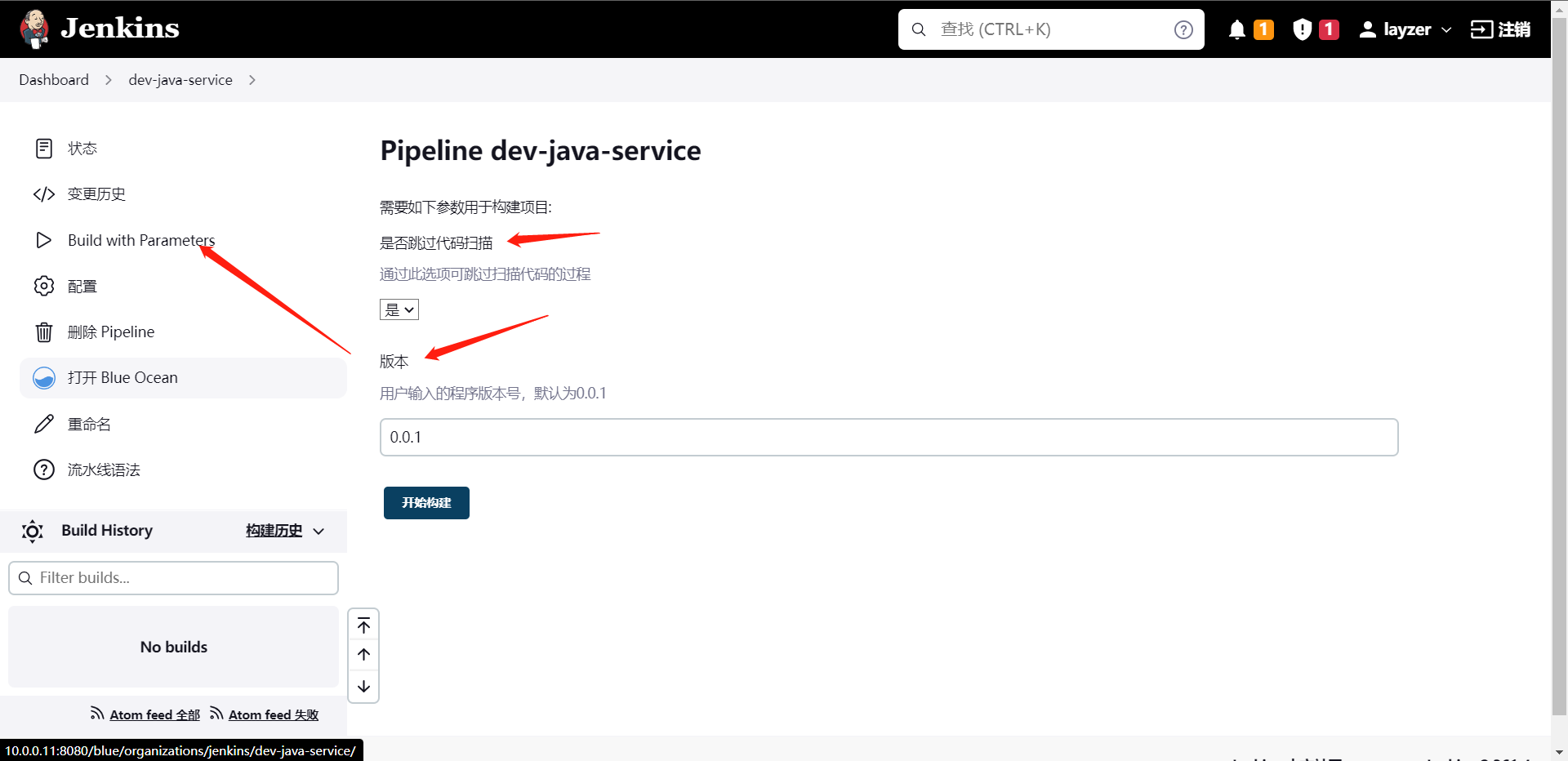

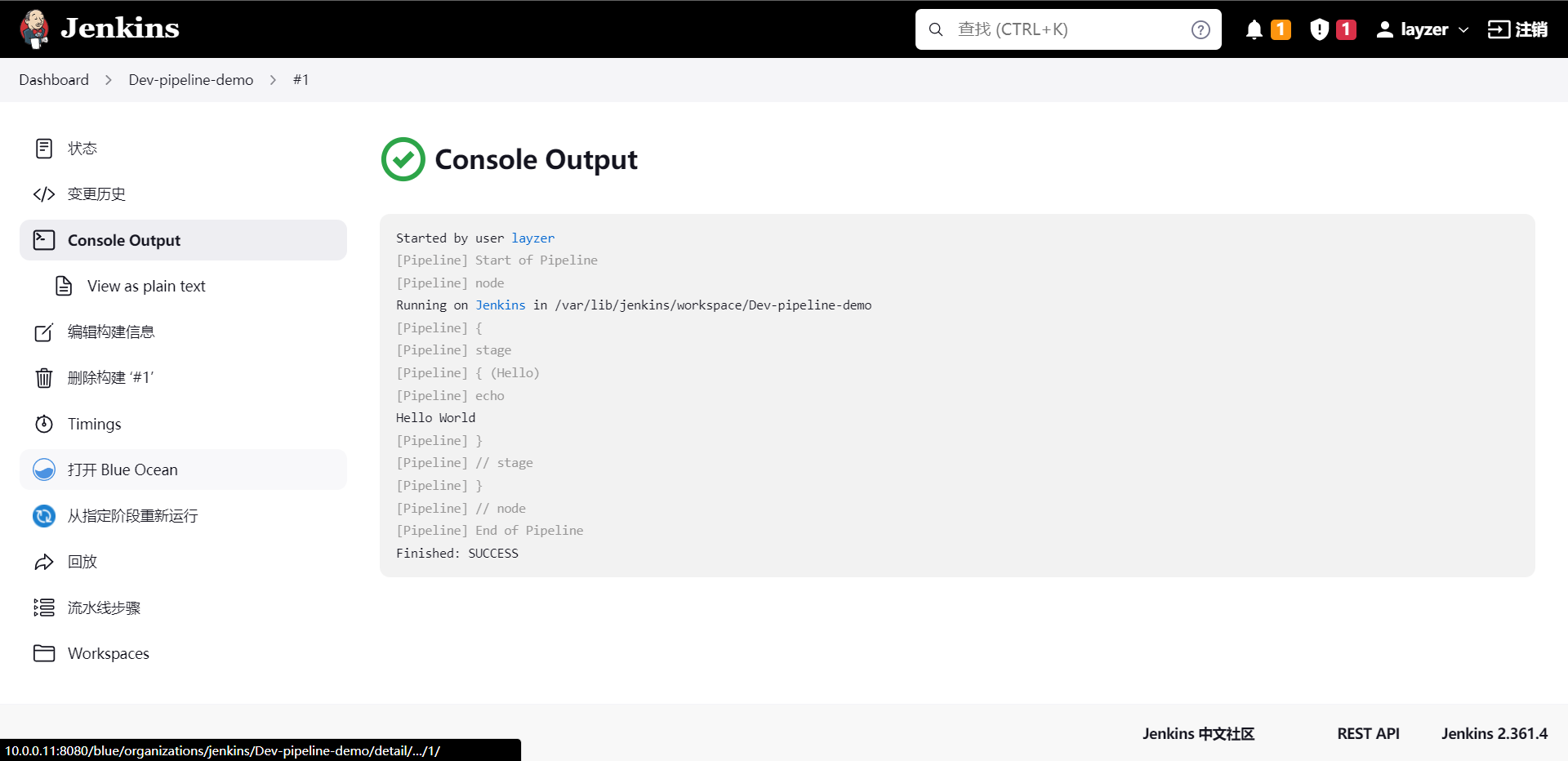

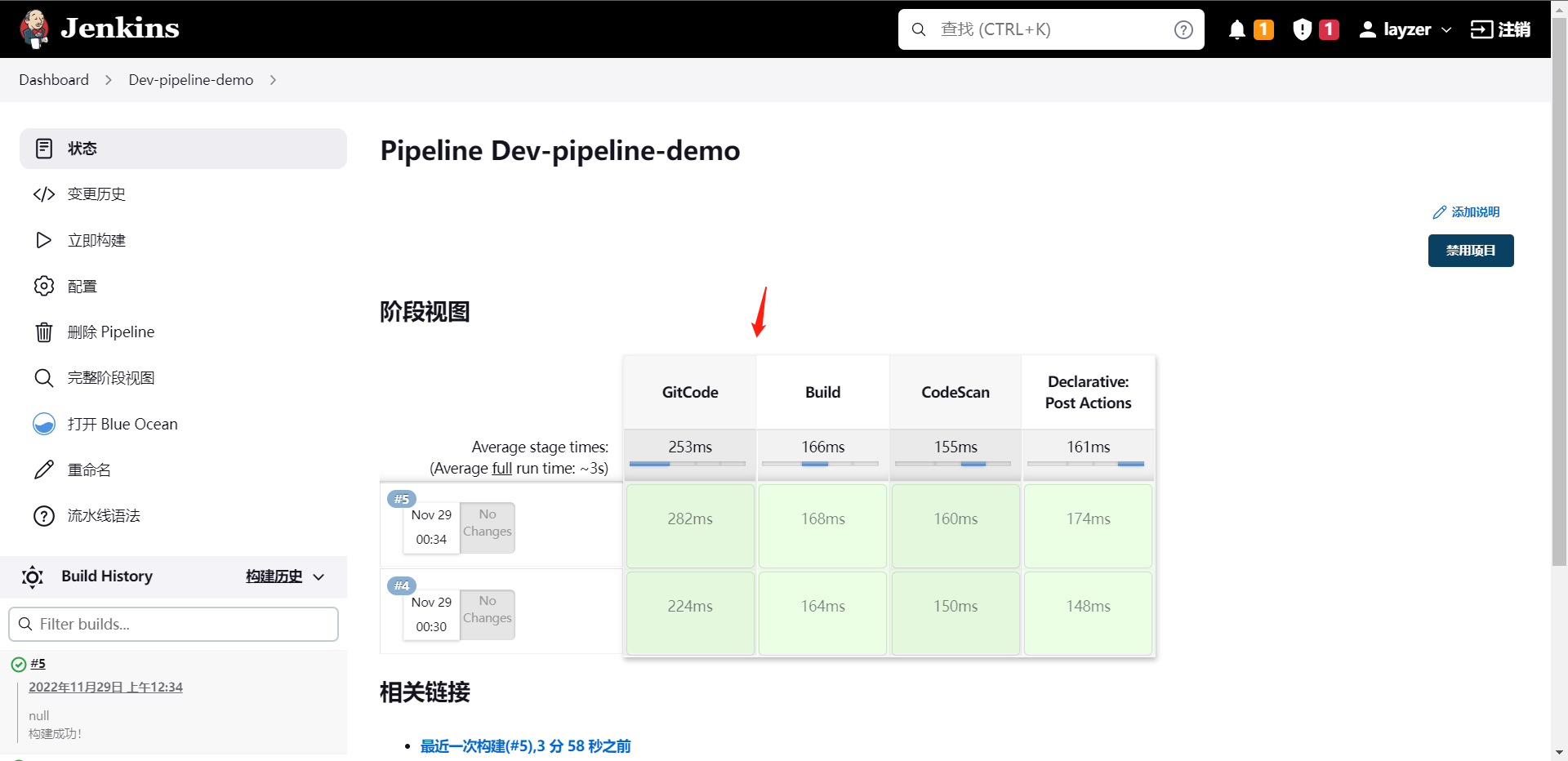

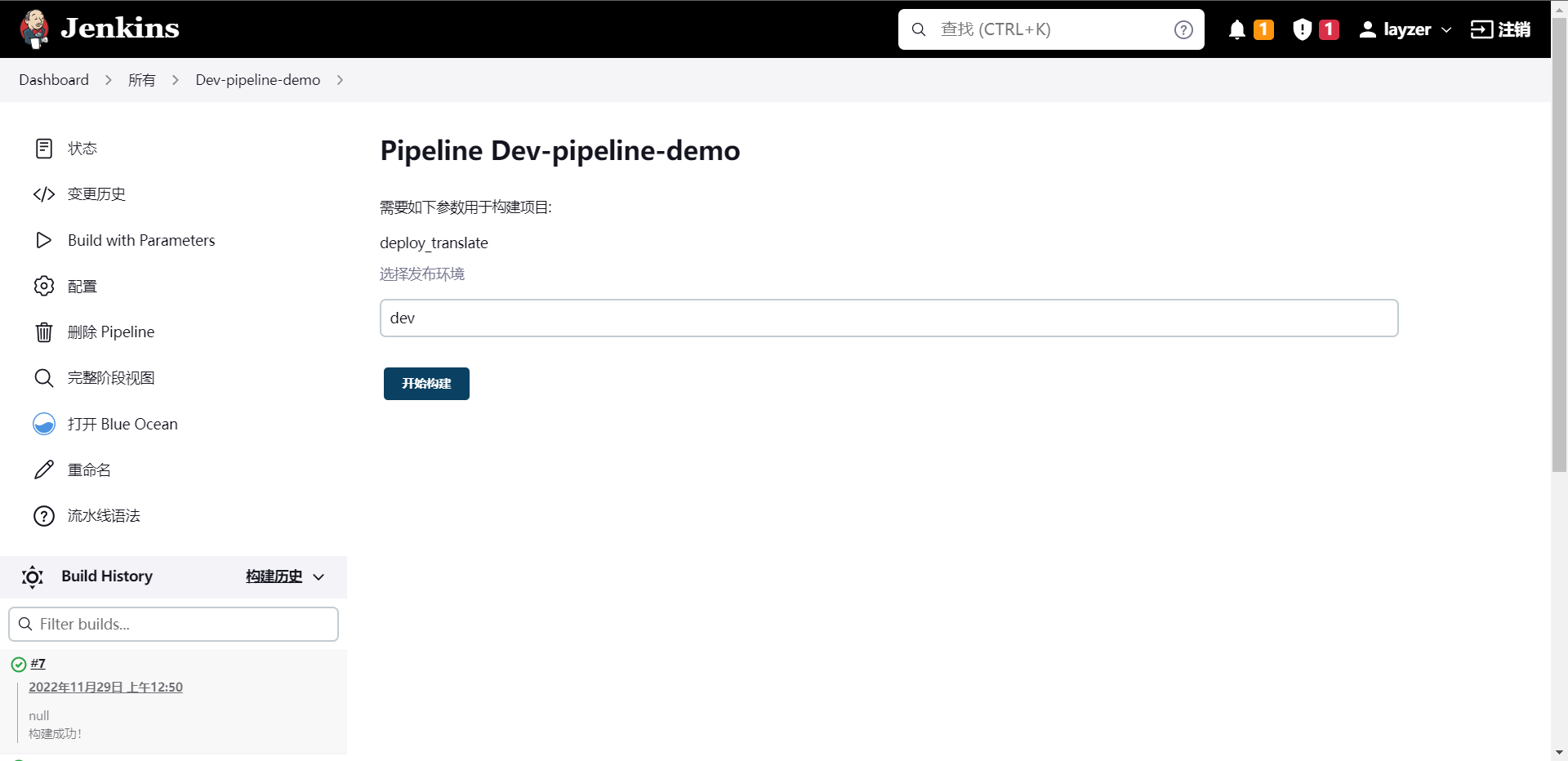

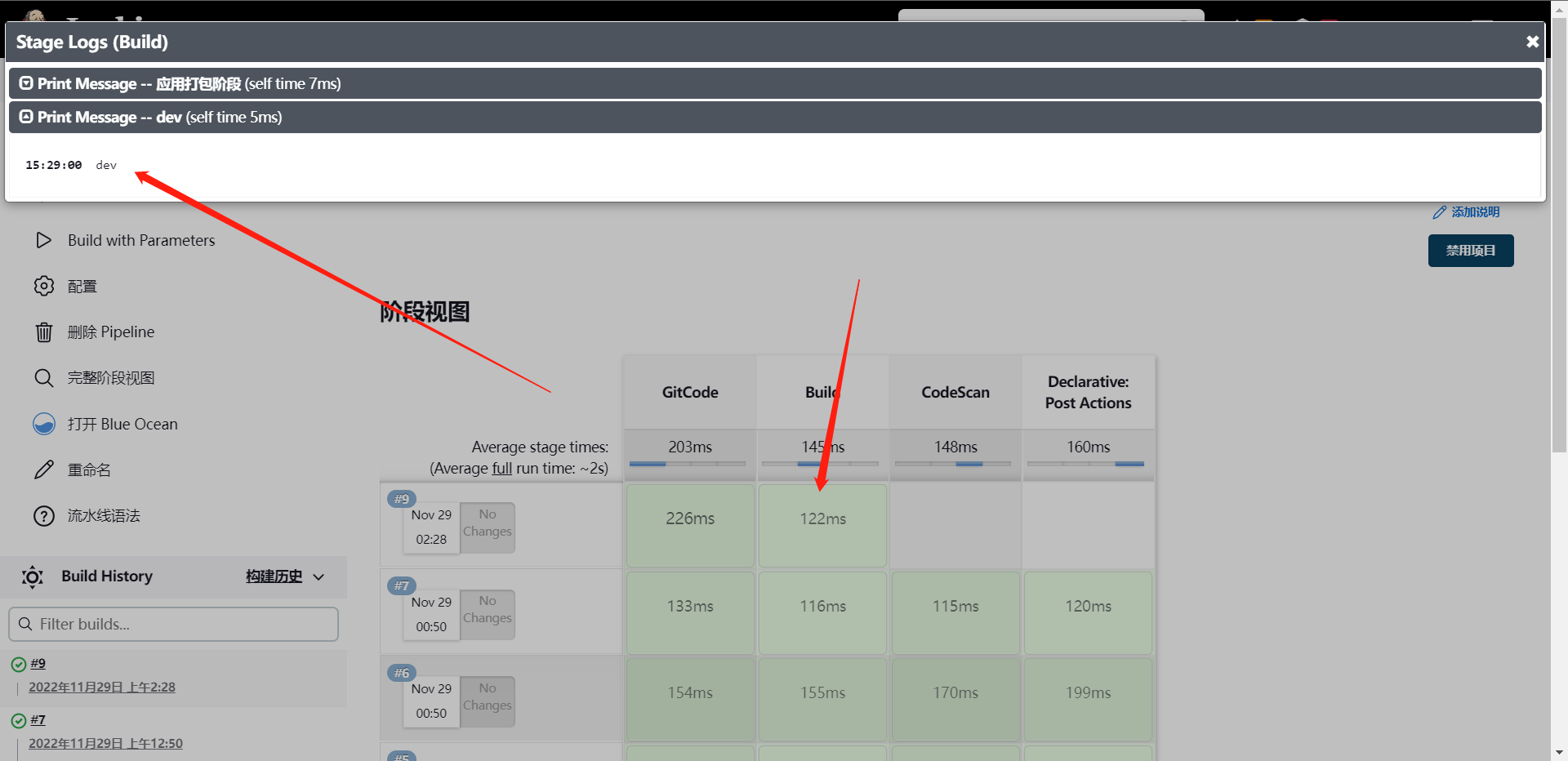

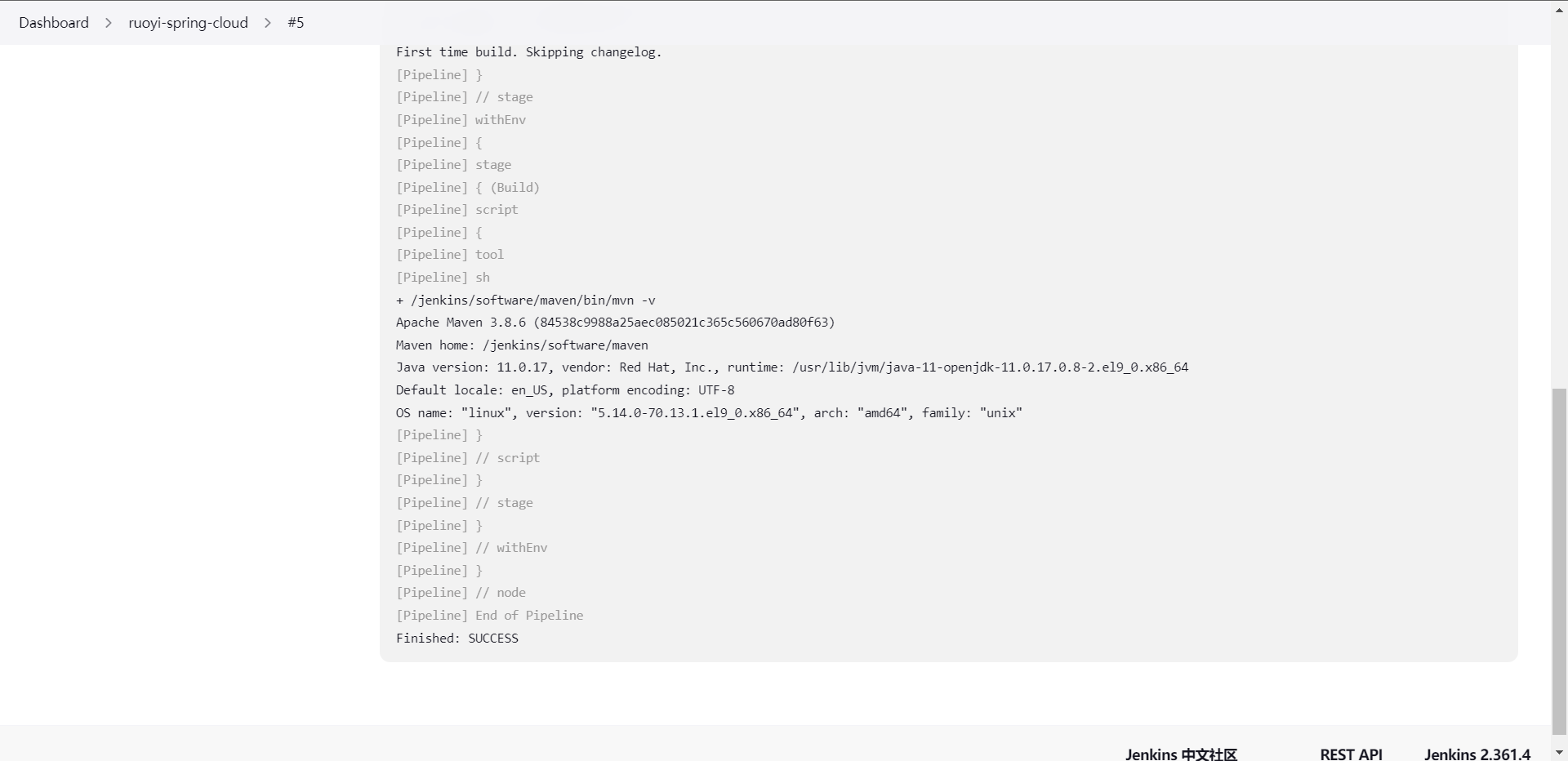

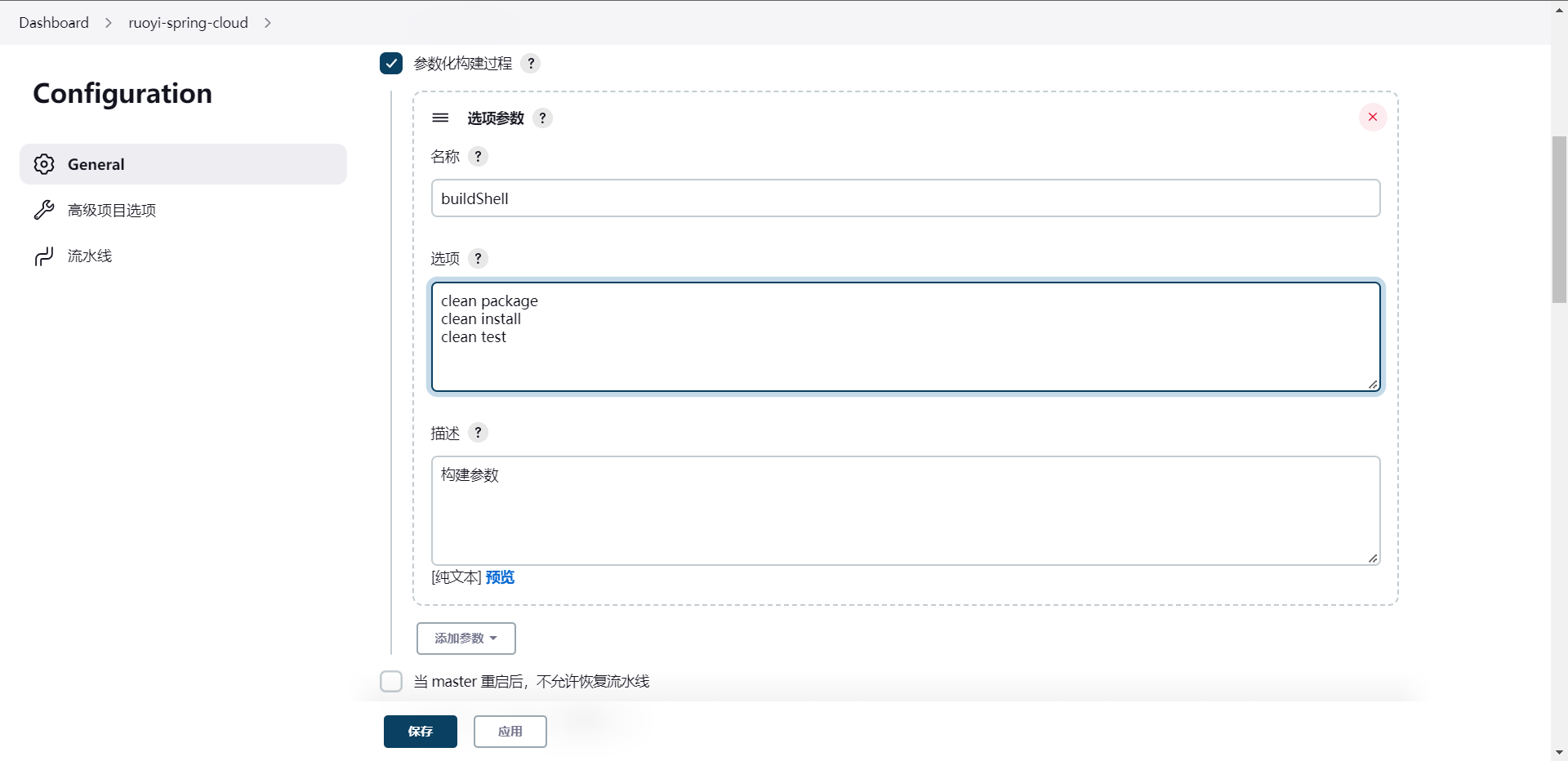

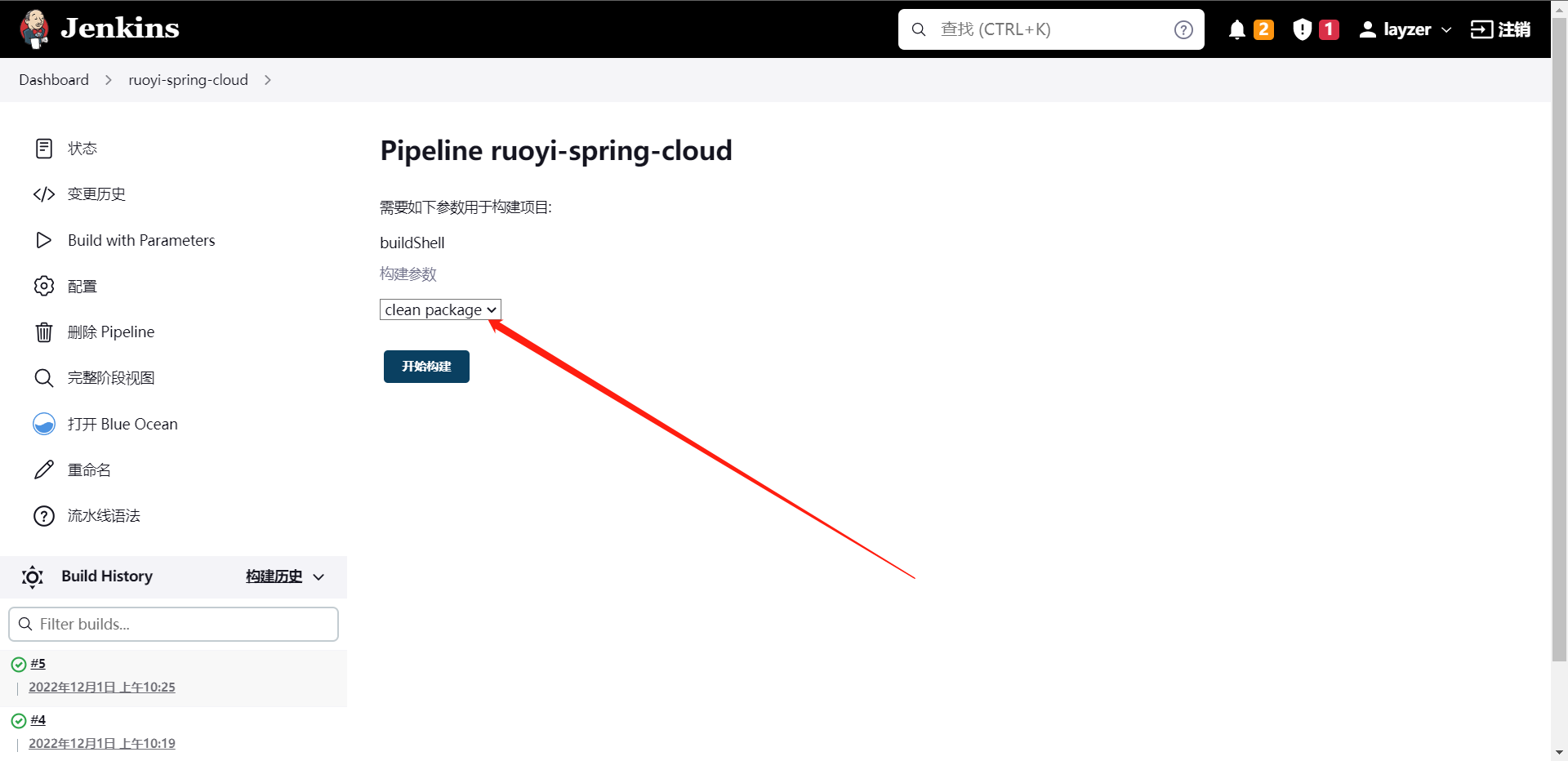

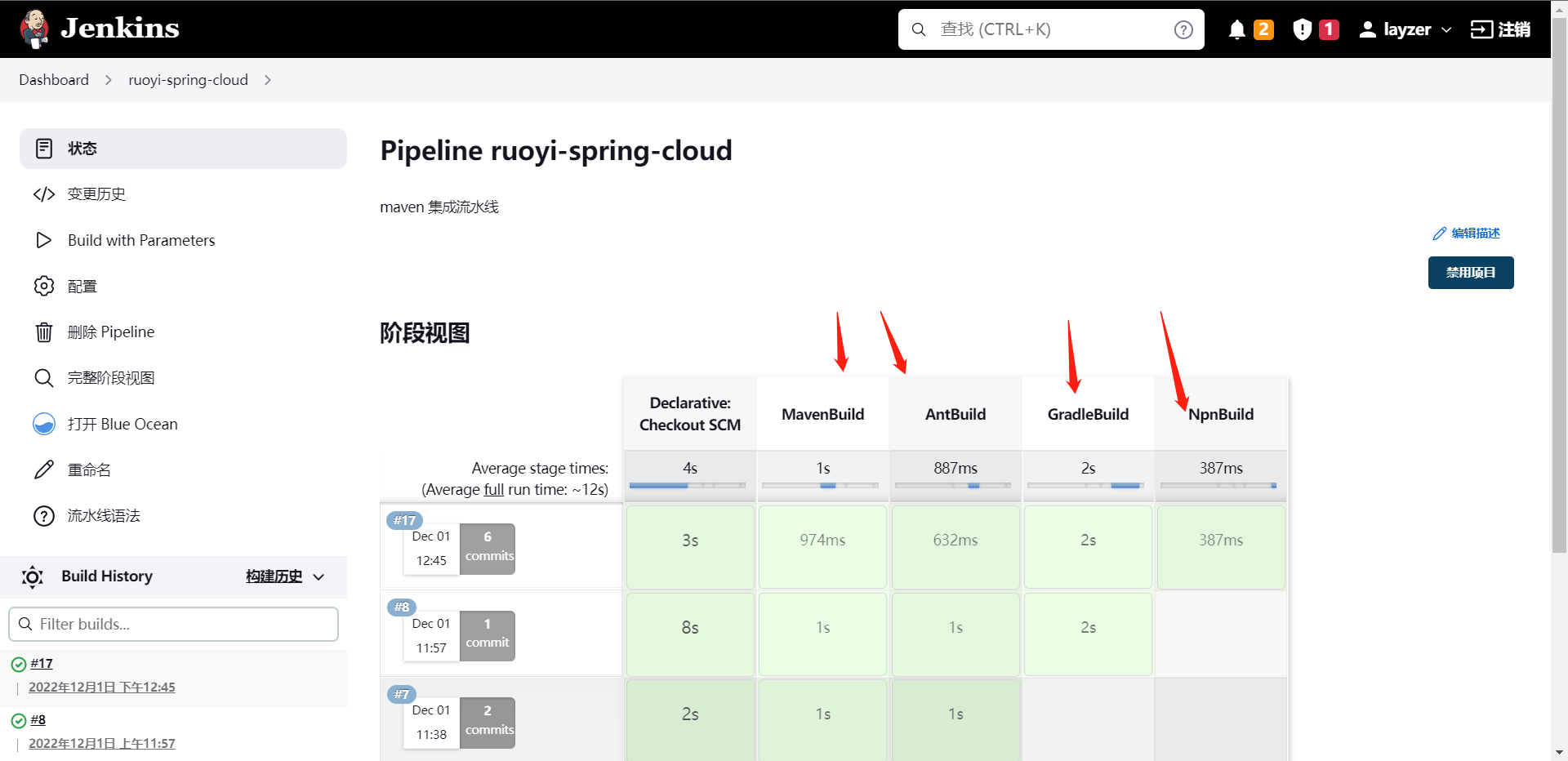

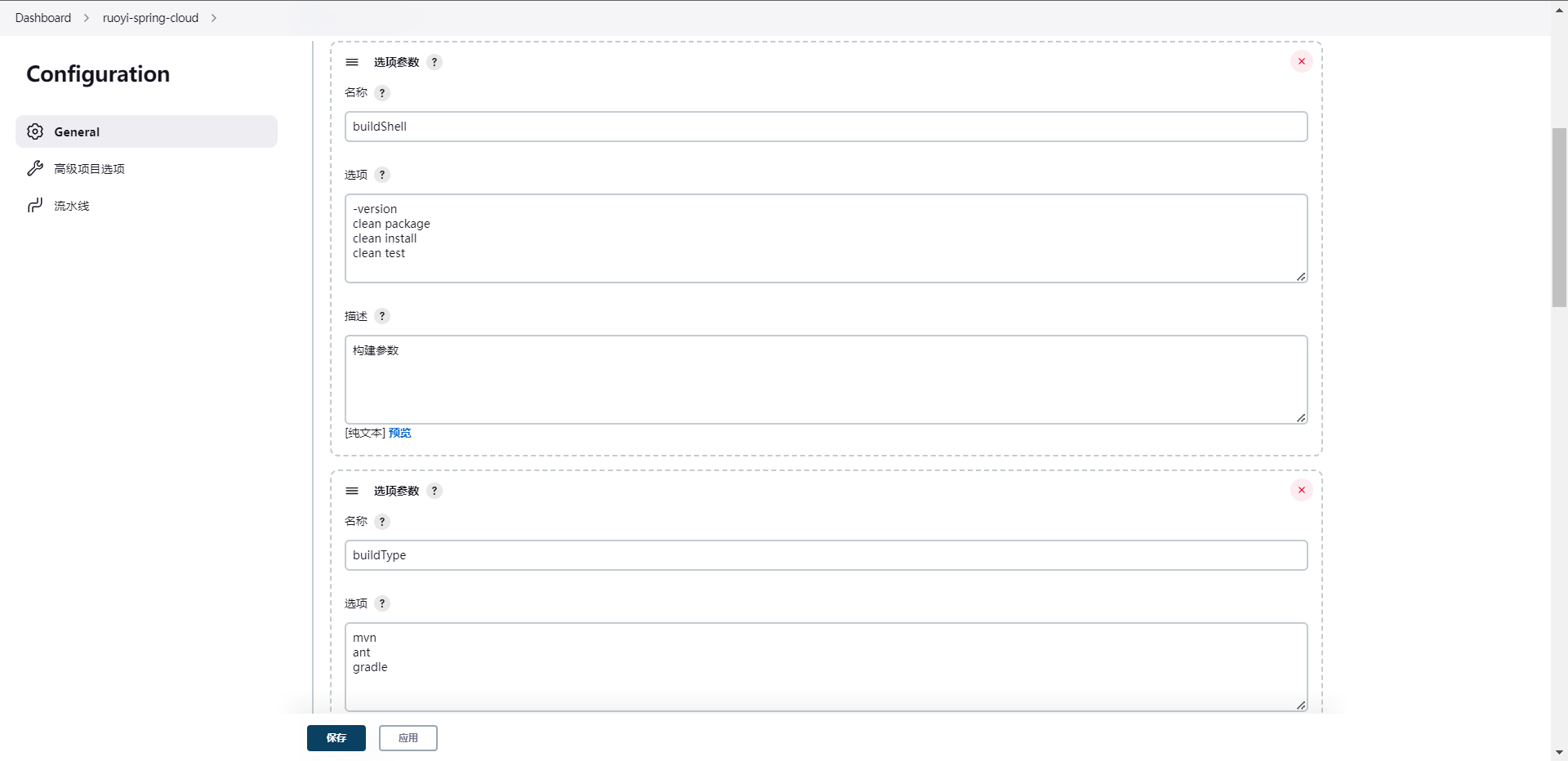

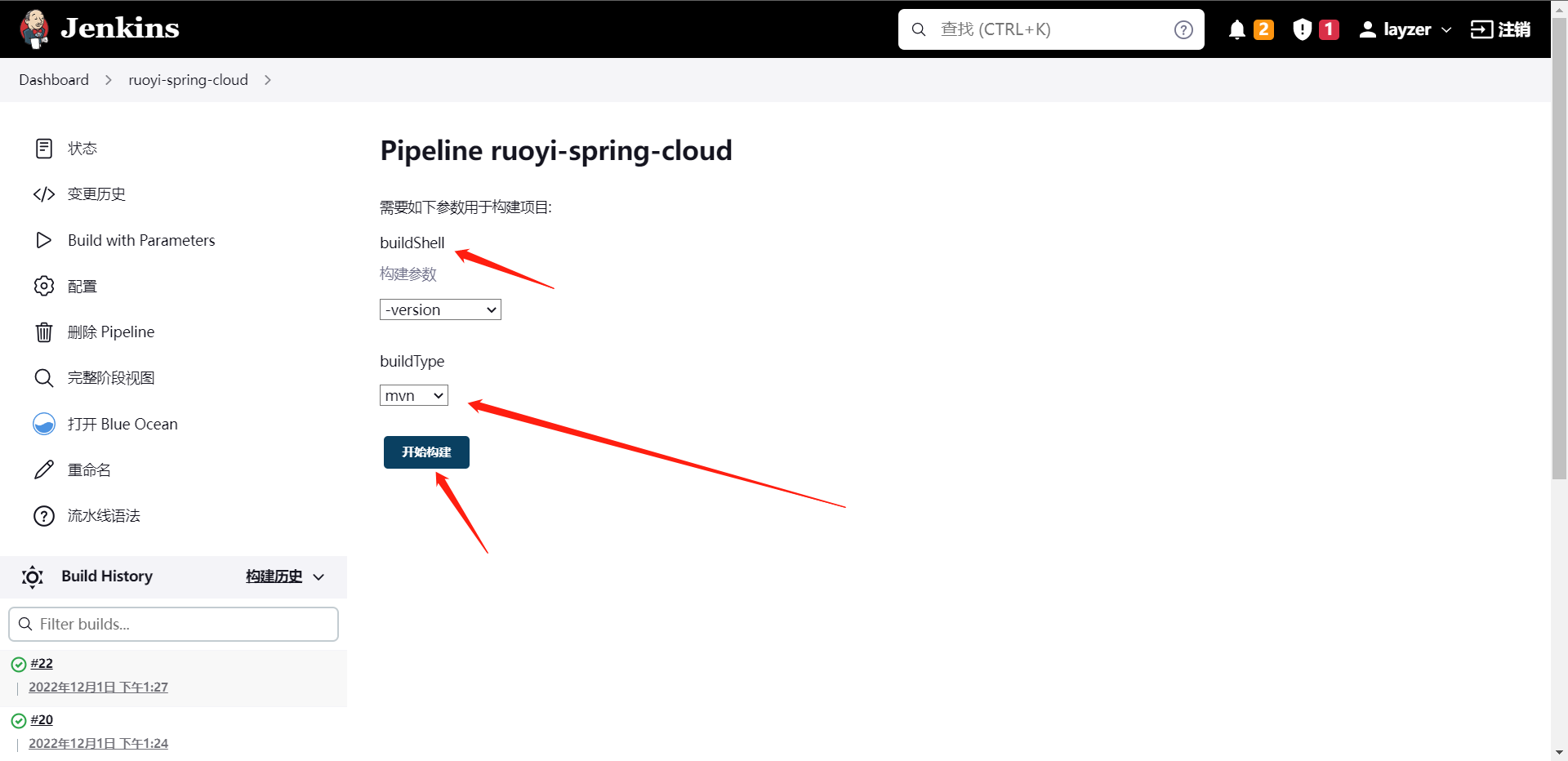

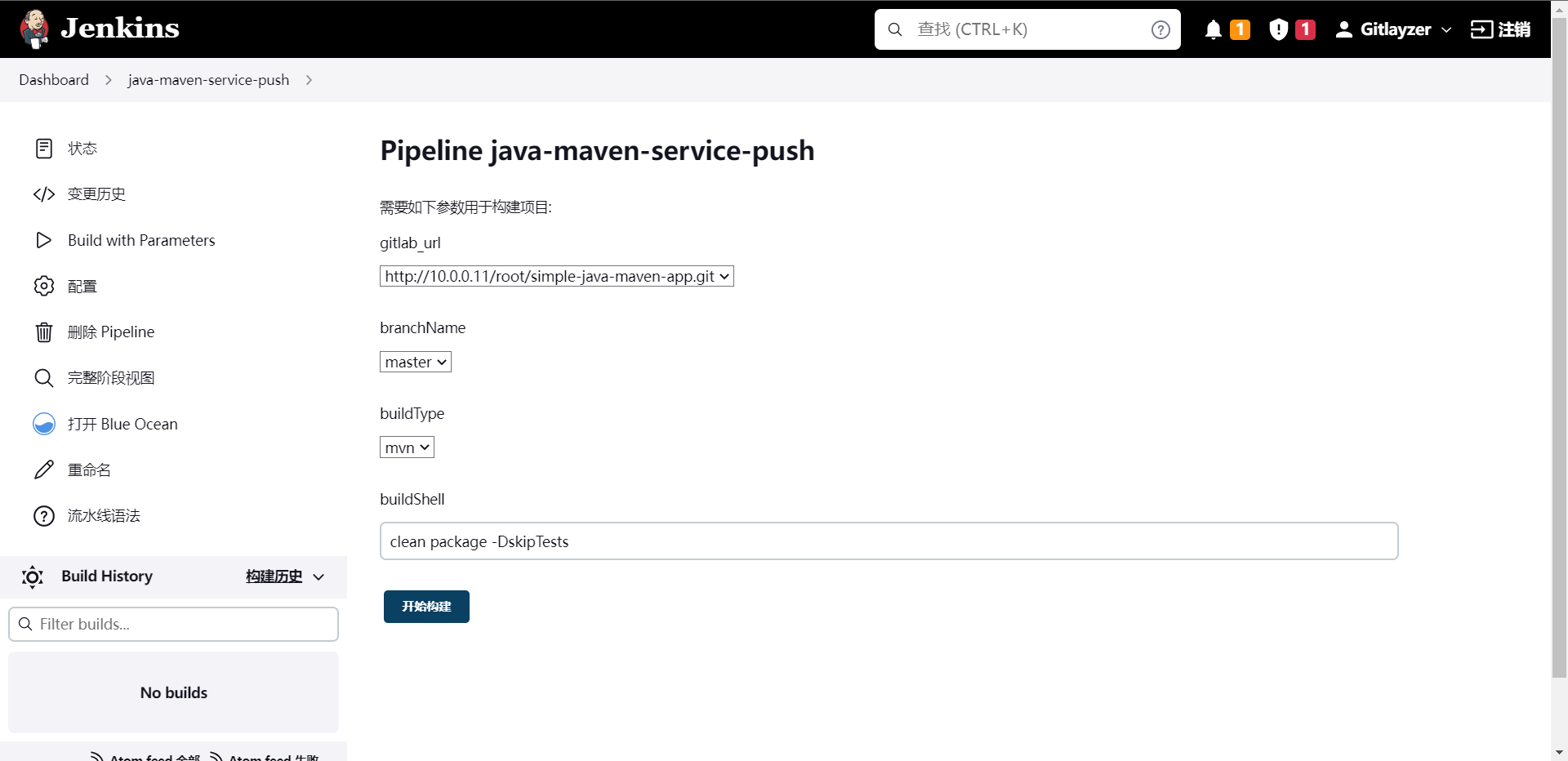

保存后去执行流水线我们可以看到,我们的可选参数就在这里出现了,然后我们执行一下看看结果。

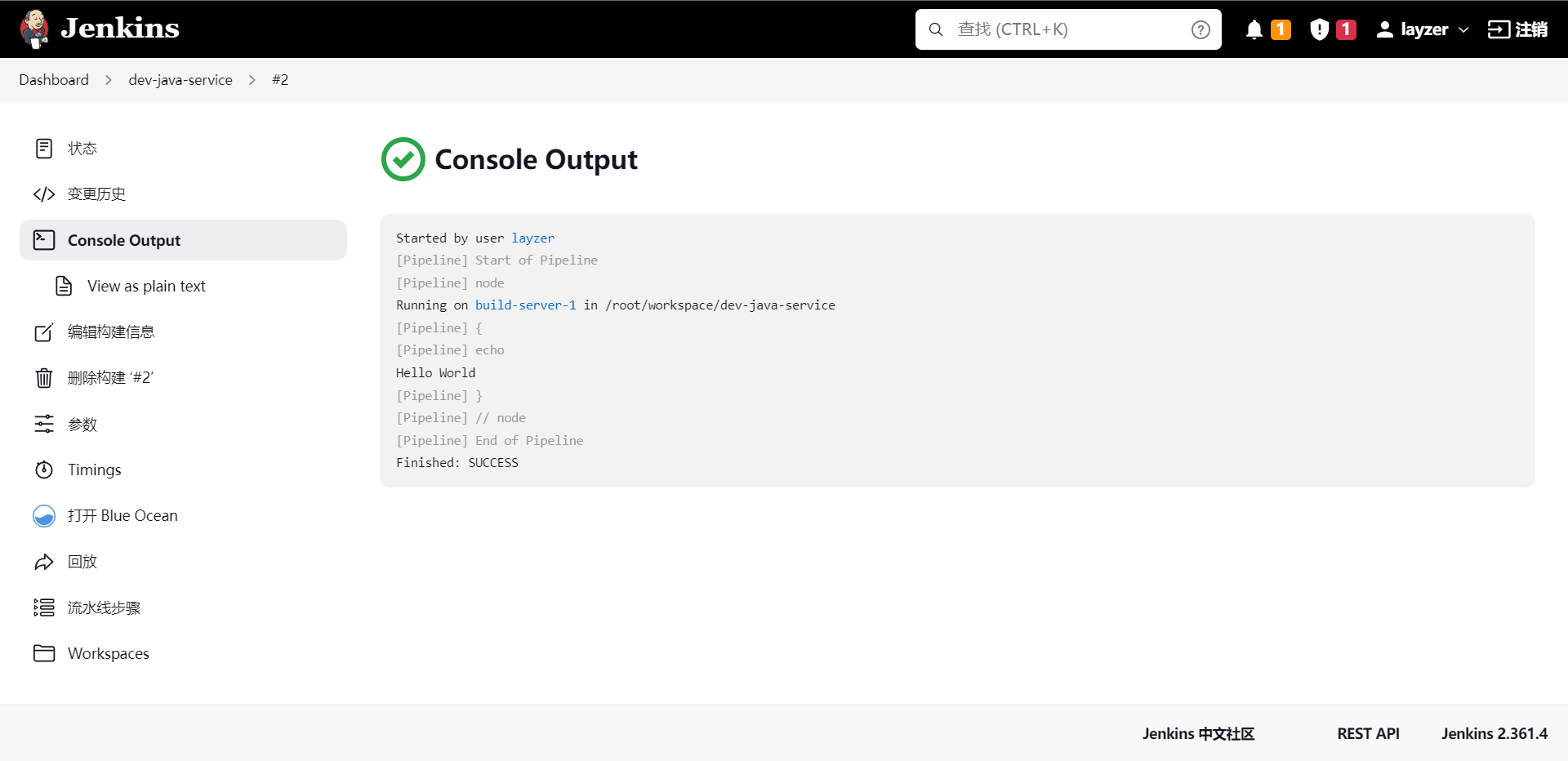

这就是流水线的执行结果,我们从这里就可以看到执行的一些个日志之类的。

这个只是我们创建一个项目中的某个流水线的方法,那么我们如果有很多个项目怎么去管理它呢,其实这个方法也很简单,我们都知道Windows和Linux有目录这个概念,在Jenkins内其实也有这个概念,我们可以通过创建多个目录来管理流水线。当然了,这个功能在Jenkins内叫做`文件夹`

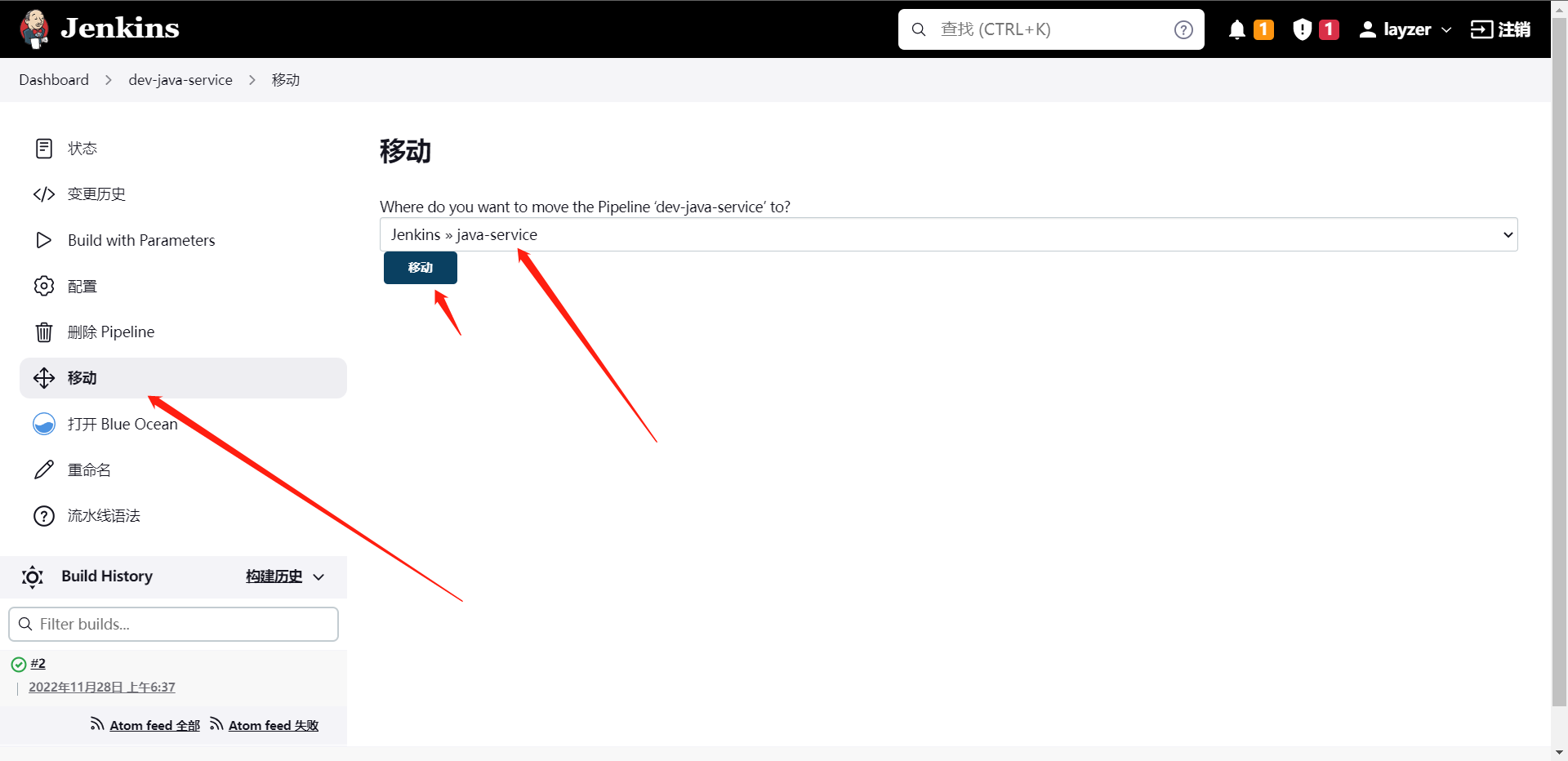

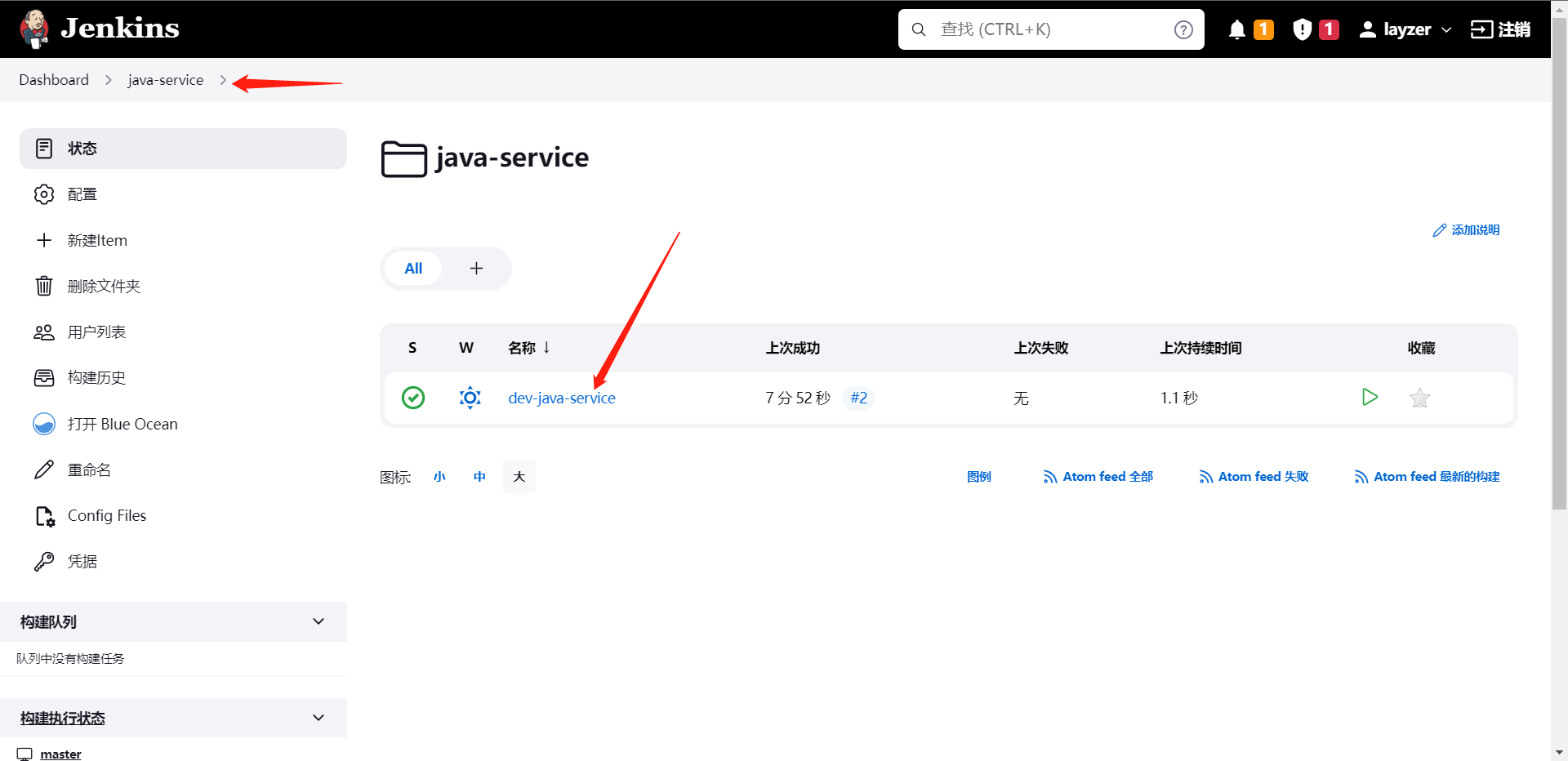

Once the folder is created, we can move a job into it.

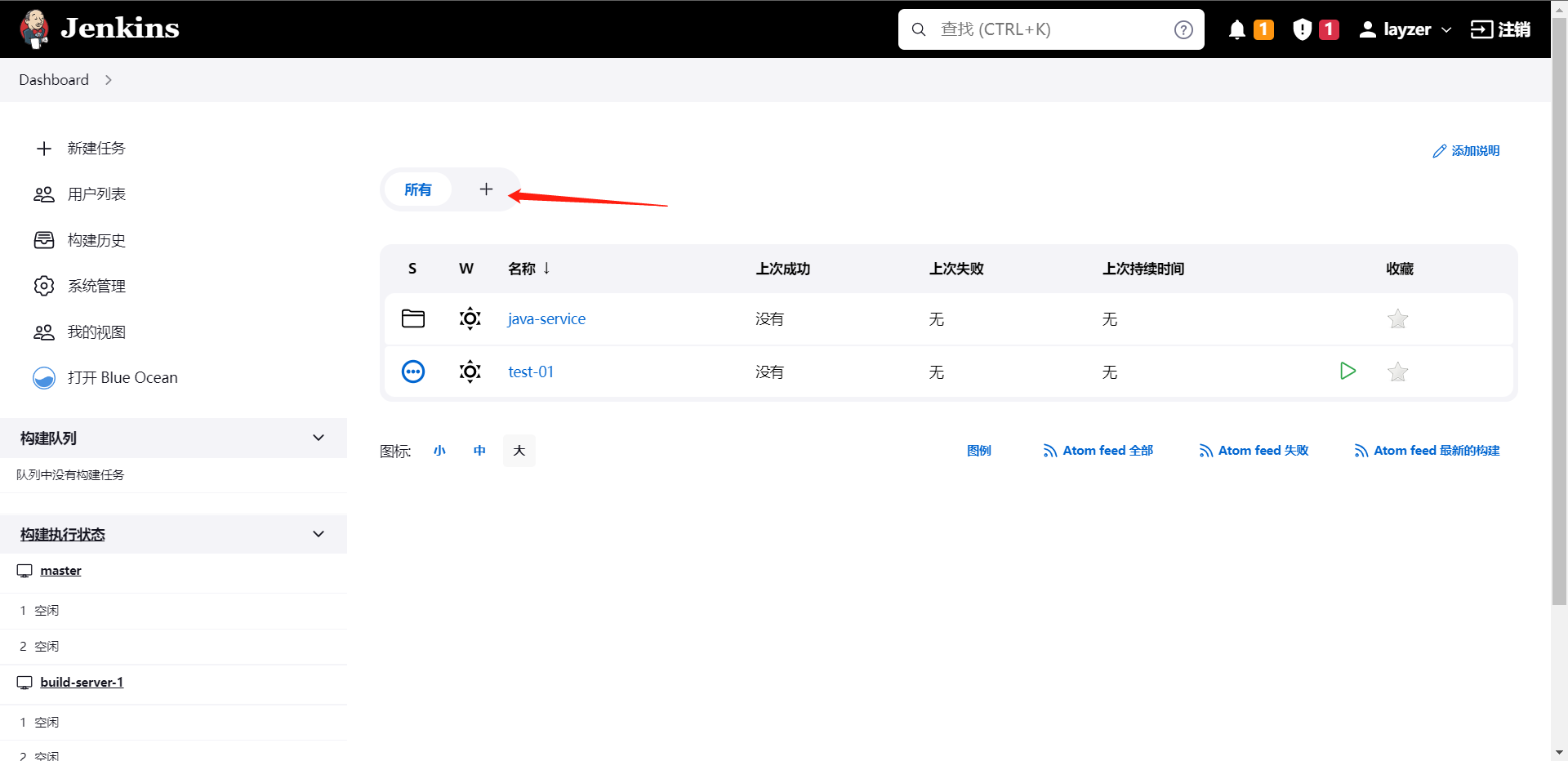

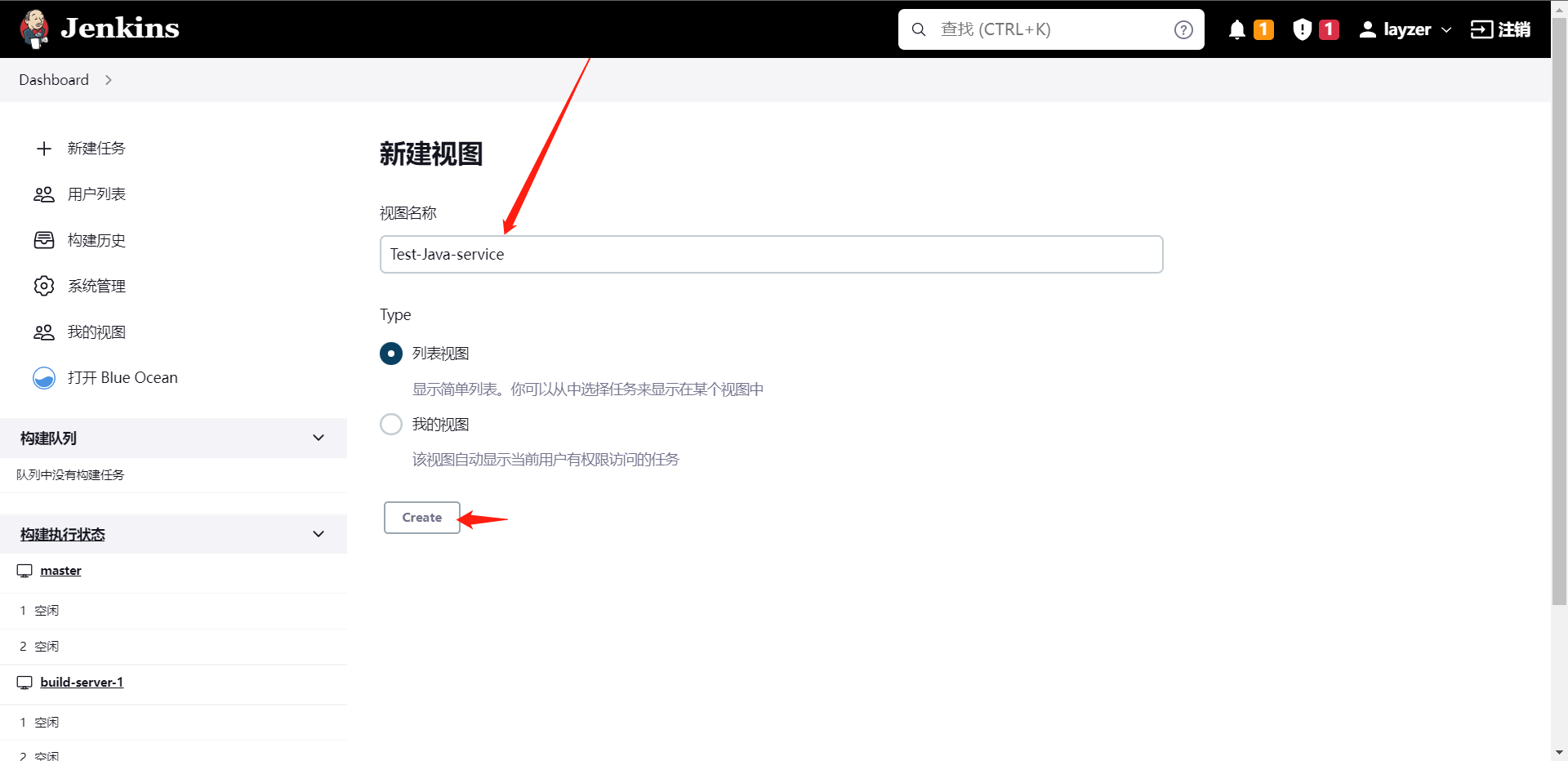

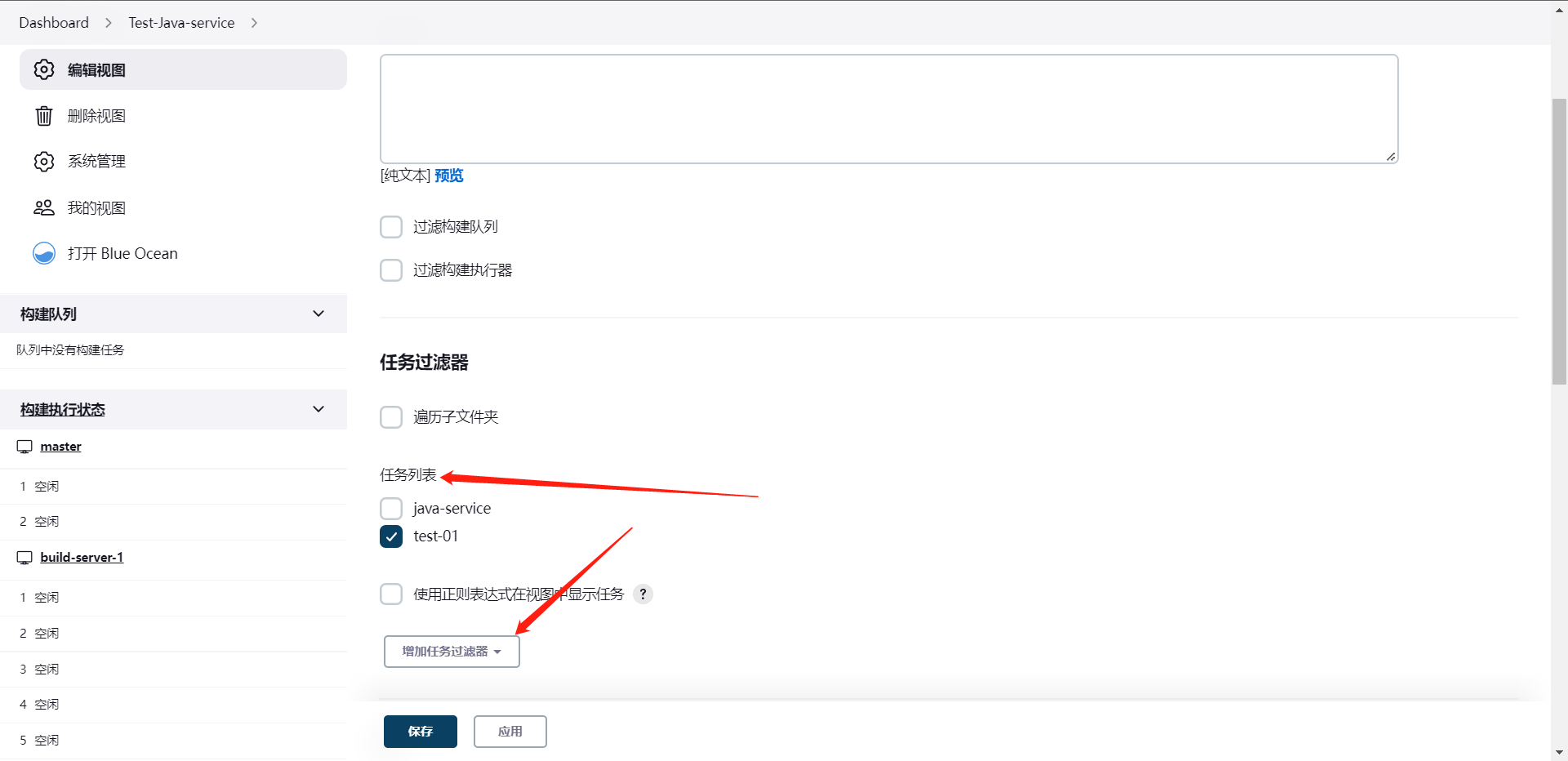

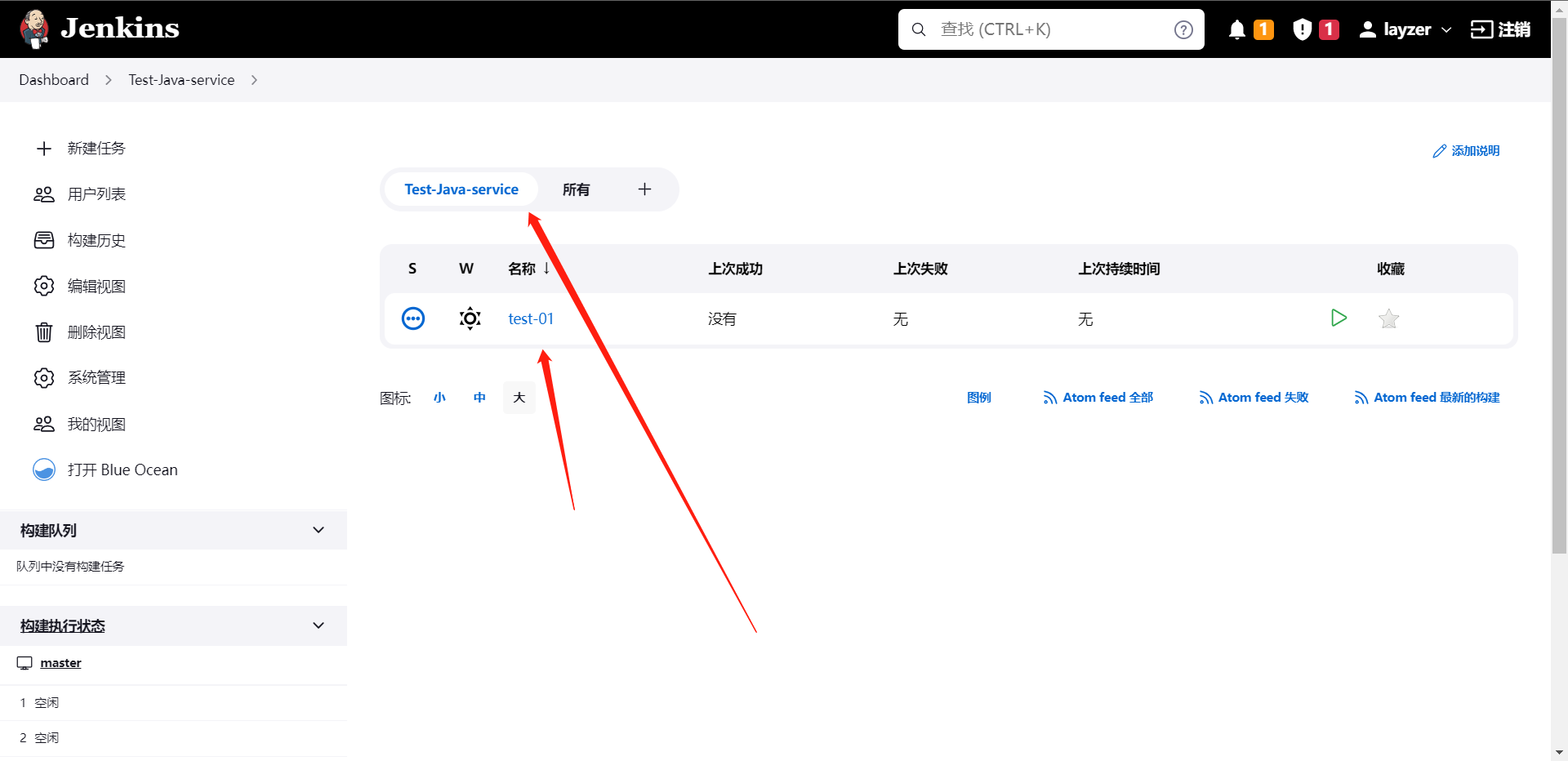

Besides folders, we can also organize projects with views, as follows:

This is how projects are grouped by view.

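2:Pipeline Basics

1:Jenkinsfile

# Defining a pipeline

1:A pipeline is described by a Jenkinsfile

2:Install the declarative pipeline plugin (Pipeline: Declarative)

3:A Jenkinsfile is made up of:

3.1:the agent node / workspace

3.2:run options

3.3:the stages

3.4:post-build actions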

```groovy
pipeline {
    agent any
    stages {
        stage('Hello') {
            steps {
                echo 'Hello World'
            }
        }
    }
}
```

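Next we'll learn how to write a pipeline and what its basic skeleton looks like. Strictly speaking, what we wrote above already is a pipeline; it just isn't very full-featured.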

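```groovy
pipeline {
    agent {
        node {
            label "build-1"   // label or name of the node the pipeline runs on
            // customWorkspace "${workspace}"   // optional: set a custom workspace directory
        }
    }

    options {
        timestamps()              // timestamp every log line; requires the Timestamper plugin
        skipDefaultCheckout()     // drop the implicit "checkout scm" step
        disableConcurrentBuilds() // disallow concurrent builds
        timeout(time: 1, unit: "HOURS") // pipeline timeout (1 hour)
    }

    // the stages (one or more)
    stages {
        // checkout stage
        stage('GitCode') {
            steps {
                timeout(time: 20, unit: "MINUTES") {
                    script {
                        println("Checkout stage")
                    }
                }
            }
        }

        stage('Build') {
            steps {
                timeout(time: 20, unit: "MINUTES") {
                    script {
                        println("Package stage")
                    }
                }
            }
        }

        stage('CodeScan') {
            steps {
                timeout(time: 30, unit: "MINUTES") {
                    script {
                        println("Code scan stage")
                    }
                }
            }
        }
    }

    // post-build actions
    post {
        // always runs,
        // e.g. fire a hook to tell the admins the pipeline ran
        always {
            script {
                println("Always")
            }
        }

        // runs when the pipeline succeeds
        // currentBuild is a global variable and description is the build description;
        // description starts out as null, so fall back to "" before appending
        success {
            script {
                currentBuild.description = (currentBuild.description ?: "") + "\n Build succeeded!"
            }
        }

        // runs when the pipeline fails,
        // e.g. fire a hook to notify the admins
        failure {
            script {
                currentBuild.description = (currentBuild.description ?: "") + "\n Build failed!"
            }
        }

        // runs when the pipeline is aborted,
        // e.g. fire a hook to notify the admins
        aborted {
            script {
                currentBuild.description = (currentBuild.description ?: "") + "\n Build aborted!"
            }
        }
    }
}
```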

The pipeline above is a fairly typical one and covers most of what we need.

This is what our day-to-day pipelines look like.

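2:Pipeline Syntax

2.1:agent

agent specifies the node the pipeline runs on. Its options are:

1:any: run the pipeline on any available node

2:none: no global agent is allocated for the whole pipeline; each stage must then declare its own agent

3:label: run the pipeline on a node with the given label

4:node: like label, but allows additional options (such as customWorkspace)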

```groovy
pipeline {
    agent {
        label "<labelName>"
    }
}
```

or

```groovy
pipeline {
    agent {
        node {
            label "<labelName>"
            // other options can be added here
        }
    }
}
```
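To illustrate `agent none`, a minimal sketch: no executor is allocated for the pipeline as a whole, so every stage names its own agent. `build-1` is the label used throughout these notes, and `any` simply takes whichever node is free; `NODE_NAME` is the built-in environment variable holding the current node's name.

```groovy
pipeline {
    // no global agent: each stage must declare where it runs
    agent none
    stages {
        stage('Build') {
            agent { label "build-1" }
            steps {
                echo "building on ${env.NODE_NAME}"
            }
        }
        stage('Notify') {
            agent any
            steps {
                echo "notifying from ${env.NODE_NAME}"
            }
        }
    }
}
```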

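2.2:post

post defines one or more additional steps that run depending on how the pipeline or a stage completes (which one depends on where the post section is placed in the pipeline). post supports the following post-condition blocks: always, changed, failure, success, unstable and aborted. These blocks let the steps in the post section run depending on the completion status of the pipeline or stage.

1:always: always run, regardless of the completion status of the pipeline or stage

2:changed: run only if the current completion status differs from that of the previous run

3:failure: run only if the pipeline or stage status is "failure"

4:success: run only if the pipeline or stage status is "success"

5:unstable: run only if the pipeline or stage status is "unstable", usually caused by test failures

6:aborted: run only if the pipeline or stage status is "aborted", e.g. the pipeline was cancelled manually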

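```groovy
......
// post-build actions
post {
    // always runs,
    // e.g. fire a hook to tell the admins the pipeline ran
    always {
        script {
            println("Always")
        }
    }

    // runs when the pipeline succeeds
    // currentBuild is a global variable and description is the build description;
    // description starts out as null, so fall back to "" before appending
    success {
        script {
            currentBuild.description = (currentBuild.description ?: "") + "\n Build succeeded!"
        }
    }

    // runs when the pipeline fails,
    // e.g. fire a hook to notify the admins
    failure {
        script {
            currentBuild.description = (currentBuild.description ?: "") + "\n Build failed!"
        }
    }

    // runs when the pipeline is aborted,
    // e.g. fire a hook to notify the admins
    aborted {
        script {
            currentBuild.description = (currentBuild.description ?: "") + "\n Build aborted!"
        }
    }
}
......
```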
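Why a null check matters when appending to `currentBuild.description`: the description is `null` until something sets it, and in Groovy `null + "text"` does not throw, it quietly produces the string `"nulltext"`. This plain-Groovy sketch (runnable outside Jenkins; `appendLine` is a hypothetical helper name) shows the pitfall next to the null-safe Elvis form.

```groovy
// Appends a line to a description that may still be null, as a post block would
String appendLine(String description, String line) {
    // the Elvis operator ?: substitutes "" when description is null
    return (description ?: "") + line
}

String description = null
String naive = description + "\n Build succeeded!"   // null is turned into the text "null"
String safe = appendLine(description, "\n Build succeeded!")

println(naive.startsWith("null"))   // true
println(safe.startsWith("null"))    // false
```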

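2.3:stages

This is where the pipeline's stages are executed: stages contains one or more stage blocks, and each stage has a steps block holding one or more steps. They carry the actual automation work, such as build, test and deploy.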

```groovy
......
// the stages (one or more)
stages {
    // checkout stage
    stage('GitCode') {
        steps {
            timeout(time: 20, unit: "MINUTES") {
                script {
                    println("Checkout stage")
                }
            }
        }
    }

    stage('Build') {
        steps {
            timeout(time: 20, unit: "MINUTES") {
                script {
                    println("Package stage")
                }
            }
        }
    }

    stage('CodeScan') {
        steps {
            timeout(time: 30, unit: "MINUTES") {
                script {
                    println("Code scan stage")
                }
            }
        }
    }
}
......
```

2.4:steps

steps lives inside a stage and holds the steps that stage executes. Commonly each stage does one thing, but that is entirely up to you.

```groovy
......
// checkout stage
stage('GitCode') {
    steps {
        timeout(time: 20, unit: "MINUTES") {
            script {
                println("Checkout stage")
            }
        }
    }
}
......
```

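2.5:environment

The environment directive specifies a sequence of key-value pairs that are defined as environment variables for all steps, or for the steps of a particular stage, depending on where environment is placed in the pipeline. It supports a special helper method, credentials(), which accesses predefined credentials by their identifier. For credentials of type "Secret text", credentials() ensures the environment variable contains the secret text. For credentials of type "Standard username and password", the environment variable is set to username:password, and two additional environment variables are defined automatically: `MYVARNAME_USR` and `MYVARNAME_PSW` (where MYVARNAME is the name of the variable you assigned).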

```groovy
pipeline {
    agent {
        node {
            label "build-1"
        }
    }

    // pipeline-wide environment variables
    environment {
        version = "0.0.1"
    }

    options {
        timestamps()
        skipDefaultCheckout()
        disableConcurrentBuilds()
        timeout(time: 1, unit: "HOURS")
    }

    stages {
        stage('GitCode') {
            // stage-level environment: token holds the credential with ID "token"
            environment {
                token = credentials("token")
            }
            steps {
                timeout(time: 20, unit: "MINUTES") {
                    script {
                        println("Checkout stage")
                    }
                }
            }
        }

        stage('Build') {
            steps {
                timeout(time: 20, unit: "MINUTES") {
                    script {
                        println("Package stage")
                    }
                }
            }
        }

        stage('CodeScan') {
            steps {
                timeout(time: 30, unit: "MINUTES") {
                    script {
                        println("Code scan stage")
                    }
                }
            }
        }
    }
}
```
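A sketch of the username/password case described above, assuming a hypothetical "Username with password" credential with the ID `harbor-account`: the single assignment yields three variables. As before, the single-quoted `sh` string keeps the secret out of Groovy string interpolation.

```groovy
pipeline {
    agent any
    environment {
        // 'harbor-account' is a hypothetical credential ID
        // defines HARBOR (user:password), HARBOR_USR and HARBOR_PSW
        HARBOR = credentials('harbor-account')
    }
    stages {
        stage('Login') {
            steps {
                // the shell expands the variables; Jenkins masks them in the log
                sh 'echo "user is $HARBOR_USR"'
            }
        }
    }
}
```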

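2.6:options

The options directive configures pipeline-specific options from inside the pipeline. Pipeline provides a number of them, such as buildDiscarder, and plugins can contribute more, such as timestamps.

1:buildDiscarder: keep artifacts and console output only for a specific number of recent pipeline runs

2:disableConcurrentBuilds: disallow concurrent executions of the pipeline, e.g. to prevent simultaneous access to shared resources

3:overrideIndexTriggers: override the default handling of branch indexing triggers

4:skipDefaultCheckout: skip the default checkout of source code in the agent directive

5:skipStagesAfterUnstable: skip the remaining stages once the build status becomes UNSTABLE

6:checkoutToSubdirectory: perform the automatic source checkout in a subdirectory of the workspace

7:timeout: set a timeout for the pipeline, after which Jenkins aborts it

8:retry: on failure, retry the entire pipeline the specified number of times

9:timestamps: prepend the time at which each line was emitted to all console output generated by the pipeline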
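A sketch combining several of these options; the retention count (10 builds) and the retry count (2) are arbitrary example values. `logRotator` is the usual argument to `buildDiscarder`, and `timestamps()` still needs the Timestamper plugin.

```groovy
pipeline {
    agent any
    options {
        buildDiscarder(logRotator(numToKeepStr: '10')) // keep only the last 10 builds
        disableConcurrentBuilds()
        skipStagesAfterUnstable()
        retry(2)                                        // retry on failure; 2 is an example value
        timeout(time: 1, unit: 'HOURS')
        timestamps()
    }
    stages {
        stage('Build') {
            steps {
                echo 'building'
            }
        }
    }
}
```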

2.7:parameters

These are parameters: values we specify when running the project, such as which application, which version or which environment to release.

```groovy
pipeline {
    agent {
        node {
            label "build-1"
        }
    }

    parameters {
        string(name: "deploy_translate", defaultValue: "dev", description: 'Deployment environment')
        // booleanParam(name: "skip_codescan", defaultValue: true, description: "Skip the code scan?")
    }

    environment {
        version = "0.0.1"
    }

    options {
        timestamps()
        skipDefaultCheckout()
        disableConcurrentBuilds()
        timeout(time: 1, unit: "HOURS")
    }

    stages {
        stage('GitCode') {
            environment {
                token = credentials("token")
            }
            steps {
                timeout(time: 20, unit: "MINUTES") {
                    script {
                        println("Checkout stage")
                        println("${deploy_translate}")
                    }
                }
            }
        }

        stage('Build') {
            steps {
                timeout(time: 20, unit: "MINUTES") {
                    script {
                        println("Package stage")
                        println("${deploy_translate}")
                    }
                }
            }
        }
    }
}
```

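These are the parameters: we define them here and then read them inside stages to drive what the pipeline does. This is called parameterization. Parameters don't have to be written into the pipeline itself; they can also be configured in the job's web UI shown earlier. Note that parameters declared in the Jenkinsfile only appear in the UI after the pipeline has run once.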
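A sketch of two other common parameter types, read through the `params` object. The names `deploy_env` and `skip_codescan` are hypothetical. `params.<name>` returns the typed value (a real boolean for `booleanParam`), whereas the same values exposed as environment variables are always strings.

```groovy
pipeline {
    agent any
    parameters {
        // the first choice is the default
        choice(name: 'deploy_env', choices: ['dev', 'test', 'prd'], description: 'Target environment')
        booleanParam(name: 'skip_codescan', defaultValue: false, description: 'Skip the code scan?')
    }
    stages {
        stage('Deploy') {
            steps {
                echo "deploying to ${params.deploy_env}"
            }
        }
        stage('CodeScan') {
            // params.skip_codescan is a Boolean, so it can be negated directly
            when { expression { return !params.skip_codescan } }
            steps {
                echo 'scanning'
            }
        }
    }
}
```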

2.8:triggers

Triggers are mainly used for things like scheduled runs.

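```groovy
// Run periodically: 'H */4 * * 1-5' = every 4 hours (at a hashed minute), Monday to Friday
triggers {
    cron('H */4 * * 1-5')
}

// pollSCM uses the same syntax as cron, but Jenkins periodically checks the source repository
// for changes and only builds when something changed
triggers {
    pollSCM('H */4 * * 1-5')
}

// upstream takes a comma-separated string of job names and a threshold; when any of those jobs
// finishes with at least the threshold result, this pipeline is re-triggered
triggers {
    upstream(upstreamProject: 'job-one,job-two', threshold: hudson.model.Result.SUCCESS)
}
```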

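2.9:tool

Gets the installation path (environment) of a tool that is installed automatically or deployed manually; Maven, JDK and Gradle are supported. The tool name must be defined in Manage Jenkins > Global Tool Configuration.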

```groovy
pipeline {
    agent {
        node {
            label "build-1"
        }
    }

    parameters {
        string(name: "deploy_translate", defaultValue: "dev", description: 'Deployment environment')
    }

    environment {
        version = "0.0.1"
    }

    options {
        timestamps()
        skipDefaultCheckout()
        disableConcurrentBuilds()
        timeout(time: 1, unit: "HOURS")
    }

    stages {
        stage('GitCode') {
            environment {
                token = credentials("token")
            }
            steps {
                timeout(time: 20, unit: "MINUTES") {
                    script {
                        println("Checkout stage")
                        println("${deploy_translate}")
                        // tool returns the installation directory, not the executable
                        def mvnHome = tool "maven"
                        sh "${mvnHome}/bin/mvn --version"
                    }
                }
            }
        }

        stage('Build') {
            steps {
                timeout(time: 20, unit: "MINUTES") {
                    script {
                        println("Package stage")
                        println("${deploy_translate}")
                    }
                }
            }
        }
    }
}
```
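The declarative `tools` directive is the alternative to calling the `tool` step inside `script`: it locates (or installs) the named tool and puts its `bin` directory on `PATH` for the stages. This sketch assumes a Maven installation named `maven` exists in Global Tool Configuration, as used above.

```groovy
pipeline {
    agent { label "build-1" }
    tools {
        // the name must match an installation in Global Tool Configuration
        maven 'maven'
    }
    stages {
        stage('Build') {
            steps {
                // mvn is on PATH thanks to the tools directive
                sh 'mvn --version'
            }
        }
    }
}
```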

2.10:input

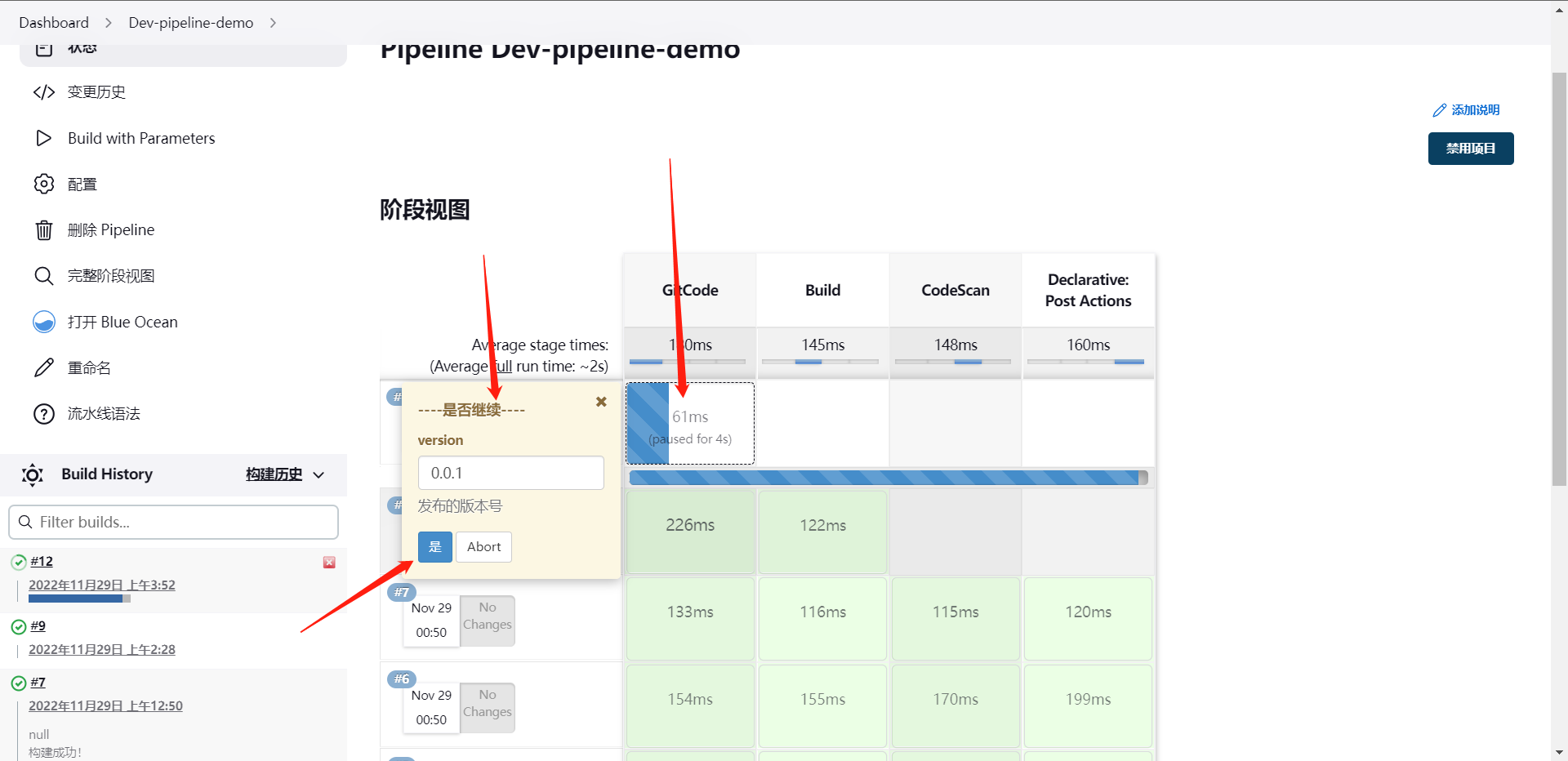

input用户在执行各个阶段的时候由人工确认是否继续进行。

1:message:呈现给用户的提示信息

2:id:可选,默认为stage名称

3:ok:默认表单上的ok文本

4:submitter:可选,以逗号分割的用户列表,或允许提交的外部组名,默认允许任何用户

5:submitterParameter:环境变量的可选名称,如果存在,用submitter名称设置

6:parameters:提示提交者提供一个可选的参数列表

```groovy
pipeline {
    agent {
        node {
            label "build-1"
        }
    }

    parameters {
        string(name: "deploy_translate", defaultValue: "dev", description: 'Deployment environment')
    }

    options {
        timestamps()
        skipDefaultCheckout()
        disableConcurrentBuilds()
        timeout(time: 1, unit: "HOURS")
    }

    stages {
        stage('GitCode') {
            // wait for manual approval before this stage runs
            input {
                message "---- Continue? ----"
                ok "Yes"
                submitter "zhangsan,lisi"
                parameters {
                    string(name: "version", defaultValue: "0.0.1", description: "Version to release")
                }
            }
            steps {
                timeout(time: 20, unit: "MINUTES") {
                    script {
                        println("Checkout stage")
                        // values entered in the input form are available as environment variables in this stage
                        println("${version}")
                    }
                }
            }
        }

        stage('Build') {
            steps {
                timeout(time: 20, unit: "MINUTES") {
                    script {
                        println("Package stage")
                        println("${deploy_translate}")
                    }
                }
            }
        }
    }
}
```

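2.11:when

The when directive lets the pipeline decide whether a stage should run based on the given condition. when must contain at least one condition. If it contains more than one, all child conditions must return true for the stage to run; this is the same as nesting the child conditions in an allOf condition.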

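```groovy
// Built-in condition branch: run the stage when the branch being built matches the given pattern
// (only works in multibranch pipelines)
when {
    branch "master"
}

// environment: run the stage when the given environment variable has the given value
when {
    environment name: "deploy", value: "prd"
}

// expression: run the stage when the given Groovy expression evaluates to true
when {
    expression {
        return params.SKIP_CODESCAN
    }
}

// not: run the stage when the nested condition is false
when {
    not {
        branch "master"
    }
}

// allOf: run the stage when all nested conditions are true; must contain at least one condition
when {
    allOf {
        branch "master"; environment name: "deploy", value: "prd"
    }
}

// anyOf: run the stage when at least one nested condition is true; must contain at least one condition
when {
    anyOf {
        branch "master"; branch "staging"
    }
}
```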

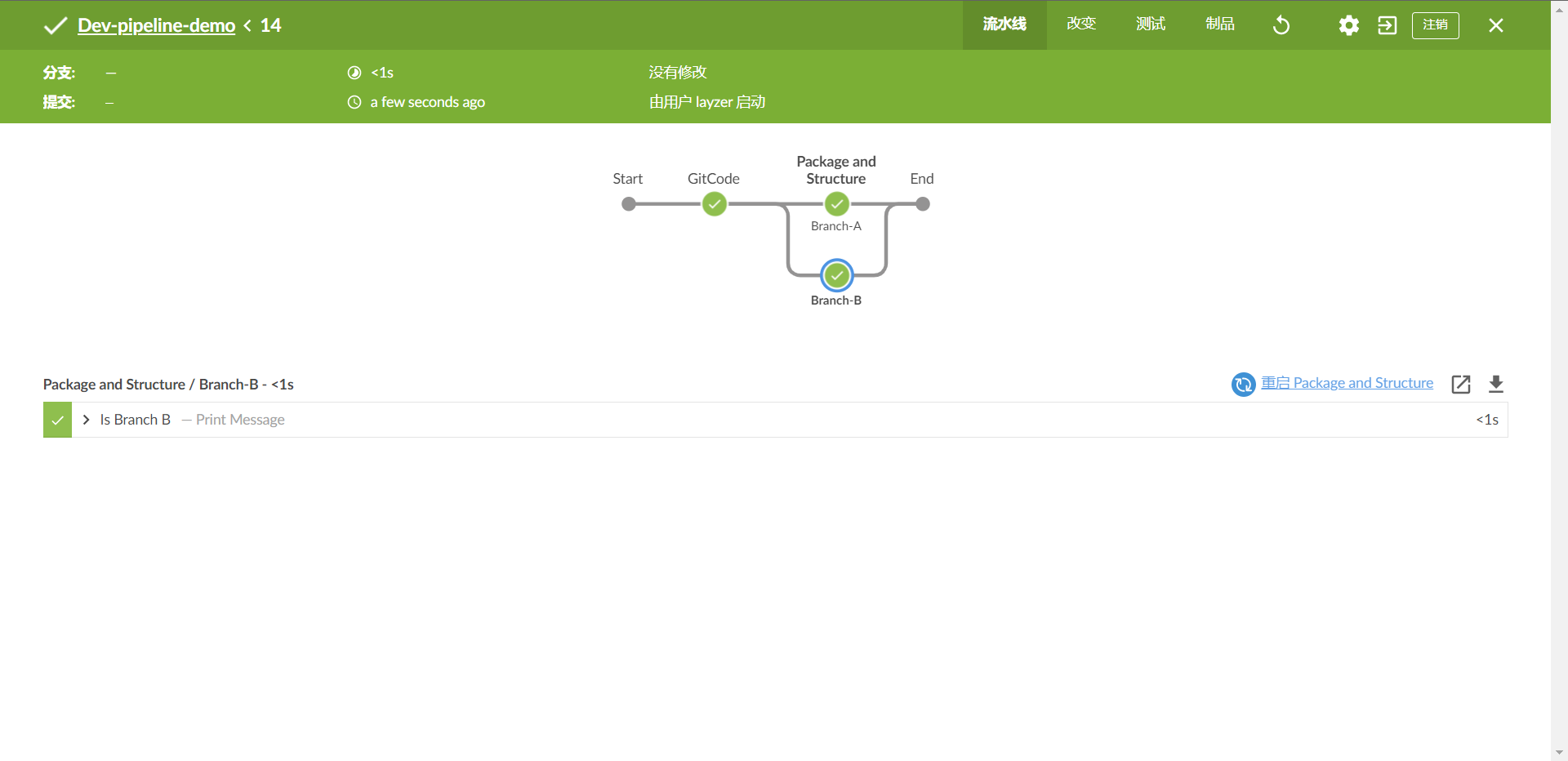
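The fragments above show only the when block itself; in a Jenkinsfile it sits inside a stage, before steps. A sketch assuming a hypothetical string parameter `deploy`: the stage runs only when it is `prd`, and `beforeAgent true` evaluates the condition before the stage's agent is allocated, so a skipped stage does not occupy a node.

```groovy
pipeline {
    agent none
    parameters {
        string(name: 'deploy', defaultValue: 'dev', description: 'Target environment')
    }
    stages {
        stage('DeployProd') {
            agent { label "build-1" }
            when {
                // check the condition before allocating the agent
                beforeAgent true
                expression { return params.deploy == 'prd' }
            }
            steps {
                echo 'deploying to production'
            }
        }
    }
}
```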

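2.12:parallel

This is parallel execution: a stage in a declarative pipeline can declare a number of nested stages inside a parallel block, and they run in parallel. Note that a stage must have one and only one of steps or parallel. The nested stages cannot contain further parallel stages themselves, but otherwise behave like any other stage. A stage that contains parallel cannot contain agent or tools, since those are not relevant without steps. In addition, adding failFast true to the stage containing parallel forces all the parallel stages to be aborted as soon as one of them fails.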

```groovy
pipeline {
    agent {
        node {
            label "build-1"
        }
    }

    options {
        timestamps()
        skipDefaultCheckout()
        disableConcurrentBuilds()
        timeout(time: 1, unit: "HOURS")
    }

    stages {
        stage('GitCode') {
            steps {
                timeout(time: 20, unit: "MINUTES") {
                    script {
                        println("Checkout stage")
                    }
                }
            }
        }

        stage("Package and Structure") {
            // abort the other parallel branches as soon as one fails
            failFast true
            parallel {
                stage("Branch-A") {
                    agent {
                        label "build-1"
                    }
                    steps {
                        echo "Is Branch A"
                    }
                }
                stage("Branch-B") {
                    agent {
                        // a different node could be used here to spread the parallel work
                        label "build-1"
                    }
                    steps {
                        echo "Is Branch B"
                    }
                }
            }
        }
    }
}
```

As you can see, the two stages ran in parallel. In a real project these two stages would not be run in parallel; this is only a demonstration.

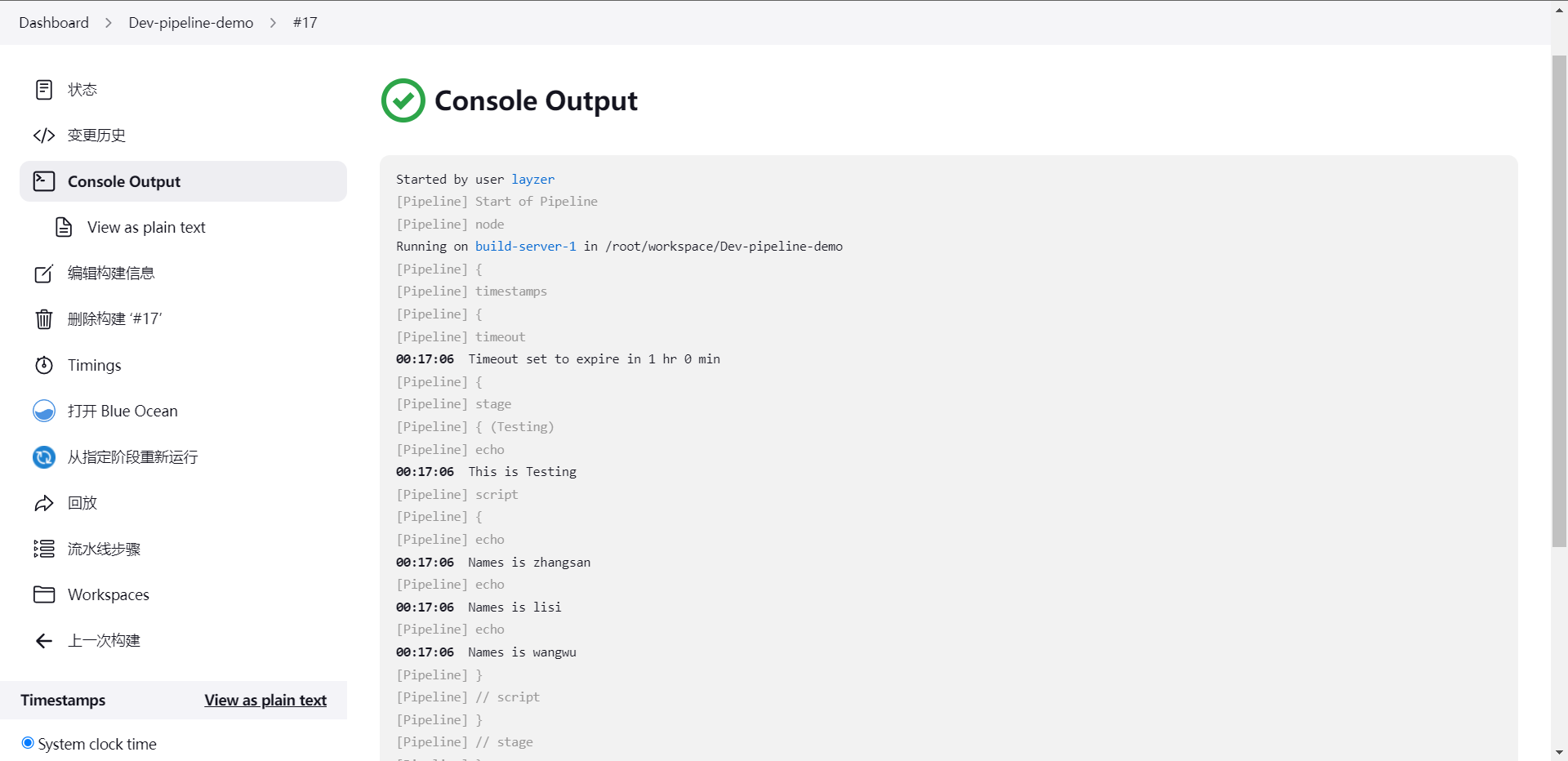

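2.13:script

The script step takes a block of Scripted Pipeline and executes it inside the declarative pipeline. For most use cases script is unnecessary in a declarative pipeline, but it can provide a useful escape hatch. script blocks of non-trivial size or complexity should be moved into a shared library instead.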

```groovy
pipeline {
    agent {
        node {
            label "build-1"
        }
    }

    options {
        timestamps()
        skipDefaultCheckout()
        disableConcurrentBuilds()
        timeout(time: 1, unit: "HOURS")
    }

    stages {
        stage('Testing') {
            steps {
                echo "This is Testing"
                script {
                    def names = ['zhangsan', 'lisi', 'wangwu']
                    for (int i = 0; i < names.size(); ++i) {
                        echo "Names is ${names[i]}"
                    }
                }
            }
        }
    }
}
```

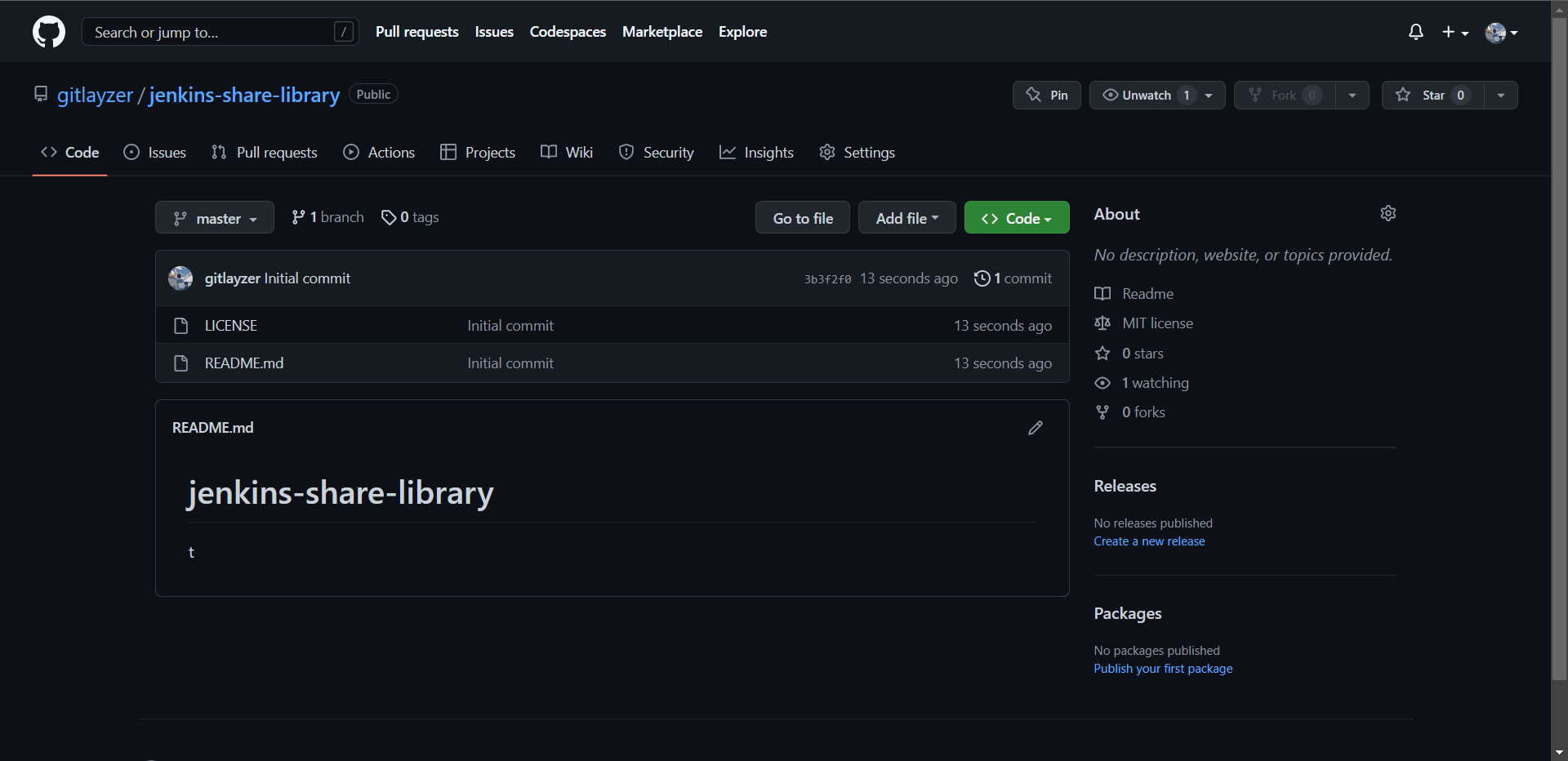

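3:Jenkins Shared Libraries

What you usually hear about is the Jenkins Shared Library; here is an overview of its directory structure:

1:src: a standard Java-style source directory; it is added to the classpath when the pipeline runs.

2:vars: hosts script files that are exposed as variables (global steps) in pipelines.

3:resources: lets the libraryResource step load associated non-Groovy files from the external library.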

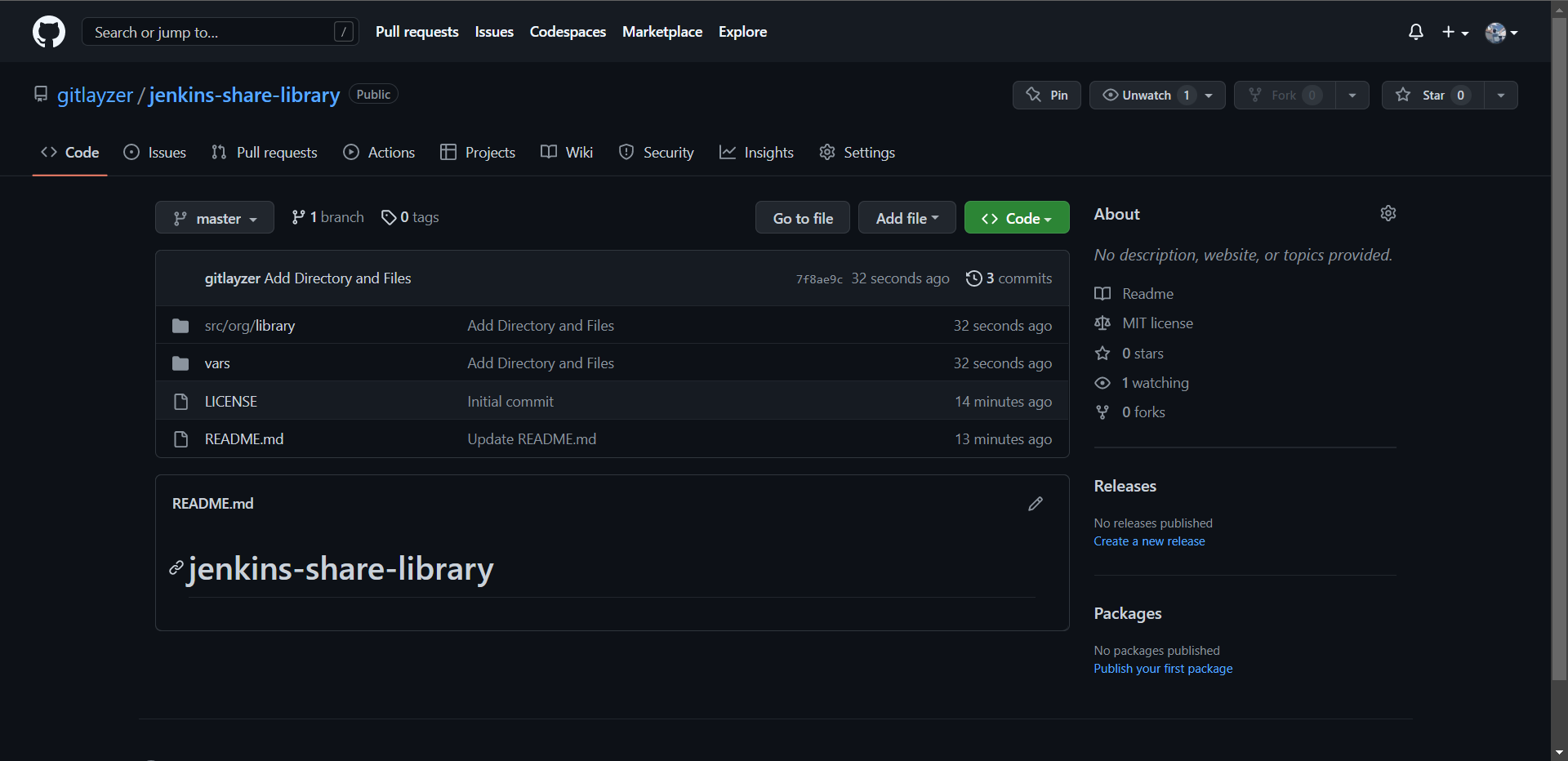

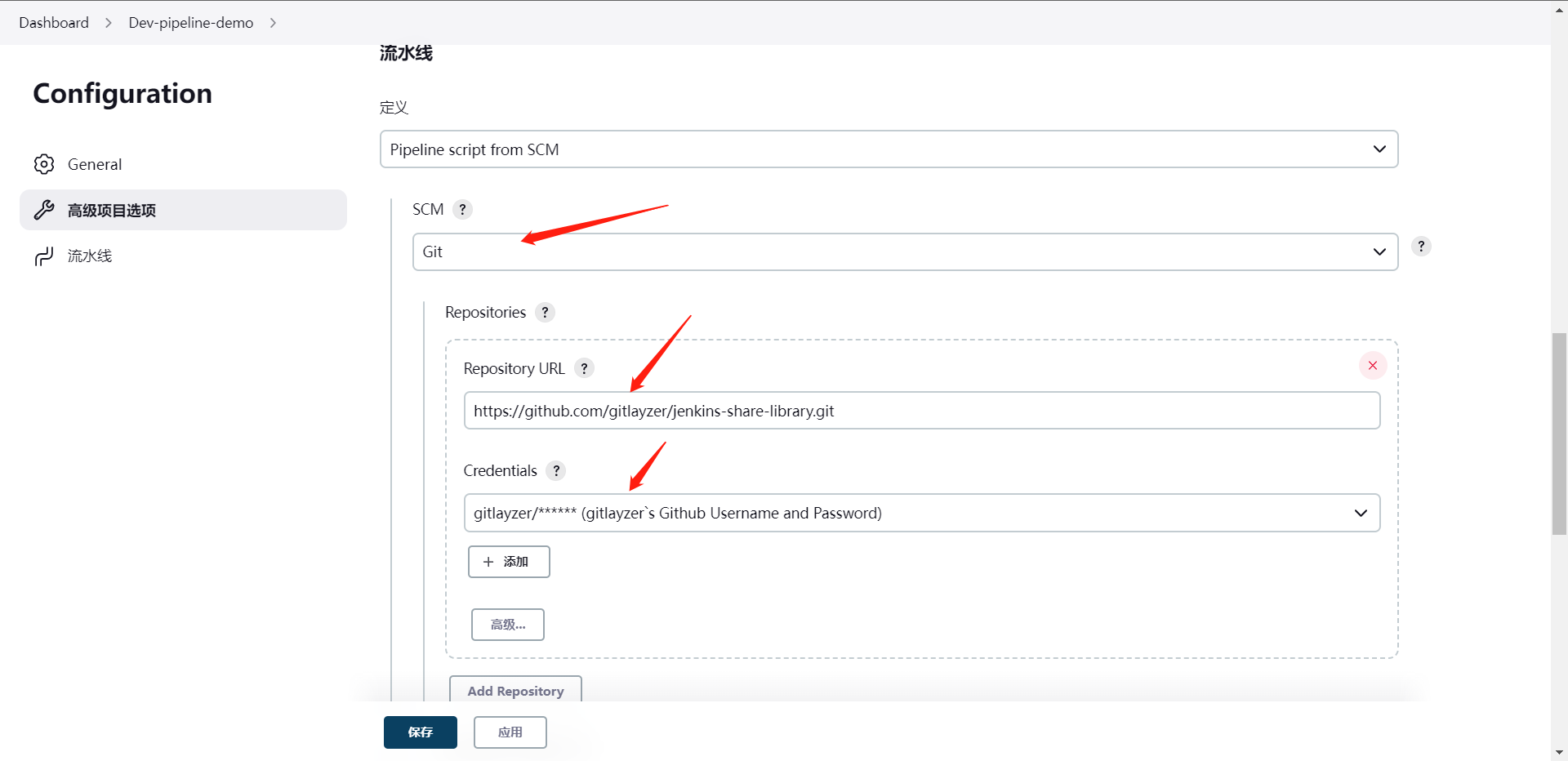

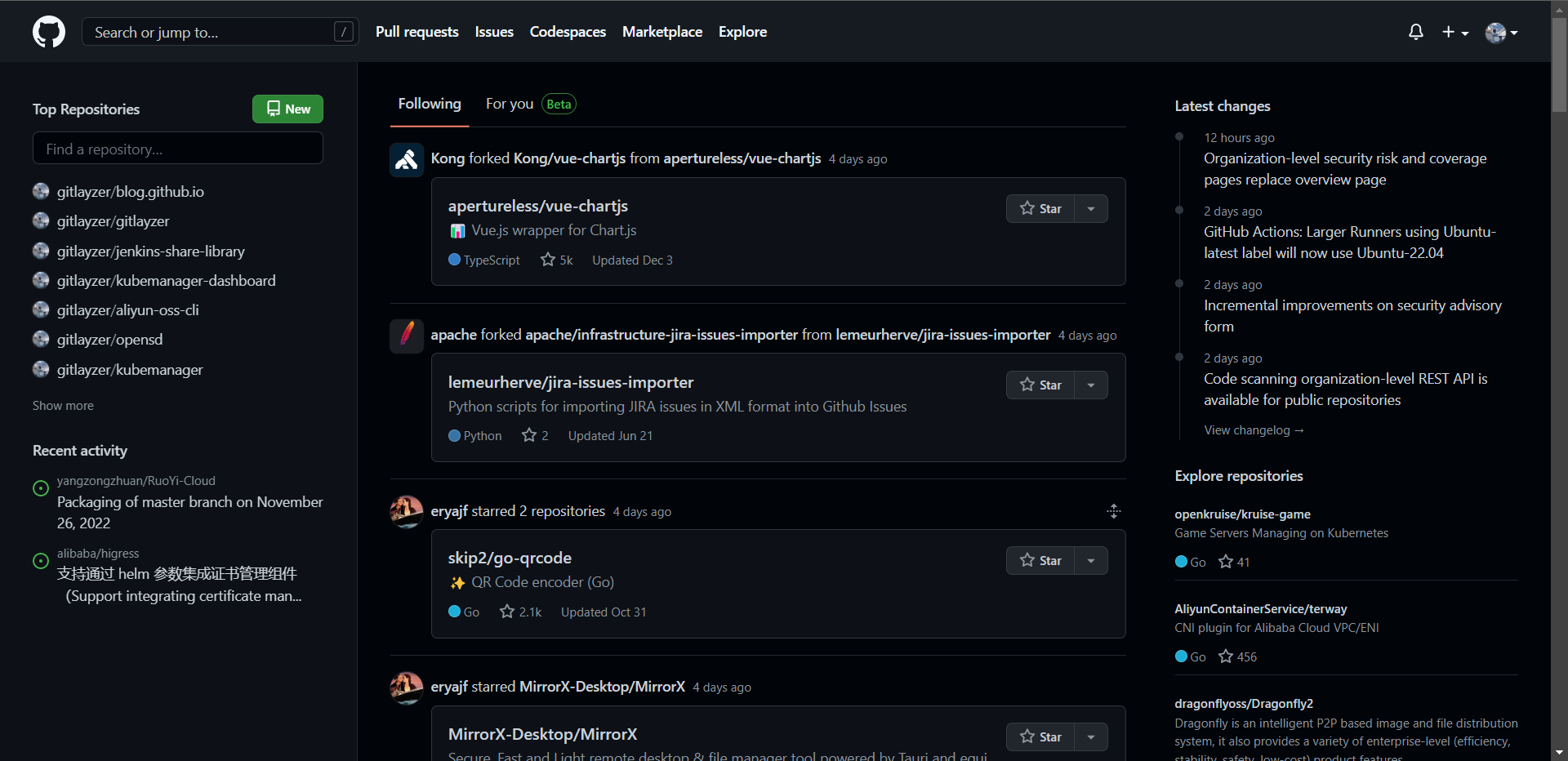

I created a repository on GitHub for this.

[root@cdk-server ~]# git clone https://github.com/gitlayzer/jenkins-share-library.git

[root@cdk-server ~]# cd jenkins-share-library/

[root@cdk-server jenkins-share-library]# mkdir -p src/org/library

[root@cdk-server jenkins-share-library]# touch src/org/library/tool.groovy

[root@cdk-server jenkins-share-library]# cat src/org/library/tool.groovy

```groovy
package org.library

// Print a message
def PrintMsg(content) {
    print(content)
}
```

[root@cdk-server jenkins-share-library]# mkdir vars

[root@cdk-server jenkins-share-library]# touch vars/hello.groovy

[root@cdk-server jenkins-share-library]# cat vars/hello.groovy

```groovy
// No package declaration is needed here: the file simply defines a global step
def call() {
    print("Hello")
}
```

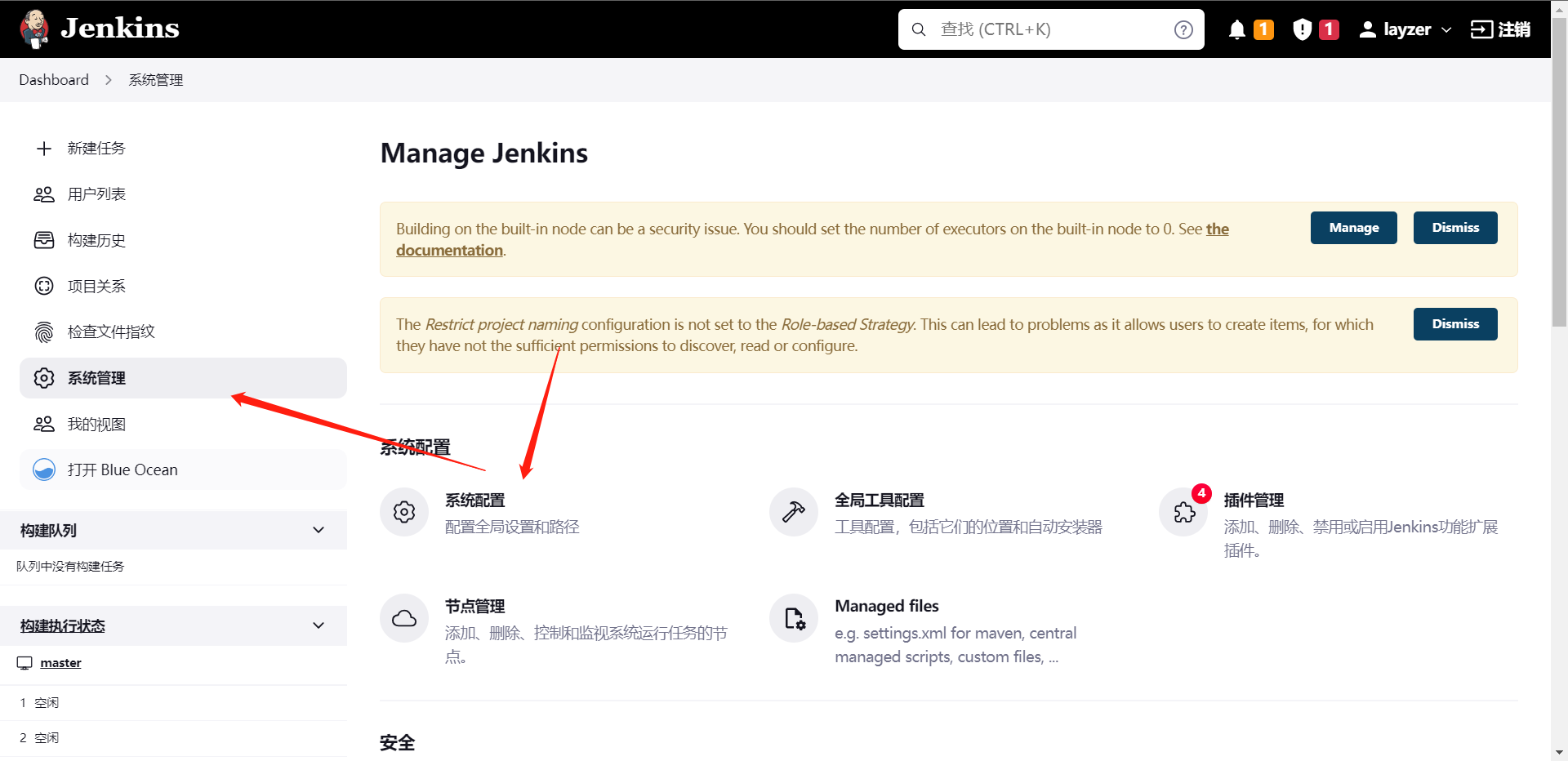

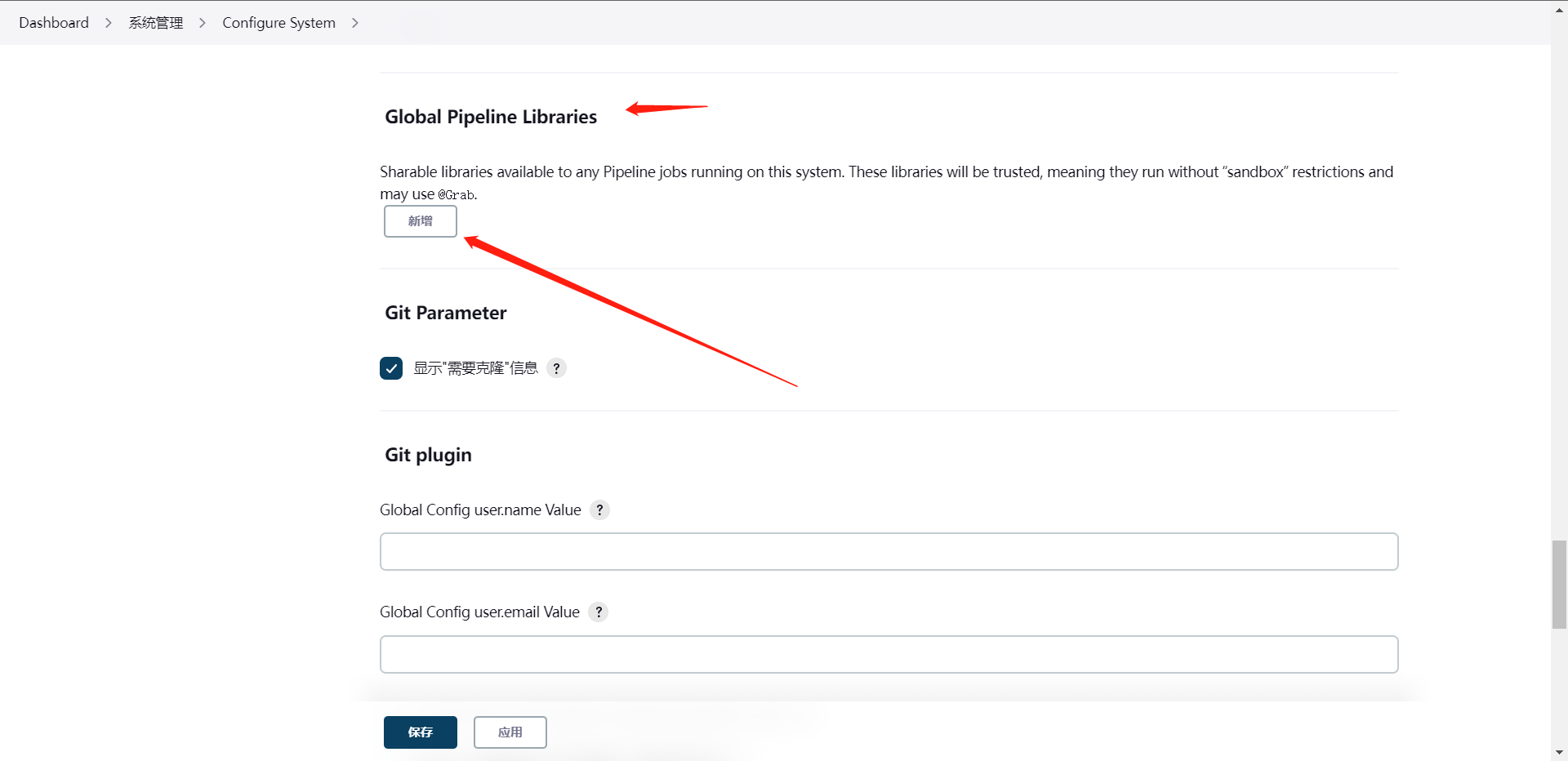

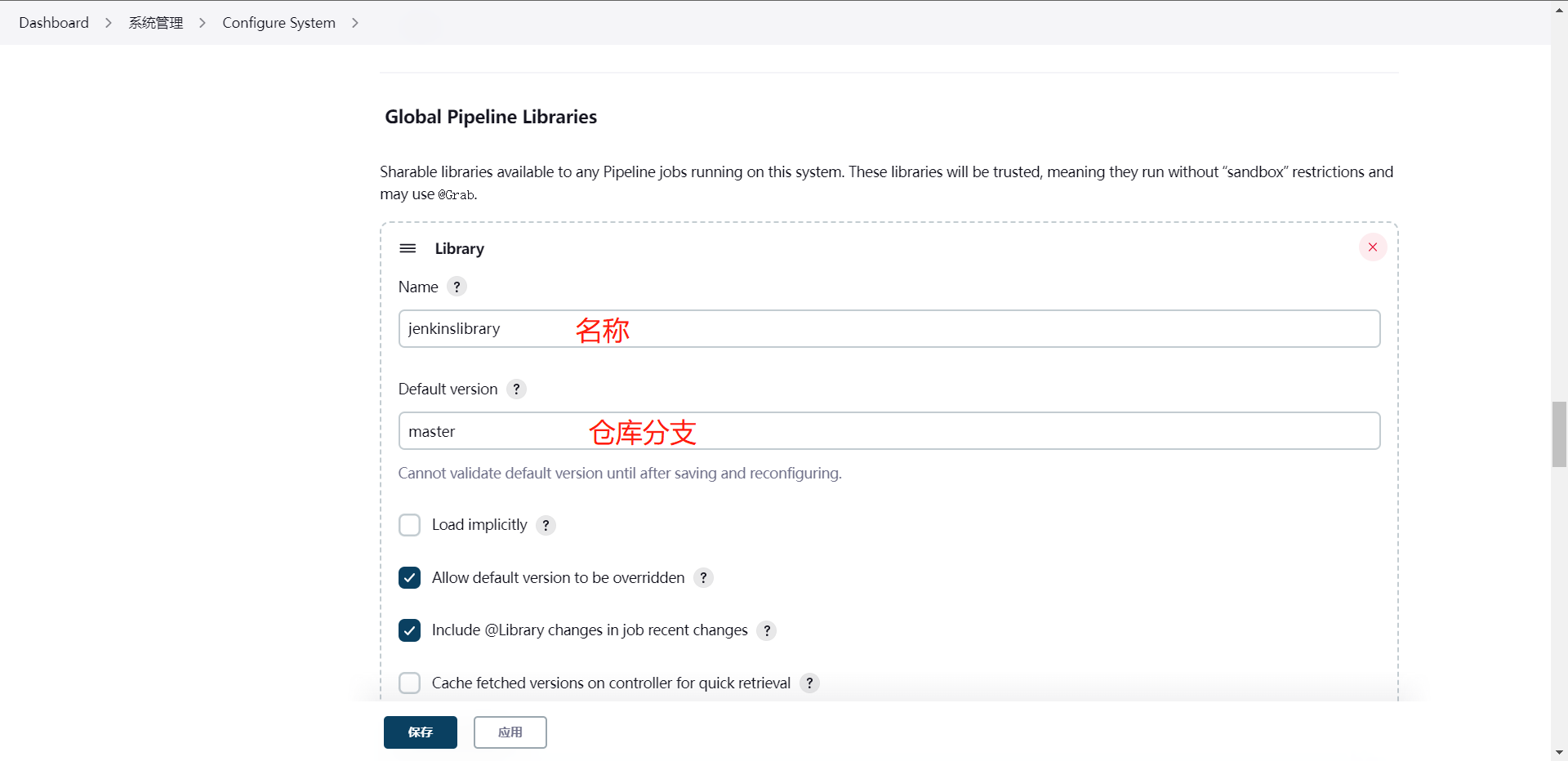

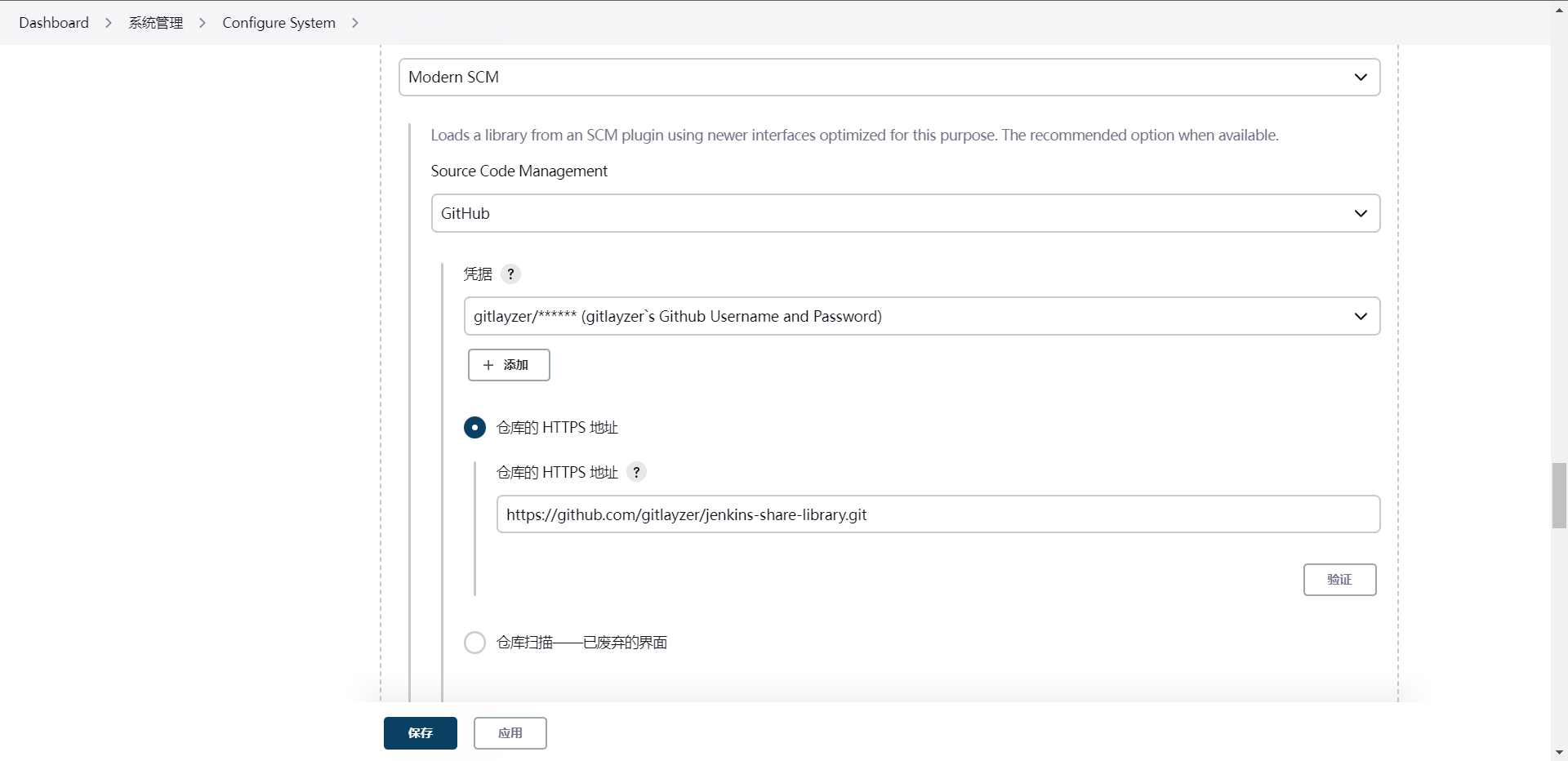

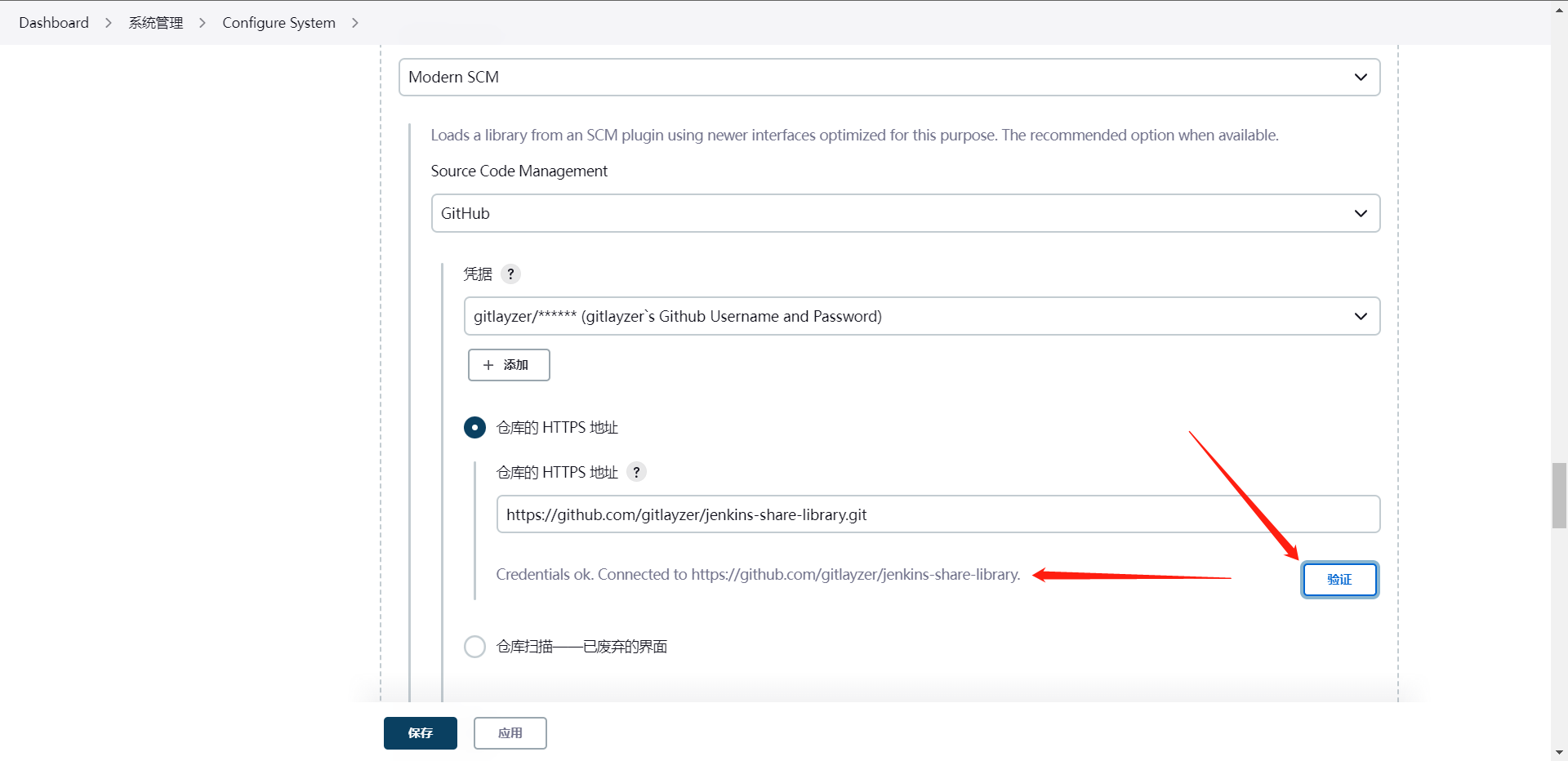

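To use this library we have to configure Jenkins; here is how (Manage Jenkins > Configure System > Global Pipeline Libraries).

Leave the other fields at their defaults and save; the library can then be used from a Jenkinsfile.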

```groovy
#!groovy

// load the library configured in Jenkins
@Library('jenkinslibrary') _

// instantiate the class in src/org/library/tool.groovy
def tools = new org.library.tool()

pipeline {
    agent {
        node {
            label "build-1"
        }
    }

    stages {
        stage('GitCode') {
            steps {
                timeout(time: 20, unit: "MINUTES") {
                    script {
                        println("Checkout stage")
                        // call a method from the shared library
                        tools.PrintMsg("This is ShareLibrary")
                    }
                }
            }
        }

        stage('Build') {
            steps {
                timeout(time: 20, unit: "MINUTES") {
                    script {
                        println("Package stage")
                    }
                }
            }
        }

        stage('CodeScan') {
            steps {
                timeout(time: 30, unit: "MINUTES") {
                    script {
                        println("Code scan stage")
                    }
                }
            }
        }
    }

    post {
        always {
            script {
                println("Always")
            }
        }
        success {
            script {
                currentBuild.description = (currentBuild.description ?: "") + "\n Build succeeded!"
            }
        }
        failure {
            script {
                currentBuild.description = (currentBuild.description ?: "") + "\n Build failed!"
            }
        }
        aborted {
            script {
                currentBuild.description = (currentBuild.description ?: "") + "\n Build aborted!"
            }
        }
    }
}
```

[root@cdk-server jenkins-share-library]# cat jenkinsfile

(The file has the same content as the Jenkinsfile above, just without the comments.)

# Commit the code (then push it to GitHub so Jenkins can fetch it)

[root@cdk-server jenkins-share-library]# git add .

[root@cdk-server jenkins-share-library]# git commit -m "Add Jenkinsfile"

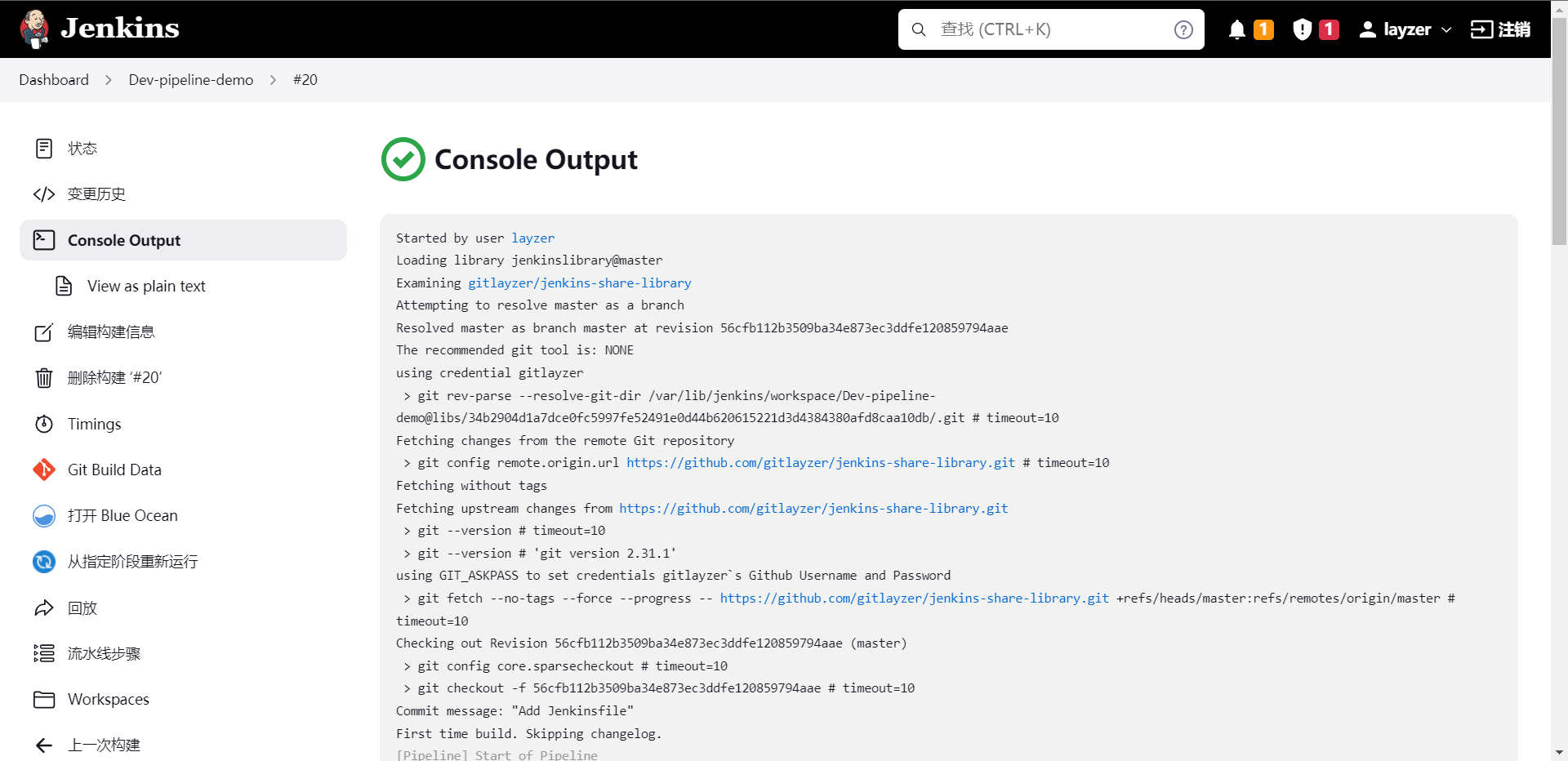

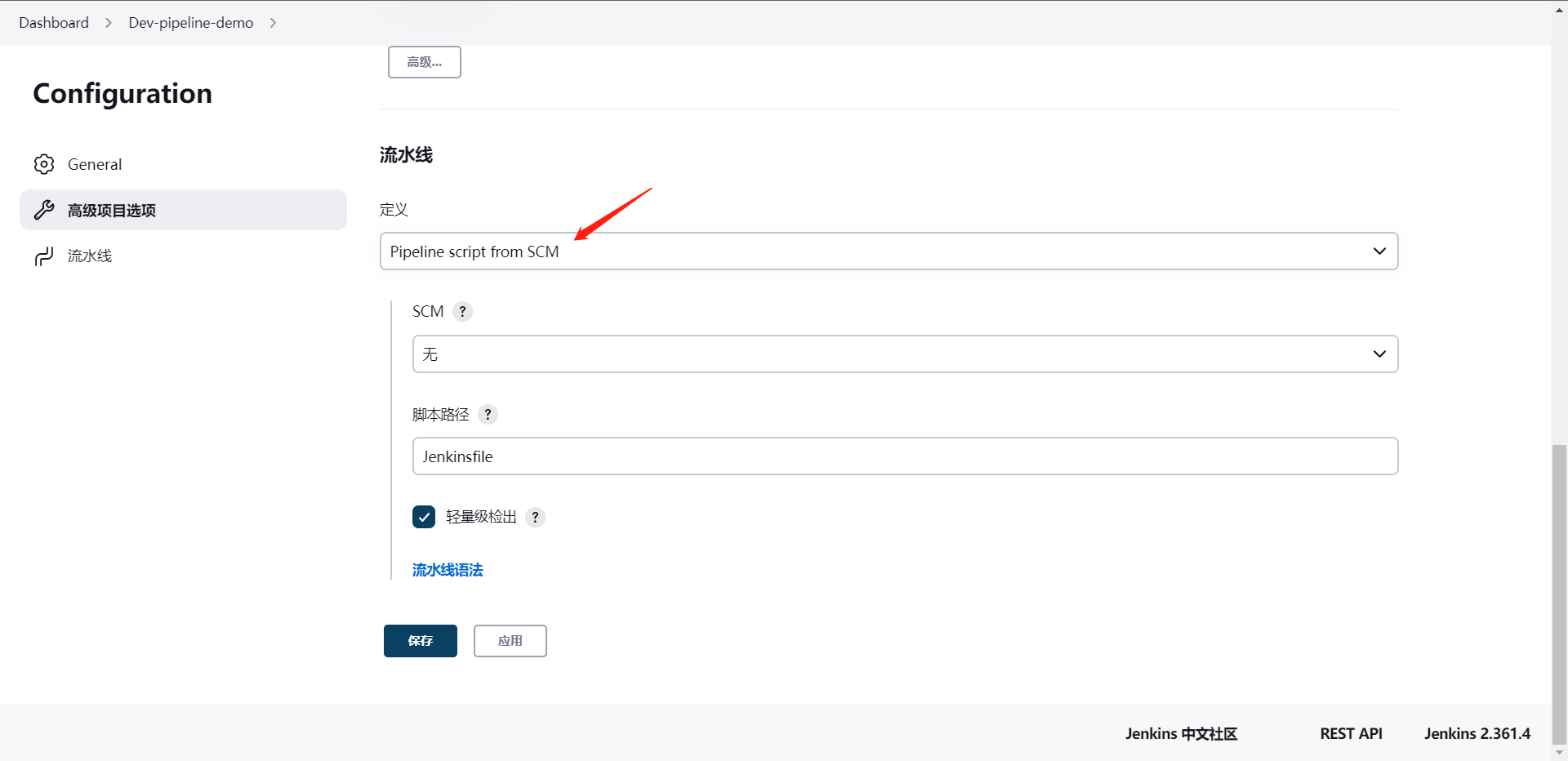

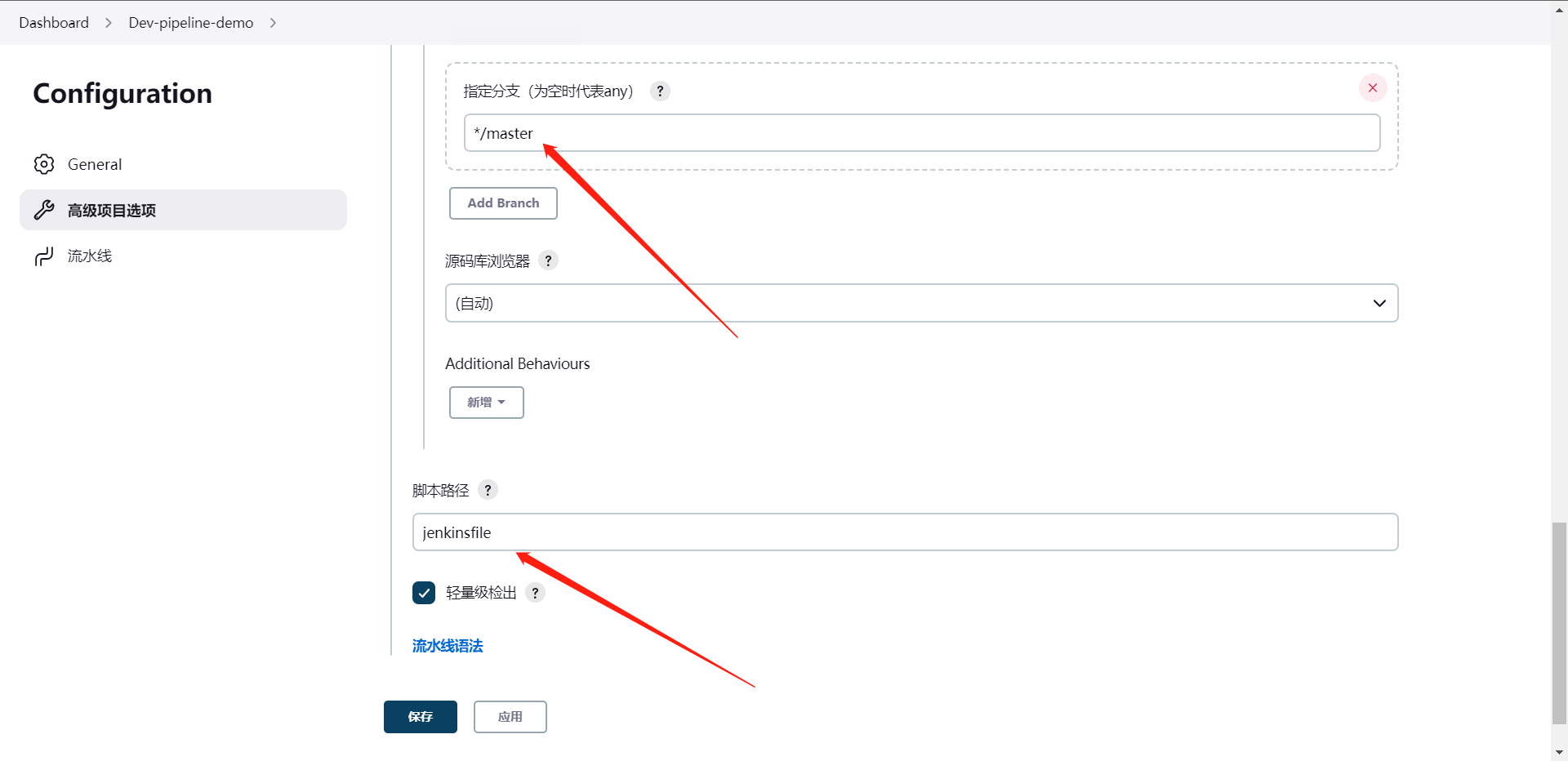

We can also create a pipeline directly from this Jenkinsfile.

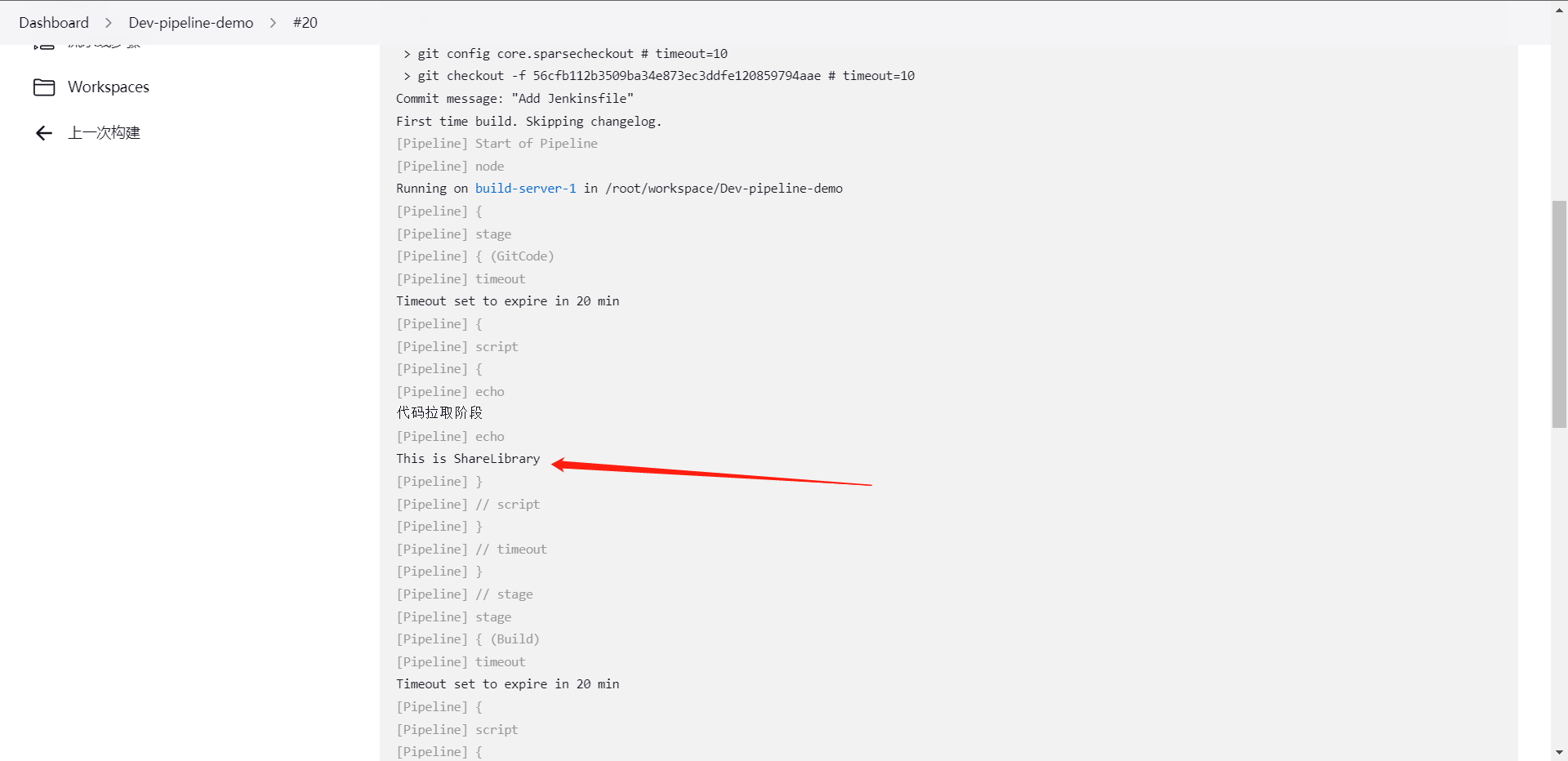

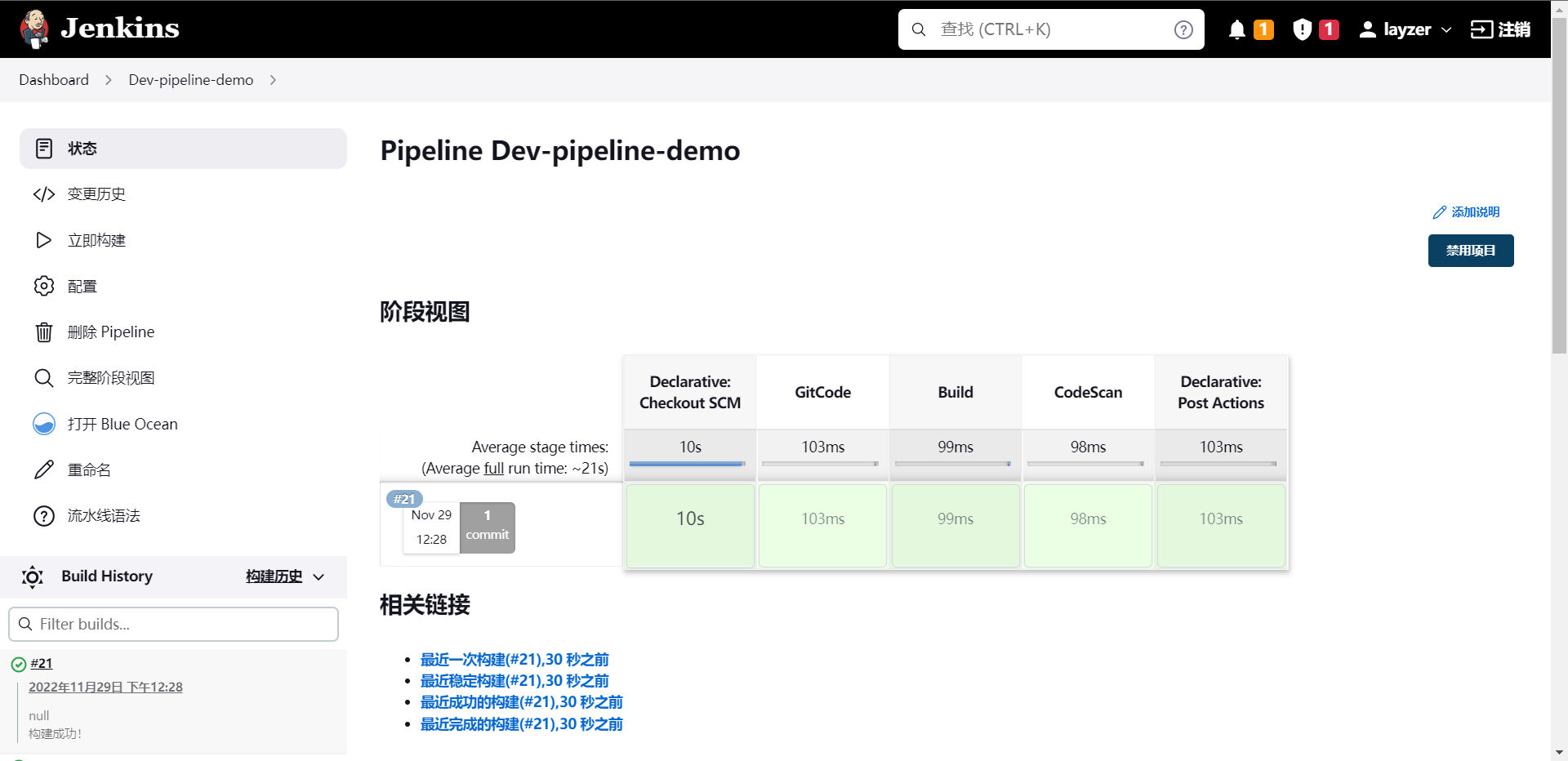

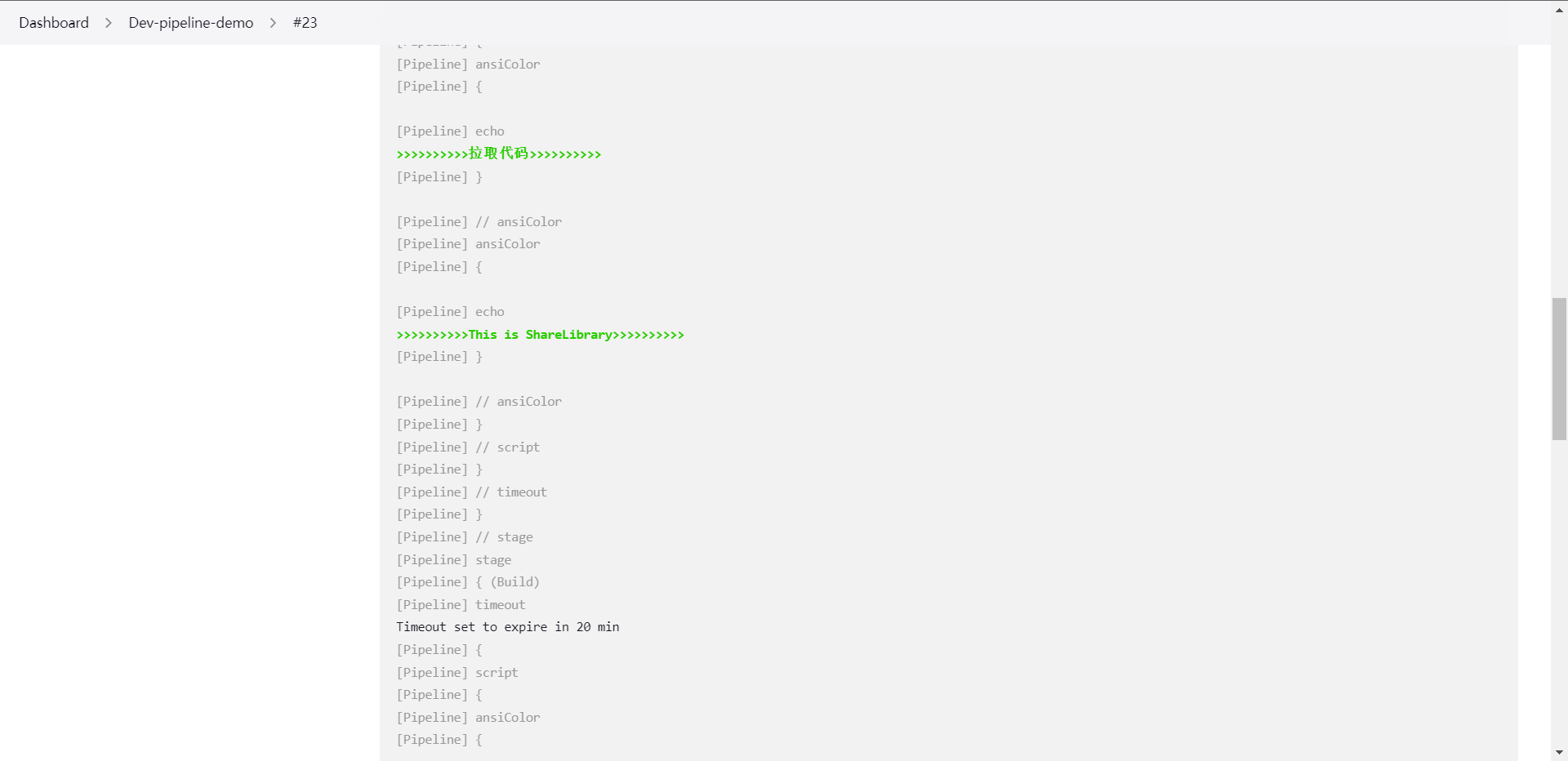

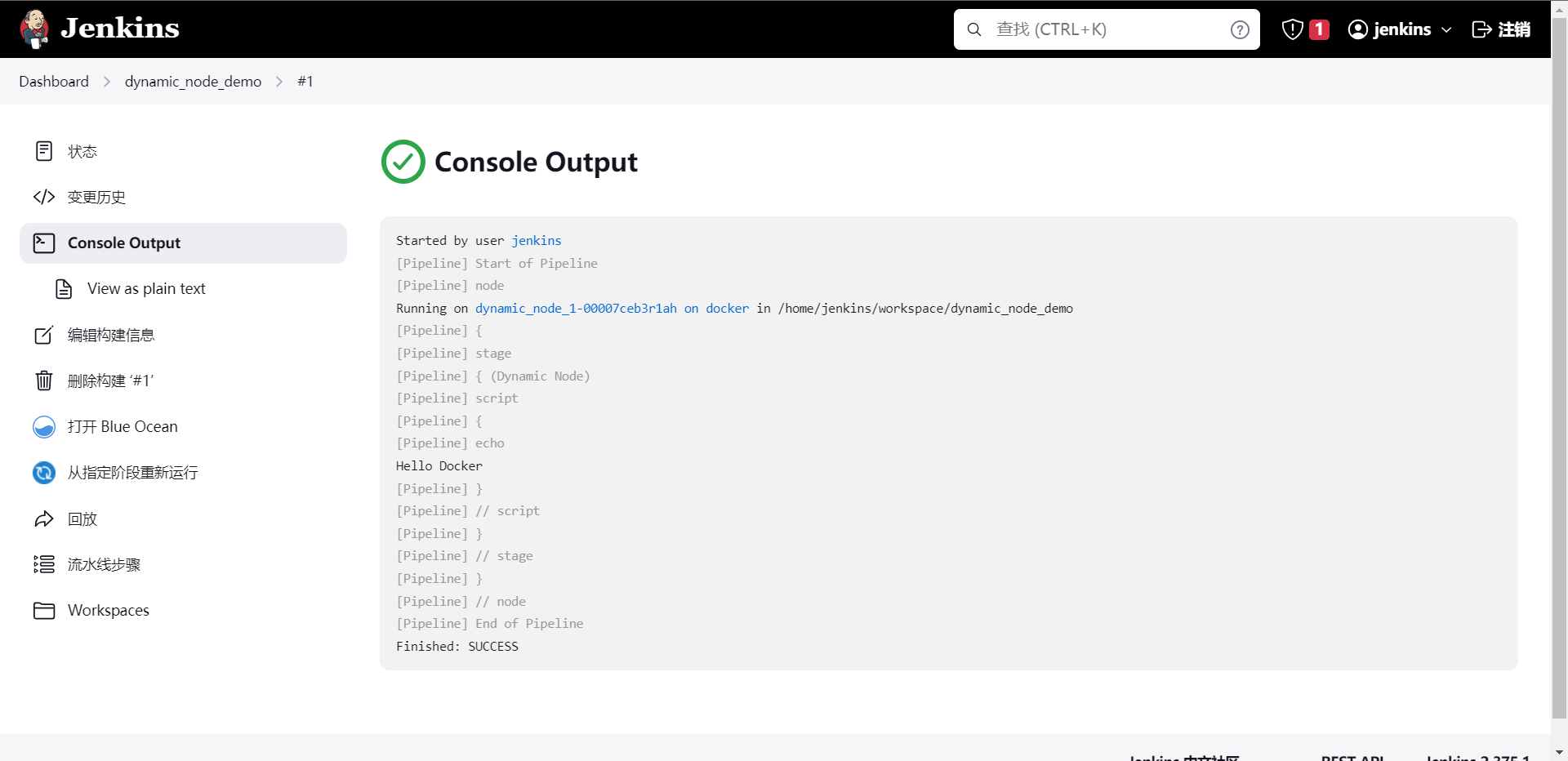

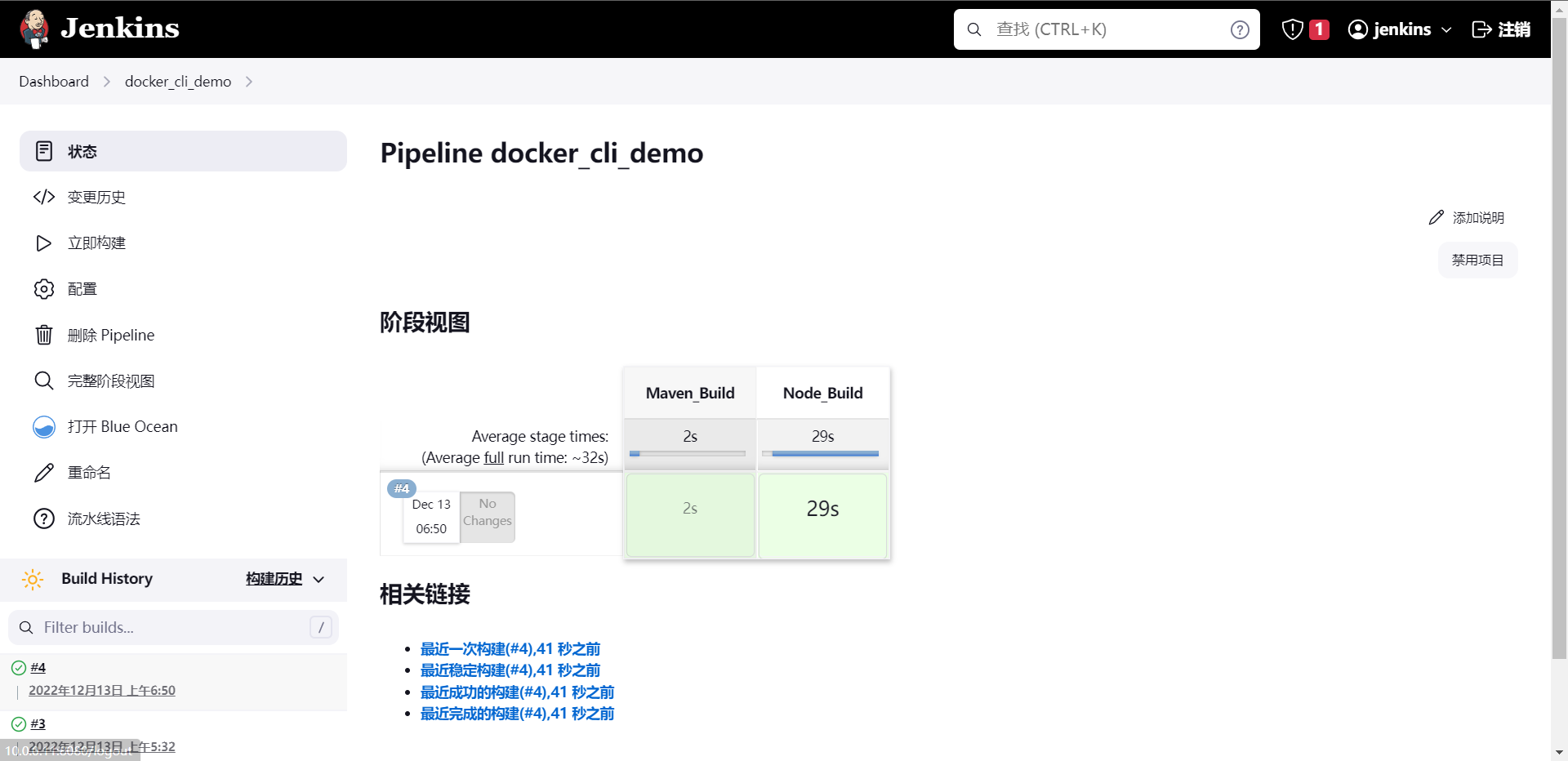

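The screenshot above shows the library being used from a pipeline, and our method works. To summarize:

1:Create the shared library repository and write the Groovy code

2:Configure the library in Jenkins

3:In the Jenkinsfile, use @Library to reference the library configured in Jenkins

4:Call the imported library methods inside the stages.

Finally, let's see how the pipeline can use the Jenkinsfile stored on GitHub.

This is another way to reference a Jenkinsfile, and the benefit is easy to see: the Jenkinsfile is versioned in Git, so we can control which version of it runs by choosing the branch.

Some may ask how to use the file under vars. It's very simple: you know how timestamps() is used, right? Files under vars are called the same way, e.g. vars/hello.groovy becomes the step hello().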
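A minimal sketch of calling the global step defined in vars/hello.groovy, assuming the library is registered in Jenkins under the name `jenkinslibrary` as above. The file name becomes the step name, and Jenkins invokes the file's `call()` method.

```groovy
@Library('jenkinslibrary') _

pipeline {
    agent { label "build-1" }
    stages {
        stage('Hello') {
            steps {
                // vars/hello.groovy -> hello(), which runs its call() method
                hello()
            }
        }
    }
}
```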

We can also get a bit fancier, for example by adding color to the printed output.

1:Install the plugin (AnsiColor)

2:Wrap the logic in a method in tool.groovy

```groovy
// Print a message in color (requires the AnsiColor plugin)
def PrintMsg(value, color) {
    def colors = ['red'  : "\033[40;31m >>>>>>>>>>>${value}<<<<<<<<<<< \033[0m",
                  'green': "\033[1;32m>>>>>>>>>>${value}>>>>>>>>>>\033[0m"]
    ansiColor('xterm') {
        println(colors[color])
    }
}
```

Commit the modified file to the repository again, then try the method out by editing the Jenkinsfile in the repository:

```groovy
#!groovy

// load the library configured in Jenkins
@Library('jenkinslibrary') _

// instantiate the class in src/org/library/tool.groovy
def tools = new org.library.tool()

pipeline {
    agent {
        node {
            label "build-1"
        }
    }

    stages {
        stage('GitCode') {
            steps {
                timeout(time: 20, unit: "MINUTES") {
                    script {
                        tools.PrintMsg("Checkout", "green")
                    }
                }
            }
        }

        stage('Build') {
            steps {
                timeout(time: 20, unit: "MINUTES") {
                    script {
                        tools.PrintMsg("Package", "green")
                    }
                }
            }
        }

        stage('CodeScan') {
            steps {
                timeout(time: 30, unit: "MINUTES") {
                    script {
                        tools.PrintMsg("Code scan", "green")
                    }
                }
            }
        }
    }

    post {
        always {
            script {
                tools.PrintMsg("Always", "green")
            }
        }
        success {
            script {
                tools.PrintMsg("Build succeeded!", "green")
            }
        }
        failure {
            script {
                tools.PrintMsg("Build failed!", "red")
            }
        }
        aborted {
            script {
                tools.PrintMsg("Build aborted!", "red")
            }
        }
    }
}
```

After pushing the changes, run the pipeline again.

The pipeline output is now colored, which makes the log easier to read.

3:Groovy基础

1:Groovy简介

1:groovy是一种功能强大的,可选类型和动态的语言,支持Java平台

2:皆在提高开发人员的生产力得益于简洁,熟悉且简单易学的语法。

3:可以与任何java程序集成,并且为您的应用程序提供强大的功能,包括脚本编写功能,特定领域语言编写,运行时和编译时元编程以及函数式编程

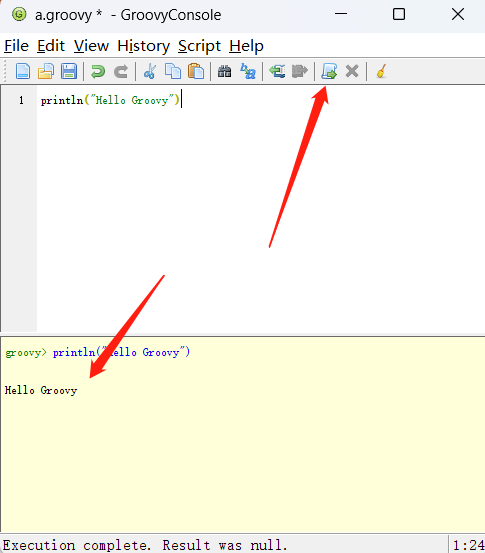

当然了安装Groovy也非常的简单,我这里是windows使用的是choco工具来安装的直接运行命令启动groovyconsol

2:Groovy数据类型

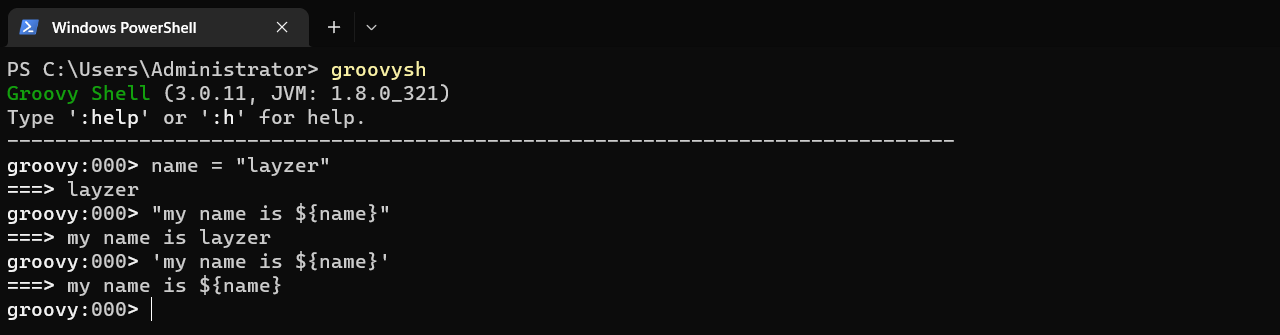

1:字符串表示:单引号,双引号,三引号

2:常用方法:

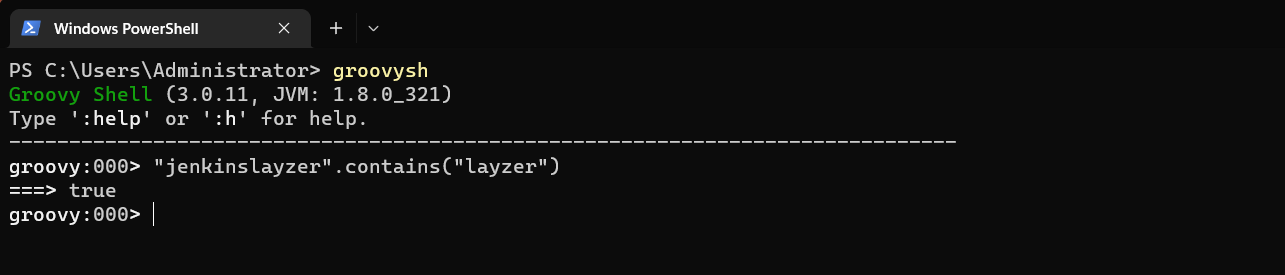

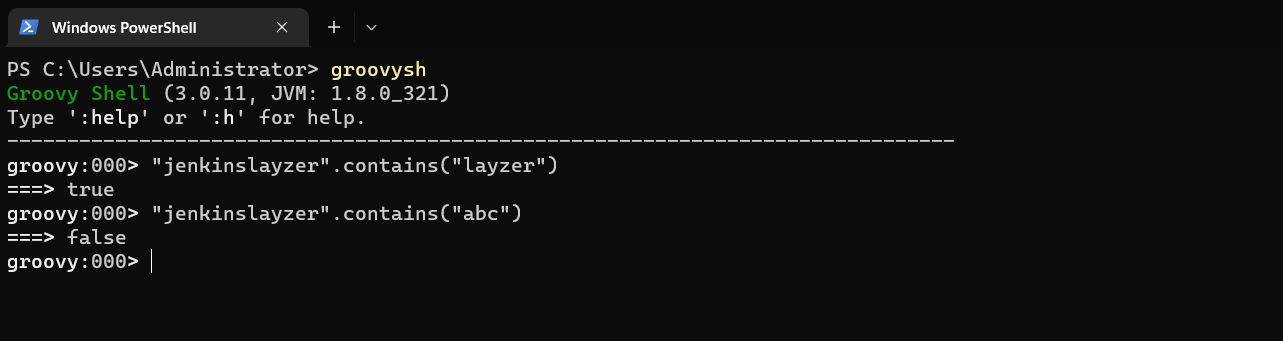

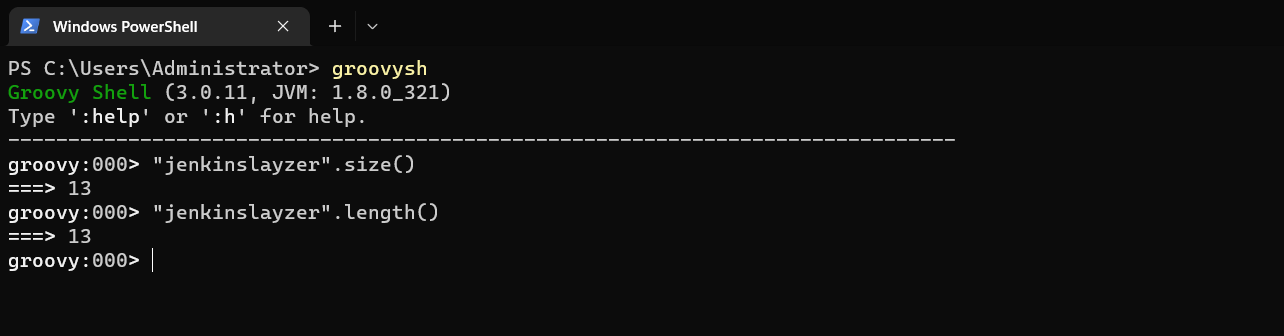

2.1:contains():是否包含特定内容,返回true/false

2.2:size()/length():字符串数量大小长度

2.3:toString():转换成String类型

2.4:indexOf():元素的索引

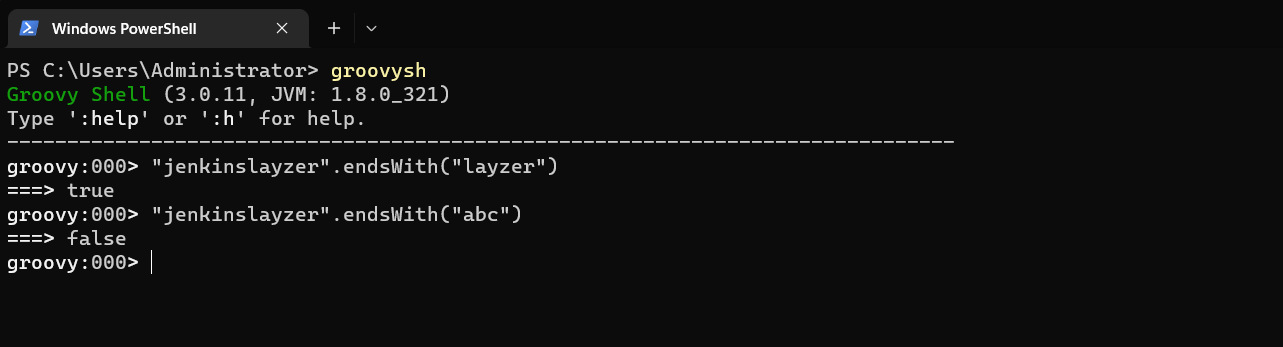

2.5:endsWith():是否指定字符结尾

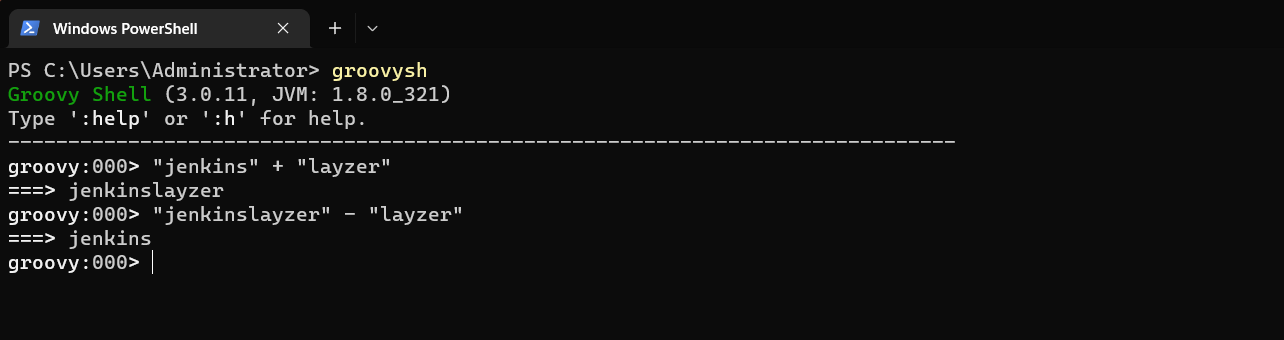

2.6:minus() plus():去掉,增加字符串

2.7:reverse():反向排序

2.8:substring(1,2):字符串指定索引开始的子字符串

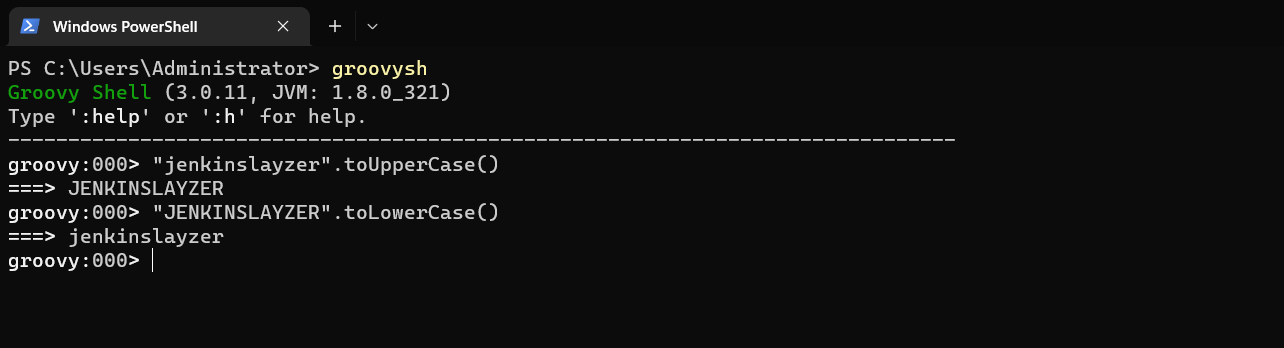

2.9:toUpperCase() toLowerCase():字符串大小写转换

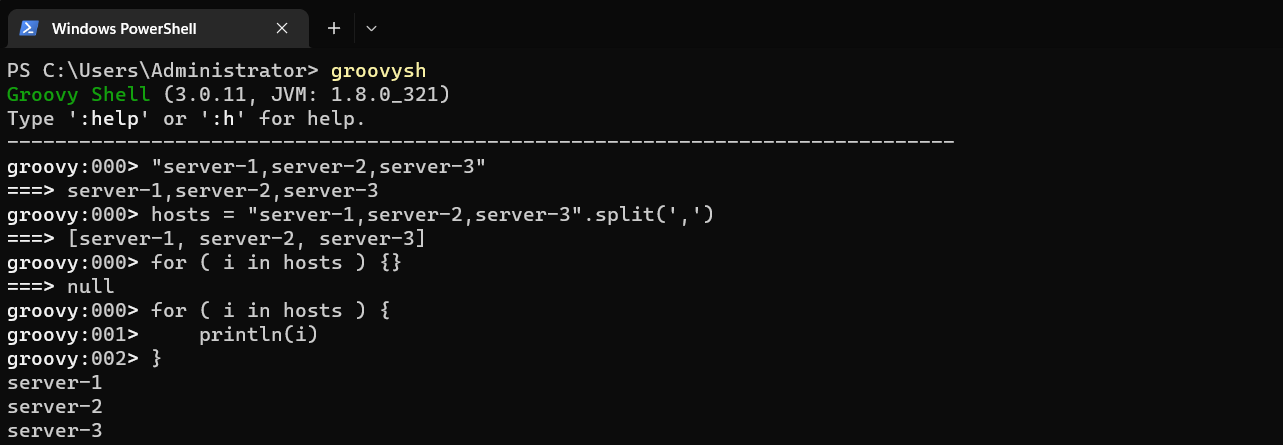

2.10:split():字符串分割,默认空格分割,返回列表

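Now let's try these operations in practice.

Anyone who has done some programming will recognize the difference between single and double quotes: double-quoted strings interpolate variables (${...}), single-quoted strings do not.

1:Strings

This demonstrates the first method, checking whether a string contains some content: the first check is true, the later one is not.

We can also check what a string ends with

Checking the string length / size

These are the usual addition and subtraction operations

Upper/lower case conversion

We can split a string and then use the resulting values.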
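Since the experiments above exist only as screenshots, here is a small runnable sketch of the same string methods (paste it into groovyConsole or run it with `groovy`); the sample values are made up.

```groovy
def name = 'jenkins'
def single = 'hello ${name}'        // single quotes: no interpolation
def gstring = "hello ${name}"       // double quotes: interpolated GString
def text = gstring.toString()       // convert the GString to a plain String

println(single)                     // hello ${name}
println(text)                       // hello jenkins
println(text.contains('jen'))       // true
println(text.endsWith('kins'))      // true
println(text.size())                // 13
println(text.indexOf('j'))          // 6
println(text - 'hello ')            // jenkins (minus removes the first occurrence)
println(name + '-ci')               // jenkins-ci
println(name.reverse())             // sniknej
println(name.substring(1, 3))       // en (begin inclusive, end exclusive)
println(name.toUpperCase())         // JENKINS

def parts = 'dev test prd'.split()  // whitespace split, returns String[]
println(parts[1])                   // test
```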

2:列表

1:列表符号:[]

2:常用方法:

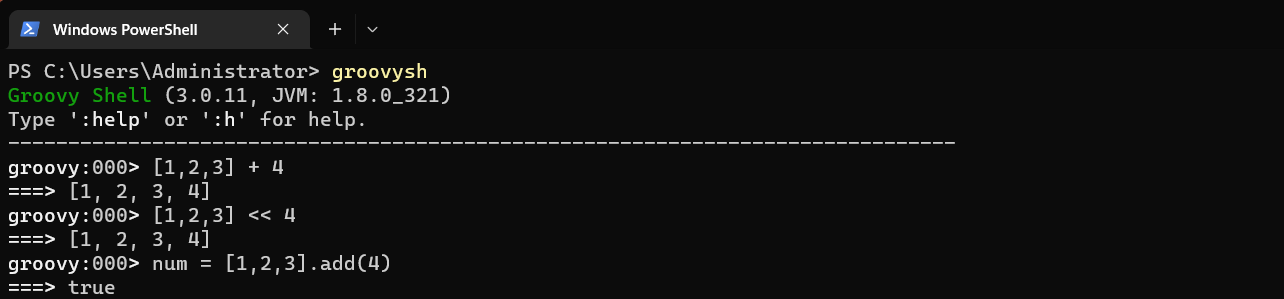

2.1:+ - += -=:元素增加减少

2.2:add():添加元素

2.3:isEmpty:判断是否为空

2.4:intersect([2,3]) disjoint([1])取交集,判断是否有交集

2.5:flatten():合并嵌套的列表

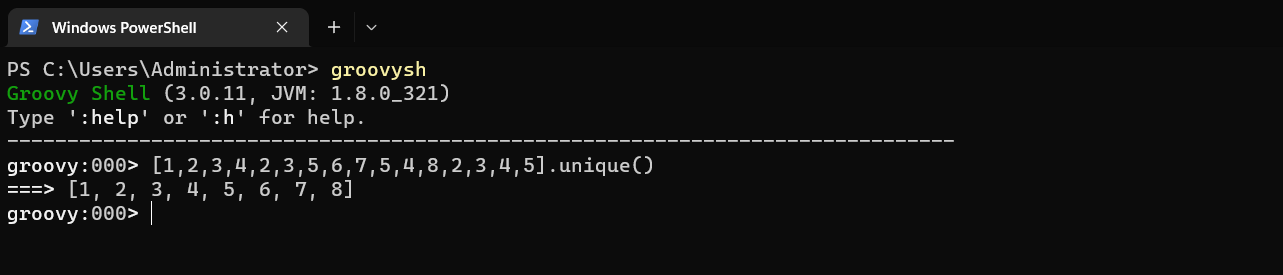

2.6:unique():去重

2.7:reverse() sort():反转 升序

2.8:count():元素个数

2.9:join():将元素按照参数链接

2.10:sum() min() max():求和,最小值,最大值

2.11:contains():包含特定元素

2.12:remove() removeAll():删除元素,删除所有元素

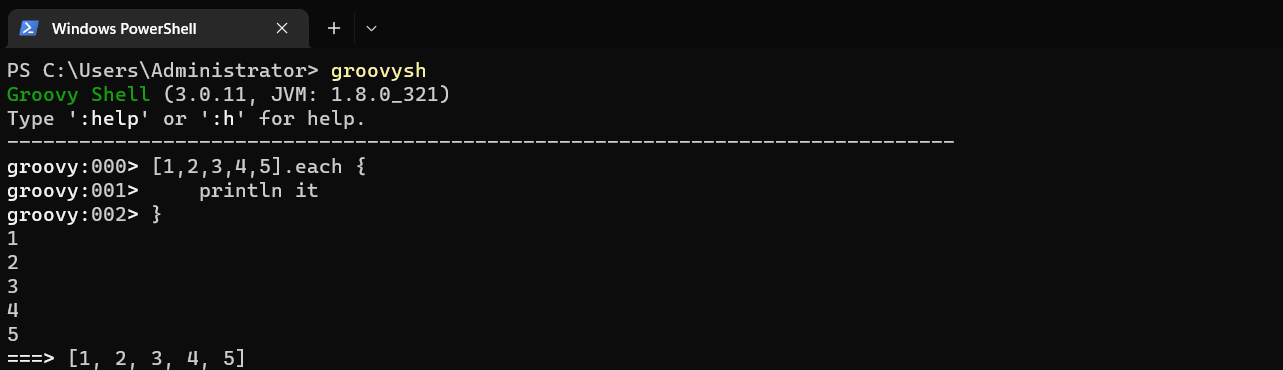

2.13:each():遍历

Adding data to a list

Removing duplicates

Joining all elements with a given separator

Iterating over the data
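The same list operations as a runnable sketch with made-up numbers. Passing `false` to `unique` and `sort` makes them return a new list instead of modifying the original, which keeps the later lines predictable.

```groovy
def list = [3, 1, 2]
list += 4                                  // + returns a new list: [3, 1, 2, 4]
list.add(2)                                // append in place: [3, 1, 2, 4, 2]

println(list.count(2))                     // 2
println(list.unique(false))                // [3, 1, 2, 4]
println(list.sort(false))                  // [1, 2, 2, 3, 4]
println(list.intersect([2, 3]))            // the elements 2 and 3
println(list.disjoint([9]))                // true (no common elements)
println([1, [2, [3]]].flatten())           // [1, 2, 3]
println(list.join('-'))                    // 3-1-2-4-2
println(list.sum())                        // 12
println([list.min(), list.max()])          // [1, 4]

list.each { item -> println("item: ${item}") }
```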

3:字典

1:表示:[:]

2:常用方法:

2.1:size():map大小

2.2:['key'] .key get():获取value

2.3:isEmpty():判断是否为空

2.4:containKey():判断是否包含key

2.5:containValue():判断是否包含value

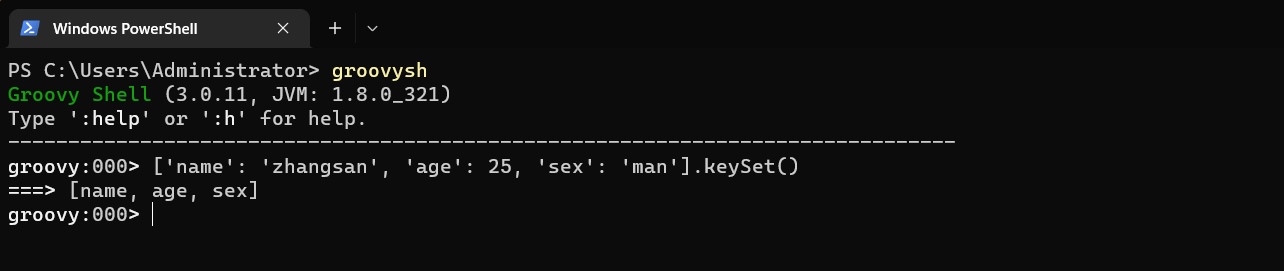

2.6:ketSet():生成Key的列表

2.7:each():遍历map

2.8:remove('key'):删除元素(k-v)

将map的key和value转换为列表

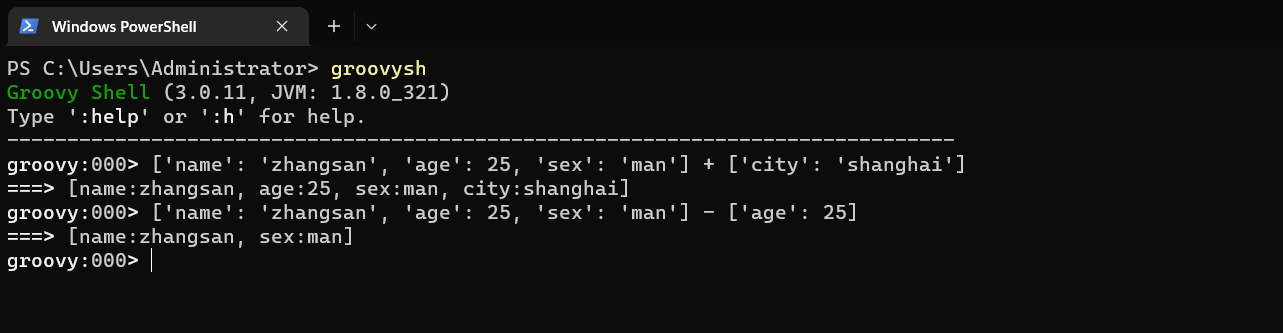

map的加减

总结一句话,基本使用和列表一模一样,

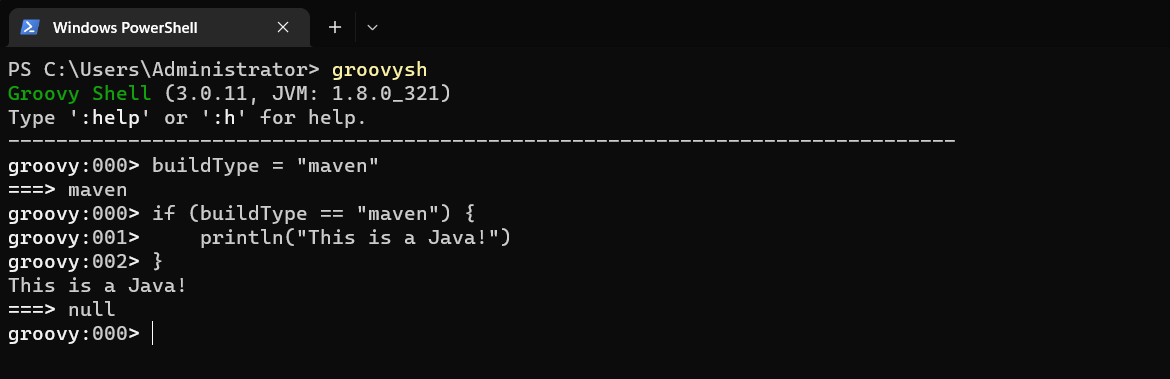
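A runnable sketch of the map operations, using made-up tool versions. Groovy map literals are `LinkedHashMap`s, so keys keep their insertion order; `+` and `-` return new maps, while `remove` changes the map in place.

```groovy
def versions = [maven: '3.8.6', ant: '1.10.12']
versions['gradle'] = '7.6'                         // add an entry

println(versions.size())                           // 3
println(versions.maven)                            // 3.8.6
println(versions.get('ant'))                       // 1.10.12
println(versions.containsKey('node'))              // false
println(versions.keySet() as List)                 // [maven, ant, gradle]
println(versions.values() as List)                 // [3.8.6, 1.10.12, 7.6]
versions.each { key, value -> println("${key} -> ${value}") }

def added = versions + [node: '18.12.1']           // + returns a new map
def removed = added - [ant: '1.10.12']             // - drops matching entries
println(removed.keySet() as List)                  // [maven, gradle, node]

versions.remove('ant')                             // remove in place
```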

3:Groovy Conditional Statements

1:if

```groovy
// Syntax
if (expression) {
    // xxx
} else if (expression) {
    // xxx
} else {
    // xxx
}
```

Here is a small example; for more branches, just add more else if blocks following the same pattern.

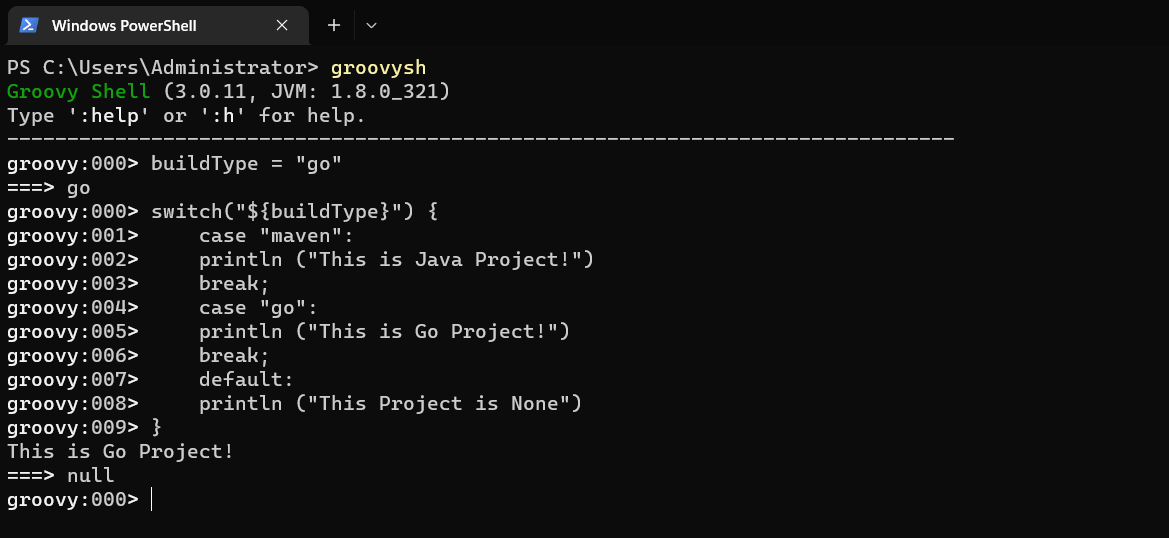

2:switch

Syntax:

```groovy
switch ("${buildType}") {
    case "maven":
        // ...
        break
    case "gradle":
        // ...
        break
    default:
        // ...
}
```

```groovy
switch ("${buildType}") {
    case "maven":
        println("This is Java Project!")
        break
    case "go":
        println("This is Go Project!")
        break
    default:
        println("This Project is None")
}
```

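Anyone who has programmed will recognize switch: the value is compared with each case in turn, the first matching case runs (break keeps it from falling through to the next case), and if no case matches, default runs.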

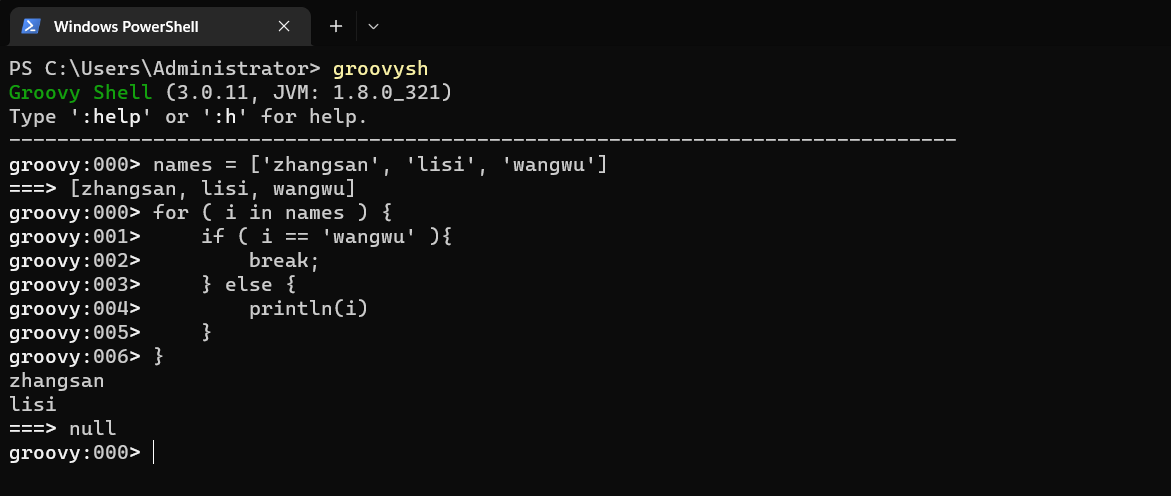
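To run the example on its own, `buildType` has to come from somewhere; in this sketch it is the parameter of a hypothetical helper `projectKind`, and each case returns a string instead of printing so the result can be checked. Groovy's switch also accepts a list as a case value, which matches any of its elements.

```groovy
String projectKind(String buildType) {
    switch (buildType) {
        case "maven":
            return "This is Java Project!"
        case "go":
            return "This is Go Project!"
        case ["npm", "yarn"]:              // a list case matches any of its elements
            return "This is Node Project!"
        default:
            return "This Project is None"
    }
}

println(projectKind("maven"))   // This is Java Project!
println(projectKind("yarn"))    // This is Node Project!
println(projectKind("rust"))    // This Project is None
```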

3:for/while

# for语法

names = ['zhangsan', 'lisi', 'wangwu']

for ( i in names ) {

if ( i == 'wangwu' ){

break;

} else {

println(i)

}

}

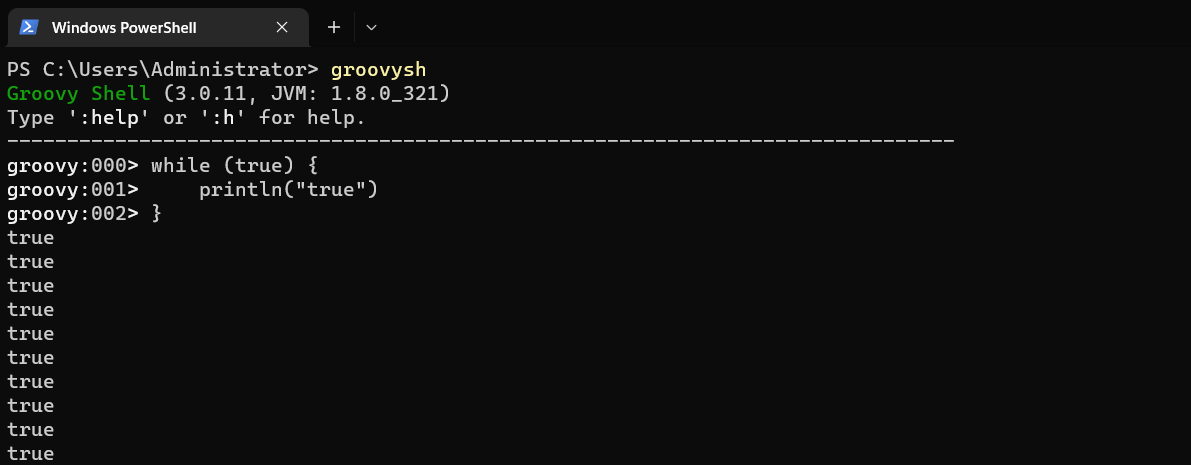

# while语法(这是个死循环,大家注意不要这么玩)

while (true) {

println("true")

}

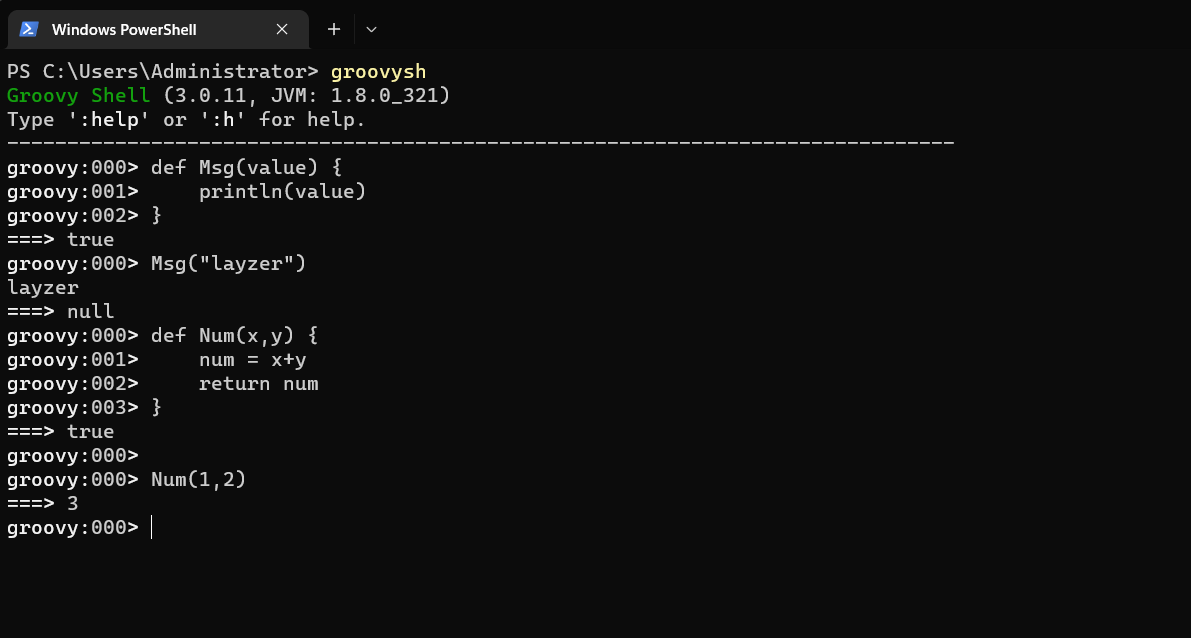

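4:Groovy Functions

1:Functions are defined with def

2:Syntax:

```groovy
def Msg(value) {
    println(value)
    // xxx
    return value
}
```

```groovy
def Msg(value) {
    println(value)
}

Msg("layzer")
```

or

```groovy
def Msg() {
    // no parameters, so the value has to be defined inside the function
    def value = "layzer"
    println(value)
}

Msg()
```

or

```groovy
def Num(x, y) {
    def num = x + y
    return num
}

Num(1, 2)
```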

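5:Groovy Regular Expressions

Regular expressions are very handy in Groovy too. For example, when branch names are not written consistently and we want to extract the standard part of a branch name, this is where they come in.

```groovy
// @NonCPS: java.util.regex.Matcher is not serializable, so run this outside the CPS transformation
@NonCPS
String getBranch(String branchName) {
    def matcher = (branchName =~ "RELEASE-[0-9]{4}")
    def newBranchName
    if (matcher.find()) {
        newBranchName = matcher[0]   // the first match
    } else {
        newBranchName = branchName
    }
    return newBranchName
}
```

With this method, branch names such as RELEASE-1111, RELEASE-1122 or RELEASE-1342sfads are reduced to their RELEASE-xxxx part (RELEASE-1342sfads becomes RELEASE-1342); names without a match are returned unchanged.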
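Outside Jenkins the `@NonCPS` annotation is not on the classpath, so this sketch drops it to check the behaviour in plain Groovy. It is the same logic as getBranch above, written with a slashy pattern literal and `group()`, which returns the text matched by the last successful `find()`.

```groovy
String getBranch(String branchName) {
    def matcher = (branchName =~ /RELEASE-[0-9]{4}/)
    // find() looks for the pattern anywhere in the string
    return matcher.find() ? matcher.group() : branchName
}

['RELEASE-1111', 'RELEASE-1342sfads', 'feature/login'].each { name ->
    println("${name} -> ${getBranch(name)}")
}
```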

6:Common Pipeline DSL Steps

1:readJSON: parse JSON data (provided by the Pipeline Utility Steps plugin)

```groovy
def response = readJSON text: "${scanResult}"
println(response)
```

```groovy
// Native Groovy alternative
import groovy.json.*

@NonCPS
def GetJson(text) {
    def prettyJson = JsonOutput.prettyPrint(text)
    new JsonSlurperClassic().parseText(prettyJson)
}
```
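Both approaches end up with ordinary maps and lists. A plain-Groovy sketch of the native route, using a made-up scan-result string (the field names are hypothetical). JsonSlurperClassic is the usual choice in Jenkins because it returns plain serializable `HashMap`/`ArrayList` objects.

```groovy
import groovy.json.JsonOutput
import groovy.json.JsonSlurperClassic

// hypothetical scan result, shaped like a code-scan API response
def scanResult = '{"project":"demo","issues":[{"severity":"MAJOR"},{"severity":"MINOR"}]}'

def data = new JsonSlurperClassic().parseText(scanResult)   // -> Map / List structures
println(data.project)                                      // demo
println(data.issues.size())                                // 2
println(data.issues*.severity)                             // [MAJOR, MINOR]

// prettyPrint only reformats the JSON text; it does not parse it
println(JsonOutput.prettyPrint(scanResult))
```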

2:withCredentials (hide passwords)

```groovy
// Use an account and password without exposing them: the values are masked (****) in the console output
node {
    withCredentials([usernamePassword(credentialsId: 'gitlayzer', passwordVariable: 'password', usernameVariable: 'username')]) {
        println(username)
        println(password)
    }
}
```

3:checkout (check out code)

```groovy
// Git
checkout([$class: 'GitSCM',
          branches: [[name: "${branchName}"]],
          doGenerateSubmoduleConfigurations: false,
          extensions: [],
          submoduleCfg: [],
          userRemoteConfigs: [[credentialsId: "${credentialsId}",
                               url: "${srcUrl}"]]])

// Svn
checkout([$class: 'SubversionSCM',
          additionalCredentials: [],
          filterChangelog: false,
          ignoreDirPropChanges: false,
          locations: [[credentialsId: "${credentialsId}",
                       depthOption: 'infinity',
                       ignoreExternalsOption: true,
                       remote: "${svnUrl}"]],
          workspaceUpdater: [$class: 'CheckoutUpdater']])
```

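4:publishHTML (publish an HTML report; requires the HTML Publisher plugin)

```groovy
publishHTML([
    allowMissing: false,
    alwaysLinkToLastBuild: false,
    keepAll: true,
    reportDir: './report/',
    reportFiles: 'index.html',          // assumed file name; reportFiles is required
    reportName: 'InterfaceTestReport',
    reportTitles: 'HTML'])
```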

5:input (interactive)

```groovy
// extendedChoice comes from the Extended Choice Parameter plugin
def result = input message: 'Select xxxx',
                   ok: 'Submit',
                   parameters: [
                       extendedChoice(
                           description: 'xxxxx',
                           descriptionPropertyValue: '',
                           multiSelectDelimiter: ',',
                           name: 'failePositiveCases',
                           quoteValue: false,
                           saveJSONParameterToFile: false,
                           type: 'PT_CHECKBOX',
                           value: '1.2.3',
                           visibleItemCount: 99)]
println(result)
```

6:BuildUser (get the user who started the build) (requires the build user vars plugin)

```groovy
wrap([$class: 'BuildUser']) {
    echo "full name is $BUILD_USER"
    echo "user id is $BUILD_USER_ID"
    echo "user email is $BUILD_USER_EMAIL"
}
```

7:httpRequest (call a hook or API) (requires the HTTP Request plugin)

```groovy
def apiUrl = "http://xxxxx.com/api/hooks/java-service?msg=${msg}"
def result = httpRequest authentication: 'xxxxxx',
                         quiet: true,
                         contentType: 'APPLICATION_JSON',
                         url: "${apiUrl}"
```

4:Build Tool Integration

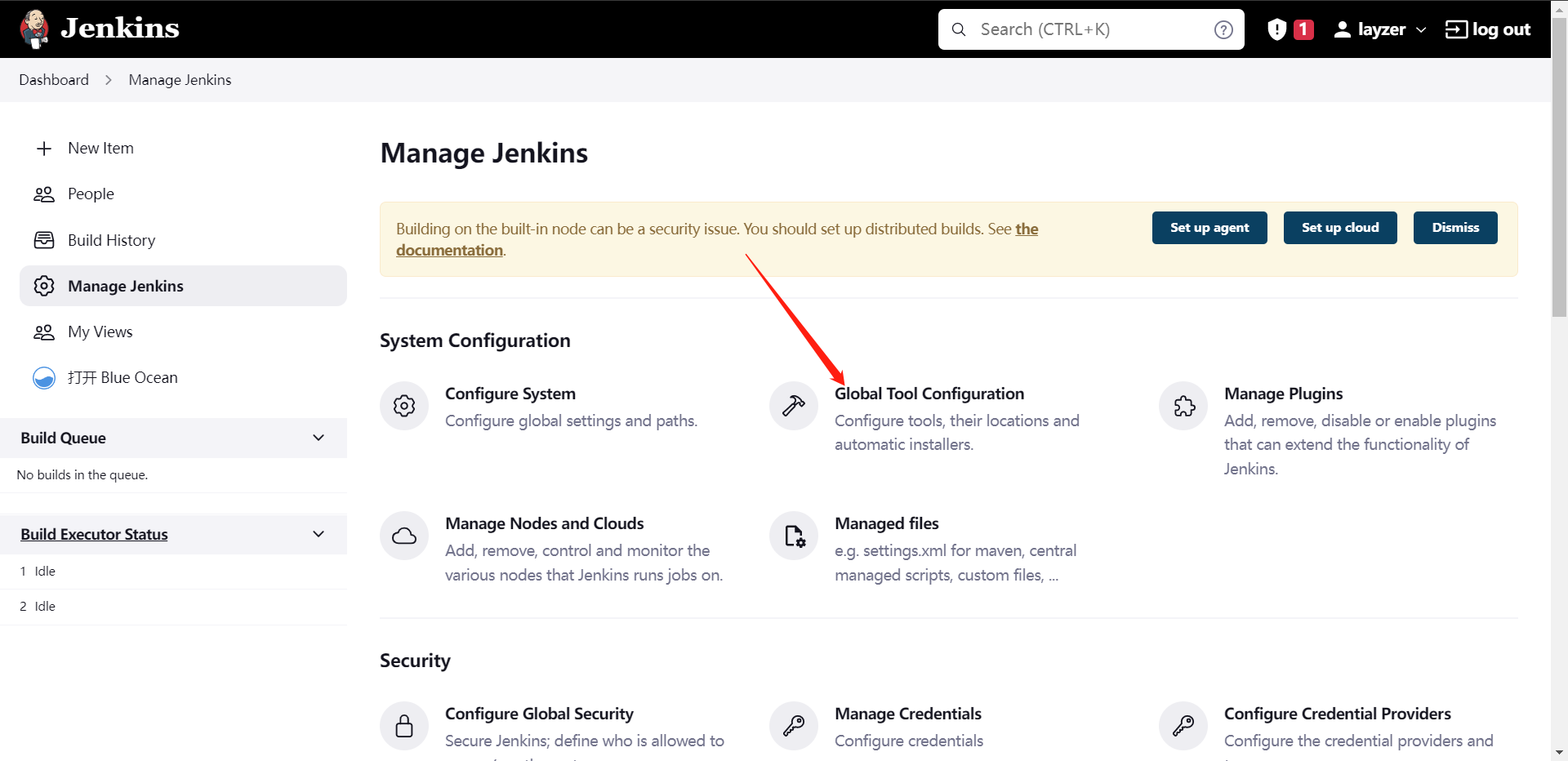

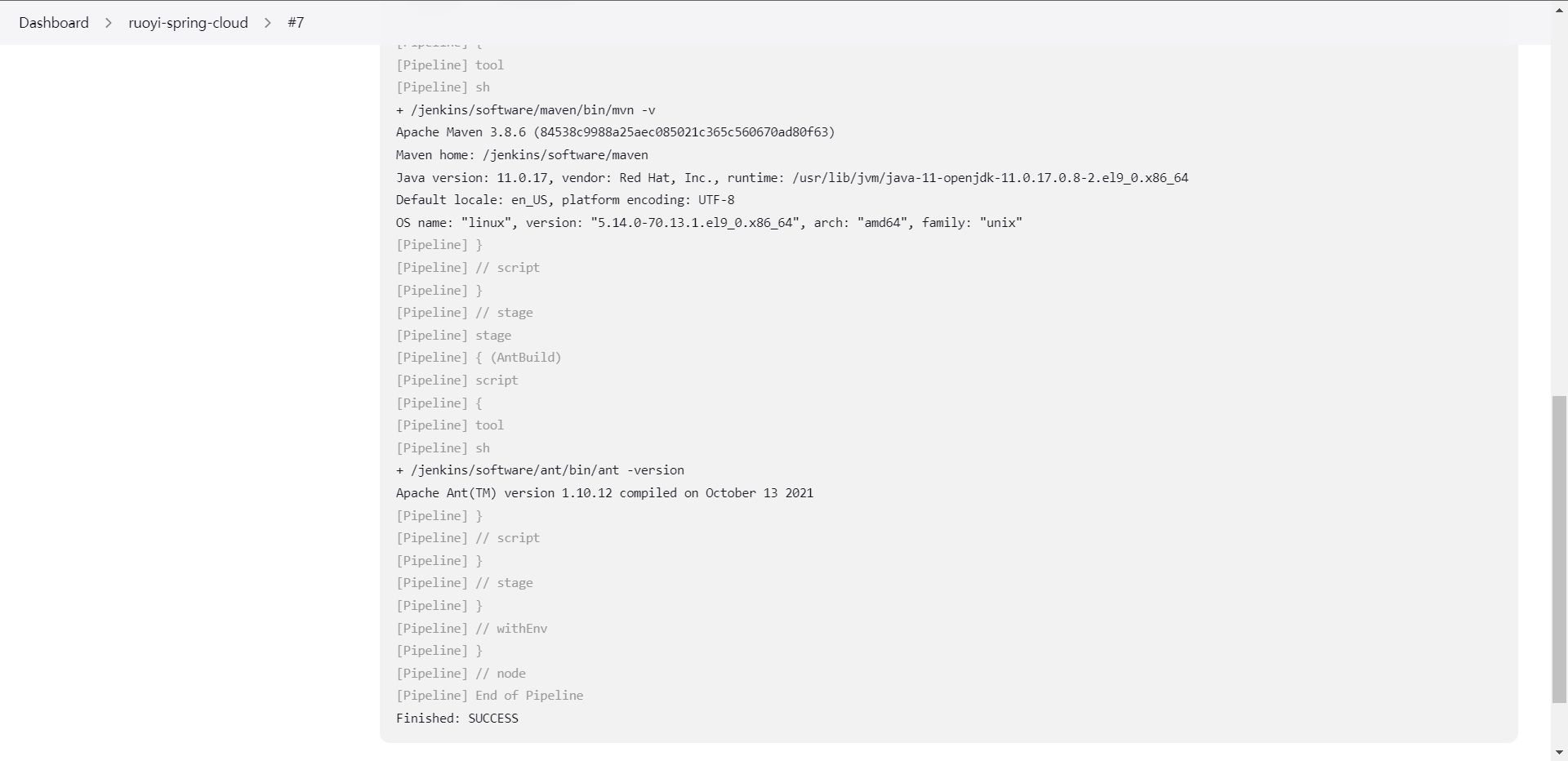

1:Maven

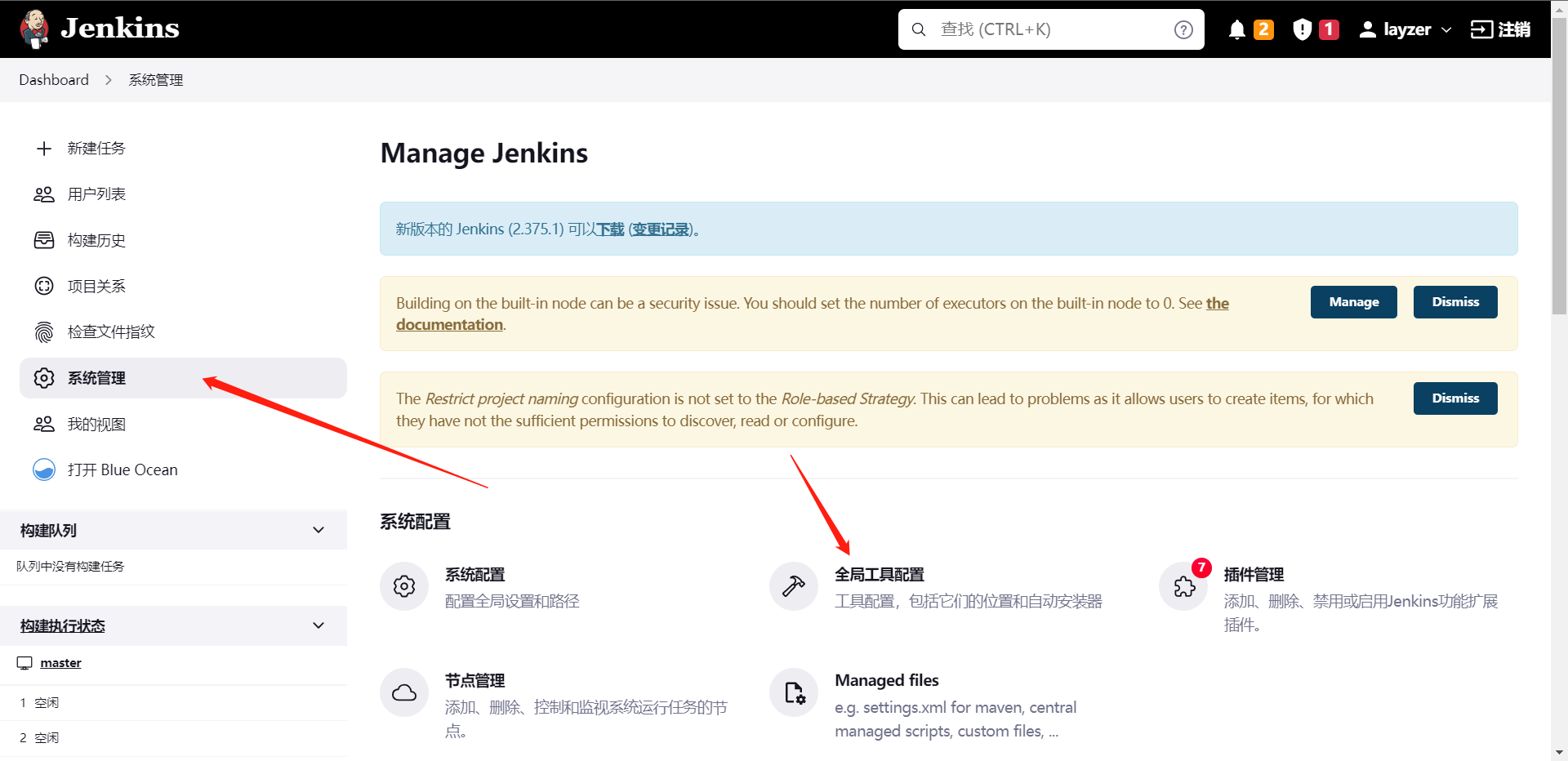

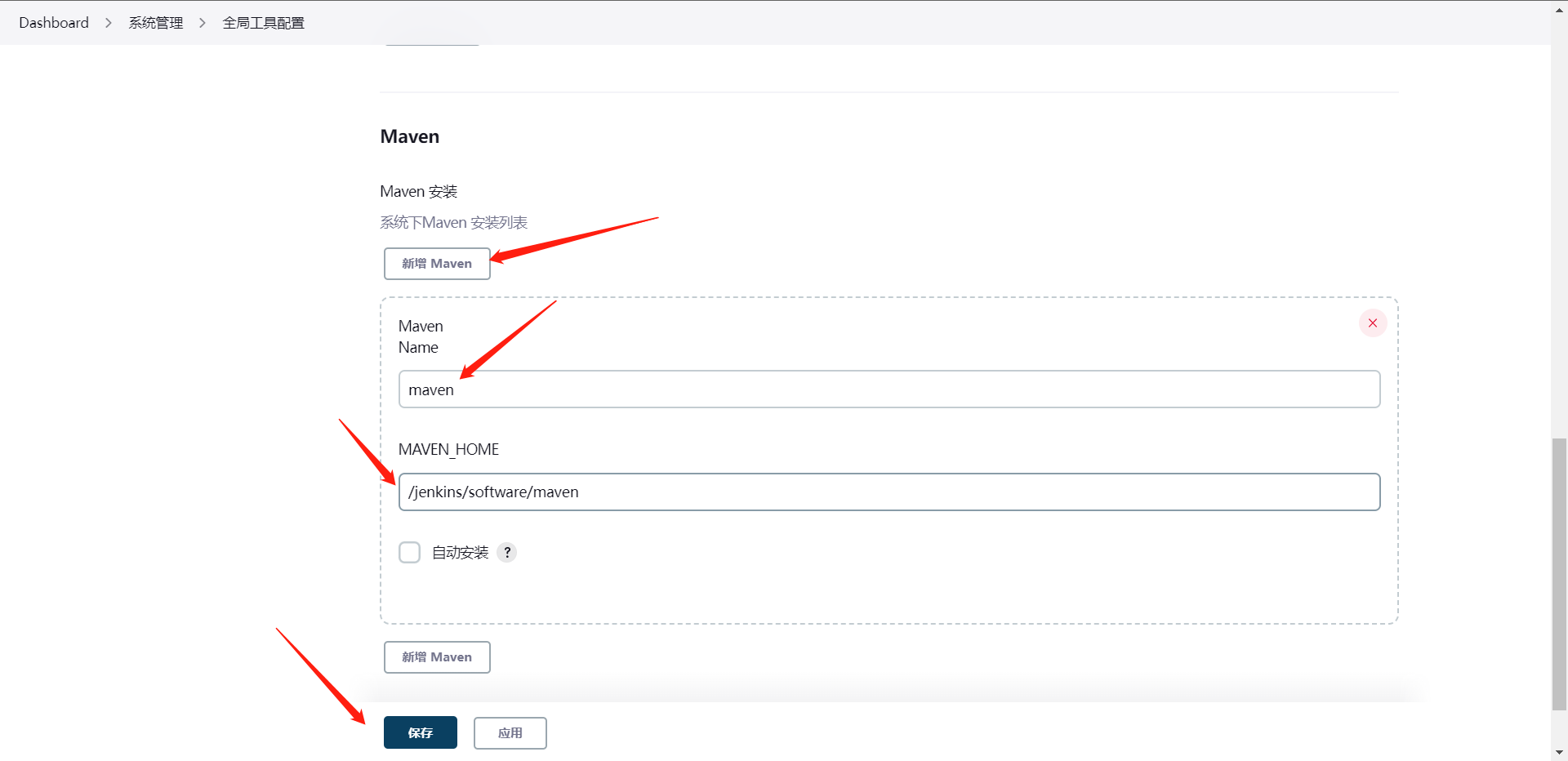

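Most of you know Maven, the build and packaging tool for Java projects. How do we integrate it into Jenkins? It is the first tool integrated in this chapter; we actually did this earlier, but let's go through it again.

1:Install a JDK/JRE (I installed Java 11 with yum)

2:Install Maven; download page: https://maven.apache.org/download.cgi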

[root@cdk-server ~]# mkdir -p /jenkins/software

[root@cdk-server ~]# cd /jenkins/software/

[root@cdk-server software]# wget https://dlcdn.apache.org/maven/maven-3/3.8.6/binaries/apache-maven-3.8.6-bin.tar.gz

[root@cdk-server software]# tar xf apache-maven-3.8.6-bin.tar.gz

[root@cdk-server software]# mv apache-maven-3.8.6 maven

[root@cdk-server maven]# cat /etc/profile | tail -n 2

export MAVEN_HOME=/jenkins/software/maven

export PATH=$PATH:$MAVEN_HOME/bin

[root@cdk-server maven]# source /etc/profile

[root@cdk-server maven]# mvn --version

Apache Maven 3.8.6 (84538c9988a25aec085021c365c560670ad80f63)

Maven home: /jenkins/software/maven

Java version: 11.0.17, vendor: Red Hat, Inc., runtime: /usr/lib/jvm/java-11-openjdk-11.0.17.0.8-2.el9_0.x86_64

Default locale: en_US, platform encoding: UTF-8

OS name: "linux", version: "5.14.0-70.13.1.el9_0.x86_64", arch: "amd64", family: "unix"

# With this done, we can configure the Maven location in the UI (Global Tool Configuration)

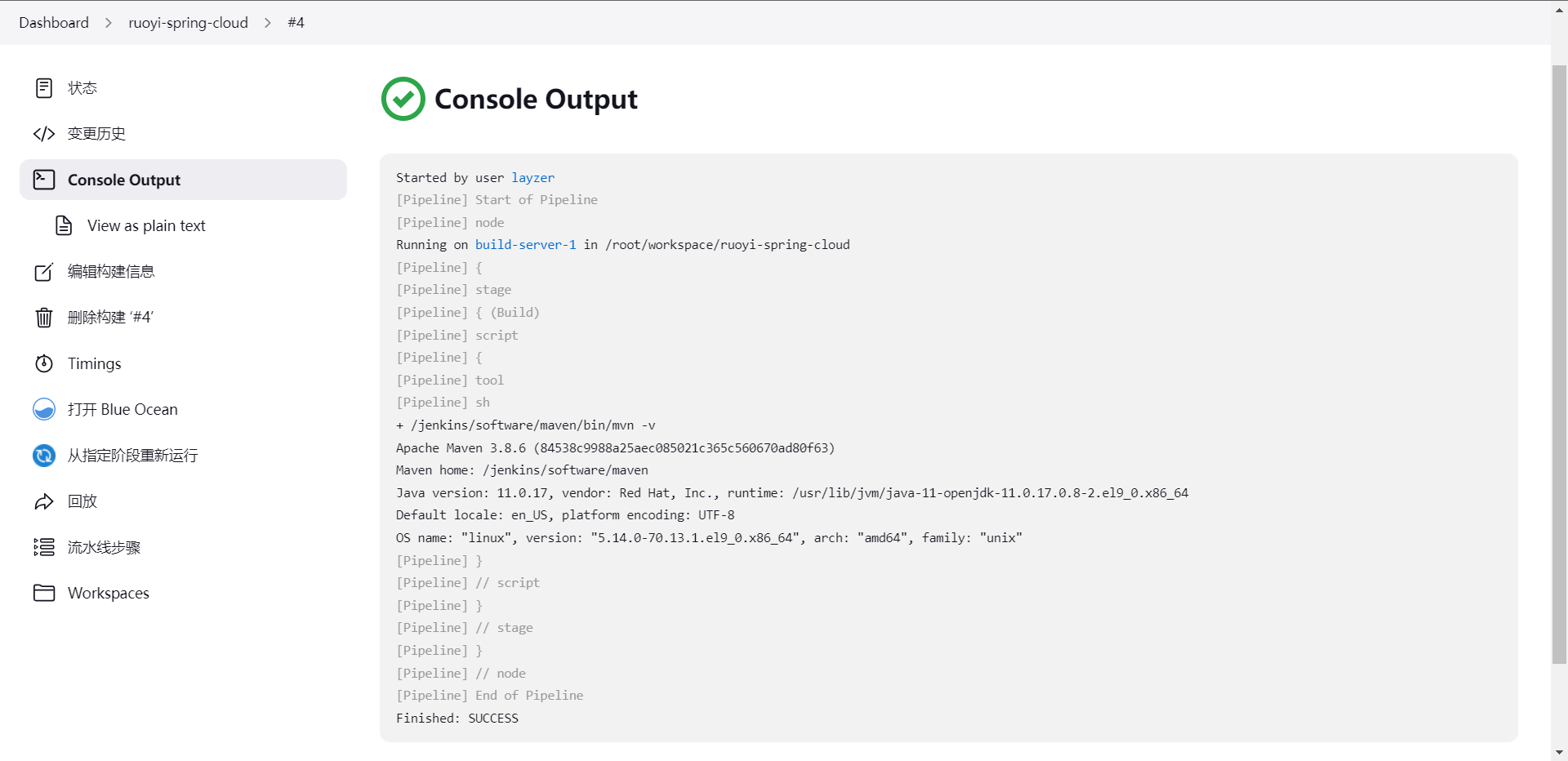

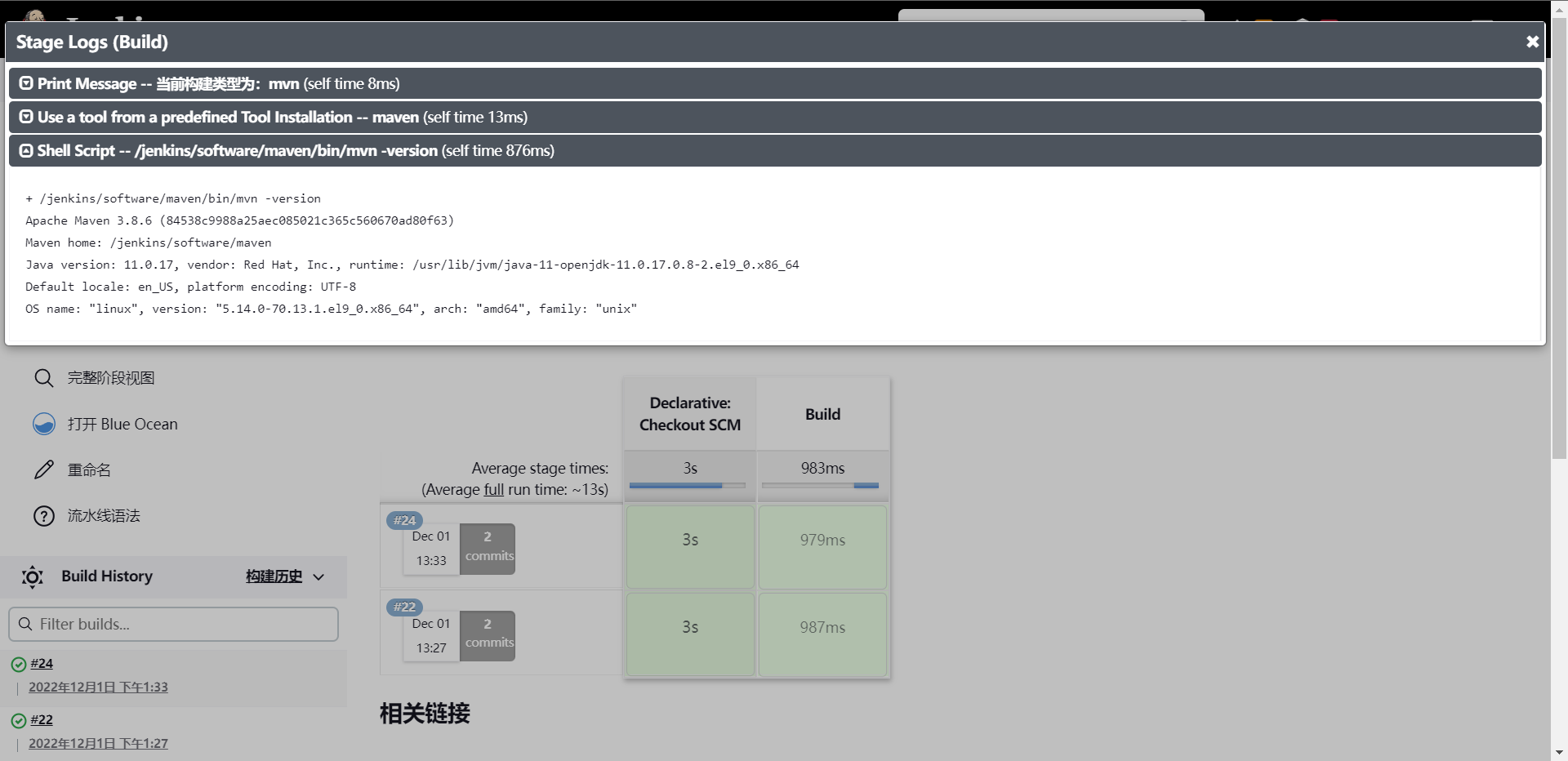

This is just one approach; another is to put it into a shared library, which you can explore on your own. Now let's test whether Maven works:

```groovy
pipeline {
    agent {
        node {
            label "build-1"
        }
    }

    stages {
        stage("Build") {
            steps {
                script {
                    mavenhome = tool "maven"
                    sh "${mavenhome}/bin/mvn -v"
                }
            }
        }
    }
}
```

While we're at it, let's also put this Jenkinsfile into a Git repository.

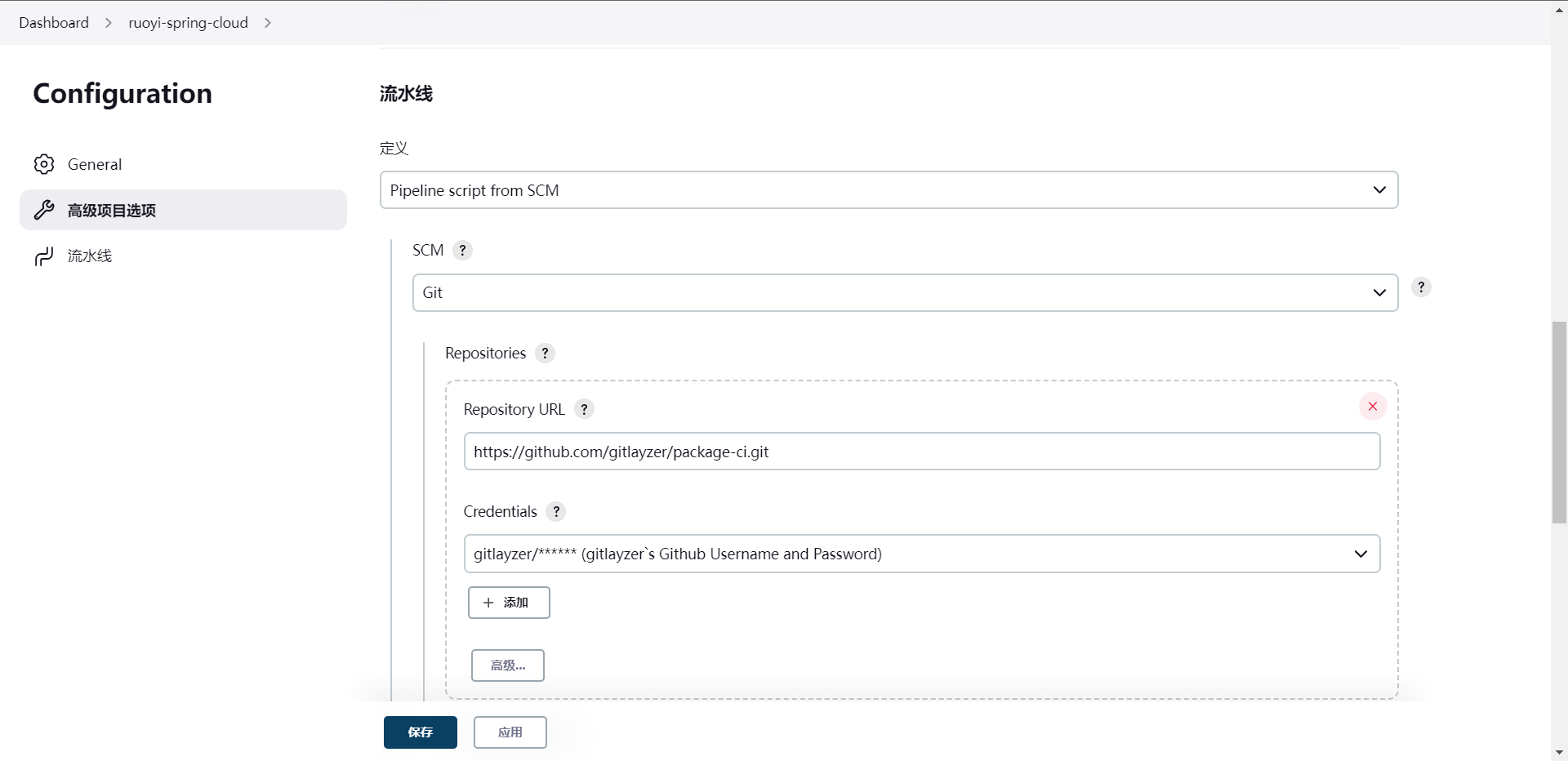

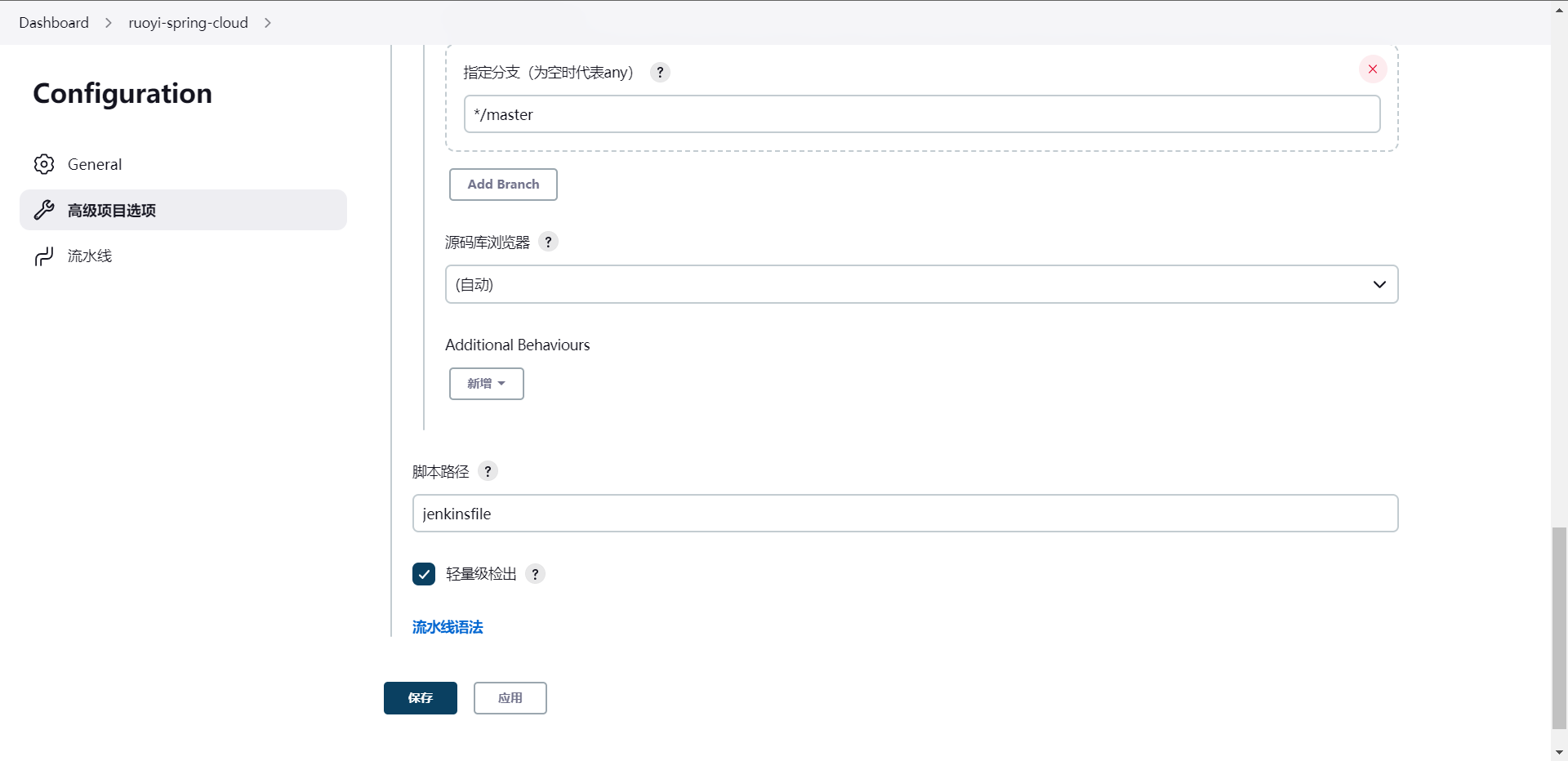

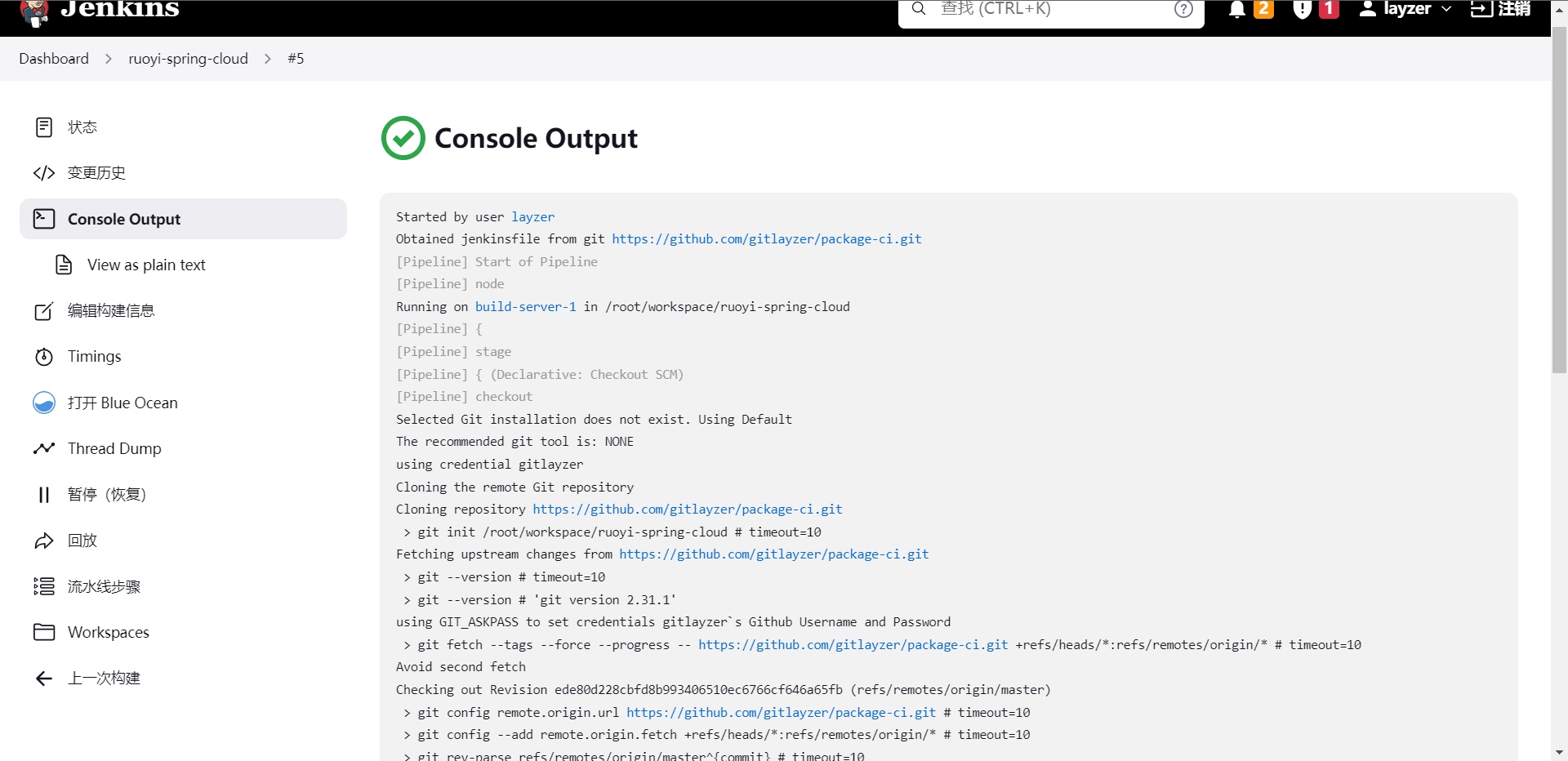

[root@cdk-server ~]# git clone https://github.com/gitlayzer/package-ci.git

[root@cdk-server ~]# cd package-ci/

[root@cdk-server package-ci]# cat jenkinsfile

pipeline {

agent {

node {

label "build-1"

}

}

stages{

stage("Build") {

steps {

script{

mavenhome = tool "maven"

sh "${mavenhome}/bin/mvn -v"

}

}

}

}

}

[root@cdk-server package-ci]# git add .

[root@cdk-server package-ci]# git commit -m "add jenkinsfile"

[master ede80d2] add jenkinsfile

1 file changed, 18 insertions(+)

create mode 100644 jenkinsfile

[root@cdk-server package-ci]# git push origin master

Username for 'https://github.com': gitlayzer

Password for 'https://gitlayzer@github.com':

Enumerating objects: 4, done.

Counting objects: 100% (4/4), done.

Delta compression using up to 2 threads

Compressing objects: 100% (3/3), done.

Writing objects: 100% (3/3), 446 bytes | 446.00 KiB/s, done.

Total 3 (delta 0), reused 0 (delta 0), pack-reused 0

To https://github.com/gitlayzer/package-ci.git

5d988a9..ede80d2 master -> master

Of course, rather than hard-coding the build command, we can pass it in as a parameter; here is a quick version:

```groovy
// buildShell comes from the job's string parameter of the same name
String buildShell = "${env.buildShell}"

pipeline {
    agent {
        node {
            label "build-1"
        }
    }

    stages {
        stage("Build") {
            steps {
                script {
                    mavenhome = tool "maven"
                    sh "${mavenhome}/bin/mvn ${buildShell}"
                }
            }
        }
    }
}
```

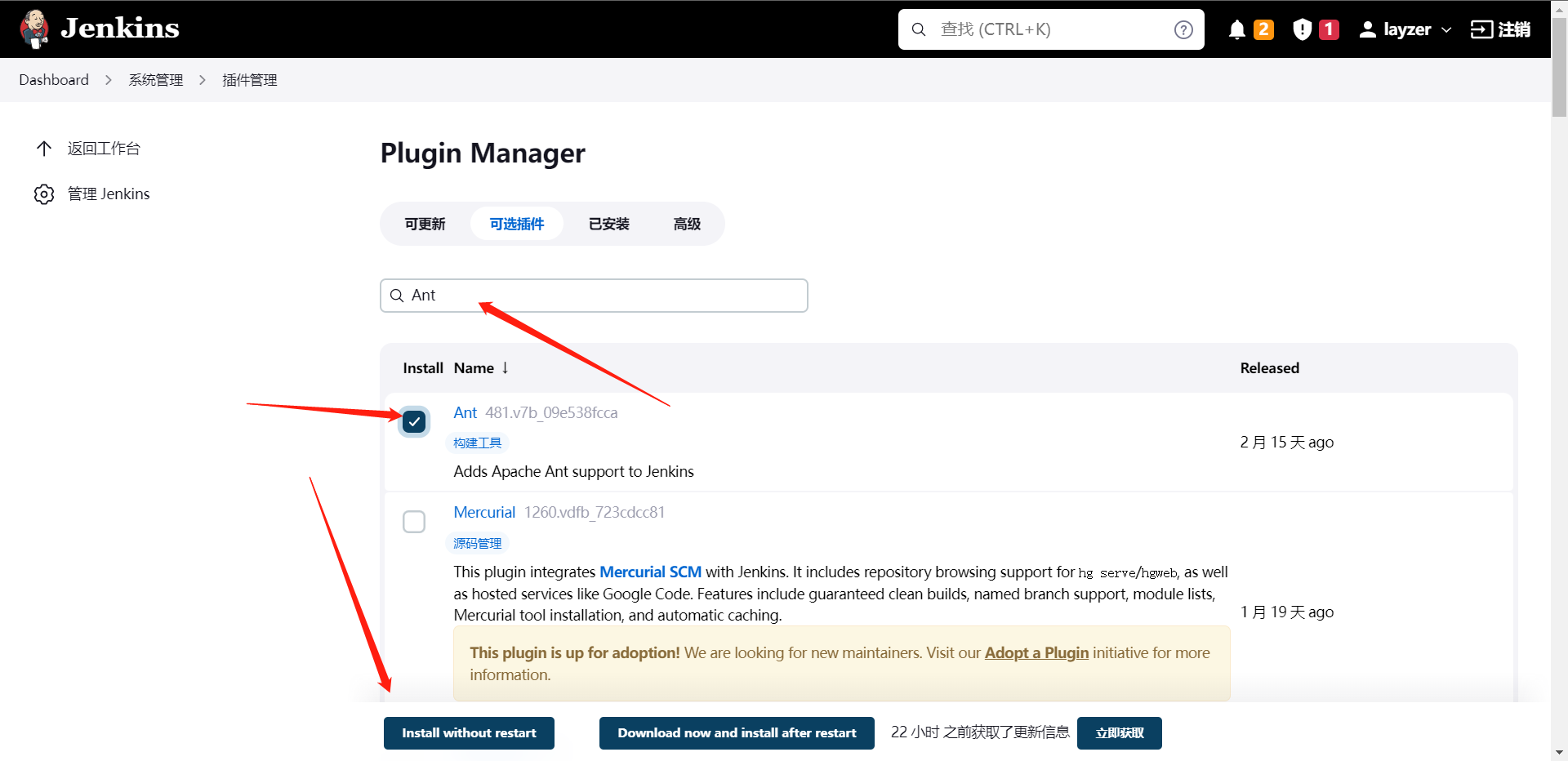

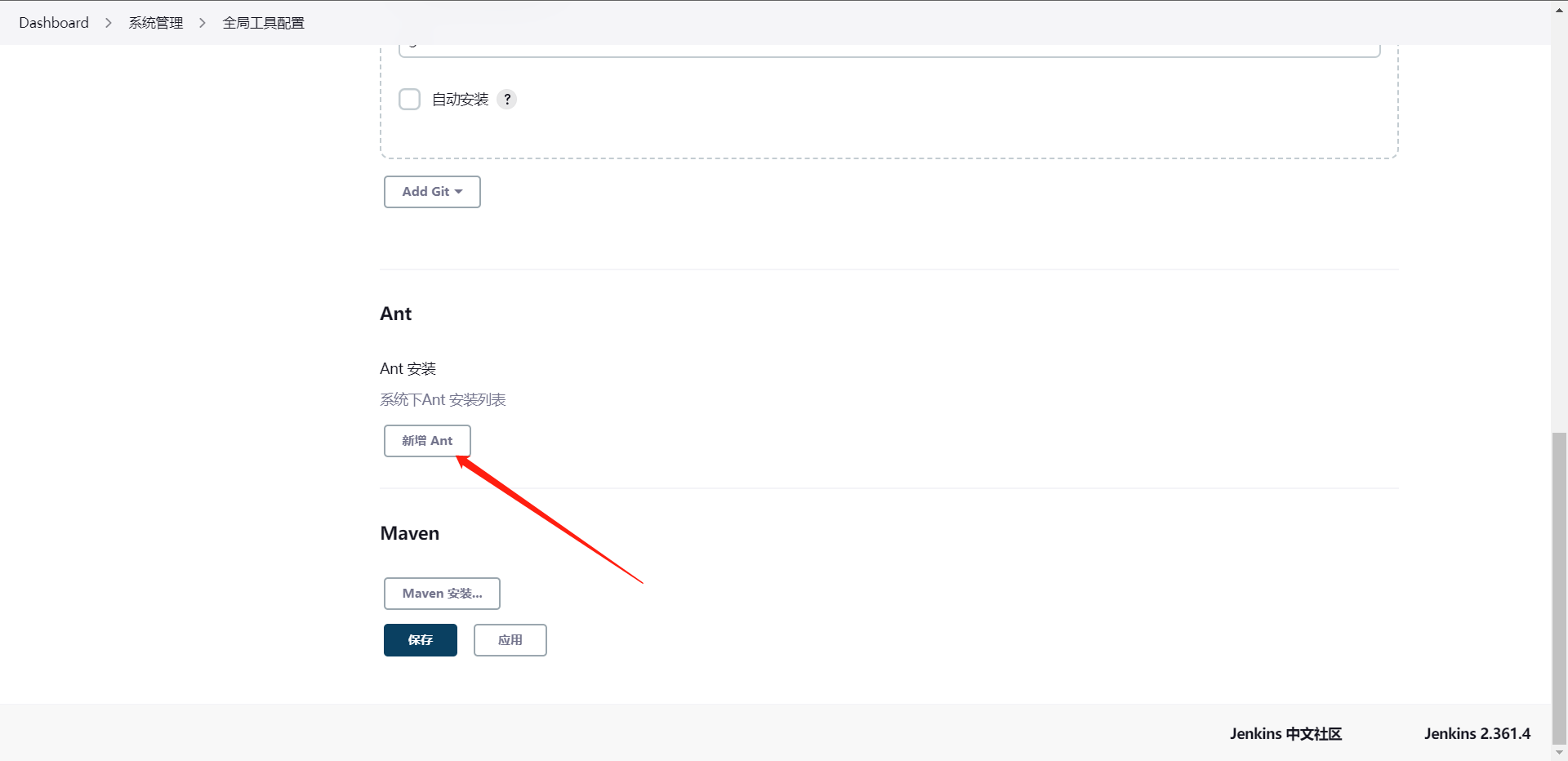

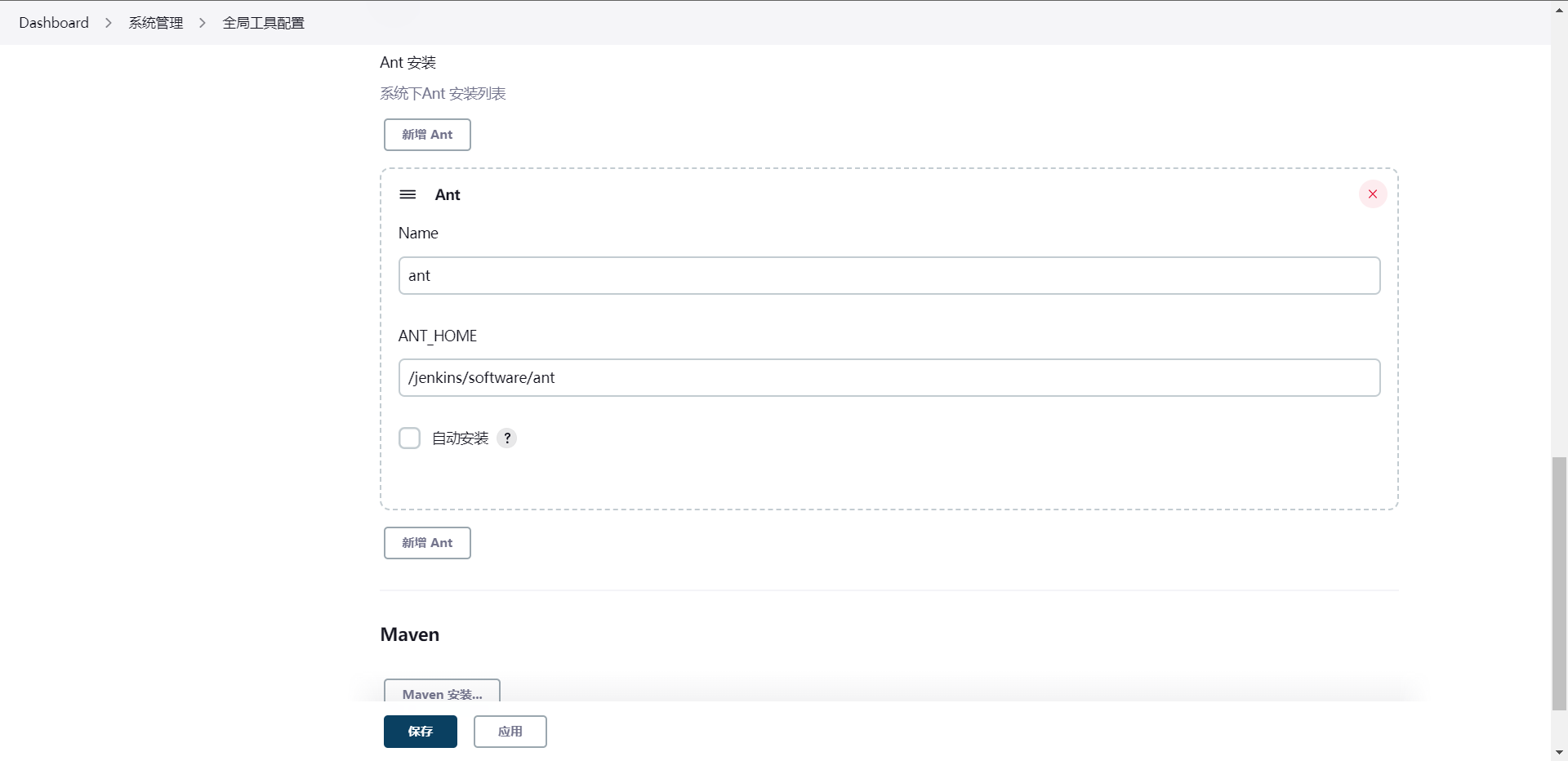

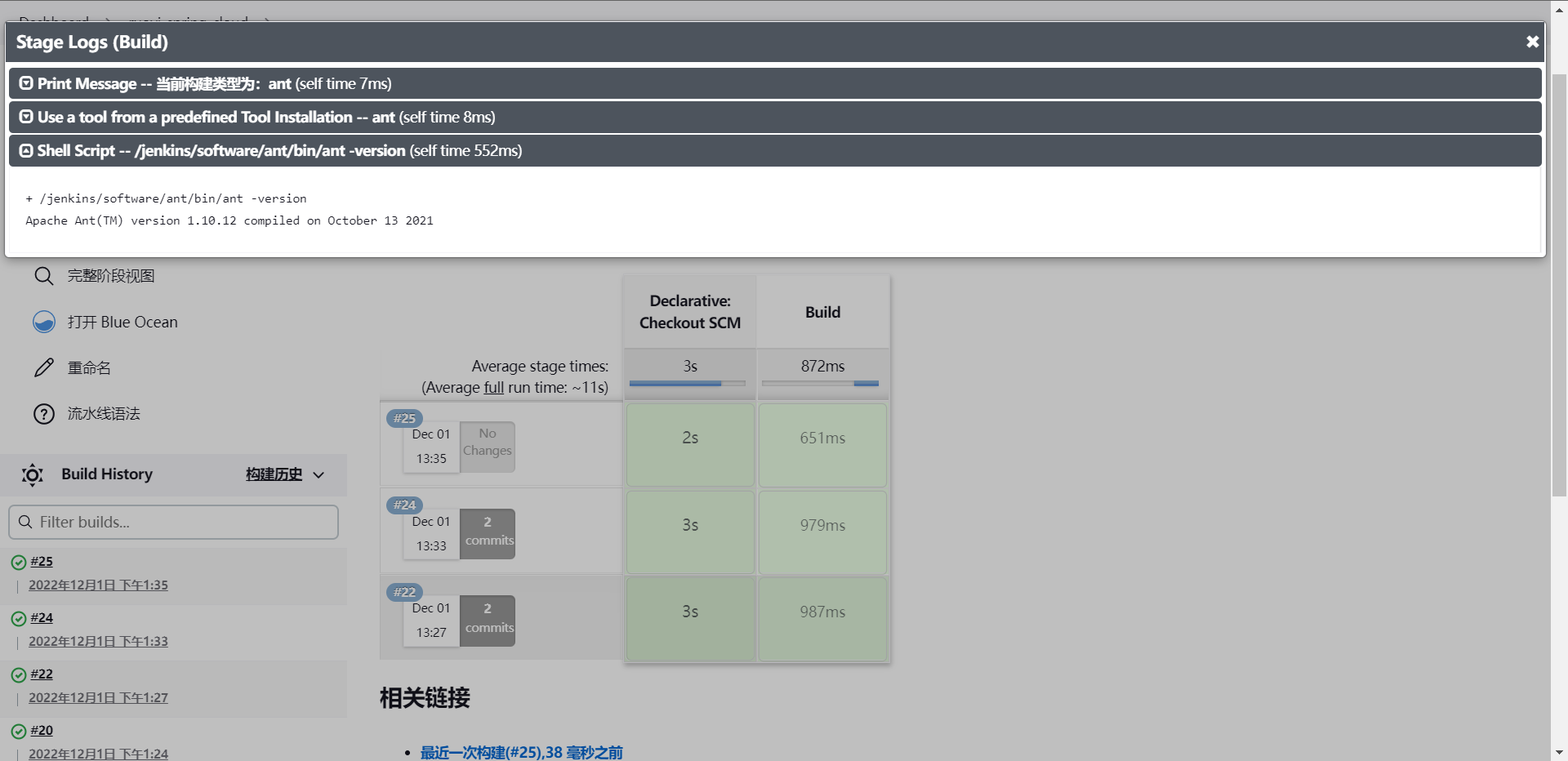
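If you prefer to keep the parameter definition in the Jenkinsfile rather than in the job's web configuration, a sketch along these lines should work; the default value `-v` is an assumption. Keep in mind that Jenkins only learns about parameters declared this way after the Jenkinsfile has run once.

```groovy
pipeline {
    agent { label "build-1" }
    parameters {
        // the default value '-v' is only an example
        string(name: 'buildShell', defaultValue: '-v', description: 'Arguments passed to mvn')
    }
    stages {
        stage("Build") {
            steps {
                script {
                    def mavenhome = tool "maven"
                    sh "${mavenhome}/bin/mvn ${params.buildShell}"
                }
            }
        }
    }
}
```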

2:Ant

1:Download Ant: https://dlcdn.apache.org//ant/binaries/apache-ant-1.10.12-bin.tar.gz

[root@cdk-server software]# wget https://dlcdn.apache.org//ant/binaries/apache-ant-1.10.12-bin.tar.gz

[root@cdk-server software]# tar xf apache-ant-1.10.12-bin.tar.gz

[root@cdk-server software]# mv apache-ant-1.10.12 ant

[root@cdk-server software]# cat /etc/profile | tail -n 3

export MAVEN_HOME=/jenkins/software/maven

export ANT_HOME=/jenkins/software/ant

export PATH=$PATH:$MAVEN_HOME/bin:$ANT_HOME/bin

[root@cdk-server software]# source /etc/profile

[root@cdk-server software]# ant -version

Apache Ant(TM) version 1.10.12 compiled on October 13 2021

```groovy
String buildShell = "${env.buildShell}"

pipeline {
    agent {
        node {
            label "build-1"
        }
    }

    stages {
        stage("MavenBuild") {
            steps {
                script {
                    mavenhome = tool "maven"
                    sh "${mavenhome}/bin/mvn ${buildShell}"
                }
            }
        }

        stage("AntBuild") {
            steps {
                script {
                    anthome = tool "ant"
                    sh "${anthome}/bin/ant ${buildShell}"
                }
            }
        }
    }
}
```

It works basically the same way as Maven.

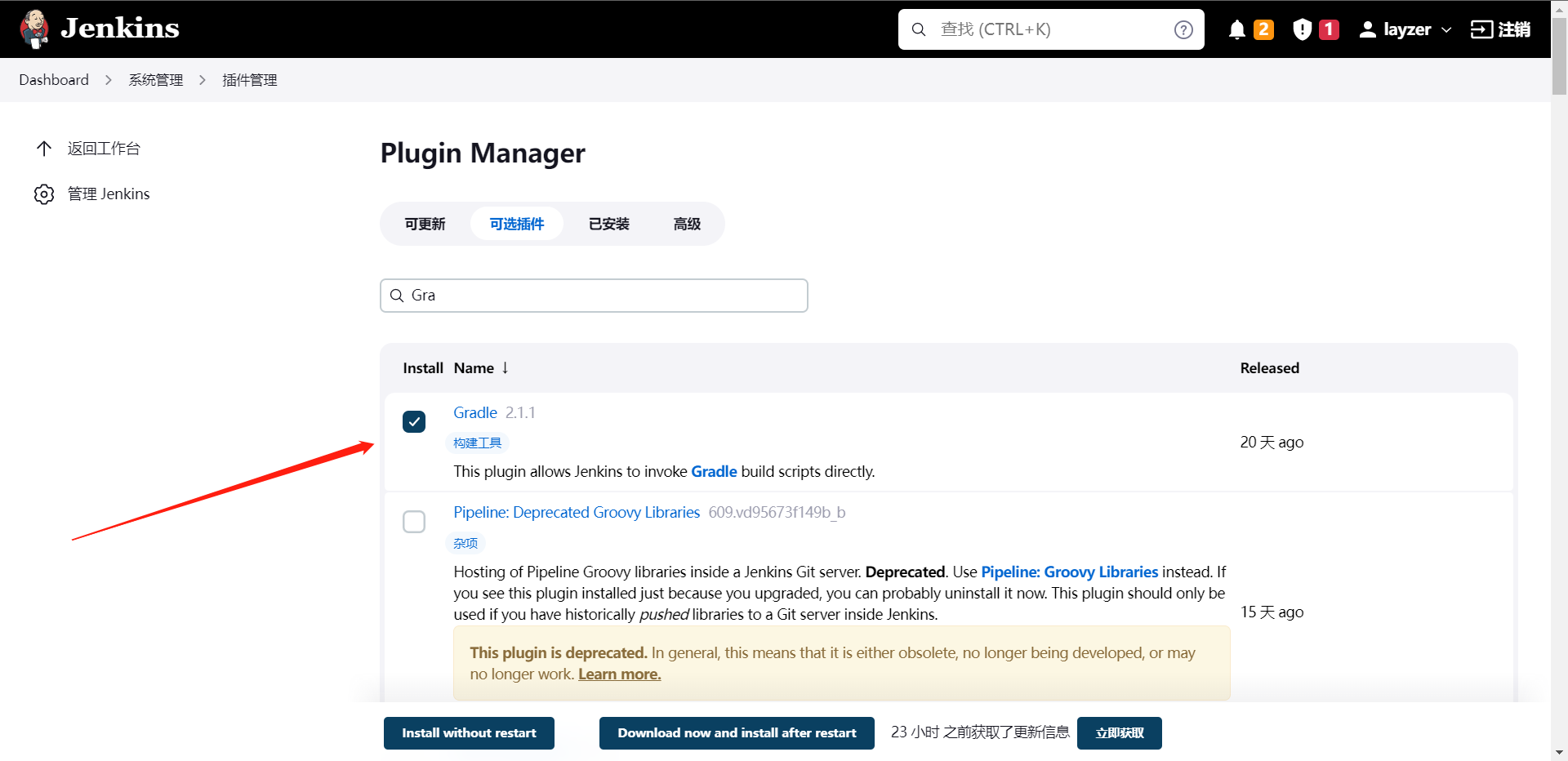

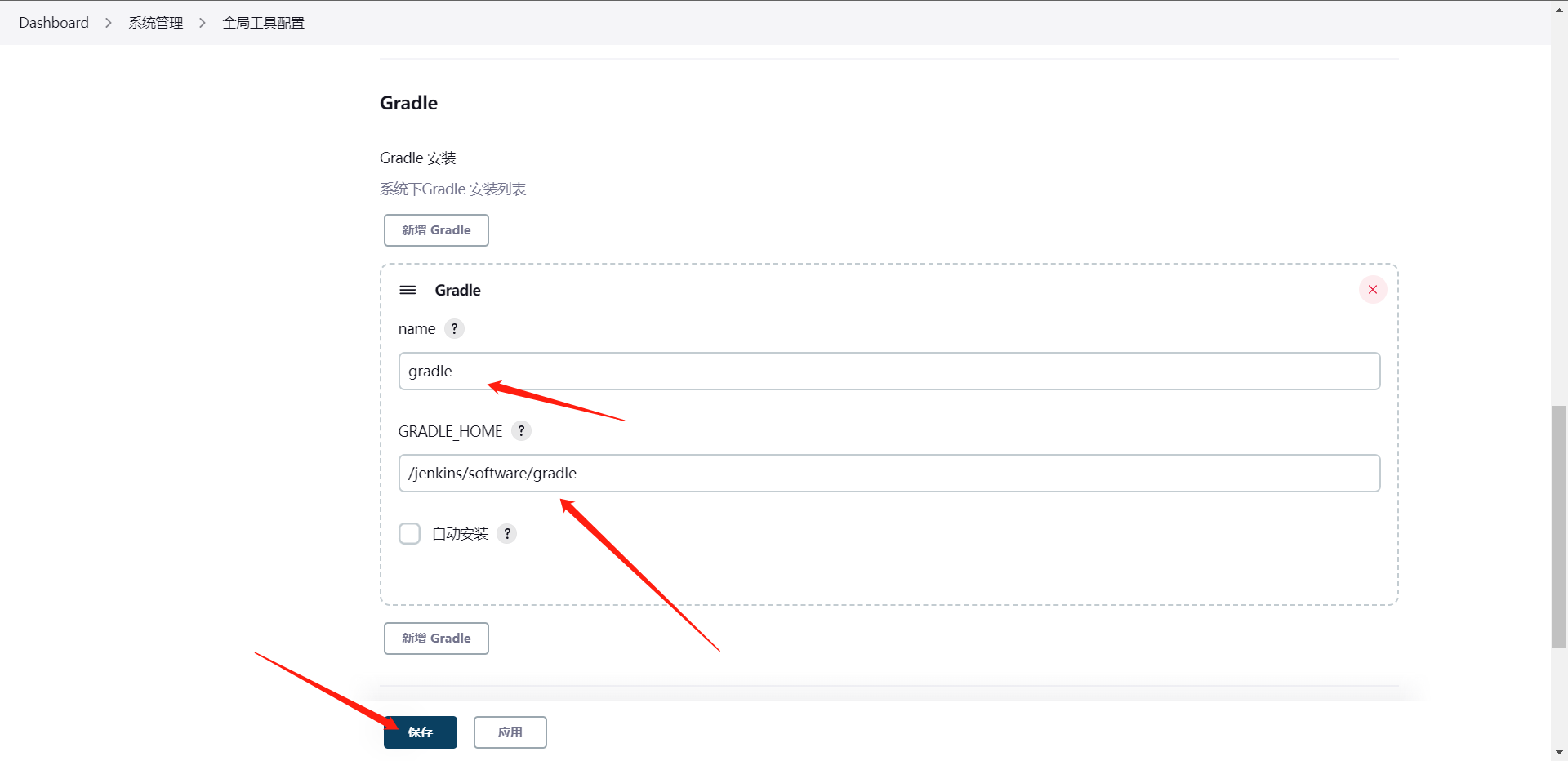

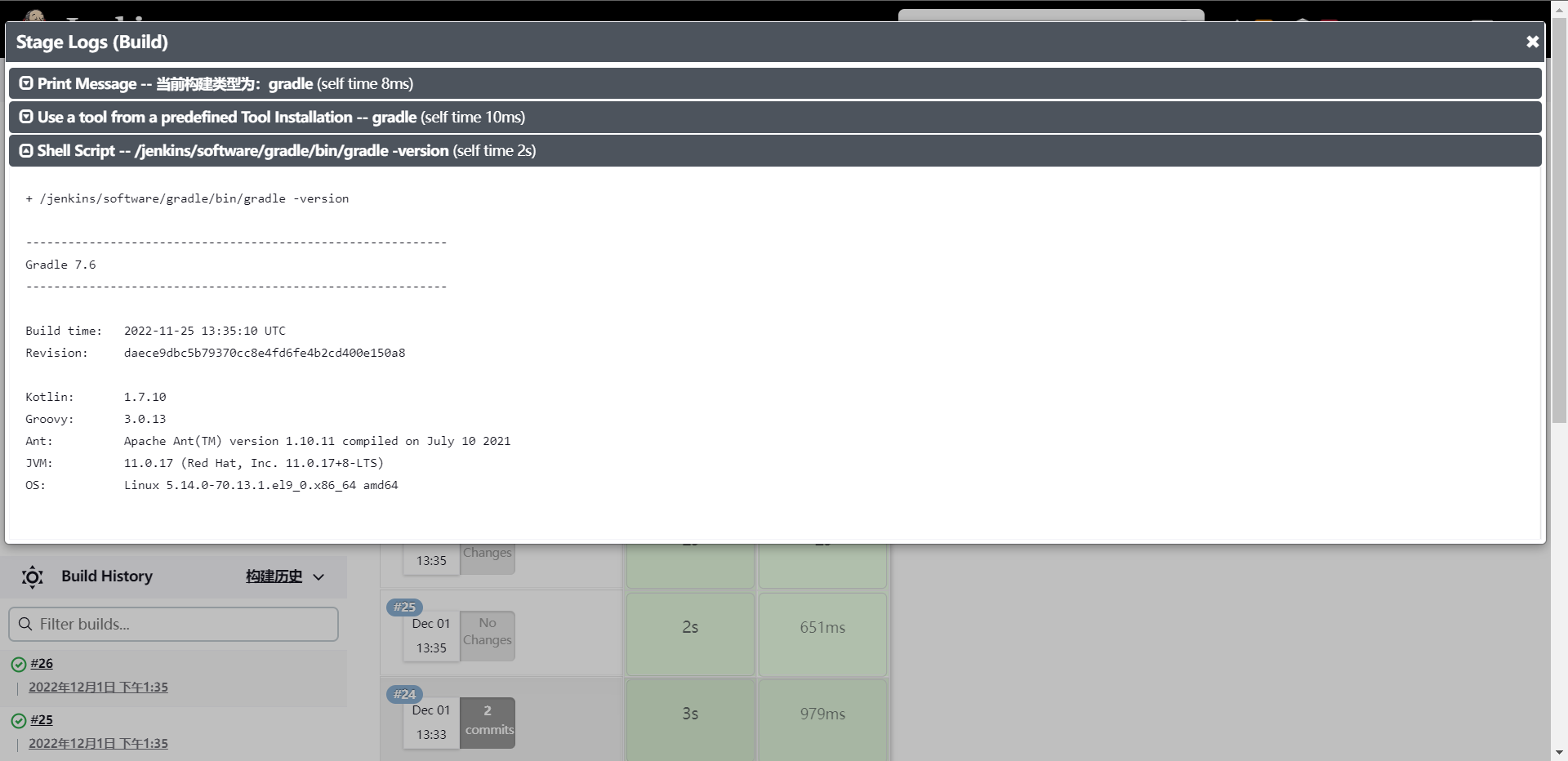

3:Gradle

1:Download Gradle: https://gradle.org/next-steps/?version=7.6&format=bin

[root@cdk-server software]# unzip gradle-7.6-bin.zip

[root@cdk-server software]# mv gradle-7.6 gradle

[root@cdk-server software]# cat /etc/profile | tail -n 4

export MAVEN_HOME=/jenkins/software/maven

export ANT_HOME=/jenkins/software/ant

export GRADLE_HOME=/jenkins/software/gradle

export PATH=$PATH:$MAVEN_HOME/bin:$ANT_HOME/bin:$GRADLE_HOME/bin

[root@cdk-server software]# source /etc/profile

```groovy
String buildShell = "${env.buildShell}"

pipeline {
    agent {
        node {
            label "build-1"
        }
    }

    stages {
        stage("MavenBuild") {
            steps {
                script {
                    mavenhome = tool "maven"
                    sh "${mavenhome}/bin/mvn ${buildShell}"
                }
            }
        }

        stage("AntBuild") {
            steps {
                script {
                    anthome = tool "ant"
                    sh "${anthome}/bin/ant ${buildShell}"
                }
            }
        }

        stage("GradleBuild") {
            steps {
                script {
                    gradlehome = tool "gradle"
                    sh "${gradlehome}/bin/gradle ${buildShell}"
                }
            }
        }
    }
}
```

[root@cdk-server package-ci]# git add .

[root@cdk-server package-ci]# git commit -m "Add Gradle Code"

[master 6557e7a] Add Gradle Code

1 file changed, 10 insertions(+), 1 deletion(-)

[root@cdk-server package-ci]# git push origin master

Username for 'https://github.com': gitlayzer

Password for 'https://gitlayzer@github.com':

Enumerating objects: 5, done.

Counting objects: 100% (5/5), done.

Delta compression using up to 2 threads

Compressing objects: 100% (3/3), done.

Writing objects: 100% (3/3), 337 bytes | 337.00 KiB/s, done.

Total 3 (delta 2), reused 0 (delta 0), pack-reused 0

remote: Resolving deltas: 100% (2/2), completed with 2 local objects.

To https://github.com/gitlayzer/package-ci.git

f39b951..6557e7a master -> master

One small change here: all three tools accept -version, so the parameter choices were adjusted accordingly.

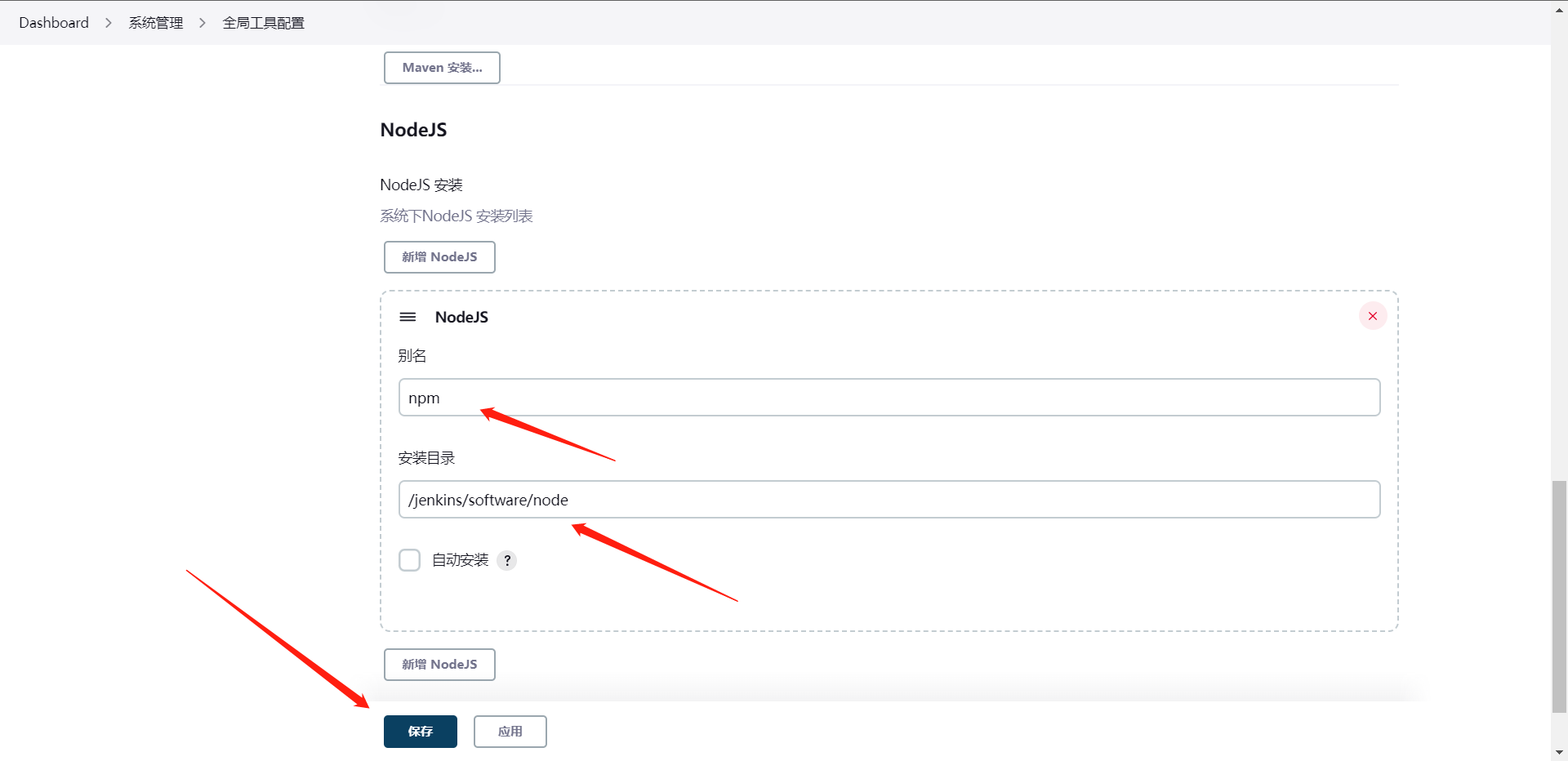

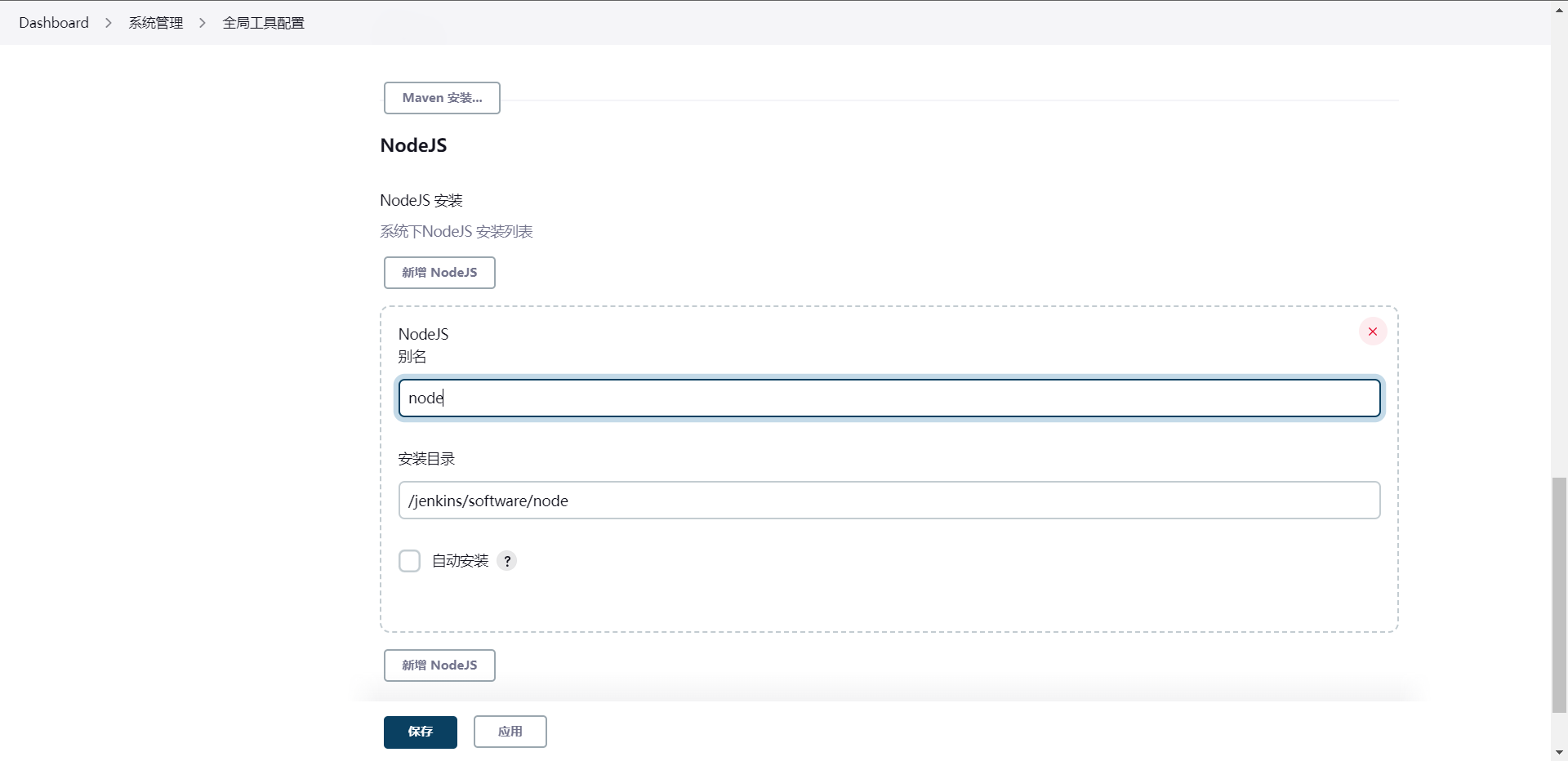

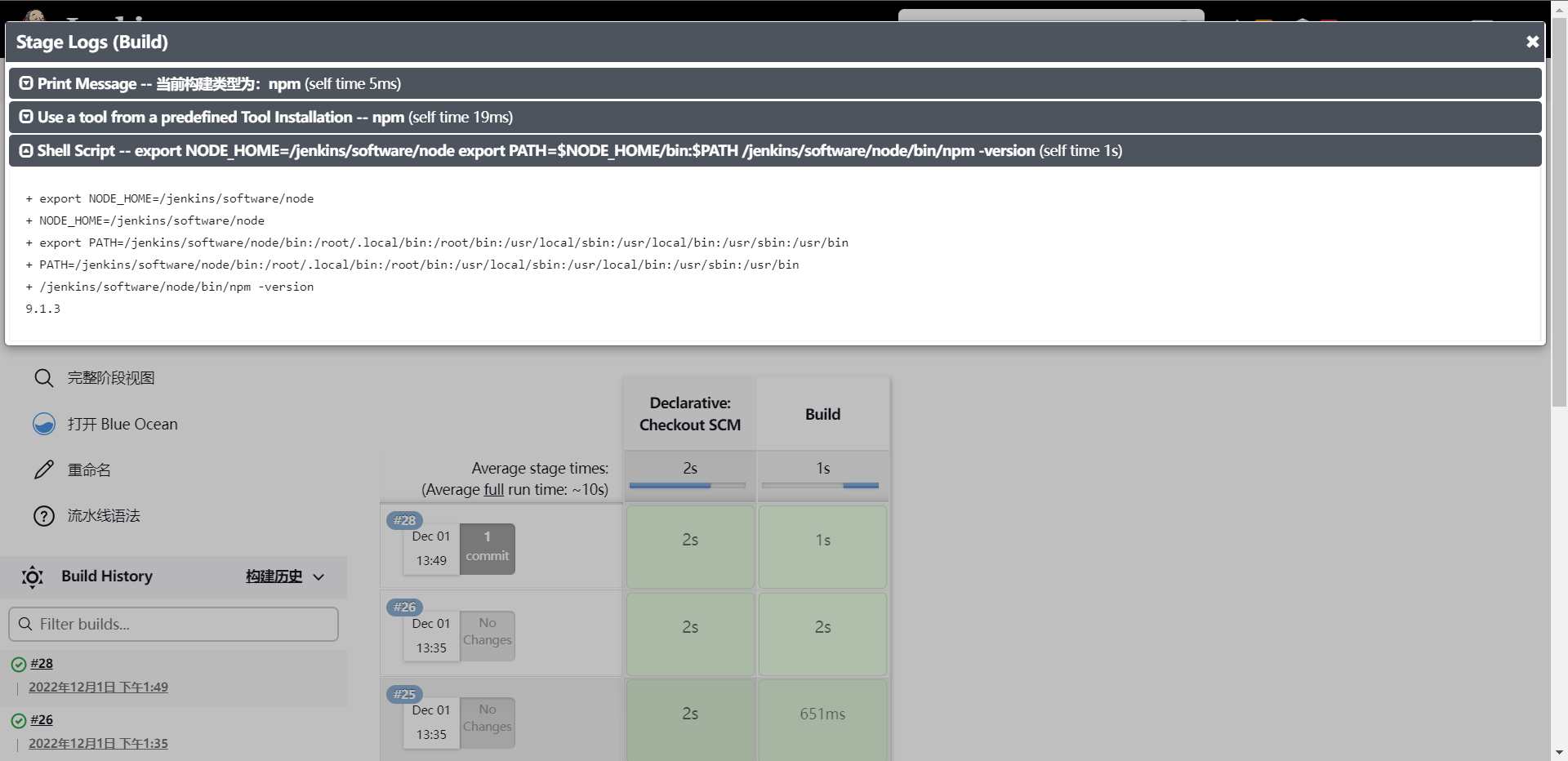

4:Npm

1: Download Node: https://nodejs.org/dist/v18.12.1/node-v18.12.1-linux-x64.tar.xz

[root@cdk-server software]# wget https://nodejs.org/dist/v18.12.1/node-v18.12.1-linux-x64.tar.xz

[root@cdk-server software]# tar xf node-v18.12.1-linux-x64.tar.xz

[root@cdk-server software]# mv node-v18.12.1-linux-x64 node

[root@cdk-server software]# cat /etc/profile | tail -n 5

export MAVEN_HOME=/jenkins/software/maven

export ANT_HOME=/jenkins/software/ant

export GRADLE_HOME=/jenkins/software/gradle

export NODE_HOME=/jenkins/software/node

export PATH=$PATH:$MAVEN_HOME/bin:$ANT_HOME/bin:$GRADLE_HOME/bin:$NODE_HOME/bin

[root@cdk-server software]# source /etc/profile

String buildShell = "${env.buildShell}"

pipeline {

agent {

node {

label "build-1"

}

}

stages{

stage("MavenBuild") {

steps {

script{

mavenhome = tool "maven"

sh "${mavenhome}/bin/mvn ${buildShell}"

}

}

}

stage("AntBuild") {

steps {

script{

anthome = tool "ant"

sh "${anthome}/bin/ant ${buildShell}"

}

}

}

stage("GradleBuild") {

steps {

script{

gradlehome = tool "gradle"

sh "${gradlehome}/bin/gradle ${buildShell}"

}

}

}

stage("NpnBuild") {

steps {

script{

npmehome = tool "node"

sh "${npmehome}/bin/node -v"

}

}

}

}

}

[root@cdk-server package-ci]# git add .

[root@cdk-server package-ci]# git commit -m "Add Npm Code"

[master 2a0f42a] Add Npm Code

1 file changed, 9 insertions(+)

[root@cdk-server package-ci]# git push origin master

Username for 'https://github.com': gitlayzer

Password for 'https://gitlayzer@github.com':

Enumerating objects: 5, done.

Counting objects: 100% (5/5), done.

Delta compression using up to 2 threads

Compressing objects: 100% (3/3), done.

Writing objects: 100% (3/3), 331 bytes | 331.00 KiB/s, done.

Total 3 (delta 2), reused 0 (delta 0), pack-reused 0

remote: Resolving deltas: 100% (2/2), completed with 2 local objects.

To https://github.com/gitlayzer/package-ci.git

6557e7a..2a0f42a master -> master

Running the pipeline at this point may fail; installing npm globally fixes it:

[root@cdk-server ~]# npm install -g npm

5: Using a shared library to integrate the build tools

[root@cdk-server package-ci]# ls

jenkinsfile LICENSE README.md

[root@cdk-server package-ci]# mkdir -p src/org/library

[root@cdk-server package-ci]# touch src/org/library/build.groovy

package org.library

def Build(buildType, buildShell) {

def buildTools = ["mvn": "maven", "ant": "ant", "gradle": "gradle"]

println("当前构建类型为:${buildType}")

buildHome = tool buildTools[buildType]

sh "${buildHome}/bin/${buildType} ${buildShell}"

}

@Library("jenkinslibrary") _

def build = new org.library.build()

String buildShell = "${env.buildShell}"

String buildType = "${env.buildType}"

pipeline {

agent {

node {

label "build-1"

}

}

stages{

stage("Build") {

steps {

script{

build.Build(buildType, buildShell)

}

}

}

}

}

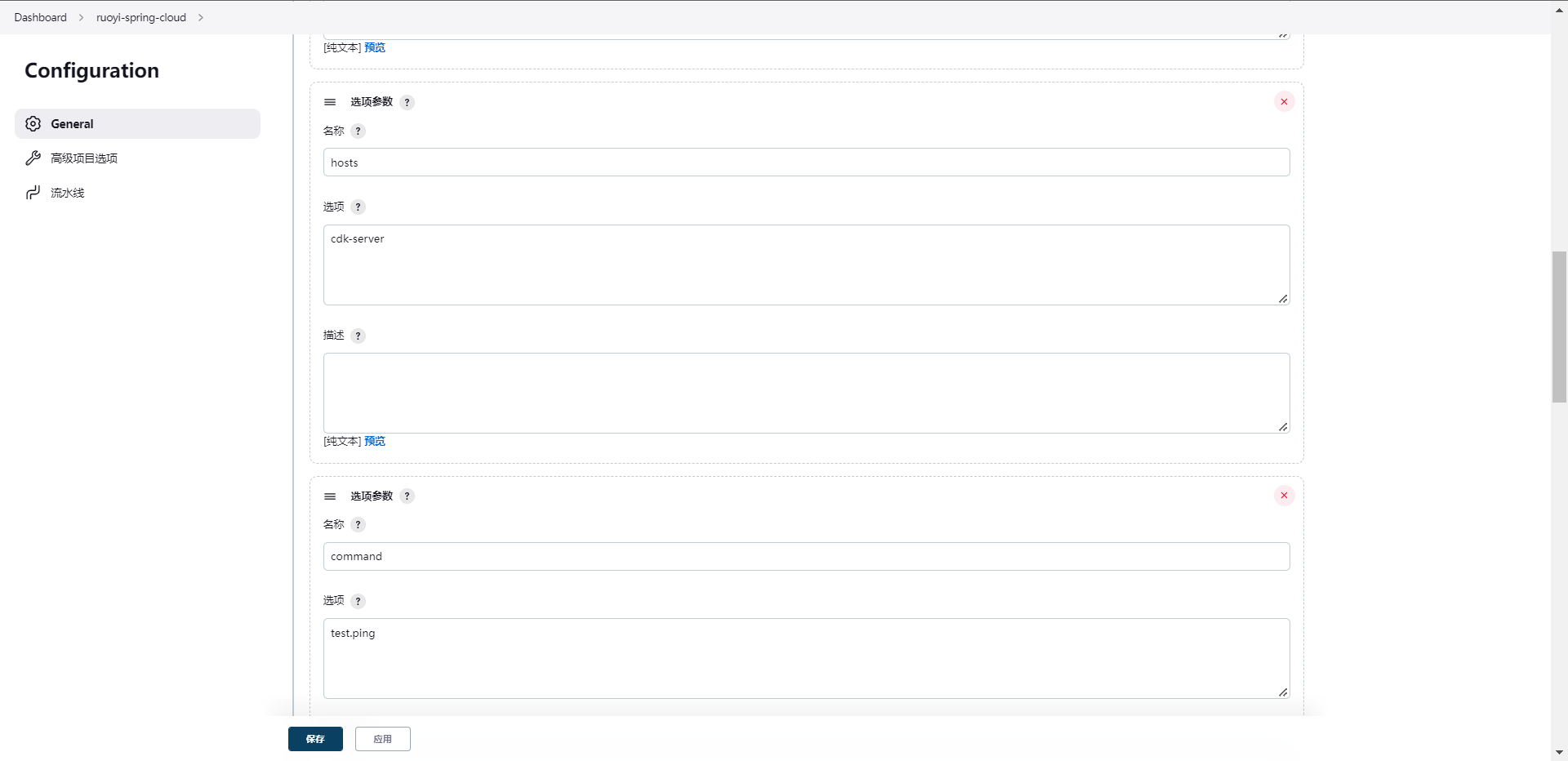

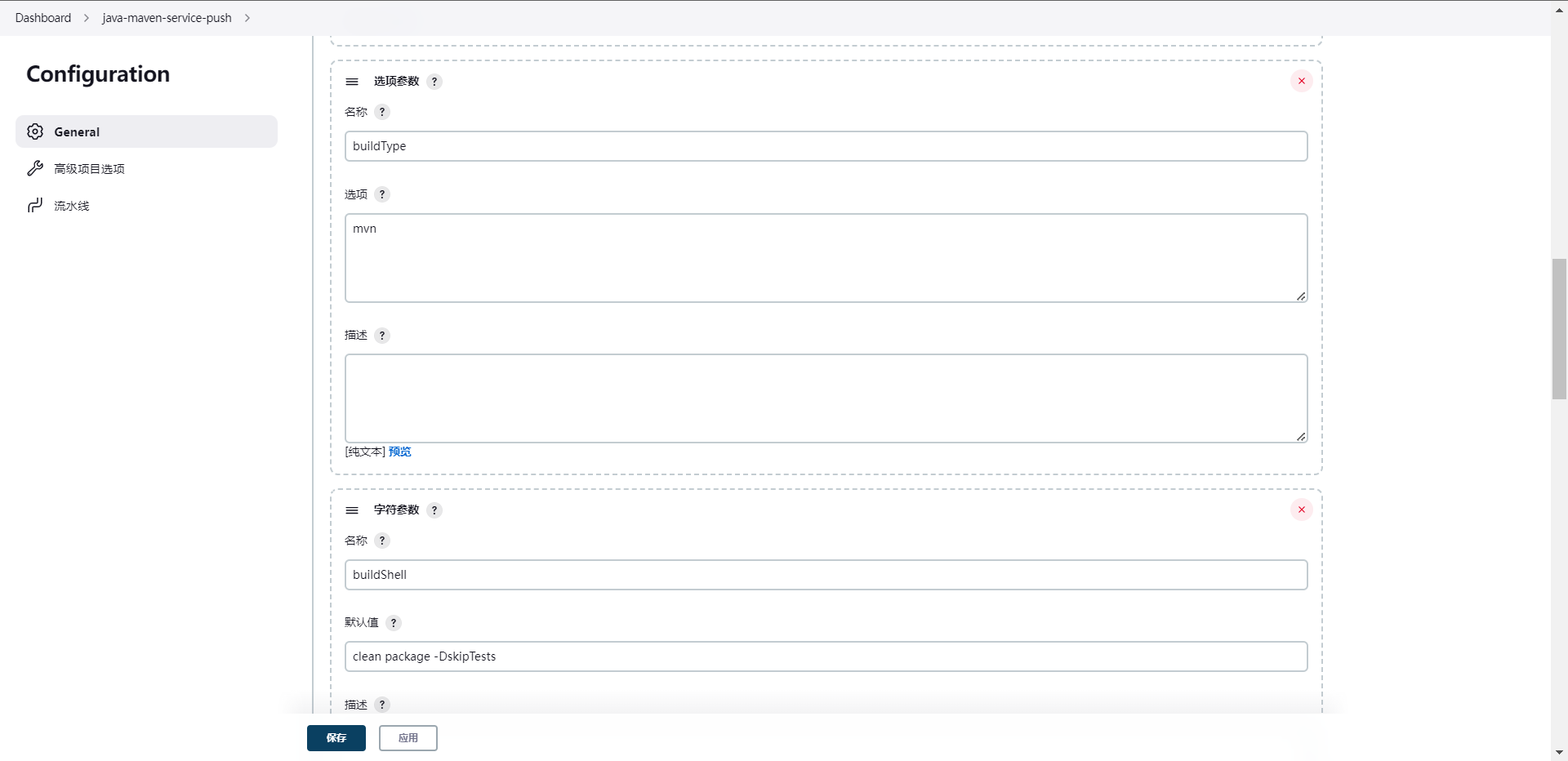

Then create the parameter options.

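Now the logic that every pipeline used to repeat can be shared simply by referencing this library. You may also wonder whether the library version can be controlled. It can: append `@` and a version (a branch, tag, or commit ID) to the library name: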

@Library("jenkinslibrary@master") _

def build = new org.library.build()

String buildShell = "${env.buildShell}"

String buildType = "${env.buildType}"

pipeline {

agent {

node {

label "build-1"

}

}

stages{

stage("Build") {

steps {

script{

build.Build(buildType, buildShell)

}

}

}

}

}

// npm support added; remember to rename the Node tool installation to "npm" in Global Tool Configuration

package org.library

def Build(buildType, buildShell) {

def buildTools = ["mvn": "maven", "ant": "ant", "gradle": "gradle", "npm": "npm"]

println("当前构建类型为:${buildType}")

buildHome = tool buildTools[buildType]

if ("${buildType}" == "npm") {

sh """export NODE_HOME=${buildHome}

export PATH=\$NODE_HOME/bin:\$PATH

${buildHome}/bin/${buildType} ${buildShell}"""

} else {

sh "${buildHome}/bin/${buildType} ${buildShell}"

}

}
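A dry-run shell sketch of what `Build()` ends up executing can help when debugging the library. `tool_home` is a hypothetical stand-in for Jenkins' `tool` step, and the paths assume the /jenkins/software layout used in this note. The npm branch puts the Node `bin` directory on `PATH` because the `npm` launcher is a script that starts with `#!/usr/bin/env node`. Without `node` on `PATH` it cannot start, which is probably also why running npm from the pipeline failed earlier.

```shell
#!/bin/sh
# Dry-run sketch of Build(buildType, buildShell) from build.groovy.
# tool_home is a hypothetical stand-in for Jenkins' `tool` step;
# the paths mirror the /jenkins/software layout used in this note.
tool_home() {
  case "$1" in
    mvn)    echo /jenkins/software/maven ;;
    ant)    echo /jenkins/software/ant ;;
    gradle) echo /jenkins/software/gradle ;;
    npm)    echo /jenkins/software/node ;;
    *)      return 1 ;;
  esac
}

# Print the command Build() would run for a build type and its arguments.
build_cmd() {
  build_type=$1
  build_shell=$2
  if ! home=$(tool_home "$build_type"); then
    echo "unsupported build type: $build_type" >&2
    return 1
  fi
  if [ "$build_type" = npm ]; then
    # npm starts via "#!/usr/bin/env node", so node's bin dir must be on PATH
    echo "PATH=$home/bin:\$PATH $home/bin/npm $build_shell"
  else
    echo "$home/bin/$build_type $build_shell"
  fi
}

build_cmd mvn "clean package -DskipTests"
build_cmd npm "run build"
```

Unknown build types return an error instead of calling `tool` with a missing name. This mirrors what the Groovy map lookup would do, where `buildTools[buildType]` yields null.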

6:SaltStack

1: Install SaltStack: https://repo.saltproject.io/#rhel

2: The node on which Jenkins runs the jobs needs salt-master; the managed nodes need salt-minion

[root@cdk-server ~]# cat <<eof >> /etc/yum.repos.d/salt.repo

[salt-3004-repo]

name=Salt repo for RHEL/CentOS 8 PY3

baseurl=https://repo.saltproject.io/py3/redhat/8/x86_64/3004

enabled=1

gpgcheck=0

gpgkey=https://repo.saltproject.io/py3/redhat/8/x86_64/3004/SALTSTACK-GPG-KEY.pub

eof

[root@cdk-server ~]# yum install -y salt-master salt-minion

# Start the service on each node

# On the Jenkins agent node

[root@cdk-server ~]# systemctl enable salt-master.service --now

# On the application server

[root@cdk-server ~]# systemctl enable salt-minion.service --now

# Point the minion at the master: set "master: 10.0.0.12" in /etc/salt/minion, then check it

[root@cdk-server ~]# cat /etc/salt/minion | grep "master: 10.0.0.12"

master: 10.0.0.12

[root@cdk-server ~]# systemctl restart salt-minion.service

# On the Jenkins agent node

[root@cdk-server ~]# salt-key -L

Accepted Keys:

Denied Keys:

Unaccepted Keys:

cdk-server

Rejected Keys:

[root@cdk-server ~]# salt-key -A

The following keys are going to be accepted:

Unaccepted Keys:

cdk-server

Proceed? [n/Y] y

Key for minion cdk-server accepted.

[root@cdk-server ~]# salt-key -L

Accepted Keys:

cdk-server

Denied Keys:

Unaccepted Keys:

Rejected Keys:

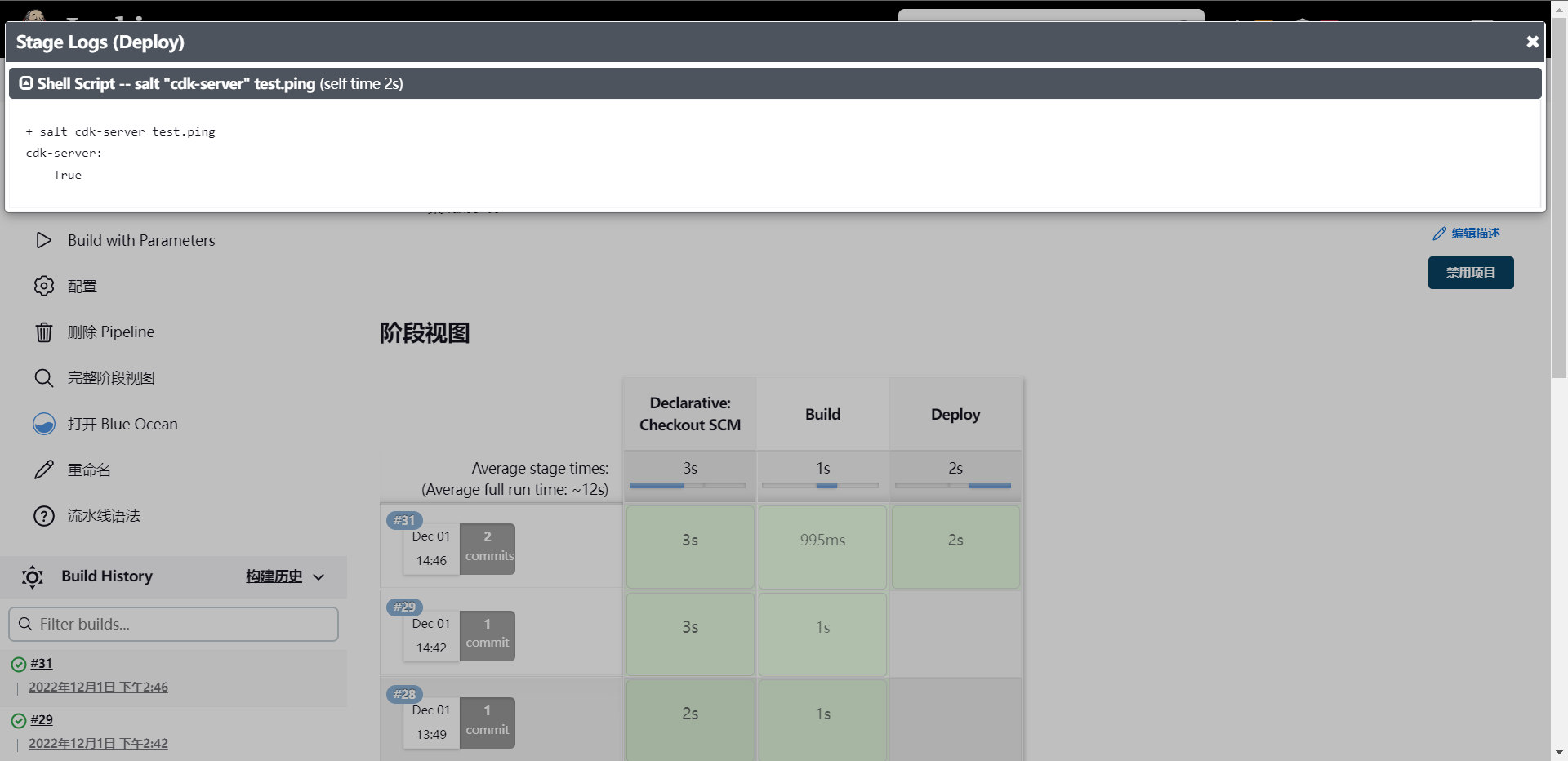

[root@cdk-server ~]# salt "cdk-server" test.ping

cdk-server:

True

# Now integrate SaltStack into Jenkins by adding it to the shared library

[root@cdk-server library]# pwd

/root/package-ci/src/org/library

[root@cdk-server library]# touch deploy.groovy

package org.library

// saltstack

def SaltDeploy(hosts,command) {

sh "salt \"${hosts}\" ${command}"

}

@Library("jenkinslibrary@master") _

def build = new org.library.build()

def deploy = new org.library.deploy()

String buildShell = "${env.buildShell}"

String buildType = "${env.buildType}"

pipeline {

agent {

node {

label "build-1"

}

}

stages{

stage("Build") {

steps {

script{

build.Build(buildType, buildShell)

}

}

}

stage("Deploy") {

steps {

script{

deploy.SaltDeploy(hosts,command)

}

}

}

}

}

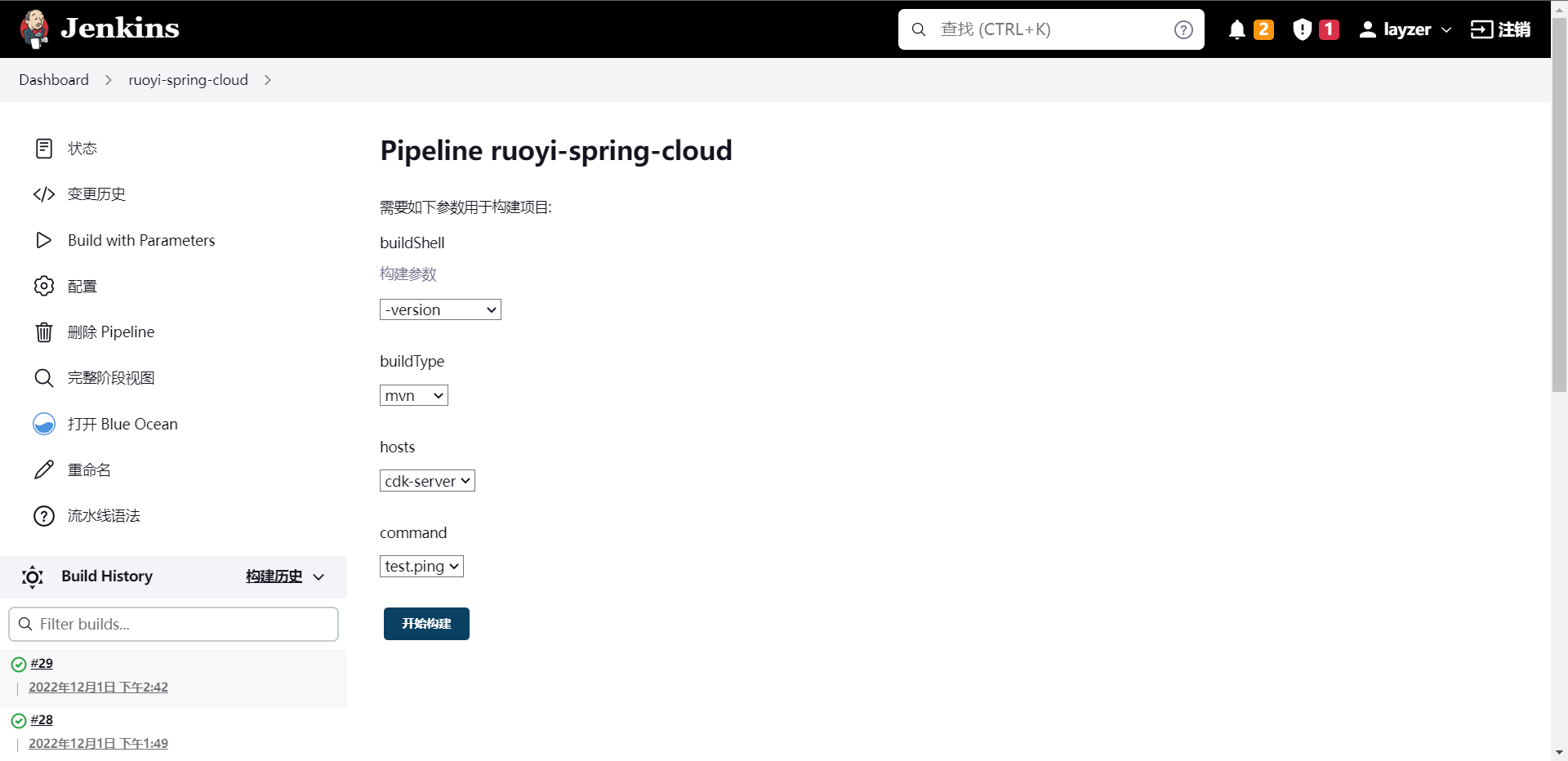

Commit the code and run the pipeline.

[root@cdk-server package-ci]# git add .

[root@cdk-server package-ci]# git commit -m "Add deploy file and edit jenkinsfile"

[master 79fbef7] Add deploy file and edit jenkinsfile

1 file changed, 12 insertions(+), 1 deletion(-)

[root@cdk-server package-ci]# git push origin master

Username for 'https://github.com': gitlayzer

Password for 'https://gitlayzer@github.com':

Enumerating objects: 5, done.

Counting objects: 100% (5/5), done.

Delta compression using up to 2 threads

Compressing objects: 100% (3/3), done.

Writing objects: 100% (3/3), 384 bytes | 384.00 KiB/s, done.

Total 3 (delta 2), reused 0 (delta 0), pack-reused 0

remote: Resolving deltas: 100% (2/2), completed with 2 local objects.

To https://github.com/gitlayzer/package-ci.git

c3470e8..79fbef7 master -> master

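That completes the deployment, which means the Salt integration works. When choosing hosts, the parameter can also be made multi-select so that one run deploys to several hosts.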
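For the multi-host case, Salt's list targeting (`salt -L`) takes a comma-separated list of minion IDs. The sketch below assumes the Jenkins multi-select parameter delivers the chosen hosts as a comma-separated string (an assumption about how the parameter is configured). It prints the resulting `salt` command by default; `DRY_RUN=0` runs it on the master. `web-01` and `web-02` are hypothetical minion IDs.

```shell
#!/bin/sh
# Sketch: build a salt command for one or several minions.
# hosts is assumed to be "id" or "id1,id2,..." from a multi-select parameter.
salt_deploy() {
  hosts=$1
  shift
  case "$hosts" in
    *,*) set -- salt -L "$hosts" "$@" ;;  # list targeting for several minions
    *)   set -- salt "$hosts" "$@" ;;     # a single minion ID (or glob)
  esac
  if [ "${DRY_RUN:-1}" = 1 ]; then
    echo "$*"      # print only (default)
  else
    "$@"           # really run it on the salt-master
  fi
}

salt_deploy cdk-server test.ping
salt_deploy web-01,web-02 cmd.run uptime
```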

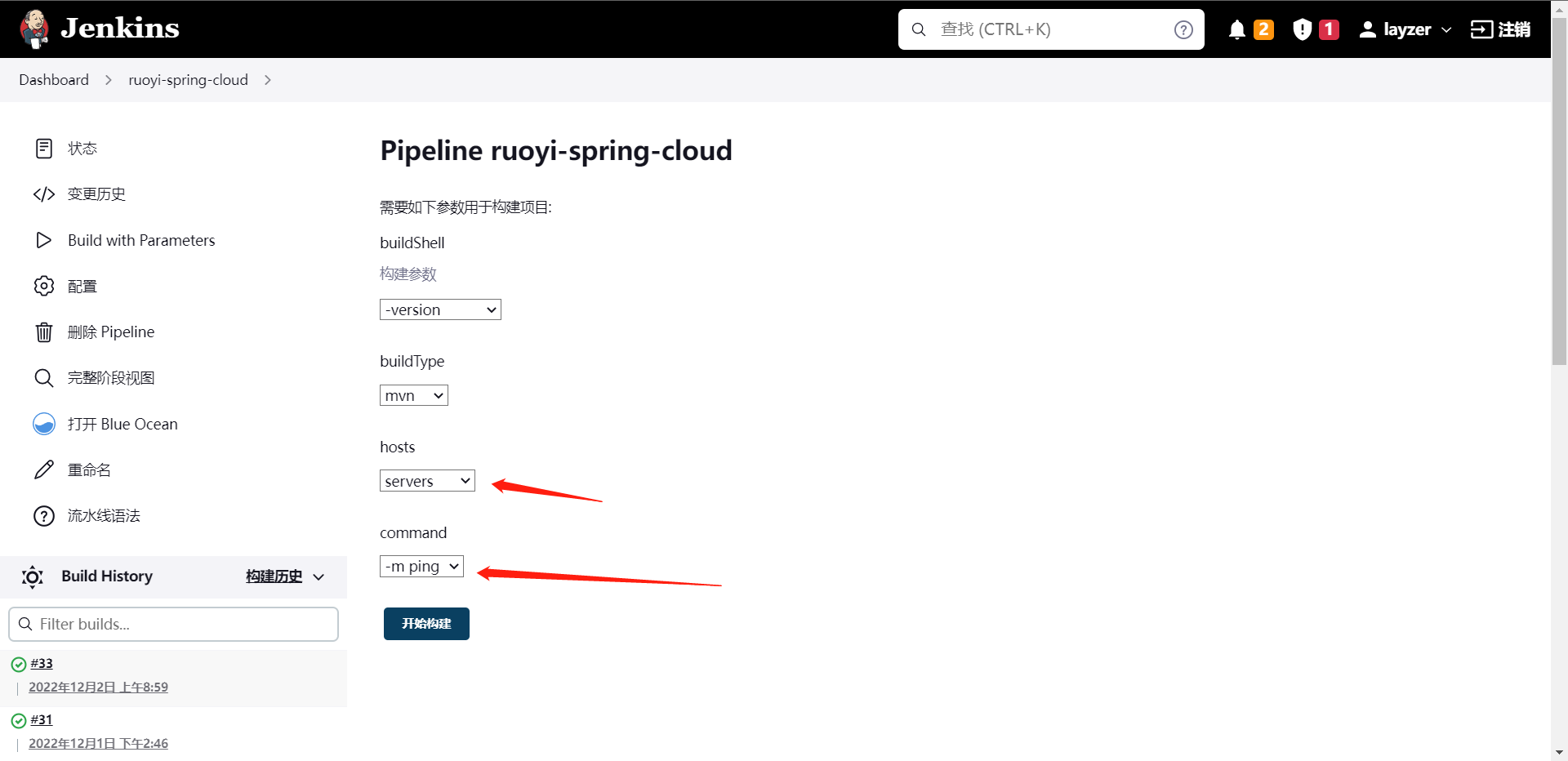

7:Ansible

With SaltStack integrated above, let's integrate Ansible as well.

1: Install Ansible (installing it on the agent is enough)

[root@cdk-server ~]# yum install -y ansible

2: Set up passwordless SSH to the target hosts

# Press Enter at every prompt

[root@cdk-server ~]# ssh-keygen -t rsa

# Copy the public key to the target host

[root@cdk-server ~]# ssh-copy-id root@10.0.0.13

/usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: "/root/.ssh/id_rsa.pub"

The authenticity of host '10.0.0.13 (10.0.0.13)' can't be established.

ED25519 key fingerprint is SHA256:mAg2DUdmrIwNfTbJU0EA4OYnGioxWvQIVM0kehyV4Cc.

This host key is known by the following other names/addresses:

~/.ssh/known_hosts:1: 10.0.0.11

Are you sure you want to continue connecting (yes/no/[fingerprint])? yes

/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed

/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys

root@10.0.0.13's password:

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'root@10.0.0.13'"

and check to make sure that only the key(s) you wanted were added.

# Configure the host group

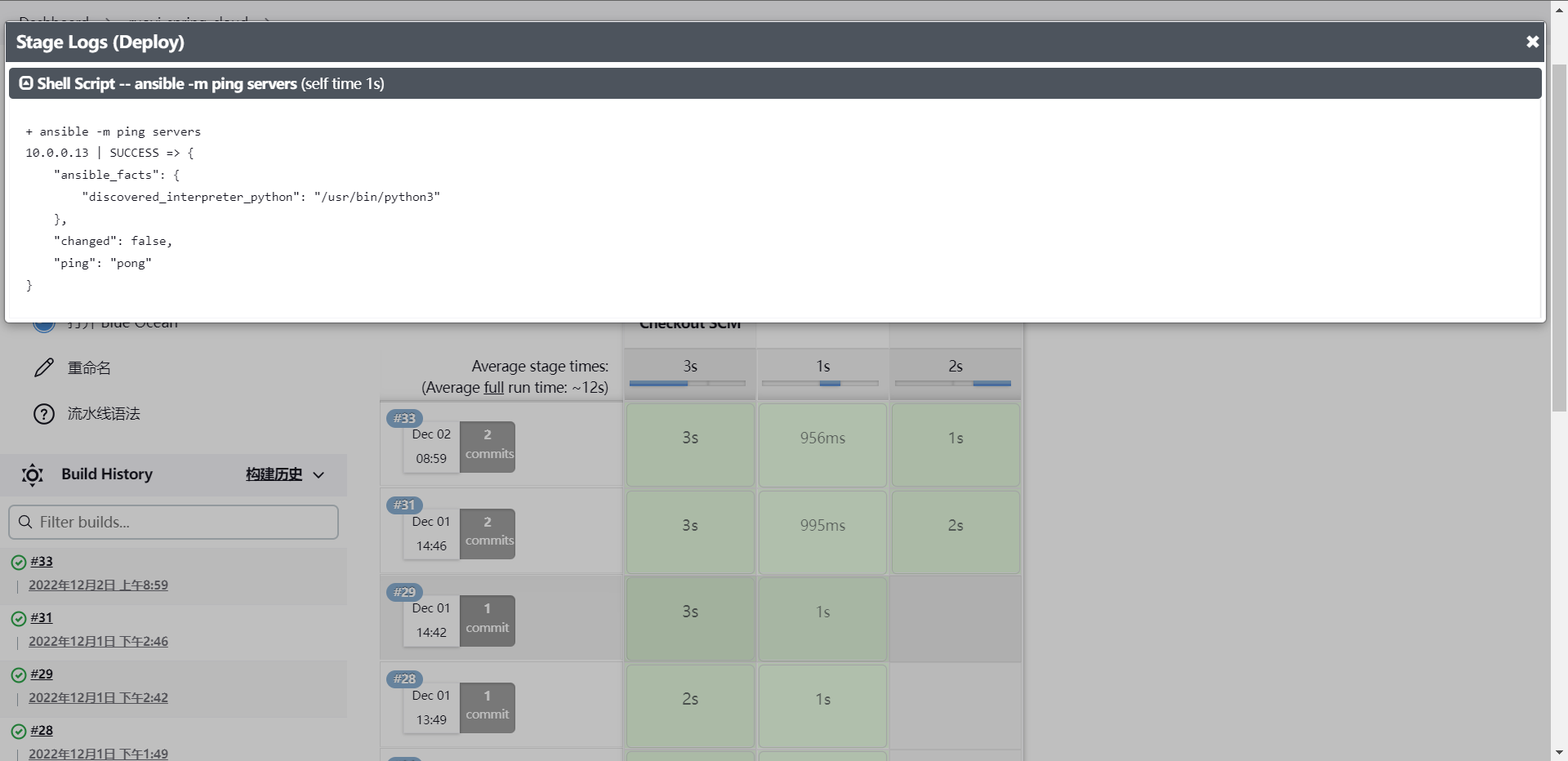

[root@cdk-server ~]# cat /etc/ansible/hosts | grep -v "#" | grep -v "^$"

[servers]

10.0.0.13
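If the host list changes often, the inventory can also be generated instead of edited by hand. The sketch below writes the same INI format as /etc/ansible/hosts above and passes the file explicitly with `ansible -i`. The `render_inventory` helper and the second address are hypothetical.

```shell
#!/bin/sh
# Sketch: print an INI-style Ansible inventory with a single group.
# Usage: render_inventory GROUP HOST...
render_inventory() {
  group=$1
  shift
  printf '[%s]\n' "$group"
  printf '%s\n' "$@"      # one host per line
}

# Write a throwaway inventory; on a real agent, ping the group with it.
inv=$(mktemp)
render_inventory servers 10.0.0.13 10.0.0.14 > "$inv"
cat "$inv"
# ansible -i "$inv" servers -m ping
rm -f "$inv"
```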

# Write the shared library

package org.library

// saltstack

def SaltDeploy(hosts,command) {

sh "salt \"${hosts}\" ${command}"

}

// Ansible

def AnsibleDeploy(hosts,command) {

sh "ansible ${command} ${hosts}"

}

@Library("jenkinslibrary@master") _

def build = new org.library.build()

def deploy = new org.library.deploy()

String buildShell = "${env.buildShell}"

String buildType = "${env.buildType}"

pipeline {

agent {

node {

label "build-1"

}

}

stages{

stage("Build") {

steps {

script{

build.Build(buildType, buildShell)

}

}

}

stage("Deploy") {

steps {

script{

deploy.AnsibleDeploy(hosts,command)

}

}

}

}

}

Ansible is now integrated as well.

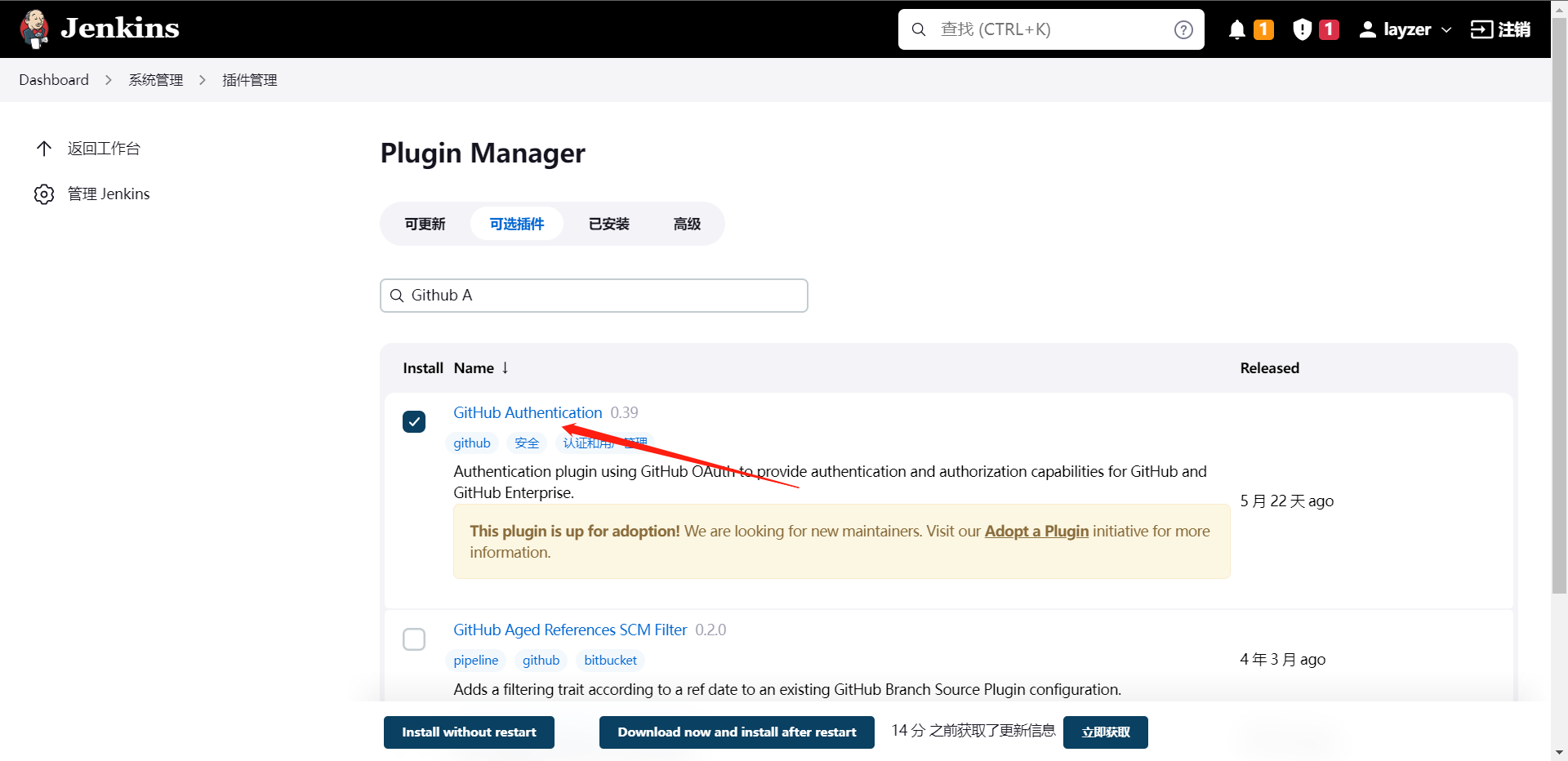

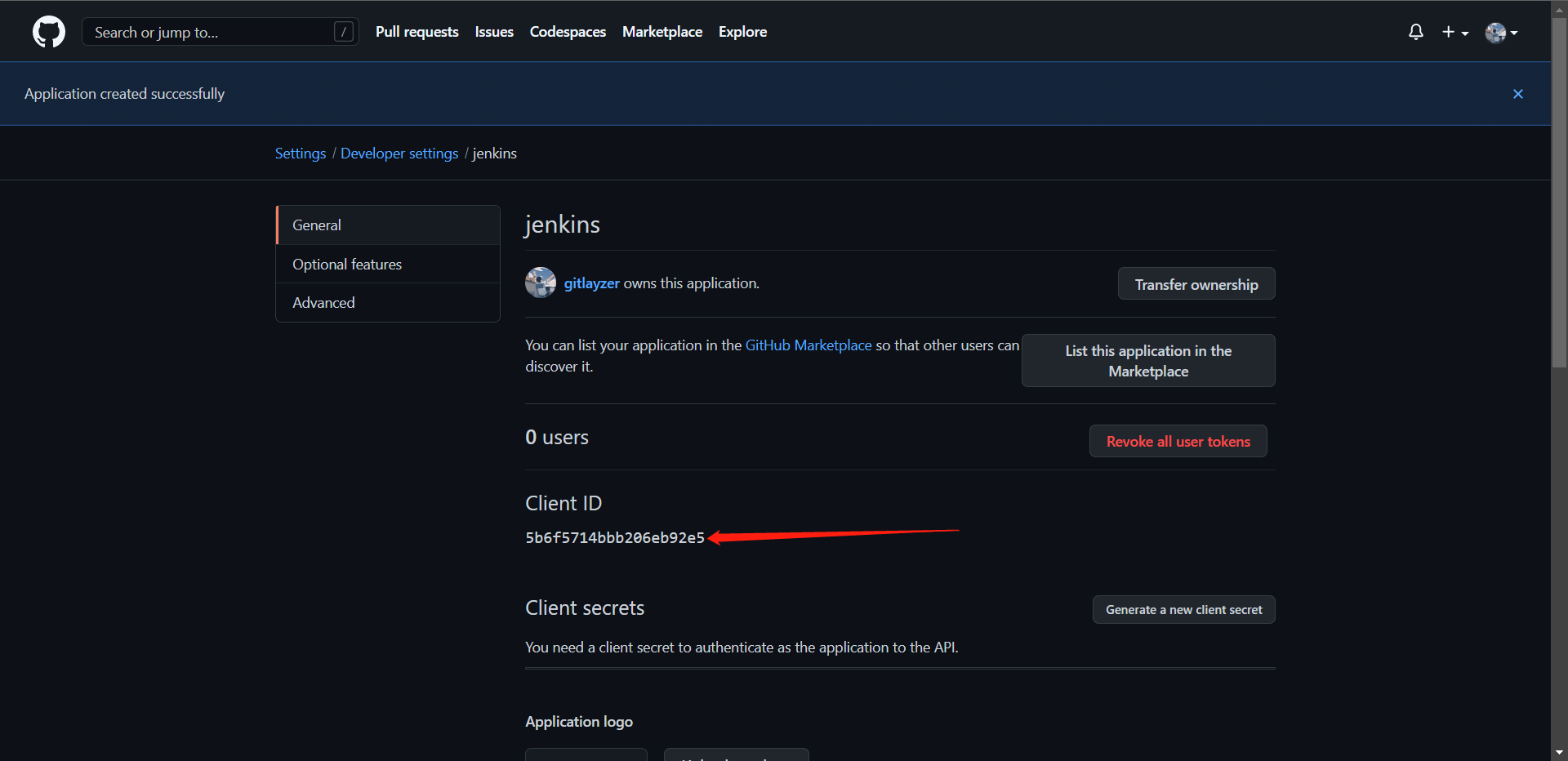

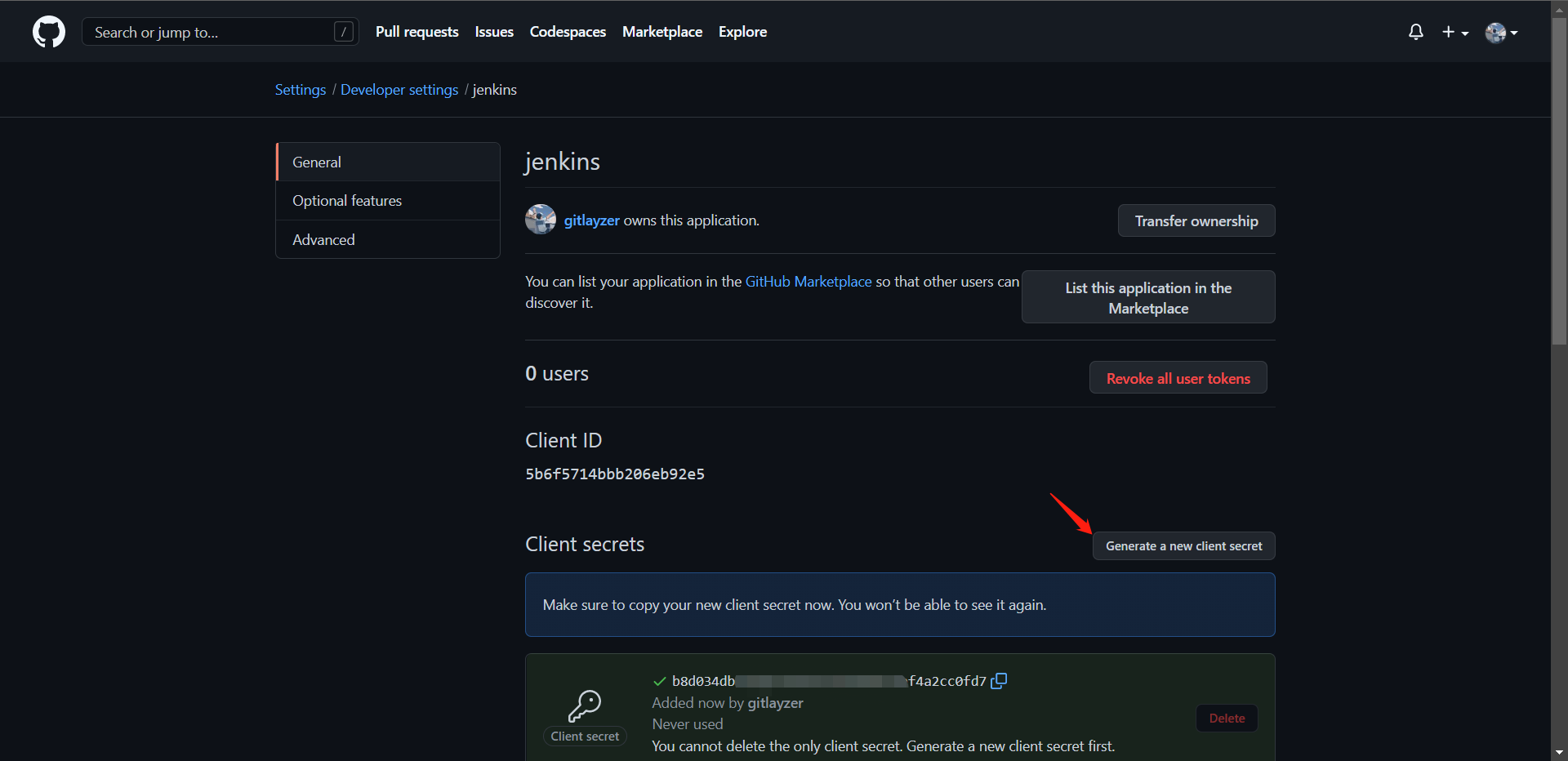

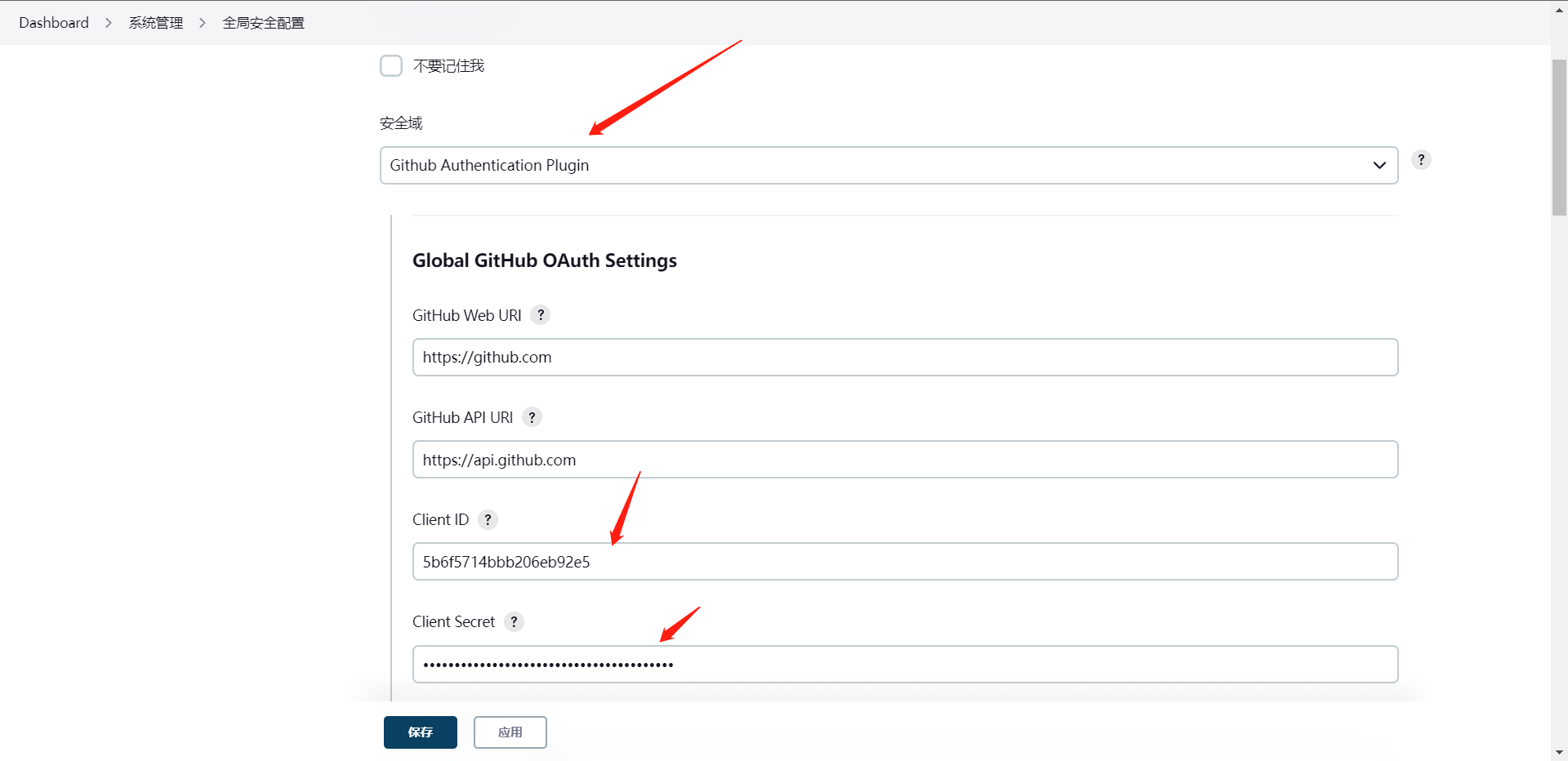

5: Authentication integration

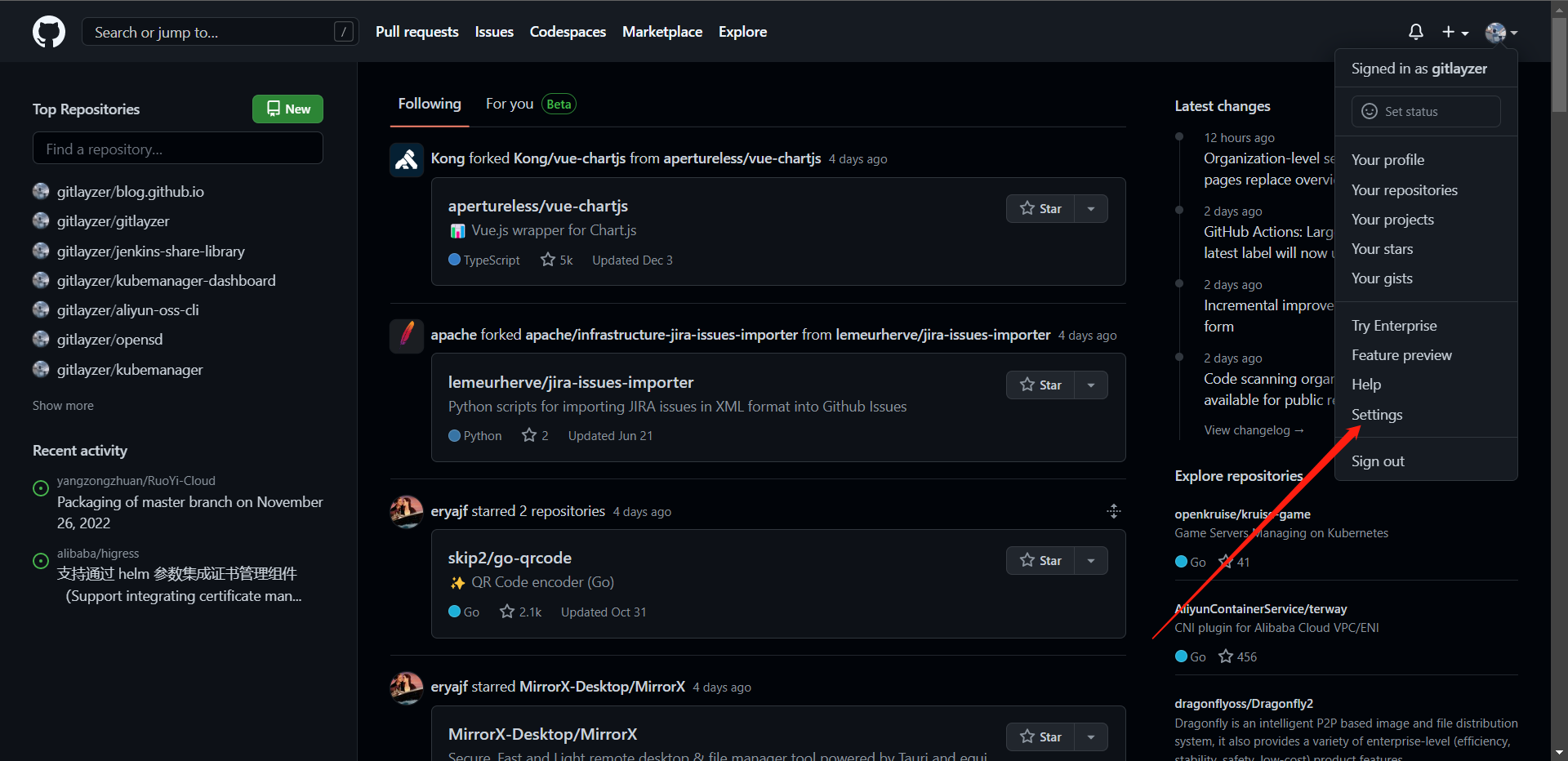

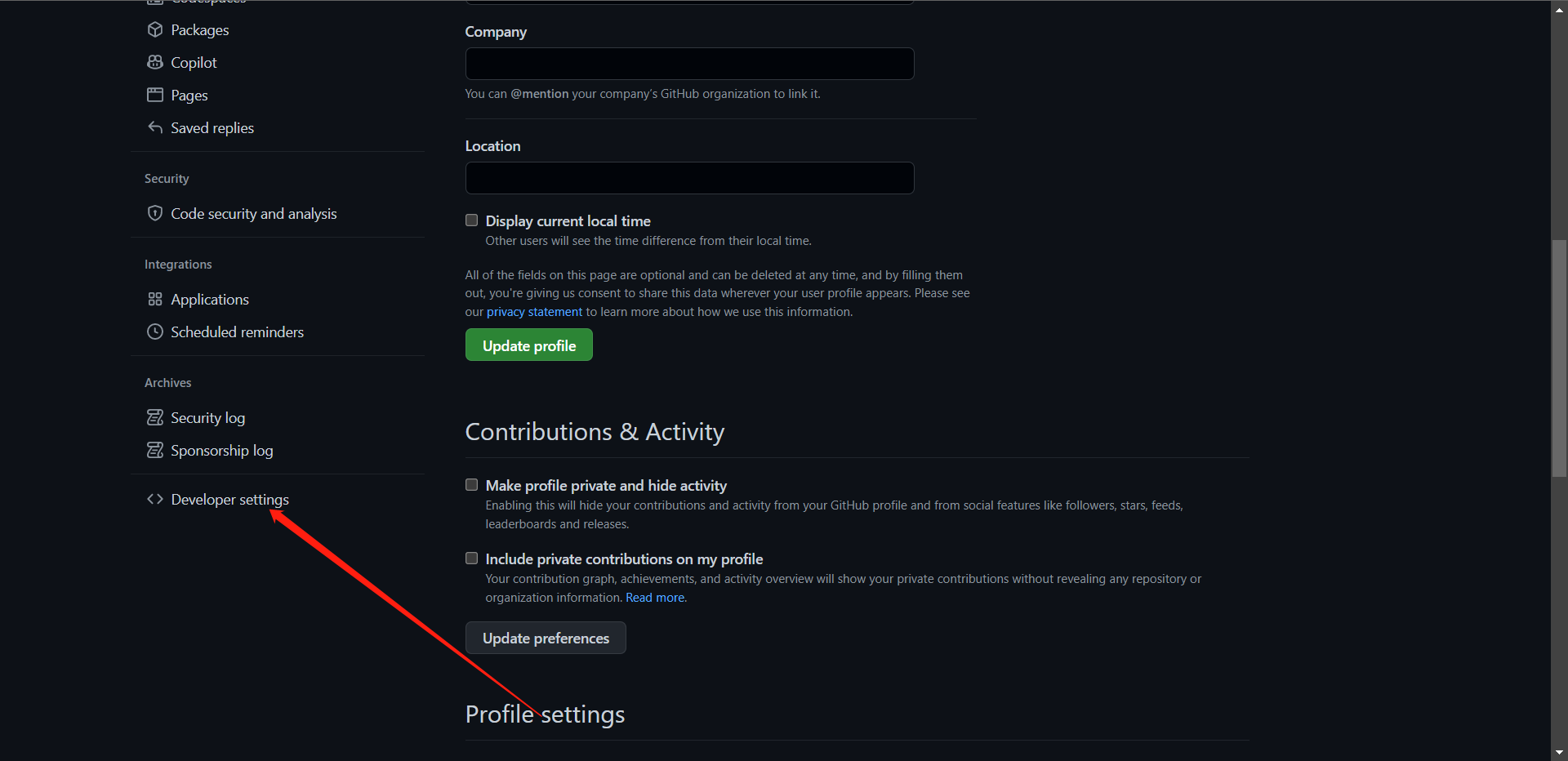

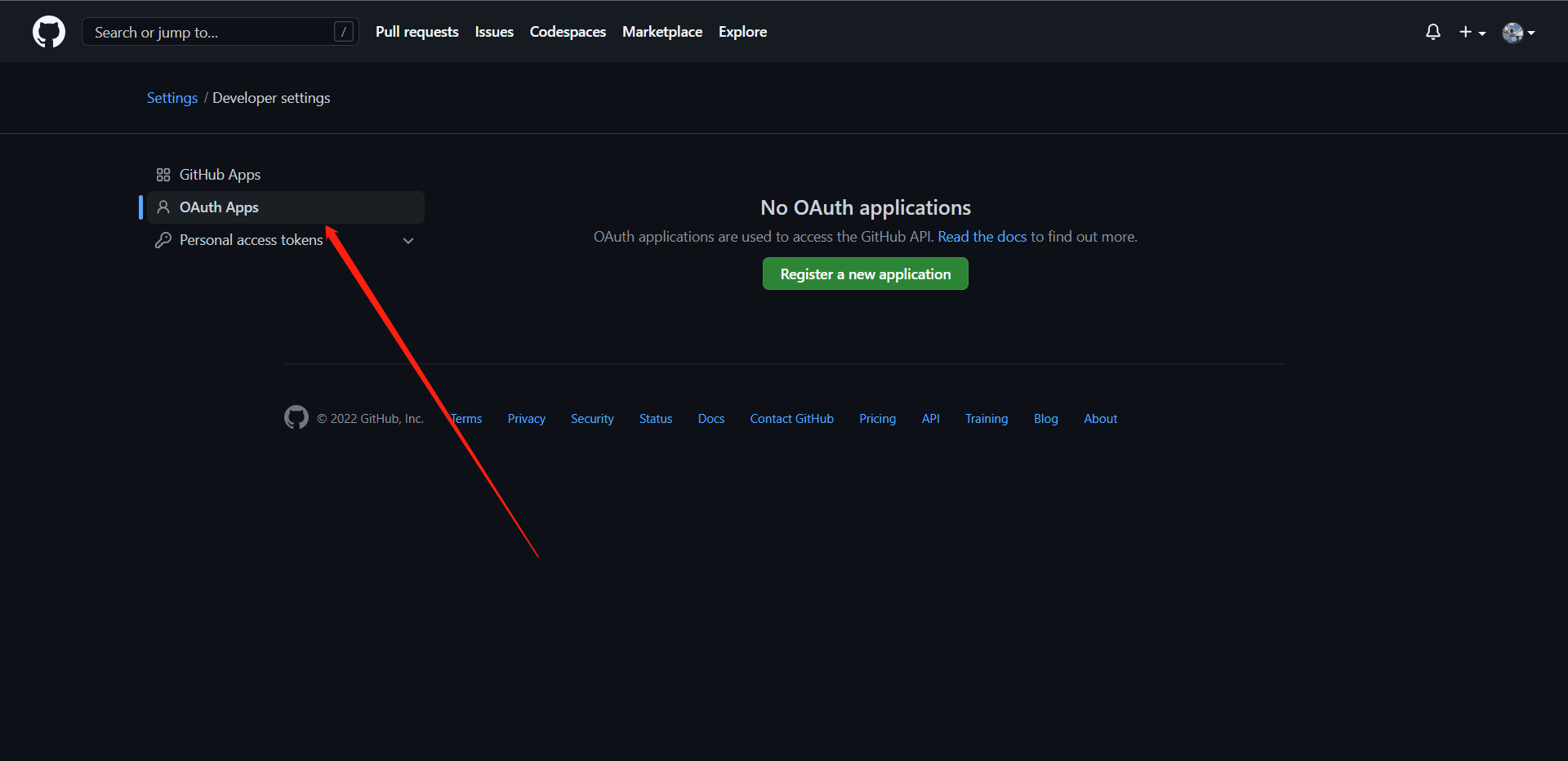

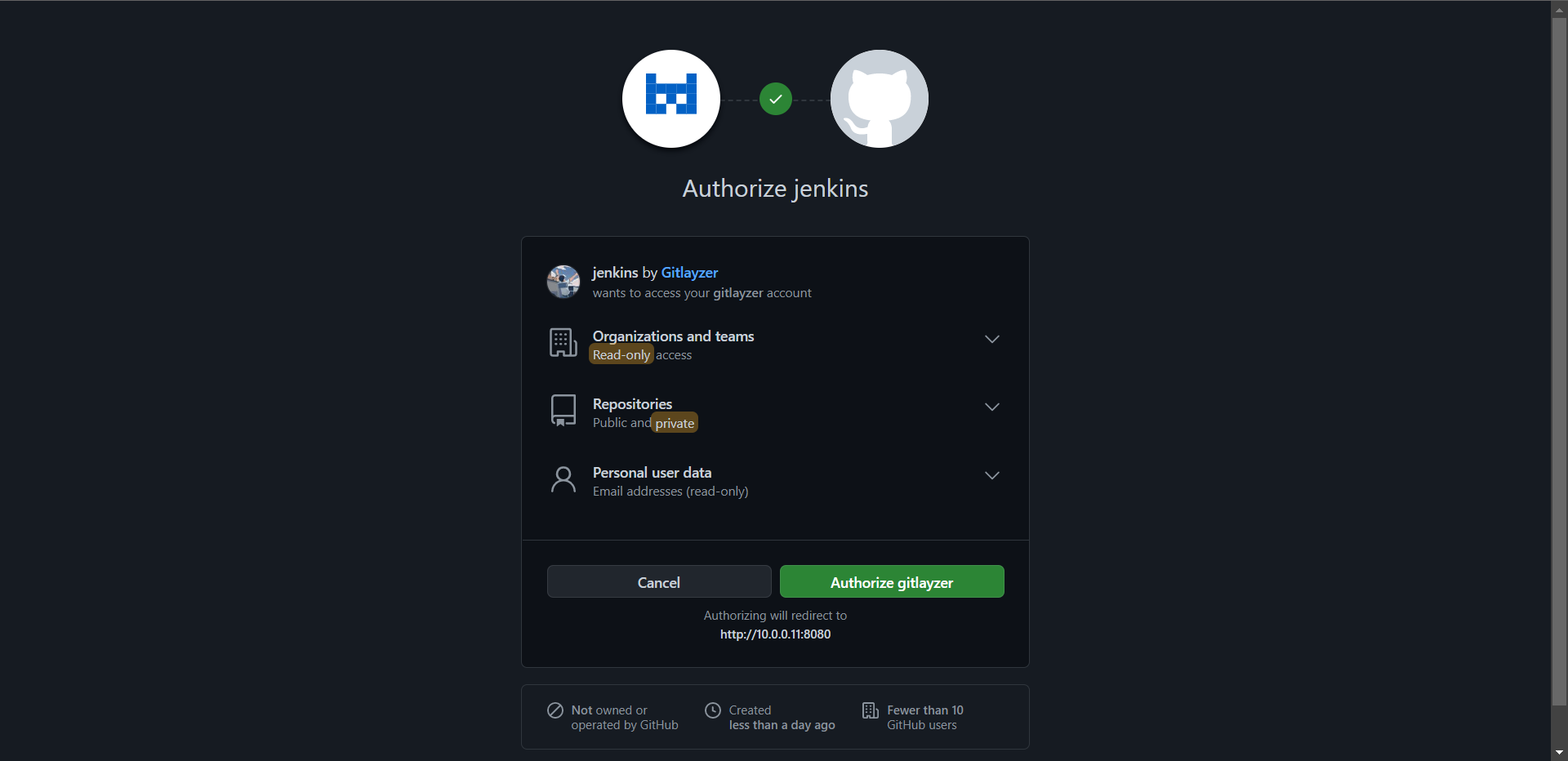

1: GitHub SSO integration

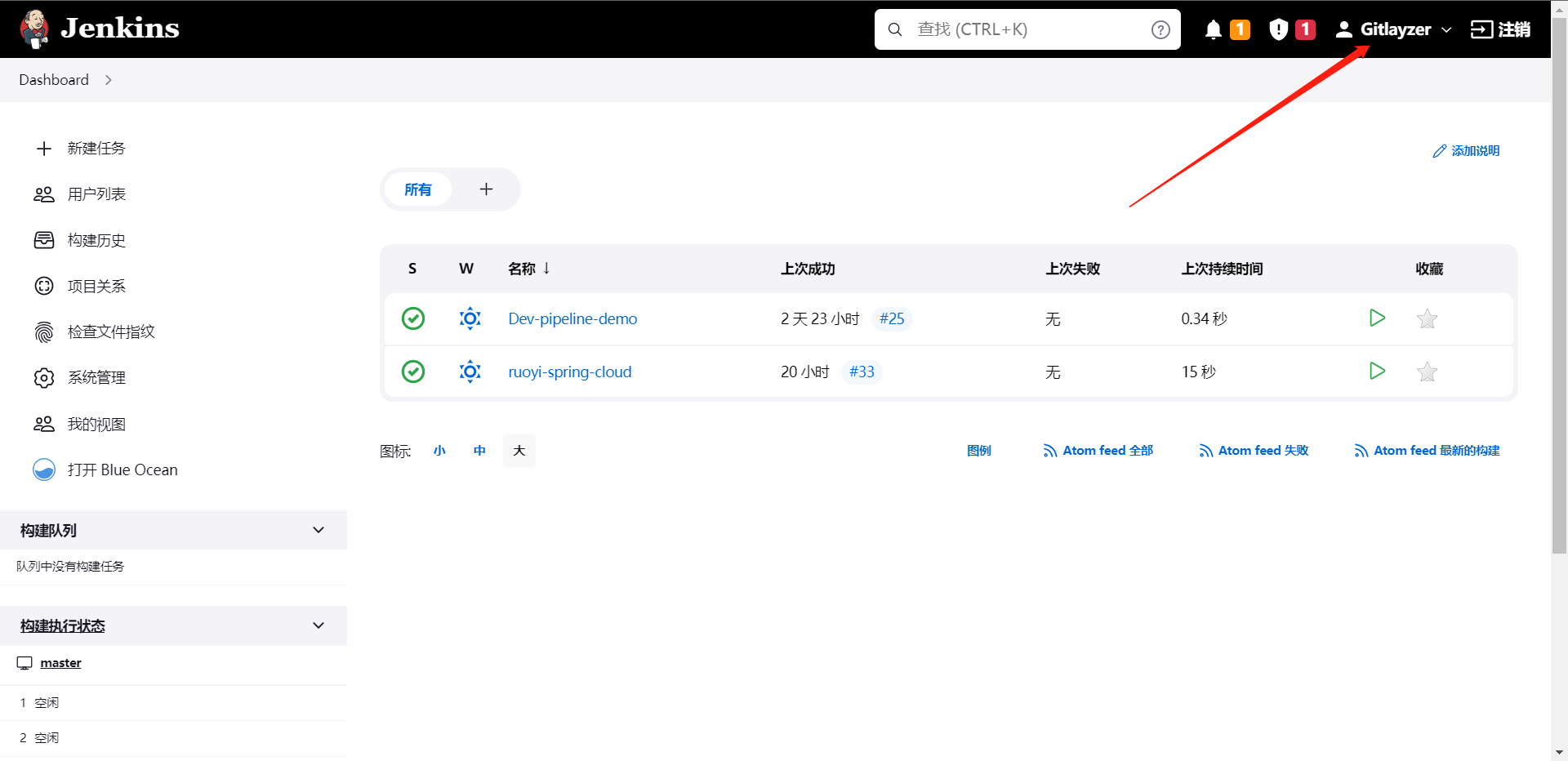

这样我们就集成了Github了,当然Gitlab基本也是一样的集成方法,那么这样就实现了公网开发集成Jenkins的登录了

6:版本控制系统集成

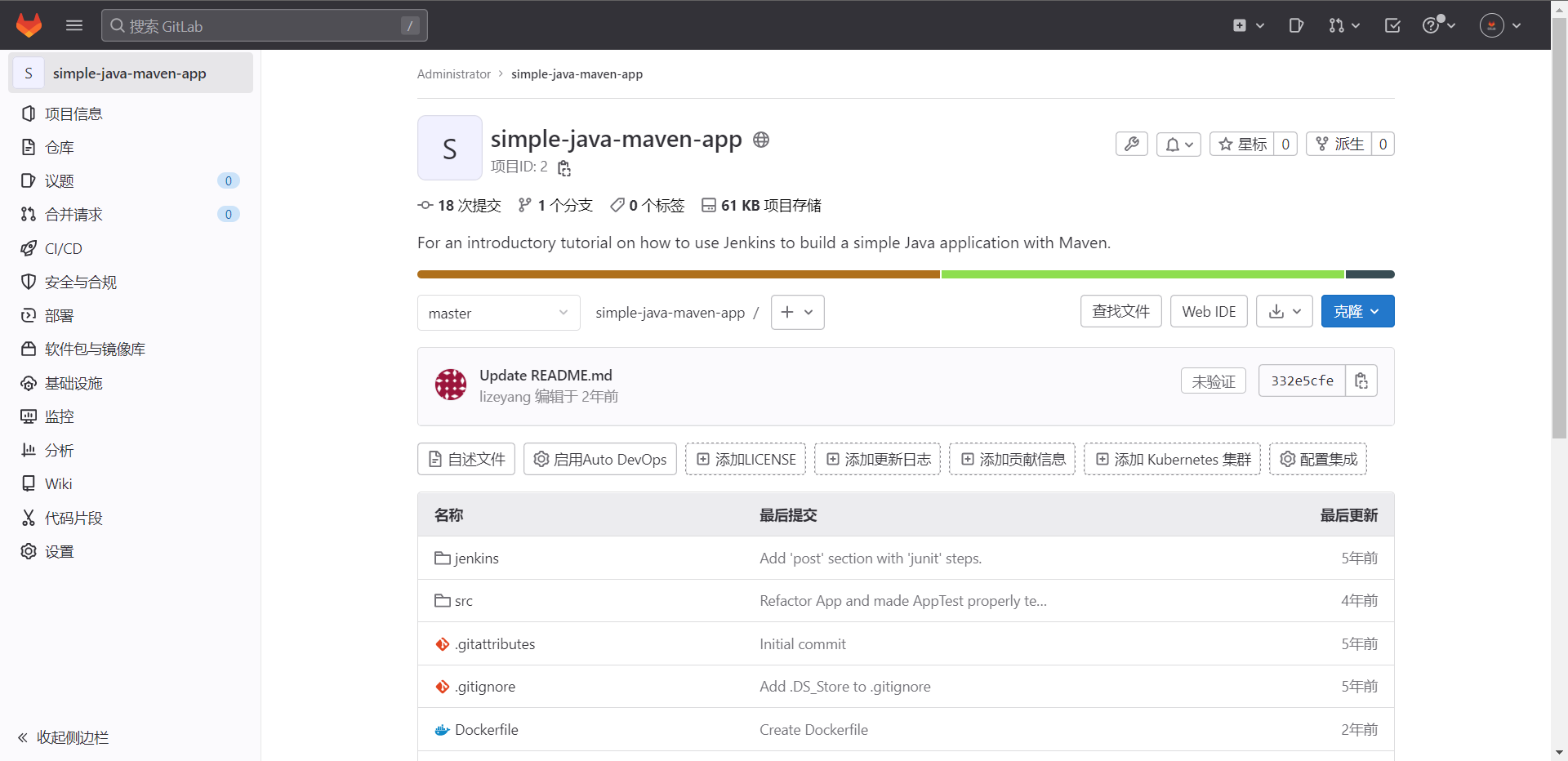

代码仓库:https://github.com/gitlayzer/simple-java-maven-app.git

# 安装gitlab,然后克隆代码到Gitlab

[root@cdk-server ~]# docker run -d --restart=always --hostname 10.0.0.11 -p 80:80 -p 2222:22 --privileged=true gitlab/gitlab-ce:latest
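The command above keeps all GitLab data inside the container, so removing the container loses the repositories and settings. Below is a sketch of a persistent variant. It assumes a host directory `GITLAB_HOME`; the default `/srv/gitlab` is an arbitrary choice. The three mount points are the ones documented for the official GitLab image. The `gitlab_shell_ssh_port` setting makes SSH clone URLs show the mapped port 2222. `--privileged` is left out because GitLab's Docker instructions do not use it. The function only prints the command unless `DRY_RUN=0`.

```shell
#!/bin/sh
# Sketch: run GitLab CE with config, logs and data stored on the host.
GITLAB_HOME=${GITLAB_HOME:-/srv/gitlab}   # assumed host directory

start_gitlab() {
  set -- docker run -d --restart=always --hostname 10.0.0.11 \
    -p 80:80 -p 2222:22 \
    -e "GITLAB_OMNIBUS_CONFIG=gitlab_rails['gitlab_shell_ssh_port'] = 2222" \
    -v "$GITLAB_HOME/config:/etc/gitlab" \
    -v "$GITLAB_HOME/logs:/var/log/gitlab" \
    -v "$GITLAB_HOME/data:/var/opt/gitlab" \
    --shm-size 256m \
    gitlab/gitlab-ce:latest
  if [ "${DRY_RUN:-1}" = 1 ]; then
    echo "$*"     # print only (default)
  else
    "$@"
  fi
}

start_gitlab
```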

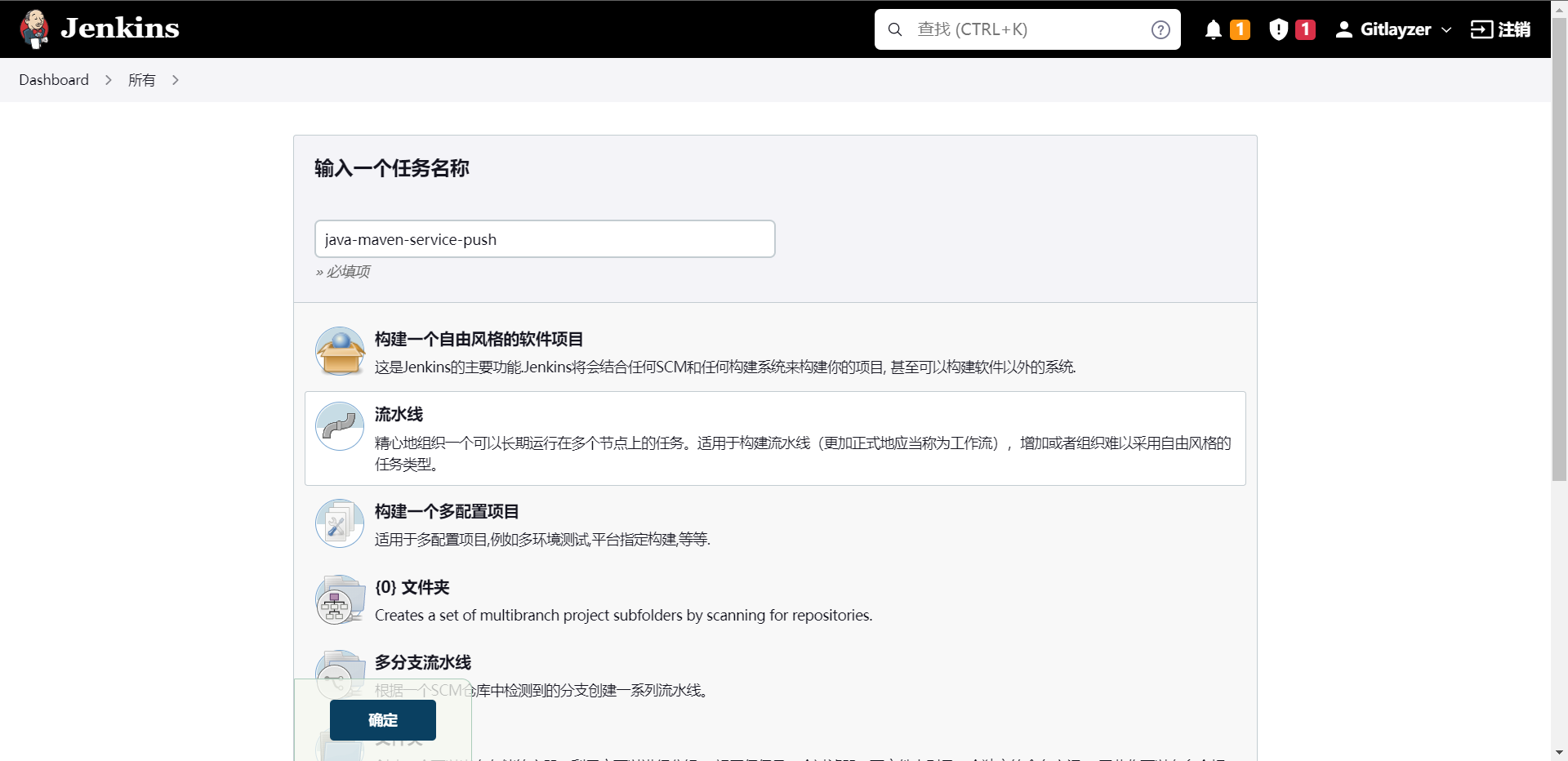

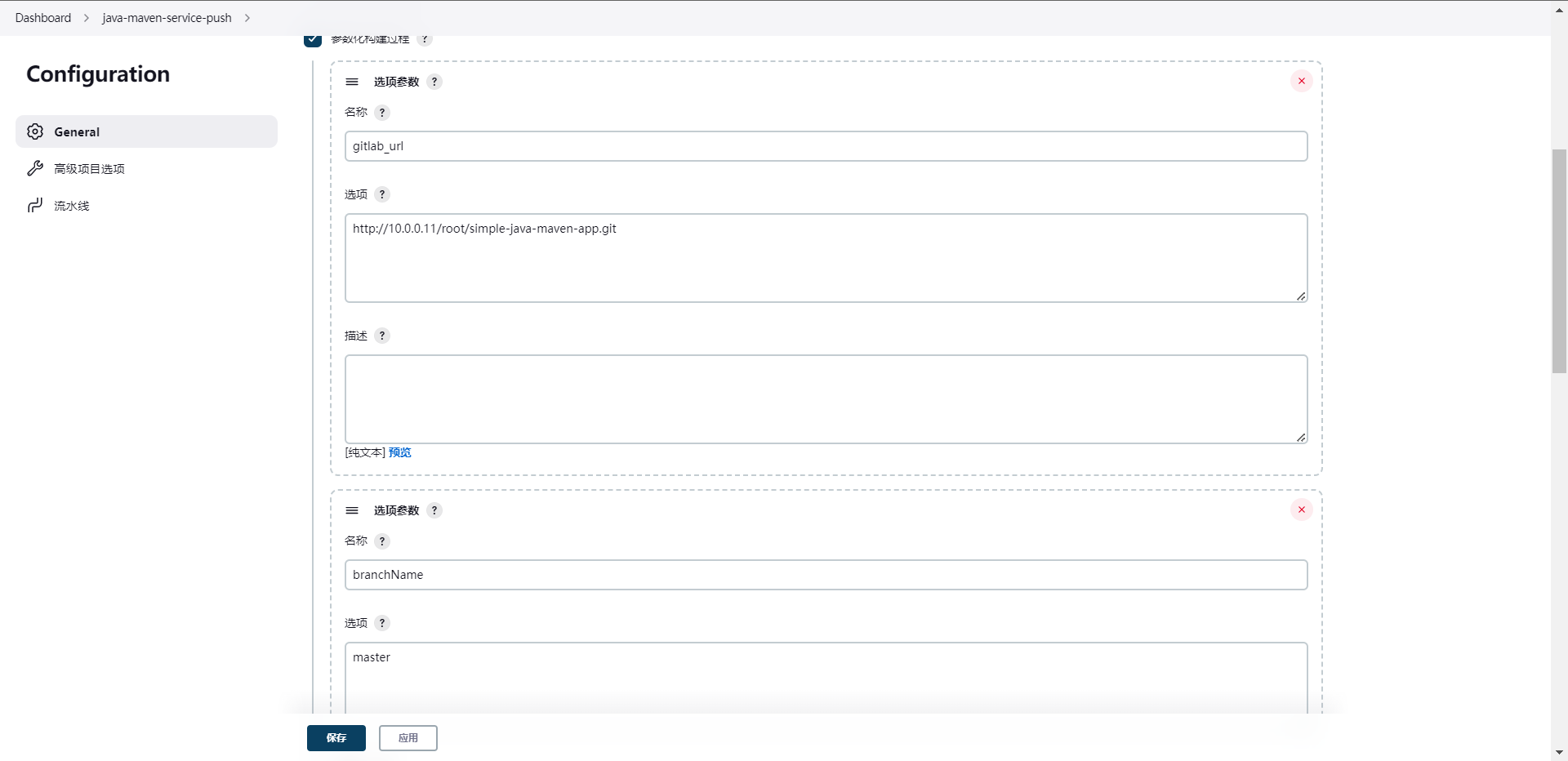

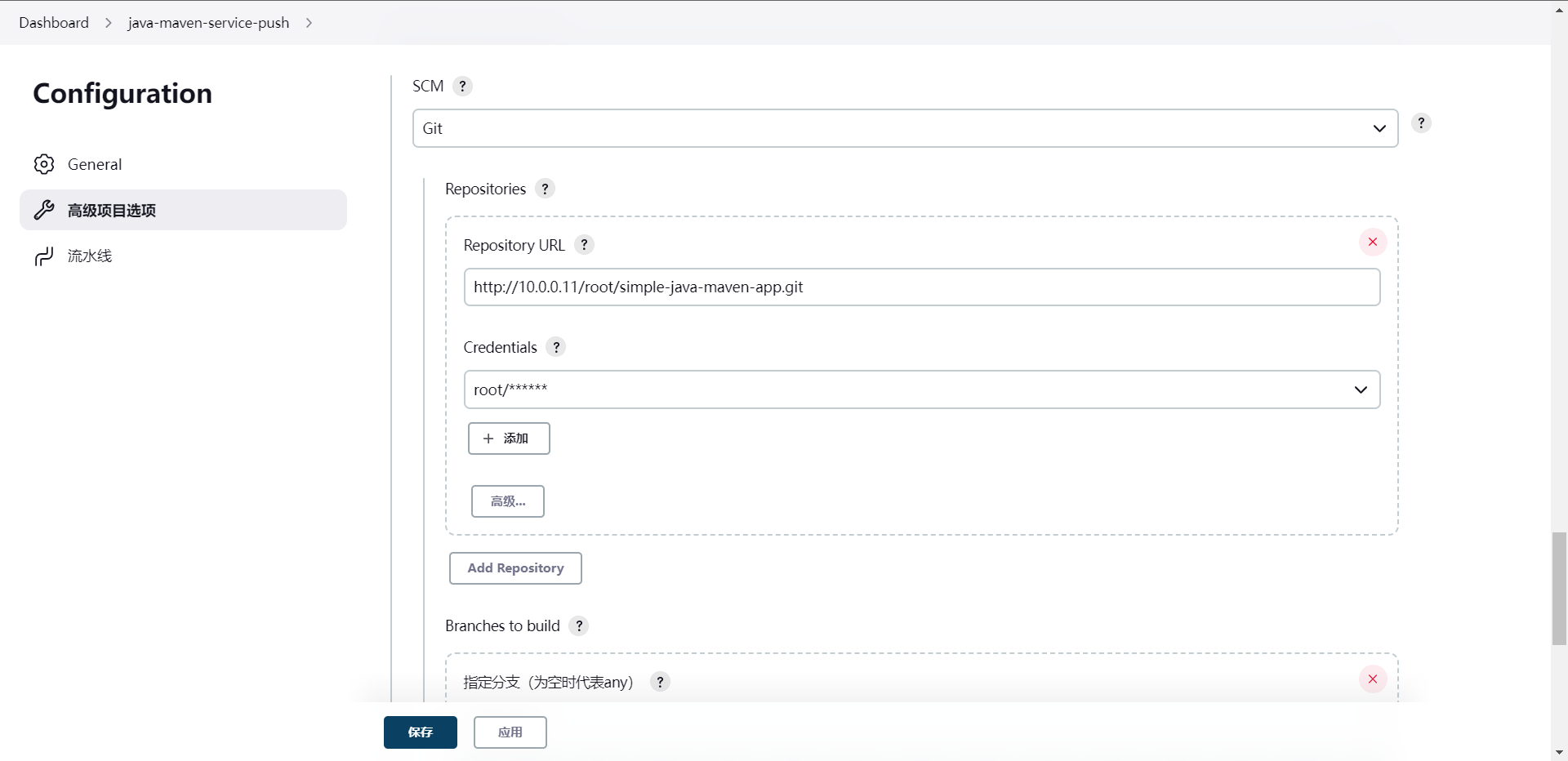

Create a Maven project in Jenkins.

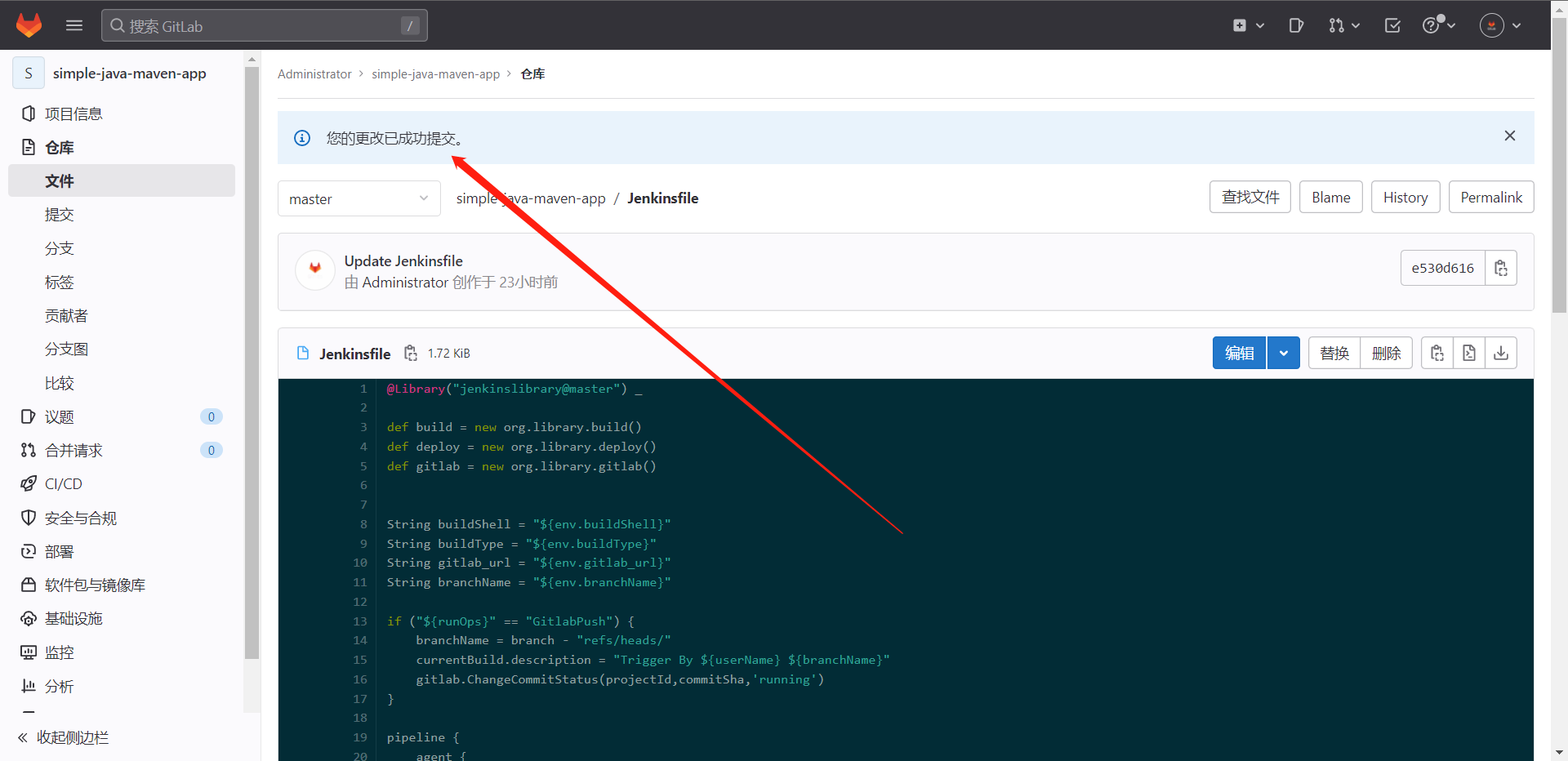

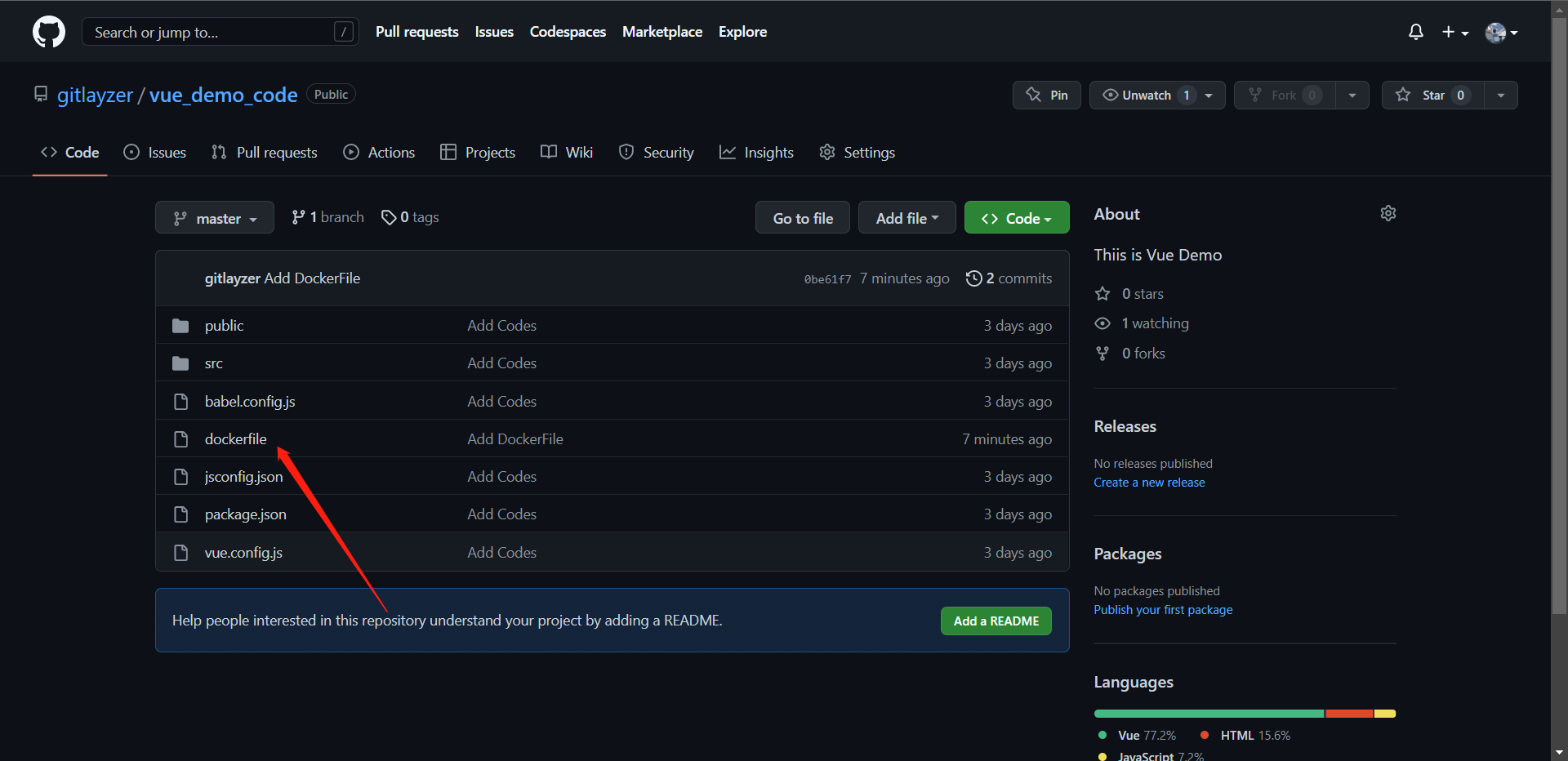

Now modify the Jenkinsfile in the GitLab project:

@Library("jenkinslibrary@master") _

def build = new org.library.build()

def deploy = new org.library.deploy()

String buildShell = "${env.buildShell}"

String buildType = "${env.buildType}"

String gitlab_url = "${env.gitlab_url}"

String branchName = "${env.branchName}"

pipeline {

agent {

node {

label "build-1"

}

}

stages{

stage("CheckOut") {

steps {

script{

checkout([$class: 'GitSCM', branches: [[name: "${branchName}"]], extensions: [], userRemoteConfigs: [[credentialsId: 'gitlab', url: "${gitlab_url}"]]])

}

}

}

stage("Build") {

steps {

script{

build.Build(buildType, buildShell)

}

}

}

}

}

This still references the same shared library. Put this Jenkinsfile into the GitLab repository and run the pipeline to see whether it can pull the code.

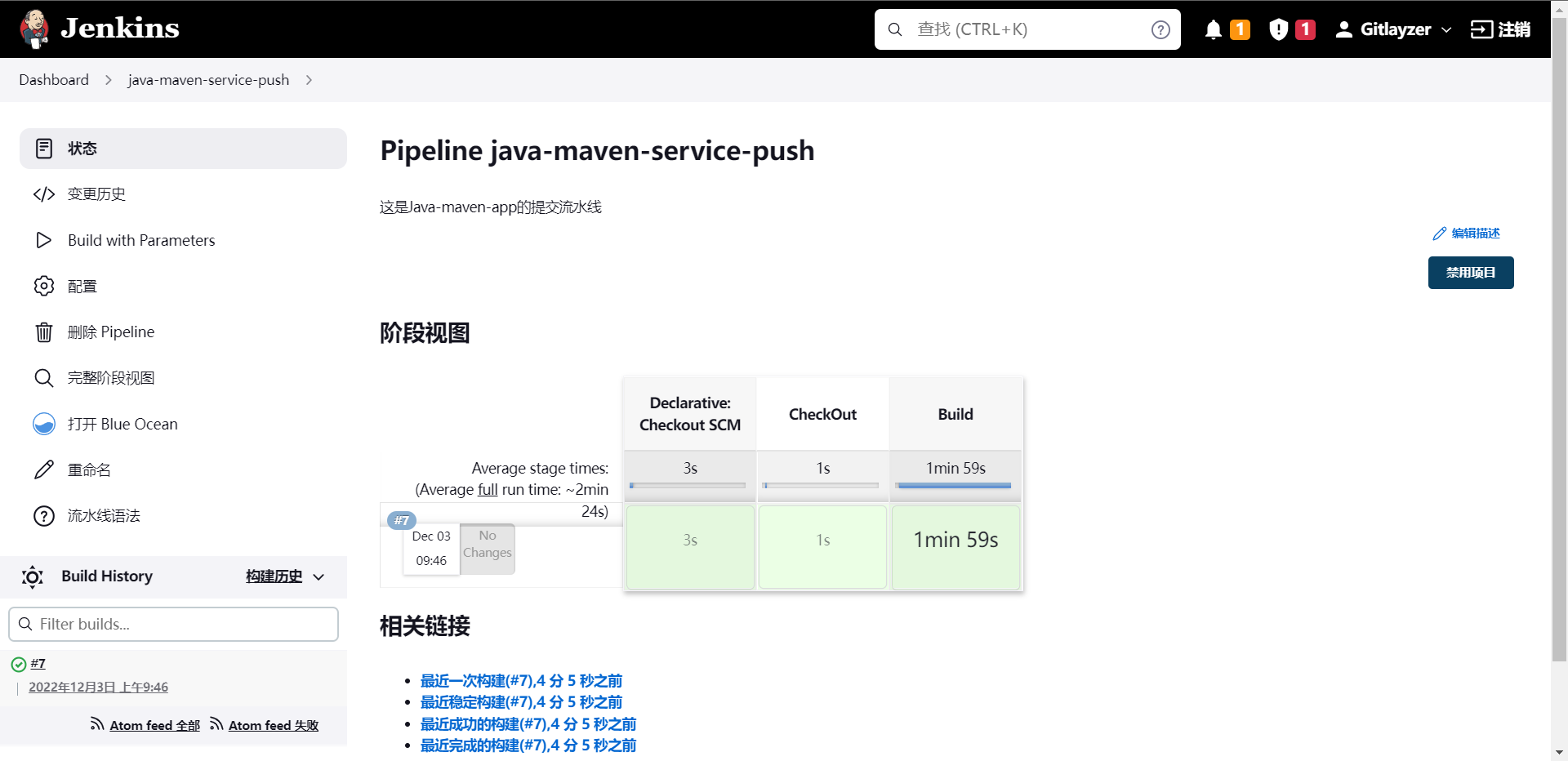

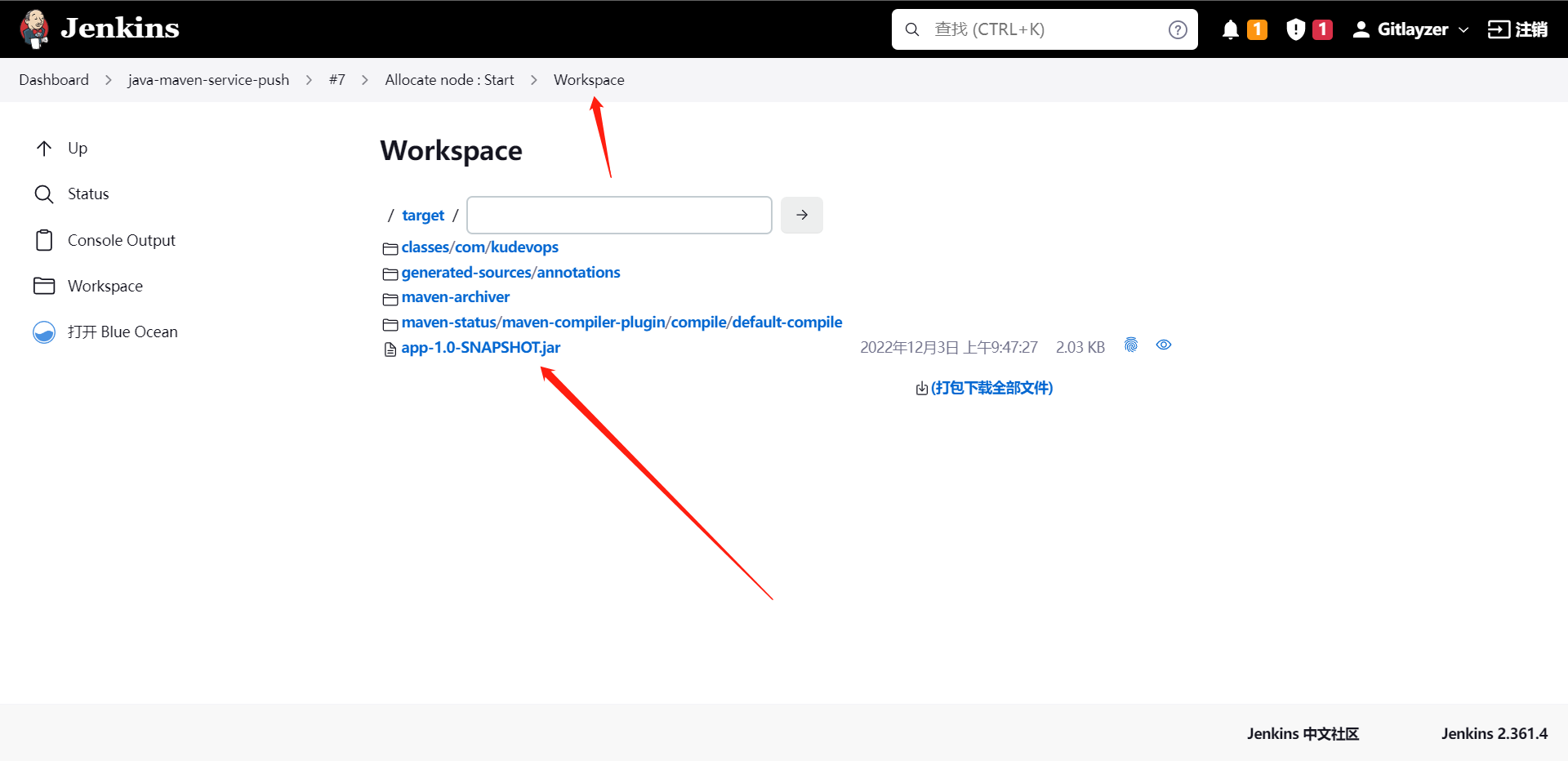

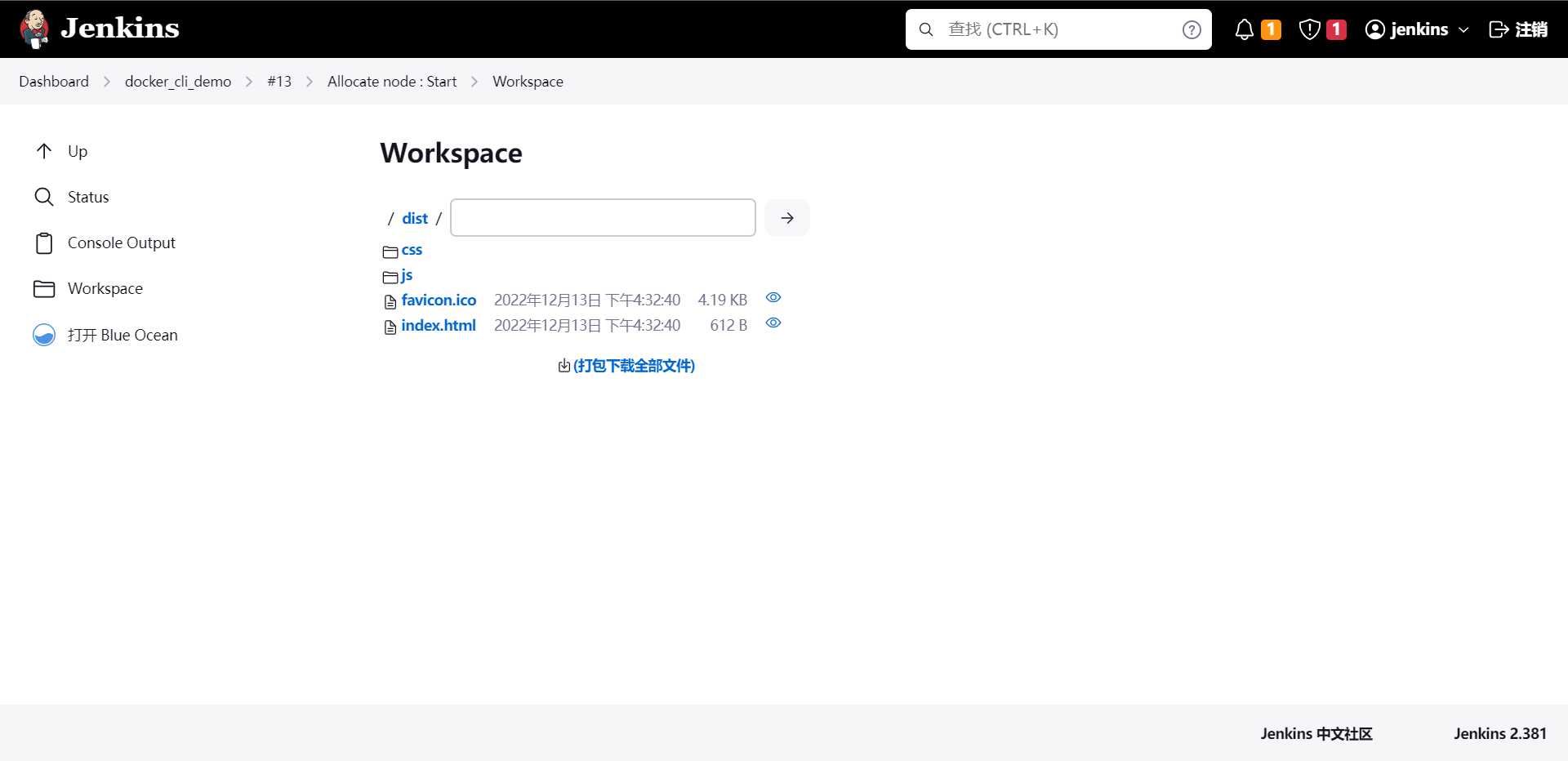

This is the artifact produced by the build.

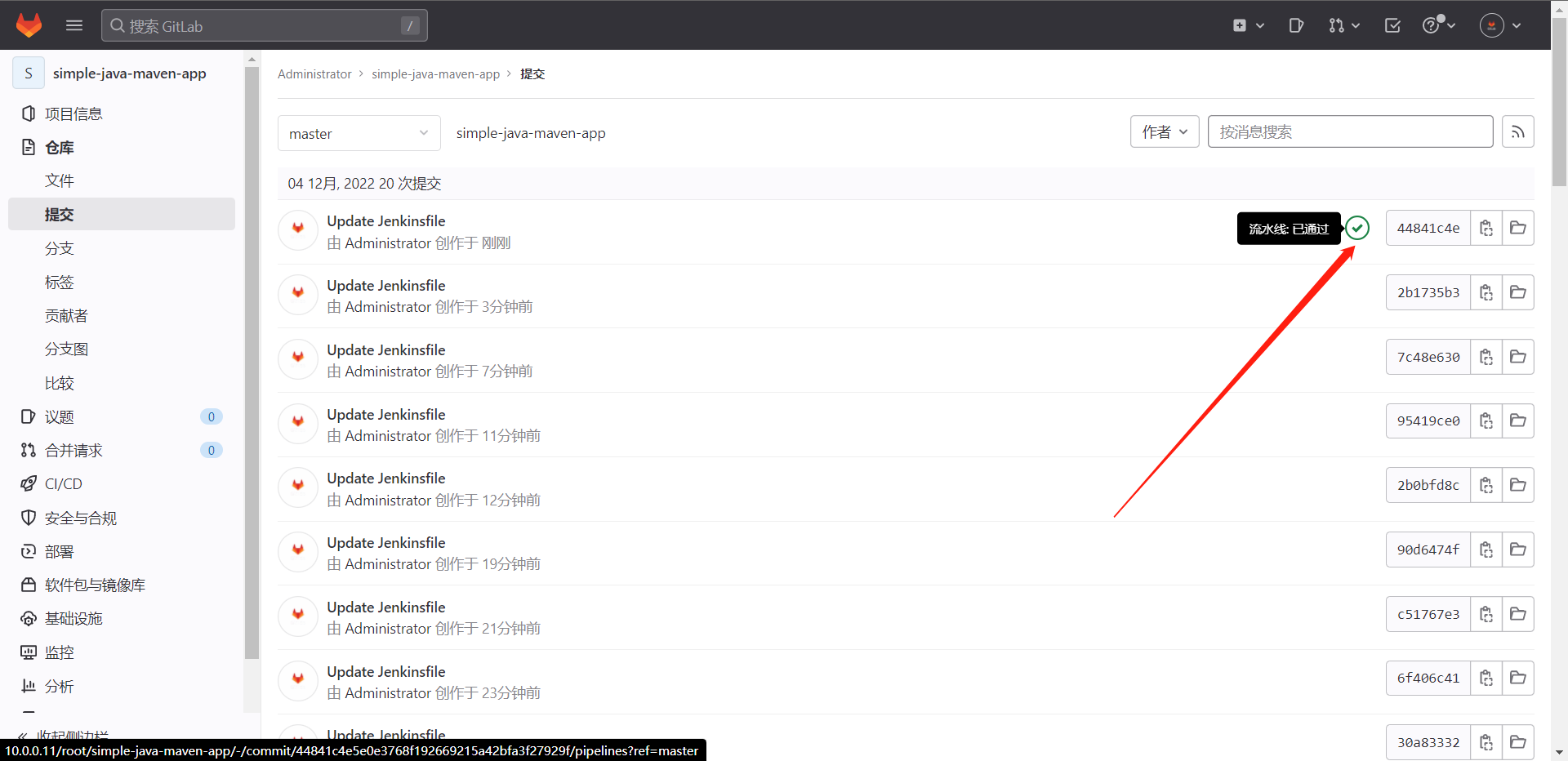

Pipeline states: ["pending","running","success","failed","canceled"]

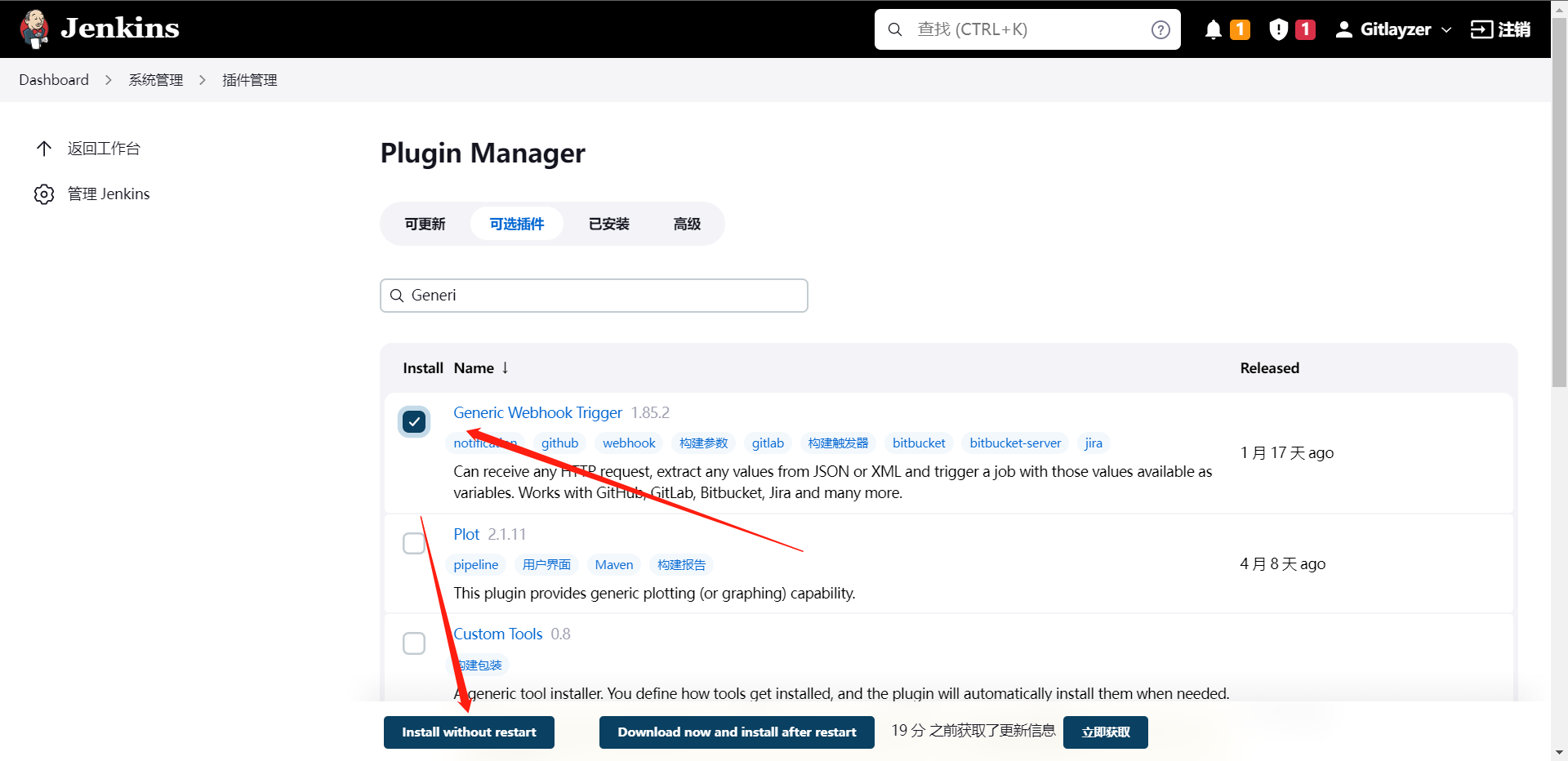

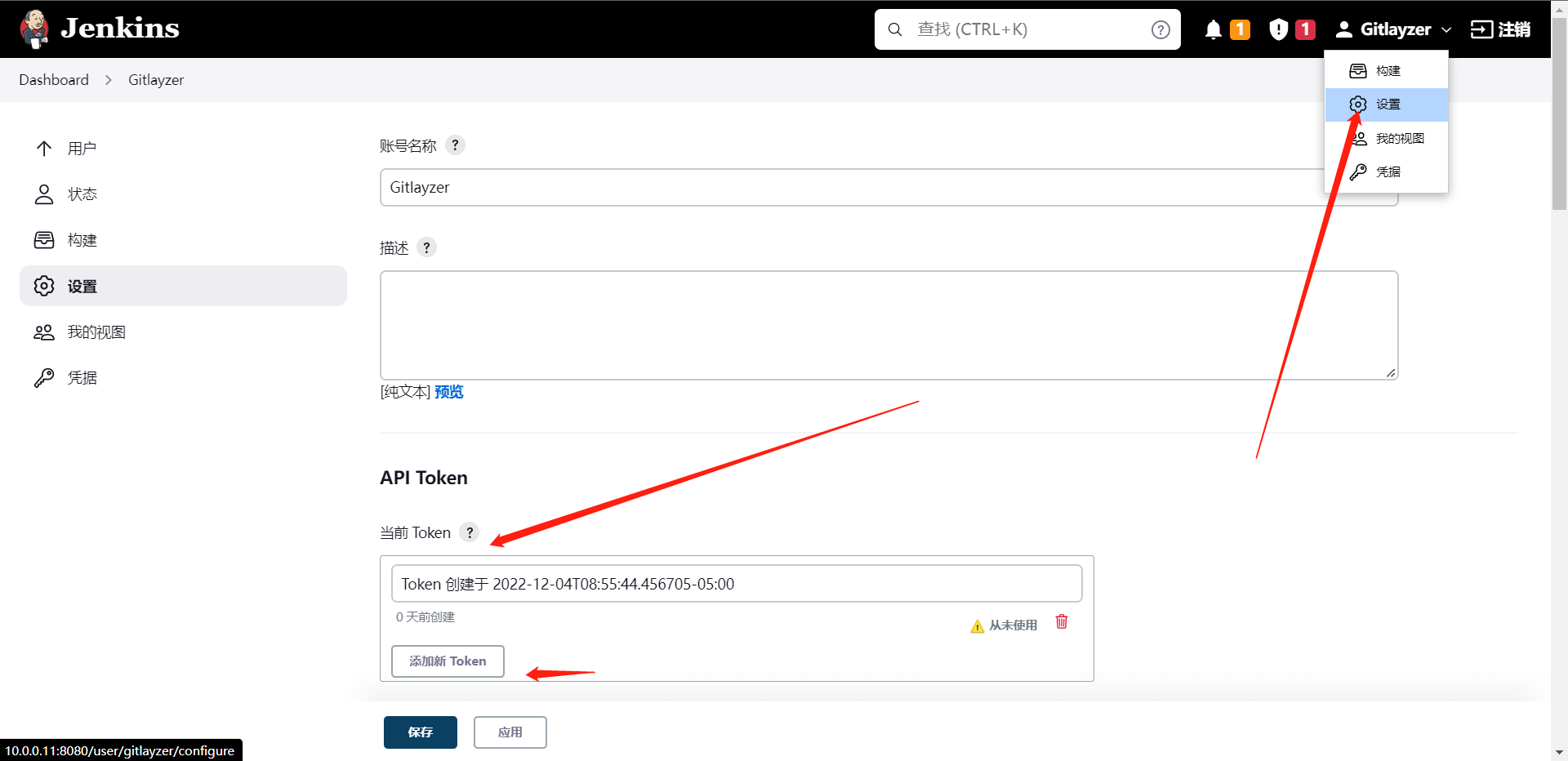

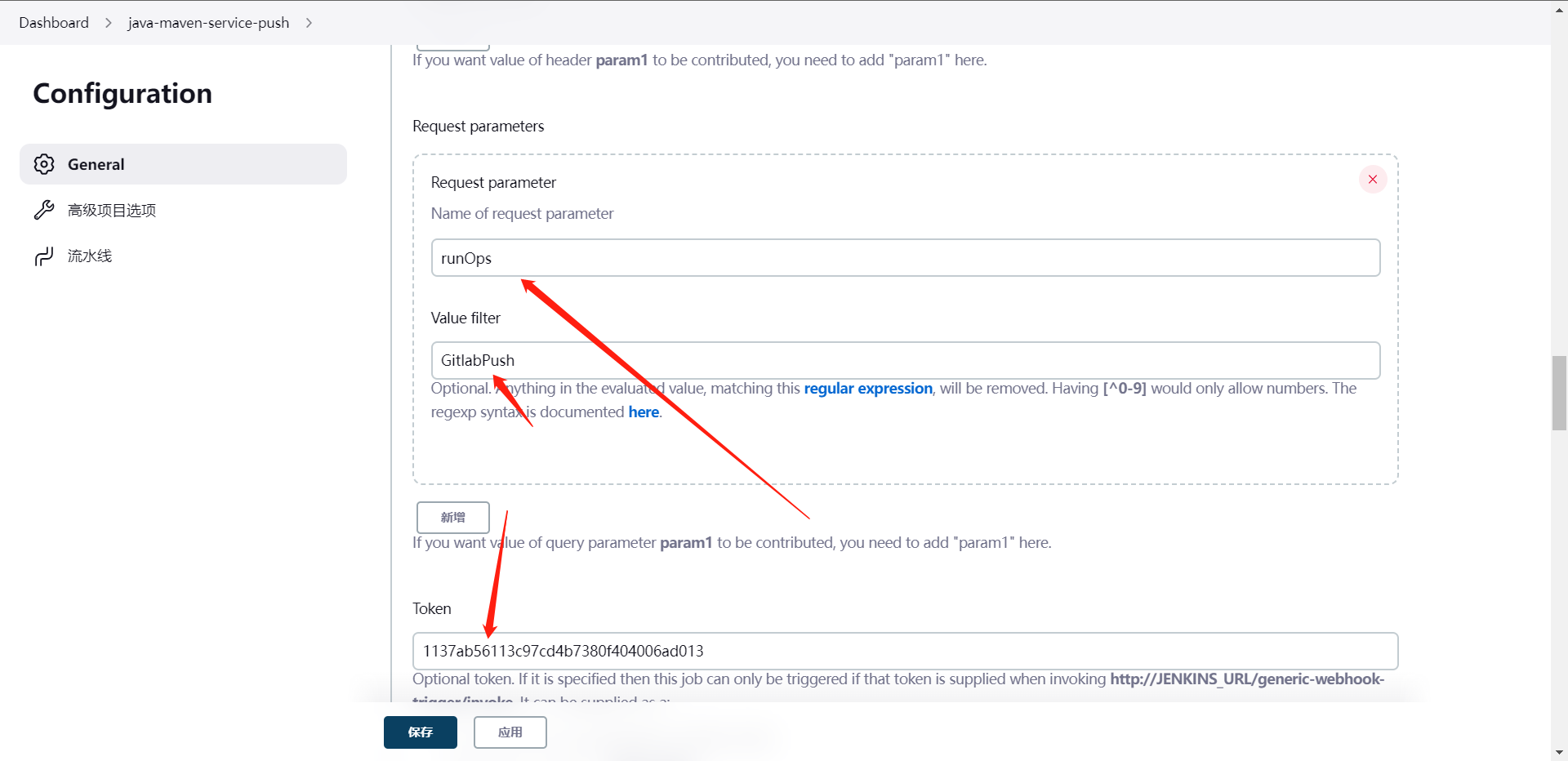

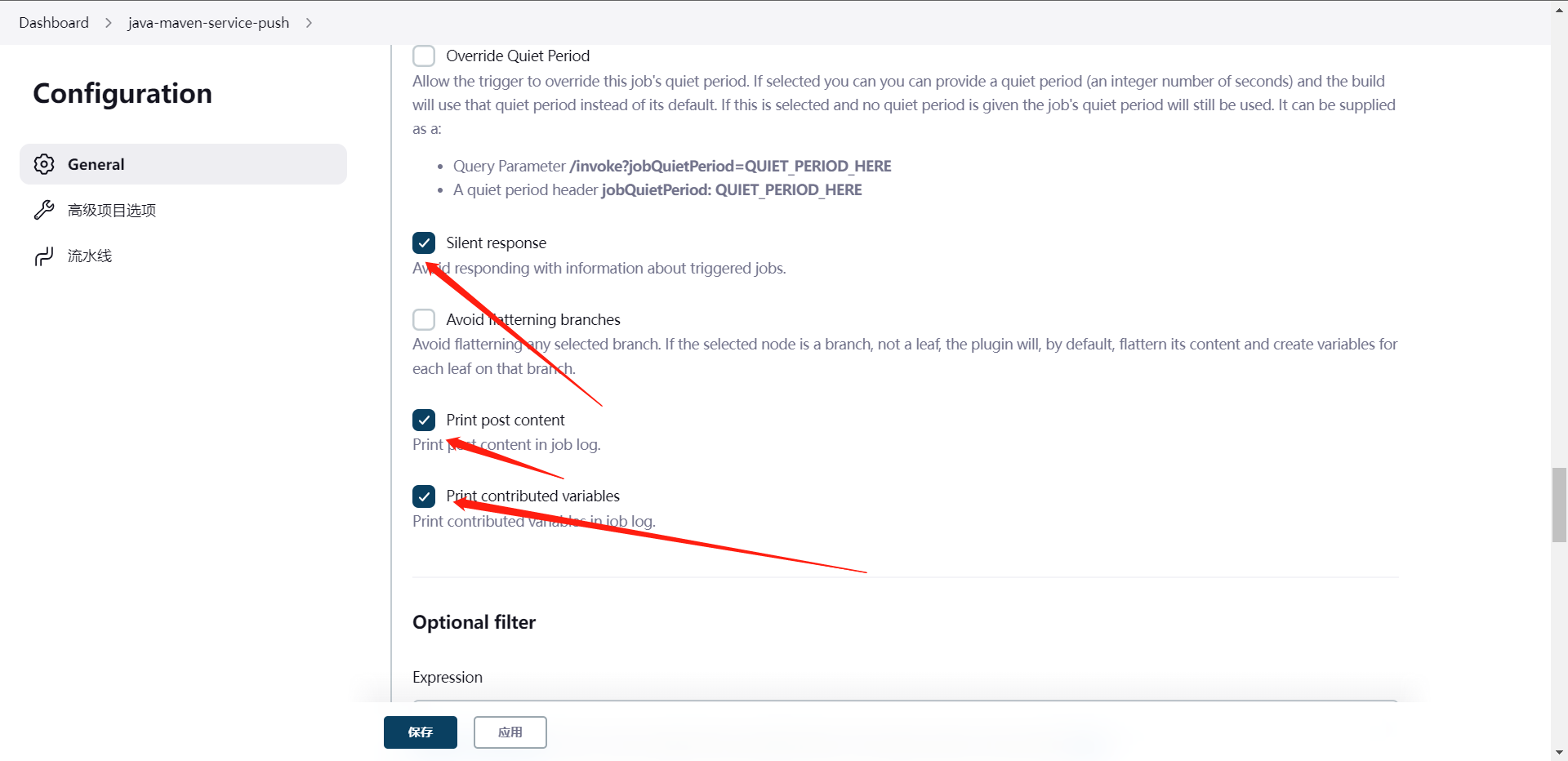

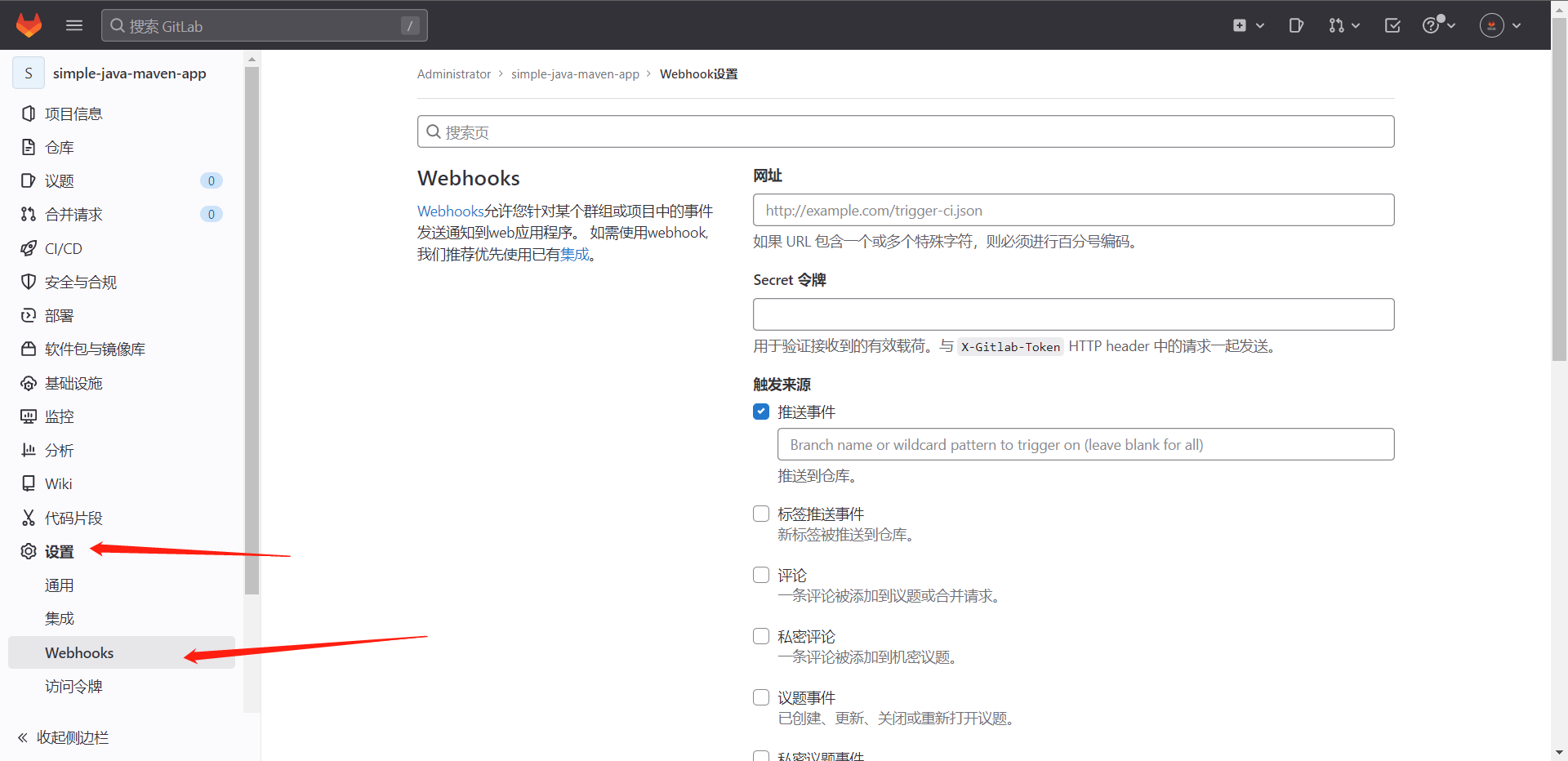

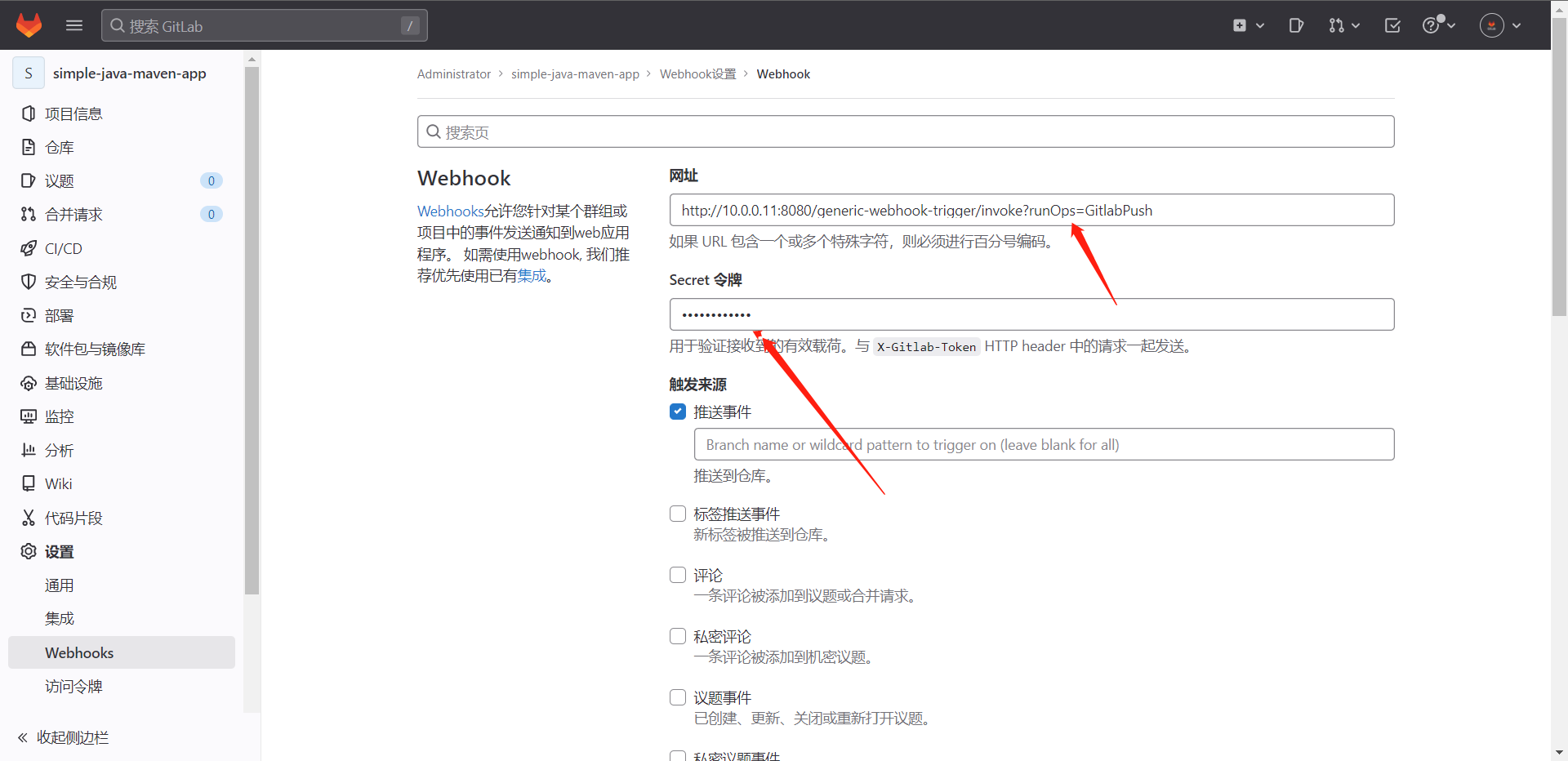

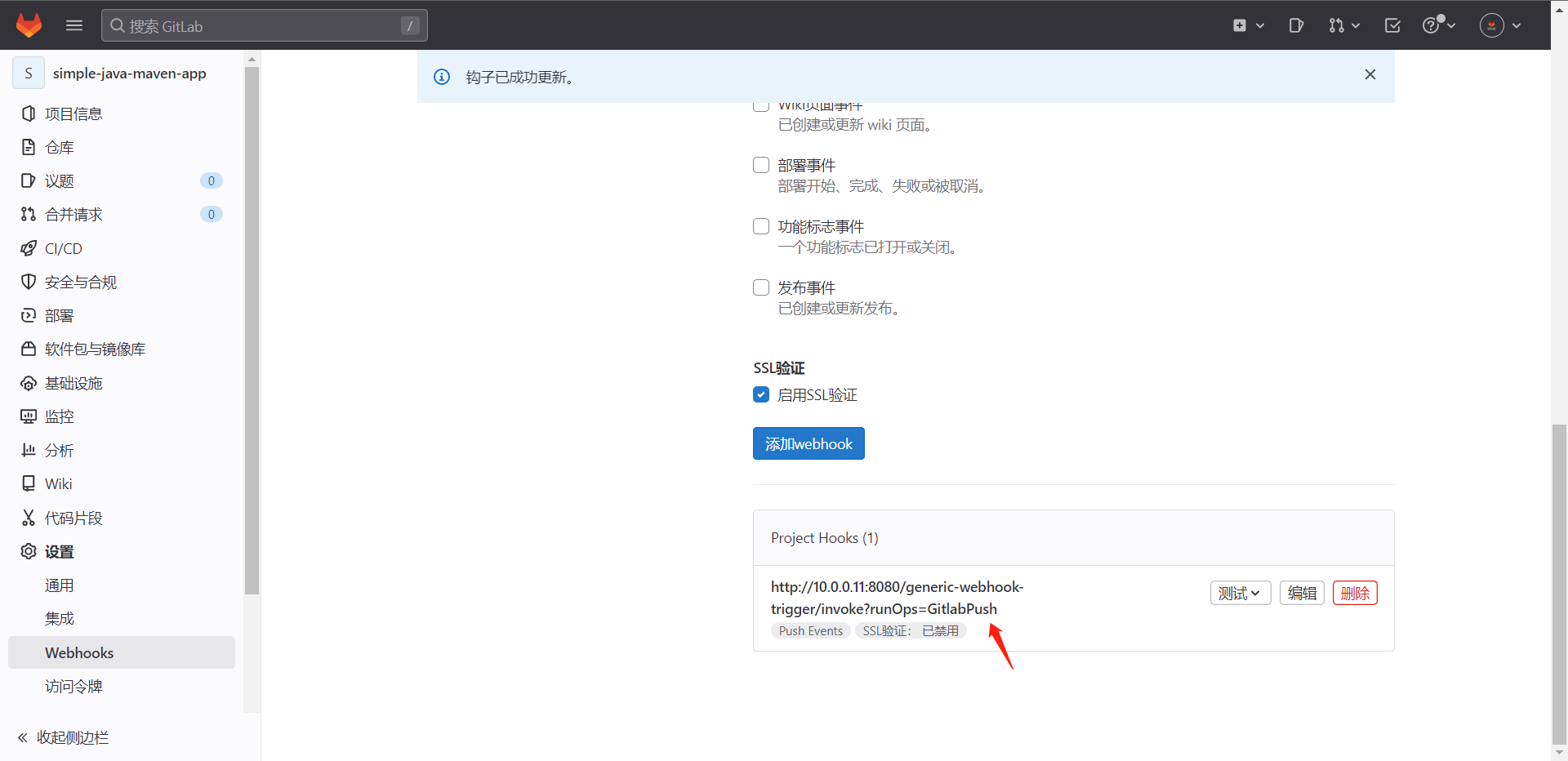

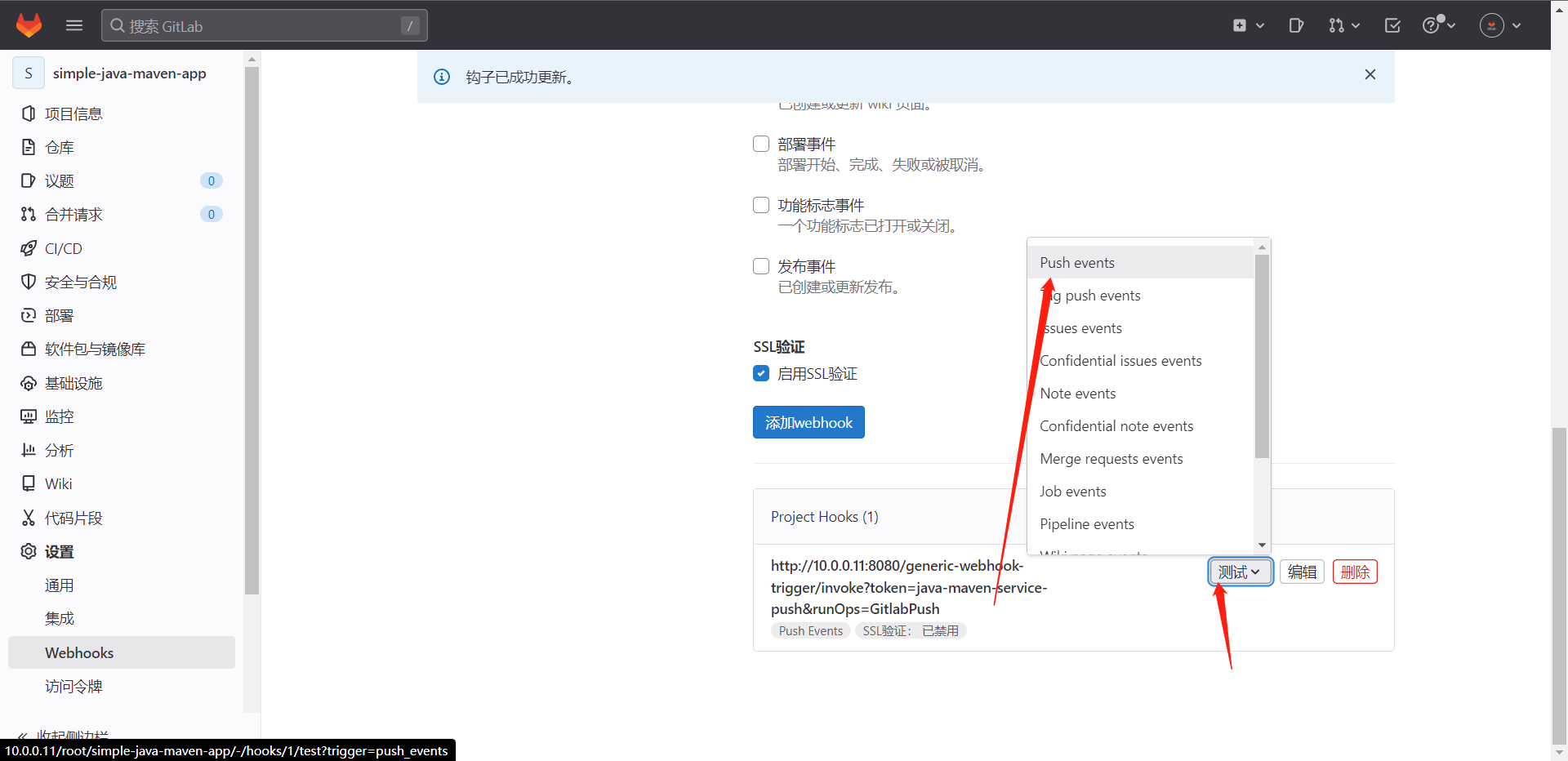

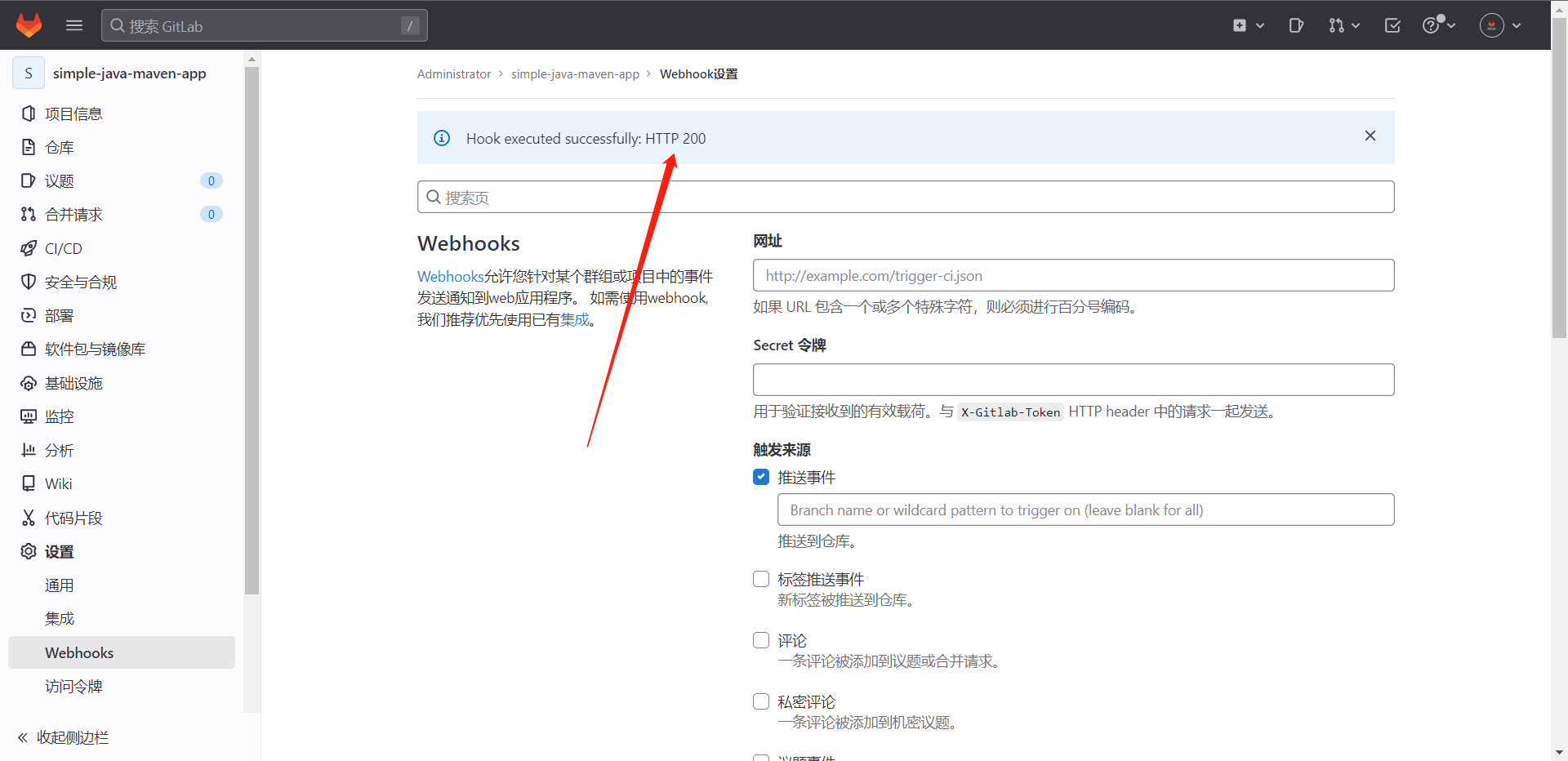

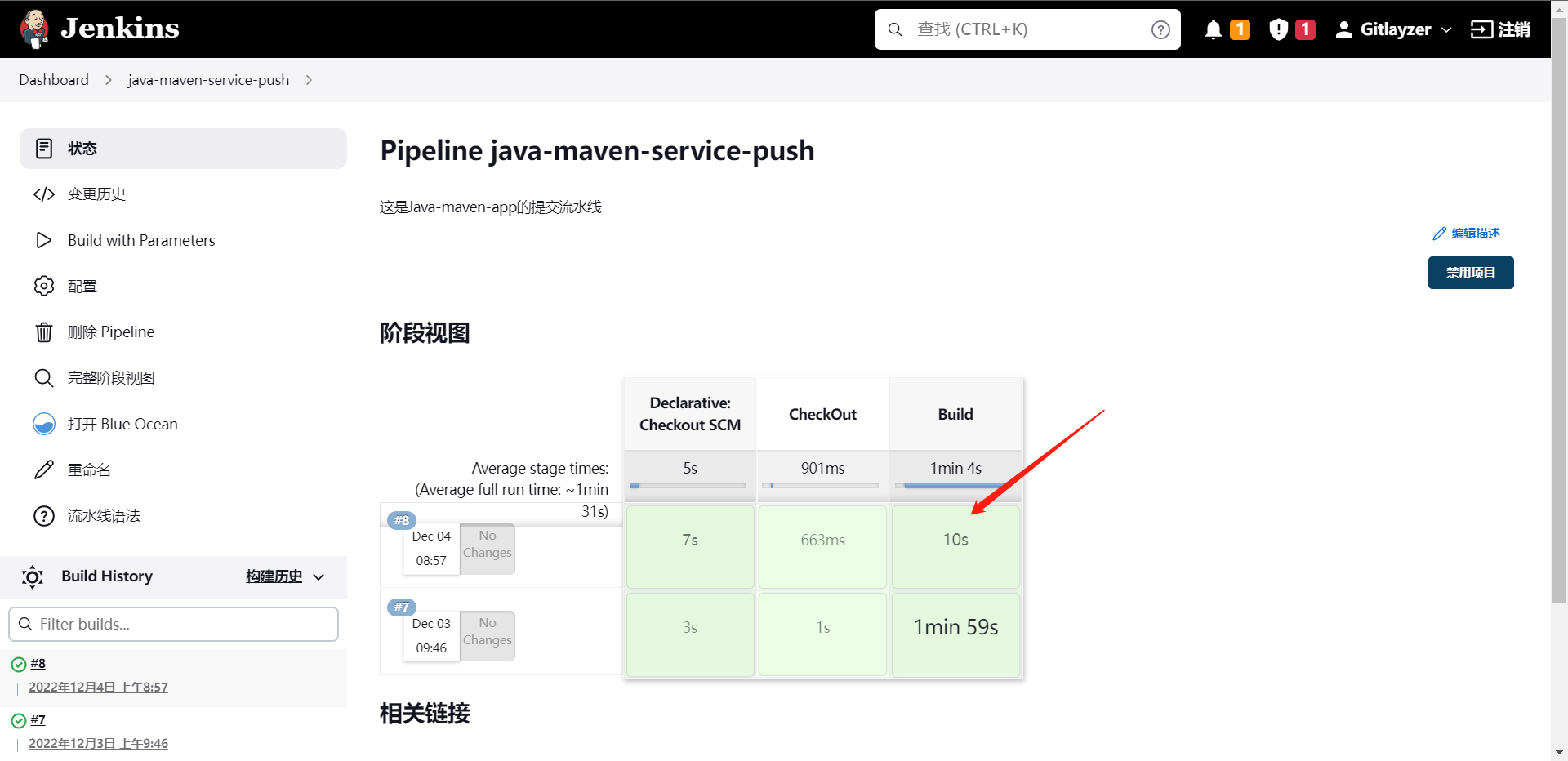

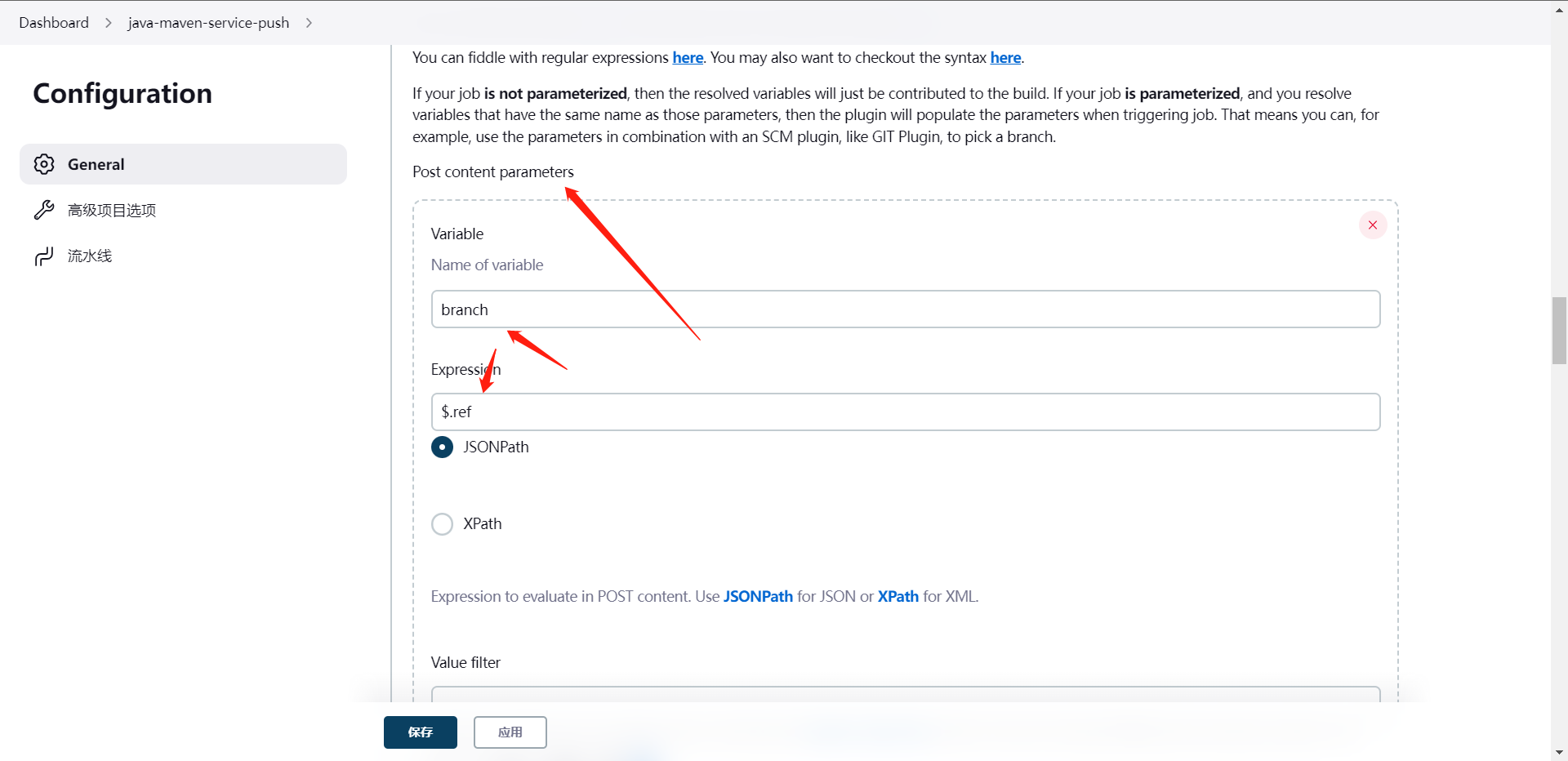

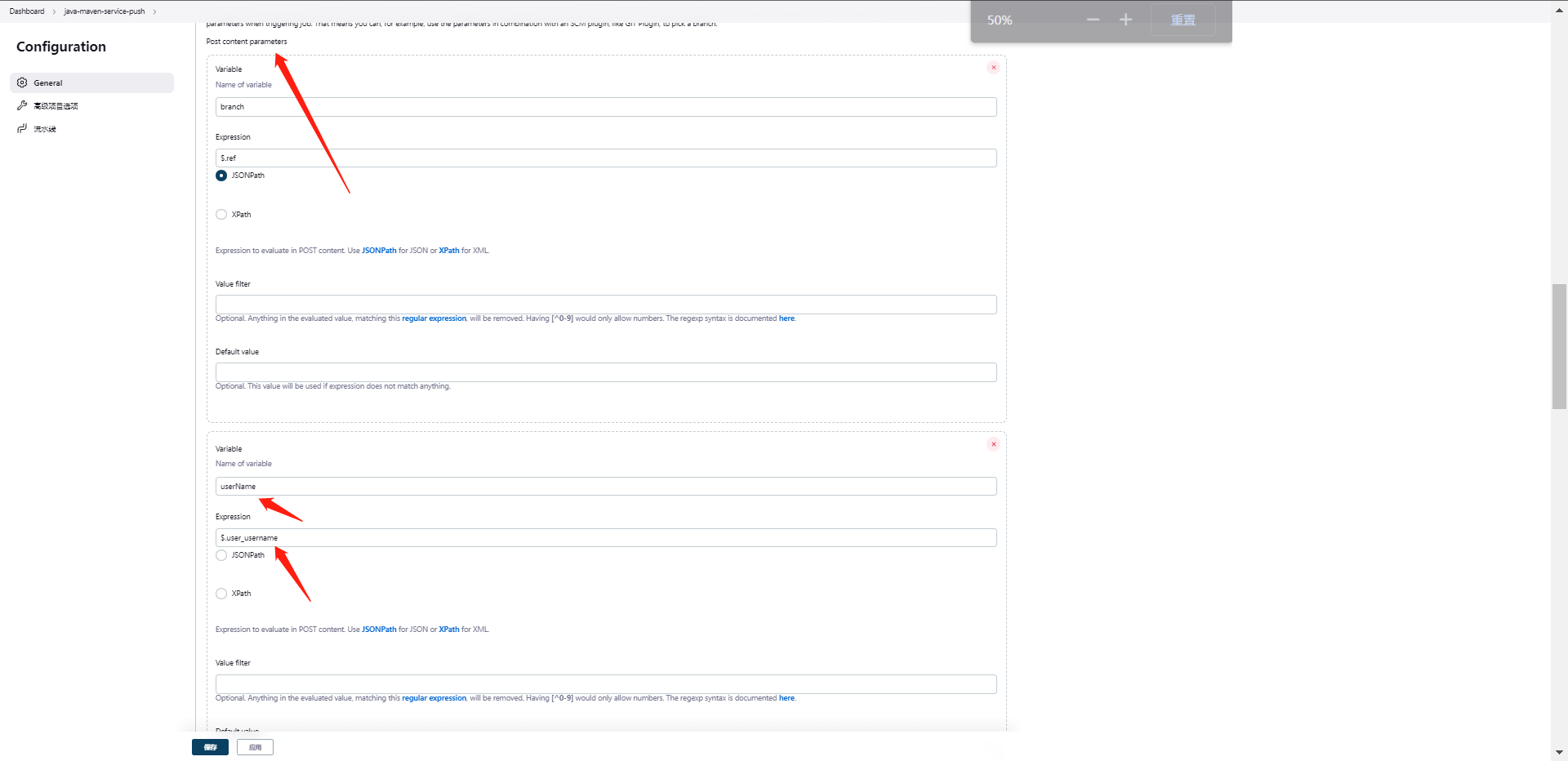

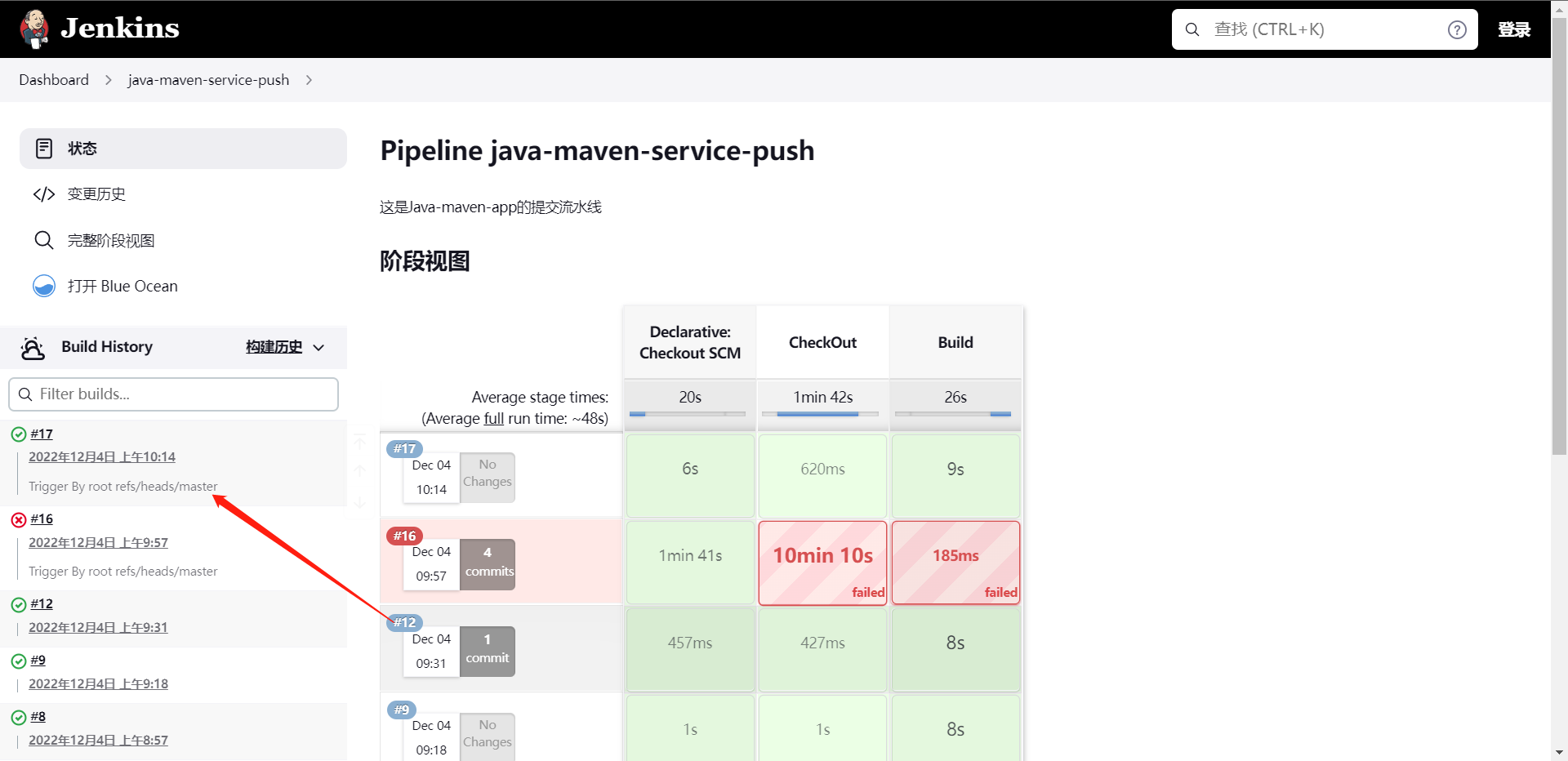

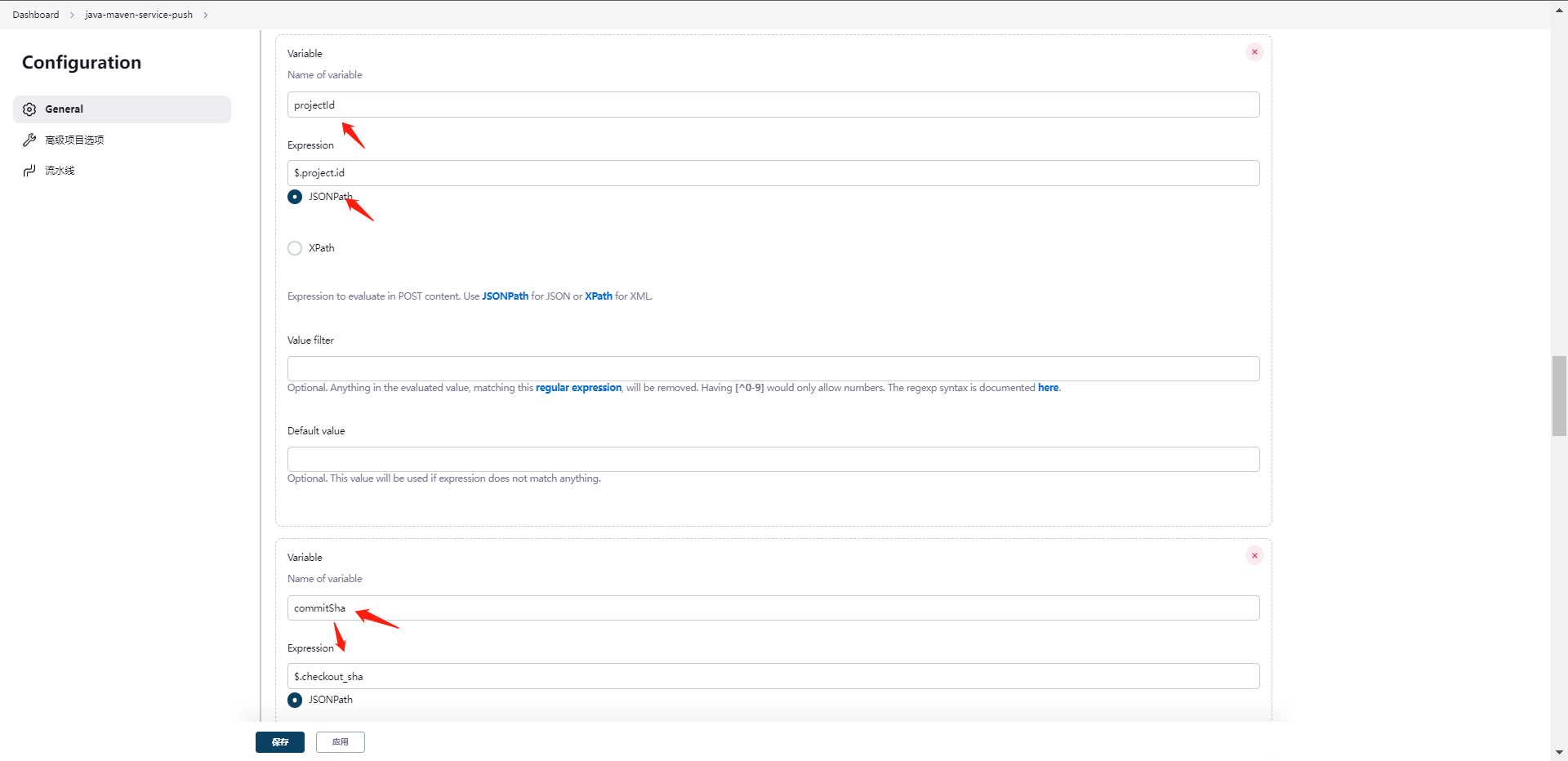

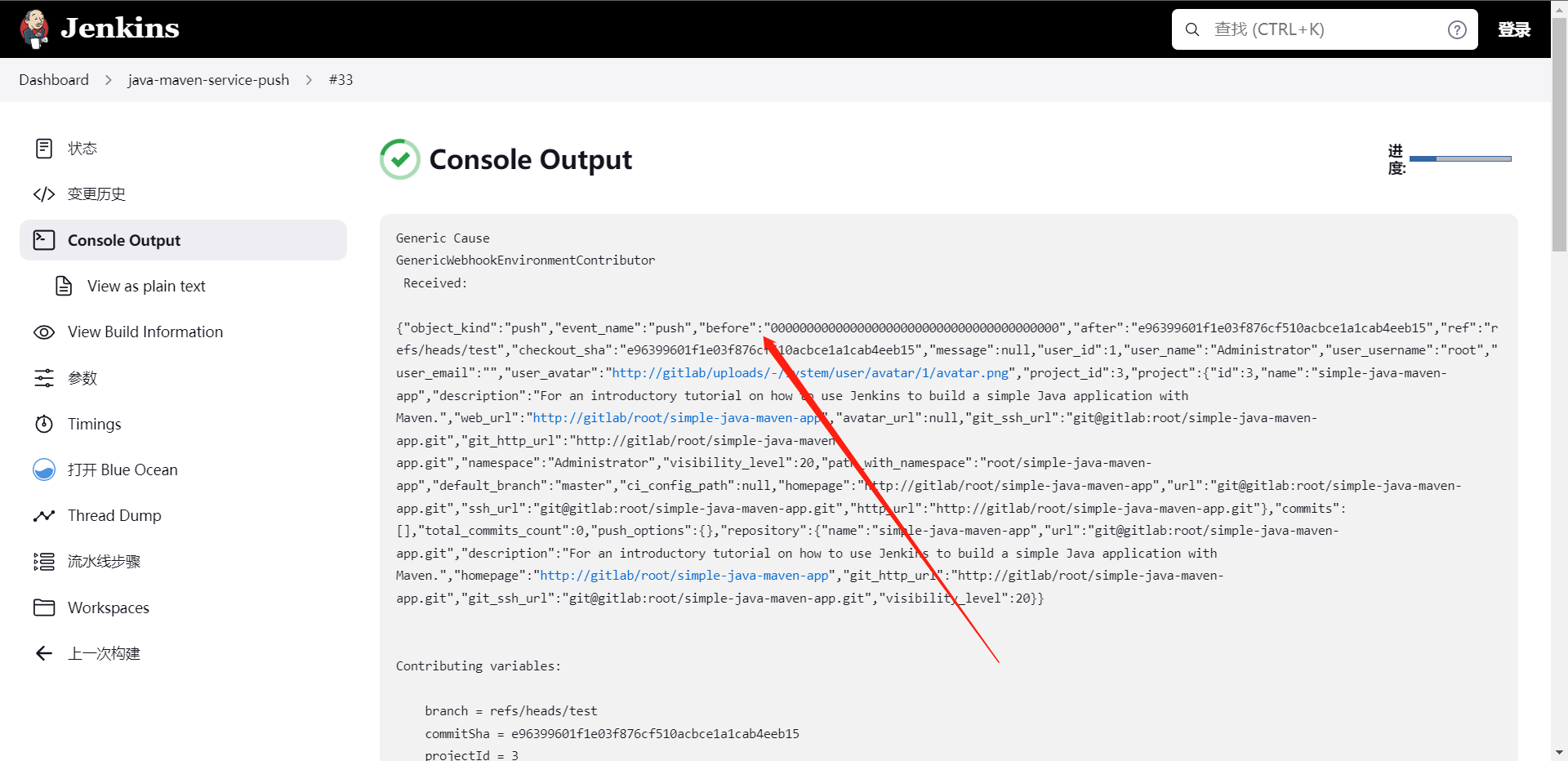

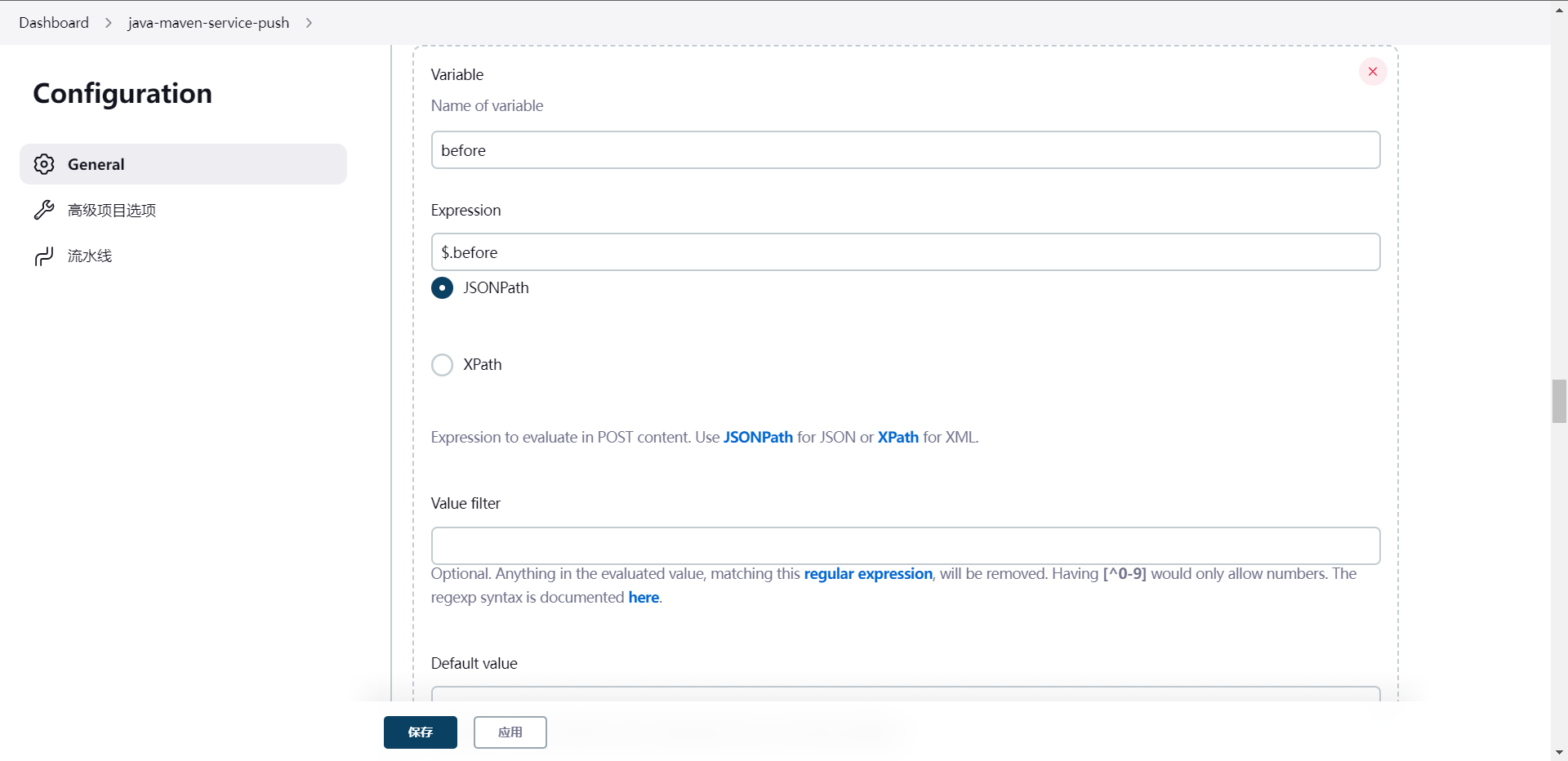

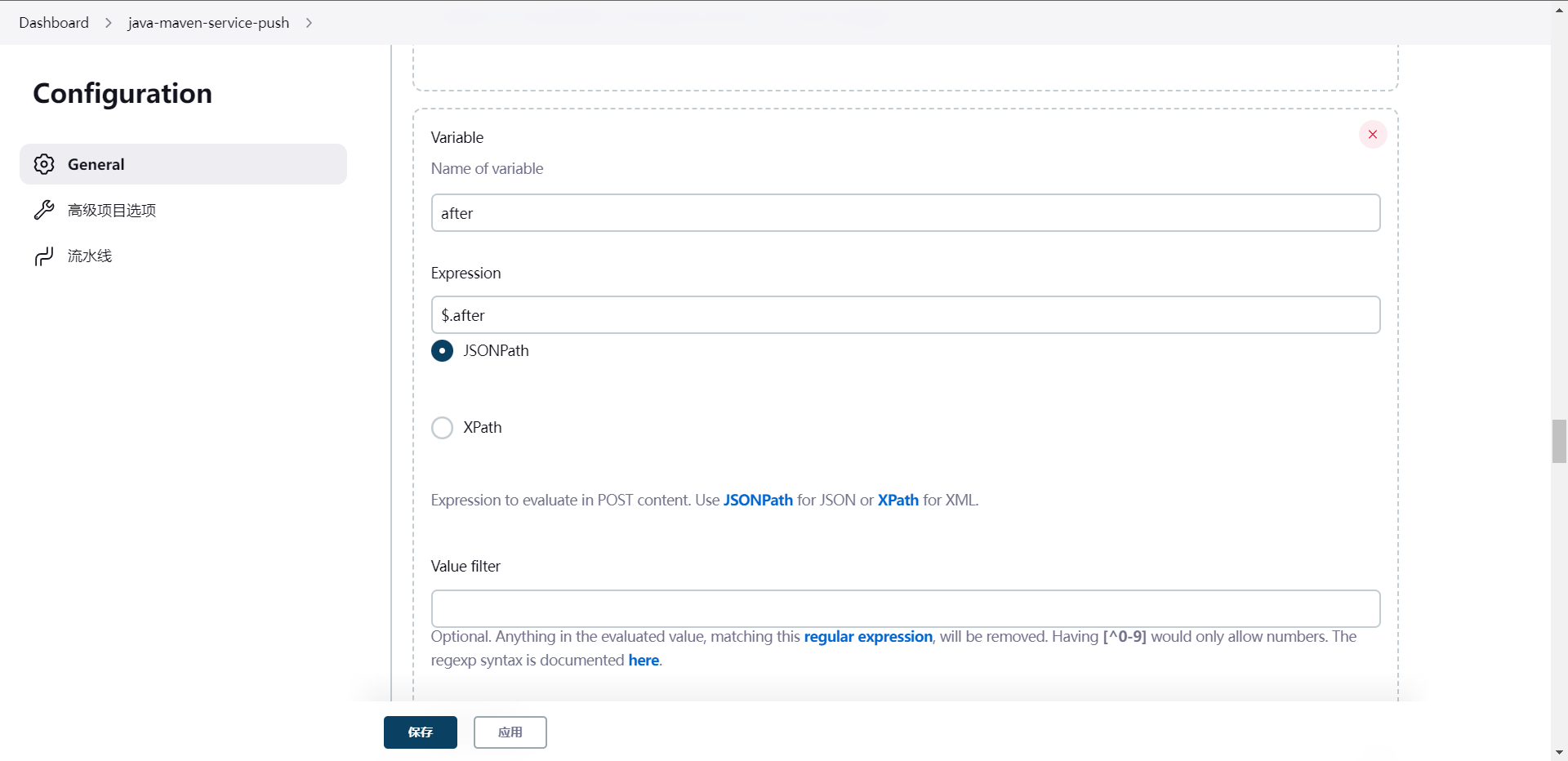

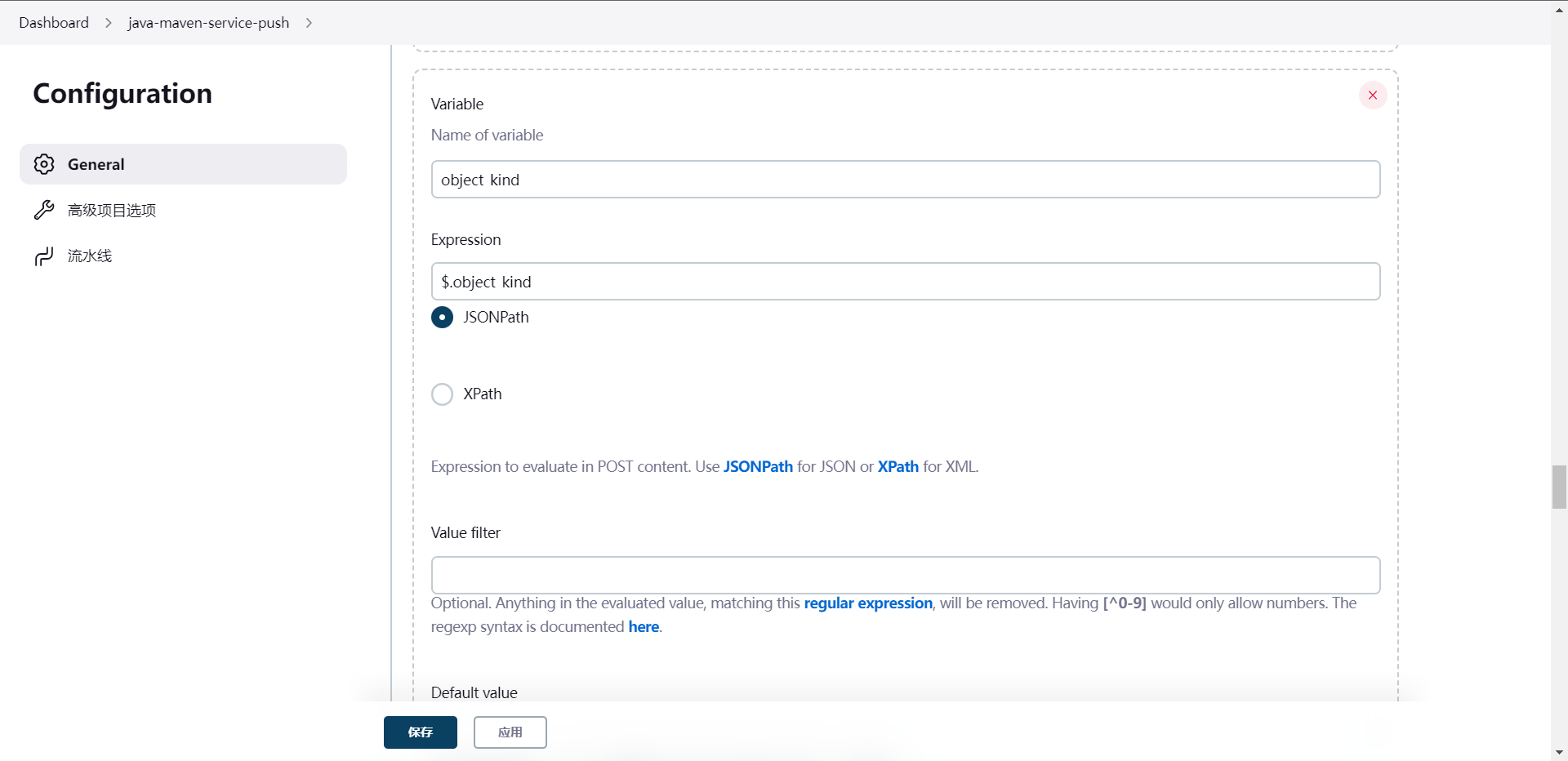

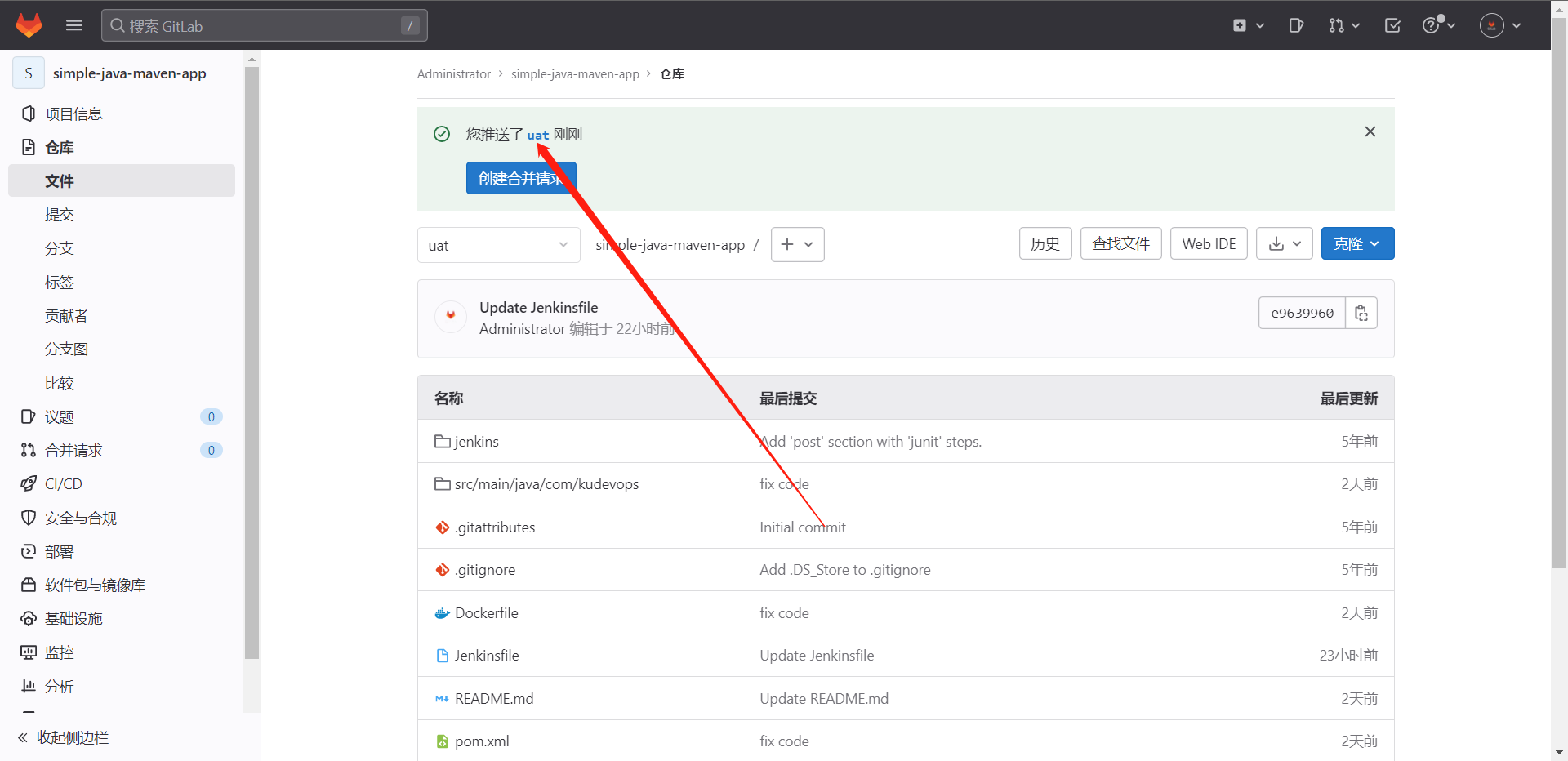

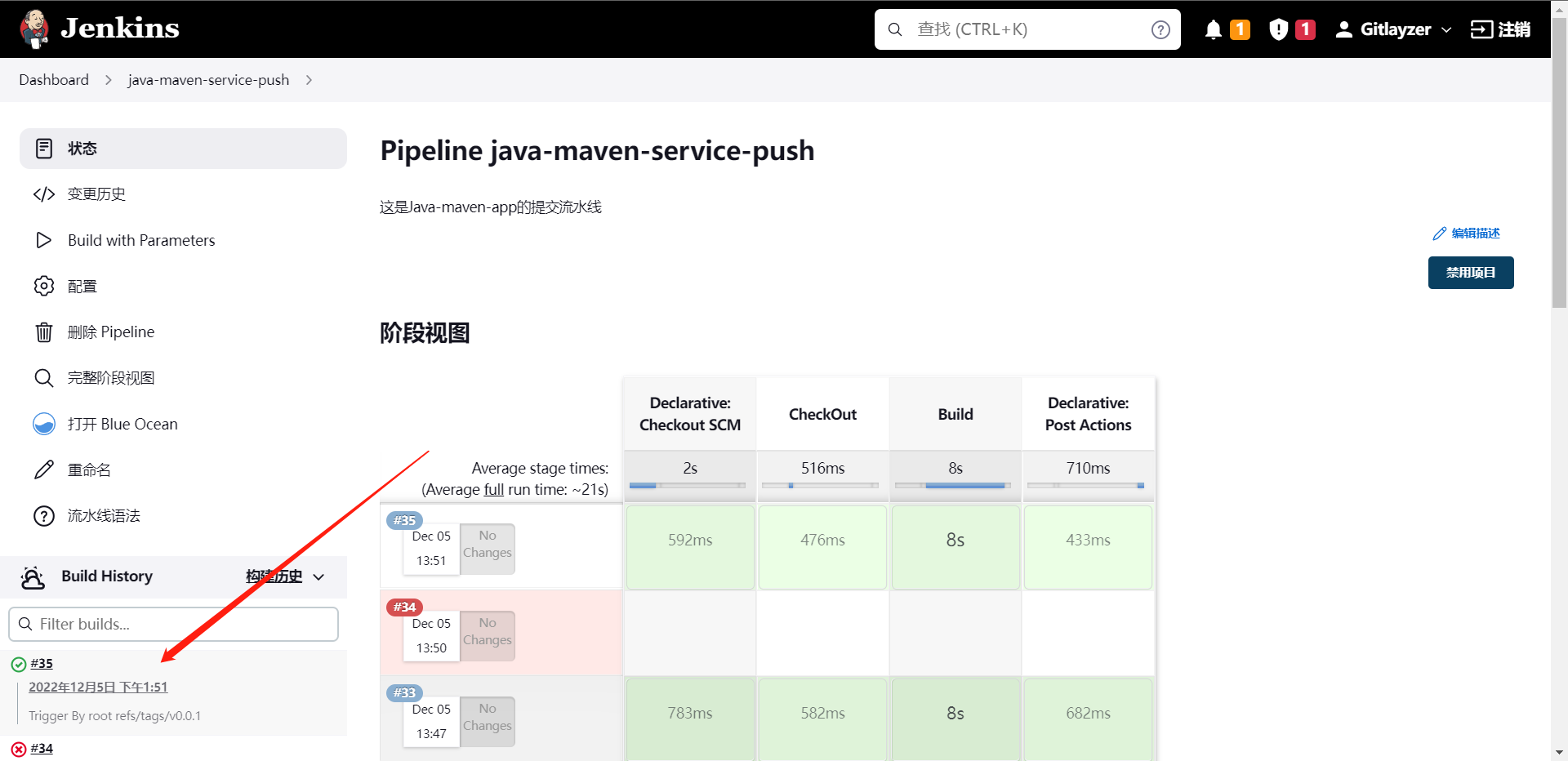

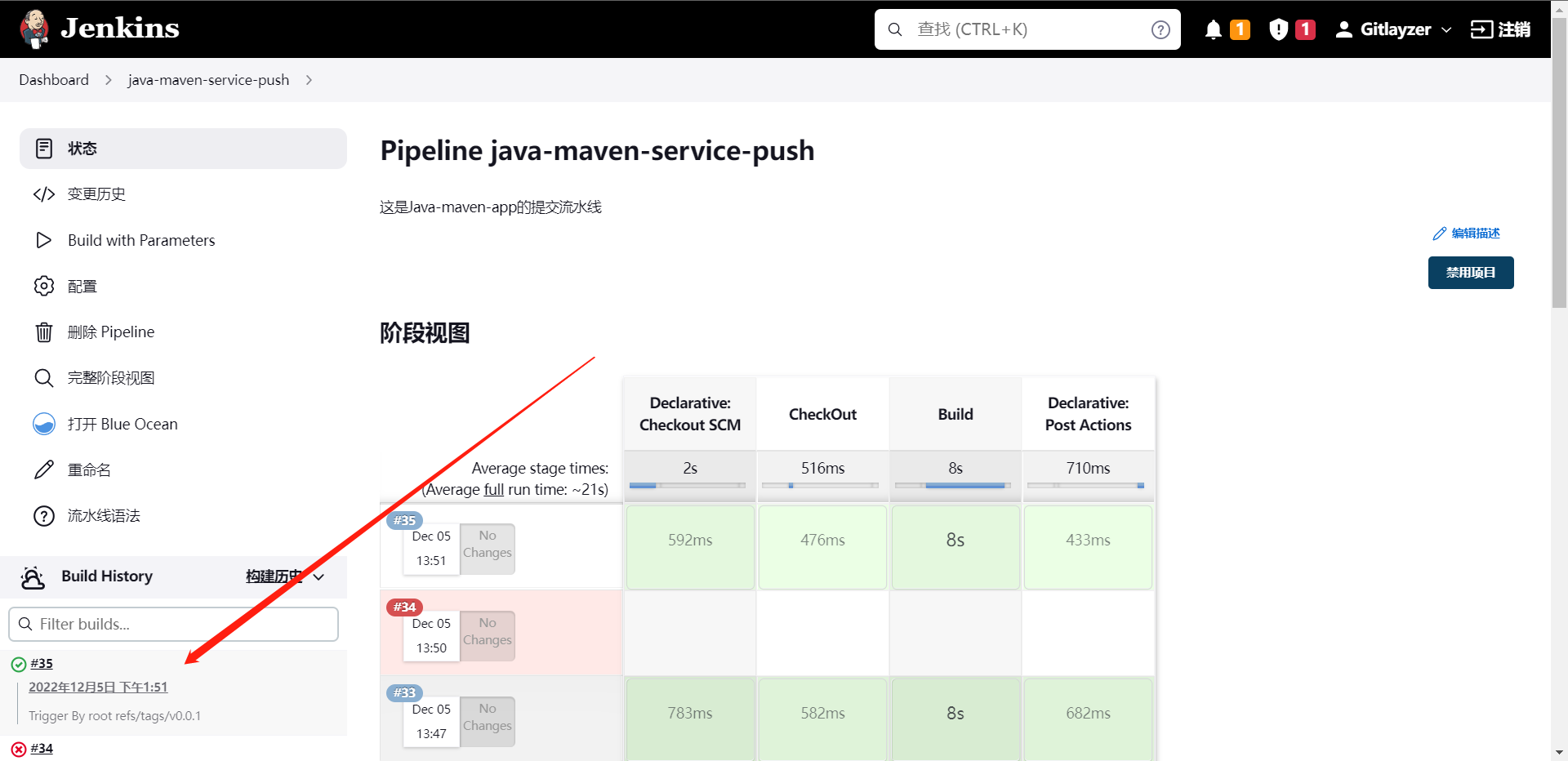

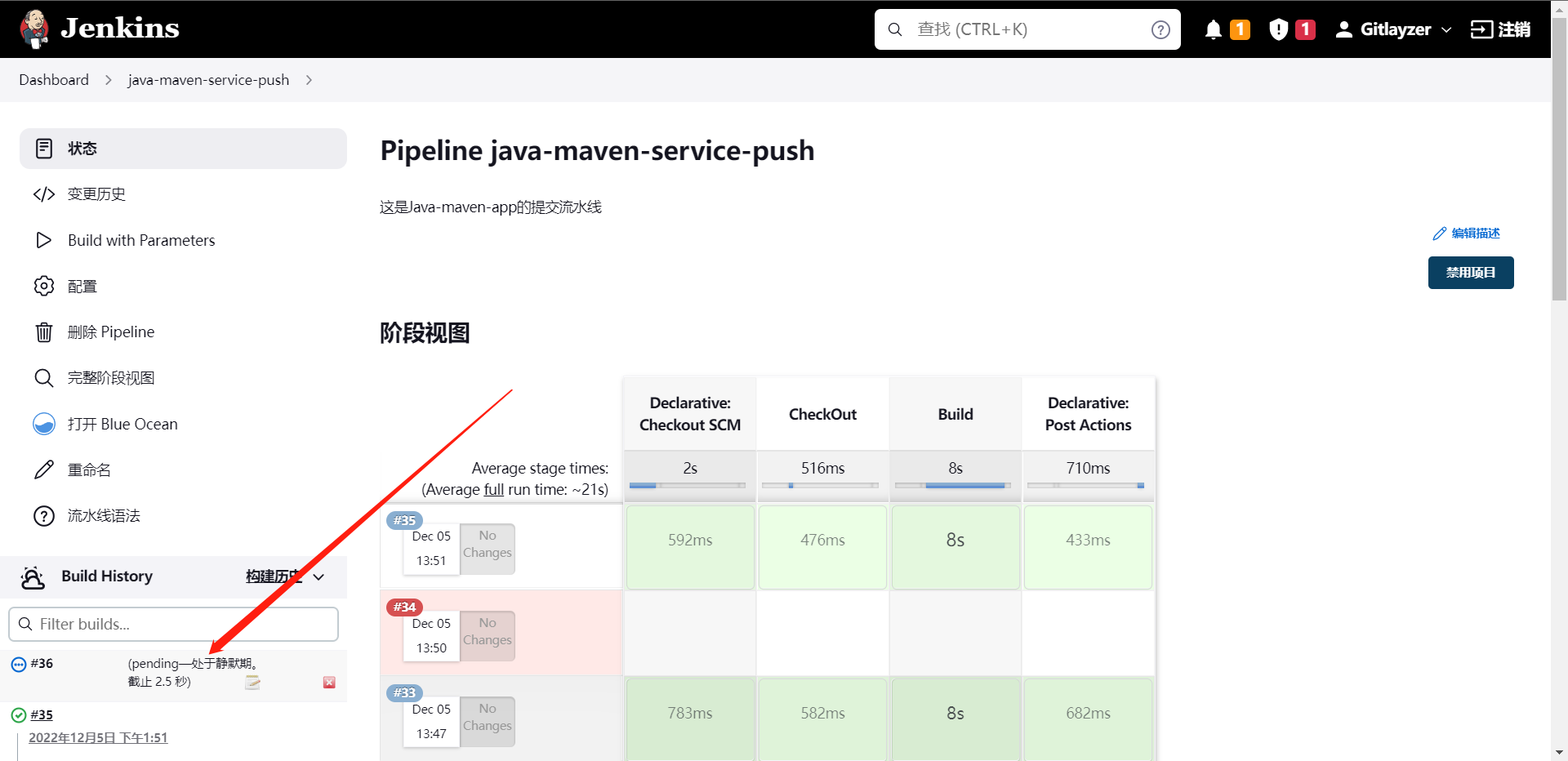

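1: Triggering the pipeline on GitLab push

The point of this is that after developers push code, GitLab triggers the Jenkins pipeline automatically, so nobody has to start it in Jenkins by hand.

There are two ways to do this:

1: The GitLab trigger under Build Triggers

2: The Generic Webhook Trigger

Then configure the webhook in GitLab: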

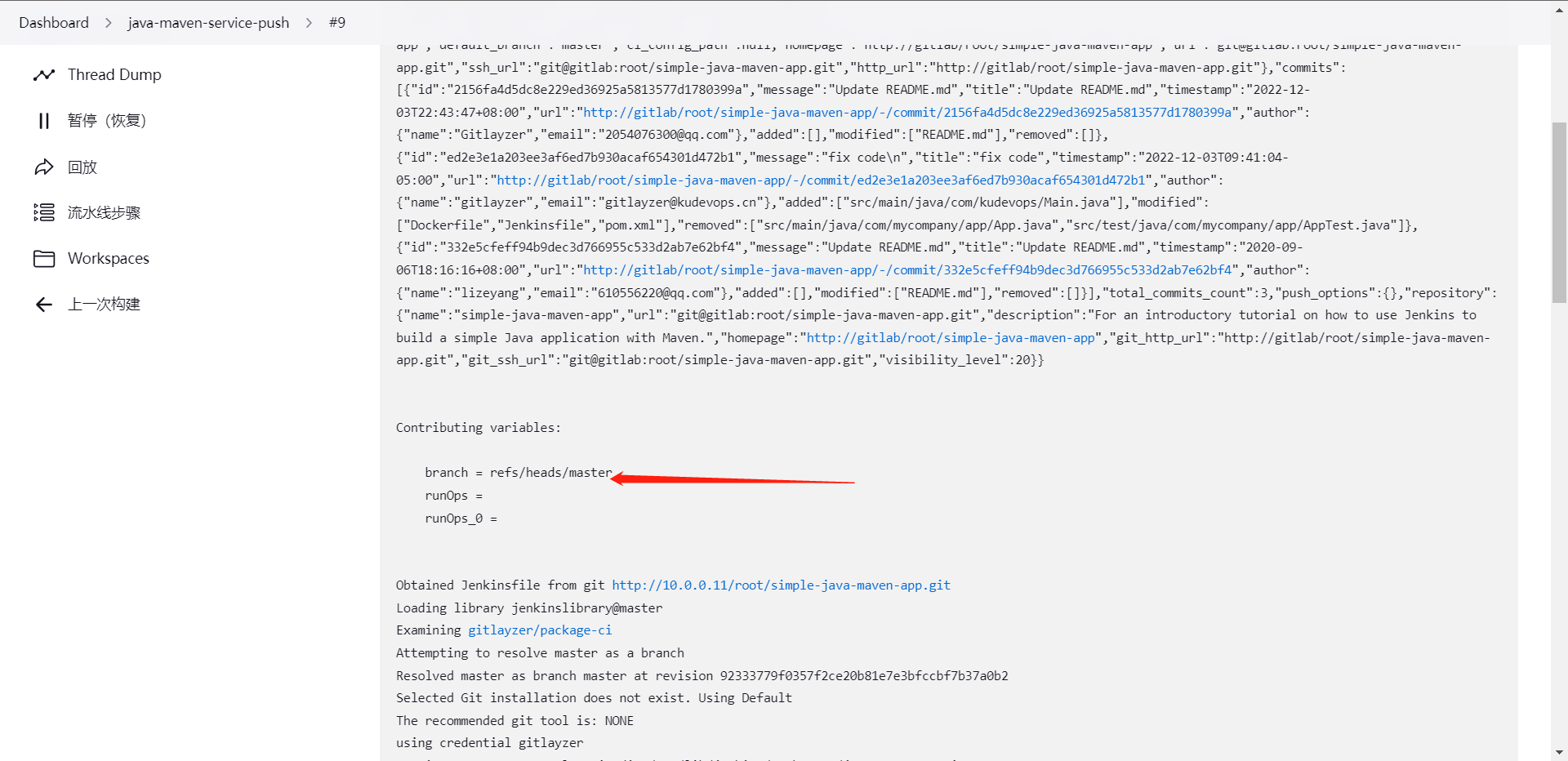

http://10.0.0.11:8080/generic-webhook-trigger/invoke?runOps=GitlabPush

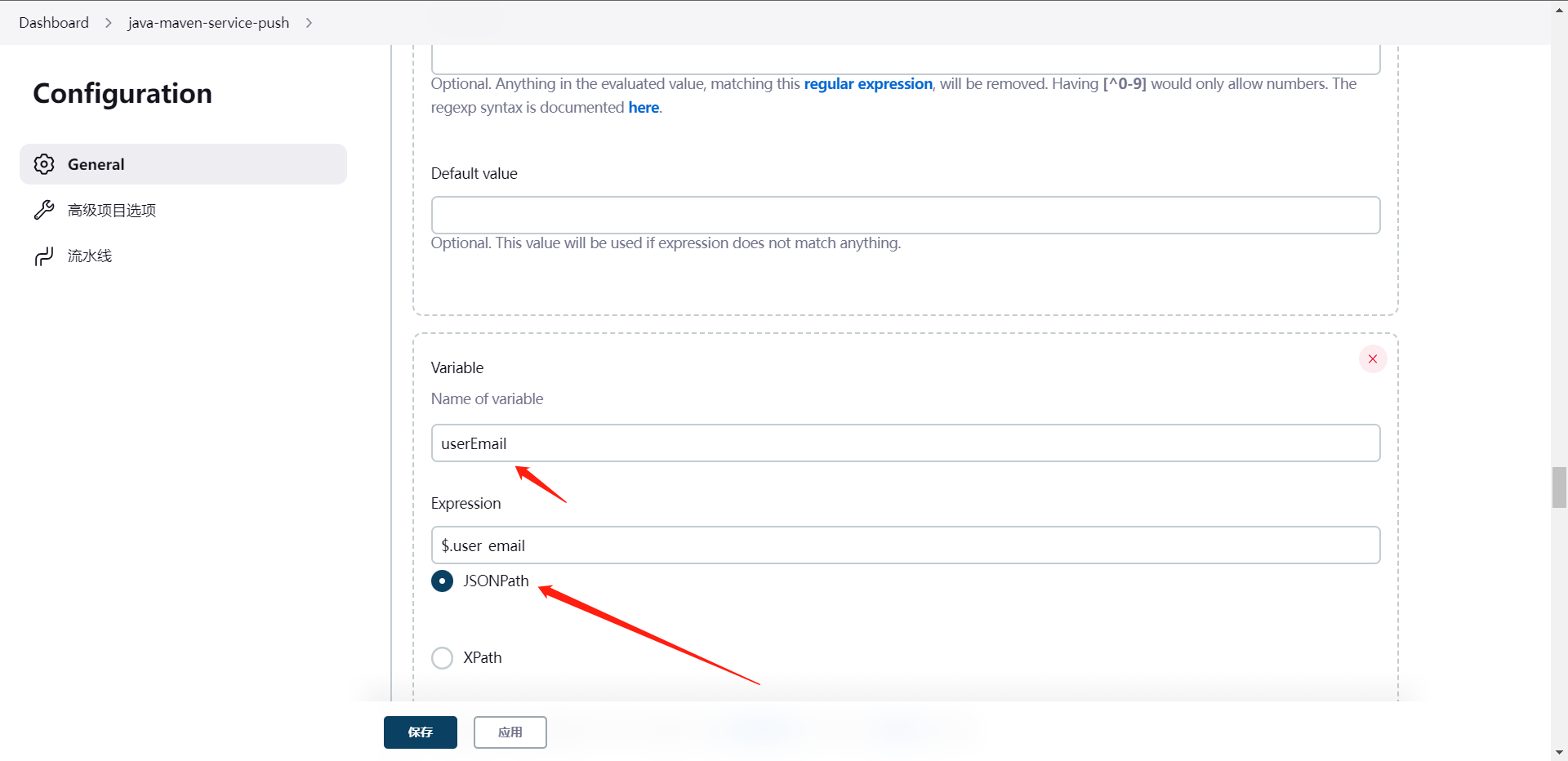

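Now a push to GitLab triggers the Jenkins pipeline. The next question is how to build exactly the branch that was pushed. This relies on parameters like `runOps`: every parameter configured in the trigger is injected into the pipeline as a global variable, so the pipeline can read it and act on it.

Trigger the pipeline again.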
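To test the trigger without pushing to GitLab, you can post a hand-made payload to the same endpoint. The sketch assumes the job's post content parameters map `$.ref` to `branch`, `$.user_name` to `userName`, `$.user_email` to `userEmail`, `$.project_id` to `projectId` and `$.checkout_sha` to `commitSha`. Those are the variable names the Jenkinsfiles below use; the actual mapping lives in the trigger configuration. All values in the usage line are placeholders. If a token is configured on the trigger, it has to be added to the query string too.

```shell
#!/bin/sh
# Sketch: simulate a GitLab push event against the Generic Webhook Trigger.
# The JSON field -> variable mapping is an assumption (see lead-in).
JENKINS_URL=${JENKINS_URL:-http://10.0.0.11:8080}

push_payload() {
  # $1 = full ref, $2 = user name, $3 = user email, $4 = project id, $5 = commit sha
  printf '{"ref":"%s","user_name":"%s","user_email":"%s","project_id":%s,"checkout_sha":"%s"}' \
    "$1" "$2" "$3" "$4" "$5"
}

trigger_push() {
  payload=$(push_payload "$@")
  set -- curl -s -X POST -H "Content-Type: application/json" -d "$payload" \
    "$JENKINS_URL/generic-webhook-trigger/invoke?runOps=GitlabPush"
  if [ "${DRY_RUN:-1}" = 1 ]; then echo "$*"; else "$@"; fi
}

trigger_push refs/heads/master root root@example.com 1 0123456789abcdef0123456789abcdef01234567
```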

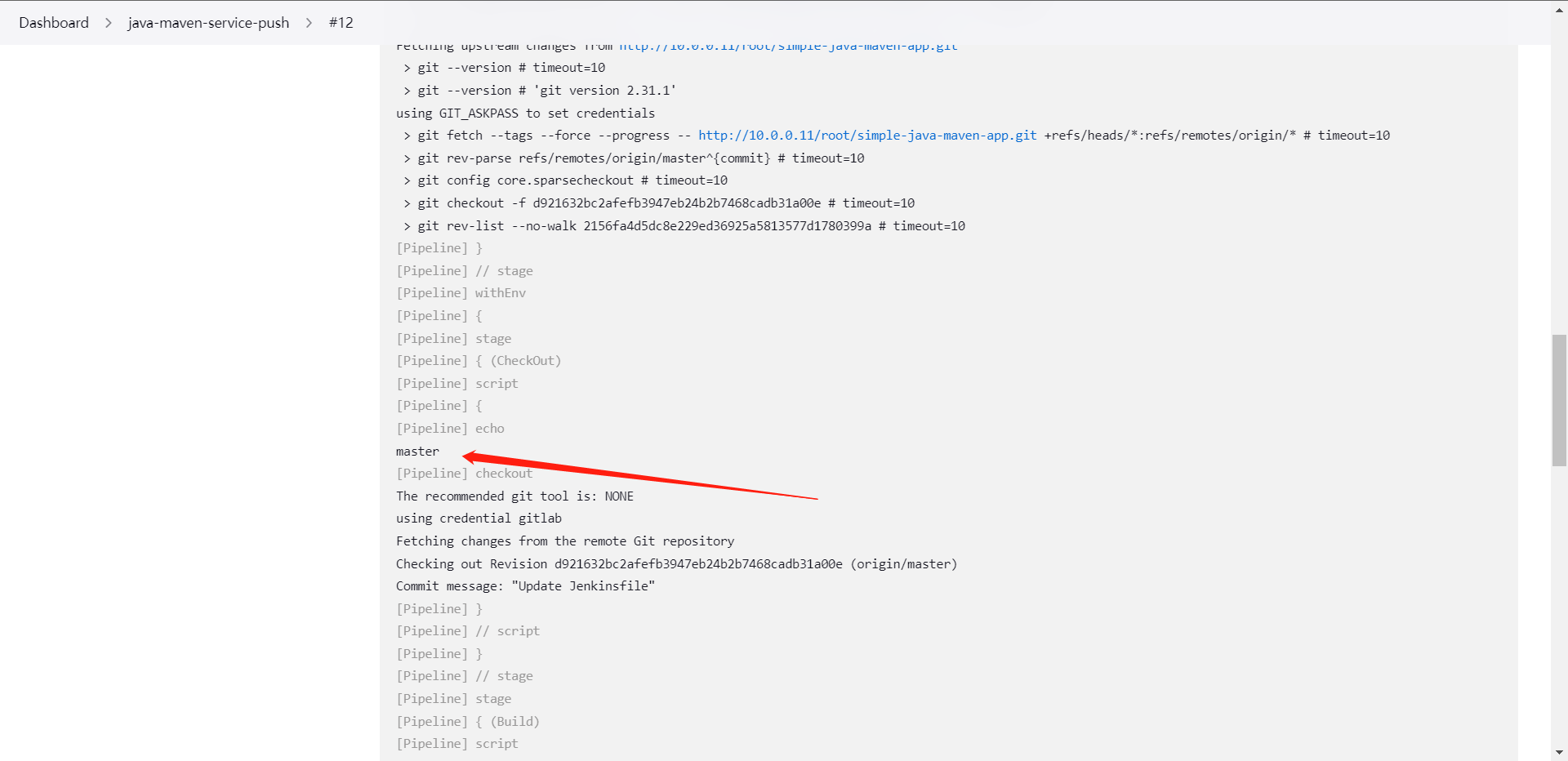

As you can see, we now get the branch, so next we adjust the Jenkinsfile:

@Library("jenkinslibrary@master") _

def build = new org.library.build()

def deploy = new org.library.deploy()

String buildShell = "${env.buildShell}"

String buildType = "${env.buildType}"

String gitlab_url = "${env.gitlab_url}"

String branchName = "${env.branchName}"

pipeline {

agent {

node {

label "build-1"

}

}

stages{

stage("CheckOut") {

steps {

script{

if ("${runOps}" == "GitlabPush") {

branchName = branch - "refs/heads/"

}

println("${branchName}")

checkout([$class: 'GitSCM', branches: [[name: "${branchName}"]], extensions: [], userRemoteConfigs: [[credentialsId: 'gitlab', url: "${gitlab_url}"]]])

}

}

}

stage("Build") {

steps {

script{

build.Build(buildType, buildShell)

}

}

}

}

}

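One of the earlier screenshots is wrong: when configuring the `runOps` parameter, do not fill in `GitlabPush` there, otherwise the value passed in ends up empty.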

The build output shows that branchName is printed as master.
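`branch - "refs/heads/"` uses Groovy's string minus, which removes the first occurrence of the right-hand string. In shell, the counterpart is prefix removal with `${var#prefix}`. For GitLab branch refs, which always start with that prefix, both give the same result. This sketch also shows that a tag ref passes through unchanged, because it does not carry the prefix.

```shell
#!/bin/sh
# Sketch: strip refs/heads/ from a GitLab push ref,
# the shell counterpart of Groovy's `branch - "refs/heads/"`.
branch_name() {
  printf '%s\n' "${1#refs/heads/}"
}

branch_name refs/heads/master         # master
branch_name refs/heads/feature/login  # feature/login
branch_name refs/tags/v1.0            # refs/tags/v1.0 (no refs/heads/ prefix)
```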

Next, refine the pipeline further, for example by adding a build description.

Variable: currentBuild.description

Requirement: show who pushed and which branch.

@Library("jenkinslibrary@master") _

def build = new org.library.build()

def deploy = new org.library.deploy()

String buildShell = "${env.buildShell}"

String buildType = "${env.buildType}"

String gitlab_url = "${env.gitlab_url}"

String branchName = "${env.branchName}"

if ("${runOps}" == "GitlabPush") {

branchName = branch - "refs/heads/"

currentBuild.description = "Trigger By ${userName} ${branchName}"

}

pipeline {

agent {

node {

label "build-1"

}

}

stages{

stage("CheckOut") {

steps {

script{

println("${branchName}")

checkout([$class: 'GitSCM', branches: [[name: "${branchName}"]], extensions: [], userRemoteConfigs: [[credentialsId: 'gitlab', url: "${gitlab_url}"]]])

}

}

}

stage("Build") {

steps {

script{

build.Build(buildType, buildShell)

}

}

}

}

}

As you can see, the build now carries this description.

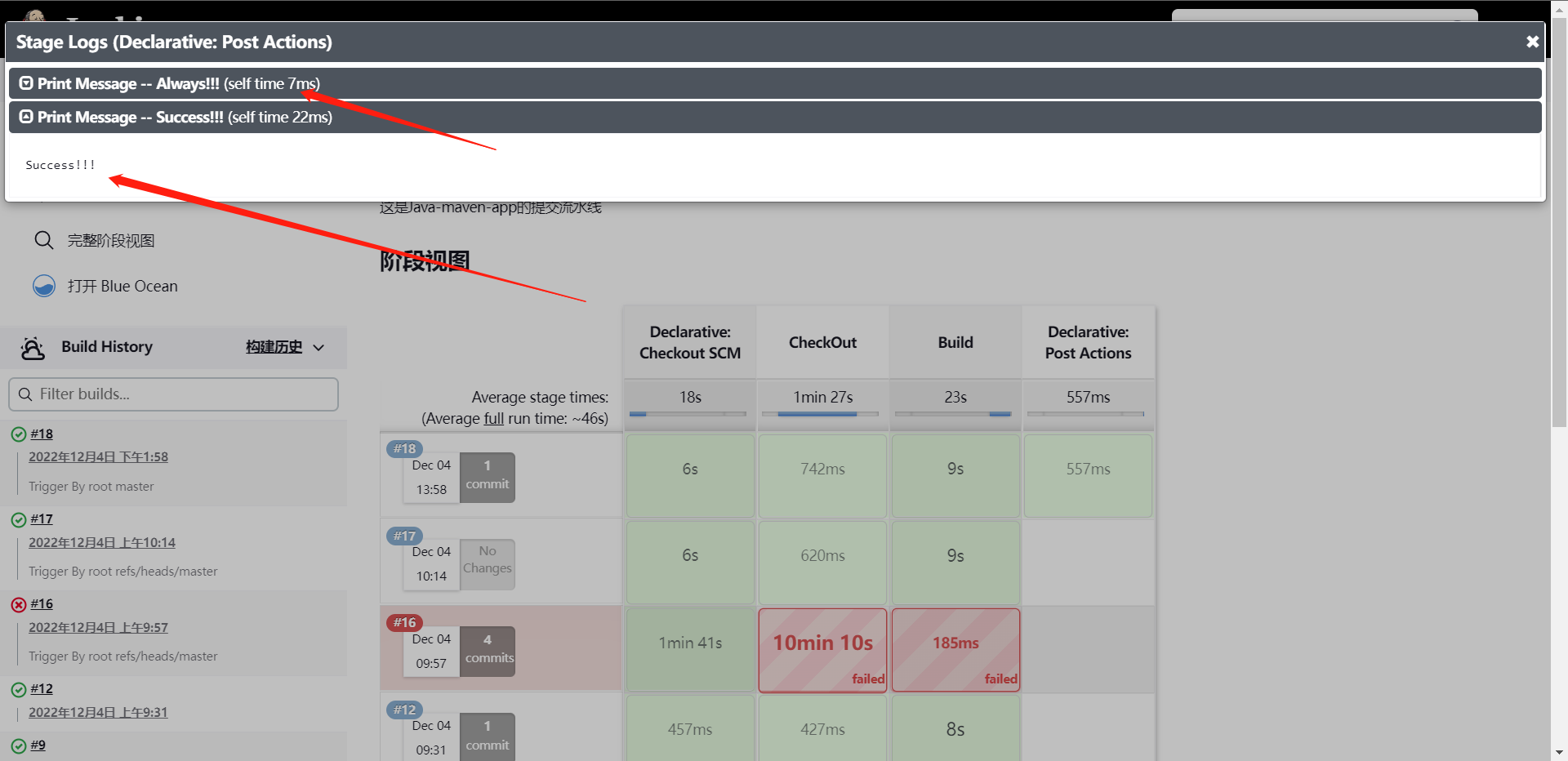

2: Updating the commit status

GitLab commit status API:

1: States: ["pending","running","success","failed","canceled"]

2: API: projects/${projectId}/statuses/${commitSha}?state=

For this we usually use the `post` section of the pipeline.

@Library("jenkinslibrary@master") _

def build = new org.library.build()

def deploy = new org.library.deploy()

String buildShell = "${env.buildShell}"

String buildType = "${env.buildType}"

String gitlab_url = "${env.gitlab_url}"

String branchName = "${env.branchName}"

if ("${runOps}" == "GitlabPush") {

branchName = branch - "refs/heads/"

currentBuild.description = "Trigger By ${userName} ${branchName}"

}

pipeline {

agent {

node {

label "build-1"

}

}

stages{

stage("CheckOut") {

steps {

script{

println("${branchName}")

checkout([$class: 'GitSCM', branches: [[name: "${branchName}"]], extensions: [], userRemoteConfigs: [[credentialsId: 'gitlab', url: "${gitlab_url}"]]])

}

}

}

stage("Build") {

steps {

script{

build.Build(buildType, buildShell)

}

}

}

}

post {

always {

script {

println("Always!!!")

}

}

success {

script {

println("Success!!!")

}

}

failure {

script {

println("Failed!!!")

}

}

aborted {

script {

println("Aborted!!!")

}

}

}

}

We usually update the GitLab status from these post conditions. For that we need to extend the shared library. Here is how:

package org.library

def HttpRequest(requestType,requestUrl,requestBody) {

def gitServer = "http://10.0.0.11/api/v4"

withCredentials([string(credentialsId: 'gitlab-token', variable: 'token')]) {

result = httpRequest customHeaders: [[maskValue: true, name: 'PRIVATE-TOKEN', value: "${token}"]],

httpMode: requestType,

contentType: "APPLICATION_JSON",

consoleLogResponseBody: true,

ignoreSslErrors: true,

requestBody: requestBody,

url: "${gitServer}/${requestUrl}"

}

return result

}

// Change the commit status

def ChangeCommitStatus(projectId,commitSha,status) {

// No leading slash: HttpRequest already joins gitServer and requestUrl with "/"

commitApi = "projects/${projectId}/statuses/${commitSha}?state=${status}"

response = HttpRequest("POST",commitApi,'')

println(response)

return response

}
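The same call can be tried by hand before wiring it into the library, which helps separate token or URL problems from pipeline problems. This sketch checks the state against the five values GitLab accepts and builds the request URL. In dry-run mode it prints the request without the token; with `DRY_RUN=0` it sends it with curl. `GITLAB_TOKEN`, the project ID and the SHA in the usage are placeholders.

```shell
#!/bin/sh
# Sketch: POST /projects/:id/statuses/:sha?state=... on the GitLab API.
GITLAB_API=${GITLAB_API:-http://10.0.0.11/api/v4}

commit_status_url() {
  project_id=$1 sha=$2 state=$3
  case "$state" in
    pending|running|success|failed|canceled) ;;
    *) echo "invalid state: $state" >&2; return 1 ;;
  esac
  echo "$GITLAB_API/projects/$project_id/statuses/$sha?state=$state"
}

change_commit_status() {
  url=$(commit_status_url "$@") || return 1
  if [ "${DRY_RUN:-1}" = 1 ]; then
    echo "POST $url"     # the token is deliberately not printed
  else
    curl -s -X POST -H "PRIVATE-TOKEN: $GITLAB_TOKEN" "$url"
  fi
}

change_commit_status 1 0123456789abcdef0123456789abcdef01234567 running
```

Note the spelling `canceled` (one "l"). Any other spelling is rejected here, and GitLab rejects it as well.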

@Library("jenkinslibrary@master") _

def build = new org.library.build()

def deploy = new org.library.deploy()

def gitlab = new org.library.gitlab()

String buildShell = "${env.buildShell}"

String buildType = "${env.buildType}"

String gitlab_url = "${env.gitlab_url}"

String branchName = "${env.branchName}"

if ("${runOps}" == "GitlabPush") {

branchName = branch - "refs/heads/"

currentBuild.description = "Trigger By ${userName} ${branchName}"

gitlab.ChangeCommitStatus(projectId,commitSha,'running')

}

pipeline {

agent {

node {

label "build-1"

}

}

stages{

stage("CheckOut") {

steps {

script{

println("${branchName}")

checkout([$class: 'GitSCM', branches: [[name: "${branchName}"]], extensions: [], userRemoteConfigs: [[credentialsId: 'gitlab', url: "${gitlab_url}"]]])

}

}

}

stage("Build") {

steps {

script{

build.Build(buildType, buildShell)

}

}

}

}

post {

always {

script {

println("Always!!!")

}

}

success {

script {

println("Success!!!")

gitlab.ChangeCommitStatus(projectId,commitSha,'success')

}

}

failure {

script {

println("Failed!!!")

gitlab.ChangeCommitStatus(projectId,commitSha,'failed')

}

}

aborted {

script {

println("Aborted!!!")

gitlab.ChangeCommitStatus(projectId,commitSha,'canceled')

}

}

}

}

This is really just a refinement: it makes the pipeline's status easier to see at a glance.
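The post blocks amount to a mapping from the Jenkins build result to a GitLab commit state. Writing that mapping out as a small lookup makes a gap visible. `UNSTABLE` has no post block above, so such a commit would stay `running` in GitLab. In this sketch, mapping `UNSTABLE` to `failed` and `NOT_BUILT` to `canceled` are choices, not something the pipeline above does.

```shell
#!/bin/sh
# Sketch: Jenkins currentBuild.result -> GitLab commit status state.
gitlab_state() {
  case "$1" in
    SUCCESS)           echo success ;;
    FAILURE|UNSTABLE)  echo failed ;;    # UNSTABLE -> failed is a choice
    ABORTED|NOT_BUILT) echo canceled ;;  # NOT_BUILT -> canceled is a choice
    *)                 echo running ;;   # result not set yet: still running
  esac
}

gitlab_state FAILURE   # failed
```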

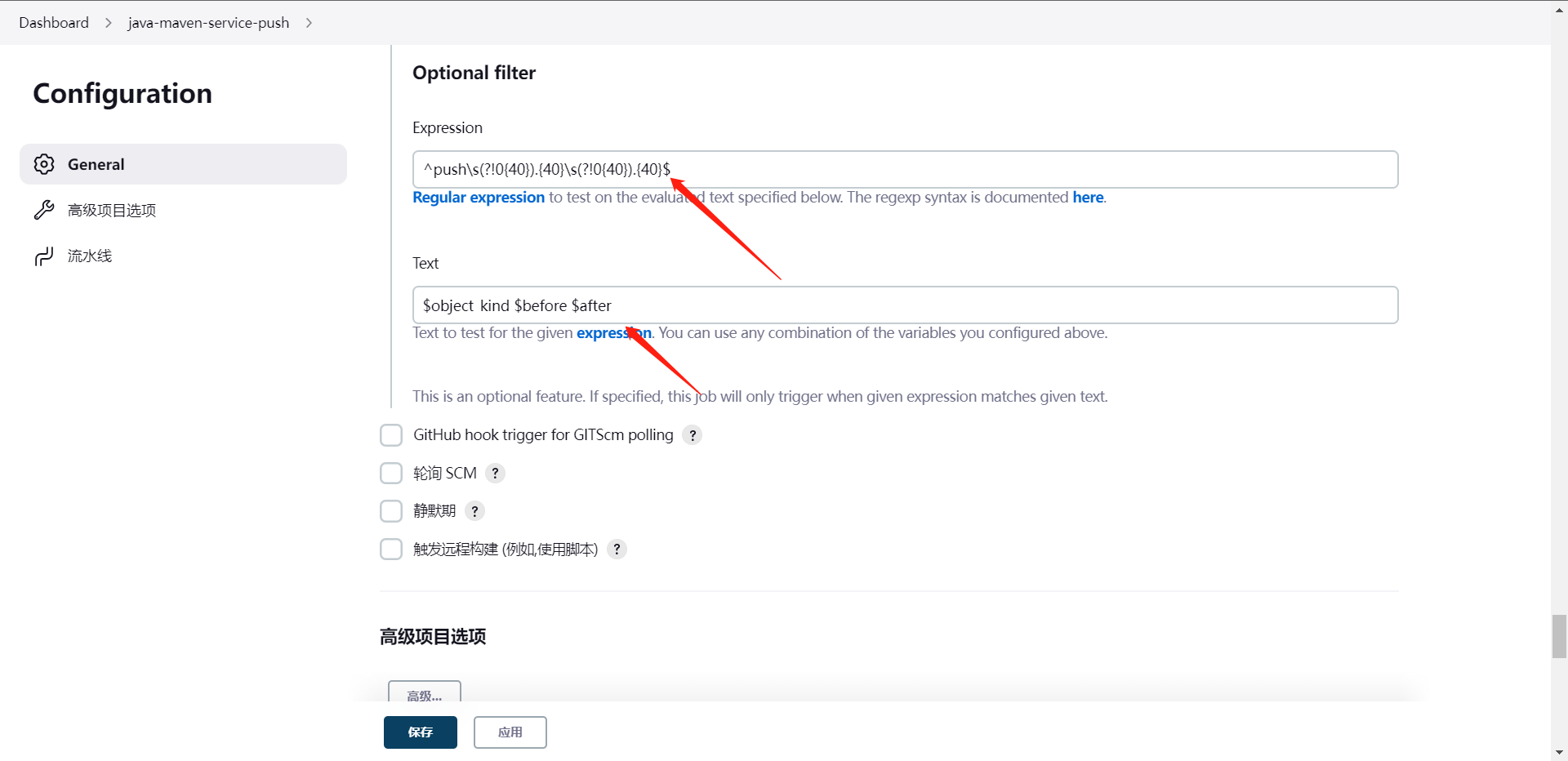

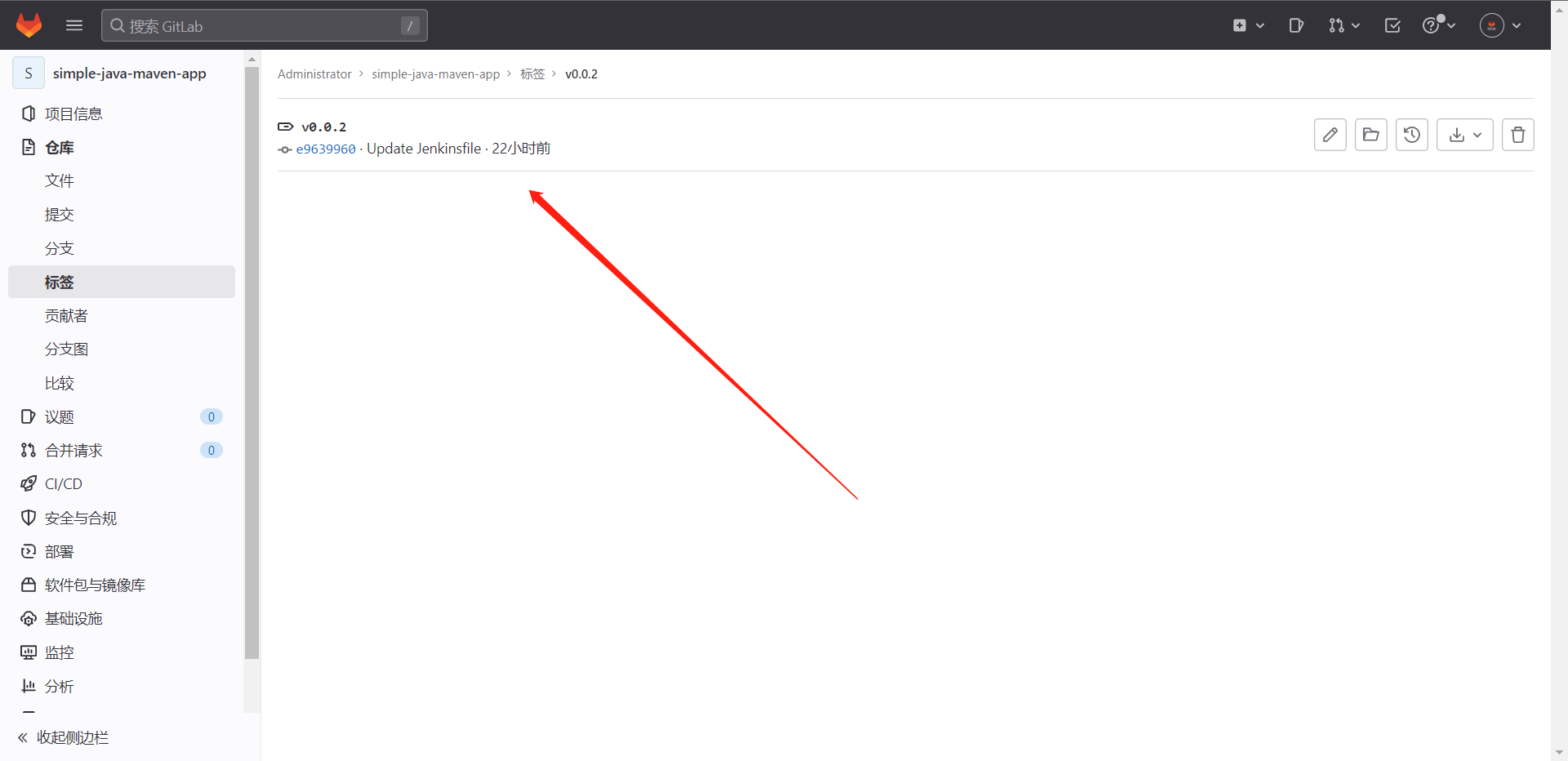

3: Filtering special push requests

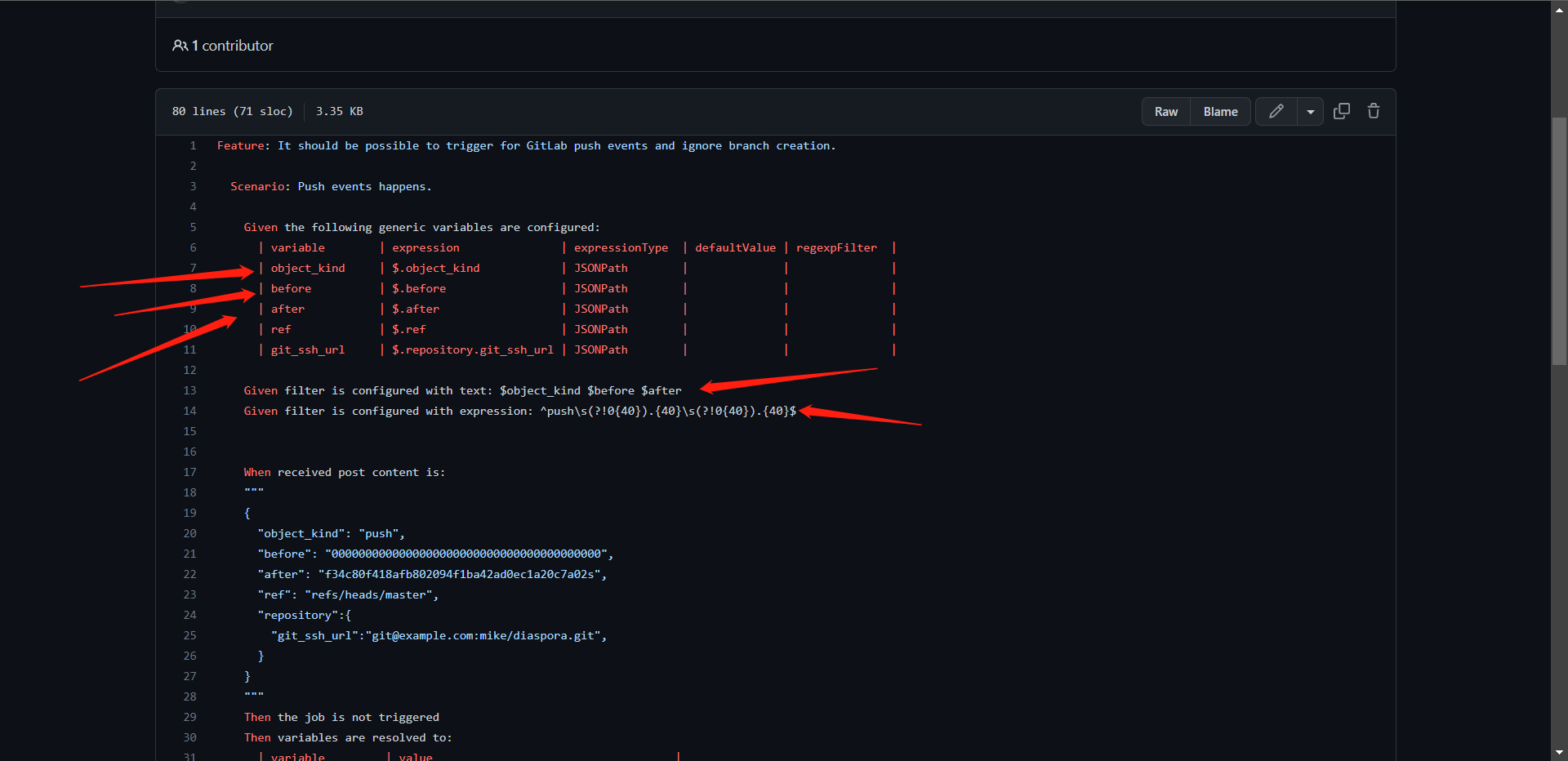

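The problem: when we create a new branch, GitLab also treats it as a push, so it triggers the Jenkins pipeline as well. We want to filter these special pushes out.

Such requests have a very obvious marker. Create a branch and look at the webhook payload: the `before` SHA is 40 zeros, and that is the feature we key on.

Creating a tag behaves the same way. It would also trigger the pipeline; it didn't here only because the webhook was not enabled for tag push events. If you enable that option, the JSON looks the same, with a SHA made of zeros. So we add the corresponding POST parameters to the pipeline's trigger configuration.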

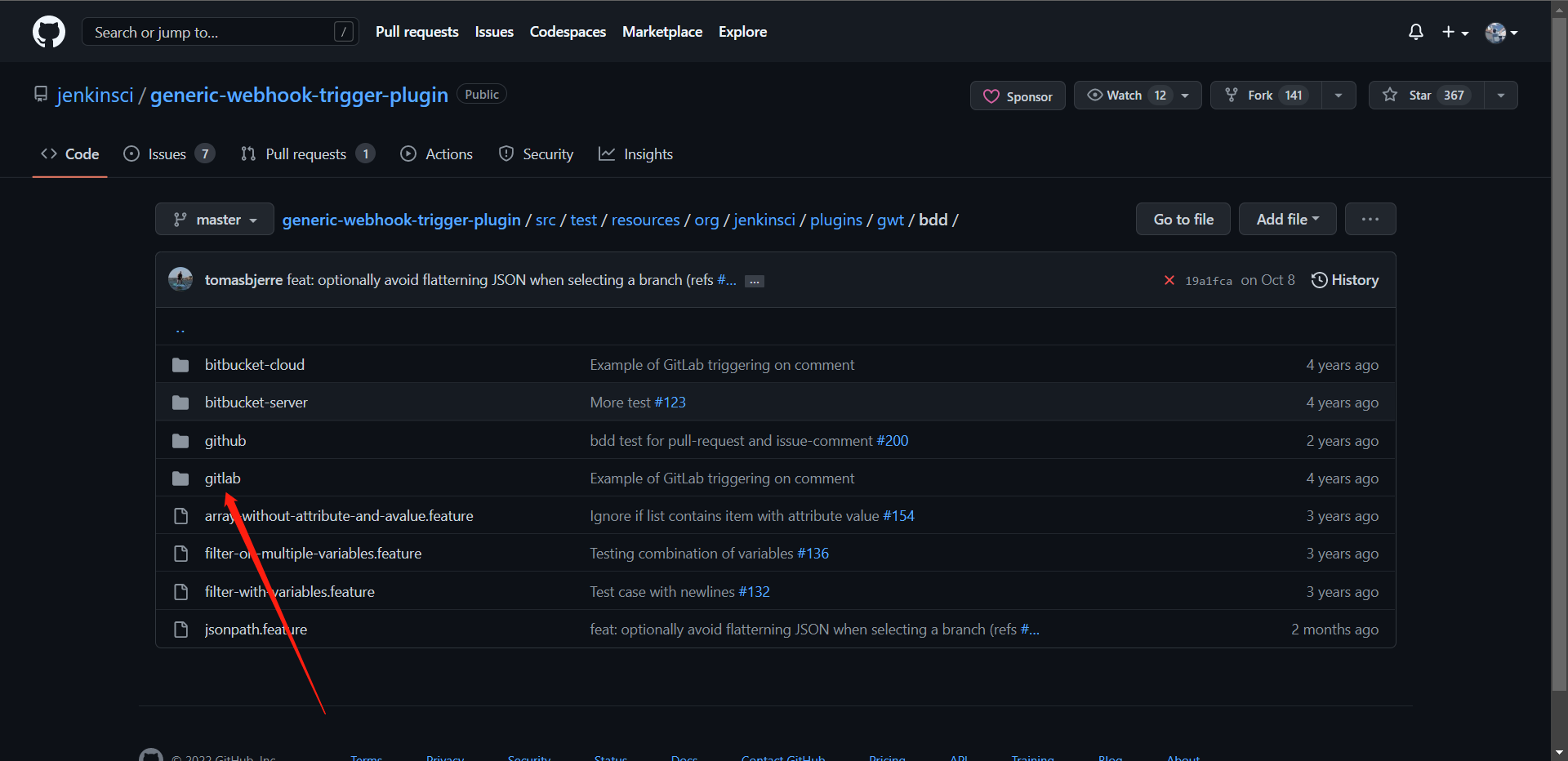

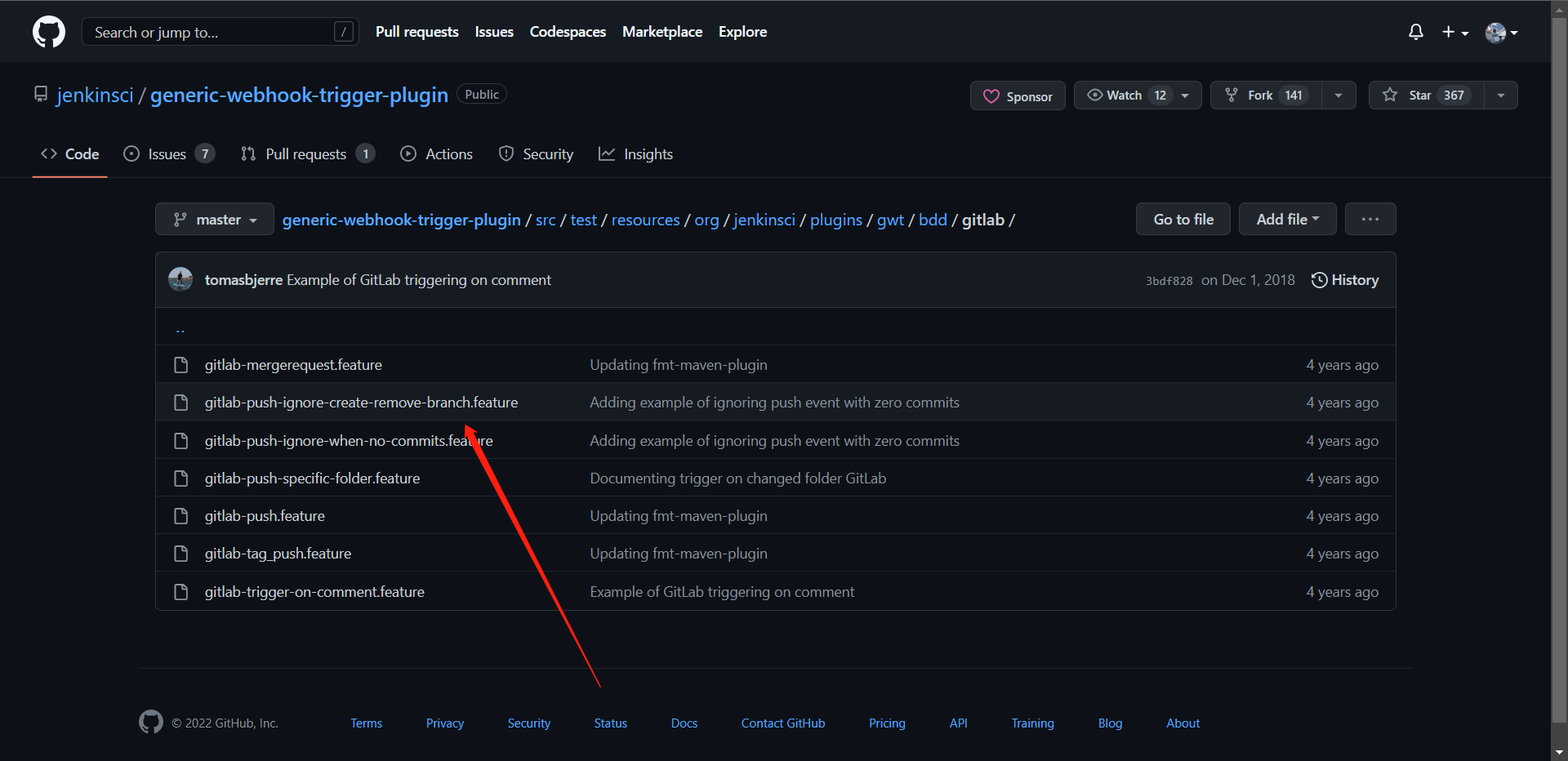

The available parameters can be looked up at:

https://github.com/jenkinsci/generic-webhook-trigger-plugin/tree/master/src/test/resources/org/jenkinsci/plugins/gwt/bdd

With that configured, creating a branch or tag no longer triggers the pipeline.
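The check itself is simple. When a branch or tag is created, the `before` SHA in the push payload is forty zeros; when one is deleted, the `after` SHA is. Here is that condition as a shell sketch (the function name is hypothetical). In the Generic Webhook Trigger, the same idea goes into the optional filter, whose regular expression is matched against a text built from the request variables.

```shell
#!/bin/sh
# Sketch: detect pushes that only create or delete a branch/tag.
ZERO_SHA=$(printf '%040d' 0)   # forty zeros

is_ref_create_or_delete() {
  # $1 = "before" SHA, $2 = "after" SHA from the push payload
  [ "$1" = "$ZERO_SHA" ] || [ "$2" = "$ZERO_SHA" ]
}

if is_ref_create_or_delete "$ZERO_SHA" 0123456789abcdef0123456789abcdef01234567; then
  echo "skip: branch or tag was created"
fi
```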

The pipeline is now mostly tuned; let's also test a normal push.

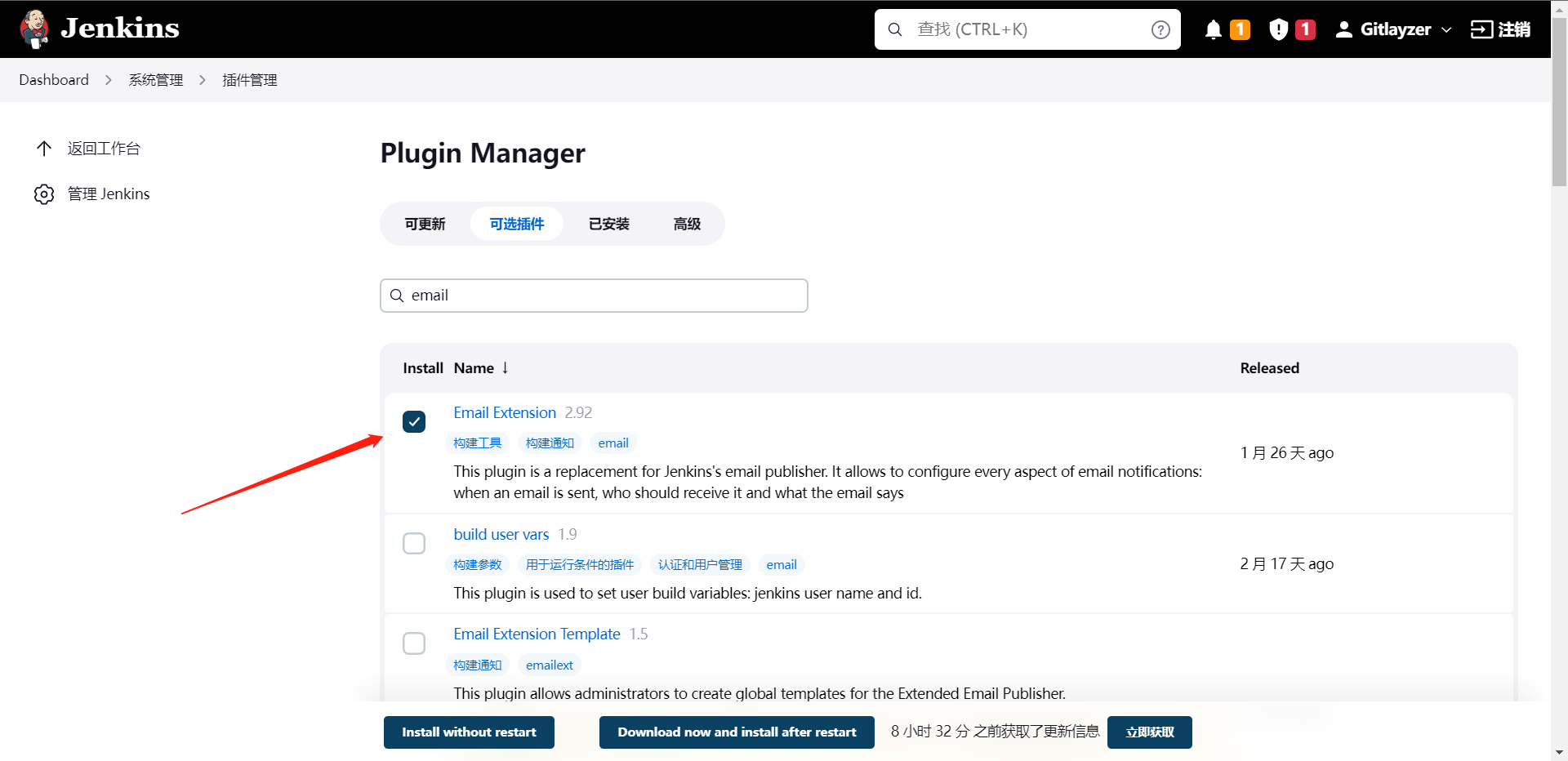

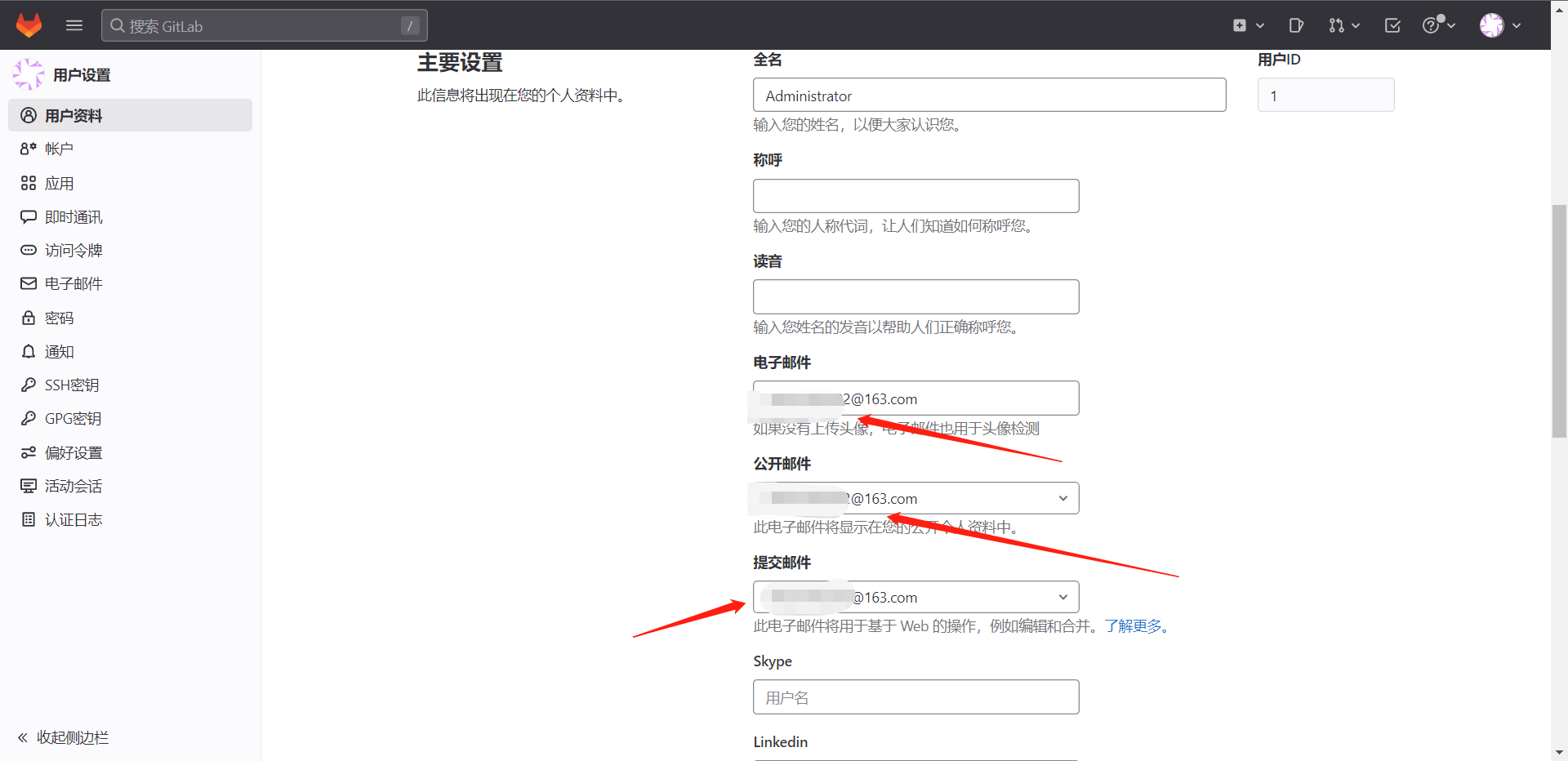

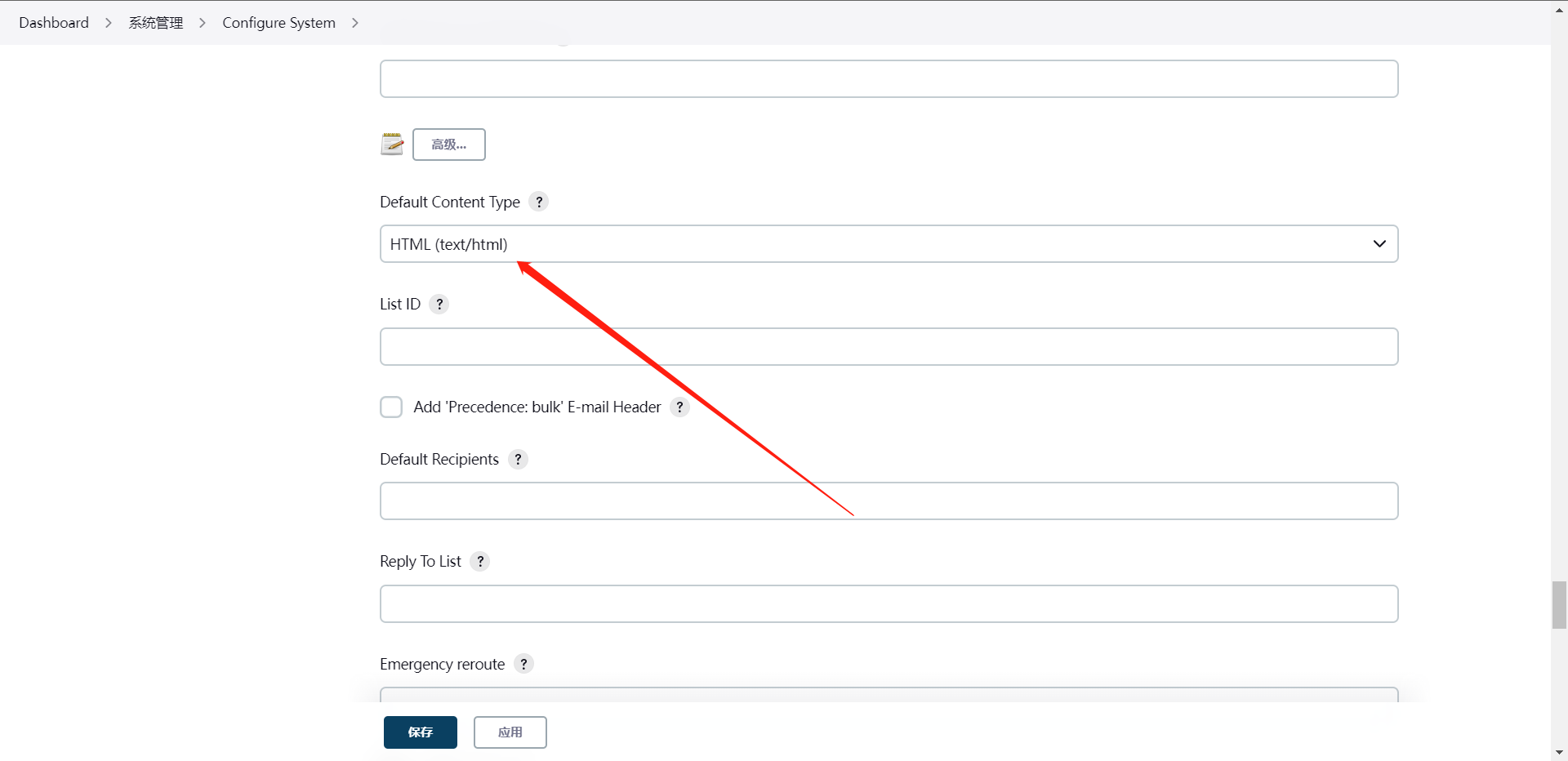

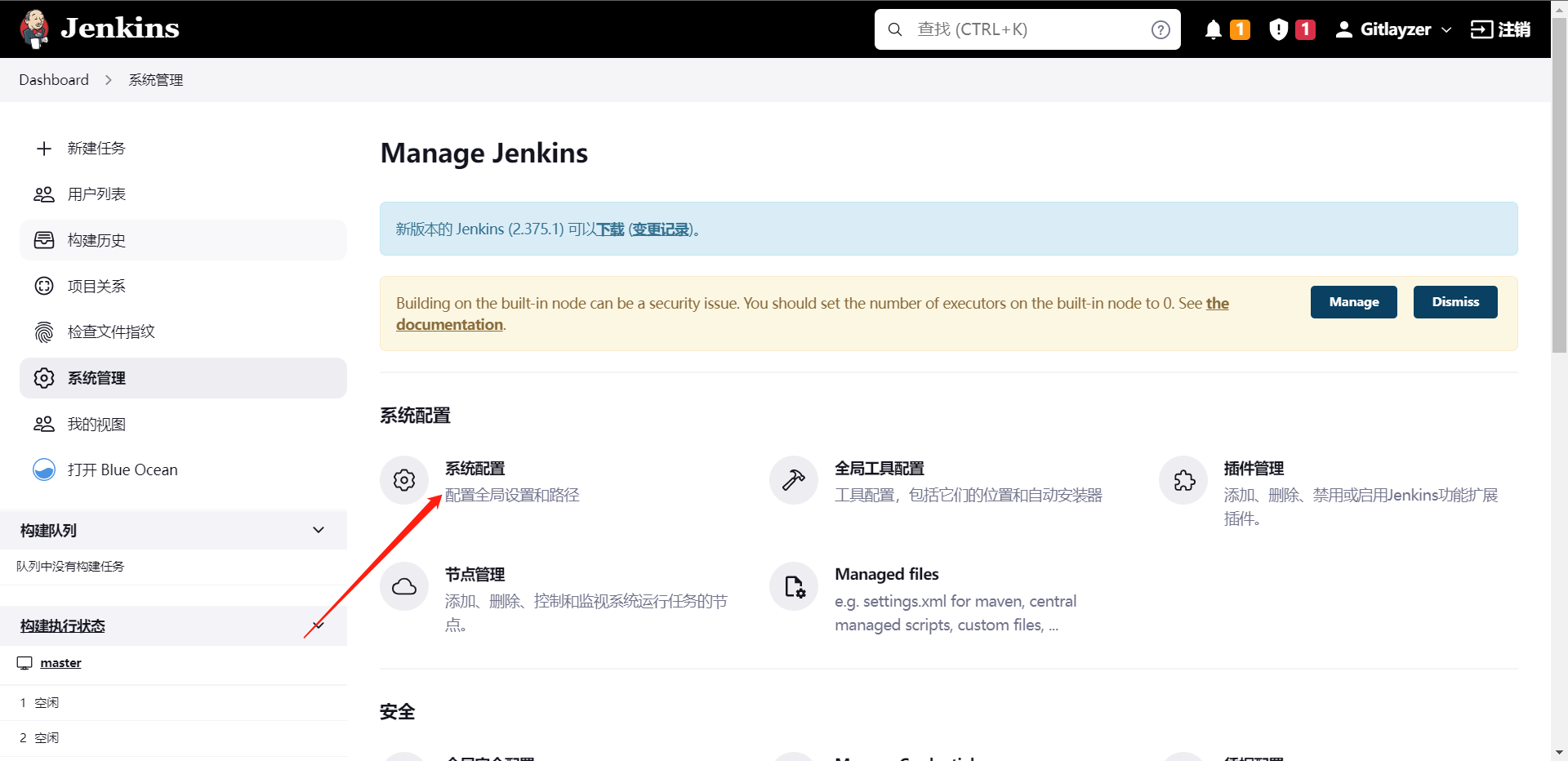

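4: Build failure notifications: email

This is a very common need: we can't keep an eye on the pipeline after every push, so we need notifications about the pipeline status. The simplest and most practical option is email; alternatives include DingTalk bots, WeCom bots, Feishu, Slack, and so on.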

def Email(status,emailUser){

emailext body: """

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8">

<title>Alert</title>

</head>

<body marginheight="0" offset="0" style="text-align: center">

<img src="https://www.jenkins.io/favicon.ico" alt="logo" width="80" height="100">

<h2>Project: ${JOB_NAME}</h2>

<h2>Triggered by: ${emailUser}</h2>

<h2>Build status: ${status}</h2>

<h2>Build number: ${BUILD_ID}</h2>

<h2>Build URL: <a href="${BUILD_URL}" target="_blank">${BUILD_URL}</a></h2>

<h2>Console log: <a href="${BUILD_URL}console" target="_blank">${BUILD_URL}console</a></h2>

</body>

</html>""",

subject: "Jenkins-${JOB_NAME}项目构建信息",

to: emailUser

}

Put this function into our shared library:

[root@cdk-server library]# cat toemail.groovy

def Email(status,emailUser){

emailext body: """

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8">

<title>Alert</title>

</head>

<body marginheight="0" offset="0" style="text-align: center">

<img src="http://10.0.0.11:8080/static/c1130d2a/images/svgs/logo.svg" alt="logo" width="80" height="100">

<h2>Project: ${JOB_NAME}</h2>

<h2>Triggered by: ${emailUser}</h2>

<h2>Build status: ${status}</h2>

<h2>Build number: ${BUILD_ID}</h2>

<h2>Build URL: <a href="${BUILD_URL}" target="_blank">${BUILD_URL}</a></h2>

<h2>Console log: <a href="${BUILD_URL}console" target="_blank">${BUILD_URL}console</a></h2>

</body>

</html>""",

subject: "Jenkins-${JOB_NAME}项目构建信息",

to: emailUser

}

[root@cdk-server library]# git add .

[root@cdk-server library]# git commit -m "Add toemail code"

[master 1111dfe] Add toemail code

1 file changed, 21 insertions(+)

create mode 100644 src/org/library/toemail.groovy

[root@cdk-server library]# git push origin master

Enumerating objects: 10, done.

Counting objects: 100% (10/10), done.

Delta compression using up to 2 threads

Compressing objects: 100% (4/4), done.

Writing objects: 100% (6/6), 884 bytes | 884.00 KiB/s, done.

Total 6 (delta 2), reused 0 (delta 0), pack-reused 0

remote: Resolving deltas: 100% (2/2), completed with 2 local objects.

To https://github.com/gitlayzer/package-ci.git

d4c1cdd..1111dfe master -> master

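Now we can use this component in our pipeline.

One catch: the recipient address comes from GitLab, so your GitLab user must have an email address configured. With that in place, a failed pipeline you triggered will send you an email.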

@Library("jenkinslibrary@master") _

def build = new org.library.build()

def deploy = new org.library.deploy()

def gitlab = new org.library.gitlab()

def toemail = new org.library.toemail()

String buildShell = "${env.buildShell}"

String buildType = "${env.buildType}"

String gitlab_url = "${env.gitlab_url}"

String branchName = "${env.branchName}"

if ("${runOps}" == "GitlabPush") {

branchName = branch - "refs/heads/"

currentBuild.description = "Trigger By ${userName} ${branchName}"

gitlab.ChangeCommitStatus(projectId,commitSha,'running')

}

pipeline {

agent {

node {

label "build-1"

}

}

stages{

stage("CheckOut") {

steps {

script{

println("${branchName}")

checkout([$class: 'GitSCM', branches: [[name: "${branchName}"]], extensions: [], userRemoteConfigs: [[credentialsId: 'gitlab', url: "${gitlab_url}"]]])

}

}

}

stage("Build") {

steps {

script{

build.Build(buildType, buildShell)

}

}

}

}

post {

always {

script {

println("Always!!!")

}

}

success {

script {

println("Success!!!")

gitlab.ChangeCommitStatus(projectId,commitSha,'success')

toemail.Email("构建流水线成功",userEmail)

}

}

failure {

script {

println("Failed!!!")

gitlab.ChangeCommitStatus(projectId,commitSha,'failed')

toemail.Email("构建流水线失败",userEmail)

}

}

aborted {

script {

println("Aborted!!!")

gitlab.ChangeCommitStatus(projectId,commitSha,'canceled')

toemail.Email("构建流水线取消",userEmail)

}

}

}

}

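That's not all: Jenkins also needs a mail server configured to send from.

5: GitLab merges and pipelines

Merge when pipeline succeeds

Once this option is enabled, a merge request cannot be merged if the pipeline of its source branch did not succeed. A small but handy GitLab trick.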

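7: Code quality platform integration

1: Architecture overview

1: A SonarQube Server runs three main processes:

1.1: A web server, used by developers and managers to browse quality snapshots and configure the SonarQube instance

1.2: A search server based on Elasticsearch that backs searches from the UI

1.3: A Compute Engine server, which processes code analysis reports and saves them in the SonarQube database

2: A SonarQube database, which stores:

2.1: The configuration of the SonarQube instance (security, plugin settings, etc.)

2.2: The quality snapshots of projects, views, etc.

3: Multiple SonarQube plugins installed on the server, possibly including language, SCM, integration, authentication, and governance plugins

4: One or more SonarScanners running on the build / continuous integration servers to analyze projects

2: Prerequisites

1: The only prerequisite for running SonarQube is Java installed on the machine

2: The instance needs at least 2GB of RAM, and the OS needs 1GB of free RAM

3: Allocate 50GB of disk space per node

4: Install on drives with excellent read/write performance (the "data" folder holds the Elasticsearch indices, which see heavy I/O while the server is running)

5: SonarQube does not support 32-bit systems on the server side, but the scanner side does support 32-bit systems

3: Installing SonarQube

# I deployed it with Docker here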

[root@cdk-server ~]# docker pull sonarqube:community

[root@cdk-server ~]# docker run -d --name sonarqube --privileged=true -p 9000:9000 sonarqube:community
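SonarQube's embedded Elasticsearch checks kernel limits at startup. On Linux hosts, a too-low `vm.max_map_count` is a common reason for the container exiting shortly after `docker run`. The SonarQube docs ask for at least 524288. Here is a sketch of a preflight check. Reading /proc is Linux-specific. Note that `sysctl -w` does not survive a reboot; put the setting under /etc/sysctl.d/ to persist it.

```shell
#!/bin/sh
# Sketch: check vm.max_map_count before starting the SonarQube container.
MIN_MAP_COUNT=524288

check_max_map_count() {
  # $1 = value to check; defaults to the running kernel's setting
  current=${1:-$(cat /proc/sys/vm/max_map_count)}
  if [ "$current" -lt "$MIN_MAP_COUNT" ]; then
    echo "vm.max_map_count=$current is below $MIN_MAP_COUNT; run: sysctl -w vm.max_map_count=$MIN_MAP_COUNT" >&2
    return 1
  fi
  echo "vm.max_map_count=$current OK"
}

if [ -r /proc/sys/vm/max_map_count ]; then
  check_max_map_count || echo "fix the kernel setting before starting SonarQube"
fi
```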

# Default account

admin/admin

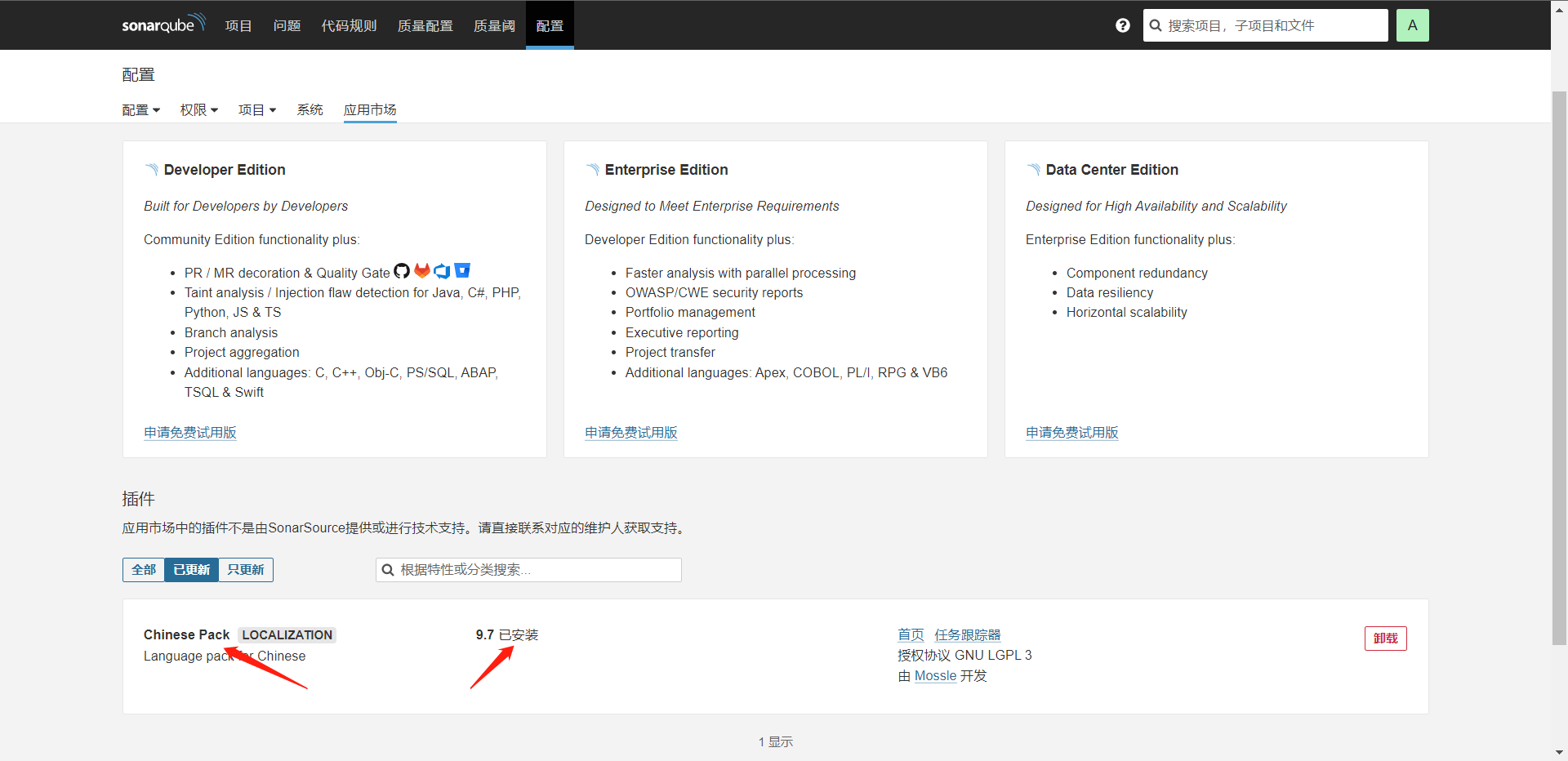

# After logging in you are asked to change the password; then you can install plugins such as the Chinese language pack, GitLab, and GitHub

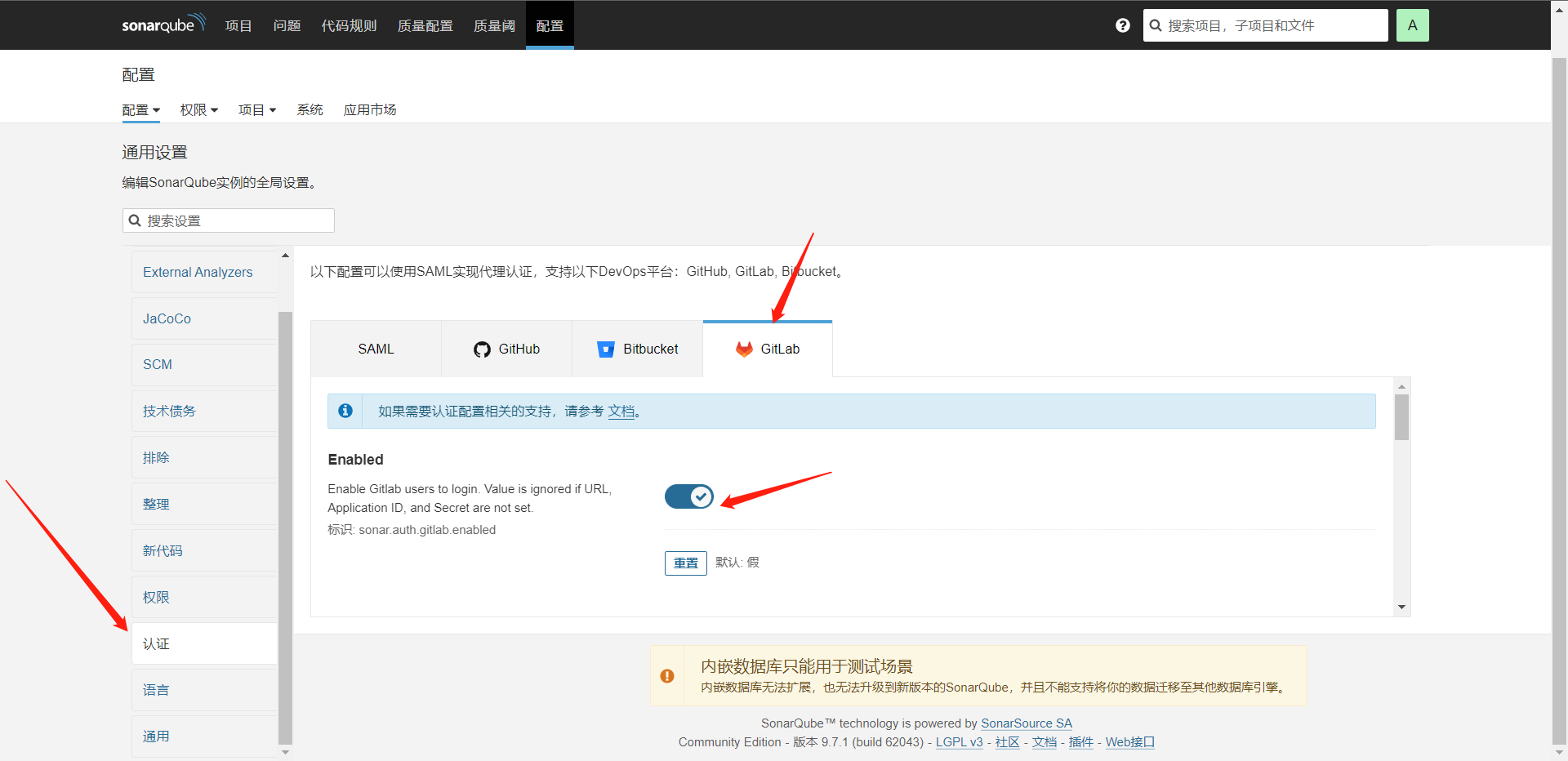

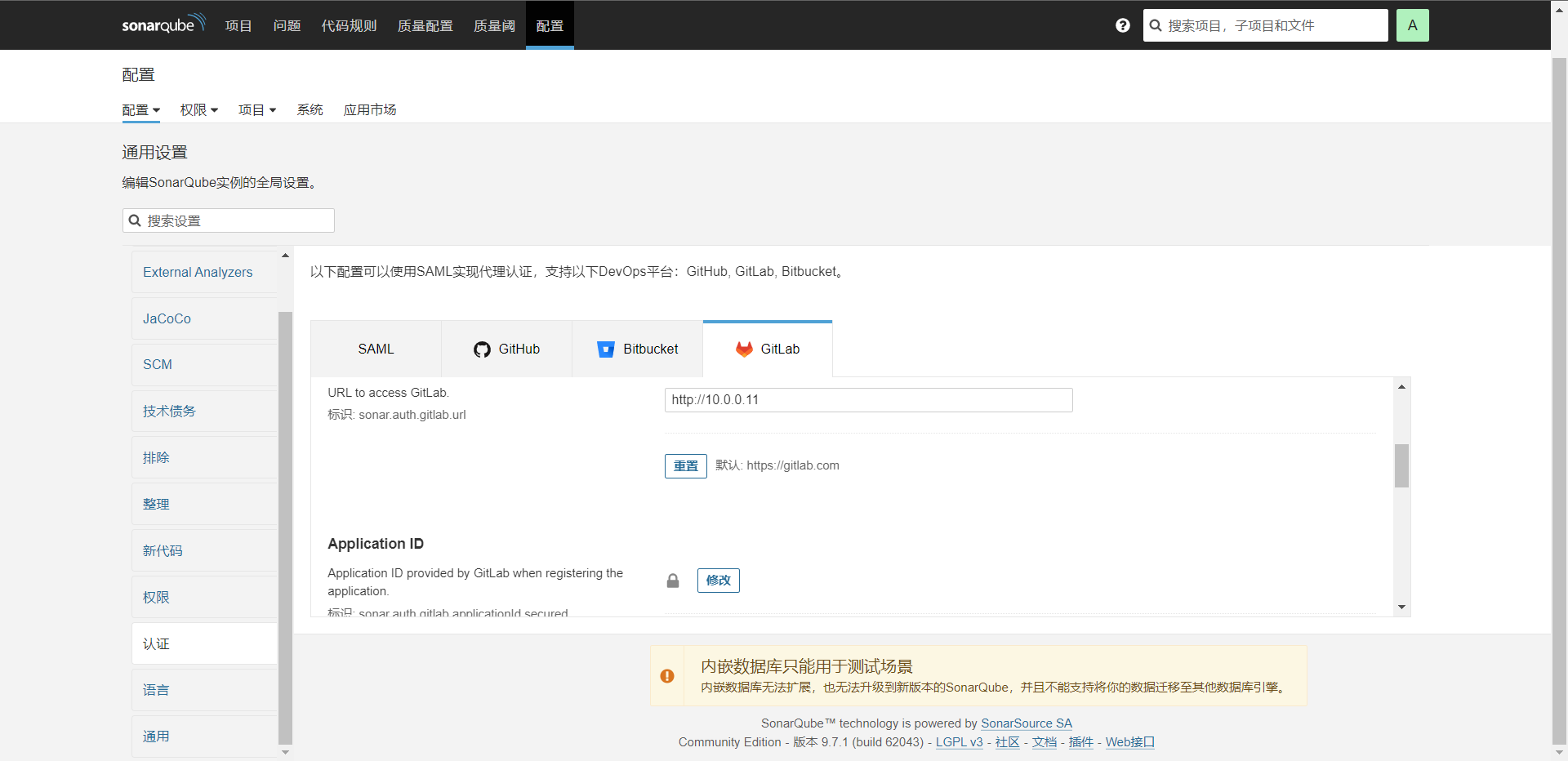

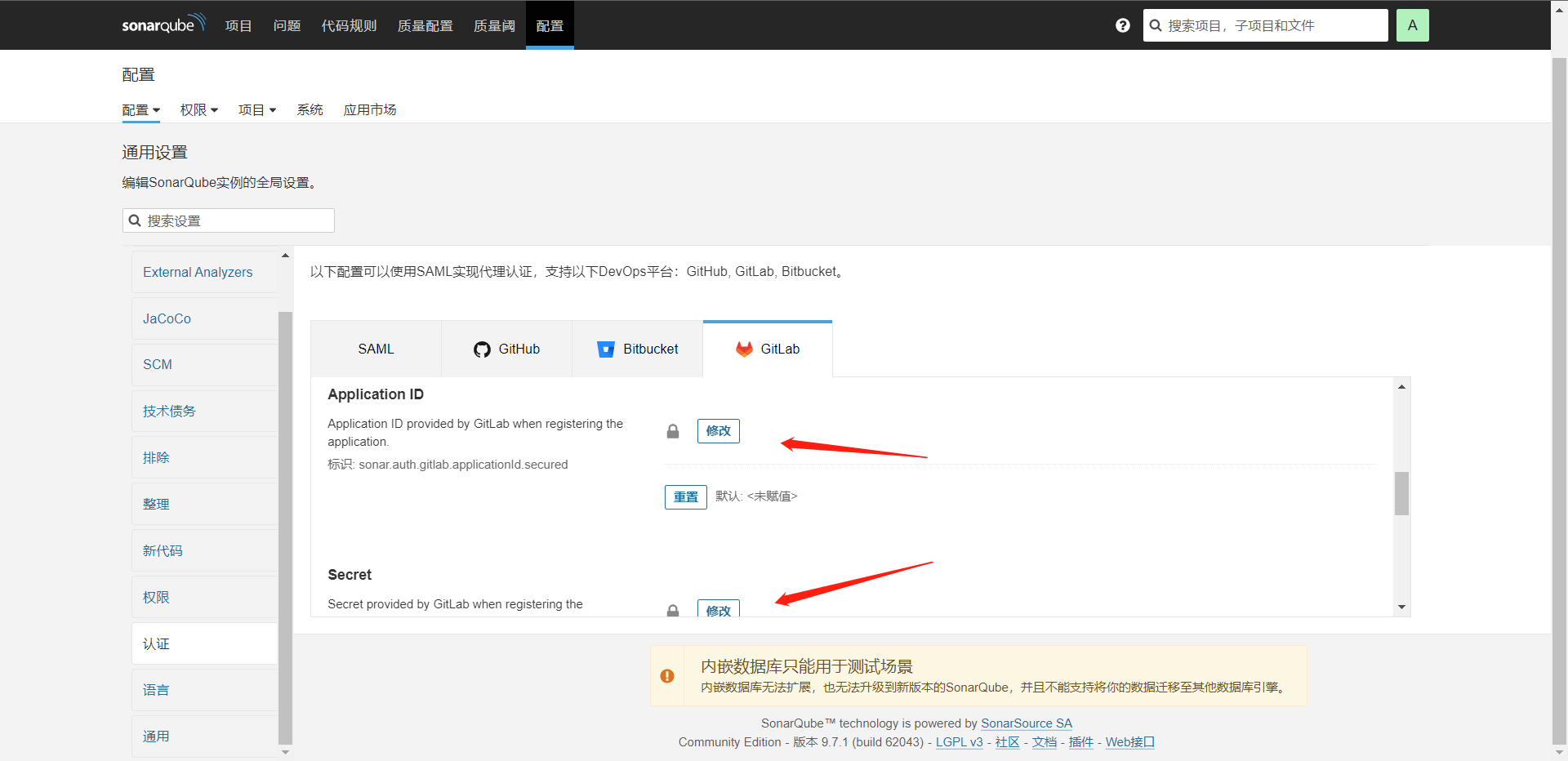

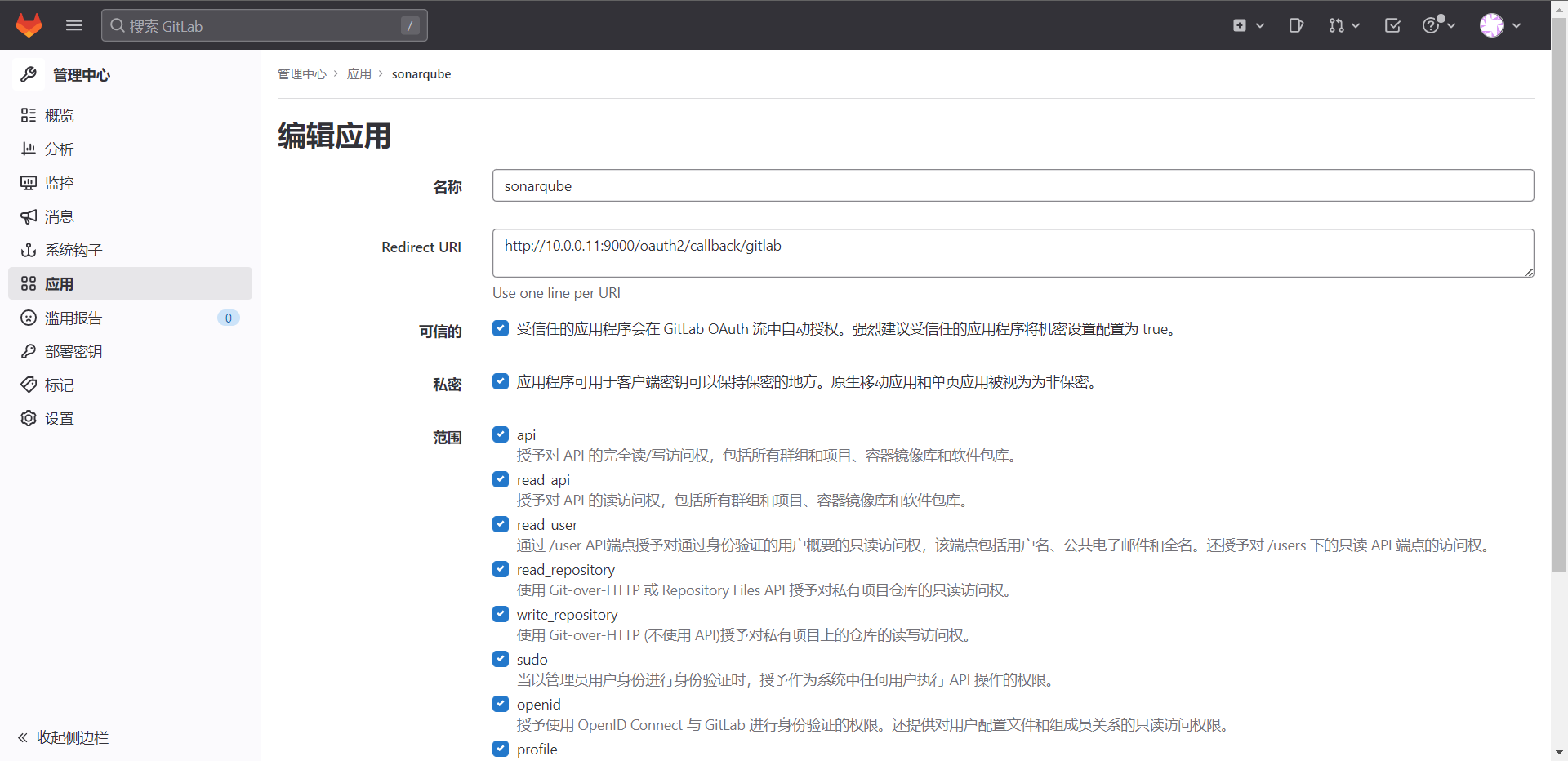

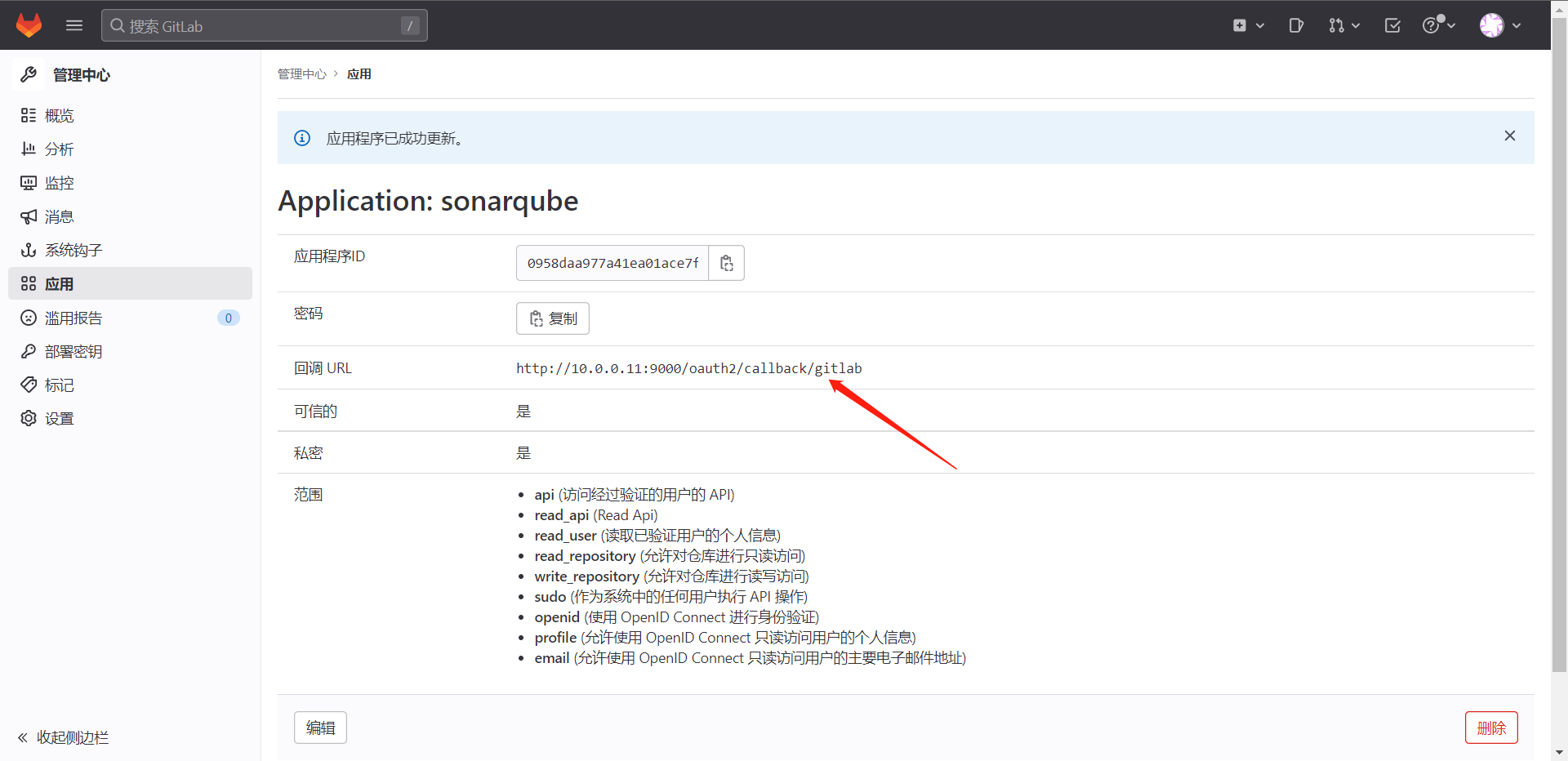

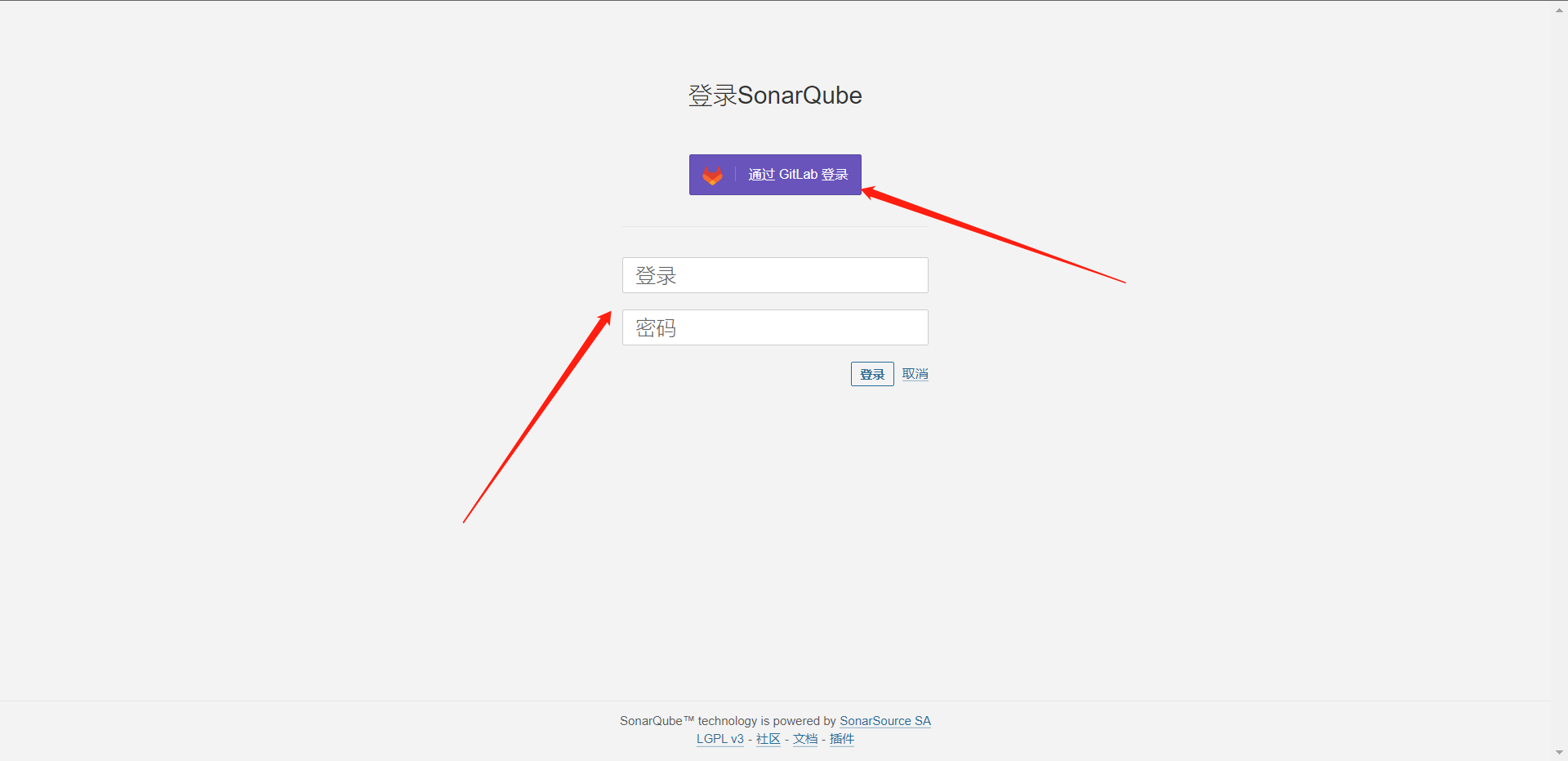

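4: Integrating GitLab or GitHub authentication

There is a catch here: according to the official docs, the callback address must be a domain name rather than an IP, so clicking the GitLab login button tells me the URL is invalid. The same applies to GitHub.

5: SonarScanner configuration

Because the code scanner runs together with the Jenkins agent, SonarScanner has to be installed on the agent.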

https://binaries.sonarsource.com/?prefix=Distribution/sonar-scanner-cli/

https://binaries.sonarsource.com/Distribution/sonar-scanner-cli/sonar-scanner-cli-4.7.0.2747.zip

[root@cdk-server software]# cd /jenkins/software

[root@cdk-server software]# wget https://binaries.sonarsource.com/Distribution/sonar-scanner-cli/sonar-scanner-cli-4.7.0.2747.zip

[root@cdk-server software]# unzip sonar-scanner-cli-4.7.0.2747.zip

[root@cdk-server software]# mv sonar-scanner-4.7.0.2747 sonar-scanner

# Edit the config file /jenkins/software/sonar-scanner/conf/sonar-scanner.properties

[root@cdk-server conf]# cat sonar-scanner.properties

#Configure here general information about the environment, such as SonarQube server connection details for example

#No information about specific project should appear here

#----- Default SonarQube server

sonar.host.url=http://10.0.0.11:9000

#----- Default source code encoding

sonar.sourceEncoding=UTF-8

# Configure the environment variables

[root@cdk-server conf]# tail -n 6 /etc/profile

export MAVEN_HOME=/jenkins/software/maven

export ANT_HOME=/jenkins/software/ant

export GRADLE_HOME=/jenkins/software/gradle

export NODE_HOME=/jenkins/software/node

export SONAR_SCANNER=/jenkins/software/sonar-scanner

export PATH=$PATH:$MAVEN_HOME/bin:$ANT_HOME/bin:$GRADLE_HOME/bin:$NODE_HOME/bin:$SONAR_SCANNER/bin

[root@cdk-server conf]# source /etc/profile

# Verify

[root@cdk-server sonar-scanner]# sonar-scanner -h

INFO:

INFO: usage: sonar-scanner [options]

INFO:

INFO: Options:

INFO: -D,--define <arg> Define property

INFO: -h,--help Display help information

INFO: -v,--version Display version information

INFO: -X,--debug Produce execution debug output
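With MAVEN_HOME, ANT_HOME, GRADLE_HOME, NODE_HOME and SONAR_SCANNER all appended to PATH, a quick loop confirms that every tool resolves in the current shell. Keep in mind that /etc/profile is read by login shells. Jenkins `sh` steps generally do not read it, which is why the pipelines in this note resolve tools through the `tool` step instead. This is a sketch; the tool list is the one set up in this note.

```shell
#!/bin/sh
# Sketch: report which build tools resolve on PATH; fails if any is missing.
check_tools() {
  missing=0
  for t in "$@"; do
    if p=$(command -v "$t"); then
      echo "ok       $t -> $p"
    else
      echo "missing  $t"
      missing=$((missing + 1))
    fi
  done
  [ "$missing" -eq 0 ]
}

check_tools mvn ant gradle node npm sonar-scanner || echo "some tools are missing (did you source /etc/profile?)"
```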

6: Scanning code locally

Again we use the project in GitLab:

[root@cdk-server ~]# git clone http://10.0.0.11/root/simple-java-maven-app.git

# Then build it and run the unit tests

[root@cdk-server ~]# cd simple-java-maven-app/

[root@cdk-server simple-java-maven-app]# mvn clean package

[INFO] Scanning for projects...

[INFO]

[INFO] --------------------------< org.example:app >---------------------------

[INFO] Building app 1.0-SNAPSHOT

[INFO] --------------------------------[ jar ]---------------------------------

[INFO]

[INFO] --- maven-clean-plugin:2.5:clean (default-clean) @ app ---

[INFO]

[INFO] --- maven-resources-plugin:2.6:resources (default-resources) @ app ---

[INFO] Using 'UTF-8' encoding to copy filtered resources.

[INFO] skip non existing resourceDirectory /root/simple-java-maven-app/src/main/resources

[INFO]

[INFO] --- maven-compiler-plugin:3.1:compile (default-compile) @ app ---

[INFO] Changes detected - recompiling the module!

[INFO] Compiling 1 source file to /root/simple-java-maven-app/target/classes

[INFO]

[INFO] --- maven-resources-plugin:2.6:testResources (default-testResources) @ app ---

[INFO] Using 'UTF-8' encoding to copy filtered resources.

[INFO] skip non existing resourceDirectory /root/simple-java-maven-app/src/test/resources

[INFO]

[INFO] --- maven-compiler-plugin:3.1:testCompile (default-testCompile) @ app ---

[INFO] No sources to compile

[INFO]

[INFO] --- maven-surefire-plugin:2.12.4:test (default-test) @ app ---

[INFO] No tests to run.

[INFO]

[INFO] --- maven-jar-plugin:2.4:jar (default-jar) @ app ---

[INFO] Building jar: /root/simple-java-maven-app/target/app-1.0-SNAPSHOT.jar

[INFO] ------------------------------------------------------------------------

[INFO] BUILD SUCCESS

[INFO] ------------------------------------------------------------------------

[INFO] Total time: 4.162 s

[INFO] Finished at: 2022-12-06T23:58:40-05:00

[INFO] ------------------------------------------------------------------------

[root@cdk-server simple-java-maven-app]#

[root@cdk-server simple-java-maven-app]# ls

Dockerfile jenkins Jenkinsfile pom.xml README.md src target

# A new target directory has appeared

[root@cdk-server simple-java-maven-app]# ls target/

app-1.0-SNAPSHOT.jar classes generated-sources maven-archiver maven-status

# These are exactly the files the scan needs. Now analyze the project:

sonar-scanner -Dsonar.host.url=http://10.0.0.11:9000 \

-Dsonar.projectKey=java-maven-app \

-Dsonar.projectName=java-maven-app \

-Dsonar.projectVersion=0.1 \

-Dsonar.login=admin \

-Dsonar.password=xxzx@123 \

-Dsonar.ws.timeout=30 \

-Dsonar.projectDescription="This is My Demo App" \

-Dsonar.sources=src \

-Dsonar.sourceEncoding=UTF-8 \

-Dsonar.java.binaries=target/classes

# Test-related settings (add them to the command above when the project has tests):

# -Dsonar.java.test.binaries=target/test-classes \

# -Dsonar.junit.reportPaths=target/surefire-reports
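Instead of repeating the -D options on every run, they can live in a sonar-project.properties file at the project root, which the scanner picks up automatically. The run below logs `Project root configuration file: NONE` because no such file exists yet. The sketch writes one with the same keys as above. Credentials are deliberately left out and are better passed at run time, for example with `-Dsonar.login=<token>`.

```shell
#!/bin/sh
# Sketch: write sonar-project.properties for the demo project.
# $1 = project directory (defaults to the current one)
write_sonar_props() {
  dir=${1:-.}
  cat > "$dir/sonar-project.properties" <<'EOF'
sonar.projectKey=java-maven-app
sonar.projectName=java-maven-app
sonar.projectVersion=0.1
sonar.projectDescription=This is My Demo App
sonar.sources=src
sonar.sourceEncoding=UTF-8
sonar.java.binaries=target/classes
EOF
}

# From the project root, then run the scanner without the -D flags:
#   write_sonar_props . && sonar-scanner -Dsonar.login=<token>
```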

[root@cdk-server simple-java-maven-app]# sonar-scanner -Dsonar.host.url=http://10.0.0.11:9000 \

> -Dsonar.projectKey=java-maven-app \

> -Dsonar.projectName=java-maven-app \

> -Dsonar.projectVersion=0.1 \

> -Dsonar.login=admin \

> -Dsonar.password=xxzx@123 \

> -Dsonar.ws.timeout=30 \

> -Dsonar.projectDescription="This is My Demo App" \

> -Dsonar.sources=src \

> -Dsonar.sourceEncoding=UTF-8 \

> -Dsonar.java.binnaries=target/classes

INFO: Scanner configuration file: /jenkins/software/sonar-scanner/conf/sonar-scanner.properties

INFO: Project root configuration file: NONE

INFO: SonarScanner 4.7.0.2747

INFO: Java 11.0.17 Red Hat, Inc. (64-bit)

INFO: Linux 5.14.0-70.13.1.el9_0.x86_64 amd64

INFO: User cache: /root/.sonar/cache

INFO: Scanner configuration file: /jenkins/software/sonar-scanner/conf/sonar-scanner.properties

INFO: Project root configuration file: NONE

INFO: Analyzing on SonarQube server 9.7.1.62043

INFO: Default locale: "en_US", source code encoding: "UTF-8"

INFO: Load global settings

INFO: Load global settings (done) | time=451ms

INFO: Server id: 147B411E-AYTooGxV_Ux7LEOx3nEm

INFO: User cache: /root/.sonar/cache

INFO: Load/download plugins

INFO: Load plugins index

INFO: Load plugins index (done) | time=396ms

INFO: Plugin [l10nzh] defines 'l10nen' as base plugin. This metadata can be removed from manifest of l10n plugins since version 5.2.

INFO: Load/download plugins (done) | time=11208ms

INFO: Process project properties

INFO: Process project properties (done) | time=30ms

INFO: Execute project builders

INFO: Execute project builders (done) | time=7ms

INFO: Project key: java-maven-app

INFO: Base dir: /root/simple-java-maven-app

INFO: Working dir: /root/simple-java-maven-app/.scannerwork

INFO: Load project settings for component key: 'java-maven-app'

INFO: Load quality profiles

INFO: Load quality profiles (done) | time=541ms

INFO: Load active rules

INFO: Load active rules (done) | time=10935ms

INFO: Load analysis cache

INFO: Load analysis cache (404) | time=188ms

INFO: Load project repositories

INFO: Load project repositories (done) | time=183ms

INFO: Indexing files...

INFO: Project configuration:

INFO: 1 file indexed

INFO: 0 files ignored because of scm ignore settings

INFO: Quality profile for java: Sonar way

INFO: ------------- Run sensors on module java-maven-app

INFO: Load metrics repository

INFO: Load metrics repository (done) | time=244ms

INFO: Sensor JavaSensor [java]

INFO: Configured Java source version (sonar.java.source): none

INFO: JavaClasspath initialization

INFO: JavaClasspath initialization (done) | time=10ms

INFO: JavaTestClasspath initialization

INFO: JavaTestClasspath initialization (done) | time=1ms

INFO: Server-side caching is enabled. The Java analyzer will not try to leverage data from a previous analysis.

INFO: Using ECJ batch to parse 1 Main java source files with batch size 22 KB.

INFO: Starting batch processing.

INFO: The Java analyzer cannot skip unchanged files in this context. A full analysis is performed for all files.

INFO: 100% analyzed

INFO: Batch processing: Done.

INFO: Did not optimize analysis for any files, performed a full analysis for all 1 files.

INFO: No "Test" source files to scan.

INFO: No "Generated" source files to scan.

INFO: Sensor JavaSensor [java] (done) | time=3730ms

INFO: Sensor JaCoCo XML Report Importer [jacoco]

INFO: 'sonar.coverage.jacoco.xmlReportPaths' is not defined. Using default locations: target/site/jacoco/jacoco.xml,target/site/jacoco-it/jacoco.xml,build/reports/jacoco/test/jacocoTestReport.xml

INFO: No report imported, no coverage information will be imported by JaCoCo XML Report Importer

INFO: Sensor JaCoCo XML Report Importer [jacoco] (done) | time=10ms

INFO: Sensor CSS Rules [javascript]

INFO: No CSS, PHP, HTML or VueJS files are found in the project. CSS analysis is skipped.

INFO: Sensor CSS Rules [javascript] (done) | time=6ms

INFO: Sensor C# Project Type Information [csharp]

INFO: Sensor C# Project Type Information [csharp] (done) | time=6ms

INFO: Sensor C# Analysis Log [csharp]

INFO: Sensor C# Analysis Log [csharp] (done) | time=74ms

INFO: Sensor C# Properties [csharp]

INFO: Sensor C# Properties [csharp] (done) | time=1ms

INFO: Sensor SurefireSensor [java]

INFO: parsing [/root/simple-java-maven-app/target/surefire-reports]

INFO: Sensor SurefireSensor [java] (done) | time=8ms

INFO: Sensor HTML [web]

INFO: Sensor HTML [web] (done) | time=12ms

INFO: Sensor Text Sensor [text]

INFO: 1 source file to be analyzed

INFO: 1/1 source file has been analyzed

INFO: Sensor Text Sensor [text] (done) | time=31ms

INFO: Sensor VB.NET Project Type Information [vbnet]

INFO: Sensor VB.NET Project Type Information [vbnet] (done) | time=7ms

INFO: Sensor VB.NET Analysis Log [vbnet]

INFO: Sensor VB.NET Analysis Log [vbnet] (done) | time=87ms

INFO: Sensor VB.NET Properties [vbnet]

INFO: Sensor VB.NET Properties [vbnet] (done) | time=0ms

INFO: ------------- Run sensors on project

INFO: Sensor Analysis Warnings import [csharp]

INFO: Sensor Analysis Warnings import [csharp] (done) | time=3ms

INFO: Sensor Zero Coverage Sensor

INFO: Sensor Zero Coverage Sensor (done) | time=27ms

INFO: Sensor Java CPD Block Indexer

INFO: Sensor Java CPD Block Indexer (done) | time=52ms

INFO: SCM Publisher SCM provider for this project is: git

INFO: SCM Publisher 1 source file to be analyzed

INFO: SCM Publisher 1/1 source file have been analyzed (done) | time=291ms

INFO: CPD Executor 1 file had no CPD blocks

INFO: CPD Executor Calculating CPD for 0 files

INFO: CPD Executor CPD calculation finished (done) | time=0ms

INFO: Analysis report generated in 262ms, dir size=119.2 kB

INFO: Analysis report compressed in 34ms, zip size=16.3 kB

INFO: Analysis report uploaded in 1898ms

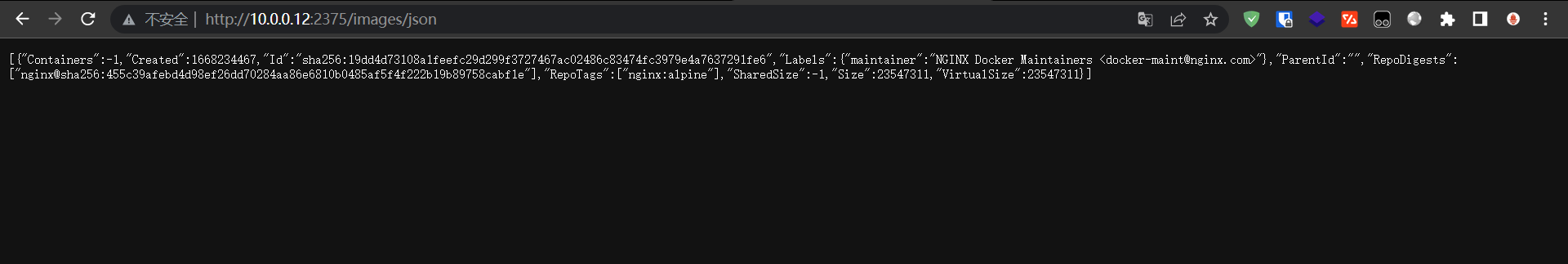

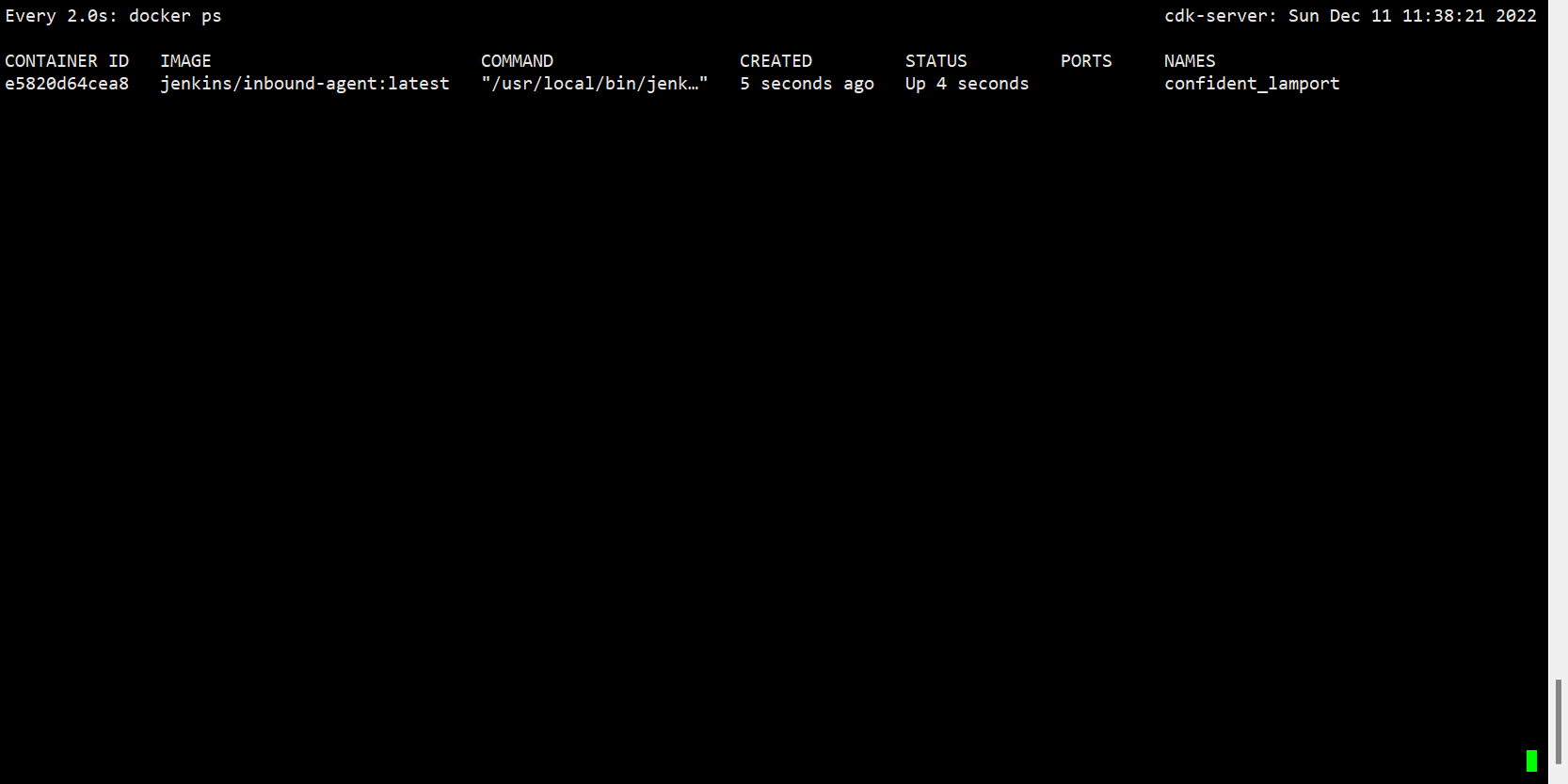

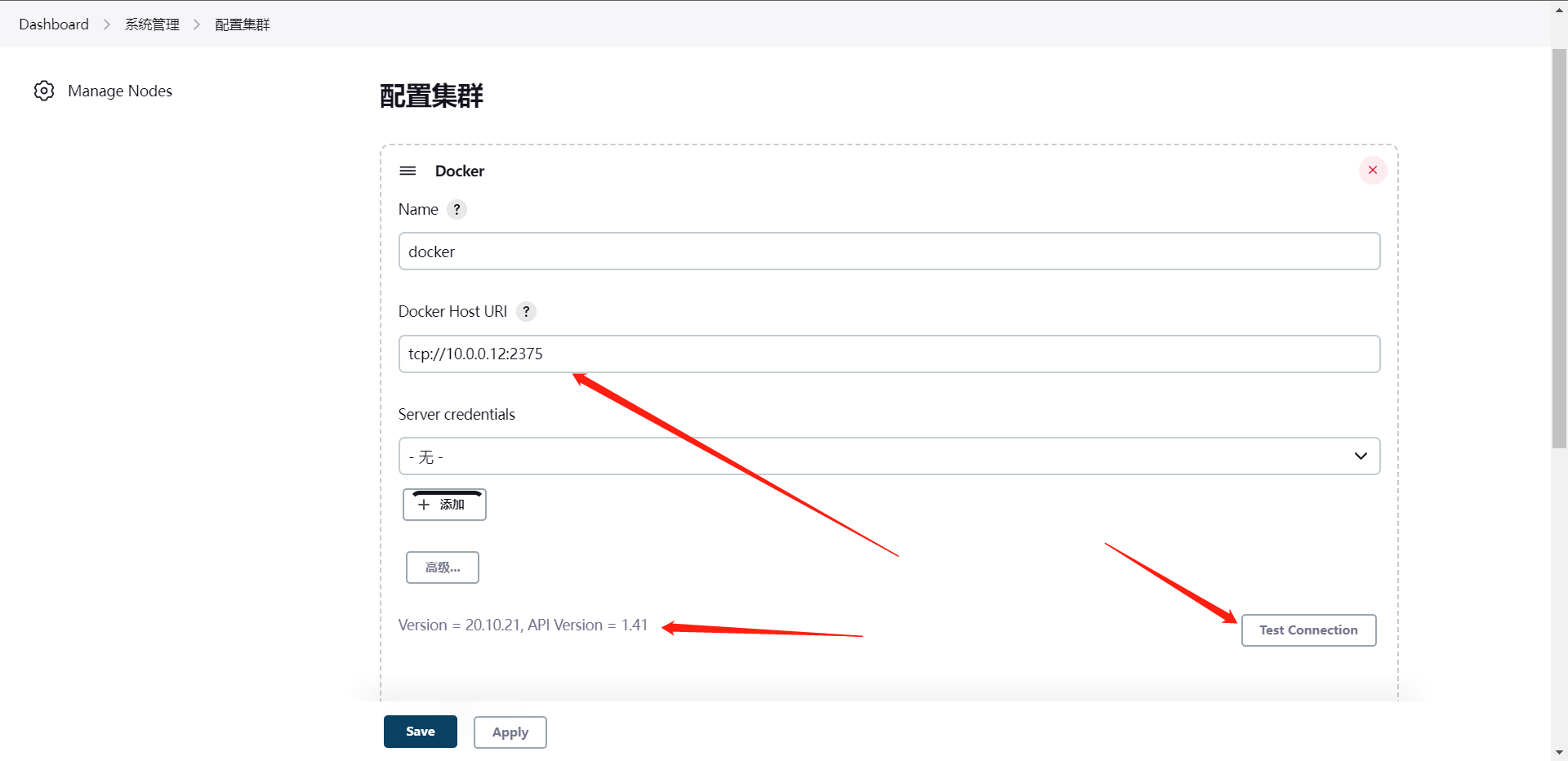

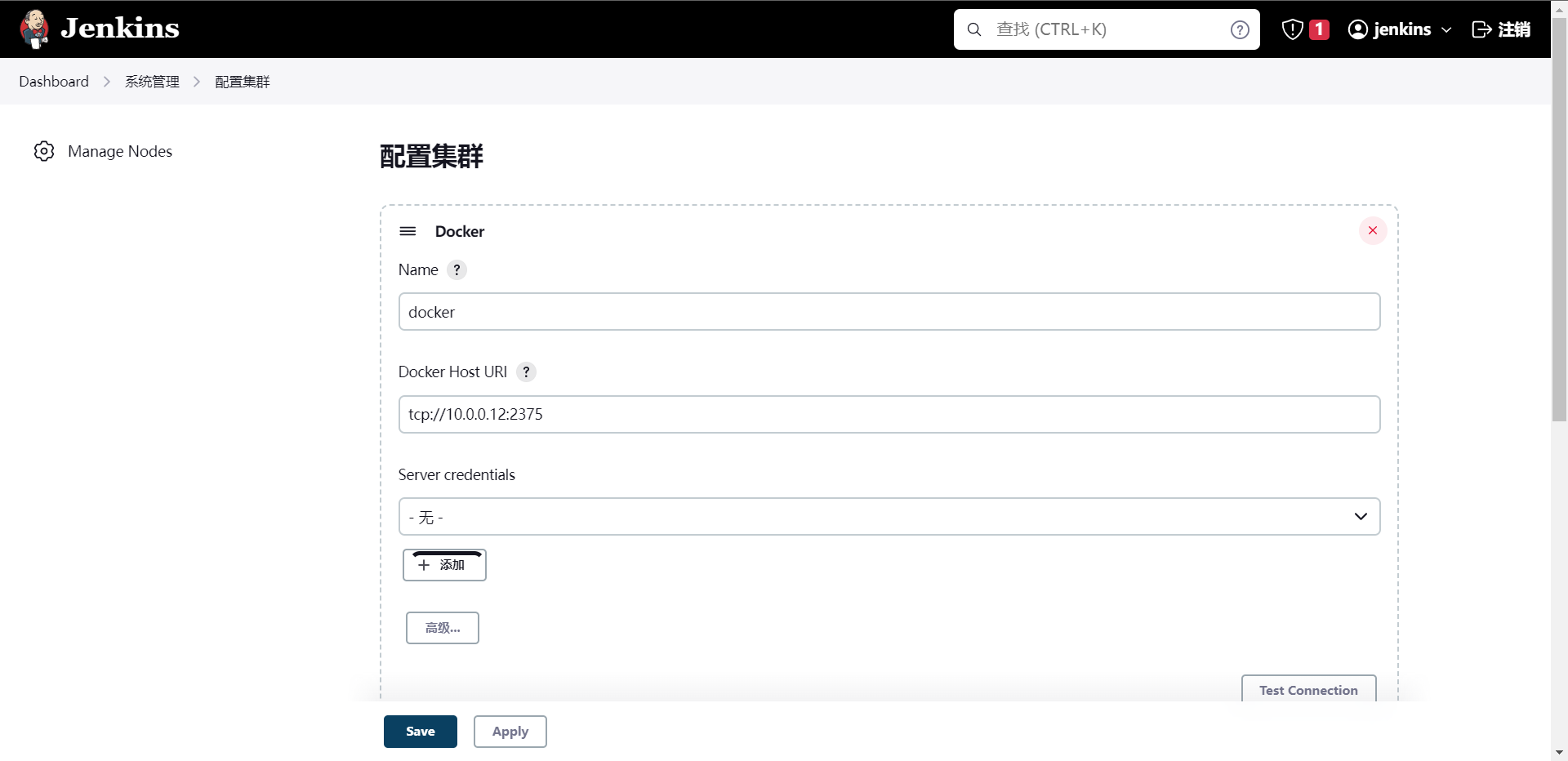

INFO: ANALYSIS SUCCESSFUL, you can find the results at: http://10.0.0.11:9000/dashboard?id=java-maven-app # this URL is the project's dashboard

INFO: Note that you will be able to access the updated dashboard once the server has processed the submitted analysis report

INFO: More about the report processing at http://10.0.0.11:9000/api/ce/task?id=AYTrEdJFitGoXwhtrtju

INFO: Analysis total time: 28.741 s

INFO: ------------------------------------------------------------------------

INFO: EXECUTION SUCCESS

INFO: ------------------------------------------------------------------------

INFO: Total time: 46.340s

INFO: Final Memory: 20M/73M

INFO: ------------------------------------------------------------------------

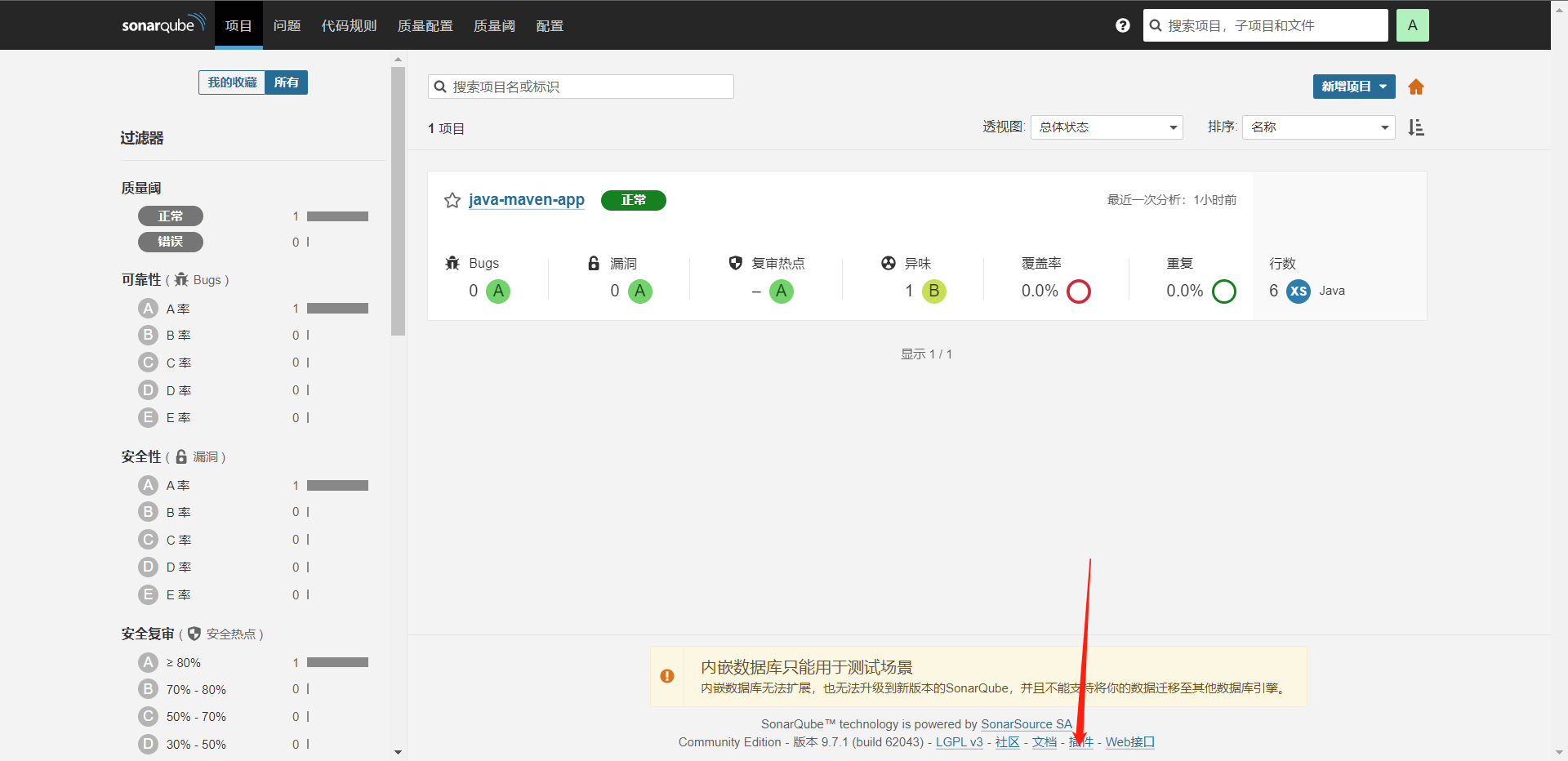

The scan is complete. The scanned project now shows up in SonarQube.
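The scanner also records where its results went. To my knowledge, sonar-scanner writes a small key=value file, `.scannerwork/report-task.txt`, in the directory it ran from. The file has keys such as `dashboardUrl`, `ceTaskId` and `ceTaskUrl`, which hold the same URLs printed in the log above. Here is a minimal shell sketch for reading them back. It assumes that file location and format; the `report_prop` helper is hypothetical.

```shell
#!/usr/bin/env bash
# Read one key from sonar-scanner's report-task.txt (key=value lines).
# Assumption: the file is .scannerwork/report-task.txt in the scan directory.
report_prop() {
  local file="$1" key="$2"
  # Print the text after "key=" on the matching line.
  sed -n "s/^${key}=//p" "$file"
}

REPORT="${REPORT:-.scannerwork/report-task.txt}"
if [ -f "$REPORT" ]; then
  echo "Dashboard:   $(report_prop "$REPORT" dashboardUrl)"
  echo "CE task URL: $(report_prop "$REPORT" ceTaskUrl)"
fi
```

Later pipeline steps could use `ceTaskUrl` to check whether the server has finished processing the report.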

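You may hit an error at this step, although I did not. It would likely say that a plugin package is not installed. Since our project is Java, you can install the Java plugin: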

https://github.com/SonarSource/sonar-java/archive/refs/tags/7.15.0.30507.tar.gz

[root@cdk-server ~]# wget https://github.com/SonarSource/sonar-java/archive/refs/tags/7.15.0.30507.tar.gz

[root@cdk-server ~]# tar xf 7.15.0.30507.tar.gz

[root@cdk-server ~]# cd sonar-java-7.15.0.30507/

# Build the plugin

[root@cdk-server sonar-java-7.15.0.30507]# mvn clean package -DskipTests

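# The build takes a very long time. Once it finishes, copy the plugin into the SonarQube directory used by the Docker container and restart the service. So far, though, my Java scan has not hit this error.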

In practice, I believe recent SonarQube versions already bundle most of these plugins, such as sonar-java.
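If you do build the plugin yourself, the copy-and-restart step might look like the sketch below. Several details are assumptions, so adjust them to your setup:
- the jar lands in `sonar-java-plugin/target/`;
- the target is a host directory mounted as the container's `/opt/sonarqube/extensions/plugins`;
- the container is named `sonarqube`.

Stale copies are removed first because, as far as I know, SonarQube will not start with two versions of the same plugin in that directory.

```shell
#!/usr/bin/env bash
# Sketch: install a locally built sonar-java jar into a SonarQube plugins dir.
# Assumptions: jar path under sonar-java-plugin/target/, and PLUGINS_DIR is the
# host directory mounted into the SonarQube container.
install_java_plugin() {
  local src_dir="$1" plugins_dir="$2" jar
  # Pick the jar produced by "mvn clean package -DskipTests".
  jar=$(ls "$src_dir"/sonar-java-plugin/target/sonar-java-plugin-*.jar 2>/dev/null | head -n 1)
  if [ -z "$jar" ]; then
    echo "no sonar-java-plugin jar found under $src_dir" >&2
    return 1
  fi
  mkdir -p "$plugins_dir"
  # Remove older copies so only one version of the plugin remains.
  rm -f "$plugins_dir"/sonar-java-plugin-*.jar
  cp "$jar" "$plugins_dir"/
  echo "installed $(basename "$jar")"
}

# Usage (paths and container name are hypothetical):
#   install_java_plugin ~/sonar-java-7.15.0.30507 /data/sonarqube/extensions/plugins
#   docker restart sonarqube
```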

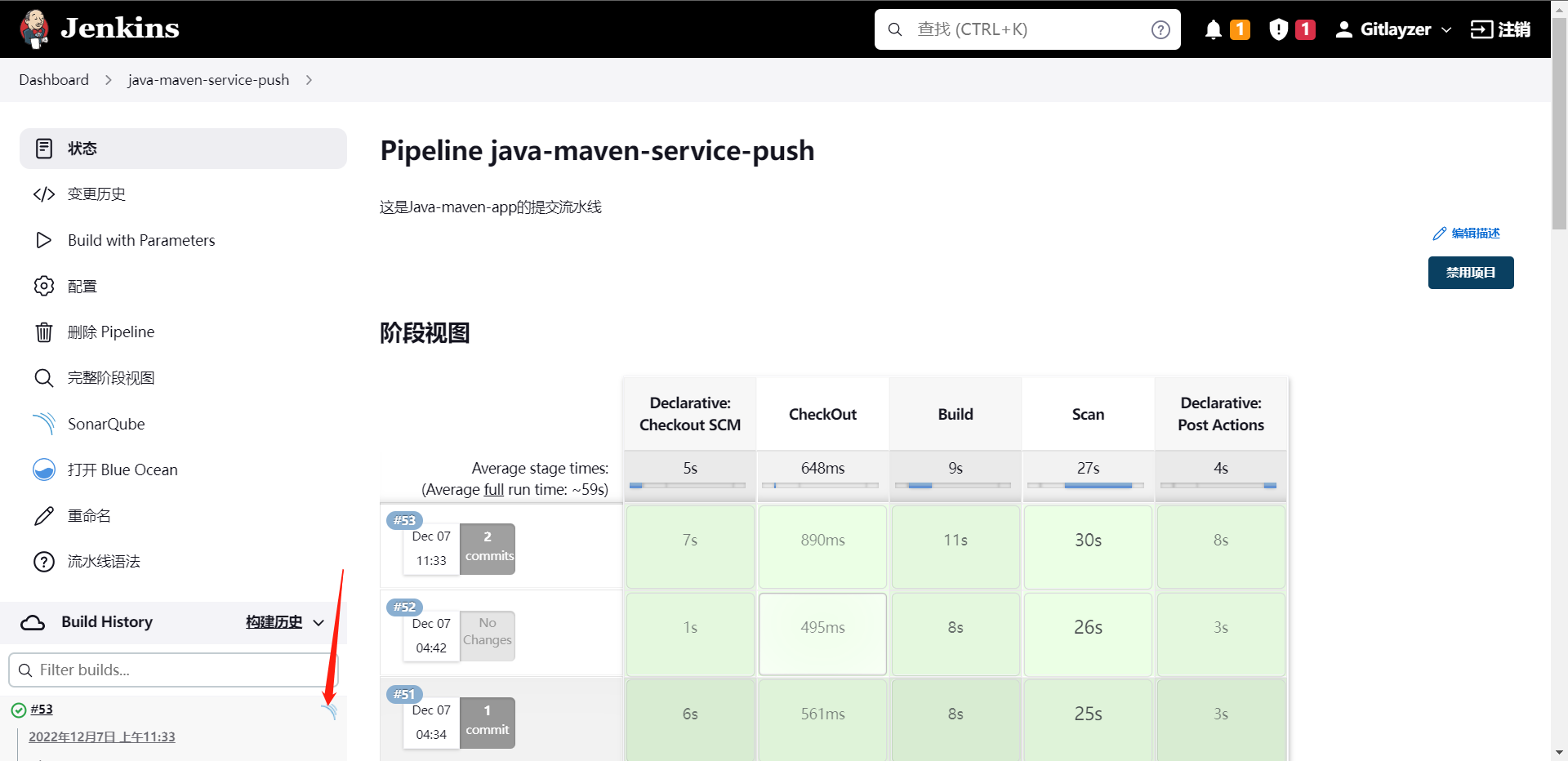

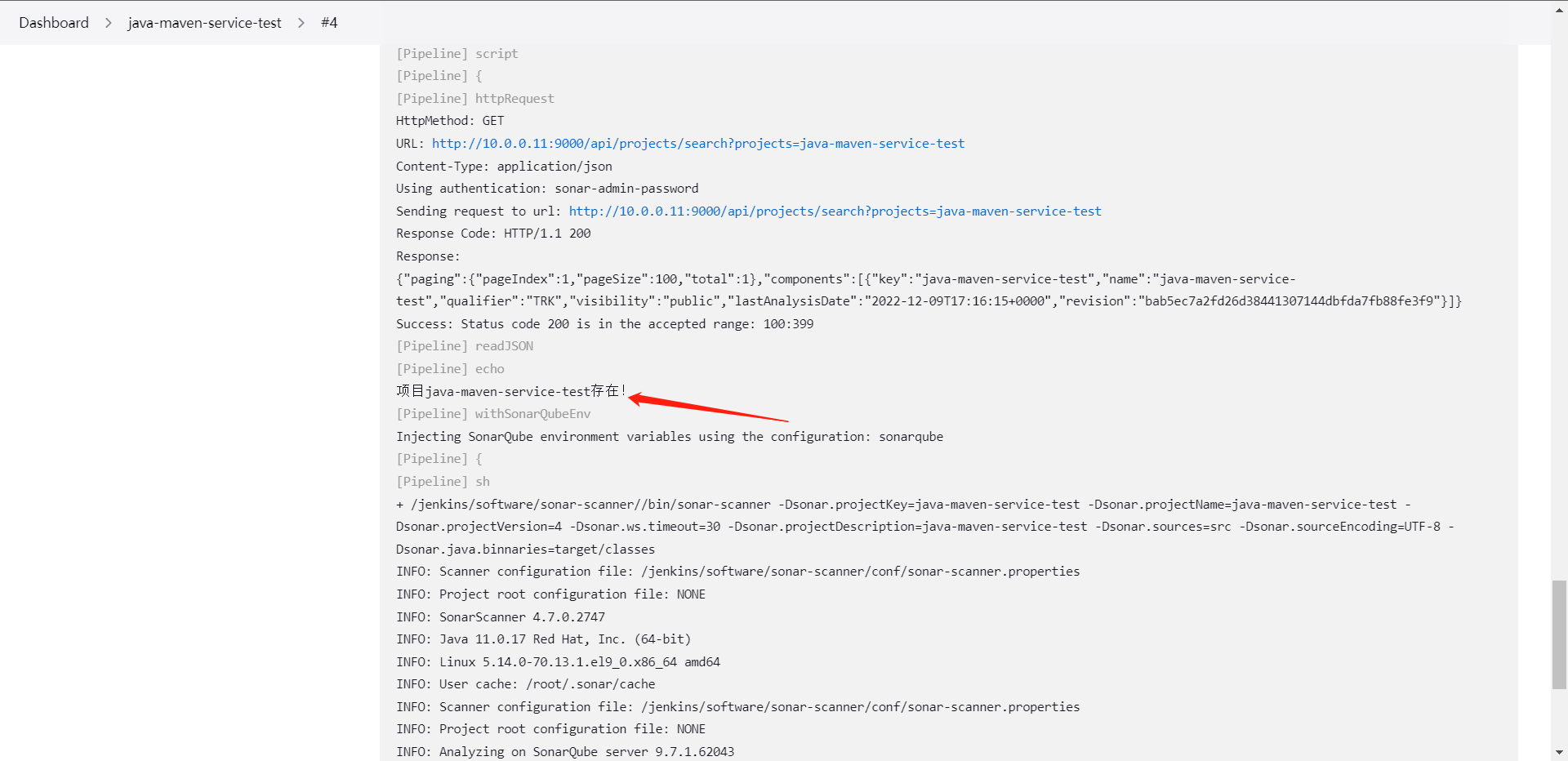

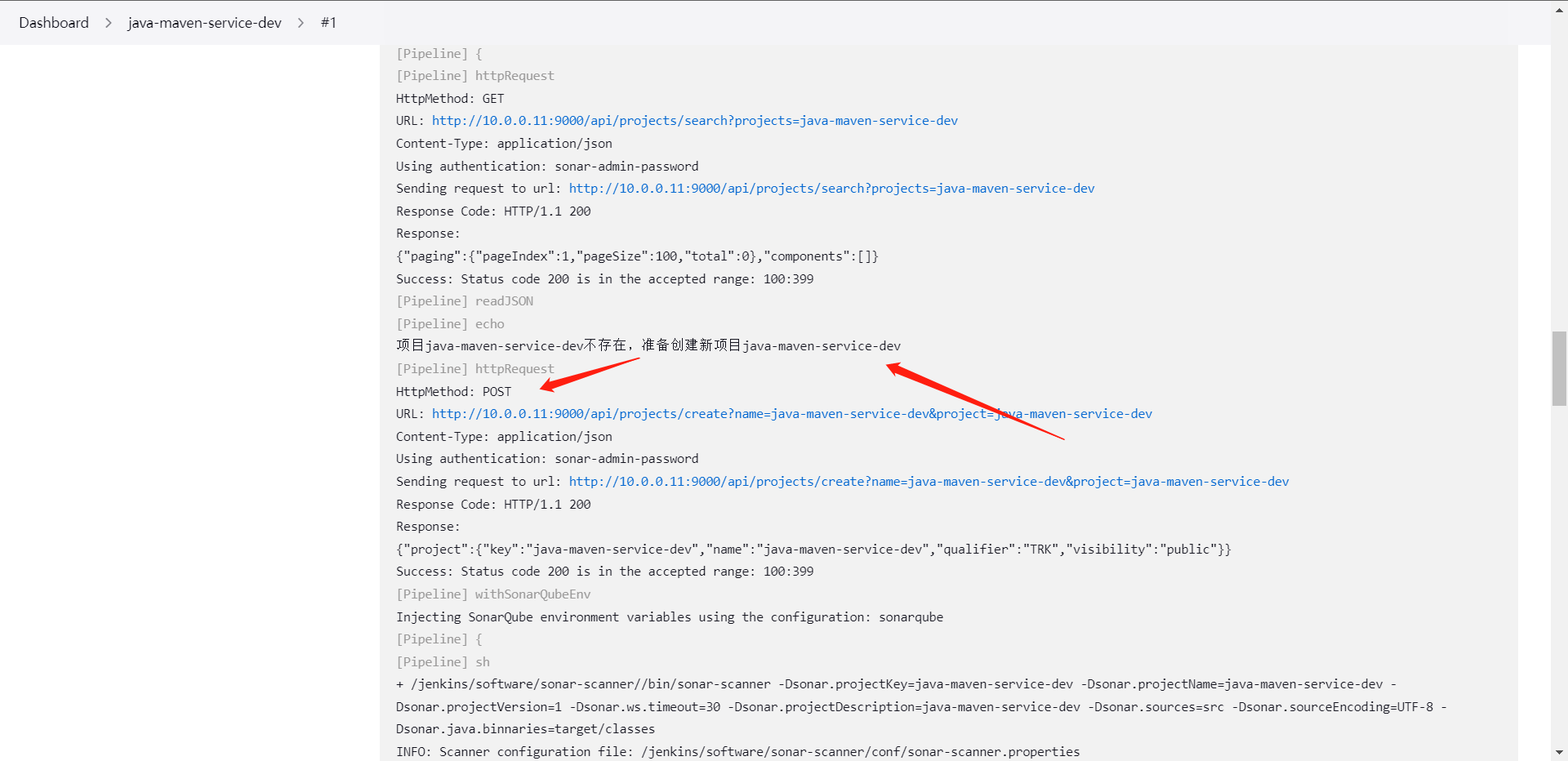

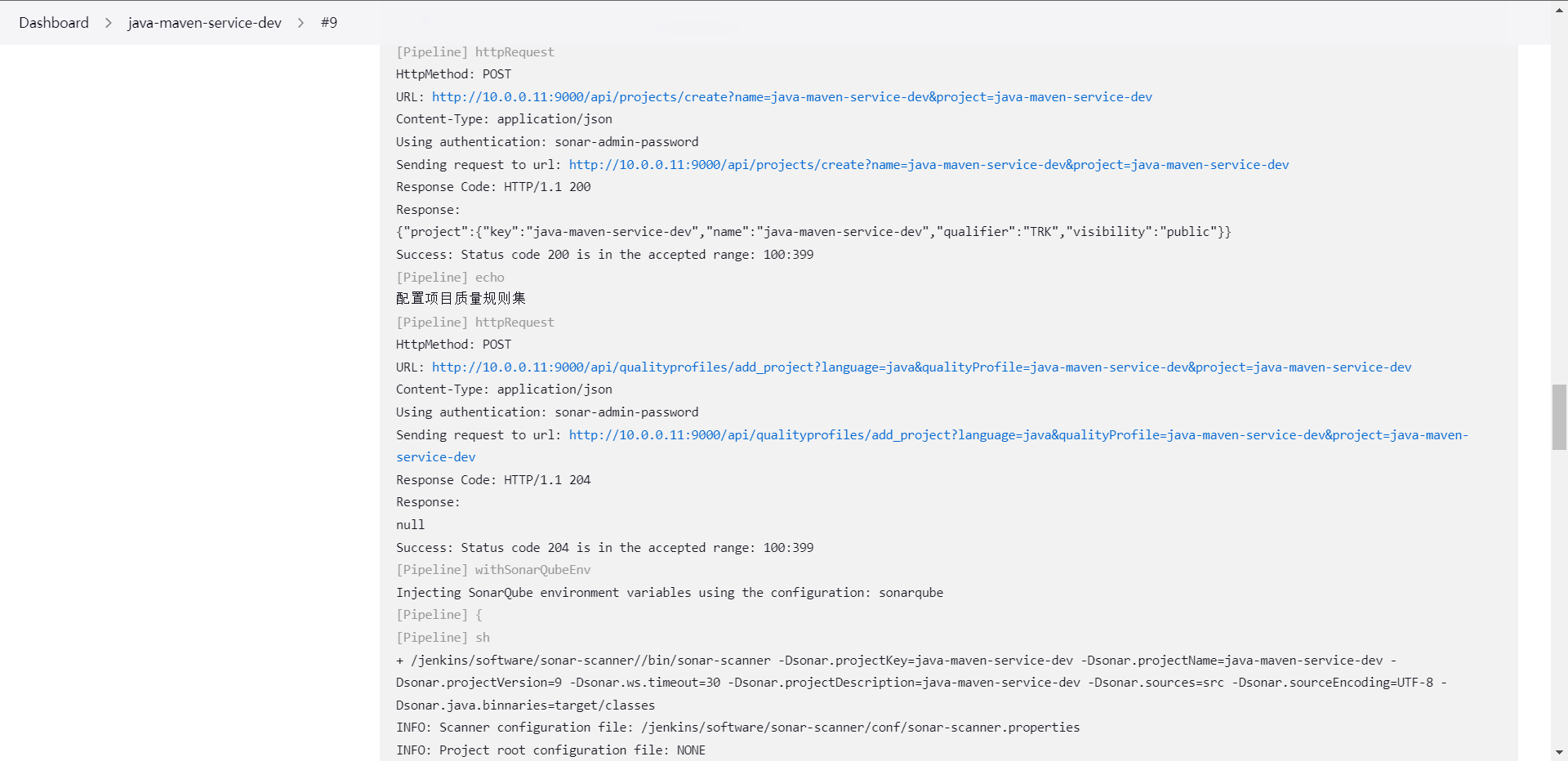

7: Integrating automated scanning into the pipeline

So far we have only tested the scan locally. The goal, however, is to wire it into the Pipeline, and there are two ways to do that:

1: The SonarQube plugin

2: A ShareLibrary (shared library)

Here we integrate it through the ShareLibrary.

[root@cdk-server library]# touch sonarqube.groovy

package org.library

// Code scanning

def SonarScan(projectName,projectDescription,projectPath,version){

def scannerHome = "/jenkins/software/sonar-scanner/"

def sonarServer = "http://10.0.0.11:9000"

sh """

${scannerHome}/bin/sonar-scanner -Dsonar.host.url=${sonarServer} \

-Dsonar.projectKey=${projectName} \

-Dsonar.projectName=${projectName} \

-Dsonar.projectVersion=${version} \

-Dsonar.login=admin \

-Dsonar.password=xxzx@123 \

-Dsonar.ws.timeout=30 \

-Dsonar.projectDescription="${projectDescription}" \

-Dsonar.sources="${projectPath}" \

-Dsonar.sourceEncoding=UTF-8 \

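-Dsonar.java.binaries=target/classes

# The sample project has no tests, so the test options stay commented out. If your project has tests, uncomment them and end the binaries line above with a line continuation.

# -Dsonar.java.test.binaries=target/test-classes \

# -Dsonar.java.surefire.report=target/surefire-reports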

"""

}

[root@cdk-server library]# git add .

[root@cdk-server library]# git commit -m "add sonarqube code"

[master e76e207] add sonarqube code

1 file changed, 21 insertions(+)

create mode 100644 src/org/library/sonarqube.groovy

[root@cdk-server library]# git push origin master

Enumerating objects: 10, done.

Counting objects: 100% (10/10), done.

Delta compression using up to 2 threads

Compressing objects: 100% (4/4), done.

Writing objects: 100% (6/6), 772 bytes | 772.00 KiB/s, done.

Total 6 (delta 2), reused 0 (delta 0), pack-reused 0

remote: Resolving deltas: 100% (2/2), completed with 2 local objects.

To https://github.com/gitlayzer/package-ci.git

6ff8ea0..e76e207 master -> master
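The library above passes the admin password in plain text. A user token can go in `sonar.login` on its own, with no `sonar.password`. The sketch below expresses the same scanner call as a shell function that reads the token from `SONAR_TOKEN`. Some details are assumptions for illustration and not part of the original library:
- how that variable gets injected (for example, Jenkins `withCredentials`);
- the overridable `SONAR_SCANNER` and `SONAR_SERVER` variables;
- the function name.

```shell
#!/usr/bin/env bash
# Shell sketch of the SonarScan call using a token instead of a password.
# Assumptions: SONAR_TOKEN is injected by Jenkins; SONAR_SCANNER and
# SONAR_SERVER default to the values used in sonarqube.groovy.
sonar_scan() {
  local project_name="$1" description="$2" sources="$3" version="$4"
  local scanner="${SONAR_SCANNER:-/jenkins/software/sonar-scanner/bin/sonar-scanner}"
  local server="${SONAR_SERVER:-http://10.0.0.11:9000}"
  if [ -z "${SONAR_TOKEN:-}" ]; then
    echo "SONAR_TOKEN is not set" >&2
    return 1
  fi
  # A token goes in sonar.login by itself; no sonar.password is needed.
  "$scanner" \
    -Dsonar.host.url="$server" \
    -Dsonar.projectKey="$project_name" \
    -Dsonar.projectName="$project_name" \
    -Dsonar.projectVersion="$version" \
    -Dsonar.login="$SONAR_TOKEN" \
    -Dsonar.ws.timeout=30 \
    -Dsonar.projectDescription="$description" \
    -Dsonar.sources="$sources" \
    -Dsonar.sourceEncoding=UTF-8 \
    -Dsonar.java.binaries=target/classes
}

# Usage, mirroring the Scan stage:
#   sonar_scan "$JOB_NAME" "$JOB_NAME" src "$BUILD_ID"
```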

@Library("jenkinslibrary@master") _

def build = new org.library.build()

def deploy = new org.library.deploy()

def gitlab = new org.library.gitlab()

def toemail = new org.library.toemail()

def sonar = new org.library.sonarqube()

String buildShell = "${env.buildShell}"

String buildType = "${env.buildType}"

String gitlab_url = "${env.gitlab_url}"

String branchName = "${env.branchName}"

if ("${runOps}" == "GitlabPush") {

branchName = branch - "refs/heads/"

currentBuild.description = "Trigger By ${userName} ${branchName}"

gitlab.ChangeCommitStatus(projectId,commitSha,'running')

}

pipeline {

agent {

node {

label "build-1"

}

}

stages{

stage("CheckOut") {

steps {

script{

println("${branchName}")

checkout([$class: 'GitSCM', branches: [[name: "${branchName}"]], extensions: [], userRemoteConfigs: [[credentialsId: 'gitlab', url: "${gitlab_url}"]]])

}

}

}

stage("Build") {

steps {

script{

build.Build(buildType, buildShell)

}

}

}

stage("Scan") {

steps {

script{

sonar.SonarScan("${JOB_NAME}","${JOB_NAME}","src","${BUILD_ID}")

}

}

}

}

post {

success {

script {

println("Success!!!")

gitlab.ChangeCommitStatus(projectId,commitSha,'success')

toemail.Email("构建流水线成功",userEmail)

}

}

}

}

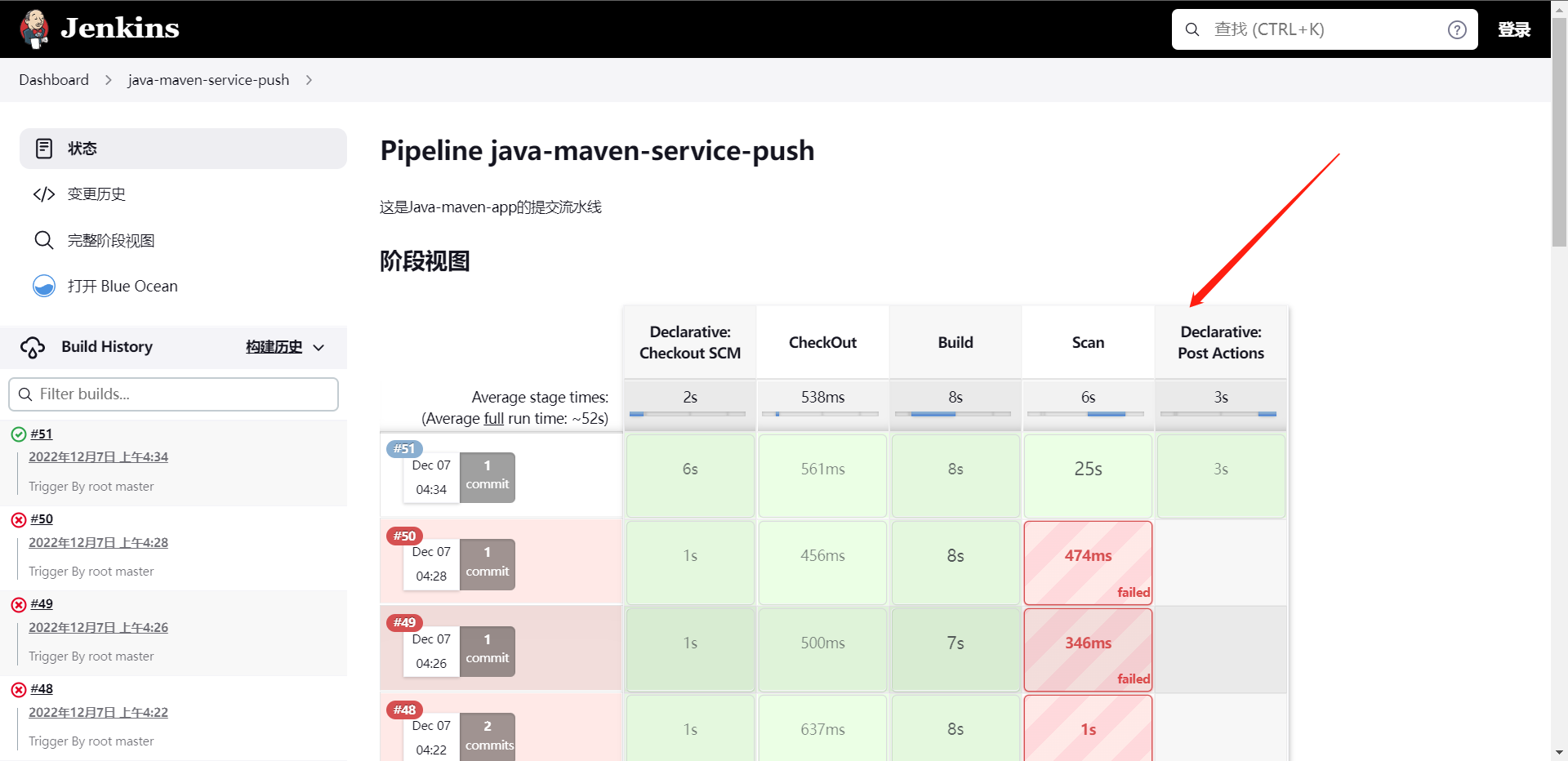

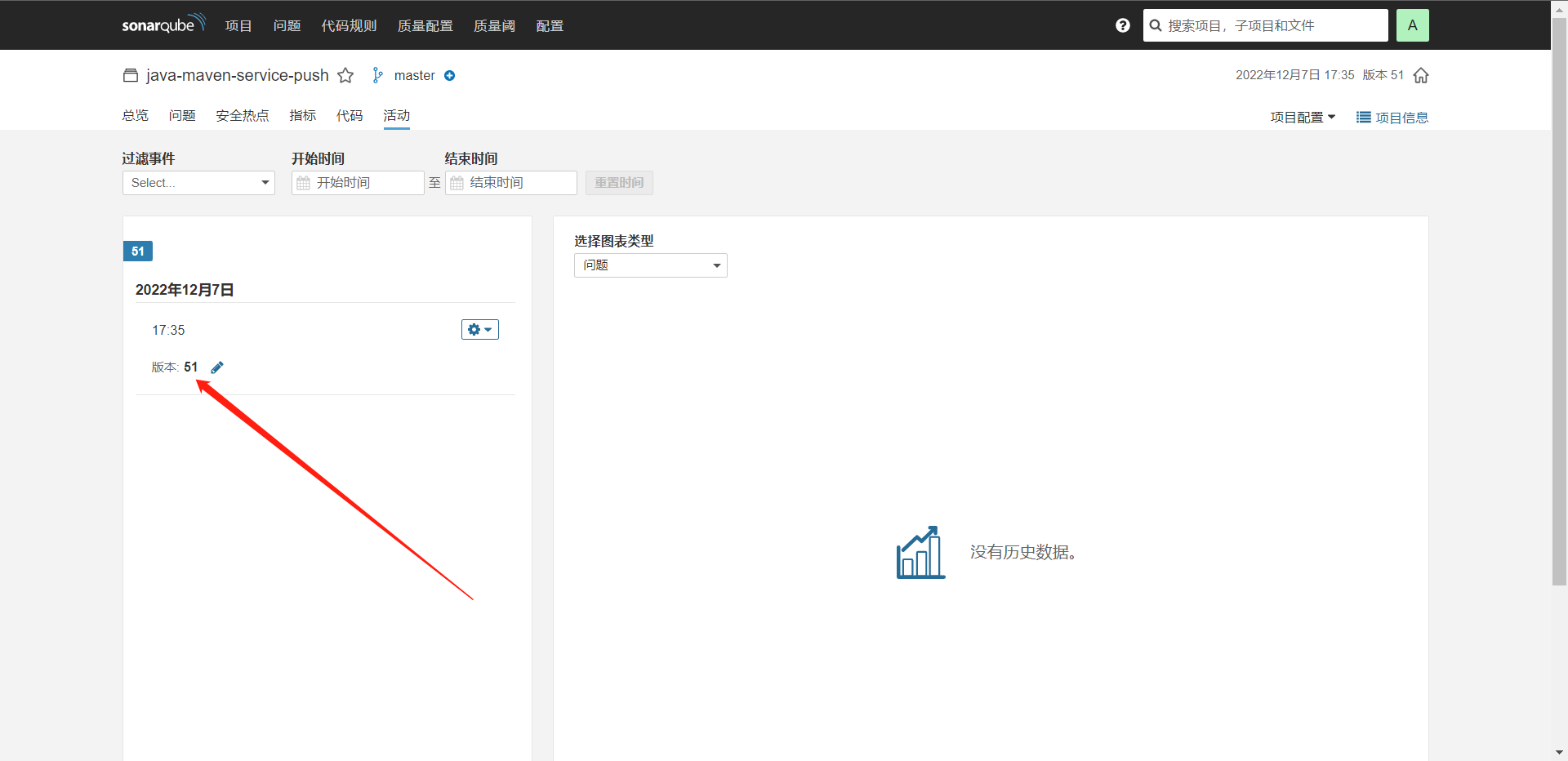

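The code scan now passes as well.

This is the version we defined (`${BUILD_ID}`, passed as `sonar.projectVersion`). That is essentially how the scan fits into the pipeline.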

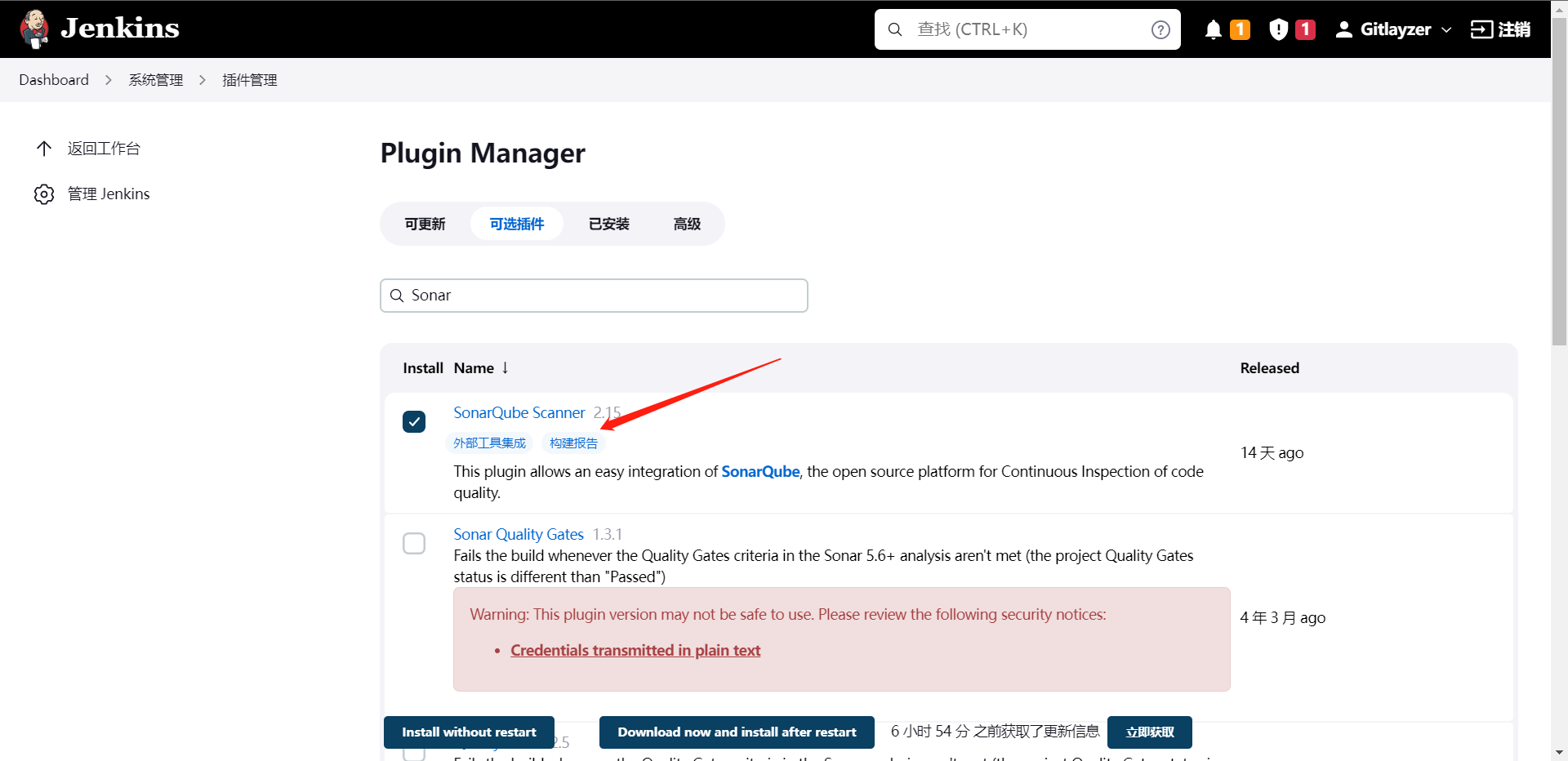

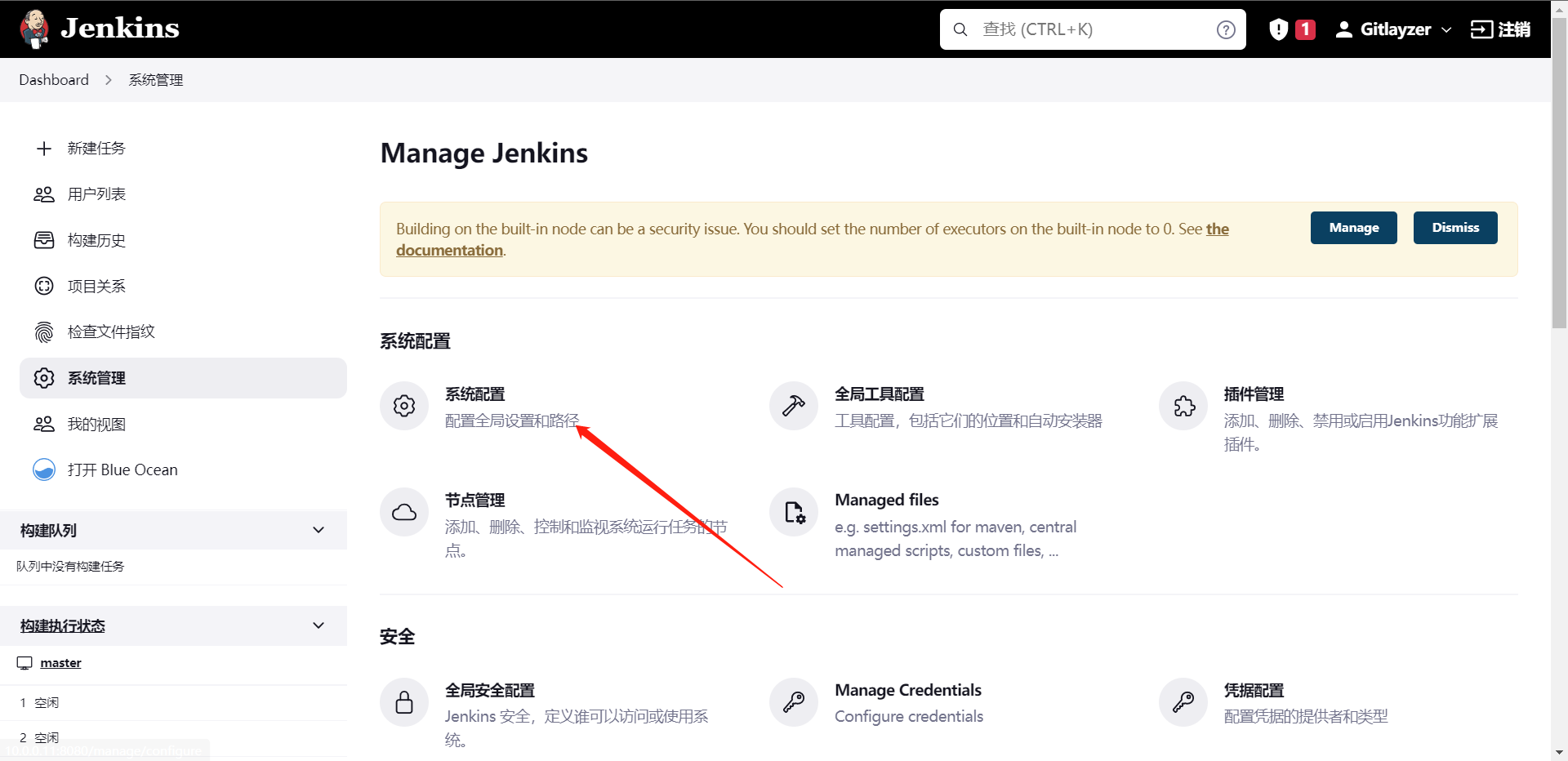

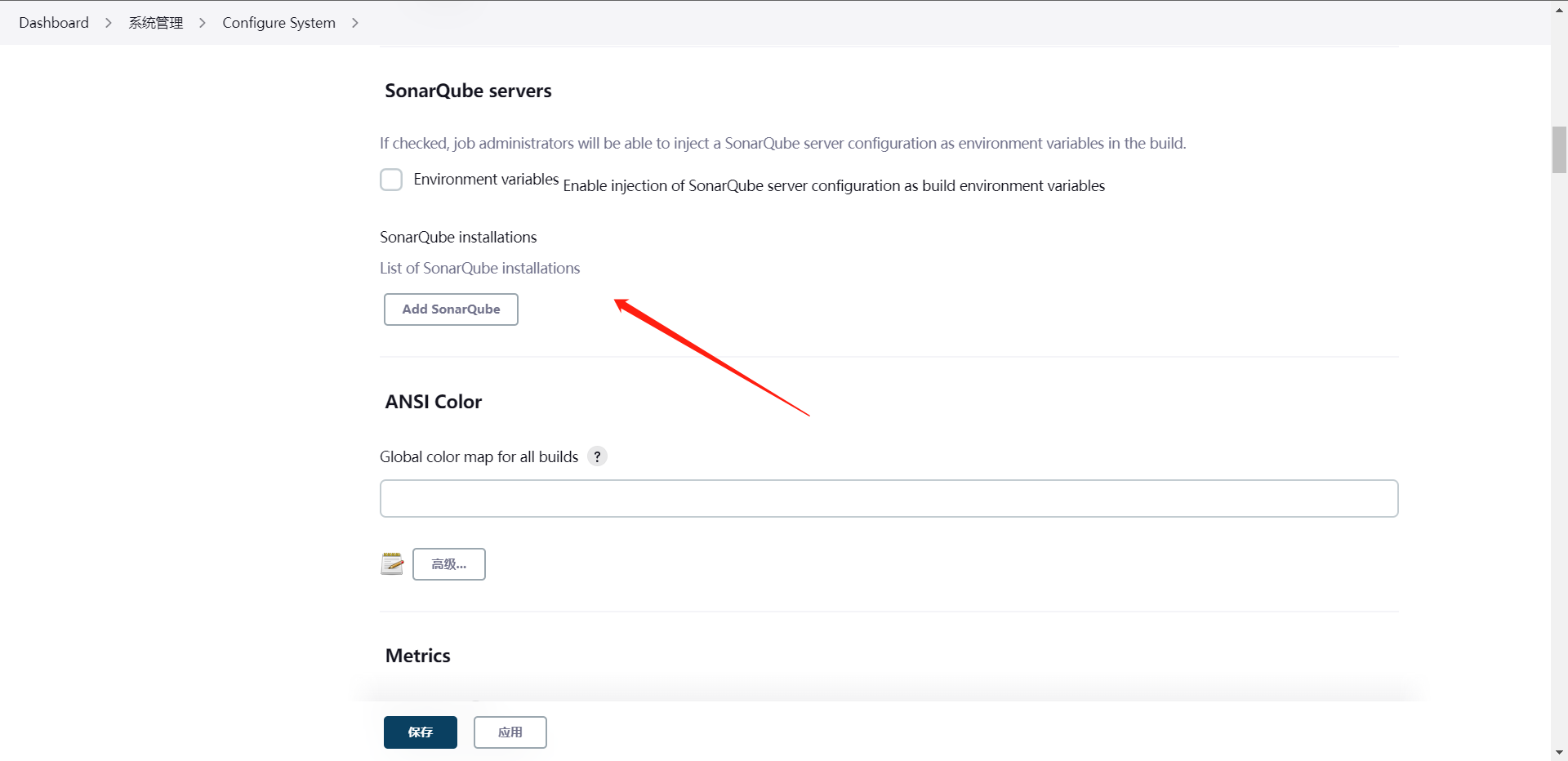

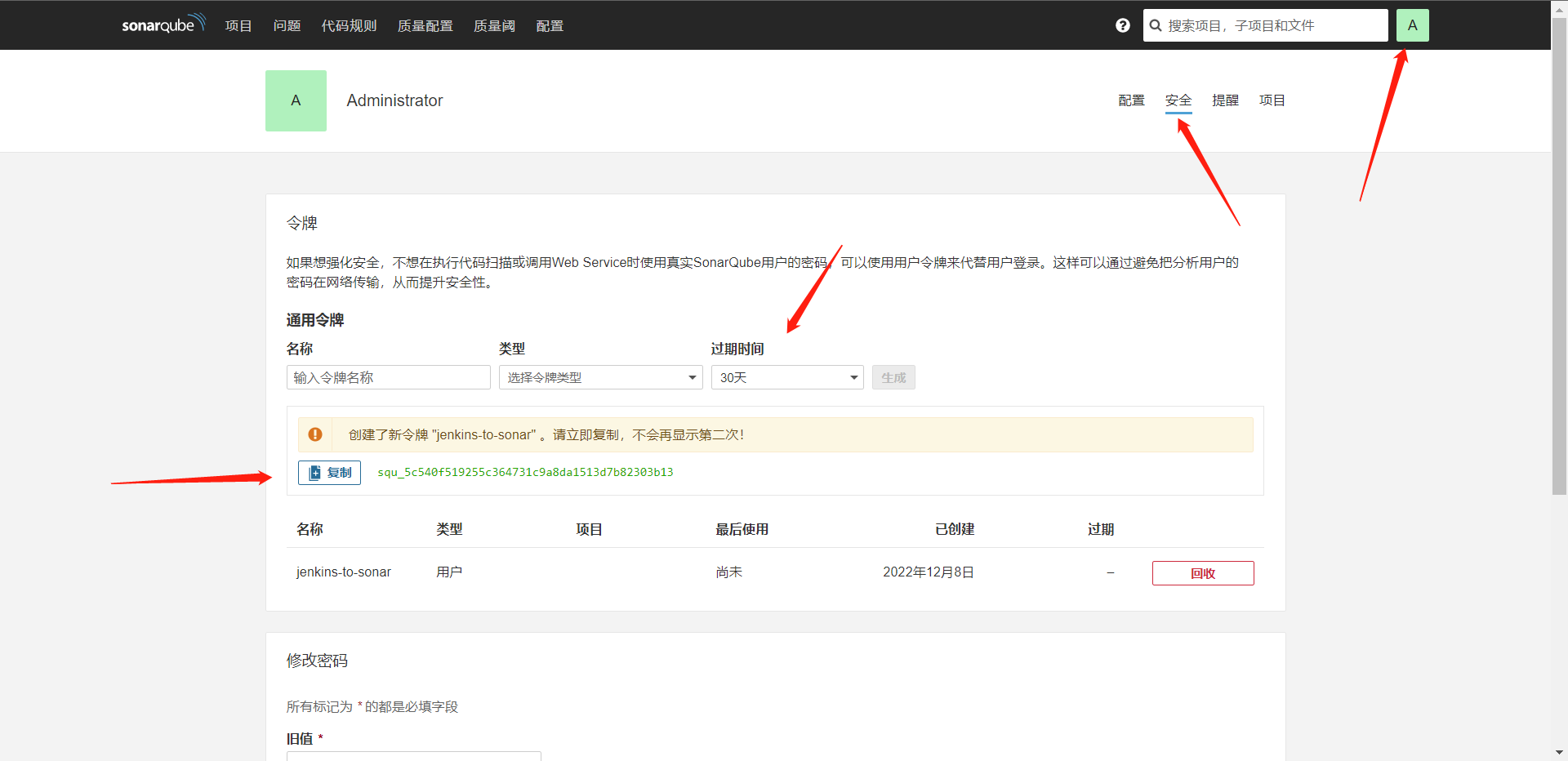

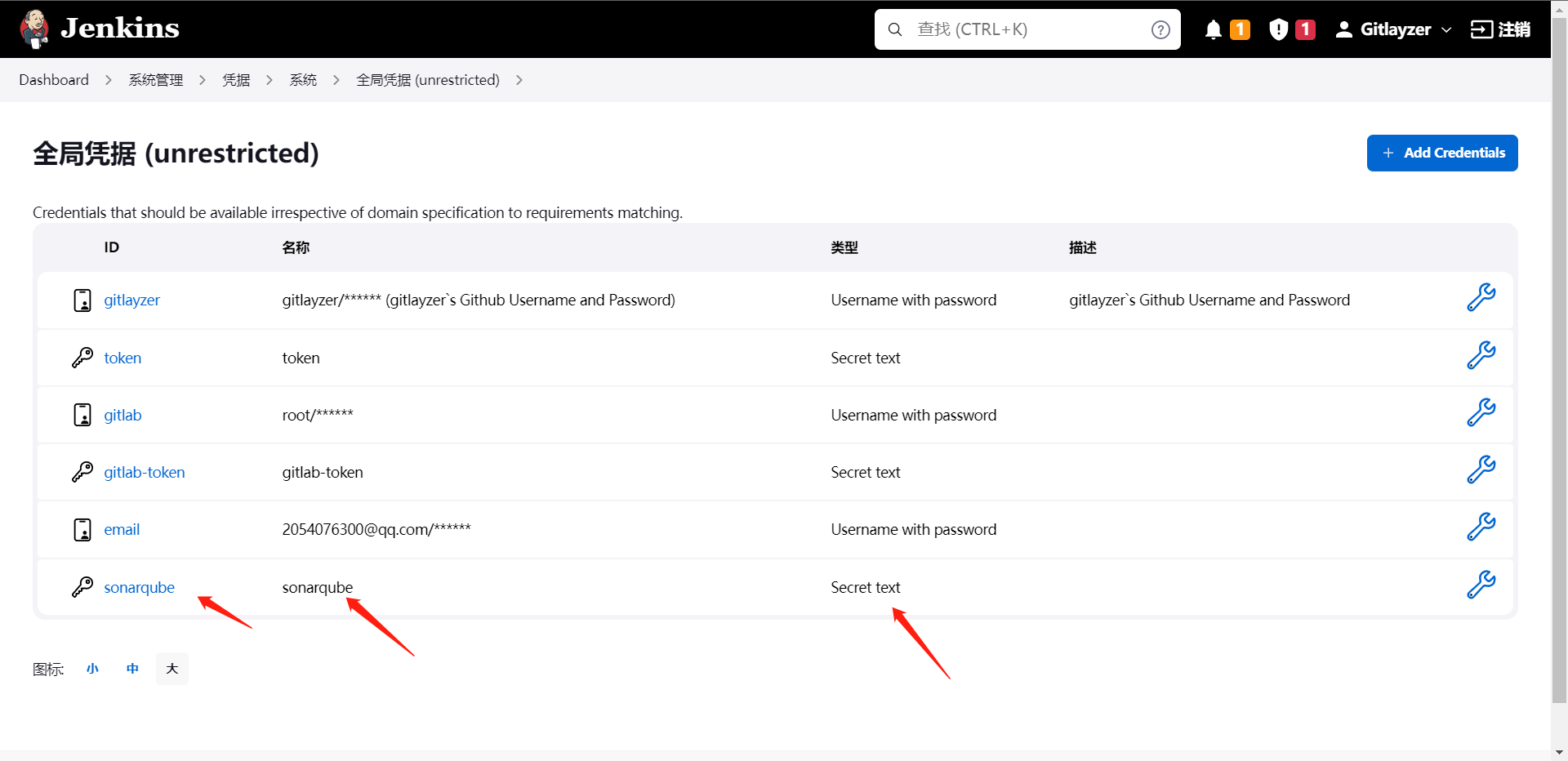

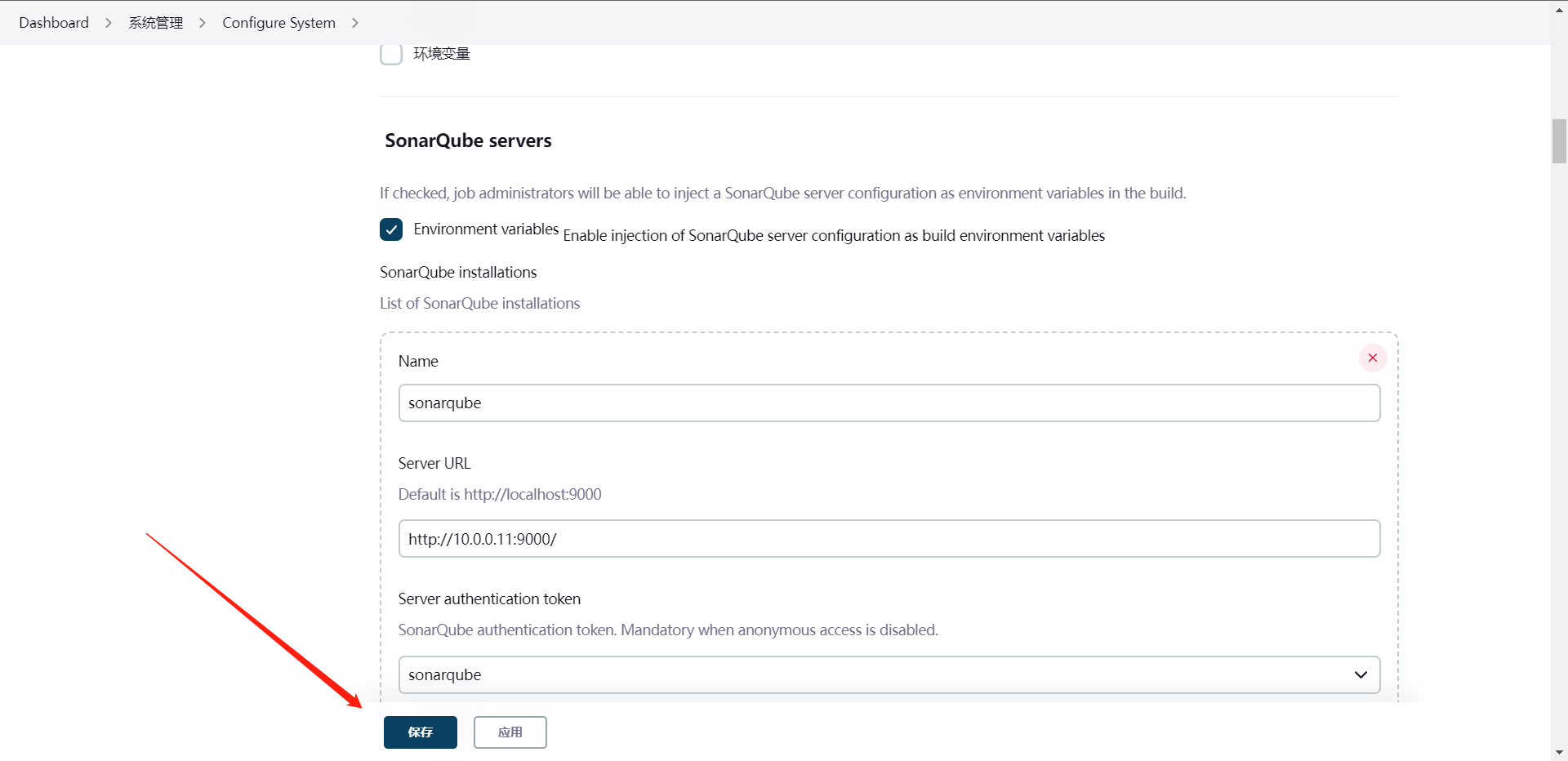

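8: Using the Jenkins SonarQube plugin

With the SonarQube Scanner plugin for Jenkins, you configure the SonarQube server once in Jenkins (here under the name `sonarqube`). `withSonarQubeEnv("sonarqube")` then supplies its URL and credentials to the steps inside it. The shared library can therefore drop the `-Dsonar.host.url`, `-Dsonar.login` and `-Dsonar.password` options: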

package org.library

def SonarScan(projectName,projectDescription,projectPath,version){

withSonarQubeEnv("sonarqube"){

def scannerHome = "/jenkins/software/sonar-scanner/"

sh """

${scannerHome}/bin/sonar-scanner -Dsonar.projectKey=${projectName} \

-Dsonar.projectName=${projectName} \

-Dsonar.projectVersion=${version} \

-Dsonar.ws.timeout=30 \

-Dsonar.projectDescription="${projectDescription}" \

-Dsonar.sources="${projectPath}" \

-Dsonar.sourceEncoding=UTF-8 \

-Dsonar.java.binaries=target/classes

"""

}

}

[root@cdk-server library]# git add .

[root@cdk-server library]# git commit -m "edit sonarqube code"

[master df2667e] edit sonarqube code

1 file changed, 17 insertions(+), 16 deletions(-)

rewrite src/org/library/sonarqube.groovy (88%)

[root@cdk-server library]# git push origin master

Enumerating objects: 11, done.

Counting objects: 100% (11/11), done.

Delta compression using up to 2 threads

Compressing objects: 100% (4/4), done.

Writing objects: 100% (6/6), 499 bytes | 166.00 KiB/s, done.

Total 6 (delta 3), reused 0 (delta 0), pack-reused 0

remote: Resolving deltas: 100% (3/3), completed with 3 local objects.

To https://github.com/gitlayzer/package-ci.git

de7c9bc..df2667e master -> master
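Inside `withSonarQubeEnv`, the plugin exports the configured server as environment variables. As far as I know these include `SONAR_HOST_URL`, `SONAR_AUTH_TOKEN` and `SONARQUBE_SCANNER_PARAMS`, and sonar-scanner reads the last one. That is why the URL and login options could be removed. The hypothetical preflight check below would run at the start of the `sh` block. It makes a wrong server name fail with a clear message, instead of letting the scanner fall back to its default `http://localhost:9000`.

```shell
#!/usr/bin/env bash
# Preflight for the sh step inside withSonarQubeEnv("sonarqube").
# Assumption: the plugin exports SONAR_HOST_URL and SONARQUBE_SCANNER_PARAMS
# (plus SONAR_AUTH_TOKEN when a token is configured).
check_sonar_env() {
  local missing=0 var
  for var in SONAR_HOST_URL SONARQUBE_SCANNER_PARAMS; do
    # ${!var} is bash indirect expansion: the value of the variable named $var.
    if [ -z "${!var}" ]; then
      echo "missing $var: is this step running inside withSonarQubeEnv?" >&2
      missing=1
    fi
  done
  [ "$missing" -eq 0 ] && echo "SonarQube server: $SONAR_HOST_URL"
  return "$missing"
}
```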

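Clicking this small icon jumps straight to the project's page in SonarQube. That is the visible difference between using the plugin and not using it.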

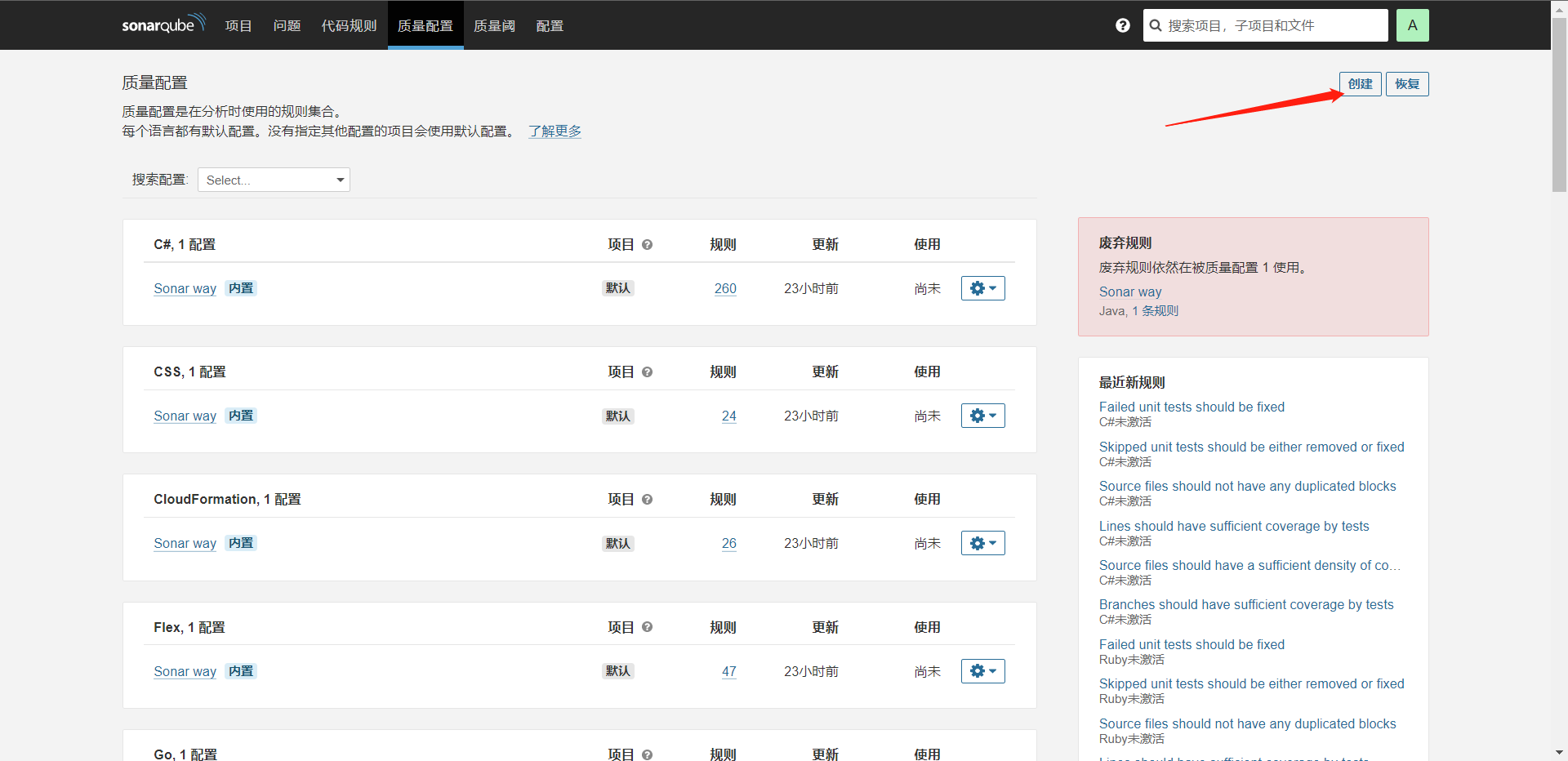

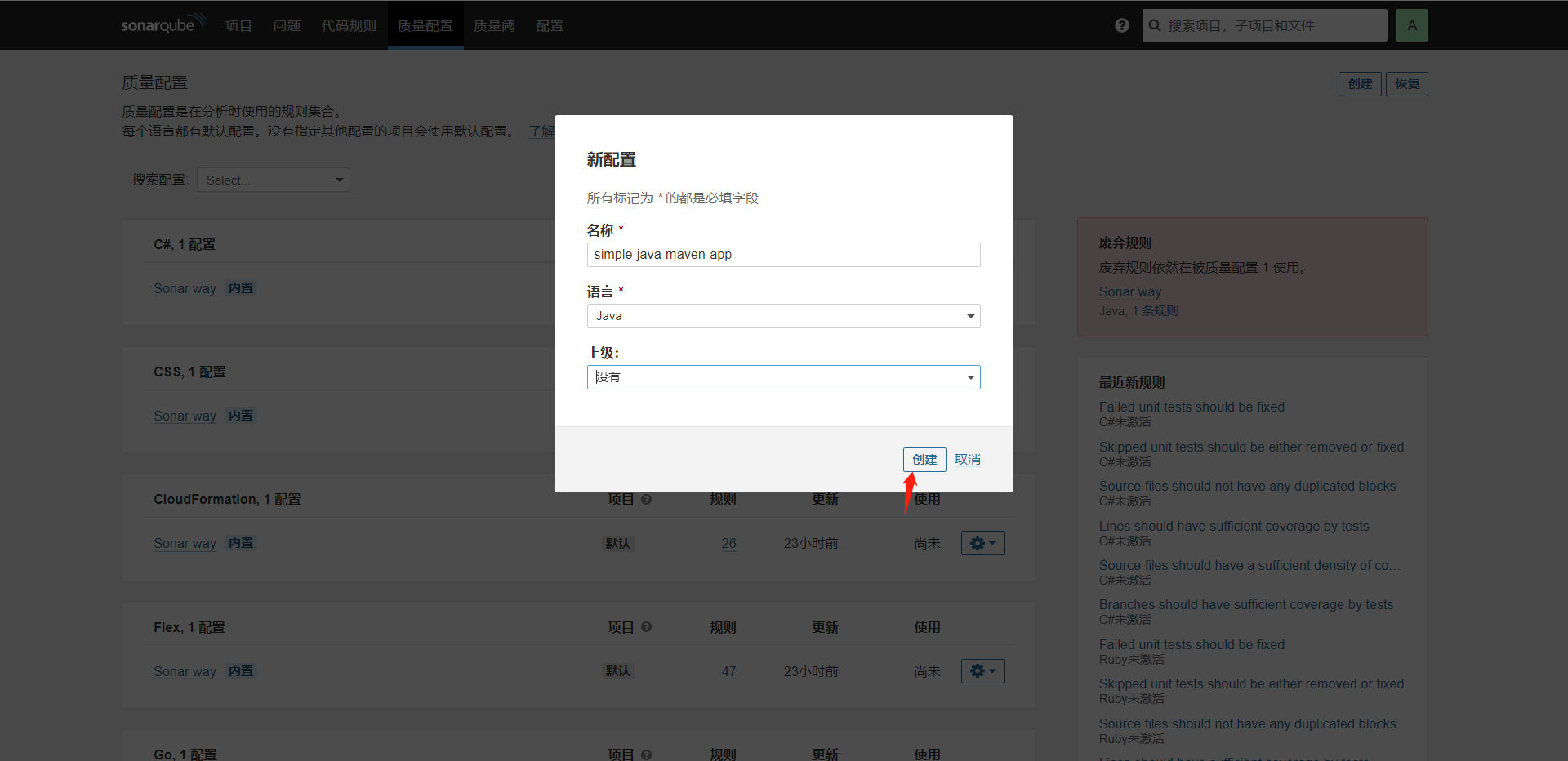

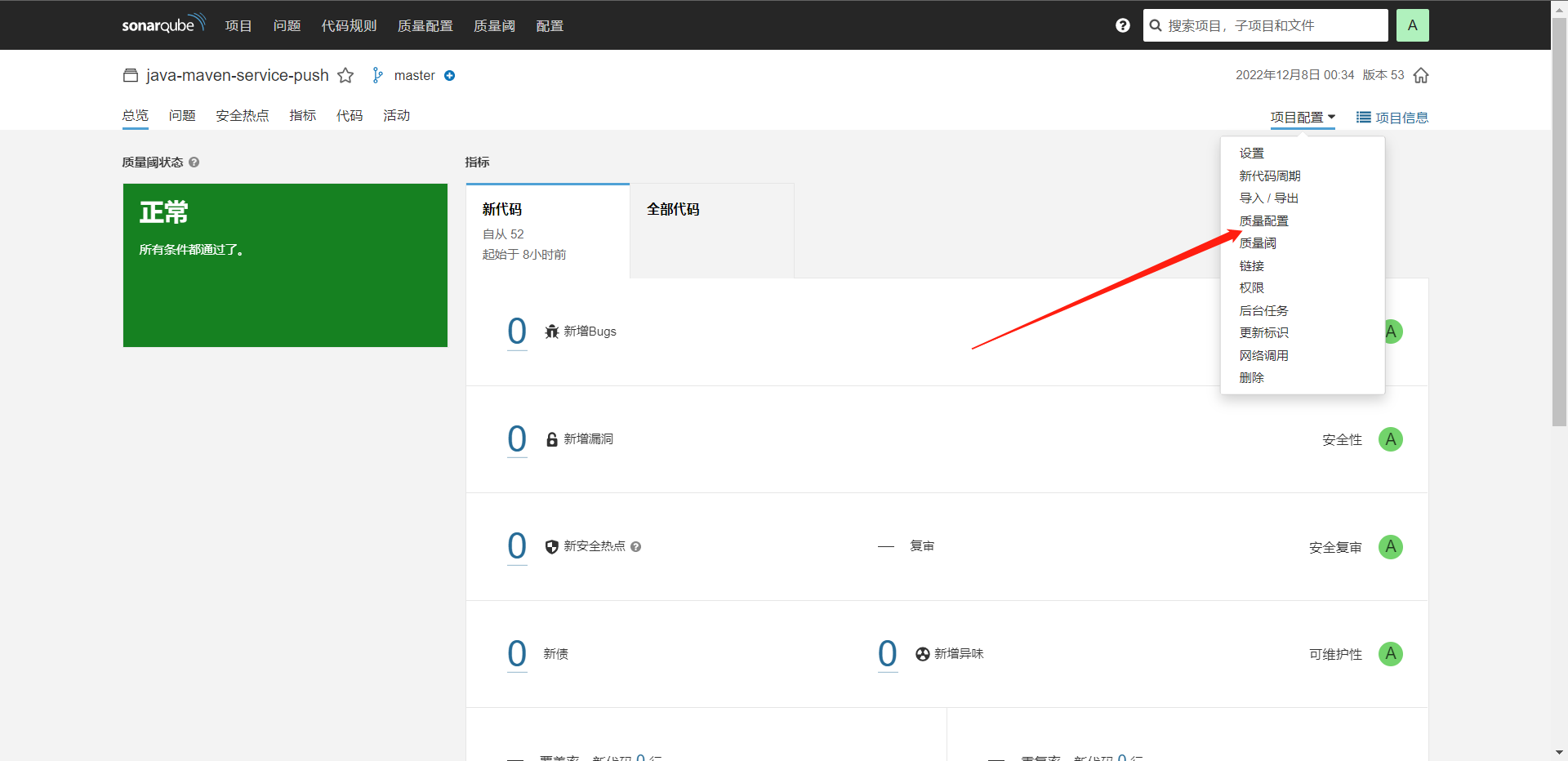

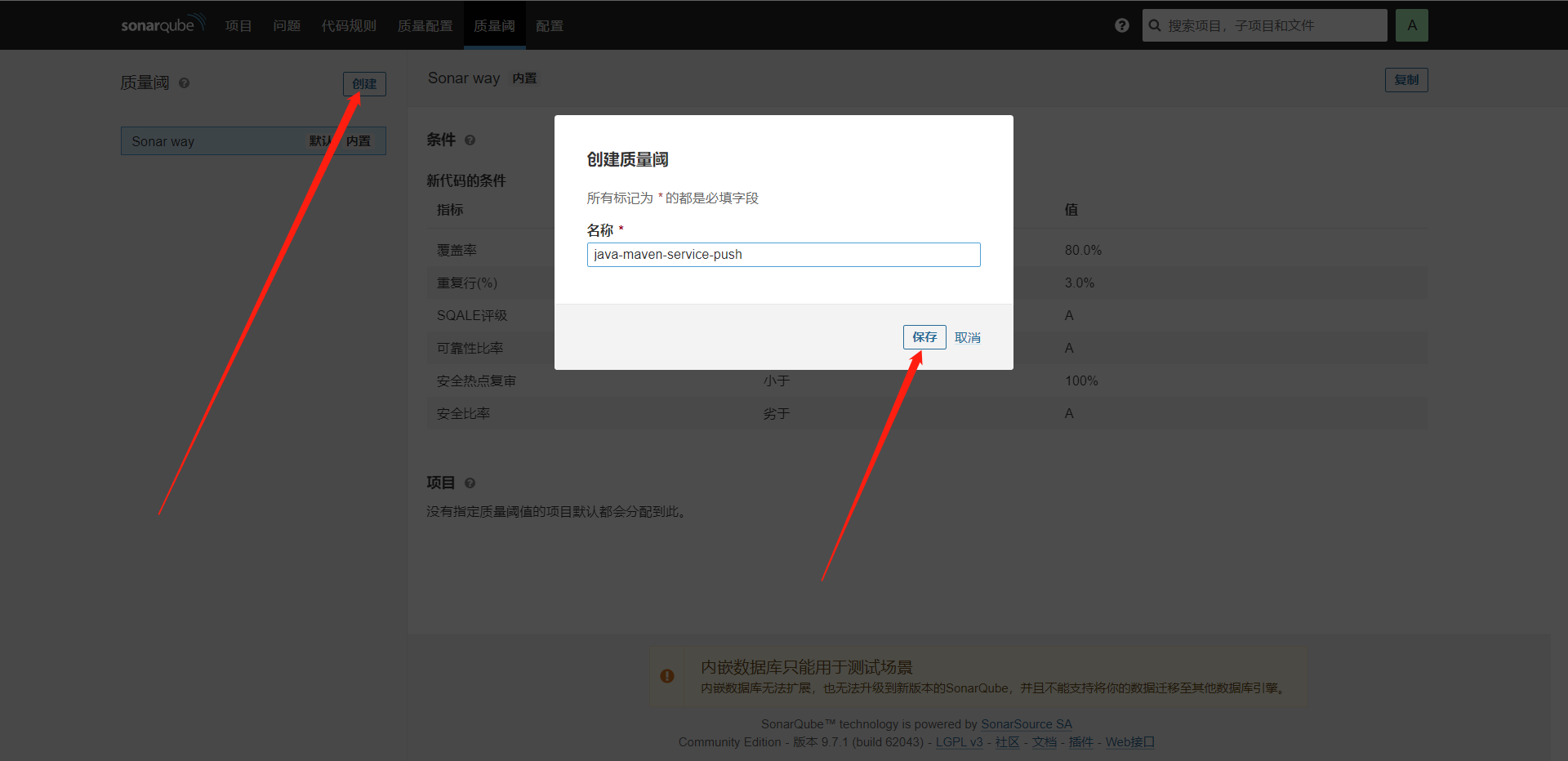

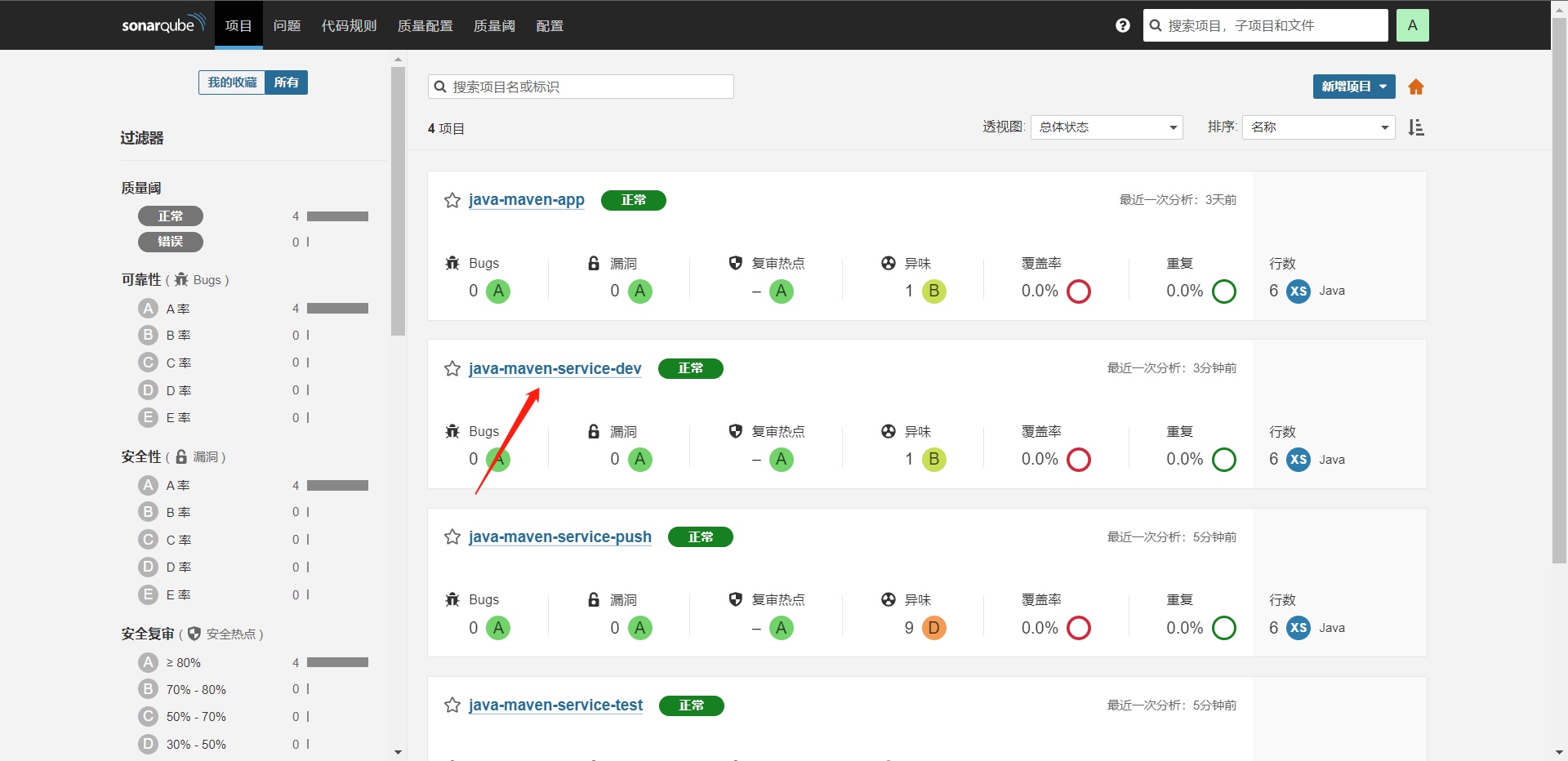

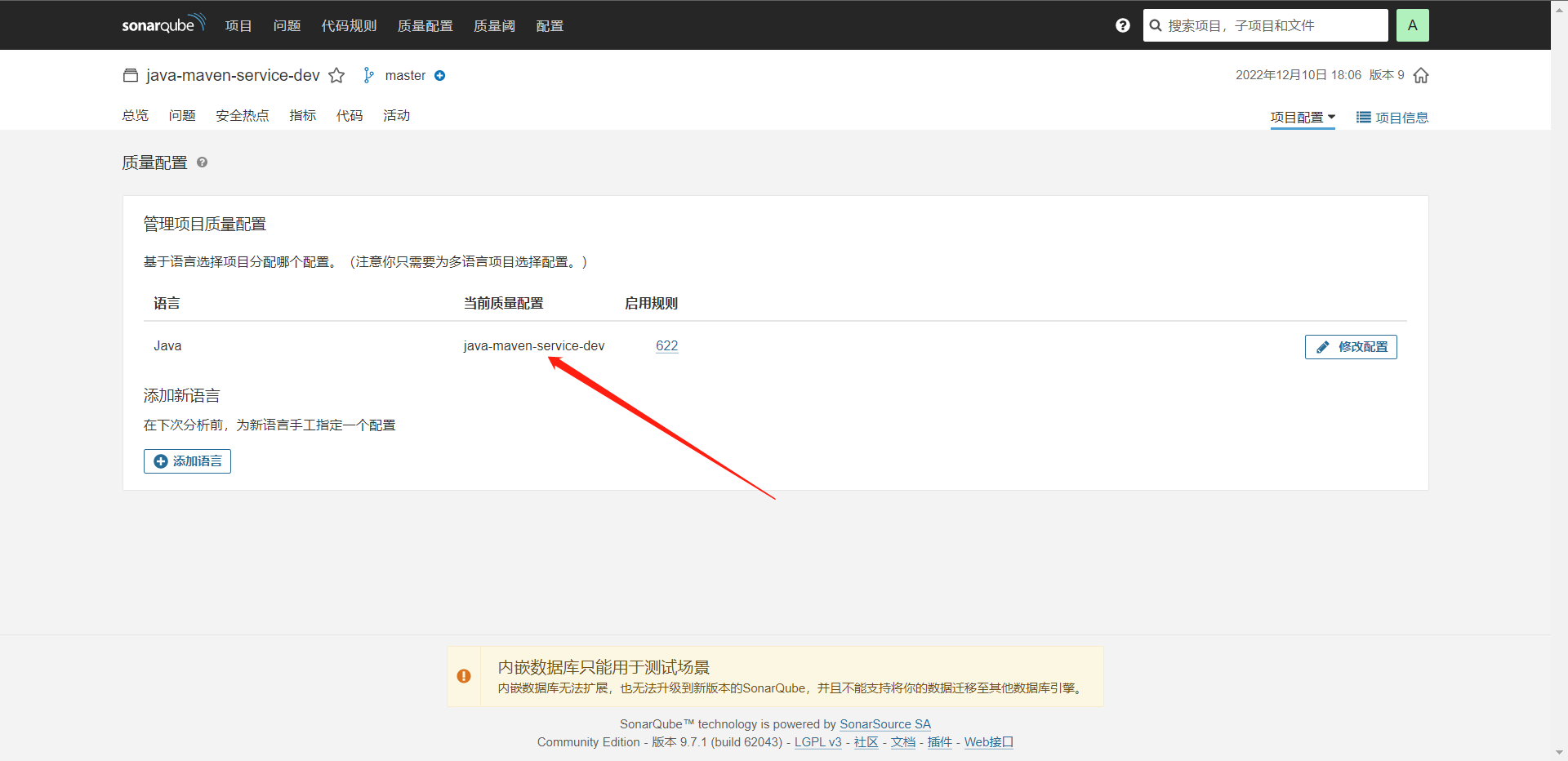

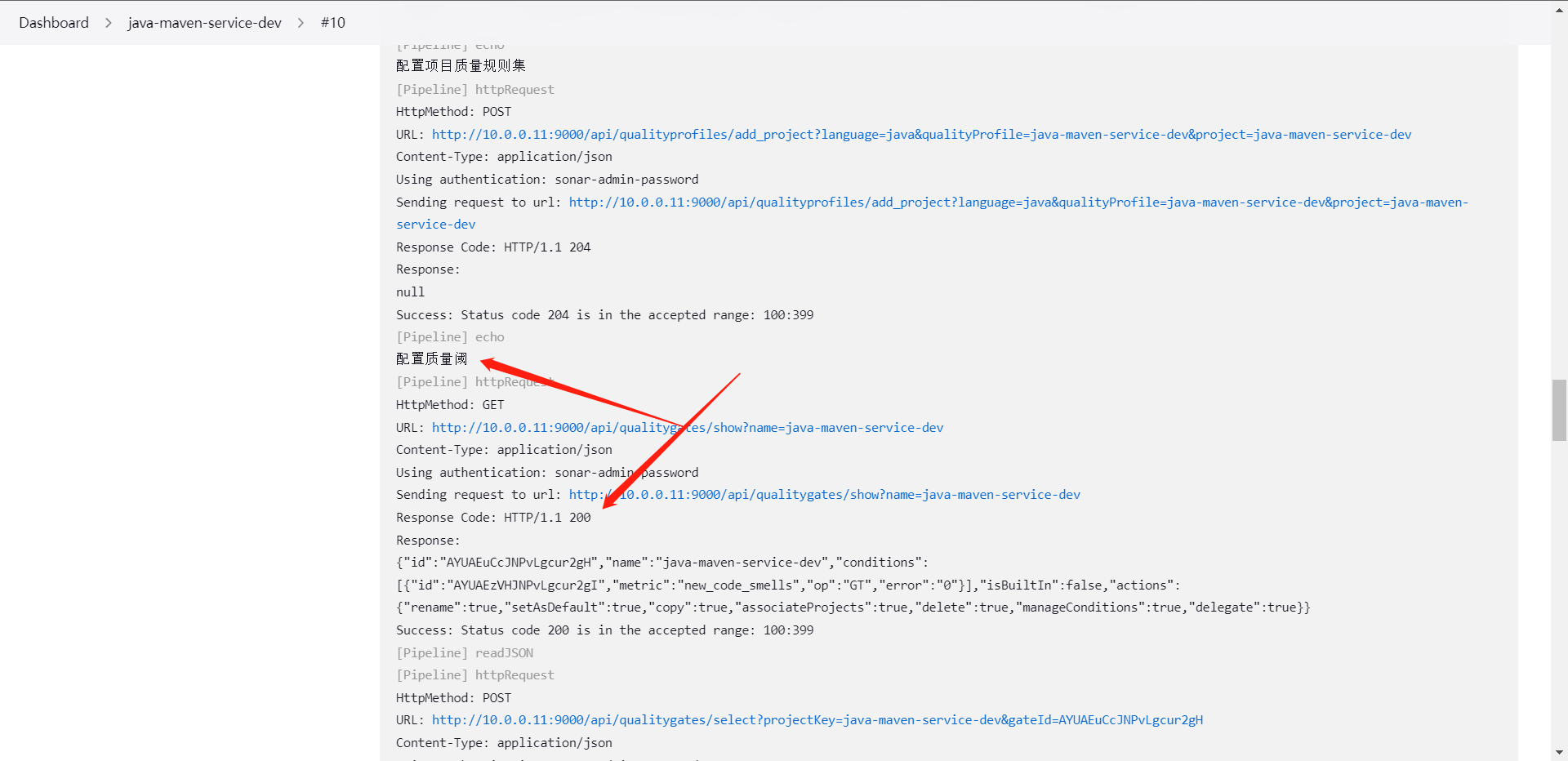

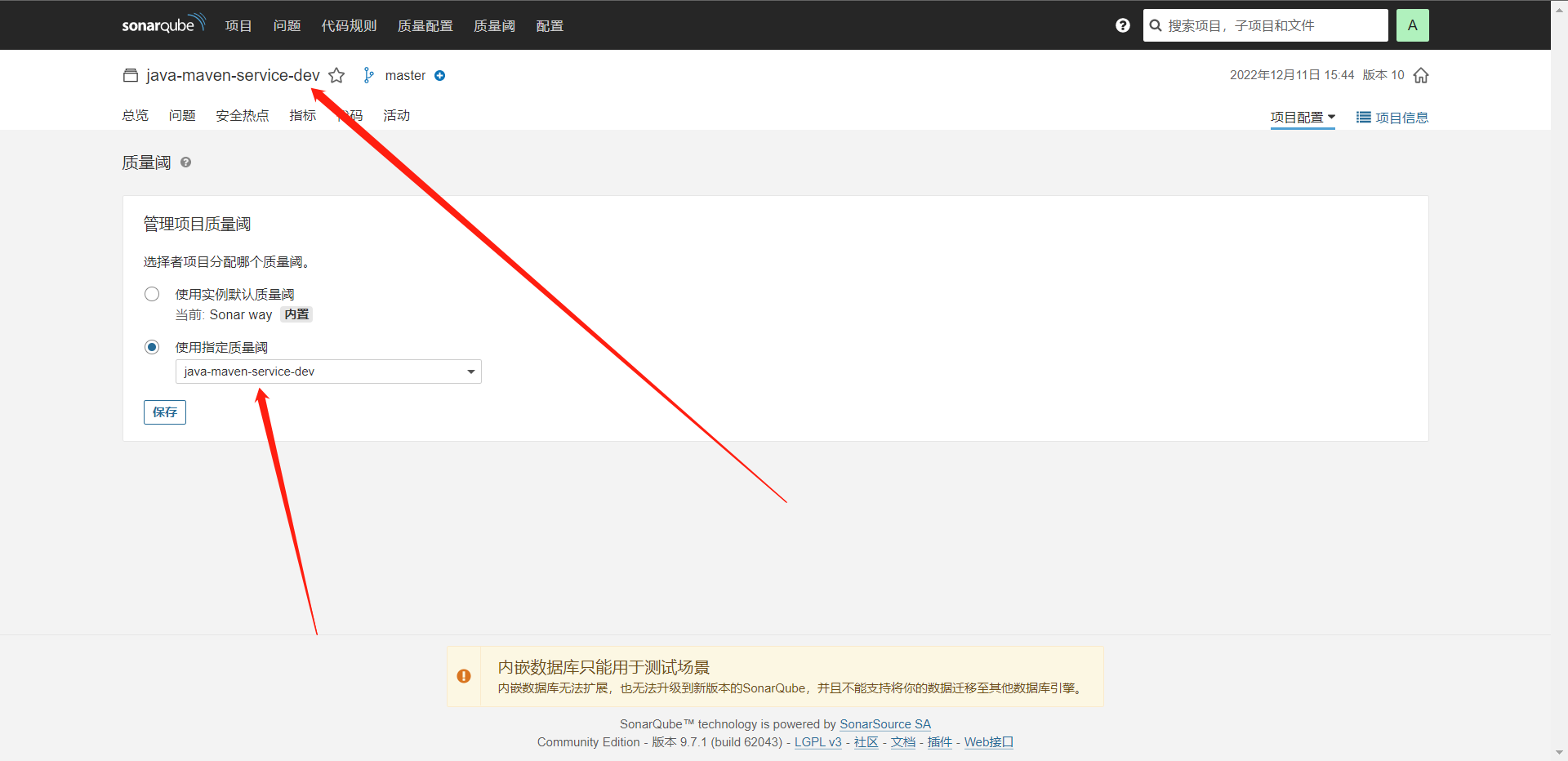

9: SonarQube project management

As the number of projects grows, so does the number of bugs. How do we manage these projects and their bugs?

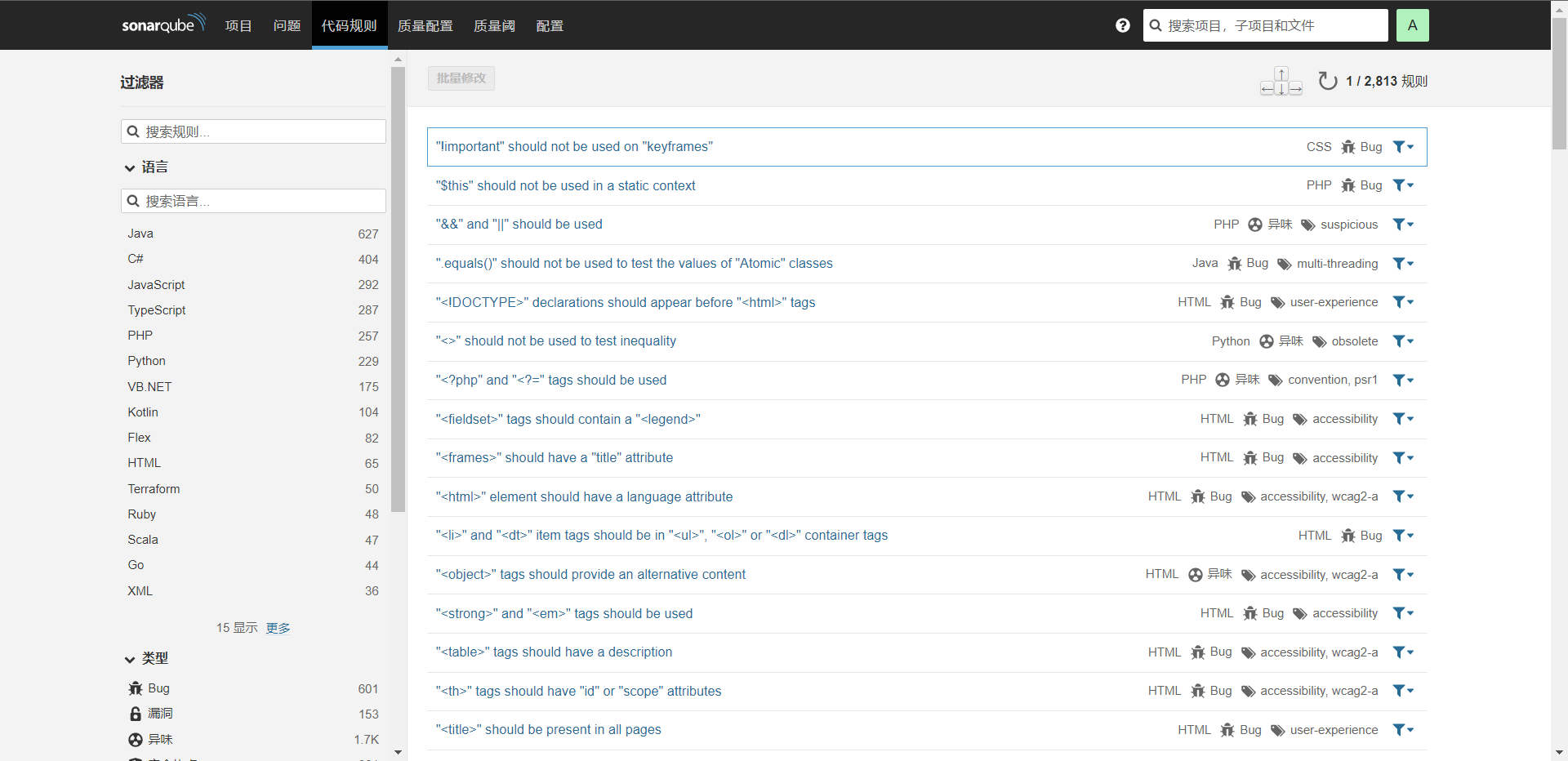

1: Code rules

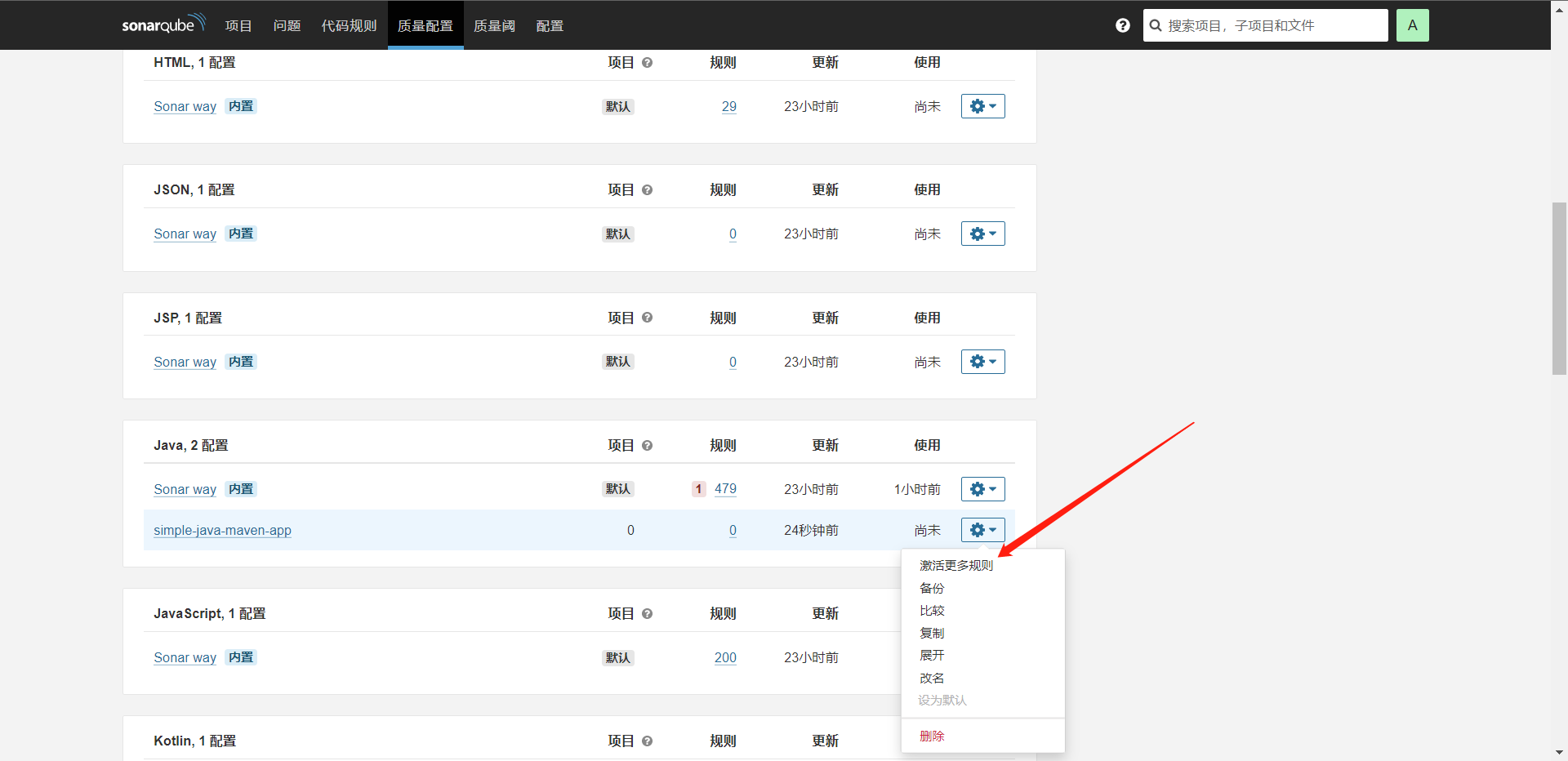

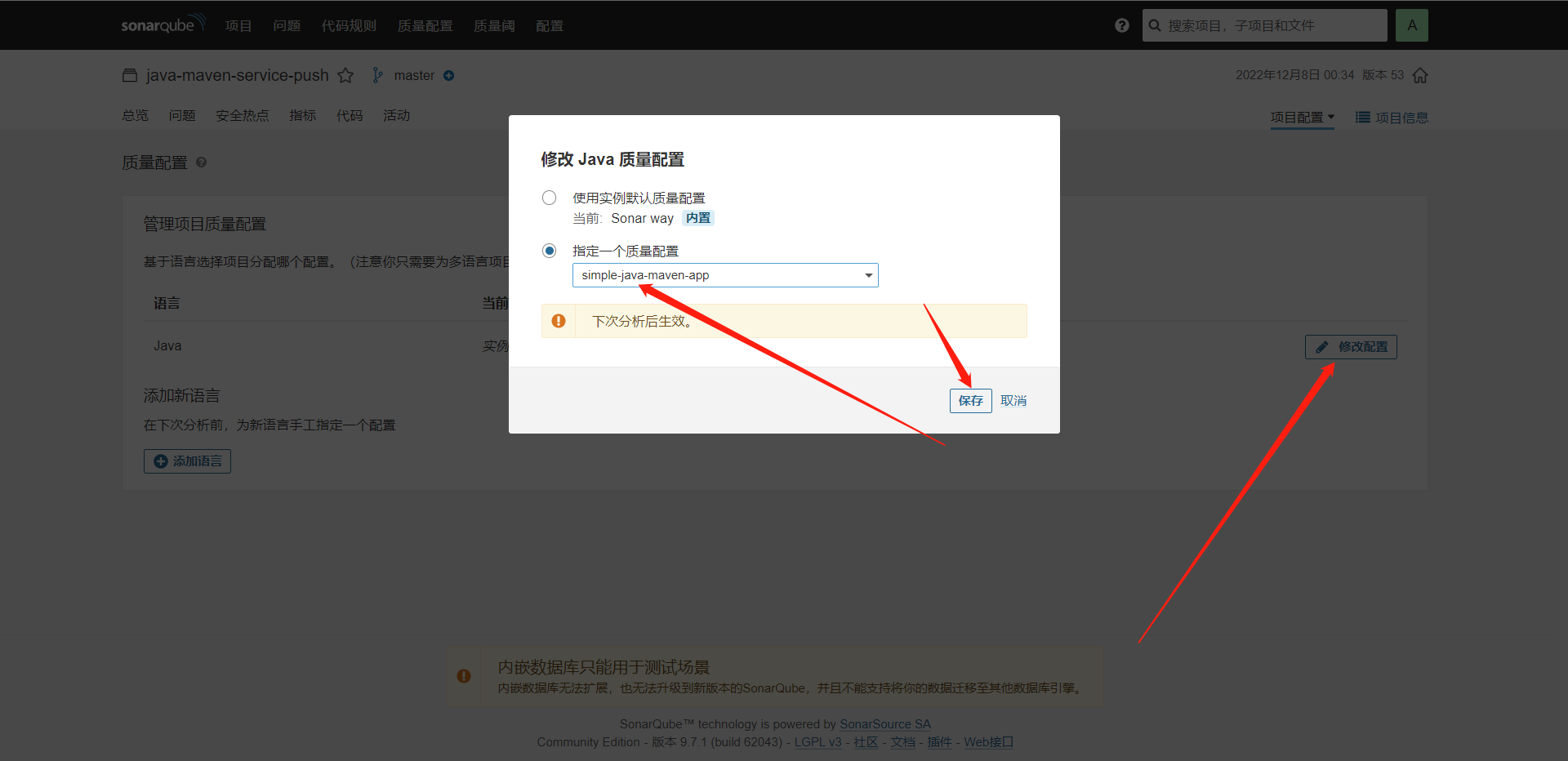

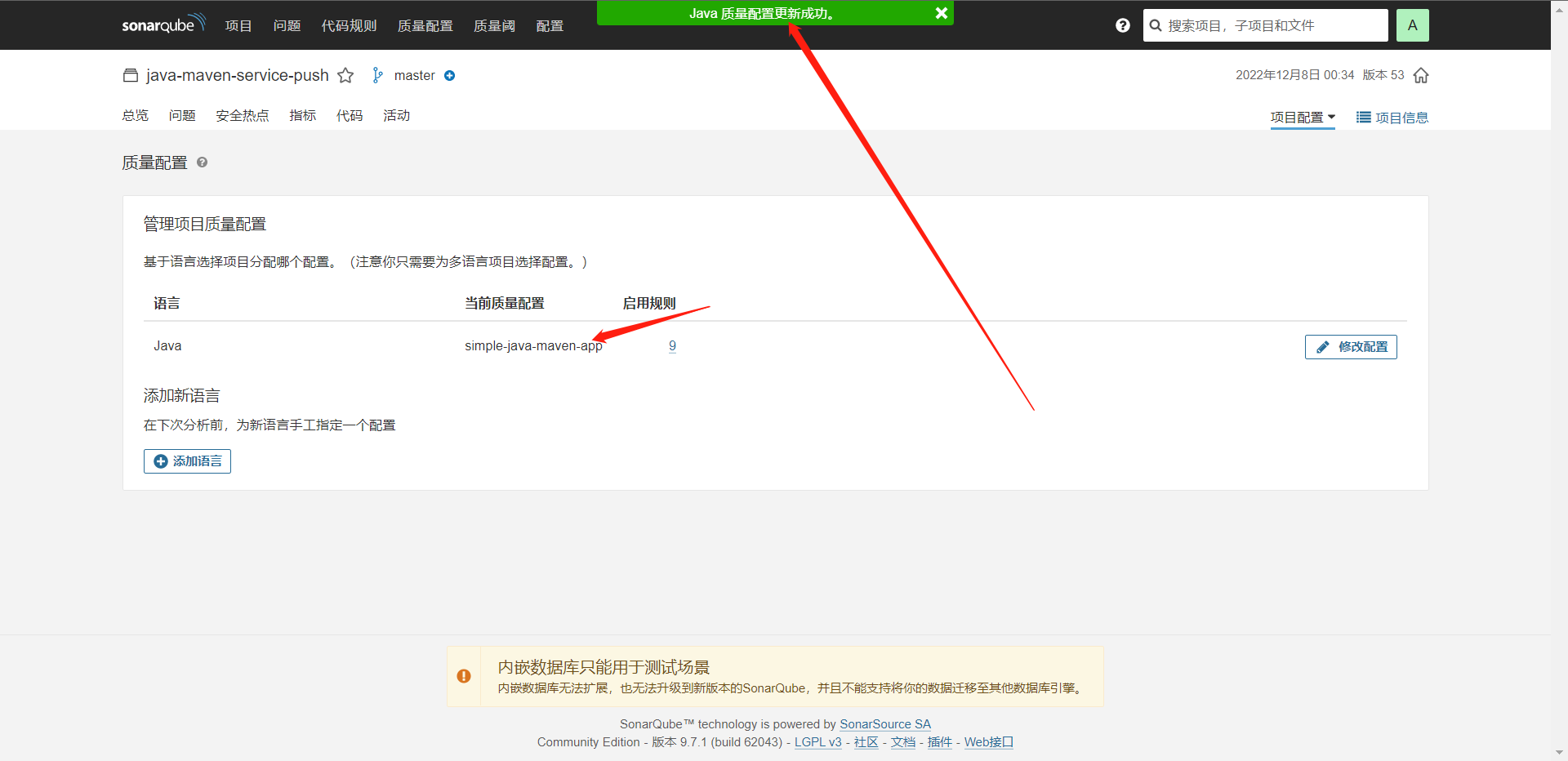

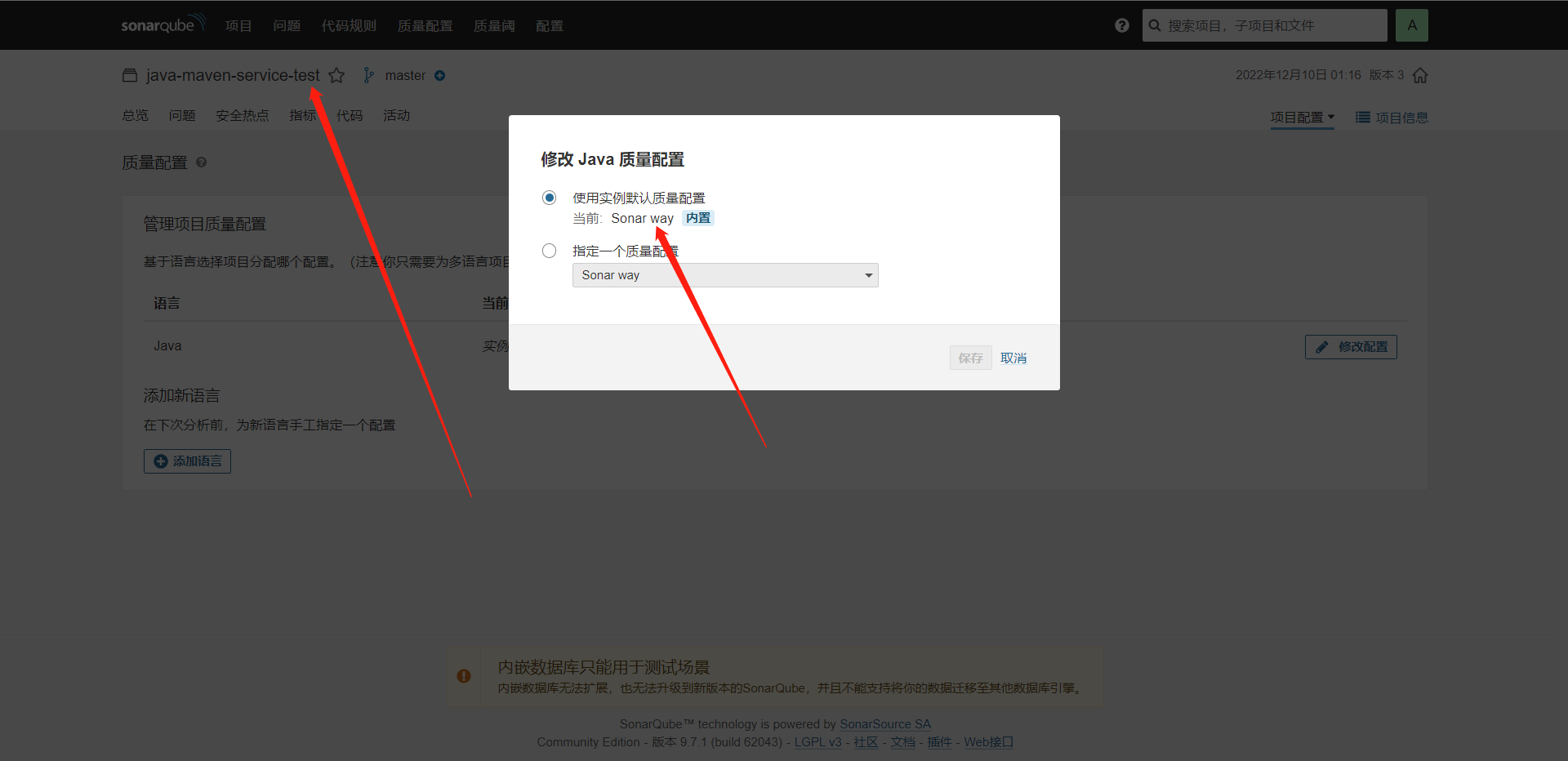

2: Quality Profiles (important). The default is: Sonar way

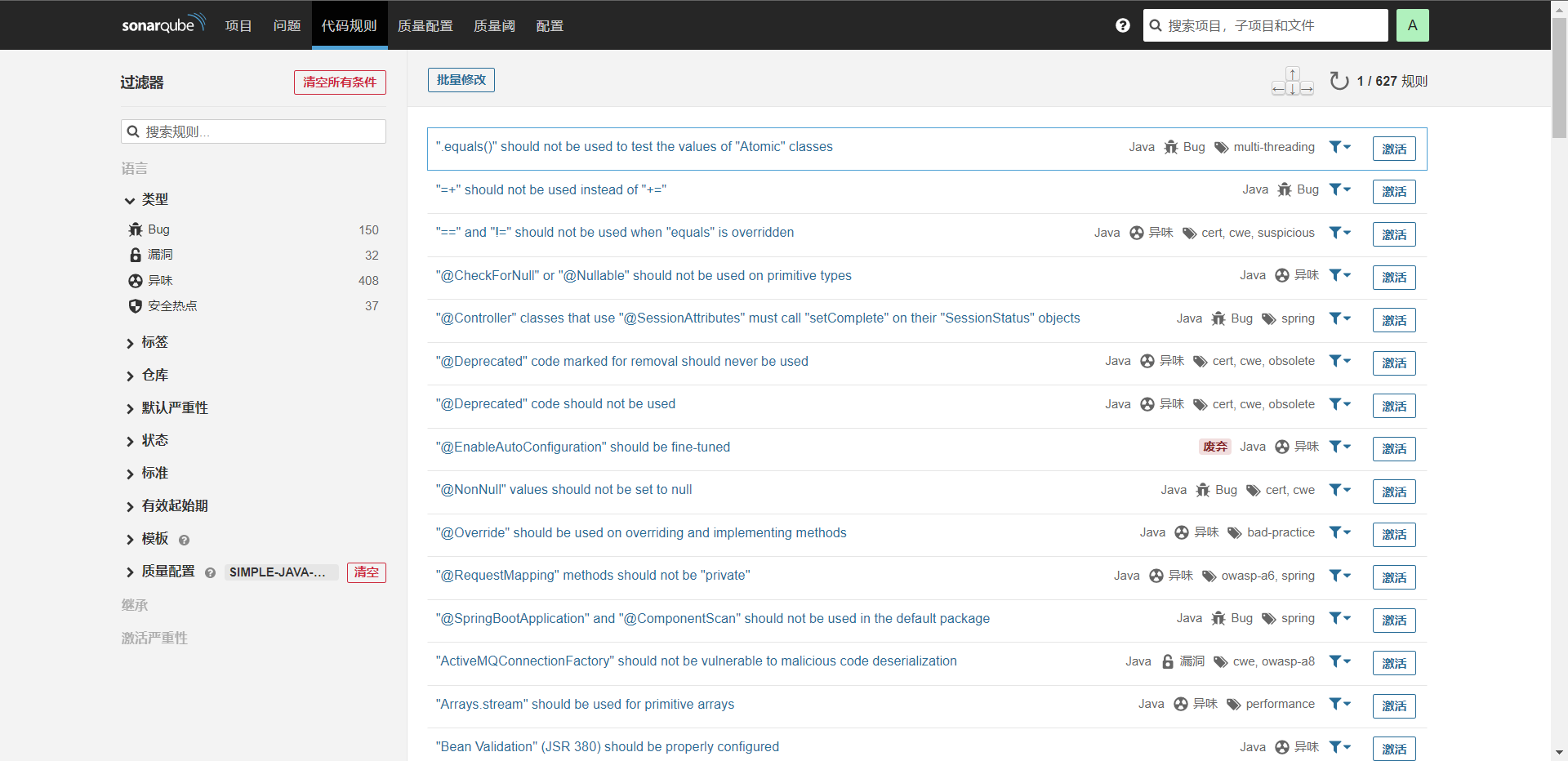

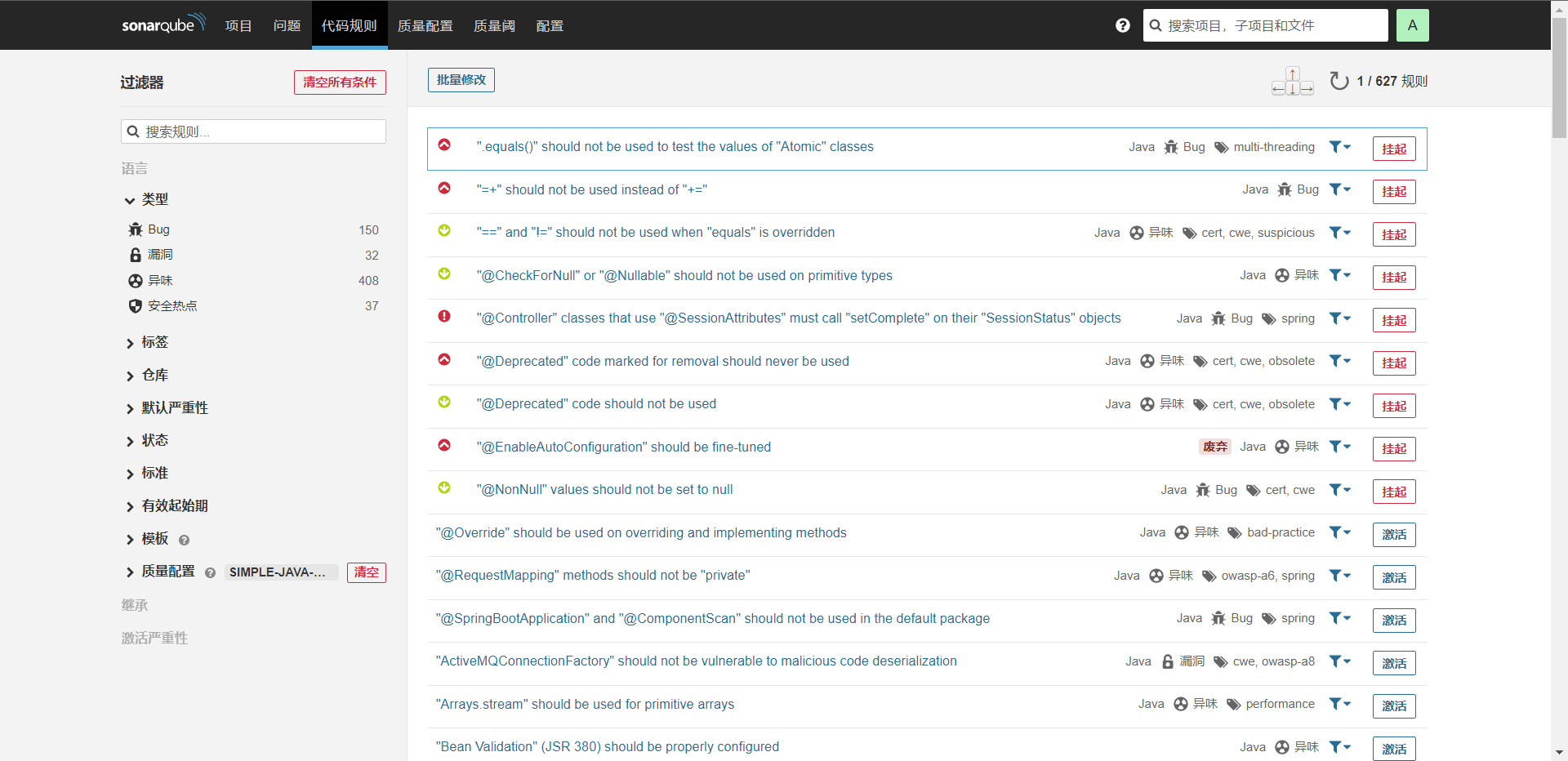

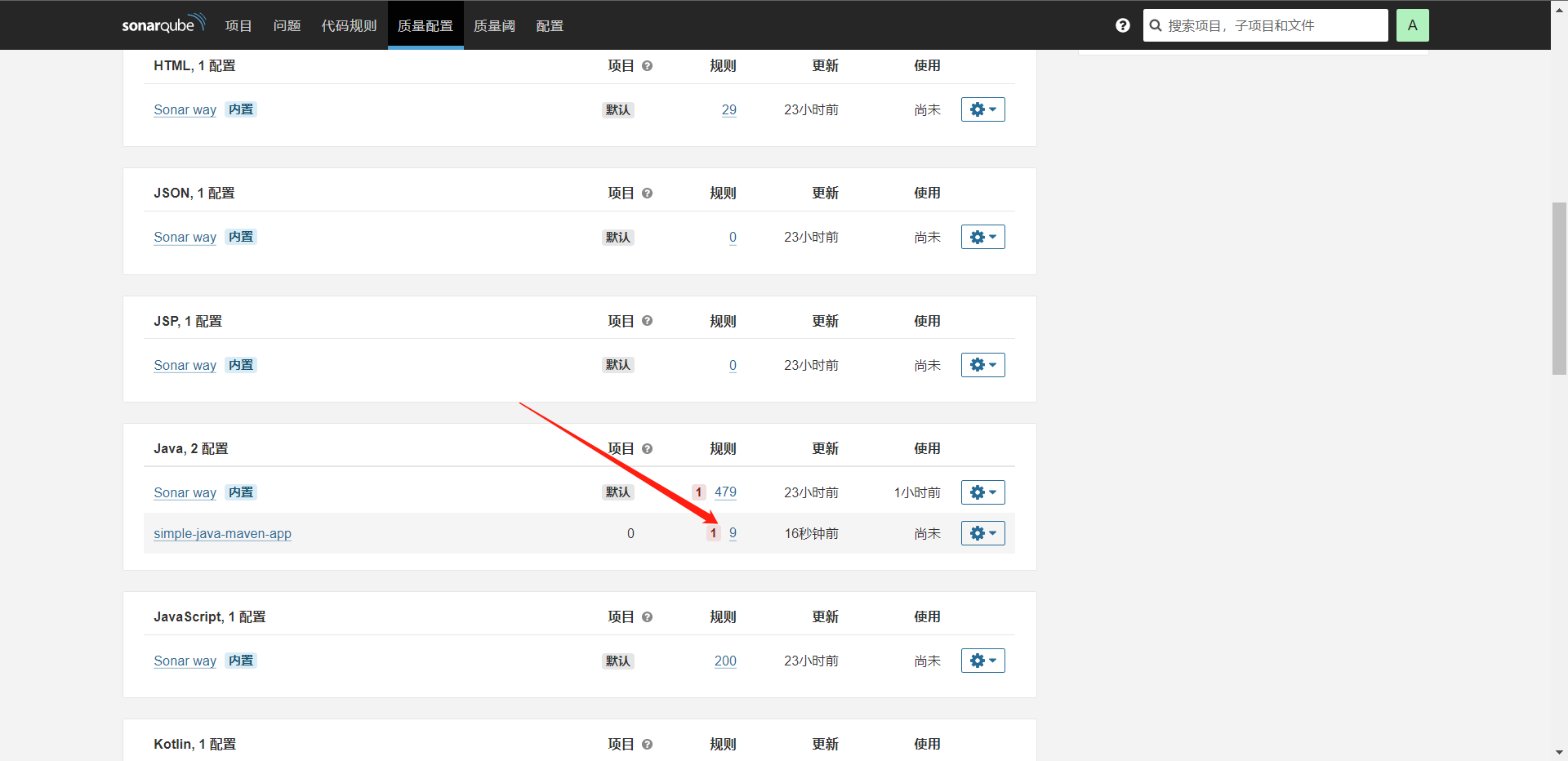

Sonar also lets you choose which rules a project is scanned with. Let's see how to assign them.

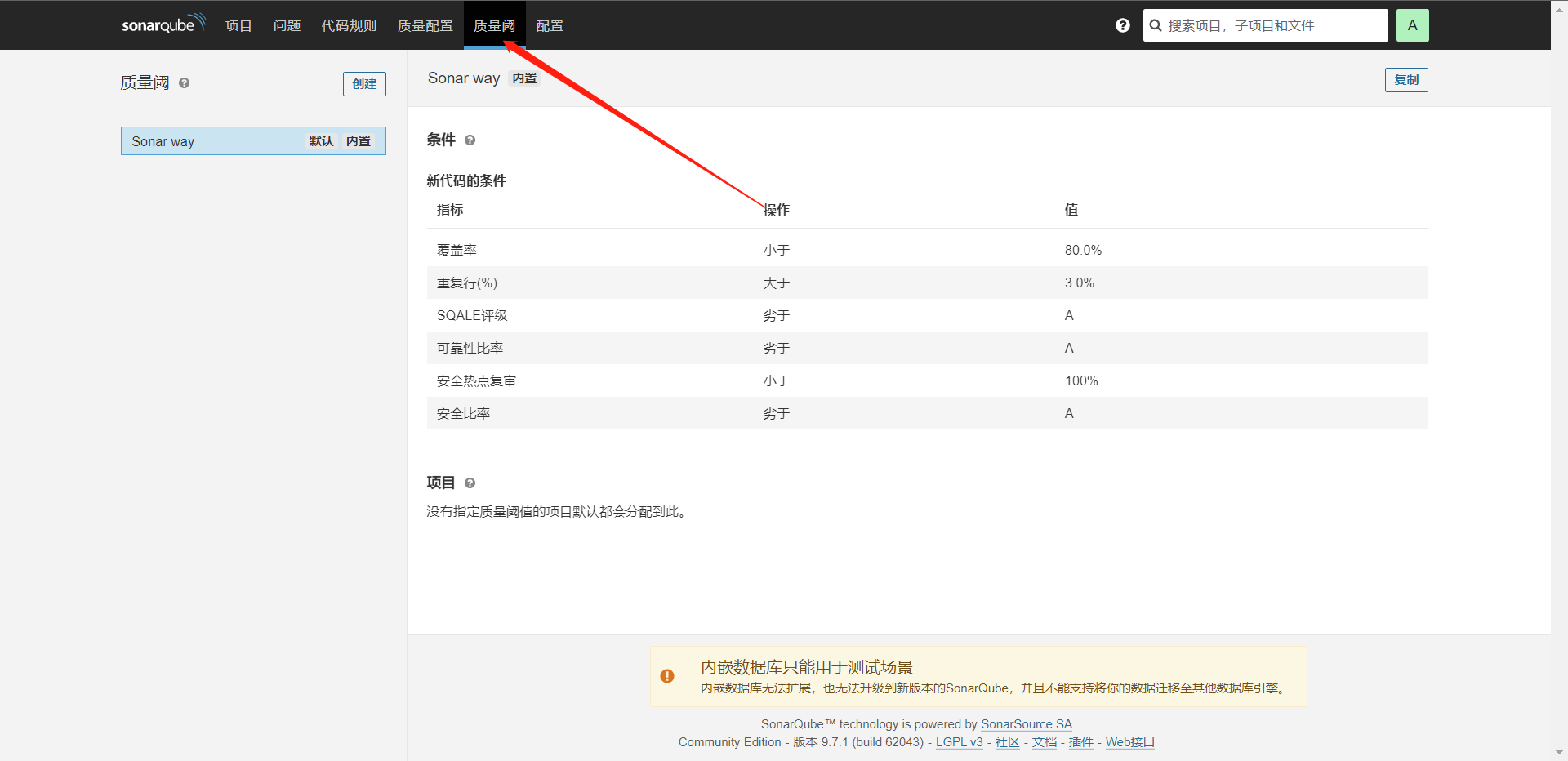

The project's rules can now be changed. Next, let's look at how to use Quality Gates.
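Besides the UI, you can assign a project's quality profile through the SonarQube Web API. Once a quality gate is set up as described next, you can assign that the same way. The sketch below makes several assumptions:
- SonarQube 9.x, where `api/qualityprofiles/add_project` takes `language`, `project` and `qualityProfile`, and `api/qualitygates/select` takes `projectKey` and `gateName` (check your server's `/web_api` page);
- an admin token in `SONAR_TOKEN`.

The function name `assign_rules` is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: assign a quality profile and quality gate via the Web API.
# Assumptions: SonarQube 9.x endpoints, an admin token in SONAR_TOKEN.
CURL="${CURL:-curl}"   # set CURL=echo for a dry run
SONAR_SERVER="${SONAR_SERVER:-http://10.0.0.11:9000}"

# assign_rules PROJECT_KEY PROFILE_NAME GATE_NAME
assign_rules() {
  local project="$1" profile="$2" gate="$3"
  # Attach the Java quality profile to the project.
  "$CURL" -s -f -u "${SONAR_TOKEN:-}:" -X POST \
    "${SONAR_SERVER}/api/qualityprofiles/add_project" \
    --data-urlencode "language=java" \
    --data-urlencode "project=${project}" \
    --data-urlencode "qualityProfile=${profile}" || return 1
  # Attach the quality gate to the project.
  "$CURL" -s -f -u "${SONAR_TOKEN:-}:" -X POST \
    "${SONAR_SERVER}/api/qualitygates/select" \
    --data-urlencode "projectKey=${project}" \
    --data-urlencode "gateName=${gate}"
}

# Usage: assign_rules java-maven-app "Sonar way" "Sonar way"
```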

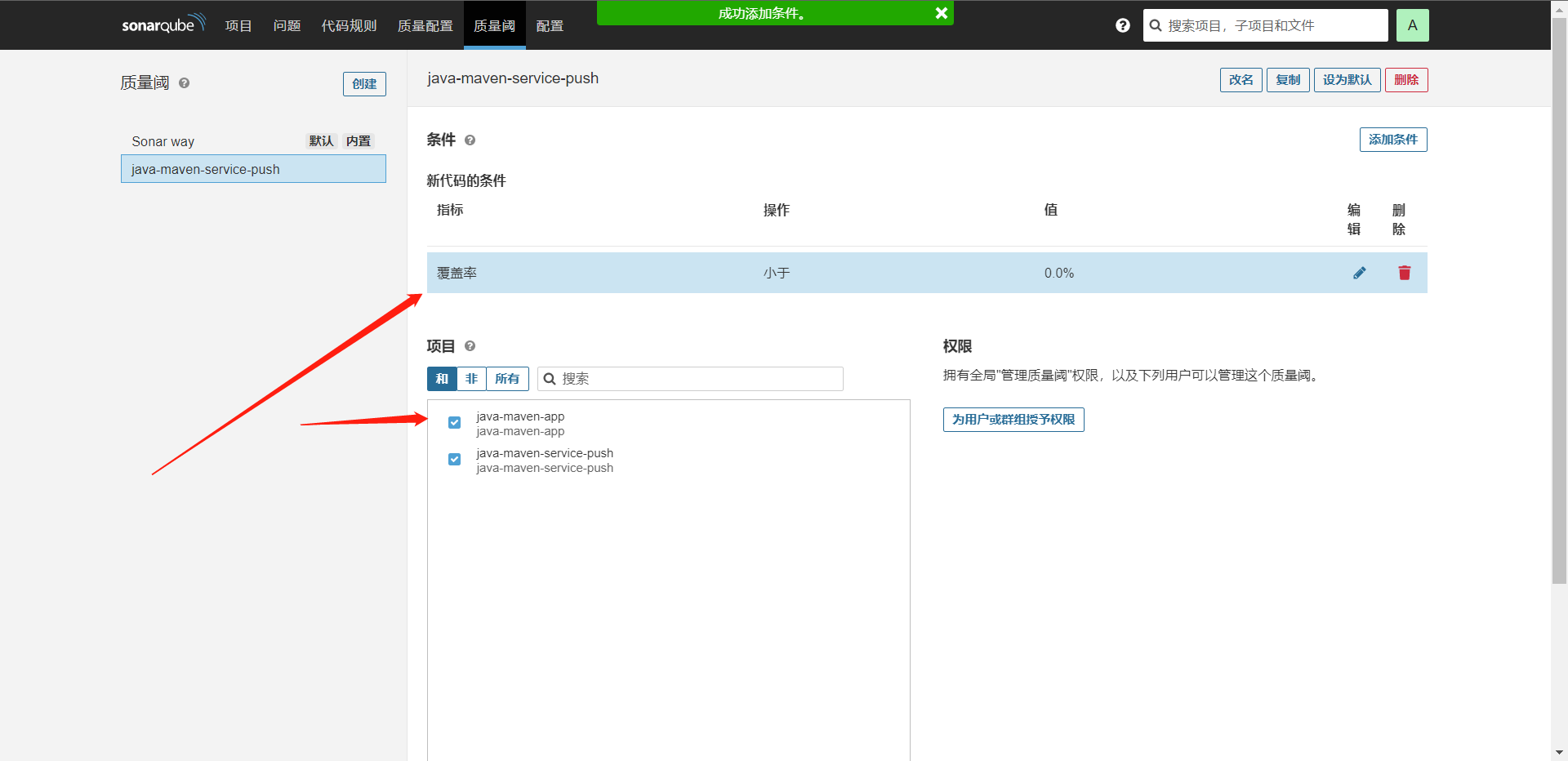

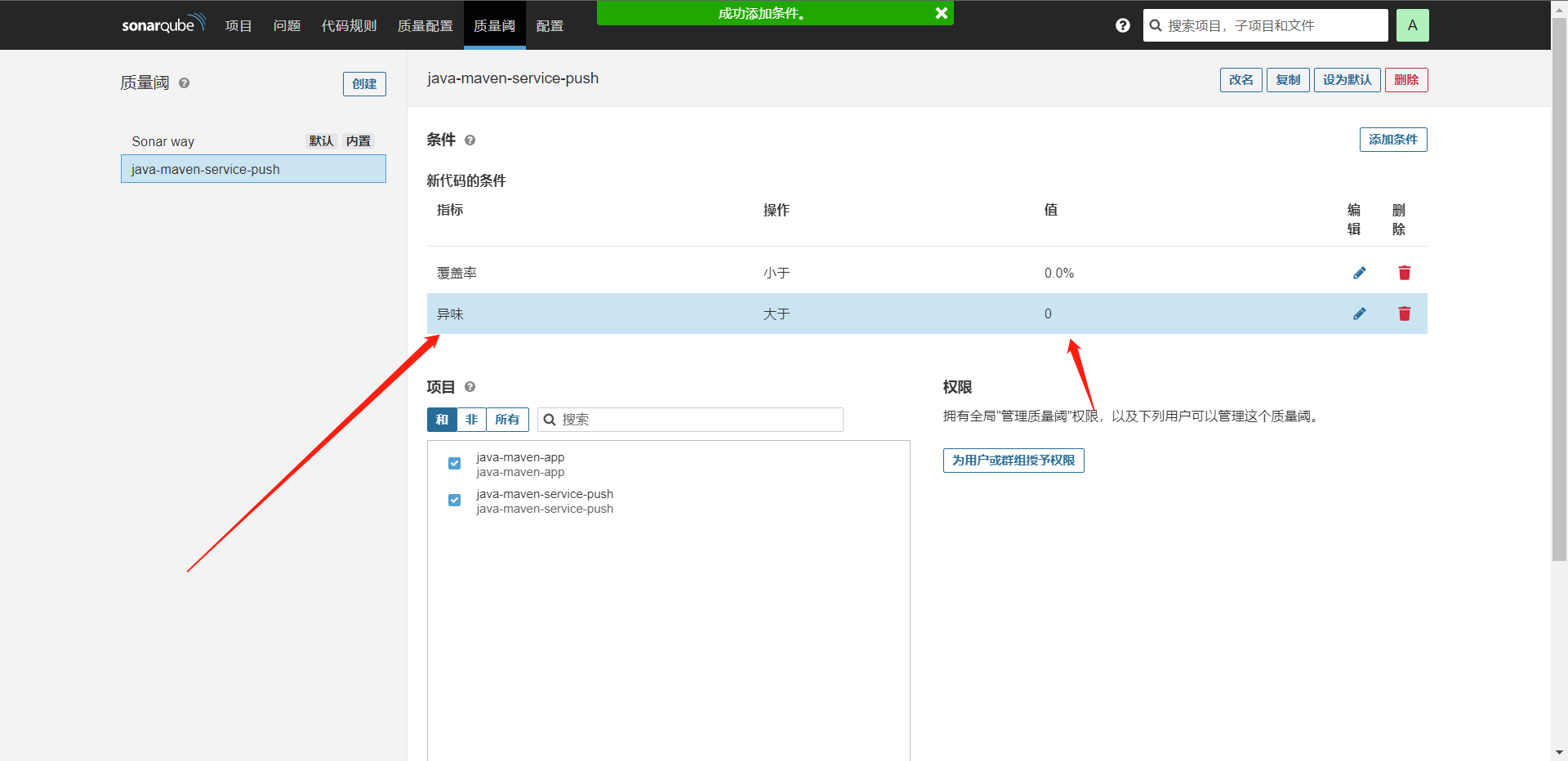

The quality gate is now configured. Let's commit some code and see what happens.

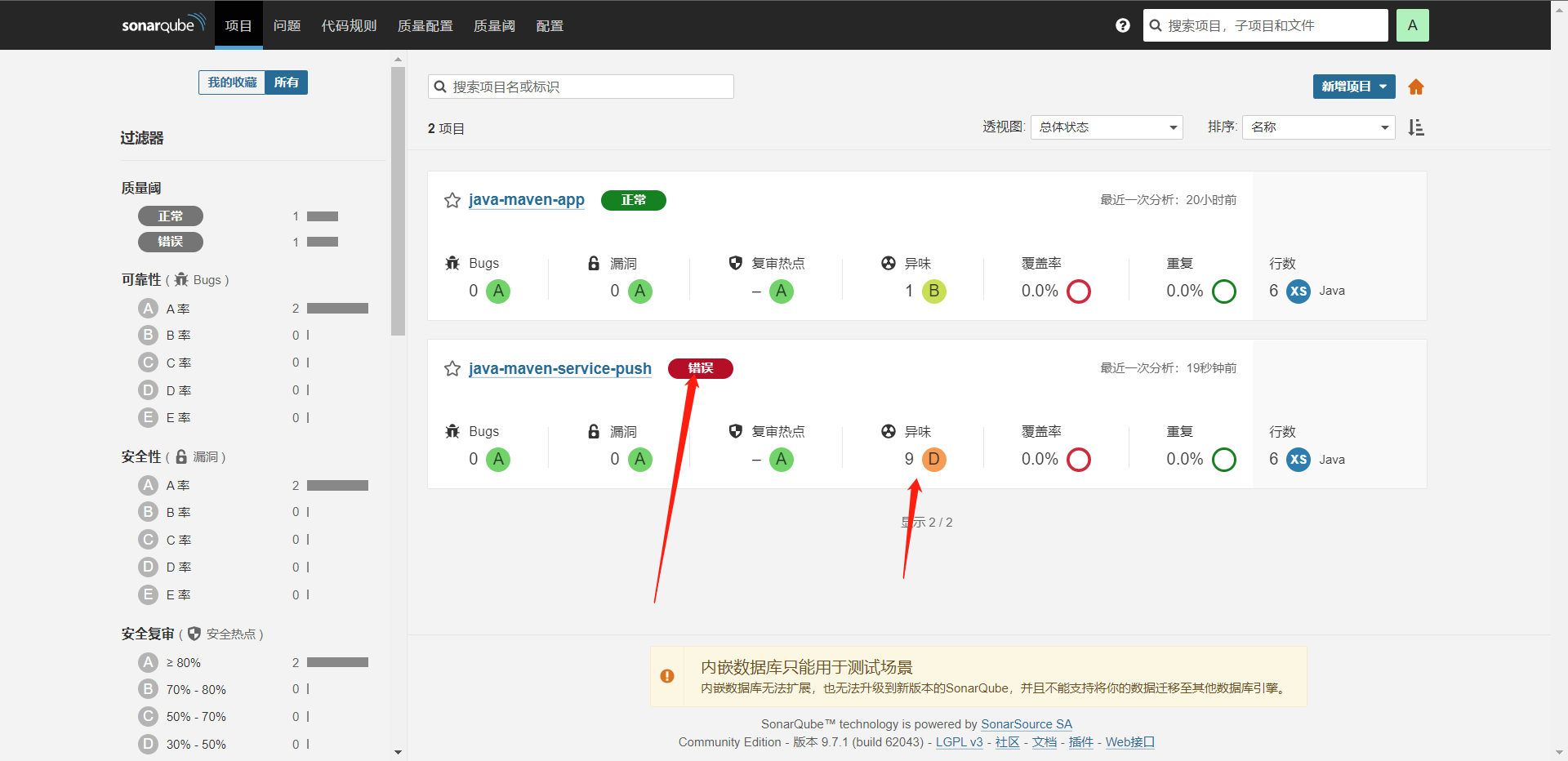

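Nothing fails yet, so let's activate more rules and test again.

This time the analysis fails because the number of `Code Smells` reached our threshold. With enough rules in place, where should this be used? A natural fit is to notify an administrator with the scan results and abort the pipeline at the same time.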

@Library("jenkinslibrary@master") _

def build = new org.library.build()

def deploy = new org.library.deploy()

def gitlab = new org.library.gitlab()

def toemail = new org.library.toemail()

def sonar = new org.library.sonarqube()

String buildShell = "${env.buildShell}"

String buildType = "${env.buildType}"

String gitlab_url = "${env.gitlab_url}"

String branchName = "${env.branchName}"

if ("${runOps}" == "GitlabPush") {

branchName = branch - "refs/heads/"

currentBuild.description = "Trigger By ${userName} ${branchName}"

gitlab.ChangeCommitStatus(projectId,commitSha,'running')

}

pipeline {

agent {

node {

label "build-1"

}

}

stages{

stage("CheckOut") {

steps {

script{

println("${branchName}")

checkout([$class: 'GitSCM', branches: [[name: "${branchName}"]], extensions: [], userRemoteConfigs: [[credentialsId: 'gitlab', url: "${gitlab_url}"]]])

}

}

}

stage("Build") {

steps {

script{

build.Build(buildType, buildShell)

}

}

}

stage("Scan") {

steps {

script{

sonar.SonarScan("${JOB_NAME}","${JOB_NAME}","src","${BUILD_ID}")

}

}

}

stage("Quality Gate"){

steps {

script {

def qg = waitForQualityGate()

if (qg.status != 'OK') {

error "Pipeline aborted due to quality gate failure: ${qg.status}"

}

}

}

}

}

post {

success {

script {

println("Success!!!")

gitlab.ChangeCommitStatus(projectId,commitSha,'success')

toemail.Email("构建流水线成功",userEmail)

}

}

}

}
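`waitForQualityGate` relies on SonarQube calling back through a webhook that points at Jenkins (`<jenkins-url>/sonarqube-webhook/`). The plugin's documentation also suggests wrapping it in a `timeout`. Without the plugin, the same check can be approximated with the Web API:
1. Poll the background task (`api/ce/task`) until it finishes.
2. Read `api/qualitygates/project_status` for its `analysisId`.

The sketch below parses JSON with grep/sed rather than jq. It takes the first `status` field as the overall result, which to my knowledge matches how SonarQube orders its response. The function names are hypothetical.

```shell
#!/usr/bin/env bash
# Plugin-free quality gate check via the SonarQube Web API (sketch).
# Assumptions: SONAR_TOKEN holds a token; compact JSON from the server.
CURL="${CURL:-curl}"

# Print the value of the first "key":"value" string field in JSON on stdin.
json_field() {
  grep -o "\"$1\":\"[^\"]*\"" | head -n 1 | sed 's/.*:"\(.*\)"$/\1/'
}

# Poll a CE task URL until the report is processed; print its analysisId.
wait_for_ce_task() {
  local task_url="$1" tries="${2:-60}" json status
  while [ "$tries" -gt 0 ]; do
    json=$("$CURL" -s -u "${SONAR_TOKEN:-}:" "$task_url") || return 1
    status=$(printf '%s\n' "$json" | json_field status)
    case "$status" in
      SUCCESS) printf '%s\n' "$json" | json_field analysisId; return 0 ;;
      FAILED|CANCELED) echo "CE task ended with status $status" >&2; return 1 ;;
    esac
    # PENDING or IN_PROGRESS: wait and try again.
    tries=$((tries - 1))
    sleep "${POLL_SECONDS:-5}"
  done
  echo "timed out waiting for $task_url" >&2
  return 1
}

# Print the gate status; return non-zero unless it is OK.
check_quality_gate() {
  local server="$1" analysis_id="$2" status
  status=$("$CURL" -s -u "${SONAR_TOKEN:-}:" \
    "${server}/api/qualitygates/project_status?analysisId=${analysis_id}" \
    | json_field status)
  echo "Quality gate: ${status:-UNKNOWN}"
  [ "$status" = "OK" ]
}

# Usage after sonar-scanner has run in the workspace:
#   task_url=$(sed -n 's/^ceTaskUrl=//p' .scannerwork/report-task.txt)
#   analysis=$(wait_for_ce_task "$task_url") &&
#     check_quality_gate http://10.0.0.11:9000 "$analysis" || exit 1
```

A non-zero exit from the `sh` step fails the stage, much like the `error` call in the Quality Gate stage above.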

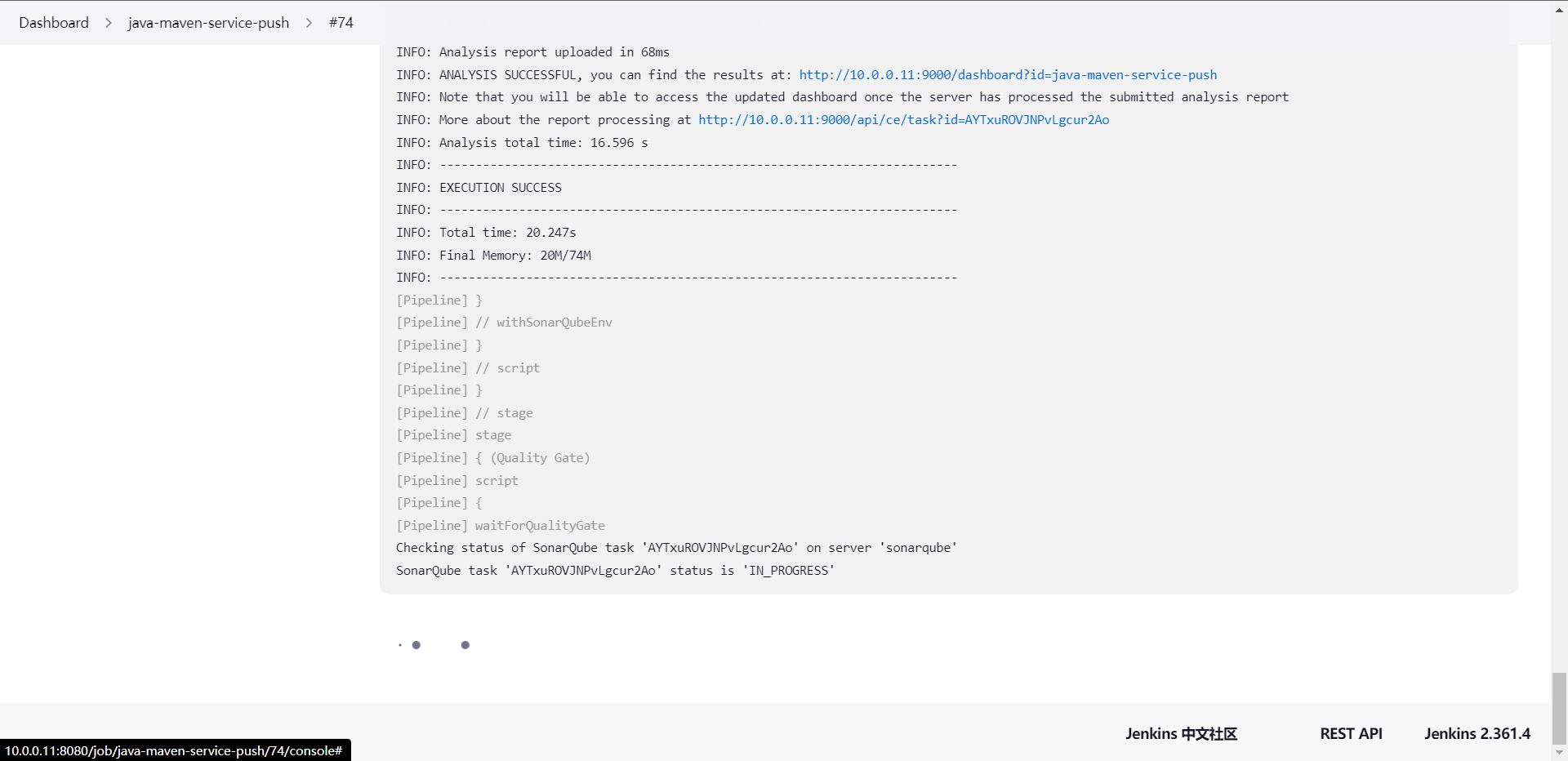

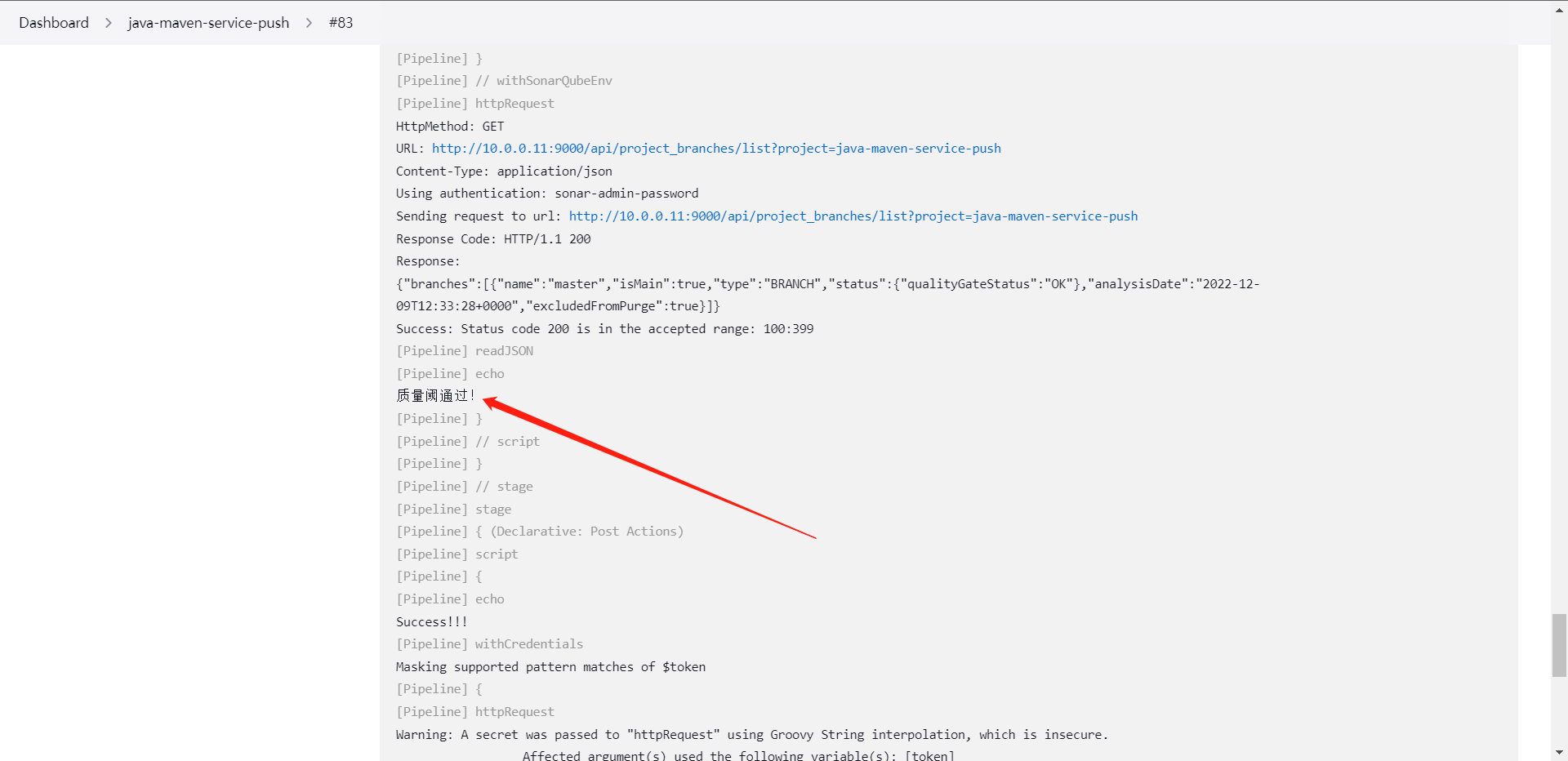

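This can of course also go into the ShareLibrary, as written below. Waiting on the plugin is still rather slow; there is no way around that, so you just have to wait.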

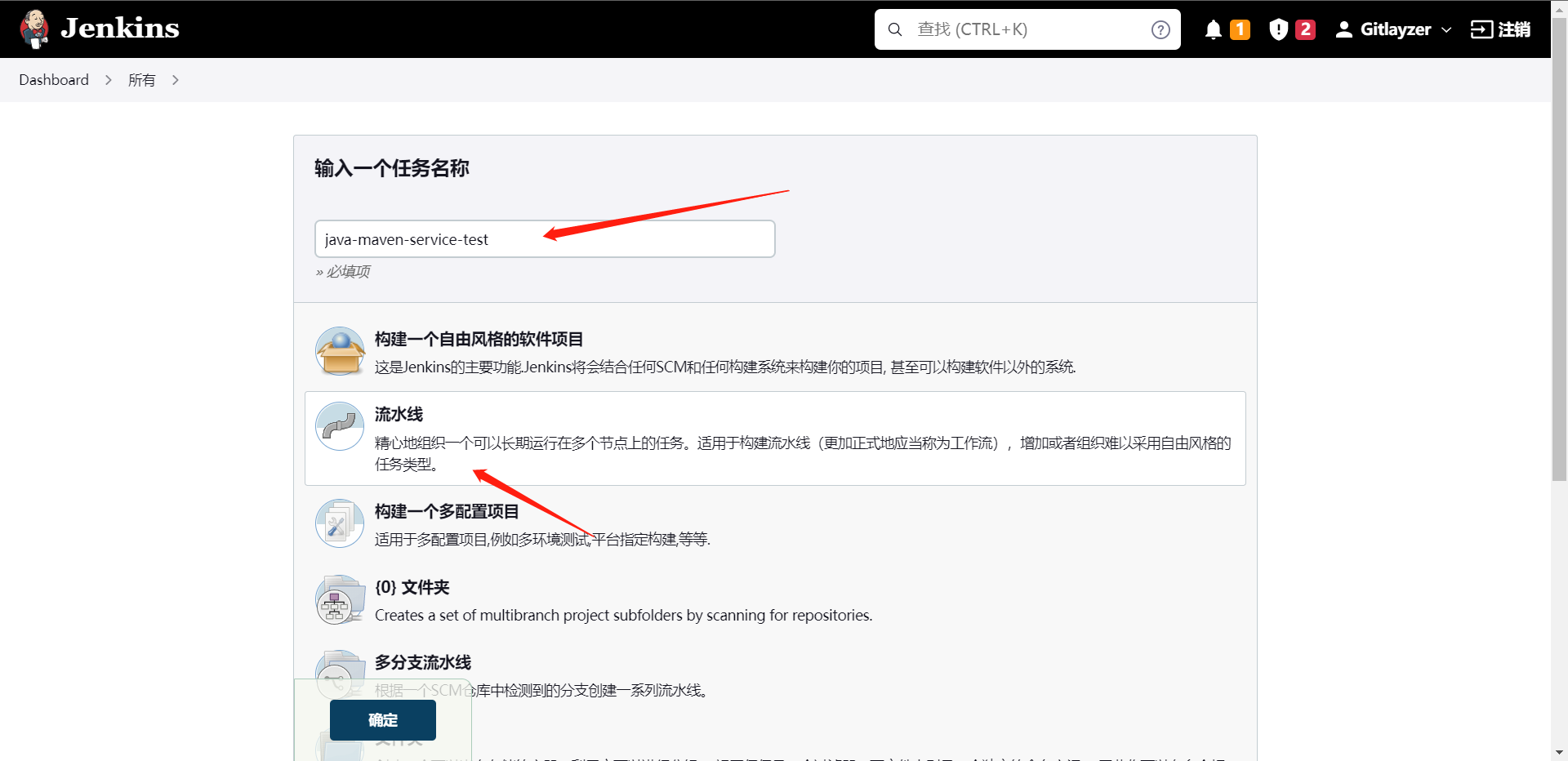

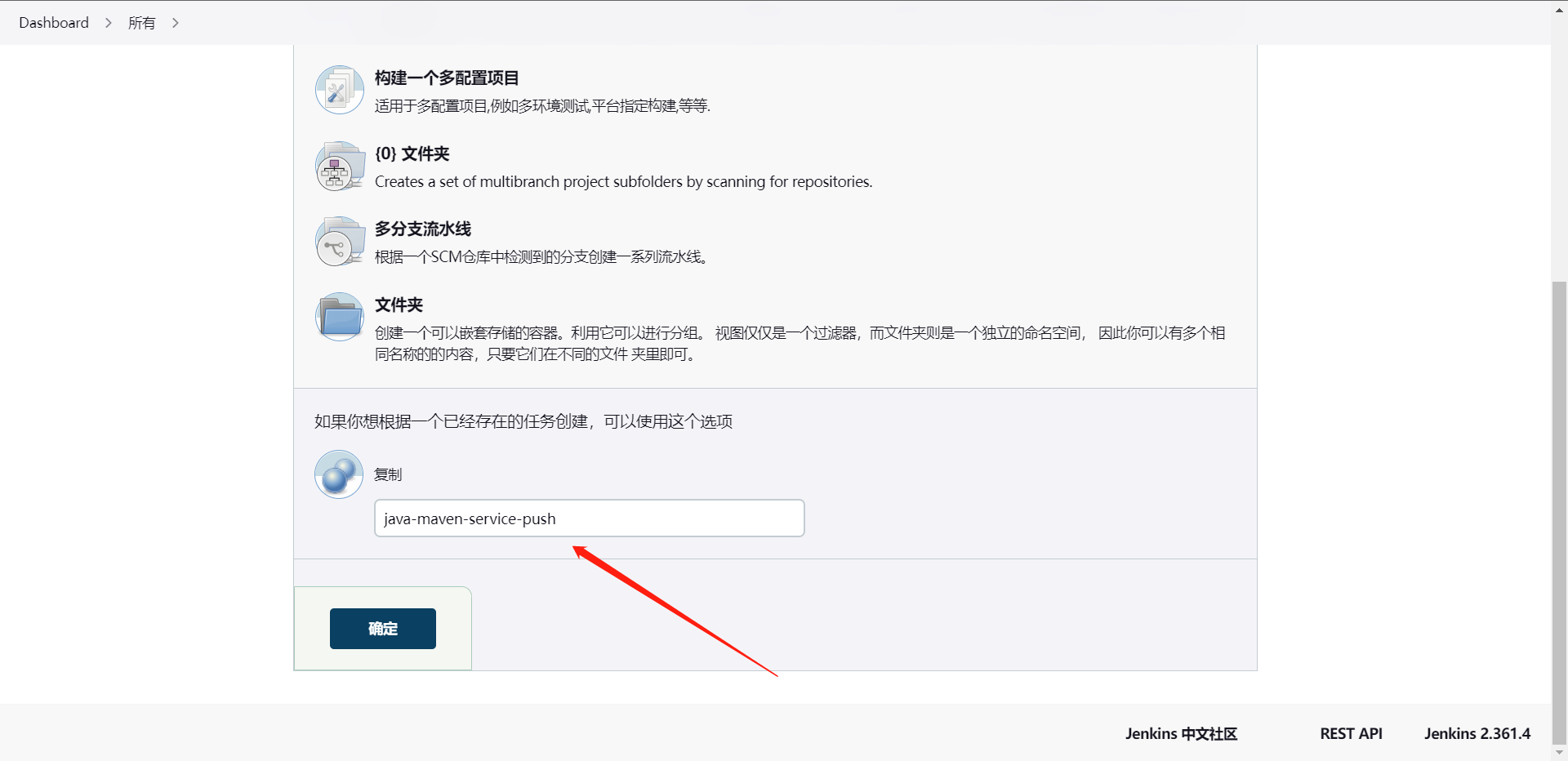

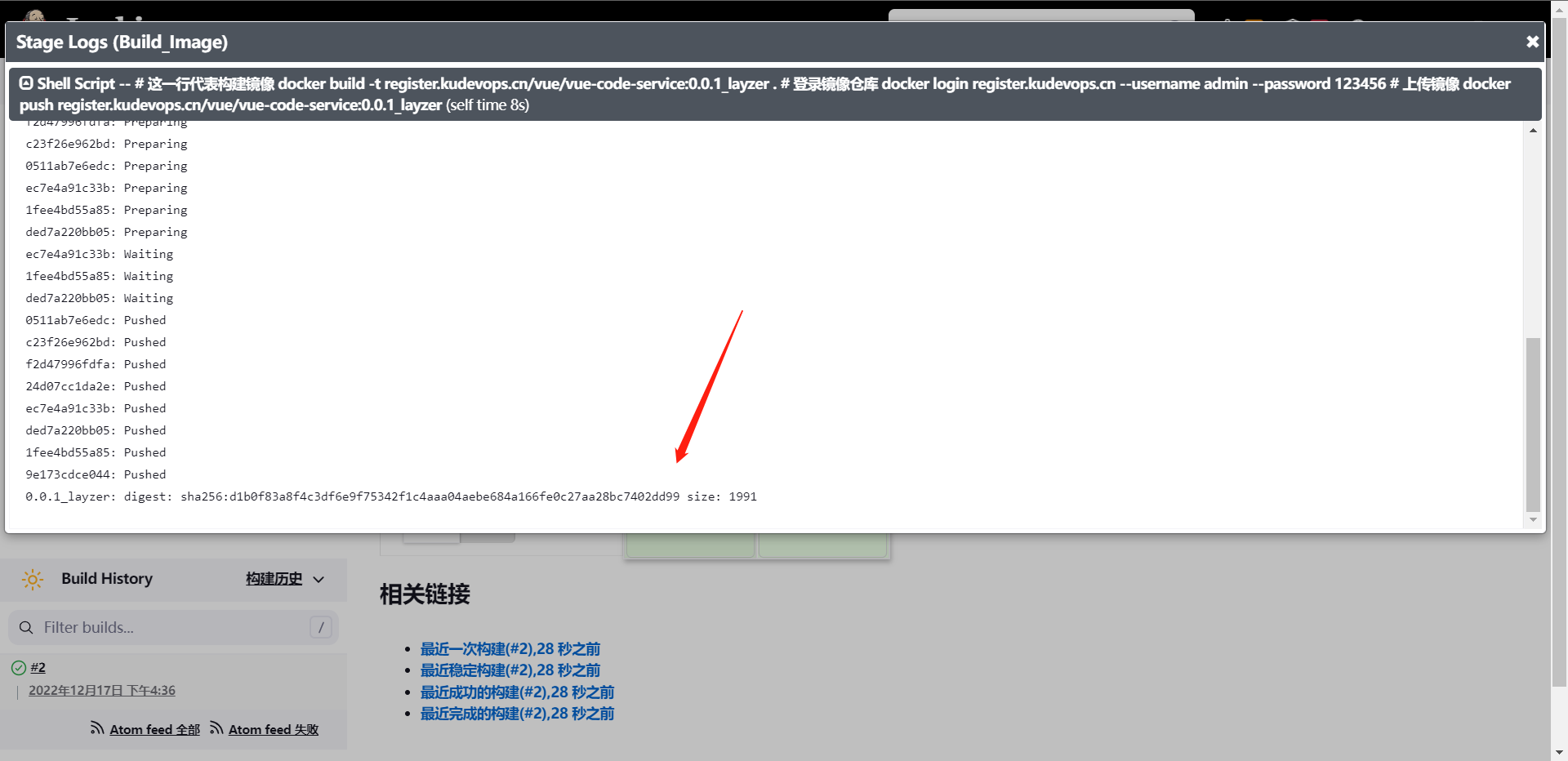

package org.library