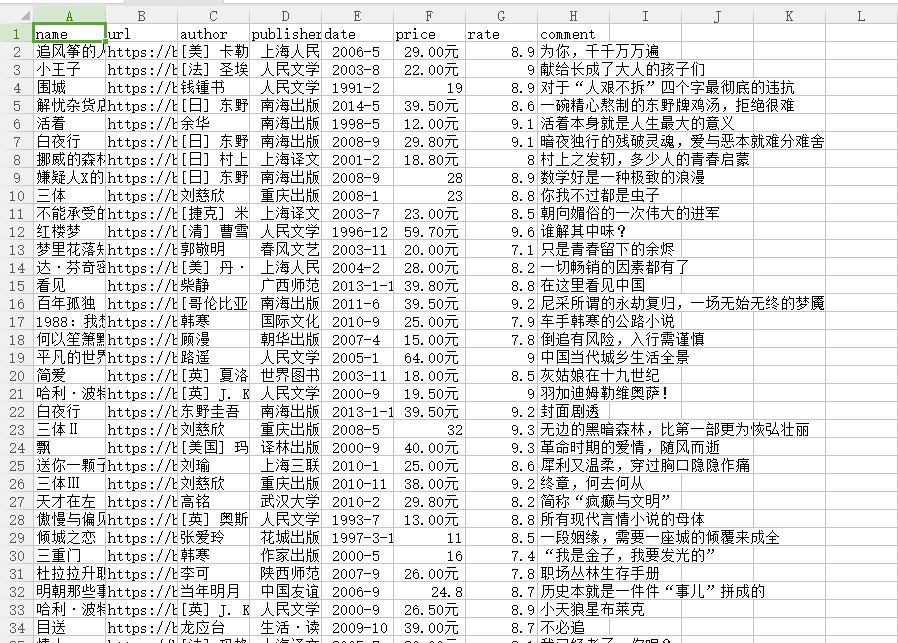

爬去豆瓣图书top250数据存储到csv中

from lxml import etree import requests import csv fp=open('C://Users/Administrator/Desktop/lianxi/doubanbook.csv','w+',newline='',encoding='utf-8') writer=csv.writer(fp) writer.writerow(('name','url','author','publisher','date','price','rate','comment')) headers={ #'User-Agent':'Nokia6600/1.0 (3.42.1) SymbianOS/7.0s Series60/2.0 Profile/MIDP-2.0 Configuration/CLDC-1.0' 'User-Agent':'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/55.0.2883.87 Safari/537.36' } urls=['https://book.douban.com/top250?start={}'.format(str(i))for i in range(0,50,25)] for url in urls: html=requests.get(url,headers=headers) selector=etree.HTML(html.text) infos=selector.xpath('//tr[@class="item"]') for info in infos: name=info.xpath('td/div/a/@title')[0] url=info.xpath('td/div/a/@href')[0] book_infos=info.xpath('td/p/text()')[0] author=book_infos.split('/')[0] publisher=book_infos.split('/')[-3] date=book_infos.split('/')[-2] price=book_infos.split('/')[-1] rate=info.xpath('td/div/span[2]/text()')[0] comments=info.xpath('td/p/span/text()') comment=comments[0] if len(comments) != 0 else "空" writer.writerow((name,url,author,publisher,date,price,rate,comment)) fp.close()

浙公网安备 33010602011771号

浙公网安备 33010602011771号