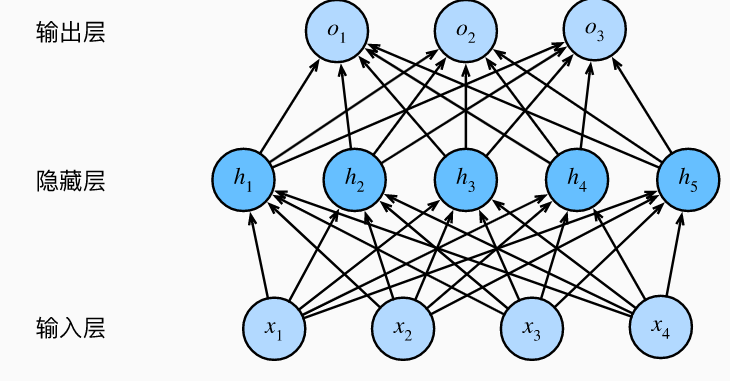

[Deep Learning with PyTorch] Multilayer Perceptron

Both implementations use the Fashion-MNIST dataset and a network with a single hidden layer.
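As a reading aid, the one-hidden-layer model can be written out as follows. The symbols are assumptions chosen to match the variable names in the code (`W1`, `b1`, `W2`, `b2`), with a batch of n flattened 28x28 images as input; the post itself does not state the formula.

```latex
\begin{aligned}
\mathbf{X} &\in \mathbb{R}^{n \times 784}, \quad
\mathbf{W}_1 \in \mathbb{R}^{784 \times 256}, \quad
\mathbf{W}_2 \in \mathbb{R}^{256 \times 10} \\
\mathbf{H} &= \operatorname{ReLU}(\mathbf{X}\mathbf{W}_1 + \mathbf{b}_1) \\
\mathbf{O} &= \mathbf{H}\mathbf{W}_2 + \mathbf{b}_2
\end{aligned}
```

The output O holds unnormalized class scores (logits); the softmax is applied inside `nn.CrossEntropyLoss`, so the network does not compute it.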

Multilayer perceptron implemented from scratch

    import torch
    from torch import nn
    from d2l import torch as d2l

    batch_size = 256
    train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)

    # 28x28 images flattened to 784 inputs, 256 hidden units, 10 classes
    input_nums, hidden_nums, output_nums = 784, 256, 10

    # Small random weights and zero biases for both layers
    W1 = nn.Parameter(torch.randn(input_nums, hidden_nums) * 0.01)
    b1 = nn.Parameter(torch.zeros(hidden_nums))
    W2 = nn.Parameter(torch.randn(hidden_nums, output_nums) * 0.01)
    b2 = nn.Parameter(torch.zeros(output_nums))
    params = [W1, b1, W2, b2]

    def net(X):
        X = X.reshape((-1, input_nums))   # flatten each image to a vector
        H = torch.matmul(X, W1) + b1      # hidden layer (affine part)
        H = torch.relu(H)                 # ReLU activation
        return torch.matmul(H, W2) + b2   # output logits

    # Per-sample losses; softmax is applied inside the loss
    loss = nn.CrossEntropyLoss(reduction="none")

    num_epochs, lr = 10, 0.1
    updater = torch.optim.SGD(params, lr=lr)
    d2l.train_ch3(net, train_iter, test_iter, loss, num_epochs, updater)
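To check the shapes in the forward pass without downloading the dataset, a minimal sketch along these lines can help. It assumes a random batch `X` standing in for Fashion-MNIST images (hypothetical data, not from the post) and uses plain tensors instead of `nn.Parameter`, since nothing is trained here.

```python
import torch

torch.manual_seed(0)

input_nums, hidden_nums, output_nums = 784, 256, 10

# Same initialization scheme as the from-scratch code above
W1 = torch.randn(input_nums, hidden_nums) * 0.01
b1 = torch.zeros(hidden_nums)
W2 = torch.randn(hidden_nums, output_nums) * 0.01
b2 = torch.zeros(output_nums)

def net(X):
    X = X.reshape((-1, input_nums))   # (batch, 1, 28, 28) -> (batch, 784)
    H = torch.relu(X @ W1 + b1)       # (batch, 256)
    return H @ W2 + b2                # (batch, 10) logits

# Hypothetical stand-in batch: 4 random "images" of shape 1x28x28
X = torch.rand(4, 1, 28, 28)
logits = net(X)

# The network returns logits; softmax turns them into class probabilities
probs = torch.softmax(logits, dim=1)
print(logits.shape, probs.sum(dim=1))
```

Each row of `probs` sums to 1, while `logits` itself is unnormalized; that is why the loss used for training takes the raw output of `net`.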

Concise implementation of the multilayer perceptron

    import torch
    from torch import nn
    from d2l import torch as d2l

    # Flatten -> Linear(784, 256) -> ReLU -> Linear(256, 10)
    net = nn.Sequential(nn.Flatten(),
                        nn.Linear(784, 256),
                        nn.ReLU(),
                        nn.Linear(256, 10))

    def init_weights(m):
        # Initialize the weights of every linear layer from N(0, 0.01^2)
        if type(m) == nn.Linear:
            nn.init.normal_(m.weight, std=0.01)

    net.apply(init_weights)

    batch_size, lr, num_epochs = 256, 0.1, 10
    loss = nn.CrossEntropyLoss(reduction='none')
    trainer = torch.optim.SGD(net.parameters(), lr=lr)

    train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)
    d2l.train_ch3(net, train_iter, test_iter, loss, num_epochs, trainer)
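The loss is created with `reduction='none'`, so it returns one value per sample and the training loop has to reduce it before calling `backward()`. The sketch below shows one plausible shape of that update step using only `torch`; it is not the `d2l.train_ch3` source, and the synthetic batch `X`, `y` is a hypothetical stand-in for a Fashion-MNIST minibatch.

```python
import torch
from torch import nn

torch.manual_seed(0)

net = nn.Sequential(nn.Flatten(),
                    nn.Linear(784, 256),
                    nn.ReLU(),
                    nn.Linear(256, 10))
loss = nn.CrossEntropyLoss(reduction='none')
trainer = torch.optim.SGD(net.parameters(), lr=0.1)

# Hypothetical synthetic minibatch: 32 random images with random labels
X = torch.rand(32, 1, 28, 28)
y = torch.randint(0, 10, (32,))

losses = []
for step in range(20):
    l = loss(net(X), y)        # per-sample losses, shape (32,)
    trainer.zero_grad()        # clear gradients from the previous step
    l.mean().backward()        # reduce to a scalar before backpropagating
    trainer.step()             # SGD parameter update
    losses.append(l.mean().item())

print(f"first loss {losses[0]:.4f}, last loss {losses[-1]:.4f}")
```

Repeating the step on the same batch only shows that the update lowers the loss on that batch; it says nothing about accuracy on the real dataset.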
