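A Simple Distributed Crawler with Scrapy

After some trial and error, I finally worked out how to run Scrapy in a distributed setup, so here are my notes.

Scrapy can do a lot, but on its own it struggles with large-scale distributed crawling. The scrapy-redis package changes Scrapy's queue scheduling: start URLs are separated from start_urls and read from Redis instead. Several clients can read from the same Redis instance at once, which is what makes the crawl distributed. Even on a single machine you can run the crawler as several processes, which is very effective for large crawls.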
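The shared-queue idea can be sketched without Redis at all. The example below is only an illustration under stated assumptions: an in-memory `queue.Queue` stands in for the Redis request queue, a Python `set` stands in for the shared set of seen URLs, and threads stand in for crawler clients. The function name `run_workers` is hypothetical and is not part of Scrapy or scrapy-redis.

```python
import queue
import threading


def run_workers(start_urls, num_workers=2):
    """Simulate several crawler clients consuming one shared request queue."""
    shared_queue = queue.Queue()   # stand-in for the request queue kept in Redis
    seen = set()                   # stand-in for the shared set of seen URLs
    handled = []                   # (worker_id, url) pairs, one per processed URL
    lock = threading.Lock()

    def push(url):
        # Enqueue a URL only if no client has seen it before.
        with lock:
            if url in seen:
                return
            seen.add(url)
        shared_queue.put(url)

    def worker(worker_id):
        # Each client keeps pulling work until the queue stays empty.
        while True:
            try:
                url = shared_queue.get(timeout=0.2)
            except queue.Empty:
                return
            with lock:
                handled.append((worker_id, url))

    for url in start_urls:
        push(url)

    threads = [threading.Thread(target=worker, args=(i,)) for i in range(num_workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return handled
```

Each URL is handled exactly once no matter how many workers run, which is the property the Redis-backed scheduler provides across machines.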

Prerequisites:

1. One Windows machine (worker: scrapy)

2. One Linux machine (master: scrapy, redis, mongo)

IP: 192.168.184.129

3. Python 3.6

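Setting up Scrapy on Linux:

1. Install Python 3.6

Install gcc and the OpenSSL development headers first. Without openssl-devel, pip3 fails with "pip is configured with locations that require TLS/SSL, however the ssl module in Python is not available":

        yum install gcc openssl-devel -y

Download the Python source package Python-3.6.1.tar.xz, unpack it, and build from inside the unpacked directory:

        ./configure --prefix=/python3
        make
        make install

Add the new interpreter to PATH:

        PATH=/python3/bin:$PATH:$HOME/bin
        export PATH

pip3 is installed together with Python, so it needs no separate step.

2. Install Twisted

Download Twisted-17.9.0.tar.bz2, unpack it, then:

        cd Twisted-17.9.0
        python3 setup.py install

3. Install Scrapy and scrapy-redis:

        pip3 install scrapy
        pip3 install scrapy-redis

4. Install Redis

See my post "redis安装与简单使用" (Installing Redis and basic usage). If the build reports "You need tcl 8.5 or newer in order to run the Redis test", install Tcl first:

        wget http://downloads.sourceforge.net/tcl/tcl8.6.1-src.tar.gz
        tar -xvf tcl8.6.1-src.tar.gz
        cd tcl8.6.1/unix
        ./configure
        make
        make install

Copy the config file into place and start the server:

        cp /root/redis-3.2.11/redis.conf /etc/
        /root/redis-3.2.11/src/redis-server /etc/redis.conf &

5. Install the Redis client for Python:

        pip3 install redis

6. Install MongoDB

Follow the runoob tutorial: http://www.runoob.com/mongodb/mongodb-linux-install.html

Start it bound to the master's IP (as root):

        mongod --bind_ip 192.168.184.129 &

7. Install the MongoDB driver for Python:

        pip3 install pymongo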
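Before starting any crawler, it can help to confirm from each client that Redis and MongoDB on the master accept connections. The following is a small sketch using only the standard library. It assumes the default ports 6379 for Redis and 27017 for MongoDB, and the helper names `port_is_open` and `check_master` are hypothetical.

```python
import socket


def port_is_open(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and unreachable hosts.
        return False


def check_master(host, services=None):
    """Check each named service port on the master and report reachability."""
    if services is None:
        # Assumed defaults: Redis on 6379, MongoDB on 27017.
        services = {"redis": 6379, "mongodb": 27017}
    return {name: port_is_open(host, port) for name, port in services.items()}


# Example call from a client machine:
# print(check_master("192.168.184.129"))
```

A `False` entry usually points at a firewall rule or at a service bound only to the loopback address, both of which are worth ruling out before debugging the crawler itself.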

Setting up Scrapy on Windows:

1. Install wheel: pip install wheel
2. Install lxml: https://pypi.python.org/pypi/lxml/4.1.0
3. Install pyOpenSSL: https://pypi.python.org/pypi/pyOpenSSL/17.5.0
4. Install Twisted: https://www.lfd.uci.edu/~gohlke/pythonlibs/
5. Install pywin32: https://sourceforge.net/projects/pywin32/files/
6. Install Scrapy: pip install scrapy

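Deploying the code:

As a simple example I scrape the listings on 美剧天堂 (meijutt.com) to show how the distributed setup works. Put one copy of the code on the Linux machine and one on the Windows machine, with identical configuration; both can then crawl at the same time.

Only the parts that need changing are listed: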

settings

Configure the database that stores the scraped data (MongoDB) and the database that stores the fingerprints and the request queue (Redis):

    ROBOTSTXT_OBEY = False  # do not obey robots.txt
    CONCURRENT_REQUESTS = 1  # maximum concurrent requests; Scrapy's default is 16

    ITEM_PIPELINES = {
        'meiju.pipelines.MongoPipeline': 300,
    }

    MONGO_URI = '192.168.184.129'  # MongoDB connection info
    MONGO_DATABASE = 'mj'

    SCHEDULER = "scrapy_redis.scheduler.Scheduler"  # use the scrapy_redis scheduler
    DUPEFILTER_CLASS = "scrapy_redis.dupefilter.RFPDupeFilter"  # deduplicate requests (URLs) in Redis
    # REDIS_URL = 'redis://root:kongzhagen@localhost:6379'  # use this instead if Redis has a password
    REDIS_HOST = '192.168.184.129'  # Redis connection info
    REDIS_PORT = 6379
    SCHEDULER_PERSIST = True  # keep the fingerprints instead of clearing them
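DUPEFILTER_CLASS moves duplicate detection into Redis so that every client checks the same set of request fingerprints. Here is a minimal sketch of the idea, assuming a simplified fingerprint (SHA-1 over method, URL and body) and an in-memory set in place of Redis. The names `fingerprint` and `SeenFilter` are illustrative and are not part of Scrapy or scrapy-redis; Scrapy's real fingerprint also canonicalizes the URL first.

```python
import hashlib


def fingerprint(method, url, body=b""):
    """Simplified request fingerprint: SHA-1 over method, URL and body."""
    h = hashlib.sha1()
    h.update(method.upper().encode("utf-8"))
    h.update(url.encode("utf-8"))
    h.update(body)
    return h.hexdigest()


class SeenFilter:
    """In-memory stand-in for a fingerprint set shared through Redis."""

    def __init__(self):
        self._seen = set()

    def request_seen(self, method, url, body=b""):
        # Returns True for a duplicate; otherwise records the fingerprint.
        fp = fingerprint(method, url, body)
        if fp in self._seen:
            return True
        self._seen.add(fp)
        return False
```

Because SCHEDULER_PERSIST keeps the fingerprints between runs, a URL that was already crawled is skipped on the next run unless the stored set is cleared.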

pipelines

The code that stores items in MongoDB:

    import pymongo


    class MeijuPipeline(object):
        def process_item(self, item, spider):
            return item


    class MongoPipeline(object):
        collection_name = 'movies'

        def __init__(self, mongo_uri, mongo_db):
            self.mongo_uri = mongo_uri
            self.mongo_db = mongo_db

        @classmethod
        def from_crawler(cls, crawler):
            # Read the connection info from settings
            return cls(
                mongo_uri=crawler.settings.get('MONGO_URI'),
                mongo_db=crawler.settings.get('MONGO_DATABASE', 'items')
            )

        def open_spider(self, spider):
            # Connect once when the spider starts
            self.client = pymongo.MongoClient(self.mongo_uri)
            self.db = self.client[self.mongo_db]

        def close_spider(self, spider):
            self.client.close()

        def process_item(self, item, spider):
            # One document per scraped item
            self.db[self.collection_name].insert_one(dict(item))
            return item

items

The data structure:

    import scrapy


    class MeijuItem(scrapy.Item):
        movieName = scrapy.Field()
        status = scrapy.Field()
        english = scrapy.Field()
        alias = scrapy.Field()
        tv = scrapy.Field()
        year = scrapy.Field()
        type = scrapy.Field()

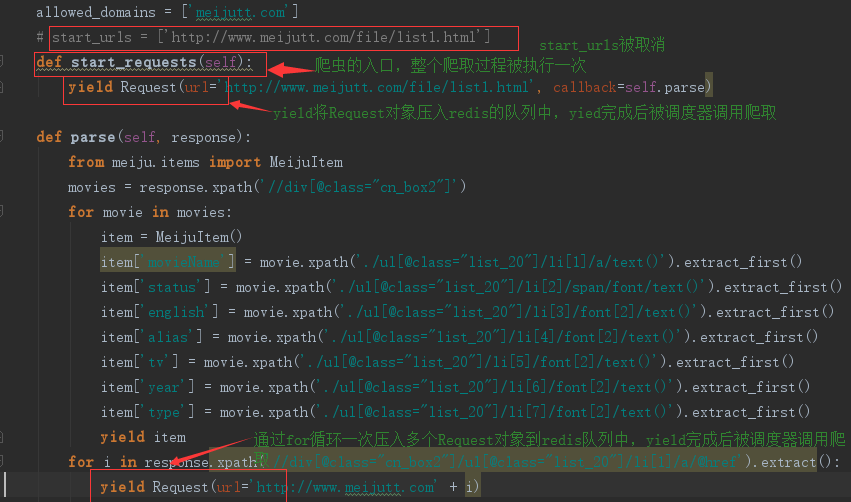

The spider script, mj.py:

    # -*- coding: utf-8 -*-
    import scrapy
    from scrapy import Request

    from meiju.items import MeijuItem


    class MjSpider(scrapy.Spider):
        name = 'mj'
        allowed_domains = ['meijutt.com']
        # start_urls = ['http://www.meijutt.com/file/list1.html']

        def start_requests(self):
            yield Request(url='http://www.meijutt.com/file/list1.html', callback=self.parse)

        def parse(self, response):
            # Each cn_box2 block describes one show
            movies = response.xpath('//div[@class="cn_box2"]')
            for movie in movies:
                item = MeijuItem()
                item['movieName'] = movie.xpath('./ul[@class="list_20"]/li[1]/a/text()').extract_first()
                item['status'] = movie.xpath('./ul[@class="list_20"]/li[2]/span/font/text()').extract_first()
                item['english'] = movie.xpath('./ul[@class="list_20"]/li[3]/font[2]/text()').extract_first()
                item['alias'] = movie.xpath('./ul[@class="list_20"]/li[4]/font[2]/text()').extract_first()
                item['tv'] = movie.xpath('./ul[@class="list_20"]/li[5]/font[2]/text()').extract_first()
                item['year'] = movie.xpath('./ul[@class="list_20"]/li[6]/font[2]/text()').extract_first()
                item['type'] = movie.xpath('./ul[@class="list_20"]/li[7]/font[2]/text()').extract_first()
                yield item

            # Follow each show's link; with no callback given, Scrapy falls back to parse()
            for i in response.xpath('//div[@class="cn_box2"]/ul[@class="list_20"]/li[1]/a/@href').extract():
                yield Request(url='http://www.meijutt.com' + i)

            # next = 'http://www.meijutt.com' + response.xpath("//a[contains(.,'下一页')]/@href")[1].extract()
            # print(next)
            # yield Request(url=next, callback=self.parse)
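To see what the XPath expressions in parse() select, here is a standalone sketch that runs similar paths against a tiny hand-written fragment. It rests on several assumptions: it uses the standard library's `xml.etree.ElementTree`, which supports only a subset of XPath and needs well-formed markup, whereas Scrapy's selectors handle real HTML. The sample markup, its values and the function name `parse_movies` are all made up for illustration.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed fragment shaped like one listing block.
SAMPLE_HTML = """
<html><body>
<div class="cn_box2">
  <ul class="list_20">
    <li><a href="/content/meiju1.html">Example Show</a></li>
    <li><span><font>Ongoing</font></span></li>
    <li><font>English:</font><font>Example Show EN</font></li>
  </ul>
</div>
</body></html>
"""

BASE_URL = "http://www.meijutt.com"


def parse_movies(markup):
    """Extract a few fields per cn_box2 block, mirroring the spider's paths."""
    root = ET.fromstring(markup.strip())
    results = []
    for movie in root.findall(".//div[@class='cn_box2']"):
        link = movie.find("./ul[@class='list_20']/li[1]/a")
        results.append({
            # findtext() returns the element's text, or None if the path misses
            "movieName": movie.findtext("./ul[@class='list_20']/li[1]/a"),
            "status": movie.findtext("./ul[@class='list_20']/li[2]/span/font"),
            "english": movie.findtext("./ul[@class='list_20']/li[3]/font[2]"),
            # Relative hrefs are joined to the site root, as the spider does
            "link": BASE_URL + link.get("href") if link is not None else None,
        })
    return results
```

A path that misses yields `None` here, much as `extract_first()` returns `None` in the spider, so a missing field does not stop the crawl.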

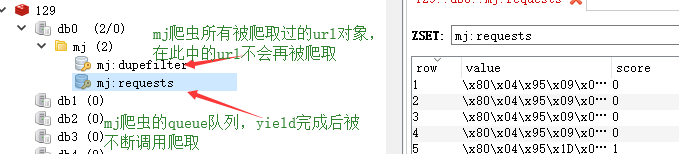

Here is what Redis looks like:

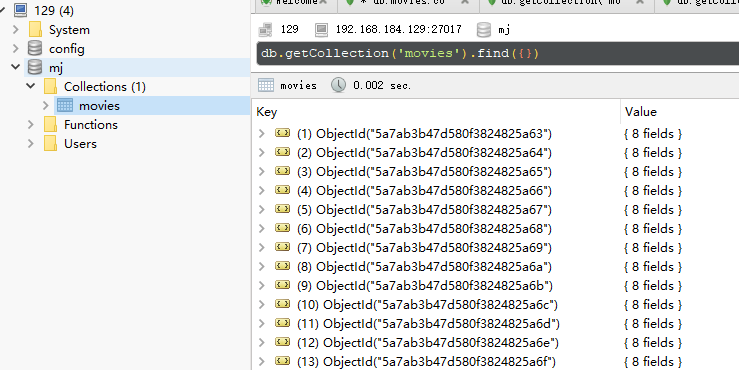

And here is the data in MongoDB: