BeautifulSoup爬虫基础知识

安装beautiful soup模块

Windows:

pip install beautifulsoup4

Linux:

apt-get install python-bs4

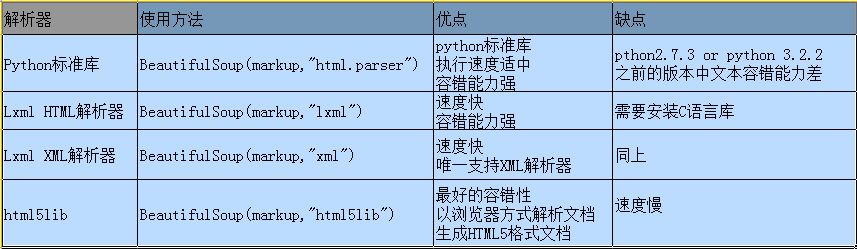

BS4解析器比较

BS官方推荐使用lxml作为解析器,因为其速度快,也比较稳定。那么lxml解析器是怎么安装的呢?

Windows下安装lxml方法:

1、pip安装

pip install lxml

安装出错,原因是需要Visual c++,在windows下通过pip安装lmxl总会出现问题,如果你非要使用pip去安装的话,就把依赖一一解决了再pip.

2、手工安装

1、先在http://www.lfd.uci.edu/~gohlke/pythonlibs/#lxml下载符合自己系统版本的lmxl,如lxml‑3.6.4‑cp27‑cp27m‑win_amd64.whl

2、安装wheel模块,pip install wheel

3、安装lxml模块,pip install lxml‑3.6.4‑cp27‑cp27m‑win_amd64.whl

Linux下安装lxml方法:

apt-get install python-lxml

BS4解析器的使用

<!DOCTYPE html> <html lang="en"> <head> <meta charset="UTF-8"> <title>武汉旅游景点</title> </head> <body> <div id="content"> <div class="title"> <h3>武汉景点</h3> </div> <ul class="table"> <li>景点<a>门票价格</a></li> </ul> <ul class="content"> <li nu="1">东湖<a class="price">60</a></li> <li nu="2">磨山<a class="price">60</a></li> <li nu="3">欢乐谷<a class="price">108</a></li> <li nu="4">海昌极地海洋世界<a class="price">150</a></li> <li nu="5" src="http://mm.howkuai.com/wp-content/uploads/2017a/03/06/limg.jpg">玛雅水上乐园<a class="price">150</a></li> </ul> </div> </body> </html>

#!/usr/bin/env python # _*_ coding:utf-8 _*_ from bs4 import BeautifulSoup soup = BeautifulSoup(open("scenery.html"),"lxml") print soup.prettify()

字符集的问题

当一个文件或网页导入BeautifulSoup之后,它会自动地很快猜测出文件或网页的常用字符编码,如果不能自动猜测出来的话可以用exclude_encoding和from_encoding来处理。

排除某种编码

soup = BeautifulSoup(open("scenery.html"),exclude_encodings=["iso-8859-7","gb2312"])

使用某种编码

soup = BeautifulSoup(open("scenery.html"),from_encoding="big5")

BS解析的原理

bs4将网页节点解析成了一个个Tag,然后根据标签名称、标签属性名称、标签属性值及顺序等将数据过滤出来。

1、根据标签名称查找标签

soup.TagName

soup.find(TagName)

soup.find_all(TagName)

#!/usr/bin/env python

# _*_ coding:utf-8 _*_

from bs4 import BeautifulSoup

soup = BeautifulSoup(open("scenery.html"),"lxml")

# 解析出第一个a标签

print soup.a

print soup.find("a")

# 解析出所有a标签

print soup.find_all("a")

结果:

<a>门票价格</a>

<a>门票价格</a>

[<a>\u95e8\u7968\u4ef7\u683c</a>, <a class="price">60</a>, <a class="price">60</a>, <a class="price">108</a>, <a class="price">150</a>, <a class="price">150</a>]

2、标签名称相同时,外加属性值解析数据

特殊写法:仅适用于查找class的内容,可以理解为专为class而设

soup.find(TagName,[attrsName])

soup.find_all(TagName,[attrsName])

万能写法,还可用于解析自定义属性:

soup.find(TagName,attrs={AttrName:AttrValue})

soup.find_all(TagName,attrs={AttrName:AttrValue})

#!/usr/bin/env python

# _*_ coding:utf-8 _*_

from bs4 import BeautifulSoup

soup = BeautifulSoup(open("scenery.html"),"lxml")

# 解析出第一个属性值为price的a标签

print soup.find("a","price")

print soup.find("a",attrs={"class":"price"})

# 解析出所有属性值为price的a标签

print soup.find_all("a","price")

结果: <a class="price">60</a> <a class="price">60</a> [<a class="price">60</a>, <a class="price">60</a>, <a class="price">108</a>, <a class="price">150</a>, <a class="price">150</a>] <li nu="1">东湖<a class="price">60</a></li>

解析标签值

# 解析属性值

print soup.find("li",attrs={"nu":"1"}).get("nu")

# 解析文本

print soup.find("li",attrs={"nu":"1"}).a.get_text()

结果:

1

60

显示属性的值

# 解析出属性nu=1的li标签

nu5 = soup.find("li",attrs={"nu":"5"})

# 解析nu=5的li标签的src属性值

print nu5.attrs['src']

根据文本找标签

r = re.compile("texttest")

soup.find("a",text=r).parent

查找内容为texttest的a标签的父标签

到此为止可以用BeautifulSoup做些简单的爬虫了。

用BeautifulSoup写一个简单的处理百度贴吧的例子,爬取百度贴吧中权利的游戏的贴子。

1 #!/usr/bin/env python 2 # _*_ coding:utf-8 _*_ 3 import urllib2 4 from bs4 import BeautifulSoup 5 import itemWrite 6 7 8 class Item(object): 9 title = None 10 firstAuthor = None 11 firstTime = None 12 reNum = None 13 content = None 14 lastAuthor = None 15 lastTime = None 16 17 class GetTiebaInfo(object): 18 def __init__(self,url): 19 self.url = url 20 self.pageSum = 5 21 self.urls = self.getUrls(self.pageSum) 22 self.items = self.spider(self.urls) 23 self.itemWrite("test.txt",self.items) 24 25 def getUrls(self,pageSum): 26 urls = [] 27 pns = [str(i*50) for i in range(pageSum)] 28 ul = self.url.split("=") 29 for pn in pns: 30 ul[-1] = pn 31 tmp = "=".join(ul) 32 urls.append(tmp) 33 return urls 34 35 def getResponseContent(self,url): 36 try: 37 response = urllib2.urlopen(url.encode("utf8")) 38 return response.read() 39 except: 40 print "url open faild" 41 return None 42 43 def spider(self,urls): 44 items = [] 45 for url in urls: 46 htmlContent = self.getResponseContent(url) 47 soup = BeautifulSoup(htmlContent,'lxml') 48 tagsli = soup.find_all("li",attrs={"class":" j_thread_list clearfix"}) 49 for tag in tagsli: 50 item = Item() 51 item.title = tag.find("a",attrs={"class":"j_th_tit "}).get_text().strip() 52 try: 53 item.firstAuthor = tag.find("span","frs-author-name-wrap").a.get_text().strip() 54 except: 55 item.firstAuthor = 'zzz' 56 item.firstTime = tag.find("span","pull-right is_show_create_time").get_text().strip() 57 item.reNum = tag.find("span",attrs={"title":u"回复"}).get_text().strip() 58 item.content = tag.find("div",attrs={"class":"threadlist_abs threadlist_abs_onlyline "}).get_text().strip() 59 item.lastAuthor = tag.find("span",attrs={"class":"tb_icon_author_rely j_replyer"}).a.get_text().strip() 60 item.lastTime = tag.find("span",attrs={"title":u"最后回复时间"}).get_text().strip() 61 items.append(item) 62 return items 63 64 def itemWrite(self,filename,items): 65 itemWrite.writeTotxt(filename,items) 66 67 68 if __name__ == '__main__': 69 url = u'http://tieba.baidu.com/f?kw=权利的游戏&ie=utf-8&pn=0' 70 Get = GetTiebaInfo(url)

1 #!/usr/bin/env python 2 # _*_ coding:utf-8 _*_ 3 4 # 写到文本文件 5 def writeTotxt(fileName,items): 6 with open(fileName,'w') as fp: 7 for item in items: 8 fp.write("title:%s\t author:%s\t firstTime:%s\n content:%s\n reNum:%s\t lastAuthor:%s\t lastTime:%s\n\n" 9 %(item.title.encode("utf8"),item.firstAuthor.encode("utf8"),item.firstTime.encode("utf8"),item.content.encode("utf8"),item.reNum.encode("utf8"),item.lastAuthor.encode("utf8"),item.lastTime.encode("utf8"))) 10 11 # 写到Excel文件 12 13 14 # 写到DB

itemWrite中我只写了一个将数据写入文本的函数,还有写入excel和db的函数没有完善,因为都很简单,不想写了,有个意思就行了。