Collecting log4j logs with Flume and sending them to Kafka

1. Installing Flume

(1) Download: wget http://archive.cloudera.com/cdh5/cdh/5/flume-ng-1.6.0-cdh5.7.1.tar.gz

(2) Extract: tar zxvf flume-ng-1.6.0-cdh5.7.1.tar.gz

(3) Environment variables (append to /etc/profile, then reload it):

export FLUME_HOME=/xxx/soft/apache-flume-1.6.0-cdh5.7.1-bin
export PATH=$PATH:$FLUME_HOME/bin

source /etc/profile

vim conf/flume-env.sh

export JAVA_HOME=/letv/soft/java
export HADOOP_HOME=/etc/hadoop

(4) Create avro.conf

vim conf/avro.conf

a1.sources=source1
a1.channels=channel1
a1.sinks=sink1

a1.sources.source1.type=avro
a1.sources.source1.bind=10.112.28.240
a1.sources.source1.port=4141
a1.sources.source1.channels = channel1

a1.channels.channel1.type=memory
a1.channels.channel1.capacity=10000
a1.channels.channel1.transactionCapacity=1000
a1.channels.channel1.keep-alive=30

a1.sinks.sink1.type = org.apache.flume.sink.kafka.KafkaSink
a1.sinks.sink1.topic = hdtest.topic.jenkin.com
a1.sinks.sink1.brokerList = 10.11.97.57:9092,10.11.97.58:9092,10.11.97.60:9092
a1.sinks.sink1.requiredAcks = 0
a1.sinks.sink1.batchSize = 20
a1.sinks.sink1.channel = channel1

source1.bind: the address of the server where Flume is installed

sink1.brokerList: the brokers of the Kafka cluster

sink1.topic: the Kafka topic that events are sent to
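
The source, channel, and sink in avro.conf are tied together only by name: the source lists the channels it writes to under `channels`, and the sink names the one channel it reads from under `channel`. A misspelled channel name is an easy mistake to make. The following is a minimal sketch, not part of Flume, that loads the same settings with `java.util.Properties` and reports any referenced channel the agent does not declare. The class `AvroConfCheck` and its method are hypothetical names introduced for illustration.

```java
import java.io.IOException;
import java.io.StringReader;
import java.util.ArrayList;
import java.util.Collections;
import java.util.HashSet;
import java.util.List;
import java.util.Properties;
import java.util.Set;

// Hypothetical helper, not part of Flume: checks channel wiring in an agent config.
class AvroConfCheck {

    // Returns "key -> channel" entries that reference a channel the agent does not declare.
    static List<String> undeclaredChannels(Properties conf, String agent) {
        Set<String> declared = new HashSet<>(split(conf.getProperty(agent + ".channels", "")));
        List<String> problems = new ArrayList<>();
        for (String key : conf.stringPropertyNames()) {
            boolean sourceRef = key.startsWith(agent + ".sources.") && key.endsWith(".channels");
            boolean sinkRef = key.startsWith(agent + ".sinks.") && key.endsWith(".channel");
            if (sourceRef || sinkRef) {
                for (String channel : split(conf.getProperty(key))) {
                    if (!declared.contains(channel)) {
                        problems.add(key + " -> " + channel);
                    }
                }
            }
        }
        Collections.sort(problems); // stable output order
        return problems;
    }

    // Flume separates multiple channel names with whitespace.
    private static List<String> split(String value) {
        List<String> names = new ArrayList<>();
        for (String name : value.trim().split("\\s+")) {
            if (!name.isEmpty()) {
                names.add(name);
            }
        }
        return names;
    }

    public static void main(String[] args) throws IOException {
        // Only the wiring-related lines of avro.conf are needed for this check.
        String text = "a1.sources=source1\n"
                + "a1.channels=channel1\n"
                + "a1.sinks=sink1\n"
                + "a1.sources.source1.channels = channel1\n"
                + "a1.sinks.sink1.channel = channel1\n";
        Properties conf = new Properties();
        conf.load(new StringReader(text));
        System.out.println("undeclared channels: " + undeclaredChannels(conf, "a1"));
    }
}
```

An empty list means every source and sink points at a declared channel. The check says nothing about whether the bind address, port, or broker list are correct.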

(5) Run Flume:

flume-ng agent --conf /xxx/soft/apache-flume-1.6.0-cdh5.7.1-bin/conf --conf-file /xxx/soft/apache-flume-1.6.0-cdh5.7.1-bin/conf/avro.conf --name a1 -Dflume.root.logger=INFO,console
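
Once the agent is running, the Avro source should accept TCP connections on the configured bind address and port. Below is a minimal sketch for checking this from the machine that will run the log4j application, using only `java.net.Socket`. The class name `PortCheck` and the 2-second timeout are assumptions, and a successful connection only shows that something is listening on the port, not that it is Flume.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

// Hypothetical helper: tests whether a TCP port accepts connections.
class PortCheck {

    // Returns true if a TCP connection to host:port succeeds within the timeout.
    static boolean canConnect(String host, int port, int timeoutMillis) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMillis);
            return true;
        } catch (IOException e) {
            return false;
        }
    }

    public static void main(String[] args) {
        // Defaults are the bind address and port from avro.conf.
        String host = args.length > 0 ? args[0] : "10.112.28.240";
        int port = args.length > 1 ? Integer.parseInt(args[1]) : 4141;
        System.out.println(host + ":" + port + " reachable: " + canConnect(host, port, 2000));
    }
}
```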

2. 编写日志生产程序

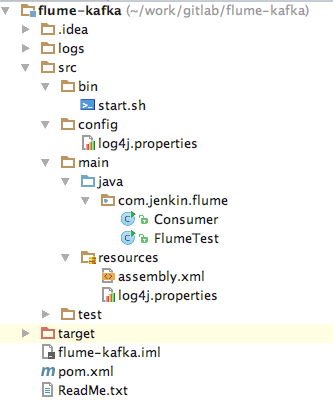

(1)工程结构

(2) log4j.properties

log4j.rootLogger=INFO,console

# Logs from package com.demo.flume are also sent to the stdout, file, and flume appenders.

log4j.logger.com.demo.flume=info,stdout,file,flume

### flume ###

log4j.appender.flume = org.apache.flume.clients.log4jappender.Log4jAppender

log4j.appender.flume.Hostname = 10.112.28.240

log4j.appender.flume.Port = 4141

log4j.appender.flume.UnsafeMode = true

log4j.appender.flume.layout=org.apache.log4j.PatternLayout

log4j.appender.flume.layout.ConversionPattern=%d{yyyy-MM-dd HH:mm:ss} %p [%c:%L] - %m%n

# appender console

log4j.appender.console=org.apache.log4j.ConsoleAppender

log4j.appender.console.target=System.out

log4j.appender.console.layout=org.apache.log4j.PatternLayout

log4j.appender.console.layout.ConversionPattern=%d [%-5p] [%t] - [%l] %m%n

### file ###

log4j.appender.file=org.apache.log4j.DailyRollingFileAppender

log4j.appender.file.Threshold=INFO

log4j.appender.file.File=./logs/tracker/tracker.log

log4j.appender.file.Append=true

log4j.appender.file.DatePattern='.'yyyy-MM-dd

log4j.appender.file.layout=org.apache.log4j.PatternLayout

log4j.appender.file.layout.ConversionPattern=%d{yyyy-MM-dd HH:mm:ss} %c{1} [%p] %m%n

### stdout ###

log4j.appender.stdout=org.apache.log4j.ConsoleAppender

log4j.appender.stdout.Threshold=INFO

log4j.appender.stdout.Target=System.out

log4j.appender.stdout.layout=org.apache.log4j.PatternLayout

log4j.appender.stdout.layout.ConversionPattern=%d{yyyy-MM-dd HH:mm:ss} %c{1} [%p] %m%n

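Explanation: the Flume agent runs on 10.112.28.240 and listens on port 4141, so log4j only needs to send its logs to port 4141 on 10.112.28.240 for them to reach Flume. Flume receives the data arriving on that port, turns each event into a Kafka message, and publishes it to the topic hdtest.topic.jenkin.com on the Kafka cluster.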

(3) Log producer

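// The class must live under com.demo.flume so that the
// log4j.logger.com.demo.flume setting in log4j.properties applies to it.
package com.demo.flume;

import org.apache.log4j.Logger;

public class FlumeTest {

    private static final Logger LOGGER = Logger.getLogger(FlumeTest.class);

    public static void main(String[] args) throws InterruptedException {
        // Emit one INFO message per second; each one goes to the flume appender.
        for (int i = 20; i < 100; i++) {
            LOGGER.info("Info [" + i + "]");
            Thread.sleep(1000);
        }
    }
}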

(4) Kafka log consumer

import java.util.Arrays;
import java.util.Properties;

import org.apache.kafka.clients.consumer.ConsumerRecord;
import org.apache.kafka.clients.consumer.ConsumerRecords;
import org.apache.kafka.clients.consumer.KafkaConsumer;

public class Consumer {

    public static void main(String[] args) {
        System.out.println("begin consumer");
        connectionKafka();
        // Not reached in practice: connectionKafka() polls forever.
        System.out.println("finish consumer");
    }

    @SuppressWarnings("resource")
    public static void connectionKafka() {
        Properties props = new Properties();
        props.put("bootstrap.servers", "10.11.97.57:9092,10.11.97.58:9092,10.11.97.60:9092");
        props.put("group.id", "testConsumer");
        props.put("enable.auto.commit", "true");
        props.put("auto.commit.interval.ms", "1000");
        props.put("session.timeout.ms", "30000");
        props.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
        props.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");

        KafkaConsumer<String, String> consumer = new KafkaConsumer<>(props);
        // Subscribe to the topic that the Flume Kafka sink writes to.
        consumer.subscribe(Arrays.asList("hdtest.topic.jenkin.com"));

        while (true) {
            // Wait up to 100 ms for new records.
            ConsumerRecords<String, String> records = consumer.poll(100);
            try {
                Thread.sleep(2000);
            } catch (InterruptedException e) {
                e.printStackTrace();
            }
            for (ConsumerRecord<String, String> record : records) {
                // %n ends each record's output with a line break.
                System.out.printf("===================offset = %d, key = %s, value = %s%n",
                        record.offset(), record.key(), record.value());
            }
        }
    }
}
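
Each value the consumer prints should be one log line shaped by the flume appender's ConversionPattern, `%d{yyyy-MM-dd HH:mm:ss} %p [%c:%L] - %m%n`, assuming the appender applies the configured layout and the pattern in log4j.properties is unchanged. The following is a minimal sketch for splitting such a value back into its fields with a regular expression. The class `LogLineParser`, its nested `LogLine` type, and the sample timestamp and line number are hypothetical.

```java
import java.util.Optional;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper: parses values formatted as
// "%d{yyyy-MM-dd HH:mm:ss} %p [%c:%L] - %m%n".
class LogLineParser {

    private static final Pattern LINE = Pattern.compile(
            "^(\\d{4}-\\d{2}-\\d{2} \\d{2}:\\d{2}:\\d{2}) (\\w+) \\[([^:\\]]+):([^\\]]+)\\] - (.*)$",
            Pattern.DOTALL);

    // Plain value holder for the parsed fields.
    static final class LogLine {
        final String timestamp;
        final String level;
        final String logger;
        final String line; // kept as text: log4j prints "?" when the line number is unknown
        final String message;

        LogLine(String timestamp, String level, String logger, String line, String message) {
            this.timestamp = timestamp;
            this.level = level;
            this.logger = logger;
            this.line = line;
            this.message = message;
        }
    }

    // Returns the parsed fields, or an empty Optional if the value does not match the pattern.
    static Optional<LogLine> parse(String value) {
        // Drop the line terminator that %n appends.
        String trimmed = value.replaceAll("[\\r\\n]+$", "");
        Matcher m = LINE.matcher(trimmed);
        if (!m.matches()) {
            return Optional.empty();
        }
        return Optional.of(new LogLine(m.group(1), m.group(2), m.group(3), m.group(4), m.group(5)));
    }

    public static void main(String[] args) {
        // Sample value in the shape FlumeTest would produce; timestamp and line number are made up.
        String sample = "2016-07-20 10:15:30 INFO [com.demo.flume.FlumeTest:12] - Info [20]\n";
        parse(sample).ifPresent(l ->
                System.out.println(l.level + " " + l.logger + " " + l.message));
    }
}
```

In the consumer loop, `LogLineParser.parse(record.value())` could replace the raw `printf` when downstream code needs the level or logger name rather than the whole line.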
