K8S Basics - 02 Installation

Recommended reading: the kubeadm design document in the official kubeadm repository: https://github.com/kubernetes/kubeadm/blob/main/docs/design/design_v1.10.md; installed version: v1.19.3

192.168.0.6 master: install docker, kubelet and kubeadm, then run kubeadm init

192.168.0.7 node1: install docker, kubelet and kubeadm, then run kubeadm join

192.168.0.8 node2: same as node1

1. System Initialization

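Set the hostname, disable SELinux, disable the firewall, set the kernel parameters, disable swap, and synchronize the time. Kubernetes requires the node name (by default the hostname) to be a valid DNS-1123 subdomain. That means lowercase letters, digits, '-' and '.' only, starting and ending with a letter or digit. Underscores are not allowed (see the join error in section 6). This guide uses FQDN-style names such as k8s-master.bearpx.com.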

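[root@k8s_master ~]# hostnamectl set-hostname k8s-master.bearpx.com
[root@k8s_node1 ~]# hostnamectl set-hostname k8s-node01.bearpx.com
[root@k8s_node2 ~]# hostnamectl set-hostname k8s-node02.bearpx.com

# Add the hostname-to-IP mappings on every host
[root@localhost ~]# vi /etc/hosts
192.168.0.6 k8s-master.bearpx.com
192.168.0.7 k8s-node01.bearpx.com
192.168.0.8 k8s-node02.bearpx.com

# Disable SELinux; the config change takes effect after a reboot (setenforce 0 switches to permissive mode immediately)
[root@localhost ~]# sed -i 's/^SELINUX=enforcing$/SELINUX=disabled/' /etc/selinux/config
[root@localhost ~]# getenforce
Disabled

# Stop the firewall now and keep it disabled after reboots
[root@localhost ~]# systemctl disable --now firewalld.service

# Kernel parameters
[root@localhost ~]# vi /etc/sysctl.conf
# kubernetes config
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
vm.swappiness = 0
net.ipv4.ip_forward = 1
# The net.bridge.* keys only exist once the br_netfilter module is loaded
[root@localhost ~]# modprobe br_netfilter
[root@localhost ~]# sysctl -p

# Turn swap off now, then comment out the swap entry in /etc/fstab so it stays off after a reboot (or edit the file by hand with vi /etc/fstab)
[root@localhost ~]# swapoff -a
[root@localhost ~]# sed -i 's/.*swap.*/#&/' /etc/fstab

# Time synchronization
[root@localhost ~]# yum install ntpdate -y
[root@localhost ~]# ntpdate time.windows.com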
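Before running kubeadm, you can check the planned hostnames against the DNS-1123 subdomain rule. The sketch below uses the same regex kubeadm prints in the join error in section 6. It is a minimal bash sketch: the function name is made up for illustration, and the 253-character limit is the general DNS-1123 subdomain limit rather than something stated in this guide.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed if the argument is a valid DNS-1123 subdomain,
# which kubeadm requires for the node name (by default the hostname).
is_valid_node_name() {
  local name="$1"
  # Same pattern kubeadm prints in its validation error, anchored at both ends.
  local re='^[a-z0-9]([-a-z0-9]*[a-z0-9])?(\.[a-z0-9]([-a-z0-9]*[a-z0-9])?)*$'
  # DNS-1123 subdomains are limited to 253 characters in total.
  [[ ${#name} -le 253 && $name =~ $re ]]
}

# Check the names used in this guide plus the one that failed in section 6.
for h in k8s-master.bearpx.com k8s-node01.bearpx.com k8s_node1; do
  if is_valid_node_name "$h"; then
    echo "$h: ok"
  else
    echo "$h: invalid"
  fi
done
```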

# Load the IPVS kernel modules (already enabled by default on CentOS 8)
[root@k8s-node33 ~]# vi /etc/sysconfig/modules/ipvs.modules
modprobe -- ip_vs
modprobe -- ip_vs_rr
modprobe -- ip_vs_wrr
modprobe -- ip_vs_sh
# on kernels 4.19 and later nf_conntrack_ipv4 was merged into nf_conntrack; load nf_conntrack there instead
modprobe -- nf_conntrack_ipv4
[root@k8s-node33 ~]# chmod +x /etc/sysconfig/modules/ipvs.modules
[root@k8s-node33 ~]# bash /etc/sysconfig/modules/ipvs.modules
[root@k8s-node33 ~]# lsmod | grep -e ip_vs
ip_vs_sh               16384  0
ip_vs_wrr              16384  0
ip_vs_rr               16384  0
ip_vs                 172032  6 ip_vs_rr,ip_vs_sh,ip_vs_wrr
nf_defrag_ipv6         20480  1 ip_vs
nf_conntrack          155648  8 xt_conntrack,nf_conntrack_ipv4,nf_nat,ipt_MASQUERADE,nf_nat_ipv4,xt_nat,nf_conntrack_netlink,ip_vs
libcrc32c              16384  4 nf_conntrack,nf_nat,xfs,ip_vs
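To confirm that the modules listed in ipvs.modules are actually loaded, check the `lsmod` output by module name, which is its first column. A plain grep is not enough, because `ip_vs_wrr` also shows up in the "Used by" column of the `ip_vs` line. The sketch below uses a hypothetical function name. It leaves nf_conntrack_ipv4 out of the list, since that module's name depends on the kernel version.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `lsmod` output on stdin and print every required
# IPVS module that is NOT loaded (prints nothing when all are present).
missing_ipvs_modules() {
  local required=(ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh)
  local loaded m
  # Keep only the module-name column; drop the "Module Size Used by" header.
  loaded=$(awk '$1 != "Module" {print $1}')
  for m in "${required[@]}"; do
    # -x: match the whole line, so "ip_vs" does not match "ip_vs_rr".
    grep -qx "$m" <<<"$loaded" || echo "$m"
  done
}

# Usage on a real host:
#   lsmod | missing_ipvs_modules
```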

2. Install Docker

With Kubernetes v1.19.3, Docker is the default container runtime. It is used through kubelet's built-in dockershim CRI adapter.

[root@localhost ~]# yum remove docker docker-client docker-client-latest docker-common docker-latest docker-latest-logrotate docker-logrotate docker-engine

[root@localhost ~]# yum -y update

[root@localhost ~]# yum -y install yum-utils device-mapper-persistent-data lvm2 bridge-utils.x86_64

# Alternatively, use the Aliyun mirror of the Docker CE repo: yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

[root@localhost ~]# yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo

Loaded plugins: fastestmirror

adding repo from: https://download.docker.com/linux/centos/docker-ce.repo

grabbing file https://download.docker.com/linux/centos/docker-ce.repo to /etc/yum.repos.d/docker-ce.repo

repo saved to /etc/yum.repos.d/docker-ce.repo

[root@localhost ~]# yum install docker-ce docker-ce-cli containerd.io

[root@localhost ~]# docker --version

Docker version 19.03.13, build 4484c46d9d

[root@localhost ~]# systemctl enable --now docker

Created symlink from /etc/systemd/system/multi-user.target.wants/docker.service to /usr/lib/systemd/system/docker.service.

[root@localhost ~]# cat /etc/docker/daemon.json

{
  "registry-mirrors": ["https://b5imc2v6.mirror.aliyuncs.com"],
  "exec-opts": ["native.cgroupdriver=systemd"],
  "log-driver": "json-file",
  "log-opts": {
    "max-size": "100m"
  },
  "storage-driver": "overlay2",
  "storage-opts": [
    "overlay2.override_kernel_check=true"
  ]
}

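# Docker is already running (it was enabled with --now above), so restart it for daemon.json to take effect
[root@localhost ~]# systemctl daemon-reload && systemctl restart docker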
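Instead of editing daemon.json by hand on each host, you can write the file with a small script and then check the resulting cgroup driver. The Kubernetes documentation recommends the systemd cgroup driver on systemd-based hosts, so that Docker and kubelet use the same driver. This is a sketch: the function name is hypothetical, and the registry mirror URL is the author's personal Aliyun accelerator address, which you would replace with your own.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write the daemon.json used in this guide into a target
# directory (defaults to /etc/docker). Replace the registry mirror with your own.
write_docker_daemon_json() {
  local dir="${1:-/etc/docker}"
  mkdir -p "$dir"
  # Quoted 'EOF': the JSON is written literally, with no shell expansion.
  cat >"$dir/daemon.json" <<'EOF'
{
  "registry-mirrors": ["https://b5imc2v6.mirror.aliyuncs.com"],
  "exec-opts": ["native.cgroupdriver=systemd"],
  "log-driver": "json-file",
  "log-opts": {
    "max-size": "100m"
  },
  "storage-driver": "overlay2",
  "storage-opts": [
    "overlay2.override_kernel_check=true"
  ]
}
EOF
}

# On a real host (as root):
#   write_docker_daemon_json
#   systemctl daemon-reload && systemctl restart docker
#   docker info | grep -i 'cgroup driver'    # should report systemd
```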

3. Install the Kubernetes Components

[root@localhost ~]# cat /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

[root@localhost ~]# yum makecache

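# Pin the packages to the version used in this guide (kubelet-1.19.3 kubeadm-1.19.3 kubectl-1.19.3); without the version suffix, yum installs the newest release in the repo

[root@localhost ~]# yum install -y kubelet-1.19.3 kubeadm-1.19.3 kubectl-1.19.3 --disableexcludes=kubernetes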

[root@localhost ~]# systemctl enable --now kubelet

[root@k8s-master ~]# rpm -ql kubelet
/etc/kubernetes/manifests
/etc/sysconfig/kubelet
/usr/bin/kubelet
/usr/lib/systemd/system/kubelet.service

[root@k8s-master ~]# cat /etc/sysconfig/kubelet
KUBELET_EXTRA_ARGS=
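When swap is left on, section 5 puts `--fail-swap-on=false` into KUBELET_EXTRA_ARGS. The sketch below sets that variable idempotently: it replaces the existing line, or appends one if it is missing. The function name is hypothetical. The arguments must not contain `|` or `&`, because those characters are special in the sed replacement.

```shell
#!/usr/bin/env bash
# Hypothetical helper: set KUBELET_EXTRA_ARGS in a kubelet sysconfig file.
# Arguments must not contain '|' or '&' (special in the sed replacement).
set_kubelet_extra_args() {
  local file="$1" args="$2"
  if grep -q '^KUBELET_EXTRA_ARGS=' "$file" 2>/dev/null; then
    # Replace the whole existing line; '|' is the sed delimiter so that
    # arguments containing '/' do not break the expression.
    sed -i "s|^KUBELET_EXTRA_ARGS=.*|KUBELET_EXTRA_ARGS=\"$args\"|" "$file"
  else
    echo "KUBELET_EXTRA_ARGS=\"$args\"" >>"$file"
  fi
}

# On a node where swap stays on (as root):
#   set_kubelet_extra_args /etc/sysconfig/kubelet "--fail-swap-on=false"
#   systemctl restart kubelet
```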

4. Download the Images

[root@localhost ~]# kubeadm config images list

W1109 15:56:13.435335 3921 configset.go:348] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]

k8s.gcr.io/kube-apiserver:v1.19.3

k8s.gcr.io/kube-controller-manager:v1.19.3

k8s.gcr.io/kube-scheduler:v1.19.3

k8s.gcr.io/kube-proxy:v1.19.3

k8s.gcr.io/pause:3.2

k8s.gcr.io/etcd:3.4.13-0

k8s.gcr.io/coredns:1.7.0

[root@k8s-node31 ~]# cat pull_k8s_container.sh

#!/bin/bash
# Pull the control-plane images from the Aliyun mirror and retag them with the
# k8s.gcr.io names that kubeadm v1.19.3 expects (see `kubeadm config images list` above).
KUBE_VERSION=v1.19.3
PAUSE_VERSION=3.2
ETCD_VERSION=3.4.13-0
DNS_VERSION=1.7.0
PULL_SRC_URL=registry.aliyuncs.com/google_containers
DST_CON_URL=k8s.gcr.io

# Control-plane components share the Kubernetes version
for i in kube-apiserver kube-controller-manager kube-scheduler kube-proxy; do
    docker pull "$PULL_SRC_URL/$i:$KUBE_VERSION"
    docker tag "$PULL_SRC_URL/$i:$KUBE_VERSION" "$DST_CON_URL/$i:$KUBE_VERSION"
    docker rmi "$PULL_SRC_URL/$i:$KUBE_VERSION"
done

# pause, etcd and CoreDNS carry their own version numbers
for img in "pause:$PAUSE_VERSION" "etcd:$ETCD_VERSION" "coredns:$DNS_VERSION"; do
    docker pull "$PULL_SRC_URL/$img"
    docker tag "$PULL_SRC_URL/$img" "$DST_CON_URL/$img"
    docker rmi "$PULL_SRC_URL/$img"
done

# Kubernetes v1.21.x instead expects k8s.gcr.io/coredns/coredns:v1.8.0 (note the extra
# coredns/ path component); the author pulled that image from another mirror:
# docker pull uhub.service.ucloud.cn/uxhy/coredns:v1.8.0
# docker tag uhub.service.ucloud.cn/uxhy/coredns:v1.8.0 k8s.gcr.io/coredns/coredns:v1.8.0
# docker rmi uhub.service.ucloud.cn/uxhy/coredns:v1.8.0
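Instead of hand-writing the image list, you can map the names printed by `kubeadm config images list` onto a mirror. There are also two built-in options. `kubeadm config images pull --image-repository registry.aliyuncs.com/google_containers --kubernetes-version v1.19.3` pulls from the mirror directly. Passing `--image-repository` to `kubeadm init` avoids the retagging altogether.

The sketch below only prints the docker commands, so they can be reviewed before running. The helper names are hypothetical. It assumes the mirror stores every image under its bare `name:tag`, which may not hold for every image; the author needed a different mirror for coredns v1.8.0.

```shell
#!/usr/bin/env bash
# Hypothetical helper: map an image name such as k8s.gcr.io/coredns/coredns:v1.8.0
# to <mirror>/coredns:v1.8.0 by keeping only the last path component (name:tag).
mirror_name() {
  local image="$1" mirror="$2"
  echo "$mirror/${image##*/}"
}

# Read image names on stdin and print (not run) pull/tag/rmi commands for each.
print_retag_cmds() {
  local mirror="$1" image src
  while read -r image; do
    [[ $image == */* ]] || continue   # skip blank or unrelated lines
    src=$(mirror_name "$image" "$mirror")
    printf 'docker pull %s\n' "$src"
    printf 'docker tag %s %s\n' "$src" "$image"
    printf 'docker rmi %s\n' "$src"
  done
}

# On the master: review the output first, then pipe it to bash to execute it.
#   kubeadm config images list --kubernetes-version v1.19.3 2>/dev/null \
#     | print_retag_cmds registry.aliyuncs.com/google_containers
```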

5. Install the Master

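If swap has not been disabled, both of the following are needed. With swap on, kubelet refuses to start, and the kubeadm Swap preflight check fails.

Step 1: in /etc/sysconfig/kubelet, set KUBELET_EXTRA_ARGS="--fail-swap-on=false"

Step 2: tell kubeadm to ignore the Swap preflight error: kubeadm init --kubernetes-version=v1.19.3 --apiserver-advertise-address 192.168.6.30 --pod-network-cidr=10.244.0.0/16 --service-cidr=10.96.0.0/12 --image-repository=registry.aliyuncs.com/google_containers --ignore-preflight-errors=Swap

(With --image-repository, kubeadm pulls the control-plane images from the Aliyun mirror directly, so the retagging in section 4 is not needed.)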
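The init flags can also be kept in a kubeadm configuration file, which is easier to review and reuse than a long command line. This sketch rests on a few assumptions:

- The `kubeadm.k8s.io/v1beta2` API version is assumed to be the one kubeadm v1.19 uses. `kubeadm config print init-defaults` prints the defaults for the installed version.
- The values mirror the flags used in this section.
- The KubeletConfiguration part sets the systemd cgroup driver to match Docker's daemon.json.

The function name is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write a kubeadm config file equivalent to the init flags
# in this guide. apiVersion kubeadm.k8s.io/v1beta2 is assumed for kubeadm v1.19.
write_kubeadm_config() {
  local out="$1" advertise="$2"
  # Unquoted EOF: ${advertise} is expanded; nothing else in the text is.
  cat >"$out" <<EOF
apiVersion: kubeadm.k8s.io/v1beta2
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: ${advertise}
  bindPort: 6443
---
apiVersion: kubeadm.k8s.io/v1beta2
kind: ClusterConfiguration
kubernetesVersion: v1.19.3
imageRepository: registry.aliyuncs.com/google_containers
networking:
  podSubnet: 10.244.0.0/16
  serviceSubnet: 10.96.0.0/12
---
apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
cgroupDriver: systemd
EOF
}

# On the master:
#   write_kubeadm_config kubeadm-config.yaml 192.168.0.6
#   kubeadm init --config kubeadm-config.yaml
```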

[root@localhost ~]# kubeadm init --apiserver-advertise-address 192.168.0.6 --pod-network-cidr 10.244.0.0/16 --kubernetes-version=v1.19.3

W1109 16:33:15.764211 9559 configset.go:348] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]

[init] Using Kubernetes version: v1.19.3

[preflight] Running pre-flight checks

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local localhost.localdomain] and IPs [10.96.0.1 192.168.0.6]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [localhost localhost.localdomain] and IPs [192.168.0.6 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [localhost localhost.localdomain] and IPs [192.168.0.6 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 29.006764 seconds

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.19" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node localhost.localdomain as control-plane by adding the label "node-role.kubernetes.io/master=''"

[mark-control-plane] Marking the node localhost.localdomain as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[bootstrap-token] Using token: uy7klu.a0et5ruj1eghwe37

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.0.6:6443 --token uy7klu.a0et5ruj1eghwe37 \

--discovery-token-ca-cert-hash sha256:6f58d48b44cc79978467a1351a21b8365e9bb11a5d4f22ab882fd863152dd1cd

[root@k8s_master ~]# mkdir -p $HOME/.kube

[root@k8s_master ~]# cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@k8s_master ~]# chown $(id -u):$(id -g) $HOME/.kube/config

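Deploy the CNI network plugin (flannel here). If the default image registry in the manifest is unreachable, download the manifest and change the image address before applying it.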

[root@k8s_master ~]# kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

podsecuritypolicy.policy/psp.flannel.unprivileged created

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

serviceaccount/flannel created

configmap/kube-flannel-cfg created

daemonset.apps/kube-flannel-ds created
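If you download the flannel manifest first (for example, to change its image address), two quick checks are worth doing:

- The `Network` in its net-conf.json must match the `--pod-network-cidr` passed to kubeadm init (10.244.0.0/16 here).
- The `image:` lines show what will be pulled.

The sketch below uses hypothetical helper names. It relies on the manifest's usual layout, with `"Network": "..."` on one line and `image:` as the first word of its line.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the "Network" value from flannel's net-conf.json.
flannel_network() {
  sed -n 's/.*"Network": *"\([^"]*\)".*/\1/p' "$1"
}

# Fail (non-zero) unless flannel's Network equals the pod CIDR given to kubeadm init.
check_pod_cidr() {
  local file="$1" cidr="$2" net
  net=$(flannel_network "$file")
  if [[ $net != "$cidr" ]]; then
    echo "flannel Network '$net' != pod CIDR '$cidr'" >&2
    return 1
  fi
}

# List the distinct images the manifest will pull.
flannel_images() {
  awk '$1 == "image:" {print $2}' "$1" | sort -u
}

# On the master:
#   wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml
#   check_pod_cidr kube-flannel.yml 10.244.0.0/16 && flannel_images kube-flannel.yml
#   kubectl apply -f kube-flannel.yml
```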

6. Install the Worker Nodes

## Join fails when the hostname is invalid (here it contains an underscore)
[root@k8s_node1 ~]# kubeadm join 192.168.0.6:6443 --token uy7klu.a0et5ruj1eghwe37 \
> --discovery-token-ca-cert-hash sha256:6f58d48b44cc79978467a1351a21b8365e9bb11a5d4f22ab882fd863152dd1cd
nodeRegistration.name: Invalid value: "k8s_node1": a DNS-1123 subdomain must consist of lower case alphanumeric characters, '-' or '.', and must start and end with an alphanumeric character (e.g. 'example.com', regex used for validation is '[a-z0-9]([-a-z0-9]*[a-z0-9])?(\.[a-z0-9]([-a-z0-9]*[a-z0-9])?)*')
To see the stack trace of this error execute with --v=5 or higher

## After fixing the hostname, the join succeeds (the Hostname warnings mean the name cannot be resolved; an /etc/hosts entry avoids them)
[root@k8s_node1 ~]# hostnamectl set-hostname k8s-node01.bearpx.com
[root@k8s-node01 ~]# kubeadm join 192.168.0.6:6443 --token uy7klu.a0et5ruj1eghwe37 \
> --discovery-token-ca-cert-hash sha256:6f58d48b44cc79978467a1351a21b8365e9bb11a5d4f22ab882fd863152dd1cd
[preflight] Running pre-flight checks
        [WARNING Hostname]: hostname "k8s-node01.bearpx.com" could not be reached
        [WARNING Hostname]: hostname "k8s-node01.bearpx.com": lookup k8s-node01.bearpx.com on 219.147.1.66:53: no such host
[preflight] Reading configuration from the cluster...
[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -oyaml'
[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"
[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"
[kubelet-start] Starting the kubelet
[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:
* Certificate signing request was sent to apiserver and a response was received.
* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

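The default bootstrap token is valid for 24 hours. After it expires, create a new one; the --print-join-command option prints the complete join command: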

[root@k8s-master ~]# kubeadm token create --print-join-command
W0225 11:06:09.991872 71639 configset.go:348] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]
kubeadm join 192.168.6.30:6443 --token wmiicj.9mdby4kjcidcs30o --discovery-token-ca-cert-hash sha256:bb8c4e2ead1f29d4d6513750f500f1087360cb5ec38af1c210eb07a5b04e643e
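If you only need the `--discovery-token-ca-cert-hash` value, the kubeadm documentation computes it with openssl from the cluster CA certificate. The value is a SHA-256 over the CA's DER-encoded public key. Below, that pipeline is wrapped in a hypothetical function. It assumes an RSA CA key, which is what kubeadm generates by default. `kubeadm token list` shows the existing tokens and their expiry times.

```shell
#!/usr/bin/env bash
# Hypothetical helper: compute the --discovery-token-ca-cert-hash value
# (without the "sha256:" prefix) using the openssl pipeline from the kubeadm docs.
# Assumes an RSA CA key (kubeadm's default).
ca_cert_hash() {
  local ca_crt="${1:-/etc/kubernetes/pki/ca.crt}"
  openssl x509 -pubkey -in "$ca_crt" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex \
    | sed 's/^.* //'   # keep only the hex digest after the last space
}

# On the master:
#   echo "sha256:$(ca_cert_hash)"
```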

On the master, check the node status and the status of the Pods in the kube-system namespace:

[root@k8s-master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master.bearpx.com Ready master 37d v1.19.3

k8s-node31.bearpx.com Ready <none> 37d v1.19.3

k8s-node32.bearpx.com Ready <none> 20d v1.19.3

k8s-node33.bearpx.com Ready <none> 37d v1.19.3

[root@k8s-master ~]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-f9fd979d6-cgpdh 1/1 Running 10 17d

coredns-f9fd979d6-kprjc 1/1 Running 11 17d

etcd-k8s-master.bearpx.com 1/1 Running 9 37d

kube-apiserver-k8s-master.bearpx.com 1/1 Running 9 37d

kube-controller-manager-k8s-master.bearpx.com 1/1 Running 10 37d

kube-flannel-ds-gsvsk 1/1 Running 9 37d

kube-flannel-ds-kxqrl 1/1 Running 8 37d

kube-flannel-ds-v5p4l 1/1 Running 10 37d

kube-flannel-ds-wcdg6 1/1 Running 6 20d

kube-proxy-5nmrc 1/1 Running 9 37d

kube-proxy-ls4n4 1/1 Running 6 20d

kube-proxy-mlwpp 1/1 Running 9 37d

kube-proxy-xdhsp 1/1 Running 8 37d

kube-scheduler-k8s-master.bearpx.com 1/1 Running 11 37d

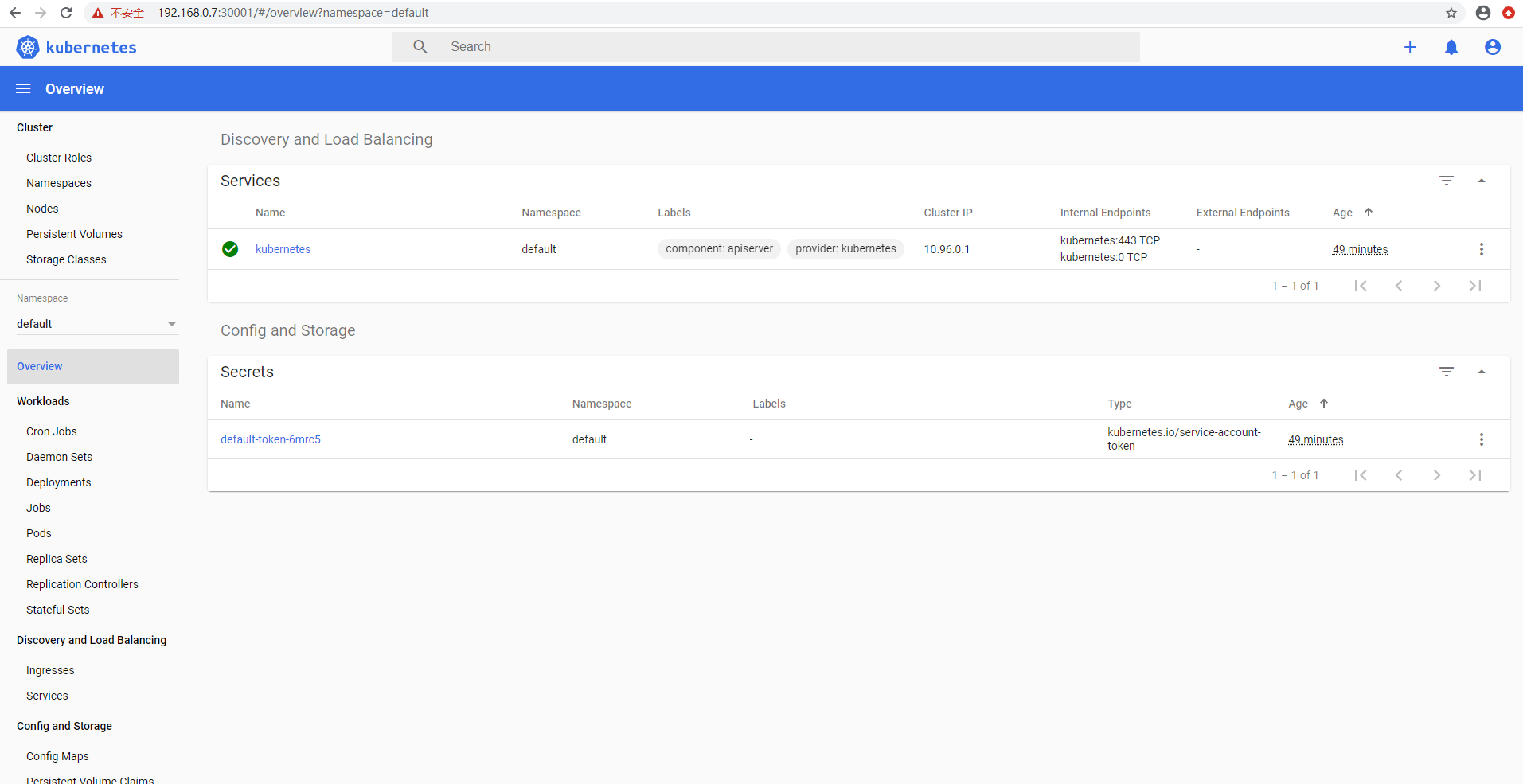
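For a quick health check, you can filter the two listings above for anything that is not fully up. The sketch below uses hypothetical function names that read `--no-headers` output on stdin. A node counts as healthy only if its STATUS is exactly `Ready`. A Pod counts as healthy only if it is `Running` with all containers ready, so Pods from completed Jobs would also be reported.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `kubectl get nodes --no-headers` output and print
# nodes whose STATUS is not exactly "Ready" (e.g. NotReady, Ready,SchedulingDisabled).
not_ready_nodes() {
  awk '$2 != "Ready" {print $1}'
}

# Hypothetical helper: read `kubectl get pods --no-headers` output (one namespace)
# and print Pods that are not Running or whose READY column is not n/n.
unhealthy_pods() {
  awk '{ split($2, r, "/"); if ($3 != "Running" || r[1] != r[2]) print $1 }'
}

# On the master:
#   kubectl get nodes --no-headers | not_ready_nodes
#   kubectl get pods -n kube-system --no-headers | unhealthy_pods
```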

7. Install the Dashboard

[root@k8s-node01 ~]# vi /etc/hosts

151.101.108.133 raw.githubusercontent.com

[root@k8s-node01 ~]# wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.0-beta8/aio/deploy/recommended.yaml

[root@k8s-node01 ~]# sed -i 's/kubernetesui/registry.cn-hangzhou.aliyuncs.com\/loong576/g' recommended.yaml

[root@k8s-node01 ~]# sed -i '/targetPort: 8443/a\ \ \ \ \ \ nodePort: 30001\n\ \ type: NodePort' recommended.yaml
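The sed command above inserts `nodePort: 30001` with a fixed indentation of six spaces. The sketch below makes the same edit in awk, but copies the indentation from the matched `targetPort: 8443` line instead. It writes the result to stdout and leaves the original file untouched. The function name is hypothetical.

Alternatively, once the Dashboard is deployed, `kubectl -n kubernetes-dashboard patch svc kubernetes-dashboard -p '{"spec":{"type":"NodePort"}}'` switches the Service type. In that case Kubernetes picks the node port.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the manifest with a nodePort added under the
# "targetPort: 8443" line (same indentation) and "type: NodePort" at spec level.
add_nodeport() {
  local file="$1" node_port="$2"
  awk -v np="$node_port" '
    { print }
    /^ *targetPort: 8443$/ {
      match($0, /^ */)                 # RLENGTH = number of leading spaces
      print substr($0, 1, RLENGTH) "nodePort: " np
      print "  type: NodePort"         # spec-level key, two spaces as in recommended.yaml
    }
  ' "$file"
}

# Usage:
#   add_nodeport recommended.yaml 30001 > recommended-nodeport.yaml
```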

Copy admin.conf from the master to the worker node so that kubectl can also be used there:

[root@k8s-master ~]# scp -r /etc/kubernetes/admin.conf 192.168.0.7:/etc/kubernetes/admin.conf

[root@k8s-node01 ~]# vi .bash_profile

export KUBECONFIG=/etc/kubernetes/admin.conf

[root@k8s-node01 ~]# source .bash_profile

[root@k8s-node01 ~]# kubectl apply -f recommended.yaml

namespace/kubernetes-dashboard created

serviceaccount/kubernetes-dashboard created

service/kubernetes-dashboard created

secret/kubernetes-dashboard-certs created

secret/kubernetes-dashboard-csrf created

secret/kubernetes-dashboard-key-holder created

configmap/kubernetes-dashboard-settings created

role.rbac.authorization.k8s.io/kubernetes-dashboard created

clusterrole.rbac.authorization.k8s.io/kubernetes-dashboard created

rolebinding.rbac.authorization.k8s.io/kubernetes-dashboard created

clusterrolebinding.rbac.authorization.k8s.io/kubernetes-dashboard created

deployment.apps/kubernetes-dashboard created

service/dashboard-metrics-scraper created

deployment.apps/dashboard-metrics-scraper created

[root@k8s-node01 ~]# kubectl get all -n kubernetes-dashboard

NAME READY STATUS RESTARTS AGE

pod/dashboard-metrics-scraper-5d9f7fd578-gwqt9 1/1 Running 0 2m44s

pod/kubernetes-dashboard-79564f7bb4-d8pvh 1/1 Running 0 2m44s

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/dashboard-metrics-scraper ClusterIP 10.105.152.175 <none> 8000/TCP 2m44s

service/kubernetes-dashboard NodePort 10.111.89.175 <none> 443:30001/TCP 2m45s

NAME READY UP-TO-DATE AVAILABLE AGE

deployment.apps/dashboard-metrics-scraper 1/1 1 1 2m44s

deployment.apps/kubernetes-dashboard 1/1 1 1 2m44s

NAME DESIRED CURRENT READY AGE

replicaset.apps/dashboard-metrics-scraper-5d9f7fd578 1 1 1 2m44s

replicaset.apps/kubernetes-dashboard-79564f7bb4 1 1 1 2m44s

[root@k8s-node01 ~]# kubectl create serviceaccount dashboard-admin -n kube-system
serviceaccount/dashboard-admin created
[root@k8s-node01 ~]# kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kube-system:dashboard-admin
clusterrolebinding.rbac.authorization.k8s.io/dashboard-admin created
[root@k8s-node01 ~]# kubectl describe secrets -n kube-system $(kubectl -n kube-system get secret | awk '/dashboard-admin/{print $1}')
Name:         dashboard-admin-token-9f9pv
Namespace:    kube-system
Labels:       <none>
Annotations:  kubernetes.io/service-account.name: dashboard-admin
              kubernetes.io/service-account.uid: 04ce8f4e-970e-44c6-bc60-bc5e9d884abb

Type:  kubernetes.io/service-account-token

Data
====
ca.crt:     1066 bytes
namespace:  11 bytes
token:      eyJhbGciOiJSUzI1NiIsImtpZCI6Ik1nRUxxOHZ3MFBoOGFIOE8tSmNhbVIwa1QycDJ1OVptU2hpU2J6b01tRXMifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJkYXNoYm9hcmQtYWRtaW4tdG9rZW4tOWY5cHYiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC5uYW1lIjoiZGFzaGJvYXJkLWFkbWluIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQudWlkIjoiMDRjZThmNGUtOTcwZS00NGM2LWJjNjAtYmM1ZTlkODg0YWJiIiwic3ViIjoic3lzdGVtOnNlcnZpY2VhY2NvdW50Omt1YmUtc3lzdGVtOmRhc2hib2FyZC1hZG1pbiJ9.TBTLEXimSiJEcyW5MVKcRkPKqIT0_p5c24wR6dEYG7n-Hs0KU-CO5JK73msCk-ZXYb-IsNTbSk598ynYn6K1nLQGFEQA65uAwKhmznpzQtPk_-0yRdOqA921Mc_isJpQDyziCoddujTfpMQ_SBdWM3OXvJ_qp0lIxpo_2HrpmWYXbbQUDX8OTlXhcwS7cAPy8THjJURm6KGDuyVbSk8FsXOZD7BHX_cBmltYipRPK_IvypMRdpWlB2dDwaRRzjcxubsCSSGCSMKZDzuaz57CT2LALr3fBcYGanNNHoVPl22TnvTQvVbBoi8XzlgYfef8G9dYPh07Cw4Pm_xqutb-0g
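The token is a JWT. Its middle segment is base64url-encoded JSON that names the service account, which gives a quick way to confirm you copied the right token. The sketch below assumes GNU coreutils `base64`. The function name is hypothetical, and the signature is not verified.

You can also print the raw token without `describe`: `kubectl -n kube-system get secret <secret-name> -o jsonpath='{.data.token}' | base64 -d`.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the decoded payload (middle segment) of a JWT,
# e.g. a service-account token. The signature is NOT verified.
jwt_payload() {
  local payload
  # base64url uses '-' and '_' where standard base64 uses '+' and '/'.
  payload=$(cut -d. -f2 <<<"$1" | tr '_-' '/+')
  # base64url drops the '=' padding; restore it to a multiple of 4 characters.
  case $(( ${#payload} % 4 )) in
    2) payload+="==" ;;
    3) payload+="=" ;;
  esac
  base64 -d <<<"$payload"
}

# Usage: the payload should contain
# "sub":"system:serviceaccount:kube-system:dashboard-admin"
#   jwt_payload "$TOKEN"
```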

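Open https://<node-IP>:30001 in a browser. The NodePort is opened on every node, e.g. https://192.168.234.33:30001 in the author's environment.

Chrome does not trust the Dashboard's self-signed certificate. It shows NET::ERR_CERT_INVALID and offers no way to proceed.

Workaround:

On Chrome's "Your connection is not private" page, click once on a blank area of the page and type "thisisunsafe" (nothing is displayed while you type). Chrome then continues to the page automatically.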

8. Kubernetes Commands

[root@k8s-master ~]# kubeadm init --help

Run this command in order to set up the Kubernetes control plane

The "init" command executes the following phases:

```
preflight                      Run pre-flight checks
certs                          Certificate generation
  /ca                          Generate the self-signed Kubernetes CA to provision identities for other Kubernetes components
  /apiserver                   Generate the certificate for serving the Kubernetes API
  /apiserver-kubelet-client    Generate the certificate for the API server to connect to kubelet
  /front-proxy-ca              Generate the self-signed CA to provision identities for front proxy
  /front-proxy-client          Generate the certificate for the front proxy client
  /etcd-ca                     Generate the self-signed CA to provision identities for etcd
  /etcd-server                 Generate the certificate for serving etcd
  /etcd-peer                   Generate the certificate for etcd nodes to communicate with each other
  /etcd-healthcheck-client     Generate the certificate for liveness probes to healthcheck etcd
  /apiserver-etcd-client       Generate the certificate the apiserver uses to access etcd
  /sa                          Generate a private key for signing service account tokens along with its public key
kubeconfig                     Generate all kubeconfig files necessary to establish the control plane and the admin kubeconfig file
  /admin                       Generate a kubeconfig file for the admin to use and for kubeadm itself
  /kubelet                     Generate a kubeconfig file for the kubelet to use *only* for cluster bootstrapping purposes
  /controller-manager          Generate a kubeconfig file for the controller manager to use
  /scheduler                   Generate a kubeconfig file for the scheduler to use
kubelet-start                  Write kubelet settings and (re)start the kubelet
control-plane                  Generate all static Pod manifest files necessary to establish the control plane
  /apiserver                   Generates the kube-apiserver static Pod manifest
  /controller-manager          Generates the kube-controller-manager static Pod manifest
  /scheduler                   Generates the kube-scheduler static Pod manifest
etcd                           Generate static Pod manifest file for local etcd
  /local                       Generate the static Pod manifest file for a local, single-node local etcd instance
upload-config                  Upload the kubeadm and kubelet configuration to a ConfigMap
  /kubeadm                     Upload the kubeadm ClusterConfiguration to a ConfigMap
  /kubelet                     Upload the kubelet component config to a ConfigMap
upload-certs                   Upload certificates to kubeadm-certs
mark-control-plane             Mark a node as a control-plane
bootstrap-token                Generates bootstrap tokens used to join a node to a cluster
kubelet-finalize               Updates settings relevant to the kubelet after TLS bootstrap
  /experimental-cert-rotation  Enable kubelet client certificate rotation
addon                          Install required addons for passing Conformance tests
  /coredns                     Install the CoreDNS addon to a Kubernetes cluster
  /kube-proxy                  Install the kube-proxy addon to a Kubernetes cluster
```

Usage:
  kubeadm init [flags]
  kubeadm init [command]

Available Commands:
  phase       Use this command to invoke single phase of the init workflow

Flags:
      --apiserver-advertise-address string   The IP address the API Server will advertise it's listening on. If not set the default network interface will be used.
      --apiserver-bind-port int32            Port for the API Server to bind to. (default 6443)
      --apiserver-cert-extra-sans strings    Optional extra Subject Alternative Names (SANs) to use for the API Server serving certificate. Can be both IP addresses and DNS names.
      --cert-dir string                      The path where to save and store the certificates. (default "/etc/kubernetes/pki")
      --certificate-key string               Key used to encrypt the control-plane certificates in the kubeadm-certs Secret.
      --config string                        Path to a kubeadm configuration file.
      --control-plane-endpoint string        Specify a stable IP address or DNS name for the control plane.
      --cri-socket string                    Path to the CRI socket to connect. If empty kubeadm will try to auto-detect this value; use this option only if you have more than one CRI installed or if you have non-standard CRI socket.
      --dry-run                              Don't apply any changes; just output what would be done.
      --experimental-patches string          Path to a directory that contains files named "target[suffix][+patchtype].extension". For example, "kube-apiserver0+merge.yaml" or just "etcd.json". "patchtype" can be one of "strategic", "merge" or "json" and they match the patch formats supported by kubectl. The default "patchtype" is "strategic". "extension" must be either "json" or "yaml". "suffix" is an optional string that can be used to determine which patches are applied first alpha-numerically.
      --feature-gates string                 A set of key=value pairs that describe feature gates for various features. Options are:
                                             IPv6DualStack=true|false (ALPHA - default=false)
                                             PublicKeysECDSA=true|false (ALPHA - default=false)
  -h, --help                                 help for init
      --ignore-preflight-errors strings      A list of checks whose errors will be shown as warnings. Example: 'IsPrivilegedUser,Swap'. Value 'all' ignores errors from all checks.
      --image-repository string              Choose a container registry to pull control plane images from (default "k8s.gcr.io")
      --kubernetes-version string            Choose a specific Kubernetes version for the control plane. (default "stable-1")
      --node-name string                     Specify the node name.
      --pod-network-cidr string              Specify range of IP addresses for the pod network. If set, the control plane will automatically allocate CIDRs for every node.
      --service-cidr string                  Use alternative range of IP address for service VIPs. (default "10.96.0.0/12")
      --service-dns-domain string            Use alternative domain for services, e.g. "myorg.internal". (default "cluster.local")
      --skip-certificate-key-print           Don't print the key used to encrypt the control-plane certificates.
      --skip-phases strings                  List of phases to be skipped
      --skip-token-print                     Skip printing of the default bootstrap token generated by 'kubeadm init'.
      --token string                         The token to use for establishing bidirectional trust between nodes and control-plane nodes. The format is [a-z0-9]{6}\.[a-z0-9]{16} - e.g. abcdef.0123456789abcdef
      --token-ttl duration                   The duration before the token is automatically deleted (e.g. 1s, 2m, 3h). If set to '0', the token will never expire (default 24h0m0s)
      --upload-certs                         Upload control-plane certificates to the kubeadm-certs Secret.

Global Flags:
      --add-dir-header                       If true, adds the file directory to the header of the log messages
      --log-file string                      If non-empty, use this log file
      --log-file-max-size uint               Defines the maximum size a log file can grow to. Unit is megabytes. If the value is 0, the maximum file size is unlimited. (default 1800)
      --rootfs string                        [EXPERIMENTAL] The path to the 'real' host root filesystem.
      --skip-headers                         If true, avoid header prefixes in the log messages
      --skip-log-headers                     If true, avoid headers when opening log files
  -v, --v Level                              number for the log level verbosity

Use "kubeadm init [command] --help" for more information about a command.

[root@k8s-master ~]# kubectl --help
kubectl controls the Kubernetes cluster manager.

 Find more information at: https://kubernetes.io/docs/reference/kubectl/overview/

Basic Commands (Beginner):
  create        Create a resource from a file or from stdin.
  expose        Take a replication controller, service, deployment or pod and expose it as a new Kubernetes Service
  run           Run a particular image on the cluster
  set           Set specific features on objects

Basic Commands (Intermediate):
  explain       Documentation of resources
  get           Display one or many resources
  edit          Edit a resource on the server
  delete        Delete resources by filenames, stdin, resources and names, or by resources and label selector

Deploy Commands:
  rollout       Manage the rollout of a resource
  scale         Set a new size for a Deployment, ReplicaSet or Replication Controller
  autoscale     Auto-scale a Deployment, ReplicaSet, or ReplicationController

Cluster Management Commands:
  certificate   Modify certificate resources.
  cluster-info  Display cluster info
  top           Display Resource (CPU/Memory/Storage) usage.
  cordon        Mark node as unschedulable
  uncordon      Mark node as schedulable
  drain         Drain node in preparation for maintenance
  taint         Update the taints on one or more nodes

Troubleshooting and Debugging Commands:
  describe      Show details of a specific resource or group of resources
  logs          Print the logs for a container in a pod
  attach        Attach to a running container
  exec          Execute a command in a container
  port-forward  Forward one or more local ports to a pod
  proxy         Run a proxy to the Kubernetes API server
  cp            Copy files and directories to and from containers.
  auth          Inspect authorization

Advanced Commands:
  diff          Diff live version against would-be applied version
  apply         Apply a configuration to a resource by filename or stdin
  patch         Update field(s) of a resource using strategic merge patch
  replace       Replace a resource by filename or stdin
  wait          Experimental: Wait for a specific condition on one or many resources.
  convert       Convert config files between different API versions
  kustomize     Build a kustomization target from a directory or a remote url.

Settings Commands:
  label         Update the labels on a resource
  annotate      Update the annotations on a resource
  completion    Output shell completion code for the specified shell (bash or zsh)

Other Commands:
  alpha         Commands for features in alpha
  api-resources Print the supported API resources on the server
  api-versions  Print the supported API versions on the server, in the form of "group/version"
  config        Modify kubeconfig files
  plugin        Provides utilities for interacting with plugins.
  version       Print the client and server version information

Usage:
  kubectl [flags] [options]

Use "kubectl <command> --help" for more information about a given command.
Use "kubectl options" for a list of global command-line options (applies to all commands).

[root@k8s-master ~]# kubectl api-versions
admissionregistration.k8s.io/v1
admissionregistration.k8s.io/v1beta1
apiextensions.k8s.io/v1
apiextensions.k8s.io/v1beta1
apiregistration.k8s.io/v1
apiregistration.k8s.io/v1beta1
apps/v1
authentication.k8s.io/v1
authentication.k8s.io/v1beta1
authorization.k8s.io/v1
authorization.k8s.io/v1beta1
autoscaling/v1
autoscaling/v2beta1
autoscaling/v2beta2
batch/v1
batch/v1beta1
certificates.k8s.io/v1
certificates.k8s.io/v1beta1
coordination.k8s.io/v1
coordination.k8s.io/v1beta1
discovery.k8s.io/v1beta1
events.k8s.io/v1
events.k8s.io/v1beta1
extensions/v1beta1
networking.k8s.io/v1
networking.k8s.io/v1beta1
node.k8s.io/v1beta1
policy/v1beta1
rbac.authorization.k8s.io/v1
rbac.authorization.k8s.io/v1beta1
scheduling.k8s.io/v1
scheduling.k8s.io/v1beta1
storage.k8s.io/v1
storage.k8s.io/v1beta1
v1

[root@k8s-master ~]# kubectl api-resources
NAME                             SHORTNAMES  APIGROUP                      NAMESPACED  KIND
bindings                                                                   true        Binding
componentstatuses                cs                                        false       ComponentStatus
configmaps                       cm                                        true        ConfigMap
endpoints                        ep                                        true        Endpoints
events                           ev                                        true        Event
limitranges                      limits                                    true        LimitRange
namespaces                       ns                                        false       Namespace
nodes                            no                                        false       Node
persistentvolumeclaims           pvc                                       true        PersistentVolumeClaim
persistentvolumes                pv                                        false       PersistentVolume
pods                             po                                        true        Pod
podtemplates                                                               true        PodTemplate
replicationcontrollers           rc                                        true        ReplicationController
resourcequotas                   quota                                     true        ResourceQuota
secrets                                                                    true        Secret
serviceaccounts                  sa                                        true        ServiceAccount
services                         svc                                       true        Service
mutatingwebhookconfigurations                admissionregistration.k8s.io  false       MutatingWebhookConfiguration
validatingwebhookconfigurations              admissionregistration.k8s.io  false       ValidatingWebhookConfiguration
customresourcedefinitions        crd,crds    apiextensions.k8s.io          false       CustomResourceDefinition
apiservices                                  apiregistration.k8s.io        false       APIService
controllerrevisions                          apps                          true        ControllerRevision
daemonsets                       ds          apps                          true        DaemonSet
deployments                      deploy      apps                          true        Deployment
replicasets                      rs          apps                          true        ReplicaSet
statefulsets                     sts         apps                          true        StatefulSet
tokenreviews                                 authentication.k8s.io         false       TokenReview
localsubjectaccessreviews                    authorization.k8s.io          true        LocalSubjectAccessReview
selfsubjectaccessreviews                     authorization.k8s.io          false       SelfSubjectAccessReview
selfsubjectrulesreviews                      authorization.k8s.io          false       SelfSubjectRulesReview
subjectaccessreviews                         authorization.k8s.io          false       SubjectAccessReview
horizontalpodautoscalers         hpa         autoscaling                   true        HorizontalPodAutoscaler
cronjobs                         cj          batch                         true        CronJob
jobs                                         batch                         true        Job
certificatesigningrequests       csr         certificates.k8s.io           false       CertificateSigningRequest
leases                                       coordination.k8s.io           true        Lease
endpointslices                               discovery.k8s.io              true        EndpointSlice
events                           ev          events.k8s.io                 true        Event
ingresses                        ing         extensions                    true        Ingress
ingressclasses                               networking.k8s.io             false       IngressClass
ingresses                        ing         networking.k8s.io             true        Ingress
networkpolicies                  netpol      networking.k8s.io             true        NetworkPolicy
runtimeclasses                               node.k8s.io                   false       RuntimeClass
poddisruptionbudgets             pdb         policy                        true        PodDisruptionBudget
podsecuritypolicies              psp         policy                        false       PodSecurityPolicy
clusterrolebindings                          rbac.authorization.k8s.io     false       ClusterRoleBinding
clusterroles                                 rbac.authorization.k8s.io     false       ClusterRole
rolebindings                                 rbac.authorization.k8s.io     true        RoleBinding
roles                                        rbac.authorization.k8s.io     true        Role
priorityclasses                  pc          scheduling.k8s.io             false       PriorityClass
csidrivers                                   storage.k8s.io                false       CSIDriver
csinodes                                     storage.k8s.io                false       CSINode
storageclasses                   sc          storage.k8s.io                false       StorageClass
volumeattachments                            storage.k8s.io                false       VolumeAttachment

[root@k8s-master ~]# kubectl describe node node01.kunking.com
[root@k8s-master ~]# kubectl get pod mynginx-app-bddc44777-9smtc -o yaml

9. Introduction to Using Kubernetes

9.1 Host Networking

[root@k8s-node31 ~]# ip a

2: ens32: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:50:56:2e:b5:db brd ff:ff:ff:ff:ff:ff

inet 192.168.6.31/24 brd 192.168.6.255 scope global noprefixroute ens32

valid_lft forever preferred_lft forever

inet6 fe80::250:56ff:fe2e:b5db/64 scope link

valid_lft forever preferred_lft forever

4: flannel.1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue state UNKNOWN group default

link/ether 16:ca:ee:95:ea:7a brd ff:ff:ff:ff:ff:ff

inet 10.244.1.0/32 brd 10.244.1.0 scope global flannel.1

valid_lft forever preferred_lft forever

inet6 fe80::14ca:eeff:fe95:ea7a/64 scope link

valid_lft forever preferred_lft forever

5: cni0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue state UP group default qlen 1000

link/ether 7a:de:e5:1f:bf:de brd ff:ff:ff:ff:ff:ff

inet 10.244.1.1/24 brd 10.244.1.255 scope global cni0

valid_lft forever preferred_lft forever

inet6 fe80::78de:e5ff:fe1f:bfde/64 scope link

valid_lft forever preferred_lft forever
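In this output, flannel.1 is flannel's VXLAN device, and cni0 is the bridge that the node's Pods attach to. The cni0 address 10.244.1.1/24 shows that this node was given the 10.244.1.0/24 slice of the 10.244.0.0/16 pod network. The sketch below extracts these addresses from `ip a` output and uses a hypothetical function name. From the cluster side, `kubectl get node <name> -o jsonpath='{.spec.podCIDR}'` reports the same per-node subnet.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `ip a` output on stdin and print
# "<interface> <address/prefix>" for flannel's interfaces (flannel.1 and cni0).
pod_net_addrs() {
  awk '
    # Interface header lines look like "5: cni0: <BROADCAST,...>"
    /^[0-9]+: / { iface = $2; sub(/:$/, "", iface) }
    # IPv4 lines look like "inet 10.244.1.1/24 brd ... scope global cni0"
    $1 == "inet" && (iface == "cni0" || iface == "flannel.1") { print iface, $2 }
  '
}

# On a node:
#   ip a | pod_net_addrs
```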

9.2 Resources

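- RESTful
  - GET, PUT, DELETE, POST, ...
  - kubectl run, get, edit, ...
- Resources: objects
  - Workloads: Pod, ReplicaSet, Deployment, StatefulSet, DaemonSet, Job, CronJob, ...
  - Service discovery and load balancing: Service, Ingress, ... (service_ip:service_port --> pod_ip:pod_port)
  - Configuration and storage: Volume, CSI
    - ConfigMap, Secret
    - DownwardAPI
  - Cluster-level resources
    - Namespace, Node, ClusterRole, ClusterRoleBinding (the related Role and RoleBinding are namespaced, as the api-resources output above shows)
  - Metadata resources
    - HPA, PodTemplate, LimitRange
- Ways to create resources
  - The apiserver works with resource definitions in JSON format (it also accepts YAML and protobuf).
  - Configuration manifests are usually written in YAML; kubectl converts them to JSON before submitting them to the apiserver.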
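Below is a minimal YAML manifest with the usual top-level fields: apiVersion, kind, metadata and spec. It is a sketch: the Pod name `myapp` and the image `nginx:1.19` are placeholders, and the function name is hypothetical. `kubectl create --dry-run=client -o json` prints the JSON form of the same object without creating it.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write a minimal Pod manifest (placeholder name and image).
# status is filled in by the cluster and is never written by hand.
write_pod_manifest() {
  local out="$1" name="${2:-myapp}" image="${3:-nginx:1.19}"
  cat >"$out" <<EOF
apiVersion: v1
kind: Pod
metadata:
  name: ${name}
  namespace: default
  labels:
    app: ${name}
spec:
  containers:
  - name: ${name}
    image: ${image}
    ports:
    - containerPort: 80
EOF
}

# On a machine with kubectl configured:
#   write_pod_manifest pod.yaml
#   kubectl create -f pod.yaml --dry-run=client -o json   # JSON form, nothing created
#   kubectl apply -f pod.yaml
```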

9.3 Manifest Fields Shared by Most Resources

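- apiVersion: group/version
  - $ kubectl api-versions
- kind: the resource type
- metadata: object metadata
  - name
  - namespace
  - labels
  - annotations
  - Reference path of each resource: /apis/GROUP/VERSION/namespaces/NAMESPACE/TYPE/NAME (core-group resources such as Pods and Services use /api/v1/namespaces/NAMESPACE/TYPE/NAME)
- spec: the desired state
- status: the current state, maintained by the Kubernetes cluster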
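The REST path of an object depends on its API group. Core resources such as Pods and Services sit under `/api/v1`; all other groups sit under `/apis/GROUP/VERSION`. The sketch below builds the path and uses a hypothetical function name. `kubectl get --raw` can then fetch the object directly from the API server.

```shell
#!/usr/bin/env bash
# Hypothetical helper: build the REST path of a namespaced object.
# An empty group means the core group (served under /api/v1).
resource_path() {
  local group="$1" version="$2" namespace="$3" type="$4" name="$5"
  local prefix
  if [[ -z $group ]]; then
    prefix="/api/$version"
  else
    prefix="/apis/$group/$version"
  fi
  echo "$prefix/namespaces/$namespace/$type/$name"
}

# Fetch the CoreDNS Deployment straight from the API server:
#   kubectl get --raw "$(resource_path apps v1 kube-system deployments coredns)"
```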

[root@k8s-master ~]# kubectl explain service

KIND: Service

VERSION: v1

DESCRIPTION:

Service is a named abstraction of software service (for example, mysql)

consisting of local port (for example 3306) that the proxy listens on, and

the selector that determines which pods will answer requests sent through

the proxy.

FIELDS:

apiVersion <string>

APIVersion defines the versioned schema of this representation of an

object. Servers should convert recognized schemas to the latest internal

value, and may reject unrecognized values. More info:

https://git.k8s.io/community/contributors/devel/sig-architecture/api-conventions.md#resources

kind <string>

Kind is a string value representing the REST resource this object

represents. Servers may infer this from the endpoint the client submits

requests to. Cannot be updated. In CamelCase. More info:

https://git.k8s.io/community/contributors/devel/sig-architecture/api-conventions.md#types-kinds

metadata <Object>

Standard object's metadata. More info:

https://git.k8s.io/community/contributors/devel/sig-architecture/api-conventions.md#metadata

spec <Object>

Spec defines the behavior of a service.

https://git.k8s.io/community/contributors/devel/sig-architecture/api-conventions.md#spec-and-status

status <Object>

Most recently observed status of the service. Populated by the system.

Read-only. More info:

https://git.k8s.io/community/contributors/devel/sig-architecture/api-conventions.md#spec-and-status

[root@k8s-master ~]# kubectl explain pods

[root@k8s-master ~]# kubectl explain pods.metadata

[root@k8s-master ~]# kubectl explain pods.spec.containers.ports