PyTorch Tutorial: Transfer Learning

Source: the official PyTorch Transfer Learning tutorial

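In his Deeplearning.ai course, Andrew Ng explains that transfer learning from task A to task B makes sense when A and B take the same kind of input, when there is much less data for B than for A, and when the low-level features learned on A are useful for B. As a rule of thumb, if the dataset for task B is small, it may be enough to retrain only the weights of the last layer; with enough data, all layers of the network can be retrained. When all parameters are retrained, the initial training on task A is called pre-training, because its weights are used to initialize the network, and the subsequent weight updates on task B are called fine-tuning. Fine-tuning comes in two flavors, global and partial. Global fine-tuning updates all weights, starting from the pretrained values. Partial fine-tuning freezes some layers, for example the convolutional layers, uses them as a fixed feature extractor, and updates only the last one or two fully connected layers. These are also the two ways of doing transfer learning.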

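The two approaches are discussed in turn below.

Problem: binary classification of ants versus bees, starting from a pretrained resnet18.

I. Global fine-tuning

1. Load Data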

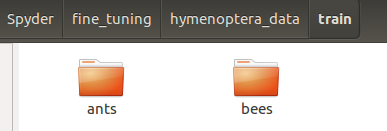

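Loading is done with torchvision.datasets.ImageFolder, which expects all images of a class in their own subfolder, with the folder name serving as the class name. The data is organized into a training set and a validation set, held in dictionaries keyed by 'train' and 'val'.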
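As a rough illustration of this folder layout, the standard-library sketch below builds a throwaway directory tree and derives class names the way ImageFolder does, by sorting the subfolder names and numbering them. The `find_classes` and `build_demo_tree` helpers and the file names are made up for this example; this is not the torchvision implementation.

```python
import os
import tempfile


def find_classes(root):
    """Hypothetical helper: map each subfolder of `root` to a class index."""
    classes = sorted(d for d in os.listdir(root)
                     if os.path.isdir(os.path.join(root, d)))
    class_to_idx = {name: i for i, name in enumerate(classes)}
    return classes, class_to_idx


def build_demo_tree(root):
    """Create root/train/{ants,bees}/ with empty placeholder files."""
    for cls, files in {'bees': ['b1.jpg'], 'ants': ['a1.jpg', 'a2.jpg']}.items():
        folder = os.path.join(root, 'train', cls)
        os.makedirs(folder)
        for fname in files:
            open(os.path.join(folder, fname), 'w').close()


with tempfile.TemporaryDirectory() as tmp:
    build_demo_tree(tmp)
    classes, class_to_idx = find_classes(os.path.join(tmp, 'train'))
    print(classes)
    print(class_to_idx)
```

The index assigned to each class depends only on the alphabetical order of the folder names, not on the order in which the folders were created.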


    import copy
    import os
    import time

    import matplotlib.pyplot as plt
    import numpy as np
    import torch
    import torch.nn as nn
    import torch.optim as optim
    import torchvision
    from torch.optim import lr_scheduler
    from torchvision import datasets, models, transforms

    data_transforms = {
        'train': transforms.Compose([
            transforms.RandomResizedCrop(224),   # random crop, resized to 224 x 224
            transforms.RandomHorizontalFlip(),   # flip the PIL image horizontally with probability 0.5
            # Convert a PIL image or an (H, W, C) numpy.ndarray with values in [0, 255]
            # into a FloatTensor of shape (C, H, W) with values in [0.0, 1.0]
            transforms.ToTensor(),
            transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])
        ]),
        'val': transforms.Compose([
            transforms.Resize(256),
            transforms.CenterCrop(224),
            transforms.ToTensor(),
            transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])
        ]),
    }

    data_dir = 'hymenoptera_data'
    image_datasets = {x: datasets.ImageFolder(os.path.join(data_dir, x),
                                              data_transforms[x])  # transforms are applied on load
                      for x in ['train', 'val']}
    dataloaders = {x: torch.utils.data.DataLoader(image_datasets[x], batch_size=4,
                                                  shuffle=True, num_workers=4)
                   for x in ['train', 'val']}

    dataset_sizes = {x: len(image_datasets[x]) for x in ['train', 'val']}  # number of training / validation samples
    class_names = image_datasets['train'].classes  # class names (the subfolder names)

    use_gpu = torch.cuda.is_available()  # check whether CUDA is available

2. Visualize a few images

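Visualize one batch of data using torchvision.utils.make_grid.

The input to make_grid is still a Tensor laid out as (C, H, W), while imshow expects (H, W, C), so the array has to be transposed before display. The normalization is then undone by multiplying by the standard deviation and adding the mean. Note that the preprocessing step (subtract the mean, divide by the standard deviation) uses the fixed per-channel values passed to transforms.Normalize and is applied to each image individually; the statistics are not computed from the batch or from the whole dataset.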

    def imshow(inp, title=None):
        """Imshow for Tensor."""
        inp = inp.numpy().transpose((1, 2, 0))  # (C, H, W) -> (H, W, C)
        mean = np.array([0.485, 0.456, 0.406])
        std = np.array([0.229, 0.224, 0.225])
        inp = std * inp + mean                  # undo the normalization
        inp = np.clip(inp, 0, 1)
        plt.imshow(inp)
        if title is not None:
            plt.title(title)
        plt.pause(0.001)  # pause a bit so that plots are updated


    # Get a batch of training data
    inputs, classes = next(iter(dataloaders['train']))  # take a single batch

    # Make a grid from batch
    out = torchvision.utils.make_grid(inputs)  # the input is still a Tensor here

    imshow(out, title=[class_names[x] for x in classes])
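The transpose and the inverse of the normalization can be checked with NumPy alone. The sketch below uses a small random array in place of a real image (an assumption made to keep it self-contained), applies the same per-channel mean and standard deviation in the forward direction, and then reverses the steps the way imshow does.

```python
import numpy as np

mean = np.array([0.485, 0.456, 0.406])
std = np.array([0.229, 0.224, 0.225])

rng = np.random.default_rng(0)
image_hwc = rng.random((4, 5, 3))  # fake image, (H, W, C), values in [0, 1)

# Forward direction: channels first, then per-channel normalization
image_chw = image_hwc.transpose((2, 0, 1))                        # (H, W, C) -> (C, H, W)
normalized = (image_chw - mean[:, None, None]) / std[:, None, None]

# Reverse direction, following imshow
restored = normalized.transpose((1, 2, 0))                        # (C, H, W) -> (H, W, C)
restored = std * restored + mean                                  # broadcasts over the channel axis

print(normalized.shape, restored.shape)
print(np.allclose(restored, image_hwc))
```

With channels last, `std * restored + mean` broadcasts over the final axis, which is why imshow transposes before undoing the normalization.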

3. Training the model

The main operations here are scheduling the learning rate and saving the best model.

First, how the scheduler is used.

Besides the usual form of the optim module, with a single parameter group:

    optim.SGD(model.parameters(), lr=1e-2, momentum=.9)

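a learning rate can also be specified for individual layers by passing several parameter groups. The following uses two groups:

    optim.SGD([
        {'params': model.base.parameters()},                    # uses the default lr=1e-2
        {'params': model.classifier.parameters(), 'lr': 1e-3}   # overrides the default
    ], lr=1e-2, momentum=0.9)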
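To see what the optimizer actually stores for each group, here is a minimal sketch with a toy model. `TinyNet` and its layer sizes are hypothetical stand-ins chosen only so that the model has a `base` and a `classifier` attribute, as in the snippet above.

```python
import torch.nn as nn
import torch.optim as optim


class TinyNet(nn.Module):
    """Hypothetical stand-in with a `base` and a `classifier` submodule."""

    def __init__(self):
        super().__init__()
        self.base = nn.Linear(8, 4)
        self.classifier = nn.Linear(4, 2)

    def forward(self, x):
        return self.classifier(self.base(x))


model = TinyNet()
optimizer = optim.SGD([
    {'params': model.base.parameters()},                    # falls back to lr=1e-2
    {'params': model.classifier.parameters(), 'lr': 1e-3},  # group-specific lr
], lr=1e-2, momentum=0.9)

# Each entry of param_groups is a dict holding the options for that group
group_lrs = [group['lr'] for group in optimizer.param_groups]
group_momenta = [group['momentum'] for group in optimizer.param_groups]
print(group_lrs)
print(group_momenta)
```

Options given as keyword arguments act as defaults; a group only needs to list the values it wants to override.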

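How can the learning rates of several parameter groups be adjusted further during training? This is what torch.optim.lr_scheduler is for: it provides several methods for adjusting the learning rate based on the number of epochs. Some optimizers also come with a built-in learning-rate decay parameter, lr_decay, for example:

    class torch.optim.Adagrad(params, lr=0.01, lr_decay=0, weight_decay=0)

First: class torch.optim.lr_scheduler.LambdaLR(optimizer, lr_lambda, last_epoch=-1)

Here optimizer is the wrapped optimizer, and lr_lambda is the function that manipulates the learning rate (or a list of such functions, one per parameter group). The learning rate of each parameter group is set to its initial lr multiplied by the value of the given function. When last_epoch=-1, the schedule starts from the initial lr.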

    >>> # Assuming optimizer has two groups, hence two functions.
    >>> lambda1 = lambda epoch: epoch // 30     # 0 for epoch < 30, then 1, 2, ...
    >>> lambda2 = lambda epoch: 0.95 ** epoch
    >>> # The value returned by each lambda is the factor multiplied with the initial lr
    >>> scheduler = LambdaLR(optimizer, lr_lambda=[lambda1, lambda2])
    >>> for epoch in range(100):
    >>>     train(...)
    >>>     validate(...)
    >>>     scheduler.step()  # since PyTorch 1.1.0, called after the epoch's optimizer updates
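A small runnable variant may make the per-group behavior easier to see. The two dummy parameters, the initial learning rates, and the two lambdas below are arbitrary choices for illustration; `optimizer.step()` stands in for a whole training epoch.

```python
import torch
from torch.optim import SGD
from torch.optim.lr_scheduler import LambdaLR

# Two dummy parameters, one per parameter group (illustrative only)
w1 = torch.nn.Parameter(torch.zeros(1))
w2 = torch.nn.Parameter(torch.zeros(1))
optimizer = SGD([{'params': [w1], 'lr': 0.1},
                 {'params': [w2], 'lr': 0.01}], lr=0.1)

# One multiplicative factor function per parameter group
scheduler = LambdaLR(optimizer, lr_lambda=[lambda epoch: 0.5 ** epoch,
                                           lambda epoch: 1.0 / (1 + epoch)])

history = []
for epoch in range(3):
    history.append(list(scheduler.get_last_lr()))  # learning rates in effect this epoch
    optimizer.step()                               # stands in for a full training epoch
    scheduler.step()

print(history)
```

Each group's learning rate is always its own initial value times the factor for the current epoch, so the two groups can follow entirely different schedules.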

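Next: torch.optim.lr_scheduler.StepLR(optimizer, step_size, gamma=0.1, last_epoch=-1)

Here optimizer is the wrapped optimizer, step_size (int) is the decay period, i.e. the number of epochs between two decays, and gamma is the multiplicative decay factor (default 0.1). When last_epoch=-1, the schedule starts from the initial lr.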

    >>> # Assuming optimizer uses lr = 0.05 for all groups
    >>> # lr = 0.05     if epoch < 30
    >>> # lr = 0.005    if 30 <= epoch < 60   (multiplied by gamma=0.1 every 30 epochs)
    >>> # lr = 0.0005   if 60 <= epoch < 90
    >>> # ...
    >>> scheduler = StepLR(optimizer, step_size=30, gamma=0.1)
    >>> for epoch in range(100):
    >>>     train(...)
    >>>     validate(...)
    >>>     scheduler.step()
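The step schedule can also be written in closed form, which makes the numbers easy to verify by hand. The `step_lr` function below is a hypothetical helper in plain Python, not part of PyTorch; it assumes the scheduler is stepped exactly once per epoch.

```python
def step_lr(initial_lr, epoch, step_size=30, gamma=0.1):
    """Learning rate of a step schedule at a given epoch (closed form)."""
    return initial_lr * gamma ** (epoch // step_size)


# Starting from lr = 0.05 with step_size=30 and gamma=0.1
for epoch in (0, 29, 30, 59, 60, 89, 90):
    print(epoch, step_lr(0.05, epoch))
```

The same formula applies to the setting used later in this tutorial, step_size=7 and gamma=0.1 with an initial lr of 0.001: the rate stays at 0.001 for epochs 0 to 6 and drops by a factor of ten at epoch 7.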

With that in place, here is the training code:

    def train_model(model, criterion, optimizer, scheduler, num_epochs=25):
        since = time.time()
        device = next(model.parameters()).device  # run on whatever device the model lives on

        # Deep-copy the current weights; replaced whenever a better model turns up
        best_model_wts = copy.deepcopy(model.state_dict())
        best_acc = 0.0  # best validation accuracy so far

        for epoch in range(num_epochs):
            print('Epoch {}/{}'.format(epoch, num_epochs - 1))
            print('-' * 10)

            # Each epoch has a training and validation phase
            for phase in ['train', 'val']:
                if phase == 'train':
                    model.train()  # Set model to training mode
                else:
                    model.eval()   # Set model to evaluate mode

                running_loss = 0.0
                running_corrects = 0

                # Iterate over data.
                for inputs, labels in dataloaders[phase]:
                    inputs = inputs.to(device)
                    labels = labels.to(device)

                    # zero the parameter gradients
                    optimizer.zero_grad()

                    # forward; track gradients only in the training phase
                    with torch.set_grad_enabled(phase == 'train'):
                        outputs = model(inputs)
                        _, preds = torch.max(outputs, 1)
                        loss = criterion(outputs, labels)

                        # backward + optimize only if in training phase
                        if phase == 'train':
                            loss.backward()
                            optimizer.step()

                    # statistics
                    running_loss += loss.item() * inputs.size(0)
                    running_corrects += torch.sum(preds == labels).item()

                if phase == 'train':
                    scheduler.step()  # advance the LR schedule once per epoch, after the optimizer updates

                epoch_loss = running_loss / dataset_sizes[phase]
                epoch_acc = running_corrects / dataset_sizes[phase]

                print('{} Loss: {:.4f} Acc: {:.4f}'.format(
                    phase, epoch_loss, epoch_acc))

                # deep copy the model: keep it if validation accuracy improved
                if phase == 'val' and epoch_acc > best_acc:
                    best_acc = epoch_acc
                    best_model_wts = copy.deepcopy(model.state_dict())

            print()

        time_elapsed = time.time() - since
        print('Training complete in {:.0f}m {:.0f}s'.format(
            time_elapsed // 60, time_elapsed % 60))
        print('Best val Acc: {:4f}'.format(best_acc))

        # load best model weights
        model.load_state_dict(best_model_wts)
        return model
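The training loop copies the weights with copy.deepcopy rather than just keeping a reference to model.state_dict(). The sketch below, on a tiny nn.Linear chosen only for illustration, suggests why: the tensors in a state dict share storage with the live parameters, so without a deep copy the "best" weights would silently follow later updates.

```python
import copy

import torch
import torch.nn as nn

model = nn.Linear(2, 1)

alias = model.state_dict()                    # tensors share storage with the live parameters
snapshot = copy.deepcopy(model.state_dict())  # independent copy of the current weights

# Stand-in for further training: change the weights in place
with torch.no_grad():
    model.weight.add_(1.0)

alias_follows = torch.equal(alias['weight'], model.weight)
snapshot_kept = not torch.equal(snapshot['weight'], model.weight)
print(alias_follows, snapshot_kept)

# Restoring the snapshot brings back the earlier weights
model.load_state_dict(snapshot)
restored = torch.equal(model.weight, snapshot['weight'])
print(restored)
```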

4. Finetuning the convnet

    # ResNet-18 with pretrained weights
    # (torchvision >= 0.13 prefers the weights= argument over pretrained=True)
    model_ft = models.resnet18(pretrained=True)
    num_ftrs = model_ft.fc.in_features  # input size of the last fully connected layer, 512 here
    # Replace the last fully connected layer, (512, 1000), with (512, 2):
    # the original network was trained on the 1000-class ImageNet dataset
    model_ft.fc = nn.Linear(num_ftrs, 2)

    if use_gpu:
        model_ft = model_ft.cuda()  # move the network's parameters to the GPU as well

    criterion = nn.CrossEntropyLoss()  # multi-class cross-entropy

    # Observe that all parameters are being optimized
    optimizer_ft = optim.SGD(model_ft.parameters(), lr=0.001, momentum=0.9)  # single parameter group

    # Decay LR by a factor of 0.1 every 7 epochs
    exp_lr_scheduler = lr_scheduler.StepLR(optimizer_ft, step_size=7, gamma=0.1)

    model_ft = train_model(model_ft, criterion, optimizer_ft, exp_lr_scheduler,
                           num_epochs=25)

The pretrained model_ft is an ordinary model, so its structure and parameters can be inspected:

    print(model_ft)  # show the network structure
    for name, para in model_ft.named_parameters():  # show parameter names and shapes
        print(name, ':', para.size())

5. Visualizing the model predictions

Visualize the predictions:

    def visualize_model(model, num_images=6):
        was_training = model.training  # remember whether the model was in training mode
        model.eval()                   # switch to evaluation mode
        device = next(model.parameters()).device
        images_so_far = 0
        fig = plt.figure()

        with torch.no_grad():  # no gradients are needed for inference
            for i, (inputs, labels) in enumerate(dataloaders['val']):
                inputs = inputs.to(device)
                labels = labels.to(device)

                outputs = model(inputs)
                _, preds = torch.max(outputs, 1)

                for j in range(inputs.size()[0]):
                    images_so_far += 1
                    ax = plt.subplot(num_images // 2, 2, images_so_far)
                    ax.axis('off')
                    ax.set_title('predicted: {}'.format(class_names[preds[j]]))
                    imshow(inputs.cpu().data[j])

                    if images_so_far == num_images:
                        model.train(mode=was_training)
                        return
            model.train(mode=was_training)
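Both the training loop and the visualization turn network outputs into predictions by taking torch.max over dimension 1. The sketch below walks through that step on made-up logits for a batch of four samples; the `class_names` list is hard-coded here as an assumption, whereas the tutorial reads it from the dataset.

```python
import torch

class_names = ['ants', 'bees']  # assumed order, for illustration

# Made-up logits for a batch of 4 samples and 2 classes
outputs = torch.tensor([[2.0, -1.0],
                        [0.1, 0.3],
                        [-0.5, 1.5],
                        [1.2, 0.7]])
labels = torch.tensor([0, 1, 0, 0])

values, preds = torch.max(outputs, 1)         # max value and its index along the class dimension
corrects = torch.sum(preds == labels).item()  # number of correct predictions, as a Python int
accuracy = corrects / labels.size(0)
names = [class_names[p] for p in preds.tolist()]

print(preds.tolist(), names)
print(corrects, accuracy)
```

Only the indices are needed for classification; the maximum values themselves are discarded, which is why the tutorial code assigns them to `_`.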

II. Partial fine-tuning

ConvNet as fixed feature extractor

Freeze all the parameters of the convolutional layers and train only the fully connected layer.

    model_conv = torchvision.models.resnet18(pretrained=True)
    for param in model_conv.parameters():
        param.requires_grad = False  # turn off gradient computation for every existing parameter

    # Parameters of newly constructed modules have requires_grad=True by default
    num_ftrs = model_conv.fc.in_features
    model_conv.fc = nn.Linear(num_ftrs, 2)  # replace the last fully connected layer

    if use_gpu:
        model_conv = model_conv.cuda()

    criterion = nn.CrossEntropyLoss()

    # Observe that only parameters of final layer are being optimized as
    # opposed to before.
    optimizer_conv = optim.SGD(model_conv.fc.parameters(), lr=0.001, momentum=0.9)

    # Decay LR by a factor of 0.1 every 7 epochs
    exp_lr_scheduler = lr_scheduler.StepLR(optimizer_conv, step_size=7, gamma=0.1)

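Note that the parameters of a newly constructed module have requires_grad=True by default, which is why the replaced fc layer remains trainable after the freeze.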
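One way to confirm which parameters will actually be trained is to list them by their requires_grad flag. The sketch below does this on a tiny stand-in network; `TinyBackboneNet` and its layer sizes are hypothetical and only mimic the "backbone plus fc head" shape of resnet18, so that no pretrained weights need to be downloaded.

```python
import torch.nn as nn
import torch.optim as optim


class TinyBackboneNet(nn.Module):
    """Hypothetical stand-in for a pretrained network with an `fc` head."""

    def __init__(self):
        super().__init__()
        self.backbone = nn.Linear(16, 8)
        self.fc = nn.Linear(8, 1000)

    def forward(self, x):
        return self.fc(self.backbone(x))


model = TinyBackboneNet()
for param in model.parameters():
    param.requires_grad = False                 # freeze everything that exists so far

model.fc = nn.Linear(model.fc.in_features, 2)   # new module: requires_grad=True by default

trainable = [name for name, p in model.named_parameters() if p.requires_grad]
frozen = [name for name, p in model.named_parameters() if not p.requires_grad]
print(trainable)
print(frozen)

# Only the head's parameters are handed to the optimizer
optimizer = optim.SGD(model.fc.parameters(), lr=0.001, momentum=0.9)
num_optimized = sum(p.numel() for g in optimizer.param_groups for p in g['params'])
print(num_optimized)
```

The order matters: freezing after the head has been replaced would freeze the new layer too.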

    model_conv = train_model(model_conv, criterion, optimizer_conv,
                             exp_lr_scheduler, num_epochs=25)

The training results turn out slightly better than with global fine-tuning.
