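Kubernetes Container Cluster Management Environment - Complete Deployment (Part 2)

This part continues from "Kubernetes Container Cluster Management Environment - Complete Deployment (Part 1)":

8. Deploy the master nodes

On the master nodes, kube-apiserver, kube-scheduler and kube-controller-manager all run as multiple instances:

• kube-scheduler and kube-controller-manager automatically elect one leader instance. The other instances stay blocked (standby). If the leader fails, a new leader is elected, which keeps the service available.

• kube-apiserver is stateless and is accessed through the kube-nginx proxy, which likewise keeps the service available.

All commands below are run on k8s-master01, which then distributes files and runs commands on the other nodes remotely.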

Download the server binaries (v1.14.2 in this guide):

[root@k8s-master01 ~]# cd /opt/k8s/work
[root@k8s-master01 work]# wget https://dl.k8s.io/v1.14.2/kubernetes-server-linux-amd64.tar.gz
[root@k8s-master01 work]# tar -xzvf kubernetes-server-linux-amd64.tar.gz
[root@k8s-master01 work]# cd kubernetes
[root@k8s-master01 kubernetes]# tar -xzvf kubernetes-src.tar.gz

Copy the binaries to all master nodes:

[root@k8s-master01 ~]# cd /opt/k8s/work
[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh
[root@k8s-master01 work]# for node_master_ip in ${NODE_MASTER_IPS[@]}
  do
    echo ">>> ${node_master_ip}"
    scp kubernetes/server/bin/{apiextensions-apiserver,cloud-controller-manager,kube-apiserver,kube-controller-manager,kube-proxy,kube-scheduler,kubeadm,kubectl,kubelet,mounter} root@${node_master_ip}:/opt/k8s/bin/
    ssh root@${node_master_ip} "chmod +x /opt/k8s/bin/*"
  done
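You may want to preview what the distribution loop will do before it touches any host. A dry-run variant helps with that. The bash sketch below is an assumption and not part of the original procedure: the `DRY_RUN` variable and the `run_or_echo` / `distribute_binaries` helpers are hypothetical names. With `DRY_RUN=1`, the scp/ssh commands are only printed.

```shell
# Hypothetical helper: print the command instead of running it when DRY_RUN=1.
run_or_echo() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Hypothetical helper: same steps as the loop above, one master IP per argument.
distribute_binaries() {
  local ip
  for ip in "$@"; do
    echo ">>> ${ip}"
    run_or_echo scp kubernetes/server/bin/{apiextensions-apiserver,cloud-controller-manager,kube-apiserver,kube-controller-manager,kube-proxy,kube-scheduler,kubeadm,kubectl,kubelet,mounter} "root@${ip}:/opt/k8s/bin/"
    run_or_echo ssh "root@${ip}" "chmod +x /opt/k8s/bin/*"
  done
}

# Usage (preview only): DRY_RUN=1 distribute_binaries "${NODE_MASTER_IPS[@]}"
```

Dropping `DRY_RUN=1` runs the same commands for real.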

8.1 - 部署高可用 kube-apiserver 集群

这里部署一个三实例kube-apiserver集群环境,它们通过nginx四层代理进行访问,对外提供一个统一的vip地址,从而保证服务可用性。下面部署命令均在k8s-master01节点上执行,然后远程分发文件和执行命令。

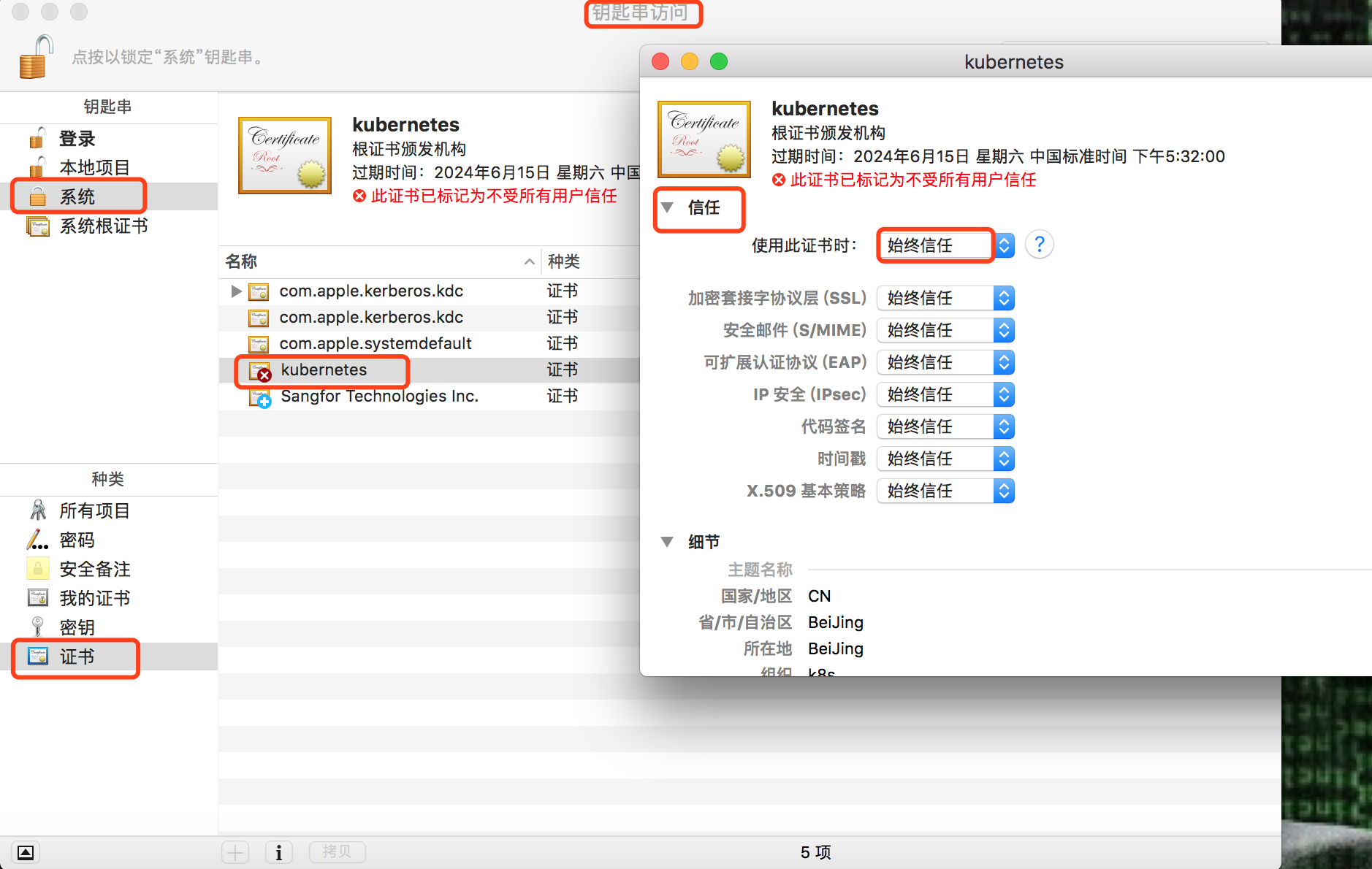

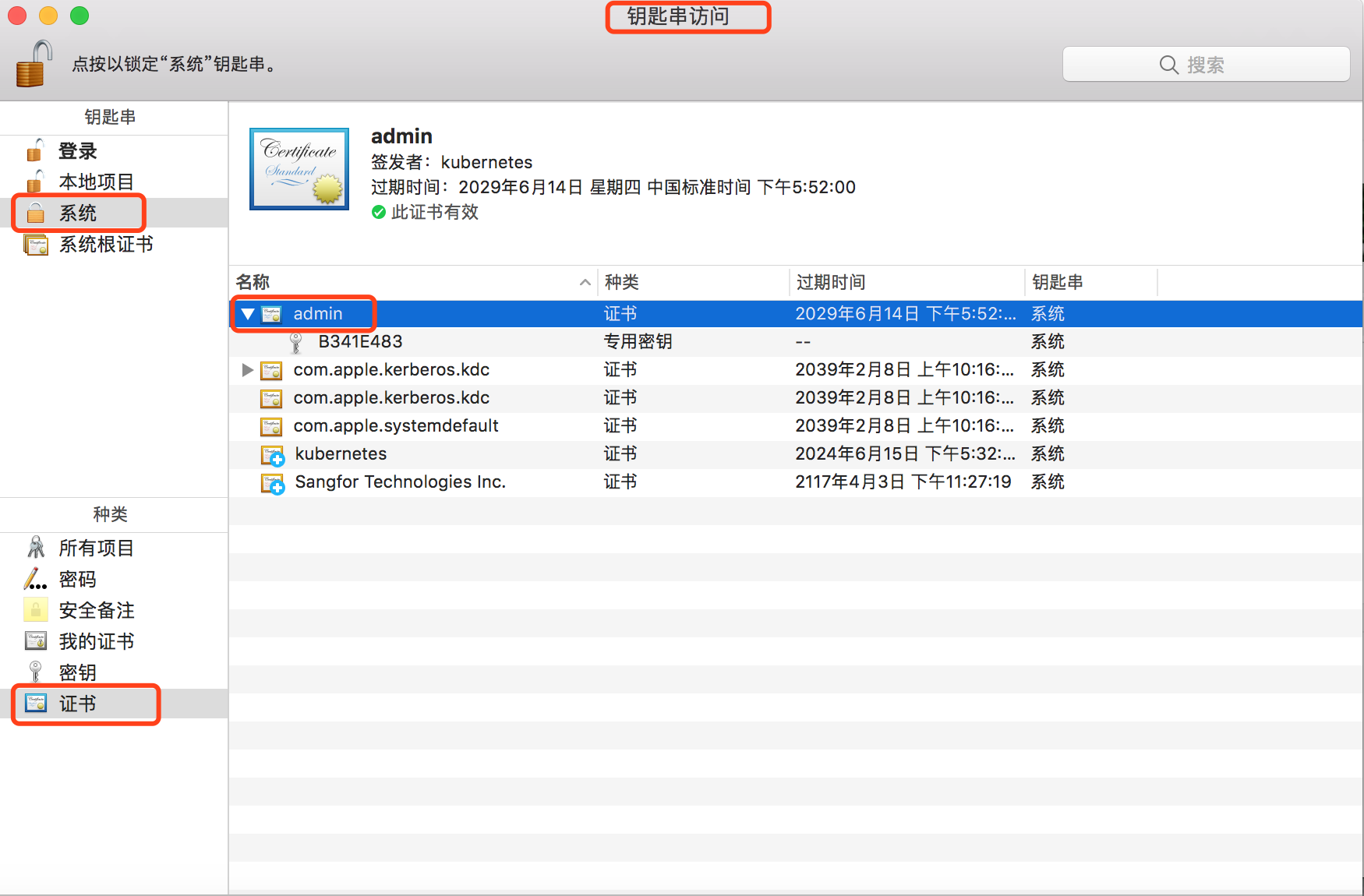

1) 创建 kubernetes 证书和私钥

创建证书签名请求:

[root@k8s-master01 ~]# cd /opt/k8s/work

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh

[root@k8s-master01 work]# cat > kubernetes-csr.json <<EOF

{

"CN": "kubernetes",

"hosts": [

"127.0.0.1",

"172.16.60.250",

"172.16.60.241",

"172.16.60.242",

"172.16.60.243",

"${CLUSTER_KUBERNETES_SVC_IP}",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "4Paradigm"

}

]

}

EOF

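Notes:

• The hosts field lists the IPs and domain names authorized to use this certificate. Here it contains the VIP, the apiserver node IPs, the kubernetes Service IP and the Service domain names.

• A domain name must not end with "." (for example, kubernetes.default.svc.cluster.local. is not allowed). Otherwise parsing fails with:

x509: cannot parse dnsName "kubernetes.default.svc.cluster.local."

• If you use a domain other than cluster.local, such as opsnull.com, change the last two domain names in the list to kubernetes.default.svc.opsnull and kubernetes.default.svc.opsnull.com.

• The kubernetes Service IP is created automatically by apiserver. It is normally the first IP of the range given by --service-cluster-ip-range. Once the cluster is running, you can retrieve it with:

[root@k8s-master01 work]# kubectl get svc kubernetes
The connection to the server 172.16.60.250:8443 was refused - did you specify the right host or port?

The error above is expected at this point because kube-apiserver has not been started yet. Once apiserver is running, the command returns the Service and its IP.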
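You can check the "first IP of the range" rule offline, before the cluster is up. The bash sketch below uses a hypothetical function name, `first_service_ip`. It clears the host bits of an IPv4 CIDR to get the network address and then adds one. The later `kubectl get all` output shows 10.254.0.1, which suggests that `SERVICE_CIDR` is 10.254.0.0/16. That is an inference, so check your environment.sh.

```shell
# Hypothetical helper: first host address of an IPv4 CIDR, i.e. the address
# kube-apiserver normally gives to the "kubernetes" Service.
first_service_ip() {
  local cidr="$1"
  local ip="${cidr%/*}" prefix="${cidr#*/}"
  local a b c d n mask
  IFS=. read -r a b c d <<< "$ip"
  n=$(( (a << 24) | (b << 16) | (c << 8) | d ))
  # Clear the host bits to get the network address, then add one.
  mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  n=$(( (n & mask) + 1 ))
  echo "$(( (n >> 24) & 255 )).$(( (n >> 16) & 255 )).$(( (n >> 8) & 255 )).$(( n & 255 ))"
}

# Usage: first_service_ip "${SERVICE_CIDR}"
```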

Generate the certificate and private key:

[root@k8s-master01 work]# cfssl gencert -ca=/opt/k8s/work/ca.pem \
  -ca-key=/opt/k8s/work/ca-key.pem \
  -config=/opt/k8s/work/ca-config.json \
  -profile=kubernetes kubernetes-csr.json | cfssljson -bare kubernetes
[root@k8s-master01 work]# ls kubernetes*pem
kubernetes-key.pem  kubernetes.pem

Copy the generated certificate and private key to all master nodes:

[root@k8s-master01 work]# cd /opt/k8s/work
[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh
[root@k8s-master01 work]# for node_master_ip in ${NODE_MASTER_IPS[@]}
  do
    echo ">>> ${node_master_ip}"
    ssh root@${node_master_ip} "mkdir -p /etc/kubernetes/cert"
    scp kubernetes*.pem root@${node_master_ip}:/etc/kubernetes/cert/
  done

2) Create the encryption configuration file

[root@k8s-master01 work]# cd /opt/k8s/work
[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh
[root@k8s-master01 work]# cat > encryption-config.yaml <<EOF
kind: EncryptionConfig
apiVersion: v1
resources:
  - resources:
      - secrets
    providers:
      - aescbc:
          keys:
            - name: key1
              secret: ${ENCRYPTION_KEY}
      - identity: {}
EOF
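`ENCRYPTION_KEY` comes from environment.sh in Part 1. If you need to regenerate or double-check it, use the bash sketch below. It follows the approach in the Kubernetes encryption-at-rest documentation (32 random bytes, base64-encoded) and checks that a key decodes to 32 bytes. The function names are hypothetical.

```shell
# Hypothetical helper: 32 random bytes, base64-encoded (a single 44-character line).
gen_encryption_key() {
  head -c 32 /dev/urandom | base64
}

# Hypothetical helper: succeed only if the base64 key decodes to 32 bytes.
check_encryption_key() {
  local len
  # $(( )) strips any padding spaces that some wc implementations print.
  len=$(( $(printf '%s' "$1" | base64 -d 2>/dev/null | wc -c) ))
  if [ "$len" -eq 32 ]; then
    echo "ok: 32-byte key"
  else
    echo "unexpected key length: ${len} bytes" >&2
    return 1
  fi
}

# Usage: source /opt/k8s/bin/environment.sh && check_encryption_key "${ENCRYPTION_KEY}"
```

If you change the key later, keep the old key listed after the new one until existing secrets have been rewritten. Otherwise the secrets already stored in etcd can no longer be decrypted.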

Copy the encryption configuration file to /etc/kubernetes on the master nodes:

[root@k8s-master01 work]# cd /opt/k8s/work
[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh
[root@k8s-master01 work]# for node_master_ip in ${NODE_MASTER_IPS[@]}
  do
    echo ">>> ${node_master_ip}"
    scp encryption-config.yaml root@${node_master_ip}:/etc/kubernetes/
  done

3) Create the audit policy file

[root@k8s-master01 work]# cd /opt/k8s/work
[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh
[root@k8s-master01 work]# cat > audit-policy.yaml <<EOF
apiVersion: audit.k8s.io/v1beta1
kind: Policy
rules:
  # The following requests were manually identified as high-volume and low-risk, so drop them.
  - level: None
    resources:
      - group: ""
        resources:
          - endpoints
          - services
          - services/status
    users:
      - 'system:kube-proxy'
    verbs:
      - watch
  - level: None
    resources:
      - group: ""
        resources:
          - nodes
          - nodes/status
    userGroups:
      - 'system:nodes'
    verbs:
      - get
  - level: None
    namespaces:
      - kube-system
    resources:
      - group: ""
        resources:
          - endpoints
    users:
      - 'system:kube-controller-manager'
      - 'system:kube-scheduler'
      - 'system:serviceaccount:kube-system:endpoint-controller'
    verbs:
      - get
      - update
  - level: None
    resources:
      - group: ""
        resources:
          - namespaces
          - namespaces/status
          - namespaces/finalize
    users:
      - 'system:apiserver'
    verbs:
      - get
  # Don't log HPA fetching metrics.
  - level: None
    resources:
      - group: metrics.k8s.io
    users:
      - 'system:kube-controller-manager'
    verbs:
      - get
      - list
  # Don't log these read-only URLs.
  - level: None
    nonResourceURLs:
      - '/healthz*'
      - /version
      - '/swagger*'
  # Don't log events requests.
  - level: None
    resources:
      - group: ""
        resources:
          - events
  # node and pod status calls from nodes are high-volume and can be large, don't log responses for expected updates from nodes
  - level: Request
    omitStages:
      - RequestReceived
    resources:
      - group: ""
        resources:
          - nodes/status
          - pods/status
    users:
      - kubelet
      - 'system:node-problem-detector'
      - 'system:serviceaccount:kube-system:node-problem-detector'
    verbs:
      - update
      - patch
  - level: Request
    omitStages:
      - RequestReceived
    resources:
      - group: ""
        resources:
          - nodes/status
          - pods/status
    userGroups:
      - 'system:nodes'
    verbs:
      - update
      - patch
  # deletecollection calls can be large, don't log responses for expected namespace deletions
  - level: Request
    omitStages:
      - RequestReceived
    users:
      - 'system:serviceaccount:kube-system:namespace-controller'
    verbs:
      - deletecollection
  # Secrets, ConfigMaps, and TokenReviews can contain sensitive & binary data,
  # so only log at the Metadata level.
  - level: Metadata
    omitStages:
      - RequestReceived
    resources:
      - group: ""
        resources:
          - secrets
          - configmaps
      - group: authentication.k8s.io
        resources:
          - tokenreviews
  # Get responses can be large; skip them.
  - level: Request
    omitStages:
      - RequestReceived
    resources:
      - group: ""
      - group: admissionregistration.k8s.io
      - group: apiextensions.k8s.io
      - group: apiregistration.k8s.io
      - group: apps
      - group: authentication.k8s.io
      - group: authorization.k8s.io
      - group: autoscaling
      - group: batch
      - group: certificates.k8s.io
      - group: extensions
      - group: metrics.k8s.io
      - group: networking.k8s.io
      - group: policy
      - group: rbac.authorization.k8s.io
      - group: scheduling.k8s.io
      - group: settings.k8s.io
      - group: storage.k8s.io
    verbs:
      - get
      - list
      - watch
  # Default level for known APIs
  - level: RequestResponse
    omitStages:
      - RequestReceived
    resources:
      - group: ""
      - group: admissionregistration.k8s.io
      - group: apiextensions.k8s.io
      - group: apiregistration.k8s.io
      - group: apps
      - group: authentication.k8s.io
      - group: authorization.k8s.io
      - group: autoscaling
      - group: batch
      - group: certificates.k8s.io
      - group: extensions
      - group: metrics.k8s.io
      - group: networking.k8s.io
      - group: policy
      - group: rbac.authorization.k8s.io
      - group: scheduling.k8s.io
      - group: settings.k8s.io
      - group: storage.k8s.io
  # Default level for all other requests.
  - level: Metadata
    omitStages:
      - RequestReceived
EOF

Distribute the audit policy file:

[root@k8s-master01 work]# cd /opt/k8s/work
[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh
[root@k8s-master01 work]# for node_master_ip in ${NODE_MASTER_IPS[@]}
  do
    echo ">>> ${node_master_ip}"
    scp audit-policy.yaml root@${node_master_ip}:/etc/kubernetes/audit-policy.yaml
  done

4) Create the certificate used later to access metrics-server

Create the certificate signing request:

[root@k8s-master01 work]# cat > proxy-client-csr.json <<EOF
{
  "CN": "aggregator",
  "hosts": [],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "ST": "BeiJing",
      "L": "BeiJing",
      "O": "k8s",
      "OU": "4Paradigm"
    }
  ]
}
EOF

The CN is aggregator. It must match metrics-server's --requestheader-allowed-names setting; otherwise metrics-server rejects the requests.
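Before generating the certificate, you can check the CN against the value you plan to give metrics-server. The bash sketch below is illustrative only:

• `csr_cn` and `cn_allowed` are hypothetical helpers.
• The CN is extracted with sed, assuming the "CN" key sits on its own line as in proxy-client-csr.json.
• An empty allowed list is treated as "any CN signed by the request-header CA", which is how the --requestheader-allowed-names flag is documented.

```shell
# Hypothetical helper: print the "CN" value from a cfssl CSR JSON file.
csr_cn() {
  sed -n 's/.*"CN"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p' "$1" | head -n 1
}

# Hypothetical helper: succeed if the CN ($1) is in the comma-separated list ($2).
cn_allowed() {
  local cn="$1" allowed="$2" name
  local -a names
  # An empty list accepts any CN signed by the request-header CA.
  [ -z "$allowed" ] && return 0
  IFS=, read -r -a names <<< "$allowed"
  for name in "${names[@]}"; do
    [ "$name" = "$cn" ] && return 0
  done
  return 1
}

# Usage: cn_allowed "$(csr_cn proxy-client-csr.json)" "aggregator" && echo "CN accepted"
```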

Generate the certificate and private key:

[root@k8s-master01 work]# cfssl gencert -ca=/etc/kubernetes/cert/ca.pem \
  -ca-key=/etc/kubernetes/cert/ca-key.pem \
  -config=/etc/kubernetes/cert/ca-config.json \
  -profile=kubernetes proxy-client-csr.json | cfssljson -bare proxy-client
[root@k8s-master01 work]# ls proxy-client*.pem
proxy-client-key.pem  proxy-client.pem

Copy the generated certificate and private key to all master nodes:

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh
[root@k8s-master01 work]# for node_master_ip in ${NODE_MASTER_IPS[@]}
  do
    echo ">>> ${node_master_ip}"
    scp proxy-client*.pem root@${node_master_ip}:/etc/kubernetes/cert/
  done

5) Create the kube-apiserver systemd unit template

[root@k8s-master01 work]# cd /opt/k8s/work
[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh
[root@k8s-master01 work]# cat > kube-apiserver.service.template <<EOF
[Unit]
Description=Kubernetes API Server
Documentation=https://github.com/GoogleCloudPlatform/kubernetes
After=network.target

[Service]
WorkingDirectory=${K8S_DIR}/kube-apiserver
ExecStart=/opt/k8s/bin/kube-apiserver \\
  --advertise-address=##NODE_MASTER_IP## \\
  --default-not-ready-toleration-seconds=360 \\
  --default-unreachable-toleration-seconds=360 \\
  --feature-gates=DynamicAuditing=true \\
  --max-mutating-requests-inflight=2000 \\
  --max-requests-inflight=4000 \\
  --default-watch-cache-size=200 \\
  --delete-collection-workers=2 \\
  --encryption-provider-config=/etc/kubernetes/encryption-config.yaml \\
  --etcd-cafile=/etc/kubernetes/cert/ca.pem \\
  --etcd-certfile=/etc/kubernetes/cert/kubernetes.pem \\
  --etcd-keyfile=/etc/kubernetes/cert/kubernetes-key.pem \\
  --etcd-servers=${ETCD_ENDPOINTS} \\
  --bind-address=##NODE_MASTER_IP## \\
  --secure-port=6443 \\
  --tls-cert-file=/etc/kubernetes/cert/kubernetes.pem \\
  --tls-private-key-file=/etc/kubernetes/cert/kubernetes-key.pem \\
  --insecure-port=0 \\
  --audit-dynamic-configuration \\
  --audit-log-maxage=15 \\
  --audit-log-maxbackup=3 \\
  --audit-log-maxsize=100 \\
  --audit-log-mode=batch \\
  --audit-log-truncate-enabled \\
  --audit-log-batch-buffer-size=20000 \\
  --audit-log-batch-max-size=2 \\
  --audit-log-path=${K8S_DIR}/kube-apiserver/audit.log \\
  --audit-policy-file=/etc/kubernetes/audit-policy.yaml \\
  --profiling \\
  --anonymous-auth=false \\
  --client-ca-file=/etc/kubernetes/cert/ca.pem \\
  --enable-bootstrap-token-auth \\
  --requestheader-allowed-names="" \\
  --requestheader-client-ca-file=/etc/kubernetes/cert/ca.pem \\
  --requestheader-extra-headers-prefix="X-Remote-Extra-" \\
  --requestheader-group-headers=X-Remote-Group \\
  --requestheader-username-headers=X-Remote-User \\
  --service-account-key-file=/etc/kubernetes/cert/ca.pem \\
  --authorization-mode=Node,RBAC \\
  --runtime-config=api/all=true \\
  --enable-admission-plugins=NodeRestriction \\
  --allow-privileged=true \\
  --apiserver-count=3 \\
  --event-ttl=168h \\
  --kubelet-certificate-authority=/etc/kubernetes/cert/ca.pem \\
  --kubelet-client-certificate=/etc/kubernetes/cert/kubernetes.pem \\
  --kubelet-client-key=/etc/kubernetes/cert/kubernetes-key.pem \\
  --kubelet-https=true \\
  --kubelet-timeout=10s \\
  --proxy-client-cert-file=/etc/kubernetes/cert/proxy-client.pem \\
  --proxy-client-key-file=/etc/kubernetes/cert/proxy-client-key.pem \\
  --service-cluster-ip-range=${SERVICE_CIDR} \\
  --service-node-port-range=${NODE_PORT_RANGE} \\
  --logtostderr=true \\
  --enable-aggregator-routing=true \\
  --v=2
Restart=on-failure
RestartSec=10
Type=notify
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target
EOF
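systemd joins a line ending in a backslash with the next line. A stray blank line inside ExecStart can therefore cut the command short, and the remaining flags may then be rejected as unknown settings. Such blank lines are easy to introduce when copying the template from a web page.

The awk-based sketch below is a hypothetical helper, not part of the original steps. It flags any blank line that directly follows a continued line. On hosts with systemd, `systemd-analyze verify` is a more complete check.

```shell
# Hypothetical helper: report blank lines that directly follow a line ending in "\".
# Returns non-zero if any are found.
check_continuations() {
  awk '
    prev_cont && /^[[:space:]]*$/ {
      printf "%s:%d: blank line after a continued line\n", FILENAME, NR
      bad = 1
    }
    { prev_cont = /\\$/ }
    END { exit bad }
  ' "$1"
}

# Usage: check_continuations kube-apiserver.service.template
```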

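Notes:

--advertise-address: the IP that apiserver advertises (the backend node IP of the kubernetes Service);

--default-*-toleration-seconds: thresholds related to node failures;

--max-*-requests-inflight: maximum numbers of in-flight requests;

--etcd-*: the certificates for accessing etcd and the etcd server addresses;

--encryption-provider-config: the configuration used to encrypt secrets stored in etcd;

--bind-address: the IP on which https listens. It must not be 127.0.0.1, otherwise the secure port 6443 cannot be reached from outside;

--secure-port: the https listening port;

--insecure-port=0: disables the insecure http port (8080);

--tls-*-file: the certificate and private key used by apiserver;

--audit-*: parameters for the audit policy and the audit log files;

--client-ca-file: verifies the certificates presented by clients (kube-controller-manager, kube-scheduler, kubelet, kube-proxy, etc.);

--enable-bootstrap-token-auth: enables token authentication for kubelet bootstrap;

--requestheader-*: kube-apiserver aggregator layer parameters, needed by proxy-client and HPA;

--requestheader-client-ca-file: the CA that signs the certificate given by --proxy-client-cert-file and --proxy-client-key-file; used when the metrics aggregator is enabled;

If --requestheader-allowed-names is not empty, the CN of the --proxy-client-cert-file certificate must be in that list (the certificate created above uses CN aggregator). Here it is set to "", so any CN signed by the request-header CA is accepted;

--service-account-key-file: the public key used to verify ServiceAccount tokens. It pairs with the private key given by kube-controller-manager's --service-account-private-key-file;

--runtime-config=api/all=true: enables all API versions, such as autoscaling/v2alpha1;

--authorization-mode=Node,RBAC and --anonymous-auth=false: enable the Node and RBAC authorization modes and reject unauthenticated requests;

--enable-admission-plugins: enables some plugins that are off by default;

--allow-privileged: allows running privileged containers;

--apiserver-count=3: the number of apiserver instances;

--event-ttl: how long events are kept;

--kubelet-*: if set, the kubelet APIs are accessed over https. RBAC rules must be defined for the user of the certificate (the user of the kubernetes*.pem certificate above is kubernetes); otherwise access to the kubelet API is rejected as unauthorized;

--proxy-client-*: the certificate apiserver uses to access metrics-server;

--service-cluster-ip-range: the Service Cluster IP range;

--service-node-port-range: the NodePort range;

Note:

If the kube-apiserver machine does not run kube-proxy, the --enable-aggregator-routing=true flag must be added. The master nodes here are not used as worker nodes and do not run kube-proxy, so the flag is needed.

The CA certificate given by --requestheader-client-ca-file must support both client auth and server auth!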

Create and distribute the kube-apiserver systemd unit file for each node

Replace the variables in the template to generate a systemd unit file for each node:

[root@k8s-master01 work]# cd /opt/k8s/work
[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh
[root@k8s-master01 work]# for (( i=0; i < 3; i++ ))
  do
    sed -e "s/##NODE_MASTER_NAME##/${NODE_MASTER_NAMES[i]}/" -e "s/##NODE_MASTER_IP##/${NODE_MASTER_IPS[i]}/" kube-apiserver.service.template > kube-apiserver-${NODE_MASTER_IPS[i]}.service
  done

Here NODE_MASTER_NAMES and NODE_MASTER_IPS are bash arrays of the same length, holding the node names and their corresponding IPs.
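The loop above hard-codes three masters. Because sed runs without the `g` flag, it would also replace only the first occurrence if a line ever held a placeholder twice. A slightly more defensive variant is sketched below; the `render_units` function is hypothetical. It:

• iterates over however many entries NODE_MASTER_IPS holds;
• replaces every occurrence on a line;
• stops if any `##...##` placeholder is left in a generated file.

```shell
# Hypothetical helper: render <prefix>-<ip>.service for every master from a template,
# using the NODE_MASTER_NAMES / NODE_MASTER_IPS arrays from environment.sh.
render_units() {
  local template="$1" prefix="$2" out i
  for (( i=0; i < ${#NODE_MASTER_IPS[@]}; i++ )); do
    out="${prefix}-${NODE_MASTER_IPS[i]}.service"
    # The g flag replaces every occurrence on a line, not just the first.
    sed -e "s/##NODE_MASTER_NAME##/${NODE_MASTER_NAMES[i]}/g" \
        -e "s/##NODE_MASTER_IP##/${NODE_MASTER_IPS[i]}/g" "$template" > "$out"
    # Any remaining ##NAME## token means the template uses an unknown placeholder.
    if grep -n '##[A-Z_]*##' "$out"; then
      echo "unreplaced placeholder in ${out}" >&2
      return 1
    fi
  done
}

# Usage: render_units kube-apiserver.service.template kube-apiserver
```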

[root@k8s-master01 work]# ll kube-apiserver*.service
-rw-r--r-- 1 root root 2718 Jun 18 10:38 kube-apiserver-172.16.60.241.service
-rw-r--r-- 1 root root 2718 Jun 18 10:38 kube-apiserver-172.16.60.242.service
-rw-r--r-- 1 root root 2718 Jun 18 10:38 kube-apiserver-172.16.60.243.service

Distribute the generated systemd unit files, renaming each to kube-apiserver.service:

[root@k8s-master01 work]# cd /opt/k8s/work
[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh
[root@k8s-master01 work]# for node_master_ip in ${NODE_MASTER_IPS[@]}
  do
    echo ">>> ${node_master_ip}"
    scp kube-apiserver-${node_master_ip}.service root@${node_master_ip}:/etc/systemd/system/kube-apiserver.service
  done

6) Start the kube-apiserver service

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh
[root@k8s-master01 work]# for node_master_ip in ${NODE_MASTER_IPS[@]}
  do
    echo ">>> ${node_master_ip}"
    ssh root@${node_master_ip} "mkdir -p ${K8S_DIR}/kube-apiserver"
    ssh root@${node_master_ip} "systemctl daemon-reload && systemctl enable kube-apiserver && systemctl restart kube-apiserver"
  done

Note: the working directory must be created before the service is started.

Check the kube-apiserver status:

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh
[root@k8s-master01 work]# for node_master_ip in ${NODE_MASTER_IPS[@]}
  do
    echo ">>> ${node_master_ip}"
    ssh root@${node_master_ip} "systemctl status kube-apiserver |grep 'Active:'"
  done

Expected output:

>>> 172.16.60.241
   Active: active (running) since Tue 2019-06-18 10:42:42 CST; 1min 6s ago
>>> 172.16.60.242
   Active: active (running) since Tue 2019-06-18 10:42:47 CST; 1min 2s ago
>>> 172.16.60.243
   Active: active (running) since Tue 2019-06-18 10:42:51 CST; 58s ago

Make sure every node shows active (running). Otherwise check the logs with journalctl -u kube-apiserver to find the cause.
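With more masters, reading the Active: lines by eye gets tedious. The awk sketch below uses a hypothetical helper, `check_active`, that reads the output of the status loop above on stdin. It prints any node that is not "active (running)" and returns non-zero in that case.

```shell
# Hypothetical helper: summarise ">>> <ip>" / "Active: ..." pairs read from stdin.
check_active() {
  awk '
    /^>>> / { node = $2; next }
    /Active:/ {
      if ($0 ~ /active \(running\)/) ok++
      else { print "NOT RUNNING: " node ": " $0; bad++ }
    }
    END { printf "%d running, %d not running\n", ok, bad; exit (bad > 0) }
  '
}

# Usage: pipe the status loop into it, i.e. write "done | check_active" instead of "done".
```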

7) Print the data kube-apiserver has written to etcd

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh
[root@k8s-master01 work]# ETCDCTL_API=3 etcdctl \
    --endpoints=${ETCD_ENDPOINTS} \
    --cacert=/opt/k8s/work/ca.pem \
    --cert=/opt/k8s/work/etcd.pem \
    --key=/opt/k8s/work/etcd-key.pem \
    get /registry/ --prefix --keys-only

This should print a long list of keys that have been written to etcd.
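The key list is long. For an overview, the awk sketch below groups the keys by their first path segment under /registry (for example namespaces or services) and counts them. `count_registry_keys` is a hypothetical helper that reads the etcdctl output above on stdin.

```shell
# Hypothetical helper: count /registry/<type>/... keys per <type>, sorted by type.
count_registry_keys() {
  awk -F/ '$2 == "registry" && NF >= 3 { n[$3]++ } END { for (k in n) print n[k], k }' | sort -k2
}

# Usage: append "| count_registry_keys" to the etcdctl ... get /registry/ --prefix --keys-only command.
```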

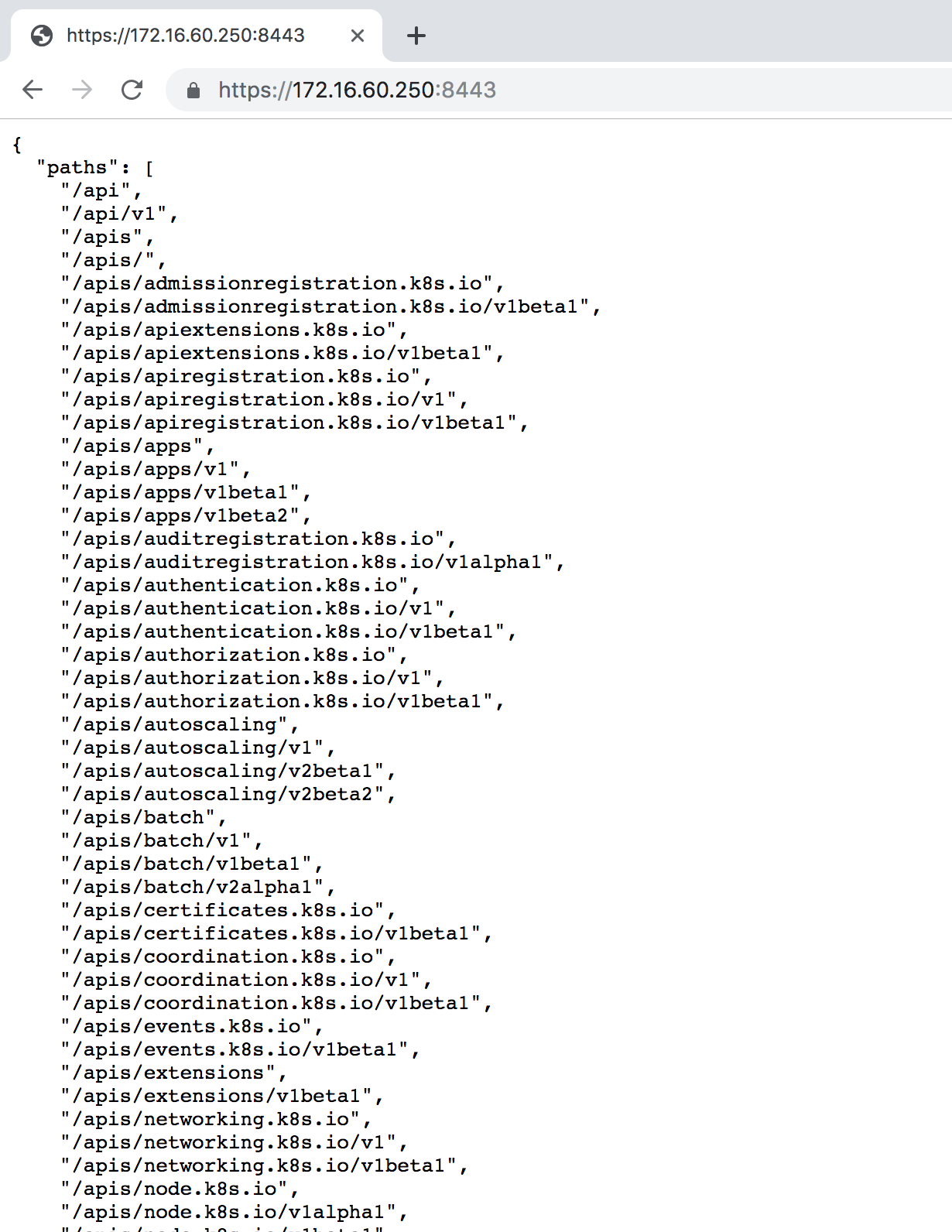

8)检查集群信息

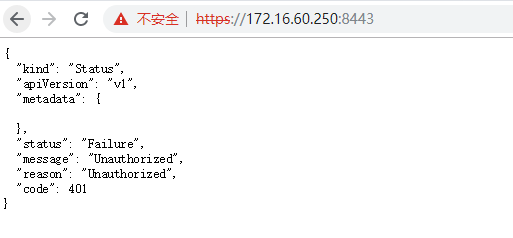

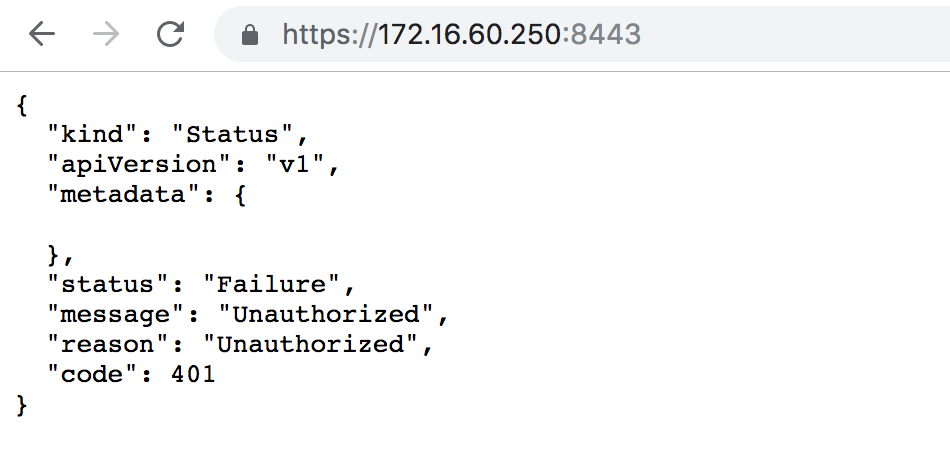

[root@k8s-master01 work]# kubectl cluster-info

Kubernetes master is running at https://172.16.60.250:8443

To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

[root@k8s-master01 work]# kubectl get all --all-namespaces

NAMESPACE NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

default service/kubernetes ClusterIP 10.254.0.1 <none> 443/TCP 8m25s

Check the component status:

[root@k8s-master01 work]# kubectl get componentstatuses    # or: kubectl get cs
NAME                 STATUS      MESSAGE             ERROR
controller-manager   Unhealthy   Get http://127.0.0.1:10252/healthz: dial tcp 127.0.0.1:10252: connect: connection refused
scheduler            Unhealthy   Get http://127.0.0.1:10251/healthz: dial tcp 127.0.0.1:10251: connect: connection refused
etcd-0               Healthy     {"health":"true"}
etcd-2               Healthy     {"health":"true"}
etcd-1               Healthy     {"health":"true"}

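controller-manager and scheduler show Unhealthy because these two components have not been deployed yet. Check again after deploying them.

Note:

-> If a kubectl command prints the following error, the ~/.kube/config being used is wrong. Switch to the correct account and run the command again:

The connection to the server localhost:8080 was refused - did you specify the right host or port?

-> For kubectl get componentstatuses, apiserver sends the health checks to 127.0.0.1 by default. When controller-manager and scheduler run in cluster mode, they may not be on the same machine as kube-apiserver. In that case their status shows Unhealthy even though they are actually working normally.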

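9) Check the ports kube-apiserver listens on

[root@k8s-master01 work]# netstat -lnpt|grep kube
tcp        0      0 172.16.60.241:6443      0.0.0.0:*               LISTEN      15516/kube-apiserve

Notes:

6443: the secure port that accepts https requests; every request is authenticated and authorized;

Since the insecure port is disabled, nothing listens on 8080.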

10) Grant kube-apiserver access to the kubelet API

For kubectl exec, run, logs and similar commands, apiserver forwards the request to kubelet's https port.

The RBAC rule below grants the user named in apiserver's certificate (kubernetes.pem, CN: kubernetes) access to the kubelet API:

[root@k8s-master01 work]# kubectl create clusterrolebinding kube-apiserver:kubelet-apis --clusterrole=system:kubelet-api-admin --user kubernetes
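If you prefer to keep RBAC objects as files, the same binding can be written declaratively. The sketch below writes a ClusterRoleBinding manifest equivalent to the `kubectl create clusterrolebinding` command above: same binding name, same ClusterRole and same user. The helper name and the default file name are assumptions. The result can be applied with `kubectl apply -f`.

```shell
# Hypothetical helper: write the declarative form of the binding created above.
write_kubelet_api_binding() {
  cat > "${1:-kube-apiserver-kubelet-apis.yaml}" <<'EOF'
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: kube-apiserver:kubelet-apis
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:kubelet-api-admin
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: User
  name: kubernetes
EOF
}

# Usage: write_kubelet_api_binding && kubectl apply -f kube-apiserver-kubelet-apis.yaml
```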

11) View the metrics exported by kube-apiserver

This needs the root CA certificate (ca.pem), together with the admin client certificate and key used in the commands below.

Fetch the metrics through the nginx proxy port:

[root@k8s-master01 work]# curl -s --cacert /opt/k8s/work/ca.pem --cert /opt/k8s/work/admin.pem --key /opt/k8s/work/admin-key.pem https://172.16.60.250:8443/metrics|head
# HELP APIServiceOpenAPIAggregationControllerQueue1_adds (Deprecated) Total number of adds handled by workqueue: APIServiceOpenAPIAggregationControllerQueue1
# TYPE APIServiceOpenAPIAggregationControllerQueue1_adds counter
APIServiceOpenAPIAggregationControllerQueue1_adds 12194
# HELP APIServiceOpenAPIAggregationControllerQueue1_depth (Deprecated) Current depth of workqueue: APIServiceOpenAPIAggregationControllerQueue1
# TYPE APIServiceOpenAPIAggregationControllerQueue1_depth gauge
APIServiceOpenAPIAggregationControllerQueue1_depth 0
# HELP APIServiceOpenAPIAggregationControllerQueue1_longest_running_processor_microseconds (Deprecated) How many microseconds has the longest running processor for APIServiceOpenAPIAggregationControllerQueue1 been running.
# TYPE APIServiceOpenAPIAggregationControllerQueue1_longest_running_processor_microseconds gauge
APIServiceOpenAPIAggregationControllerQueue1_longest_running_processor_microseconds 0
# HELP APIServiceOpenAPIAggregationControllerQueue1_queue_latency (Deprecated) How long an item stays in workqueueAPIServiceOpenAPIAggregationControllerQueue1 before being requested.

Fetch the metrics directly from each kube-apiserver node's port:

[root@k8s-master01 work]# curl -s --cacert /opt/k8s/work/ca.pem --cert /opt/k8s/work/admin.pem --key /opt/k8s/work/admin-key.pem https://172.16.60.241:6443/metrics|head
[root@k8s-master01 work]# curl -s --cacert /opt/k8s/work/ca.pem --cert /opt/k8s/work/admin.pem --key /opt/k8s/work/admin-key.pem https://172.16.60.242:6443/metrics|head
[root@k8s-master01 work]# curl -s --cacert /opt/k8s/work/ca.pem --cert /opt/k8s/work/admin.pem --key /opt/k8s/work/admin-key.pem https://172.16.60.243:6443/metrics|head
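Piping /metrics into head only shows the first few lines. To pull out a single metric, the awk sketch below can help. It skips the `# HELP` / `# TYPE` comment lines and prints the value of every sample of one metric, with or without labels. `metric_value` is a hypothetical helper, and it assumes samples carry no trailing timestamp, so the value is the last field.

```shell
# Hypothetical helper: print the value(s) of metric $1 from Prometheus text on stdin.
metric_value() {
  awk -v m="$1" '!/^#/ && ($1 == m || index($1, m "{") == 1) { print $NF }'
}

# Usage:
#   curl -s --cacert /opt/k8s/work/ca.pem --cert /opt/k8s/work/admin.pem \
#     --key /opt/k8s/work/admin-key.pem https://172.16.60.250:8443/metrics \
#     | metric_value APIServiceOpenAPIAggregationControllerQueue1_adds
```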

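8.2 - Deploy a highly available kube-controller-manager cluster

This cluster has 3 nodes. After start-up they elect a leader through a competitive election, and the other nodes stay blocked. When the leader becomes unavailable, the blocked nodes hold a new election and produce a new leader, which keeps the service available.

To secure communication, we first generate an x509 certificate and private key. kube-controller-manager uses this certificate in two cases:

• when communicating with kube-apiserver's secure port;
• when serving Prometheus-format metrics on its secure (https) port.

All commands below are run on k8s-master01, which then distributes files and runs commands on the other nodes remotely.

1) Create the kube-controller-manager certificate and private key

Create the certificate signing request:

[root@k8s-master01 ~]# cd /opt/k8s/work
[root@k8s-master01 work]# cat > kube-controller-manager-csr.json <<EOF
{
  "CN": "system:kube-controller-manager",
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "hosts": [
    "127.0.0.1",
    "172.16.60.241",
    "172.16.60.242",
    "172.16.60.243"
  ],
  "names": [
    {
      "C": "CN",
      "ST": "BeiJing",
      "L": "BeiJing",
      "O": "system:kube-controller-manager",
      "OU": "4Paradigm"
    }
  ]
}
EOF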

• The hosts list contains the IPs of all kube-controller-manager nodes;

• CN and O are both system:kube-controller-manager. The built-in Kubernetes ClusterRoleBinding system:kube-controller-manager grants kube-controller-manager the permissions it needs to work.

Generate the certificate and private key:

[root@k8s-master01 work]# cd /opt/k8s/work
[root@k8s-master01 work]# cfssl gencert -ca=/opt/k8s/work/ca.pem \
  -ca-key=/opt/k8s/work/ca-key.pem \
  -config=/opt/k8s/work/ca-config.json \
  -profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager
[root@k8s-master01 work]# ll kube-controller-manager*pem
-rw------- 1 root root 1679 Jun 18 11:43 kube-controller-manager-key.pem
-rw-r--r-- 1 root root 1517 Jun 18 11:43 kube-controller-manager.pem

Distribute the generated certificate and private key to all master nodes:

[root@k8s-master01 work]# cd /opt/k8s/work
[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh
[root@k8s-master01 work]# for node_master_ip in ${NODE_MASTER_IPS[@]}
  do
    echo ">>> ${node_master_ip}"
    scp kube-controller-manager*.pem root@${node_master_ip}:/etc/kubernetes/cert/
  done

2) Create and distribute the kubeconfig file

kube-controller-manager uses a kubeconfig file to access apiserver. The file contains the apiserver address, the embedded CA certificate and the kube-controller-manager certificate:

[root@k8s-master01 work]# cd /opt/k8s/work
[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh
[root@k8s-master01 work]# kubectl config set-cluster kubernetes \
  --certificate-authority=/opt/k8s/work/ca.pem \
  --embed-certs=true \
  --server=${KUBE_APISERVER} \
  --kubeconfig=kube-controller-manager.kubeconfig
[root@k8s-master01 work]# kubectl config set-credentials system:kube-controller-manager \
  --client-certificate=kube-controller-manager.pem \
  --client-key=kube-controller-manager-key.pem \
  --embed-certs=true \
  --kubeconfig=kube-controller-manager.kubeconfig
[root@k8s-master01 work]# kubectl config set-context system:kube-controller-manager \
  --cluster=kubernetes \
  --user=system:kube-controller-manager \
  --kubeconfig=kube-controller-manager.kubeconfig
[root@k8s-master01 work]# kubectl config use-context system:kube-controller-manager --kubeconfig=kube-controller-manager.kubeconfig
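With `--embed-certs=true`, kubectl stores the CA, the client certificate and the client key inline as base64 `certificate-authority-data`, `client-certificate-data` and `client-key-data` fields. That is what allows this single kubeconfig file to be copied to the other masters without the .pem files. The grep sketch below confirms all three fields are present before distribution. `check_embedded` is a hypothetical helper.

```shell
# Hypothetical helper: verify that a kubeconfig carries the CA, client
# certificate and client key inline (the result of --embed-certs=true).
check_embedded() {
  local f="$1" key missing=0
  for key in certificate-authority-data client-certificate-data client-key-data; do
    if ! grep -q "^[[:space:]]*${key}:" "$f"; then
      echo "missing ${key} in ${f}" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Usage: check_embedded kube-controller-manager.kubeconfig && echo "certs embedded"
```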

Distribute the kubeconfig to all master nodes:

[root@k8s-master01 work]# cd /opt/k8s/work
[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh
[root@k8s-master01 work]# for node_master_ip in ${NODE_MASTER_IPS[@]}
  do
    echo ">>> ${node_master_ip}"
    scp kube-controller-manager.kubeconfig root@${node_master_ip}:/etc/kubernetes/
  done

3) Create and distribute the kube-controller-manager systemd unit file

[root@k8s-master01 work]# cd /opt/k8s/work
[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh
[root@k8s-master01 work]# cat > kube-controller-manager.service.template <<EOF
[Unit]
Description=Kubernetes Controller Manager
Documentation=https://github.com/GoogleCloudPlatform/kubernetes

[Service]
WorkingDirectory=${K8S_DIR}/kube-controller-manager
ExecStart=/opt/k8s/bin/kube-controller-manager \\
  --profiling \\
  --cluster-name=kubernetes \\
  --controllers=*,bootstrapsigner,tokencleaner \\
  --kube-api-qps=1000 \\
  --kube-api-burst=2000 \\
  --leader-elect \\
  --use-service-account-credentials=true \\
  --concurrent-service-syncs=2 \\
  --bind-address=0.0.0.0 \\
  --tls-cert-file=/etc/kubernetes/cert/kube-controller-manager.pem \\
  --tls-private-key-file=/etc/kubernetes/cert/kube-controller-manager-key.pem \\
  --authentication-kubeconfig=/etc/kubernetes/kube-controller-manager.kubeconfig \\
  --client-ca-file=/etc/kubernetes/cert/ca.pem \\
  --requestheader-allowed-names="" \\
  --requestheader-client-ca-file=/etc/kubernetes/cert/ca.pem \\
  --requestheader-extra-headers-prefix="X-Remote-Extra-" \\
  --requestheader-group-headers=X-Remote-Group \\
  --requestheader-username-headers=X-Remote-User \\
  --authorization-kubeconfig=/etc/kubernetes/kube-controller-manager.kubeconfig \\
  --cluster-signing-cert-file=/etc/kubernetes/cert/ca.pem \\
  --cluster-signing-key-file=/etc/kubernetes/cert/ca-key.pem \\
  --experimental-cluster-signing-duration=8760h \\
  --horizontal-pod-autoscaler-sync-period=10s \\
  --concurrent-deployment-syncs=10 \\
  --concurrent-gc-syncs=30 \\
  --node-cidr-mask-size=24 \\
  --service-cluster-ip-range=${SERVICE_CIDR} \\
  --pod-eviction-timeout=6m \\
  --terminated-pod-gc-threshold=10000 \\
  --root-ca-file=/etc/kubernetes/cert/ca.pem \\
  --service-account-private-key-file=/etc/kubernetes/cert/ca-key.pem \\
  --kubeconfig=/etc/kubernetes/kube-controller-manager.kubeconfig \\
  --logtostderr=true \\
  --v=2
Restart=on-failure
RestartSec=5

[Install]
WantedBy=multi-user.target
EOF

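Notes:

The following two flags are usually left out (they are not in the template above). Otherwise controller-manager shows "Unhealthy" when you check the cluster status with "kubectl get cs":

--port=0: disables the insecure (http) port; --address then has no effect, and --bind-address takes effect;

--secure-port=10252

--bind-address=0.0.0.0: listen for /metrics requests on all network interfaces;

--kubeconfig: the path of the kubeconfig file kube-controller-manager uses to connect to and authenticate with kube-apiserver;

--authentication-kubeconfig and --authorization-kubeconfig: kube-controller-manager uses them to connect to apiserver and to authenticate and authorize client requests. kube-controller-manager no longer uses --tls-ca-file to verify the client certificates of https metrics requests. If these two kubeconfig flags are not set, client requests to kube-controller-manager's https port are rejected (insufficient permissions).

--cluster-signing-*-file: sign the certificates created by TLS Bootstrap;

--experimental-cluster-signing-duration: the validity period of TLS Bootstrap certificates;

--root-ca-file: the CA certificate placed into containers' ServiceAccount, used to verify kube-apiserver's certificate;

--service-account-private-key-file: the private key that signs ServiceAccount tokens. It must pair with the public key given by kube-apiserver's --service-account-key-file;

--service-cluster-ip-range: the Service Cluster IP range. It must match the flag of the same name on kube-apiserver;

--leader-elect=true: cluster mode with leader election enabled. The elected leader does the work while the other nodes stay blocked;

--controllers=*,bootstrapsigner,tokencleaner: the list of enabled controllers. tokencleaner automatically removes expired Bootstrap tokens;

--horizontal-pod-autoscaler-*: custom-metrics parameters, supporting autoscaling/v2alpha1;

--tls-cert-file, --tls-private-key-file: the server certificate and key used when serving metrics over https;

--use-service-account-credentials=true: each controller inside kube-controller-manager uses its own serviceaccount to access kube-apiserver;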

Create and distribute the kube-controller-manager systemd unit file for each node

Replace the variables in the template to create a systemd unit file for each node:

[root@k8s-master01 work]# cd /opt/k8s/work
[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh
[root@k8s-master01 work]# for (( i=0; i < 3; i++ ))
  do
    sed -e "s/##NODE_MASTER_NAME##/${NODE_MASTER_NAMES[i]}/" -e "s/##NODE_MASTER_IP##/${NODE_MASTER_IPS[i]}/" kube-controller-manager.service.template > kube-controller-manager-${NODE_MASTER_IPS[i]}.service
  done

Note: NODE_MASTER_NAMES and NODE_MASTER_IPS are bash arrays of the same length, holding the node names and their corresponding IPs.

[root@k8s-master01 work]# ll kube-controller-manager*.service
-rw-r--r-- 1 root root 1878 Jun 18 12:45 kube-controller-manager-172.16.60.241.service
-rw-r--r-- 1 root root 1878 Jun 18 12:45 kube-controller-manager-172.16.60.242.service
-rw-r--r-- 1 root root 1878 Jun 18 12:45 kube-controller-manager-172.16.60.243.service

Distribute them to all master nodes:

[root@k8s-master01 work]# cd /opt/k8s/work
[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh
[root@k8s-master01 work]# for node_master_ip in ${NODE_MASTER_IPS[@]}
  do
    echo ">>> ${node_master_ip}"
    scp kube-controller-manager-${node_master_ip}.service root@${node_master_ip}:/etc/systemd/system/kube-controller-manager.service
  done

Note: each file is renamed to kube-controller-manager.service.

Start the kube-controller-manager service:

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh
[root@k8s-master01 work]# for node_master_ip in ${NODE_MASTER_IPS[@]}
  do
    echo ">>> ${node_master_ip}"
    ssh root@${node_master_ip} "mkdir -p ${K8S_DIR}/kube-controller-manager"
    ssh root@${node_master_ip} "systemctl daemon-reload && systemctl enable kube-controller-manager && systemctl restart kube-controller-manager"
  done

Note: the working directory must be created before the service is started.

Check the service status:

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh
[root@k8s-master01 work]# for node_master_ip in ${NODE_MASTER_IPS[@]}
  do
    echo ">>> ${node_master_ip}"
    ssh root@${node_master_ip} "systemctl status kube-controller-manager|grep Active"
  done

Expected output:

>>> 172.16.60.241
   Active: active (running) since Tue 2019-06-18 12:49:11 CST; 1min 7s ago
>>> 172.16.60.242
   Active: active (running) since Tue 2019-06-18 12:49:11 CST; 1min 7s ago
>>> 172.16.60.243
   Active: active (running) since Tue 2019-06-18 12:49:12 CST; 1min 7s ago

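Make sure every node shows active (running). Otherwise check the logs with journalctl -u kube-controller-manager to find the cause.

kube-controller-manager listens on port 10252. In this configuration that port serves plain http, as the curl commands in step 4 show:

[root@k8s-master01 work]# netstat -lnpt|grep kube-controll
tcp        0      0 172.16.60.241:10252     0.0.0.0:*               LISTEN      25709/kube-controll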

Check the cluster status. controller-manager now shows "ok".

Note: if the controller-manager service fails on one or two of the three nodes, the status stays "ok" as long as the instance on one node is still alive, and the service keeps working!

[root@k8s-master01 work]# kubectl get cs
NAME                 STATUS      MESSAGE             ERROR
scheduler            Unhealthy   Get http://127.0.0.1:10251/healthz: dial tcp 127.0.0.1:10251: connect: connection refused
controller-manager   Healthy     ok
etcd-0               Healthy     {"health":"true"}
etcd-1               Healthy     {"health":"true"}
etcd-2               Healthy     {"health":"true"}
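You may also want to see which of the three instances currently holds the leader role. In v1.14, kube-controller-manager keeps its leader lock by default as the `control-plane.alpha.kubernetes.io/leader` annotation on the `kube-controller-manager` Endpoints object in `kube-system`. The `holderIdentity` field in that annotation names the leader, typically as `<hostname>_<uuid>`.

The sed sketch below extracts that field from `kubectl get endpoints ... -o yaml` output read on stdin; `leader_of` is a hypothetical helper. Newer releases move the lock to Lease objects, so treat the object name and the annotation as version-specific assumptions.

```shell
# Hypothetical helper: print holderIdentity from the leader-election annotation on stdin.
leader_of() {
  sed -n 's/.*"holderIdentity":"\([^"]*\)".*/\1/p' | head -n 1
}

# Usage:
#   kubectl -n kube-system get endpoints kube-controller-manager -o yaml | leader_of
```

Stopping kube-controller-manager on the node reported here and running the command again is a simple way to watch the re-election described at the start of 8.2.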

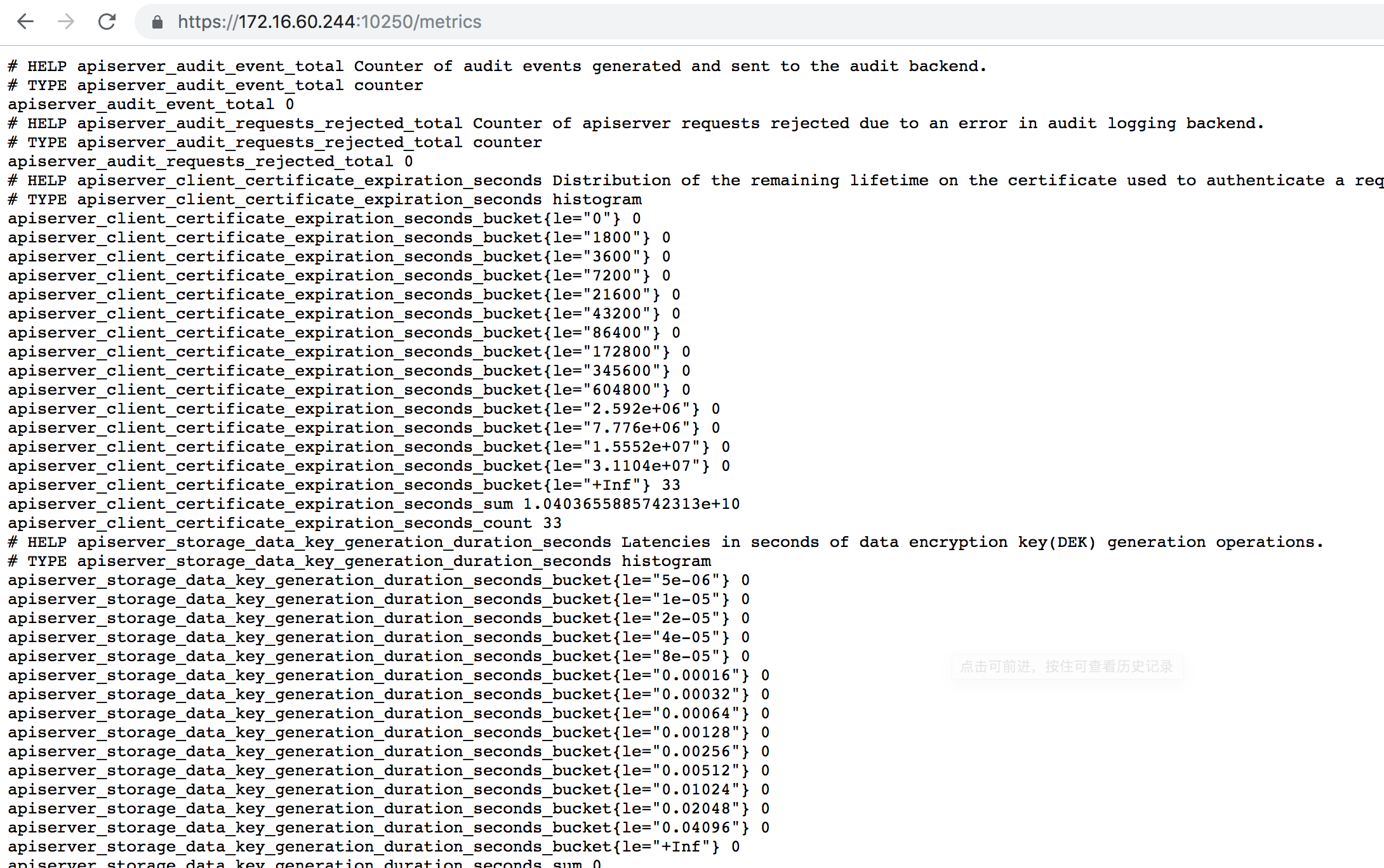

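4) View the exported metrics

Note: run the following commands on the three kube-controller-manager nodes.

The "--port=0" and "--secure-port=10252" flags were left out of the kube-controller-manager unit file. As a result, its metrics are fetched over plain http on port 10252. kube-controller-manager is normally not accessed directly; it is only accessed when monitoring scrapes its metrics.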

[root@k8s-master01 work]# curl -s http://172.16.60.241:10252/metrics|head
# HELP apiserver_audit_event_total Counter of audit events generated and sent to the audit backend.
# TYPE apiserver_audit_event_total counter
apiserver_audit_event_total 0
# HELP apiserver_audit_requests_rejected_total Counter of apiserver requests rejected due to an error in audit logging backend.
# TYPE apiserver_audit_requests_rejected_total counter
apiserver_audit_requests_rejected_total 0
# HELP apiserver_client_certificate_expiration_seconds Distribution of the remaining lifetime on the certificate used to authenticate a request.
# TYPE apiserver_client_certificate_expiration_seconds histogram
apiserver_client_certificate_expiration_seconds_bucket{le="0"} 0
apiserver_client_certificate_expiration_seconds_bucket{le="1800"} 0

[root@k8s-master01 work]# curl -s --cacert /etc/kubernetes/cert/ca.pem http://172.16.60.241:10252/metrics |head
# HELP apiserver_audit_event_total Counter of audit events generated and sent to the audit backend.
# TYPE apiserver_audit_event_total counter
apiserver_audit_event_total 0
# HELP apiserver_audit_requests_rejected_total Counter of apiserver requests rejected due to an error in audit logging backend.
# TYPE apiserver_audit_requests_rejected_total counter
apiserver_audit_requests_rejected_total 0
# HELP apiserver_client_certificate_expiration_seconds Distribution of the remaining lifetime on the certificate used to authenticate a request.
# TYPE apiserver_client_certificate_expiration_seconds histogram
apiserver_client_certificate_expiration_seconds_bucket{le="0"} 0
apiserver_client_certificate_expiration_seconds_bucket{le="1800"} 0

[root@k8s-master01 work]# curl -s --cacert /etc/kubernetes/cert/ca.pem http://127.0.0.1:10252/metrics |head
# HELP apiserver_audit_event_total Counter of audit events generated and sent to the audit backend.
# TYPE apiserver_audit_event_total counter
apiserver_audit_event_total 0
# HELP apiserver_audit_requests_rejected_total Counter of apiserver requests rejected due to an error in audit logging backend.
# TYPE apiserver_audit_requests_rejected_total counter
apiserver_audit_requests_rejected_total 0
# HELP apiserver_client_certificate_expiration_seconds Distribution of the remaining lifetime on the certificate used to authenticate a request.
# TYPE apiserver_client_certificate_expiration_seconds histogram
apiserver_client_certificate_expiration_seconds_bucket{le="0"} 0
apiserver_client_certificate_expiration_seconds_bucket{le="1800"} 0

[root@k8s-master01 ~]# curl -s --cacert /opt/k8s/work/ca.pem --cert /opt/k8s/work/admin.pem --key /opt/k8s/work/admin-key.pem http://172.16.60.241:10252/metrics |head
# HELP apiserver_audit_event_total Counter of audit events generated and sent to the audit backend.
# TYPE apiserver_audit_event_total counter
apiserver_audit_event_total 0
# HELP apiserver_audit_requests_rejected_total Counter of apiserver requests rejected due to an error in audit logging backend.
# TYPE apiserver_audit_requests_rejected_total counter
apiserver_audit_requests_rejected_total 0
# HELP apiserver_client_certificate_expiration_seconds Distribution of the remaining lifetime on the certificate used to authenticate a request.
# TYPE apiserver_client_certificate_expiration_seconds histogram
apiserver_client_certificate_expiration_seconds_bucket{le="0"} 0
apiserver_client_certificate_expiration_seconds_bucket{le="1800"} 0

5) kube-controller-manager permissions

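The ClusterRole system:kube-controller-manager has very limited permissions: it can only create resource objects such as secrets and serviceaccounts. The permissions of the individual controllers are split out into the ClusterRoles system:controller:XXX: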

[root@k8s-master01 work]# kubectl describe clusterrole system:kube-controller-manager

Name: system:kube-controller-manager

Labels: kubernetes.io/bootstrapping=rbac-defaults

Annotations: rbac.authorization.kubernetes.io/autoupdate: true

PolicyRule:

Resources Non-Resource URLs Resource Names Verbs

--------- ----------------- -------------- -----

secrets [] [] [create delete get update]

endpoints [] [] [create get update]

serviceaccounts [] [] [create get update]

events [] [] [create patch update]

tokenreviews.authentication.k8s.io [] [] [create]

subjectaccessreviews.authorization.k8s.io [] [] [create]

configmaps [] [] [get]

namespaces [] [] [get]

*.* [] [] [list watch]

Add the --use-service-account-credentials=true parameter to the kube-controller-manager startup options, so that the main controller creates a corresponding ServiceAccount XXX-controller for each controller.

The built-in ClusterRoleBinding system:controller:XXX grants each XXX-controller ServiceAccount the permissions of the corresponding ClusterRole system:controller:XXX.

[root@k8s-master01 work]# kubectl get clusterrole|grep controller

system:controller:attachdetach-controller 141m

system:controller:certificate-controller 141m

system:controller:clusterrole-aggregation-controller 141m

system:controller:cronjob-controller 141m

system:controller:daemon-set-controller 141m

system:controller:deployment-controller 141m

system:controller:disruption-controller 141m

system:controller:endpoint-controller 141m

system:controller:expand-controller 141m

system:controller:generic-garbage-collector 141m

system:controller:horizontal-pod-autoscaler 141m

system:controller:job-controller 141m

system:controller:namespace-controller 141m

system:controller:node-controller 141m

system:controller:persistent-volume-binder 141m

system:controller:pod-garbage-collector 141m

system:controller:pv-protection-controller 141m

system:controller:pvc-protection-controller 141m

system:controller:replicaset-controller 141m

system:controller:replication-controller 141m

system:controller:resourcequota-controller 141m

system:controller:route-controller 141m

system:controller:service-account-controller 141m

system:controller:service-controller 141m

system:controller:statefulset-controller 141m

system:controller:ttl-controller 141m

system:kube-controller-manager 141m

Take the deployment controller as an example:

[root@k8s-master01 work]# kubectl describe clusterrole system:controller:deployment-controller

Name: system:controller:deployment-controller

Labels: kubernetes.io/bootstrapping=rbac-defaults

Annotations: rbac.authorization.kubernetes.io/autoupdate: true

PolicyRule:

Resources Non-Resource URLs Resource Names Verbs

--------- ----------------- -------------- -----

replicasets.apps [] [] [create delete get list patch update watch]

replicasets.extensions [] [] [create delete get list patch update watch]

events [] [] [create patch update]

pods [] [] [get list update watch]

deployments.apps [] [] [get list update watch]

deployments.extensions [] [] [get list update watch]

deployments.apps/finalizers [] [] [update]

deployments.apps/status [] [] [update]

deployments.extensions/finalizers [] [] [update]

deployments.extensions/status [] [] [update]
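To spot-check that the deployment-controller ServiceAccount really holds these rules, kubectl can impersonate it. The sketch below only builds the RBAC username of a ServiceAccount (`system:serviceaccount:<namespace>:<name>`); the helper name `sa_user` is hypothetical, and the kubectl line assumes an admin kubeconfig that is allowed to impersonate:

```shell
# Hypothetical helper: RBAC username of a ServiceAccount.
# With --use-service-account-credentials=true each controller runs as the
# ServiceAccount kube-system/<controller-name>.
sa_user() {
  printf 'system:serviceaccount:%s:%s\n' "$1" "$2"
}

# Example spot check (needs a working kubectl with admin rights):
#   kubectl auth can-i create replicasets.apps \
#     --as="$(sa_user kube-system deployment-controller)"
```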

6) Check the current leader of the kube-controller-manager cluster

[root@k8s-master01 work]# kubectl get endpoints kube-controller-manager --namespace=kube-system -o yaml

apiVersion: v1

kind: Endpoints

metadata:

annotations:

control-plane.alpha.kubernetes.io/leader: '{"holderIdentity":"k8s-master02_4e449819-9185-11e9-82b6-005056ac42a4","leaseDurationSeconds":15,"acquireTime":"2019-06-18T04:55:49Z","renewTime":"2019-06-18T05:04:54Z","leaderTransitions":3}'

creationTimestamp: "2019-06-18T04:03:07Z"

name: kube-controller-manager

namespace: kube-system

resourceVersion: "4604"

selfLink: /api/v1/namespaces/kube-system/endpoints/kube-controller-manager

uid: fa824018-917d-11e9-90d4-005056ac7c81

The current leader is the k8s-master02 node.
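Reading the leader out of the full YAML is error-prone; only the `holderIdentity` field of the annotation matters. A minimal sketch, assuming holderIdentity keeps the `<hostname>_<uuid>` form seen above and hostnames contain no underscore; `leader_node` is a hypothetical helper:

```shell
# Hypothetical helper: print the leader's node name from the
# control-plane.alpha.kubernetes.io/leader annotation JSON on stdin.
# holderIdentity looks like <hostname>_<uuid>; keep the part before "_".
leader_node() {
  sed -n 's/.*"holderIdentity":"\([^"_]*\)_[^"]*".*/\1/p'
}

# Example (dots inside the annotation key are escaped for kubectl jsonpath):
#   kubectl get endpoints kube-controller-manager -n kube-system \
#     -o jsonpath='{.metadata.annotations.control-plane\.alpha\.kubernetes\.io/leader}' | leader_node
```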

Test the high availability of the kube-controller-manager cluster

Stop the kube-controller-manager service on one or two nodes, then check the logs of the other nodes to see whether one of them has acquired leadership.

For example, stop the kube-controller-manager service on k8s-master02:

[root@k8s-master02 ~]# systemctl stop kube-controller-manager

[root@k8s-master02 ~]# ps -ef|grep kube-controller-manager

root 25677 11006 0 13:06 pts/0 00:00:00 grep --color=auto kube-controller-manager

Then check the current leader of the kube-controller-manager cluster again:

[root@k8s-master01 work]# kubectl get endpoints kube-controller-manager --namespace=kube-system -o yaml

apiVersion: v1

kind: Endpoints

metadata:

annotations:

control-plane.alpha.kubernetes.io/leader: '{"holderIdentity":"k8s-master03_4e4c28b5-9185-11e9-b98a-005056ac7136","leaseDurationSeconds":15,"acquireTime":"2019-06-18T05:06:32Z","renewTime":"2019-06-18T05:06:57Z","leaderTransitions":4}'

creationTimestamp: "2019-06-18T04:03:07Z"

name: kube-controller-manager

namespace: kube-system

resourceVersion: "4695"

selfLink: /api/v1/namespaces/kube-system/endpoints/kube-controller-manager

uid: fa824018-917d-11e9-90d4-005056ac7c81

Leadership has moved to the k8s-master03 node.
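Since the lease above is 15 seconds (leaseDurationSeconds), a new leader may take a few seconds to appear after the stop. Instead of re-running kubectl by hand, the check can be polled. A sketch only: `wait_for_new_leader` is hypothetical, and the retry count and interval are assumptions to tune:

```shell
# Hypothetical helper: run a "print current leader" command until it reports
# a node other than OLD, then print that node. Gives up after TRIES attempts
# spaced INTERVAL seconds apart. The body is a subshell, so "exit" only ends
# this function.
wait_for_new_leader() (
  old=$1 tries=$2 interval=$3
  shift 3
  i=0
  while [ "$i" -lt "$tries" ]; do
    cur=$("$@")
    if [ -n "$cur" ] && [ "$cur" != "$old" ]; then
      echo "$cur"
      exit 0
    fi
    i=$((i + 1))
    sleep "$interval"
  done
  exit 1
)

# Example: current_leader is any command printing the leader's node name,
# e.g. one built on the kubectl get endpoints command from step 6.
#   wait_for_new_leader k8s-master02 10 3 current_leader
```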

8.3 - Deploy a highly available kube-scheduler cluster

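This cluster contains 3 nodes. After startup, a leader is chosen through competitive election and the other nodes stay blocked. When the leader becomes unavailable, the remaining nodes hold a new election to pick a new leader, which keeps the service available. To secure communication, this document first generates an x509 certificate and private key, which kube-scheduler uses in two cases: communicating with the secure port of kube-apiserver, and serving prometheus-format metrics on its own secure port (https, 10259). All of the following commands are run on k8s-master01, which distributes files and executes commands on the other nodes remotely.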

1) Create the kube-scheduler certificate and private key

Create the certificate signing request:

[root@k8s-master01 ~]# cd /opt/k8s/work

[root@k8s-master01 work]# cat > kube-scheduler-csr.json <<EOF

{

"CN": "system:kube-scheduler",

"hosts": [

"127.0.0.1",

"172.16.60.241",

"172.16.60.242",

"172.16.60.243"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "system:kube-scheduler",

"OU": "4Paradigm"

}

]

}

EOF

Notes:

The hosts list contains the IPs of all kube-scheduler nodes;

Both CN and O are system:kube-scheduler; the built-in Kubernetes ClusterRoleBinding system:kube-scheduler grants kube-scheduler the permissions it needs to work;
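Because the hosts list must stay in sync with the master IPs, it can be generated from the same array the distribution loops use. A minimal sketch, assuming NODE_MASTER_IPS is defined by /opt/k8s/bin/environment.sh as in the loops in this document; `hosts_json` is a hypothetical helper:

```shell
# Hypothetical helper: print a JSON array for the CSR "hosts" field made of
# 127.0.0.1 followed by every IP passed as an argument.
hosts_json() {
  printf '["127.0.0.1"'
  for host_ip in "$@"; do
    printf ',"%s"' "$host_ip"
  done
  printf ']\n'
}

# Example:
#   hosts_json "${NODE_MASTER_IPS[@]}"
```

Inside the unquoted `<<EOF` heredoc above, the line could read `"hosts": $(hosts_json "${NODE_MASTER_IPS[@]}"),`, since bash expands command substitutions there.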

Generate the certificate and private key:

[root@k8s-master01 work]# cd /opt/k8s/work

[root@k8s-master01 work]# cfssl gencert -ca=/opt/k8s/work/ca.pem \

-ca-key=/opt/k8s/work/ca-key.pem \

-config=/opt/k8s/work/ca-config.json \

-profile=kubernetes kube-scheduler-csr.json | cfssljson -bare kube-scheduler

[root@k8s-master01 work]# ls kube-scheduler*pem

kube-scheduler-key.pem kube-scheduler.pem

Distribute the generated certificate and private key to all master nodes:

[root@k8s-master01 work]# cd /opt/k8s/work

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh

[root@k8s-master01 work]# for node_master_ip in ${NODE_MASTER_IPS[@]}

do

echo ">>> ${node_master_ip}"

scp kube-scheduler*.pem root@${node_master_ip}:/etc/kubernetes/cert/

done

2) Create and distribute the kubeconfig file

kube-scheduler uses a kubeconfig file to access the apiserver; the file contains the apiserver address, the embedded CA certificate, and the kube-scheduler certificate:

[root@k8s-master01 work]# cd /opt/k8s/work

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh

[root@k8s-master01 work]# kubectl config set-cluster kubernetes \

--certificate-authority=/opt/k8s/work/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=kube-scheduler.kubeconfig

[root@k8s-master01 work]# kubectl config set-credentials system:kube-scheduler \

--client-certificate=kube-scheduler.pem \

--client-key=kube-scheduler-key.pem \

--embed-certs=true \

--kubeconfig=kube-scheduler.kubeconfig

[root@k8s-master01 work]# kubectl config set-context system:kube-scheduler \

--cluster=kubernetes \

--user=system:kube-scheduler \

--kubeconfig=kube-scheduler.kubeconfig

[root@k8s-master01 work]# kubectl config use-context system:kube-scheduler --kubeconfig=kube-scheduler.kubeconfig

Distribute the kubeconfig to all master nodes:

[root@k8s-master01 work]# cd /opt/k8s/work

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh

[root@k8s-master01 work]# for node_master_ip in ${NODE_MASTER_IPS[@]}

do

echo ">>> ${node_master_ip}"

scp kube-scheduler.kubeconfig root@${node_master_ip}:/etc/kubernetes/

done

3) Create the kube-scheduler configuration file

[root@k8s-master01 work]# cd /opt/k8s/work

[root@k8s-master01 work]# cat >kube-scheduler.yaml.template <<EOF

apiVersion: kubescheduler.config.k8s.io/v1alpha1

kind: KubeSchedulerConfiguration

bindTimeoutSeconds: 600

clientConnection:

burst: 200

kubeconfig: "/etc/kubernetes/kube-scheduler.kubeconfig"

qps: 100

enableContentionProfiling: false

enableProfiling: true

hardPodAffinitySymmetricWeight: 1

healthzBindAddress: 0.0.0.0:10251

leaderElection:

leaderElect: true

metricsBindAddress: 0.0.0.0:10251

EOF

Note: preferably use 0.0.0.0 for these addresses; otherwise "kubectl get cs" may report the scheduler as "Unhealthy".

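clientConnection.kubeconfig (the equivalent of the --kubeconfig flag): path to the kubeconfig file that kube-scheduler uses to connect to and authenticate with kube-apiserver;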

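leaderElection.leaderElect: true (the equivalent of --leader-elect=true): cluster mode with leader election enabled; the node elected as leader does the work while the other nodes stay blocked;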

Replace the variables in the template file:

[root@k8s-master01 work]# cd /opt/k8s/work

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh

[root@k8s-master01 work]# for (( i=0; i < 3; i++ ))

do

sed -e "s/##NODE_MASTER_NAME##/${NODE_MASTER_NAMES[i]}/" -e "s/##NODE_MASTER_IP##/${NODE_MASTER_IPS[i]}/" kube-scheduler.yaml.template > kube-scheduler-${NODE_MASTER_IPS[i]}.yaml

done

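Note: NODE_MASTER_NAMES and NODE_MASTER_IPS are bash arrays of the same length, holding the master node names and their corresponding IPs. Because kube-scheduler.yaml.template contains no ##NODE_MASTER_NAME## or ##NODE_MASTER_IP## placeholders, the three generated files are identical.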
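For a template that does contain the placeholders, the sed call in the loop works as sketched below. The helper name `render_template` and the sample template lines are hypothetical; unlike the loop above, it adds the `g` flag so repeated placeholders on one line are all replaced:

```shell
# Hypothetical helper: fill in ##NODE_MASTER_NAME## and ##NODE_MASTER_IP##
# in a template read on stdin, writing the result to stdout.
render_template() {
  sed -e "s/##NODE_MASTER_NAME##/$1/g" -e "s/##NODE_MASTER_IP##/$2/g"
}

# Example with a hypothetical two-line template:
#   printf '%s\n' 'name: ##NODE_MASTER_NAME##' 'bind: ##NODE_MASTER_IP##:10251' |
#     render_template k8s-master01 172.16.60.241
```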

[root@k8s-master01 work]# ll kube-scheduler*.yaml

-rw-r--r-- 1 root root 399 Jun 18 14:57 kube-scheduler-172.16.60.241.yaml

-rw-r--r-- 1 root root 399 Jun 18 14:57 kube-scheduler-172.16.60.242.yaml

-rw-r--r-- 1 root root 399 Jun 18 14:57 kube-scheduler-172.16.60.243.yaml

Distribute the kube-scheduler configuration file to all master nodes:

[root@k8s-master01 work]# cd /opt/k8s/work

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh

[root@k8s-master01 work]# for node_master_ip in ${NODE_MASTER_IPS[@]}

do

echo ">>> ${node_master_ip}"

scp kube-scheduler-${node_master_ip}.yaml root@${node_master_ip}:/etc/kubernetes/kube-scheduler.yaml

done

Note: the file is renamed to kube-scheduler.yaml on each node;

4) Create the kube-scheduler systemd unit template file

[root@k8s-master01 work]# cd /opt/k8s/work

[root@k8s-master01 work]# cat > kube-scheduler.service.template <<EOF

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

[Service]

WorkingDirectory=${K8S_DIR}/kube-scheduler

ExecStart=/opt/k8s/bin/kube-scheduler \\

--config=/etc/kubernetes/kube-scheduler.yaml \\

--bind-address=0.0.0.0 \\

--tls-cert-file=/etc/kubernetes/cert/kube-scheduler.pem \\

--tls-private-key-file=/etc/kubernetes/cert/kube-scheduler-key.pem \\

--authentication-kubeconfig=/etc/kubernetes/kube-scheduler.kubeconfig \\

--client-ca-file=/etc/kubernetes/cert/ca.pem \\

--requestheader-allowed-names="" \\

--requestheader-client-ca-file=/etc/kubernetes/cert/ca.pem \\

--requestheader-extra-headers-prefix="X-Remote-Extra-" \\

--requestheader-group-headers=X-Remote-Group \\

--requestheader-username-headers=X-Remote-User \\

--authorization-kubeconfig=/etc/kubernetes/kube-scheduler.kubeconfig \\

--logtostderr=true \\

--v=2

Restart=always

RestartSec=5

StartLimitInterval=0

[Install]

WantedBy=multi-user.target

EOF

Create and distribute a kube-scheduler systemd unit file for each node

Replace the variables in the template to create a systemd unit file for each node:

[root@k8s-master01 work]# cd /opt/k8s/work

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh

[root@k8s-master01 work]# for (( i=0; i < 3; i++ ))

do

sed -e "s/##NODE_MASTER_NAME##/${NODE_MASTER_NAMES[i]}/" -e "s/##NODE_MASTER_IP##/${NODE_MASTER_IPS[i]}/" kube-scheduler.service.template > kube-scheduler-${NODE_MASTER_IPS[i]}.service

done

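Here NODE_MASTER_NAMES and NODE_MASTER_IPS are bash arrays of the same length, holding the master node names and their corresponding IPs. ${K8S_DIR} in this template was already expanded by bash when the heredoc was written and there are no ## placeholders, so the three generated unit files are identical.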

[root@k8s-master01 work]# ll kube-scheduler*.service

-rw-r--r-- 1 root root 981 Jun 18 15:30 kube-scheduler-172.16.60.241.service

-rw-r--r-- 1 root root 981 Jun 18 15:30 kube-scheduler-172.16.60.242.service

-rw-r--r-- 1 root root 981 Jun 18 15:30 kube-scheduler-172.16.60.243.service

Distribute the systemd unit files to all master nodes:

[root@k8s-master01 work]# cd /opt/k8s/work

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh

[root@k8s-master01 work]# for node_master_ip in ${NODE_MASTER_IPS[@]}

do

echo ">>> ${node_master_ip}"

scp kube-scheduler-${node_master_ip}.service root@${node_master_ip}:/etc/systemd/system/kube-scheduler.service

done

5) Start the kube-scheduler service

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh

[root@k8s-master01 work]# for node_master_ip in ${NODE_MASTER_IPS[@]}

do

echo ">>> ${node_master_ip}"

ssh root@${node_master_ip} "mkdir -p ${K8S_DIR}/kube-scheduler"

ssh root@${node_master_ip} "systemctl daemon-reload && systemctl enable kube-scheduler && systemctl restart kube-scheduler"

done

Note: the working directory (the WorkingDirectory in the unit file) must be created before the service is started;

Check the service status

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh

[root@k8s-master01 work]# for node_master_ip in ${NODE_MASTER_IPS[@]}

do

echo ">>> ${node_master_ip}"

ssh root@${node_master_ip} "systemctl status kube-scheduler|grep Active"

done

Expected output:

>>> 172.16.60.241

Active: active (running) since Tue 2019-06-18 15:33:29 CST; 1min 12s ago

>>> 172.16.60.242

Active: active (running) since Tue 2019-06-18 15:33:30 CST; 1min 11s ago

>>> 172.16.60.243

Active: active (running) since Tue 2019-06-18 15:33:30 CST; 1min 11s ago

Make sure the status is active (running); otherwise check the logs to find the cause (journalctl -u kube-scheduler).
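With more nodes, the loop output can be checked mechanically instead of by eye. A sketch that assumes the `>>> <ip>` / `Active:` layout shown above; `check_active` is a hypothetical helper:

```shell
# Hypothetical helper: read the output of the status loop on stdin, print
# every node whose Active line is not "active (running)", and return
# non-zero if any such node was found.
check_active() {
  awk '
    /^>>> / { node = $2; next }
    /Active:/ { if ($0 !~ /active \(running\)/) { print node; bad = 1 } }
    END { exit bad }
  '
}
```

Piping the whole `for ... done` loop into `check_active` prints only the failing nodes. This simple version does not report a node whose ssh call fails, since such a node prints no Active line at all.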

Check the cluster status; all components should now be Healthy:

[root@k8s-master01 work]# kubectl get cs

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-2 Healthy {"health":"true"}

etcd-0 Healthy {"health":"true"}

etcd-1 Healthy {"health":"true"}

6) View the exposed metrics

Note: run the following commands on the kube-scheduler cluster nodes.

kube-scheduler listens on ports 10251 and 10259:

10251: accepts http requests; insecure port, no authentication or authorization required;

10259: accepts https requests; secure port, authentication and authorization required;

Both ports serve /metrics and /healthz.

[root@k8s-master01 work]# netstat -lnpt |grep kube-schedule

tcp6 0 0 :::10251 :::* LISTEN 6075/kube-scheduler

tcp6 0 0 :::10259 :::* LISTEN 6075/kube-scheduler

[root@k8s-master01 work]# lsof -i:10251

COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME

kube-sche 6075 root 3u IPv6 628571 0t0 TCP *:10251 (LISTEN)

[root@k8s-master01 work]# lsof -i:10259

COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME

kube-sche 6075 root 5u IPv6 628574 0t0 TCP *:10259 (LISTEN)
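Since both ports must be open, the netstat check can also be done mechanically. A small sketch that assumes the `netstat -lnpt` column layout shown above (local address in column 4, PID/Program last); `listen_ports` is a hypothetical helper:

```shell
# Hypothetical helper: list the TCP ports a program listens on, reading
# `netstat -lnpt` output on stdin. The last column looks like PID/Program.
listen_ports() {
  awk -v prog="$1" '$NF ~ ("/" prog) { n = split($4, a, ":"); print a[n] }' | sort -n
}

# Example:
#   netstat -lnpt | listen_ports kube-scheduler    # expect 10251 and 10259
```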

Each of the following commands retrieves the kube-scheduler metrics (via the http port 10251 or the https port 10259):

[root@k8s-master01 work]# curl -s http://172.16.60.241:10251/metrics |head

# HELP apiserver_audit_event_total Counter of audit events generated and sent to the audit backend.

# TYPE apiserver_audit_event_total counter

apiserver_audit_event_total 0

# HELP apiserver_audit_requests_rejected_total Counter of apiserver requests rejected due to an error in audit logging backend.

# TYPE apiserver_audit_requests_rejected_total counter

apiserver_audit_requests_rejected_total 0

# HELP apiserver_client_certificate_expiration_seconds Distribution of the remaining lifetime on the certificate used to authenticate a request.

# TYPE apiserver_client_certificate_expiration_seconds histogram

apiserver_client_certificate_expiration_seconds_bucket{le="0"} 0

apiserver_client_certificate_expiration_seconds_bucket{le="1800"} 0

[root@k8s-master01 work]# curl -s http://127.0.0.1:10251/metrics |head

# HELP apiserver_audit_event_total Counter of audit events generated and sent to the audit backend.

# TYPE apiserver_audit_event_total counter

apiserver_audit_event_total 0

# HELP apiserver_audit_requests_rejected_total Counter of apiserver requests rejected due to an error in audit logging backend.

# TYPE apiserver_audit_requests_rejected_total counter

apiserver_audit_requests_rejected_total 0

# HELP apiserver_client_certificate_expiration_seconds Distribution of the remaining lifetime on the certificate used to authenticate a request.

# TYPE apiserver_client_certificate_expiration_seconds histogram

apiserver_client_certificate_expiration_seconds_bucket{le="0"} 0

apiserver_client_certificate_expiration_seconds_bucket{le="1800"} 0

[root@k8s-master01 work]# curl -s --cacert /etc/kubernetes/cert/ca.pem http://172.16.60.241:10251/metrics |head

# HELP apiserver_audit_event_total Counter of audit events generated and sent to the audit backend.

# TYPE apiserver_audit_event_total counter

apiserver_audit_event_total 0

# HELP apiserver_audit_requests_rejected_total Counter of apiserver requests rejected due to an error in audit logging backend.

# TYPE apiserver_audit_requests_rejected_total counter

apiserver_audit_requests_rejected_total 0

# HELP apiserver_client_certificate_expiration_seconds Distribution of the remaining lifetime on the certificate used to authenticate a request.

# TYPE apiserver_client_certificate_expiration_seconds histogram

apiserver_client_certificate_expiration_seconds_bucket{le="0"} 0

apiserver_client_certificate_expiration_seconds_bucket{le="1800"} 0

[root@k8s-master01 work]# curl -s --cacert /etc/kubernetes/cert/ca.pem http://127.0.0.1:10251/metrics |head

# HELP apiserver_audit_event_total Counter of audit events generated and sent to the audit backend.

# TYPE apiserver_audit_event_total counter

apiserver_audit_event_total 0

# HELP apiserver_audit_requests_rejected_total Counter of apiserver requests rejected due to an error in audit logging backend.

# TYPE apiserver_audit_requests_rejected_total counter

apiserver_audit_requests_rejected_total 0

# HELP apiserver_client_certificate_expiration_seconds Distribution of the remaining lifetime on the certificate used to authenticate a request.

# TYPE apiserver_client_certificate_expiration_seconds histogram

apiserver_client_certificate_expiration_seconds_bucket{le="0"} 0

apiserver_client_certificate_expiration_seconds_bucket{le="1800"} 0

[root@k8s-master01 work]# curl -s --cacert /opt/k8s/work/ca.pem --cert /opt/k8s/work/admin.pem --key /opt/k8s/work/admin-key.pem https://172.16.60.241:10259/metrics |head

# HELP apiserver_audit_event_total Counter of audit events generated and sent to the audit backend.

# TYPE apiserver_audit_event_total counter

apiserver_audit_event_total 0

# HELP apiserver_audit_requests_rejected_total Counter of apiserver requests rejected due to an error in audit logging backend.

# TYPE apiserver_audit_requests_rejected_total counter

apiserver_audit_requests_rejected_total 0

# HELP apiserver_client_certificate_expiration_seconds Distribution of the remaining lifetime on the certificate used to authenticate a request.

# TYPE apiserver_client_certificate_expiration_seconds histogram

apiserver_client_certificate_expiration_seconds_bucket{le="0"} 0

apiserver_client_certificate_expiration_seconds_bucket{le="1800"} 0

7) Check the current leader

[root@k8s-master01 work]# kubectl get endpoints kube-scheduler --namespace=kube-system -o yaml

apiVersion: v1

kind: Endpoints

metadata:

annotations:

control-plane.alpha.kubernetes.io/leader: '{"holderIdentity":"k8s-master01_5eac29d7-919b-11e9-b242-005056ac7c81","leaseDurationSeconds":15,"acquireTime":"2019-06-18T07:33:31Z","renewTime":"2019-06-18T07:41:13Z","leaderTransitions":0}'

creationTimestamp: "2019-06-18T07:33:31Z"

name: kube-scheduler

namespace: kube-system

resourceVersion: "12218"

selfLink: /api/v1/namespaces/kube-system/endpoints/kube-scheduler

uid: 5f466875-919b-11e9-90d4-005056ac7c81

The current leader is the k8s-master01 node.

Test the high availability of the kube-scheduler cluster

Pick one or two master nodes, stop their kube-scheduler service, and check whether another node acquires leadership.

For example, stop the kube-scheduler service on k8s-master01 and see how leadership moves:

[root@k8s-master01 work]# systemctl stop kube-scheduler

[root@k8s-master01 work]# ps -ef|grep kube-scheduler

root 6871 2379 0 15:42 pts/2 00:00:00 grep --color=auto kube-scheduler

Check the current leader again; leadership has moved to the k8s-master02 node:

[root@k8s-master01 work]# kubectl get endpoints kube-scheduler --namespace=kube-system -o yaml

apiVersion: v1

kind: Endpoints

metadata:

annotations:

control-plane.alpha.kubernetes.io/leader: '{"holderIdentity":"k8s-master02_5efade79-919b-11e9-bbe2-005056ac42a4","leaseDurationSeconds":15,"acquireTime":"2019-06-18T07:43:03Z","renewTime":"2019-06-18T07:43:12Z","leaderTransitions":1}'

creationTimestamp: "2019-06-18T07:33:31Z"

name: kube-scheduler

namespace: kube-system

resourceVersion: "12363"

selfLink: /api/v1/namespaces/kube-system/endpoints/kube-scheduler

uid: 5f466875-919b-11e9-90d4-005056ac7c81

IX. Deploy the worker nodes

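A Kubernetes worker node runs the docker, kubelet, kube-proxy and flanneld components. All of the following commands are run on k8s-master01, which distributes files and executes commands on the other nodes remotely.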

Install the dependency packages

[root@k8s-master01 ~]# source /opt/k8s/bin/environment.sh

[root@k8s-master01 ~]# for node_node_ip in ${NODE_NODE_IPS[@]}

do

echo ">>> ${node_node_ip}"

ssh root@${node_node_ip} "yum install -y epel-release"

ssh root@${node_node_ip} "yum install -y conntrack ipvsadm ntp ntpdate ipset jq iptables curl sysstat libseccomp && modprobe ip_vs "

done
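The loop ends with `modprobe ip_vs`; whether the module is actually loaded can be read from `lsmod`, whose first column is the module name. A minimal sketch; `has_module` is a hypothetical helper:

```shell
# Hypothetical helper: succeed if the module named in $1 appears in the
# first column of `lsmod` output read on stdin (header line skipped).
has_module() {
  awk -v m="$1" 'NR > 1 && $1 == m { found = 1 } END { exit !found }'
}

# Example, for every node:
#   ssh root@${node_node_ip} lsmod | has_module ip_vs && echo "ip_vs loaded"
```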

9.1 - Deploy the docker component

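docker runs and manages containers; kubelet interacts with it through the Container Runtime Interface (CRI). All of the following operations are run on k8s-master01, which distributes files and executes commands on the other nodes remotely.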

1) Download and distribute the docker binaries

[root@k8s-master01 ~]# cd /opt/k8s/work

[root@k8s-master01 work]# wget https://download.docker.com/linux/static/stable/x86_64/docker-18.09.6.tgz

[root@k8s-master01 work]# tar -xvf docker-18.09.6.tgz

Distribute the binaries to all worker nodes:

[root@k8s-master01 work]# cd /opt/k8s/work

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh

[root@k8s-master01 work]# for node_node_ip in ${NODE_NODE_IPS[@]}

do

echo ">>> ${node_node_ip}"

scp docker/* root@${node_node_ip}:/opt/k8s/bin/

ssh root@${node_node_ip} "chmod +x /opt/k8s/bin/*"

done

2) Create and distribute the systemd unit file

[root@k8s-master01 work]# cd /opt/k8s/work

[root@k8s-master01 work]# cat > docker.service <<"EOF"

[Unit]

Description=Docker Application Container Engine

Documentation=http://docs.docker.io

[Service]

WorkingDirectory=##DOCKER_DIR##

Environment="PATH=/opt/k8s/bin:/bin:/sbin:/usr/bin:/usr/sbin"

EnvironmentFile=-/run/flannel/docker

ExecStart=/opt/k8s/bin/dockerd $DOCKER_NETWORK_OPTIONS

ExecReload=/bin/kill -s HUP $MAINPID

Restart=on-failure

RestartSec=5

LimitNOFILE=infinity

LimitNPROC=infinity

LimitCORE=infinity

Delegate=yes

KillMode=process

[Install]

WantedBy=multi-user.target

EOF

Notes:

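-> The EOF delimiter is wrapped in double quotes, so bash does not substitute variables in the heredoc such as $DOCKER_NETWORK_OPTIONS (these environment variables are substituted by systemd);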

-> At runtime dockerd calls other docker binaries, such as docker-proxy, so the directory containing them must be added to the PATH environment variable;

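-> When flanneld starts, it writes its network configuration to /run/flannel/docker; before dockerd starts, the DOCKER_NETWORK_OPTIONS environment variable is read from that file (EnvironmentFile) and used to set the docker0 bridge subnet;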

-> If several EnvironmentFile options are specified, /run/flannel/docker must come last (so that docker0 uses the bip parameter generated by flanneld);

-> docker must run as the root user;

-> Starting with version 1.13, docker may set the default policy of the iptables FORWARD chain to DROP, which makes pinging Pod IPs on other nodes fail. If this happens, set the policy to ACCEPT manually:

# iptables -P FORWARD ACCEPT

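Also add the following command to /etc/rc.local so that the default policy of the FORWARD chain does not revert to DROP after a reboot (on CentOS 7, /etc/rc.d/rc.local must be executable for it to run):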

# /sbin/iptables -P FORWARD ACCEPT
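To see whether a node is affected before changing anything, the current policy can be read from `iptables -S FORWARD`, whose policy line has the form `-P FORWARD <POLICY>`. A minimal sketch; `forward_policy` is a hypothetical helper:

```shell
# Hypothetical helper: print the default policy of the FORWARD chain from
# `iptables -S FORWARD` output read on stdin.
forward_policy() {
  awk '$1 == "-P" && $2 == "FORWARD" { print $3 }'
}

# Example: only change the policy when it is not already ACCEPT.
#   [ "$(iptables -S FORWARD | forward_policy)" = ACCEPT ] || iptables -P FORWARD ACCEPT
```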

Distribute the systemd unit file to all worker nodes:

[root@k8s-master01 work]# cd /opt/k8s/work

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh

[root@k8s-master01 work]# sed -i -e "s|##DOCKER_DIR##|${DOCKER_DIR}|" docker.service

[root@k8s-master01 work]# for node_node_ip in ${NODE_NODE_IPS[@]}

do

echo ">>> ${node_node_ip}"

scp docker.service root@${node_node_ip}:/etc/systemd/system/

done

3) Create and distribute the docker configuration file

Use registry mirrors hosted in China to speed up image pulls, and raise the download concurrency (dockerd must be restarted for this to take effect):

[root@k8s-master01 work]# cd /opt/k8s/work

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh

[root@k8s-master01 work]# cat > docker-daemon.json <<EOF

{

"registry-mirrors": ["https://docker.mirrors.ustc.edu.cn","https://hub-mirror.c.163.com"],

"insecure-registries": ["docker02:35000"],

"max-concurrent-downloads": 20,

"live-restore": true,

"max-concurrent-uploads": 10,

"debug": true,

"data-root": "${DOCKER_DIR}/data",

"exec-root": "${DOCKER_DIR}/exec",

"log-opts": {

"max-size": "100m",

"max-file": "5"

}

}

EOF
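Unlike docker.service above, this heredoc uses an unquoted EOF, so bash expands ${DOCKER_DIR} when the file is written. A small demonstration of the difference; the DOCKER_DIR value is an example matching the Docker Root Dir shown later, normally set by environment.sh:

```shell
# The same heredoc body written with an unquoted and a quoted delimiter.
DOCKER_DIR=/data/k8s/docker   # example value

unquoted=$(cat <<EOF
"data-root": "${DOCKER_DIR}/data"
EOF
)

quoted=$(cat <<"EOF"
"data-root": "${DOCKER_DIR}/data"
EOF
)

echo "$unquoted"   # variable expanded by bash
echo "$quoted"     # text kept literally, left for a later tool to fill in
```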

Distribute the docker configuration file to all worker nodes:

[root@k8s-master01 work]# cd /opt/k8s/work

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh

[root@k8s-master01 work]# for node_node_ip in ${NODE_NODE_IPS[@]}

do

echo ">>> ${node_node_ip}"

ssh root@${node_node_ip} "mkdir -p /etc/docker/ ${DOCKER_DIR}/{data,exec}"

scp docker-daemon.json root@${node_node_ip}:/etc/docker/daemon.json

done

4) Start the docker service

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh

[root@k8s-master01 work]# for node_node_ip in ${NODE_NODE_IPS[@]}

do

echo ">>> ${node_node_ip}"

ssh root@${node_node_ip} "systemctl daemon-reload && systemctl enable docker && systemctl restart docker"

done

Check the service status

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh

[root@k8s-master01 work]# for node_node_ip in ${NODE_NODE_IPS[@]}

do

echo ">>> ${node_node_ip}"

ssh root@${node_node_ip} "systemctl status docker|grep Active"

done

Expected output:

>>> 172.16.60.244

Active: active (running) since Tue 2019-06-18 16:28:32 CST; 42s ago

>>> 172.16.60.245

Active: active (running) since Tue 2019-06-18 16:28:31 CST; 42s ago

>>> 172.16.60.246

Active: active (running) since Tue 2019-06-18 16:28:32 CST; 42s ago

Make sure the status is active (running); otherwise check the logs to find the cause (journalctl -u docker).

5) Check the docker0 bridge

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh

[root@k8s-master01 work]# for node_node_ip in ${NODE_NODE_IPS[@]}

do

echo ">>> ${node_node_ip}"

ssh root@${node_node_ip} "/usr/sbin/ip addr show flannel.1 && /usr/sbin/ip addr show docker0"

done

Expected output:

>>> 172.16.60.244

3: flannel.1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue state UNKNOWN group default

link/ether c6:c2:d1:5a:9a:8a brd ff:ff:ff:ff:ff:ff

inet 172.30.88.0/32 scope global flannel.1

valid_lft forever preferred_lft forever

4: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default

link/ether 02:42:27:3c:5e:5f brd ff:ff:ff:ff:ff:ff

inet 172.30.88.1/21 brd 172.30.95.255 scope global docker0

valid_lft forever preferred_lft forever

>>> 172.16.60.245

3: flannel.1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue state UNKNOWN group default

link/ether 02:36:1d:ab:c4:86 brd ff:ff:ff:ff:ff:ff

inet 172.30.56.0/32 scope global flannel.1

valid_lft forever preferred_lft forever

4: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default

link/ether 02:42:6f:36:7d:fb brd ff:ff:ff:ff:ff:ff

inet 172.30.56.1/21 brd 172.30.63.255 scope global docker0

valid_lft forever preferred_lft forever

>>> 172.16.60.246

3: flannel.1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue state UNKNOWN group default

link/ether 4e:73:d1:0e:27:c0 brd ff:ff:ff:ff:ff:ff

inet 172.30.72.0/32 scope global flannel.1

valid_lft forever preferred_lft forever

4: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default

link/ether 02:42:21:39:f4:9e brd ff:ff:ff:ff:ff:ff

inet 172.30.72.1/21 brd 172.30.79.255 scope global docker0

valid_lft forever preferred_lft forever

Make sure that on every worker node the IP of the flannel.1 interface lies in the same subnet as the docker0 bridge (e.g. 172.30.88.0/32 above lies within 172.30.88.1/21).
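The subnet check can be done with plain shell arithmetic: mask both addresses with the prefix length and compare. A minimal sketch; the helper names `ip_to_int` and `ip_in_cidr` are hypothetical, and host bits in the CIDR address (the .1 in 172.30.88.1/21) are ignored, as they are for the docker0 address:

```shell
# Hypothetical helper: convert a dotted IPv4 address to an integer.
ip_to_int() (
  IFS=.
  set -- $1
  echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
)

# Hypothetical helper: succeed if IPv4 address $1 lies inside CIDR $2.
ip_in_cidr() (
  ip=$(ip_to_int "$1")
  net=$(ip_to_int "${2%/*}")
  bits=${2#*/}
  mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  [ $(( ip & mask )) -eq $(( net & mask )) ]
)

# Example with the values from k8s-node01 above:
#   ip_in_cidr 172.30.88.0 172.30.88.1/21 && echo "flannel.1 and docker0 match"
```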

Check docker's status on any worker node:

[root@k8s-node01 ~]# ps -elfH|grep docker

0 S root 21573 18744 0 80 0 - 28180 pipe_w 16:32 pts/2 00:00:00 grep --color=auto docker

4 S root 21147 1 0 80 0 - 173769 futex_ 16:28 ? 00:00:00 /opt/k8s/bin/dockerd --bip=172.30.88.1/21 --ip-masq=false --mtu=1450

4 S root 21175 21147 0 80 0 - 120415 futex_ 16:28 ? 00:00:00 containerd --config /data/k8s/docker/exec/containerd/containerd.toml --log-level debug

[root@k8s-node01 ~]# docker info

Containers: 0

Running: 0

Paused: 0

Stopped: 0

Images: 0

Server Version: 18.09.6

Storage Driver: overlay2

Backing Filesystem: xfs

Supports d_type: true

Native Overlay Diff: true

Logging Driver: json-file

Cgroup Driver: cgroupfs

Plugins:

Volume: local

Network: bridge host macvlan null overlay

Log: awslogs fluentd gcplogs gelf journald json-file local logentries splunk syslog

Swarm: inactive

Runtimes: runc

Default Runtime: runc

Init Binary: docker-init

containerd version: bb71b10fd8f58240ca47fbb579b9d1028eea7c84

runc version: 2b18fe1d885ee5083ef9f0838fee39b62d653e30

init version: fec3683

Security Options:

seccomp

Profile: default

Kernel Version: 4.4.181-1.el7.elrepo.x86_64

Operating System: CentOS Linux 7 (Core)

OSType: linux

Architecture: x86_64

CPUs: 4

Total Memory: 3.859GiB

Name: k8s-node01

ID: R24D:75E5:2OWS:SNU5:NPSE:SBKH:WKLZ:2ZH7:6ITY:3BE2:YHRG:6WRU

Docker Root Dir: /data/k8s/docker/data

Debug Mode (client): false

Debug Mode (server): true

File Descriptors: 22

Goroutines: 43

System Time: 2019-06-18T16:32:44.260301822+08:00

EventsListeners: 0

Registry: https://index.docker.io/v1/

Labels:

Experimental: false

Insecure Registries:

docker02:35000

127.0.0.0/8

Registry Mirrors:

https://docker.mirrors.ustc.edu.cn/

https://hub-mirror.c.163.com/

Live Restore Enabled: true

Product License: Community Engine

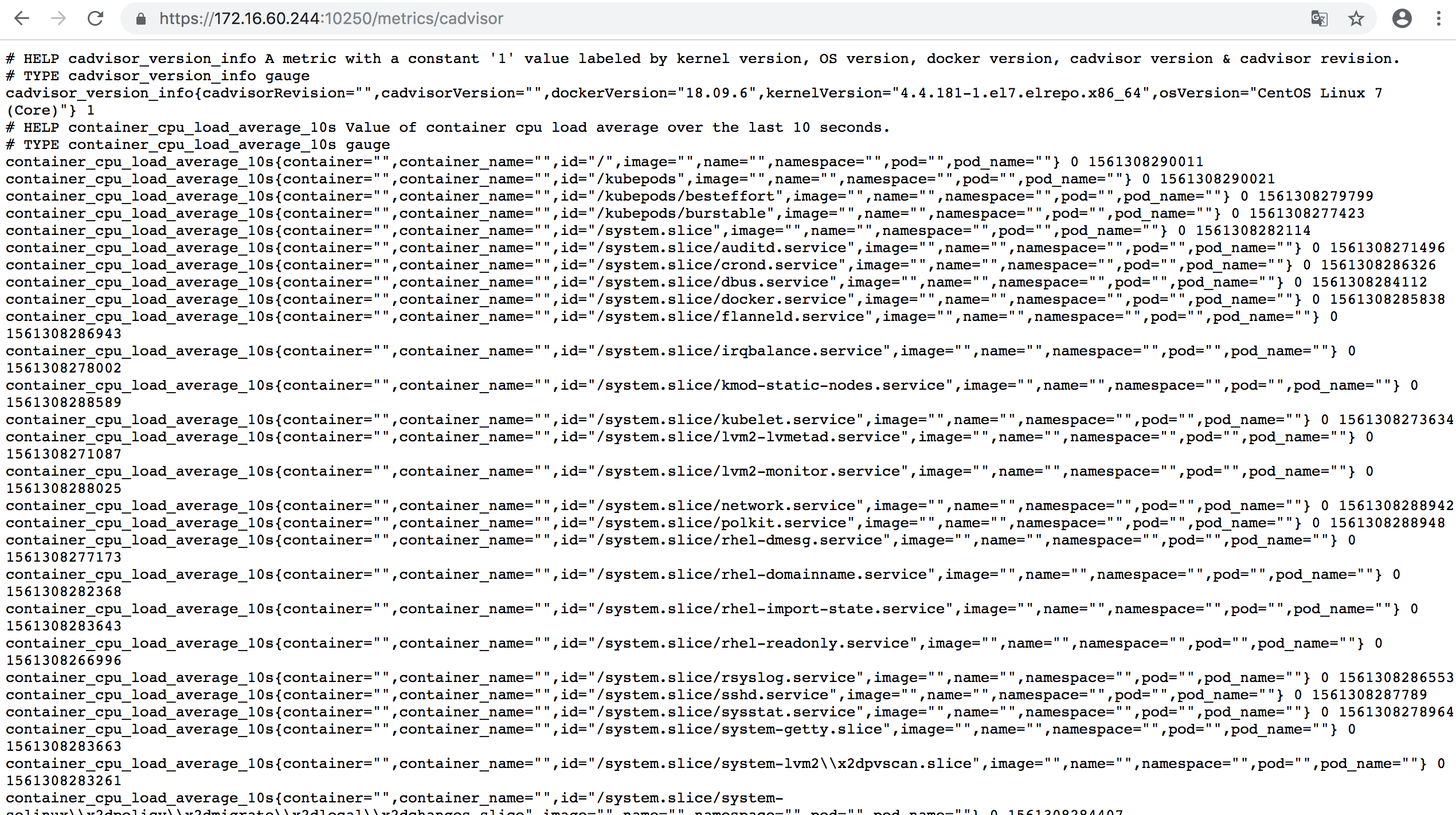

9.2 - Deploy the kubelet component

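kubelet runs on every worker node. It receives requests from kube-apiserver, manages Pod containers, and executes interactive commands such as exec, run and logs. When kubelet starts, it automatically registers the node with kube-apiserver, and its built-in cadvisor collects and monitors the node's resource usage. For security, this deployment disables kubelet's insecure http port; requests (for example from the apiserver or heapster) are authenticated and authorized, and unauthorized access is rejected. All of the following commands are run on k8s-master01, which distributes files and executes commands on the other nodes remotely.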

1) Download and distribute the kubelet binary

[root@k8s-master01 ~]# cd /opt/k8s/work

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh

[root@k8s-master01 work]# for node_node_ip in ${NODE_NODE_IPS[@]}

do

echo ">>> ${node_node_ip}"

scp kubernetes/server/bin/kubelet root@${node_node_ip}:/opt/k8s/bin/

ssh root@${node_node_ip} "chmod +x /opt/k8s/bin/*"

done

2) Create the kubelet bootstrap kubeconfig files

[root@k8s-master01 work]# cd /opt/k8s/work

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh

[root@k8s-master01 work]# for node_node_name in ${NODE_NODE_NAMES[@]}

do

echo ">>> ${node_node_name}"

# Create a bootstrap token

export BOOTSTRAP_TOKEN=$(kubeadm token create \

--description kubelet-bootstrap-token \

--groups system:bootstrappers:${node_node_name} \

--kubeconfig ~/.kube/config)

# Set the cluster parameters

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/cert/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=kubelet-bootstrap-${node_node_name}.kubeconfig

# Set the client authentication parameters

kubectl config set-credentials kubelet-bootstrap \

--token=${BOOTSTRAP_TOKEN} \

--kubeconfig=kubelet-bootstrap-${node_node_name}.kubeconfig

# Set the context parameters

kubectl config set-context default \

--cluster=kubernetes \

--user=kubelet-bootstrap \

--kubeconfig=kubelet-bootstrap-${node_node_name}.kubeconfig

# Set the default context

kubectl config use-context default --kubeconfig=kubelet-bootstrap-${node_node_name}.kubeconfig

done

Note: what is written into the kubeconfig is the token; after bootstrapping finishes, kube-controller-manager creates the client and server certificates for kubelet;

List the tokens kubeadm created for each node:

[root@k8s-master01 work]# kubeadm token list --kubeconfig ~/.kube/config

TOKEN                     TTL   EXPIRES                     USAGES                   DESCRIPTION               EXTRA GROUPS
0zqowl.aye8f834jtq9vm9t   23h   2019-06-19T16:50:43+08:00   authentication,signing   kubelet-bootstrap-token   system:bootstrappers:k8s-node03
b46tq2.muab337gxwl0dsqn   23h   2019-06-19T16:50:43+08:00   authentication,signing   kubelet-bootstrap-token   system:bootstrappers:k8s-node02
heh41x.foguhh1qa5crpzlq   23h   2019-06-19T16:50:42+08:00   authentication,signing   kubelet-bootstrap-token   system:bootstrappers:k8s-node01
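Since each token was created with --groups system:bootstrappers:<node name>, the EXTRA GROUPS column tells which node a token belongs to. A minimal sketch (token_ids_by_node is a hypothetical helper) that turns the listing above into node / token-ID pairs, assuming the whitespace-separated layout shown, with the group as the last column:

```shell
#!/usr/bin/env bash
# Read "kubeadm token list" output on stdin and print "<node> <token-id>" per row.
# Assumes the first line is the header and the last column is system:bootstrappers:<node>.
token_ids_by_node() {
  awk 'NR > 1 && NF > 0 {
    split($1, tok, ".")          # TOKEN column is <token-id>.<token-secret>
    n = split($NF, grp, ":")     # last column is system:bootstrappers:<node>
    print grp[n], tok[1]
  }'
}

# Usage:
#   kubeadm token list --kubeconfig ~/.kube/config | token_ids_by_node | sort
```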

Notes:

-> A token is valid for 1 day. After it expires it can no longer be used to bootstrap kubelet, and it is cleaned up by kube-controller-manager's tokencleaner;

-> When kube-apiserver receives kubelet's bootstrap token, it sets the request's user to system:bootstrap:<Token ID> and its group to system:bootstrappers; a ClusterRoleBinding will be created for this group later;
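Both the user name and the Secret name derive from the token ID, i.e. the part before the dot. A bootstrap token has the form <token-id>.<token-secret> (6 and 16 characters from [a-z0-9]) and is stored in the kube-system Secret bootstrap-token-<token-id>, which matches the Secret listing that follows. A minimal sketch (bootstrap_identity is a hypothetical helper) that checks the format and prints both names:

```shell
#!/usr/bin/env bash
# Given "<token-id>.<token-secret>", print the user name kube-apiserver assigns
# and the name of the backing Secret in kube-system.
# Returns non-zero if the token does not match [a-z0-9]{6}.[a-z0-9]{16}.
bootstrap_identity() {
  local token="$1"
  local re='^([a-z0-9]{6})\.([a-z0-9]{16})$'
  if ! [[ $token =~ $re ]]; then
    echo "invalid bootstrap token: ${token}" >&2
    return 1
  fi
  local token_id="${BASH_REMATCH[1]}"
  echo "user=system:bootstrap:${token_id}"
  echo "secret=bootstrap-token-${token_id}"
}

bootstrap_identity heh41x.foguhh1qa5crpzlq
# prints:
# user=system:bootstrap:heh41x
# secret=bootstrap-token-heh41x
```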

View the Secret associated with each token:

[root@k8s-master01 work]# kubectl get secrets -n kube-system|grep bootstrap-token

bootstrap-token-0zqowl bootstrap.kubernetes.io/token 7 88s

bootstrap-token-b46tq2 bootstrap.kubernetes.io/token 7 88s

bootstrap-token-heh41x bootstrap.kubernetes.io/token 7 89s

3) Distribute the bootstrap kubeconfig files to all node machines

[root@k8s-master01 work]# cd /opt/k8s/work

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh

[root@k8s-master01 work]# for node_node_name in ${NODE_NODE_NAMES[@]}

do

echo ">>> ${node_node_name}"

scp kubelet-bootstrap-${node_node_name}.kubeconfig root@${node_node_name}:/etc/kubernetes/kubelet-bootstrap.kubeconfig

done
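A missing per-node file only shows up as an scp error in the middle of the loop output, while the remaining nodes are still processed. A hypothetical pre-flight helper, run from /opt/k8s/work, that lists the node names whose kubelet-bootstrap-<node>.kubeconfig is missing or empty before anything is copied:

```shell
#!/usr/bin/env bash
# Hypothetical pre-flight check: print each node name whose bootstrap kubeconfig
# is missing or empty in the current directory; return 1 if any were found.
missing_bootstrap_kubeconfigs() {
  local node_node_name missing=0
  for node_node_name in "$@"; do
    if [ ! -s "kubelet-bootstrap-${node_node_name}.kubeconfig" ]; then
      echo "${node_node_name}"
      missing=1
    fi
  done
  return "$missing"
}

# Usage:
#   missing_bootstrap_kubeconfigs "${NODE_NODE_NAMES[@]}" || echo "regenerate these files first"
```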

4) Create and distribute the kubelet configuration file

Starting with v1.10, some kubelet parameters have to be set in a configuration file; for those flags, kubelet --help reports:

DEPRECATED: This parameter should be set via the config file specified by the Kubelet's --config flag

Create the kubelet configuration file template:

[root@k8s-master01 work]# cd /opt/k8s/work

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh

[root@k8s-master01 work]# cat > kubelet-config.yaml.template <<EOF

kind: KubeletConfiguration
apiVersion: kubelet.config.k8s.io/v1beta1
address: "##NODE_NODE_IP##"
staticPodPath: ""
syncFrequency: 1m
fileCheckFrequency: 20s
httpCheckFrequency: 20s
staticPodURL: ""
port: 10250
readOnlyPort: 0
rotateCertificates: true
serverTLSBootstrap: true
authentication:
  anonymous:
    enabled: false
  webhook:
    enabled: true
  x509:
    clientCAFile: "/etc/kubernetes/cert/ca.pem"
authorization:
  mode: Webhook
registryPullQPS: 0
registryBurst: 20
eventRecordQPS: 0
eventBurst: 20
enableDebuggingHandlers: true
enableContentionProfiling: true
healthzPort: 10248
healthzBindAddress: "##NODE_NODE_IP##"
clusterDomain: "${CLUSTER_DNS_DOMAIN}"
clusterDNS:
  - "${CLUSTER_DNS_SVC_IP}"
nodeStatusUpdateFrequency: 10s
nodeStatusReportFrequency: 1m
imageMinimumGCAge: 2m
imageGCHighThresholdPercent: 85
imageGCLowThresholdPercent: 80
volumeStatsAggPeriod: 1m
kubeletCgroups: ""
systemCgroups: ""
cgroupRoot: ""
cgroupsPerQOS: true
cgroupDriver: cgroupfs
runtimeRequestTimeout: 10m
hairpinMode: promiscuous-bridge
maxPods: 220
podCIDR: "${CLUSTER_CIDR}"
podPidsLimit: -1
resolvConf: /etc/resolv.conf
maxOpenFiles: 1000000
kubeAPIQPS: 1000
kubeAPIBurst: 2000
serializeImagePulls: false
evictionHard:
  memory.available: "100Mi"
  nodefs.available: "10%"
  nodefs.inodesFree: "5%"
  imagefs.available: "15%"
evictionSoft: {}
enableControllerAttachDetach: true
failSwapOn: true
containerLogMaxSize: 20Mi
containerLogMaxFiles: 10
systemReserved: {}
kubeReserved: {}
systemReservedCgroup: ""
kubeReservedCgroup: ""
enforceNodeAllocatable: ["pods"]

EOF

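Notes:

-> address: the address on which the kubelet secure port (HTTPS, 10250) listens. It must not be 127.0.0.1, otherwise kube-apiserver, heapster, etc. cannot call the kubelet API;

-> readOnlyPort=0: disables the read-only port (default 10255); equivalent to leaving it unspecified;

-> authentication.anonymous.enabled: set to false so that anonymous access to port 10250 is not allowed;

-> authentication.x509.clientCAFile: the CA certificate that signs client certificates; enables HTTPS client-certificate authentication;

-> authentication.webhook.enabled=true: enables HTTPS bearer token authentication;

-> Requests (from kube-apiserver or any other client) that pass neither x509 certificate nor webhook authentication are rejected with Unauthorized;

-> authorization.mode=Webhook: kubelet uses the SubjectAccessReview API to ask kube-apiserver whether a given user or group is permitted to operate on a resource (RBAC);

-> featureGates.RotateKubeletClientCertificate, featureGates.RotateKubeletServerCertificate: rotate certificates automatically; the certificate validity period is determined by kube-controller-manager's --experimental-cluster-signing-duration flag. (These gates are not set in the template above; there, client and server certificate rotation is requested through rotateCertificates and serverTLSBootstrap.)

-> kubelet must run as the root user;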
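Several of these notes can be checked mechanically on each rendered file before it is copied. A minimal sketch (check_kubelet_config is a hypothetical helper) that uses line-oriented grep rather than a YAML parser, so it assumes the one-key-per-line layout of the template; it also assumes the only "enabled: false" line is the anonymous-access one, which holds for this template.

```shell
#!/usr/bin/env bash
# Line-oriented sanity check of a rendered kubelet config (not a YAML parser).
# Returns 1 if any check fails; reasons are printed on stderr.
check_kubelet_config() {
  local f="$1" rc=0
  # address must be the node IP: neither loopback nor the unreplaced placeholder
  if grep -Eq '^address: "(127\.0\.0\.1|##NODE_NODE_IP##)"' "$f"; then
    echo "$f: address must be the node IP" >&2; rc=1
  fi
  # read-only port (10255) disabled
  grep -Eq '^readOnlyPort: 0$' "$f" || { echo "$f: readOnlyPort should be 0" >&2; rc=1; }
  # anonymous access disabled (the only "enabled: false" key in this template)
  grep -Eq '^[[:space:]]*enabled: false$' "$f" || { echo "$f: anonymous access should be disabled" >&2; rc=1; }
  # authorization delegated to kube-apiserver
  grep -Eq '^[[:space:]]*mode: Webhook$' "$f" || { echo "$f: authorization mode should be Webhook" >&2; rc=1; }
  return "$rc"
}

# Usage, once the per-node files have been generated by the next loop:
#   for f in kubelet-config-*.yaml.template; do check_kubelet_config "$f"; done
```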

Create and distribute the kubelet configuration file for each node:

[root@k8s-master01 work]# cd /opt/k8s/work

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh

[root@k8s-master01 work]# for node_node_ip in ${NODE_NODE_IPS[@]}

do

echo ">>> ${node_node_ip}"

sed -e "s/##NODE_NODE_IP##/${node_node_ip}/" kubelet-config.yaml.template > kubelet-config-${node_node_ip}.yaml.template

scp kubelet-config-${node_node_ip}.yaml.template root@${node_node_ip}:/etc/kubernetes/kubelet-config.yaml

done
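The sed call in this loop silently leaves a placeholder untouched if its name is mistyped, and the unrendered file is still copied. A minimal sketch of a stricter variant, render_template (a hypothetical helper), which substitutes one ##...## placeholder and fails if any ##UPPER_CASE## placeholder remains; it assumes the replacement value contains no '|' or '&' characters, which are special to sed.

```shell
#!/usr/bin/env bash
# Hypothetical helper: replace one placeholder in a template and print the result,
# failing if any ##UPPER_CASE## placeholder is still present afterwards.
render_template() {
  local template="$1" placeholder="$2" value="$3" out
  # '|' is used as the sed delimiter so values containing '/' are safe
  out="$(sed -e "s|${placeholder}|${value}|g" "$template")" || return 1
  if printf '%s\n' "$out" | grep -q '##[A-Z_]*##'; then
    echo "unreplaced placeholder left in ${template}" >&2
    return 1
  fi
  printf '%s\n' "$out"
}

# Usage, as a drop-in for the sed line in the loop above:
#   render_template kubelet-config.yaml.template '##NODE_NODE_IP##' "${node_node_ip}" \
#     > "kubelet-config-${node_node_ip}.yaml.template" || echo "render failed for ${node_node_ip}"
```

Note that the shell redirect still creates the output file on failure, so the exit status, not the file's existence, is what should gate the scp step.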

5) Create and distribute the kubelet systemd unit file

Create the kubelet systemd unit file template:

[root@k8s-master01 work]# cd /opt/k8s/work

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh

[root@k8s-master01 work]# cat > kubelet.service.template <<EOF

[Unit]

Description=Kubernetes Kubelet

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=docker.service

Requires=docker.service

[Service]

WorkingDirectory=${K8S_DIR}/kubelet

ExecStart=/opt/k8s/bin/kubelet \\

--allow-privileged=true \\

--bootstrap-kubeconfig=/etc/kubernetes/kubelet-bootstrap.kubeconfig \\

--cert-dir=/etc/kubernetes/cert \\

--cni-conf-dir=/etc/cni/net.d \\

--container-runtime=docker \\

--container-runtime-endpoint=unix:///var/run/dockershim.sock \\

--root-dir=${K8S_DIR}/kubelet \\

--kubeconfig=/etc/kubernetes/kubelet.kubeconfig \\

--config=/etc/kubernetes/kubelet-config.yaml \\

--hostname-override=##NODE_NODE_NAME## \\

--pod-infra-container-image=registry.cn-beijing.aliyuncs.com/k8s_images/pause-amd64:3.1 \\

--image-pull-progress-deadline=15m \\

--volume-plugin-dir=${K8S_DIR}/kubelet/kubelet-plugins/volume/exec/ \\

--logtostderr=true \\

--v=2

Restart=always

RestartSec=5

StartLimitInterval=0

[Install]

WantedBy=multi-user.target

EOF

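Notes:

-> If --hostname-override is set, kube-proxy must set the same option as well; otherwise the Node cannot be found;

-> --bootstrap-kubeconfig: points to the bootstrap kubeconfig file. kubelet uses the user name and token in this file to send a TLS Bootstrapping request to kube-apiserver;

-> After Kubernetes approves kubelet's CSR, kubelet creates the certificate and private key files in the --cert-dir directory and then writes the --kubeconfig file;

-> --pod-infra-container-image: do not use Red Hat's pod-infrastructure:latest image, because it cannot reap zombie processes in the container;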

Create and distribute the kubelet systemd unit file for each node:

[root@k8s-master01 work]# cd /opt/k8s/work

[root@k8s-master01 work]# source /opt/k8s/bin/environment.sh

[root@k8s-master01 work]# for node_node_name in ${NODE_NODE_NAMES[@]}

do

echo ">>> ${node_node_name}"

sed -e "s/##NODE_NODE_NAME##/${node_node_name}/" kubelet.service.template > kubelet-${node_node_name}.service

scp kubelet-${node_node_name}.service root@${node_node_name}:/etc/systemd/system/kubelet.service

done
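Per the note on --hostname-override, the value in each unit must match what kube-proxy will use later, so it is worth confirming what each rendered unit actually contains. A minimal sketch (unit_flag is a hypothetical helper) that reads a --name=value flag back from a unit file, assuming one flag per backslash-continued line as in the template:

```shell
#!/usr/bin/env bash
# Print the value of a "--<name>=<value>" flag from a systemd unit file whose
# ExecStart is split over backslash-continued lines; prints nothing if absent.
unit_flag() {
  local unit="$1" name="$2"
  awk -v want="--${name}=" '
    {
      for (i = 1; i <= NF; i++) {
        # fields are whitespace-separated, so a trailing backslash is its own field
        if (index($i, want) == 1) { print substr($i, length(want) + 1); exit }
      }
    }' "$unit"
}

# Usage, from /opt/k8s/work:
#   for node_node_name in "${NODE_NODE_NAMES[@]}"; do
#     [ "$(unit_flag "kubelet-${node_node_name}.service" hostname-override)" = "${node_node_name}" ] \
#       || echo "hostname-override mismatch in kubelet-${node_node_name}.service"
#   done
```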

6) Bootstrap Token Auth and granting permissions

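-> When kubelet starts, it checks whether the file specified by --kubeconfig exists. If it does not, kubelet uses the kubeconfig file specified by --bootstrap-kubeconfig to send a certificate signing request (CSR) to kube-apiserver.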

-> On receiving the CSR, kube-apiserver authenticates the token in it. Once authentication succeeds, it sets the request's user to system:bootstrap:<Token ID> and its group to system:bootstrappers. This process is called Bootstrap Token Auth.

-> By default, this user and group have no permission to create CSRs, so kubelet fails to start and logs errors like the following:

# journalctl -u kubelet -a |grep -A 2 'certificatesigningrequests'

May 9 22:48:41 k8s-master01 kubelet[128468]: I0526 22:48:41.798230 128468 certificate_manager.go:366] Rotating certificates

May 9 22:48:41 k8s-master01 kubelet[128468]: E0526 22:48:41.801997 128468 certificate_manager.go:385] Failed while requesting a signed certificate from the master: cannot cre