The Requests Library


I. Introduction

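Python ships with a built-in module, urllib, for accessing network resources, but it lacks a number of practical features and is cumbersome to use. Requests is a third-party library that fills this gap. It supports HTTP keep-alive and connection pooling, cookie-based session persistence, file uploads, automatic detection of the response encoding, internationalized URLs, and automatic encoding of POST data. In short, Requests is considerably more capable and convenient than urllib.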

Requests can imitate the requests a browser sends, and it is more convenient than urllib because it is essentially a wrapper around urllib3.

1. Installation

Install with: pip3 install requests

2. Request methods

    >>> import requests
    >>> r = requests.get('https://api.github.com/events')
    >>> r = requests.post('http://httpbin.org/post', data = {'key':'value'})
    >>> r = requests.put('http://httpbin.org/put', data = {'key':'value'})
    >>> r = requests.delete('http://httpbin.org/delete')
    >>> r = requests.head('http://httpbin.org/get')
    >>> r = requests.options('http://httpbin.org/get')

Before studying Requests in earnest, it helps to be familiar with the HTTP protocol: https://www.cnblogs.com/kermitjam/articles/9692568.html

Official documentation: http://docs.python-requests.org/en/master/

II. GET Requests

1. Basic request

    import requests

    response = requests.get('https://www.cnblogs.com/kermitjam/')
    print(response.text)

2. GET with query parameters -> params

    from urllib.parse import urlencode
    import requests

    # The q parameter carries the Chinese text 墨菲定律 (Murphy's Law), already percent-encoded
    response1 = requests.get('https://list.tmall.com/search_product.htm?q=%C4%AB%B7%C6%B6%A8%C2%C9')
    print(response1.text)

    # Non-ASCII text must be percent-encoded in a URL, which makes the query unreadable,
    # so build the query string from the readable text with urlencode instead
    url = 'https://list.tmall.com/search_product.htm?' + urlencode({'q': '墨菲定律'})
    response2 = requests.get(url)
    print(response2.text)

    # get() also accepts a params argument, which URL-encodes the dict for us
    response3 = requests.get('https://list.tmall.com/search_product.htm?', params={"q": "墨菲定律"})
    print(response3.text)
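To see what the percent-encoding step does, the following sketch uses only the standard library. It assumes the escapes in the hard-coded URL above are GBK bytes, whereas `urlencode` (like the `params` argument) encodes text as UTF-8 by default; the variable names are illustrative.

```python
from urllib.parse import parse_qs, unquote, urlencode

keyword = '墨菲定律'

# By default urlencode() percent-encodes the UTF-8 bytes of the text.
utf8_query = urlencode({'q': keyword})

# Passing encoding='gbk' percent-encodes the GBK bytes instead.
gbk_query = urlencode({'q': keyword}, encoding='gbk')

print(utf8_query)
print(gbk_query)

# Decoding only round-trips when both sides agree on the encoding.
print(parse_qs(utf8_query)['q'][0])
print(unquote(gbk_query.split('=', 1)[1], encoding='gbk'))
```

The two query strings differ even though they carry the same keyword, which is why a server expecting one encoding may misread a query built with the other.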

3. GET with custom headers -> headers

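We usually need to send request headers along with a request. Headers are the key to making a crawler look like a browser. The most commonly used ones are: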

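    Host        # Address of the target site
    Referer     # Large sites often use this to check where a request came from
    User-Agent  # Identifies the client; a browser's value makes the crawler look like a browser
    Cookie      # Cookies travel in the request headers, but Requests has a separate cookies parameter for them, so there is no need to put them in headers={}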

    # Add headers (sites inspect the request headers and may refuse requests without them,
    # for example https://www.zhihu.com/explore)
    import requests

    response = requests.get('https://www.zhihu.com/explore')
    print(response.status_code)  # 400

    # Custom headers
    headers = {
        'User-Agent': 'Mozilla/5.0 (Linux; Android 6.0; Nexus 5 Build/MRA58N) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/46.0.2490.76 Mobile Safari/537.36',
    }

    response = requests.get('https://www.zhihu.com/explore', headers=headers)
    print(response.status_code)  # 200

4. GET with cookies -> cookies

    import uuid
    import requests

    url = 'http://httpbin.org/cookies'
    cookies = dict(id=str(uuid.uuid4()))

    res = requests.get(url, cookies=cookies)
    print(res.json())

5. Using a GET request with cookies to skip the GitHub login

    '''
    # Skipping the GitHub login with a GET request
    1. Log in to GitHub in a browser and copy the page's cookies
    2. Send a GET request with those cookies to the settings/emails page and check whether the phone number appears in the page
    '''
    import requests

    url = 'https://github.com/settings/emails'

    # Cookies copied after logging in
    COOKIES = {
        'Cookie': 'has_recent_activity=1; _device_id=7461de19eff07d6573a56a066339a960; _octo=GH1.1.319526069.1558361584; user_session=QRx3XyXhwr3AHjuII-Wxb8_ierjBAwbevSrHm4Rv6ZwXEUh-; __Host-user_session_same_site=QRx3XyXhwr3AHjuII-Wxb8_ierjBAwbevSrHm4Rv6ZwXEUh-; logged_in=yes; dotcom_user=TankJam; _ga=GA1.2.962318257.1558361589; _gat=1; tz=Asia%2FShanghai; _gh_sess=NFB0ZHIxckxVZjBkaXh1YlFwenRkOVNXQnczR0FnYy9VWUN6ZEdGOE4rSzR0U2FWZlRjM0JRdURTUWV5NVF0cmdIZVBiaUlUYTBSWGxnNklERFRuQzhaOFVBVW1SZ2ZjOHMzQWwxWHdKb1F3elh4M3JCbEkyVGZiMGNZVnlmeXVud1V3NFdGbVZFR2EvL0JUT1FQQzdaSTM5V3Uzc0F4WWxRWkxURGZDOWxSNEd1WXBScEREOHJvL0VGeFRCMlRUZDZ5bFZLemRvRkhmRUI4b0MyRWtVYmxDcm03VmlQcGJoZVkyMnRvMXJEVUI4VnhuUTVWREFXVXZORWcvYnJpWVl3a2w0MnJhbGFmd2JKY2pWWkJZYXc9PS0tOVBOVlNQTkFBZkdtNTBFaGgrc2pRZz09--e89729402a6138aeeddc8a191997ec1199eeaa5c'
    }

    HEADERS = {
        'User-Agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_14_3) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/74.0.3729.157 Safari/537.36'
    }

    response = requests.get(url, headers=HEADERS, cookies=COOKIES)
    print('15622792660' in response.text)  # True
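A cookie string copied from the browser is one long `name=value; name=value` header. As a minimal sketch, it can be split into a per-cookie dict before being handed to the `cookies` parameter. The helper name `cookie_header_to_dict` is hypothetical, and the short sample string reuses a few non-secret pairs from the example above.

```python
def cookie_header_to_dict(header_value):
    """Split 'a=1; b=2' into {'a': '1', 'b': '2'}."""
    cookies = {}
    for pair in header_value.split(';'):
        pair = pair.strip()
        if not pair:
            continue
        # Split on the first '=' only, because values may contain '='.
        name, _, value = pair.partition('=')
        cookies[name] = value
    return cookies


raw = 'logged_in=yes; dotcom_user=TankJam; tz=Asia%2FShanghai'
COOKIES = cookie_header_to_dict(raw)
print(COOKIES)
```

The resulting dict has one entry per cookie, which is the shape `requests.get(url, cookies=COOKIES)` expects.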

III. POST Requests

1. Overview

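    '''
    GET requests (GET is the default HTTP method):
        * Normally carry no request body
        * The data travels in the URL, so its size is limited by the URL length that
          browsers and servers accept (HTTP itself does not define a 1 KB limit)
        * The data is visible in the browser's address bar

    Common actions that send a GET request:
        1. Entering a URL directly in the browser's address bar always sends a GET
        2. Clicking a hyperlink on a page also sends a GET
        3. Submitting a form uses GET by default, but the form can be set to POST

    POST requests:
        (1) The data does not appear in the address bar
        (2) The protocol sets no upper limit on the size of the data
        (3) There is a request body
        (4) Chinese characters in a form-encoded request body are URL-encoded

    Note: requests.post() is used almost exactly like requests.get(); the difference is
    that requests.post() takes a data parameter that holds the request body.
    '''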
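The difference between the two methods can be inspected without sending anything. This sketch builds (but never sends) two request objects with the standard library's `urllib.request.Request`, using the httpbin URLs that appear earlier in the article; the `form` dict is illustrative.

```python
from urllib.parse import urlencode
from urllib.request import Request

form = {'key': 'value', 'q': '墨菲定律'}
encoded = urlencode(form)  # Chinese text becomes percent-escapes

# GET: the data is appended to the URL and there is no request body.
get_req = Request('http://httpbin.org/get?' + encoded)

# POST: the URL stays clean and the data travels in the body as bytes.
post_req = Request('http://httpbin.org/post', data=encoded.encode('ascii'))

print(get_req.get_method(), get_req.full_url, get_req.data)
print(post_req.get_method(), post_req.full_url, post_req.data)
```

Requests makes the same split: `params=` ends up in the URL, while `data=` ends up in the body.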

2. Sending a POST request to simulate a browser login

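When analyzing a login flow, deliberately enter a wrong username or password in the login form and capture the traffic. If the credentials are correct, the browser redirects right away and the login request becomes very hard to find in the capture.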

    '''
    Logging in to GitHub automatically with a POST request.
    GitHub's anti-crawling measures:
        1. The login request to /session must carry the cookies returned by the login page
        2. The emails page must carry the cookies returned by the /session request
    '''
    import requests
    import re

    # Step 1: request the login page to get the authenticity_token
    login_url = 'https://github.com/login'
    headers = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.77 Safari/537.36',
        'Referer': 'https://github.com/'
    }
    login_res = requests.get(login_url, headers=headers)
    # print(login_res.text)
    authenticity_token = re.findall('name="authenticity_token" value="(.*?)"', login_res.text, re.S)[0]
    # print(authenticity_token)
    login_cookies = login_res.cookies.get_dict()

    # Step 2: POST to /session with the token in the request body
    session_url = 'https://github.com/session'
    session_headers = {
        'Referer': 'https://github.com/login',
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.77 Safari/537.36',
    }
    form_data = {
        "commit": "Sign in",
        "utf8": "✓",
        "authenticity_token": authenticity_token,
        "login": "tankjam",
        "password": "kermit46709394",
        'webauthn-support': "supported"
    }
    session_res = requests.post(
        session_url,
        data=form_data,
        cookies=login_cookies,
        headers=session_headers,
        # allow_redirects=False
    )
    session_cookies = session_res.cookies.get_dict()

    # Step 3: check whether the login succeeded
    url3 = 'https://github.com/settings/emails'
    email_res = requests.get(url3, cookies=session_cookies)
    print('15622792660' in email_res.text)

    '''
    Automatic login with a Session object:
    a requests.session() object carries the cookies of all earlier requests made through it
    '''
    import requests
    import re

    # Step 1: request the login page to get the authenticity_token
    login_url = 'https://github.com/login'
    headers = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.77 Safari/537.36',
        'Referer': 'https://github.com/'
    }
    session = requests.session()
    login_res = session.get(login_url, headers=headers)
    # print(login_res.text)
    authenticity_token = re.findall('name="authenticity_token" value="(.*?)"', login_res.text, re.S)[0]
    # print(authenticity_token)

    # Step 2: POST to /session with the token in the request body
    session_url = 'https://github.com/session'
    session_headers = {
        'Referer': 'https://github.com/login',
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.77 Safari/537.36',
    }
    form_data = {
        "commit": "Sign in",
        "utf8": "✓",
        "authenticity_token": authenticity_token,
        "login": "tankjam",
        "password": "kermit46709394",
        'webauthn-support': "supported"
    }
    session_res = session.post(
        session_url,
        data=form_data,
        # cookies=login_cookies,  # not needed: the session already holds them
        headers=session_headers,
        # allow_redirects=False
    )

    # Step 3: check whether the login succeeded
    url3 = 'https://github.com/settings/emails'
    email_res = session.get(url3)
    print('15622792660' in email_res.text)
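Both login scripts depend on pulling the hidden `authenticity_token` out of the login page with a regular expression, and `re.findall(...)[0]` raises a bare `IndexError` if the page layout changes. The sketch below shows the same extraction against a made-up HTML fragment; the fragment, the token value, and the helper `extract_token` are assumptions for illustration, not GitHub's actual markup.

```python
import re

# Hypothetical fragment of a login page; the token value is made up.
sample_html = '''
<form action="/session" method="post">
  <input type="hidden" name="authenticity_token" value="abc123==" />
  <input type="text" name="login" />
</form>
'''

TOKEN_RE = re.compile(r'name="authenticity_token" value="(.*?)"', re.S)


def extract_token(html):
    """Return the first authenticity_token value or raise a clear error."""
    match = TOKEN_RE.search(html)
    if match is None:
        raise ValueError('authenticity_token not found in page')
    return match.group(1)


token = extract_token(sample_html)
print(token)
```

Raising a descriptive error makes it easier to tell a changed page layout apart from a network failure.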

3. Notes

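    '''
    Points to note:
    1. With data= and no explicit header, the default Content-Type is application/x-www-form-urlencoded.
    2. If we set the Content-Type header to application/json ourselves but still pass the values
       through data=, the body is still form-encoded, so the server cannot read the values as JSON.
    3. With json=, the default Content-Type is application/json.
    '''
    import requests

    # http://httpbin.org/post (used elsewhere in this article) stands in for the real endpoint

    # No header specified: the default Content-Type is application/x-www-form-urlencoded
    requests.post(url='http://httpbin.org/post',
                  data={'xxx': 'yyy'})

    # Content-Type set to application/json but values passed through data=:
    # the server cannot read the values
    requests.post(url='http://httpbin.org/post',
                  data={'id': 9527, },
                  headers={
                      'content-type': 'application/json'
                  })

    # Values passed through json=: the default Content-Type is application/json
    requests.post(url='http://httpbin.org/post',
                  json={'id': 9527, },
                  )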
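The second note is easier to follow once the two body formats are placed side by side. This standard-library sketch approximates what ends up in the request body for `data=` (form encoding) and for `json=` (JSON text), then shows why a server that trusts a `Content-Type: application/json` header fails on a form-encoded body.

```python
import json
from urllib.parse import urlencode

payload = {'id': 9527}

form_body = urlencode(payload)   # roughly what data= puts in the body
json_body = json.dumps(payload)  # roughly what json= puts in the body
print(form_body)
print(json_body)

# A server that believes the body is JSON will try to parse it as JSON.
try:
    json.loads(form_body)
    parsed_ok = True
except json.JSONDecodeError:
    parsed_ok = False

print(parsed_ok)
```

The header only labels the body; it does not convert it, so the label and the actual encoding have to match.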

IV. The Response Object

1. Response attributes

    import requests

    headers = {
        'User-Agent': 'Mozilla/5.0 (Linux; Android 6.0; Nexus 5 Build/MRA58N) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/46.0.2490.76 Mobile Safari/537.36',
    }

    response = requests.get('https://www.github.com', headers=headers)

    # The response object
    print(response.status_code)         # HTTP status code
    print(response.url)                 # final URL of the response
    print(response.text)                # body decoded as text
    print(response.content)             # body as raw bytes
    print(response.headers)             # response headers
    print(response.history)             # list of redirect responses that led here
    print(response.cookies)             # cookies set by the response
    print(response.cookies.get_dict())  # cookies as a dict
    print(response.cookies.items())     # cookies as a list of (name, value) pairs
    print(response.encoding)            # character encoding used for response.text
    print(response.elapsed)             # time between sending the request and receiving the response

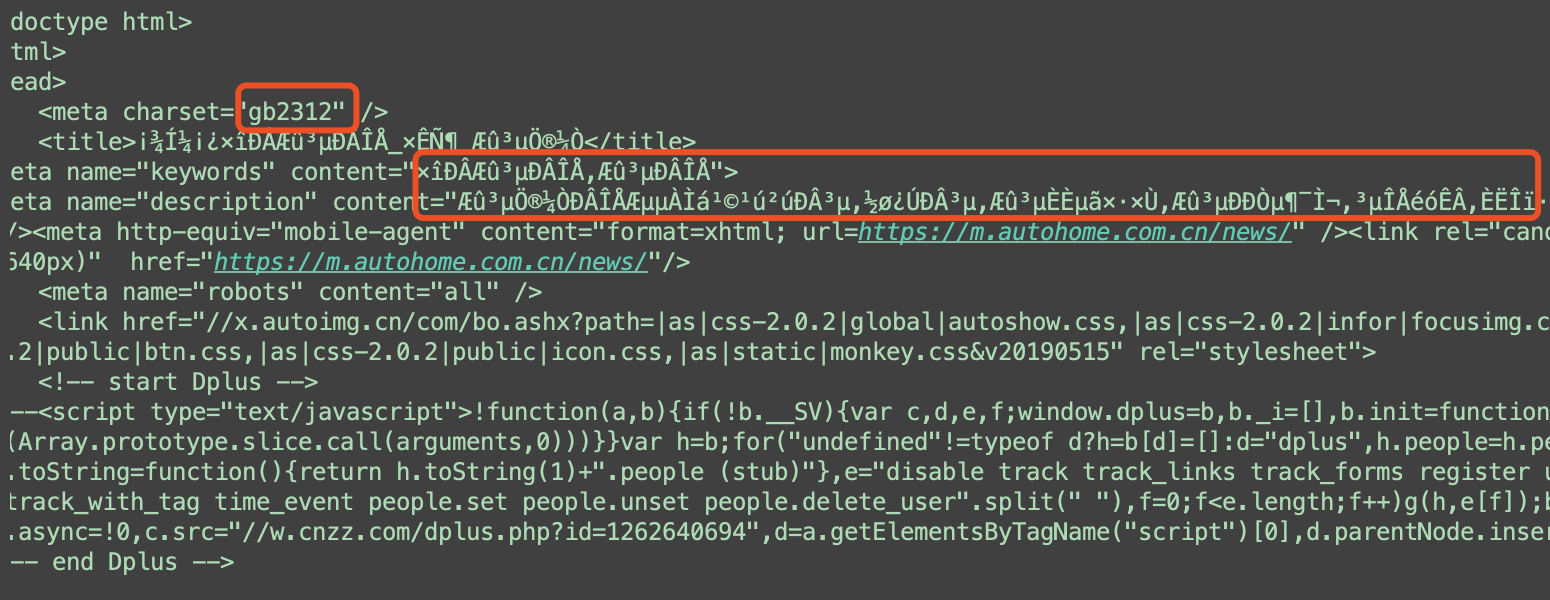

2. Encoding issues

    import requests

    headers = {
        'User-Agent': 'Mozilla/5.0 (Linux; Android 6.0; Nexus 5 Build/MRA58N) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/46.0.2490.76 Mobile Safari/537.36',
    }

    # Encoding issues
    response = requests.get('http://www.autohome.com/news', headers=headers)
    # print(response.text)

    # The Autohome site returns pages encoded as gb2312, while Requests falls back to
    # ISO-8859-1 for text responses that declare no charset; without setting the
    # encoding to gbk the Chinese text is garbled
    response.encoding = 'gbk'
    print(response.text)
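The garbling can be reproduced offline. The sketch below encodes a short Chinese string as GBK bytes (standing in for `response.content`) and decodes it both ways; the sample text is an assumption chosen for illustration.

```python
# Bytes as a GBK-encoded page would deliver them (stand-in for response.content).
raw = '汽车之家'.encode('gbk')

# Decoding with the wrong codec never fails for ISO-8859-1; it just yields mojibake.
wrong = raw.decode('iso-8859-1')

# Decoding with the codec the page actually uses restores the text.
right = raw.decode('gbk')

print(repr(wrong))
print(right)

# The damage is reversible as long as the original bytes can be recovered.
print(wrong.encode('iso-8859-1').decode('gbk'))
```

Setting `response.encoding` before reading `response.text` amounts to choosing the right codec for the second decode.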

3. Fetching binary data

    import requests

    # Fetch the body as bytes
    url = 'https://timgsa.baidu.com/timg?image&quality=80&size=b9999_10000&sec=1557981645442&di=688744cc87ffd353a5720e5d942d588b&imgtype=0&src=http%3A%2F%2Fk.zol-img.com.cn%2Fsjbbs%2F7692%2Fa7691515_s.jpg'
    response = requests.get(url)
    print(response.content)

    # Write all the bytes to disk in one go
    with open('dog_baby.jpg', 'wb') as f:
        f.write(response.content)

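    # stream=True with iter_content()
    import requests

    url = 'https://vd3.bdstatic.com/mda-ic4pfhh3ex32svqi/hd/mda-ic4pfhh3ex32svqi.mp4?auth_key=1557973824-0-0-bfb2e69bb5198ff65e18065d91b2b8c8&bcevod_channel=searchbox_feed&pd=wisenatural&abtest=all.mp4'

    # stream=True defers downloading the body until it is read
    response = requests.get(url, stream=True)

    # Write the body chunk by chunk instead of loading it all into memory.
    # (Reading response.content here would download the whole body at once and defeat
    # streaming, and iter_content() defaults to 1-byte chunks, so set a chunk size.)
    with open('love_for_GD.mp4', 'wb') as f:
        for content in response.iter_content(chunk_size=8192):
            f.write(content)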
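The chunked-write pattern can be tried without a network connection. In this sketch an in-memory `io.BytesIO` stands in for the streamed response body, and the hypothetical generator `iter_chunks` plays the role that `iter_content(chunk_size=...)` plays in Requests.

```python
import io


def iter_chunks(stream, chunk_size=1024):
    """Yield successive chunks of at most chunk_size bytes until the stream is empty."""
    while True:
        chunk = stream.read(chunk_size)
        if not chunk:
            break
        yield chunk


source = io.BytesIO(b'x' * 2500)  # stand-in for a streamed response body
target = io.BytesIO()             # stand-in for the output file

sizes = []
for chunk in iter_chunks(source, chunk_size=1024):
    target.write(chunk)
    sizes.append(len(chunk))

print(sizes)
```

Only one chunk is held in memory at a time, which is the point of streaming large files such as videos.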

4. Parsing JSON

    # Parsing JSON
    import requests
    import json

    response = requests.get('https://landing.toutiao.com/api/pc/realtime_news/')
    print(response.text)  # the body is JSON text

    # Deserializing with the json module works, but it is an extra step
    new_dict = json.loads(response.text)
    print(new_dict)

    # The response object provides its own method for parsing JSON
    new_dict = response.json()
    print(new_dict)

5. Redirection and History

By default Requests will perform location redirection for all verbs except HEAD.

We can use the history property of the Response object to track redirection.

The Response.history list contains the Response objects that were created in order to complete the request. The list is sorted from the oldest to the most recent response.

For example, GitHub redirects all HTTP requests to HTTPS:

    >>> r = requests.get('http://github.com')
    >>> r.url
    'https://github.com/'
    >>> r.status_code
    200
    >>> r.history
    [<Response [301]>]

If you're using GET, OPTIONS, POST, PUT, PATCH or DELETE, you can disable redirection handling with the allow_redirects parameter:

    >>> r = requests.get('http://github.com', allow_redirects=False)
    >>> r.status_code
    301
    >>> r.history
    []

If you're using HEAD, you can enable redirection as well:

    >>> r = requests.head('http://github.com', allow_redirects=True)
    >>> r.url
    'https://github.com/'
    >>> r.history
    [<Response [301]>]

    # redirect and history
    '''
    commit: Sign in
    utf8: ✓
    authenticity_token: taken from the login page
    login: kermitjam
    password: kermit46709394
    'webauthn-support': 'supported'
    '''
    import requests
    import re

    # Step 1: request the login page to get the authenticity_token
    login_url = 'https://github.com/login'
    headers = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.77 Safari/537.36',
        'Referer': 'https://github.com/'
    }
    login_res = requests.get(login_url, headers=headers)
    # print(login_res.text)
    authenticity_token = re.findall('name="authenticity_token" value="(.*?)"', login_res.text, re.S)[0]
    print(authenticity_token)
    login_cookies = login_res.cookies.get_dict()

    # Step 2: POST to /session with the token in the request body
    session_url = 'https://github.com/session'
    session_headers = {
        'Referer': 'https://github.com/login',
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.77 Safari/537.36',
    }
    form_data = {
        "commit": "Sign in",
        "utf8": "✓",
        "authenticity_token": authenticity_token,
        "login": "tankjam",
        "password": "kermit46709394",
        'webauthn-support': 'supported'
    }

    # Step 3: test history
    # Test 1: follow redirects (the default)
    session_res = requests.post(
        session_url,
        data=form_data,
        cookies=login_cookies,
        headers=session_headers,
        # allow_redirects=False
    )
    print(session_res.url)              # URL after the redirect: https://github.com/
    print(session_res.history)          # a list holding the response objects from before the redirect
    print(session_res.history[0].text)  # body of the response from before the redirect

    # Test 2: do not follow redirects
    session_res = requests.post(
        session_url,
        data=form_data,
        cookies=login_cookies,
        headers=session_headers,
        allow_redirects=False
    )
    print(session_res.status_code)  # 302
    print(session_res.url)          # redirects are off, so this is the requested URL
    print(session_res.history)      # an empty list

V. Advanced Usage (for reference)

1. SSL Cert Verification

- https://www.xiaohuar.com/

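    # Certificate verification (most sites use https)
    import requests

    # For an SSL request the certificate is checked first; if it is invalid,
    # an error is raised and the program stops
    response = requests.get('https://www.xiaohuar.com')
    print(response.status_code)

    # Improvement 1: no error, but a warning is emitted
    import requests

    response = requests.get('https://www.xiaohuar.com', verify=False)
    # The certificate is not verified; a warning is emitted and 200 is returned
    print(response.status_code)

    # Improvement 2: no error and no warning
    import requests
    import urllib3

    urllib3.disable_warnings()  # silence the warning
    response = requests.get('https://www.xiaohuar.com', verify=False)
    print(response.status_code)

    # Improvement 3: send a client certificate
    # Many sites use https but can be accessed without a client certificate;
    # in most cases it is optional (Zhihu, Baidu and the like accept either).
    # Where it is mandatory it must be sent, for example when only designated users
    # who hold the certificate are allowed to access a particular site
    import requests
    import urllib3

    # urllib3.disable_warnings()  # silence the warning
    response = requests.get(
        'https://www.xiaohuar.com',
        # verify=False,
        cert=('/path/server.crt', '/path/key'))  # (certificate file, private key file)
    print(response.status_code)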

2. Timeouts

    # Timeouts
    # Two forms: float or tuple
    # timeout=0.1         # one value applies to both the connect timeout and the read timeout
    # timeout=(0.1, 0.2)  # 0.1 is the connect timeout, 0.2 is the read timeout
    import requests

    response = requests.get('https://www.baidu.com', timeout=0.0001)
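The two timeout forms can be summarized as a small normalization step. The helper `split_timeout` below is hypothetical and is not part of Requests; it only sketches the documented rule that a single number is used for both the connect and the read phase, while a tuple sets them separately.

```python
def split_timeout(timeout):
    """Return (connect_timeout, read_timeout) for a float or a 2-tuple."""
    if timeout is None:
        # No timeout at all: wait indefinitely in both phases.
        return (None, None)
    if isinstance(timeout, (int, float)):
        # A single number applies to both phases.
        return (timeout, timeout)
    connect, read = timeout
    return (connect, read)


print(split_timeout(0.1))
print(split_timeout((0.1, 0.2)))
print(split_timeout(None))
```

A tuple is useful when connecting should fail fast but a slow server is still allowed time to produce its response.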

3. Using proxies

    # Official documentation: http://docs.python-requests.org/en/master/user/advanced/#proxies

    # Proxy settings: the request goes to the proxy first, and the proxy forwards it
    # (having an IP address banned is a common occurrence)
    import requests

    proxies = {
        # Proxy with credentials: the username and password go before the @ sign
        'http': 'http://tank:123@localhost:9527',
        # Without credentials this would be 'http://localhost:9527'; a dict keeps only
        # one value per key, so list each scheme once
        'https': 'https://localhost:9527',
    }
    response = requests.get('https://www.12306.cn',
                            proxies=proxies)
    print(response.status_code)

    # SOCKS proxies are supported; install with: pip install requests[socks]
    import requests

    proxies = {
        'http': 'socks5://user:pass@host:port',
        'https': 'socks5://user:pass@host:port'
    }
    response = requests.get('https://www.12306.cn',
                            proxies=proxies)
    print(response.status_code)
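Two details of the proxies dict are easy to check with plain Python. A dict literal silently keeps only the last value when a key such as `'http'` is repeated, and the credentials in a proxy URL sit before the `@`, which the standard library's `urlsplit` can confirm. The values reuse the placeholder proxy addresses from the example above.

```python
from urllib.parse import urlsplit

# A repeated key is legal Python, but only the last value survives.
proxies = {
    'http': 'http://tank:123@localhost:9527',
    'http': 'http://localhost:9527',
}
print(proxies)

# The part before '@' is username:password; the rest is host:port.
parts = urlsplit('http://tank:123@localhost:9527')
print(parts.username, parts.password, parts.hostname, parts.port)
```

Because of the first point, a credentialed and an uncredentialed entry for the same scheme cannot coexist in one dict.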

    '''
    Crawling the Xici free proxy list:
        1. Request the Xici free proxy pages
        2. Parse the pages with the re module and extract every proxy
        3. Test each crawled proxy against an IP test site
        4. If test_ip hits an exception the proxy is dead; otherwise it works
        5. Use a working proxy for a real request

    <tr class="odd">
          <td class="country"><img src="//fs.xicidaili.com/images/flag/cn.png" alt="Cn"></td>
          <td>112.85.131.99</td>
          <td>9999</td>
          <td>
            <a href="/2019-05-09/jiangsu">江苏南通</a>
          </td>
          <td class="country">高匿</td>
          <td>HTTPS</td>
          <td class="country">
            <div title="0.144秒" class="bar">
              <div class="bar_inner fast" style="width:88%">
              </div>
            </div>
          </td>
          <td class="country">
            <div title="0.028秒" class="bar">
              <div class="bar_inner fast" style="width:97%">
              </div>
            </div>
          </td>
          <td>6天</td>
          <td>19-05-16 11:20</td>
    </tr>

    re:
        <tr class="odd">.*?<td>(.*?)</td>.*?<td>(.*?)</td>
    '''
    import requests
    import re
    import time

    HEADERS = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.77 Safari/537.36',
    }


    def get_index(url):
        time.sleep(1)  # pause between page requests
        response = requests.get(url, headers=HEADERS)
        return response


    def parse_index(text):
        # Each match is an (ip, port) pair
        ip_list = re.findall('<tr class="odd">.*?<td>(.*?)</td>.*?<td>(.*?)</td>', text, re.S)
        for ip_port in ip_list:
            ip = ':'.join(ip_port)
            yield ip


    def test_ip(ip):
        print('Testing ip: %s' % ip)
        try:
            proxies = {
                'https': ip
            }

            # IP test site
            ip_url = 'https://www.ipip.net/'

            # Request the test site through the proxy; a 200 response means the proxy works
            response = requests.get(ip_url, headers=HEADERS, proxies=proxies, timeout=1)

            if response.status_code == 200:
                return ip

        # A dead proxy raises an exception; the function then returns None
        except Exception as e:
            print(e)


    # Crawl the NBA site through the proxy
    def spider_nba(good_ip):
        url = 'https://china.nba.com/'

        proxies = {
            'https': good_ip
        }

        response = requests.get(url, headers=HEADERS, proxies=proxies)
        print(response.status_code)
        print(response.text)


    if __name__ == '__main__':
        base_url = 'https://www.xicidaili.com/nn/{}'

        for line in range(1, 3677):
            ip_url = base_url.format(line)

            response = get_index(ip_url)

            ip_list = parse_index(response.text)
            for ip in ip_list:
                # print(ip)
                good_ip = test_ip(ip)

                if good_ip:
                    # Working proxy: use it for the real request
                    spider_nba(good_ip)
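The parsing step can be checked offline against the sample table row quoted in the crawler's docstring. This sketch trims that row to its first few cells and runs the same regular expression over it; only bare `<td>` cells match, so cells such as `<td class="country">` are skipped.

```python
import re

# Sample row in the shape quoted in the crawler's docstring (trimmed).
sample_row = '''
<tr class="odd">
  <td class="country"><img src="//fs.xicidaili.com/images/flag/cn.png" alt="Cn"></td>
  <td>112.85.131.99</td>
  <td>9999</td>
  <td><a href="/2019-05-09/jiangsu">江苏南通</a></td>
  <td class="country">高匿</td>
  <td>HTTPS</td>
</tr>
'''

ROW_RE = re.compile(r'<tr class="odd">.*?<td>(.*?)</td>.*?<td>(.*?)</td>', re.S)


def parse_index(text):
    """Yield 'ip:port' strings, one per matching table row."""
    for ip, port in ROW_RE.findall(text):
        yield f'{ip}:{port}'


proxies_found = list(parse_index(sample_row))
print(proxies_found)
```

The `re.S` flag matters here because the cells sit on separate lines and `.` would otherwise stop at each newline.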

4. Authentication

    # Authentication
    '''
    Some sites pop up a dialog (similar to an alert) asking for a username and password
    when you visit them; the HTML page is only served once the credentials are accepted.

    Requests provides several authentication schemes, including basic authentication.

    The idea is that the username and password identify the user, and the server then
    grants access based on those credentials.

    Basic authentication:
        HTTP Basic Auth was introduced with HTTP/1.0. For each realm the client
        authenticates by sending a username and password; when authentication fails,
        the server answers the request with 401.
    '''
    import requests

    # Tested against the GitHub API
    url = 'https://api.github.com/user'
    HEADERS = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.77 Safari/537.36',
    }

    # Test 1: without credentials the request fails with 401
    response = requests.get(url, headers=HEADERS)
    print(response.status_code)  # 401
    print(response.text)
    '''
    Output:
        {
          "message": "Requires authentication",
          "documentation_url": "https://developer.github.com/v3/users/#get-the-authenticated-user"
        }
    '''

    # Test 2: authenticate with HTTPBasicAuth from requests.auth; on success the user's details are returned
    # (GitHub has since stopped accepting account passwords for API basic auth;
    # a personal access token takes the place of the password)
    from requests.auth import HTTPBasicAuth
    response = requests.get(url, headers=HEADERS, auth=HTTPBasicAuth('tankjam', 'kermit46709394'))
    print(response.text)

    # Test 3: a plain tuple passed as auth= is treated as HTTPBasicAuth; on success the user's details are returned
    response = requests.get(url, headers=HEADERS, auth=('tankjam', 'kermit46709394'))
    print(response.text)
    '''
    Output:
        {
          "login": "TankJam",
          "id": 38001458,
          "node_id": "MDQ6VXNlcjM4MDAxNDU4",
          "avatar_url": "https://avatars2.githubusercontent.com/u/38001458?v=4",
          "gravatar_id": "",
          "url": "https://api.github.com/users/TankJam",
          "html_url": "https://github.com/TankJam",
          "followers_url": "https://api.github.com/users/TankJam/followers",
          "following_url": "https://api.github.com/users/TankJam/following{/other_user}",
          "gists_url": "https://api.github.com/users/TankJam/gists{/gist_id}",
          "starred_url": "https://api.github.com/users/TankJam/starred{/owner}{/repo}",
          "subscriptions_url": "https://api.github.com/users/TankJam/subscriptions",
          "organizations_url": "https://api.github.com/users/TankJam/orgs",
          "repos_url": "https://api.github.com/users/TankJam/repos",
          "events_url": "https://api.github.com/users/TankJam/events{/privacy}",
          "received_events_url": "https://api.github.com/users/TankJam/received_events",
          "type": "User",
          "site_admin": false,
          "name": "kermit",
          "company": null,
          "blog": "",
          "location": null,
          "email": null,
          "hireable": null,
          "bio": null,
          "public_repos": 6,
          "public_gists": 0,
          "followers": 0,
          "following": 0,
          "created_at": "2018-04-02T09:39:33Z",
          "updated_at": "2019-05-14T07:47:20Z",
          "private_gists": 0,
          "total_private_repos": 1,
          "owned_private_repos": 1,
          "disk_usage": 8183,
          "collaborators": 0,
          "two_factor_authentication": false,
          "plan": {
            "name": "free",
            "space": 976562499,
            "collaborators": 0,
            "private_repos": 10000
          }
        }
    '''

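For readers curious what basic authentication sends over the wire, here is a minimal standard-library sketch of the `Authorization` header it produces. The helper `basic_auth_header` and the `user`/`pass` credentials are illustrative, and UTF-8 is assumed for the text encoding.

```python
import base64


def basic_auth_header(username, password):
    """Build the value of the Authorization header for HTTP Basic Auth."""
    credentials = f'{username}:{password}'.encode('utf-8')
    token = base64.b64encode(credentials).decode('ascii')
    return 'Basic ' + token


header = basic_auth_header('user', 'pass')
print(header)

# Base64 is an encoding, not encryption: anyone can reverse it.
print(base64.b64decode(header.split(' ', 1)[1]).decode('utf-8'))
```

Since the credentials are trivially recoverable from the header, basic authentication should only be used over HTTPS.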
5. Exception handling

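    # Exception handling
    import requests
    # See requests.exceptions for the full list of exception types
    from requests.exceptions import ReadTimeout, ConnectionError, Timeout, RequestException

    try:
        r = requests.get('http://www.baidu.com', timeout=0.00001)
    except ReadTimeout:  # the server took too long to send data
        print('===:')
    except ConnectionError:  # the network is unreachable
        print('-----')
    except Timeout:  # any other timeout
        print('aaaaa')
    except RequestException:  # base class of all Requests exceptions
        print('Error')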

6. Uploading files

    # 6. Uploading files
    import requests

    # Upload a text file (the with block closes the file afterwards)
    with open('user.txt', 'rb') as f:
        files1 = {'file': f}
        response = requests.post('http://httpbin.org/post', files=files1)
    print(response.status_code)  # 200
    print(response.text)         # httpbin echoes the uploaded content

    # Upload an image file
    with open('小狗.jpg', 'rb') as f:
        files2 = {'jpg': f}
        response = requests.post('http://httpbin.org/post', files=files2)
    print(response.status_code)  # 200
    print(response.text)

    # Upload a video file
    with open('love_for_GD.mp4', 'rb') as f:
        files3 = {'movie': f}
        response = requests.post('http://httpbin.org/post', files=files3)
    print(response.status_code)  # 200
    print(response.text)

VI. Homework

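    '''
    1. Crawl the Douban Movie Top 250
        https://movie.douban.com/top250

    2. Crawl Douban movie reviews
        https://movie.douban.com/review/best/

    3. Crawl the popular novels on Biqukan
        https://www.biqukan.com/paihangbang/

    4. Crawl the images on the Sogou Pictures home page
        https://pic.sogou.com/
    '''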

    import requests
    import re

    # response = requests.get("https://movie.douban.com/top250")
    # print(response)
    # print(response.text)


    # Fetch a page
    def get_html(url):
        response = requests.get(url)
        return response


    # Extract the fields with a regex; re.S lets "." match newlines as well
    def parser_html(response):
        # Match the movie link, title, rating, and number of ratings
        res = re.findall(r'<div class="item">.*?<a href=(.*?)>.*?<span class="title">(.*?)</span>.*?<span class="rating_num".*?>(.*?)</span>.*?<span>(\d+)人评价', response.text, re.S)
        print(res)
        return res


    # Persist the data as plain text
    def store(ret):
        with open("douban.text", "a", encoding="utf8") as f:
            for item in ret:
                f.write(" ".join(item) + "\n")


    # The three steps of a crawler
    def do_spider(url):
        # 1. Fetch the resource
        # "https://movie.douban.com/top250"
        response = get_html(url)

        # 2. Parse the resource
        ret = parser_html(response)

        # 3. Persist the data
        store(ret)


    # Entry point of the crawler
    def main():
        import time
        c = time.time()
        # Offset of the first item on the current page
        count = 0
        for i in range(10):
            # Douban paginates 25 movies per page through the start parameter
            url = "https://movie.douban.com/top250?start=%s&filter=" % count
            do_spider(url)
            count += 25
        print(time.time() - c)  # total elapsed seconds


    if __name__ == '__main__':
        main()
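The pagination rule in `main()` (10 pages, `start` advancing by 25) can be isolated into a small function, which makes it easy to verify before any request is sent. The function name `top250_urls` and its parameters are hypothetical; the URL pattern is the one used above.

```python
def top250_urls(page_size=25, total=250):
    """Return the list of page URLs for the Top 250 listing."""
    base = 'https://movie.douban.com/top250?start=%s&filter='
    return [base % start for start in range(0, total, page_size)]


urls = top250_urls()
print(len(urls))
print(urls[0])
print(urls[-1])
```

Generating the URLs up front also keeps the loop in `main()` free of manual counter bookkeeping.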

    '''
    Reviews of Green Book:
    Review endpoints
        Page 1:
            https://movie.douban.com/subject/27060077/comments?status=P
            https://movie.douban.com/subject/27060077/comments?start=0&limit=20&sort=new_score&status=P&comments_only=1

        Page 2:
            https://movie.douban.com/subject/27060077/comments?start=20&limit=20&sort=new_score&status=P&comments_only=1

        Page 25:
            https://movie.douban.com/subject/27060077/comments?start=480&limit=20&sort=new_score&status=P&comments_only=1

        Notes:
            1. Without logging in, Douban serves 10 pages of reviews
            2. After logging in, Douban serves 25 pages of reviews
    '''
    '''
    Without logging in
    '''
    import requests
    import re

    HEADERS = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.77 Safari/537.36',
    }


    def get_page(url):
        response = requests.get(url, headers=HEADERS)
        movie_json = response.json()
        # print(movie_json)
        # The endpoint returns JSON whose 'html' field holds the rendered comments
        data = movie_json.get('html')
        print(data)
        return data


    def parse_data(data):
        # Regex match
        # Comment id, user name, user home page, user avatar, rating, time of the comment, review text
        res = re.findall('<div class="comment-item" data-cid="(.*?)">.*?<a title="(.*?)" href="(.*?)">.*?src="(.*?)".*?<span class="allstar50 rating" title="(.*?)">.*?<span class="comment-time " title="(.*?)">.*?<span class="short">(.*?)</span>', data, re.S)
        print(res)


    if __name__ == '__main__':
        base_url = 'https://movie.douban.com/subject/27060077/comments?start={}&limit=20&sort=new_score&status=P&comments_only=1'
        num = 0
        for line in range(10):
            url = base_url.format(num)
            num += 20
            # print(url)

            data = get_page(url)
            parse_data(data)
