基于 MPI/OpenMP 混合编程的大规模多体(N-Body)问题仿真实验

完整代码:

#include <iostream>

#include <ctime>

#include <mpi.h>

#include <omp.h>

#include <cstdlib>

#include <iomanip>

#include <Windows.h>

#include <cmath>

#include <algorithm>

using namespace std;

const long double G = 6.67 * pow(10, -11);

const int STAR_NUM = 32;

const int THREAD_NUM = 4;

const long int TIME_STEP = 3600;

const int MAX_PROCESS = 128;

struct Star

{

long double x, y, z; // Position

long double vx, vy, vz; // Speed

long double ax, ay, az; // Acceleration

long double nax, nay, naz; // Acceleration of next iteration

long double m; // Mass

};

Star stars[STAR_NUM];

Star temp[STAR_NUM];

Star buffer[STAR_NUM];

void updateNextAcceration(Star& a, Star& b) {

long double dx = a.x - b.x;

long double dy = a.y - b.y;

long double dz = a.z - b.z;

long double r2 = pow(dx, 2) + pow(dy, 2) + pow(dz, 2);

long double A = G * a.m / r2;

long double r = sqrt(r2);

long double k = A / r;

b.nax += k * dx;

b.nay += k * dy;

b.naz += k * dz;

}

void updateAcceration(Star& star) {

star.ax = star.nax;

star.ay = star.nay;

star.az = star.naz;

star.nax = 0;

star.nay = 0;

star.naz = 0;

}

void updateSpeed(Star& star) {

star.vx += star.ax * TIME_STEP;

star.vy += star.ay * TIME_STEP;

star.vz += star.az * TIME_STEP;

}

void updatePosition(Star& star) {

star.x += star.vx * TIME_STEP;

star.y += star.vy * TIME_STEP;

star.z += star.vz * TIME_STEP;

}

void print(Star* stars, int num=STAR_NUM) {

cout << setiosflags(ios::left) << setw(4) << "Num" << setw(16) << "x" << setw(16) << "y" << setw(16) << "z"

<< setw(16) << "Speed x" << setw(16) << "Speed y" << setw(16) << "Speed z"

<< setw(16) << "Acceleration x" << setw(16) << "Acceleration y" << setw(16) << "Acceleration z"

<< setw(16) << "Next a x" << setw(16) << "Next a y" << setw(16) << "Next a z"

<< setw(16) << "Mass" << endl;

for (int i = 0; i < num; i++) {

cout << setiosflags(ios::left) << setw(4) << i << setw(16) << stars[i].x << setw(16) << stars[i].y << setw(16) << stars[i].z

<< setw(16) << stars[i].vx << setw(16) << stars[i].vy << setw(16) << stars[i].vz

<< setw(16) << stars[i].ax << setw(16) << stars[i].ay << setw(16) << stars[i].az

<< setw(16) << stars[i].nax << setw(16) << stars[i].nay << setw(16) << stars[i].naz

<< setw(16) << stars[i].m << endl;

}

}

int main(int argc, char* argv[]) {

MPI_Init(&argc, &argv);

int rank, size, real_size;

MPI_Comm_rank(MPI_COMM_WORLD, &rank);

MPI_Comm_size(MPI_COMM_WORLD, &size);

real_size = min(STAR_NUM, size);

// 每个进程的工作量

int part = STAR_NUM / real_size;

if (part * real_size < STAR_NUM) part++; // 每个进程的工作量已确定

// 现在要剔除不需要的进程

size = STAR_NUM / part;

if (size * part < STAR_NUM) size++; // 进程数已确定

MPI_Comm COMM_WORLD;

if (rank < size) {

MPI_Comm_split(MPI_COMM_WORLD, 1, rank, &COMM_WORLD);

}else {

MPI_Comm_split(MPI_COMM_WORLD, MPI_UNDEFINED, rank, &COMM_WORLD);

}

MPI_Comm_rank(COMM_WORLD, &rank);

MPI_Comm_size(COMM_WORLD, &size);

//int part = STAR_NUM / size;

//if (part * size < STAR_NUM) part++;

// Create custome mpi datatype.

const int nitems = 13;

int blocklengths[nitems] = { 1,1,1,1, 1,1, 1,1, 1,1, 1,1,1 };

MPI_Datatype types[nitems] = { MPI_LONG_DOUBLE,MPI_LONG_DOUBLE, MPI_LONG_DOUBLE, MPI_LONG_DOUBLE, MPI_LONG_DOUBLE, MPI_LONG_DOUBLE, MPI_LONG_DOUBLE, MPI_LONG_DOUBLE, MPI_LONG_DOUBLE, MPI_LONG_DOUBLE, MPI_LONG_DOUBLE, MPI_LONG_DOUBLE, MPI_LONG_DOUBLE };

MPI_Datatype MPI_STAR;

MPI_Aint offsets[nitems];

offsets[0] = offsetof(Star, x);

offsets[1] = offsetof(Star, y);

offsets[2] = offsetof(Star, z);

offsets[3] = offsetof(Star, vx);

offsets[4] = offsetof(Star, vy);

offsets[5] = offsetof(Star, vz);

offsets[6] = offsetof(Star, ax);

offsets[7] = offsetof(Star, ay);

offsets[8] = offsetof(Star, az);

offsets[9] = offsetof(Star, nax);

offsets[10] = offsetof(Star, nay);

offsets[11] = offsetof(Star, naz);

offsets[12] = offsetof(Star, m);

MPI_Type_create_struct(nitems, blocklengths, offsets, types, &MPI_STAR);

MPI_Type_commit(&MPI_STAR);

omp_set_num_threads(THREAD_NUM);

if (rank == 0) {

cout << "Generating origin data..." << endl;

srand(time(NULL));

for (int i = 0; i < STAR_NUM; i++) {

stars[i].m = pow(10, 21) + ((long double)rand() / (RAND_MAX)) * pow(10, 22);

stars[i].x = pow(10, 7) + ((long double)rand() / (RAND_MAX)) * pow(10, 8);

stars[i].y = pow(10, 7) + ((long double)rand() / (RAND_MAX)) * pow(10, 8);

stars[i].z = pow(10, 7) + ((long double)rand() / (RAND_MAX)) * pow(10, 8);

stars[i].vx = 0;

stars[i].vy = 0;

stars[i].vz = 0;

stars[i].ax = 0;

stars[i].ay = 0;

stars[i].az = 0;

stars[i].nax = 0;

stars[i].nay = 0;

stars[i].naz = 0;

}

}

MPI_Bcast(&stars, STAR_NUM, MPI_STAR, 0, COMM_WORLD);

int loopstart = part * rank;

int loopend = min(STAR_NUM, (rank + 1) * part);

while (true)

{

// 利用 OpenMP 加速此循环

#pragma omp parallel for

for (int i = loopstart; i < loopend; i++) {

Star* s = &(stars[i]);

updatePosition(*s);

}

// 计算下次的加速度需要来自其他进程的数据

MPI_Request req;

//MPI_Ibcast(&stars, STAR_NUM, MPI_STAR, rank, COMM_WORLD, &req);

//MPI_Gather(&stars, STAR_NUM, MPI_STAR, &temp, STAR_NUM, MPI_STAR, rank, COMM_WORLD);

int start = part * rank;

int end = min(part * (rank + 1), STAR_NUM);

int* displs, * rcounts;

displs = (int*)malloc(size * sizeof(int));

rcounts = (int*)malloc(size * sizeof(int));

for (int i = 0; i < size; i++) {

displs[i] = i * part;

rcounts[i] = end - start;

}

//cout << "rank " << rank << "end - start" << end << " - " << start << endl;

MPI_Gatherv(&stars[start], end - start, MPI_STAR, &temp, rcounts, displs, MPI_STAR, 0, COMM_WORLD);

MPI_Bcast(&temp, STAR_NUM, MPI_STAR, 0, COMM_WORLD);

// 现在 temp 中存的是更新后的数据

for (int i = loopstart; i < loopend; i++) {

for (int j = 0; j < STAR_NUM; j++) {

if (i == j) continue;

updateNextAcceration(temp[j], stars[i]);

}

}

//print(stars);

for (int i = loopstart; i < loopend; i++) {

cout << rank<<" "<<setiosflags(ios::left) << setw(4) << i << setw(16) << stars[i].x << setw(16) << stars[i].y << setw(16) << stars[i].z

<< setw(16) << stars[i].vx << setw(16) << stars[i].vy << setw(16) << stars[i].vz

<< setw(16) << stars[i].ax << setw(16) << stars[i].ay << setw(16) << stars[i].az

<< setw(16) << stars[i].nax << setw(16) << stars[i].nay << setw(16) << stars[i].naz

<< setw(16) << stars[i].m << endl;

}

// 利用 OpenMP 加速此循环

#pragma omp parallel for

for (int i = loopstart; i < loopend; i++) {

Star* s = &(stars[i]);

updateAcceration(*s);

}

// 利用 OpenMP 加速此循环

#pragma omp parallel for

for (int i = loopstart; i < loopend; i++) {

Star* s = &(stars[i]);

updateSpeed(*s);

}

}

}

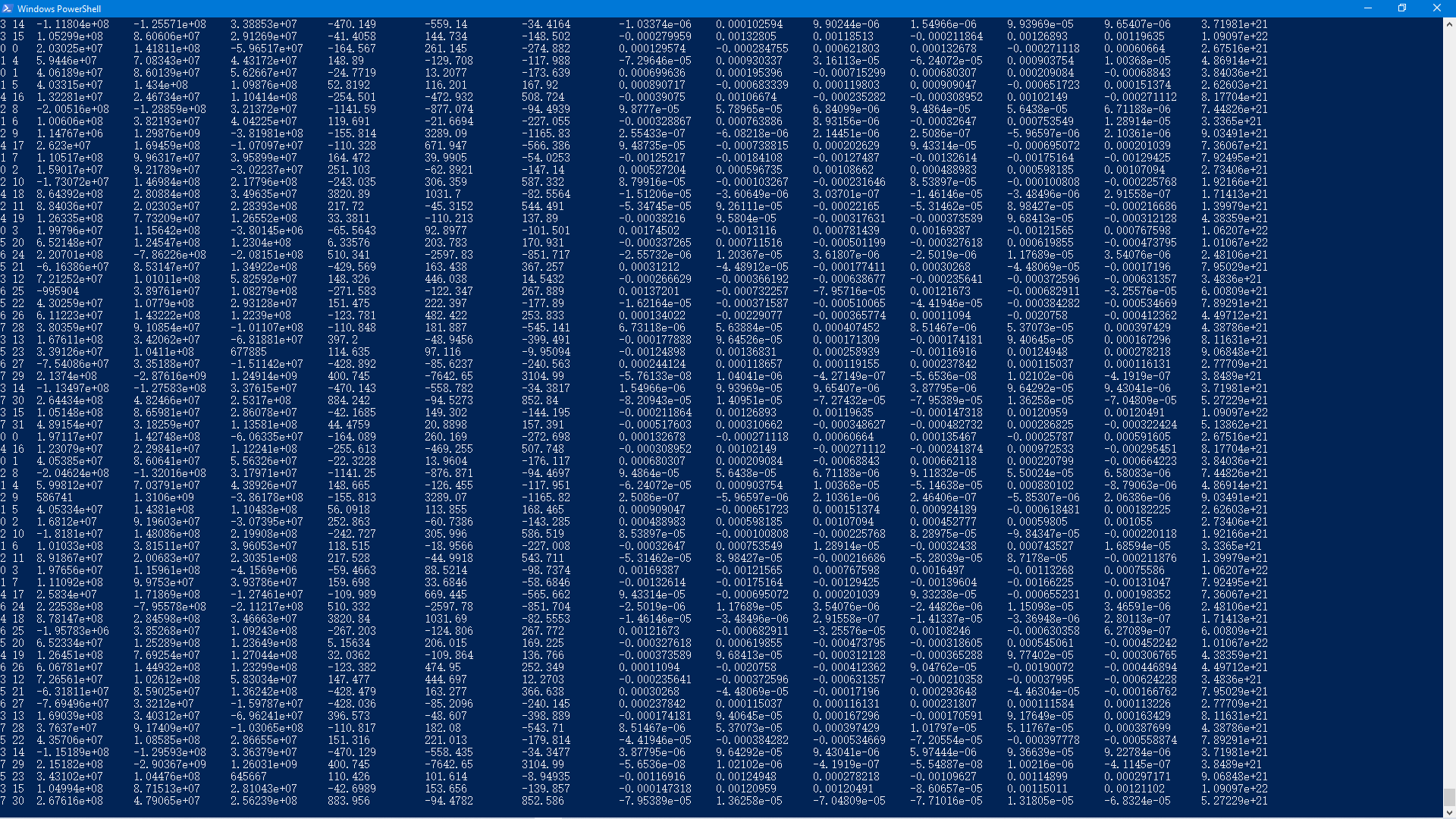

运行截图:

【未经允许,禁止转载】