Test Environment - Setting Up Prometheus and Grafana on K8s

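Preface

The operating system used in this article is Windows 10.

The k8s environment is minikube v1.15.1 (look up how to install minikube on your own).

The Docker version is v19.03.13.

All namespaced resources are created in kube-system, the namespace that ships with k8s.

The environment is a single-node deployment; high availability is out of scope.

This article assumes that the two basic commands, minikube and kubectl, already work.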

Deployment

Start k8s

minikube start

Pull the images

minikube ssh # SSH into the minikube node

docker pull prom/node-exporter:latest # pull the node-exporter (node monitoring) image

docker pull prom/prometheus:latest # pull the Prometheus image

docker pull grafana/grafana:latest # pull the Grafana image

exit # leave the SSH session
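The interactive session above can also be driven from the host in one pass. The following is a minimal sketch, assuming that `minikube ssh` accepts a command string to run inside the node and that the node uses the Docker runtime; the helper name `pull_in_minikube` is made up for this example.

```shell
# Images used in this article (same tags as in the commands above).
IMAGES="prom/node-exporter:latest prom/prometheus:latest grafana/grafana:latest"

# Hypothetical helper: pull one image inside the minikube node.
pull_in_minikube() {
  # $1: image reference, e.g. prom/prometheus:latest
  minikube ssh "docker pull $1"
}

# Only run when minikube is actually installed on this machine.
if command -v minikube >/dev/null 2>&1; then
  for image in $IMAGES; do
    pull_in_minikube "$image"
  done
fi
```

Pulling ahead of time is optional; it mainly avoids waiting for image downloads when the pods start.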

Create the node monitoring resources

- Prepare the node-exporter.yaml file

---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: node-exporter
  namespace: kube-system
  labels:
    k8s-app: node-exporter
spec:
  selector:
    matchLabels:
      k8s-app: node-exporter
  template:
    metadata:
      labels:
        k8s-app: node-exporter
    spec:
      containers:
      - image: prom/node-exporter
        name: node-exporter
        ports:
        - containerPort: 9100
          protocol: TCP
          name: http
---
apiVersion: v1
kind: Service
metadata:
  labels:
    k8s-app: node-exporter
  name: node-exporter
  namespace: kube-system
spec:
  ports:
  - name: http
    port: 9100
    nodePort: 31672
    protocol: TCP
  type: NodePort
  selector:
    k8s-app: node-exporter

- Run the create command

kubectl create -f node-exporter.yaml

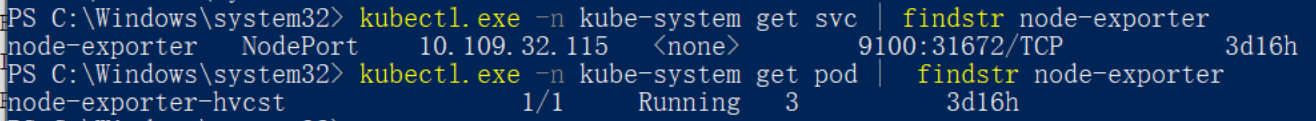

- Check the actual deployment status
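The status check could look like the sketch below. It relies only on names taken from node-exporter.yaml (the label `k8s-app=node-exporter` and the namespace `kube-system`); the function name `check_node_exporter` is hypothetical.

```shell
# Hypothetical helper: list the DaemonSet, its pods and the Service
# created from node-exporter.yaml, selected by their shared label.
check_node_exporter() {
  kubectl get daemonset,pods,services \
    --namespace kube-system \
    --selector k8s-app=node-exporter \
    --output wide
}

# Only run when kubectl is available on this machine.
if command -v kubectl >/dev/null 2>&1; then
  check_node_exporter
fi
```

On a single-node minikube cluster, one node-exporter pod in the Running state and a Service exposing 9100:31672/TCP would be the expected picture.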

Deploy Prometheus

rbac-setup.yaml

- Configuration file

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: prometheus
rules:
- apiGroups: [""]
  resources:
  - nodes
  - nodes/proxy
  - services
  - endpoints
  - pods
  verbs: ["get", "list", "watch"]
- apiGroups:
  - extensions
  resources:
  - ingresses
  verbs: ["get", "list", "watch"]
- nonResourceURLs: ["/metrics"]
  verbs: ["get"]
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: prometheus
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: prometheus
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: prometheus
subjects:
- kind: ServiceAccount
  name: prometheus
  namespace: kube-system

- Run the command

kubectl create -f rbac-setup.yaml
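Whether the binding took effect can be checked by impersonating the ServiceAccount. This is a minimal sketch built on `kubectl auth can-i` with the `--as` flag; the helper names `sa_user` and `prometheus_can` are invented for the example, and running it requires that your own user is allowed to impersonate (the default minikube admin is).

```shell
# Build the user name Kubernetes assigns to a ServiceAccount.
sa_user() {
  # $1: namespace, $2: ServiceAccount name
  printf 'system:serviceaccount:%s:%s\n' "$1" "$2"
}

# Hypothetical helper: ask the API server whether the prometheus
# ServiceAccount may perform a verb on a resource.
prometheus_can() {
  # $1: verb, $2: resource
  kubectl auth can-i "$1" "$2" --as "$(sa_user kube-system prometheus)"
}

# Only run when kubectl is available on this machine.
if command -v kubectl >/dev/null 2>&1; then
  for resource in nodes services endpoints pods; do
    prometheus_can list "$resource"
  done
fi
```

Each call prints `yes` or `no`; a `no` for any of the listed resources would suggest that the ClusterRoleBinding or the ServiceAccount name does not match.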

configmap.yaml

- Configuration file

apiVersion: v1
kind: ConfigMap
metadata:
  name: prometheus-config
  namespace: kube-system
data:
  prometheus.yml: |
    global:
      scrape_interval: 15s
      evaluation_interval: 15s
    rule_files:
    - /etc/prometheus/rules.yml
    alerting:
      alertmanagers:
      - static_configs:
        - targets: ["alertmanager:9093"]
    scrape_configs:
    - job_name: 'kubernetes-apiservers'
      kubernetes_sd_configs:
      - role: endpoints
      scheme: https
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      relabel_configs:
      - source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]
        action: keep
        regex: default;kubernetes;https
    - job_name: 'kubernetes-cadvisor'
      kubernetes_sd_configs:
      - role: node
      scheme: https
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      relabel_configs:
      - action: labelmap
        regex: __meta_kubernetes_node_label_(.+)
      - target_label: __address__
        replacement: kubernetes.default.svc:443
      - source_labels: [__meta_kubernetes_node_name]
        regex: (.+)
        target_label: __metrics_path__
        replacement: /api/v1/nodes/${1}/proxy/metrics/cadvisor
    - job_name: 'kubernetes-service-endpoints'
      kubernetes_sd_configs:
      - role: endpoints
      relabel_configs:
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape]
        action: keep
        regex: true
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scheme]
        action: replace
        target_label: __scheme__
        regex: (https?)
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_path]
        action: replace
        target_label: __metrics_path__
        regex: (.+)
      - source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]
        action: replace
        target_label: __address__
        regex: ([^:]+)(?::\d+)?;(\d+)
        replacement: $1:$2
      - action: labelmap
        regex: __meta_kubernetes_service_label_(.+)
      - source_labels: [__meta_kubernetes_namespace]
        action: replace
        target_label: kubernetes_namespace
      - source_labels: [__meta_kubernetes_service_name]
        action: replace
        target_label: kubernetes_name
    - job_name: 'kubernetes-services'
      kubernetes_sd_configs:
      - role: service
      metrics_path: /probe
      params:
        module: [http_2xx]
      relabel_configs:
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_probe]
        action: keep
        regex: true
      - source_labels: [__address__]
        target_label: __param_target
      - target_label: __address__
        replacement: blackbox-exporter.example.com:9115
      - source_labels: [__param_target]
        target_label: instance
      - action: labelmap
        regex: __meta_kubernetes_service_label_(.+)
      - source_labels: [__meta_kubernetes_namespace]
        target_label: kubernetes_namespace
      - source_labels: [__meta_kubernetes_service_name]
        target_label: kubernetes_name

    - job_name: 'kubernetes_node'
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      kubernetes_sd_configs:
      # Endpoint-based service discovery: targets are scraped directly, bypassing the Service proxy layer
      - role: endpoints
      relabel_configs:
      # Keep only the endpoints of the Service from node-exporter.yaml (Service name
      # node-exporter, port name http). That Service has no prometheus.io/scrape
      # annotation and no port named prometheus-node-exporter, so matching on
      # "true;prometheus-node-exporter" would drop every target.
      - source_labels: [__meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]
        regex: node-exporter;http
        action: keep
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scheme]
        action: replace
        target_label: __scheme__
        regex: (https?)
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_path]
        action: replace
        target_label: __metrics_path__
        regex: (.+)
      - source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]
        action: replace
        target_label: __address__
        regex: (.+)(?::\d+);(\d+)
        replacement: $1:$2
      # Strip the __meta_kubernetes_service_label_ prefix from label names
      - action: labelmap
        regex: __meta_kubernetes_service_label_(.+)
      # To tell nodes apart, replace instance (the node-exporter endpoint) with the IP of the node hosting that endpoint
      - source_labels: [__meta_kubernetes_pod_host_ip]
        regex: '(.*)'
        replacement: '${1}'
        target_label: instance

- Run the command

kubectl create -f configmap.yaml
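The `__address__` rewrite in the kubernetes-service-endpoints job is easier to follow with concrete values. Prometheus joins the source label values with `;` and matches the regex against the whole string; if it does not match, the target label is left untouched. The sketch below imitates that behaviour with `grep -E` and `sed -E`. POSIX extended regular expressions have no `(?:...)` groups, so the port group is written as an ordinary group and the replacement uses `\3` where the Prometheus config uses `$2`. The function name and the sample addresses are invented for illustration.

```shell
# Imitates: regex ([^:]+)(?::\d+)?;(\d+)   replacement $1:$2
rewrite_address() {
  # $1: current __address__, $2: prometheus.io/port annotation (may be empty)
  joined="$1;$2"
  pattern='^([^:]+)(:[0-9]+)?;([0-9]+)$'
  if printf '%s\n' "$joined" | grep -Eq "$pattern"; then
    # Match: keep the host, swap in the annotated port.
    printf '%s\n' "$joined" | sed -E "s/$pattern/\1:\3/"
  else
    # No match: the label keeps its previous value.
    printf '%s\n' "$1"
  fi
}

rewrite_address '10.244.0.5:8080' '9100'   # -> 10.244.0.5:9100
rewrite_address '10.244.0.5' '9100'        # -> 10.244.0.5:9100
rewrite_address '10.244.0.5:8080' ''       # -> 10.244.0.5:8080
```

The last call shows why Services without a `prometheus.io/port` annotation are still scraped on the port that service discovery reported.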

prometheus.deploy.yaml

- Configuration file

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    name: prometheus-deployment
  name: prometheus
  namespace: kube-system
spec:
  replicas: 1
  selector:
    matchLabels:
      app: prometheus
  template:
    metadata:
      labels:
        app: prometheus
    spec:
      containers:
      - image: prom/prometheus:latest
        name: prometheus
        command:
        - "/bin/prometheus"
        args:
        - "--config.file=/etc/prometheus/prometheus.yml"
        - "--storage.tsdb.path=/prometheus"
        # replaces the deprecated --storage.tsdb.retention flag
        - "--storage.tsdb.retention.time=24h"
        ports:
        - containerPort: 9090
          protocol: TCP
        volumeMounts:
        - mountPath: "/prometheus"
          name: data
        - mountPath: "/etc/prometheus"
          name: config-volume
        resources:
          requests:
            cpu: 100m
            memory: 100Mi
          limits:
            cpu: 500m
            memory: 2500Mi
      serviceAccountName: prometheus
      volumes:
      - name: data
        emptyDir: {}
      - name: config-volume
        configMap:
          name: prometheus-config

- Run the command

kubectl create -f prometheus.deploy.yaml
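Creating the Deployment returns immediately, so it can help to wait until the pod is actually ready before moving on. A minimal sketch using `kubectl rollout status`; the helper name `wait_for_deployment` and the 120s default timeout are assumptions made for this example.

```shell
# Hypothetical helper: block until a Deployment in kube-system is ready.
wait_for_deployment() {
  # $1: Deployment name, $2: timeout (defaults to 120s)
  kubectl rollout status "deployment/$1" \
    --namespace kube-system \
    --timeout="${2:-120s}"
}

# Only run when kubectl is available on this machine.
if command -v kubectl >/dev/null 2>&1; then
  wait_for_deployment prometheus
  # If the rollout stalls, the container log usually names the
  # offending flag or configuration line.
  kubectl logs deployment/prometheus --namespace kube-system --tail=20
fi
```

The same helper works for the Grafana Deployment later on, for example `wait_for_deployment grafana 300s`.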

prometheus.svc.yaml

- Configuration file

---
kind: Service
apiVersion: v1
metadata:
  labels:
    app: prometheus
  name: prometheus
  namespace: kube-system
spec:
  type: NodePort
  ports:
  - port: 9090
    targetPort: 9090
    nodePort: 30003
  selector:
    app: prometheus

- Run the command

kubectl create -f prometheus.svc.yaml

Deploy Grafana

pv.yaml

- Configuration file

apiVersion: v1
kind: PersistentVolume
metadata:
  name: pvtest
  labels:
    name: pvtest
spec:
  nfs:
    path: /data/volumes/v1
    server: nfs
  accessModes: ["ReadWriteMany","ReadWriteOnce"]
  capacity:
    storage: 5Gi

- Run the command

kubectl apply -f pv.yaml

pvc.yaml

- Configuration file

apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: pvctest
  namespace: kube-system
spec:
  accessModes: ["ReadWriteMany"]
  resources:
    requests:
      storage: 4Gi

- Run the command

kubectl apply -f pvc.yaml
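Before deploying Grafana it is worth confirming that the claim has been bound, since a pod that references an unbound claim will not be scheduled. A minimal sketch that reads the claim's phase via a JSONPath query; the helper name `pvc_phase` is hypothetical.

```shell
# Hypothetical helper: print the phase (Pending, Bound, ...) of a
# PersistentVolumeClaim in kube-system.
pvc_phase() {
  # $1: claim name
  kubectl get pvc "$1" --namespace kube-system -o "jsonpath={.status.phase}"
}

# Only run when kubectl is available on this machine.
if command -v kubectl >/dev/null 2>&1; then
  echo "pvctest: $(pvc_phase pvctest)"
fi
```

If the phase stays at Pending, `kubectl describe pvc pvctest -n kube-system` lists the events that explain why no volume was bound.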

grafana-deploy.yaml

- Configuration file

apiVersion: apps/v1
kind: Deployment
metadata:
  name: grafana
  namespace: kube-system
  labels:
    app: grafana
spec:
  replicas: 1
  selector:
    matchLabels:
      app: grafana
  template:
    metadata:
      labels:
        app: grafana
    spec:
      volumes:
      - name: storage
        persistentVolumeClaim:
          claimName: pvctest
      securityContext:
        fsGroup: 472
        runAsUser: 472
      containers:
      - name: grafana
        image: grafana/grafana:latest
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 3000
          name: grafana
        env:
        - name: GF_SECURITY_ADMIN_USER
          value: admin
        - name: GF_SECURITY_ADMIN_PASSWORD
          value: admin321
        resources:
          limits:
            memory: "1Gi"
            cpu: "500m"
          requests:
            memory: "500Mi"
            cpu: "100m"
        volumeMounts:
        - mountPath: /var/lib/grafana
          subPath: ""
          name: storage

- Run the command

kubectl create -f grafana-deploy.yaml

grafana-svc.yaml

- Configuration file

apiVersion: v1
kind: Service
metadata:
  name: grafana
  namespace: kube-system
  labels:
    app: grafana
    component: core
spec:
  type: NodePort
  ports:
  - port: 3000
    targetPort: 3000
    nodePort: 30004
  selector:
    app: grafana

- Run the command

kubectl create -f grafana-svc.yaml

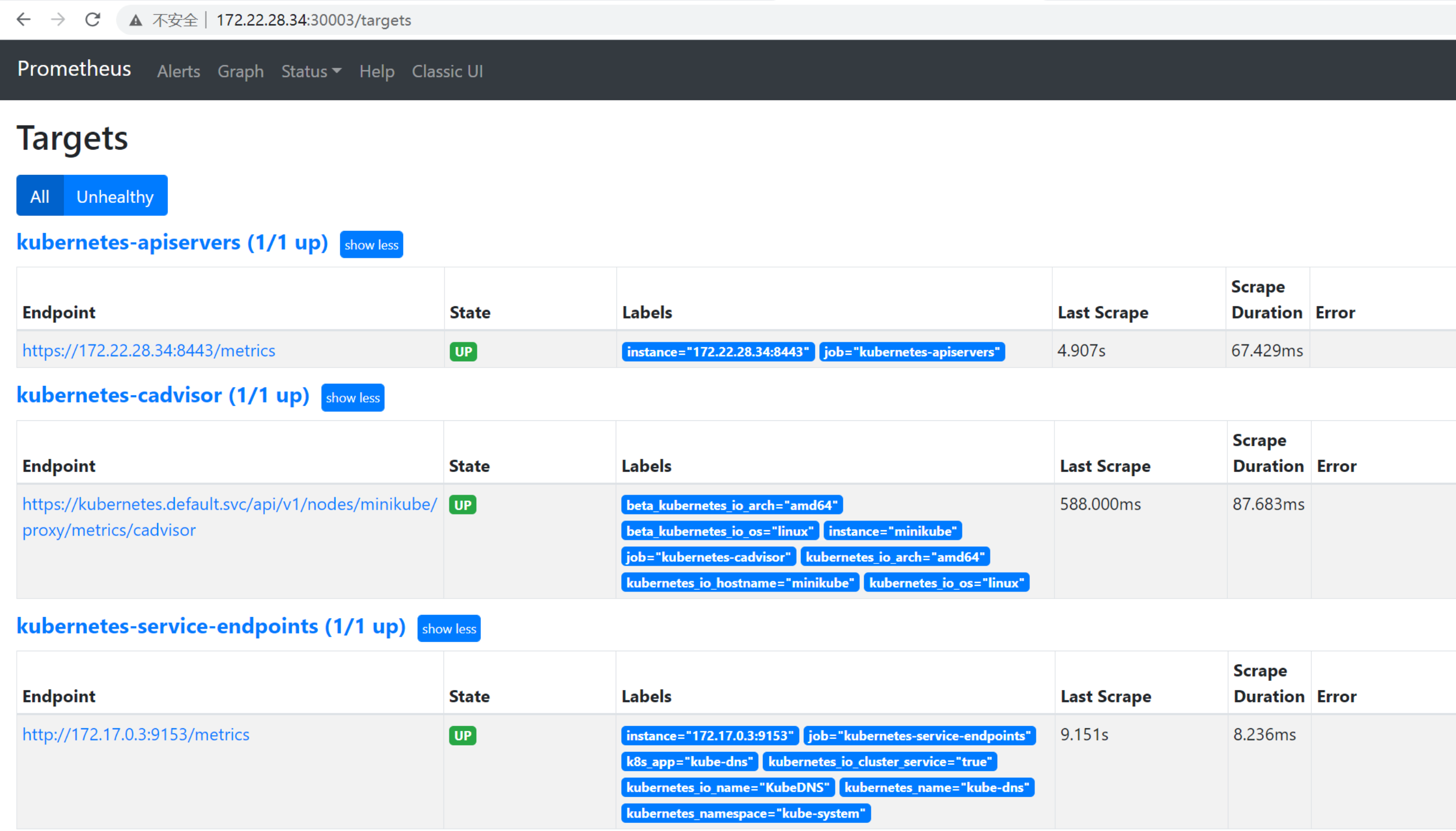

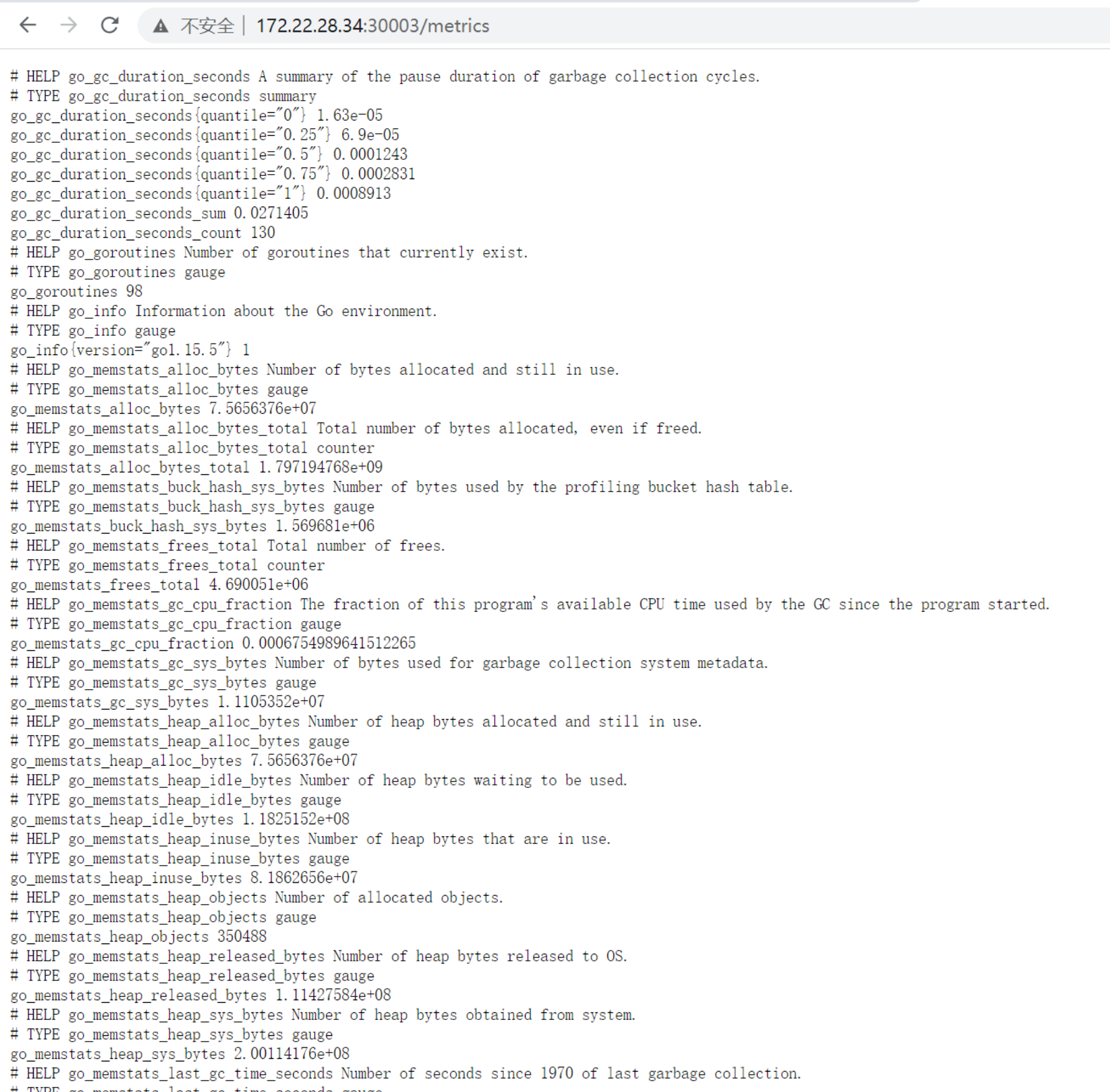
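Once the Service exists, Grafana's health endpoint offers a quick check from the command line. The sketch below assumes that the minikube node IP is reachable from the host and that `curl` is installed; `/api/health` is Grafana's own health route, while the helper name `grafana_healthy` is made up here.

```shell
# Hypothetical helper: succeed if Grafana's health endpoint answers.
grafana_healthy() {
  # $1: node IP, $2: NodePort (30004 in grafana-svc.yaml)
  curl -fsS "http://$1:${2:-30004}/api/health" >/dev/null
}

# Only run when both tools are available on this machine.
if command -v minikube >/dev/null 2>&1 && command -v curl >/dev/null 2>&1; then
  if grafana_healthy "$(minikube ip)"; then
    echo "Grafana is up"
  else
    echo "Grafana is not reachable yet"
  fi
fi
```

A failing check right after creation is not necessarily an error, since the pod may still be starting.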

环境验证

-

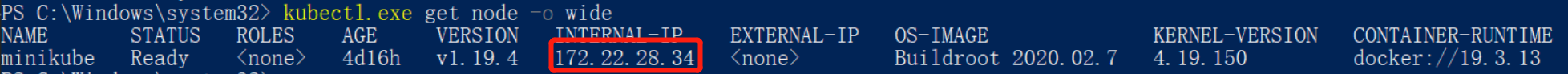

查看Node节点IP

-

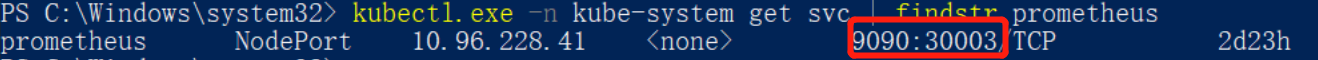

查看Prometheus对外端口

-

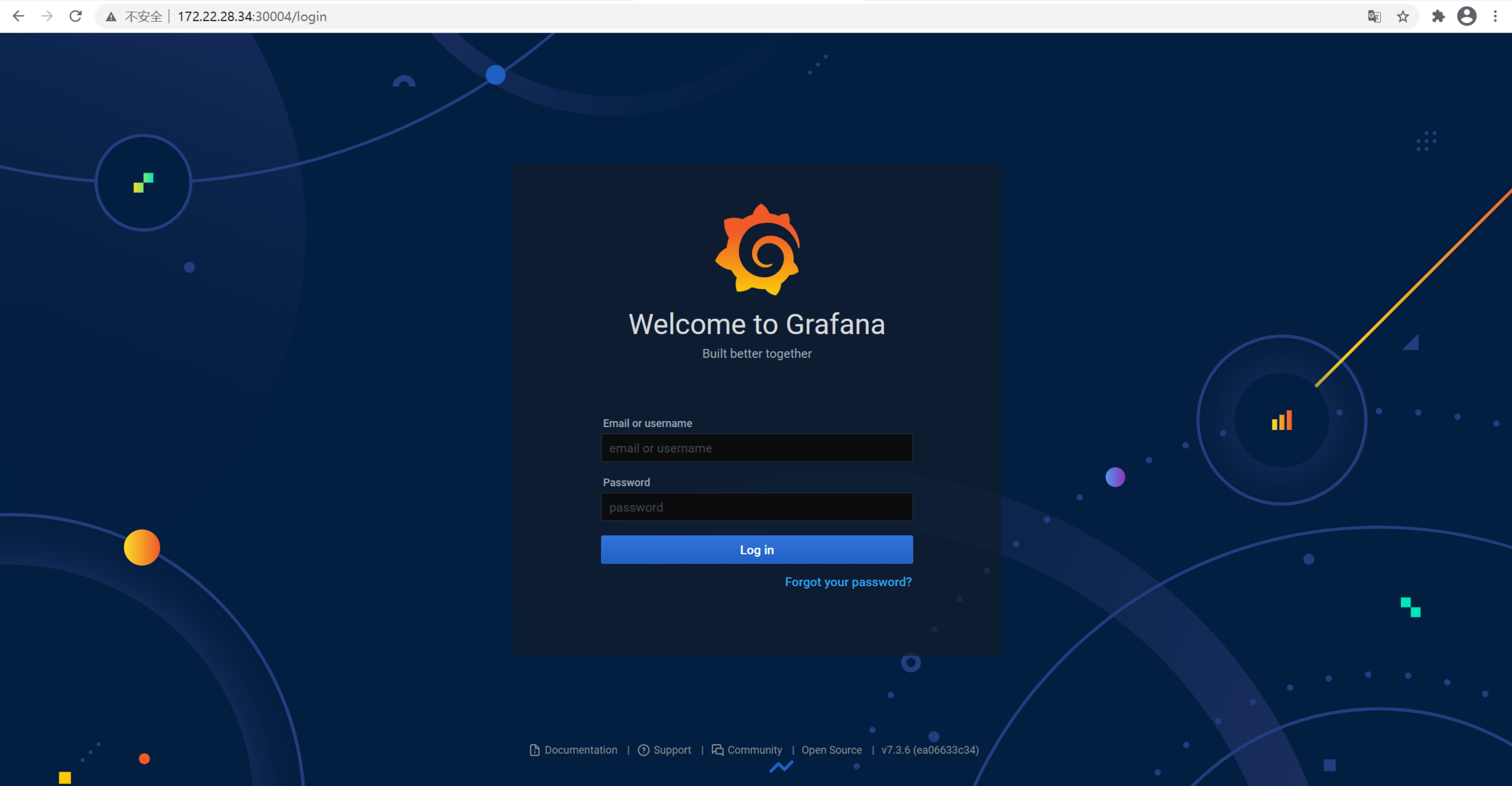

查看Grafana对外端口

-

页面查看Prometheus

-

页面查看Grafana(登录账号: admin/admin321)
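The lookups in this checklist can be combined into one small script that prints the two URLs to open. This is a sketch under the assumption that the node IP reported by `minikube ip` is reachable from the host; the helper names `node_port` and `service_url` are hypothetical.

```shell
# Hypothetical helper: read the first nodePort of a Service in kube-system.
node_port() {
  # $1: Service name
  kubectl get service "$1" --namespace kube-system \
    -o "jsonpath={.spec.ports[0].nodePort}"
}

# Hypothetical helper: compose the URL to open in the browser.
service_url() {
  # $1: node IP, $2: Service name
  printf 'http://%s:%s\n' "$1" "$(node_port "$2")"
}

# Only run when both tools are available on this machine.
if command -v minikube >/dev/null 2>&1 && command -v kubectl >/dev/null 2>&1; then
  node_ip="$(minikube ip)"
  service_url "$node_ip" prometheus   # nodePort 30003 per prometheus.svc.yaml
  service_url "$node_ip" grafana      # nodePort 30004 per grafana-svc.yaml
fi
```

If the node IP cannot be reached from the host, which can happen with some minikube drivers, `minikube service grafana -n kube-system --url` is an alternative way to obtain a usable address.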