Using Ceph as a Unified Storage Solution for OpenStack

2019-04-25 19:37 云物互联

Previous Articles

"Manually Deploying a Two-Node OpenStack Rocky Cluster"

"Manually Deploying a Three-Node Ceph Mimic Cluster"

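Unified Storage Solution

Using Ceph as the storage backend for OpenStack brings the following benefits:

- No expensive commercial storage appliances are needed, which lowers the cost of deploying OpenStack.

- Ceph provides block storage, file system storage, and object storage at the same time, which covers the storage types OpenStack needs.

- RBD copy-on-write (COW) cloning allows many OpenStack instances to be booted quickly and concurrently.

- OpenStack instances get persistent volumes by default.

- OpenStack volumes get snapshot, backup, and replication capabilities.

- Swift- and S3-compatible APIs are available for object storage.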

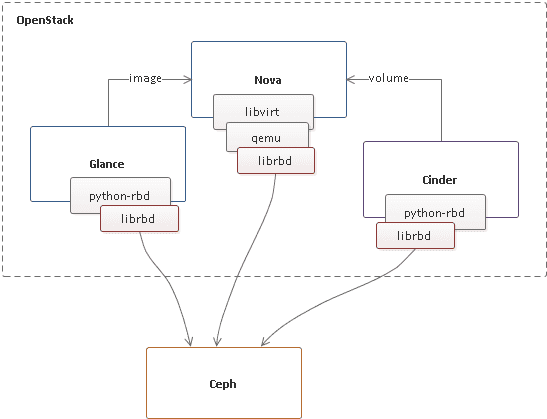

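In production environments, Nova, Cinder, and Glance are commonly integrated with Ceph RBD. In addition, Swift and Manila can be backed by Ceph RGW and CephFS respectively. As a unified storage solution, Ceph effectively reduces the complexity and operating cost of an OpenStack cloud.

Configuring Ceph RBD

NOTE: The following steps assume the existing two-node OpenStack deployment and three-node Ceph cluster. See the deployment architecture described in the articles listed at the top of this post.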

- Images: OpenStack Glance manages images for VMs. Images are immutable. OpenStack treats images as binary blobs and downloads them accordingly.

- Volumes: Volumes are block devices. OpenStack uses volumes to boot VMs, or to attach volumes to running VMs. OpenStack manages volumes using Cinder services.

- Guest Disks: Guest disks are guest operating system disks. By default, when you boot a virtual machine, its disk appears as a file on the filesystem of the hypervisor (usually under /var/lib/nova/instances//). Prior to OpenStack Havana, the only way to boot a VM in Ceph was to use the boot-from-volume functionality of Cinder. However, now it is possible to boot every virtual machine inside Ceph directly without using Cinder, which is advantageous because it allows you to perform maintenance operations easily with the live-migration process. Additionally, if your hypervisor dies it is also convenient to trigger nova evacuate and run the virtual machine elsewhere almost seamlessly.

Create dedicated RBD pools for Glance, Nova, and Cinder:

# glance-api

ceph osd pool create images 128

rbd pool init images

# cinder-volume

ceph osd pool create volumes 128

rbd pool init volumes

# cinder-backup [optional]

ceph osd pool create backups 128

rbd pool init backups

# nova-compute

ceph osd pool create vms 128

rbd pool init vms
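The four pools above follow the same two-step pattern, so the commands can be generated in a loop. The sketch below is a dry run that only prints the commands. The helper name pool_setup_cmds is an assumption made for illustration, and 128 simply mirrors the PG count used above rather than a recommendation.

```shell
# Print (do not run) the pool setup commands for each service pool.
# Usage: pool_setup_cmds <pg_num> <pool>...
pool_setup_cmds() {
    pg_num="$1"
    shift
    for pool in "$@"; do
        printf 'ceph osd pool create %s %s\n' "$pool" "$pg_num"
        printf 'rbd pool init %s\n' "$pool"
    done
}

# Same pools and PG count as in the commands above.
pool_setup_cmds 128 images volumes backups vms
```

Review the printed list first; piping it to sh on a Ceph admin node would then execute it.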

Install the Ceph client on the OpenStack nodes:

ssh-copy-id -i ~/.ssh/id_rsa.pub root@controller

ssh-copy-id -i ~/.ssh/id_rsa.pub root@compute

# Still run from /opt/ceph/deploy

[root@ceph-node1 deploy]# ceph-deploy install controller compute

[root@ceph-node1 deploy]# ceph-deploy --overwrite-conf admin controller compute

[root@controller ~]# ceph -s

cluster:

id: d82f0b96-6a69-4f7f-9d79-73d5bac7dd6c

health: HEALTH_OK

services:

mon: 3 daemons, quorum ceph-node1,ceph-node2,ceph-node3

mgr: ceph-node1(active), standbys: ceph-node2, ceph-node3

osd: 9 osds: 9 up, 9 in

rgw: 3 daemons active

data:

pools: 8 pools, 544 pgs

objects: 221 objects, 2.2 KiB

usage: 9.5 GiB used, 80 GiB / 90 GiB avail

pgs: 544 active+clean

[root@compute ~]# ceph -s

cluster:

id: d82f0b96-6a69-4f7f-9d79-73d5bac7dd6c

health: HEALTH_OK

services:

mon: 3 daemons, quorum ceph-node1,ceph-node2,ceph-node3

mgr: ceph-node1(active), standbys: ceph-node2, ceph-node3

osd: 9 osds: 9 up, 9 in

rgw: 3 daemons active

data:

pools: 8 pools, 544 pgs

objects: 221 objects, 2.2 KiB

usage: 9.5 GiB used, 80 GiB / 90 GiB avail

pgs: 544 active+clean

- Make sure python-rbd is installed on the glance-api node

[root@controller ~]# rpm -qa | grep python-rbd

python-rbd-13.2.5-0.el7.x86_64

- Make sure ceph-common is installed on the nova-compute and cinder-volume nodes

[root@controller ~]# rpm -qa | grep ceph-common

ceph-common-13.2.5-0.el7.x86_64

[root@compute ~]# rpm -qa | grep ceph-common

ceph-common-13.2.5-0.el7.x86_64

Create cephx users for Glance and Cinder:

# glance-api

ceph auth get-or-create client.glance mon 'profile rbd' osd 'profile rbd pool=images'

# cinder-volume

ceph auth get-or-create client.cinder mon 'profile rbd' osd 'profile rbd pool=volumes, profile rbd pool=vms, profile rbd pool=images'

# cinder-backup [optional]

ceph auth get-or-create client.cinder-backup mon 'profile rbd' osd 'profile rbd pool=backups'
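The osd capability string is just one "profile rbd pool=<name>" clause per pool, joined with commas. As a minimal sketch, the hypothetical helper rbd_osd_caps below builds that string from a list of pool names and prints the resulting command without running it.

```shell
# Build the osd caps string used by `ceph auth get-or-create`.
# Usage: rbd_osd_caps <pool>...
rbd_osd_caps() {
    caps=""
    for pool in "$@"; do
        if [ -n "$caps" ]; then
            caps="${caps}, "
        fi
        caps="${caps}profile rbd pool=${pool}"
    done
    printf '%s\n' "$caps"
}

# Dry run: print the client.cinder command from above.
printf "ceph auth get-or-create client.cinder mon 'profile rbd' osd '%s'\n" \
    "$(rbd_osd_caps volumes vms images)"
```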

Create keyring files for the new client.cinder and client.glance users so that the OpenStack Cinder and Glance services can access the Ceph cluster as those users:

# glance-api

ceph auth get-or-create client.glance | ssh root@controller sudo tee /etc/ceph/ceph.client.glance.keyring

ssh root@controller sudo chown glance:glance /etc/ceph/ceph.client.glance.keyring

# cinder-volume

ceph auth get-or-create client.cinder | ssh root@controller sudo tee /etc/ceph/ceph.client.cinder.keyring

ssh root@controller sudo chown cinder:cinder /etc/ceph/ceph.client.cinder.keyring

# cinder-backup [optional]

ceph auth get-or-create client.cinder-backup | ssh root@controller sudo tee /etc/ceph/ceph.client.cinder-backup.keyring

ssh root@controller sudo chown cinder:cinder /etc/ceph/ceph.client.cinder-backup.keyring

# nova-compute

ceph auth get-or-create client.cinder | ssh root@compute sudo tee /etc/ceph/ceph.client.cinder.keyring

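The Libvirt process on a compute node needs to access the Ceph cluster when it attaches or detaches a volume provided by Cinder. The key of the client.cinder user therefore has to be added to the Libvirt daemon as a secret. Note that nova-compute runs on both the Controller and the Compute node, so the secret must be added to Libvirt on both nodes, and it must be the same secret on both: the one used by cinder-volume.

# Generate a random UUID to uniquely identify the Libvirt secret

# Generate it only once; every cinder-volume and nova-compute uses the same UUID.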

[root@compute ~]# uuidgen

4810c760-dc42-4e5f-9d41-7346db7d7da2

## Compute

ceph auth get-key client.cinder | ssh root@compute tee /tmp/client.cinder.key

## Controller

ceph auth get-key client.cinder | ssh root@controller tee /tmp/client.cinder.key

# Run the following steps on both nodes

# Create the Libvirt secret file

[root@compute ~]# cat > /tmp/secret.xml <<EOF

> <secret ephemeral='no' private='no'>

> <uuid>4810c760-dc42-4e5f-9d41-7346db7d7da2</uuid>

> <usage type='ceph'>

> <name>client.cinder secret</name>

> </usage>

> </secret>

> EOF

# Define a Libvirt secret

[root@compute ~]# sudo virsh secret-define --file /tmp/secret.xml

Secret 4810c760-dc42-4e5f-9d41-7346db7d7da2 created

# Set the secret value to the key of the Ceph client.cinder user; with this key Libvirt can access the Ceph cluster as the cinder user

[root@compute ~]# sudo virsh secret-set-value --secret 4810c760-dc42-4e5f-9d41-7346db7d7da2 --base64 $(cat /tmp/client.cinder.key)

Secret value set

[root@compute ~]# sudo virsh secret-list

UUID Usage

--------------------------------------------------------------------------------

4810c760-dc42-4e5f-9d41-7346db7d7da2 ceph client.cinder secret
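Because the same secret has to be defined on every node, it can help to render the XML from a single UUID instead of retyping it. The sketch below prints the same document as the heredoc above; the function name render_secret_xml is a hypothetical helper, and the indentation inside the XML is cosmetic.

```shell
# Render the Libvirt secret definition for a given UUID.
# Usage: render_secret_xml <uuid> > /tmp/secret.xml
render_secret_xml() {
    uuid="$1"
    cat <<EOF
<secret ephemeral='no' private='no'>
  <uuid>${uuid}</uuid>
  <usage type='ceph'>
    <name>client.cinder secret</name>
  </usage>
</secret>
EOF
}

# The UUID generated once with uuidgen above.
render_secret_xml 4810c760-dc42-4e5f-9d41-7346db7d7da2
```

The output can be redirected to /tmp/secret.xml on each node and then passed to virsh secret-define as shown above.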

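Using Ceph RBD as the Glance Backend

Glance provides the image and image-metadata registry service for OpenStack and supports several storage backends. Once it is integrated with Ceph, images uploaded through Glance are stored in the Ceph cluster as block devices. Newer Glance releases also support enabled_backends, which lets one deployment use several storage providers at the same time.

Integrating the Glance API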

# /etc/glance/glance-api.conf

[DEFAULT]

# ENABLE COPY-ON-WRITE CLONING OF IMAGES

show_image_direct_url = True

[glance_store]

## Local File

# stores = file,http

# default_store = file

# filesystem_store_datadir = /var/lib/glance/images/

## Ceph RBD

stores = rbd

default_store = rbd

rbd_store_pool = images

rbd_store_user = glance

rbd_store_ceph_conf = /etc/ceph/ceph.conf

rbd_store_chunk_size = 8

[paste_deploy]

flavor = keystone

Restart the glance-api service:

systemctl restart openstack-glance-api

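Creating an Image

Note that once Glance is backed by Ceph, images are usually created in RAW format rather than QCOW2. Otherwise the image has to be copied in full when a VM is created, and the COW cloning of Ceph RBD goes unused.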

[root@controller ~]# qemu-img info cirros-0.3.4-x86_64-disk.img

image: cirros-0.3.4-x86_64-disk.img

file format: qcow2

virtual size: 39M (41126400 bytes)

disk size: 13M

cluster_size: 65536

Format specific information:

compat: 0.10

refcount bits: 16

[root@controller ~]# qemu-img convert -f qcow2 -O raw cirros-0.3.4-x86_64-disk.img cirros-0.3.4-x86_64-disk.raw

[root@controller ~]# qemu-img info cirros-0.3.4-x86_64-disk.raw

image: cirros-0.3.4-x86_64-disk.raw

file format: raw

virtual size: 39M (41126400 bytes)

disk size: 18M

[root@controller ~]# openstack image create --container-format bare --disk-format raw --file cirros-0.3.4-x86_64-disk.raw --unprotected --public cirros_raw

+------------------+------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

| Field | Value |

+------------------+------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

| checksum | 56730d3091a764d5f8b38feeef0bfcef |

| container_format | bare |

| created_at | 2019-04-26T09:44:00Z |

| disk_format | raw |

| file | /v2/images/d18923bd-86fc-4f77-b5e8-976d3b1c367c/file |

| id | d18923bd-86fc-4f77-b5e8-976d3b1c367c |

| min_disk | 0 |

| min_ram | 0 |

| name | cirros_raw |

| owner | a2b55e37121042a1862275a9bc9b0223 |

| properties | direct_url='rbd://d82f0b96-6a69-4f7f-9d79-73d5bac7dd6c/images/d18923bd-86fc-4f77-b5e8-976d3b1c367c/snap', os_hash_algo='sha512', os_hash_value='34f5709bc2363eafe857ba1344122594a90a9b8cc9d80047c35f7e34e8ac28ef1e14e2e3c13d55a43b841f533435e914b01594f2c14dd597ff9949c8389e3006', os_hidden='False' |

| protected | False |

| schema | /v2/schemas/image |

| size | 41126400 |

| status | active |

| tags | |

| updated_at | 2019-04-26T09:44:03Z |

| virtual_size | None |

| visibility | public |

+------------------+------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

[root@ceph-node1 ~]# rbd ls images

d18923bd-86fc-4f77-b5e8-976d3b1c367c

[root@ceph-node1 ~]# rbd info images/d18923bd-86fc-4f77-b5e8-976d3b1c367c

rbd image 'd18923bd-86fc-4f77-b5e8-976d3b1c367c':

size 39 MiB in 5 objects

order 23 (8 MiB objects)

id: 1dc7d182f52ff

block_name_prefix: rbd_data.1dc7d182f52ff

format: 2

features: layering

op_features:

flags:

create_timestamp: Fri Apr 26 05:44:00 2019

[root@ceph-node1 ~]# rbd snap ls images/d18923bd-86fc-4f77-b5e8-976d3b1c367c

SNAPID NAME SIZE TIMESTAMP

8 snap 39 MiB Fri Apr 26 05:44:03 2019

[root@ceph-node1 ~]# rbd info images/d18923bd-86fc-4f77-b5e8-976d3b1c367c@snap

rbd image 'd18923bd-86fc-4f77-b5e8-976d3b1c367c':

size 39 MiB in 5 objects

order 23 (8 MiB objects)

id: 1dc7d182f52ff

block_name_prefix: rbd_data.1dc7d182f52ff

format: 2

features: layering

op_features:

flags:

create_timestamp: Fri Apr 26 05:44:00 2019

protected: True

[root@ceph-node1 ~]# rados ls -p images

rbd_data.1dc7d182f52ff.0000000000000001

rbd_data.1dc7d182f52ff.0000000000000000

rbd_directory

rbd_data.1dc7d182f52ff.0000000000000004

rbd_id.d18923bd-86fc-4f77-b5e8-976d3b1c367c

rbd_data.1dc7d182f52ff.0000000000000002

rbd_info

rbd_data.1dc7d182f52ff.0000000000000003

rbd_header.1dc7d182f52ff

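As shown above, creating a RAW Glance image does the following in Ceph:

- A block device named {glance_image_uuid} is created in Pool images. Its object size is 8 MiB and it is backed by 5 objects, enough to hold the 39 MiB of cirros.raw.

- A snapshot of the new block device is taken.

- The snapshot is protected.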
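The object counts reported by rbd info follow from ceiling division of the image size by the object size, and the order value is the base-2 logarithm of the object size in bytes. The following sketch checks the numbers from the output above; objects_needed and rbd_order are hypothetical helper names, not part of any Ceph tool.

```shell
# Number of RADOS objects needed to hold an image (ceiling division).
# Usage: objects_needed <size_bytes> <object_size_mib>
objects_needed() {
    size_bytes="$1"
    object_bytes=$(( $2 * 1024 * 1024 ))
    echo $(( (size_bytes + object_bytes - 1) / object_bytes ))
}

# RBD "order" for an object size given in MiB: log2(object size in bytes).
# Usage: rbd_order <object_size_mib>
rbd_order() {
    bytes=$(( $1 * 1024 * 1024 ))
    order=0
    while [ "$bytes" -gt 1 ]; do
        bytes=$(( bytes / 2 ))
        order=$(( order + 1 ))
    done
    echo "$order"
}

objects_needed 41126400 8     # cirros raw image, 8 MiB objects: prints 5
objects_needed 1073741824 4   # 1 GiB volume, 4 MiB objects: prints 256
rbd_order 8                   # rbd_store_chunk_size = 8: prints 23
```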

Creating a Glance image is equivalent to running the following commands:

rbd -p ${GLANCE_POOL} create --size ${SIZE} ${IMAGE_ID}

rbd -p ${GLANCE_POOL} snap create ${IMAGE_ID}@snap

rbd -p ${GLANCE_POOL} snap protect ${IMAGE_ID}@snap

Deleting a Glance image is equivalent to running the following commands:

rbd -p ${GLANCE_POOL} snap unprotect ${IMAGE_ID}@snap

rbd -p ${GLANCE_POOL} snap rm ${IMAGE_ID}@snap

rbd -p ${GLANCE_POOL} rm ${IMAGE_ID}

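Using Ceph as the Cinder Backend

Cinder provides the volume service for OpenStack and supports a very wide range of storage backends. Once it is integrated with Ceph, a volume created by Cinder is simply a Ceph RBD block device, and when the volume is attached to a VM, Libvirt accesses the disk over the rbd protocol. Besides cinder-volume, the Cinder backup service can also use Ceph, uploading backups to the Ceph cluster as objects or block devices.

Integrating Cinder Volume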

# /etc/cinder/cinder.conf

[DEFAULT]

...

enabled_backends = lvm,ceph

glance_api_version = 2

glance_api_servers = http://controller:9292

[lvm]

volume_driver = cinder.volume.drivers.lvm.LVMVolumeDriver

volume_backend_name = lvm

volume_group = cinder-volumes

iscsi_protocol = iscsi

iscsi_helper = lioadm

[ceph]

volume_driver = cinder.volume.drivers.rbd.RBDDriver

volume_backend_name = ceph

rbd_pool = volumes

rbd_ceph_conf = /etc/ceph/ceph.conf

rbd_flatten_volume_from_snapshot = false

rbd_max_clone_depth = 5

rbd_store_chunk_size = 4

rados_connect_timeout = -1

# for cephx authentication

rbd_user = cinder

# UUID of the Libvirt secret that user cinder uses to access the Ceph cluster

rbd_secret_uuid = 4810c760-dc42-4e5f-9d41-7346db7d7da2

Restart the cinder-volume service:

systemctl restart openstack-cinder-volume

Integrating Cinder Backup

# /etc/cinder/cinder.conf

[DEFAULT]

...

backup_driver = cinder.backup.drivers.ceph

backup_ceph_conf = /etc/ceph/ceph.conf

backup_ceph_user = cinder-backup

backup_ceph_chunk_size = 134217728

backup_ceph_pool = backups

backup_ceph_stripe_unit = 0

backup_ceph_stripe_count = 0

restore_discard_excess_bytes = true

Start the cinder-backup service:

systemctl enable openstack-cinder-backup.service

systemctl start openstack-cinder-backup.service

systemctl status openstack-cinder-backup.service

[root@controller ~]# openstack volume service list

+------------------+-----------------+------+---------+-------+----------------------------+

| Binary | Host | Zone | Status | State | Updated At |

+------------------+-----------------+------+---------+-------+----------------------------+

| cinder-scheduler | controller | nova | enabled | up | 2019-04-27T04:44:57.000000 |

| cinder-volume | controller@lvm | nova | enabled | up | 2019-04-27T04:44:59.000000 |

| cinder-volume | controller@ceph | nova | enabled | up | 2019-04-27T04:45:00.000000 |

| cinder-backup | controller | nova | enabled | up | 2019-04-27T04:44:55.000000 |

+------------------+-----------------+------+---------+-------+----------------------------+

Creating an Empty Volume

Create the volume types, then an RBD-backed volume:

[root@controller ~]# openstack volume type create --public --property volume_backend_name="ceph" ceph_rbd

+-------------+--------------------------------------+

| Field | Value |

+-------------+--------------------------------------+

| description | None |

| id | 5cfadfea-df0a-4c2c-917c-2575cc968b5d |

| is_public | True |

| name | ceph_rbd |

| properties | volume_backend_name='ceph' |

+-------------+--------------------------------------+

[root@controller ~]# openstack volume type create --public --property volume_backend_name="lvm" local_lvm

+-------------+--------------------------------------+

| Field | Value |

+-------------+--------------------------------------+

| description | None |

| id | cb718d85-1abf-43e9-a644-a02365ec6e66 |

| is_public | True |

| name | local_lvm |

| properties | volume_backend_name='lvm' |

+-------------+--------------------------------------+

[root@controller ~]# openstack volume create --type ceph_rbd --size 1 ceph_rbd_vol01

+---------------------+--------------------------------------+

| Field | Value |

+---------------------+--------------------------------------+

| attachments | [] |

| availability_zone | nova |

| bootable | false |

| consistencygroup_id | None |

| created_at | 2019-04-25T11:05:43.000000 |

| description | None |

| encrypted | False |

| id | 1f8a3f58-72b2-4c81-958c-d2f15d835fe2 |

| migration_status | None |

| multiattach | False |

| name | ceph_rbd_vol01 |

| properties | |

| replication_status | None |

| size | 1 |

| snapshot_id | None |

| source_volid | None |

| status | creating |

| type | ceph_rbd |

| updated_at | None |

| user_id | 92602c24daa24f019f05ecb95f1ce68e |

+---------------------+--------------------------------------+

[root@controller ~]# openstack volume list

+--------------------------------------+----------------+-----------+------+-------------+

| ID | Name | Status | Size | Attached to |

+--------------------------------------+----------------+-----------+------+-------------+

| 1f8a3f58-72b2-4c81-958c-d2f15d835fe2 | ceph_rbd_vol01 | available | 1 | |

+--------------------------------------+----------------+-----------+------+-------------+

Inspect the objects in Pool volumes:

[root@ceph-node1 ~]# rbd ls volumes

volume-1f8a3f58-72b2-4c81-958c-d2f15d835fe2

[root@ceph-node1 ~]# rbd info volumes/volume-1f8a3f58-72b2-4c81-958c-d2f15d835fe2

rbd image 'volume-1f8a3f58-72b2-4c81-958c-d2f15d835fe2':

size 1 GiB in 256 objects

order 22 (4 MiB objects)

id: 149a77b0d206a

block_name_prefix: rbd_data.149a77b0d206a

format: 2

features: layering

op_features:

flags:

create_timestamp: Thu Apr 25 07:05:45 2019

[root@ceph-node1 ~]# rados ls -p volumes

rbd_directory

rbd_info

rbd_header.149a77b0d206a

rbd_id.volume-1f8a3f58-72b2-4c81-958c-d2f15d835fe2

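As shown above, a block device named volume-{cinder_volume_uuid} was created in Pool volumes, and it has no data objects yet. The volume holds no data so far, so RBD has not allocated any objects for it. Once the volume is attached and data is written to it, the number of objects grows accordingly, which reflects the thin provisioning of RBD.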
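One way to watch thin provisioning at work is to count the rbd_data.* entries in a rados listing. The sketch below uses a hypothetical helper, count_data_objects, that reads a listing on stdin; the sample input is copied from the empty volume's listing shown above.

```shell
# Count RBD data objects in a `rados ls` listing read from stdin.
# grep -c exits non-zero when it finds nothing, so `|| true` keeps
# the helper usable under `set -e` while still printing 0.
count_data_objects() {
    grep -c '^rbd_data\.' || true
}

# Listing of the freshly created, still empty volume: prints 0.
printf '%s\n' \
    'rbd_directory' \
    'rbd_info' \
    'rbd_header.149a77b0d206a' \
    'rbd_id.volume-1f8a3f58-72b2-4c81-958c-d2f15d835fe2' \
    | count_data_objects
```

On a live cluster the same helper would be fed by rados ls -p volumes, and the count should rise as data is written to the attached volume.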

Creating an empty volume is equivalent to running the following command:

rbd -p ${CINDER_POOL} create --new-format --size ${SIZE} volume-${VOLUME_ID}

Creating a Volume from an Image

[root@controller ~]# openstack volume create --image d18923bd-86fc-4f77-b5e8-976d3b1c367c --type ceph_rbd --size 1 clone_from_image_cirros_raw

+---------------------+--------------------------------------+

| Field | Value |

+---------------------+--------------------------------------+

| attachments | [] |

| availability_zone | nova |

| bootable | false |

| consistencygroup_id | None |

| created_at | 2019-04-26T09:44:52.000000 |

| description | None |

| encrypted | False |

| id | 1bf49373-ded2-4f5d-90cd-919c0b0b1ed6 |

| migration_status | None |

| multiattach | False |

| name | clone_from_image_cirros_raw |

| properties | |

| replication_status | None |

| size | 1 |

| snapshot_id | None |

| source_volid | None |

| status | creating |

| type | ceph_rbd |

| updated_at | None |

| user_id | 92602c24daa24f019f05ecb95f1ce68e |

+---------------------+--------------------------------------+

[root@controller ~]# openstack volume list

+--------------------------------------+-----------------------------+-----------+------+-------------+

| ID | Name | Status | Size | Attached to |

+--------------------------------------+-----------------------------+-----------+------+-------------+

| 1bf49373-ded2-4f5d-90cd-919c0b0b1ed6 | clone_from_image_cirros_raw | available | 1 | |

+--------------------------------------+-----------------------------+-----------+------+-------------+

[root@ceph-node1 ~]# rbd ls volumes

volume-1bf49373-ded2-4f5d-90cd-919c0b0b1ed6

[root@ceph-node1 ~]# rbd info volumes/volume-1bf49373-ded2-4f5d-90cd-919c0b0b1ed6

rbd image 'volume-1bf49373-ded2-4f5d-90cd-919c0b0b1ed6':

size 1 GiB in 256 objects

order 22 (4 MiB objects)

id: 1dcad1ca7dcf8

block_name_prefix: rbd_data.1dcad1ca7dcf8

format: 2

features: layering

op_features:

flags:

create_timestamp: Fri Apr 26 05:44:53 2019

parent: images/d18923bd-86fc-4f77-b5e8-976d3b1c367c@snap

overlap: 39 MiB

[root@ceph-node1 ~]# rados ls -p volumes

rbd_id.volume-1bf49373-ded2-4f5d-90cd-919c0b0b1ed6

rbd_directory

rbd_children

rbd_info

rbd_header.1dcad1ca7dcf8

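As shown above, creating a volume from an image uses Ceph RBD COW cloning, which is enabled by setting show_image_direct_url = True under [DEFAULT] in glance-api.conf. This option makes Glance expose the image location, and only then can the Cinder RBD driver clone the image through that location. The RBD image is also resized to the requested volume size.

Creating a volume from an image is equivalent to running the following commands: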

rbd clone ${GLANCE_POOL}/${IMAGE_ID}@snap ${CINDER_POOL}/volume-${VOLUME_ID}

if [[ -n "${SIZE}" ]]; then

rbd resize --size ${SIZE} ${CINDER_POOL}/volume-${VOLUME_ID}

fi
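The source of the clone comes from the image's direct_url, which has the form rbd://<fsid>/<pool>/<image>/<snap>, as seen in the openstack image create output earlier. As a rough sketch, the hypothetical helper clone_cmd below splits such a URL with shell parameter expansion and prints the matching rbd clone command without running it.

```shell
# Turn a Glance direct_url into the equivalent `rbd clone` command.
# Usage: clone_cmd <direct_url> <cinder_pool> <volume_id>
clone_cmd() {
    src="${1#rbd://}"         # fsid/pool/image/snap
    src="${src#*/}"           # pool/image/snap
    pool_image="${src%/*}"    # pool/image
    snap="${src##*/}"         # snap
    printf 'rbd clone %s@%s %s/volume-%s\n' "$pool_image" "$snap" "$2" "$3"
}

# Values taken from the image and volume created above.
clone_cmd \
    'rbd://d82f0b96-6a69-4f7f-9d79-73d5bac7dd6c/images/d18923bd-86fc-4f77-b5e8-976d3b1c367c/snap' \
    volumes \
    1bf49373-ded2-4f5d-90cd-919c0b0b1ed6
```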

Creating a Volume Snapshot

[root@controller ~]# openstack volume snapshot create --volume clone_from_image_cirros_raw clone_from_image_cirros_raw-snap01

+-------------+--------------------------------------+

| Field | Value |

+-------------+--------------------------------------+

| created_at | 2019-04-26T10:29:18.661664 |

| description | None |

| id | c5b1b170-e12f-4fba-bf83-1a4c206bd8fb |

| name | clone_from_image_cirros_raw-snap01 |

| properties | |

| size | 1 |

| status | creating |

| updated_at | None |

| volume_id | 1bf49373-ded2-4f5d-90cd-919c0b0b1ed6 |

+-------------+--------------------------------------+

[root@controller ~]# openstack volume snapshot list

+--------------------------------------+------------------------------------+-------------+-----------+------+

| ID | Name | Description | Status | Size |

+--------------------------------------+------------------------------------+-------------+-----------+------+

| c5b1b170-e12f-4fba-bf83-1a4c206bd8fb | clone_from_image_cirros_raw-snap01 | None | available | 1 |

+--------------------------------------+------------------------------------+-------------+-----------+------+

[root@ceph-node1 ~]# rbd snap ls volumes/volume-1bf49373-ded2-4f5d-90cd-919c0b0b1ed6

SNAPID NAME SIZE TIMESTAMP

4 snapshot-c5b1b170-e12f-4fba-bf83-1a4c206bd8fb 1 GiB Fri Apr 26 06:29:19 2019

[root@ceph-node1 ~]# rbd info volumes/volume-1bf49373-ded2-4f5d-90cd-919c0b0b1ed6@snapshot-c5b1b170-e12f-4fba-bf83-1a4c206bd8fb

rbd image 'volume-1bf49373-ded2-4f5d-90cd-919c0b0b1ed6':

size 1 GiB in 256 objects

order 22 (4 MiB objects)

id: 1dcad1ca7dcf8

block_name_prefix: rbd_data.1dcad1ca7dcf8

format: 2

features: layering

op_features:

flags:

create_timestamp: Fri Apr 26 05:44:53 2019

protected: True

parent: images/d18923bd-86fc-4f77-b5e8-976d3b1c367c@snap

overlap: 39 MiB

Taking a snapshot of a volume is equivalent to running the following commands:

rbd -p ${CINDER_POOL} snap create volume-${VOLUME_ID}@snapshot-${SNAPSHOT_ID}

rbd -p ${CINDER_POOL} snap protect volume-${VOLUME_ID}@snapshot-${SNAPSHOT_ID}

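Creating a Volume Backup

If a snapshot is a time machine, a backup is an off-site time machine: it is meant for disaster recovery. For that reason, Pool backups should generally sit in a different failure domain from Pool images, volumes, and vms.

Backups generally come in the following types:

- Full backup

- Incremental backup

- Differential backup

Run a full backup (the first backup):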

[root@controller ~]# openstack volume backup create --name ceph_rbd_vol01-bk190426 --force af7ce6b7-12c2-4bdf-933c-884a4f514617

[root@controller ~]# openstack volume backup show ceph_rbd_vol01-bk190426

+-----------------------+--------------------------------------+

| Field | Value |

+-----------------------+--------------------------------------+

| availability_zone | nova |

| container | backups |

| created_at | 2019-04-26T10:46:34.000000 |

| data_timestamp | 2019-04-26T10:46:34.000000 |

| description | None |

| fail_reason | None |

| has_dependent_backups | False |

| id | 6ca1a537-f37c-4aab-a3d3-19953a5627a2 |

| is_incremental | False |

| name | ceph_rbd_vol01-bk190426 |

| object_count | 0 |

| size | 1 |

| snapshot_id | None |

| status | available |

| updated_at | 2019-04-26T10:46:38.000000 |

| volume_id | af7ce6b7-12c2-4bdf-933c-884a4f514617 |

+-----------------------+--------------------------------------+

[root@ceph-node1 ~]# rbd snap ls volumes/volume-af7ce6b7-12c2-4bdf-933c-884a4f514617

SNAPID NAME SIZE TIMESTAMP

10 backup.6ca1a537-f37c-4aab-a3d3-19953a5627a2.snap.1556275596.5 1 GiB Fri Apr 26 06:46:37 2019

[root@ceph-node1 ~]# rbd info backups/volume-af7ce6b7-12c2-4bdf-933c-884a4f514617.backup.base

rbd image 'volume-af7ce6b7-12c2-4bdf-933c-884a4f514617.backup.base':

size 1 GiB in 256 objects

order 22 (4 MiB objects)

id: 1e69b2612219f

block_name_prefix: rbd_data.1e69b2612219f

format: 2

features: layering

op_features:

flags:

create_timestamp: Fri Apr 26 06:46:36 2019

[root@ceph-node1 ~]# rbd snap ls backups/volume-af7ce6b7-12c2-4bdf-933c-884a4f514617.backup.base

SNAPID NAME SIZE TIMESTAMP

6 backup.6ca1a537-f37c-4aab-a3d3-19953a5627a2.snap.1556275596.5 1 GiB Fri Apr 26 06:46:38 2019

[root@ceph-node1 ~]# rbd info backups/volume-af7ce6b7-12c2-4bdf-933c-884a4f514617.backup.base@backup.6ca1a537-f37c-4aab-a3d3-19953a5627a2.snap.1556275596.5

rbd image 'volume-af7ce6b7-12c2-4bdf-933c-884a4f514617.backup.base':

size 1 GiB in 256 objects

order 22 (4 MiB objects)

id: 1e69b2612219f

block_name_prefix: rbd_data.1e69b2612219f

format: 2

features: layering

op_features:

flags:

create_timestamp: Fri Apr 26 06:46:36 2019

protected: False

Running a full backup of a volume is equivalent to running the following commands:

# Create a block device of the same size in Pool backups

rbd -p ${BACKUP_POOL} create --size ${VOLUME_SIZE} volume-${VOLUME_ID}.backup.base

# Take a snapshot of the source block device (the volume)

NEW_SNAP=volume-${VOLUME_ID}@backup.${BACKUP_ID}.snap.${TIMESTAMP}

rbd -p ${CINDER_POOL} snap create ${NEW_SNAP}

# Export the diff of the source block device with export-diff, then import it into the corresponding block device in Pool backups with import-diff

rbd export-diff ${CINDER_POOL}/${NEW_SNAP} - | rbd import-diff --pool ${BACKUP_POOL} - volume-${VOLUME_ID}.backup.base

Run an incremental backup:

[root@controller ~]# openstack volume backup create --name ceph_rbd_vol01-bk190426 --force --incremental af7ce6b7-12c2-4bdf-933c-884a4f514617

# Take a snapshot of the source block device (the volume)

rbd -p ${CINDER_POOL} snap create volume-${VOLUME_ID}@backup.${BACKUP_ID}.snap.${TIMESTAMP}

# Use export-diff to export the data that changed between the two volume snapshots, then import it into the corresponding block device in Pool backups with import-diff

rbd export-diff --pool ${CINDER_POOL} --from-snap backup.${PARENT_ID}.snap.${LAST_TIMESTAMP} ${CINDER_POOL}/volume-${VOLUME_ID}@backup.${BACKUP_ID}.snap.${TIMESTAMP} - \

| rbd import-diff --pool ${BACKUP_POOL} - ${BACKUP_POOL}/volume-${VOLUME_ID}.backup.base

# Delete the previous snapshot of the volume

rbd -p ${CINDER_POOL} snap rm volume-${VOLUME_ID}@backup.${PARENT_ID}.snap.${LAST_TIMESTAMP}
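To compare the full and incremental flows side by side, the sketch below prints the rbd command sequence for either case. It is a dry run under stated assumptions: backup_cmds is a hypothetical helper, the snapshot names are placeholders, and the sequence mirrors the commands described above rather than the exact calls the Cinder driver makes through librbd.

```shell
# Dry run: print the rbd commands behind a Cinder backup.
# Usage: backup_cmds <cinder_pool> <backup_pool> <volume_id> <size_mb> <new_snap> [parent_snap]
backup_cmds() {
    src="$1/volume-$3"
    dst="$2/volume-$3.backup.base"
    size_mb="$4"
    new_snap="$5"
    parent_snap="${6:-}"
    if [ -z "$parent_snap" ]; then
        # Full backup: create the base image, then ship the whole snapshot.
        echo "rbd create --size ${size_mb} ${dst}"
        echo "rbd snap create ${src}@${new_snap}"
        echo "rbd export-diff ${src}@${new_snap} - | rbd import-diff - ${dst}"
    else
        # Incremental backup: ship only the changes since the parent snapshot,
        # then drop the parent snapshot from the source volume.
        echo "rbd snap create ${src}@${new_snap}"
        echo "rbd export-diff --from-snap ${parent_snap} ${src}@${new_snap} - | rbd import-diff - ${dst}"
        echo "rbd snap rm ${src}@${parent_snap}"
    fi
}

backup_cmds volumes backups VOLUME_ID 1024 backup.B1.snap.T1
backup_cmds volumes backups VOLUME_ID 1024 backup.B2.snap.T2 backup.B1.snap.T1
```

Omitting the last argument selects the full-backup path; passing the previous snapshot name selects the incremental path.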

Using Ceph as the Nova Backend

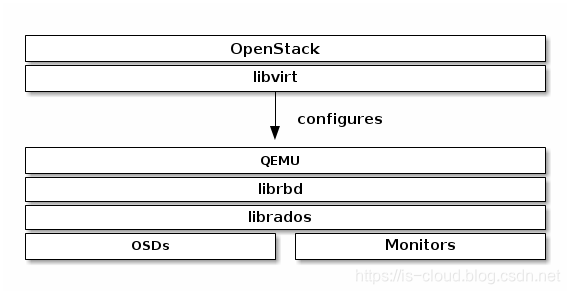

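Nova provides the compute service for OpenStack. The main goal of integrating it with Ceph is to store instance system disks in the Ceph cluster. Strictly speaking, the integration is with QEMU-KVM/libvirt rather than with Nova itself, because librbd has long been natively integrated into them.

Integrating Nova Compute

On both nodes, modify the Ceph client configuration to enable the RBD client cache and the admin socket, which helps performance and makes troubleshooting logs easier to inspect: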

$ mkdir -p /var/run/ceph/guests/ /var/log/qemu/

$ chown qemu:qemu /var/run/ceph/guests /var/log/qemu/

# /etc/ceph/ceph.conf

[client]

rbd cache = true

rbd cache writethrough until flush = true

admin socket = /var/run/ceph/guests/$cluster-$type.$id.$pid.$cctid.asok

log file = /var/log/qemu/qemu-guest-$pid.log

rbd concurrent management ops = 20

Modify the Nova configuration:

- Controller

# /etc/nova/nova.conf

...

[libvirt]

virt_type = qemu

images_type = rbd

images_rbd_pool = vms

images_rbd_ceph_conf = /etc/ceph/ceph.conf

rbd_user = cinder

rbd_secret_uuid = 4810c760-dc42-4e5f-9d41-7346db7d7da2

disk_cachemodes="network=writeback"

inject_password = false

inject_key = false

inject_partition = -2

live_migration_flag="VIR_MIGRATE_UNDEFINE_SOURCE,VIR_MIGRATE_PEER2PEER,VIR_MIGRATE_LIVE,VIR_MIGRATE_PERSIST_DEST"

- Compute

# /etc/nova/nova.conf

...

[libvirt]

virt_type = qemu

images_type = rbd

images_rbd_pool = vms

images_rbd_ceph_conf = /etc/ceph/ceph.conf

rbd_user = cinder

rbd_secret_uuid = 4810c760-dc42-4e5f-9d41-7346db7d7da2

disk_cachemodes="network=writeback"

inject_password = false

inject_key = false

inject_partition = -2

live_migration_flag="VIR_MIGRATE_UNDEFINE_SOURCE,VIR_MIGRATE_PEER2PEER,VIR_MIGRATE_LIVE,VIR_MIGRATE_PERSIST_DEST"

Creating a VM and Attaching a Volume

[root@controller ~]# openstack server create --image d18923bd-86fc-4f77-b5e8-976d3b1c367c --flavor 66ddc38d-452a-40b6-a0f3-f867658754ff --nic net-id=f31b2060-ccc0-457a-948c-d805a7680faf VM1

[root@controller ~]# openstack server show VM1

+-------------------------------------+----------------------------------------------------------+

| Field | Value |

+-------------------------------------+----------------------------------------------------------+

| OS-DCF:diskConfig | MANUAL |

| OS-EXT-AZ:availability_zone | nova |

| OS-EXT-SRV-ATTR:host | compute |

| OS-EXT-SRV-ATTR:hypervisor_hostname | compute |

| OS-EXT-SRV-ATTR:instance_name | instance-00000028 |

| OS-EXT-STS:power_state | Running |

| OS-EXT-STS:task_state | None |

| OS-EXT-STS:vm_state | active |

| OS-SRV-USG:launched_at | 2019-04-26T10:03:57.000000 |

| OS-SRV-USG:terminated_at | None |

| accessIPv4 | |

| accessIPv6 | |

| addresses | vlan-net-100=192.168.1.13 |

| config_drive | |

| created | 2019-04-26T10:03:40Z |

| flavor | mini (66ddc38d-452a-40b6-a0f3-f867658754ff) |

| hostId | 489693032f8676c0ce48995cffce9c4e00bd0b10739e5c0ca33f8559 |

| id | 65bd684f-0414-4b58-9924-af681091be09 |

| image | cirros_raw (d18923bd-86fc-4f77-b5e8-976d3b1c367c) |

| key_name | None |

| name | VM1 |

| progress | 0 |

| project_id | a2b55e37121042a1862275a9bc9b0223 |

| properties | |

| security_groups | name='default' |

| status | ACTIVE |

| updated | 2019-04-26T10:03:57Z |

| user_id | 92602c24daa24f019f05ecb95f1ce68e |

| volumes_attached | |

+-------------------------------------+----------------------------------------------------------+

[root@controller ~]# openstack server add volume VM1 ceph_rbd_vol01

[root@controller ~]# openstack volume show ceph_rbd_vol01

+--------------------------------+------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

| Field | Value |

+--------------------------------+------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

| attachments | [{u'server_id': u'65bd684f-0414-4b58-9924-af681091be09', u'attachment_id': u'185204ba-9a8d-4ef3-ad7c-9db1e6baa558', u'attached_at': u'2019-04-26T10:04:58.000000', u'host_name': u'compute', u'volume_id': u'af7ce6b7-12c2-4bdf-933c-884a4f514617', u'device': u'/dev/vdb', u'id': u'af7ce6b7-12c2-4bdf-933c-884a4f514617'}] |

| availability_zone | nova |

| bootable | false |

| consistencygroup_id | None |

| created_at | 2019-04-26T09:57:08.000000 |

| description | None |

| encrypted | False |

| id | af7ce6b7-12c2-4bdf-933c-884a4f514617 |

| migration_status | None |

| multiattach | False |

| name | ceph_rbd_vol01 |

| os-vol-host-attr:host | controller@ceph#ceph |

| os-vol-mig-status-attr:migstat | None |

| os-vol-mig-status-attr:name_id | None |

| os-vol-tenant-attr:tenant_id | a2b55e37121042a1862275a9bc9b0223 |

| properties | |

| replication_status | None |

| size | 1 |

| snapshot_id | None |

| source_volid | None |

| status | in-use |

| type | ceph_rbd |

| updated_at | 2019-04-26T10:04:59.000000 |

| user_id | 92602c24daa24f019f05ecb95f1ce68e |

+--------------------------------+------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

Inspect the Libvirt domain XML of the VM:

[root@controller ~]# virsh dumpxml instance-00000028

...

<disk type='network' device='disk'>

<driver name='qemu' type='raw' cache='writeback'/>

<auth username='cinder'>

<secret type='ceph' uuid='4810c760-dc42-4e5f-9d41-7346db7d7da2'/>

</auth>

<source protocol='rbd' name='vms/65bd684f-0414-4b58-9924-af681091be09_disk'>

<host name='172.18.22.234' port='6789'/>

<host name='172.18.22.235' port='6789'/>

<host name='172.18.22.236' port='6789'/>

</source>

<target dev='vda' bus='virtio'/>

<alias name='virtio-disk0'/>

<address type='pci' domain='0x0000' bus='0x00' slot='0x04' function='0x0'/>

</disk>

<disk type='network' device='disk'>

<driver name='qemu' type='raw' cache='writeback' discard='unmap'/>

<auth username='cinder'>

<secret type='ceph' uuid='4810c760-dc42-4e5f-9d41-7346db7d7da2'/>

</auth>

<source protocol='rbd' name='volumes/volume-af7ce6b7-12c2-4bdf-933c-884a4f514617'>

<host name='172.18.22.234' port='6789'/>

<host name='172.18.22.235' port='6789'/>

<host name='172.18.22.236' port='6789'/>

</source>

<target dev='vdb' bus='virtio'/>

<serial>af7ce6b7-12c2-4bdf-933c-884a4f514617</serial>

<alias name='virtio-disk1'/>

<address type='pci' domain='0x0000' bus='0x00' slot='0x06' function='0x0'/>

</disk>

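As shown above, a Libvirt VM does not need the Ceph RBD block device to be mapped on the compute node first; it accesses the device directly over the network using the rbd protocol. The system disk lives in Pool vms and the data disk in Pool volumes.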
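To see quickly which RBD images a domain uses, the pool/image names can be pulled out of the domain XML. The following is a minimal sketch with a hypothetical helper, rbd_sources, that filters XML read from stdin; the sample lines are copied from the dumpxml output above, and the sed pattern assumes the single-quoted attribute style that virsh prints.

```shell
# Print the pool/image name of every rbd-backed disk in a domain XML.
# Reads the XML on stdin, e.g. from `virsh dumpxml <domain>`.
rbd_sources() {
    sed -n "s/.*<source protocol='rbd' name='\([^']*\)'.*/\1/p"
}

# Sample lines from the dumpxml output above.
rbd_sources <<'EOF'
<source protocol='rbd' name='vms/65bd684f-0414-4b58-9924-af681091be09_disk'>
<host name='172.18.22.234' port='6789'/>
<source protocol='rbd' name='volumes/volume-af7ce6b7-12c2-4bdf-933c-884a4f514617'>
EOF
```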

Inspect the state of Pool vms:

[root@compute ~]# rbd ls vms

65bd684f-0414-4b58-9924-af681091be09_disk

[root@compute ~]# rbd info vms/65bd684f-0414-4b58-9924-af681091be09_disk

rbd image '65bd684f-0414-4b58-9924-af681091be09_disk':

size 1 GiB in 128 objects

order 23 (8 MiB objects)

id: 1df232909a23e

block_name_prefix: rbd_data.1df232909a23e

format: 2

features: layering

op_features:

flags:

create_timestamp: Fri Apr 26 06:03:48 2019

parent: images/d18923bd-86fc-4f77-b5e8-976d3b1c367c@snap

overlap: 39 MiB

[root@compute ~]# rados ls -p vms

rbd_header.1df232909a23e

rbd_data.1df232909a23e.0000000000000003

rbd_directory

rbd_children

rbd_data.1df232909a23e.0000000000000002

rbd_info

rbd_data.1df232909a23e.0000000000000000

rbd_id.65bd684f-0414-4b58-9924-af681091be09_disk

rbd_data.1df232909a23e.0000000000000001

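As shown above, the block device backing the VM root disk, {nova_instance_uuid}_disk, is likewise a COW clone of an image in Pool images. If copy-on-write cloning of images is not enabled in Glance, the root disk has to be produced by copying the image from Pool images to Pool vms. The number of objects behind {nova_instance_uuid}_disk also grows gradually as the VM runs, until the disk is full.

Booting from an image is equivalent to running the following command: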

rbd clone ${GLANCE_POOL}/${IMAGE_ID}@snap ${NOVA_POOL}/${SERVER_ID}_disk

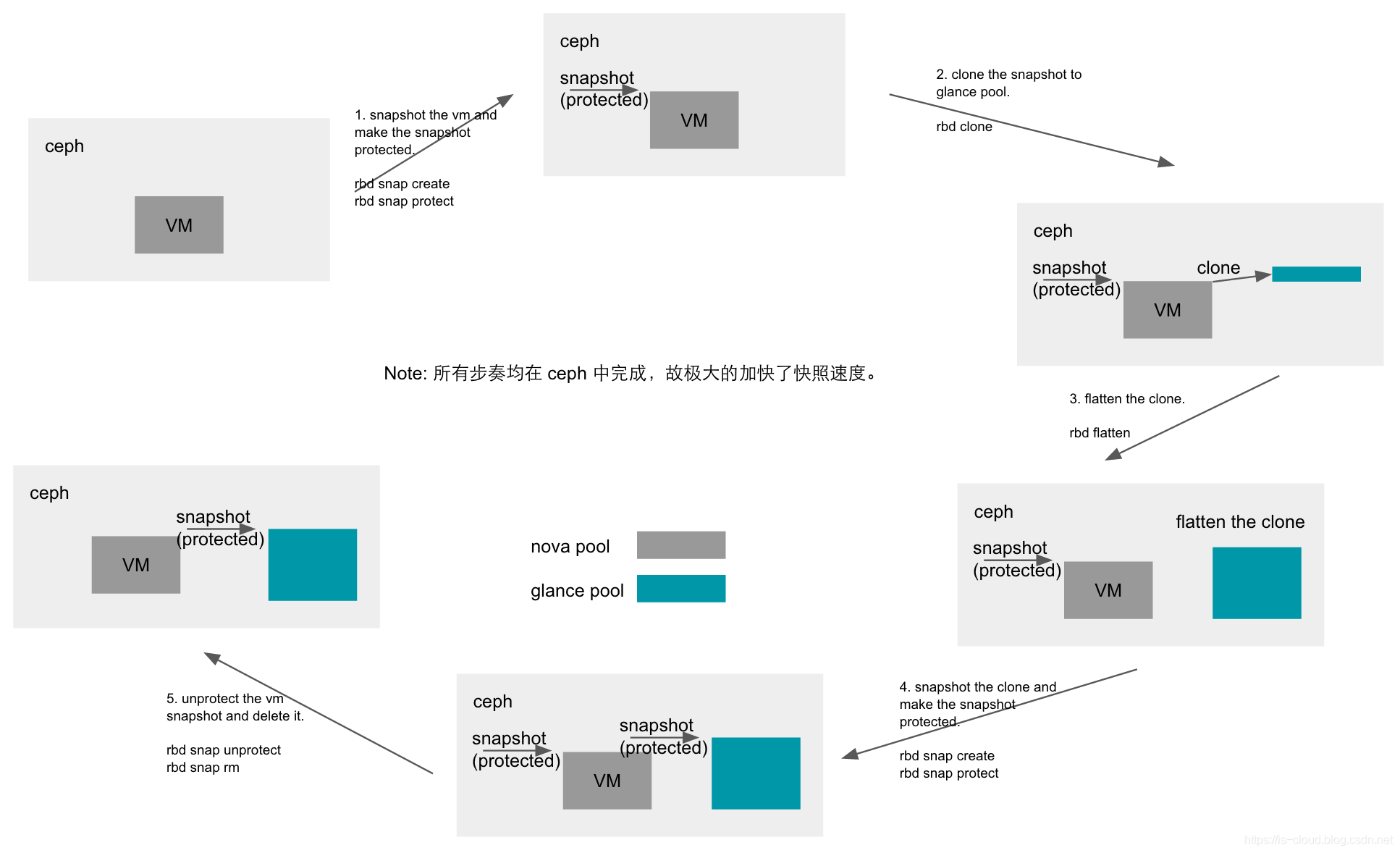

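Booting VMs in Seconds with Ceph

Without Ceph, a VM boots as follows: Nova first checks whether the image is already cached on the compute node. If it is not, the image is downloaded from Glance; if it is, the VM boots right away. Boot time is therefore largely determined by Glance network I/O. Likewise, taking a snapshot of a VM requires committing the current image data to Glance, which tends to take a long time.

As the sections above show, once Nova, Cinder, and Glance are all backed by Ceph, slow VM boot is effectively resolved and VMs can boot within seconds, because:

- VMs access Ceph block devices directly over the rbd protocol, so no image download is needed.

- VMs booted from an image and volumes created from an image are both obtained quickly through Ceph RBD COW cloning.

- A VM snapshot is really a snapshot of a Ceph block device, taken entirely inside the Ceph cluster, with nothing uploaded from the local node.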

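Problem 1: Unable to Reboot the VM

After attaching a volume to the instance and rebooting, the VM failed to start. The error log shows that the matching secret was not found when the domain was started: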

File "/usr/lib/python2.7/site-packages/nova/virt/libvirt/driver.py", line 5550, in _create_domain

...

libvirtError: Secret not found: no secret with matching uuid '4810c760-dc42-4e5f-9d41-7346db7d7da2'

Checking the Libvirt secrets on Compute confirms that 4810c760-dc42-4e5f-9d41-7346db7d7da2 is missing there; that UUID is presumably the Libvirt secret defined on Controller:

[root@compute ~]# virsh secret-list

UUID Usage

--------------------------------------------------------------------------------

457eb676-33da-42ec-9a8c-9293d545c337 ceph client.cinder secret

So we try to make the secret identical on both nodes:

# Delete the old secret

[root@compute ~]# virsh secret-undefine 457eb676-33da-42ec-9a8c-9293d545c337

Secret 457eb676-33da-42ec-9a8c-9293d545c337 deleted

[root@compute ~]# virsh secret-list

UUID Usage

--------------------------------------------------------------------------------

# Redefine the secret

[root@compute ~]# sudo virsh secret-define --file secret.xml

Secret 4810c760-dc42-4e5f-9d41-7346db7d7da2 created

[root@compute ~]# sudo virsh secret-set-value --secret 4810c760-dc42-4e5f-9d41-7346db7d7da2 --base64 $(cat client.cinder.key)

Secret value set

[root@compute ~]# sudo virsh secret-list

UUID Usage

--------------------------------------------------------------------------------

4810c760-dc42-4e5f-9d41-7346db7d7da2 ceph client.cinder secret

# Update the nova.conf configuration file

[libvirt]

...

rbd_secret_uuid = 4810c760-dc42-4e5f-9d41-7346db7d7da2
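A mismatch like this can be caught before a VM fails to start by checking that the UUID configured in nova.conf and cinder.conf appears in the secret list of every node. The sketch below assumes a hypothetical helper, secret_defined, which reads the output of virsh secret-list on stdin and succeeds only if the given UUID is listed; the sample listing is the one from Compute above.

```shell
# Succeed if <uuid> appears in the first column of a `virsh secret-list`
# listing read from stdin; fail otherwise.
# Usage: virsh secret-list | secret_defined <uuid>
secret_defined() {
    awk -v uuid="$1" '$1 == uuid { found = 1 } END { exit(found ? 0 : 1) }'
}

# Listing from the Compute node before the fix: the expected UUID is missing.
listing=' UUID Usage
--------------------------------------------------------------------------------
 457eb676-33da-42ec-9a8c-9293d545c337 ceph client.cinder secret'

if printf '%s\n' "$listing" | secret_defined 4810c760-dc42-4e5f-9d41-7346db7d7da2; then
    echo "secret is defined"
else
    echo "secret is missing"
fi
```

Running the same check on each node that hosts nova-compute would confirm that they all hold the secret referenced by rbd_secret_uuid.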

Problem 2: Unable to Unprotect RBD Snapshots (Unresolved)

When deleting an image, the RBD snapshot cannot be unprotected:

PermissionError: [errno 1] error unprotecting snapshot baadb5b8-97c8-4cc9-97af-8e66a2dd3785@snap

When deleting a volume snapshot, the RBD snapshot cannot be unprotected:

PermissionError: [errno 1] error unprotecting snapshot volume-1bf49373-ded2-4f5d-90cd-919c0b0b1ed6@snapshot-c5b1b170-e12f-4fba-bf83-1a4c206bd8fb