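ELK: The Full Treatment

Preface

What is ELK?

Put simply, ELK is a combination of three open-source projects: Elasticsearch, Logstash, and Kibana. Each one handles a different job. ELK is also known as the ELK Stack, and its official site is elastic.co. Its main advantages are:

Flexible processing: Elasticsearch provides real-time full-text indexing and powerful search.

Relatively simple configuration: Elasticsearch is driven entirely through a JSON API, Logstash uses modular configuration, and the Kibana configuration file is simpler still.

Efficient retrieval: thanks to its design, queries run in real time and can still return in seconds against data sets of tens of billions of records.

Linear cluster scaling: both Elasticsearch and Logstash scale out horizontally.

Polished front end: Kibana's interface looks good and is easy to use.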

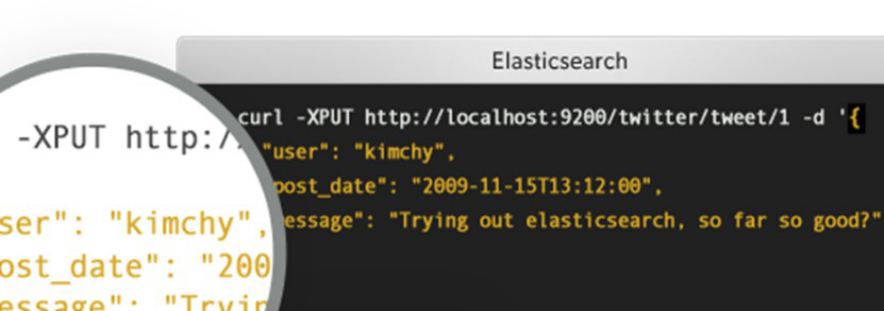

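What is Elasticsearch?

Elasticsearch is a highly scalable open-source full-text search and analytics engine. It offers real-time full-text search, is distributed for high availability, exposes an API, and can handle large volumes of log data such as Nginx, Tomcat, and system logs.

What is Logstash?

Logstash collects and forwards logs through plugins. It supports log filtering and can parse both plain-text logs and custom JSON-formatted logs.

What is Kibana?

Kibana reads data from Elasticsearch through its API and visualizes it in the browser.

I. Deploying Elasticsearch

1.1 Environment initialization

Do a minimal install of CentOS 7.2 x86_64 on virtual machines with 2 vCPUs, 4 GB of RAM or more, and a 50 GB system disk. Hostnames follow the pattern linux-hostX.exmaple.com; host1 and host2 are the Elasticsearch servers. For better results, add a separate 50 GB data disk to each of them, format it, and mount it at /elk.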

1.1.1 Hostnames and disk mounting

[root@localhost ~]# hostnamectl set-hostname linux-host1.exmaple.com && reboot #set the hostname on each server, then reboot

[root@localhost ~]# hostnamectl set-hostname linux-host2.exmaple.com && reboot

[root@linux-host1 ~]# mkdir /elk

Mount method 1:

[root@linux-host1 ~]# mount /dev/sdb /elk/

[root@linux-host1 ~]# echo " /dev/sdb /elk/ xfs defaults 0 0" >> /etc/fstab

hostX ... (repeat on the other hosts)

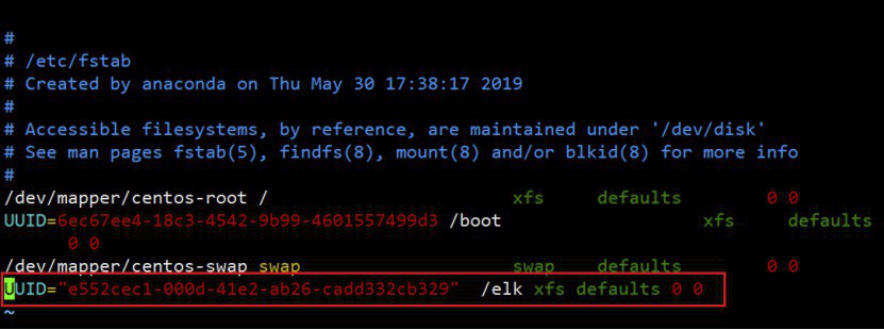

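Mount method 2:

(1) First look up the disk's UUID

[root@linux-host1 ~]# blkid #copy the UUID of the target disk

(2) Add an entry with the UUID, mount point, and filesystem type to /etc/fstab

[root@linux-host1 ~]# vim /etc/fstab

UUID="e552cec1-000d-41e2-ab26-cadd332cb329" /elk xfs defaults 0 0

1.1.2 Firewall and SELinux

Disable the firewall and SELinux on all servers, including the web, Redis, and Logstash servers. This avoids hard-to-diagnose problems caused by firewall rules or SELinux permissions. Only the commands for host1 and host2 are shown below, but they must be run on every server.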

[root@linux-host1 ~]# systemctl disable firewalld

[root@linux-host1 ~]# systemctl disable NetworkManager

[root@linux-host1 ~]# sed -i '/SELINUX/s/enforcing/disabled/' /etc/selinux/config

[root@linux-host1 ~]# echo "* soft nofile 65536" >> /etc/security/limits.conf

[root@linux-host1 ~]# echo "* hard nofile 65536" >> /etc/security/limits.conf

hostX ... (repeat on the other hosts)

1.1.3 Configure local name resolution on every server

[root@linux-host1 ~]# vim /etc/hosts

192.168.56.11 linux-host1.exmaple.com

192.168.56.12 linux-host2.exmaple.com

192.168.56.13 linux-host3.exmaple.com

192.168.56.14 linux-host4.exmaple.com

192.168.56.15 linux-host5.exmaple.com

192.168.56.16 linux-host6.exmaple.com

1.1.4 Set up the EPEL repository, install basic tools, and synchronize the time

[root@linux-host1 ~]# wget -O /etc/yum.repos.d/epel.repo http://mirrors.aliyun.com/repo/epel-7.repo

[root@linux-host1 ~]# yum install -y net-tools vim lrzsz tree screen lsof tcpdump wget ntpdate

[root@linux-host1 ~]# cp /usr/share/zoneinfo/Asia/Shanghai /etc/localtime

[root@linux-host1 ~]# echo "*/5 * * * * ntpdate time1.aliyun.com &> /dev/null && hwclock -w" >> /var/spool/cron/root

[root@linux-host1 ~]# systemctl restart crond

[root@linux-host1 ~]# reboot #reboot and check that every setting took effect; if all is well, snapshot the VM so it can be restored later

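1.2 Install Elasticsearch on host1 and host2

1.2.1 Prepare the Java environment on both servers

Elasticsearch needs a Java runtime, so Java must be installed on both Elasticsearch servers. Any of the following methods works:

Method 1: install OpenJDK directly with yum

[root@linux-host1 ~]# yum install java-1.8.0*

Method 2: download the RPM package from the Oracle website and install it locally:

[root@linux-host1 ~]# yum localinstall jdk-8u92-linux-x64.rpm

Method 3: download the binary tarball and set the environment variables in the profile yourself:

Download: http://www.oracle.com/technetwork/java/javase/downloads/jdk8-downloads-2133151.html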

[root@linux-host1 ~]# tar xvf jdk-8u121-linux-x64.tar.gz -C /usr/local/

[root@linux-host1 ~]# ln -sv /usr/local/jdk1.8.0_121 /usr/local/jdk

[root@linux-host1 ~]# vim /etc/profile

export HISTTIMEFORMAT="%F %T `whoami` "

export JAVA_HOME=/usr/local/jdk

export CLASSPATH=.:$JAVA_HOME/jre/lib/rt.jar:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar

export PATH=$PATH:$JAVA_HOME/bin

[root@linux-host1 ~]# source /etc/profile

[root@linux-host1 ~]# java -version

java version "1.8.0_121" #confirm that the current Java version is displayed

Java(TM) SE Runtime Environment (build 1.8.0_121-b13)

Java HotSpot(TM) 64-Bit Server VM (build 25.121-b13, mixed mode)

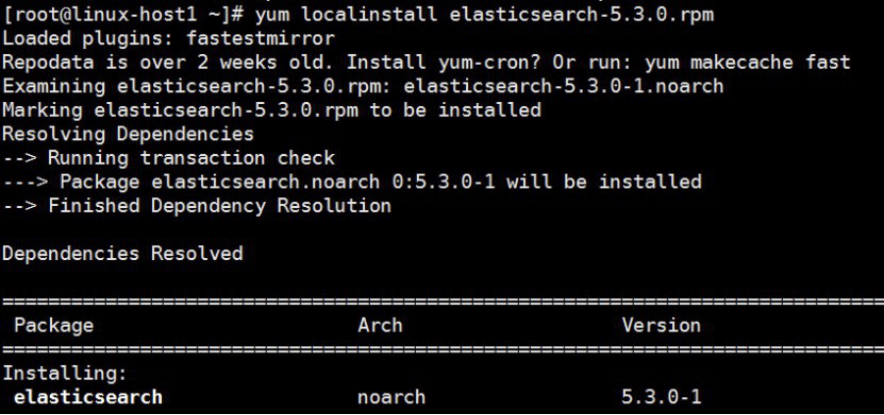

1.3 官网下载elasticsearch并安装

下载地址:https://www.elastic.co/downloads/elasticsearch,当前最新版本5.3.0

1.3.1 两台服务器分别安装elasticsearch:

[root@linux-host1 ~]# yum –y localinstall elasticsearch-5.3.0.rpm

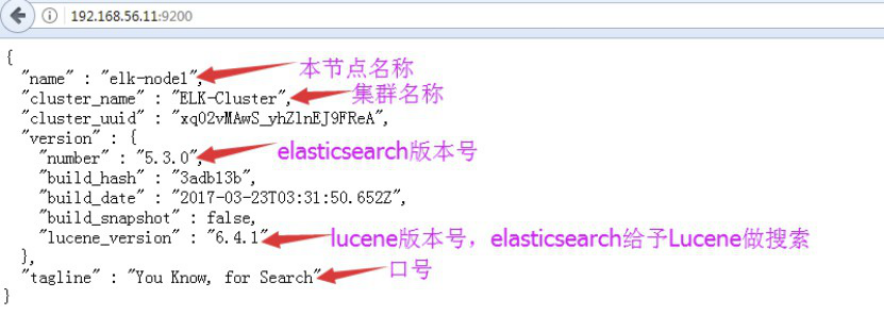

1.3.2 编辑各elasticsearch服务器的配置

[root@linux-host1 ~]# grep "^[a-Z]" /etc/elasticsearch/elasticsearch.yml

cluster.name: ELK-Cluster #ELK的集群名称,名称相同即属于是同一个集群

node.name: elk-node1 #本机在集群内的节点名称

path.data: /elk/data #数据保存目录

path.logs: /elk/logs #日志保存目

bootstrap.memory_lock: true #服务启动的时候锁定足够的内存,防止数据写入swap

network.host: 0.0.0.0 #监听IP

http.port: 9200

discovery.zen.ping.unicast.hosts: ["192.168.56.11", "192.168.56.12"]

注意:给存储数据的目录/elk的权限为elasticsearch用户

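1.3.3 Adjust the memory limits and sync the configuration file

[root@linux-host1 ~]# vim /usr/lib/systemd/system/elasticsearch.service #adjust the memory limit

LimitMEMLOCK=infinity #uncomment this line so the service can lock as much memory as it needs

[root@linux-host1 ~]# vim /etc/elasticsearch/jvm.options

-Xms2g

-Xmx2g #minimum and maximum heap size; setting both to the same value keeps the JVM from resizing the heap at runtime

https://www.elastic.co/guide/en/elasticsearch/reference/current/heap-size.html

The official documentation recommends keeping the heap at 30 GB or less.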
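The sizing rule can be sketched as a small helper. This is only an illustration: the function names are made up for this example, and the "half of physical RAM" rule of thumb is an assumption taken from general Elasticsearch guidance rather than from the configuration shown here.

```python
def suggest_heap_gb(total_ram_gb, cap_gb=30):
    """Return a heap size in GB: half of RAM, never above cap_gb, at least 1."""
    half = total_ram_gb // 2
    return max(1, min(half, cap_gb))


def jvm_options(total_ram_gb):
    """Build matching -Xms/-Xmx lines so the heap is never resized at runtime."""
    heap = suggest_heap_gb(total_ram_gb)
    return ["-Xms{}g".format(heap), "-Xmx{}g".format(heap)]


# The 4 GB virtual machines used in this guide end up with a 2 GB heap.
for ram in (4, 64, 128):
    print(ram, jvm_options(ram))
```

For the 4 GB hosts in this setup the helper yields the same -Xms2g / -Xmx2g pair used in jvm.options.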

Copy the configuration file above to host2 with scp, then change the node name there

[root@linux-host1 ~]# scp /etc/elasticsearch/elasticsearch.yml 192.168.56.12:/etc/elasticsearch/

[root@linux-host2 ~]# grep "^[a-zA-Z]" /etc/elasticsearch/elasticsearch.yml

cluster.name: ELK-Cluster

node.name: elk-node2 #must differ from host1

path.data: /data/elk

path.logs: /data/elk

bootstrap.memory_lock: true

network.host: 0.0.0.0

http.port: 9200

discovery.zen.ping.unicast.hosts: ["192.168.56.11", "192.168.56.12"]

1.3.4 Change directory ownership

On each server, create the data and log directories and change their owner to elasticsearch:

[root@linux-host1 ~]# mkdir /elk/{data,logs}

[root@linux-host1 ~]# ll /elk/

total 0

drwxr-xr-x 2 root root 6 Apr 18 18:44 data

drwxr-xr-x 2 root root 6 Apr 18 18:44 logs

[root@linux-host1 ~]# chown elasticsearch.elasticsearch /elk/ -R

[root@linux-host1 ~]# ll /elk/

total 0

drwxr-xr-x 2 elasticsearch elasticsearch 6 Apr 18 18:44 data

drwxr-xr-x 2 elasticsearch elasticsearch 6 Apr 18 18:44 logs

1.3.5 Start the Elasticsearch service and verify

[root@linux-host1 ~]# systemctl restart elasticsearch

[root@linux-host1 ~]# tail -f /elk/logs/ELK-Cluster.log


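1.3.6 Verify that the port is listening

1.3.7 Access the Elasticsearch service port from a browser

Plugins add extra functionality. Most of the official plugins are commercial, but there are also community-developed plugins that can monitor cluster status, manage configuration, and so on.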

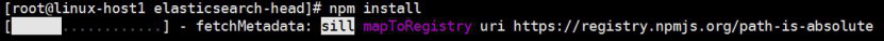

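1.4.1 Install the head plugin for 5.x

Starting with Elasticsearch 5.x, head can no longer be installed directly as a plugin; it has to run as a standalone service instead. Git repository: https://github.com/mobz/elasticsearch-head

[root@linux-host1 ~]# yum install -y npm

NPM (Node Package Manager) is the package management and distribution tool installed alongside Node.js. It makes it easy for JavaScript developers to download, install, publish, and manage packages.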

[root@linux-host1 ~]# cd /usr/local/src/

[root@linux-host1 src]# git clone https://github.com/mobz/elasticsearch-head.git

[root@linux-host1 src]# cd elasticsearch-head/

[root@linux-host1 elasticsearch-head]# yum install npm -y

[root@linux-host1 elasticsearch-head]# npm install grunt --save

[root@linux-host2 elasticsearch-head]# ll node_modules/grunt #confirm the files were created

[root@linux-host1 elasticsearch-head]# npm install #run the install

[root@linux-host1 elasticsearch-head]# npm run start & #start the service in the background

1.4.1.1 Service configuration file

Enable cross-origin (CORS) access, then restart the Elasticsearch service:

[root@linux-host1 ~]# vim /etc/elasticsearch/elasticsearch.yml

http.cors.enabled: true #add at the bottom of the file

http.cors.allow-origin: "*"

[root@linux-host1 ~]# systemctl restart elasticsearch

1.4.1.2 Run the head plugin with Docker:

[root@linux-host1 ~]# yum install docker -y

[root@linux-host1 ~]# systemctl start docker && systemctl enable docker

[root@linux-host1 ~]# docker run -d -p 9100:9100 mobz/elasticsearch-head:5

Then reconnect:

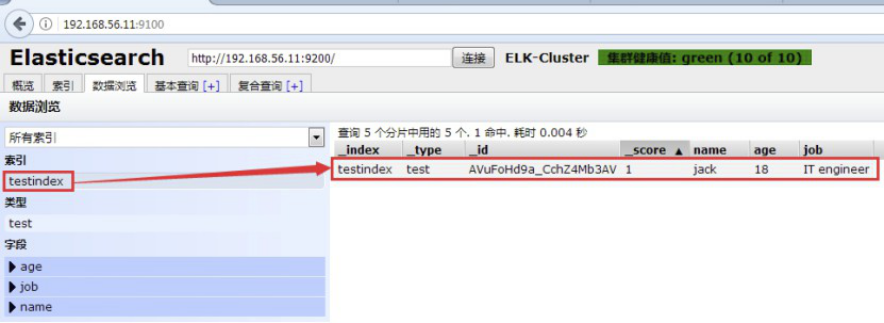

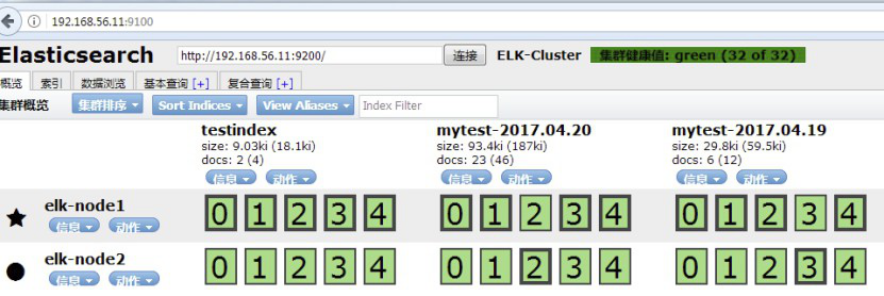

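1.4.1.5 View the data:

1.4.1.6 Difference between master and slave nodes:

Master responsibilities:

Collecting the status of each node and of the cluster, creating and deleting indices, managing shard allocation, shutting down nodes, and so on.

Slave responsibilities:

Replicating data and waiting for a chance to become master.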

1.4.1.7 Import a local Docker image:

[root@linux-host2 ~]# docker save docker.io/mobz/elasticsearch-head > /opt/elasticsearch-head-docker.tar.gz #export the image

[root@linux-host1 src]# docker load < /opt/elasticsearch-head-docker.tar.gz #import it

[root@linux-host1 src]# docker images #verify

REPOSITORY TAG IMAGE ID CREATED SIZE

docker.io/mobz/elasticsearch-head 5 b19a5c98e43b 4 months ago 823.9 MB

[root@linux-host1 src]# docker run -d -p 9100:9100 --name elastic docker.io/mobz/elasticsearch-head:5 #start a container from the local image

1.4.2 The kopf plugin for Elasticsearch:

1.4.2.1 kopf:

The Git repository is https://github.com/lmenezes/elasticsearch-kopf. It does not yet support Elasticsearch 5.x, but it can be installed on Elasticsearch 1.x or 2.x.

1.5 Monitoring the Elasticsearch cluster status

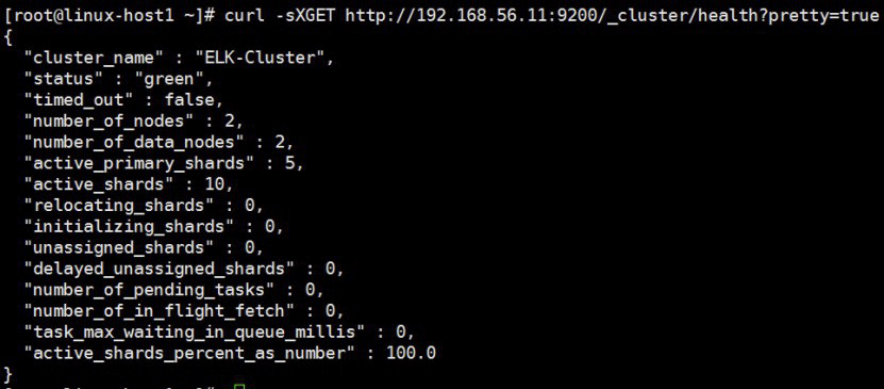

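1.5.1 Get the cluster status from the shell:

curl -sXGET http://192.168.56.11:9200/_cluster/health?pretty=true

The response is JSON, so it can be analyzed with Python. For example, check the status field: green means the cluster is healthy, yellow means one or more replica shards are missing, and red means one or more primary shards are missing.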

[root@linux-host1 ~]# cat els-cluster-monitor.py

#!/usr/bin/env python
# -*- coding: utf-8 -*-
# Author: Zhang Jie
import json
import subprocess

# Query the cluster health API with curl and capture the JSON response.
cmd = "curl -sXGET http://192.168.56.11:9200/_cluster/health?pretty=true"
obj = subprocess.Popen(cmd, shell=True, stdout=subprocess.PIPE)
data = obj.stdout.read()

# Parse the response with json.loads() instead of eval(), which is unsafe
# and chokes on JSON literals such as false.
data1 = json.loads(data)
status = data1.get("status")

# Print 50 when the cluster is green and 100 otherwise, for use by monitoring.
if status == "green":
    print("50")
else:
    print("100")

1.5.3 Script output:

[root@linux-host1 ~]# python els-cluster-monitor.py

50
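The same check can also be written without shelling out to curl. The sketch below uses only the Python 3 standard library; the function names are made up for this example, and the 50/100 values simply mirror the script above.

```python
import json
import urllib.request


def status_to_code(health):
    """Map a parsed _cluster/health document to the monitoring value.

    Assumption: same convention as the script above, 50 = green, 100 = anything else.
    """
    return 50 if health.get("status") == "green" else 100


def fetch_health(url, timeout=5):
    """Fetch and parse the cluster health JSON from Elasticsearch."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

A call such as status_to_code(fetch_health("http://192.168.56.11:9200/_cluster/health")) would then return the value to report; keeping the mapping separate from the HTTP call makes it easy to test without a running cluster.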

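II. Deploying Logstash:

2.1 Logstash environment preparation and installation:

Logstash is an open-source data collection engine that scales horizontally. It has the most plugins of any component in ELK; it can receive data from many different sources and send it to one or more destinations.

2.1.1 Environment preparation:

Disable the firewall and SELinux, and install Java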

[root@linux-host3 ~]# systemctl stop firewalld

[root@linux-host3 ~]# systemctl disable firewalld

[root@linux-host3 ~]# sed -i '/SELINUX/s/enforcing/disabled/' /etc/selinux/config

[root@linux-host3 ~]# yum install jdk-8u121-linux-x64.rpm

[root@linux-host3 ~]# java -version

java version "1.8.0_121"

Java(TM) SE Runtime Environment (build 1.8.0_121-b13)

Java HotSpot(TM) 64-Bit Server VM (build 25.121-b13, mixed mode)

[root@linux-host3 ~]# reboot

2.1.2 Install Logstash:

[root@linux-host3 ~]# yum install logstash-5.3.0.rpm

[root@linux-host3 ~]# chown logstash.logstash /usr/share/logstash/data/queue -R #change ownership to the logstash user and group, otherwise errors are logged at startup

2.2 Testing Logstash

2.2.1 Test standard input and output:

[root@linux-host3 ~]# /usr/share/logstash/bin/logstash -e 'input { stdin{} } output { stdout{ codec => rubydebug }}' #standard input and output

hello

{

"@timestamp" => 2017-04-20T02:30:01.600Z, #time the event occurred

"@version" => "1", #event version; each event is a Ruby object

"host" => "linux-host3.exmaple.com", #where the event occurred

"message" => "hello" #the message content

}

2.2.2 Test output to a file

[root@linux-host3 ~]# /usr/share/logstash/bin/logstash -e 'input { stdin{} } output { file { path => "/tmp/log-%{+YYYY.MM.dd}messages.gz"}}'

hello

11:01:15.229 [[main]>worker1] INFO logstash.outputs.file - Opening file {:path=>"/tmp/log-2017-04-20messages.gz"}

[root@linux-host3 ~]# tail /tmp/log-2017-04-20messages.gz #open the file to verify

2.2.3 Test output to Elasticsearch:

[root@linux-host3 ~]# /usr/share/logstash/bin/logstash -e 'input { stdin{} } output { elasticsearch {hosts => ["192.168.56.11:9200"] index => "mytest-%{+YYYY.MM.dd}" }}'
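The %{+YYYY.MM.dd} part of the index name is filled in from each event's @timestamp, which Logstash keeps in UTC. The sketch below imitates that expansion in Python to show which daily index an event lands in. It is an approximation for illustration: expand_index is a hypothetical helper, and only the date tokens used in this guide are handled.

```python
import re
from datetime import datetime, timedelta, timezone

# Assumption: only the Joda-style tokens that appear in this guide are mapped.
_TOKENS = {"YYYY": "%Y", "yyyy": "%Y", "MM": "%m", "dd": "%d"}


def expand_index(template, timestamp):
    """Expand %{+FORMAT} in an index template using the event time in UTC."""
    def repl(match):
        fmt = match.group(1)
        for joda, strf in _TOKENS.items():
            fmt = fmt.replace(joda, strf)
        return timestamp.astimezone(timezone.utc).strftime(fmt)
    return re.sub(r"%\{\+([^}]+)\}", repl, template)


# An event stamped 2017-04-20T02:30:01Z goes to the index for April 20.
utc_event = datetime(2017, 4, 20, 2, 30, 1, tzinfo=timezone.utc)
print(expand_index("mytest-%{+YYYY.MM.dd}", utc_event))

# 07:00 on April 21 in UTC+8 is still April 20 in UTC, so the index is the same.
cst = timezone(timedelta(hours=8))
local_event = datetime(2017, 4, 21, 7, 0, 0, tzinfo=cst)
print(expand_index("mytest-%{+YYYY.MM.dd}", local_event))
```

This is why events written shortly after local midnight in China can still show up in the previous day's index.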

2.2.4 Verify on the Elasticsearch server that the data arrived:

[root@linux-host1 ~]# ll /elk/data/nodes/0/indices/

total 0

drwxr-xr-x 8 elasticsearch elasticsearch 59 Apr 19 19:08 JbnPSBGxQ_WbxT8jF5-TLw

drwxr-xr-x 8 elasticsearch elasticsearch 59 Apr 19 20:18 kZk1UbsjTliYfooevuQVdQ

drwxr-xr-x 4 elasticsearch elasticsearch 27 Apr 19 19:24 m6EiWqngS0C1bspg8JtmBg

drwxr-xr-x 8 elasticsearch elasticsearch 59 Apr 20 08:49 YhtJ1dEXSOa0YEKhe6HW8w

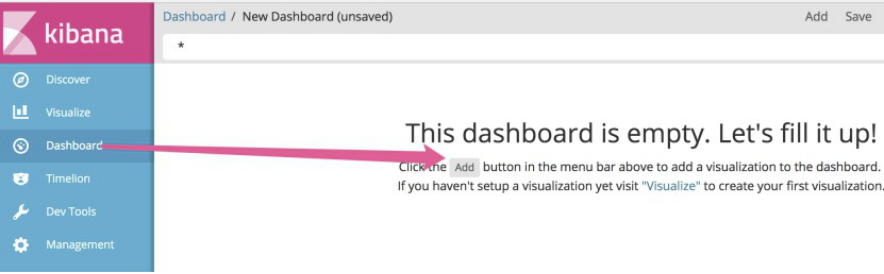

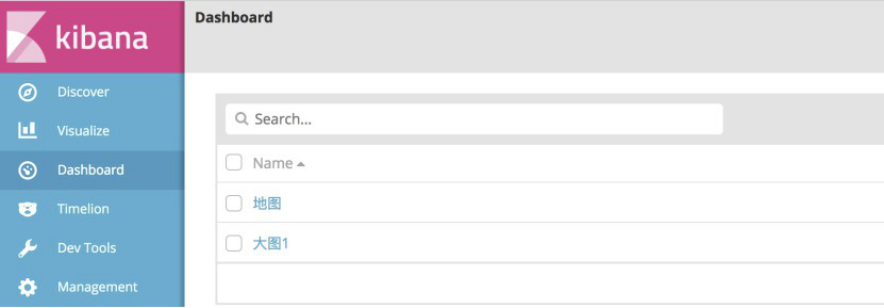

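III. Deploying Kibana and collecting logs:

Kibana is an open-source project that queries Elasticsearch and presents the search results graphically.

3.1 Install and configure Kibana:

Kibana can be installed from the RPM package or from the binary tarball

3.1.1 RPM method:

[root@linux-host1 ~]# yum localinstall kibana-5.3.0-x86_64.rpm

[root@linux-host1 ~]# grep -n "^[a-zA-Z]" /etc/kibana/kibana.yml

2:server.port: 5601 #listen port

7:server.host: "0.0.0.0" #listen address

21:elasticsearch.url: http://192.168.56.11:9200 #Elasticsearch server address

3.1.2 Start the Kibana service and verify: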

[root@linux-host1 ~]# systemctl start kibana

[root@linux-host1 ~]# systemctl enable kibana

[root@linux-host1 ~]# ss -tnl | grep 5601

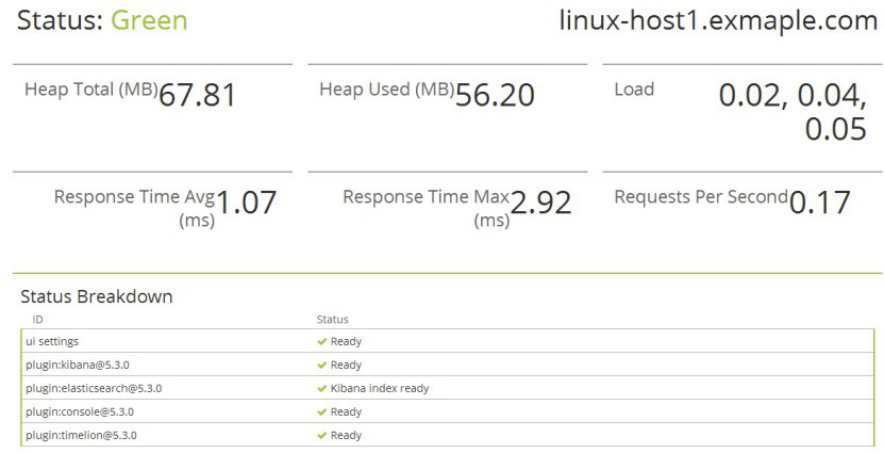

3.1.3 Check the status:

http://192.168.56.11:5601/status

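3.1.4 Verify the data in Kibana:

If no bar chart appears by default, no new data may have been written recently. Widen the time range, or write new data through Logstash:

IV. Collecting logs with Logstash:

4.1 Collect a single system log and write it to a file:

The logstash user needs read permission on the log files being collected and write permission on the output file.

4.1.1 Logstash configuration file: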

[root@linux-host3 ~]# cat /etc/logstash/conf.d/system-log.conf

input {

file {

type => "messagelog"

path => "/var/log/messages"

start_position => "beginning" #read from the start of the file the first time, then only newly appended lines

}

}

output {

file {

path => "/tmp/%{type}.%{+yyyy.MM.dd}"

}

}

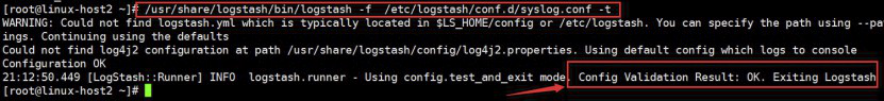

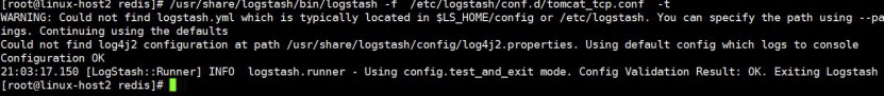

4.1.2 Check the configuration file syntax

[root@linux-host3 ~]# /usr/share/logstash/bin/logstash -f /etc/logstash/conf.d/system-log.conf -t

4.1.3 Generate data and verify

[root@linux-host3 ~]# echo "test" >> /var/log/messages

[root@linux-host3 ~]# tail /tmp/messagelog.2017.04.20 #confirm the file was created

{"path":"/var/log/messages","@timestamp":"2017-04-20T07:12:16.001Z","@version":"1","host":"linux-host3.exmaple.com","message":"test","type":"messagelog"}

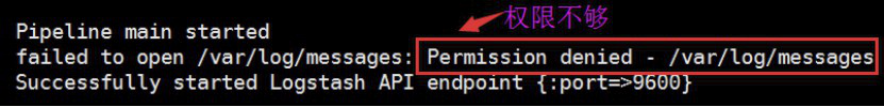

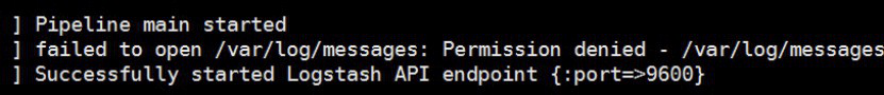

4.1.4 Check the Logstash log to confirm it has permission to read the file

4.1.5 Grant read permission on the file

[root@linux-host2 ~]# chmod 644 /var/log/messages

4.2 Collecting multiple logs with Logstash

4.2.1 Logstash configuration

[root@linux-host3 logstash]# cat /etc/logstash/conf.d/system-log.conf

input {

file {

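path => "/var/log/messages" #log path

type => "systemlog" #event type, used to tell the inputs apart

start_position => "beginning" #where to start reading the first time

stat_interval => "3" #how often, in seconds, to check the file for changes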

}

file {

path => "/var/log/secure"

type => "securelog"

start_position => "beginning"

stat_interval => "3"

}

}

output {

if [type] == "systemlog" {

elasticsearch {

hosts => ["192.168.56.11:9200"]

index => "system-log-%{+YYYY.MM.dd}"

}}

if [type] == "securelog" {

elasticsearch {

hosts => ["192.168.56.11:9200"]

index => "secury-log-%{+YYYY.MM.dd}"

}}

}

4.2.2 Restart Logstash and check the log for errors

[root@linux-host3 ~]# chmod 644 /var/log/secure

[root@linux-host3 ~]# chmod 644 /var/log/messages

[root@linux-host3 logstash]# systemctl restart logstash

4.2.3 Write data to the collected files

[root@linux-host3 logstash]# echo "test" >> /var/log/secure

[root@linux-host3 logstash]# echo "test" >> /var/log/messages

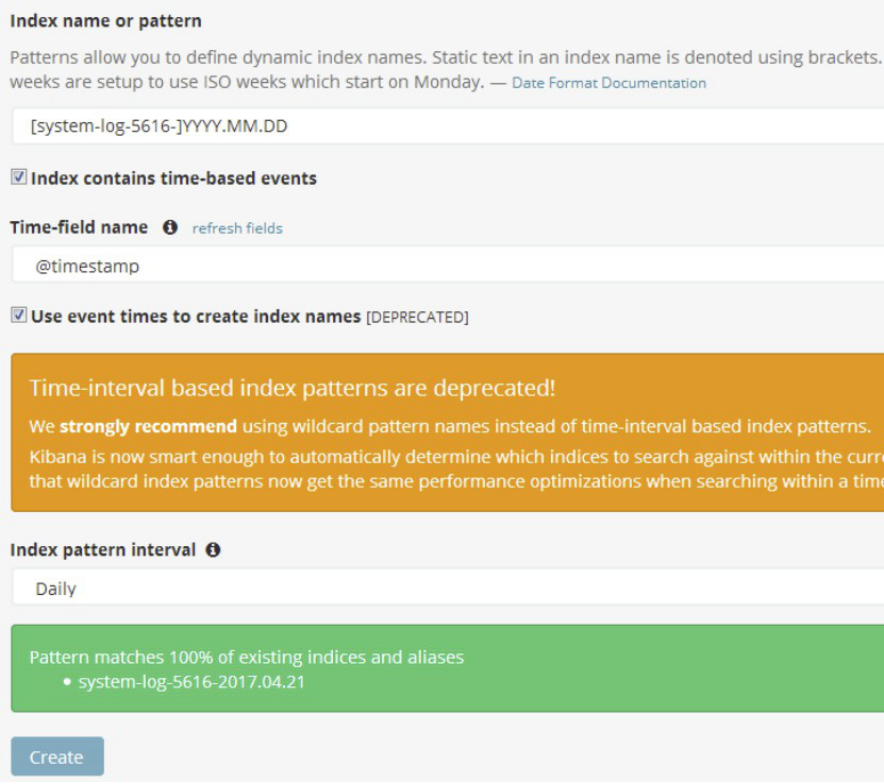

4.2.4 Add the system-log index in the Kibana UI

4.3 Collecting Tomcat and Java logs with Logstash:

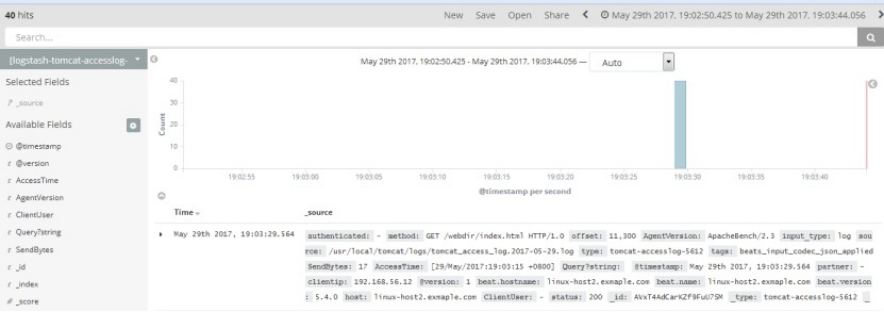

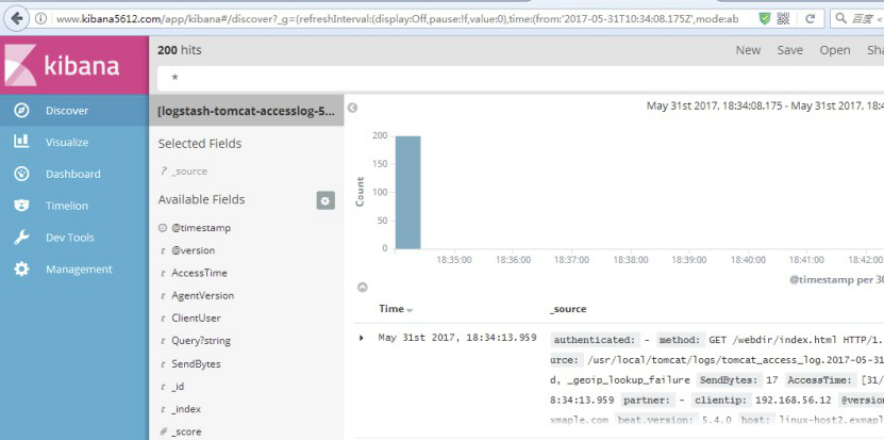

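Collect the access logs and error logs from the Tomcat servers for real-time statistics, and search and display them in Kibana. Each Tomcat server runs Logstash to collect its logs and forward them to Elasticsearch for analysis, and Kibana then presents them in the front end. The configuration steps are as follows:

4.3.1 Deploy Tomcat on the server

A Java environment is required, plus a custom web page for testing.

4.3.1.1 Configure Java and deploy Tomcat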

[root@linux-host6 ~]# yum install jdk-8u121-linux-x64.rpm

[root@linux-host6 ~]# cd /usr/local/src/

[root@linux-host6 src]# tar xvf apache-tomcat-8.0.38.tar.gz

[root@linux-host6 src]# ln -sv /usr/local/src/apache-tomcat-8.0.38 /usr/local/tomcat

‘/usr/local/tomcat’ -> ‘/usr/local/src/apache-tomcat-8.0.38’

[root@linux-host6 tomcat]# cd /usr/local/tomcat/webapps/

[root@linux-host6 webapps]# mkdir /usr/local/tomcat/webapps/webdir

[root@linux-host6 webapps]# echo "Tomcat Page" > /usr/local/tomcat/webapps/webdir/index.html

[root@linux-host6 webapps]# ../bin/catalina.sh start

[root@linux-host6 webapps]# ss -tnl | grep 8080

LISTEN 0 100 :::8080 :::*

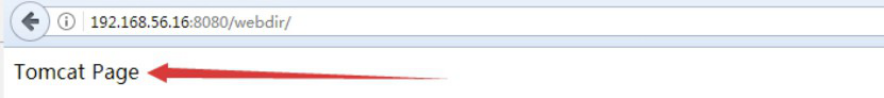

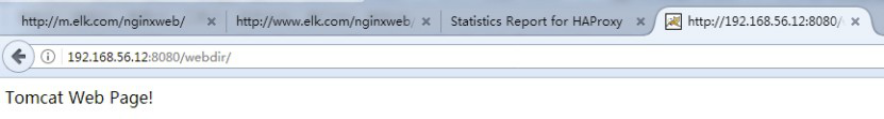

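4.3.1.2 Confirm the web page is reachable

4.3.1.3 Switch the Tomcat access log to JSON

[root@linux-host6 tomcat]# vim conf/server.xml

<Valve className="org.apache.catalina.valves.AccessLogValve" directory="logs"

prefix="tomcat_access_log" suffix=".log"

pattern="{&quot;clientip&quot;:&quot;%h&quot;,&quot;ClientUser&quot;:&quot;%l&quot;,&quot;authenticated&quot;:&quot;%u&quot;,&quot;AccessTime&quot;:&quot;%t&quot;,&quot;method&quot;:&quot;%r&quot;,&quot;status&quot;:&quot;%s&quot;,&quot;SendBytes&quot;:&quot;%b&quot;,&quot;Query?string&quot;:&quot;%q&quot;,&quot;partner&quot;:&quot;%{Referer}i&quot;,&quot;AgentVersion&quot;:&quot;%{User-Agent}i&quot;}"/>

[root@linux-host6 tomcat]# ./bin/catalina.sh stop

[root@linux-host6 tomcat]# rm -rf logs/* #delete or empty the previous access logs

[root@linux-host6 tomcat]# ./bin/catalina.sh start #start Tomcat and open its web page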

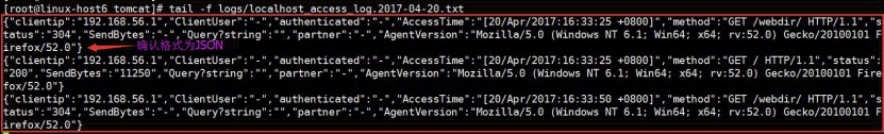

[root@linux-host6 tomcat]# tail -f logs/localhost_access_log.2017-04-20.txt

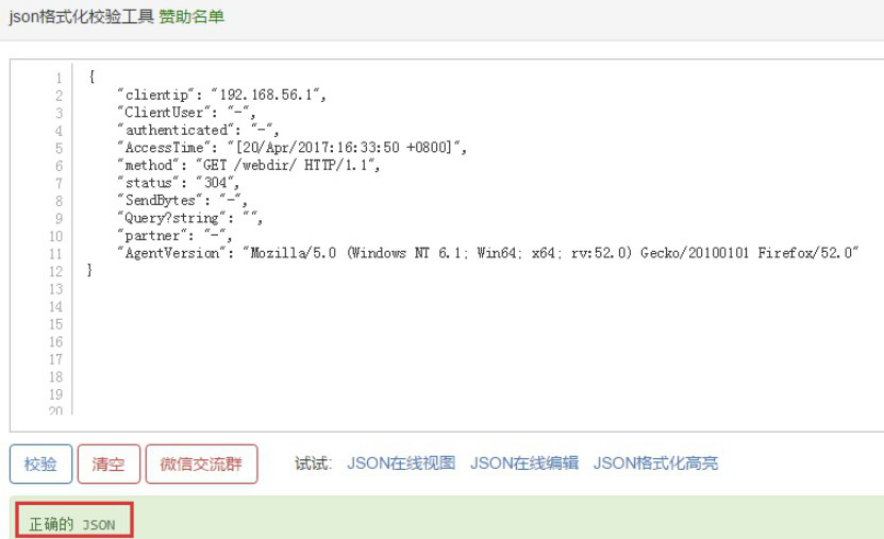

4.3.1.4 Verify that the log is in JSON format

4.3.1.5 How to extract the IP from a log line

Parsing with a Python script:

#!/usr/bin/env python
# -*- coding: utf-8 -*-
# Author: Zhang Jie

# One access-log record, written as a Python dict.
data = {"clientip":"192.168.56.1","ClientUser":"-","authenticated":"-","AccessTime":"[20/May/2017:21:46:22 +0800]","method":"GET /webdir/ HTTP/1.1","status":"200","SendBytes":"12","Query?string":"","partner":"-","AgentVersion":"Mozilla/5.0 (Windows NT 6.1; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/56.0.2924.87 Safari/537.36"}

# Look up the client IP by its key.
ip = data["clientip"]
print(ip)
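In practice the record arrives as a line of text read from the log file, not as a Python dict. A minimal sketch of that case, using json.loads from the standard library; client_ip is a hypothetical helper and the sample line is a shortened version of the record above.

```python
import json


def client_ip(line):
    """Return the clientip field of one JSON access-log line, or None."""
    try:
        record = json.loads(line)
    except ValueError:
        return None  # not valid JSON, for example a truncated line
    if not isinstance(record, dict):
        return None
    return record.get("clientip")


sample = '{"clientip":"192.168.56.1","status":"200","method":"GET /webdir/ HTTP/1.1"}'
print(client_ip(sample))
```

Returning None for lines that fail to parse lets a caller skip bad lines instead of crashing halfway through a log file.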

4.3.2 Install Logstash on the Tomcat server to collect Tomcat and system logs:

Tomcat must be deployed and Logstash installed and configured

4.3.2.1 Install and configure Logstash

[root@linux-host6 ~]# yum install logstash-5.3.0.rpm -y

[root@linux-host6 ~]# vim /etc/logstash/conf.d/tomcat.conf

[root@linux-host6 ~]# cat /etc/logstash/conf.d/tomcat.conf

input {
  file {
    path => "/usr/local/tomcat/logs/localhost_access_log.*.txt"
    start_position => "end"
    type => "tomct-access-log"
    codec => "json" #parse each JSON log line into fields as it is read
  }
  file {
    path => "/var/log/messages"
    start_position => "end"
    type => "system-log"
  }
}

output {
  if [type] == "tomct-access-log" {
    elasticsearch {
      hosts => ["192.168.56.11:9200"]
      index => "logstash-tomcat-5616-access-%{+YYYY.MM.dd}"
    }
  }
  if [type] == "system-log" {
    elasticsearch {
      hosts => ["192.168.56.12:9200"] #write to a different ES server
      index => "system-log-5616-%{+YYYY.MM.dd}"
    }
  }
}

4.3.2.2 Restart Logstash and confirm

[root@linux-host6 ~]# systemctl restart logstash #restart Logstash after changing the configuration file

[root@linux-host6 ~]# tail -f /var/log/logstash/logstash-plain.log #check the log

[root@linux-host6 ~]# chmod 644 /var/log/messages #fix the permissions

[root@linux-host6 ~]# systemctl restart logstash #restart Logstash again

4.3.2.3 Access Tomcat to generate log entries

[root@linux-host6 ~]# echo "2017-02-21" >> /var/log/messages

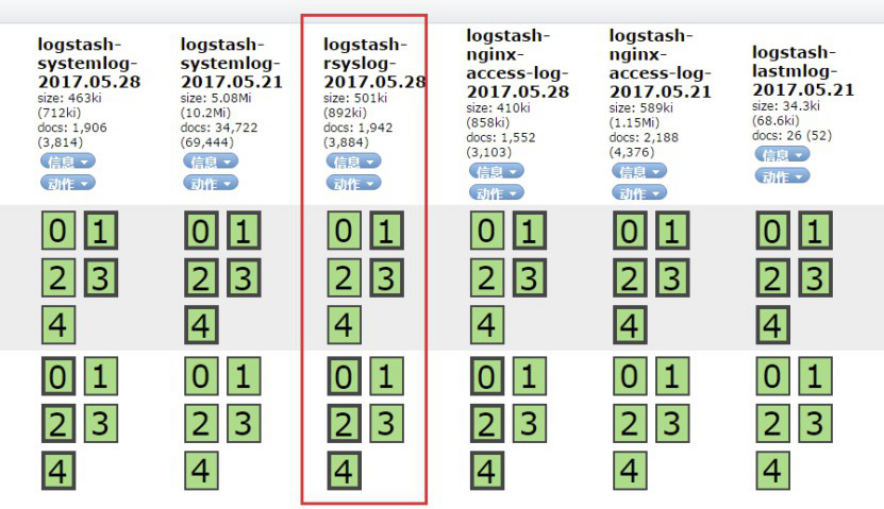

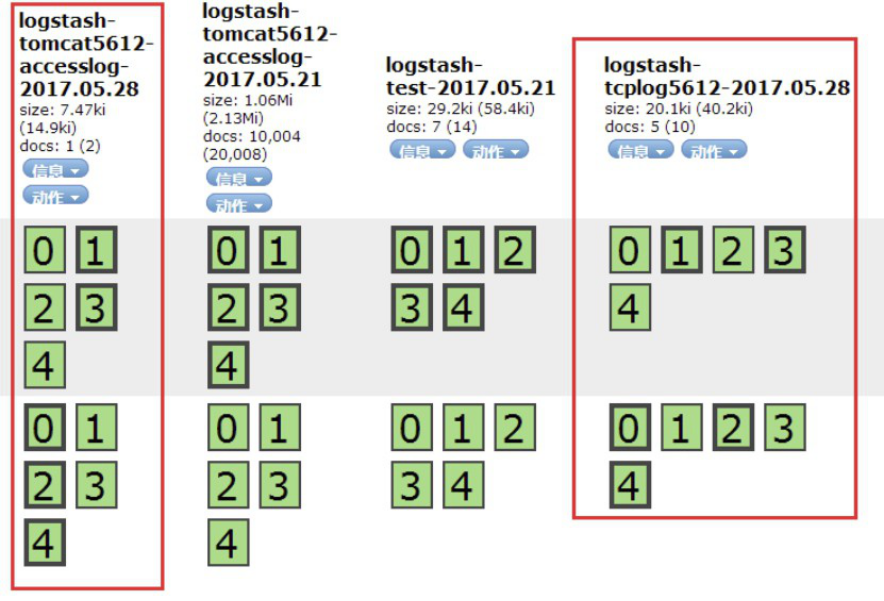

4.3.2.4 Open the head plugin to verify the indices

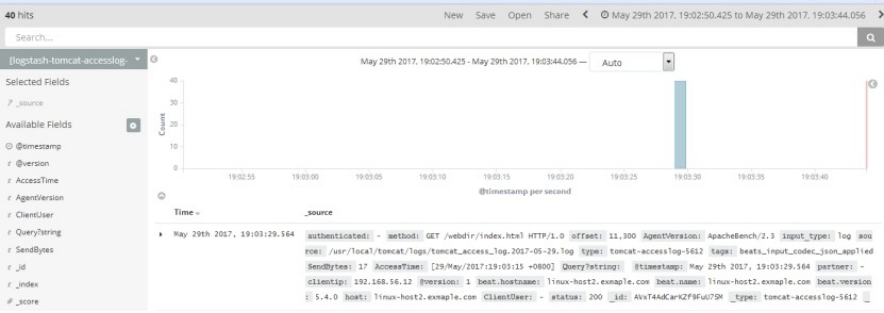

4.3.2.8 Use ab from another server to send bulk requests and verify the data

[root@linux-host3 ~]# yum install httpd-tools -y

[root@linux-host3 ~]# ab -n1000 -c100 http://192.168.56.16:8080/webdir/

4.3.3 Collecting Java logs

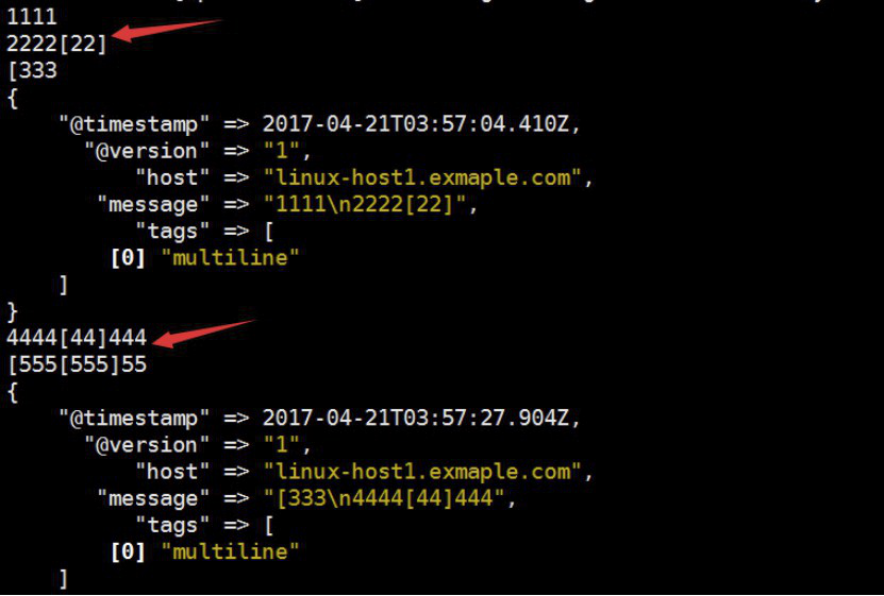

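Use the multiline codec plugin to match across lines. This plugin merges multiple lines into one event, and its what option specifies whether a line is merged with the preceding lines or with the following lines: https://www.elastic.co/guide/en/logstash/current/plugins-codecs-multiline.html

4.3.3.1 Deploy Logstash on the Elasticsearch server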

[root@linux-host1 ~]# chown logstash.logstash /usr/share/logstash/data/queue -R

[root@linux-host1 ~]# ll -d /usr/share/logstash/data/queue

drwxr-xr-x 2 logstash logstash 6 Apr 19 20:03 /usr/share/logstash/data/queue

[root@linux-host1 ~]# cat /etc/logstash/conf.d/java.conf

input {

stdin {

codec => multiline {

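pattern => "^\[" #a line starting with [ marks the beginning of a new event

negate => true #true: act on lines that do NOT match the pattern; false: act on lines that match

what => "previous" #merge with the preceding line; use next to merge with the following line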

}}

}

filter { #log filtering: filters that apply to every log go here; handling for a single source goes inside that source's input block

}

output {

stdout {

codec => rubydebug

}}
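The effect of pattern, negate, and what is easier to see on a concrete log. The sketch below re-implements the grouping rule for what => "previous" in Python. It is an illustration of the idea, not Logstash's own code; merge_multiline is a hypothetical helper and the sample stack trace is made up.

```python
import re


def merge_multiline(lines, pattern=r"^\[", negate=True):
    """Group lines into events the way what => "previous" does.

    With negate=True, a line that does NOT match the pattern is appended
    to the previous event, and a matching line starts a new event.
    """
    regex = re.compile(pattern)
    events = []
    for line in lines:
        matched = bool(regex.search(line))
        belongs_to_previous = (not matched) if negate else matched
        if belongs_to_previous and events:
            events[-1] += "\n" + line
        else:
            events.append(line)
    return events


# Made-up sample: one Java stack trace followed by a normal line.
log = [
    "[2017-04-21T14:33:01] ERROR something failed",
    "java.lang.IllegalStateException: boom",
    "    at com.example.Demo.run(Demo.java:42)",
    "[2017-04-21T14:33:02] INFO recovered",
]
for event in merge_multiline(log):
    print(repr(event))
```

The four input lines collapse into two events: the stack trace stays attached to the ERROR line that introduced it, and the INFO line starts a new event.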

4.3.3.2 Test that it starts correctly

[root@linux-host1 ~]# /usr/share/logstash/bin/logstash -f /etc/logstash/conf.d/java.conf

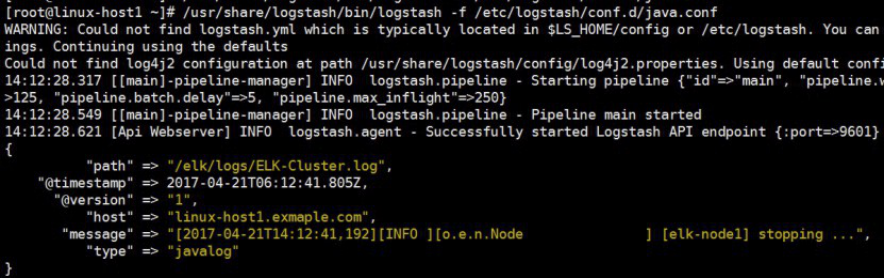

4.3.3.4 Configure reading from a log file and writing to a file

[root@linux-host1 ~]# vim /etc/logstash/conf.d/java.conf

input {

file {

path => "/elk/logs/ELK-Cluster.log"

type => "javalog"

start_position => "beginning"

codec => multiline {

pattern => "^\["

negate => true

what => "previous"

}}

}

output {

if [type] == "javalog" {

stdout {

codec => rubydebug

}

file {

path => "/tmp/m.txt"

}}

}

4.3.3.5 Syntax check

[root@linux-host1 ~]# /usr/share/logstash/bin/logstash -f /etc/logstash/conf.d/java.conf -t

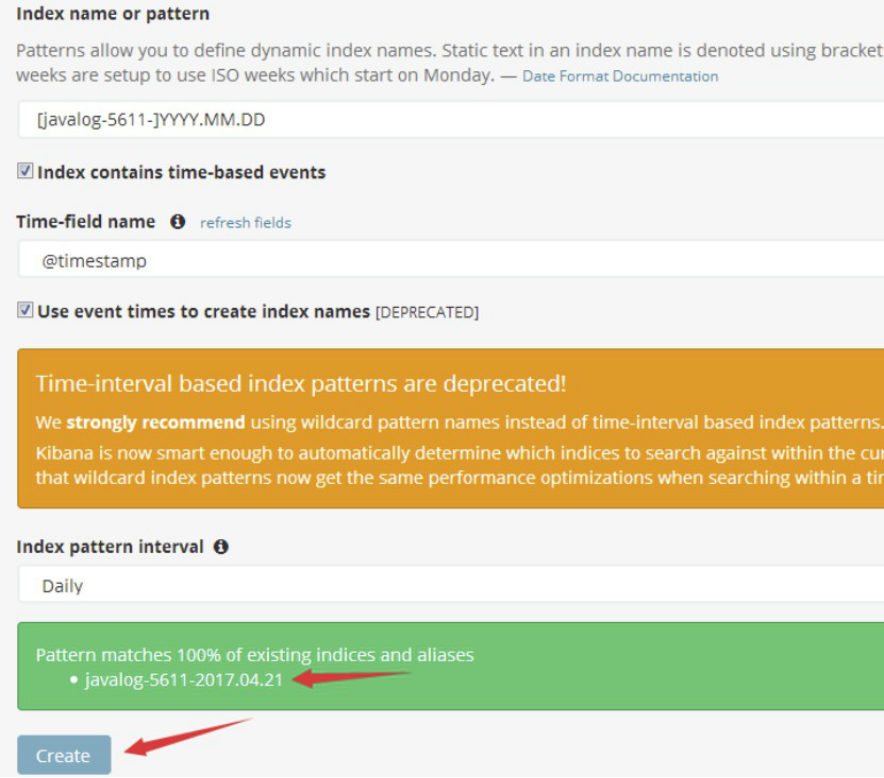

4.3.3.6 Change the output to Elasticsearch

The configuration after the change:

[root@linux-host1 ~]# cat /etc/logstash/conf.d/java.conf

input {

file {

path => "/elk/logs/ELK-Cluster.log"

type => "javalog"

start_position => "beginning"

codec => multiline {

pattern => "^\["

negate => true

what => "previous"

}}

}

output {

if [type] == "javalog" {

elasticsearch {

hosts => ["192.168.56.11:9200"]

index => "javalog-5611-%{+YYYY.MM.dd}"

}}

}

[root@linux-host1 ~]# systemctl restart logstash

Then restart the Elasticsearch service. The only purpose is to generate new log entries, to verify that Logstash picks up newly written logs automatically.

[root@linux-host1 ~]# systemctl restart elasticsearch

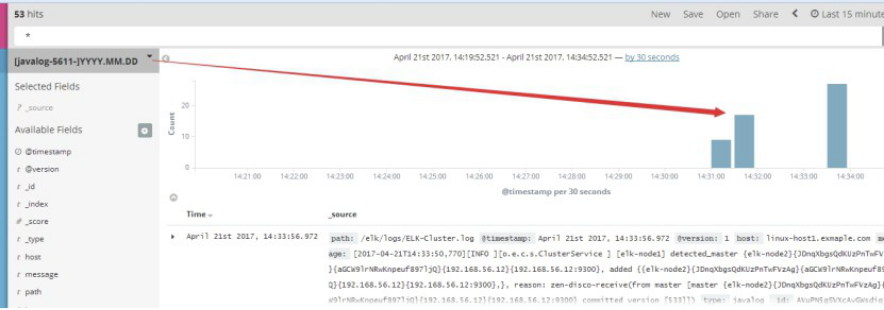

4.3.3.7 Add the javalog-5611 index in the Kibana UI

4.3.3.8 Generate data

[root@linux-host1 ~]# cat /elk/logs/ELK-Cluster.log >> /tmp/1

[root@linux-host1 ~]# cat /tmp/1 >> /elk/logs/ELK-Cluster.log

4.3.3.9 View the data in Kibana:

4.3.3.10 About sincedb

[root@linux-host1 ~]# cat /var/lib/logstash/plugins/inputs/file/.sincedb_1ced15cfacdbb0380466be84d620085a

134219868 0 2064 29465 #records the inode of the collected file

[root@linux-host1 ~]# ll -li /elk/logs/ELK-Cluster.log

134219868 -rw-r--r-- 1 elasticsearch elasticsearch 29465 Apr 21 14:33 /elk/logs/ELK-Cluster.log
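Reading the two outputs side by side: the first column of the sincedb line is the same inode that ll -li reports, and the last column matches the file size, meaning the file has been read to the end. The sketch below parses such a line. It assumes the four-column layout shown here (inode, major device number, minor device number, byte offset); the helper names are made up for this example.

```python
from collections import namedtuple

SincedbEntry = namedtuple("SincedbEntry", "inode major minor offset")


def parse_sincedb_line(line):
    """Parse 'inode major minor offset' from one sincedb line."""
    inode, major, minor, offset = (int(part) for part in line.split()[:4])
    return SincedbEntry(inode, major, minor, offset)


def bytes_remaining(entry, file_size):
    """Bytes of the file that have not been read yet."""
    return max(0, file_size - entry.offset)


entry = parse_sincedb_line("134219868 0 2064 29465")
print(entry)
# The file is 29465 bytes long, so nothing is left to read.
print(bytes_remaining(entry, 29465))
```

Because Logstash resumes from the recorded offset, start_position => "beginning" only matters for files that have no sincedb entry yet.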

4.4 Collecting Nginx logs

4.4.1 Deploy Nginx

[root@linux-host6 ~]# yum install gcc gcc-c++ automake pcre pcre-devel zlib zlib-devel openssl openssl-devel

[root@linux-host6 ~]# cd /usr/local/src/

[root@linux-host6 src]# wget http://nginx.org/download/nginx-1.10.3.tar.gz

[root@linux-host6 src]# tar xvf nginx-1.10.3.tar.gz

[root@linux-host6 src]# cd nginx-1.10.3

[root@linux-host6 nginx-1.10.3]# ./configure --prefix=/usr/local/nginx-1.10.3

[root@linux-host6 nginx-1.10.3]# make && make install

[root@linux-host6 nginx-1.10.3]# ln -sv /usr/local/nginx-1.10.3 /usr/local/nginx

‘/usr/local/nginx’ -> ‘/usr/local/nginx-1.10.3’

4.4.2 Edit the configuration file and prepare a web page

[root@linux-host6 nginx-1.10.3]# cd /usr/local/nginx

[root@linux-host6 nginx]# vim conf/nginx.conf

location /web {
    root html;
    index index.html index.htm;
}

[root@linux-host6 nginx]# mkdir /usr/local/nginx/html/web

[root@linux-host6 nginx]# echo " Nginx WebPage! " > /usr/local/nginx/html/web/index.html

4.4.3 Test the Nginx configuration

/usr/local/nginx/sbin/nginx -t #test the configuration file syntax

/usr/local/nginx/sbin/nginx #start the service

/usr/local/nginx/sbin/nginx -s reload #reload the configuration file

4.4.4 Start Nginx and verify

[root@linux-host6 nginx]# /usr/local/nginx/sbin/nginx -t

nginx: the configuration file /usr/local/nginx-1.10.3/conf/nginx.conf syntax is ok

nginx: configuration file /usr/local/nginx-1.10.3/conf/nginx.conf test is successful

[root@linux-host6 nginx]# /usr/local/nginx/sbin/nginx

[root@linux-host6 nginx]# lsof -i:80

COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME

nginx 17719 root 6u IPv4 90721 0t0 TCP *:http (LISTEN)

nginx 17720 nobody 6u IPv4 90721 0t0 TCP *:http (LISTEN)

4.4.5 Open the Nginx page

4.4.6 Convert the Nginx log to JSON format

[root@linux-host6 nginx]# vim conf/nginx.conf

log_format access_json '{"@timestamp":"$time_iso8601",'

'"host":"$server_addr",'

'"clientip":"$remote_addr",'

'"size":$body_bytes_sent,'

'"responsetime":$request_time,'

'"upstreamtime":"$upstream_response_time",'

'"upstreamhost":"$upstream_addr",'

'"http_host":"$host",'

'"url":"$uri",'

'"domain":"$host",'

'"xff":"$http_x_forwarded_for",'

'"referer":"$http_referer",'

'"status":"$status"}';

access_log /var/log/nginx/access.log access_json;

[root@linux-host6 nginx]# mkdir /var/log/nginx

[root@linux-host6 nginx]# /usr/local/nginx/sbin/nginx -t

nginx: the configuration file /usr/local/nginx-1.10.3/conf/nginx.conf syntax is ok

nginx: configuration file /usr/local/nginx-1.10.3/conf/nginx.conf test is successful

4.4.7 Confirm the log format is JSON

[root@linux-host6 nginx]# tail /var/log/nginx/access.log

{"@timestamp":"2017-04-21T17:03:09+08:00","host":"192.168.56.16","clientip":"192.168.56.1","size":0,"responsetime":0.000,"upstreamtime":"-","upstreamhost":"-","http_host":"192.168.56.16","url":"/web/index.html","domain":"192.168.56.16","xff":"-","referer":"-","status":"304"}

4.4.8 Configure Logstash to collect the Nginx access log:

[root@linux-host6 conf.d]# vim nginx.conf

input {

file {

path => "/var/log/nginx/access.log"

start_position => "end"

type => "nginx-accesslog"

codec => json

}

}

output {

if [type] == "nginx-accesslog" {

elasticsearch {

hosts => ["192.168.56.11:9200"]

index => "logstash-nginx-accesslog-5616-%{+YYYY.MM.dd}"

}}

}

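4.4.9 Add the index in the Kibana UI:

4.5 Collecting TCP/UDP logs

Logs can be collected through Logstash's tcp/udp input plugins. This is typically used to backfill log entries that are missing from Elasticsearch: the missing entries are sent to a TCP port and written to Elasticsearch from there.

4.5.1 Logstash configuration file; test collection first: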

[root@linux-host6 ~]# cat /etc/logstash/conf.d/tcp.conf

input {

tcp {

port => 9889

type => "tcplog"

mode => "server"

}

}

output {

stdout {

codec => rubydebug

}

}

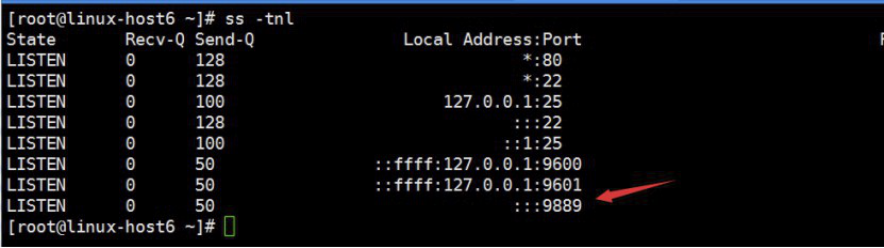

4.5.2 Verify that the port comes up

[root@linux-host6 src]# /usr/share/logstash/bin/logstash -f /etc/logstash/conf.d/tcp.conf

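4.5.3 Install the nc command on another server

NetCat, or nc for short, is known as the "Swiss Army knife" of network tools. It is a simple, reliable utility that reads and writes data over TCP or UDP, and it has many other features as well.

[root@linux-host1 ~]# yum install nc -y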

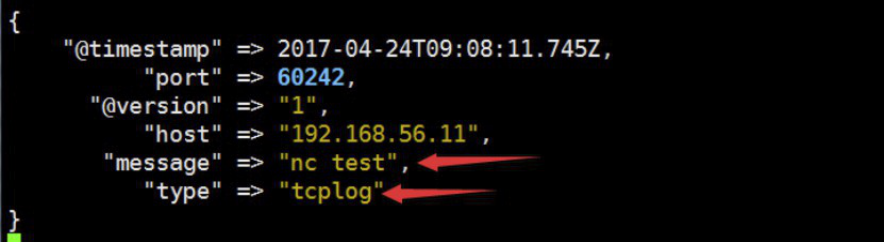

[root@linux-host1 ~]# echo "nc test" | nc 192.168.56.16 9889

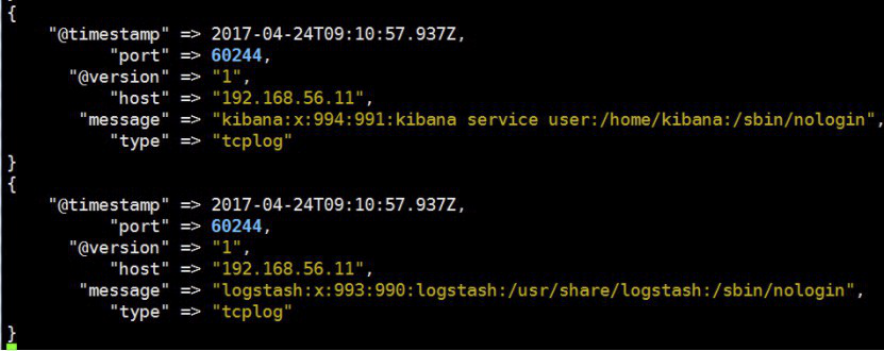

4.5.4 Verify that Logstash received the data

4.5.5 Send a file with nc

[root@linux-host1 ~]# nc 192.168.56.16 9889 < /etc/passwd

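4.5.6 Verify the data in Logstash

4.5.7 Send a message through a pseudo-device

On Unix-like systems, a device node does not have to correspond to a physical device; nodes without such a counterpart are pseudo-devices, and the operating system uses them to provide many features. /dev/tcp is a special case: it is not an actual file under /dev but a path that bash interprets in redirections, opening a TCP connection to the given host and port.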

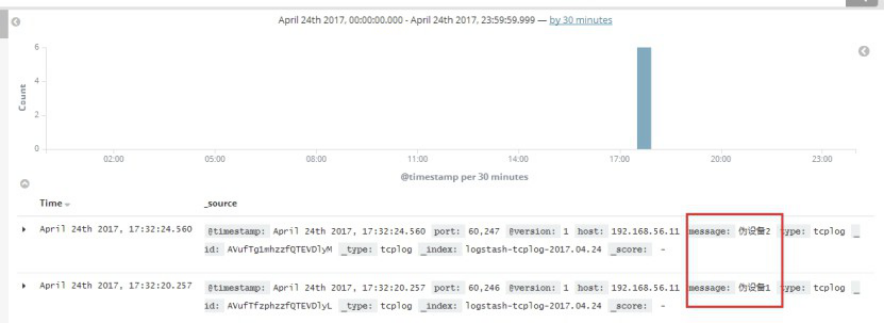

[root@linux-host1 ~]# echo "伪设备" > /dev/tcp/192.168.56.16/9889
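On hosts where neither nc nor bash's /dev/tcp redirection is available, the same one-line send can be done with Python's standard socket module. This is a minimal sketch; send_line is a hypothetical helper, and the host and port in the usage note are the ones from the Logstash tcp input above.

```python
import socket


def send_line(host, port, message, timeout=5):
    """Open a TCP connection, send one newline-terminated line, and close."""
    with socket.create_connection((host, port), timeout=timeout) as conn:
        conn.sendall((message + "\n").encode("utf-8"))
```

Calling send_line("192.168.56.16", 9889, "python test") should then produce one event in the Logstash output, just like the echo command. The trailing newline matters because the tcp input splits events on line breaks by default.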

4.5.8 Verify the data in Logstash

4.5.9 Change the output to Elasticsearch

[root@linux-host6 conf.d]# vim /etc/logstash/conf.d/tcp.conf

input {

tcp {

port => 9889

type => "tcplog"

mode => "server"

}

}

output {

elasticsearch {

hosts => ["192.168.56.11:9200"]

index => "logstash-tcplog-%{+YYYY.MM.dd}"

}

}

[root@linux-host6 conf.d]# systemctl restart logstash

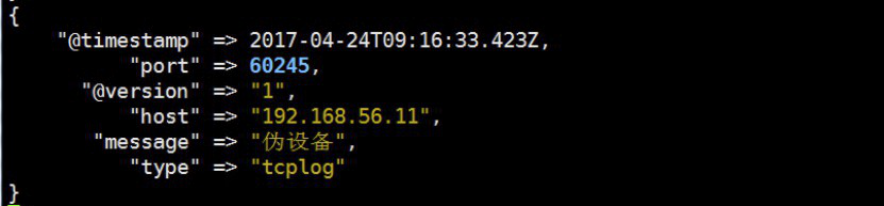

4.5.10 Send log entries with nc or the pseudo-device:

[root@linux-host1 ~]# echo "伪设备1" > /dev/tcp/192.168.56.16/9889

[root@linux-host1 ~]# echo "伪设备2" > /dev/tcp/192.168.56.16/9889

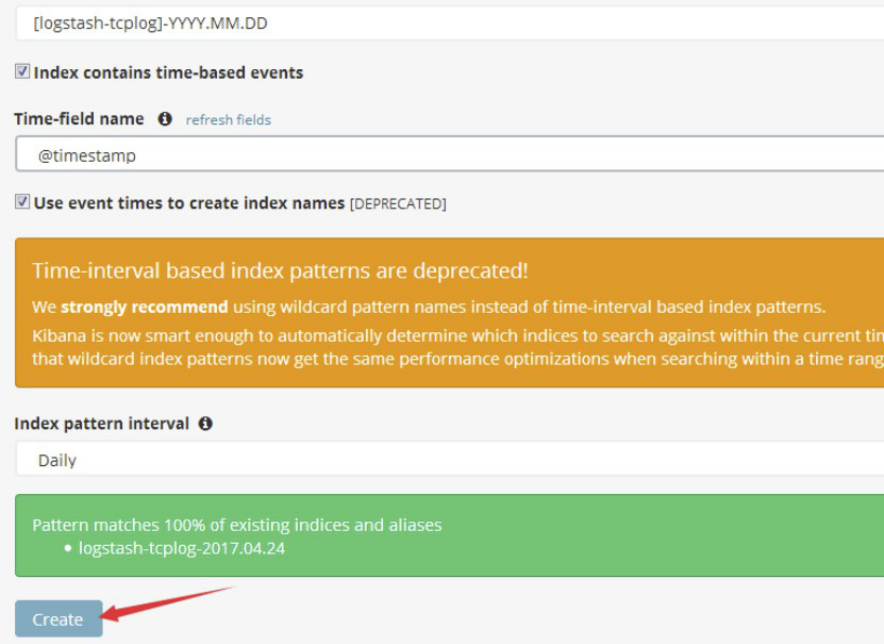

4.5.11 Add the index in the Kibana UI:

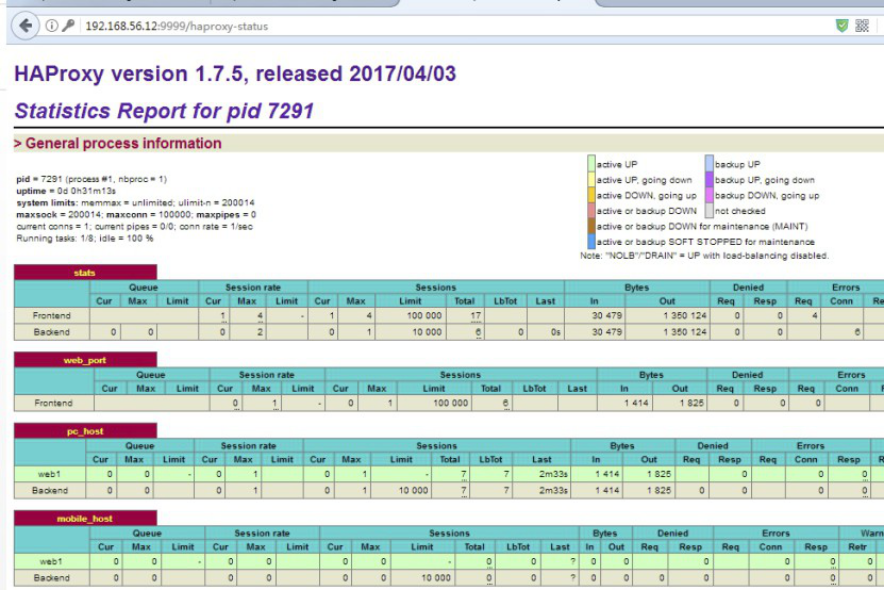

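4.6 Collecting HAProxy logs through rsyslog

The system logging service was called syslog in CentOS 5 and earlier and is rsyslog from CentOS 6 onward. According to the project, rsyslog (as of the 2013 release) can forward on the order of a million log messages per second. Official site: http://www.rsyslog.com/. To check the installed version:

[root@linux-host1 ~]# yum list rsyslog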

Installed Packages rsyslog.x86_64 7.4.7-12.el7

4.6.1 Build, install, and configure HAProxy:

[root@linux-host2 ~]# cd /usr/local/src/

[root@linux-host2 src]# tar xvf haproxy-1.7.5.tar.gz

[root@linux-host2 src]# cd haproxy-1.7.5

[root@linux-host2 haproxy-1.7.5]# yum install gcc pcre pcre-devel openssl openssl-devel -y

[root@linux-host2 haproxy-1.7.5]# make TARGET=linux2628 USE_PCRE=1 USE_OPENSSL=1 USE_ZLIB=1 PREFIX=/usr/local/haproxy

[root@linux-host2 haproxy-1.7.5]# make install PREFIX=/usr/local/haproxy

[root@linux-host2 haproxy-1.7.5]# /usr/local/haproxy/sbin/haproxy -v #确认版本

HA-Proxy version 1.7.5 2017/04/03

Copyright 2000-2017 Willy Tarreau <willy@haproxy.org>

[root@linux-host2 haproxy-1.7.5]# vim /usr/lib/systemd/system/haproxy.service

[Unit]

Description=HAProxy Load Balancer

After=syslog.target network.target

[Service]

EnvironmentFile=/etc/sysconfig/haproxy

ExecStart=/usr/sbin/haproxy-systemd-wrapper -f /etc/haproxy/haproxy.cfg -p /run/haproxy.pid $OPTIONS

ExecReload=/bin/kill -USR2 $MAINPID

[Install]

WantedBy=multi-user.target

[root@linux-host2 haproxy-1.7.5]# cp /usr/local/src/haproxy-1.7.5/haproxy-systemd-wrapper /usr/sbin/

[root@linux-host2 haproxy-1.7.5]# cp /usr/local/src/haproxy-1.7.5/haproxy /usr/sbin/

[root@linux-host2 haproxy-1.7.5]# vim /etc/sysconfig/haproxy #system-level configuration file

# Add extra options to the haproxy daemon here. This can be useful for

# specifying multiple configuration files with multiple -f options.

# See haproxy(1) for a complete list of options.

OPTIONS=""

[root@linux-host2 haproxy-1.7.5]# mkdir /etc/haproxy

[root@linux-host2 haproxy-1.7.5]# cat /etc/haproxy/haproxy.cfg

global

maxconn 100000

chroot /usr/local/haproxy

uid 99

gid 99

daemon

nbproc 1

pidfile /usr/local/haproxy/run/haproxy.pid

log 127.0.0.1 local6 info

defaults

option http-keep-alive

option forwardfor

maxconn 100000

mode http

timeout connect 300000ms

timeout client 300000ms

timeout server 300000ms

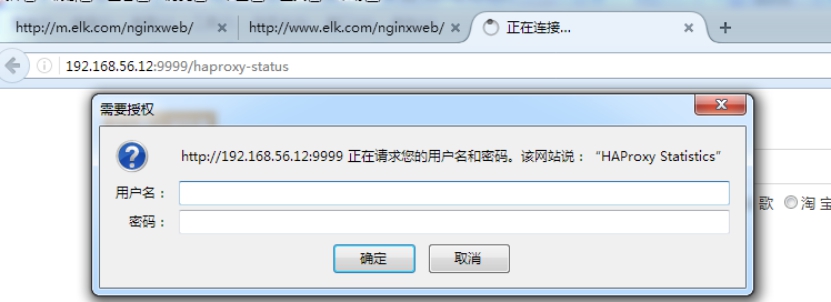

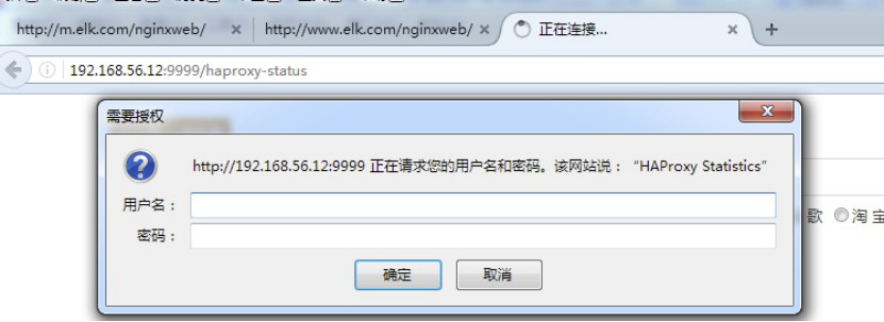

listen stats

mode http

bind 0.0.0.0:9999

stats enable

log global

stats uri /haproxy-status

stats auth haadmin:123456

#frontend web_port

frontend web_port

bind 0.0.0.0:80

mode http

option httplog

log global

option forwardfor

###################ACL Setting##########################

acl pc hdr_dom(host) -i www.elk.com

acl mobile hdr_dom(host) -i m.elk.com

###################USE ACL##############################

use_backend pc_host if pc

use_backend mobile_host if mobile

########################################################

backend pc_host

mode http

option httplog

balance source

server web1 192.168.56.11:80 check inter 2000 rise 3 fall 2 weight 1

backend mobile_host

mode http

option httplog

balance source

server web1 192.168.56.11:80 check inter 2000 rise 3 fall 2 weight 1

4.6.2 Edit the rsyslog configuration file

$ModLoad imudp

$UDPServerRun 514

$ModLoad imtcp

$InputTCPServerRun 514 #uncomment lines 15, 16, 19, and 20 of the file

local6.* @@192.168.56.11:516 #add as the last line; local6 matches the facility set in the HAProxy configuration, and @@ forwards over TCP
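Each syslog message carries its facility and severity as a single number at the front of the packet, computed as facility × 8 + severity. The sketch below works through that arithmetic for the "local6 info" setting used by HAProxy. The numeric codes are the standard syslog values; the function names and the choice of which facilities to list are just for this example.

```python
# Standard syslog facility and severity codes (subset).
FACILITIES = {"kern": 0, "user": 1, "daemon": 3, "local0": 16, "local6": 22, "local7": 23}
SEVERITIES = {"emerg": 0, "alert": 1, "crit": 2, "err": 3,
              "warning": 4, "notice": 5, "info": 6, "debug": 7}


def priority(facility, severity):
    """Combine facility and severity into the syslog PRI value."""
    return FACILITIES[facility] * 8 + SEVERITIES[severity]


def split_priority(pri):
    """Split a PRI value back into (facility code, severity code)."""
    return divmod(pri, 8)


# HAProxy logs with "log 127.0.0.1 local6 info": 22 * 8 + 6
print(priority("local6", "info"))
print(split_priority(182))
```

The rsyslog selector local6.* matches on the facility part only, which is why every HAProxy message is forwarded regardless of severity.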

4.6.3 Restart the haproxy and rsyslog services

[root@linux-host2 ~]# systemctl restart haproxy

[root@linux-host2 ~]# systemctl restart rsyslog

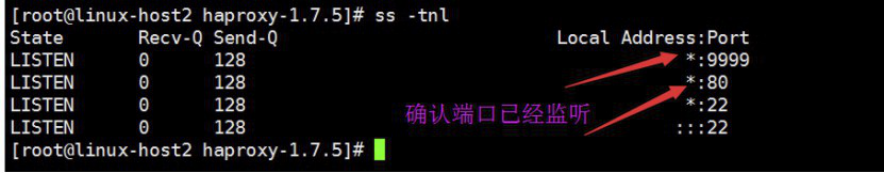

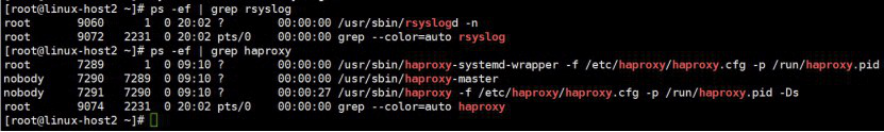

4.6.4 Verify the port and service:

Confirm that the service process is running:

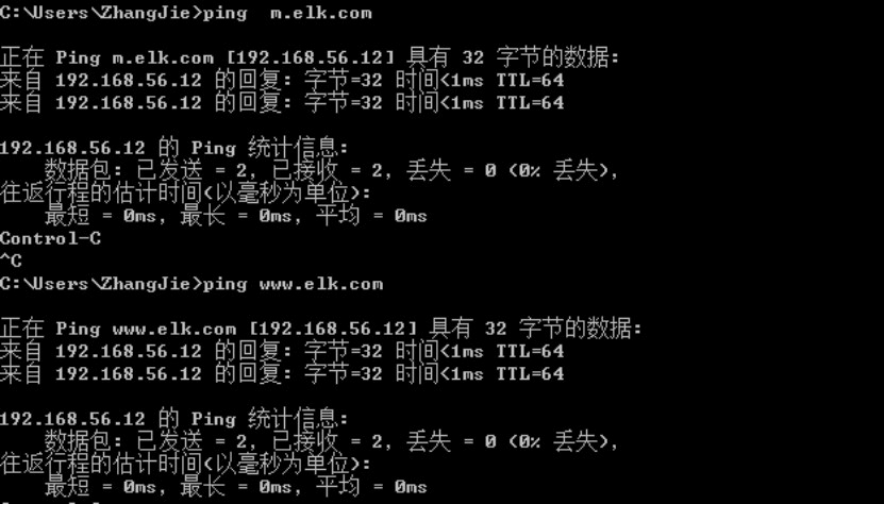

C:\Windows\System32\drivers\etc

192.168.56.12 www.elk.com

192.168.56.12 m.elk.com

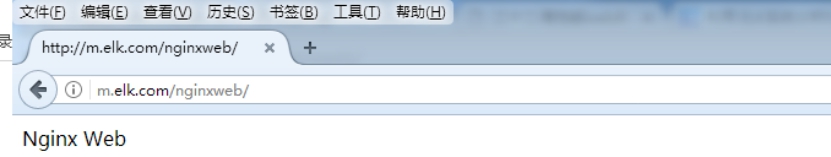

4.6.6 Test the domain names and access

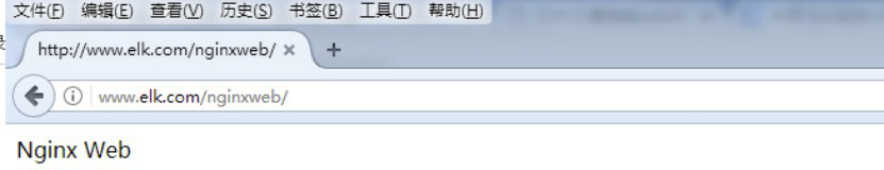

Start Nginx on the back-end web server:

[root@linux-host1 ~]# /usr/local/nginx/sbin/nginx

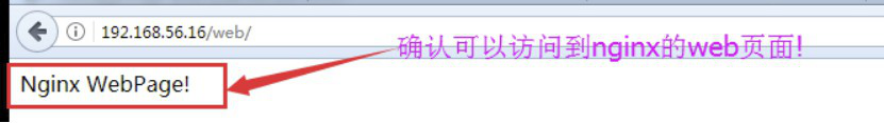

Confirm that the Nginx web page is reachable:

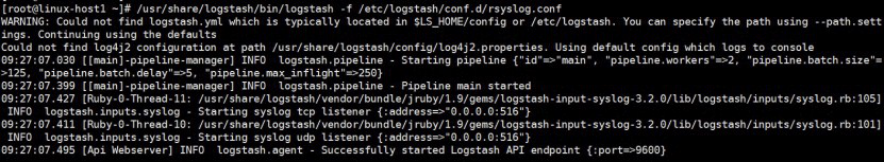

4.6.7 Edit the Logstash configuration file

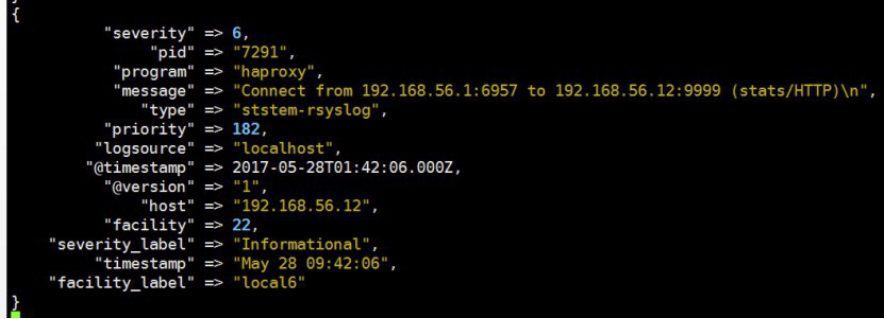

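Configure Logstash to listen on a local port as the log input. The IP and port that rsyslog on the HAProxy server forwards to must match the IP:port Logstash listens on. In this setup Logstash runs on Host1, while Host2 collects the HAProxy access log and forwards it to Logstash on Host1 for processing. The Logstash configuration file is as follows: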

[root@linux-host1 conf.d]# cat /etc/logstash/conf.d/rsyslog.conf

input{

syslog {

type => "ststem-rsyslog"

port => "516" #listen on a local port

}}

output{

stdout{

codec => rubydebug

}}

4.6.8 Test Logstash with the -f option

[root@linux-host1 conf.d]# /usr/share/logstash/bin/logstash -f /etc/logstash/conf.d/rsyslog.conf

4.6.9 Access HAProxy over the web and verify the data

Add local name resolution:

[root@linux-host1 ~]# tail -n2 /etc/hosts

192.168.56.12 www.elk.com

192.168.56.12 m.elk.com

[root@linux-host1 ~]# curl http://www.elk.com/nginxweb/index.html

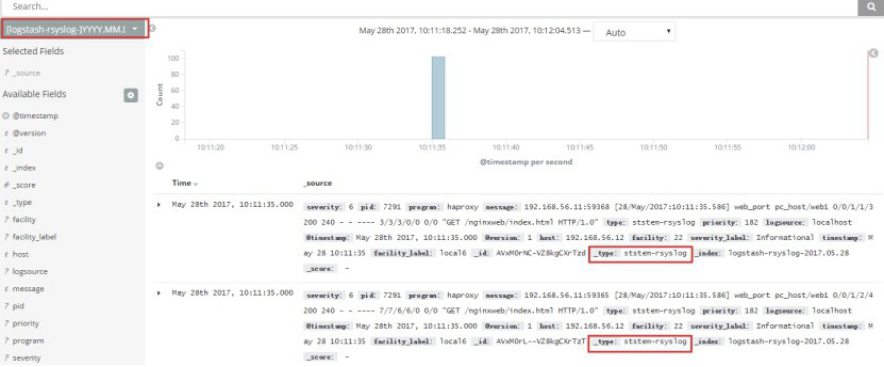

4.6.13 Change the output to Elasticsearch:

[root@linux-host1 conf.d]# cat /etc/logstash/conf.d/rsyslog.conf

input{

syslog {

type => "ststem-rsyslog"

port => "516"

}}

output{

elasticsearch {

hosts => ["192.168.56.11:9200"]

index => "logstash-rsyslog-%{+YYYY.MM.dd}"

}

}

[root@linux-host6 conf.d]# systemctl restart logstash

4.6.14 Access HAProxy over the web to generate new log entries

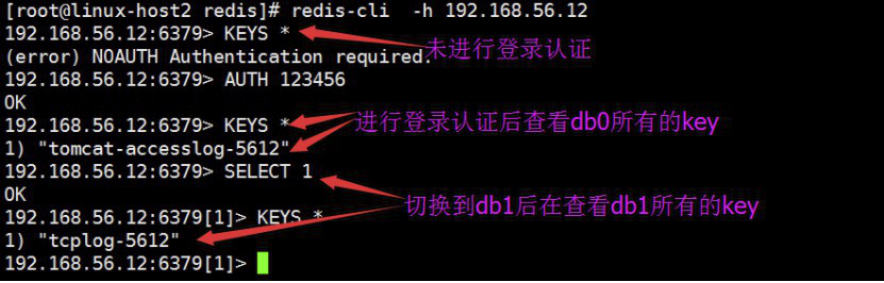

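4.7 Collect logs with Logstash and write them to Redis:

Deploy Redis on a dedicated server to act as a log buffer for cases where the web servers produce a large volume of logs. Keep an eye on memory: a server can come close to running out of it when Redis is holding a large amount of data that has not been read yet.

Overall architecture:

4.7.1 Deploy Redis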

[root@linux-host2 ~]# cd /usr/local/src/

[root@linux-host2 src]# wget http://download.redis.io/releases/redis-3.2.8.tar.gz

[root@linux-host2 src]# tar xvf redis-3.2.8.tar.gz

[root@linux-host2 src]# ln -sv /usr/local/src/redis-3.2.8 /usr/local/redis

‘/usr/local/redis’ -> ‘/usr/local/src/redis-3.2.8’

[root@linux-host2 src]# cd /usr/local/redis/deps

[root@linux-host2 redis]# yum install gcc

[root@linux-host2 deps]# make geohash-int hiredis jemalloc linenoise lua

[root@linux-host2 deps]# cd ..

[root@linux-host2 redis]# make

[root@linux-host2 redis]# vim redis.conf

[root@linux-host2 redis]# grep "^[a-zA-Z]" redis.conf #the main settings that were changed

bind 0.0.0.0

protected-mode yes

port 6379

tcp-backlog 511

timeout 0

tcp-keepalive 300

daemonize yes

supervised no

pidfile /var/run/redis_6379.pid

loglevel notice

logfile ""

databases 16

save ""

rdbcompression no #whether to compress the RDB file

rdbchecksum no #whether to checksum the RDB file

[root@linux-host2 redis]# ln -sv /usr/local/redis/src/redis-server /usr/bin/

‘/usr/bin/redis-server’ -> ‘/usr/local/redis/src/redis-server’

[root@linux-host2 redis]# ln -sv /usr/local/redis/src/redis-cli /usr/bin/

‘/usr/bin/redis-cli’ -> ‘/usr/local/redis/src/redis-cli’

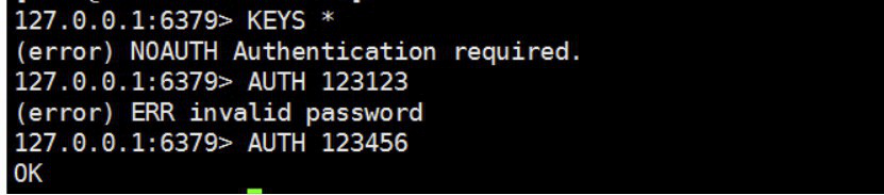

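4.7.2 Set a Redis password

For security, a Redis connection password must be set in production:

[root@linux-host2 redis]# redis-cli

127.0.0.1:6379> config set requirepass 123456 #set dynamically; lost after a restart

OK

requirepass 123456 #set permanently in redis.conf (around line 480)

4.7.3 Start and test the Redis service

[root@linux-host2 redis]# redis-server /usr/local/redis/redis.conf #start the service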

[root@linux-host2 redis]# redis-cli

127.0.0.1:6379> ping

PONG

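4.7.4 Configure Logstash to write logs to Redis

The Logstash instance on the Tomcat server writes the collected Tomcat access logs to the Redis server; a second Logstash instance then reads the data from Redis and writes it to Elasticsearch.

Official documentation: https://www.elastic.co/guide/en/logstash/current/plugins-outputs-redis.html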

[root@linux-host2 tomcat]# cat /etc/logstash/conf.d/tomcat_tcp.conf

input {

file {

path => "/usr/local/tomcat/logs/tomcat_access_log.*.log"

type => "tomcat-accesslog-5612"

start_position => "beginning"

stat_interval => "2"

}

tcp {

port => 7800

mode => "server"

type => "tcplog-5612"

}

}

output {

if [type] == "tomcat-accesslog-5612" {

redis {

data_type => "list"

key => "tomcat-accesslog-5612"

host => "192.168.56.12"

port => "6379"

db => "0"

password => "123456"

}}

if [type] == "tcplog-5612" {

redis {

data_type => "list"

key => "tcplog-5612"

host => "192.168.56.12"

port => "6379"

db => "1"

password => "123456"

}}

}

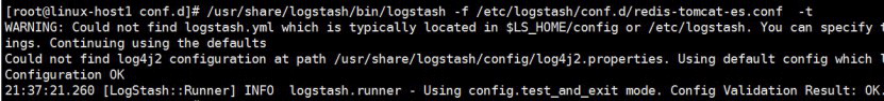

4.7.5 Test the Logstash configuration syntax

[root@linux-host2 ~]# /usr/share/logstash/bin/logstash -f /etc/logstash/conf.d/tomcat_tcp.conf -t

4.7.6 Access the Tomcat web page and generate logs

[root@linux-host1 ~]# echo "伪设备1" > /dev/tcp/192.168.56.12/7800
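The `/dev/tcp/...` redirection above is a Bash feature. On hosts where it is unavailable, the same test line can be sent from Python. This is a minimal sketch; the function name `send_line` is illustrative and not part of Logstash.

```python
import socket


def send_line(host, port, text, timeout=5.0):
    """Open a TCP connection, send one newline-terminated line, then close.

    Returns the number of bytes sent.
    """
    payload = (text.rstrip("\n") + "\n").encode("utf-8")
    with socket.create_connection((host, port), timeout=timeout) as sock:
        sock.sendall(payload)
    return len(payload)
```

For the tcp input configured in this section the call would be `send_line("192.168.56.12", 7800, "test message")`.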

[root@linux-host1 conf.d]# cat /etc/logstash/conf.d/redis-tomcat-es.conf

input {

redis {

data_type => "list"

key => "tomcat-accesslog-5612"

host => "192.168.56.12"

port => "6379"

db => "0"

password => "123456"

codec => "json"

}

redis {

data_type => "list"

key => "tcplog-5612"

host => "192.168.56.12"

port => "6379"

db => "1"

password => "123456"

}

}

output {

if [type] == "tomcat-accesslog-5612" {

elasticsearch {

hosts => ["192.168.56.11:9200"]

index => "logstash-tomcat5612-accesslog-%{+YYYY.MM.dd}"

}}

if [type] == "tcplog-5612" {

elasticsearch {

hosts => ["192.168.56.11:9200"]

index => "logstash-tcplog5612-%{+YYYY.MM.dd}"

}}

}
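The `%{+YYYY.MM.dd}` suffix in the index names above is filled in from each event's timestamp, which Logstash keeps in UTC, so one index is created per UTC day. The sketch below only illustrates that expansion; `daily_index` and `CST` are hypothetical names, not Logstash APIs.

```python
from datetime import datetime, timedelta, timezone

# +0800, the offset that appears in the access-log timestamps of this tutorial.
CST = timezone(timedelta(hours=8))


def daily_index(prefix, event_time):
    """Return prefix + 'YYYY.MM.dd' computed from the event time in UTC."""
    utc = event_time.astimezone(timezone.utc)
    return f"{prefix}{utc:%Y.%m.%d}"
```

Because the date is taken in UTC, an event logged shortly after midnight local time (+0800) still lands in the previous day's index.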

4.7.9 Test Logstash

[root@linux-host1 conf.d]# /usr/share/logstash/bin/logstash -f /etc/logstash/conf.d/redis-tomcat-es.conf

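4.7.15 Verify the TCP logs in Kibana

4.7.16 Exercise: following the process above, configure collection of the messages log

Kafka:

http://kafka.apache.org/downloads.html

4.8 Using Filebeat instead of Logstash to collect logs

Filebeat is a lightweight, single-purpose log shipper. It is meant for servers that do not have Java installed, and it can forward logs to Logstash, Elasticsearch, Redis, and other destinations for further processing.

Official download page: https://www.elastic.co/downloads/beats/filebeat

Official documentation: https://www.elastic.co/guide/en/beats/filebeat/current/filebeat-configuration-details.html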

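4.8.1 Confirm that the logs are in JSON format

First access the web server to generate some logs, then confirm that they are in JSON format, because the following steps depend on it:

[root@linux-host2 ~]# ab -n100 -c100 http://192.168.56.16:8080/web

4.8.2 Confirm the log format; the logs are used for statistics later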

[root@linux-host2 ~]# tail /usr/local/tomcat/logs/localhost_access_log.2017-04-28.txt

{"clientip":"192.168.56.15","ClientUser":"-","authenticated":"-","AccessTime":"[28/Apr/2017:21:16:46 +0800]","method":"GET /webdir/ HTTP/1.0","status":"200","SendBytes":"12","Query?string":"","partner":"-","AgentVersion":"ApacheBench/2.3"}

{"clientip":"192.168.56.15","ClientUser":"-","authenticated":"-","AccessTime":"[28/Apr/2017:21:16:46 +0800]","method":"GET /webdir/ HTTP/1.0","status":"200","SendBytes":"12","Query?string":"","partner":"-","AgentVersion":"ApacheBench/2.3"}
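Checking the format by eye works for a few lines; for a whole file, a short script can confirm that every line parses as a JSON object. The following is a sketch under the assumption that `clientip`, `status`, and `AgentVersion` are the fields needed later; `check_json_lines` is an illustrative name.

```python
import json

# Fields that later steps of this tutorial rely on (assumed minimum set).
REQUIRED_KEYS = {"clientip", "status", "AgentVersion"}


def check_json_lines(lines, required=REQUIRED_KEYS):
    """Return a list of (line_number, reason) for lines that are not usable."""
    problems = []
    for number, line in enumerate(lines, start=1):
        line = line.strip()
        if not line:
            continue  # blank lines are ignored
        try:
            record = json.loads(line)
        except json.JSONDecodeError as exc:
            problems.append((number, f"invalid JSON: {exc.msg}"))
            continue
        if not isinstance(record, dict):
            problems.append((number, "not a JSON object"))
            continue
        missing = required - set(record)
        if missing:
            problems.append((number, "missing keys: " + ", ".join(sorted(missing))))
    return problems
```

Passing an open file object, for example `check_json_lines(open(path, encoding="utf-8"))`, checks the log line by line; an empty result means every line is usable.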

4.8.3 Install and configure Filebeat

[root@linux-host2 ~]# systemctl stop logstash #stop the logstash service (if it is installed)

[root@linux-host2 src]# wget https://artifacts.elastic.co/downloads/beats/filebeat/filebeat-5.3.2-x86_64.rpm

[root@linux-host2 src]# yum install filebeat-5.3.2-x86_64.rpm -y

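4.8.4 Configure Filebeat to collect system logs

[root@linux-host2 ~]# cd /etc/filebeat/

[root@linux-host2 filebeat]# cp filebeat.yml filebeat.yml.bak #back up the original configuration file

4.8.4.1 Filebeat collects several system logs and writes them to a local file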

[root@linux-host2 ~]# grep -v "#" /etc/filebeat/filebeat.yml | grep -v "^$"

filebeat.prospectors:

- input_type: log

paths:

- /var/log/messages

- /var/log/*.log

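exclude_lines: ["^DBG","^$"] #lines matching these patterns are dropped

#include_lines: ["^ERR", "^WARN"] #only lines matching these patterns are collected

document_type: system-log-5612 #type; added as a tag to every log event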

output.file:

path: "/tmp"

filename: "filebeat.txt"
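The effect of `include_lines` and `exclude_lines` can be tried out before restarting Filebeat. The sketch below mimics the documented order (include first, then exclude) with Python's `re` module. Filebeat itself uses Go regular expressions, so treat this as an approximation that holds for simple patterns like the ones above; `keep_line` is an illustrative name.

```python
import re


def keep_line(line, include=None, exclude=None):
    """Return True if a log line would be shipped.

    include: if given, the line must match at least one pattern.
    exclude: if given, the line must match none of the patterns.
    """
    if include and not any(re.search(p, line) for p in include):
        return False
    if exclude and any(re.search(p, line) for p in exclude):
        return False
    return True
```

With `exclude=["^DBG", "^$"]`, lines starting with `DBG` and empty lines are dropped, and everything else is kept.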

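4.8.4.2 Start the Filebeat service and verify that the local file is written

[root@linux-host2 filebeat]# systemctl start filebeat

4.8.5 Filebeat collects a single log type and writes it to Redis

Filebeat can write data directly to a Redis server. This step writes to a single key in Redis. Filebeat can also write to Elasticsearch, Logstash, and other outputs.

4.8.5.1 Filebeat configuration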

[root@linux-host2 ~]# grep -v "#" /etc/filebeat/filebeat.yml | grep -v "^$"

filebeat.prospectors:

- input_type: log

paths:

- /var/log/messages

- /var/log/*.log

exclude_lines: ["^DBG","^$"]

document_type: system-log-5612

output.redis:

hosts: ["192.168.56.12:6379"]

key: "system-log-5612" #a custom key name is recommended to simplify later processing

db: 1 #which Redis database to use

timeout: 5 #timeout in seconds

password: 123456 #Redis password

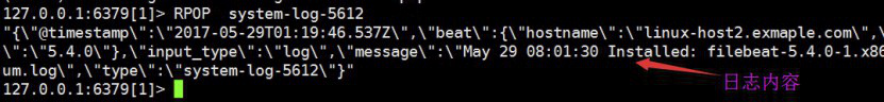

4.8.5.2 Verify that Redis contains data

4.8.5.3 View the log data in Redis

Make sure the selected db is the one Filebeat writes to.

4.8.5.4 Configure Logstash to read the logs above from Redis

[root@linux-host1 ~]# cat /etc/logstash/conf.d/redis-systemlog-es.conf

input {

redis {

host => "192.168.56.12"

port => "6379"

db => "1"

key => "system-log-5612"

data_type => "list"

password => "123456"

}

}

output {

if [type] == "system-log-5612" {

elasticsearch {

hosts => ["192.168.56.11:9200"]

index => "system-log-5612"

}}

}

[root@linux-host1 ~]# systemctl restart logstash #restart the logstash service

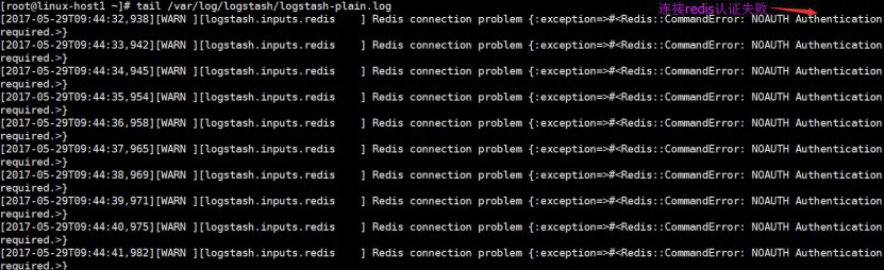

4.8.5.5 查看logstash服务日志

4.8.6 监控redis数据长度

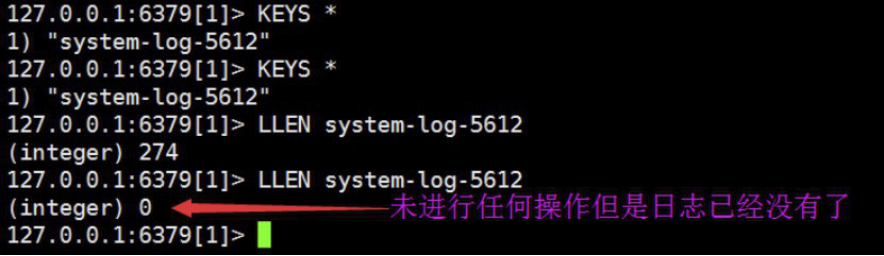

实际环境当中,可能会出现reids当中堆积了大量的数据而logstash由于种种原因未能及时提取日志,此时会导致redis服务器的内存被大量使用,甚至出现如下内存即将被使用完毕的情景:

查看reids中的日志队列长度发现有大量的日志堆积在redis 当中:

4.8.6.1 脚本内容

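#!/usr/bin/env python

#coding:utf-8

#Author Zhang jie

import redis


def redis_conn():
    # Connect to the Redis db that holds the Tomcat access-log list.
    pool = redis.ConnectionPool(host="192.168.56.12", port=6379, db=0, password="123456")
    conn = redis.Redis(connection_pool=pool)
    # LLEN returns the number of entries still waiting in the list.
    data = conn.llen('tomcat-accesslog-5612')
    print(data)


redis_conn()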
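For use in a monitoring system, the length usually has to be compared with a threshold. The sketch below takes the connection as a parameter, so it works with a redis-py client or with any object that has an `llen(key)` method. The threshold of 10000 and the names `queue_status` and `report` are assumptions chosen for illustration.

```python
def queue_status(conn, key, warn_at=10000):
    """Return (length, ok) for a Redis list.

    conn is any object with an llen(key) method, such as a redis-py client.
    warn_at is a hypothetical alert threshold; tune it to the available memory.
    """
    length = conn.llen(key)
    return length, length < warn_at


def report(conn, keys, warn_at=10000):
    """Build one status line per key, flagging queues at or above the threshold."""
    lines = []
    for key in keys:
        length, ok = queue_status(conn, key, warn_at)
        lines.append(f"{'OK' if ok else 'WARN'} {key} {length}")
    return lines
```

Each Redis db needs its own connection, so the keys passed to `report` should all live in the db that `conn` was opened with.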

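4.9 Log collection in practice

4.9.1 Architecture planning

Read the diagram below from left to right. A user who opens the ELK log platform first reaches two nginx+keepalived servers that provide load balancing and high availability. The access address is the keepalived IP, so access is unaffected if one nginx proxy goes down. Nginx forwards the request to Kibana, and Kibana fetches the data from Elasticsearch. Elasticsearch runs as a two-node cluster, and data may be stored on either node. The Redis server holds data temporarily: when the web servers produce more logs than can be stored in step, buffering them in Redis avoids log loss, and Redis itself can be a cluster. A Logstash server then keeps draining Redis and catches up during off-peak hours. A separate MySQL server persists selected data. Filebeat collects the web server logs and sends them to another Logstash instance, which writes them to Redis; that completes log collection. As the diagram shows, Redis sits in the middle of the pipeline, and both sides depend on it running normally. Logs from the web services are collected by Filebeat and written by the forwarding-layer Logstash into different Redis keys. The extraction-layer Logstash then reads from Redis and writes each type into its own Elasticsearch index. Finally, users view the collected logs in Kibana through the nginx proxy:

4.9.2 Filebeat collects logs and forwards them to Logstash

Official documentation: https://www.elastic.co/guide/en/beats/filebeat/current/logstash-output.html

So far only system logs are collected. Next, collect the Tomcat access log and the catalina.txt log written at startup, test multiline matching, and change the output to Logstash so that Logstash can choose the Redis key by log type. When one Filebeat instance collects several kinds of logs, for example system logs together with Tomcat access logs, each type has to be written to a different Redis key. As a first step, have Logstash listen on a port and write what it receives to a temporary output for testing. The configuration is:

4.9.2.1 Output test with Logstash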

[root@linux-host1 conf.d]# cat beats.conf

input {

beats {

port => 5044

}

}

#temporarily write the output to a file for testing

output {

file {

path => "/tmp/filebeat.txt"

}

}

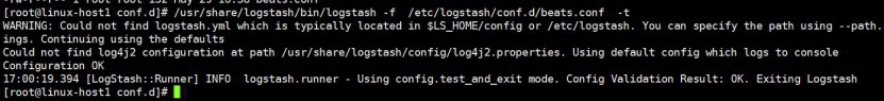

4.9.2.2 Test the syntax

[root@linux-host1 conf.d]# /usr/share/logstash/bin/logstash -f /etc/logstash/conf.d/beats.conf -t

4.9.2.3 Start Logstash

[root@linux-host1 conf.d]# ll

total 8

-rw-r--r-- 1 root root 139 May 29 17:39 beats.conf

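-rw-r--r-- 1 root root 319 May 29 16:16 redis-systemlog-es.conf #keep this config; it is used later to verify that Filebeat can send to several outputs at once, such as Redis and Logstash

[root@linux-host1 conf.d]# systemctl restart logstash #restart the service

4.9.2.4 Change the Filebeat configuration on the web server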

[root@linux-host2 ~]# grep -v "#" /etc/filebeat/filebeat.yml | grep -v "^$"

filebeat.prospectors:

- input_type: log

paths:

- /var/log/messages

- /var/log/*.log

exclude_lines: ["^DBG","^$"]

document_type: system-log-5612

output.redis:

hosts: ["192.168.56.12:6379"]

key: "system-log-5612"

db: 1

timeout: 5

password: 123456

output.logstash:

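hosts: ["192.168.56.11:5044"] #Logstash server address; more than one may be listed

enabled: true #whether output to Logstash is enabled; the default is true

worker: 1 #number of worker threads

compression_level: 3 #compression level

#loadbalance: true #enable load balancing when several hosts are listed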

4.9.2.5 Restart Filebeat

[root@linux-host2 ~]# systemctl restart filebeat

4.9.2.6 Manually append to the messages file

[root@linux-host2 filebeat]# echo "test" >> /var/log/messages

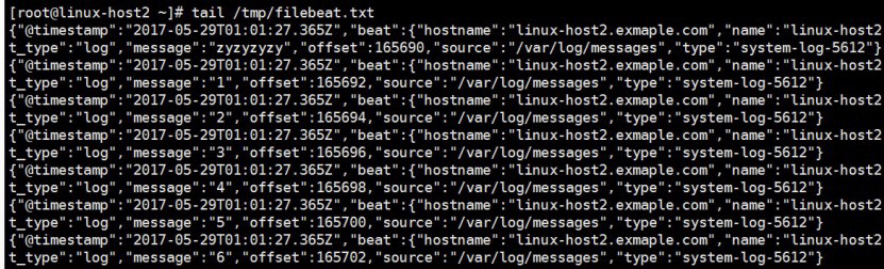

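4.9.2.7 On the Logstash server, verify that the output reaches the specified file

This confirms that Filebeat can send to several outputs at the same time.

4.9.3 Filebeat collects multiple types of log files

This time the Tomcat access log is collected as well, so both the server's system logs and the Tomcat access log are collected, each with its own log type, and everything is forwarded to Logstash for further processing:

4.9.3.1 Change the Filebeat configuration on the web server as follows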

[root@linux-host2 filebeat]# grep -v "#" /etc/filebeat/filebeat.yml | grep -v "^$"

filebeat.prospectors:

- input_type: log

paths:

- /var/log/messages

- /var/log/*.log

exclude_lines: ["^DBG","^$"]

document_type: system-log-5612

- input_type: log

paths:

- /usr/local/tomcat/logs/tomcat_access_log.*.log

document_type: tomcat-accesslog-5612

output.logstash:

hosts: ["192.168.56.11:5044","192.168.56.11:5045"] #multiple Logstash endpoints

enabled: true

worker: 1

compression_level: 3

loadbalance: true

4.9.3.2 Restart the Filebeat service

[root@linux-host2 ~]# systemctl restart filebeat

4.9.3.4 Change the Logstash service and add a second beats configuration

[root@linux-host1 conf.d]# cp beats.conf beats-5045.conf

[root@linux-host1 conf.d]# cat beats-5045.conf

input {

beats {

port => 5045 #listen on an additional port

codec => "json"

}

}

output {

file {

path => "/tmp/filebeat.txt"

}

}

4.9.3.5 Restart the Logstash service and verify the ports

[root@linux-host1 conf.d]# systemctl restart logstash

4.9.3.6 Access the Tomcat web page and append to the messages file

[root@linux-host2 filebeat]# echo "test" >> /var/log/messages

[root@linux-host2 filebeat]# ab -n10 -c5 http://192.168.56.12:8080/webdir/index.html

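4.9.3.8 Change the output of both beats configurations to Redis

The output sections are identical; only the input ports differ, 5044 in one file and 5045 in the other.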

[root@linux-host1 conf.d]# cat beats.conf

input {

beats {

port => 5044

codec => "json"

}

}

output {

if [type] == "system-log-5612" {

redis {

host => "192.168.56.12"

port => "6379"

db => "1"

key => "system-log-5612"

data_type => "list"

password => "123456"

}}

if [type] == "tomcat-accesslog-5612" {

redis {

host => "192.168.56.12"

port => "6379"

db => "0"

key => "tomcat-accesslog-5612"

data_type => "list"

password => "123456"

}}

}

[root@linux-host1 conf.d]# cat beats-5045.conf

input {

beats {

port => 5045

codec => "json"

}

}

output {

if [type] == "system-log-5612" {

redis {

host => "192.168.56.12"

port => "6379"

db => "1"

key => "system-log-5612"

data_type => "list"

password => "123456"

}}

if [type] == "tomcat-accesslog-5612" {

redis {

host => "192.168.56.12"

port => "6379"

db => "0"

key => "tomcat-accesslog-5612"

data_type => "list"

password => "123456"

}}

}
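The two `if [type] == ...` branches above amount to a lookup from event type to a Redis db and key. The sketch below restates that mapping in Python to make the routing explicit. `ROUTES` and `route` are illustrative names; the db numbers and keys are the ones from the configuration above.

```python
# type -> Redis target, copied from the two output branches.
ROUTES = {
    "system-log-5612": {"db": 1, "key": "system-log-5612"},
    "tomcat-accesslog-5612": {"db": 0, "key": "tomcat-accesslog-5612"},
}


def route(event):
    """Return (db, key) for an event, or None if no branch matches its type."""
    target = ROUTES.get(event.get("type"))
    if target is None:
        return None  # with no else branch, such events are written nowhere
    return target["db"], target["key"]
```

An event whose type matches neither branch is not written to Redis at all, which is worth remembering when a new `document_type` is added in Filebeat.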

4.9.3.9 Generate logs again

[root@linux-host2 filebeat]# echo "test1" >> /var/log/messages

[root@linux-host2 filebeat]# echo "test2" >> /var/log/messages

[root@linux-host2 filebeat]# ab -n10 -c5 http://192.168.56.12:8080/webdir/index.html

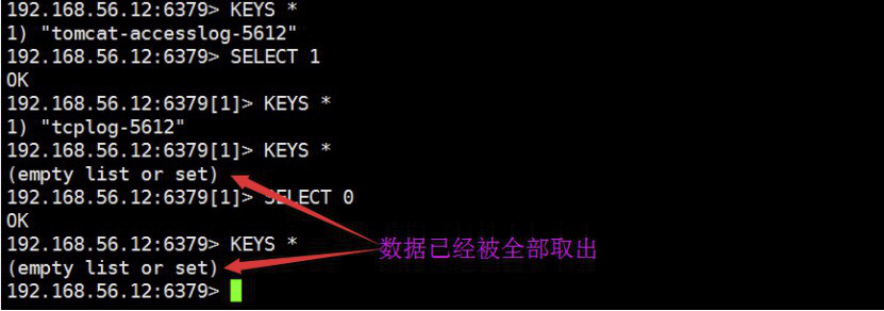

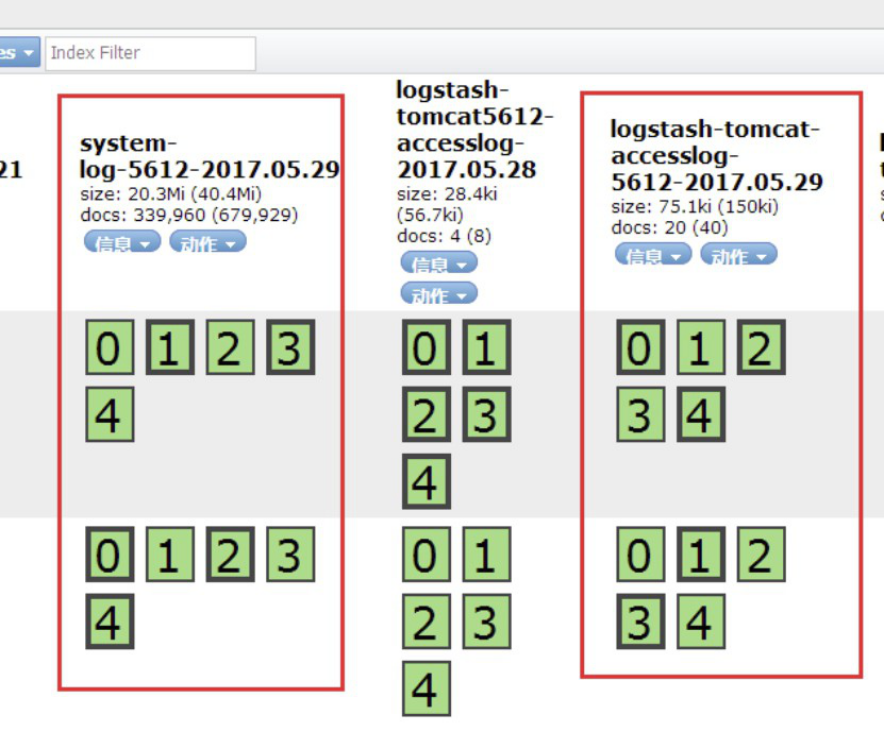

4.9.3.10 Verify that Redis contains the logs:

4.9.3.11 Configure Logstash to read logs from Redis and write them to Elasticsearch

[root@linux-host2 conf.d]# cat redis-es.conf

input {

redis {

host => "192.168.56.12"

port => "6379"

db => "1"

key => "system-log-5612"

data_type => "list"

password => "123456"

}

redis {

host => "192.168.56.12"

port => "6379"

db => "0"

key => "tomcat-accesslog-5612"

data_type => "list"

password => "123456"

codec => "json" #decode JSON-formatted log entries

}

}

output {

if [type] == "system-log-5612" {

elasticsearch {

hosts => ["192.168.56.12:9200"]

index => "logstash-system-log-5612-%{+YYYY.MM.dd}"

}}

if [type] == "tomcat-accesslog-5612" {

elasticsearch {

hosts => ["192.168.56.12:9200"]

index => "logstash-tomcat-accesslog-5612-%{+YYYY.MM.dd}"

}}

}

4.9.3.12 Validate the configuration file and restart the service

[root@linux-host2 conf.d]# /usr/share/logstash/bin/logstash -f /etc/logstash/conf.d/redis-es.conf -t

[root@linux-host2 conf.d]# systemctl restart logstash

4.9.3.13 验证redis数据是否消失或减少

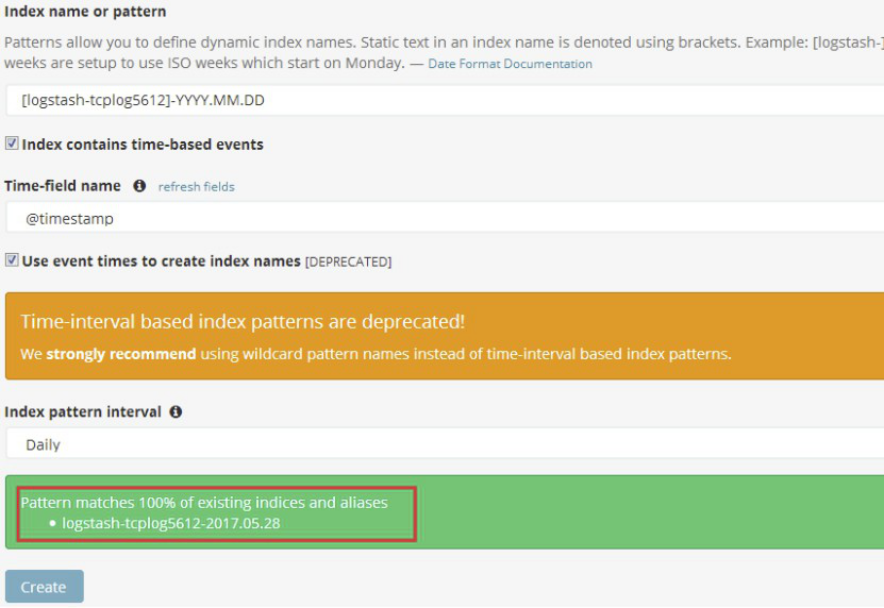

4.9.3.15 在kibana界面删除之前创建全部索引,再分别添加新的索引

添加系统日志索引:

添加tomcat访问日志索引:

4.9.3.17 kibana界面验证系统日志

4.9.4 通过haproxy代理kibana

Host2已经安装过haproxy,因此直接配置host2的haproxy即可并安装一个kibana即可:

4.9.4.1 安装配置并启动kibana

[root@linux-host2 src]# rpm -ivh kibana-5.3.0-x86_64.rpm

[root@linux-host2 src]# grep "^[a-zA-Z]" /etc/kibana/kibana.yml

server.port: 5601

server.host: "127.0.0.1"

elasticsearch.url: "http://192.168.56.12:9200"

[root@linux-host2 src]# systemctl start kibana

[root@linux-host2 src]# systemctl enable kibana

4.9.4.2 Edit the HAProxy configuration file

[root@linux-host2 ~]# cat /etc/haproxy/haproxy.cfg

global

maxconn 100000

chroot /usr/local/haproxy

uid 99

gid 99

daemon

nbproc 1

pidfile /usr/local/haproxy/run/haproxy.pid

log 127.0.0.1 local6 info

defaults

option http-keep-alive

option forwardfor

maxconn 100000

mode http

timeout connect 300000ms

timeout client 300000ms

timeout server 300000ms

listen stats

mode http

bind 0.0.0.0:9999

stats enable

log global

stats uri /haproxy-status

stats auth haadmin:q1w2e3r4ys

#frontend web_port

frontend web_port

bind 0.0.0.0:80

mode http

option httplog

log global

option forwardfor

###################ACL Setting##########################

acl pc hdr_dom(host) -i www.elk.com

acl mobile hdr_dom(host) -i m.elk.com

acl kibana hdr_dom(host) -i www.kibana5612.com

###################USE ACL##############################

use_backend pc_host if pc

use_backend mobile_host if mobile

use_backend kibana_host if kibana

########################################################

backend pc_host

mode http

option httplog

balance source

server web1 192.168.56.11:80 check inter 2000 rise 3 fall 2 weight 1

backend mobile_host

mode http

option httplog

balance source

server web1 192.168.56.11:80 check inter 2000 rise 3 fall 2 weight 1

backend kibana_host

mode http

option httplog

balance source

server web1 127.0.0.1:5601 check inter 2000 rise 3 fall 2 weight 1

4.9.4.3 Reload HAProxy

[root@linux-host2 ~]# systemctl reload haproxy

4.9.4.4 Add local name resolution

C:\Windows\System32\drivers\etc

192.168.56.11 www.kibana5611.com

192.168.56.12 www.kibana5612.com

4.9.4.5 浏览器访问域名

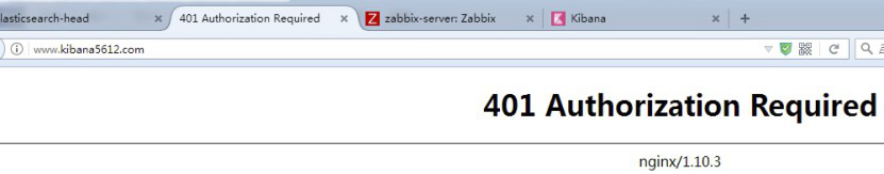

4.9.5 通过nginx代理kibana并实现登陆认证

将nginx 作为反向代理服务器,并增加登录用户认证的目的,可以有效避免其他人员随意访问kibana页面。

4.9.5.1 关闭上一步骤的haproxy,然后安装nginx

[root@linux-host2 src]# systemctl disable haproxy

[root@linux-host2 src]# systemctl disable haproxy

[root@linux-host2 src]# tar xf nginx-1.10.3.tar.gz

[root@linux-host2 nginx-1.10.3]# ./configure --prefix=/usr/local/nginx

[root@linux-host2 nginx-1.10.3]# make && make install

4.9.5.2 Prepare the systemd unit file

[root@linux-host2 nginx-1.10.3]# vim /usr/lib/systemd/system/nginx.service

[Unit]

Description=The nginx HTTP and reverse proxy server

After=network.target remote-fs.target nss-lookup.target

[Service]

Type=forking

#must match the pid path in the nginx configuration file

PIDFile=/run/nginx.pid

ExecStartPre=/usr/bin/rm -f /run/nginx.pid

ExecStartPre=/usr/sbin/nginx -t

ExecStart=/usr/sbin/nginx

ExecReload=/bin/kill -s HUP $MAINPID

KillSignal=SIGQUIT

TimeoutStopSec=5

KillMode=process

PrivateTmp=true

[Install]

WantedBy=multi-user.target

4.9.5.3 Configure and start Nginx

[root@linux-host2 nginx-1.10.3]# ln -sv /usr/local/nginx/sbin/nginx /usr/sbin/

[root@linux-host2 nginx-1.10.3]# useradd www -u 2000

[root@linux-host2 nginx-1.10.3]# chown www.www /usr/local/nginx/ -R

[root@linux-host2 nginx-1.10.3]# vim /usr/local/nginx/conf/nginx.conf

user www www;

worker_processes 1;

pid /run/nginx.pid; #the pid file path must match the one in the unit file

[root@linux-host2 nginx-1.10.3]# systemctl start nginx

[root@linux-host2 nginx-1.10.3]# systemctl enable nginx #question: can an unprivileged user start nginx?

Created symlink from /etc/systemd/system/multi-user.target.wants/nginx.service to /usr/lib/systemd/system/nginx.service.

4.9.5.4 Access the Nginx server

4.9.5.5 Configure Nginx to proxy Kibana

[root@linux-host2 conf]# mkdir /usr/local/nginx/conf/conf.d/

[root@linux-host2 conf]# vim /usr/local/nginx/conf/nginx.conf

include /usr/local/nginx/conf/conf.d/*.conf;

[root@linux-host2 conf]# vim /usr/local/nginx/conf/conf.d/kibana5612.conf

upstream kibana_server {

server 127.0.0.1:5601 weight=1 max_fails=3 fail_timeout=60;

}

server {

listen 80;

server_name www.kibana5612.com;

location / {

proxy_pass http://kibana_server;

proxy_http_version 1.1;

proxy_set_header Upgrade $http_upgrade;

proxy_set_header Connection 'upgrade';

proxy_set_header Host $host;

proxy_cache_bypass $http_upgrade;

}

}

4.9.5.6 Restart Nginx

[root@linux-host2 conf]# chown www.www /usr/local/nginx/ -R

[root@linux-host2 conf]# systemctl restart nginx

4.9.5.7 Verify access

[root@linux-host2 conf]# ab -n100 -c10 http://192.168.56.12:8080/webdir/index.html

4.9.5.8 Implement login authentication

[root@linux-host2 conf]# yum install httpd-tools -y

[root@linux-host2 conf]# htpasswd -bc /usr/local/nginx/conf/htpasswd.users zhangjie 123456

Adding password for user zhangjie

[root@linux-host2 conf]# htpasswd -b /usr/local/nginx/conf/htpasswd.users zhangtao 123456

Adding password for user zhangtao

[root@linux-host2 conf]# cat /usr/local/nginx/conf/htpasswd.users

zhangjie:$apr1$x7K2F2rr$xq8tIKg3JcOUyOzSVuBpz1

zhangtao:$apr1$vBg99m3i$hV/ayYIsDTm950tonXEJ11

[root@linux-host2 conf]# vim /usr/local/nginx/conf/conf.d/kibana5612.conf

upstream kibana_server {

server 127.0.0.1:5601 weight=1 max_fails=3 fail_timeout=60;

}

server {

listen 80;

server_name www.kibana5612.com;

auth_basic "Restricted Access";

auth_basic_user_file /usr/local/nginx/conf/htpasswd.users;

location / {

proxy_pass http://kibana_server;

proxy_http_version 1.1;

proxy_set_header Upgrade $http_upgrade;

proxy_set_header Connection 'upgrade';

proxy_set_header Host $host;

proxy_cache_bypass $http_upgrade;

}

}

[root@linux-host2 conf]# chown www.www /usr/local/nginx/ -R

[root@linux-host2 conf]# systemctl reload nginx
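With `auth_basic` enabled, each request has to carry an `Authorization` header containing the Base64 form of `user:password`. Browsers build it from the login prompt. For scripted checks it can be built by hand, as in this minimal sketch; `basic_auth_header` is an illustrative name.

```python
import base64


def basic_auth_header(user, password):
    """Return the HTTP Basic authentication header for the given credentials."""
    raw = f"{user}:{password}".encode("utf-8")
    token = base64.b64encode(raw).decode("ascii")
    return {"Authorization": f"Basic {token}"}
```

The resulting dictionary can be passed as `headers=` to `urllib.request.Request` when requesting the proxied Kibana URL. A request sent without it should be answered with HTTP 401. Basic authentication only encodes the password and does not encrypt it, so it is best combined with HTTPS.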

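4.9.5.9 Verify login

Reopen the domain served by Nginx in a browser; a password is now required to log in.

4.9.5.10 Login fails without a password

Clicking Cancel in the prompt returns a message that authentication is required.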

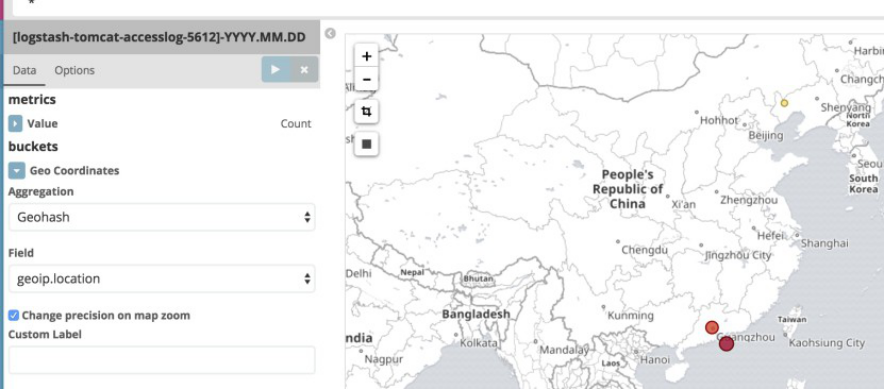

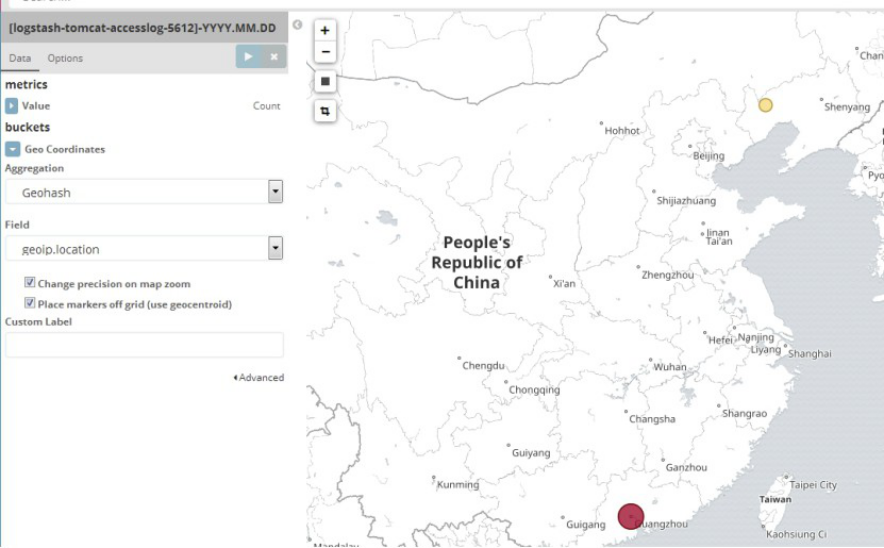

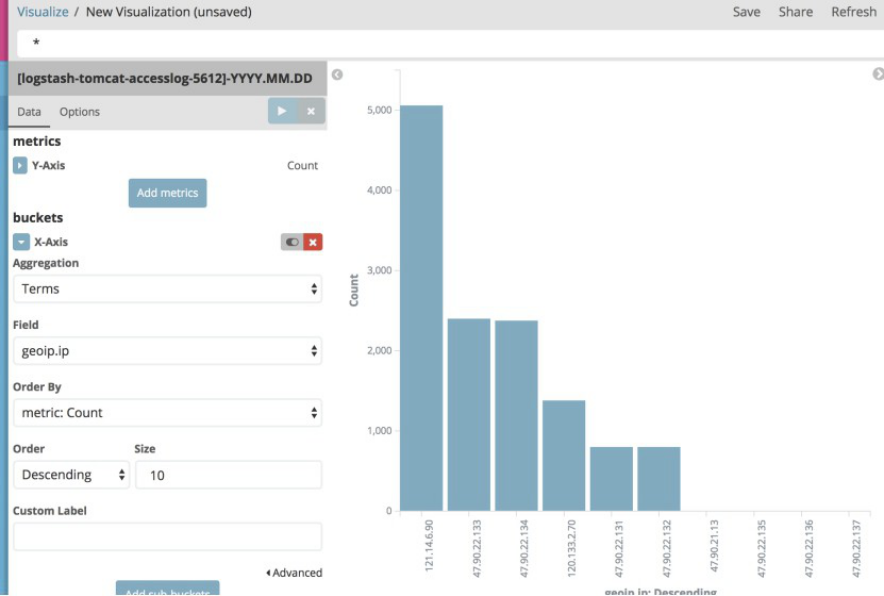

4.10 Mapping client IPs to cities

4.10.1 Download and extract the GeoIP database file

Logstash 2 used http://geolite.maxmind.com/download/geoip/database/GeoLiteCity.dat.gz, but Logstash 5 switched to http://geolite.maxmind.com/download/geoip/database/GeoLite2-City.tar.gz, so version 5 and version 2 use different database files:

[root@linux-host2 ~]# cd /etc/logstash/

[root@linux-host2 logstash]# wget http://geolite.maxmind.com/download/geoip/database/GeoLite2-City.tar.gz

[root@linux-host2 logstash]# gunzip GeoLite2-City.tar.gz

[root@linux-host2 logstash]# tar xf GeoLite2-City.tar

4.10.2 Configure Logstash to use the GeoIP database

[root@linux-host2 logstash]# cat conf.d/redis-es.conf

input {

redis {

host => "192.168.56.12"

port => "6379"

db => "1"

key => "system-log-5612"

data_type => "list"

password => "123456"

}

redis {

host => "192.168.56.12"

port => "6379"

db => "0"

key => "tomcat-accesslog-5612"

data_type => "list"

password => "123456"

codec => "json"

}

}

filter {

if [type] == "tomcat-accesslog-5612" {

geoip {

source => "clientip"

target => "geoip"

database => "/etc/logstash/GeoLite2-City_20170502/GeoLite2-City.mmdb"

add_field => [ "[geoip][coordinates]", "%{[geoip][longitude]}" ]

add_field => [ "[geoip][coordinates]", "%{[geoip][latitude]}" ]

}

mutate {

convert => [ "[geoip][coordinates]", "float"]

}

}

}

output {

if [type] == "system-log-5612" {

elasticsearch {

hosts => ["192.168.56.12:9200"]

index => "logstash-system-log-5612-%{+YYYY.MM.dd}"

}}

if [type] == "tomcat-accesslog-5612" {

elasticsearch {

hosts => ["192.168.56.12:9200"]

index => "logstash-tomcat-accesslog-5612-%{+YYYY.MM.dd}"

}

# jdbc {

# connection_string => "jdbc:mysql://192.168.56.11/elk?user=elk&password=123456&useUnicode=true&characterEncoding=UTF8"

# statement => ["INSERT INTO elklog(host,clientip,status,AgentVersion) VALUES(?,?,?,?)", "host","clientip","status","AgentVersion"]

# }

}

}
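Kibana's map visualization needs a geo_point field, and Elasticsearch accepts a geo_point given as an array in `[longitude, latitude]` order. The geoip filter adds `longitude` and `latitude` fields under its target. The sketch below shows how such a coordinate pair could be derived from them; `coordinates` is an illustrative helper, not part of Logstash.

```python
def coordinates(geoip):
    """Return [longitude, latitude] as floats, or None if a value is unusable."""
    try:
        return [float(geoip["longitude"]), float(geoip["latitude"])]
    except (KeyError, TypeError, ValueError):
        return None
```

Note the order: longitude comes first in the array form, the opposite of the usual spoken "lat, lon" order. Private addresses such as 192.168.56.15 are not in the database, so test data needs public client IPs to produce points on the map.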

4.10.3 Restart the Logstash service and write log data

[root@linux-host2 logstash]# systemctl restart logstash

[root@linux-host2 logs]# cat tets.log >> tomcat_access_log.2017-05-30.log

4.10.4 验证kibana界面是否可以看到地图数据

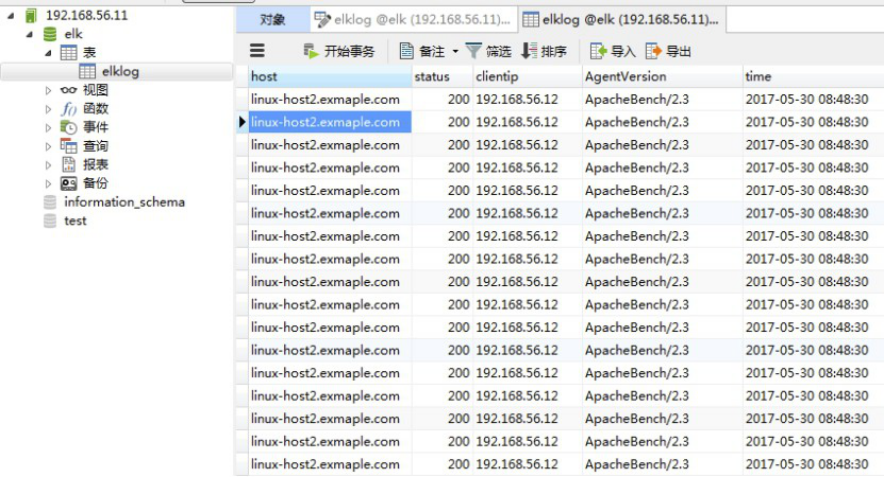

4.11 日志写入数据库

写入数据库的目的是用于持久化保存重要数据,比如状态码、客户端IP、客户端浏览器版本等等,用于后期按月做数据统计等。

4.11.1 安装MySQL数据库

[root@linux-host1 src]# tar xvf mysql-5.6.34-onekey-install.tar.gz

[root@linux-host1 src]# ./mysql-install.sh

[root@linux-host1 src]# /usr/local/mysql/bin/mysql_secure_installation

4.11.2 Grant the user login privileges

[root@linux-host1 src]# ln -s /var/lib/mysql/mysql.sock /tmp/mysql.sock

mysql> create database elk character set utf8 collate utf8_bin;

Query OK, 1 row affected (0.00 sec)

mysql> grant all privileges on elk.* to elk@"%" identified by '123456';

Query OK, 0 rows affected (0.00 sec)

mysql> flush privileges;

Query OK, 0 rows affected (0.00 sec)

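4.11.3 Test that the user can log in remotely

4.11.4 Configure the mysql-connector-java package for Logstash

MySQL Connector/J is the official MySQL JDBC driver. JDBC (Java Database Connectivity) is a Java API for executing SQL statements. It gives uniform access to many relational databases and consists of a set of classes and interfaces written in Java.

Official download page: https://dev.mysql.com/downloads/connector/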

[root@linux-host1 src]# mkdir -pv /usr/share/logstash/vendor/jar/jdbc

[root@linux-host1 src]# cp mysql-connector-java-5.1.42-bin.jar /usr/share/logstash/vendor/jar/jdbc/

[root@linux-host1 src]# chown logstash.logstash /usr/share/logstash/vendor/jar/ -R

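4.11.5 Change the gem source

The upstream gem source is slow and unreliable from within China and installs often fail, so for some time many people used Taobao's mirror, https://ruby.taobao.org/. That mirror still works but is no longer maintained, and its own announcement recommends https://gems.ruby-china.org instead.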

[root@linux-host1 src]# yum install gem

[root@linux-host1 src]# gem sources --add https://gems.ruby-china.org/ --remove https://rubygems.org/

https://gems.ruby-china.org/ added to sources

https://rubygems.org/ removed from sources

[root@linux-host1 src]# gem source list

* CURRENT SOURCES *

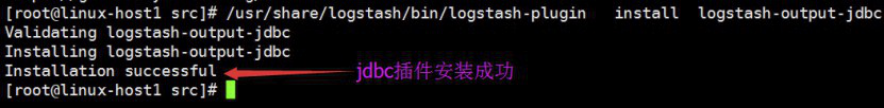

4.11.6 Install and configure the plugin

[root@linux-host1 src]# /usr/share/logstash/bin/logstash-plugin list #list all currently installed plugins

[root@linux-host1 src]# /usr/share/logstash/bin/logstash-plugin install logstash-output-jdbc

4.11.7 Connect to the database and create the table

Set the default value of the time column to CURRENT_TIMESTAMP.
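The table layout is not listed here, so the following is only a sketch of what a matching table could look like. It uses Python's built-in `sqlite3` as a stand-in for MySQL so that it runs anywhere. The column names come from the INSERT statement used in this section, while the column types and the helper names `make_db` and `insert_event` are assumptions.

```python
import sqlite3


def make_db():
    """Create an in-memory database with an elklog table (assumed layout)."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        """
        CREATE TABLE elklog (
            host         TEXT,
            clientip     TEXT,
            status       TEXT,
            AgentVersion TEXT,
            time         TIMESTAMP DEFAULT CURRENT_TIMESTAMP
        )
        """
    )
    return conn


def insert_event(conn, event):
    """Insert the four logged fields; the time column is filled by its default."""
    conn.execute(
        "INSERT INTO elklog(host,clientip,status,AgentVersion) VALUES(?,?,?,?)",
        (event.get("host"), event.get("clientip"),
         event.get("status"), event.get("AgentVersion")),
    )
    conn.commit()
```

Because the INSERT names only four columns, the database fills `time` itself, which is why the default value matters.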

4.11.9 Configure Logstash to write logs to the database

[root@linux-host2 ~]# cat /etc/logstash/conf.d/redis-es.conf

input {

redis {

host => "192.168.56.12"

port => "6379"

db => "1"

key => "system-log-5612"

data_type => "list"

password => "123456"

}

redis {

host => "192.168.56.12"

port => "6379"

db => "0"

key => "tomcat-accesslog-5612"

data_type => "list"

password => "123456"

codec => "json"

}

}

output {

if [type] == "system-log-5612" {

elasticsearch {

hosts => ["192.168.56.12:9200"]

index => "logstash-system-log-5612-%{+YYYY.MM.dd}"

}}

if [type] == "tomcat-accesslog-5612" {

elasticsearch {

hosts => ["192.168.56.12:9200"]

index => "logstash-tomcat-accesslog-5612-%{+YYYY.MM.dd}"

}

jdbc {

connection_string => "jdbc:mysql://192.168.56.11/elk?user=elk&password=123456&useUnicode=true&characterEncoding=UTF8"

statement => ["INSERT INTO elklog(host,clientip,status,AgentVersion) VALUES(?,?,?,?)", "host","clientip","status","AgentVersion"]

}}

}

4.11.10 Check the Logstash configuration and restart the service

[root@linux-host2 ~]# /usr/share/logstash/bin/logstash -f /etc/logstash/conf.d/redis-es.conf -t

[root@linux-host2 ~]# systemctl restart logstash

4.11.11 Access Tomcat to generate logs

[root@linux-host2 ~]# ab -n10 -c5 http://192.168.56.12:8080/webdir/index.html

4.11.12 Verify that the data was written to the database

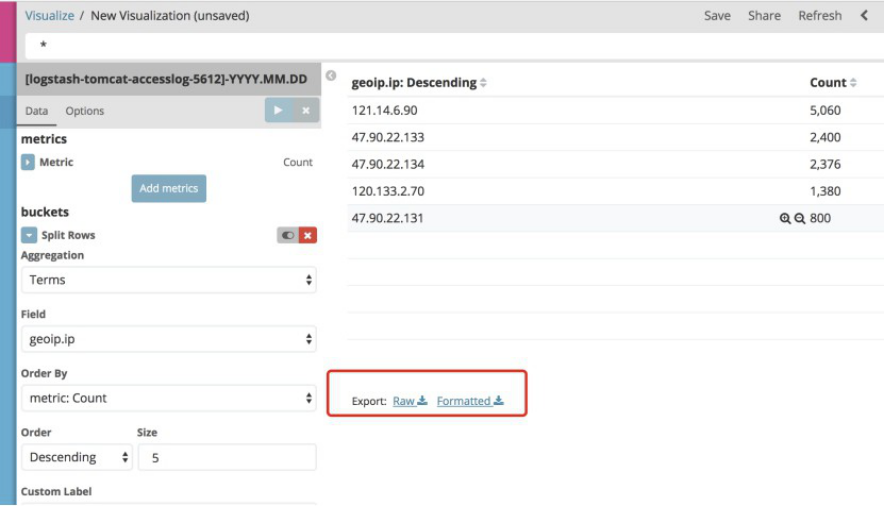

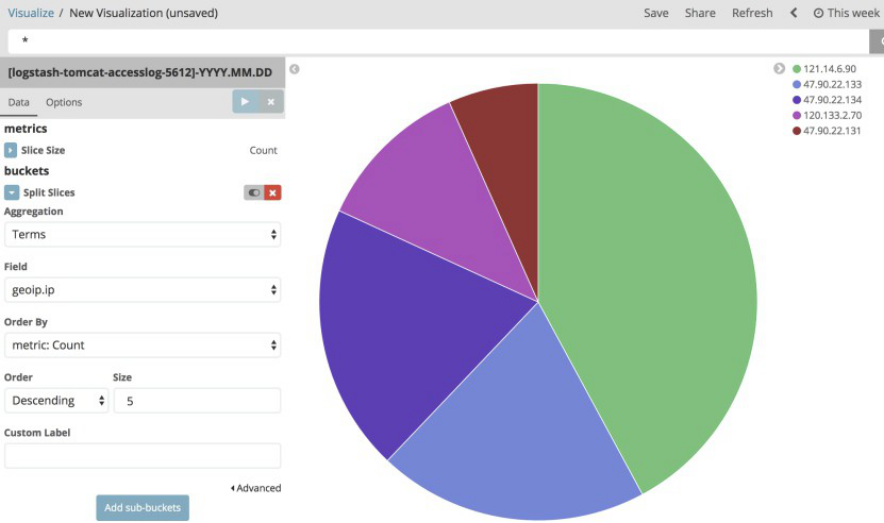

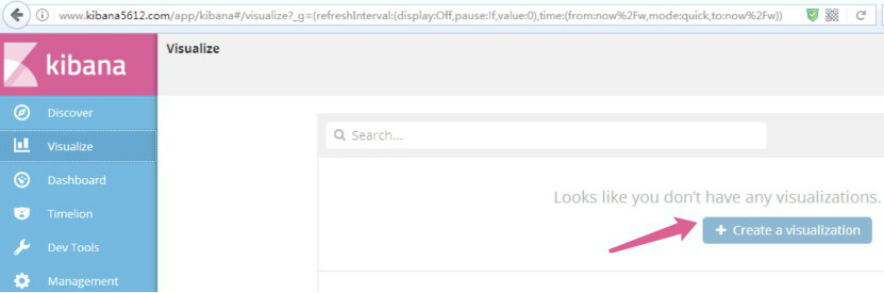

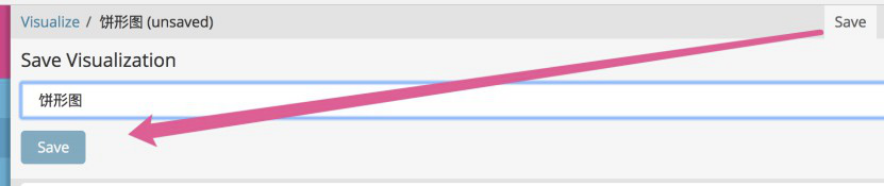

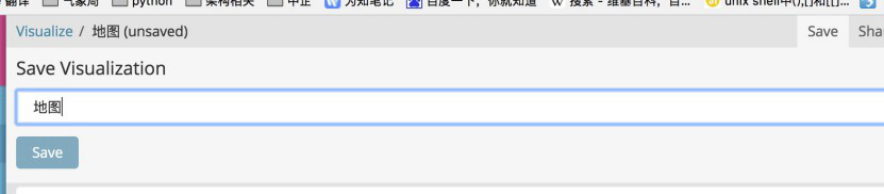

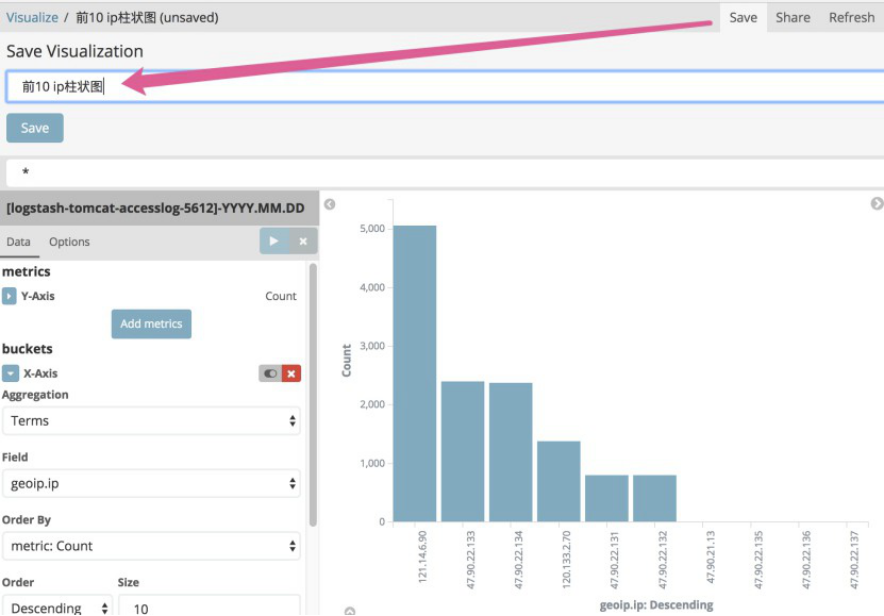

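4.12 Kibana visualization features in detail

Kibana supports many chart types for visualization, which requires the logs to be in JSON format. Details are as follows: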

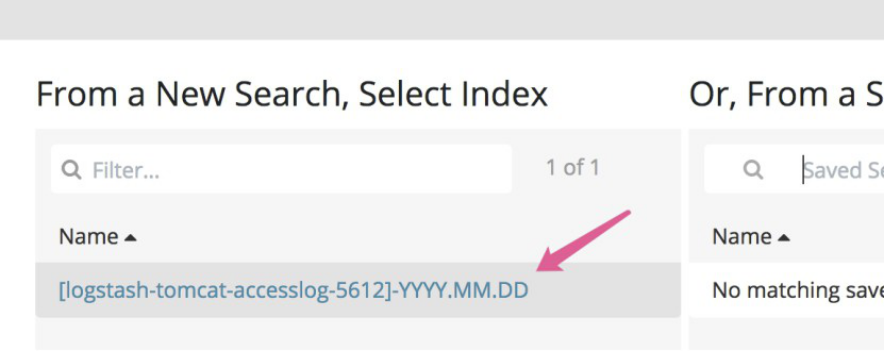

4.12.1 Area chart

Select the name of the index to analyze:

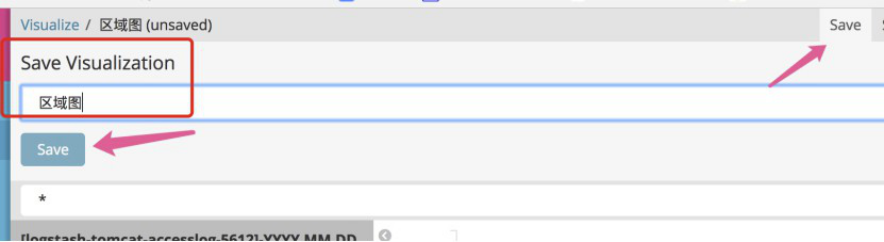

Save the query so that it can be run again with one click:

The next time you open Visualize, you can select the saved name directly to run the query:

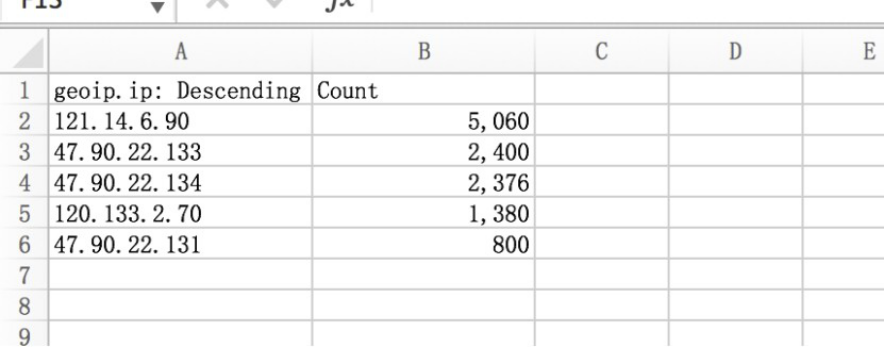

The results can be exported and viewed in Excel:

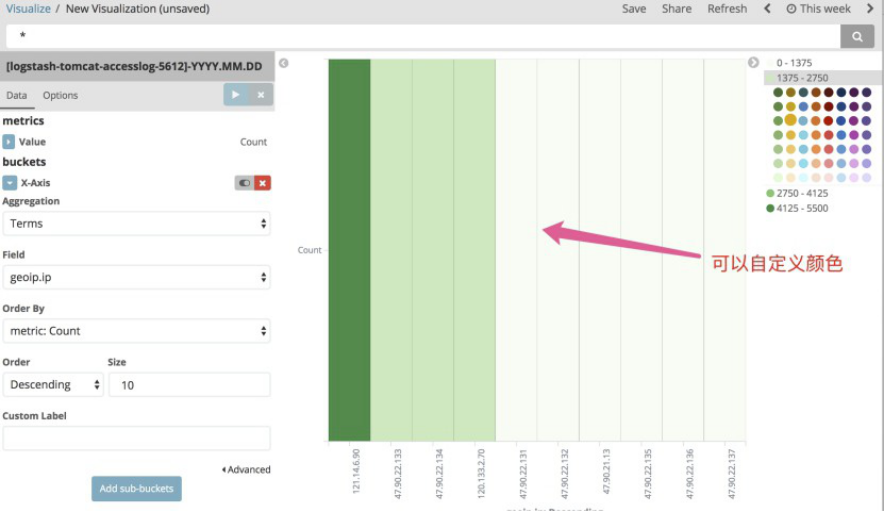

4.12.3 Heatmap chart

The colors can be customized.

4.12.4 Line chart

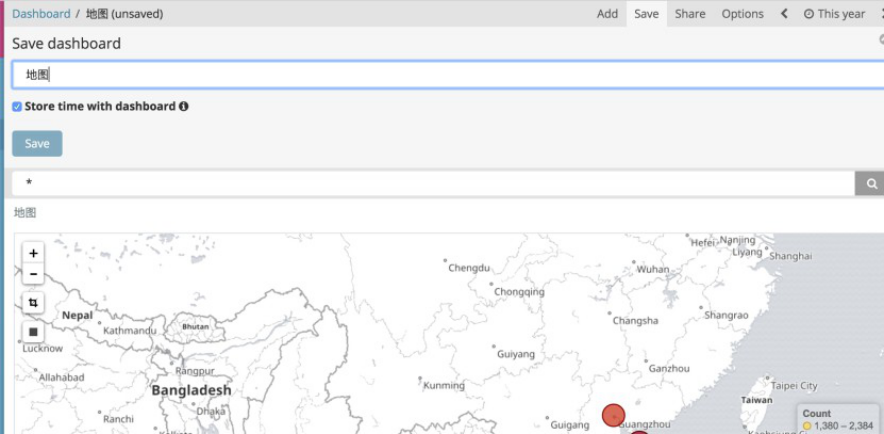

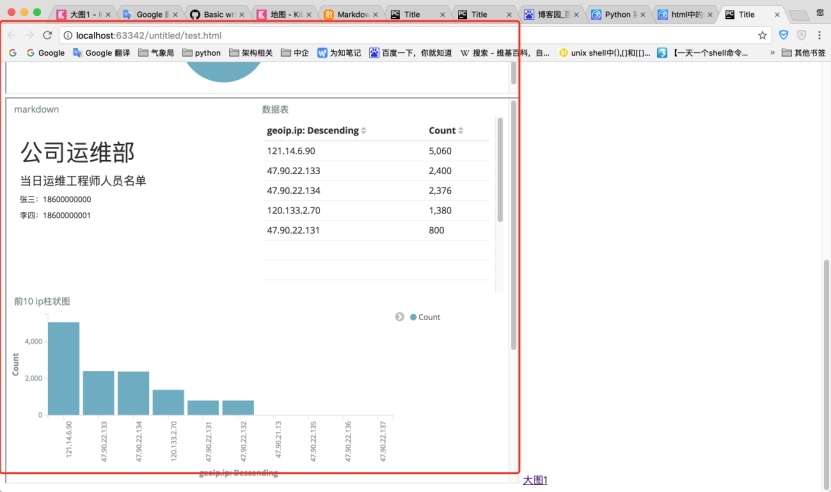

4.13 Add a dashboard

4.13.1 Add the previously saved search results as the first panel

Add the saved search results:

Then add a map:

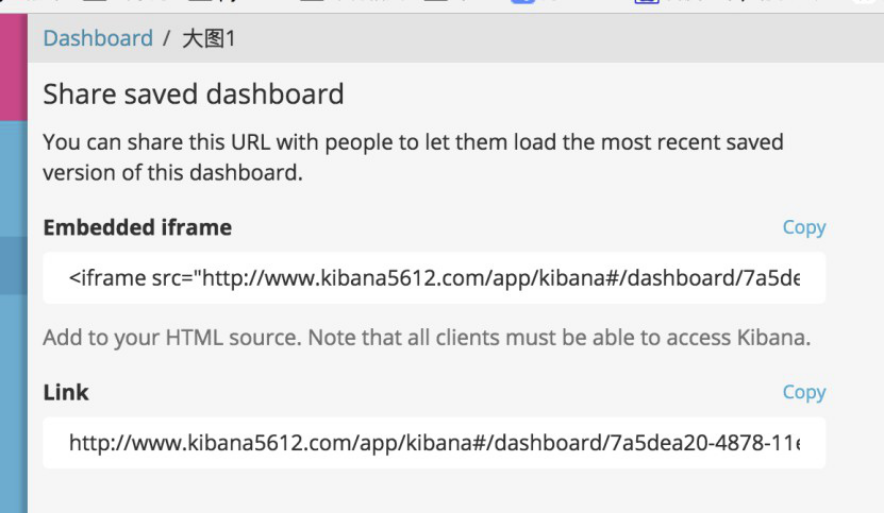

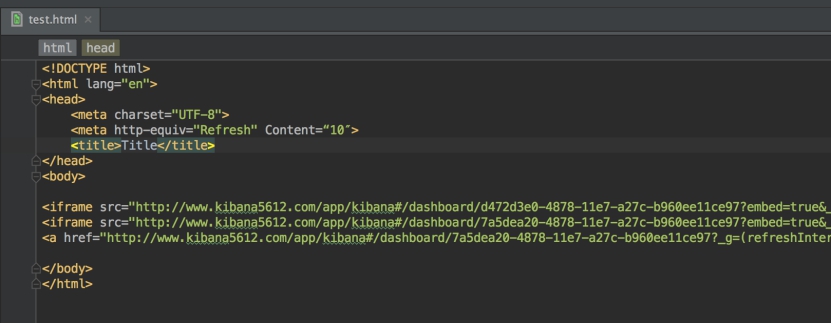

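4.13.4 Write an HTML page

Open the page in a browser:

Extension exercise

Following the planning diagram below, design and build a log collection system that fits your actual requirements. Provide detailed implementation documentation or a blog post, set up Zabbix monitoring, and monitor Elasticsearch and Redis with alerting configured.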