Spark Basics Lab 5: Introductory Spark SQL Programming

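I. Objectives

(1) Learn the basic programming methods of Spark SQL through hands-on practice;

(2) Become familiar with converting an RDD into a DataFrame;

(3) Become familiar with using Spark SQL to manage data from different data sources.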

II. Lab Platform

Operating system: Ubuntu 16.04

Spark version: 2.1.0

Database: MySQL

III. Lab Content and Requirements

1. Basic Spark SQL Operations

Copy the following JSON data into the Linux system and save it as employee.json.

```json
{ "id":1 , "name":" Ella" , "age":36 }
{ "id":2, "name":"Bob","age":29 }
{ "id":3 , "name":"Jack","age":29 }
{ "id":4 , "name":"Jim","age":28 }
{ "id":4 , "name":"Jim","age":28 }
{ "id":5 , "name":"Damon" }
{ "id":5 , "name":"Damon" }
```

Create a DataFrame from employee.json and write Scala statements to perform the following operations:

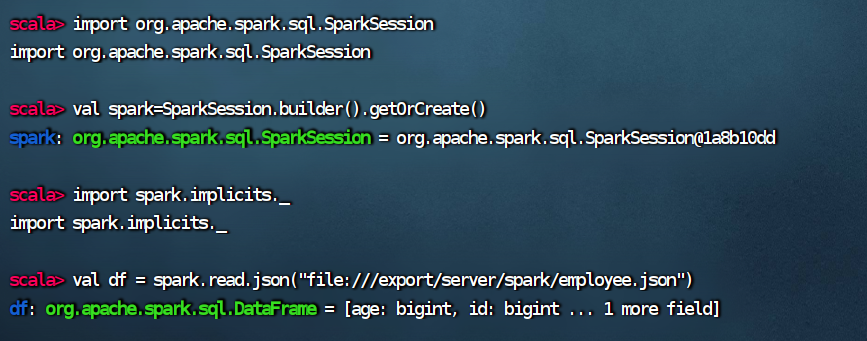

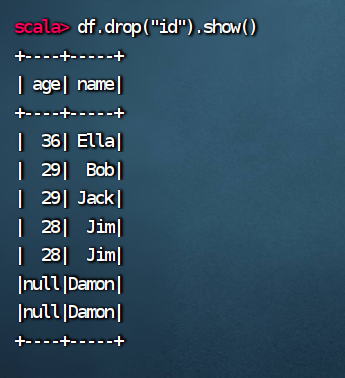

```scala
scala> import org.apache.spark.sql.SparkSession
import org.apache.spark.sql.SparkSession

scala> val spark=SparkSession.builder().getOrCreate()
spark: org.apache.spark.sql.SparkSession = org.apache.spark.sql.SparkSession@1a8b10dd

scala> import spark.implicits._
import spark.implicits._

scala> val df = spark.read.json("file:///export/server/spark/employee.json")
df: org.apache.spark.sql.DataFrame = [age: bigint, id: bigint ... 1 more field]
```

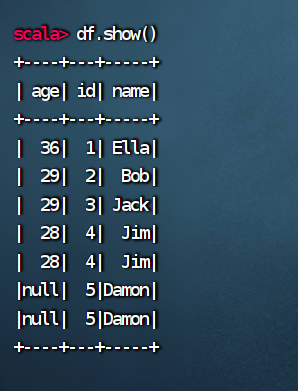

(1) Query all records;

(2) Query all records and remove duplicates;

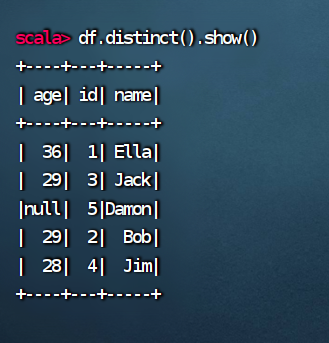
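Tasks (1) and (2) appear only as screenshots. A minimal sketch of equivalent statements follows, assuming the file path from the transcript above and a local master; the object and method names are hypothetical, chosen for illustration. `distinct()` compares entire rows, so only rows identical in every column are merged.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}

// Hypothetical helper object for tasks (1) and (2)
object ShowAllAndDistinct {
  // Task (1): all records, duplicates included
  def allRecords(df: DataFrame): DataFrame = df

  // Task (2): keep a single copy of rows that are identical in every column
  def distinctRecords(df: DataFrame): DataFrame = df.distinct()

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .appName("ShowAllAndDistinct")
      .master("local[*]") // assumption: local mode for a quick run
      .getOrCreate()
    // Path copied from the transcript above; adjust to your machine
    val df = spark.read.json("file:///export/server/spark/employee.json")
    allRecords(df).show()
    distinctRecords(df).show()
    spark.stop()
  }
}
```

With the sample file, the duplicated Jim and Damon lines should each collapse to one row in the output of (2).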

(3) Query all records, omitting the id field when printing;

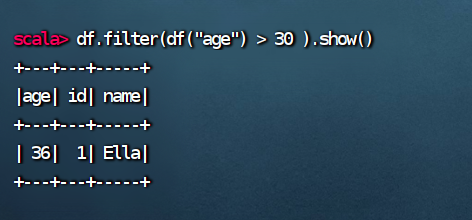
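A minimal sketch for task (3), assuming the same file path; the object name is hypothetical. `drop("id")` returns a new DataFrame without that column (and is a no-op if the column does not exist), so the original `df` is unchanged.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}

// Hypothetical helper object for task (3)
object DropIdColumn {
  // Task (3): all records with the "id" column removed; other columns keep their order
  def withoutId(df: DataFrame): DataFrame = df.drop("id")

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .appName("DropIdColumn")
      .master("local[*]") // assumption: local mode
      .getOrCreate()
    val df = spark.read.json("file:///export/server/spark/employee.json")
    withoutId(df).show()
    spark.stop()
  }
}
```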

(4) Select the records with age > 30;

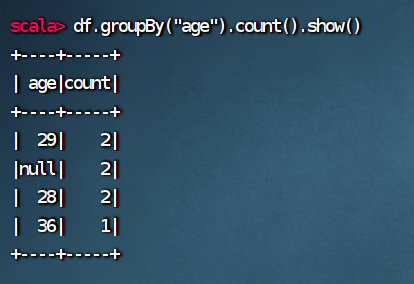
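A minimal sketch for task (4), assuming the same file path; the object name is hypothetical. Records without an age (the Damon lines) compare as null rather than true, so `filter` drops them.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}
import org.apache.spark.sql.functions.col

// Hypothetical helper object for task (4)
object FilterByAge {
  // Task (4): keep rows whose age is greater than 30; null ages are excluded
  def olderThan30(df: DataFrame): DataFrame = df.filter(col("age") > 30)

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .appName("FilterByAge")
      .master("local[*]") // assumption: local mode
      .getOrCreate()
    val df = spark.read.json("file:///export/server/spark/employee.json")
    olderThan30(df).show()
    spark.stop()
  }
}
```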

(5) Group the data by age;

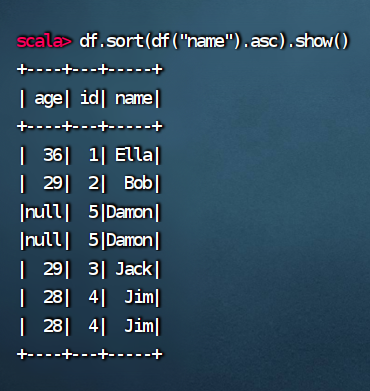
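`groupBy` on its own returns a `RelationalGroupedDataset`, which cannot be displayed until an aggregation is applied. The sketch below assumes `count()` is the intended aggregation for task (5); the object name is hypothetical. On the sample file it should produce groups for ages 36, 29, 28 and null (the two Damon records).

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}

// Hypothetical helper object for task (5)
object CountByAge {
  // Task (5): one row per distinct age, with the number of records in a "count" column
  def countByAge(df: DataFrame): DataFrame = df.groupBy("age").count()

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .appName("CountByAge")
      .master("local[*]") // assumption: local mode
      .getOrCreate()
    val df = spark.read.json("file:///export/server/spark/employee.json")
    countByAge(df).show()
    spark.stop()
  }
}
```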

(6) Sort the data by name in ascending order;

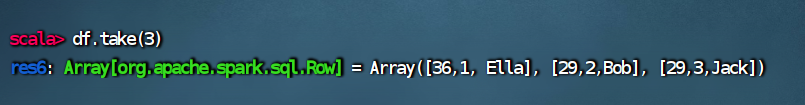
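A minimal sketch for task (6), assuming the same file path; the object name is hypothetical. String ordering in Spark is byte-wise, so because the sample data stores the first name as `" Ella"` (with a leading space), that record sorts ahead of Bob.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}
import org.apache.spark.sql.functions.col

// Hypothetical helper object for task (6)
object SortByName {
  // Task (6): order rows by name, ascending
  def sortedByName(df: DataFrame): DataFrame = df.orderBy(col("name").asc)

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .appName("SortByName")
      .master("local[*]") // assumption: local mode
      .getOrCreate()
    val df = spark.read.json("file:///export/server/spark/employee.json")
    sortedByName(df).show()
    spark.stop()
  }
}
```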

(7) Take the first 3 rows;

(8) Query the name column of all records and give it the alias username;

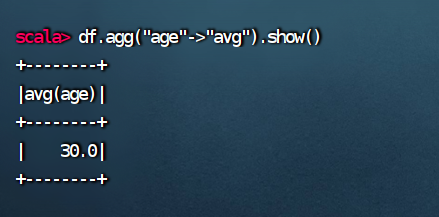

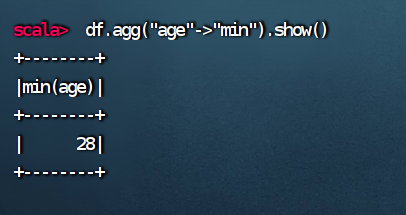
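A minimal sketch for tasks (7) and (8), assuming the same file path; the object and method names are hypothetical. `take(3)` (equivalently `head(3)`) returns the rows to the driver as an `Array[Row]`, whereas `select(... .as("username"))` stays a DataFrame with a renamed column.

```scala
import org.apache.spark.sql.{DataFrame, Row, SparkSession}
import org.apache.spark.sql.functions.col

// Hypothetical helper object for tasks (7) and (8)
object TakeAndAlias {
  // Task (7): first 3 rows, collected to the driver
  def firstThree(df: DataFrame): Array[Row] = df.take(3)

  // Task (8): only the name column, renamed to username
  def namesAsUsername(df: DataFrame): DataFrame = df.select(col("name").as("username"))

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .appName("TakeAndAlias")
      .master("local[*]") // assumption: local mode
      .getOrCreate()
    val df = spark.read.json("file:///export/server/spark/employee.json")
    firstThree(df).foreach(println)
    namesAsUsername(df).show()
    spark.stop()
  }
}
```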

(9) Query the average of age;

(10) Query the minimum of age.

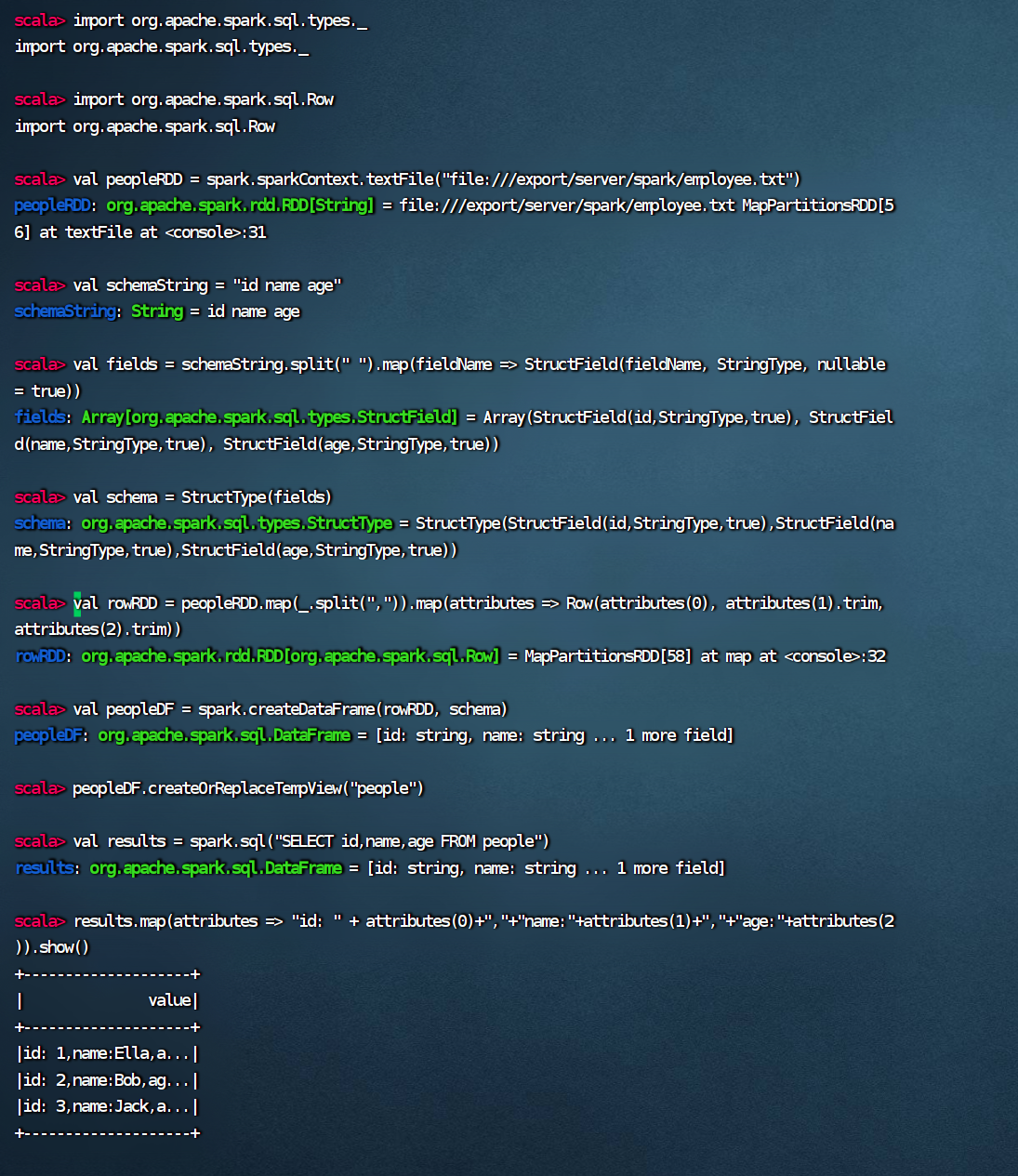
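A minimal sketch for tasks (9) and (10), assuming the same file path and that `age` is inferred as `bigint` (as the transcript above shows); the object and method names are hypothetical. Both aggregates ignore null ages.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}
import org.apache.spark.sql.functions.{avg, min}

// Hypothetical helper object for tasks (9) and (10)
object AgeAggregates {
  // Task (9): average age; avg always yields a double
  def averageAge(df: DataFrame): Double = df.agg(avg("age")).head().getDouble(0)

  // Task (10): minimum age; min keeps the column type (bigint here, hence getLong)
  def minimumAge(df: DataFrame): Long = df.agg(min("age")).head().getLong(0)

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .appName("AgeAggregates")
      .master("local[*]") // assumption: local mode
      .getOrCreate()
    val df = spark.read.json("file:///export/server/spark/employee.json")
    println(s"avg(age) = ${averageAge(df)}, min(age) = ${minimumAge(df)}")
    spark.stop()
  }
}
```

On the sample file without removing duplicates first, the Damon records are skipped and the Jim row counts twice, so the average is (36 + 29 + 29 + 28 + 28) / 5 = 30.0 and the minimum is 28.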

2. Programmatically Converting an RDD to a DataFrame

The source file contents are as follows (fields: id, name, age):

```
1,Ella,36
2,Bob,29
3,Jack,29
```

Copy the data into a file named employee.txt on the Linux system, convert it from an RDD into a DataFrame, and print every row of the DataFrame in the format "id:1,name:Ella,age:36". Write the program code.

```scala
import org.apache.spark.sql.types._
import org.apache.spark.sql.Row
import spark.implicits._ // Encoder[String] for results.map below

// Load the text file as an RDD of lines
val peopleRDD = spark.sparkContext.textFile("file:///export/server/spark/employee.txt")

// Build a schema with three string columns: id, name, age
val schemaString = "id name age"
val fields = schemaString.split(" ").map(fieldName => StructField(fieldName, StringType, nullable = true))
val schema = StructType(fields)

// Split each line on commas and wrap the trimmed fields in a Row
val rowRDD = peopleRDD.map(_.split(",")).map(attributes => Row(attributes(0).trim, attributes(1).trim, attributes(2).trim))

// Apply the schema to the RDD and register a temporary view
val peopleDF = spark.createDataFrame(rowRDD, schema)
peopleDF.createOrReplaceTempView("people")
val results = spark.sql("SELECT id, name, age FROM people")

// Format each row as "id:1,name:Ella,age:36" (no space after "id:");
// collect().foreach(println) prints full lines, whereas show() truncates values to 20 characters
results.map(attributes => "id:" + attributes(0) + "," + "name:" + attributes(1) + "," + "age:" + attributes(2)).collect().foreach(println)
```

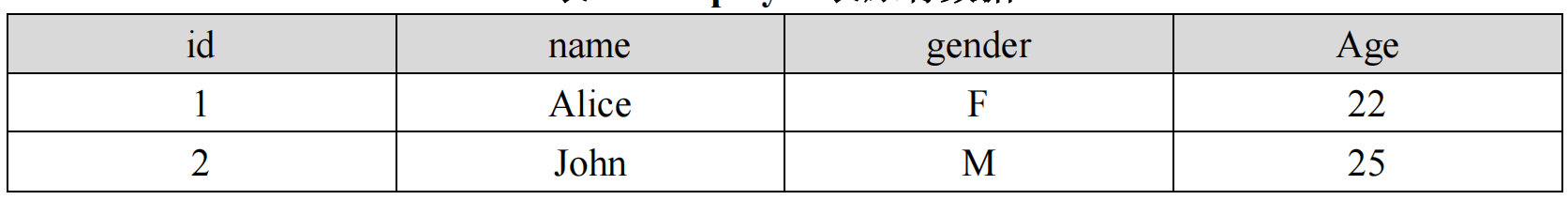

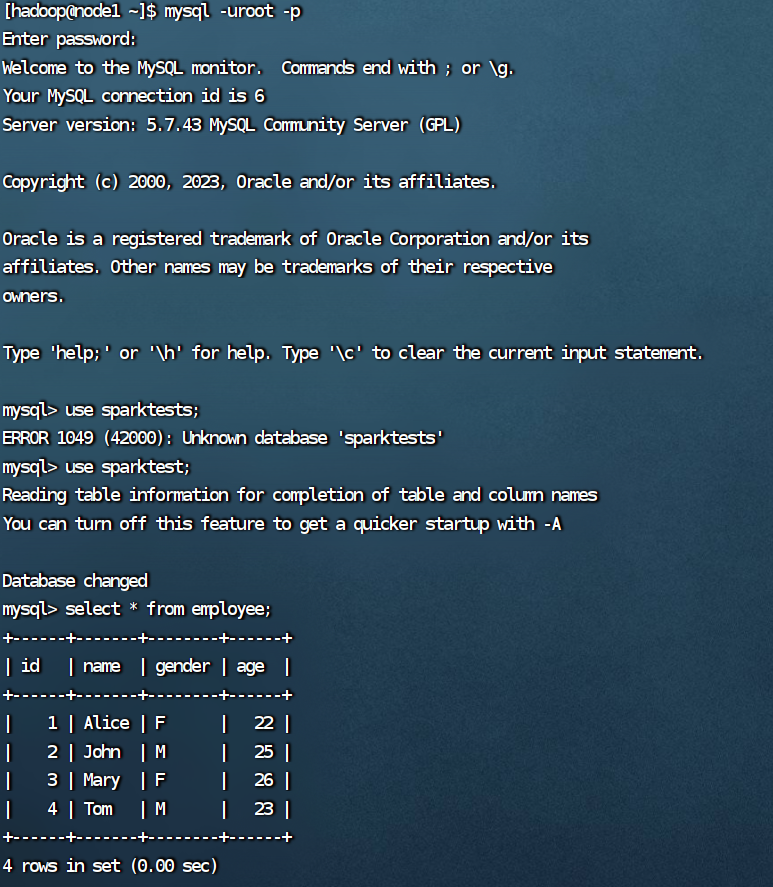
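The program above specifies the schema programmatically with `StructType`. Spark can also infer the schema by reflection from a case class, which keeps `id` and `age` numeric. The following is a sketch of that alternative technique, not the method used above; the `Employee` case class and the object name are hypothetical, and the file path is the one from the transcript.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}

// Hypothetical case class; its field names become the column names.
// It is defined at top level so Spark can derive an encoder for it.
case class Employee(id: Long, name: String, age: Long)

object RddToDataFrameByReflection {
  // Parse a "1,Ella,36"-style line into an Employee, trimming each field
  def parse(line: String): Employee = {
    val fields = line.split(",").map(_.trim)
    Employee(fields(0).toLong, fields(1), fields(2).toLong)
  }

  // Format one record as "id:1,name:Ella,age:36"
  def format(e: Employee): String = s"id:${e.id},name:${e.name},age:${e.age}"

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .appName("RddToDataFrameByReflection")
      .master("local[*]") // assumption: local mode
      .getOrCreate()
    import spark.implicits._

    val employeeDF: DataFrame = spark.sparkContext
      .textFile("file:///export/server/spark/employee.txt")
      .filter(_.trim.nonEmpty) // skip blank lines
      .map(parse)
      .toDF() // schema inferred from Employee: id bigint, name string, age bigint

    employeeDF.as[Employee].collect().map(format).foreach(println)
    spark.stop()
  }
}
```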

3. Reading and Writing MySQL Data with DataFrames

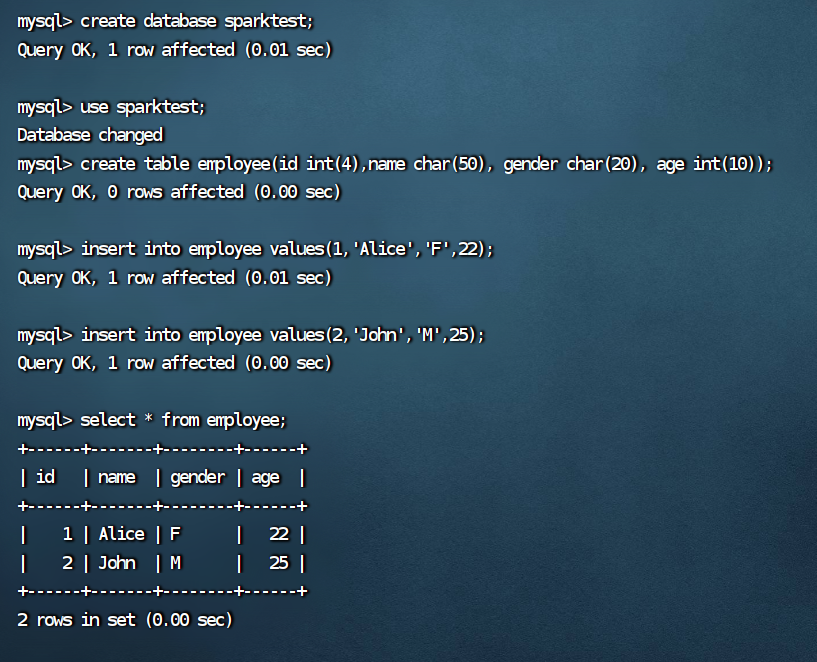

(1) In MySQL, create a new database named sparktest, then create a table employee containing the two rows shown in Table 6-2.

Table 6-2: Original data in the employee table

```
mysql -uroot -p

mysql> create database sparktest;
Query OK, 1 row affected (0.01 sec)

mysql> use sparktest;
Database changed

mysql> create table employee(id int(4),name char(50), gender char(20), age int(10));
Query OK, 0 rows affected (0.00 sec)

mysql> insert into employee values(1,'Alice','F',22);
Query OK, 1 row affected (0.01 sec)

mysql> insert into employee values(2,'John','M',25);
Query OK, 1 row affected (0.00 sec)

mysql> select * from employee;
+------+-------+--------+------+
| id   | name  | gender | age  |
+------+-------+--------+------+
|    1 | Alice | F      |   22 |
|    2 | John  | M      |   25 |
+------+-------+--------+------+
2 rows in set (0.00 sec)
```

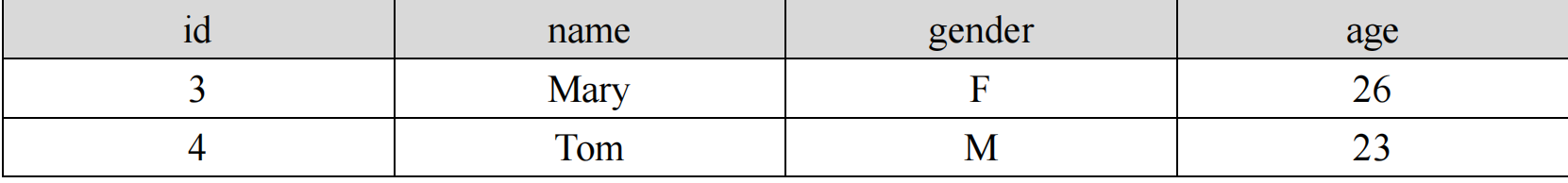

(2) Configure Spark to connect to MySQL via JDBC, and write a program that uses a DataFrame to insert the two rows shown in Table 6-3 into MySQL, then print the maximum and the sum of age.

Table 6-3: New rows for the employee table

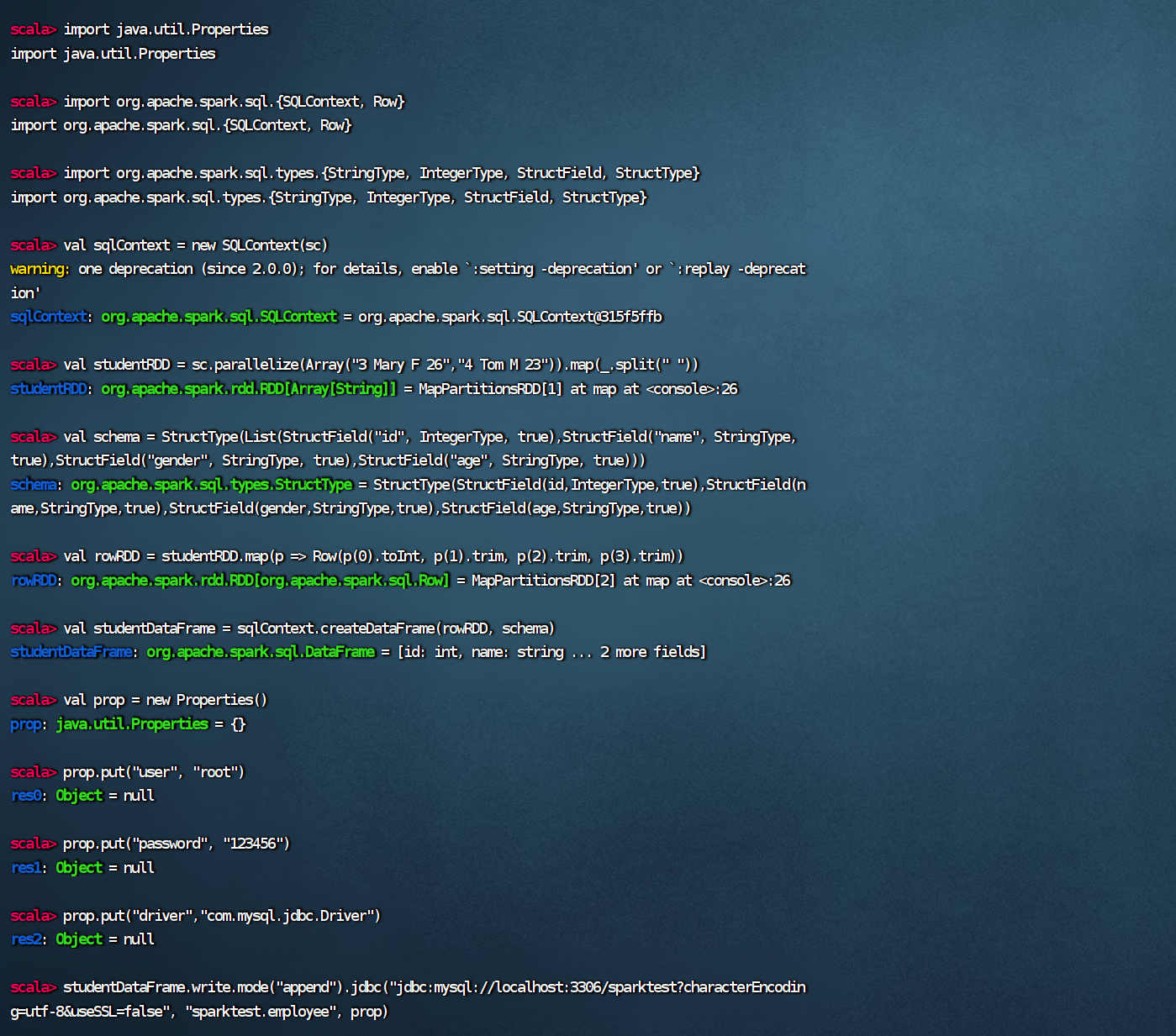

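```shell
cd /export/server/spark  # enter the Spark installation directory

# Start spark-shell with the MySQL JDBC connector on the classpath.
# --jars and --driver-class-path should both point to where the connector JAR actually lives on your machine.
./bin/spark-shell --jars /export/server/spark/mysql-connector-java-5.1.46/mysql-connector-java-5.1.46-bin.jar --driver-class-path /export/server/spark/mysql-connector-java-5.1.46-bin.jar
```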

```scala
import java.util.Properties
import org.apache.spark.sql.Row
import org.apache.spark.sql.types.{IntegerType, StringType, StructField, StructType}

// The two new rows from Table 6-3, as "id name gender age"
val employeeRDD = sc.parallelize(Array("3 Mary F 26", "4 Tom M 23")).map(_.split(" "))

// Schema matching the MySQL table; age is an integer column there
val schema = StructType(List(
  StructField("id", IntegerType, true),
  StructField("name", StringType, true),
  StructField("gender", StringType, true),
  StructField("age", IntegerType, true)))

val rowRDD = employeeRDD.map(p => Row(p(0).toInt, p(1).trim, p(2).trim, p(3).trim.toInt))

// SQLContext is deprecated since Spark 2.0; use the SparkSession `spark` that spark-shell provides
val employeeDF = spark.createDataFrame(rowRDD, schema)

// JDBC connection properties
val prop = new Properties()
prop.put("user", "root")
prop.put("password", "123456")
prop.put("driver", "com.mysql.jdbc.Driver")

// Append the rows to sparktest.employee
employeeDF.write.mode("append").jdbc(
  "jdbc:mysql://localhost:3306/sparktest?characterEncoding=utf-8&useSSL=false", "sparktest.employee", prop)
```
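The statements above stop after the write, but the task also asks for the maximum and the sum of age. A minimal sketch of that last step follows, assuming the same JDBC URL, table, and credentials as above; the object and method names are hypothetical. It reads the table back with `spark.read.jdbc` and aggregates with `max` and `sum`. Run as an application, the MySQL connector JAR must be on the classpath (for example via `--jars`, as with spark-shell above).

```scala
import java.util.Properties
import org.apache.spark.sql.{DataFrame, SparkSession}
import org.apache.spark.sql.functions.{max, sum}

// Hypothetical helper object for the final step of task (2)
object EmployeeAgeStats {
  // Returns (max age, sum of ages); assumes an integer "age" column.
  // max keeps the int type, while sum of an int column yields a long.
  def ageStats(df: DataFrame): (Int, Long) = {
    val row = df.agg(max("age"), sum("age")).head()
    (row.getInt(0), row.getLong(1))
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .appName("EmployeeAgeStats")
      .master("local[*]") // assumption: local mode
      .getOrCreate()

    // Connection settings copied from the statements above (assumed unchanged)
    val prop = new Properties()
    prop.setProperty("user", "root")
    prop.setProperty("password", "123456")
    prop.setProperty("driver", "com.mysql.jdbc.Driver")
    val url = "jdbc:mysql://localhost:3306/sparktest?characterEncoding=utf-8&useSSL=false"

    val employeeDF = spark.read.jdbc(url, "employee", prop)
    val (maxAge, sumAge) = ageStats(employeeDF)
    println(s"max(age) = $maxAge, sum(age) = $sumAge")
    spark.stop()
  }
}
```

If the append above ran exactly once, the table holds the four rows from Tables 6-2 and 6-3, so this should print a maximum of 26 and a sum of 22 + 25 + 26 + 23 = 96.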

