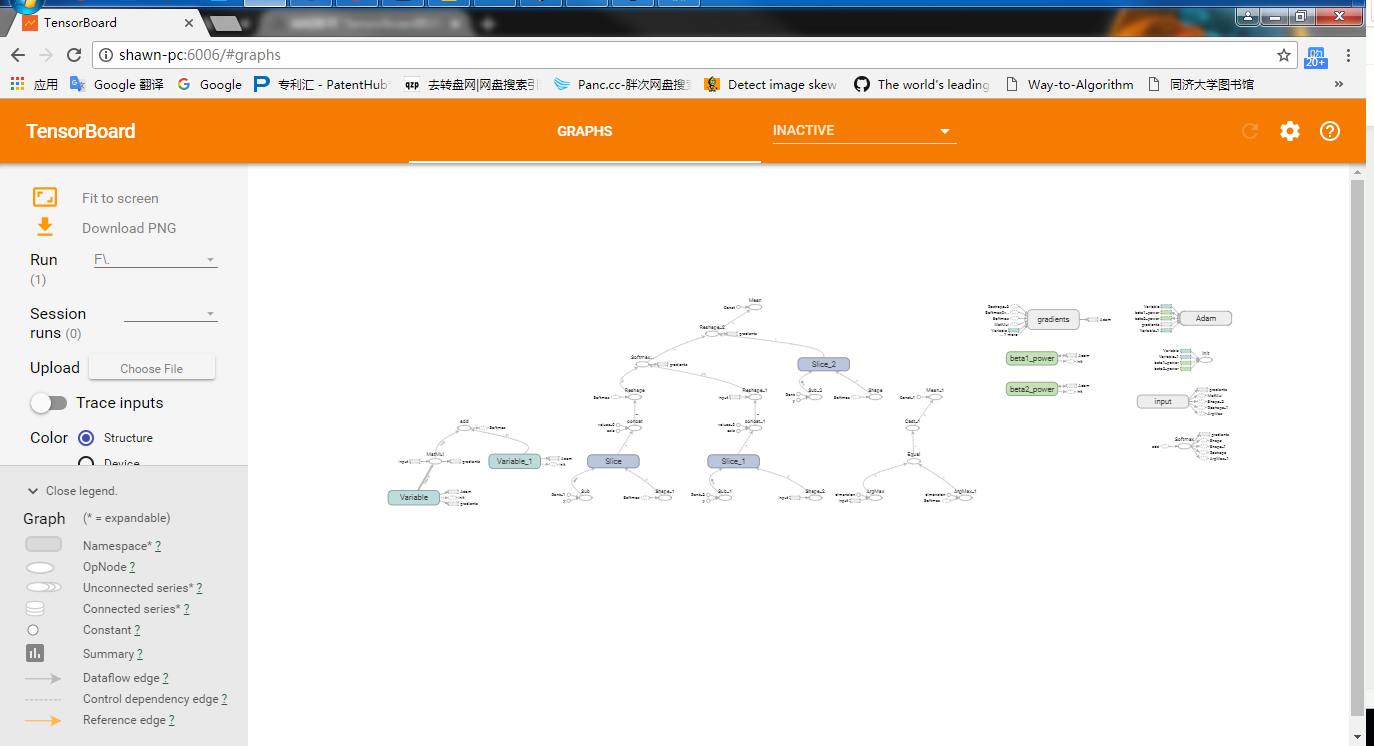

Using TensorBoard

    # -*- coding: utf-8 -*-
    """
    Created on: 2017/10/29
    @author : Shawn
    function :
    """
    import tensorflow as tf
    from tensorflow.examples.tutorials.mnist import input_data

    # Entry point
    if __name__ == '__main__':

        # Load the data
        mnist = input_data.read_data_sets("MNIST_data", one_hot=True)

        # Size of each batch
        batch_size = 100

        # Number of batches in one pass over the training set
        n_batch = mnist.train.num_examples // batch_size

        # Name scope: groups the placeholders under one "input" node in the graph view
        with tf.name_scope('input'):
            # Define two placeholders
            x = tf.placeholder(tf.float32, [None, 784], name='x-input')  # input layer: 784 neurons
            y = tf.placeholder(tf.float32, [None, 10], name='y-input')  # output layer: 10 neurons, 10 classes

        W = tf.Variable(tf.zeros([784, 10]))
        b = tf.Variable(tf.zeros([10]))
        logits = tf.matmul(x, W) + b
        prediction = tf.nn.softmax(logits)

        # Quadratic cost (disabled)
        # loss = tf.reduce_mean(tf.square(y-prediction))
        # Cross-entropy cost. The op applies softmax internally, so it receives
        # the raw logits rather than the already-softmaxed prediction.
        loss = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels=y, logits=logits))

        # Gradient descent (disabled)
        # train_step = tf.train.GradientDescentOptimizer(0.2).minimize(loss)  # 0.2 is the learning rate
        train_step = tf.train.AdamOptimizer(1e-1).minimize(loss)

        # Initialize the variables
        init = tf.global_variables_initializer()

        # Per-example results as a list of booleans
        correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(prediction, 1))  # argmax returns the index of the largest value along axis 1

        # Compute the accuracy
        accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

        with tf.Session() as sess:
            sess.run(init)
            # Write the graph to logs/ so that TensorBoard can display it
            writer = tf.summary.FileWriter('logs/', sess.graph)

            # One pass over the whole training set (increase the range for more epochs)
            for epoch in range(1):

                # Train on n_batch batches
                for batch in range(n_batch):
                    batch_xs, batch_ys = mnist.train.next_batch(batch_size)
                    sess.run(train_step, feed_dict={x: batch_xs, y: batch_ys})

                acc = sess.run(accuracy, feed_dict={x: mnist.test.images, y: mnist.test.labels})
                print("Iter " + str(epoch) + ", Testing Accuracy " + str(acc))

            # Flush the event file to disk
            writer.close()

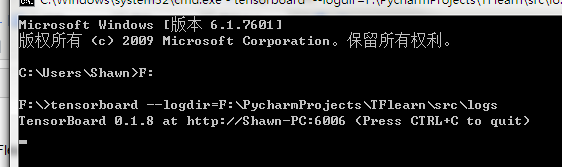

Open a command prompt (cmd) and run:

    tensorboard --logdir=F:\PycharmProjects\TFlearn\src\logs

The command prints a URL. Open it in Google Chrome or Firefox.
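If nothing shows up in the browser, one thing worth checking is whether the log directory actually contains event files. Below is a minimal sketch using only the standard library. It assumes the event files follow the usual `events.out.tfevents.*` naming; `find_event_files` and `tensorboard_command` are hypothetical helper names introduced here for illustration and are not part of TensorFlow or TensorBoard.

```python
import os


def find_event_files(logdir):
    """Return the sorted paths of TensorBoard event files found under logdir."""
    found = []
    # os.walk yields nothing for a missing directory, so the result is then empty.
    for root, _dirs, files in os.walk(logdir):
        for name in files:
            # Assumption: event files use the usual 'events.out.tfevents.' prefix.
            if name.startswith("events.out.tfevents."):
                found.append(os.path.join(root, name))
    return sorted(found)


def tensorboard_command(logdir):
    """Build the argument list for launching TensorBoard on logdir."""
    return ["tensorboard", "--logdir=" + os.path.abspath(logdir)]


if __name__ == "__main__":
    logdir = "logs"  # the directory that was passed to tf.summary.FileWriter
    events = find_event_files(logdir)
    if events:
        print("Found %d event file(s); run:" % len(events))
        print(" ".join(tensorboard_command(logdir)))
    else:
        print("No event files in %r; run the training script first." % logdir)
```

Run it from the directory that contains `logs/`. Building the command from an absolute path avoids depending on which directory the command prompt happens to be in.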