Line Search and Quasi-Newton Methods

Gradient Descent

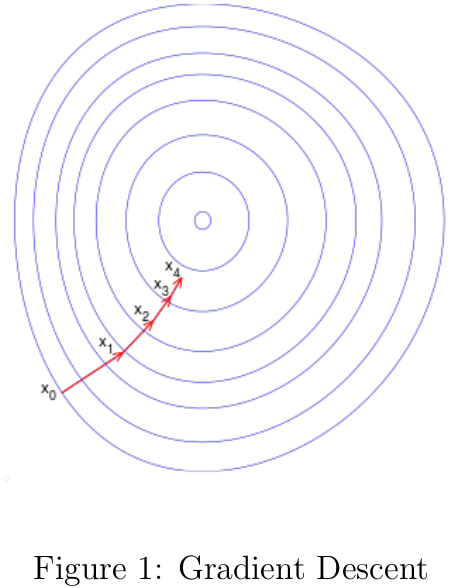

机器学习中很多模型的参数估计都要用到优化算法,梯度下降是其中最简单也用得最多的优化算法之一。梯度下降(Gradient Descent)[3]也被称之为最快梯度(Steepest Descent),可用于寻找函数的局部最小值。梯度下降的思路为,函数值在梯度反方向下降是最快的,只要沿着函数的梯度反方向移动足够小的距离到一个新的点,那么函数值必定是非递增的,如图1所示。

梯度下降思想的数学表述如下: \begin{equation} b=a-\alpha \nabla F(a)\Rightarrow f(a)\geq f(b) \end{equation} 其中\(f(x)\)为存在下界的可导函数。根据该思路,如果我们从\(x_0\)为出发点,每次沿着当前函数梯度反方向移动一定距离\(\alpha_k\),得到序列\(x_0,x_1,\cdots,x_n\): \begin{equation} x_{k+1}=x_k-\alpha_k\nabla f(x_k), 0\leq k\leq n \end{equation} 对应的各点函数值序列之间的关系为: \begin{equation} f(x_0)\geq f(x_1)\geq f(x_2)\geq\cdots\geq f(x_n) \end{equation} 很显然,当\(n\)达到一定值时,函数\(f(x)\)是会收敛到局部最小值的。算法1简单描述了一般化的梯度优化方法。 在算法1中,我们需要选择一个搜索方向\(d_k\)满足以下关系: \begin{equation} f(x_k+\alpha d_k)<f(x_k)\; \forall\alpha\in (0,\epsilon] \end{equation} 当\(d_k=-\nabla f(x)\)时\(f(x)\)下降最快,但是只要满足\(\nabla f(x_k)^Td_k<0\)的\(d_k\)都可以作为搜素方向。一般搜索方向表述为如下形式: \begin{equation} d_k=-B_k\nabla f(x_k) \end{equation} 其中\(B_k\)为正定矩阵。当\(B_k=I\)时对应最快梯度下降算法;当\(B_k=H(x_k)^{-1}\)时对应牛顿法,如果\(H(x_k)=\nabla^2f(x_k)\)为正定矩阵。 在迭代过程中用于更新\(x_k\)的步长\(\alpha_k\)可以是常数也可以是变化的。如果\(\alpha_k\)足够小,收敛是可以得到保证的,但这意味这迭代次数\(n\)要很大时函数才会收敛(图2(a));如果\(\alpha_k\)比较大,更新后的点很可能越过局部最优解(图2(b))。有什么方法可以帮助我们自动确定最优步长呢?下面要说的线性搜索就包含一组解决方案。

在算法1中,我们需要选择一个搜索方向\(d_k\)满足以下关系: \begin{equation} f(x_k+\alpha d_k)<f(x_k)\; \forall\alpha\in (0,\epsilon] \end{equation} 当\(d_k=-\nabla f(x)\)时\(f(x)\)下降最快,但是只要满足\(\nabla f(x_k)^Td_k<0\)的\(d_k\)都可以作为搜素方向。一般搜索方向表述为如下形式: \begin{equation} d_k=-B_k\nabla f(x_k) \end{equation} 其中\(B_k\)为正定矩阵。当\(B_k=I\)时对应最快梯度下降算法;当\(B_k=H(x_k)^{-1}\)时对应牛顿法,如果\(H(x_k)=\nabla^2f(x_k)\)为正定矩阵。 在迭代过程中用于更新\(x_k\)的步长\(\alpha_k\)可以是常数也可以是变化的。如果\(\alpha_k\)足够小,收敛是可以得到保证的,但这意味这迭代次数\(n\)要很大时函数才会收敛(图2(a));如果\(\alpha_k\)比较大,更新后的点很可能越过局部最优解(图2(b))。有什么方法可以帮助我们自动确定最优步长呢?下面要说的线性搜索就包含一组解决方案。

Line Search

在给定搜索方向\(d_k\)的前提下,线性搜索要解决的问题如下: \begin{equation} \alpha=arg\;\underset{\alpha\geq 0}{\min}h(\alpha)=arg\;\underset{\alpha\geq 0}{\min}f(x_k+\alpha d_k) \end{equation} 如果\(h(\alpha)\)是可微的凸函数,我们能通过解析解直接求得上式最优的步长;但非线性的优化问题需要通过迭代形式求得近似的最优步长。对于上式,局部或全局最优解对应的导数为\(h'(\alpha)=\nabla f(x_k+\alpha d_k)^Td_k=0\)。因为\(d_k\)与\(f(x_k)\)在\(x_k\)处的梯度方向夹角大于90度,因此\(h'(0)\leq 0\),如果能找到\(\hat{\alpha}\)使得\(h'(\hat{\alpha})>0\),那么必定存在\(\alpha^{\star}\in [0,\hat{\alpha})\)使得\(h'(\alpha^{\star})=0\)。有多种迭代算法可以求得\(\alpha^{\star}\)的近似值,下面选择几种典型的介绍。

Bisection Search

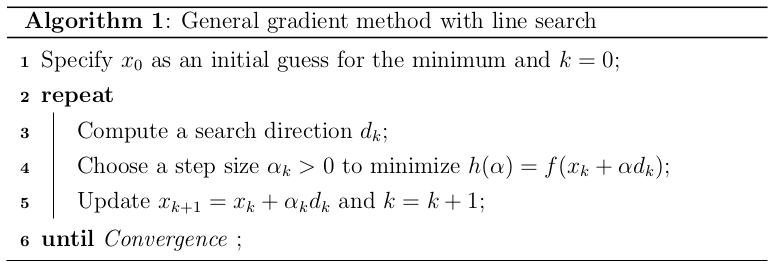

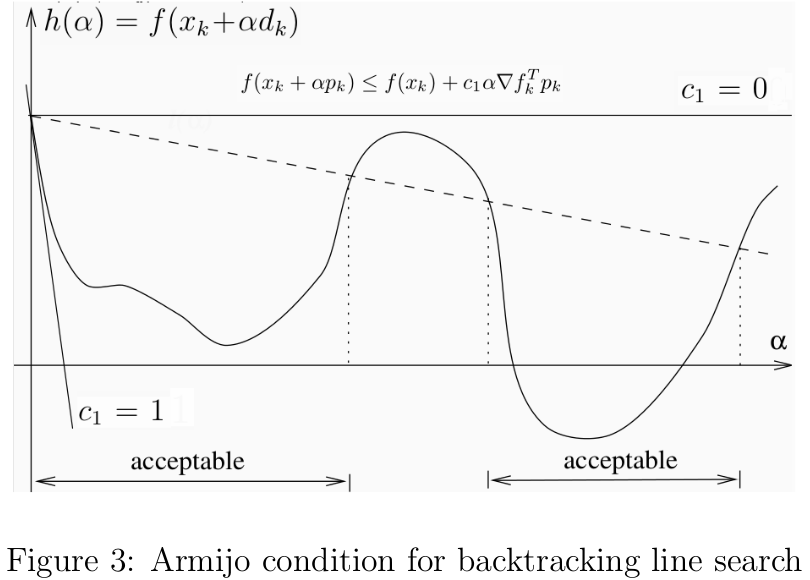

二分线性搜索(Bisection Line Search)[2]可用于求解函数的根,其思想很简单,就是不断将现有区间划分为两半,选择必定含有使\(h'(\alpha)=0\)的半个区间作为下次迭代的区间,直到寻得\(h'(\alpha^{\star})\approx 0\)为止,算法描述见2。  二分线性搜素可以确保\(h(\alpha)\)是收敛的,只要\(h(\alpha)\)在区间\((0,\hat{\alpha})\)上是连续的且\(h'(0)\)和\(h(\hat{\alpha})\)异号。经历\(n\)次迭代后,当前区间\([\alpha_l,\alpha_h]\)的长度为: \begin{equation} L=\left(\frac{1}{2}\right)^n\hat{\alpha} \end{equation} 由迭代的终止条件之一\(\alpha_h-\alpha_l\geq\epsilon\)知迭代次数的上界为: \begin{equation} L\leq \epsilon\Rightarrow k\leq\left[\log_2\left(\frac{\hat{\alpha}}{\epsilon}\right)\right] \end{equation} 下面给出二分搜索的Python代码

二分线性搜素可以确保\(h(\alpha)\)是收敛的,只要\(h(\alpha)\)在区间\((0,\hat{\alpha})\)上是连续的且\(h'(0)\)和\(h(\hat{\alpha})\)异号。经历\(n\)次迭代后,当前区间\([\alpha_l,\alpha_h]\)的长度为: \begin{equation} L=\left(\frac{1}{2}\right)^n\hat{\alpha} \end{equation} 由迭代的终止条件之一\(\alpha_h-\alpha_l\geq\epsilon\)知迭代次数的上界为: \begin{equation} L\leq \epsilon\Rightarrow k\leq\left[\log_2\left(\frac{\hat{\alpha}}{\epsilon}\right)\right] \end{equation} 下面给出二分搜索的Python代码

1 def bisection(dfun,theta,args,d,low,high,maxiter=1e4): 2 """ 3 #Functionality:find the root of the function(fun) in the interval [low,high] 4 #@Parameters 5 #dfun:compute the graident of function f(x) 6 #theta:Parameters of the model 7 #args:other variables needed to compute the value of dfun 8 #[low,high]:the interval which contains the root 9 #maxiter:the max number of iterations 10 """ 11 eps=1e-6 12 val_low=np.sum(dfun(theta+low*d,args)*d.T) 13 val_high=np.sum(dfun(theta+high*d,args)*d.T) 14 if val_low*val_high>0: 15 raise Exception('Invalid interval!') 16 iter_num=1 17 while iter_num<maxiter: 18 mid=(low+high)/2 19 val_mid=np.sum(dfun(theta+mid*d,args)*d.T) 20 if abs(val_mid)<eps or abs(high-low)<eps: 21 return mid 22 elif val_mid*val_low>0: 23 low=mid 24 else: 25 high=mid 26 iter_num+=1

Backtracking

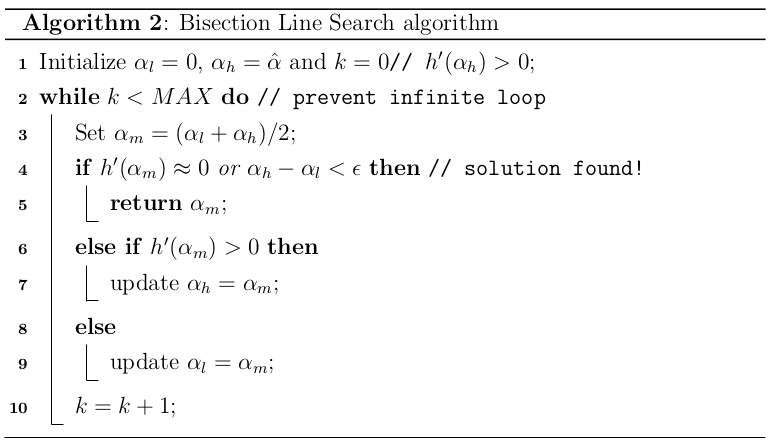

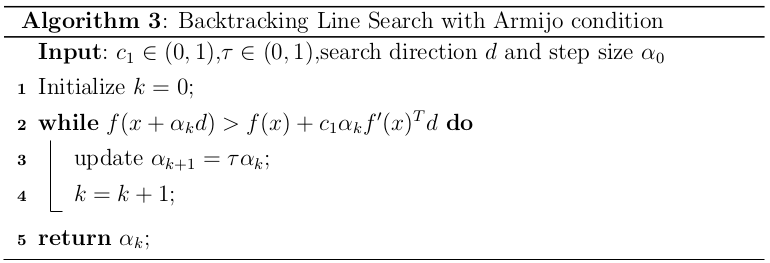

回溯线性搜索(Backing Line Search)[1]基于Armijo准则计算搜素方向上的最大步长,其基本思想是沿着搜索方向移动一个较大的步长估计值,然后以迭代形式不断缩减步长,直到该步长使得函数值\(f(x_k+\alpha d_k)\)相对与当前函数值\(f(x_k)\)的减小程度大于期望值(满足Armijo准则)为止。Armijo准则(见图3)的数学描述如下: \begin{equation} f(x_k+\alpha d_k)\leq f(x_k)+c_1\alpha f'(x_k)^Td_k \end{equation} 其中\(f:\mathbb{R}^n\rightarrow\mathbb{R}\),\(c_1\in(0,1)\),\(\alpha\)为步长,\(d_k\in\mathbb{R}^n\)为满足\(f'(x_k)^Td_k<0\)的搜索方向。 但是仅凭Armijo准则不足以求得较好的步长,根据前面的梯度下降的知识可知,只要\(\alpha\)足够小就能满足Armijo准则。因此常用的策略就是从较大的步长开始,然后以\(\tau\in(0,1)\)的速度缩短步长,直到满足Armijo准则为止,这样选出来的步长不至于太小,对应的算法描述见3。前面介绍的二分线性搜索的目标是求得满足\(h'(\alpha)\approx 0\)的最优步长近似值,而回溯线性搜索放松了对步长的约束,只要步长能使函数值有足够大的变化即可。前者可以少计算几次搜索方向,但在计算最优步长上花费了不少代价;后者退而求其次,找到一个差不多的步长即可,那么代价就是要多计算几次搜索方向。

但是仅凭Armijo准则不足以求得较好的步长,根据前面的梯度下降的知识可知,只要\(\alpha\)足够小就能满足Armijo准则。因此常用的策略就是从较大的步长开始,然后以\(\tau\in(0,1)\)的速度缩短步长,直到满足Armijo准则为止,这样选出来的步长不至于太小,对应的算法描述见3。前面介绍的二分线性搜索的目标是求得满足\(h'(\alpha)\approx 0\)的最优步长近似值,而回溯线性搜索放松了对步长的约束,只要步长能使函数值有足够大的变化即可。前者可以少计算几次搜索方向,但在计算最优步长上花费了不少代价;后者退而求其次,找到一个差不多的步长即可,那么代价就是要多计算几次搜索方向。 接下来,我们要证明回溯线性搜索在Armijo准则下的收敛性问题[6]。因为\(h'(0)=f'(x_k)^Td_k<0\),且\(0<c_1<1\),则有 \begin{equation} h'(0)<c_1h'(0)<0 \end{equation} 根据导数的基本定义,结合上式,有如下关系: \begin{equation} h'(0)=\lim_{\alpha\rightarrow 0}\frac{h(\alpha)-h(0)}{\alpha}=\lim_{\alpha\rightarrow 0}\frac{f(x_k+\alpha d_k)-f(x_k)}{\alpha}<ch'(0) \end{equation} 因此,存在一个步长\(\hat{\alpha}>0\),对任意的\(\alpha\in(0,\hat{\alpha})\),下式均成立 \begin{equation} \frac{f(x_k+\alpha d_k)-f(x_k)}{\alpha}<cf'(x_k)^Td_k \end{equation} 即\(\forall \alpha\in(0,\hat{\alpha}),f(x_k+\alpha d_k)<f(x_k)+c\alpha f'(x_k)^Td_k\)。 下面给出基于Armijo准则的线性搜索Python代码:

接下来,我们要证明回溯线性搜索在Armijo准则下的收敛性问题[6]。因为\(h'(0)=f'(x_k)^Td_k<0\),且\(0<c_1<1\),则有 \begin{equation} h'(0)<c_1h'(0)<0 \end{equation} 根据导数的基本定义,结合上式,有如下关系: \begin{equation} h'(0)=\lim_{\alpha\rightarrow 0}\frac{h(\alpha)-h(0)}{\alpha}=\lim_{\alpha\rightarrow 0}\frac{f(x_k+\alpha d_k)-f(x_k)}{\alpha}<ch'(0) \end{equation} 因此,存在一个步长\(\hat{\alpha}>0\),对任意的\(\alpha\in(0,\hat{\alpha})\),下式均成立 \begin{equation} \frac{f(x_k+\alpha d_k)-f(x_k)}{\alpha}<cf'(x_k)^Td_k \end{equation} 即\(\forall \alpha\in(0,\hat{\alpha}),f(x_k+\alpha d_k)<f(x_k)+c\alpha f'(x_k)^Td_k\)。 下面给出基于Armijo准则的线性搜索Python代码:

1 def ArmijoBacktrack(fun,dfun,theta,args,d,stepsize=1,tau=0.5,c1=1e-3): 2 """ 3 #Functionality:find an acceptable stepsize via backtrack under Armijo rule 4 #@Parameters 5 #fun:compute the value of objective function 6 #dfun:compute the gradient of objective function 7 #theta:a vector of parameters of the model 8 #stepsize:initial step size 9 #c1:sufficient decrease Parameters 10 #tau:rate of shrink of stepsize 11 """ 12 slope=np.sum(dfun(theta,args)*d.T) 13 obj_old=costFunction(theta,args) 14 theta_new=theta+stepsize*d 15 obj_new=costFunction(theta_new,args) 16 while obj_new>obj_old+c1*stepsize*slope: 17 stepsize*=tau 18 theta_new=theta+stepsize*d 19 obj_new=costFunction(theta_new,args) 20 return stepsize

Interpolation

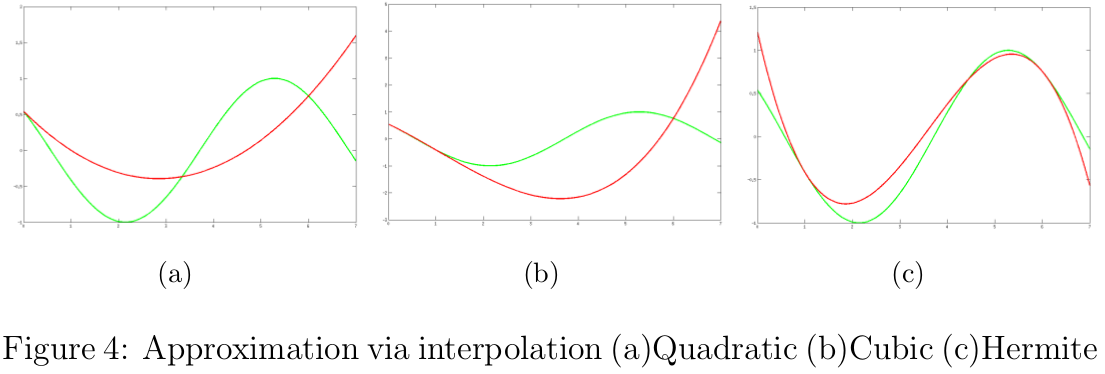

基于Armijo准则的回溯线性搜索的收敛速度无法得到保证,特别是要回退很多次后才能落入满足Armijo准则的区间。如果我们根据已有的函数值和导数信息,采用多项式插值法(Interpolation)[12,6,5,9]拟合函数,然后根据该多项式函数估计函数的极值点,这样选择合适步长的效率会高很多。 假设我们只有\(x_k\)处的函数值\(f(x_k)\)及其倒数\(f'(x_k)\),且第一次尝试的步长为\(\alpha_0\)。如果\(\alpha_0\)不满足条件,那么我们根据这些信息可以构造一个二次近似函数\(h_q(\alpha)\) \begin{equation} h_q(\alpha)=\left(\frac{h(\alpha_0)-h(0)-\alpha_0h'(0)}{\alpha_0^2}\right)\alpha^2+h'(0)\alpha+h(0) \end{equation} 注意,该二次函数满足\(h_q(0)=h(0)\),\(h_q'(0)=h'(0)\)和\(h_q(\alpha_0)=h(\alpha_0)\),如图4(a)所示。接下来,根据\(h_q(\alpha)\)的最小值估计下一个步长: \begin{equation} \alpha_1=\frac{h'(0)\alpha_0^2}{2[h(0)+h'(0)\alpha_0-h(\alpha_0)]} \end{equation} 如果\(\alpha_1\)仍然不满足条件,我们可以继续重复上述过程,直到得到的步长满足条件为止。假设我们在整个线性搜索过程中都用二次插值函数,那么最好有\(c_1\in(0,0.5]\),为什么呢?简单证明一下:如果\(\alpha_0\)不满足Armijo准则,那么必定存在比\(\alpha_0\)小的步长满足该准则,所以利用二次插值函数估算的步长\(\alpha_1<\alpha_0\)才合理。结合\(\alpha_0\)不满足Armijo准则和\(\alpha_1<\alpha_0\),可知\(c_1\leq 0.5\)。 如果我们已经尝试了多个步长,却每次只用上一次步长的相关信息构造二次函数,未免是对计算资源的浪费,其实我们可以利用多个步长的信息构造信息量更大更准确的插值函数的。在计算导数的代价大于计算函数值的代价时,应尽量避免计算\(h'(\alpha)\),下面给出一个三次插值函数\(h_c(\alpha)\),如图4(b)所示 \begin{equation} h_c(\alpha)=a\alpha^3+b\alpha^2+h'(0)\alpha+h(0) \end{equation} 其中 \begin{equation} \left[\begin{array}{c} a\\ b \end{array}\right] =\frac{1}{\alpha_{i-1}^2\alpha_i^2(\alpha_i-\alpha_{i-1})} \left[\begin{array}{cc} \alpha_{i-1}^2 & -\alpha_i^2\\ -\alpha_{i-1}^3 & \alpha_i^3 \end{array}\right] \left[\begin{array}{c} h(\alpha_i)-h(0)-h'(0)\alpha_i\\ h(\alpha_{i-1})-h(0)-h'(0)\alpha_{i-1} \end{array}\right] \end{equation} 对\(h_c(\alpha)\)求导,可得极值点\(\alpha_{i+1}\in[0,\alpha_i]\)的形式如下: \begin{equation} \alpha_{i+1}=\frac{-b+\sqrt{b^2-3ah'(0)}}{3a} \end{equation} 利用以上的三次插值函数求解下一个步长的过程不断重复,直到步长满足条件为止。如果出现\(a=0\)的情况,三次插值函数退化为二次插值函数,在实现该算法时需要注意这点。在此过程中,如果\(\alpha_i\)太小或\(\alpha_{i-1}\)与\(\alpha_i\)太接近,需要重置\(\alpha_i=\alpha_{i-1}/2\),该保护措施(safeguards)保证下一次的步长不至于太小[6,5]。为什么会有这个作用呢?1)因为\(\alpha_{i+1}\in[0,\alpha_i]\),所以当\(\alpha_i\)很小时\(\alpha_{i+1}\)也很小;2)当\(\alpha_{i-1}\)与\(\alpha_i\)太靠近时有\(a\approx b\approx\infty\),根据\(\alpha_{i+1}\)的表达式可知\(\alpha_{i+1}\approx 0\)。 但是,在很多情况下,计算函数值后只需付出较小的代价就能顺带计算出导数值或其近似值,这使得我们可以用更精确的三次Hermite多项式[6]进行插值,如图4(c)所示 \begin{equation} \begin{array}{rl} H_3(\alpha)=&\left[1+2\frac{\alpha_i-\alpha}{\alpha_i-\alpha_{i-1}}\right]\left[\frac{\alpha-\alpha_{i-1}}{\alpha_i-\alpha_{i-1}}\right]^2h(\alpha_{i})\\ &+\left[1+2\frac{\alpha-\alpha_{i-1}}{\alpha_i-\alpha_{i-1}}\right]\left[\frac{\alpha_{i+1}-\alpha}{\alpha_i-\alpha_{i-1}}\right]^2h(\alpha_{i-1})\\ &+(\alpha-\alpha_i)\left[\frac{\alpha-\alpha_{i-1}}{\alpha_i-\alpha_{i-1}}\right]^2h'(\alpha_i)\\ &+(\alpha-\alpha_{i-1})\left[\frac{\alpha_i-\alpha}{\alpha_i-\alpha_{i-1}}\right]^2h'(\alpha_{i-1}) \end{array} \end{equation} 其中,三次Hermite多项式满足\(H_3(\alpha_{i-1})=h(\alpha_{i-1})\),\(H_3(\alpha_{i})=h(\alpha_{i})\),\(H_3'(\alpha_{i-1})=h'(\alpha_{i-1})\)和\(H_3'(\alpha_{i})=h'(\alpha_{i})\)。\(H(\alpha)\)的最小值只可能在两侧的端点或者极值点处,令\(H_3'(\alpha)=0\)可得下一个步长: \begin{equation} \alpha_{i+1}=\alpha_i-(\alpha_i-\alpha_{i-1})\left[\frac{h'(\alpha_i)+d_2-d_1}{h'(\alpha_i)-h'(\alpha_{i-1})+2d_2}\right] \end{equation} 其中 \begin{equation} d_1=h'(\alpha_i)+h'(\alpha_{i-1})-3\left[\frac{h(\alpha_i)-h(\alpha_{i-1})}{\alpha_i-\alpha_{i-1}}\right] \end{equation} \begin{equation} d_2=sign(\alpha_i-\alpha_{i-1})\sqrt{d_1^2-h'(\alpha_{i-1})h'(\alpha_i)} \end{equation} 下面给出二次插值及三次插值的Python代码:

下面给出二次插值及三次插值的Python代码:

1 def quadraticInterpolation(a,h,h0,g0): 2 """ 3 #Functionality:Approximate h(a) with a quadratic function and return its stationary point 4 #@Parameters 5 #a:current stepsize 6 #h:a function value about stepsize,h(a)=f(x_k+a*d) 7 #h:h(0)=f(x_k) 8 #g0:h'(0)=f'(0) 9 """ 10 numerator=g0*a**2 11 denominator=2*(g0*a+h0-h) 12 if abs(denominator)<1e-12:#indicates that a is almost 0 13 return a 14 return numerator/denominator

def cubicInterpolation(a0,h0,a1,h1,h,g): """ #Functionality:Approximate h(x) with a cubic function and return its stationary point #This version of cubic interpolation computes h'(x) as few as possible,suitable for the case in which computing derivative is more expensive than computing function values #@Parameters #a0 and a1 are stepsize it previous two iterations #h0:h(a0) #h1:h(a1) #h:h(0)=f(x) #g:h'(0) """ mat=matlib.matrix([[a0**2,-a1**2],[-a0**3,a1**3]]) vec=matlib.matrix([[h1-h-g*a1],[h0-h-g*a0]]) ab=mat*vec/(a0**2*a1**2*(a1-a0)) a=ab[0,0] b=ab[1,0] if abs(a)<1e-12:#a=0 and cubic function is a quadratic one return -g/(2*b) return (-b+np.sqrt(b**2-3*a*g))/(3*a)

def cubicInterpolationHermite(a0,h0,g0,a1,h1,g1): """ #Functionality:Approximate h(a) with a cubic Hermite polynomial function and return its stationary point #This version of cubic interpolation computes h(a) as few as possible,suitable for the case in which computing derivative is easier than computing function values #@Parameters #a0 and a1 are stepsize it previous two iterations #h0:h(a0) #g0:h'(a0) #h1:h(a1) #g1:h'(a1) """ d1=g0+g1-3*(h1-h0)/(a1-a0) d2=np.sign(a1-a0)*np.sqrt(d1**2-g0*g1) res=a1-(a1-a0)*(g1+d2-d2)/(g1-g0+2*d2) return res

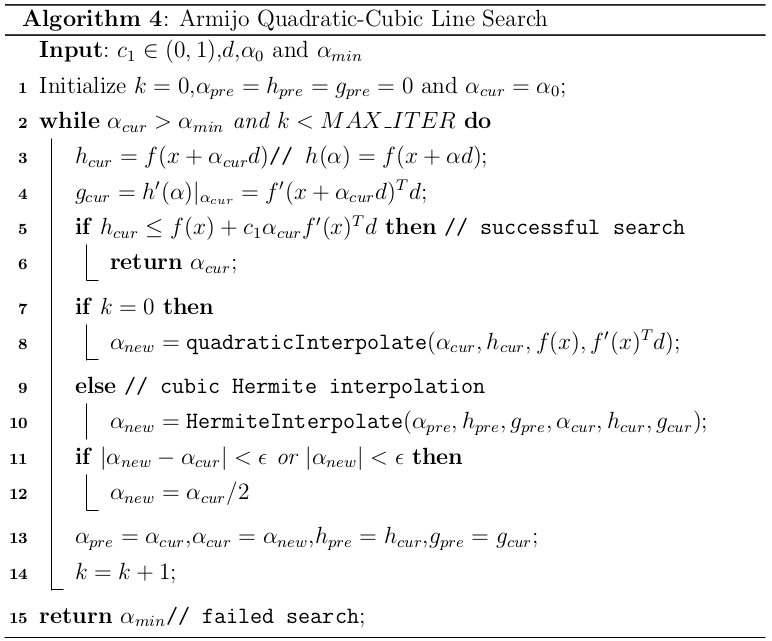

基于Armijo准则的线性搜索的算法描述如下[4]  对应的Armijo线性搜索的Python代码如下:

对应的Armijo线性搜索的Python代码如下:

1 def ArmijoLineSearch(fun,dfun,theta,args,d,a0=1,c1=1e-3,a_min=1e-7,max_iter=1e5): 2 """ 3 #Functionality:Line search under Armijo condition with quadratic and cubic interpolation 4 #@Parameters 5 #fun:objective Function 6 #dfun:compute the gradient of fun 7 #theta:a vector of parameters of the model 8 #args:other variables needed for fun and func 9 #d:search direction 10 #a0:initial stepsize 11 #c1:constant used in Armijo condition 12 #a_min:minimun of stepsize 13 #max_iter:maximum of the number of iterations 14 """ 15 eps=1e-6 16 c1=min(c1,0.5)#c1 should<=0.5 17 a_pre=h_pre=g_pre=0 18 a_cur=a0 19 f_val=fun(theta,args) #h(0)=f(0) 20 g_val=np.sum(dfun(theta,args)*d.T) #h'(0)=f'(x)^Td 21 h_cur=g_cur=0 22 k=0 23 while a_cur>a_min and k<max_iter: 24 h_cur=fun(theta+a_cur*d,args) 25 g_cur=np.sum(dfun(theta+a_cur*d,args)*d.T) 26 if h_cur<=f_val+c1*a_cur*g_val: #meet Armijo condition 27 return a_cur 28 if not k: #k=0,use quadratic interpolation 29 a_new=quadraticInterpolation(a_cur,h_cur,f_val,g_val) 30 else: #k>0,use cubic Hermite interpolation 31 a_new=cubicInterpolationHermite(a_pre,h_pre,g_pre,a_cur,h_cur,g_cur) 32 if abs(a_new-a_cur)<eps or abs(a_new)<eps: #safeguard procedure 33 a_new=a_cur/2 34 a_pre=a_cur 35 a_cur=a_new 36 h_pre=h_cur 37 g_pre=g_cur 38 k+=1 39 return a_min #failed search

Wolfe Search

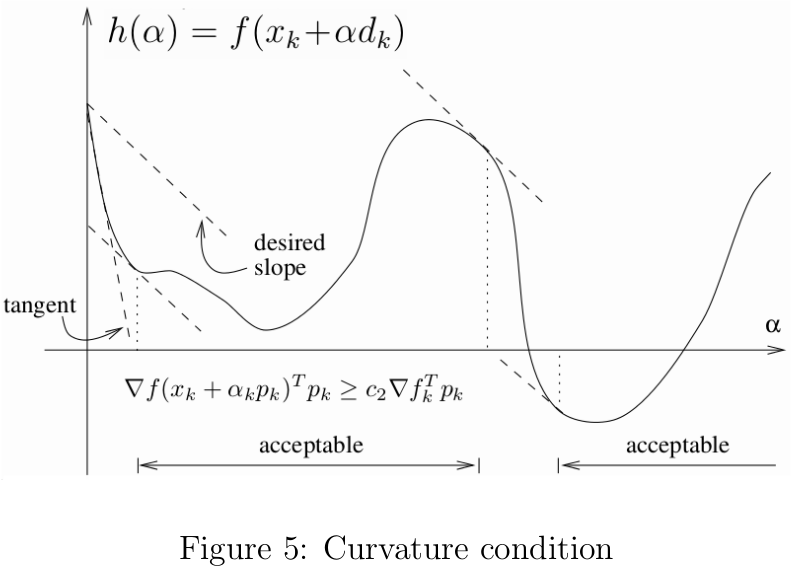

前面说到单凭Armijo准则(不考虑回溯策略)选出的步长可能太小,为了排除这些微小的步长,我们加上曲率的约束条件(如图5所示) \begin{equation} h'(\alpha)=f'(x_k+\alpha d_k)^Td_k\geq c_2f'(x_k)^Td_k \end{equation}其中\(c_2\in(c_1,1)\),\(c_1\)为Armijo准则中的常量。 当\(h'(\alpha)\)为很小的负数甚至为正数时,说明从\(x_k\)沿着\(d_k\)移动\(\alpha\)后的函数梯度方向与搜索方向的夹角接近90度,继续向前移动已经不能很明显减小函数值了,此时可以停止沿着\(d_k\)继续搜索;反之,说明继续减小函数值的空间还是很大的,可以继续向前搜索。Armijo准则与曲率约束两者合起来称为Wolfe准则[5]: \begin{equation} \left\lbrace \begin{array}{rl} f(x_k+\alpha d_k)&\leq f(x_k)+c_1\alpha f'(x_k)^Td_k\\ f'(x_k+\alpha d_k)^Td_k&\geq c_2f'(x_k)^Td_k \end{array} \right. \end{equation} 其中\(0<c_1<c_2<1\)。如图6所示,满足Wolfe准则的步长也许离\(h(\alpha)\)的极值点较远。我们可以修改曲率约束条件使得步长落到\(h(\alpha)\)的极值点的一个较宽的领域中,强Wolfe准则对步长\(\alpha\)的约束如下: \begin{equation} \left\lbrace \begin{array}{rl} f(x_k+\alpha d_k)&\leq f(x_k)+c_1\alpha f'(x_k)^Td_k\\ |f'(x_k+\alpha d_k)^Td_k|&\geq c_2|f'(x_k)^Td_k| \end{array} \right. \end{equation} 强Wolfe准则不允许\(h'(\alpha)\)为太大的正数,可以排除远离极值点的区间。

当\(h'(\alpha)\)为很小的负数甚至为正数时,说明从\(x_k\)沿着\(d_k\)移动\(\alpha\)后的函数梯度方向与搜索方向的夹角接近90度,继续向前移动已经不能很明显减小函数值了,此时可以停止沿着\(d_k\)继续搜索;反之,说明继续减小函数值的空间还是很大的,可以继续向前搜索。Armijo准则与曲率约束两者合起来称为Wolfe准则[5]: \begin{equation} \left\lbrace \begin{array}{rl} f(x_k+\alpha d_k)&\leq f(x_k)+c_1\alpha f'(x_k)^Td_k\\ f'(x_k+\alpha d_k)^Td_k&\geq c_2f'(x_k)^Td_k \end{array} \right. \end{equation} 其中\(0<c_1<c_2<1\)。如图6所示,满足Wolfe准则的步长也许离\(h(\alpha)\)的极值点较远。我们可以修改曲率约束条件使得步长落到\(h(\alpha)\)的极值点的一个较宽的领域中,强Wolfe准则对步长\(\alpha\)的约束如下: \begin{equation} \left\lbrace \begin{array}{rl} f(x_k+\alpha d_k)&\leq f(x_k)+c_1\alpha f'(x_k)^Td_k\\ |f'(x_k+\alpha d_k)^Td_k|&\geq c_2|f'(x_k)^Td_k| \end{array} \right. \end{equation} 强Wolfe准则不允许\(h'(\alpha)\)为太大的正数,可以排除远离极值点的区间。 那么到底是否存在满足强Wolfe准则的步长呢?假设\(h(\alpha)=f(x_k+\alpha d_k)\)连续可微,在整个\(\alpha>0\)的定义域上存在下界。因为\(0<c_1<1\),所以\(l(\alpha)=f(x_k)+\alpha c_1f'(x_k)^Td_k\)必然与\(h(\alpha)\)至少有一个交点。假设\(\alpha'\)为最小的交点对应的步长,则有 \begin{equation} f(x_k+\alpha'd_k)=f(x_k)+\alpha'c_1f'(x_k)^Td_k \end{equation} 那么对于满足\(\alpha\in(0,\alpha')\)的步长必然都满足Armijo准则。根据零值定理,存在\(\alpha''\in(0,\alpha')\)满足 \begin{equation} f(x_k+\alpha'd_k)-f(x_k)=\alpha'f'(x_k+\alpha''d_k)^Td_k \end{equation} 结合上面两个关系式,由\(0<c_1<c_2\)和\(f'(x_k)^Td_k<0\),可得 \begin{equation} f'(x_k+\alpha''d_k)^Td_k=c_1f'(x_k)^Td_k>c_2f'(x_k)^Td_k \end{equation} 由此可知,\(\alpha''\)满足强Wolfe准则。如果\(h(\alpha)\)是一个较为平滑的函数,那么包含\(\alpha''\)的较小领域都会满足强Wolfe准则。 如果在线性搜索过程中利用强Wolfe准则,可以更精确得找到更靠近极值点的步长,在目前线性搜索中用得很多。基于强Wolfe准则的线性搜索包含两个阶段:第一个阶段从初始步长开始,不断增大步长,直到找到一个满足强Wolfe准则的步长或包含该步长的区间为止;第二个阶段是在已知包含满足强Wolfe准则步长区间的基础上,不断缩减区间,直到找到满足强Wolfe准则的步长为止。基于强Wolfe准则的线性搜索算法描述如下5

那么到底是否存在满足强Wolfe准则的步长呢?假设\(h(\alpha)=f(x_k+\alpha d_k)\)连续可微,在整个\(\alpha>0\)的定义域上存在下界。因为\(0<c_1<1\),所以\(l(\alpha)=f(x_k)+\alpha c_1f'(x_k)^Td_k\)必然与\(h(\alpha)\)至少有一个交点。假设\(\alpha'\)为最小的交点对应的步长,则有 \begin{equation} f(x_k+\alpha'd_k)=f(x_k)+\alpha'c_1f'(x_k)^Td_k \end{equation} 那么对于满足\(\alpha\in(0,\alpha')\)的步长必然都满足Armijo准则。根据零值定理,存在\(\alpha''\in(0,\alpha')\)满足 \begin{equation} f(x_k+\alpha'd_k)-f(x_k)=\alpha'f'(x_k+\alpha''d_k)^Td_k \end{equation} 结合上面两个关系式,由\(0<c_1<c_2\)和\(f'(x_k)^Td_k<0\),可得 \begin{equation} f'(x_k+\alpha''d_k)^Td_k=c_1f'(x_k)^Td_k>c_2f'(x_k)^Td_k \end{equation} 由此可知,\(\alpha''\)满足强Wolfe准则。如果\(h(\alpha)\)是一个较为平滑的函数,那么包含\(\alpha''\)的较小领域都会满足强Wolfe准则。 如果在线性搜索过程中利用强Wolfe准则,可以更精确得找到更靠近极值点的步长,在目前线性搜索中用得很多。基于强Wolfe准则的线性搜索包含两个阶段:第一个阶段从初始步长开始,不断增大步长,直到找到一个满足强Wolfe准则的步长或包含该步长的区间为止;第二个阶段是在已知包含满足强Wolfe准则步长区间的基础上,不断缩减区间,直到找到满足强Wolfe准则的步长为止。基于强Wolfe准则的线性搜索算法描述如下5

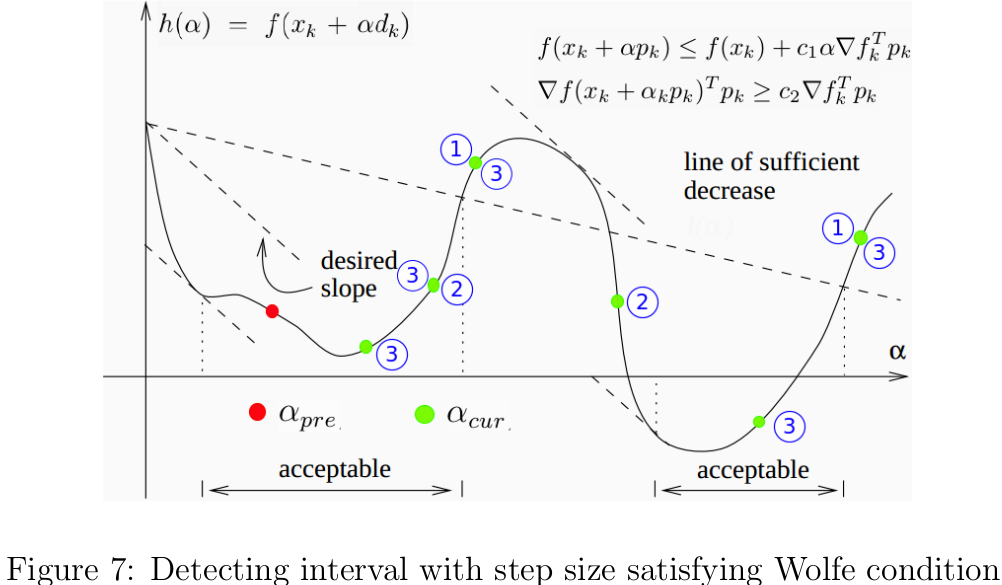

在算法5中,\(\alpha_{new}\)的更新是因为在区间\((\alpha_{pre},\alpha_{cur})\)内没有满足Wolfe准则的步长,所以要选取一个大于\(\alpha_{cur}\)的步长\(\alpha_{new}\)。在算法中,我们是用二次插值函数计算\(\alpha_{new}\)的,所以要求\(0<c_1<0.5\)。当然,也可以用其他方法,比如让\(\alpha_{cur}\)乘以一个大于1的常数,只要能较快找到一个包含满足Wolfe的区间即可。所以,该算法每次尝试的步长\(\alpha_{cur}\)的逐渐递增的;一旦找到了包含满足Wolfe准则的步长的区间,立即调用\(zoom\)函数不断缩短区间,并返回满足Wolfe的步长。根据算法逻辑,我们可以推断出\(\alpha_{pre}\)满足Armijo准则,但违背曲率约束,而且导数为负数。由上述三个条件,可知\(\alpha_{pre}\)必定位于满足Wolfe准则的区间的左侧的呈下降趋势的曲线上,只要\(\alpha_{cur}\)位于该区间的右侧即可。那么怎样判断区间\((\alpha_{pre},\alpha_{cur})\)是否包含满足Wolfe准则的步长呢?下面给出三种\(\alpha_{cur}\)位于该区间的右侧的充分条件:

- \(\alpha_{cur}\)不满足Armijo准则;

- \(h(\alpha_{cur})\geq h(\alpha_{pre})\);

- \(h'(\alpha_{cur})\geq 0\)

这一点结合图7就很容易理解了,我在图中分别用红色和绿色点标注了\(\alpha_{pre}\)和\(\alpha_{cur}\)可能的位置,蓝色带数字的圆圈注明了\(\alpha_{cur}\)满足哪些条件。  基于Wolfe准则的线性搜索Python代码如下:

基于Wolfe准则的线性搜索Python代码如下:

1 def WolfeLineSearch(fun,dfun,theta,args,d,a0=1,c1=1e-4,c2=0.9,a_min=1e-7,max_iter=1e5): 2 """ 3 #Functionality:find a stepsize meeting Wolfe condition 4 #@Parameters 5 #fun:objective Function 6 #dfun:compute the gradient of fun 7 #theta:a vector of parameters of the model 8 #args:other variables needed for fun and func 9 #d:search direction 10 #a0:intial stepsize 11 #c1:constant used in Armijo condition 12 #c2:constant used in curvature condition 13 #a_min:minimun of stepsize 14 #max_iter:maximum of the number of iterations 15 """ 16 eps=1e-16 17 c1=min(c1,0.5) 18 a_pre=0 19 a_cur=a0 20 f_val=fun(theta,args) #h(0)=f(x) 21 g_val=np.sum(dfun(theta,args)*d.T) 22 h_pre=f_val #h'(0)=f'(x)^Td 23 k=0 24 while k<max_iter and abs(a_cur-a_pre)>=eps: 25 h_cur=fun(theta+a_cur*d,args) #f(x+ad) 26 if h_cur>f_val+c1*a_cur*g_val or h_cur>=h_pre and k>0: 27 return zoom(fun,dfun,theta,args,d,a_pre,a_cur,c1,c2) 28 g_cur=np.sum(dfun(theta+a_cur*d,args)*d.T) 29 if abs(g_cur)<=-c2*g_val:#satisfy Wolfe condition 30 return a_cur 31 if g_cur>=0: 32 return zoom(fun,dfun,theta,args,d,a_pre,a_cur,c1,c2) 33 a_new=quadraticInterpolation(a_cur,h_cur,f_val,g_val) 34 a_pre=a_cur 35 a_cur=a_new 36 h_pre=h_cur 37 k+=1 38 return a_min

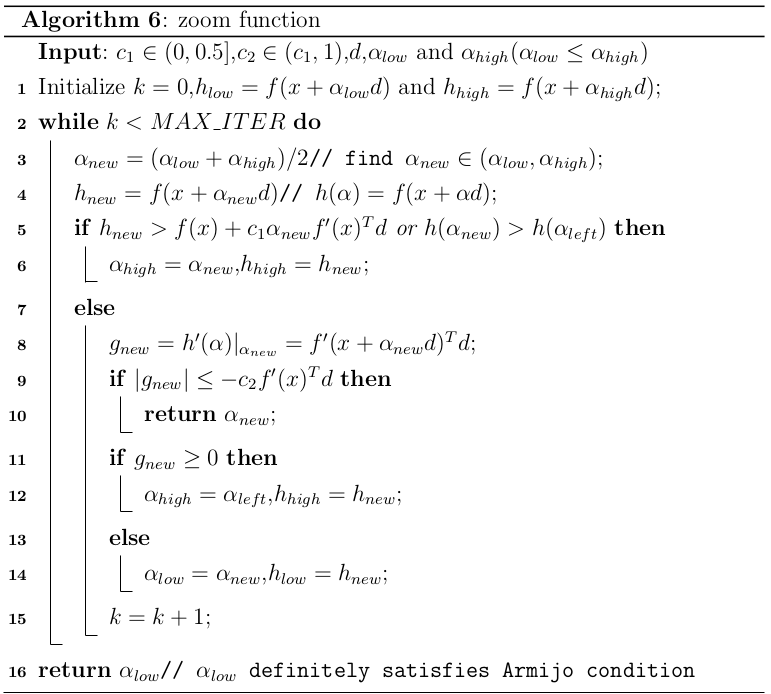

zoom函数的算法描述见6。zoom函数中需要传入搜寻区间\([\alpha_{low},\alpha_{high}]\),其中\(\alpha_{low}<\alpha_{high}\)。本文中的zoom函数与文献[5]中的内容略有差异,但是本文的zoom函数思路更简单和清晰。由算法5中分析得到的调用zoom函数的条件,知道\(\alpha_{low}\)必须满足Armijo准则,且位于所有满足Wolfe准则的步长的左侧。我们先取\([\alpha_{low},\alpha_{high}]\)区间的中值作为下一个测试的步长\(\alpha_{new}\),如果恰好满足Wolfe准则,则直接返回。如果\(\alpha_{new}\)违反Armijo准则或大于\(h(\alpha_{low})\),显然区间\([\alpha_{low},\alpha_{new}]\)包含满足Wolfe准则的步长,因此用\(\alpha_{new}\)替换\(\alpha_{high}\)以缩短区间长度;否则,\(\alpha_{new}\)必然也满足Armijo准则,如果\(h'(\alpha_{new})>0\),则\(\alpha_{new}\)与\(\alpha_{high}\)都在满足Wolfe准则的区间右侧,用\(\alpha_{new}\)替代\(\alpha_{high}\),反之则用\(\alpha_{new}\)替代\(\alpha_{low}\)。上述的迭代过程不断缩短步长,知道求得满足Wolfe准则的步长为止。如果在有限迭代次数内搜索失败,则返回必然满足Armijo准则的步长\(\alpha_{low}\)。

zoom函数对应的Python代码如下:

1 def zoom(fun,dfun,theta,args,d,a_low,a_high,c1=1e-3,c2=0.9,max_iter=1e4): 2 """ 3 #Functionality:enlarge the interval to find a stepsize meeting Wolfe condition 4 #@Parameters 5 #fun:objective Function 6 #dfun:compute the gradient of fun 7 #theta:a vector of parameters of the model 8 #args:other variables needed for fun and func 9 #d:search direction 10 #[a_low,a_high]:interval containing a stepsize satisfying Wolfe condition 11 #c1:constant used in Armijo condition 12 #c2:constant used in curvature condition 13 #max_iter:maximum of the number of iterations 14 """ 15 if a_low>a_high: 16 print('low:%f,high:%f'%(a_low,a_high)) 17 raise Exception('Invalid interval of stepsize in zoom procedure') 18 eps=1e-16 19 h=fun(theta,args) #h(0)=f(x) 20 g=np.sum(dfun(theta,args)*d.T) #h'(0)=f'(x)^Td 21 k=0 22 h_low=fun(theta+a_low*d,args) 23 h_high=fun(theta+a_high*d,args) 24 if h_low>h+c1*a_low*g: 25 raise Exception('Left endpoint violates Armijo condition in zoom procedure') 26 while k<max_iter and abs(a_high-a_low)>=eps: 27 a_new=(a_low+a_high)/2 28 h_new=fun(theta+a_new*d,args) 29 if h_new>h+c1*a_new*g or h_new>h_low: 30 a_high=a_new 31 h_high=h_new 32 else: 33 g_new=np.sum(dfun(theta+a_new*d,args)*d.T) 34 if abs(g_new)<=-c2*g: #satisfy Wolfe condition 35 return a_new 36 if g_new*(a_high-a_low)>=0: 37 a_high=a_new 38 h_high=h_new 39 else: 40 a_low=a_new 41 h_low=h_new 42 k+=1 43 return a_low #a_low definitely satisfy Armijo condition

Newton's Method

牛顿法(Newton's method)[8]以迭代方式求解函数的根,其基本思想是从一个初始点出发,不断在当前点\(x_k\)处用切线近似函数\(f(x)\),并求得该切线与\(x\)轴的交点作为下一次的迭代初始点\(x_{k+1}\),直到找到\(f(x)=0\)的近似解为止。Newton法可用于二次可微函数\(f(x)\)的最优化问题。 在\(x_k\)处用二阶泰勒展开来对\(f(x_k)\)其进行逼近。 \begin{equation} f(x_{k}+\triangle x)\approx f(x_k)+f'(x_k)\triangle x+\frac{1}{2}{\triangle x}^TB_k\triangle x \end{equation} 现在,我们的目标是在\(x^{k}\)附近求得使\(f(x)\)取得极小值的\(\triangle x\)。将上式对\(\triangle x\)求导可得函数\(f'(x)\)在\(x_{k+1}=x_k+\triangle x\)处的线性近似如下: \begin{equation} f'(x_{k+1})=f'(x_k)+B_k(x_{k+1}-x_k) \end{equation} 其中\(B_k=\nabla^2f(x_k)\)为\(f(x)\)在\(x_k\)处对应的Hessian矩阵。由于函数的极值点一般都对应\(f'(x)=0\),令\(f'(x_{k+1})=0\)并化简可得迭代公式为: \begin{equation} x_{k+1}=x_k-B_k^{-1}f'(x_k) \end{equation} 牛顿迭代法收敛速度很快,对于二次函数可以一次性找到最优解。但用于求解优化问题时,需要付出很大的代价求得函数的一阶导数、二阶导数及其逆矩阵。此外,有的函数还存在不可导、Hessian矩阵不可逆、迭代点之间存在循环(即\(x_{k+t}=x_k\))等情形,这些都成为了牛顿法的应用障碍。牛顿迭代法用于求解极值点\(f'(x)=0\)的步骤见算法7。当然,也可用牛顿法求最优步长,只需将算法7中的函数\(f(x)\)替换为关于步长的函数\(h(\alpha)\)即可。

Quasi-Newton Method

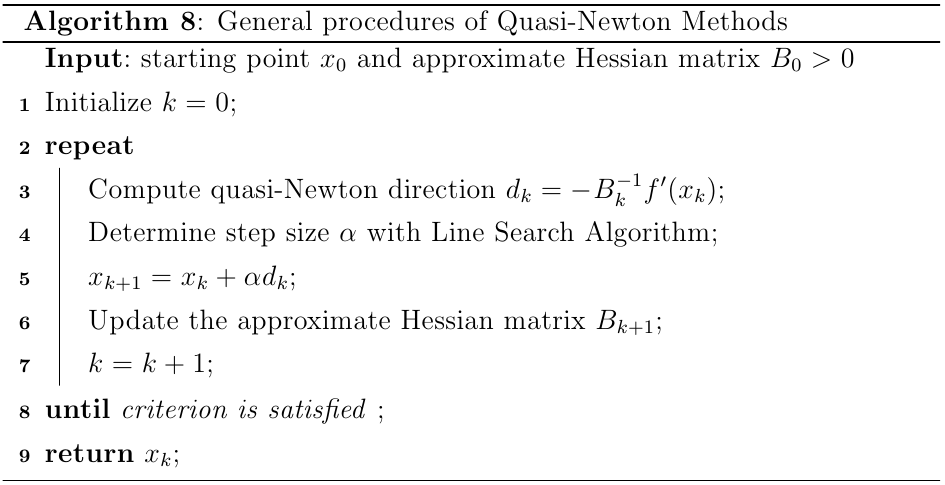

拟牛顿(Quasi-Newton)[11]算法可用于求解函数的局部最优解,也就是那些导数为0的驻点。牛顿法用于解决优化问题时,事先假设原函数可用二次函数近似,然后用一阶和二阶导数寻找局部最优解。而在拟牛顿算法中,不需要准确计算Hessian矩阵,取而代之的是运用下面的拟牛顿条件分析连续两个梯度向量得到的近似值矩阵\(B_k\): \begin{equation} f'(x_{k+1})-f'(x_k)\approx B_{k+1}(x_{k+1}-x_k) \end{equation} 拟牛顿算法的算法流程见8,其基本思想是利用矩阵\(B_k\)计算牛顿方向的近似值\(d_k\)。各种拟牛顿算法的主要差异在于近似Hessian矩阵的更新策略,表1列出了部分主流的拟牛顿算法的迭代更新规则,其中\(s_k=x_{k+1}-x_k=-\alpha_kB_k^{-1}f'(x_k)\),\(y_k=f'(x_{k+1})-f'(x_k)\)。

拟牛顿算法中最常用的是BFGS,其针对有限内存的机器的算法变种L-BFGS[4]在机器学习领域又备受青睐。BFGS需要存储\(n\times n\)的矩阵\(H_k\)用于近似Hessian矩阵的逆矩阵;而L-BFGS仅需要存储过去\(m\)(\(m\)一般小于10)对\(n\)维的更新数据\((x,f'(x_i))\)即可。L-BFGS的空间复杂度为线性,特别适用于变量非常多的优化问题。BFGS的算法描述很容易写出来,如下:

拟牛顿算法中最常用的是BFGS,其针对有限内存的机器的算法变种L-BFGS[4]在机器学习领域又备受青睐。BFGS需要存储\(n\times n\)的矩阵\(H_k\)用于近似Hessian矩阵的逆矩阵;而L-BFGS仅需要存储过去\(m\)(\(m\)一般小于10)对\(n\)维的更新数据\((x,f'(x_i))\)即可。L-BFGS的空间复杂度为线性,特别适用于变量非常多的优化问题。BFGS的算法描述很容易写出来,如下:

1 def BFGS(fun,dfun,theta,args,H=None,mode=0,eps=1e-12,max_iter=1e4): 2 """ 3 #Functionality:find the minimum of objective function f(x) 4 #@Parameters 5 #fun:objective function f(x) 6 #dfun:compute the gradient of f(x) 7 #args:parameters needed by fun and dfun 8 #theta:start vector of parameters of the model 9 #H:initial inverse Hessian approximation 10 #mode:index of line search algorithm 11 """ 12 x_pre=x_cur=theta 13 g=dfun(x_cur,args) 14 I=matlib.eye(theta.size) 15 if not H:#initialize H as an identity matrix 16 H=I 17 k=0 18 while k<max_iter and np.sum(np.abs(g))>eps: 19 d=-g*H 20 step=LineSearch(fun,dfun,x_pre,args,d,1,mode) 21 x_cur=x_pre+step*d 22 s=step*d 23 y=dfun(x_cur,args)-dfun(x_pre,args) 24 ys=np.sum(y*s.T) 25 if abs(ys)<eps: 26 return x_cur 27 change=(ys+np.sum(y*H*y.T))*(s.T*s)/(ys**2)-(H*y.T*s+s.T*y*H)/ys 28 H+=change 29 g=dfun(x_cur,args) 30 x_pre=x_cur 31 k+=1 32 return x_cur

下面我们分析如何构造下L-BFGS的算法[10,13]。假设我们现在处于优化过程的第\(k(k\geq m)\)次迭代,参数为\(x_k\),梯度\(g_k=f'(x_k)\),已经保存的\(m\)条更新数据为\(s_k=x_{k+1}-x_k\)及\(y_k=g_{k+1}-g_k\)。我们最终需要计算的是搜索方向\(d_k=-H_kg_k\),于是令\(V_k=(I-\rho_ky_ks_k^T)\),\(\rho_k=1/(y_k^Ts_k)\),将表1中BFGS的\(H_{k}\)的更新规则展开,我们可以得到下式: \begin{equation} \begin{array}{rl} &H_{k}g_k\\ =&V_{k-1}^TH_{k-1}V_{k-1}g_k+s_{k-1}\rho_{k-1}s_{k-1}^Tg_k\\ =&V_{k-1}^TV_{k-2}^TH_{k-2}V_{k-2}V_{k-1}g_k+V_{k-1}^Ts_{k-2}\rho_{k-2}s_{k-2}^TV_{k-1}g_k+s_{k-1}\rho_{k-1}s_{k-1}^Tg_k\\ =&(V_{k-1}^TV_{k-2}^T\cdots V_{k-m}^T)H_{k-m}(V_{k-m}\cdots V_{k-2}V_{k-1})g_k\\ &+(V_{k-1}^TV_{k-2}^T\cdots V_{k-m+1}^T)s_{k-m}\rho_{k-m}s_{k-m}^T(V_{k-m+1}\cdots V_{k-1}V_k)g_k\\ &+(V_{k-1}^TV_{k-2}^T\cdots V_{k-m+2}^T)s_{k-m+1}\rho_{k-m+1}s_{k-m+1}^T(V_{k-m+2}\cdots V_{k-2}V_{k-1})g_k\\ &+ \cdots\\ &+V_{k-1}^Ts_{k-2}\rho_{k-2}s_{k-2}^TV_{k-1}g_k\\ &+ s_{k-1}\rho_{k-1}s_{k-1}^Tg_k \end{array} \end{equation} 上式非常有规律,这就为迭代求解奠定了很好的基础。我们令\(q_0=g_k\),则当\(1\leq i\leq m\)时有 \begin{equation} q_i=(V_{k-i}\cdots V_{k-2}V_{k-1})g_k \end{equation} \begin{equation} a_i=\rho_{k-i}s_{k-i}^Tq_{i-1} \end{equation} 那么可以得到如下的迭代规则: \begin{equation} \begin{array}{rl} q_i&=V_{k-i+1}q_{i-1}\\ &=q_{i-1}-\rho_{k-i+1}y_{k-i+1}s_{k-i+1}^Tq_{i-1}\\ &=q_{i-1}-a_{i-1}y_{k-i+1} \end{array} \end{equation} 到目前为止,我们已经可以求解出\(H_{k}g_k\)所有项的右半部分,那左半部分如何处理?在这里采用不断提前最左端的公因式的方法完成迭代过程: \begin{equation} H_{k}g_k=P_1=V_{k-1}^TP_{2}+s_{k-1}a_1 \end{equation} \begin{equation} P_{2}=V_{k-2}^TP_{3}+s_{k-2}a_2 \end{equation} 重复该过程,很快就可以发现规律: \begin{equation} \begin{array}{rl} P_{i}&=V_{k-i}^TP_{i+1}+s_{k-i}a_i\\ &=P_{i+1}+s_{k-i}(a_i-\rho_{k-i}y_{k-i}^TP_{i+1}) \end{array} \end{equation} 其中\(P_{m+1}=H_{k-m}q_m\)。 根据上述分析,我们可以得到L-BFGS的求解搜索方向的算法9。根据算法9的整个流程,可知通过两个循环\(m\)次的迭代运算即可出计算当前的搜索方向,需要存储历史数据\(\{s_{k-i},y_{k-i}|i=1,\cdots,m\}\)和临时数据\(\{a_{k-i}|i=1,\cdots,m\}\),所以算法的时间和空间复杂度均为\(O(mn)\)。如果目前处于迭代的初期,已有的历史数据少于\(m\),那么就用这些已有的数据,在后续迭代过程中不断新增历史数据即可;若干当前的迭代次数不小于\(m\),那么在每次计算出搜索方向后,即可用\(s_k\)和\(y_k\)替换\(s_{k-m}\)和\(y_{k-m}\)组成新的\(m\)对历史更新数据。

在算法9中,需要给出矩阵\(H_{k-m}\)。在第一次迭代时,\(H_{k-m}\)被初始化为单位阵,在随后的迭代过程中\(H_{k-m}=\gamma_kI\),其中 \begin{equation} \gamma_k=\frac{y_{k-1}^Ts_{k-1}}{y_{k-1}^Ty_{k-1}} \end{equation} 另外,在内存受限的系统中存储\(n\times n\)不是很现实的想法。用上述的方法,我们仅需存储一个标量\(\gamma_k\)即可,这是一个简单却又高效的做法[13]。 最后,附上L-BFGS的Python版本代码:

1 def LBFGS(fun,dfun,theta,args,mode=0,eps=1e-12,max_iter=1e4): 2 """ 3 #Functionality:find the minimum of objective function f(x) with LBFGS 4 #@Parameters 5 #fun:objective function f(x) 6 #dfun:compute the gradient of f(x) 7 #args:parameters needed by fun and dfun 8 #theta:start vector of parameters of the model 9 #H:initial inverse Hessian approximation 10 #mode:index of line search algorithm 11 """ 12 x_pre=x_cur=theta 13 s_arr=[] 14 y_arr=[] 15 Hscale=1 16 k=0 17 while k<max_iter: 18 g=dfun(x_cur,args) 19 d=LBFGSSearchDirection(y_arr,s_arr,Hscale,-g) 20 step=LineSearch(fun,dfun,x_pre,args,d,1,mode) 21 s=step*d 22 x_cur=x_pre+s 23 y=dfun(x_cur,args)-dfun(x_pre,args) 24 ys=np.sum(y*s.T) 25 if np.sum(np.abs(s))<eps: 26 return x_cur 27 x_pre=x_cur 28 k+=1 29 y_arr,s_arr,Hscale=LBFGSUpdate(y,s,y_arr,s_arr) 30 return x_cur 31 32 33 def LBFGSSearchDirection(y_arr,s_arr,Hscale,g): 34 """ 35 #Functionality:estimate search direction using with LBFGS 36 #@Parameters 37 #y_arr:m*dim matrix,where y_arr[i,:]=f'(x_{i+1})-f'(x_i) 38 #s_arr:m*dim matrix,where s_arr[i,:]=x_{k+1}-x_k 39 #Hscale:a scale to initilize the inverse of Hessian matrix 40 #g:a row vector representing -f'(x_{k}) 41 """ 42 histNum=len(s_arr)#number of update data stored 43 if not histNum: 44 return g 45 dim=s_arr[0].size 46 a_arr=[0 for i in range(histNum)] 47 rho=[0 for i in range(histNum)] 48 q=g 49 for i in range(1,histNum+1): 50 s=s_arr[histNum-i] 51 y=y_arr[histNum-i] 52 rho[histNum-i]=1/np.sum(s*y.T) 53 a_arr[i-1]=rho[histNum-i]*np.sum(s*q.T) 54 q-=(a_arr[i-1]*y) 55 P=Hscale*q 56 for i in range(histNum,0,-1): 57 y=y_arr[histNum-i] 58 s=s_arr[histNum-i] 59 beta=rho[histNum-i]*np.sum(y*P.T) 60 P+=s*(a_arr[i-1]-beta) 61 return P 62 63 64 def LBFGSUpdate(y,s,oldy,olds,m=1e2): 65 """ 66 #Functionality:refresh the historical update data 67 #@Parameters 68 #y:f'(x_{k+1})-f'(x_k) 69 #s:x_{k+1}-x_k 70 #oldy:[y0,y1,...],which is a list 71 #olds:[s0,s1,...],which is a list 72 #m:number of historical data to store(default:100) 73 """ 74 eps=1e-12 75 Hscale=np.sum(y*s.T/y*y.T) #a scale to initialize H_{k-m} 76 if Hscale<eps:#skip update 77 return oldy,olds,Hscale 78 79 cur_m=len(oldy) 80 if cur_m>=m: 81 oldy.pop(0) 82 olds.pop(0) 83 oldy.append(copy.deepcopy(y)) 84 olds.append(copy.deepcopy(s)) 85 return oldy,olds,Hscale

References

[1] Backtracking line search. http://en.wikipedia.org/wiki/Backtracking_line_search.

[2] Bisection method. http://en.wikipedia.org/wiki/Bisection_method.

[3] Gradient descent. http://en.wikipedia.org/wiki/Gradient_descent.

[4] Limited-memory bfgs. http://en.wikipedia.org/wiki/Limited-memory_BFGS.

[5] Line search methods. http://pages.cs.wisc.edu/~ferris/cs730/chap3.pdf.

[6] Line search methods:step length selection. http://terminus.sdsu.edu/SDSU/Math693a_f2013/Lectures/06/lecture.pdf.

[7] Math 408a line search methods. https://www.math.washington.edu/~burke/crs/408/lectures/L7-line-search.pdf.

[8] Newton’s method. http://en.wikipedia.org/wiki/Newton%27s_method.

[9] Nonlinear programming algorithms. http://www.math.bme.hu/~bog/GlobOpt/Chapter5.pdf.

[10] Oerview of quasi-newton optimization methods. https://homes.cs.washington.edu/~galen/files/quasi-newton-notes.pdf.

[11] Quasi-newton method. http://en.wikipedia.org/wiki/Quasi-Newton_method.

[12] Unconstrained minimization. http://www.ing.unitn.it/~bertolaz/2-teaching/2011-2012/AA-2011-2012-OPTIM/lezioni/slides-mND.pdf.

[13] Dong C Liu and Jorge Nocedal. On the limited memory bfgs method for large scale optimization. Mathematical programming, 45(1-3):503–528,1989.

作者:JeromeWang

邮箱:yunfeiwang@hust.edu.cn

出处:http://www.cnblogs.com/jeromeblog/

本文版权归作者所有,欢迎转载,未经作者同意必须保留此段声明,且在文章页面明显位置给出原文连接,否则保留追究法律责任的权利。

浙公网安备 33010602011771号

浙公网安备 33010602011771号