Configuring workflow.xml for a MapReduce program in Oozie (new-API version)

<!--
  Licensed to the Apache Software Foundation (ASF) under one or more
  contributor license agreements. See the NOTICE file distributed with
  this work for additional information regarding copyright ownership.
  The ASF licenses this file to you under the Apache License, Version 2.0
  (the "License"); you may not use this file except in compliance with
  the License. You may obtain a copy of the License at

      http://www.apache.org/licenses/LICENSE-2.0

  Unless required by applicable law or agreed to in writing, software
  distributed under the License is distributed on an "AS IS" BASIS,
  WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
  See the License for the specific language governing permissions and
  limitations under the License.
-->
<!-- The workflow name is user-defined. -->
<workflow-app xmlns="uri:oozie:workflow:0.5" name="mr-wordcount-wf">
    <start to="mr-node-wordcount"/>
    <!-- The action (node) name is user-defined; it must match the "to" attribute of <start>. -->
    <action name="mr-node-wordcount">
        <map-reduce>
            <job-tracker>${jobTracker}</job-tracker>
            <name-node>${nameNode}</name-node>
            <!-- Delete the output directory before the job runs. -->
            <prepare>
                <delete path="${nameNode}/${oozieDataRoot}/${outputDir}"/>
            </prepare>
            <configuration>
                <!-- New-API switches: tell Oozie to use the new MapReduce API. -->
                <property>
                    <name>mapred.mapper.new-api</name>
                    <value>true</value>
                </property>
                <property>
                    <name>mapred.reducer.new-api</name>
                    <value>true</value>
                </property>
                <!-- From here on, old-style keys are replaced by their new-API names.
                     These properties correspond to what the MapReduce driver class sets. -->
                <!-- Queue name. -->
                <property>
                    <name>mapreduce.job.queuename</name>
                    <value>${queueName}</value>
                </property>
                <!-- Mapper class. A nested class is written with "$": WordCount$WordCountMapper. -->
                <property>
                    <name>mapreduce.job.map.class</name>
                    <value>com.it.hadoop.senior.mapreduce.WordCount$WordCountMapper</value>
                </property>
                <!-- Reducer class. -->
                <property>
                    <name>mapreduce.job.reduce.class</name>
                    <value>com.it.hadoop.senior.mapreduce.WordCount$WordCountReducer</value>
                </property>
                <!-- Key and value types of the map output. -->
                <property>
                    <name>mapreduce.map.output.key.class</name>
                    <value>org.apache.hadoop.io.Text</value>
                </property>
                <property>
                    <name>mapreduce.map.output.value.class</name>
                    <value>org.apache.hadoop.io.IntWritable</value>
                </property>
                <!-- Key and value types of the job output. -->
                <property>
                    <name>mapreduce.job.output.key.class</name>
                    <value>org.apache.hadoop.io.Text</value>
                </property>
                <property>
                    <name>mapreduce.job.output.value.class</name>
                    <value>org.apache.hadoop.io.IntWritable</value>
                </property>
                <!-- Input and output directories. -->
                <property>
                    <name>mapreduce.input.fileinputformat.inputdir</name>
                    <value>${nameNode}/${oozieDataRoot}/${inputDir}</value>
                </property>
                <property>
                    <name>mapreduce.output.fileoutputformat.outputdir</name>
                    <value>${nameNode}/${oozieDataRoot}/${outputDir}</value>
                </property>
            </configuration>
        </map-reduce>
        <ok to="end"/>
        <error to="fail"/>
    </action>
    <kill name="fail">
        <message>Map/Reduce failed, error message[${wf:errorMessage(wf:lastErrorNode())}]</message>
    </kill>
    <end name="end"/>
</workflow-app>

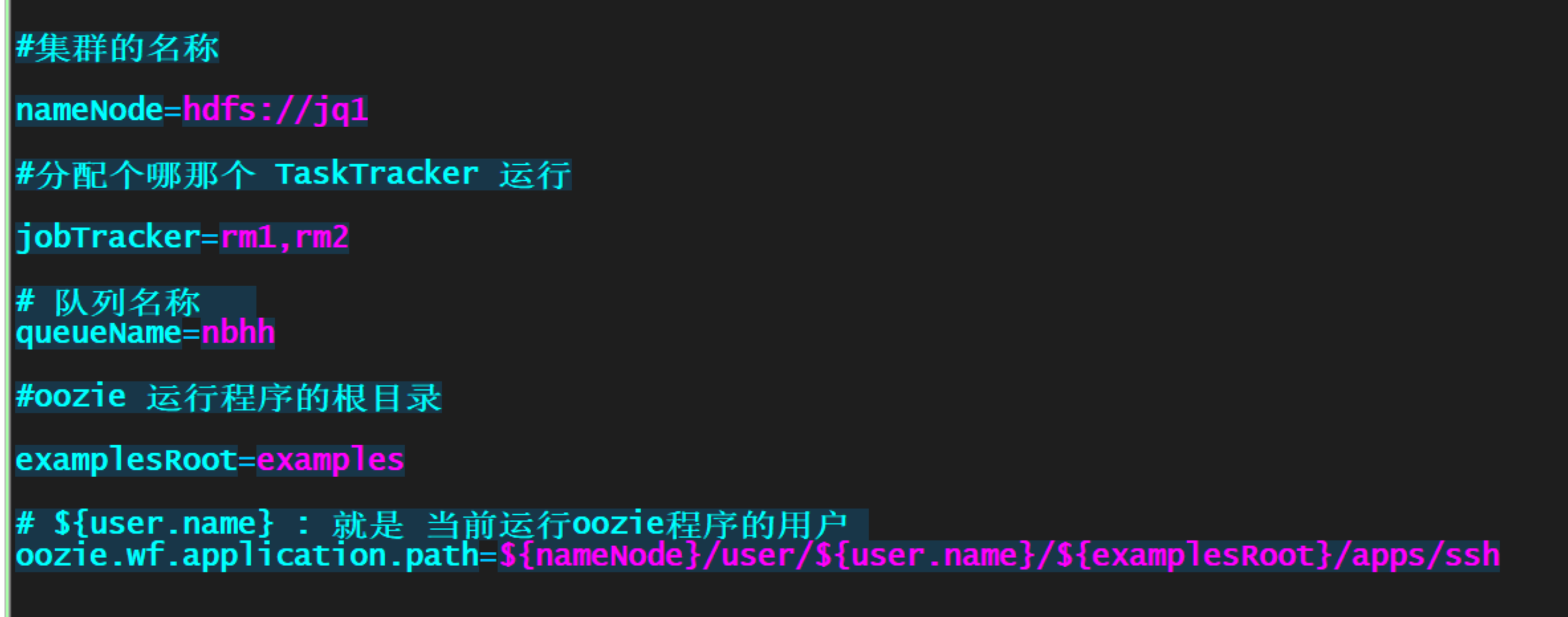

job.properties configuration in detail:
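The workflow above references six variables: `${nameNode}`, `${jobTracker}`, `${queueName}`, `${oozieDataRoot}`, `${inputDir}` and `${outputDir}`. A minimal sketch of a job.properties that defines them might look like the following. Every value here (host names, ports, directory names, the application path) is a placeholder assumed for illustration and is not taken from the original post; replace each one with the settings of your own cluster.

```properties
# Hypothetical values throughout; adjust to your cluster.

# HDFS NameNode URI, used as the prefix of every path in workflow.xml
nameNode=hdfs://namenode.example.com:8020
# ResourceManager (or JobTracker) address
jobTracker=resourcemanager.example.com:8032
# Queue the MapReduce job is submitted to (mapreduce.job.queuename)
queueName=default

# Data root relative to ${nameNode}; no leading slash, because
# workflow.xml already writes ${nameNode}/${oozieDataRoot}/...
oozieDataRoot=user/oozie/oozie-datas
# Input and output directories under the data root
inputDir=mr-wordcount-wf/input
outputDir=mr-wordcount-wf/output

# HDFS directory that holds workflow.xml and lib/ with the job jar
oozie.wf.application.path=${nameNode}/user/oozie/oozie-apps/mr-wordcount-wf
```

Because the workflow's `<prepare>` step deletes `${nameNode}/${oozieDataRoot}/${outputDir}` before each run, it is worth double-checking that `outputDir` does not point at a directory containing data you want to keep.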

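Author: IT_BULL

Source: http://www.cnblogs.com/itBulls/

Copyright of this article is shared by the author and cnblogs (博客园). Reposting is welcome, but unless the author agrees otherwise, this notice must be retained and a link to the original article must be shown prominently on the page; otherwise the author reserves the right to pursue legal action.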
