Kubernetes Series 01: Installing a 1.18.2 Cluster

1. Basic configuration

Set the hostnames

# On 172.17.32.23 (running bash afterwards starts a new shell that shows the new hostname):
hostnamectl set-hostname k8s-master01
bash
# On 172.17.32.38:
hostnamectl set-hostname k8s-master02
bash
# On 172.17.32.43:
hostnamectl set-hostname k8s-master03
bash
# On 172.17.32.32:
hostnamectl set-hostname k8s-node01
bash
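The four commands differ only in the IP-to-hostname mapping, so the mapping can be sketched as a small lookup. This is an illustrative sketch only: the function name `hostname_for_ip` is hypothetical, and the addresses are the ones used in this article.

```shell
#!/bin/bash
# Hypothetical helper: map a node IP from this article to its hostname.
hostname_for_ip() {
  case "$1" in
    172.17.32.23) echo "k8s-master01" ;;
    172.17.32.38) echo "k8s-master02" ;;
    172.17.32.43) echo "k8s-master03" ;;
    172.17.32.32) echo "k8s-node01" ;;
    *) echo "unknown IP: $1" >&2; return 1 ;;
  esac
}

# Example usage on a node (commented out because it changes the hostname):
# hostnamectl set-hostname "$(hostname_for_ip 172.17.32.23)"
```

An unknown address makes the function return a non-zero status, so a typo does not silently set an empty hostname.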

Configure hosts resolution

Run on every node:

cat <<EOF >>/etc/hosts
172.17.32.23 k8s-master01
172.17.32.38 k8s-master02
172.17.32.43 k8s-master03
172.17.32.32 k8s-node01
EOF
echo "127.0.0.1 $(hostname)" >> /etc/hosts
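Appending with `>>` adds the same lines again every time the commands are re-run. A minimal sketch of an idempotent alternative is shown here; the helper name `add_host_entry` is hypothetical and the default file path is an assumption.

```shell
#!/bin/bash
# Hypothetical helper: append "IP NAME" to a hosts file only if NAME does not
# already appear as a whole word on a non-comment line.
add_host_entry() {
  local ip=$1 name=$2 file=${3:-/etc/hosts}
  if ! grep -v '^[[:space:]]*#' "$file" 2>/dev/null | grep -qwF -- "$name"; then
    echo "$ip $name" >> "$file"
  fi
}

# Example usage (commented out because it edits /etc/hosts):
# add_host_entry 172.17.32.23 k8s-master01
# add_host_entry 172.17.32.38 k8s-master02
```

Running the same call twice leaves a single entry in the file.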

2. Install Docker

Create a directory to hold the files

mkdir /application

cat /application/install-docker.sh

#!/bin/bash

# Run on both master and worker nodes

# Install Docker
# Reference documentation:
# https://docs.docker.com/install/linux/docker-ce/centos/
# https://docs.docker.com/install/linux/linux-postinstall/

# Remove old versions
yum remove -y docker \
  docker-client \
  docker-client-latest \
  docker-ce-cli \
  docker-common \
  docker-latest \
  docker-latest-logrotate \
  docker-logrotate \
  docker-selinux \
  docker-engine-selinux \
  docker-engine

# Set up the yum repository
yum install -y yum-utils \
  device-mapper-persistent-data \
  lvm2

yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

# Install and start Docker
yum install -y docker-ce-19.03.11 docker-ce-cli-19.03.11 containerd.io-1.2.13

mkdir -p /etc/docker

cat > /etc/docker/daemon.json <<EOF
{
  "registry-mirrors": ["https://registry.cn-hangzhou.aliyuncs.com"],
  "exec-opts": ["native.cgroupdriver=systemd"],
  "log-driver": "json-file",
  "log-opts": {
    "max-size": "100m"
  },
  "storage-driver": "overlay2",
  "storage-opts": [
    "overlay2.override_kernel_check=true"
  ]
}
EOF

mkdir -p /etc/systemd/system/docker.service.d

# Restart Docker

systemctl daemon-reload

systemctl enable docker

systemctl restart docker

# Install nfs-utils
# nfs-utils must be installed before NFS network storage can be mounted
yum install -y nfs-utils
yum install -y wget

# Disable the firewall
systemctl stop firewalld
systemctl disable firewalld

# Disable SELinux
setenforce 0
sed -i "s/SELINUX=enforcing/SELINUX=disabled/g" /etc/selinux/config

# Disable swap
swapoff -a
yes | cp /etc/fstab /etc/fstab_bak
grep -v swap /etc/fstab_bak > /etc/fstab

# Edit /etc/sysctl.conf
# If a setting already exists, update it
sed -i "s#^net.ipv4.ip_forward.*#net.ipv4.ip_forward=1#g" /etc/sysctl.conf
sed -i "s#^net.bridge.bridge-nf-call-ip6tables.*#net.bridge.bridge-nf-call-ip6tables=1#g" /etc/sysctl.conf
sed -i "s#^net.bridge.bridge-nf-call-iptables.*#net.bridge.bridge-nf-call-iptables=1#g" /etc/sysctl.conf
sed -i "s#^net.ipv6.conf.all.disable_ipv6.*#net.ipv6.conf.all.disable_ipv6=1#g" /etc/sysctl.conf
sed -i "s#^net.ipv6.conf.default.disable_ipv6.*#net.ipv6.conf.default.disable_ipv6=1#g" /etc/sysctl.conf
sed -i "s#^net.ipv6.conf.lo.disable_ipv6.*#net.ipv6.conf.lo.disable_ipv6=1#g" /etc/sysctl.conf
sed -i "s#^net.ipv6.conf.all.forwarding.*#net.ipv6.conf.all.forwarding=1#g" /etc/sysctl.conf

# The setting may be missing, so append it as well
echo "net.ipv4.ip_forward = 1" >> /etc/sysctl.conf
echo "net.bridge.bridge-nf-call-ip6tables = 1" >> /etc/sysctl.conf
echo "net.bridge.bridge-nf-call-iptables = 1" >> /etc/sysctl.conf
echo "net.ipv6.conf.all.disable_ipv6 = 1" >> /etc/sysctl.conf
echo "net.ipv6.conf.default.disable_ipv6 = 1" >> /etc/sysctl.conf
echo "net.ipv6.conf.lo.disable_ipv6 = 1" >> /etc/sysctl.conf
echo "net.ipv6.conf.all.forwarding = 1" >> /etc/sysctl.conf

# Apply the settings
sysctl -p

# Configure the Kubernetes yum repository
cat <<EOF > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg
       http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

# Remove old versions
yum remove -y kubelet kubeadm kubectl

# Install kubelet, kubeadm and kubectl
# Replace ${1} with the Kubernetes version, for example 1.18.2
# yum install -y kubelet-${1} kubeadm-${1} kubectl-${1}

# Restart Docker and start kubelet
systemctl daemon-reload
systemctl restart docker
# systemctl enable kubelet && systemctl start kubelet

docker version
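The script updates /etc/sysctl.conf with sed and then appends every key again, so running it twice leaves duplicate lines. A minimal sketch of a helper that writes each key only once is shown here; the name `set_sysctl` is hypothetical and not part of the original script, and it assumes keys and values contain no `#` character.

```shell
#!/bin/bash
# Hypothetical helper: set "KEY = VALUE" in a sysctl-style file.
# Existing lines for KEY are rewritten in place; otherwise one line is appended.
set_sysctl() {
  local key=$1 value=$2 file=${3:-/etc/sysctl.conf}
  local escaped pattern tmp
  # Escape the dots so they match literally in the regular expression.
  escaped=$(printf '%s' "$key" | sed 's/\./\\./g')
  pattern="^[[:space:]]*${escaped}[[:space:]]*="
  if grep -Eq "$pattern" "$file" 2>/dev/null; then
    tmp=$(mktemp)
    sed -E "s#${pattern}.*#${key} = ${value}#" "$file" > "$tmp" && cat "$tmp" > "$file"
    rm -f "$tmp"
  else
    echo "${key} = ${value}" >> "$file"
  fi
}

# Example usage (commented out because it edits /etc/sysctl.conf):
# set_sysctl net.ipv4.ip_forward 1
# set_sysctl net.bridge.bridge-nf-call-iptables 1
# sysctl -p
```

Each call replaces the corresponding sed and echo pair for one key.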

Run the Docker installation script:

sh /application/install-docker.sh
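By default kubelet refuses to start while swap is enabled, so it can be worth confirming that the script's swap changes took effect. The following is a sketch under stated assumptions: the helper names `swap_is_off` and `fstab_has_swap` are hypothetical, and the checks rely on the usual layout of /proc/swaps (one header line followed by one line per active swap device) and /etc/fstab (filesystem type in the third column).

```shell
#!/bin/bash
# Hypothetical helper: succeed if no swap device is active.
# /proc/swaps holds a header line plus one line per active swap area.
swap_is_off() {
  local file=${1:-/proc/swaps}
  [ "$(tail -n +2 "$file" | grep -c .)" -eq 0 ]
}

# Hypothetical helper: succeed if a non-comment fstab line has type "swap",
# which would re-enable swap on the next boot.
fstab_has_swap() {
  local file=${1:-/etc/fstab}
  awk '!/^[[:space:]]*#/ && $3 == "swap" { found = 1 } END { exit !found }' "$file"
}

# Example usage:
# swap_is_off && echo "swap is off"
# fstab_has_swap && echo "warning: /etc/fstab still lists a swap entry"
```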

3. Install Kubernetes 1.18.2

Install kubeadm and kubelet on master01, master02, master03 and node01:

yum install kubeadm-1.18.2 kubelet-1.18.2 -y
systemctl enable kubelet
systemctl restart kubelet

安装各个组件

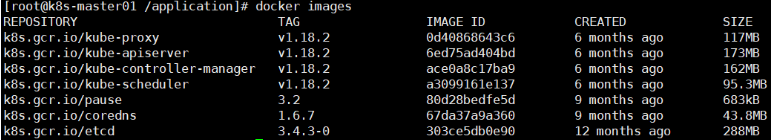

pause版本是3.2,用到的镜像是k8s.gcr.io/pause:3.2

etcd版本是3.4.3,用到的镜像是k8s.gcr.io/etcd:3.4.3-0

cordns版本是1.6.7,用到的镜像是k8s.gcr.io/coredns:1.6.7

apiserver、scheduler、controller-manager、kube-proxy版本是1.18.2,用到的镜像分别是 :

k8s.gcr.io/kube-apiserver:v1.18.2

k8s.gcr.io/kube-controller-manager:v1.18.2

k8s.gcr.io/kube-scheduler:v1.18.2

k8s.gcr.io/kube-proxy:v1.18.2

【说明:每一个master节点上都需要安装】

安装包百度盘:链接:https://pan.baidu.com/s/1WI-6pFzSWL5gCNxMXL6ykQ 提取码:bwen 解压

docker load -i <image_name>.tar.gz # 全部解压
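Loading the archives one by one is repetitive, so the step can be sketched as a loop. This is a minimal sketch: the function name `load_all_images`, the `DOCKER_CMD` override (useful for a dry run) and the example directory are all assumptions, not part of the original article.

```shell
#!/bin/bash
# Hypothetical helper: run "docker load -i" for every *.tar.gz in a directory.
# Set DOCKER_CMD=echo to print the commands instead of running them.
load_all_images() {
  local dir=$1 docker_cmd=${DOCKER_CMD:-docker} archive
  for archive in "$dir"/*.tar.gz; do
    [ -e "$archive" ] || continue   # the glob matched nothing
    $docker_cmd load -i "$archive"
  done
}

# Example usage, assuming the archives were downloaded to /application/images:
# load_all_images /application/images
# docker images
```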

The images on all master nodes are as follows:

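Initialize the API server

Set up the load balancer for the API server (private network)

Listener port: 6443/TCP

Backend resource group: k8s-master01, k8s-master02, k8s-master03

Backend port: 6443

Enable session persistence by source address

Options for the load balancer include:

- nginx
- haproxy
- keepalived
- a load balancer product from your cloud provider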
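For readers who pick nginx rather than a cloud product, the settings above (TCP listener on 6443, three backends, persistence by source address) could look roughly like the configuration printed by this sketch. It is not what this article deploys. It assumes an nginx build that includes the stream module, running on a host other than the masters so that port 6443 is free; the function name `render_nginx_stream` and the upstream name `kube_apiserver` are hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: print an nginx "stream" block that forwards TCP traffic
# on PORT to the given backends, hashing on the client address so that each
# source IP keeps reaching the same backend.
render_nginx_stream() {
  local port=$1 backend
  shift
  echo "stream {"
  echo "    upstream kube_apiserver {"
  echo "        hash \$remote_addr consistent;"
  for backend in "$@"; do
    echo "        server ${backend}:${port};"
  done
  echo "    }"
  echo "    server {"
  echo "        listen ${port};"
  echo "        proxy_pass kube_apiserver;"
  echo "    }"
  echo "}"
}

# Example usage: print the block for the three masters in this article.
# render_nginx_stream 6443 k8s-master01 k8s-master02 k8s-master03
```

The printed block belongs at the top level of nginx.conf, next to (not inside) the `http` block.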

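[Note: this guide runs on cloud hosts and uses Tencent Cloud's CLB load balancer product]

- Purchase an internal-network cloud load balancer
- Configure it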

Initialize the first master node

# Run only on the first master
# Replace apiserver.demo with the DNS name you want; apiserver.demo can also be used as is
export APISERVER_NAME=apiserver.demo
# The subnet used by Kubernetes pods. Kubernetes creates it after installation; it does not exist in your physical network beforehand
export POD_SUBNET=10.244.0.0/16
echo "127.0.0.1 ${APISERVER_NAME}" >> /etc/hosts

# Write the kubeadm configuration file
cat <<EOF > /application/kubeadm-config.yaml
apiVersion: kubeadm.k8s.io/v1beta2
kind: ClusterConfiguration
kubernetesVersion: v1.18.2
imageRepository: registry.cn-hangzhou.aliyuncs.com/google_containers
controlPlaneEndpoint: "${APISERVER_NAME}:6443"
networking:
  serviceSubnet: "10.96.0.0/16"
  podSubnet: "${POD_SUBNET}"
  dnsDomain: "cluster.local"
EOF

# Initialize the Kubernetes cluster
cd /application
kubeadm init --config kubeadm-config.yaml
# Success is indicated by: Your Kubernetes control-plane has initialized successfully!
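A subnet in CIDR notation is conventionally written as a network address, with every host bit set to zero, and the same string is later substituted into the Calico manifest. A small check can catch a value such as 10.244.0.1/16 before it is used. This is a sketch; the helper name `is_network_address` is hypothetical and it only handles IPv4.

```shell
#!/bin/bash
# Hypothetical helper: succeed only if an IPv4 CIDR has all host bits set to zero.
is_network_address() {
  local cidr=$1 ip prefix a b c d addr mask
  ip=${cidr%/*}        # part before the slash
  prefix=${cidr#*/}    # prefix length after the slash
  IFS=. read -r a b c d <<< "$ip"
  addr=$(( (a << 24) | (b << 16) | (c << 8) | d ))
  mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  [ $(( addr & mask )) -eq "$addr" ]
}

# Example usage:
# is_network_address "$POD_SUBNET" || echo "POD_SUBNET is not a network address" >&2
```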

Grant kubectl access to the cluster

# Run on master01
mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config

Install the Calico network plugin

Calico needs the following images:

quay.io/calico/cni:v3.9.2
quay.io/calico/kube-controllers:v3.9.2
quay.io/calico/pod2daemon-flexvol:v3.9.2
quay.io/calico/node:v3.5.3

The required calico-3.9.2.yaml file is also on the netdisk.

Baidu Netdisk link: https://pan.baidu.com/s/1yZ4OiJEf_Mi4USW3pjmc2g Extraction code: bwen

cd /application/
# Upload calico-3.9.2.yaml to this directory first
sed -i "s#192\.168\.0\.0/16#${POD_SUBNET}#" calico-3.9.2.yaml
kubectl apply -f /application/calico-3.9.2.yaml
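Nodes normally report NotReady until a network plugin is running, so after applying the manifest it helps to check the node status. The following sketch parses the output of `kubectl get nodes --no-headers`, whose second column is the node status; the helper name `all_nodes_ready` is hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: read "kubectl get nodes --no-headers" output on stdin and
# succeed only if there is at least one node and every STATUS is exactly "Ready".
# A cordoned node ("Ready,SchedulingDisabled") is treated as not ready.
all_nodes_ready() {
  awk '$2 != "Ready" { bad = 1 } END { exit (bad || NR == 0) }'
}

# Example usage on master01:
# kubectl get nodes --no-headers | all_nodes_ready && echo "all nodes are Ready"
# kubectl get pods -n kube-system
```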

Copy the certificates

Copy the files from master01 to master02 and master03.

# Run on master02 and master03
cd /root && mkdir -p /etc/kubernetes/pki/etcd && mkdir -p ~/.kube/

# Run on master01: copy its certificates to master02 and master03
scp /etc/kubernetes/pki/ca.crt k8s-master02:/etc/kubernetes/pki/
scp /etc/kubernetes/pki/ca.key k8s-master02:/etc/kubernetes/pki/
scp /etc/kubernetes/pki/sa.key k8s-master02:/etc/kubernetes/pki/
scp /etc/kubernetes/pki/sa.pub k8s-master02:/etc/kubernetes/pki/
scp /etc/kubernetes/pki/front-proxy-ca.crt k8s-master02:/etc/kubernetes/pki/
scp /etc/kubernetes/pki/front-proxy-ca.key k8s-master02:/etc/kubernetes/pki/
scp /etc/kubernetes/pki/etcd/ca.crt k8s-master02:/etc/kubernetes/pki/etcd/
scp /etc/kubernetes/pki/etcd/ca.key k8s-master02:/etc/kubernetes/pki/etcd/
scp /etc/kubernetes/pki/ca.crt k8s-master03:/etc/kubernetes/pki/
scp /etc/kubernetes/pki/ca.key k8s-master03:/etc/kubernetes/pki/
scp /etc/kubernetes/pki/sa.key k8s-master03:/etc/kubernetes/pki/
scp /etc/kubernetes/pki/sa.pub k8s-master03:/etc/kubernetes/pki/
scp /etc/kubernetes/pki/front-proxy-ca.crt k8s-master03:/etc/kubernetes/pki/
scp /etc/kubernetes/pki/front-proxy-ca.key k8s-master03:/etc/kubernetes/pki/
scp /etc/kubernetes/pki/etcd/ca.crt k8s-master03:/etc/kubernetes/pki/etcd/
scp /etc/kubernetes/pki/etcd/ca.key k8s-master03:/etc/kubernetes/pki/etcd/
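The sixteen scp commands follow one pattern (eight files for each of two hosts), so the copy can be sketched as a nested loop. The function name `copy_control_plane_certs` and the `SCP_CMD` override for dry runs are hypothetical; the file list and paths are the ones used above.

```shell
#!/bin/bash
# Hypothetical helper: copy the shared CA and service-account files from this
# host to the same paths on each target host.
# Set SCP_CMD=echo to print the commands instead of copying.
copy_control_plane_certs() {
  local scp_cmd=${SCP_CMD:-scp} pki=/etc/kubernetes/pki host file
  for host in "$@"; do
    for file in ca.crt ca.key sa.key sa.pub \
                front-proxy-ca.crt front-proxy-ca.key \
                etcd/ca.crt etcd/ca.key; do
      # dirname keeps the etcd/ files in the etcd/ subdirectory on the target.
      $scp_cmd "$pki/$file" "$host:$(dirname "$pki/$file")/"
    done
  done
}

# Example usage on master01 (the target directories must already exist):
# copy_control_plane_certs k8s-master02 k8s-master03
```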

Initialize the other master nodes

# Run on master01
kubeadm init phase upload-certs --upload-certs
# Output similar to:
# [upload-certs] Using certificate key:
# baac735689ed0d0fcae1dc4cddcafcaba63bf33787307017e4719e4999abe84f

# Run on master01
kubeadm token create --print-join-command
# Output similar to:
# kubeadm join apiserver.demo:6443 --token 80cvwb.aiu7v5svs411ilc6 --discovery-token-ca-cert-hash sha256:2283acdd1b0bd3a3d92fcff7675860518eefa96434329382f3d49ea2a717ff53

# [You now have the certificate key and the join command]

# =============== Initialize the second and third master nodes ================
# Replace 172.17.64.13 with the IP address of your API server load balancer
export APISERVER_IP=172.17.64.13
# Replace apiserver.demo with the DNS name used earlier
export APISERVER_NAME=apiserver.demo
echo "${APISERVER_IP} ${APISERVER_NAME}" >> /etc/hosts

# Join master02 and master03 as control-plane nodes
kubeadm join apiserver.demo:6443 --token 80cvwb.aiu7v5svs411ilc6 --discovery-token-ca-cert-hash sha256:2283acdd1b0bd3a3d92fcff7675860518eefa96434329382f3d49ea2a717ff53 --control-plane --certificate-key baac735689ed0d0fcae1dc4cddcafcaba63bf33787307017e4719e4999abe84f

# Run on master02 and master03
mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config
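The control-plane join command is the worker join command plus `--control-plane` and `--certificate-key`. Assembling it from the two outputs can be sketched as follows. The helper name `control_plane_join_command` is hypothetical, and the format check assumes the key is 64 lowercase hex characters, as in the sample output above.

```shell
#!/bin/bash
# Hypothetical helper: build the control-plane join command from the plain
# join command and the certificate key printed by the upload-certs phase.
control_plane_join_command() {
  local join_cmd=$1 cert_key=$2
  if ! [[ $cert_key =~ ^[0-9a-f]{64}$ ]]; then
    echo "certificate key should be 64 hex characters" >&2
    return 1
  fi
  echo "${join_cmd} --control-plane --certificate-key ${cert_key}"
}

# Example usage on master01, assuming the key is the last line of the
# upload-certs output as shown above:
# join_cmd=$(kubeadm token create --print-join-command)
# cert_key=$(kubeadm init phase upload-certs --upload-certs | tail -n 1)
# control_plane_join_command "$join_cmd" "$cert_key"
```

The printed command is then run on master02 and master03.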

Check apiserver.demo:6443

curl -ik https://apiserver.demo:6443/version # should return HTTP 200
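The endpoint may not answer immediately, for example while the load balancer is still marking backends healthy, so the check can be wrapped in a small retry loop. This is a generic sketch; the `retry` helper is hypothetical and the attempt count and delay in the usage line are arbitrary.

```shell
#!/bin/bash
# Hypothetical helper: run a command up to ATTEMPTS times, sleeping DELAY
# seconds between tries; succeed as soon as the command succeeds.
retry() {
  local attempts=$1 delay=$2 i
  shift 2
  for (( i = 1; i <= attempts; i++ )); do
    "$@" && return 0
    [ "$i" -lt "$attempts" ] && sleep "$delay"
  done
  return 1
}

# Example usage: -f makes curl fail on HTTP errors, -s silences progress output,
# -k skips certificate verification as in the command above.
# retry 10 3 curl -fsk -o /dev/null https://apiserver.demo:6443/version && echo "API server reachable"
```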

Initialize the worker node

# Run only on worker nodes
# Replace 172.17.64.13 with the IP address of the API server load balancer
export MASTER_IP=172.17.64.13
# Replace apiserver.demo with the APISERVER_NAME used when initializing the master nodes
export APISERVER_NAME=apiserver.demo
echo "${MASTER_IP} ${APISERVER_NAME}" >> /etc/hosts

# Join the worker node
kubeadm join apiserver.demo:6443 --token 80cvwb.aiu7v5svs411ilc6 --discovery-token-ca-cert-hash sha256:2283acdd1b0bd3a3d92fcff7675860518eefa96434329382f3d49ea2a717ff53

Fix kubectl not working on the worker node

# Run on master01
scp /etc/kubernetes/admin.conf k8s-node01:/etc/kubernetes/

# Run on the worker node
mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config

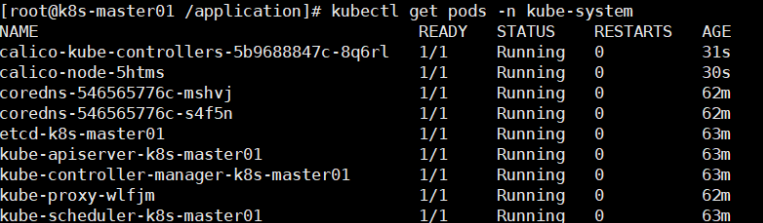

Final result

This article comes from cnblogs (博客园), author: 子禾org. When reposting, please cite the original link: https://www.cnblogs.com/ic-wen/p/13959923.html
