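Hadoop (Part 2): Hadoop Run Modes

1. Setting Up the Hadoop Runtime Environment

Prepare a clean CentOS 7 virtual machine: disable the firewall and SELinux, set the hostname, and add the host mappings.

1.1 Create a regular user and grant sudo privileges

#Add the user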

[root@hadoop101 ~]# useradd hadoop && echo 123456|passwd --stdin hadoop

Changing password for user hadoop.

passwd: all authentication tokens updated successfully.

#Grant sudo privileges with visudo

[root@hadoop101 ~]# visudo

root ALL=(ALL) ALL

hadoop ALL=(ALL) ALL #grant sudo privileges to hadoop

1.2 Create directories and set ownership

#Create the module and software directories under /opt

[hadoop@hadoop101 ~]$ cd /opt/

[hadoop@hadoop101 opt]$ sudo mkdir software module

#Change the owner of the module and software directories

[hadoop@hadoop101 opt]$ sudo chown hadoop:hadoop module/ software/

[hadoop@hadoop101 opt]$ ll

total 0

drwxr-xr-x 2 hadoop hadoop 6 Jan 15 15:50 module

drwxr-xr-x 2 hadoop hadoop 6 Jan 15 15:50 software

1.3 Install the JDK

#Upload the JDK archive

[hadoop@hadoop101 software]$ ls

jdk-8u144-linux-x64.tar.gz

[hadoop@hadoop101 software]$ tar xf jdk-8u144-linux-x64.tar.gz -C /opt/module/

[hadoop@hadoop101 software]$ cd /opt/module/jdk1.8.0_144/

[hadoop@hadoop101 jdk1.8.0_144]$ pwd

/opt/module/jdk1.8.0_144

#Configure the environment variables

[hadoop@hadoop101 jdk1.8.0_144]$ sudo vim /etc/profile

#JAVA_HOME

export JAVA_HOME=/opt/module/jdk1.8.0_144

export PATH=$PATH:$JAVA_HOME/bin

[hadoop@hadoop101 jdk1.8.0_144]$ source /etc/profile

[hadoop@hadoop101 jdk1.8.0_144]$ java -version

java version "1.8.0_144"

Java(TM) SE Runtime Environment (build 1.8.0_144-b01)

Java HotSpot(TM) 64-Bit Server VM (build 25.144-b01, mixed mode)

1.4 Install Hadoop

Hadoop download: https://archive.apache.org/dist/hadoop/common/hadoop-2.7.2/

[hadoop@hadoop101 software]$ ls

hadoop-2.7.2.tar.gz jdk-8u144-linux-x64.tar.gz

[hadoop@hadoop101 software]$ pwd

/opt/software

[hadoop@hadoop101 software]$ tar xf hadoop-2.7.2.tar.gz -C /opt/module/

[hadoop@hadoop101 software]$ cd /opt/module/hadoop-2.7.2/

[hadoop@hadoop101 hadoop-2.7.2]$ ls

bin etc include lib libexec LICENSE.txt NOTICE.txt README.txt sbin share

#Set the environment variables

[hadoop@hadoop101 hadoop-2.7.2]$ sudo vim /etc/profile

##HADOOP_HOME

export HADOOP_HOME=/opt/module/hadoop-2.7.2

export PATH=$PATH:$HADOOP_HOME/bin

export PATH=$PATH:$HADOOP_HOME/sbin

[hadoop@hadoop101 hadoop-2.7.2]$ source /etc/profile

[hadoop@hadoop101 hadoop-2.7.2]$ hadoop version

Hadoop 2.7.2

Subversion Unknown -r Unknown

Compiled by root on 2017-05-22T10:49Z

Compiled with protoc 2.5.0

From source with checksum d0fda26633fa762bff87ec759ebe689c

This command was run using /opt/module/hadoop-2.7.2/share/hadoop/common/hadoop-common-2.7.2.jar

1.5 Hadoop directory layout

[hadoop@hadoop101 hadoop-2.7.2]$ ll

total 28

drwxr-xr-x 2 hadoop hadoop 194 May 22 2017 bin

drwxr-xr-x 3 hadoop hadoop 20 May 22 2017 etc

drwxr-xr-x 2 hadoop hadoop 106 May 22 2017 include

drwxr-xr-x 3 hadoop hadoop 20 May 22 2017 lib

drwxr-xr-x 2 hadoop hadoop 239 May 22 2017 libexec

-rw-r--r-- 1 hadoop hadoop 15429 May 22 2017 LICENSE.txt

-rw-r--r-- 1 hadoop hadoop 101 May 22 2017 NOTICE.txt

-rw-r--r-- 1 hadoop hadoop 1366 May 22 2017 README.txt

drwxr-xr-x 2 hadoop hadoop 4096 May 22 2017 sbin

drwxr-xr-x 4 hadoop hadoop 31 May 22 2017 share

-----------------------------------------------------------

(1) bin: scripts for operating the Hadoop services (HDFS, YARN)

(2) etc: Hadoop's configuration directory, which holds the configuration files

(3) lib: Hadoop's native libraries (used to compress and decompress data)

(4) sbin: scripts that start or stop the Hadoop services

(5) share: Hadoop's dependency jars, documentation, and official examples

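2. Hadoop Run Modes: Local (Standalone) Mode

Documentation: https://hadoop.apache.org/docs/r2.7.2/hadoop-project-dist/hadoop-common/SingleCluster.html

2.1 Official Grep example

[hadoop@hadoop101 hadoop-2.7.2]$ mkdir input

[hadoop@hadoop101 hadoop-2.7.2]$ cp etc/hadoop/*.xml input

#Read from input, write to the output directory, and keep only the strings matching the regex dfs[a-z.]+ (text that starts with dfs)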

[hadoop@hadoop101 hadoop-2.7.2]$ bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.2.jar grep input output 'dfs[a-z.]+'

[hadoop@hadoop101 hadoop-2.7.2]$ ll output/

total 4

-rw-r--r-- 1 hadoop hadoop 11 Jan 15 16:21 part-r-00000

-rw-r--r-- 1 hadoop hadoop 0 Jan 15 16:21 _SUCCESS

[hadoop@hadoop101 hadoop-2.7.2]$ cat output/part-r-00000

1 dfsadmin

[hadoop@hadoop101 hadoop-2.7.2]$ cat output/_SUCCESS

Note: running the command a second time fails with org.apache.hadoop.mapred.FileAlreadyExistsException: Output directory file:/opt/module/hadoop-2.7.2/output already exists. Delete the output directory before running the job again.
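Because the job refuses to overwrite an existing output directory, it can help to clear that directory before each rerun. The following is a minimal sketch for local mode only; the function name clean_output is hypothetical and not part of Hadoop.

```shell
# Hypothetical helper: remove a stale local output directory before rerunning a job.
clean_output() {
  dir="$1"
  if [ -d "$dir" ]; then
    rm -rf -- "$dir"
    echo "removed $dir"
  else
    echo "nothing to remove: $dir"
  fi
}

# Example (run from /opt/module/hadoop-2.7.2):
# clean_output output
# bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.2.jar grep input output 'dfs[a-z.]+'
```

In the pseudo-distributed and fully distributed modes the output lives in HDFS, so the equivalent cleanup there is hdfs dfs -rm -r on the output path.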

2.2 Official WordCount example

[hadoop@hadoop101 hadoop-2.7.2]$ mkdir wcinput

[hadoop@hadoop101 hadoop-2.7.2]$ touch wcinput/wc.input

[hadoop@hadoop101 hadoop-2.7.2]$ vim wcinput/wc.input

[hadoop@hadoop101 hadoop-2.7.2]$ cat wcinput/wc.input

hadoop yarn

hadoop mapreduce

atguigu

atguigu

[hadoop@hadoop101 hadoop-2.7.2]$ hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.2.jar wordcount wcinput wcoutput

[hadoop@hadoop101 hadoop-2.7.2]$ cat wcoutput/*

atguigu 2

hadoop 2

mapreduce 1

yarn 1

3. Hadoop Run Modes: Pseudo-Distributed Mode

3.1 Start HDFS and run a MapReduce program

3.1.1 Configure the cluster

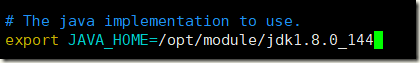

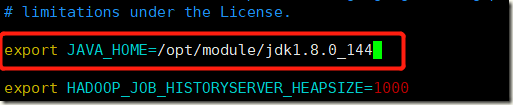

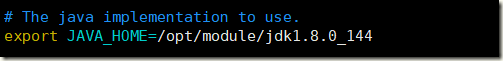

1)配置:hadoop-env.sh

#修改JAVA_HOME 路径

[hadoop@hadoop101 hadoop-2.7.2]$ cd etc/hadoop/

[hadoop@hadoop101 hadoop]$ vim hadoop-env.sh

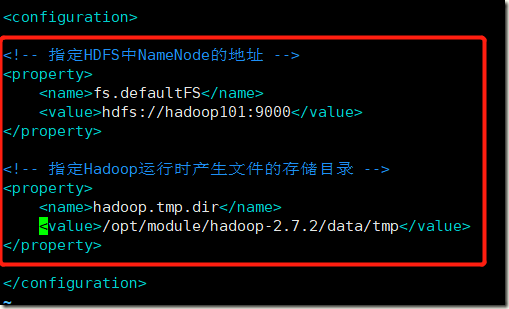

export JAVA_HOME=/opt/module/jdk1.8.0_1442)配置:core-site.xml

可以在window主机上配置host解析

[hadoop@hadoop101 hadoop]$ vim core-site.xml

<!-- 指定HDFS中NameNode的地址 -->

<property>

<name>fs.defaultFS</name>

<value>hdfs://hadoop101:9000</value>

</property>

<!-- Directory where Hadoop stores the files it generates at runtime -->

<property>

<name>hadoop.tmp.dir</name>

<value>/opt/module/hadoop-2.7.2/data/tmp</value>

</property>

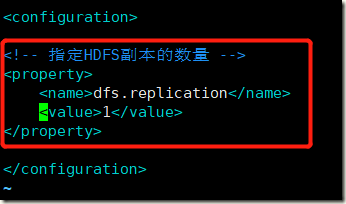

3) Configure hdfs-site.xml

[hadoop@hadoop101 hadoop]$ vim hdfs-site.xml

<!-- Number of HDFS replicas -->

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

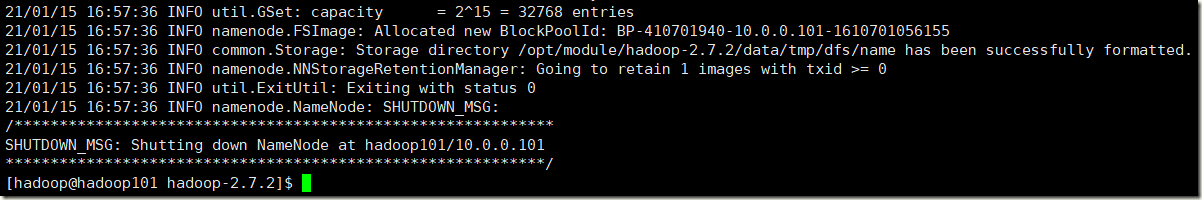
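After editing the files, it is worth reading the values back to catch typos. Hadoop's own hdfs getconf -confKey command can do this once the environment is set up; the sketch below is a rough stand-in that only parses the XML text. The function name get_prop is hypothetical, and it assumes each name and value element sits on its own line, as in the fragments above.

```shell
# Hypothetical helper: print the <value> that follows a given <name>
# in a Hadoop *-site.xml file. Assumes one element per line.
get_prop() {
  file="$1"
  key="$2"
  awk -v key="$key" '
    index($0, "<name>" key "</name>") { found = 1; next }
    found && /<value>/ {
      sub(/.*<value>/, "")
      sub(/<\/value>.*/, "")
      print
      exit
    }
  ' "$file"
}

# Example:
# get_prop /opt/module/hadoop-2.7.2/etc/hadoop/core-site.xml fs.defaultFS
# get_prop /opt/module/hadoop-2.7.2/etc/hadoop/hdfs-site.xml dfs.replication
```

An empty result means the property is not set in that file, in which case Hadoop falls back to its default.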

3.1.2 Start the cluster

1) Format the NameNode (only before the first start; do not keep reformatting it afterwards)

[hadoop@hadoop101 hadoop-2.7.2]$ bin/hdfs namenode -format

2) Start the NameNode

[hadoop@hadoop101 hadoop-2.7.2]$ sbin/hadoop-daemon.sh start namenode

starting namenode, logging to /opt/module/hadoop-2.7.2/logs/hadoop-hadoop-namenode-hadoop101.out

[hadoop@hadoop101 hadoop-2.7.2]$ jps

16134 NameNode

16203 Jps

3) Start the DataNode

[hadoop@hadoop101 hadoop-2.7.2]$ sbin/hadoop-daemon.sh start datanode

starting datanode, logging to /opt/module/hadoop-2.7.2/logs/hadoop-hadoop-datanode-hadoop101.out

[hadoop@hadoop101 hadoop-2.7.2]$ jps

16228 DataNode

16134 NameNode

16301 Jps

3.1.3 Check the cluster

1) Check that the daemons started

[hadoop@hadoop101 hadoop-2.7.2]$ jps

16228 DataNode

16134 NameNode

16319 Jps

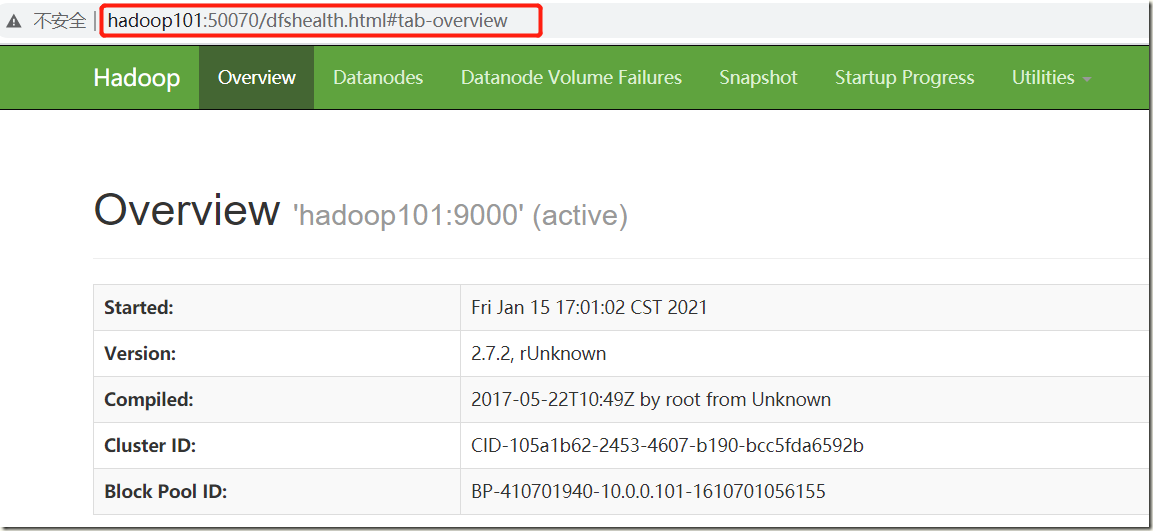

2)web端查看HDFS文件系统

http://hadoop101:50070/dfshealth.html#tab-overview

注意:如果不能查看,看如下帖子处理

http://www.cnblogs.com/zlslch/p/6604189.html

3)查看产生的Log日志

[hadoop@hadoop101 hadoop-2.7.2]$ cd logs/ [hadoop@hadoop101 logs]$ ll total 60 -rw-rw-r-- 1 hadoop hadoop 23762 Jan 15 17:01 hadoop-hadoop-datanode-hadoop101.log -rw-rw-r-- 1 hadoop hadoop 716 Jan 15 17:01 hadoop-hadoop-datanode-hadoop101.out -rw-rw-r-- 1 hadoop hadoop 27497 Jan 15 17:01 hadoop-hadoop-namenode-hadoop101.log -rw-rw-r-- 1 hadoop hadoop 716 Jan 15 17:01 hadoop-hadoop-namenode-hadoop101.out -rw-rw-r-- 1 hadoop hadoop 0 Jan 15 17:01 SecurityAuth-hadoop.audit

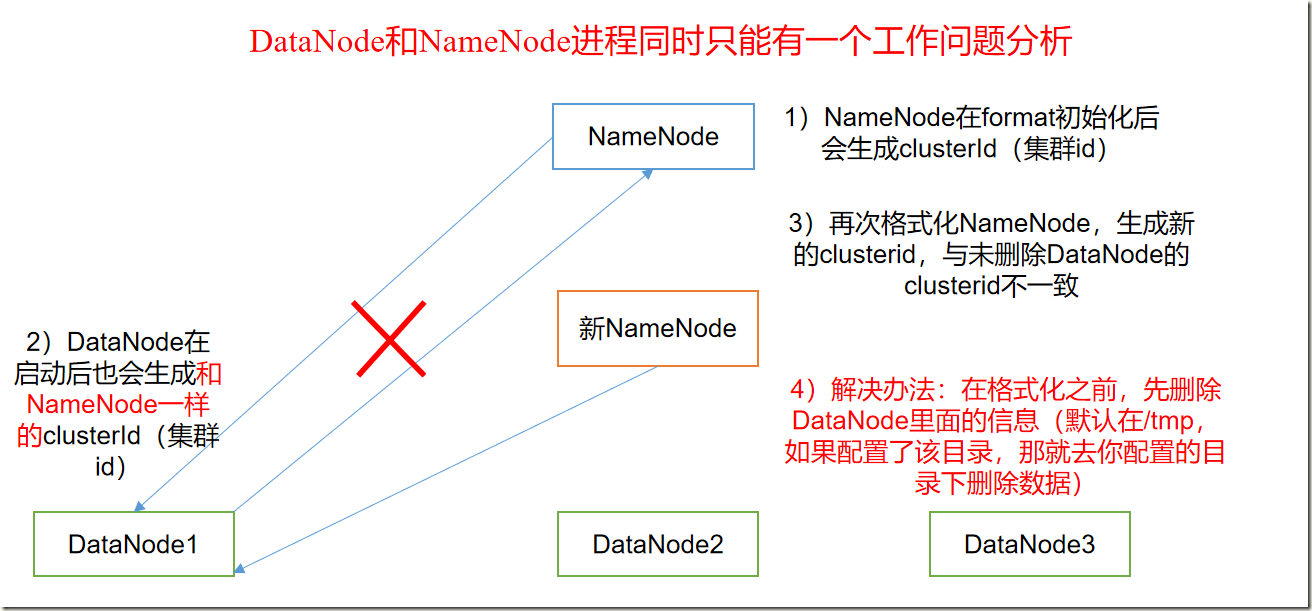

4)思考:为什么不能一直格式化NameNode,格式化NameNode,要注意什么?

[hadoop@hadoop101 current]$ pwd

/opt/module/hadoop-2.7.2/data/tmp/dfs/data/current

[hadoop@hadoop101 current]$ cat VERSION

#Fri Jan 15 17:01:47 CST 2021

storageID=DS-1d6d4c32-c3f3-4558-b4fd-9911ee993692

clusterID=CID-105a1b62-2453-4607-b190-bcc5fda6592b

cTime=0

datanodeUuid=161755c8-d446-44b7-8982-8fe216916926

storageType=DATA_NODE

layoutVersion=-56

[hadoop@hadoop101 current]$ pwd

/opt/module/hadoop-2.7.2/data/tmp/dfs/name/current

[hadoop@hadoop101 current]$ cat VERSION

#Fri Jan 15 16:57:36 CST 2021

namespaceID=2008667983

clusterID=CID-105a1b62-2453-4607-b190-bcc5fda6592b

cTime=0

storageType=NAME_NODE

blockpoolID=BP-410701940-10.0.0.101-1610701056155

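layoutVersion=-63

Note: formatting the NameNode generates a new cluster ID. The cluster IDs of the NameNode and the DataNode then no longer match, and the cluster cannot find its existing data. So before reformatting the NameNode, always delete the data directory and the logs first, and only then format.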
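The mismatch described in the note can be checked directly by comparing the clusterID lines of the two VERSION files shown above. This is a minimal sketch; the function names cluster_id and same_cluster are hypothetical.

```shell
# Hypothetical helper: print the clusterID recorded in a VERSION file.
cluster_id() {
  sed -n 's/^clusterID=//p' "$1"
}

# Hypothetical helper: succeed only when both VERSION files carry the same,
# non-empty clusterID.
same_cluster() {
  nn_id=$(cluster_id "$1")
  dn_id=$(cluster_id "$2")
  [ -n "$nn_id" ] && [ "$nn_id" = "$dn_id" ]
}

# Example on the pseudo-distributed node from this post:
# base=/opt/module/hadoop-2.7.2/data/tmp/dfs
# same_cluster "$base/name/current/VERSION" "$base/data/current/VERSION" \
#   && echo "clusterID matches" || echo "clusterID mismatch"
```

In the listing above both files show CID-105a1b62-2453-4607-b190-bcc5fda6592b, so the check would pass; after a careless reformat the NameNode side would change while the DataNode side would not.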

3.1.4 Working with the cluster

1) Create an input directory in HDFS

[hadoop@hadoop101 hadoop-2.7.2]$ bin/hdfs dfs -mkdir -p /user/atguigu/input

2) Upload the test file to HDFS

[hadoop@hadoop101 hadoop-2.7.2]$ bin/hdfs dfs -put wcinput/wc.input /user/atguigu/input

3) Check that the file was uploaded correctly

[hadoop@hadoop101 hadoop-2.7.2]$ bin/hdfs dfs -ls /user/atguigu/input

Found 1 items

-rw-r--r-- 1 hadoop supergroup 45 2021-01-15 17:28 /user/atguigu/input/wc.input

[hadoop@hadoop101 hadoop-2.7.2]$ bin/hdfs dfs -cat /user/atguigu/input/wc.input

hadoop yarn

hadoop mapreduce

atguigu

atguigu

4) Run the MapReduce program

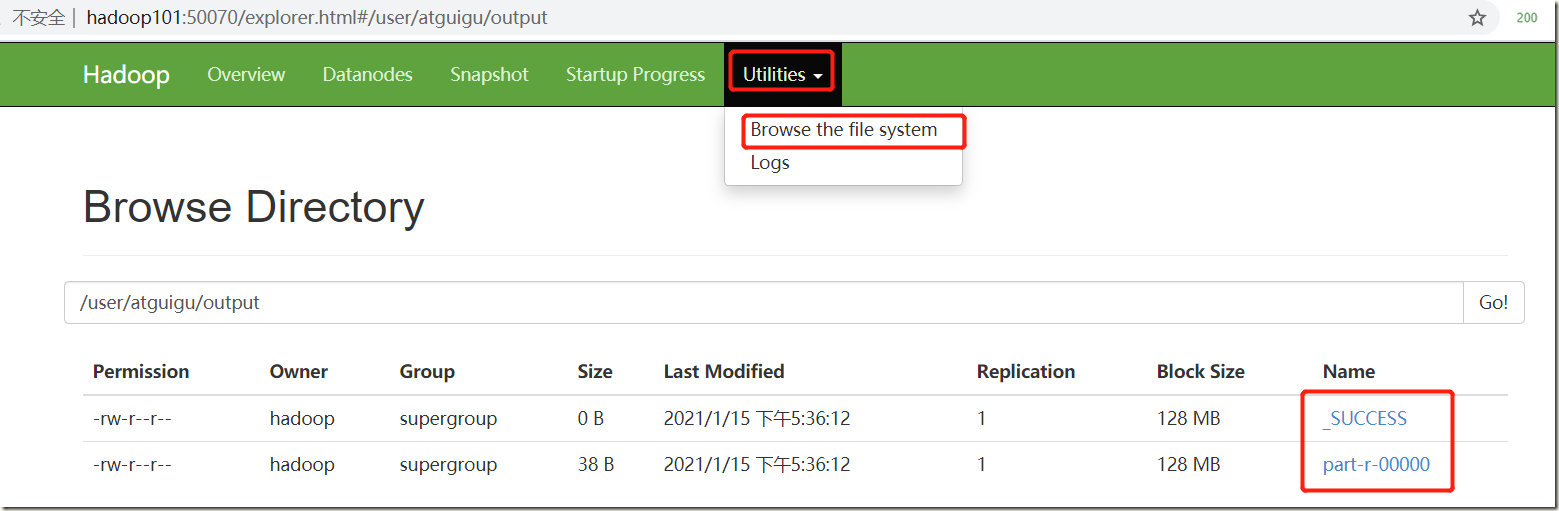

[hadoop@hadoop101 hadoop-2.7.2]$ bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.2.jar wordcount /user/atguigu/input/ /user/atguigu/output

[hadoop@hadoop101 hadoop-2.7.2]$ bin/hdfs dfs -cat /user/atguigu/output/*

atguigu 2

hadoop 2

mapreduce 1

yarn 1

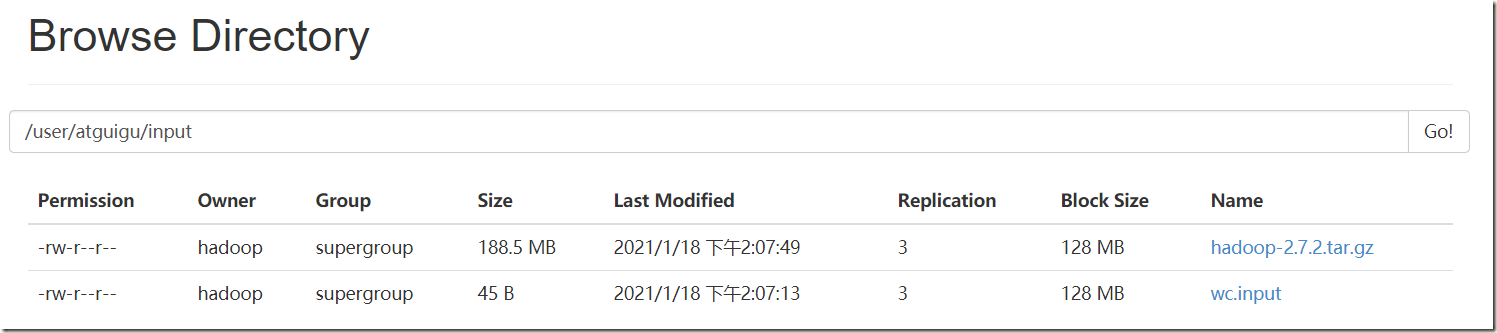

View in the browser:

5) Download the output file to the local file system

[hadoop@hadoop101 hadoop-2.7.2]$ hdfs dfs -get /user/atguigu/output/part-r-00000 ./wcoutput/

6) Delete the output

[hadoop@hadoop101 hadoop-2.7.2]$ hdfs dfs -rm -r /user/atguigu/output

21/01/15 17:42:10 INFO fs.TrashPolicyDefault: Namenode trash configuration: Deletion interval = 0 minutes, Emptier interval = 0 minutes.

Deleted /user/atguigu/output

3.2、启动YARN并运行MapReduce程序

3.2.1、配置集群

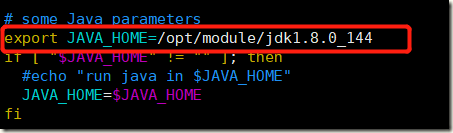

1)配置yarn-env.sh

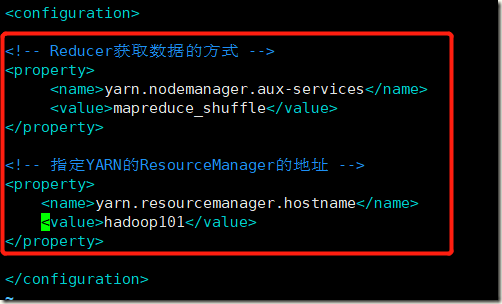

2)配置yarn-site.xml

[hadoop@hadoop101 hadoop]$ vim yarn-site.xml

<!-- Reducer获取数据的方式 -->

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<!-- Hostname of the YARN ResourceManager -->

<property>

<name>yarn.resourcemanager.hostname</name>

<value>hadoop101</value>

</property>3)配置:mapred-env.sh

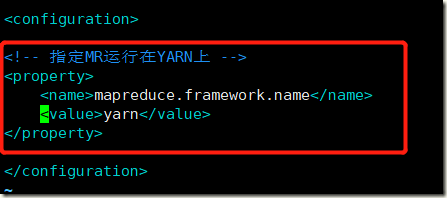

4)配置: (对mapred-site.xml.template重新命名为) mapred-site.xml

[hadoop@hadoop101 hadoop]$ mv mapred-site.xml.template mapred-site.xml

[hadoop@hadoop101 hadoop]$ vim mapred-site.xml

<!-- 指定MR运行在YARN上 -->

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

3.2.2 Start the cluster

1) Before starting, make sure the NameNode and DataNode are already running

[hadoop@hadoop101 hadoop]$ jps

16228 DataNode

16134 NameNode

17207 Jps

2) Start the ResourceManager

[hadoop@hadoop101 hadoop-2.7.2]$ sbin/yarn-daemon.sh start resourcemanager

starting resourcemanager, logging to /opt/module/hadoop-2.7.2/logs/yarn-hadoop-resourcemanager-hadoop101.out

[hadoop@hadoop101 hadoop-2.7.2]$ jps

16228 DataNode

17460 Jps

16134 NameNode

17238 ResourceManager

3) Start the NodeManager

[hadoop@hadoop101 hadoop-2.7.2]$ sbin/yarn-daemon.sh start nodemanager

starting nodemanager, logging to /opt/module/hadoop-2.7.2/logs/yarn-hadoop-nodemanager-hadoop101.out

[hadoop@hadoop101 hadoop-2.7.2]$ jps

17490 NodeManager

16228 DataNode

16134 NameNode

17238 ResourceManager

17593 Jps

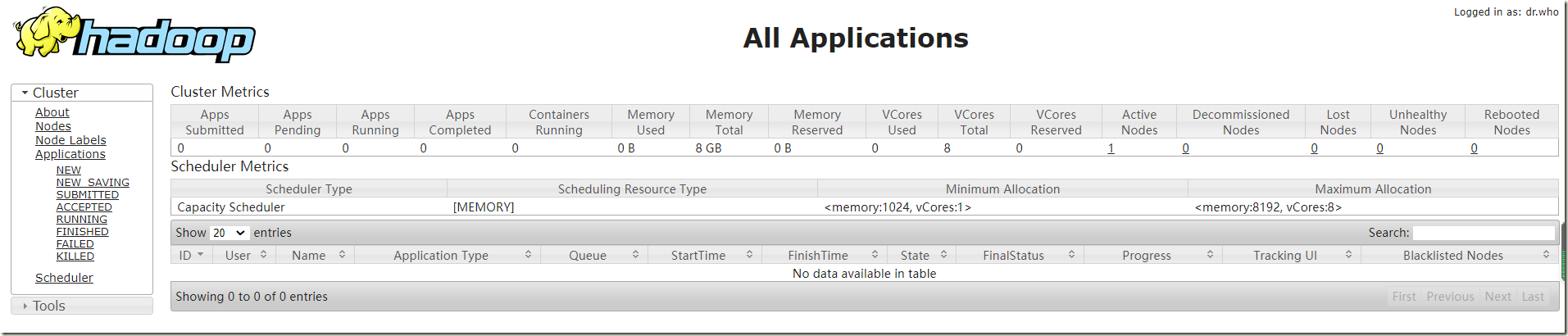

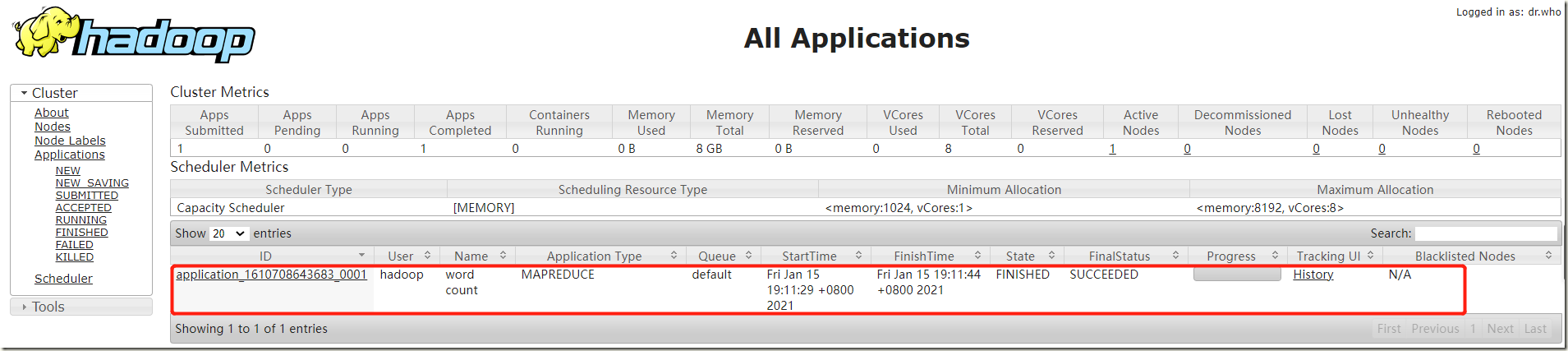

3.2.3 Working with the cluster

1) View the YARN web UI in the browser

2) Delete the output directory from HDFS

[hadoop@hadoop101 hadoop-2.7.2]$ bin/hdfs dfs -rm -R /user/atguigu/output

3) Run the MapReduce program

[hadoop@hadoop101 hadoop-2.7.2]$ bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.2.jar wordcount /user/atguigu/input /user/atguigu/output

4) View the result

[hadoop@hadoop101 hadoop-2.7.2]$ bin/hdfs dfs -cat /user/atguigu/output/*

atguigu 2

hadoop 2

mapreduce 1

yarn 1

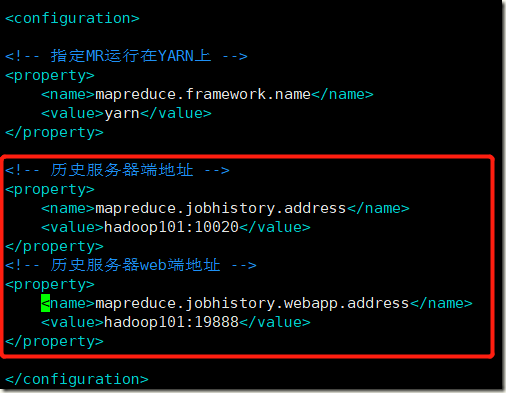

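3.3 Configure the history server

To view the history of past job runs, configure the JobHistory server.

1) Edit mapred-site.xml and add the following properties

<!-- JobHistory server address -->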

<property>

<name>mapreduce.jobhistory.address</name>

<value>hadoop101:10020</value>

</property>

<!-- JobHistory server web UI address -->

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>hadoop101:19888</value>

</property>

2) Start the history server

[hadoop@hadoop101 hadoop-2.7.2]$ sbin/mr-jobhistory-daemon.sh start historyserver

starting historyserver, logging to /opt/module/hadoop-2.7.2/logs/mapred-hadoop-historyserver-hadoop101.out

[hadoop@hadoop101 hadoop-2.7.2]$ jps

17490 NodeManager

16228 DataNode

18132 Jps

16134 NameNode

17238 ResourceManager

18094 JobHistoryServer

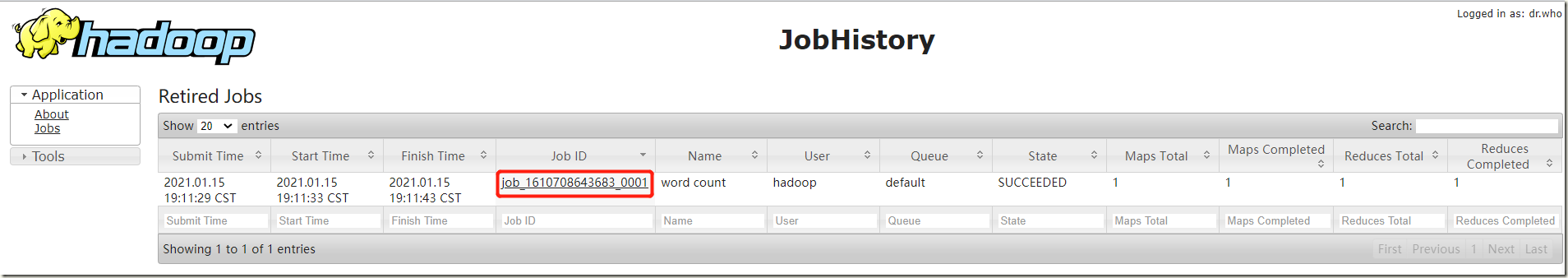

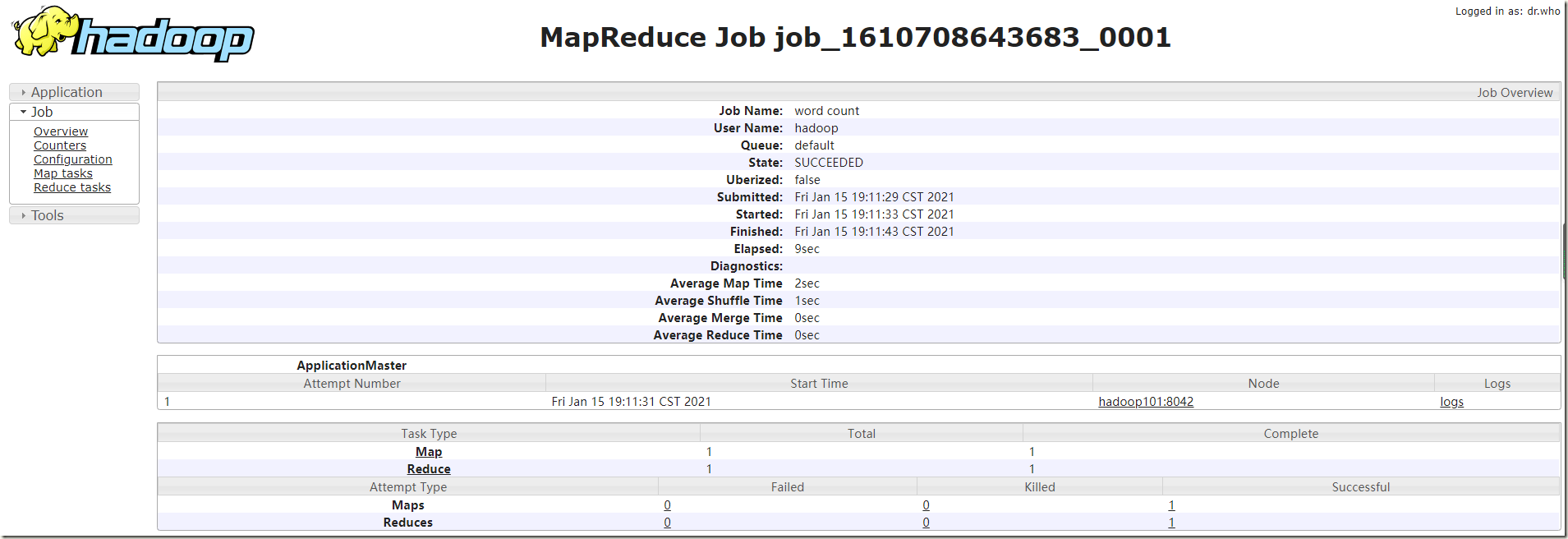

3) View the JobHistory web UI

http://hadoop101:19888/jobhistory

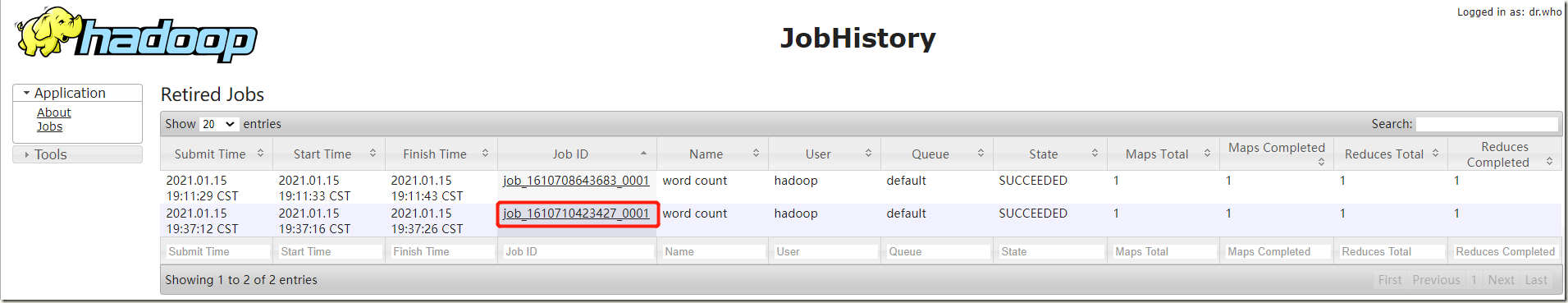

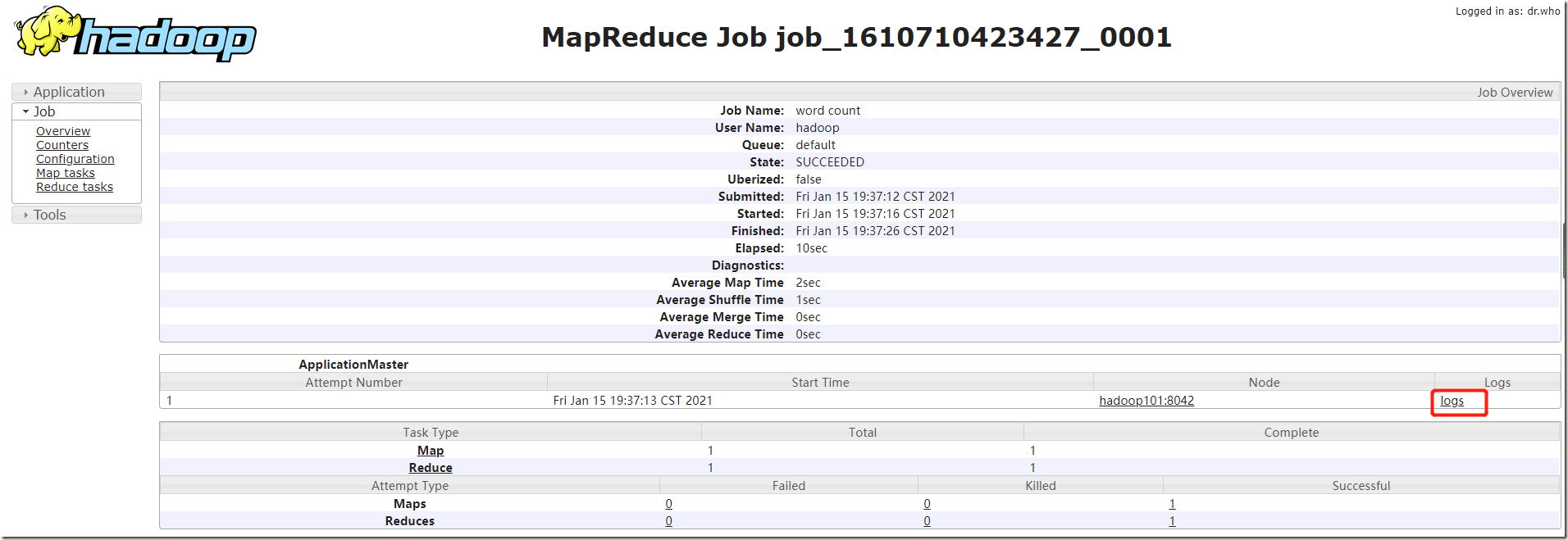

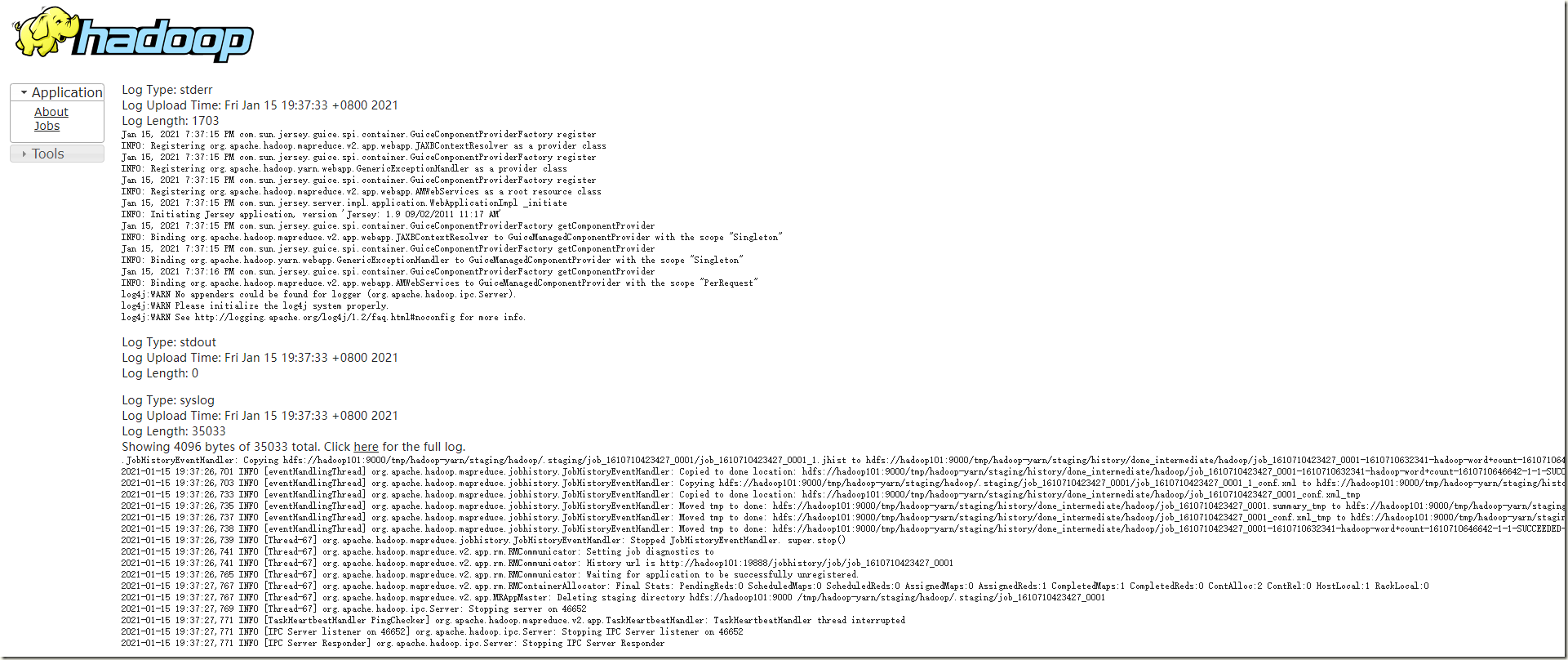

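3.4 Configure log aggregation

Log aggregation: after an application finishes, its run logs are uploaded to HDFS.

Benefit: you can easily inspect the details of a run, which helps with development and debugging.

Note: after enabling log aggregation, the NodeManager, ResourceManager, and JobHistoryServer must be restarted.

To enable log aggregation:

1) Edit yarn-site.xml and add the following properties.

<!-- Enable log aggregation -->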

<property>

<name>yarn.log-aggregation-enable</name>

<value>true</value>

</property>

<!-- Keep aggregated logs for 7 days -->

<property>

<name>yarn.log-aggregation.retain-seconds</name>

<value>604800</value>

</property>
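The retention value is given in seconds, so 7 days becomes 7 × 24 × 60 × 60. A small sketch of the conversion, useful when choosing a different retention period (the function name days_to_seconds is hypothetical):

```shell
# Hypothetical helper: convert a number of days into seconds
# for yarn.log-aggregation.retain-seconds.
days_to_seconds() {
  echo $(( $1 * 24 * 60 * 60 ))
}

days_to_seconds 7   # prints 604800
```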

2) Stop the NodeManager, ResourceManager, and JobHistoryServer

[hadoop@hadoop101 hadoop-2.7.2]$ sbin/yarn-daemon.sh stop resourcemanager

stopping resourcemanager

[hadoop@hadoop101 hadoop-2.7.2]$ sbin/yarn-daemon.sh stop nodemanager

stopping nodemanager

[hadoop@hadoop101 hadoop-2.7.2]$ sbin/mr-jobhistory-daemon.sh stop historyserver

stopping historyserver

3) Start the NodeManager, ResourceManager, and JobHistoryServer

[hadoop@hadoop101 hadoop-2.7.2]$ sbin/yarn-daemon.sh start resourcemanager

starting resourcemanager, logging to /opt/module/hadoop-2.7.2/logs/yarn-hadoop-resourcemanager-hadoop101.out

[hadoop@hadoop101 hadoop-2.7.2]$ sbin/yarn-daemon.sh start nodemanager

starting nodemanager, logging to /opt/module/hadoop-2.7.2/logs/yarn-hadoop-nodemanager-hadoop101.out

[hadoop@hadoop101 hadoop-2.7.2]$ sbin/mr-jobhistory-daemon.sh start historyserver

starting historyserver, logging to /opt/module/hadoop-2.7.2/logs/mapred-hadoop-historyserver-hadoop101.out

[hadoop@hadoop101 hadoop-2.7.2]$ jps

18722 JobHistoryServer

16228 DataNode

18324 ResourceManager

16134 NameNode

18760 Jps

18569 NodeManager

4) Delete the existing output directory from HDFS

[hadoop@hadoop101 hadoop-2.7.2]$ bin/hdfs dfs -rm -R /user/atguigu/output

21/01/15 19:34:53 INFO fs.TrashPolicyDefault: Namenode trash configuration: Deletion interval = 0 minutes, Emptier interval = 0 minutes.

Deleted /user/atguigu/output

5) Run the WordCount program

[hadoop@hadoop101 hadoop-2.7.2]$ bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.2.jar wordcount /user/atguigu/input /user/atguigu/output

6) View the logs

http://hadoop101:19888/jobhistory

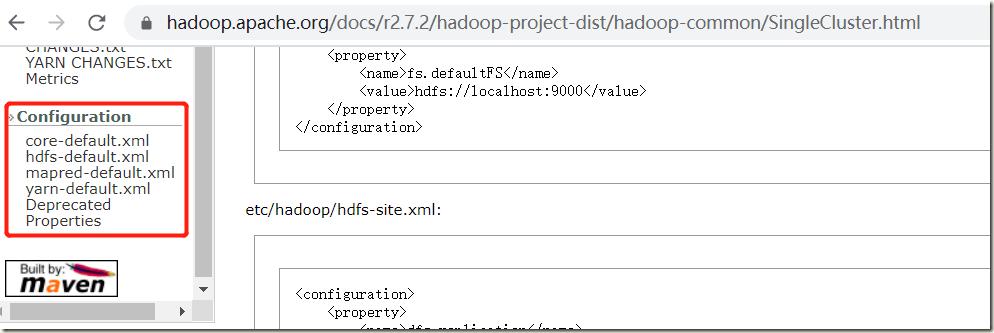

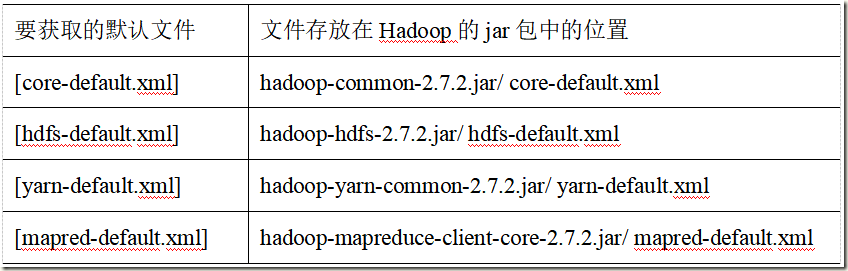

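3.5 About the configuration files

Hadoop has two kinds of configuration files: default configuration files and custom (site) configuration files. You only need to edit a custom configuration file when you want to override a default value, by changing the corresponding property.

1) Default configuration files

2) Custom configuration files

The four files core-site.xml, hdfs-site.xml, yarn-site.xml, and mapred-site.xml live in $HADOOP_HOME/etc/hadoop; edit them as your project requires.

4. Hadoop Run Modes: Fully Distributed Mode (*)

4.1 Prepare the environment

Prepare three clean CentOS 7 servers.

1) Disable the firewall, configure a static IP, set the hostname, and configure hosts resolution

2) Install the JDK and configure its environment variables

3) Install Hadoop and configure its environment variables

4) Add the regular user hadoop and give it sudo privileges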

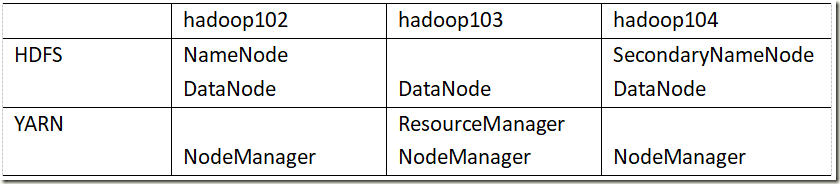

4.2 Cluster deployment plan

4.3 Configure passwordless SSH login

#Passwordless login from hadoop102 to hadoop102, hadoop103, and hadoop104

[hadoop@hadoop102 ~]$ ssh-keygen -t rsa

[hadoop@hadoop102 ~]$ ssh-copy-id hadoop102

[hadoop@hadoop102 ~]$ ssh-copy-id hadoop103

[hadoop@hadoop102 ~]$ ssh-copy-id hadoop104

#On hadoop102, also set up passwordless login to hadoop102, hadoop103, and hadoop104 for the root account

#On hadoop103, set up passwordless login to hadoop102, hadoop103, and hadoop104 for the hadoop account

4.4 Cluster distribution script

[root@hadoop102 ~]# cat xsync

#!/bin/bash

#1 Get the number of arguments; exit immediately if there are none

pcount=$#

if ((pcount == 0)); then

echo no args

exit

fi

#2 Get the file name

p1=$1

fname=$(basename "$p1")

echo fname=$fname

#3 Resolve the parent directory to an absolute path

pdir=$(cd -P "$(dirname "$p1")" && pwd)

echo pdir=$pdir

#4 Get the current user name

user=$(whoami)

#5 Loop over the target hosts (hadoop103 and hadoop104)

for ((host = 103; host < 105; host++)); do

echo ------------------- hadoop$host --------------

rsync -rvl "$pdir/$fname" "$user@hadoop$host:$pdir"

done

[root@hadoop102 ~]# chmod 777 xsync

[root@hadoop102 ~]# cp xsync /usr/local/bin/

4.5 Configure the cluster

4.5.1 Core configuration file

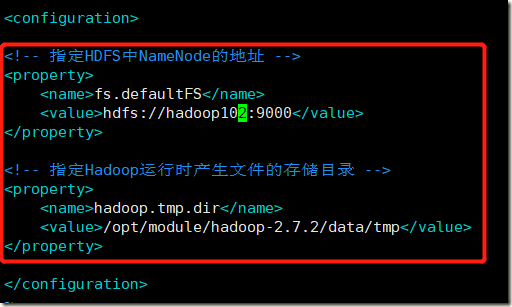

[root@hadoop102 hadoop]# vim core-site.xml

<configuration>

<!-- Address of the NameNode in HDFS -->

<property>

<name>fs.defaultFS</name>

<value>hdfs://hadoop102:9000</value>

</property>

<!-- Directory where Hadoop stores the files it generates at runtime -->

<property>

<name>hadoop.tmp.dir</name>

<value>/opt/module/hadoop-2.7.2/data/tmp</value>

</property>

</configuration>

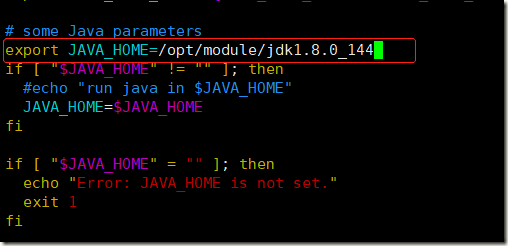

4.5.2、HDFS配置文件

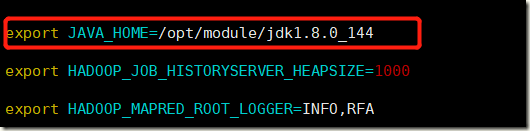

1)配置hadoop-env.sh

2)配置hdfs-site.xml

<property>

<name>dfs.replication</name>

<value>3</value>

</property>

<!-- Host and port of the SecondaryNameNode -->

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>hadoop104:50090</value>

</property>

4.5.3、YARN配置文件

1)配置yarn-env.sh

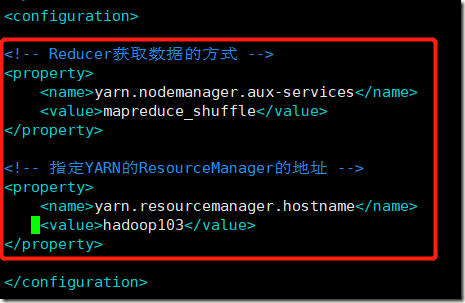

2)配置yarn-site.xml

<!-- Reducer获取数据的方式 -->

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<!-- Hostname of the YARN ResourceManager -->

<property>

<name>yarn.resourcemanager.hostname</name>

<value>hadoop103</value>

</property>

4.5.4、MapReduce配置文件

1)配置mapred-env.sh

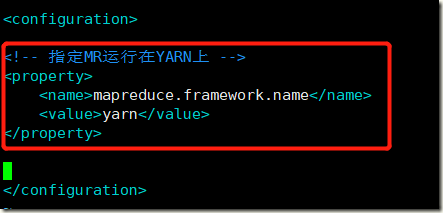

2)配置mapred-site.xml

[hadoop@hadoop102 hadoop]$ cp mapred-site.xml.template mapred-site.xml

[hadoop@hadoop102 hadoop]$ vim mapred-site.xml

<!-- 指定MR运行在YARN上 -->

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

4.5.5 Distribute the configured Hadoop files

[hadoop@hadoop102 hadoop]$ xsync /opt/module/hadoop-2.7.2/

4.6 Start the cluster

4.6.1 Configure slaves

[hadoop@hadoop102 hadoop]$ pwd

/opt/module/hadoop-2.7.2/etc/hadoop

[hadoop@hadoop102 hadoop]$ vim slaves

[hadoop@hadoop102 hadoop]$ cat slaves

hadoop102

hadoop103

hadoop104

Note: entries in this file must not have trailing spaces, and the file itself must not contain blank lines.
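Trailing spaces and blank lines are hard to spot by eye, so a quick check before starting the cluster can save some debugging. This is a minimal sketch using grep; the function name check_slaves is hypothetical.

```shell
# Hypothetical helper: report blank lines or trailing whitespace in a slaves file.
# Returns 0 when the file is clean, 1 otherwise.
check_slaves() {
  file="$1"
  # -n prints the offending line numbers; the pattern matches empty lines
  # and lines that end in a space or tab.
  if grep -n -E '^$|[[:space:]]+$' "$file"; then
    echo "slaves file has blank lines or trailing whitespace" >&2
    return 1
  fi
  return 0
}

# Example:
# check_slaves /opt/module/hadoop-2.7.2/etc/hadoop/slaves && echo "slaves file looks clean"
```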

Sync the file to the other nodes:

[hadoop@hadoop102 hadoop]$ xsync slaves

4.6.2 Start the cluster

1) If this is the first time the cluster starts, format the NameNode (before formatting, always stop every NameNode and DataNode process left over from a previous run, then delete the data and log directories)

[hadoop@hadoop102 hadoop-2.7.2]$ bin/hdfs namenode -format

2) Start HDFS

[hadoop@hadoop102 hadoop-2.7.2]$ sbin/start-dfs.sh

Starting namenodes on [hadoop102]

hadoop102: starting namenode, logging to /opt/module/hadoop-2.7.2/logs/hadoop-hadoop-namenode-hadoop102.out

hadoop104: starting datanode, logging to /opt/module/hadoop-2.7.2/logs/hadoop-hadoop-datanode-hadoop104.out

hadoop103: starting datanode, logging to /opt/module/hadoop-2.7.2/logs/hadoop-hadoop-datanode-hadoop103.out

hadoop102: starting datanode, logging to /opt/module/hadoop-2.7.2/logs/hadoop-hadoop-datanode-hadoop102.out

Starting secondary namenodes [hadoop104]

hadoop104: starting secondarynamenode, logging to /opt/module/hadoop-2.7.2/logs/hadoop-hadoop-secondarynamenode-hadoop104.out

#Check

[hadoop@hadoop102 hadoop-2.7.2]$ jps

16161 Jps

15826 NameNode

15954 DataNode

[hadoop@hadoop103 hadoop-2.7.2]$ jps

15344 DataNode

15430 Jps

[hadoop@hadoop104 hadoop-2.7.2]$ jps

15321 Jps

15180 DataNode

15278 SecondaryNameNode

3) Start YARN

[hadoop@hadoop103 hadoop-2.7.2]$ sbin/start-yarn.sh

starting yarn daemons

starting resourcemanager, logging to /opt/module/hadoop-2.7.2/logs/yarn-hadoop-resourcemanager-hadoop103.out

hadoop102: starting nodemanager, logging to /opt/module/hadoop-2.7.2/logs/yarn-hadoop-nodemanager-hadoop102.out

hadoop104: starting nodemanager, logging to /opt/module/hadoop-2.7.2/logs/yarn-hadoop-nodemanager-hadoop104.out

hadoop103: starting nodemanager, logging to /opt/module/hadoop-2.7.2/logs/yarn-hadoop-nodemanager-hadoop103.out

#Check

[hadoop@hadoop103 hadoop-2.7.2]$ jps

15344 DataNode

15584 NodeManager

15810 Jps

15478 ResourceManager

[hadoop@hadoop102 hadoop-2.7.2]$ jps

15826 NameNode

15954 DataNode

16306 Jps

16203 NodeManager

[hadoop@hadoop104 hadoop-2.7.2]$ jps

15474 Jps

15369 NodeManager

15180 DataNode

15278 SecondaryNameNode

Note: if the NameNode and the ResourceManager are on different machines, do not start YARN on the NameNode host; start it on the machine where the ResourceManager runs.

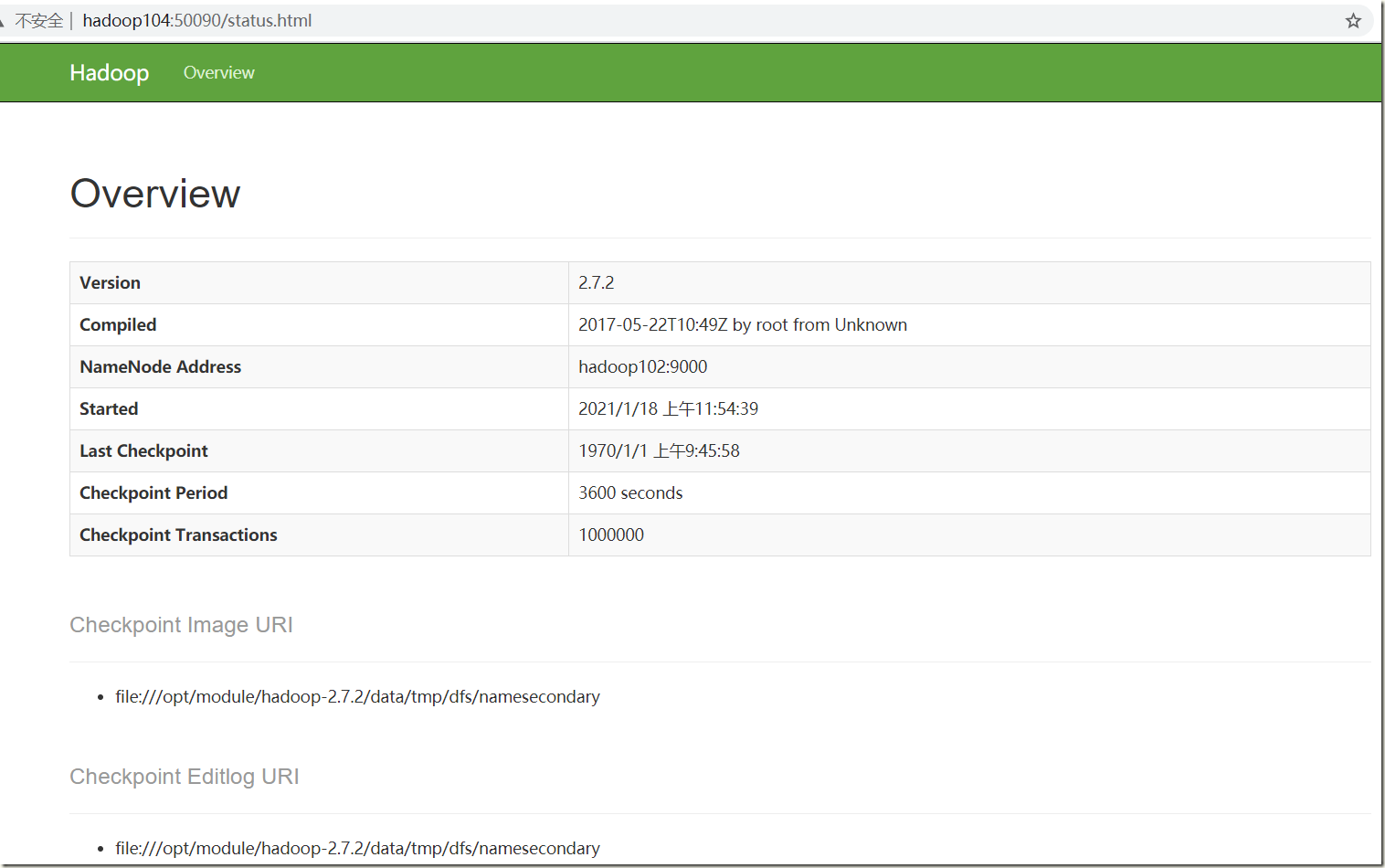

4) View the SecondaryNameNode in the web UI

http://hadoop104:50090/status.html

4.7 Basic cluster tests

1) Upload files to the cluster

#Upload a small file

[hadoop@hadoop104 hadoop-2.7.2]$ hdfs dfs -mkdir -p /user/atguigu/input

[hadoop@hadoop104 hadoop-2.7.2]$ hdfs dfs -put wcinput/wc.input /user/atguigu/input

#Upload a large file

[hadoop@hadoop104 hadoop-2.7.2]$ bin/hadoop fs -put /opt/software/hadoop-2.7.2.tar.gz /user/atguigu/input

2) See where the files are stored on disk

[hadoop@hadoop104 subdir0]$ pwd

/opt/module/hadoop-2.7.2/data/tmp/dfs/data/current/BP-687521131-10.0.0.102-1610942023266/current/finalized/subdir0/subdir0

[hadoop@hadoop104 subdir0]$ ll

total 194552

-rw-rw-r-- 1 hadoop hadoop 45 Jan 18 14:07 blk_1073741825

-rw-rw-r-- 1 hadoop hadoop 11 Jan 18 14:07 blk_1073741825_1001.meta

-rw-rw-r-- 1 hadoop hadoop 134217728 Jan 18 14:07 blk_1073741826

-rw-rw-r-- 1 hadoop hadoop 1048583 Jan 18 14:07 blk_1073741826_1002.meta

-rw-rw-r-- 1 hadoop hadoop 63439959 Jan 18 14:07 blk_1073741827

-rw-rw-r-- 1 hadoop hadoop 495635 Jan 18 14:07 blk_1073741827_1003.meta

[hadoop@hadoop104 subdir0]$ cat blk_1073741825

hadoop yarn

hadoop mapreduce

atguigu

atguigu

3) Concatenate the blocks of the large file

[hadoop@hadoop104 subdir0]$ cat blk_1073741826 >> tmp.file

[hadoop@hadoop104 subdir0]$ cat blk_1073741827 >> tmp.file

[hadoop@hadoop104 subdir0]$ tar -zxvf tmp.file

[hadoop@hadoop104 subdir0]$ ll

total 587768

-rw-rw-r-- 1 hadoop hadoop 45 Jan 18 14:07 blk_1073741825

-rw-rw-r-- 1 hadoop hadoop 11 Jan 18 14:07 blk_1073741825_1001.meta

-rw-rw-r-- 1 hadoop hadoop 134217728 Jan 18 14:07 blk_1073741826

-rw-rw-r-- 1 hadoop hadoop 1048583 Jan 18 14:07 blk_1073741826_1002.meta

-rw-rw-r-- 1 hadoop hadoop 63439959 Jan 18 14:07 blk_1073741827

-rw-rw-r-- 1 hadoop hadoop 495635 Jan 18 14:07 blk_1073741827_1003.meta

drwxr-xr-x 9 hadoop hadoop 149 May 22 2017 hadoop-2.7.2 #the restored large file

-rw-rw-r-- 1 hadoop hadoop 197657687 Jan 18 14:13 tmp.file
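The two block files add up to exactly the size of the uploaded archive because HDFS cuts a file into fixed-size blocks and stores the remainder in the last one. The sketch below reproduces that arithmetic, assuming the Hadoop 2.x default block size of 134217728 bytes (128 MB); the function name block_sizes is hypothetical.

```shell
# Hypothetical helper: print the size of each HDFS block for a file of the
# given size in bytes. The optional second argument is the block size;
# 134217728 (128 MB) is assumed as the default.
block_sizes() {
  size="$1"
  block="${2:-134217728}"
  while [ "$size" -gt "$block" ]; do
    echo "$block"
    size=$((size - block))
  done
  # The last block holds whatever is left.
  [ "$size" -gt 0 ] && echo "$size"
  return 0
}

# tmp.file above is 197657687 bytes:
block_sizes 197657687
```

For 197657687 bytes this prints 134217728 and 63439959, matching blk_1073741826 and blk_1073741827 in the listing.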

4) Download the file

[hadoop@hadoop104 hadoop-2.7.2]$ bin/hadoop fs -get /user/atguigu/input/hadoop-2.7.2.tar.gz ./

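4.8 Summary of ways to start and stop the cluster

1) Start/stop each daemon individually

#Start/stop the HDFS daemons one by one

hadoop-daemon.sh start / stop namenode / datanode / secondarynamenode

#Start/stop the YARN daemons one by one

yarn-daemon.sh start / stop resourcemanager / nodemanager

2) Start/stop each module as a whole (requires SSH to be configured); this is the commonly used approach

#Start/stop all of HDFS

start-dfs.sh / stop-dfs.sh

#Start/stop all of YARN

start-yarn.sh / stop-yarn.sh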

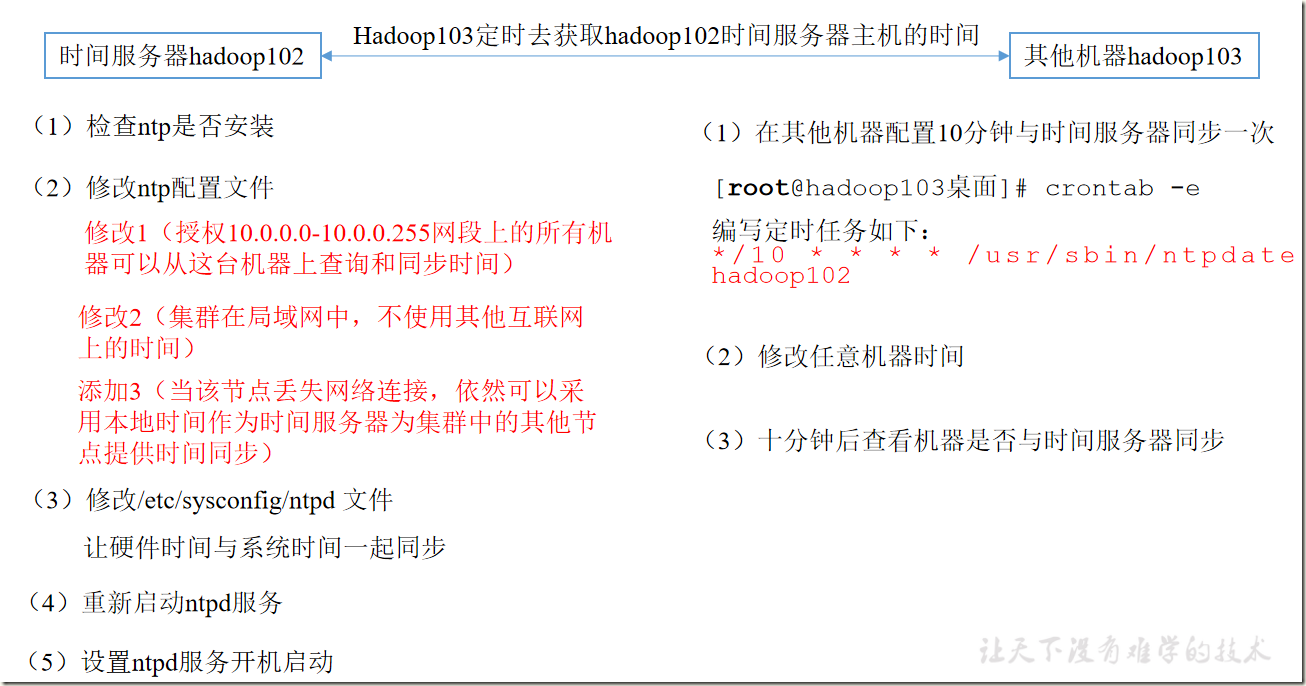

4.9 Time synchronization

Approach: pick one machine as the time server and have every other machine in the cluster synchronize with it on a schedule, for example once every ten minutes.

4.9.1 Configure the time server (must be done as root)

1) Check whether ntp is installed

[root@hadoop102 ~]# rpm -qa|grep ntp

[root@hadoop102 ~]# yum install ntp -y

[root@hadoop102 ~]# rpm -qa|grep ntp

ntpdate-4.2.6p5-29.el7.centos.2.x86_64

ntp-4.2.6p5-29.el7.centos.2.x86_64

2) Edit the ntp configuration file (/etc/ntp.conf)

a) Change 1 (allow every machine in the 10.0.0.0-10.0.0.255 range to query and synchronize time from this machine). Change

#restrict 192.168.1.0 mask 255.255.255.0 nomodify notrap

to

restrict 10.0.0.0 mask 255.255.255.0 nomodify notrap

b) Change 2 (the cluster is on a LAN, so do not use time servers on the internet). Change

server 0.centos.pool.ntp.org iburst

server 1.centos.pool.ntp.org iburst

server 2.centos.pool.ntp.org iburst

server 3.centos.pool.ntp.org iburst

to

#server 0.centos.pool.ntp.org iburst

#server 1.centos.pool.ntp.org iburst

#server 2.centos.pool.ntp.org iburst

#server 3.centos.pool.ntp.org iburst

c) Addition 3 (if this node loses its network connection, it can still use its local clock to serve time to the other nodes in the cluster)

server 127.127.1.0

fudge 127.127.1.0 stratum 10

3) Edit /etc/sysconfig/ntpd

[root@hadoop102 ~]# vim /etc/sysconfig/ntpd

# Command line options for ntpd

OPTIONS="-g"

SYNC_HWCLOCK=yes #keep the hardware clock in sync with the system time

4) Start the ntpd service

[root@hadoop102 ~]# systemctl start ntpd

[root@hadoop102 ~]# systemctl enable ntpd

4.9.2 Configure the other machines (must be done as root)

[root@hadoop103 ~]# /usr/sbin/ntpdate hadoop102

-bash: /usr/sbin/ntpdate: No such file or directory

[root@hadoop103 ~]# yum install ntp -y #no need to start the service

[root@hadoop103 ~]# /usr/sbin/ntpdate hadoop102

18 Jan 14:44:34 ntpdate[16084]: adjust time server 10.0.0.102 offset 0.002712 sec

#Set up the scheduled task

[root@hadoop103 ~]# crontab -l

*/10 * * * * /usr/sbin/ntpdate hadoop102

5. Common Errors and Fixes

1) The firewall is still running, or YARN has not been started

INFO client.RMProxy: Connecting to ResourceManager at hadoop108/192.168.10.108:8032

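2) The hostname is configured incorrectly

3) The IP address is configured incorrectly

4) SSH is not set up correctly

5) The cluster was started inconsistently under two different users (root and atguigu)

6) Careless edits to the configuration files

7) The native library was not compiled from source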

Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

17/05/22 15:38:58 INFO client.RMProxy: Connecting to ResourceManager at hadoop108/192.168.10.108:8032

8) The hostname is not recognized

java.net.UnknownHostException: hadoop102: hadoop102

at java.net.InetAddress.getLocalHost(InetAddress.java:1475)

at org.apache.hadoop.mapreduce.JobSubmitter.submitJobInternal(JobSubmitter.java:146)

at org.apache.hadoop.mapreduce.Job$10.run(Job.java:1290)

at org.apache.hadoop.mapreduce.Job$10.run(Job.java:1287)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:415)

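Fix:

(1) Add 192.168.1.102 hadoop102 to /etc/hosts

(2) Do not use special names such as hadoop or hadoop000 as the hostname

9) Only one of the DataNode and NameNode processes can run at a time.

10) jps shows that a process is gone, but restarting the cluster reports that the process is already running. Cause: temporary files left by the started processes remain in the /tmp directory under the Linux root. Delete the cluster-related files there, then restart the cluster.

11) jps does not work.

Cause: the global hadoop and java environment variables have not taken effect. Fix: run source /etc/profile.

12) Cannot connect to port 8088

cat /etc/hosts

#Comment out the following lines

#127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

#::1 hadoop102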
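Several of the errors above (items 2, 3, 8, and 12) come down to what the hosts file maps a hostname to. The sketch below lists the addresses a hosts file assigns to a name so they can be checked by eye; the function name host_addrs is hypothetical, and it only reads the file rather than performing a real DNS lookup.

```shell
# Hypothetical helper: print every address that a hosts file maps to the
# given hostname. Lines that start with # are skipped.
host_addrs() {
  file="$1"
  name="$2"
  awk -v name="$name" '
    /^[ \t]*#/ { next }
    { for (i = 2; i <= NF; i++) if ($i == name) { print $1; break } }
  ' "$file"
}

# Example:
# host_addrs /etc/hosts hadoop102
```

No output suggests the UnknownHostException case from item 8; a loopback address such as 127.0.0.1 or ::1 in the output points to the situation in item 12.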
