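ELK Log Collection Notes

Logstash runs on each server whose logs need to be collected and sends the log data to Elasticsearch (ES).

The data stored in ES is then viewed from the Kibana web page.

Installing ES and Kibana: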

    rpm --import https://artifacts.elastic.co/GPG-KEY-elasticsearch
    cat << EOF >/etc/yum.repos.d/elasticsearch.repo
    [elasticsearch]
    name=Elasticsearch repository for 8.x packages
    baseurl=https://artifacts.elastic.co/packages/8.x/yum
    gpgcheck=1
    gpgkey=https://artifacts.elastic.co/GPG-KEY-elasticsearch
    enabled=0
    autorefresh=1
    type=rpm-md
    EOF
    yum install -y --enablerepo=elasticsearch elasticsearch

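When the installation finishes, the generated password for the elastic user is printed in the terminal output.

To reset the password, run: /usr/share/elasticsearch/bin/elasticsearch-reset-password -u elastic

The config file is /etc/elasticsearch/elasticsearch.yml. Two settings matter here:

network.host: 0.0.0.0 allows access from other servers.

http.port should be changed to a port that is reachable from outside.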
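
The two settings above can also be applied from a script instead of an editor. What follows is only a sketch: it assumes GNU sed (the default on yum-based systems), set_yaml_key is a hypothetical helper name, 9200 is just an example port, and the demonstration runs against a scratch file rather than the real /etc/elasticsearch/elasticsearch.yml.

```shell
#!/usr/bin/env bash
# set_yaml_key FILE KEY VALUE  (hypothetical helper, not part of any Elastic package)
# Replaces an existing "KEY: ..." line (commented out or not), or appends one.
set_yaml_key() {
  local file="$1" key="$2" value="$3"
  local key_re
  # Escape dots so "http.port" is matched literally, not as a regex wildcard.
  key_re="$(printf '%s' "$key" | sed 's/\./\\./g')"
  if grep -Eq "^#?[[:space:]]*${key_re}:" "$file"; then
    sed -i -E "s|^#?[[:space:]]*${key_re}:.*|${key}: ${value}|" "$file"
  else
    printf '%s: %s\n' "$key" "$value" >>"$file"
  fi
}

# Demonstration on a scratch file that mimics the commented-out defaults.
demo_yml="$(mktemp)"
printf '%s\n' '#network.host: 192.168.0.1' '#http.port: 9200' >"$demo_yml"
set_yaml_key "$demo_yml" network.host 0.0.0.0
set_yaml_key "$demo_yml" http.port 9200
cat "$demo_yml"
```

To use it for real, point it at /etc/elasticsearch/elasticsearch.yml (after making a backup) and restart ES so the change takes effect.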

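Start ES:

    systemctl start elasticsearch.service

Test that it is reachable (es_port is the http.port value configured above):

    curl --cacert /etc/elasticsearch/certs/http_ca.crt -u elastic https://localhost:es_port

To access ES from another server, the certificate /etc/elasticsearch/certs/http_ca.crt has to be moved there first. Copying the certificate's contents and saving them as a certificate file on the client is enough.

Test access from the client, using the ES server's address instead of localhost:

    curl --cacert path_to_ca.crt -u elastic https://es_ip_address:es_port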
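
When this check is scripted, for example from a client machine or a deployment script, a single curl call can fail simply because ES is still starting. A minimal sketch of a retry loop around the same kind of request follows. retry and es_is_up are hypothetical helper names, and ES_URL, ES_CA and ES_PASSWORD are assumed environment variables introduced only for this example.

```shell
#!/usr/bin/env bash
# retry ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]
# Runs COMMAND until it succeeds or ATTEMPTS is exhausted.
retry() {
  local attempts="$1" delay="$2" i=1
  shift 2
  while [ "$i" -le "$attempts" ]; do
    if "$@"; then
      return 0
    fi
    # Do not sleep after the final failed attempt.
    if [ "$i" -lt "$attempts" ]; then
      sleep "$delay"
    fi
    i=$((i + 1))
  done
  return 1
}

# Probe for ES. The variables below are placeholders, not values defined by ES.
es_is_up() {
  curl --silent --fail --output /dev/null \
    --cacert "${ES_CA:-/etc/elasticsearch/certs/http_ca.crt}" \
    -u "elastic:${ES_PASSWORD:-}" \
    "${ES_URL:-https://localhost:9200}/_cluster/health"
}

# Usage (not executed here):
#   ES_PASSWORD=... retry 30 2 es_is_up && echo "Elasticsearch is reachable"
```

Because curl runs with --fail, an HTTP error such as 401 counts as a failed attempt, so a wrong password shows up as a timeout of the whole loop rather than a false success.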

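Install Kibana:

    cat << EOF >/etc/yum.repos.d/kibana.repo
    [kibana-8.x]
    name=Kibana repository for 8.x packages
    baseurl=https://artifacts.elastic.co/packages/8.x/yum
    gpgcheck=1
    gpgkey=https://artifacts.elastic.co/GPG-KEY-elasticsearch
    enabled=1
    autorefresh=1
    type=rpm-md
    EOF

Kibana and ES can be installed on the same server:

    yum install -y kibana

In /etc/kibana/kibana.yml, change server.port to a port that is reachable from outside and set server.host to 0.0.0.0 to allow access from other servers. The elasticsearch settings can be left alone for now.

Start Kibana with systemctl. To run the binary directly as root instead, use /usr/share/kibana/bin/kibana --allow-root.

    systemctl start kibana.service

The first time the Kibana page is opened, it asks for an Elasticsearch enrollment token. Generate one on the ES server with:

    /usr/share/elasticsearch/bin/elasticsearch-create-enrollment-token -s kibana

Logging in also requires the ES username and password.

After a successful login, the Elasticsearch connection settings are appended automatically at the bottom of /etc/kibana/kibana.yml.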

Install Logstash on each server whose logs need to be collected:

    rpm --import https://artifacts.elastic.co/GPG-KEY-elasticsearch
    cat <<EOF > /etc/yum.repos.d/logstash.repo
    [logstash-8.x]
    name=Elastic repository for 8.x packages
    baseurl=https://artifacts.elastic.co/packages/8.x/yum
    gpgcheck=1
    gpgkey=https://artifacts.elastic.co/GPG-KEY-elasticsearch
    enabled=1
    autorefresh=1
    type=rpm-md
    EOF
    yum install -y logstash
    ln -s /usr/share/logstash/bin/logstash /usr/bin/logstash

Install Filebeat:

    rpm --import https://packages.elastic.co/GPG-KEY-elasticsearch
    cat <<EOF > /etc/yum.repos.d/filebeat.repo
    [elastic-8.x]
    name=Elastic repository for 8.x packages
    baseurl=https://artifacts.elastic.co/packages/8.x/yum
    gpgcheck=1
    gpgkey=https://artifacts.elastic.co/GPG-KEY-elasticsearch
    enabled=1
    autorefresh=1
    type=rpm-md
    EOF
    yum install -y filebeat
    # only needed if the package did not already create /usr/bin/filebeat
    [ -e /usr/bin/filebeat ] || ln -s /usr/share/filebeat/bin/filebeat /usr/bin/filebeat

The data flow is Filebeat -> Logstash -> ES. Filebeat reads the contents of the files in the configured directory and sends them to Logstash, and Logstash sends the data to ES.

Create a directory for the two config files:

    mkdir -m 777 -p /data/logstash

    cat <<EOF >/data/logstash/filebeat.conf
    filebeat.inputs:
    - type: log
      paths:
        - /your_log_path/*.log
    output.logstash:
      hosts: ["127.0.0.1:5044"]
    EOF

    cat <<EOF >/data/logstash/logstash.conf
    # Sample Logstash configuration for creating a simple
    # Beats -> Logstash -> Elasticsearch pipeline.
    input {
      beats {
        port => 5044
        client_inactivity_timeout => 600
      }
    }
    filter {
      mutate {
        remove_field => ["agent", "ecs", "event", "tags", "@version", "input", "log"]
      }
    }
    output {
      elasticsearch {
        hosts => ["https://es_ip_address:es_port"]
        index => "log-from-logstash"
        user => "es_user_name"
        password => "es_password"
        ssl_certificate_authorities => "path_to_es_http_ca.crt"
      }
    }
    EOF
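
Before starting anything, it can help to let both tools parse their config files, since a typo in either one is otherwise easy to miss. The sketch below wraps Logstash's --config.test_and_exit flag and Filebeat's test config subcommand. validate_configs is a hypothetical helper name, and the 127 return code for a missing binary is a convention chosen for this example.

```shell
#!/usr/bin/env bash
# validate_configs LOGSTASH_CONF FILEBEAT_CONF  (hypothetical helper)
# Returns 127 if either binary is missing, otherwise the exit status of the
# first check that fails (0 when both configs parse).
validate_configs() {
  local ls_conf="$1" fb_conf="$2"
  command -v logstash >/dev/null 2>&1 || { echo "logstash not found in PATH" >&2; return 127; }
  command -v filebeat >/dev/null 2>&1 || { echo "filebeat not found in PATH" >&2; return 127; }
  # Parse the pipeline definition and exit without starting it.
  logstash -f "$ls_conf" --config.test_and_exit || return $?
  # Check the Filebeat configuration file.
  filebeat test config -c "$fb_conf" || return $?
}

# Usage (not executed here):
#   validate_configs /data/logstash/logstash.conf /data/logstash/filebeat.conf
```

The Logstash check starts a JVM, so it can take a while to return.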

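The file at path_to_es_http_ca.crt has the same contents as /etc/elasticsearch/certs/http_ca.crt on the ES server. The filter block removes some fields that are not needed.

Start both processes in the background:

    logstash -f /data/logstash/logstash.conf >/dev/null 2>&1 &
    filebeat -e -c /data/logstash/filebeat.conf >/dev/null 2>&1 &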

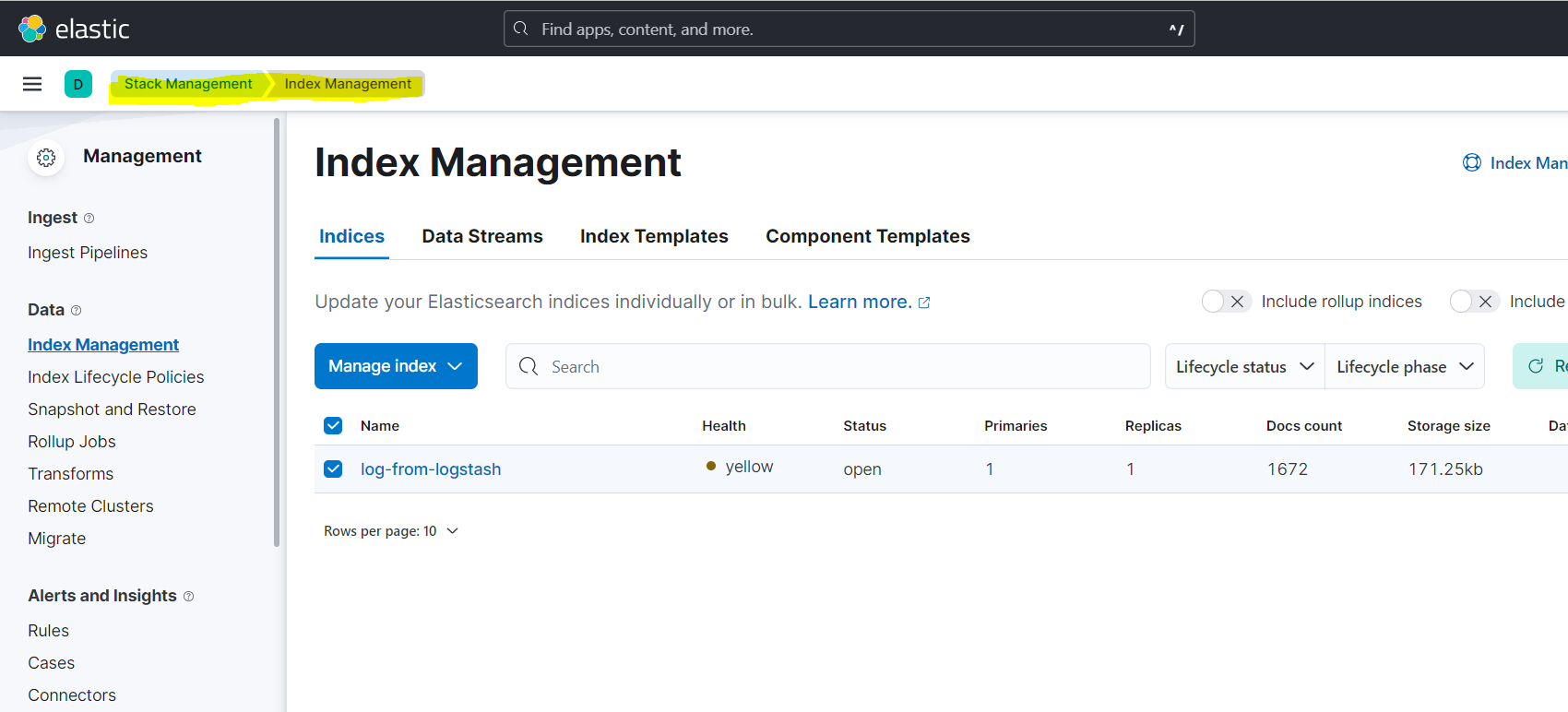
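
Sending all output to /dev/null, as in the start commands above, also hides startup errors. One possible variation keeps each process's output in a log file and records its PID so it can be stopped later. This is only a sketch: start_bg, stop_bg and RUN_DIR are hypothetical names, and the default directory is an assumption.

```shell
#!/usr/bin/env bash
# Directory for log and PID files (assumed location, override as needed).
RUN_DIR="${RUN_DIR:-/data/logstash/run}"

# start_bg NAME COMMAND [ARGS...]
# Runs COMMAND in the background, appends its output to $RUN_DIR/NAME.log
# and writes its PID to $RUN_DIR/NAME.pid.
start_bg() {
  local name="$1"
  shift
  mkdir -p "$RUN_DIR" || return 1
  nohup "$@" >>"$RUN_DIR/$name.log" 2>&1 &
  echo "$!" >"$RUN_DIR/$name.pid"
}

# stop_bg NAME
# Sends SIGTERM to the recorded PID and removes the PID file.
stop_bg() {
  local pidfile="$RUN_DIR/$1.pid"
  [ -f "$pidfile" ] || return 1
  kill "$(cat "$pidfile")" 2>/dev/null
  rm -f "$pidfile"
}

# Usage (not executed here):
#   start_bg logstash logstash -f /data/logstash/logstash.conf
#   start_bg filebeat filebeat -e -c /data/logstash/filebeat.conf
#   stop_bg filebeat
```

Since filebeat -e writes its log to stderr, that output ends up in filebeat.log under RUN_DIR with this approach.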

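Once both are running, copy a few files into the log path configured in filebeat.conf as a test. If that path already contains log files, those work as well. Either way, Kibana shows that the configured index has been created automatically: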

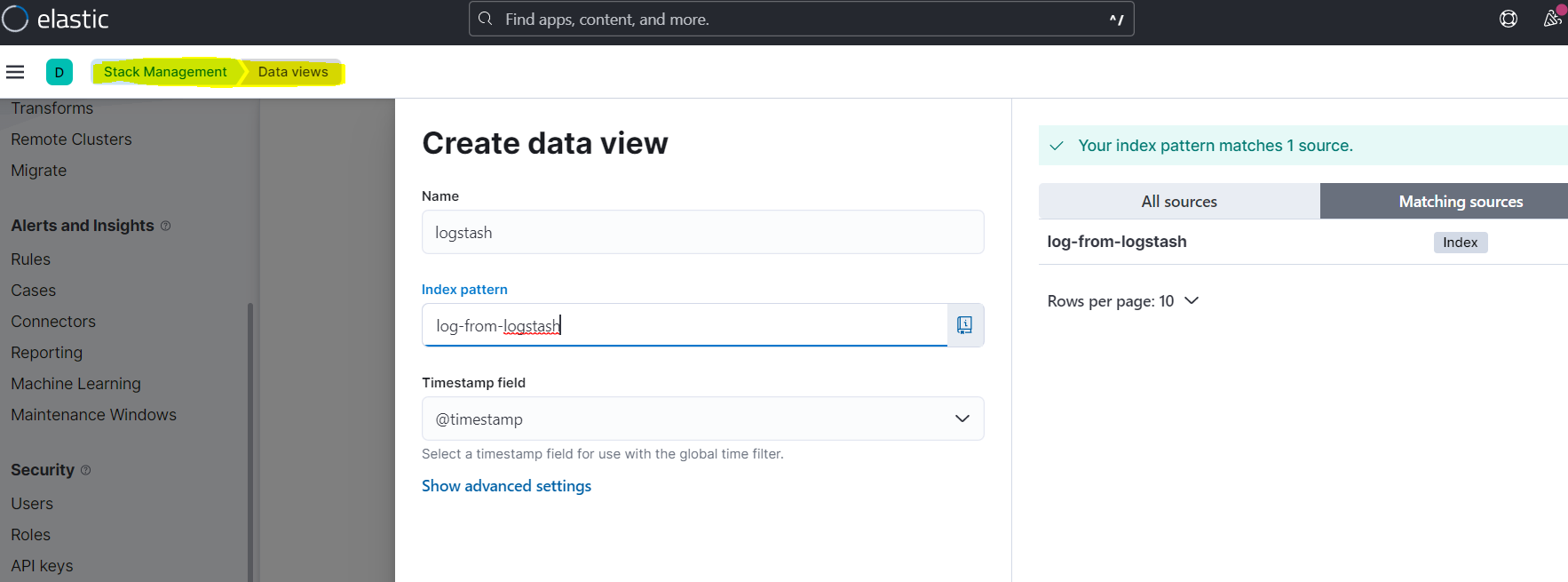
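
If no real log files are at hand, test data can be generated instead of copied. A small sketch, assuming a hypothetical helper write_sample_logs and an arbitrary file name sample.log, which only has to match the *.log pattern from filebeat.conf:

```shell
#!/usr/bin/env bash
# write_sample_logs DIR COUNT  (hypothetical helper)
# Appends COUNT timestamped test lines to DIR/sample.log.
write_sample_logs() {
  local dir="$1" count="$2" i=1
  mkdir -p "$dir" || return 1
  while [ "$i" -le "$count" ]; do
    printf '%s INFO test message %d\n' "$(date '+%Y-%m-%dT%H:%M:%S')" "$i" >>"$dir/sample.log"
    i=$((i + 1))
  done
}

# Usage (not executed here; path, user and address are the placeholders
# used in filebeat.conf and logstash.conf):
#   write_sample_logs /your_log_path 5
#   curl --cacert path_to_es_http_ca.crt -u es_user_name \
#     "https://es_ip_address:es_port/log-from-logstash/_count"
```

The _count request is a quick way to confirm from the command line that documents reached the index, without opening Kibana.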

Create a Data View to browse the documents in the index:

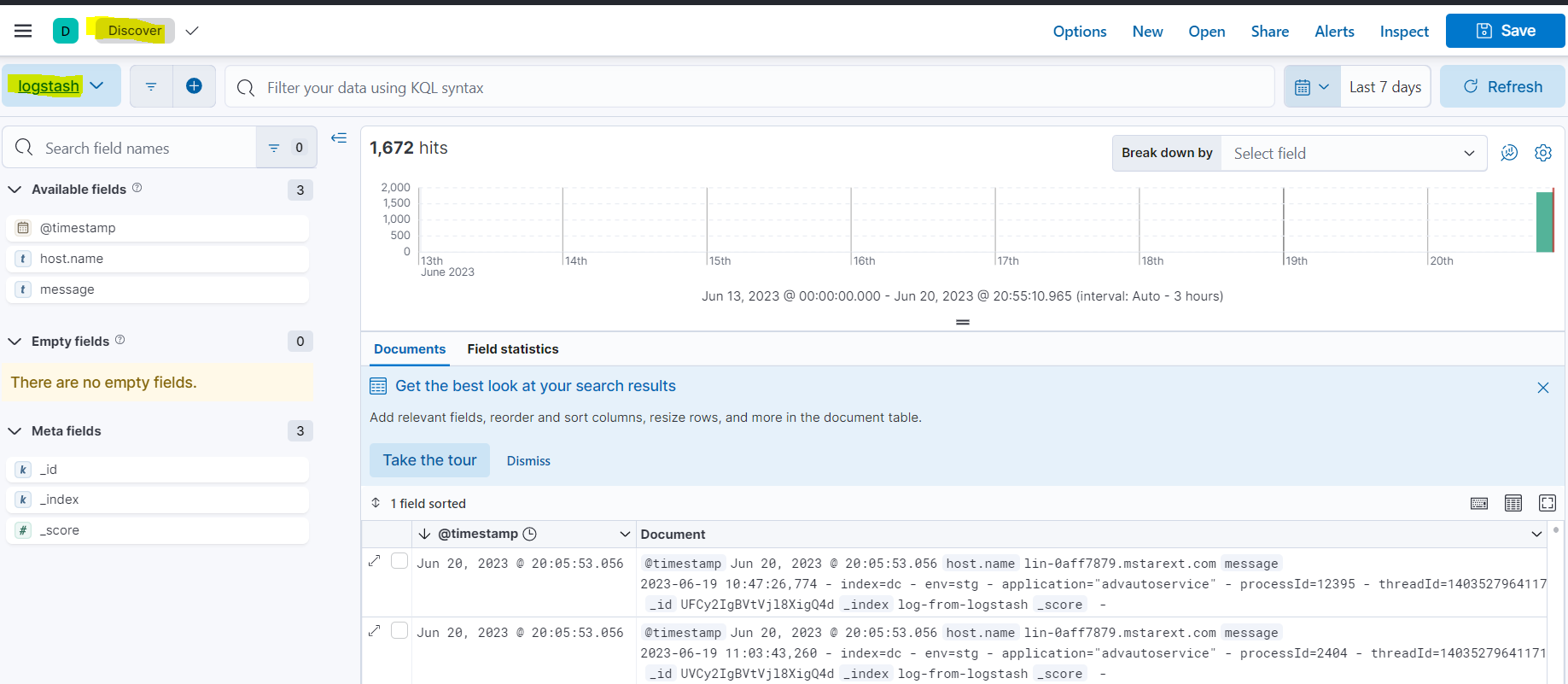

In Discover, select the data view you created to see the data.
