Code implementation:

    import timeit

    import matplotlib
    import tensorflow as tf
    from matplotlib import pyplot as plt

    # Default parameters for plots
    matplotlib.rcParams['font.size'] = 20
    matplotlib.rcParams['figure.titlesize'] = 20
    matplotlib.rcParams['figure.figsize'] = [9, 7]

    cpu_data = []
    gpu_data = []
    for i in range(9):
        n = 10**i
        # Create the two matrices used for the CPU computation
        with tf.device('/cpu:0'):
            cpu_a = tf.random.normal([1, n])
            cpu_b = tf.random.normal([n, 1])
            print(cpu_a.device, cpu_b.device)
        # Create the two matrices used for the GPU computation
        with tf.device('/gpu:0'):
            gpu_a = tf.random.normal([1, n])
            gpu_b = tf.random.normal([n, 1])
            print(gpu_a.device, gpu_b.device)

        def cpu_run():
            with tf.device('/cpu:0'):
                c = tf.matmul(cpu_a, cpu_b)
            return c

        def gpu_run():
            with tf.device('/gpu:0'):
                c = tf.matmul(gpu_a, gpu_b)
            return c

        # The first runs are a warm-up, so initialization time is not counted
        cpu_time = timeit.timeit(cpu_run, number=10)
        gpu_time = timeit.timeit(gpu_run, number=10)
        print('warmup:', cpu_time, gpu_time)
        # Timed run: 10 calls, averaged when stored below
        cpu_time = timeit.timeit(cpu_run, number=10)
        gpu_time = timeit.timeit(gpu_run, number=10)
        print('run time:', cpu_time, gpu_time)
        cpu_data.append(cpu_time / 10)
        gpu_data.append(gpu_time / 10)
        # Release the tensors before the next, larger size is allocated
        del cpu_a, cpu_b, gpu_a, gpu_b

    x = [10**i for i in range(9)]
    # Convert seconds to milliseconds
    cpu_data = [1000 * t for t in cpu_data]
    gpu_data = [1000 * t for t in gpu_data]
    plt.plot(x, cpu_data, color='C1', marker='s', label='CPU')
    plt.plot(x, gpu_data, color='C0', marker='^', label='GPU')
    plt.gca().set_xscale('log')
    plt.gca().set_yscale('log')
    # A log axis cannot start at 0, so only the upper limit is set
    plt.ylim(top=100)
    plt.xlabel('Matrix size n: (1xn)@(nx1)')
    plt.ylabel('Run time (ms)')
    plt.legend()
    plt.savefig('gpu-time.svg')
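The warm-up-then-measure pattern in the script can be factored into a small helper. What follows is a minimal sketch, not the author's code: the name `average_ms` and its `warmup` and `runs` parameters are hypothetical, and it relies only on the standard-library `timeit` module.

```python
import timeit


def average_ms(fn, warmup=10, runs=10):
    """Return the average wall-clock time of fn() in milliseconds.

    Hypothetical helper: fn is first called `warmup` times and that
    time is discarded, then `runs` calls are timed and averaged.
    """
    # Warm-up calls: executed, but their duration is ignored
    timeit.timeit(fn, number=warmup)
    # Timed calls: timeit returns the total time in seconds
    total_seconds = timeit.timeit(fn, number=runs)
    return 1000 * total_seconds / runs


if __name__ == "__main__":
    # Stand-in workload; in the benchmark this would be cpu_run or gpu_run
    print(average_ms(lambda: sum(range(1000))))
```

Assuming such a helper, each loop iteration could store `average_ms(cpu_run)` and `average_ms(gpu_run)` directly. The values would already be in milliseconds, so the later multiplication by 1000 would no longer be needed.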

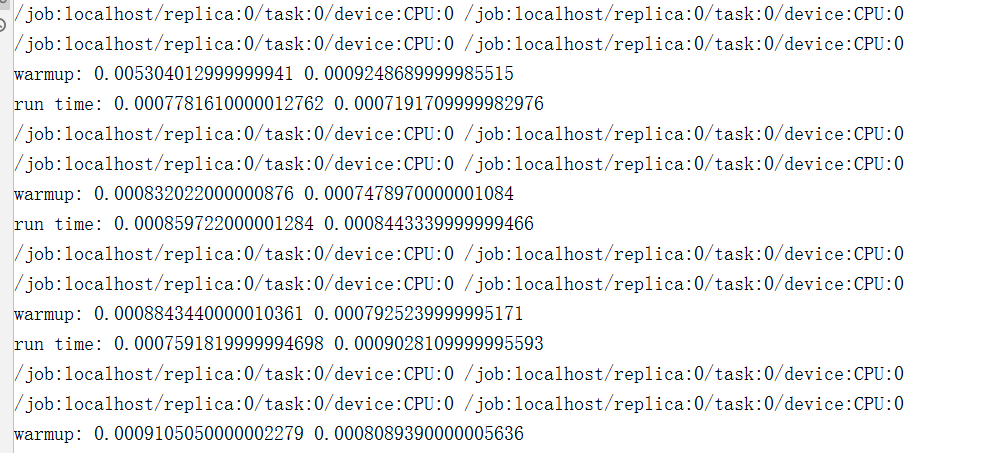

执行结果:

This article is from 博客园 (cnblogs), author: 大码王. When reposting, please cite the original link: https://www.cnblogs.com/huanghanyu/
