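```python
import jieba  # import jieba for Chinese word segmentation

# Read the whole text; the with-statement closes the file afterwards
with open('tomato.txt', 'r', encoding='UTF-8') as f:
    text = f.read()
# Alternative with an absolute path:
# with open('C:\\Users\\MyPC\\PycharmProjects\\untitled3\\tomato.txt', 'r', encoding='UTF-8') as f:
#     text = f.read()

# text1 = text.decode(["encoding"])  # decode: not needed in Python 3, open() already decodes

# Remove punctuation and special symbols (ASCII and full-width forms)
fo = ''',，。“”?？!！:：;；'''
for ch in fo:
    text = text.replace(ch, '')

Story = text  # segment the cleaned string, not the file object f
print(list(jieba.cut(Story)))
```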

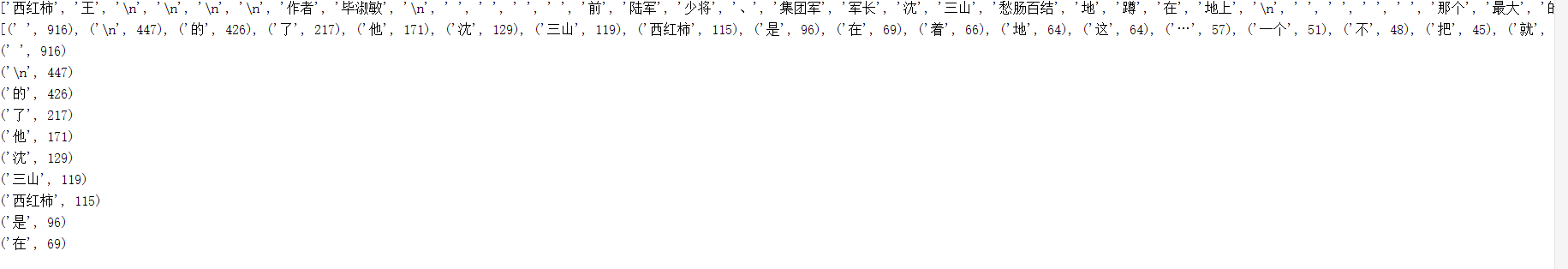
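The replace loop above scans the whole text once per punctuation mark. As a hedged alternative (a sketch, not the original code), `str.maketrans` with a third argument builds a table of characters to delete, and `str.translate` removes them all in a single pass. The helper name `strip_punct` and the exact contents of `PUNCT` are assumptions for illustration:

```python
# Hypothetical set of punctuation to delete (ASCII and full-width forms)
PUNCT = ',，。“”?？!！:：;；'


def strip_punct(text: str, punct: str = PUNCT) -> str:
    """Remove every character in `punct` from `text` in one pass."""
    table = str.maketrans('', '', punct)  # third argument lists characters to delete
    return text.translate(table)


print(strip_punct('他说:“你好!”'))  # 他说你好
```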

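```python
List = list(jieba.cut(Story))  # segment once and reuse the token list
strset = set(List)             # distinct words

# Count each distinct word once (looping over List would recount every repeat)
strDict = {}
for word in strset:
    strDict[word] = List.count(word)

# Sort by word frequency, highest first
wcList = list(strDict.items())
wcList.sort(key=lambda x: x[1], reverse=True)
```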
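The counting loop and the sort can also be condensed with `collections.Counter`, which counts in one pass over the token list, and `most_common(n)`, which returns the top `n` `(word, count)` pairs already sorted. This is a sketch, not the original code: the helper `top_words` is hypothetical, and the `min_len` filter that drops whitespace and single-character tokens (such as 的) is an added assumption, so its output differs from the loop above.

```python
from collections import Counter


def top_words(tokens, n=10, min_len=2):
    """Return the n most frequent tokens as (word, count) pairs.

    Assumption: tokens shorter than min_len after stripping whitespace
    are ignored, which drops newlines, spaces and one-character words.
    """
    counts = Counter(t for t in tokens if len(t.strip()) >= min_len)
    return counts.most_common(n)


tokens = ['番茄', '的', '番茄', '炒蛋', '的', '番茄']
print(top_words(tokens, n=2))  # [('番茄', 3), ('炒蛋', 1)]
```

With real data, `tokens` would be the `List` produced by `jieba.cut`.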

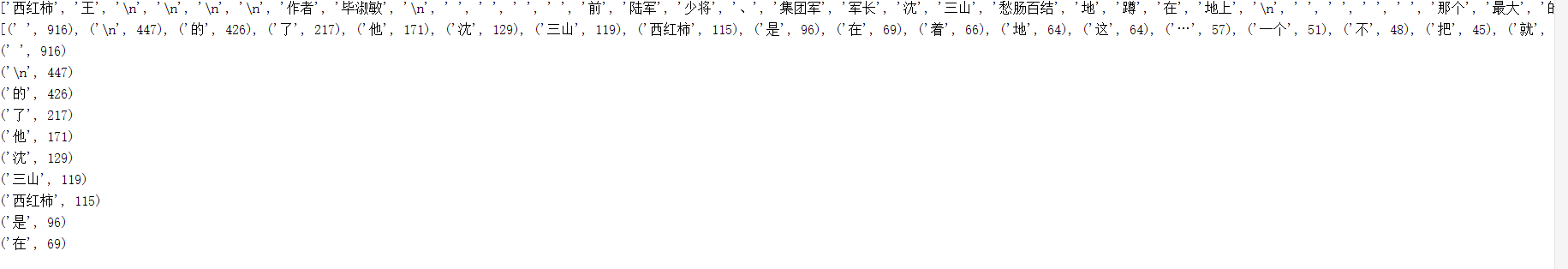

```python
print(wcList)

# Print the 10 most frequent words; slicing avoids IndexError if there are fewer than 10
for item in wcList[:10]:
    print(item)
```

Result: