数据分类实验的python程序

实验设置要求:

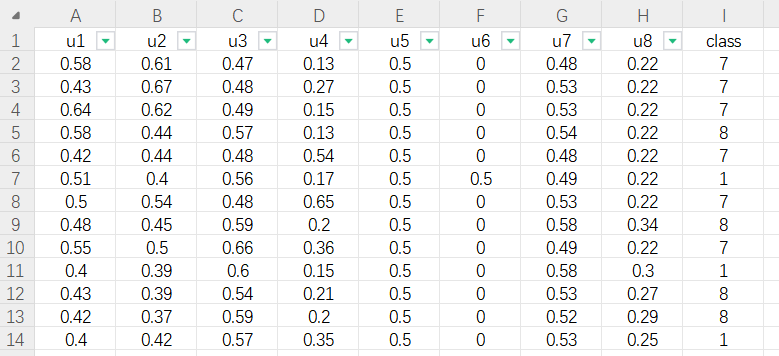

- 数据集:共12个,从本地文件夹中包含若干个以xlsx为后缀的Excel文件,每个文件中有一个小规模数据,有表头,最后一列是分类的类别class,其他列是特征,数值的。

- 实验方法:XGBoost、AdaBoost、SVM (采用rbf核)、Neural Network分类器

- 输出:分类准确率,即十折交叉验证的准确率均值和方差,并重复5次实验,不同数据的实验结果分别保存至各自的一个csv文件。

- 其他要求:SVC增加rbf参数设置,默认为0.001、MLPClassifier为1层隐层神经网络,隐层节点为100. XGBoost和AdaBoost弱分类器设置。 cross_val_score增加数据标准化和n_jobs设置。由于数据的类别可能是非连续的字符形式,增加class的映射

Excel中的数据形式如下:

python程序如下:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 | import osimport pandas as pdimport numpy as npfrom sklearn.model_selection import KFold, cross_val_scorefrom sklearn.svm import SVCfrom sklearn.ensemble import AdaBoostClassifierfrom sklearn.neural_network import MLPClassifierimport xgboost as xgbfrom sklearn.preprocessing import StandardScaler, LabelEncoderfrom sklearn.pipeline import Pipelinefrom concurrent.futures import ThreadPoolExecutor# 1. 读取文件夹中的所有.xlsx文件data_folder = "./分类数据集" file_list = [f for f in os.listdir(data_folder) if f.endswith('.xlsx')]result_folder = "./results"# 定义分类器classifiers = { "XGBoost": xgb.XGBClassifier(), "AdaBoost": AdaBoostClassifier(n_estimators=50), #https://scikit-learn.org/stable/modules/generated/sklearn.ensemble.AdaBoostClassifier.html "SVM_rbf": SVC(C=1.0, kernel="rbf", gamma='scale'), #gamma默认为1 / (n_features * X.var()), #可以根据数据集进行调整 "Neural_Network": MLPClassifier(hidden_layer_sizes=(100), max_iter=1000)}# # 对每一个文件进行分类实验# def process_file(file):# 对每一个文件进行分类实验for file in file_list: df = pd.read_excel(os.path.join(data_folder, file)) X = df.iloc[:, :-1].values y = df.iloc[:, -1].values # 类别映射 le = LabelEncoder() y = le.fit_transform(y) results = { "Classifier": [], "Experiment 1": [], "Experiment 2": [], "Experiment 3": [], "Experiment 4": [], "Experiment 5": [], "Mean Accuracy": [], "Accuracy Variance": [] } # 使用四种分类器 for clf_name, clf in classifiers.items(): all_accuracies = [] # 使用标准化和分类器创建流水线 pipeline = Pipeline([ ('scaler', StandardScaler()), ('classifier', clf) ]) # 重复5次实验 for exp_num in range(1, 6): print(clf_name, exp_num) kf = KFold(n_splits=10, shuffle=True, random_state=None) accuracies = cross_val_score(pipeline, X, y, cv=kf,n_jobs=16) results[f"Experiment {exp_num}"].append(np.mean(accuracies)) all_accuracies.extend(accuracies) results["Classifier"].append(clf_name) results["Mean Accuracy"].append(np.mean(all_accuracies)) results["Accuracy Variance"].append(np.var(all_accuracies)) # 保存到.csv文件 result_df = pd.DataFrame(results) result_df.to_csv(os.path.join(result_folder, f"results_{file.replace('.xlsx', '.csv')}"), index=False)# # 使用多线程处理文件# with ThreadPoolExecutor() as executor:# executor.map(process_file, file_list)print("Experiments completed!") |

标签:

machine learning

【推荐】国内首个AI IDE,深度理解中文开发场景,立即下载体验Trae

【推荐】编程新体验,更懂你的AI,立即体验豆包MarsCode编程助手

【推荐】抖音旗下AI助手豆包,你的智能百科全书,全免费不限次数

【推荐】轻量又高性能的 SSH 工具 IShell:AI 加持,快人一步

· 阿里最新开源QwQ-32B,效果媲美deepseek-r1满血版,部署成本又又又降低了!

· 开源Multi-agent AI智能体框架aevatar.ai,欢迎大家贡献代码

· Manus重磅发布:全球首款通用AI代理技术深度解析与实战指南

· 被坑几百块钱后,我竟然真的恢复了删除的微信聊天记录!

· 没有Manus邀请码?试试免邀请码的MGX或者开源的OpenManus吧