102102151 黄靖 作业二

要求:在中国气象网(http://www.weather.com.cn)给定城市集合的7日天气预报,并保存在数据库。

Gitee:https://gitee.com/HJ_orange/crawl_project/tree/master/实践作业2

代码如下

from bs4 import BeautifulSoup

from bs4 import UnicodeDammit

import urllib.request

import sqlite3

# 创建 SQLite 数据库连接

conn = sqlite3.connect('weather_data.db')

cursor = conn.cursor()

# 创建一个表用于存储天气数据

cursor.execute('''

CREATE TABLE IF NOT EXISTS WeatherData (

id INTEGER PRIMARY KEY,

area TEXT,

date TEXT,

weather TEXT,

temperature TEXT

)

''')

url = "http://www.weather.com.cn/weather/101010100.shtml"

try:

headers = {

"User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/117.0.0.0 Safari/537.36 Edg/117.0.2045.47"}

req = urllib.request.Request(url, headers=headers)

data = urllib.request.urlopen(req)

data = data.read()

dammit = UnicodeDammit(data, ["utf-8", "gbk"])

data = dammit.unicode_markup

soup = BeautifulSoup(data, "lxml")

lis = soup.select("ul[class='t clearfix'] li")

i = 0

print("{:<10}\t{:<10}\t{:<10}\t\t{:<10}{:<10}".format("id","地区","日期","天气信息","温度"))

for li in lis:

try:

i = i + 1

date = li.select('h1')[0].text

weather = li.select('p[class="wea"]')[0].text

temp = li.select('p[class="tem"] span')[0].text + "/" + li.select('p[class="tem"] i')[0].text

# 将数据插入到数据库中

cursor.execute('''

INSERT INTO WeatherData (area, date, weather, temperature) VALUES (?, ?, ?, ?)

''', ("北京", date, weather, temp))

conn.commit() # 提交事务

print("{:<10}\t{:<10}\t{:<10}\t{:<10}\t{:<10}".format(i, "北京", date, weather, temp))

except Exception as err:

print(err)

except Exception as err:

print(err)

finally:

# 关闭数据库连接

conn.close()

conn = sqlite3.connect('weather_data.db')

c = conn.cursor()

for row in c.execute('SELECT * FROM WeatherData'):

print(row)

conn.close()

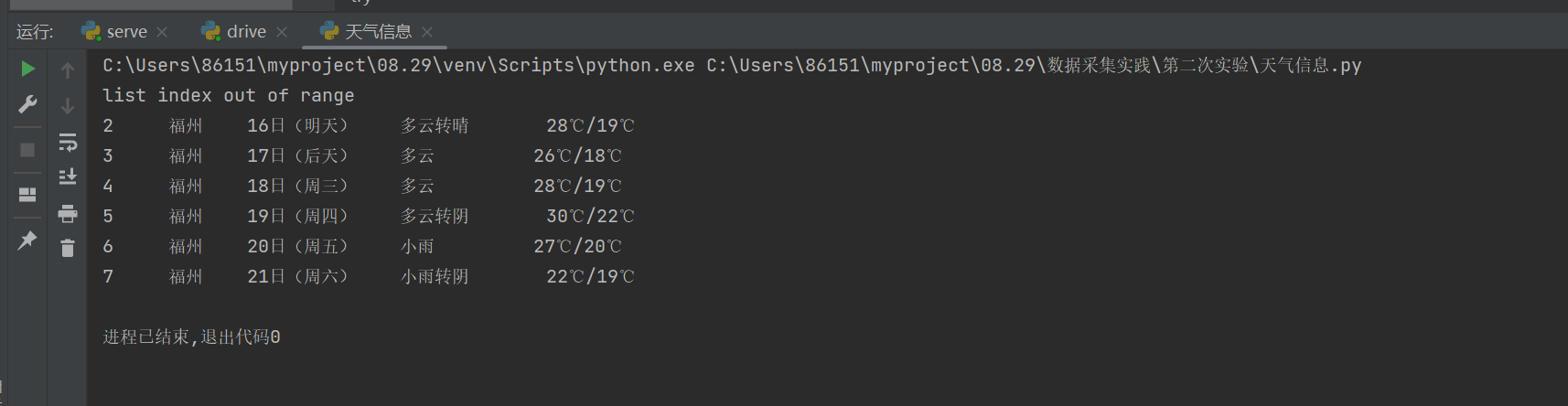

运行结果

心得体会

这是比较简单的复现,总体来说难度不大,让我对beautiful soup库理解更为深刻,能更熟练的从html中解析文档,并从中提取到所需数据;

同时也熟悉了sqlite3库的基本操作,包括如何连接表,创建表。

Task2

要求:用requests和自选提取信息方法定向爬取股票相关信息,并存储在数据库中。

候选网站:东方财富网:https://www.eastmoney.com/新浪股票:http://finance.sina.com.cn/stock/

Gitee:https://gitee.com/HJ_orange/crawl_project/tree/master/实践作业2

代码如下

import requests

import json

import sqlite3

# 创建一个SQLite数据库连接

conn = sqlite3.connect('my_database.db')

cursor = conn.cursor()

# 定义数据库表结构

cursor.execute('''CREATE TABLE IF NOT EXISTS my_table (

id INTEGER PRIMARY KEY,

code TEXT,

name TEXT,

latest_price REAL,

change_percent REAL,

change_amount REAL,

volume INTEGER,

turnover REAL,

amplitude REAL,

high REAL,

low REAL,

opening_price REAL,

yesterday_close REAL,

volume_ratio REAL,

turnover_rate REAL,

pe_ratio REAL,

pb_ratio REAL

)''')

headers = {

'user-agent' :'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/117.0.0.0 Safari/537.36 Edg/117.0.2045.47',

'cookie' : 'qgqp_b_id=a7c5d47be8ad882fee56fc695bab498d; st_si=17803153105309; st_asi=delete; HAList=ty-0-300045-%u534E%u529B%u521B%u901A; st_pvi=56620321867111; st_sp=2023-10-07%2015%3A19%3A51; st_inirUrl=https%3A%2F%2Fwww.eastmoney.com%2F; st_sn=52; st_psi=20231007155656228-113200301321-9129123788'

}

keypage = input("请输入要搜索的特定页面(用空格分隔):")

searchlist = list(map(int, keypage.split()))

for page in searchlist:

response = requests.get(url=f'http://76.push2.eastmoney.com/api/qt/clist/get?cb=jQuery112405166990298085778_1696666115151&pn={page}&pz=20&po=1&np=1&ut=bd1d9ddb04089700cf9c27f6f7426281&fltt=2&invt=2&wbp2u=|0|0|0|web&fid=f3&fs=m:0+t:6,m:0+t:80,m:1+t:2,m:1+t:23,m:0+t:81+s:2048&fields=f1,f2,f3,f4,f5,f6,f7,f8,f9,f10,f12,f13,f14,f15,f16,f17,f18,f20,f21,f23,f24,f25,f22,f11,f62,f128,f136,f115,f152&_=1696666115152',headers=headers)

data = response.text

start = response.text.find('(')

end = response.text.rfind(')')

data = response.text[start + 1:end]

data = json.loads(data)

data = data['data']['diff']

plist = ['f12','f14','f2','f3','f4','f5','f6','f7','f15','f16','f17','f18','f10','f8','f9','f23']

for i in range(len(data)):

row = (i + 1,) + tuple(data[i][j] for j in plist)

cursor.execute('INSERT INTO my_table VALUES (?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?,?)', row)

conn.commit() # 提交更改并关闭连接

conn.close()

print("{:<10}{:<10}{:<10}{:<10}{:<10}{:<10}{:<10}{:<10}{:<10}{:<10}{:<10}{:<10}{:<10}{:<10}{:<10}{:<10}{:<10}".format("序号","代码","名称","最新价","涨跌幅","涨跌额","成交量","成交额","振幅","最高","最低","今开","昨收","量比","换手率","市盈率","市净率"))

for i in range(len(data)):

slist = []

print("{:<10}".format(i+1),end="")

for j in plist:

slist.append(data[i][j])

for k in slist:

print("{:<10}".format(k),end="")

print()

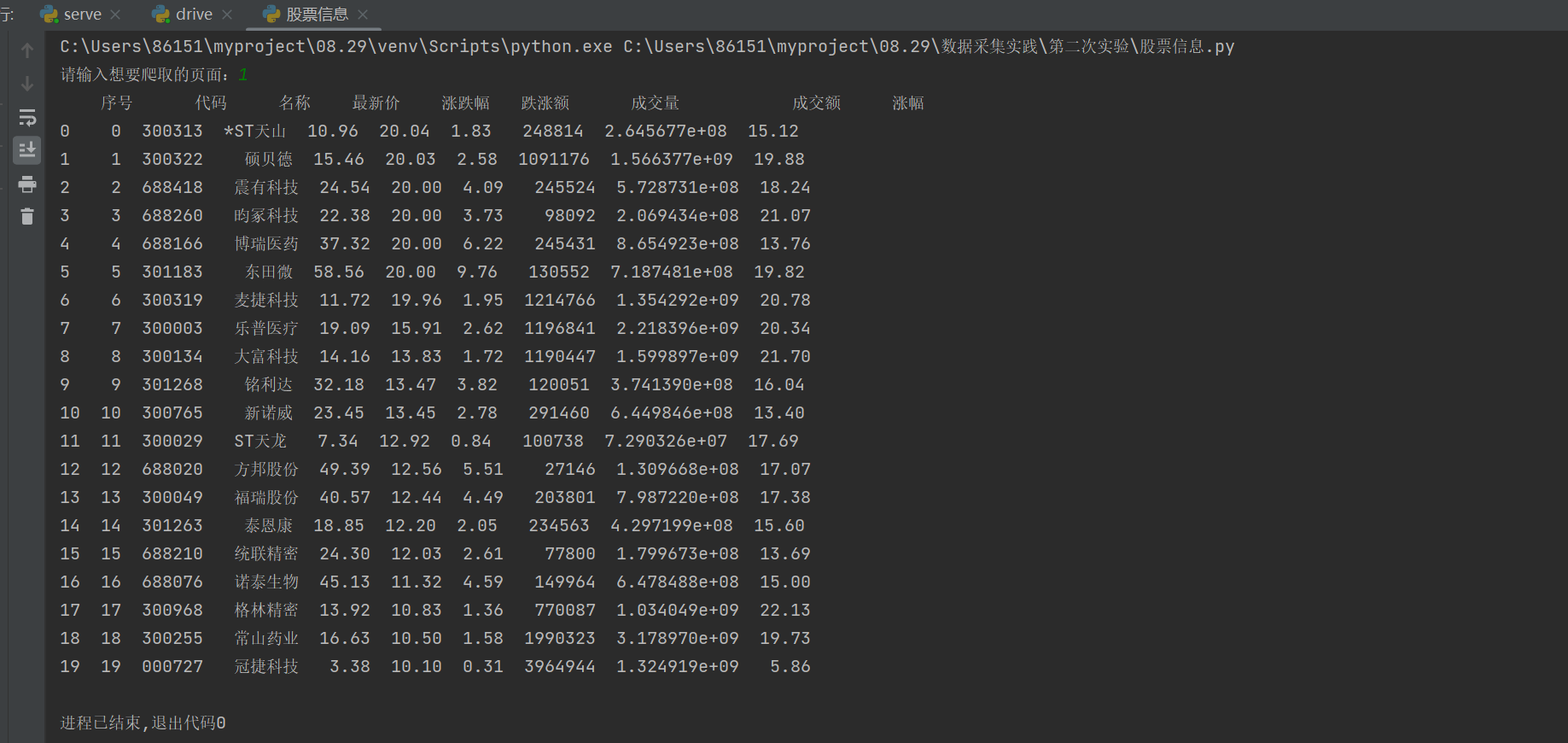

运行结果

心得体会

相比前面的方法 不一样的点在于通过js文件获取到所要爬取数据的url,了解了更多js文件提取信息方面的方法。

同时也需要理解接收服务器json响应的过程,并使用正则表达式去除外层函数调用。

Task3

要求:爬取中国大学2021主榜(https://www.shanghairanking.cn/rankings/bcur/2021)所有院校信息,并存储在数据库中,同时将浏览器F12调试分析的过程录制Gif加入至博客中。

Gitee:https://gitee.com/HJ_orange/crawl_project/tree/master/实践作业2

代码如下:

import requests

import re

import sqlite3

headers = {

'user-agent':'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/118.0.0.0 Safari/537.36 Edg/118.0.2088.46'

}

conn = sqlite3.connect('daxue.db')

cursor = conn.cursor()

cursor.execute('''CREATE TABLE IF NOT EXISTS daxue (

id INTEGER PRIMARY KEY,

name text,

score text

)''')

url = 'https://www.shanghairanking.cn/_nuxt/static/1697106492/rankings/bcur/2021/payload.js'

response = requests.get(url=url,headers=headers)

data = response.text

namelist = re.findall("univNameCn:(.*?),univNameEn:",data)

scorelist = re.findall("score:(.*?),",data)

print("{:<10}{:<10}{:<10}".format("排名","学校","总分"))

x = 0

for i,j in zip(namelist,scorelist):

x=x+1

print("{:<10}{:<10}{:<10}".format(x,i,j))

row = (x,i,j)

cursor.execute('INSERT INTO daxue VALUES (?, ?, ?)', row)

conn.commit() # 提交更改并关闭连接

conn.close()

抓包过程

心得体会

在这个实验中更加深刻的体会到正则表达式匹配在一些特殊情况下的优越性。