Using a trained mask_rcnn model for inference


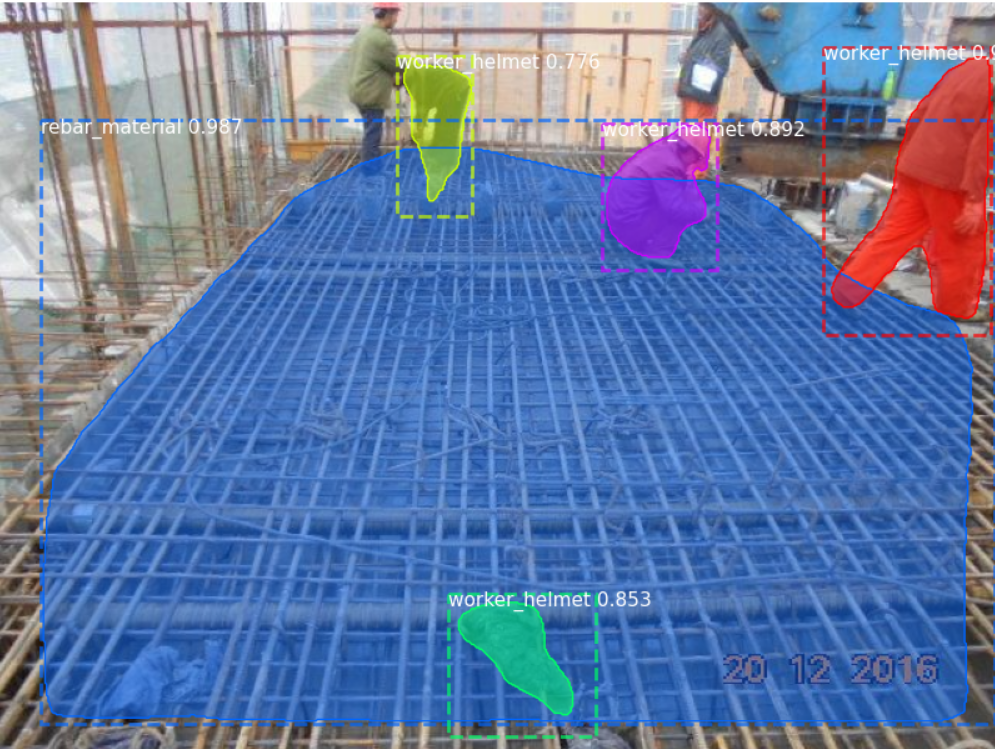

```python
# -*- coding: utf-8 -*-
import os
import random
from datetime import datetime

import skimage.color
import skimage.io

# Import Mask RCNN
from mrcnn.config import Config
import mrcnn.model as modellib
from mrcnn import visualize

# Root directory of the project
ROOT_DIR = os.getcwd()

# Directory to save logs and trained model
MODEL_DIR = os.path.join(ROOT_DIR, "logs")

# Local path to the trained weights file (our own trained weights,
# not the COCO weights, despite the variable name)
COCO_MODEL_PATH = os.path.join(MODEL_DIR, "lh0050.h5")

# Directory of images to run detection on
IMAGE_DIR = os.path.join(ROOT_DIR, "images")


class ShapesConfig(Config):
    """Configuration used when training the model.

    Derived from the toy shapes example. Inference must use the same values
    (class count, image size, anchors) that were used for training.
    """
    # Give the configuration a recognizable name
    NAME = "shapes"

    # 1 GPU with 1 image per GPU, so batch size is 1 (GPUs * images/GPU).
    GPU_COUNT = 1
    IMAGES_PER_GPU = 1

    # Number of classes (including background): background + 18 classes.
    # Must match the class count the weights were trained with.
    NUM_CLASSES = 1 + 18

    # Limits for the small side and the large side of the image;
    # together they determine the input image shape.
    IMAGE_MIN_DIM = 320
    IMAGE_MAX_DIM = 640

    # Anchor side lengths in pixels: the shapes-sample scales multiplied
    # by 6, i.e. (48, 96, 192, 384, 768)
    RPN_ANCHOR_SCALES = (8 * 6, 16 * 6, 32 * 6, 64 * 6, 128 * 6)

    # Training ROIs per image (only used during training)
    TRAIN_ROIS_PER_IMAGE = 100

    # Steps per epoch and validation steps (only used during training)
    STEPS_PER_EPOCH = 100
    VALIDATION_STEPS = 50


class InferenceConfig(ShapesConfig):
    # Set batch size to 1 since we'll be running inference on
    # one image at a time. Batch size = GPU_COUNT * IMAGES_PER_GPU
    GPU_COUNT = 1
    IMAGES_PER_GPU = 1


config = InferenceConfig()

# Create model object in inference mode.
model = modellib.MaskRCNN(mode="inference", model_dir=MODEL_DIR, config=config)

# Load the trained weights
model.load_weights(COCO_MODEL_PATH, by_name=True)

# Class names: the index in the list is the class ID, so the order must match
# the order used during training. Note that this list has 1 + 24 entries while
# NUM_CLASSES is 1 + 18, so the model can only predict IDs 0-18.
class_names = ['bg', 'worker_helmet', 'worker_nohelmet', 'rebar_working', 'rebar_material',
               'steel', 'concrete_pouring', 'formwork_working', 'formwork_material', 'scaffolding',
               'excavator', 'bulldozer', 'dump_truck', 'concrete_bucket', 'concrete_mixer',
               'concrete_pump', 'tower_crane', 'crane', 'machine_other',
               'pile', 'container', 'concrete_mixing_station', 'construction_base', 'beam', 'pile_cap']

# Load a random image from the images folder
file_names = next(os.walk(IMAGE_DIR))[2]
image = skimage.io.imread(os.path.join(IMAGE_DIR, random.choice(file_names)))
# Mask R-CNN expects a 3-channel RGB image: convert grayscale, drop alpha
if image.ndim != 3:
    image = skimage.color.gray2rgb(image)
if image.shape[-1] == 4:
    image = image[..., :3]

a = datetime.now()
# Run detection
results = model.detect([image], verbose=1)
b = datetime.now()
# total_seconds() keeps sub-second precision; .seconds truncates to whole seconds
print("times:", (b - a).total_seconds())

# Visualize results
r = results[0]
visualize.display_instances(image, r['rois'], r['masks'], r['class_ids'],
                            class_names, r['scores'])
```
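`visualize.display_instances` only draws the results. To also get them as text (for logging, or for counting how many `worker_nohelmet` detections an image contains), the result dict can be walked directly. The sketch below assumes the result layout of the Matterport Mask R-CNN implementation (`rois` as `(N, 4)` boxes in `y1, x1, y2, x2` order, `class_ids` and `scores` as `(N,)`, `masks` as `(H, W, N)`); `summarize_detections` and its `min_score` threshold are hypothetical helpers, not part of `mrcnn`, and the demo data is made up.

```python
import numpy as np


def summarize_detections(r, class_names, min_score=0.5):
    """Return (class_name, score, box, mask_pixels) rows for one result dict.

    Assumed layout (Matterport Mask R-CNN): r["rois"] is (N, 4) in
    y1, x1, y2, x2 order, r["class_ids"] and r["scores"] are (N,),
    r["masks"] is a boolean (H, W, N) array.
    """
    rows = []
    for i in range(len(r["class_ids"])):
        score = float(r["scores"][i])
        if score < min_score:
            continue  # skip low-confidence detections
        class_id = int(r["class_ids"][i])
        # Fall back to a placeholder if class_names is shorter than the model's class count
        if class_id < len(class_names):
            name = class_names[class_id]
        else:
            name = f"id_{class_id}"
        box = tuple(int(v) for v in r["rois"][i])  # (y1, x1, y2, x2)
        mask_pixels = int(r["masks"][:, :, i].sum())  # area of the instance mask
        rows.append((name, score, box, mask_pixels))
    return rows


# Synthetic example (made-up numbers, not real model output): two detections on a 4x4 image
demo_masks = np.zeros((4, 4, 2), dtype=bool)
demo_masks[0:2, 0:2, 0] = True
demo_masks[1:4, 1:4, 1] = True
demo = {
    "rois": np.array([[0, 0, 2, 2], [1, 1, 4, 4]]),
    "class_ids": np.array([1, 10]),
    "scores": np.array([0.95, 0.30]),
    "masks": demo_masks,
}
print(summarize_detections(demo, ["bg", "worker_helmet"]))
# [('worker_helmet', 0.95, (0, 0, 2, 2), 4)]
```

With the script above it would be called as `summarize_detections(r, class_names)`.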

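The script times a single `model.detect` call with `datetime`. The first call often includes one-off setup cost (graph construction, GPU initialisation), so a single measurement can overstate the per-image time. A minimal sketch, assuming plain wall-clock timing is enough: `time_calls` is a hypothetical helper that makes some untimed warm-up calls and then times several repeats with `time.perf_counter`.

```python
import time


def time_calls(fn, *args, repeats=3, warmup=1):
    """Run fn(*args) `warmup` times untimed, then `repeats` times timed.

    Returns the wall-clock duration of each timed call in seconds.
    """
    for _ in range(warmup):
        fn(*args)  # warm-up: absorbs one-off setup cost, result discarded
    durations = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn(*args)
        durations.append(time.perf_counter() - start)
    return durations


# Stand-in workload for illustration; with the script above this would be
# time_calls(model.detect, [image], repeats=5)
durations = time_calls(sum, range(100000), repeats=5)
print(f"min {min(durations):.6f}s, mean {sum(durations) / len(durations):.6f}s")
```

Reporting the minimum or mean of several timed runs is usually more stable than a single measurement.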

QQ 3087438119

Zhejiang Public Security Network Record No. 33010602011771
