【人工智能导论:模型与算法】MOOC 8.3 误差后向传播(BP) 例题 编程验证 Pytorch版本

上一篇文章【人工智能导论:模型与算法】MOOC 8.3 误差后向传播(BP) 例题 编程验证 - HBU_DAVID - 博客园 (cnblogs.com)

使用python实现,主要是为了观察链式求导的过程。

链式法则求导还是比较麻烦的,特别是层数比较深的时候,计算量很大,过程也很复杂,编程实现非常困难。

真正在做深度学习时,不需要编程实现求梯度过程。框架可以通过计算图自动求导,大大提高了效率。

以pytorch为例:

构建好计算图之后,求梯度只需一句话:L.backward()。

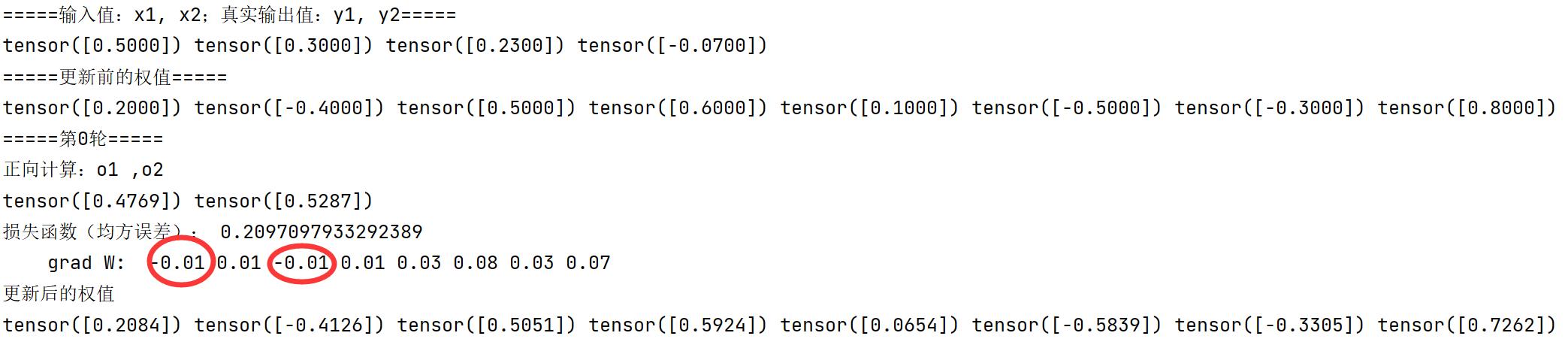

【存在问题】:第一轮求出的梯度,w1,w3是正确值的相反数,其他参数的梯度都是正确的。应该是还有理解不到位的地方。

源代码如下:

# https://blog.csdn.net/qq_41033011/article/details/109325070 # https://github.com/Darwlr/Deep_learning/blob/master/06%20Pytorch%E5%AE%9E%E7%8E%B0%E5%8F%8D%E5%90%91%E4%BC%A0%E6%92%AD.ipynb # torch.nn.Sigmoid(h_in) import torch x1, x2 = torch.Tensor([0.5]), torch.Tensor([0.3]) y1, y2 = torch.Tensor([0.23]), torch.Tensor([-0.07]) print("=====输入值:x1, x2;真实输出值:y1, y2=====") print(x1, x2, y1, y2) w1, w2, w3, w4, w5, w6, w7, w8 = torch.Tensor([0.2]), torch.Tensor([-0.4]), torch.Tensor([0.5]), torch.Tensor( [0.6]), torch.Tensor([0.1]), torch.Tensor([-0.5]), torch.Tensor([-0.3]), torch.Tensor([0.8]) # 权重初始值 w1.requires_grad = True w2.requires_grad = True w3.requires_grad = True w4.requires_grad = True w5.requires_grad = True w6.requires_grad = True w7.requires_grad = True w8.requires_grad = True def sigmoid(z): a = 1 / (1 + torch.exp(-z)) return a def forward_propagate(x1, x2): in_h1 = w1 * x1 + w3 * x2 out_h1 = sigmoid(in_h1) # out_h1 = torch.sigmoid(in_h1) in_h2 = w2 * x1 + w4 * x2 out_h2 = sigmoid(in_h2) # out_h2 = torch.sigmoid(in_h2) in_o1 = w5 * out_h1 + w7 * out_h2 out_o1 = sigmoid(in_o1) # out_o1 = torch.sigmoid(in_o1) in_o2 = w6 * out_h1 + w8 * out_h2 out_o2 = sigmoid(in_o2) # out_o2 = torch.sigmoid(in_o2) print("正向计算:o1 ,o2") print(out_o1.data, out_o2.data) return out_o1, out_o2 def loss_fuction(x1, x2, y1, y2): # 损失函数 y1_pred, y2_pred = forward_propagate(x1, x2) # 前向传播 loss = (1 / 2) * (y1_pred - y1) ** 2 + (1 / 2) * (y2_pred - y2) ** 2 # 考虑 : t.nn.MSELoss() print("损失函数(均方误差):", loss.item()) return loss def update_w(w1, w2, w3, w4, w5, w6, w7, w8): # 步长 step = 1 w1.data = w1.data - step * w1.grad.data w2.data = w2.data - step * w2.grad.data w3.data = w3.data - step * w3.grad.data w4.data = w4.data - step * w4.grad.data w5.data = w5.data - step * w5.grad.data w6.data = w6.data - step * w6.grad.data w7.data = w7.data - step * w7.grad.data w8.data = w8.data - step * w8.grad.data w1.grad.data.zero_() # 注意:将w中所有梯度清零 w2.grad.data.zero_() w3.grad.data.zero_() w4.grad.data.zero_() w5.grad.data.zero_() w6.grad.data.zero_() w7.grad.data.zero_() w8.grad.data.zero_() return w1, w2, w3, w4, w5, w6, w7, w8 if __name__ == "__main__": print("=====更新前的权值=====") print(w1.data, w2.data, w3.data, w4.data, w5.data, w6.data, w7.data, w8.data) for i in range(1): print("=====第" + str(i) + "轮=====") L = loss_fuction(x1, x2, y1, y2) # 前向传播,求 Loss,构建计算图 L.backward() # 自动求梯度,不需要人工编程实现。反向传播,求出计算图中所有梯度存入w中 print("\tgrad W: ", round(w1.grad.item(), 2), round(w2.grad.item(), 2), round(w3.grad.item(), 2), round(w4.grad.item(), 2), round(w5.grad.item(), 2), round(w6.grad.item(), 2), round(w7.grad.item(), 2), round(w8.grad.item(), 2)) w1, w2, w3, w4, w5, w6, w7, w8 = update_w(w1, w2, w3, w4, w5, w6, w7, w8) print("更新后的权值") print(w1.data, w2.data, w3.data, w4.data, w5.data, w6.data, w7.data, w8.data)

运行结果如下:

精简版源代码(可读性降低,初学者看原版代码好一些)

查看代码

import torch

x = [0.5, 0.3] # x0, x1 = 0.5, 0.3

y = [0.23, -0.07] # y0, y1 = 0.23, -0.07

print("输入值 x0, x1:", x[0], x[1])

print("输出值 y0, y1:", y[0], y[1])

w = [torch.Tensor([0.2]), torch.Tensor([-0.4]), torch.Tensor([0.5]), torch.Tensor(

[0.6]), torch.Tensor([0.1]), torch.Tensor([-0.5]), torch.Tensor([-0.3]), torch.Tensor([0.8])] # 权重初始值

for i in range(0, 8):

w[i].requires_grad = True

print("权值w0-w7:")

for i in range(0, 8):

print(w[i].data, end=" ")

def forward_propagate(x): # 计算图

in_h1 = w[0] * x[0] + w[2] * x[1]

out_h1 = torch.sigmoid(in_h1)

in_h2 = w[1] * x[0] + w[3] * x[1]

out_h2 = torch.sigmoid(in_h2)

in_o1 = w[4] * out_h1 + w[6] * out_h2

out_o1 = torch.sigmoid(in_o1)

in_o2 = w[5] * out_h1 + w[7] * out_h2

out_o2 = torch.sigmoid(in_o2)

print("正向计算,隐藏层h1 ,h2:", end="")

print(out_h1.data, out_h2.data)

print("正向计算,预测值o1 ,o2:", end="")

print(out_o1.data, out_o2.data)

return out_o1, out_o2

def loss(x, y): # 损失函数

y_pre = forward_propagate(x) # 前向传播

loss_mse = (1 / 2) * (y_pre[0] - y[0]) ** 2 + (1 / 2) * (y_pre[1] - y[1]) ** 2 # 考虑 : t.nn.MSELoss()

print("损失函数(均方误差):", loss_mse.item())

return loss_mse

if __name__ == "__main__":

for k in range(1):

print("\n=====第" + str(k+1) + "轮=====")

l = loss(x, y) # 前向传播,求 Loss,构建计算图

l.backward() # 反向传播,求出计算图中所有梯度存入w中. 自动求梯度,不需要人工编程实现。

print("w的梯度: ", end=" ")

for i in range(0, 8):

print(round(w[i].grad.item(), 2), end=" ") # 查看梯度

step = 1 # 步长

for i in range(0, 8):

w[i].data = w[i].data - step * w[i].grad.data # 更新权值

w[i].grad.data.zero_() # 注意:将w中所有梯度清零

print("\n更新后的权值w:")

for i in range(0, 8):

print(w[i].data, end=" ")

浙公网安备 33010602011771号

浙公网安备 33010602011771号