Kubernetes deployment notes for CentOS 7

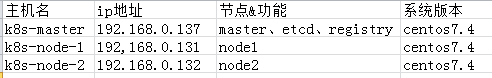

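1. Three CentOS systems were created as VMs; the basic details are as follows:

1.1 Set the hostname on each of the three machines (run each command on the corresponding machine):

[root@localhost ~]# hostnamectl --static set-hostname k8s-master

[root@localhost ~]# hostnamectl --static set-hostname k8s-node-1

[root@localhost ~]# hostnamectl --static set-hostname k8s-node-2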

1.2 Edit the hosts file on all three machines:

[root@localhost ~]# echo '192.168.0.137 k8s-master registry etcd' >> /etc/hosts
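
Step 1.2 shows only the master's entry, and running the echo command twice would append the line twice. A minimal sketch of a helper that adds an entry only when the address is not yet present is given below. The function name add_host_entry is hypothetical, and the node addresses in the usage comment are an assumption: 192.168.0.131 and 192.168.0.132 appear later in these notes, but which address belongs to which node is not stated.

```shell
# add_host_entry FILE IP NAME...
# Appends "IP NAME..." to FILE unless some line already starts with that IP.
add_host_entry() {
    file=$1
    ip=$2
    shift 2
    # awk exits 0 only if the first field of some line equals the IP exactly.
    if awk -v ip="$ip" '$1 == ip { found = 1 } END { exit !found }' "$file"; then
        return 0
    fi
    printf '%s %s\n' "$ip" "$*" >> "$file"
}

# Usage (as root, on every machine):
#   add_host_entry /etc/hosts 192.168.0.137 k8s-master registry etcd
#   add_host_entry /etc/hosts 192.168.0.131 k8s-node-1   # assumed mapping
#   add_host_entry /etc/hosts 192.168.0.132 k8s-node-2   # assumed mapping
```

Matching on the first field rather than on a substring avoids treating 192.168.0.13 as already present when only 192.168.0.137 is listed.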

1.3 Stop and disable the firewall on all three machines:

[root@localhost ~]# systemctl stop firewalld.service

[root@localhost ~]# systemctl disable firewalld.service

2. Deploying the master

2.1 Install etcd with yum

[root@localhost ~]# yum install etcd -y

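The etcd package installed by yum keeps its default configuration in /etc/etcd/etcd.conf. Edit the file and change the highlighted lines (the highlighting is lost in this copy; the uncommented lines are the ones that are set):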

[root@localhost ~]# vi /etc/etcd/etcd.conf

#[Member]
#ETCD_CORS=""
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
#ETCD_WAL_DIR=""
#ETCD_LISTEN_PEER_URLS="http://localhost:2380"
ETCD_LISTEN_CLIENT_URLS="http://0.0.0.0:2379,http://0.0.0.0:4001"
#ETCD_MAX_SNAPSHOTS="5"
#ETCD_MAX_WALS="5"
ETCD_NAME=master
#ETCD_SNAPSHOT_COUNT="100000"
#ETCD_HEARTBEAT_INTERVAL="100"
#ETCD_ELECTION_TIMEOUT="1000"
#ETCD_QUOTA_BACKEND_BYTES="0"
#
#[Clustering]
#ETCD_INITIAL_ADVERTISE_PEER_URLS="http://localhost:2380"
ETCD_ADVERTISE_CLIENT_URLS="http://etcd:2379,http://etcd:4001"
#ETCD_DISCOVERY=""
#ETCD_DISCOVERY_FALLBACK="proxy"
#ETCD_DISCOVERY_PROXY=""
#ETCD_DISCOVERY_SRV=""
#ETCD_INITIAL_CLUSTER="default=http://localhost:2380"
#ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

2.2 Start etcd and verify its status

[root@localhost ~]# systemctl start etcd

[root@localhost ~]# systemctl enable etcd

[root@localhost ~]# etcdctl set testdir/testkey0 0
0

[root@localhost ~]# etcdctl get testdir/testkey0
0

[root@localhost ~]# etcdctl -C http://etcd:4001 cluster-health
member 8e9e05c52164694d is healthy: got healthy result from http://0.0.0.0:2379
cluster is healthy

[root@localhost ~]# etcdctl -C http://etcd:2379 cluster-health
member 8e9e05c52164694d is healthy: got healthy result from http://0.0.0.0:2379
cluster is healthy
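
The health check can also be scripted so that later steps wait until etcd answers. The sketch below is one way to do that: it looks for the line "cluster is healthy" in the cluster-health output shown in these notes and retries a few times. The function names retry and etcd_healthy are hypothetical, and the attempt count and delay in the usage comment are arbitrary.

```shell
# retry ATTEMPTS DELAY CMD...
# Runs CMD until it succeeds; gives up with status 1 after ATTEMPTS tries.
retry() {
    attempts=$1
    delay=$2
    shift 2
    n=1
    while ! "$@"; do
        if [ "$n" -ge "$attempts" ]; then
            return 1
        fi
        n=$((n + 1))
        sleep "$delay"
    done
}

# etcd_healthy ENDPOINT
# Succeeds when the cluster-health output contains the exact line
# "cluster is healthy".
etcd_healthy() {
    etcdctl -C "$1" cluster-health 2>/dev/null | grep -qx 'cluster is healthy'
}

# Usage:
#   retry 10 2 etcd_healthy http://etcd:2379 || echo 'etcd is not healthy' >&2
```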

2.3 Install Docker

[root@k8s-master ~]# yum install docker -y

Edit the Docker configuration file so that Docker is allowed to pull images from the registry. Change the highlighted lines (the highlighting is lost in this copy):

[root@k8s-master ~]# vi /etc/sysconfig/docker

# /etc/sysconfig/docker
# Modify these options if you want to change the way the docker daemon runs
OPTIONS='--selinux-enabled --log-driver=journald --signature-verification=false'
if [ -z "${DOCKER_CERT_PATH}" ]; then
    DOCKER_CERT_PATH=/etc/docker
fi
OPTIONS='--insecure-registry registry:5000'
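
Both assignments in this file target the same OPTIONS variable, so the later one replaces the earlier one when the file is read. If the stock options should stay in effect alongside the registry flag (an assumption about intent; the notes do not say so), a single combined assignment is one possible form:

```shell
# /etc/sysconfig/docker (fragment)
# Assumption: the default options are kept and the insecure registry is appended.
OPTIONS='--selinux-enabled --log-driver=journald --signature-verification=false --insecure-registry registry:5000'
```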

Enable Docker at boot and start the service:

[root@k8s-master ~]# chkconfig docker on

[root@k8s-master ~]# service docker start

2.4 Install Kubernetes

yum install kubernetes -y

The following components need to run on the Kubernetes master:

Kubernetes API Server

Kubernetes Controller Manager

Kubernetes Scheduler

Edit the corresponding configuration files:

[root@k8s-master ~]# vi /etc/kubernetes/apiserver

###
# kubernetes system config
#
# The following values are used to configure the kube-apiserver
#

# The address on the local server to listen to.
KUBE_API_ADDRESS="--insecure-bind-address=0.0.0.0"

# The port on the local server to listen on.
KUBE_API_PORT="--port=8080"

# Port minions listen on
# KUBELET_PORT="--kubelet-port=10250"

# Comma separated list of nodes in the etcd cluster
KUBE_ETCD_SERVERS="--etcd-servers=http://etcd:2379"

# Address range to use for services
KUBE_SERVICE_ADDRESSES="--service-cluster-ip-range=10.254.0.0/16"

# default admission control policies
KUBE_ADMISSION_CONTROL="--admission-control=NamespaceLifecycle,NamespaceExists,LimitRanger,SecurityContextDeny,ResourceQuota"

# Add your own!
KUBE_API_ARGS=""

[root@k8s-master ~]# vi /etc/kubernetes/config

###
# kubernetes system config
#
# The following values are used to configure various aspects of all
# kubernetes services, including
#
#   kube-apiserver.service
#   kube-controller-manager.service
#   kube-scheduler.service
#   kubelet.service
#   kube-proxy.service
# logging to stderr means we get it in the systemd journal
KUBE_LOGTOSTDERR="--logtostderr=true"

# journal message level, 0 is debug
KUBE_LOG_LEVEL="--v=0"

# Should this cluster be allowed to run privileged docker containers
KUBE_ALLOW_PRIV="--allow-privileged=false"

# How the controller-manager, scheduler, and proxy find the apiserver
KUBE_MASTER="--master=http://k8s-master:8080"

Enable the services at boot and start them:

systemctl enable kube-apiserver.service
systemctl start kube-apiserver.service
systemctl enable kube-controller-manager.service
systemctl start kube-controller-manager.service
systemctl enable kube-scheduler.service
systemctl start kube-scheduler.service
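
The same enable-then-start pair is repeated for each unit, so it can be wrapped in a loop. The sketch below is one possible form; the function name enable_and_start and the SYSTEMCTL override variable are hypothetical and exist only so the loop can be dry-run with echo.

```shell
# Command used to manage units; set SYSTEMCTL=echo for a dry run.
SYSTEMCTL=${SYSTEMCTL:-systemctl}

# enable_and_start UNIT...
# Enables and starts each unit in the order given; stops at the first failure.
enable_and_start() {
    for svc in "$@"; do
        "$SYSTEMCTL" enable "$svc" || return 1
        "$SYSTEMCTL" start "$svc" || return 1
    done
}

# Usage on the master:
#   enable_and_start kube-apiserver.service \
#                    kube-controller-manager.service \
#                    kube-scheduler.service
```

Stopping at the first failure keeps the order meaningful: the controller manager and scheduler are not started if the API server failed to come up.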

3. Deploying the nodes

3.1 Install Docker

[root@k8s-node-1 ~]# yum install docker -y

Edit the Docker configuration file on each node so that Docker can pull images from the private registry on the master:

[root@k8s-node-1 ~]# vi /etc/docker/daemon.json

{
  "insecure-registries": ["192.168.0.137:5000"]
}

Then enable Docker at boot and start it:

[root@k8s-node-1 ~]# chkconfig docker on

[root@k8s-node-1 ~]# service docker start

3.2 Install Kubernetes

yum install kubernetes -y

The following components need to run on each Kubernetes node:

Kubelet

Kubernetes Proxy

Accordingly, edit the following configuration files:

[root@K8s-node-1 ~]# vi /etc/kubernetes/config

#
# The following values are used to configure various aspects of all
# kubernetes services, including
#
#   kube-apiserver.service
#   kube-controller-manager.service
#   kube-scheduler.service
#   kubelet.service
#   kube-proxy.service
# logging to stderr means we get it in the systemd journal
KUBE_LOGTOSTDERR="--logtostderr=true"

# journal message level, 0 is debug
KUBE_LOG_LEVEL="--v=0"

# Should this cluster be allowed to run privileged docker containers
KUBE_ALLOW_PRIV="--allow-privileged=false"

# How the controller-manager, scheduler, and proxy find the apiserver
KUBE_MASTER="--master=http://k8s-master:8080"

[root@K8s-node-1 ~]# vi /etc/kubernetes/kubelet

###
# kubernetes kubelet (minion) config

# The address for the info server to serve on (set to 0.0.0.0 or "" for all interfaces)
KUBELET_ADDRESS="--address=0.0.0.0"

# The port for the info server to serve on
# KUBELET_PORT="--port=10250"

# You may leave this blank to use the actual hostname
KUBELET_HOSTNAME="--hostname-override=k8s-node-1"

# location of the api-server
KUBELET_API_SERVER="--api-servers=http://k8s-master:8080"

# pod infrastructure container
KUBELET_POD_INFRA_CONTAINER="--pod-infra-container-image=registry.access.redhat.com/rhel7/pod-infrastructure:latest"

# Add your own!
KUBELET_ARGS=""

Enable the services at boot and start them:

systemctl enable kubelet.service
systemctl start kubelet.service
systemctl enable kube-proxy.service
systemctl start kube-proxy.service

3.3 Check the status

On the master, list the nodes in the cluster and their status:

[root@k8s-master kubermanage]# kubectl get nodes
NAME         STATUS    AGE
k8s-node-1   Ready     7h
k8s-node-2   Ready     7h
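
When this check is scripted, the number of Ready nodes can be compared against the number expected. The sketch below reads the kubectl get nodes output on stdin and assumes the column layout shown above (NAME, STATUS, AGE); the function name ready_node_count is hypothetical.

```shell
# ready_node_count
# Reads `kubectl get nodes` output on stdin, skips the header line and
# prints how many rows have exactly "Ready" in the STATUS column.
ready_node_count() {
    awk 'NR > 1 && $2 == "Ready" { n++ } END { print n + 0 }'
}

# Usage on the master (2 is the number of nodes in these notes):
#   [ "$(kubectl get nodes | ready_node_count)" -eq 2 ] || echo 'a node is missing' >&2
```

A status such as NotReady or Ready,SchedulingDisabled is deliberately not counted, because the comparison is an exact match.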

4. Creating the overlay network with Flannel

4.1 Install Flannel

yum install flannel -y

4.2 Configure Flannel

On the master and on every node, edit /etc/sysconfig/flanneld:

[root@k8s-master ~]# vi /etc/sysconfig/flanneld

# etcd url location. Point this to the server where etcd runs
FLANNEL_ETCD_ENDPOINTS="http://etcd:2379"

# etcd config key. This is the configuration key that flannel queries
# For address range assignment
FLANNEL_ETCD_PREFIX="/atomic.io/network"

# Any additional options that you want to pass
#FLANNEL_OPTIONS=""

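4.3 Create the Flannel key in etcd

Flannel reads its configuration from etcd so that all Flannel instances share the same settings, so the following key must be created in etcd. (The key /atomic.io/network/config must correspond to the FLANNEL_ETCD_PREFIX setting in /etc/sysconfig/flanneld above; if the two do not match, flanneld fails to start.)

Create the key on the master:

[root@k8s-master ~]# etcdctl mk /atomic.io/network/config '{ "Network": "10.0.0.0/16" }'
{ "Network": "10.0.0.0/16" }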
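
Since a mismatch between the prefix and the key stops flanneld from starting, the key name can be derived from the configuration file instead of being typed twice. The sketch below is one way to do that; the function name flannel_config_key is hypothetical, and it assumes the file contains only shell-compatible assignments and comments, as in the listing in these notes.

```shell
# flannel_config_key FILE
# Prints the etcd key flanneld will read: FLANNEL_ETCD_PREFIX plus "/config".
# The file is sourced in a subshell so its variables do not leak.
flannel_config_key() {
    ( . "$1" && printf '%s/config\n' "$FLANNEL_ETCD_PREFIX" )
}

# Usage on the master:
#   etcdctl get "$(flannel_config_key /etc/sysconfig/flanneld)"
```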

4.4 Start Flannel, then restart the services in order:

On the master, run:

systemctl enable flanneld.service
systemctl start flanneld.service
systemctl restart docker
systemctl restart kube-apiserver.service
systemctl restart kube-controller-manager.service
systemctl restart kube-scheduler.service

On each node, run:

systemctl enable flanneld.service

systemctl start flanneld.service

systemctl restart docker

systemctl restart kubelet.service

systemctl restart kube-proxy.service

5. Deploying a pod

vi test-rc.yaml

apiVersion: v1
kind: ReplicationController
metadata:
  name: test-controller
spec:
  replicas: 2 # i.e. two replicas
  selector:
    name: test
  template:
    metadata:
      labels:
        name: test
    spec:
      containers:
        - name: test
          image: 192.168.0.137:5000/h1 # pulled from the private registry on the master (192.168.0.137)
          ports:
            - containerPort: 80

After writing the file, create the replication controller:

[root@k8s-master kubermanage]# kubectl create -f test-rc.yaml

The status of the created pods can then be checked with kubectl get pods. If a pod stays in the creating state (ContainerCreating) or fails to be created, run kubectl describe pod followed by the pod name to find the cause.

The following errors may occur:

Error 1: image pull failed for registry.access.redhat.com/rhel7/pod-infrastructure:latest, this may be because there are no credentials on this request. details: (open /etc/docker/certs.d/registry.access.redhat.com/redhat-ca.crt: no such file or directory)

Solution: yum install *rhsm* -y

Error 2: Failed to create pod infra container: ImagePullBackOff; Skipping pod "redis-master-jj6jw_default(fec25a87-cdbe-11e7-ba32-525400cae48b)": Back-off pulling image "registry.access.redhat.com/rhel7/pod-infrastructure:latest

Solution: try pulling the image manually: docker pull registry.access.redhat.com/rhel7/pod-infrastructure:latest

Once the pods are created successfully, the two nodes start the Docker containers automatically. On either node, docker ps lists the running containers.
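
The two commands can be chained so that every pod that is not yet running is described automatically. The sketch below assumes the usual kubectl get pods column layout (NAME, READY, STATUS, RESTARTS, AGE); the function name pods_not_running is hypothetical.

```shell
# pods_not_running
# Reads `kubectl get pods` output on stdin, skips the header line and prints
# the name of every pod whose STATUS column is not exactly "Running".
pods_not_running() {
    awk 'NR > 1 && $3 != "Running" { print $1 }'
}

# Usage on the master:
#   kubectl get pods | pods_not_running | while read -r pod; do
#       kubectl describe pod "$pod"
#   done
```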

6. Making the test Apache pod reachable from outside the cluster

Write the NodePort service file:

vi test-nodeport.yaml

apiVersion: v1
kind: Service
metadata:
  name: test-service-nodeport
spec:
  ports:
    - port: 80
      targetPort: 80
      nodePort: 31580
      protocol: TCP
  type: NodePort
  selector:
    name: test

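Here 80 is the default Apache port inside the container running on the node, 31580 is the port exposed to the outside, and the selector name: test makes this a service for the test pods.

Create the test service:

kubectl create -f test-nodeport.yaml

Entering http://192.168.0.131:31580 or http://192.168.0.132:31580 in a browser reaches the Apache home page in both cases.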
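
The external port cannot be chosen freely: by default the API server only accepts NodePort values from 30000 to 32767, unless it is started with a different --service-node-port-range. A small sketch of a pre-check against that default range follows; the function name valid_node_port is hypothetical.

```shell
# valid_node_port PORT
# Succeeds when PORT is a decimal number inside the default NodePort range
# 30000-32767.
valid_node_port() {
    case $1 in
        '' | *[!0-9]*) return 1 ;;   # empty or contains a non-digit
    esac
    [ "$1" -ge 30000 ] && [ "$1" -le 32767 ]
}

# Usage:
#   valid_node_port 31580 && echo 'port is inside the default range'
```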

If http://192.168.0.137:31580 should work as well, the kube-proxy service must also be started on the master:

systemctl start kube-proxy

Reference:

http://www.cnblogs.com/kevingrace/p/5575666.html

